Overcoming Molecular Dynamics Trajectory Analysis Challenges: A Guide for Biomedical Researchers

Abstract

Molecular dynamics (MD) simulations generate vast, complex trajectory data that poses significant analysis challenges for researchers in drug development and biomedical sciences. This article provides a comprehensive framework for overcoming these hurdles, covering foundational principles, advanced methodological applications, practical troubleshooting, and rigorous validation techniques. We explore common problems such as periodic boundary artifacts, solvent overload, and structural drift, while detailing solutions using tools like CPPTRAJ and MDAnalysis. Furthermore, the article highlights the growing integration of machine learning for extracting meaningful biological insights from trajectory data, enabling more accurate prediction of drug solubility, binding affinities, and protein function. This guide aims to equip scientists with the knowledge to transform raw simulation data into reliable, actionable results for accelerating drug discovery and development.

Understanding Core Challenges in MD Trajectory Analysis

Troubleshooting Guides

Guide 1: Fixing Periodic Boundary Conditions (PBC) Artifacts

Problem: After loading your trajectory, your protein complex appears exploded, with domains scattered across the simulation box or molecules split across boundaries. This chaos is caused by molecules crossing periodic boundaries during simulation [1].

Diagnosis: This is a classic PBC visualization issue. The simulation physics are correct, but the raw coordinates make molecules that crossed the box edge appear on the opposite side [1] [2].

Solutions:

- CPPTRAJ Method: Use the `center`, `unwrap`, and `autoimage` commands to reassemble your complex [1].

- MDAnalysis Method: Use the on-the-fly transformations in `MDAnalysis.transformations` (for example `unwrap` and `center_in_box`) for the same effect [1].

- MDVWhole Tool: For complex molecular assemblies (like lipid bilayers or vesicles), use this open-source tool designed to repair assemblies broken by PBCs across entire trajectories [2].

Guide 2: Managing Solvent Overload

Problem: Your trajectory file is massive, and analysis is slow because your protein is drowned in thousands of water molecules and ions [1].

Diagnosis: While essential for a realistic simulation, explicit solvent is often unnecessary for analyzing the protein itself [1] [3].

Solutions:

- Strip Solvent with CPPTRAJ: Remove water and ions to create a smaller, analysis-friendly trajectory [1].

- Implicit Solvent Consideration: For future simulations requiring binding or solvation free energies, be aware that implicit solvent models (like PCM, COSMO, SMD) are faster but can struggle with charged species and explicit hydrogen bonding, potentially leading to inaccuracies [3].

Guide 3: Correcting Structural Drift

Problem: Your entire protein complex tumbles and translates through space during the simulation, making position-based measurements such as RMSD meaningless without alignment [1]. Interatomic distances within the complex are not affected by this rigid-body motion.

Diagnosis: This is overall rotation and translation of the molecule, which must be removed to analyze internal conformational changes [1].

Solutions:

- RMS Alignment in CPPTRAJ: Fit each frame of the trajectory to a reference (e.g., the first frame or an average structure) using the protein backbone [1].

- Drift Correction in MDAnalysis: Incorporate fitting directly into your trajectory transformation pipeline [1].

Guide 4: Reducing Bloated File Sizes

Problem: Trajectory files are enormous, consuming significant storage space and slowing down analysis and sharing [1] [4].

Diagnosis: High-frequency saving of coordinates for all atoms (including solvent) leads to very large files [4].

Solutions:

- Stripping Solvent: As in Guide 2, this is the most effective way to reduce file size [1].

- Lossy Compression: Use the NetCDF4/HDF5 format with quantization. This strategically reduces coordinate precision to save space. Studies show that a quantization level of 100x (storing coordinates to 0.01 Å) maintains a good balance, keeping energy errors low for most terms [4].

- Save Only Protein Atoms: When writing the processed trajectory, ensure you only include the atoms of interest [1].
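To make the precision trade-off concrete, the sketch below rounds coordinates to a fixed step (a scale factor of 100 corresponds to 0.01 Å) and measures the error this introduces. It is a plain-Python illustration of quantization only, not the NetCDF4/HDF5 storage code; `quantize` and `quantization_error` are hypothetical helper names.

```python
import math


def quantize(coords, scale=100):
    """Round every coordinate to a step of 1/scale Å (scale=100 -> 0.01 Å),
    which is what lossy quantization does before storing integers."""
    return [tuple(round(x * scale) / scale for x in atom) for atom in coords]


def quantization_error(original, quantized):
    """Return (largest per-component error, positional RMSD), both in Å."""
    max_err = max(abs(a - b) for p, q in zip(original, quantized) for a, b in zip(p, q))
    per_atom_sq = [sum((a - b) ** 2 for a, b in zip(p, q)) for p, q in zip(original, quantized)]
    return max_err, math.sqrt(sum(per_atom_sq) / len(per_atom_sq))


if __name__ == "__main__":
    coords = [(12.34567, -3.21098, 7.65432), (0.00049, 45.67891, -8.88888)]
    for scale in (10, 100, 1000):
        max_err, rmsd = quantization_error(coords, quantize(coords, scale))
        print(f"scale={scale:5d}  max error={max_err:.5f} Å  RMSD={rmsd:.5f} Å")
```

Rounding bounds each coordinate's error by half a step (0.005 Å at a scale of 100), so every extra stored decimal shrinks the worst-case error tenfold.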

Frequently Asked Questions (FAQs)

Q1: My trajectory is fixed for PBC and aligned. Why do my distance measurements still look strange? A: Ensure you are calculating distances between the same atoms in each frame after the molecule has been re-imaged and aligned. The "unwrap" command is critical for maintaining connectivity across domains before alignment [1].

Q2: Is it safe to delete my original trajectory after creating a cleaned-up version? A: No. Always preserve your original trajectory. Processing steps are often irreversible, and you may need the raw data later for different analyses or if an error was made [1].

Q3: What is the most common mistake when trying to fix PBC artifacts? A: Failing to properly identify and center the "anchor" domain first. The most stable component (usually the largest protein domain) should be centered, and other components unwrapped relative to it [1].

Q4: How can I analyze a nucleic acid trajectory? A: Use specialized tools like DSSR from X3DNA. It can process multiple PDB-formatted snapshots from a trajectory to analyze helicoidal parameters, torsion angles, and other essential nucleic acid properties [5].

Q5: What is the trade-off with trajectory compression? A: Lossy compression reduces file size but introduces small errors in atomic positions. This can subsequently affect energies calculated from the trajectory. The bond energy term is particularly sensitive for flexible water models [4].

The Scientist's Toolkit: Essential Software for MD Trajectory Processing

The table below lists key software tools for addressing the "Four Horsemen" of MD chaos: PBC artifacts, solvent overload, structural drift, and bloated file sizes.

| Tool Name | Primary Function | Key Strength |
|---|---|---|
| CPPTRAJ | Comprehensive trajectory analysis & processing [1] | Versatile, command-line control, part of AMBER [1] |
| MDAnalysis | Python library for trajectory analysis [1] | Python integration, scripting flexibility [1] |
| PyTraj | Python wrapper for CPPTRAJ [1] | CPPTRAJ power in Python environment [1] |
| MDVWhole | Repairing complex molecular assemblies across PBCs [2] | Handles large, multi-component assemblies [2] |
| DSSR/X3DNA | Analyzing nucleic acid structures in trajectories [5] | Specializes in DNA/RNA structural parameters [5] |

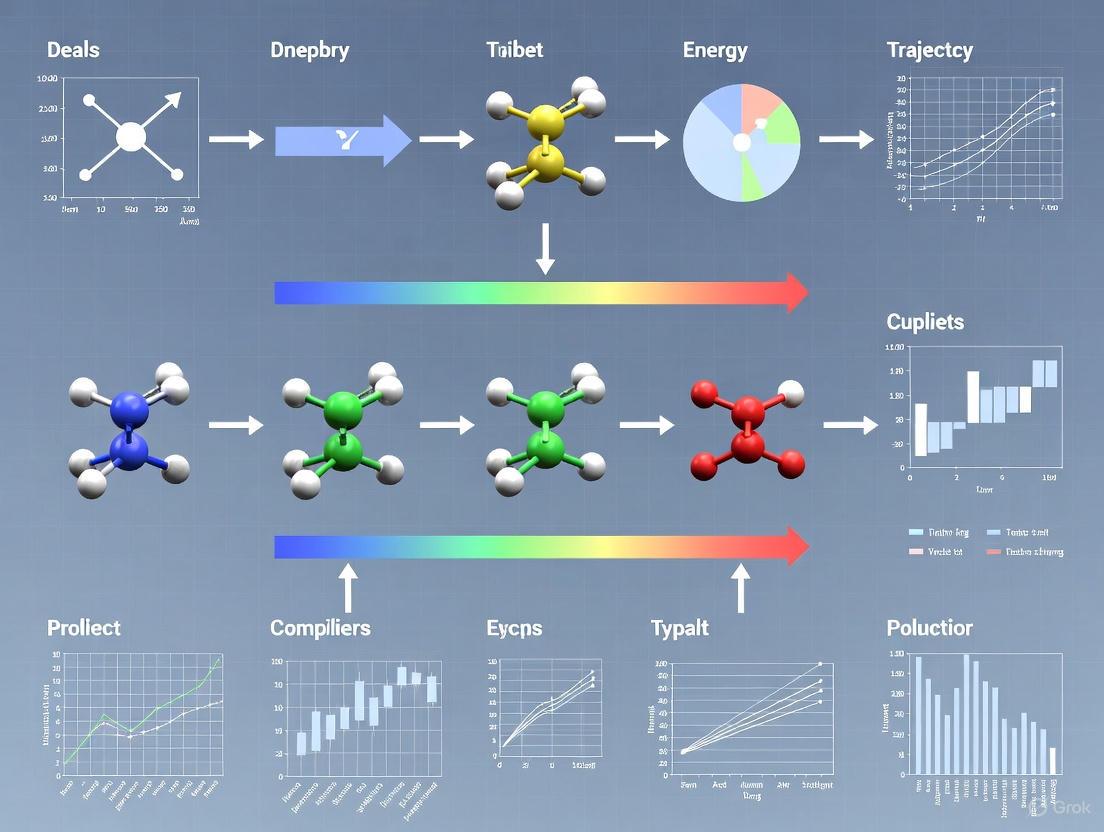

Workflow Visualization

The following diagram illustrates a recommended workflow for tackling the common problems in MD trajectory analysis.

MD Trajectory Cleanup Workflow

Experimental Protocols & Data

Protocol 1: Full Trajectory Cleanup Pipeline using CPPTRAJ

This protocol combines steps to fix PBC, remove solvent, and align the trajectory [1].

- Input: Raw trajectory file (`simulation.nc`) and topology file (`system.prmtop`).

- Center the Protein: Center the primary protein domain in the box.

- Unwrap Other Molecules: Ensure ligands or other domains stay connected to the protein.

- Run Autoimage: Rebuild the simulation box around the centered and unwrapped molecules.

- Strip Solvent: Remove water and ions to drastically reduce system size.

- Align Trajectory: Remove overall rotation and translation by fitting to the backbone.

- Output: Save the final, cleaned trajectory.
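The protocol can be written as a single CPPTRAJ input file. The Python helper below only generates that text. It is a sketch, not a tested production script, and the file names, the protein residue range `:1-200`, and the solvent/ion residue names are placeholder assumptions to adapt to your system. It uses `autoimage` with an `anchor` mask, which centers the anchor molecule and images the others around it, as a compact stand-in for separate center/unwrap/autoimage steps; check the CPPTRAJ manual for the options your version supports.

```python
def cpptraj_cleanup_script(
    topology="system.prmtop",
    trajectory="simulation.nc",
    output="cleaned.nc",
    anchor_mask=":1-200",         # hypothetical: residues of the primary protein domain
    solvent_mask=":WAT,Na+,Cl-",  # hypothetical: water and ion residue names
    fit_mask="@CA,C,N",           # backbone atoms used for RMS fitting
):
    """Return the text of a CPPTRAJ input file that re-images the system,
    strips solvent, fits every frame to the first one, and writes the result."""
    commands = [
        f"parm {topology}",
        f"trajin {trajectory}",
        f"autoimage anchor {anchor_mask}",  # center the anchor, image the rest around it
        f"strip {solvent_mask}",            # remove water and ions
        f"rms first {fit_mask}",            # remove overall rotation and translation
        f"trajout {output}",
        "run",
    ]
    return "\n".join(commands) + "\n"


if __name__ == "__main__":
    print(cpptraj_cleanup_script())
```

Save the returned text to a file such as `cleanup.in` and run `cpptraj -i cleanup.in`. The stripped output contains fewer atoms than the original topology, so later analyses need a matching stripped topology.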

Protocol 2: Quantifying the Impact of Trajectory Compression

This protocol outlines how to compress a trajectory and evaluate the effect on energy calculations, based on findings from quantitative studies [4].

- Compress Trajectory: Convert your trajectory to a NetCDF4/HDF5 format with quantization (e.g., using CPPTRAJ). The quantization level (e.g., 100x) determines the precision loss [4].

- Calculate Energies: Perform energy calculations (e.g., MM-PB/GB-SA) on both the original and compressed trajectories.

- Quantify Error: Calculate the Root Mean Square Error (RMSE) for the total energy and individual terms (Electrostatics, VdW, Bonds, Angles) between the two datasets [4].

- Analyze Trade-offs: Evaluate if the achieved compression ratio is worth the introduced energy error for your specific analysis goals. A quantization of 100x often offers a good compromise [4].
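The error-quantification step is a per-term RMSE between the energies computed from the original and the compressed trajectory. A minimal sketch, assuming the per-frame energies have already been exported into lists keyed by term name; the dictionary layout and the example numbers are hypothetical:

```python
import math


def rmse(reference, test):
    """Root-mean-square error between two equal-length series."""
    if len(reference) != len(test) or not reference:
        raise ValueError("series must be non-empty and of equal length")
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(reference, test)) / len(reference))


def energy_rmse_by_term(original, compressed):
    """Per-term RMSE, in the units of the inputs (e.g. kcal/mol).

    Both arguments map a term name (e.g. "BOND", "VDW") to a list of
    per-frame energies computed from the respective trajectory."""
    return {term: rmse(original[term], compressed[term]) for term in original}


if __name__ == "__main__":
    original = {"BOND": [10.2, 10.5, 10.1], "VDW": [-55.0, -54.8, -55.3]}
    compressed = {"BOND": [10.9, 9.8, 10.6], "VDW": [-55.0, -54.8, -55.2]}
    for term, value in energy_rmse_by_term(original, compressed).items():
        print(f"{term:5s} RMSE = {value:.3f} kcal/mol")
```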

Quantitative Data on Trajectory Compression

The table below summarizes how different compression methods affect coordinate precision and subsequent energy calculations, based on research data [4].

| Format / Method | Coordinate Precision | Avg. Position RMSD (Å) | Energy RMSE (kcal/mol) | Key Consideration |
|---|---|---|---|---|
| NetCDF4 (i1.nc4) | 10x (0.1 Å) | ~0.1 | Can be >1 | Significant energy error, not recommended. |
| NetCDF4 (i3.nc4) | 1000x (0.001 Å) | ~0.001 | Low (~0.001) | Good for most analyses, similar to ASCII .crd. |
| GROMACS XTC | 3 decimals in nm (0.01 Å) | ~0.01 | Low | Good compression, standard in GROMACS. |
| Amber ASCII (.crd) | 3 decimals in Å (0.001 Å) | ~0.001 | Low (but VdW higher) | Very large file size, not efficient. |

Note: The flexible bond energy term is particularly sensitive to compression and shows higher errors for non-constrained water models [4].

Navigating Periodic Boundary Condition (PBC) Artifacts in Biomolecular Visualizations

FAQs: Understanding PBC Artifacts

Q1: Why do my molecules appear broken or have bonds extending across the simulation box? This is a classic visualization artifact, not an error in the simulation dynamics. Under Periodic Boundary Conditions (PBC), the simulation box is surrounded by translated copies of itself. When a molecule crosses the boundary of the primary box, it is "broken" as one part exits (e.g., on the right) and simultaneously re-enters from the opposite side (e.g., on the left). Visualization software often renders this as a long bond across the entire box [6]. The molecule itself remains chemically intact.

Q2: What causes sudden "jumps" in measured distances between atoms over time? These jumps occur because standard trajectory analysis might track coordinates without accounting for PBC. When a molecule crosses the box boundary, its coordinates can discontinuously jump from one side of the box to the other. The measured distance between this molecule and a stationary reference point will then show a sudden, large change, even though the actual physical separation has changed only minimally [7].
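The jump disappears if each separation vector is first mapped to its nearest periodic image (the minimum image convention). A minimal sketch for an orthorhombic box, assuming coordinates and box lengths share the same units; triclinic boxes need the full box matrix, which this sketch does not handle:

```python
import math


def minimum_image_distance(a, b, box):
    """Distance between points a and b in an orthorhombic periodic box.

    box = (Lx, Ly, Lz). Each component of the separation vector is shifted
    by a whole number of box lengths so that it lies within [-L/2, L/2]."""
    d2 = 0.0
    for ai, bi, L in zip(a, b, box):
        dx = bi - ai
        dx -= L * round(dx / L)  # move to the nearest periodic image
        d2 += dx * dx
    return math.sqrt(d2)


if __name__ == "__main__":
    box = (50.0, 50.0, 50.0)
    ref = (1.0, 25.0, 25.0)
    wrapped = (49.5, 25.0, 25.0)  # particle that has just crossed the x = 0 face
    print(f"naive: {math.dist(ref, wrapped):.2f}  "
          f"minimum image: {minimum_image_distance(ref, wrapped, box):.2f}")
```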

Q3: How does the shape and size of the periodic box influence artifacts? The box size and shape are critical factors [8] [9].

- Size: A box that is too small can cause a macromolecule to artificially interact with its own periodic image. A common recommendation is to have at least 1.0 nm of solvent between the solute and the box edge [10] [8].

- Shape: A cubic box is simple but inefficient, because it contains solvent in corners far from the solute. For globular molecules, a rhombic dodecahedron or truncated octahedron is often more efficient because it more closely approximates a sphere. For the same minimum image distance, a rhombic dodecahedron needs about 71% of the cubic box volume (~29% fewer solvent molecules) and a truncated octahedron about 77% [8] [9].
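The saving follows from the box volumes at a fixed minimum image distance d, as tabulated in the GROMACS reference manual: d³ for a cube, (√2/2)d³ for a rhombic dodecahedron and (4√3/9)d³ for a truncated octahedron. Because solvent fills most of the box, solvent count scales roughly with volume. A short sketch, where the value of d in the example (a 5 nm solute plus 1.0 nm padding on each side) is hypothetical:

```python
import math

# Box volume divided by d**3 for a given minimum image distance d.
VOLUME_FACTORS = {
    "cubic": 1.0,
    "rhombic dodecahedron": math.sqrt(2) / 2,       # ~0.707
    "truncated octahedron": 4 * math.sqrt(3) / 9,   # ~0.770
}


def box_volume(shape, d):
    """Box volume for minimum image distance d (result in d's units cubed)."""
    return VOLUME_FACTORS[shape] * d ** 3


if __name__ == "__main__":
    d = 7.0  # nm, hypothetical
    cube = box_volume("cubic", d)
    for shape in VOLUME_FACTORS:
        v = box_volume(shape, d)
        print(f"{shape:22s} {v:7.1f} nm^3  ({100 * (1 - v / cube):4.1f}% smaller than cubic)")
```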

Q4: What are the system-level requirements for PBC to function correctly? Two key restrictions must be met [9]:

- Charge Neutrality: The net electrostatic charge of the entire system must be zero to avoid infinite energy sums when PBC are applied. This is typically achieved by adding counterions [10].

- Cut-off Criterion: The cut-off radius (Rc) for short-range non-bonded interactions must be less than half the length of the shortest box vector: Rc < ½ min(||a||, ||b||, ||c||). This ensures a particle only interacts with the closest image of any other particle [9].
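The cut-off criterion can be checked before a run is launched. A minimal sketch that implements the rule exactly as stated above (half the shortest box-vector length), assuming box vectors given as Cartesian triples in the same units as the cut-off. For skewed triclinic boxes the usable width is smaller than the vector length, and engines such as GROMACS apply a stricter test, so treat this as a first screen only:

```python
import math


def max_cutoff(box_vectors):
    """Largest cut-off allowed by Rc < 1/2 * min(|a|, |b|, |c|)."""
    return 0.5 * min(math.sqrt(sum(c * c for c in v)) for v in box_vectors)


def check_cutoff(rc, box_vectors):
    """True if the cut-off rc satisfies the criterion for these box vectors."""
    return rc < max_cutoff(box_vectors)


if __name__ == "__main__":
    box = [(6.0, 0.0, 0.0), (0.0, 6.0, 0.0), (0.0, 0.0, 6.0)]  # nm, cubic
    for rc in (1.0, 1.2, 3.0):
        print(f"rc = {rc} nm -> {'OK' if check_cutoff(rc, box) else 'too large'}")
```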

Troubleshooting Guide: Resolving Common Visualization Issues

Issue 1: Broken Molecules and Unphysical Bonds

Problem: During visualization, molecules are split across the box with long, seemingly unphysical bonds stretching from one side to the other [6].

Solution: Use the trjconv utility in GROMACS to make molecules "whole" again.

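- Protocol: Run `gmx trjconv` on the trajectory with the `-pbc mol` option, supplying the run input file with `-s` so that molecule definitions are available.

- Mechanism: The `-pbc mol` flag identifies all molecules in the system and reassembles each one completely within the primary simulation box, removing the visual artifact of bonds crossing the periodic boundary [6].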
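Conceptually, making a molecule whole means walking along its bonds and placing each atom at the periodic image nearest to an already placed bonded neighbour. The sketch below does this for one molecule in an orthorhombic box. It illustrates the idea and is not how `trjconv` is implemented; it assumes a known bond list and that every bond is shorter than half the box length, and `make_whole` is a hypothetical name.

```python
from collections import deque


def make_whole(coords, bonds, box):
    """Reassemble one molecule split across an orthorhombic periodic box.

    coords: list of (x, y, z); bonds: list of (i, j) atom-index pairs;
    box: (Lx, Ly, Lz). Atom 0 stays where it is; every other atom is shifted
    by whole box lengths so it sits next to the bonded atom it was reached from."""
    neighbours = {i: [] for i in range(len(coords))}
    for i, j in bonds:
        neighbours[i].append(j)
        neighbours[j].append(i)
    fixed = [None] * len(coords)
    fixed[0] = tuple(coords[0])
    queue = deque([0])
    while queue:  # breadth-first walk over the bond graph
        i = queue.popleft()
        for j in neighbours[i]:
            if fixed[j] is not None:
                continue
            # Minimum image of atom j relative to the already placed atom i
            fixed[j] = tuple(
                xi + ((xj - xi) - L * round((xj - xi) / L))
                for xi, xj, L in zip(fixed[i], coords[j], box)
            )
            queue.append(j)
    if any(p is None for p in fixed):
        raise ValueError("bond graph is not connected")
    return fixed


if __name__ == "__main__":
    # A three-atom chain split across the x boundary of a 10 Å box (hypothetical)
    split = [(9.5, 5.0, 5.0), (0.5, 5.0, 5.0), (1.5, 5.0, 5.0)]
    print(make_whole(split, [(0, 1), (1, 2)], (10.0, 10.0, 10.0)))
    # -> [(9.5, 5.0, 5.0), (10.5, 5.0, 5.0), (11.5, 5.0, 5.0)]
```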

Issue 2: Jumps in Trajectories and Distance Measurements

Problem: Analysis of atomic distances over time shows discontinuous jumps, complicating the study of continuous diffusion or conformational changes [7].

Solution: Remove these jumps from the trajectory before analysis.

- Protocol: Run `gmx trjconv` on the trajectory with the `-pbc nojump` option.

- Mechanism: The `-pbc nojump` algorithm compares the periodic image of each particle with its position in the previous frame (starting from the reference structure) and adjusts coordinates to remove discontinuous jumps across the box, resulting in a continuous trajectory [7] [6].
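The core of a no-jump correction fits in a few lines: compare each wrapped position with the corrected position from the previous frame and undo any displacement of more than half a box length. A minimal sketch for a single particle, assuming an orthorhombic box of constant size and frames saved often enough that no particle truly moves half a box length between them (NPT runs with a fluctuating box would need the per-frame box, which this sketch ignores):

```python
def remove_jumps(wrapped, box):
    """Unwrap one particle's trajectory in an orthorhombic box.

    wrapped: list of (x, y, z) per frame, as written by the simulation.
    box: (Lx, Ly, Lz), assumed constant. Returns a continuous trajectory."""
    unwrapped = [tuple(wrapped[0])]
    for frame in wrapped[1:]:
        prev = unwrapped[-1]
        # Step to the image of the new position that is closest to the previous one
        unwrapped.append(tuple(
            p + ((x - p) - L * round((x - p) / L)) for p, x, L in zip(prev, frame, box)
        ))
    return unwrapped


if __name__ == "__main__":
    raw = [(9.0, 0.0, 0.0), (9.8, 0.0, 0.0), (0.4, 0.0, 0.0), (1.1, 0.0, 0.0)]
    print([round(p[0], 3) for p in remove_jumps(raw, (10.0, 10.0, 10.0))])
    # -> [9.0, 9.8, 10.4, 11.1]
```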

Issue 3: System is Not Centered for Visualization

Problem: The molecule or complex of interest drifts from the center of the box, making visualization and filming difficult.

Solution: Center the system on your molecule of interest.

- Protocol: Run `gmx trjconv` on the trajectory with the `-center` option.

- Mechanism: When prompted, select the group (e.g., "Protein") you wish to center in the box. The command shifts the entire system so that the center of mass of the selected group is placed at the center of the box [6].
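The translation behind centering is simple to write down: shift every atom by the vector from the group's center of mass to the box center. A minimal sketch for an orthorhombic box, assuming per-atom masses are available. It only translates, so re-imaging molecules back into the box remains a separate step, and the function name is hypothetical:

```python
def center_on_group(coords, masses, group, box):
    """Translate all atoms so the center of mass of `group` sits at the box center.

    coords: list of (x, y, z); masses: per-atom masses; group: indices of the
    atoms of interest; box: (Lx, Ly, Lz) of an orthorhombic box."""
    total = sum(masses[i] for i in group)
    com = [sum(masses[i] * coords[i][k] for i in group) / total for k in range(3)]
    shift = [L / 2 - c for L, c in zip(box, com)]  # vector from COM to box center
    return [tuple(x + s for x, s in zip(atom, shift)) for atom in coords]


if __name__ == "__main__":
    coords = [(1.0, 1.0, 1.0), (3.0, 1.0, 1.0), (10.0, 10.0, 10.0)]
    print(center_on_group(coords, [12.0, 12.0, 16.0], [0, 1], (20.0, 20.0, 20.0)))
```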

Comprehensive Visualization Workflow

For a clean, publication-ready visualization, a specific sequence of trjconv commands is recommended. The diagram below illustrates this workflow.

Table 1: Key Software Tools for Managing PBC and Trajectory Analysis.

| Tool Name | Primary Function | Relevance to PBC Artifacts |
|---|---|---|
| GROMACS (trjconv) | Molecular dynamics simulation and trajectory processing | The primary tool for correcting PBC-related visualization issues, including making molecules whole, removing jumps, and centering [7] [6]. |
| MDTraj | Python library for MD trajectory analysis | A modern library that can read and write trajectory data in multiple formats and includes functions for handling PBC during analysis, such as RMSD calculations [11]. |
| VMD / PyMOL | Molecular visualization | Industry-standard visualization software. Correcting trajectories with tools like trjconv before loading them into these programs is essential for accurate visual interpretation [12]. |
| Particle Mesh Ewald (PME) | Algorithm for long-range electrostatics | A method used during the simulation itself to handle electrostatic interactions in a periodic system, mitigating artifacts from the artificial truncation of these forces [9] [13]. |

Advanced Considerations and Recent Research

Quantitative Impact of System Size

Recent research systematically investigates how the simulated system's size influences properties under PBC. The table below summarizes key findings from a 2024 study on peptide membranes [13].

Table 2: Effects of Increasing Simulation Box Size on Membrane Properties [13].

| Property Measured | Small System (Under PBC Influence) | Large System (PBC-Free Core) | Change |
|---|---|---|---|
| Hydrogen Bond Lifetime | Baseline | ~19% Increase | Significant increase, indicating that box size measurably alters hydrogen-bond dynamics [13]. |
| Amino Acid Dihedral Angle Mobility | Restricted | Higher | Greater conformational freedom in larger systems free from PBC constraints [13]. |
| Property Convergence (e.g., Area per Molecule) | Non-convergent | Convergent | Macroscopic properties only reach stable values in sufficiently large systems [13]. |

Best Practices for Minimizing PBC Artifacts in Research

- Validate Box Size: Do not use a box that is merely "large enough" to hold the solute. Ensure the shortest box vector is at least twice the non-bonded cut-off radius [9]. For macromolecules, maintain a minimum of 1.0 nm of solvent padding in all directions [10] [8].

- Choose an Optimal Box Shape: For a globular protein in solution, a rhombic dodecahedron is highly recommended as it minimizes the number of solvent atoms needed while maintaining the required distance to periodic images, significantly improving computational efficiency [8] [9].

- Pre-process Trajectories for Analysis: Never analyze raw trajectories for properties like diffusion, distance, or RMSD. Always apply appropriate PBC corrections first to ensure data continuity and validity [11] [6].

- Be Aware of Advanced Artifacts: Understand that PBC can suppress long-wavelength fluctuations (e.g., in membranes) [13], influence bulk properties like system dipole moments [10], and conserve linear momentum but not angular momentum [10].

Managing Structural Drift and Ensemble Variability in Protein Simulations

Troubleshooting Guides

FAQ 1: Why does my protein simulation look exploded when I load it into visualization software?

Problem: Your protein complex appears fragmented, with domains scattered across the simulation box or pieces seemingly teleported to opposite sides.

Root Cause: This visual chaos is primarily caused by Periodic Boundary Conditions (PBC), a computational method that simulates bulk environment by treating the simulation box as a repeating tile. When molecules cross the box boundaries, they reappear on the opposite side. While physically correct for the simulation, this creates visualization artifacts because different parts of the protein cross boundaries at different times [1].

Solution: The solution involves "re-imaging" the trajectory to place all molecules back into the primary simulation box relative to a fixed anchor point, typically your main protein domain.

Protocol 1: Fixing PBC Artifacts with CPPTRAJ

Protocol 2: Fixing PBC Artifacts with MDAnalysis in Python

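FAQ 2: Why does my protein tumble and drift through the simulation box?

Problem: Your entire protein complex tumbles and translates through space during the simulation, contaminating RMSD and other position-based analyses of internal motion. (Interatomic distances within the complex are not affected by this overall rotation and translation.)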

Root Cause: Structural drift occurs as proteins naturally diffuse and rotate in solution. Without alignment, this overall motion contaminates measurements of internal conformational changes [1].

Solution: Perform least-squares fitting of each trajectory frame to a reference structure (usually the first frame or an average structure) to remove overall translation and rotation.

Protocol: Structural Alignment with CPPTRAJ

Quantitative Assessment of Structural Drift: After alignment, quantify residual structural changes using Root-Mean-Square Deviation (RMSD):
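To make the fit-then-measure sequence concrete, the sketch below superimposes a frame onto a reference by least squares and then reports the RMSD. It uses Horn's closed-form quaternion method (the optimal rotation is the eigenvector of the largest eigenvalue of a 4×4 matrix built from the cross-covariance of the centered coordinates), solved with a small Jacobi eigenvalue routine so it runs on the standard library alone. This is one of several equivalent least-squares superposition algorithms, not the code used inside CPPTRAJ or MDAnalysis, and atom selection (e.g. backbone only) is assumed to have been done beforehand.

```python
import math


def centroid(coords):
    """Geometric center of a list of (x, y, z) points."""
    n = len(coords)
    return [sum(p[k] for p in coords) / n for k in range(3)]


def rmsd(a, b):
    """RMSD = sqrt((1/N) * sum_i |a_i - b_i|^2), without any fitting."""
    sq = sum((x - y) ** 2 for p, q in zip(a, b) for x, y in zip(p, q))
    return math.sqrt(sq / len(a))


def _jacobi_eigen(a, max_sweeps=50):
    """Eigenvalues/eigenvectors of a small symmetric matrix (cyclic Jacobi).
    Column j of the returned V is the eigenvector of eigenvalue j."""
    n = len(a)
    a = [row[:] for row in a]
    v = [[float(i == j) for j in range(n)] for i in range(n)]
    for _ in range(max_sweeps):
        if sum(a[i][j] ** 2 for i in range(n) for j in range(n) if i != j) < 1e-24:
            break
        for p in range(n - 1):
            for q in range(p + 1, n):
                if a[p][q] == 0.0:
                    continue
                # Rotation angle that zeroes the off-diagonal element a[p][q]
                theta = (a[q][q] - a[p][p]) / (2.0 * a[p][q])
                t = math.copysign(1.0, theta) / (abs(theta) + math.sqrt(theta * theta + 1.0))
                c = 1.0 / math.sqrt(t * t + 1.0)
                s = t * c
                for k in range(n):  # A <- A J (columns p and q)
                    akp, akq = a[k][p], a[k][q]
                    a[k][p], a[k][q] = c * akp - s * akq, s * akp + c * akq
                for k in range(n):  # A <- J^T A (rows p and q)
                    apk, aqk = a[p][k], a[q][k]
                    a[p][k], a[q][k] = c * apk - s * aqk, s * apk + c * aqk
                for k in range(n):  # V <- V J accumulates the eigenvectors
                    vkp, vkq = v[k][p], v[k][q]
                    v[k][p], v[k][q] = c * vkp - s * vkq, s * vkp + c * vkq
    return [a[i][i] for i in range(n)], v


def fit_to_reference(mobile, reference):
    """Least-squares superposition of `mobile` onto `reference` (same atoms,
    same order). Returns (fitted coordinates, RMSD after fitting)."""
    cm, cr = centroid(mobile), centroid(reference)
    P = [[p[k] - cm[k] for k in range(3)] for p in mobile]     # centered mobile
    Q = [[q[k] - cr[k] for k in range(3)] for q in reference]  # centered reference
    # Cross-covariance S[a][b] = sum_i P_ia * Q_ib
    S = [[sum(p[a] * q[b] for p, q in zip(P, Q)) for b in range(3)] for a in range(3)]
    (sxx, sxy, sxz), (syx, syy, syz), (szx, szy, szz) = S
    N = [
        [sxx + syy + szz, syz - szy, szx - sxz, sxy - syx],
        [syz - szy, sxx - syy - szz, sxy + syx, szx + sxz],
        [szx - sxz, sxy + syx, -sxx + syy - szz, syz + szy],
        [sxy - syx, szx + sxz, syz + szy, -sxx - syy + szz],
    ]
    # Optimal rotation = unit quaternion (w, x, y, z) of N's largest eigenvalue
    vals, vecs = _jacobi_eigen(N)
    j = max(range(4), key=lambda i: vals[i])
    w, x, y, z = (vecs[i][j] for i in range(4))
    R = [
        [w*w + x*x - y*y - z*z, 2 * (x*y - w*z), 2 * (x*z + w*y)],
        [2 * (y*x + w*z), w*w - x*x + y*y - z*z, 2 * (y*z - w*x)],
        [2 * (z*x - w*y), 2 * (z*y + w*x), w*w - x*x - y*y + z*z],
    ]
    fitted = [tuple(sum(R[a][b] * p[b] for b in range(3)) + cr[a] for a in range(3)) for p in P]
    return fitted, rmsd(fitted, reference)


if __name__ == "__main__":
    ref = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0), (1.5, 1.2, 0.0), (0.3, 1.1, 0.9)]
    c, s = math.cos(0.5), math.sin(0.5)
    # Same shape, different pose: rotate about z and translate (hypothetical frame)
    frame = [(c * x - s * y + 4.0, s * x + c * y - 2.0, z + 1.0) for x, y, z in ref]
    print(f"RMSD before fit: {rmsd(frame, ref):.3f} Å")
    print(f"RMSD after fit:  {fit_to_reference(frame, ref)[1]:.2e} Å")
```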

FAQ 3: How can I efficiently analyze conformational heterogeneity from my simulation ensemble?

Problem: You need to identify and characterize the distinct conformational states sampled during your simulation and understand transitions between them.

Root Cause: Proteins exist as dynamic ensembles of interconverting structures rather than single static conformations. Analyzing this heterogeneity requires specialized approaches beyond simple averaging [14] [15] [16].

Solution: Employ dimensionality reduction and clustering techniques to identify metastable states and quantify transitions between them.

Protocol: Conformational State Analysis with EnsembleFlex

EnsembleFlex provides a comprehensive suite for analyzing conformational heterogeneity:

Advanced Protocol: Markov State Model Analysis

For more sophisticated ensemble analysis, Markov State Models (MSMs) can identify kinetic states and transition rates:
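At its core, an MSM is estimated by counting transitions between discrete states at a chosen lag time and row-normalizing the count matrix; state populations are the stationary distribution of that matrix. The sketch below shows both steps on an already discretized state sequence (the clustering that produces it is assumed). It uses the simple non-reversible count estimator; packages such as PyEMMA or deeptime add reversible estimators, implied-timescale tests, and error analysis. Transition rates would follow from the eigenvalues of the matrix, which this sketch does not compute.

```python
def transition_matrix(dtraj, n_states, lag=1):
    """Row-stochastic T[i][j] = P(state j at t + lag | state i at t)."""
    counts = [[0] * n_states for _ in range(n_states)]
    for a, b in zip(dtraj[:-lag], dtraj[lag:]):  # count transitions at the lag time
        counts[a][b] += 1
    T = []
    for row in counts:
        total = sum(row)
        if total == 0:
            raise ValueError("a state has no outgoing transitions at this lag")
        T.append([c / total for c in row])
    return T


def stationary_distribution(T, tol=1e-12, max_iter=10_000):
    """Stationary populations pi with pi = pi T, by power iteration
    (converges for an ergodic, aperiodic chain)."""
    n = len(T)
    pi = [1.0 / n] * n
    for _ in range(max_iter):
        new = [sum(pi[i] * T[i][j] for i in range(n)) for j in range(n)]
        if max(abs(x - y) for x, y in zip(new, pi)) < tol:
            return new
        pi = new
    return pi


if __name__ == "__main__":
    dtraj = [0, 0, 0, 1, 1, 0, 0, 1, 1, 1]  # hypothetical state sequence
    T = transition_matrix(dtraj, n_states=2, lag=1)
    print("T =", T)
    print("populations =", [round(p, 3) for p in stationary_distribution(T)])
```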

Workflow Diagram

Research Reagent Solutions

Table 1: Essential Software Tools for Managing Structural Drift and Ensemble Variability

| Tool Name | Primary Function | Key Features | Application Context |
|---|---|---|---|
| CPPTRAJ [1] | Trajectory processing & analysis | PBC correction, structural alignment, RMSD calculation, solvent stripping | AMBER ecosystem; comprehensive trajectory preprocessing |
| MDAnalysis [1] | Python trajectory analysis | PBC handling, transformation pipeline, interoperability with Python data science stack | Custom analysis workflows, integration with machine learning libraries |
| EnsembleFlex [15] | Ensemble analysis | Dual-scale flexibility analysis, dimensionality reduction, binding site mapping, conserved water detection | Conformational heterogeneity analysis, drug design applications |
| MDTraj [11] | Molecular dynamics trajectory analysis | Fast RMSD calculations, secondary structure assignment, order parameter extraction | Lightweight, efficient analysis with Python integration |
| SAMSON [17] | Structure validation & preparation | Alternate location resolution, steric clash detection, rotamer editing | Pre-simulation structure validation and cleanup |

Key Quantitative Metrics for Ensemble Variability

Table 2: Essential Metrics for Quantifying Structural Drift and Ensemble Variability

| Metric | Calculation Method | Interpretation | Threshold Guidelines |
|---|---|---|---|
| RMSD (Root-Mean-Square Deviation) | $$\mathrm{RMSD} = \sqrt{\frac{1}{N}\sum_{i=1}^{N} \delta_i^2}$$ where δ_i is the displacement of atom i from the reference after fitting | Measures global structural change from reference | <2 Å: minimal drift; 2-4 Å: expected flexibility; >4 Å: possible unfolding |
| RMSF (Root-Mean-Square Fluctuation) | $$\mathrm{RMSF}_i = \sqrt{\langle (r_i - \langle r_i \rangle)^2 \rangle}$$ with averages taken over frames | Quantifies per-residue flexibility | High values indicate flexible loops/termini; low values indicate stable core |
| Principal Component (PC) Variance | Eigenvalue decomposition of covariance matrix | Identifies dominant collective motions | First 3-5 PCs typically capture >70% of significant motions |
| State Populations | Cluster analysis or MSM eigenanalysis | Proportion of simulation in each conformational state | Determines thermodynamic stability of states |
| Transition Rates | Markov State Model analysis | Kinetics of interconversion between states | Slow transitions (μs-ms timescales) indicate high energy barriers |
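The RMSF column can be computed directly from an aligned trajectory: average each atom's position over frames, then take the root-mean-square deviation from that average. A minimal sketch, assuming frames are lists of (x, y, z) tuples with a consistent atom order and that alignment has already removed overall rotation and translation (per-residue values are commonly obtained from one atom, such as Cα, per residue):

```python
import math


def rmsf(frames):
    """Per-atom RMSF: sqrt(< |r_i - <r_i>|^2 >) over frames, in coordinate units."""
    n_frames, n_atoms = len(frames), len(frames[0])
    # Time-averaged position of each atom
    mean = [
        [sum(f[i][k] for f in frames) / n_frames for k in range(3)]
        for i in range(n_atoms)
    ]
    return [
        math.sqrt(sum(
            sum((f[i][k] - mean[i][k]) ** 2 for k in range(3)) for f in frames
        ) / n_frames)
        for i in range(n_atoms)
    ]


if __name__ == "__main__":
    # Atom 0 is rigid; atom 1 oscillates between x = 1 and x = 3 (hypothetical)
    frames = [[(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)], [(0.0, 0.0, 0.0), (3.0, 0.0, 0.0)]]
    print(rmsf(frames))  # -> [0.0, 1.0]
```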

- Always preserve original trajectories, because processing steps are irreversible [1]

- Validate structures before simulation to prevent failures from structural issues [17]

- Remove solvent before analysis when focusing on protein dynamics to reduce file size and speed up computation [1]

- Use multiple metrics to characterize ensemble variability from complementary perspectives [15]

- Combine experimental and computational data when possible for more accurate ensemble generation [14]

FAQs on Data Handling and Analysis

Q1: What are the primary challenges when working with multi-terabyte Molecular Dynamics (MD) trajectory data? The key challenges involve the sheer volume and complexity of the data. Modern high-performance computing allows simulations of biologically large systems, generating trajectories with millions to billions of atoms over long timescales. This results in hundreds to thousands of individual trajectories, creating massive datasets that are difficult to store, process, and visualize. The complexity of these systems makes it challenging to scrutinize the trajectories and extract biologically significant information about structures and dynamics [12].

Q2: What are the best practices for ensuring robustness and reproducibility in my simulations? Follow hypothesis-driven approaches and use reliable tools. Critical practices include maintaining detailed records of simulation parameters, using validated force fields, and curating data carefully. Depositing data in reliable, accessible databases further promotes reproducibility [18].

Q3: How can I effectively visualize such large trajectory datasets? Effective visualization is a major challenge. Traditional frame-by-frame visualization is often insufficient. Consider these approaches:

- Advanced Visualization Tools: Utilize modern software that can leverage GPU acceleration for handling large datasets [12].

- Web-Based Tools: Use web-based visualization tools for easier access, collaboration, and sharing of simulations [12].

- Interactive Widgets: Employ notebook-like environments with interactive molecular visualization widgets to facilitate analysis [12].

- Novel Techniques: Explore new visualization techniques being developed specifically to handle the scale and complexity of modern MD data, including immersive virtual reality environments for a more intuitive exploration [12].

Q4: Can machine learning help in analyzing these large datasets? Yes, machine learning, particularly deep learning, is a powerful tool for analyzing high-dimensional MD data. It can be used to embed complex simulation data into a lower-dimensional latent space that retains essential molecular characteristics. This simplifies the analysis and allows for the development of surrogate models that can predict constitutive behavior and failure, significantly reducing the need for repetitive, costly simulations [19] [12].

Troubleshooting Common Experimental Issues

Issue 1: Inability to process all trajectories due to memory limitations.

- Problem: Loading multiple multi-gigabyte trajectory files for analysis crashes the software or exhausts server RAM.

- Solution:

- Implement Data Chunking: Use analysis tools that can read and process the trajectory in segments rather than loading it entirely into memory.

- Leverage Stochastic Sampling: If studying a stochastic process, analyze multiple smaller, randomized subsets of frames to estimate the overall system behavior. Provided the subsets are large enough and the sampled frames are not strongly correlated, this gives a statistically sound estimate without processing every frame.

- Use Optimized Software: Employ specialized trajectory analysis packages designed for high-throughput data handling.
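Chunking works for any quantity that can be accumulated frame by frame. The sketch below assumes a hypothetical per-frame iterator (any generator that yields one value per frame, such as a per-frame RMSD) and computes the mean and standard deviation in a single pass with Welford's algorithm, so memory use does not grow with trajectory length. `read_in_chunks` is a stand-in for whatever chunked reader your analysis package provides.

```python
import math
from itertools import islice


def read_in_chunks(frame_iter, chunk_size):
    """Yield lists of at most chunk_size items from any iterator."""
    it = iter(frame_iter)
    while True:
        chunk = list(islice(it, chunk_size))
        if not chunk:
            return
        yield chunk


def streaming_mean_std(frame_iter, chunk_size=1000):
    """Mean and sample standard deviation of per-frame values in one pass."""
    n, mean, m2 = 0, 0.0, 0.0
    for chunk in read_in_chunks(frame_iter, chunk_size):
        for x in chunk:  # Welford update: numerically stable running moments
            n += 1
            delta = x - mean
            mean += delta / n
            m2 += delta * (x - mean)
    if n < 2:
        raise ValueError("need at least two frames")
    return mean, math.sqrt(m2 / (n - 1))


if __name__ == "__main__":
    values = (float(i % 7) for i in range(100_000))  # stands in for per-frame values
    print(streaming_mean_std(values, chunk_size=10_000))
```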

Issue 2: Difficulty in quantifying dispersion and identifying dominant motion paths in a cluster of trajectories.

- Problem: Visual inspection of hundreds of overlapping trajectories is overwhelming, making it impossible to quantify the common path and variance.

- Solution: Apply probabilistic trajectory modeling tools. These tools model the ensemble of trajectories as a Gaussian process, which provides a statistical summary. The output includes a mean path (the average trajectory) and a confidence region (a volume showing where a certain percentage of trajectories travel), thereby quantifying dispersion and identifying dominant motion patterns [20].

Issue 3: Long simulation times are a bottleneck for exploring different conditions.

- Problem: Running full MD simulations for every parameter variation or initial condition is computationally prohibitive.

- Solution: Develop a machine learning-based surrogate model (metamodel). Train a model, such as a Gated Recurrent Unit (GRU) neural network, on existing MD simulation data. Once trained, this model can almost instantaneously predict the constitutive response and failure of the material under unseen loading patterns, bypassing the need for new, full-scale simulations [19].

Issue 4: Choosing an inappropriate data visualization for communicating results.

- Problem: The selected chart type leads to misinterpretation of the hierarchical or comparative data.

- Solution: Match the visualization to the data type and communication goal.

- For Hierarchical Data: Treemaps can show part-to-whole relationships for large, hierarchical data, but they are hard to use for precise comparisons. Use them with caution, ensuring high-level categories have visually distinct borders [21].

- For Comparisons: Sorted bar charts are often preferable for comparing the values of different items, as the human brain can pre-attentively process length comparisons more accurately than area [21].

- For Relationships: Scatter plots are excellent for showing the relationship between two numeric variables [22].

Data Presentation: Comparison of Storage Solutions

The table below summarizes key quantitative aspects of different storage formats and strategies for managing large trajectory data.

Table 1: Comparison of Trajectory Data Storage and Analysis Formats

| Format / Strategy | Typical Size Reduction | Read Speed | Write Speed | Best Use Case |
|---|---|---|---|---|
| Uncompressed Trajectory | Baseline | Very Fast | Very Fast | Short-term, active analysis |
| Lossless Compression | ~50-80% | Fast | Fast | Long-term storage, full-fidelity analysis |
| Lossy Compression | ~90-99% | Fast | Medium | Remote visualization, initial exploratory analysis |
| Feature-Reduced Data (e.g., order parameters) | ~99%+ | Very Fast | Slow | Machine learning training, specific property analysis |
| Surrogate ML Model | >99.9% (after training) | Near-instant (inference) | Very Slow (training) | Rapid prediction and scenario testing [19] |

Experimental Protocol: Creating an ML-Based Surrogate Model

This protocol outlines the methodology for developing a machine learning surrogate to replace full MD simulations, based on the approach cited in the research [19].

Objective: To create a trained neural network model that can predict the rate-dependent and path-dependent constitutive and damage behavior of a composite material, bypassing the need for full MD simulations.

Materials:

- Hardware: High-Performance Computing (HPC) cluster with GPUs.

- Software: MD simulation package (e.g., GROMACS, LAMMPS), Python with deep learning libraries (TensorFlow/PyTorch), and data processing libraries (NumPy, Pandas).

Methodology:

Data Generation via MD:

- Model Constituents: Run separate MD simulations for each constituent material (e.g., epoxy matrix, glass fiber) and their interface.

- Systematic Loading: Apply a diverse set of strain paths and loading rates to each model to cover a wide spectrum of mechanical responses.

- Data Extraction: For each simulation, extract the stress tensor and a damage indicator (e.g., void fraction, number of broken bonds) at each time step. The time step information must be embedded with the strain path data.

Data Curation and Preprocessing:

- Aggregation: Compile the stress, damage, and corresponding strain-time data from all simulations into a unified dataset.

- Normalization: Normalize all input (strain, time) and output (stress, damage) variables to a common scale (e.g., 0 to 1) to ensure stable and efficient model training.

- Partitioning: Split the dataset into training (∼70%), validation (∼15%), and testing (∼15%) subsets.
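The normalization and partitioning steps can be sketched in plain Python. This sketch assumes each sample is one complete simulation (strain path), so a split over sample indices keeps all time steps of a run in one subset. It fits the min-max bounds on the training subset only, a common precaution against leaking test-set information that goes slightly beyond the steps listed above. All function names are hypothetical.

```python
import random


def split_indices(n_samples, train=0.70, val=0.15, seed=0):
    """Shuffle sample indices and split them ~70/15/15 into train/val/test."""
    idx = list(range(n_samples))
    random.Random(seed).shuffle(idx)  # fixed seed for a reproducible split
    n_train = round(train * n_samples)
    n_val = round(val * n_samples)
    return idx[:n_train], idx[n_train:n_train + n_val], idx[n_train + n_val:]


def fit_minmax(values):
    """Return (lo, hi) scaling bounds; call this on training data only."""
    return min(values), max(values)


def minmax_scale(values, lo, hi):
    """Scale to [0, 1] with bounds from fit_minmax; values outside the
    training range map outside [0, 1]."""
    span = hi - lo
    if span == 0:
        return [0.0 for _ in values]
    return [(v - lo) / span for v in values]


if __name__ == "__main__":
    train_idx, val_idx, test_idx = split_indices(20)
    print(len(train_idx), len(val_idx), len(test_idx))
    lo, hi = fit_minmax([0.0, 0.02, 0.05])         # hypothetical training strains
    print(minmax_scale([0.01, 0.05, 0.06], lo, hi))
```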

Model Training and Validation:

- Architecture: Construct a multi-task Gated Recurrent Unit (GRU)-based neural network. The GRU layers are designed to capture path-dependent and rate-dependent temporal evolution.

- Multi-Task Output: Configure the network with two output heads: one for predicting the stress tensor and another for predicting the damage indicator.

- Training Loop: Train the model on the training set, using the validation set for hyperparameter tuning and to prevent overfitting. The loss function should combine the mean squared error for stress and the cross-entropy loss for damage.

- Testing: Evaluate the final model's performance on the unseen test set by comparing its predictions against the ground-truth MD simulation results.

The Scientist's Toolkit: Essential Research Reagents & Software

Table 2: Key Software and Analytical Tools for MD Trajectory Analysis

| Tool Name | Function | Application Note |
|---|---|---|
| GROMACS/LAMMPS | High-performance MD simulation engines | Used for generating the initial multi-terabyte trajectory data. |
| Teetool | Python package for probabilistic trajectory analysis | Models trajectories as a Gaussian process to visualize confidence regions and quantify dispersion [20]. |
| GRU-based Neural Network | Deep learning model for sequential data | Serves as the core architecture for the surrogate model, capturing rate- and path-dependent material behavior [19]. |
| Web-Based Visualization Tools (e.g., Mol* Viewer) | Accessible platforms for viewing and sharing trajectories | Facilitates collaboration and provides an intuitive interface for inspecting structural dynamics without local installation of heavy software [12]. |
| Jupyter Notebooks | Interactive computing environment | Ideal for integrating analysis scripts (e.g., with Teetool), data visualization widgets, and documentation into a single, reproducible workflow [20]. |

Workflow Visualization

The following diagram illustrates the integrated strategy for handling multi-terabyte trajectory datasets, from data generation to insight delivery.

Frequently Asked Questions (FAQs)

1. What do sudden jumps in my RMSD plot indicate, and how can I resolve them? Sudden jumps in RMSD often result from periodic boundary condition (PBC) artifacts, not true structural changes. Your protein may be moving across the simulation box, causing coordinates to "wrap" and misalign when visualized or analyzed [1].

- Solution: Process your trajectory to remove PBC artifacts before analysis. Use tools like CPPTRAJ or MDAnalysis to center your protein in the box and "unwrap" the coordinates so the molecule remains intact across periodic images [1].
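To illustrate what "unwrapping" does, here is a minimal, dependency-free sketch of the frame-to-frame (nojump-style) approach, similar in spirit to gmx trjconv -pbc nojump. It assumes an orthorhombic box of constant size and that no atom moves more than half a box length between saved frames. Any larger displacement is treated as a boundary crossing and undone. It does not make molecules whole in the first frame; in practice, CPPTRAJ's autoimage/unwrap or MDAnalysis's transformations handle that.

```python
def unwrap_trajectory(frames, box):
    """Remove periodic-image jumps from wrapped coordinates.

    frames: list of frames, each a list of (x, y, z) tuples in the same atom order.
    box: (Lx, Ly, Lz) edge lengths of a constant orthorhombic box.
    """
    unwrapped = [[tuple(atom) for atom in frames[0]]]
    for prev_w, cur_w in zip(frames, frames[1:]):
        new_frame = []
        for p_w, c_w, p_u in zip(prev_w, cur_w, unwrapped[-1]):
            atom = []
            for d in range(3):
                step = c_w[d] - p_w[d]
                step -= box[d] * round(step / box[d])   # minimum-image displacement
                atom.append(p_u[d] + step)              # accumulate on unwrapped position
            new_frame.append(tuple(atom))
        unwrapped.append(new_frame)
    return unwrapped

# One atom crossing the x boundary of a 10 Å box
traj = [[(9.8, 5.0, 5.0)], [(9.9, 5.0, 5.0)], [(0.1, 5.0, 5.0)], [(0.3, 5.0, 5.0)]]
xs = [frame[0][0] for frame in unwrap_trajectory(traj, (10.0, 10.0, 10.0))]
```

The wrapped jump from 9.9 to 0.1 becomes a continuous step from 9.9 to 10.1. This removes the artificial spike that would otherwise appear in an RMSD plot.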

2. My Radius of Gyration (Rg) suggests protein unfolding, but the structure looks native. What should I check? A rising Rg can indicate expansion or unfolding [23]. First, visually inspect multiple trajectory frames to confirm global structural changes versus local fluctuations. Then, cross-validate with other metrics:

- Check SASA: An increasing SASA corroborates unfolding as the protein interior becomes solvent-exposed [24].

- Check RMSD: Rising RMSD suggests a global conformational shift from the native state [25].

- Check Intra-protein H-bonds: A significant decrease in intramolecular hydrogen bonds supports loss of compact structure [24].
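When cross-checking Rg, it helps to know exactly what is computed. Below is a minimal sketch of the mass-weighted radius of gyration for one frame, \(R_g = \sqrt{\sum_i m_i |\mathbf{r}_i - \mathbf{r}_{\mathrm{COM}}|^2 / \sum_i m_i}\). It assumes the protein has already been made whole, because a molecule split across the box gives an inflated Rg. Rg does not depend on orientation, so alignment is not needed for this metric. The relative_change helper is an illustrative addition.

```python
import math

def radius_of_gyration(coords, masses=None):
    """Mass-weighted radius of gyration of one frame (same length unit as coords)."""
    if masses is None:
        masses = [1.0] * len(coords)
    total = sum(masses)
    com = [sum(m * c[d] for m, c in zip(masses, coords)) / total for d in range(3)]
    sq = sum(m * sum((c[d] - com[d]) ** 2 for d in range(3))
             for m, c in zip(masses, coords))
    return math.sqrt(sq / total)

def relative_change(series):
    """Percent change of each value with respect to the first frame."""
    return [100.0 * (v - series[0]) / series[0] for v in series]
```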

3. How do I interpret conflicting stability signals from SASA and Rg? Rg and SASA typically correlate: Rg measures overall compactness, SASA measures solvent exposure, and both rise when a protein expands. Conflict may arise from localized changes:

- Scenario: Rg is stable, but SASA increases.

- Interpretation: The protein's overall dimensions remain stable, but surface residues may be undergoing rearrangement, creating new pockets or crevices without major expansion.

- Action: Perform per-residue SASA analysis or inspect structural snapshots to identify localized surface changes.

4. Why is my protein-ligand interaction energy favorable, but the ligand dissociates? Favorable average interaction energy can be misleading if the ligand samples both bound and unbound states during simulation.

- Diagnosis: Plot the ligand's center of mass (COM) distance from the binding pocket over time. An increasing distance confirms dissociation [26].

- Root Cause: The simulation may have captured a genuine dissociation event, or the initial pose was unstable. Check if the ligand RMSD relative to the protein is stable before dissociation [26].
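The diagnostic above can be sketched in plain Python. The atom indices, masses, cutoff, and persistence length in the example are hypothetical. The coordinates must already be PBC-corrected; otherwise, a wrapped ligand produces a spurious jump in distance.

```python
import math

def center_of_mass(coords, masses):
    total = sum(masses)
    return tuple(sum(m * c[d] for m, c in zip(masses, coords)) / total for d in range(3))

def com_distance_series(frames, ligand_idx, pocket_idx, masses):
    """Distance between the ligand COM and the binding-pocket COM for every frame."""
    series = []
    for coords in frames:
        lig = center_of_mass([coords[i] for i in ligand_idx], [masses[i] for i in ligand_idx])
        poc = center_of_mass([coords[i] for i in pocket_idx], [masses[i] for i in pocket_idx])
        series.append(math.dist(lig, poc))
    return series

def first_unbinding_frame(series, cutoff, min_frames=1):
    """First frame from which the distance stays above cutoff for min_frames frames, else None."""
    run = 0
    for i, d in enumerate(series):
        run = run + 1 if d > cutoff else 0
        if run >= min_frames:
            return i - min_frames + 1
    return None

# Pocket atoms 0 and 1, ligand atom 2 drifting away along z
frames = [[(0.0, 0.0, 0.0), (2.0, 0.0, 0.0), (1.0, 0.0, z)] for z in (1.0, 1.0, 6.0, 7.0, 8.0)]
series = com_distance_series(frames, ligand_idx=[2], pocket_idx=[0, 1], masses=[1.0, 1.0, 1.0])
```

Requiring several consecutive frames above the cutoff (min_frames) avoids flagging brief excursions as dissociation.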

5. How can I ensure my RMSD analysis is meaningful? A drifting RMSD can indicate structural drift, but it must be analyzed relative to a correct reference and with proper alignment.

- Proper Workflow:

- Remove PBC effects to ensure the protein is whole and coordinates are continuous [1].

- Align all frames to a reference structure (e.g., the initial protein backbone or a stable domain) to remove overall rotation and translation [1].

- Calculate RMSD for the protein backbone or specific domains of interest [26].
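The align-then-measure step can be made concrete with the Kabsch algorithm. After both structures are centered, it finds the rotation that minimizes the RMSD. The NumPy sketch below assumes both coordinate sets contain the same atoms (e.g., backbone) in the same order. MDAnalysis's align and rms modules perform an equivalent optimal superposition internally.

```python
import numpy as np

def kabsch_rmsd(mobile, reference):
    """RMSD after removing translation and the optimal rotation (Kabsch).

    mobile, reference: (N, 3) arrays of the same atoms in the same order.
    """
    P = np.asarray(mobile, float)
    Q = np.asarray(reference, float)
    P = P - P.mean(axis=0)                      # remove translation
    Q = Q - Q.mean(axis=0)
    H = P.T @ Q                                 # 3x3 covariance matrix
    U, S, Vt = np.linalg.svd(H)
    d = np.sign(np.linalg.det(Vt.T @ U.T))      # guard against improper rotations
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T     # optimal rotation
    P_fit = P @ R.T
    return float(np.sqrt(((P_fit - Q) ** 2).sum(axis=1).mean()))

def rmsd_series(frames, reference):
    """RMSD of every frame against one reference structure."""
    return [kabsch_rmsd(f, reference) for f in frames]
```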

Metric Interpretation and Troubleshooting Guide

The table below summarizes the fundamental metrics, their structural significance, and common issues encountered during analysis.

| Metric | Primary Structural Insight | Typical Output for a Stable, Folded Protein | Common Calculation Pitfalls & Fixes |
|---|---|---|---|
| Root Mean Square Deviation (RMSD) [25] [26] | Measures the average change in atom positions relative to a reference structure over time; quantifies global conformational stability. | Reaches a stable plateau after an initial equilibration period. | Pitfall: Noisy or continuously rising RMSD. Fix: Ensure trajectories are centered and aligned to a reference (e.g., backbone atoms) after fixing PBC issues [1]. |
| Radius of Gyration (Rg) [23] [26] | Measures the overall compactness and density of the protein structure. | Maintains a stable, low value, indicating a tight, folded core. | Pitfall: Misleading Rg values due to PBC artifacts (a protein split across the box boundary). Fix: Compute Rg on a trajectory in which the protein is whole; Rg does not change with rotation, so tumbling alone does not affect it. A rising Rg should be correlated with SASA and visual inspection [23] [1]. |
| Solvent Accessible Surface Area (SASA) [24] [26] | Quantifies the surface area of the protein accessible to a solvent molecule; indicates packing efficiency and exposure of hydrophobic cores. | Remains relatively constant, with minor fluctuations. | Pitfall: SASA increases without a corresponding Rg increase. Fix: Analyze for local surface changes or pocket opening. Ensure the calculation uses a consistent solvent probe radius (typically 1.4 Å). |
| Interaction Energy [26] | Calculates the non-covalent interaction energy (electrostatic + van der Waals) between molecules, such as a protein and ligand. | Remains stably favorable (negative) for a specific, bound complex. | Pitfall: Favorable energy despite ligand dissociation. Fix: Always correlate with ligand RMSD and COM distance to confirm the bound pose is maintained throughout the simulation [26]. |

Essential Experimental Protocols

Protocol 1: Standard Workflow for MD Trajectory Analysis

This protocol outlines the critical steps for preparing a raw molecular dynamics trajectory for robust analysis of RMSD, Rg, SASA, and other metrics [1] [26].

Procedure:

- Fix Periodic Boundary Conditions (PBC): Use a tool like CPPTRAJ or MDAnalysis to center the protein in the simulation box and unwrap coordinates. This ensures the protein appears as a single, continuous molecule.

- CPPTRAJ Command: [1]

- Strip Solvent: Remove water and ions to drastically reduce file size and speed up subsequent analysis.

- CPPTRAJ Command: [1]

- Align Trajectory: Perform a least-squares fit of all trajectory frames to a reference structure (e.g., the initial protein backbone) to remove global rotation and translation. This is essential for meaningful RMSD calculations.

- MDAnalysis Command: [1]

- Calculate Metrics: Perform the desired analyses on the cleaned trajectory.

Protocol 2: Assessing Protein Stability via RMSD, Rg, and SASA

This protocol describes how to jointly interpret key metrics to evaluate the stability of a protein or protein-ligand complex during a simulation [23] [25] [24].

Procedure:

- Run Simulation: Perform an all-atom molecular dynamics simulation for the desired timescale (e.g., 100 ns - 1 µs).

- Process Trajectory: Follow Protocol 1 to generate a clean, aligned trajectory.

- Calculate Time-Series Data:

- RMSD: Calculate the backbone RMSD relative to the energy-minimized starting structure for every frame.

- Rg: Compute the radius of gyration for the protein's Cα atoms for every frame.

- SASA: Calculate the total solvent-accessible surface area for the protein for every frame.

- Correlate and Interpret:

- A stable, folded protein will show RMSD, Rg, and SASA values that converge to a plateau after initial equilibration, with fluctuations around a stable average [25].

- A destabilizing or unfolding event is characterized by a coordinated increase in RMSD, Rg, and SASA, indicating a loss of native structure and expansion of the protein [23] [24].

- For protein-ligand complexes, calculate the same metrics for the protein alone and monitor ligand-specific metrics like ligand RMSD to assess binding stability [26].
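For the SASA step, the classic algorithm is the Shrake-Rupley test-point method. It places points on each atom's sphere of radius (van der Waals radius + probe radius) and counts the fraction of points not buried inside any neighbor's expanded sphere. The sketch below is a slow, pure-Python illustration. The per-atom radii are inputs you must supply (e.g., from a van der Waals radius table), and production tools such as gmx sasa are far faster. The probe defaults to the common 1.4 Å water radius.

```python
import math

def sphere_points(n):
    """Roughly uniform points on a unit sphere (golden-spiral construction)."""
    golden = math.pi * (3.0 - math.sqrt(5.0))
    pts = []
    for i in range(n):
        z = 1.0 - 2.0 * (i + 0.5) / n           # evenly spaced z values
        r = math.sqrt(1.0 - z * z)
        pts.append((r * math.cos(golden * i), r * math.sin(golden * i), z))
    return pts

def shrake_rupley_sasa(coords, radii, probe=1.4, n_points=960):
    """Per-atom solvent-accessible surface area (Å^2) by the test-point method."""
    unit = sphere_points(n_points)
    expanded = [r + probe for r in radii]       # solvent-accessible sphere radii
    areas = []
    for i, (ci, Ri) in enumerate(zip(coords, expanded)):
        # Only atoms whose expanded spheres intersect atom i can bury its points
        neighbors = [j for j in range(len(coords))
                     if j != i and math.dist(ci, coords[j]) < Ri + expanded[j]]
        exposed = 0
        for u in unit:
            p = (ci[0] + Ri * u[0], ci[1] + Ri * u[1], ci[2] + Ri * u[2])
            if all(math.dist(p, coords[j]) >= expanded[j] for j in neighbors):
                exposed += 1
        areas.append(4.0 * math.pi * Ri * Ri * exposed / n_points)
    return areas
```

Summing the per-atom areas gives the total SASA for a frame; keeping them separate supports the per-residue analysis suggested in FAQ 3.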

Protocol 3: Calculating Protein-Ligand Interaction Energies

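This protocol outlines the steps for determining non-covalent interaction energies, a useful indicator of binding strength in complexes [26]. Note that an interaction energy is not a binding free energy, because it omits desolvation and entropic contributions.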

Procedure:

- Prepare the System: Ensure your topology and trajectory files include both the protein and the bound ligand.

- Define Interaction Groups: Specify the atom groups for the protein and the ligand.

- Calculate Energy Components: Use molecular mechanics to calculate the van der Waals and electrostatic interaction energies between the two groups for each trajectory frame. Many MD packages (e.g., GROMACS via gmx energy, SILCSBIO CGenFF Suite [26]) have built-in utilities for this.

- Analyze Time-Series: The total interaction energy is the sum of the two components. A stable, favorably bound complex will exhibit a consistently negative (favorable) total interaction energy throughout the simulation.

- Cross-Reference with Structural Data: Always correlate stable, favorable interaction energies with a stable ligand RMSD and a consistent protein-ligand binding pose to confirm the integrity of the complex.
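To make the energy decomposition concrete, here is a plain-Python sketch of the per-frame sum over protein-ligand atom pairs: Coulomb plus a 12-6 Lennard-Jones term with Lorentz-Berthelot combining rules. It simplifies under stated assumptions: no periodic images, an optional plain cutoff, and no PME or reaction-field treatment. Its numbers will therefore not exactly match an MD engine's own energy-group output. Charges, σ, and ε are inputs you would take from your force field.

```python
import math

# Coulomb constant in kcal·Å/(mol·e^2); MD engines use slightly different values
COULOMB_KCAL = 332.0637

def interaction_energy(coords_a, coords_b, params_a, params_b, cutoff=None):
    """Electrostatic and LJ interaction energy (kcal/mol) between two atom groups.

    params: one (charge_e, sigma_A, epsilon_kcal) tuple per atom.
    """
    e_elec, e_vdw = 0.0, 0.0
    for ca, (qa, sa, ea) in zip(coords_a, params_a):
        for cb, (qb, sb, eb) in zip(coords_b, params_b):
            r = math.dist(ca, cb)
            if cutoff is not None and r > cutoff:
                continue
            e_elec += COULOMB_KCAL * qa * qb / r
            sigma = 0.5 * (sa + sb)             # Lorentz rule
            eps = math.sqrt(ea * eb)            # Berthelot rule
            sr6 = (sigma / r) ** 6
            e_vdw += 4.0 * eps * (sr6 * sr6 - sr6)
    return e_elec, e_vdw
```

Calling this for every frame yields the time series described in the Analyze Time-Series step.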

The Scientist's Toolkit: Research Reagent Solutions

The table below lists essential software tools and their functions for molecular dynamics trajectory analysis.

| Tool Name | Primary Function | Key Utility in Trajectory Analysis |
|---|---|---|
| GROMACS [27] | A versatile package for performing molecular dynamics simulations. | The primary engine for running simulations. Its suite of tools (gmx rms, gmx gyrate, gmx sasa, gmx energy) is used for calculating RMSD, Rg, SASA, and interaction energies, respectively. |
| CHAPERONg [27] | An automation tool for GROMACS-based simulation pipelines. | Automates the entire workflow of system setup, simulation run, and post-processing analysis, including the calculation of RMSD, Rg, and SASA, reducing manual intervention and potential for error. |
| CPPTRAJ [1] | The main analysis utility in the AmberTools package. | A powerful and versatile tool for processing and analyzing MD trajectories. Excels at fixing PBC issues, stripping solvent, aligning trajectories, and calculating a vast array of structural and dynamic metrics. |
| MDAnalysis [1] | A Python library for analyzing molecular dynamics trajectories. | Provides a programmable environment for trajectory analysis. Allows users to write custom Python scripts to load, manipulate (e.g., fix PBC, align), and analyze trajectories, offering high flexibility. |
| VMD [23] | A molecular visualization and analysis program. | Primarily used for visualizing trajectories to qualitatively confirm structural states, binding poses, and artifacts. It also contains built-in functions for various analyses. |

Advanced Analysis Techniques for Drug Discovery Applications

Radial Distribution Functions (RDF) for Analyzing Solvation and Binding Sites

Radial Distribution Functions (RDF) are fundamental tools in molecular dynamics (MD) analysis for quantifying how particle density varies as a function of distance from a reference particle. The RDF \(g_{ab}(r)\) between particles of types \(a\) and \(b\) is defined as:

\[ g_{ab}(r) = (N_{a} N_{b})^{-1} \sum_{i=1}^{N_a} \sum_{j=1}^{N_b} \langle \delta(|\mathbf{r}_i - \mathbf{r}_j| - r) \rangle \]

This function is normalized so that it approaches 1 at large separations in a homogeneous system. In effect, it counts the average number of \(b\) neighbours in a shell at distance \(r\) around an \(a\) particle and expresses that count as a density relative to the bulk [28]. In thesis research, RDF analysis provides critical insights into solvation shells, binding site identification, and molecular interaction patterns in drug development studies.
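In practice, the definition above becomes a histogram of pair distances normalized by the ideal-gas expectation \(4\pi r^2 \rho\,\Delta r\). The NumPy sketch below handles the special case of a single atom type (\(a = b\)) in a cubic box with minimum-image distances. It is meant to show the normalization, not to replace MDAnalysis's InterRDF, which also handles two different groups, triclinic boxes, and exclusions. The function name and arguments are illustrative.

```python
import numpy as np

def rdf_cubic(frames, box, nbins=75, r_max=None):
    """Average g(r) over all pairs of one atom type in a cubic box of edge `box`.

    frames: iterable of (N, 3) coordinate arrays with a constant N.
    r_max defaults to half the box length to stay within the minimum image.
    """
    L = float(box)
    r_max = L / 2 if r_max is None else r_max
    edges = np.linspace(0.0, r_max, nbins + 1)
    counts = np.zeros(nbins)
    n_frames = 0
    for X in frames:
        X = np.asarray(X, float)
        n = len(X)
        diff = X[:, None, :] - X[None, :, :]
        diff -= L * np.round(diff / L)                      # minimum-image convention
        dist = np.sqrt((diff ** 2).sum(axis=-1))
        iu = np.triu_indices(n, k=1)                        # unique pairs, no self-pairs
        counts += 2 * np.histogram(dist[iu], bins=edges)[0]  # count i->j and j->i
        n_frames += 1
    rho = n / L ** 3                                        # bulk number density
    shell = 4.0 / 3.0 * np.pi * (edges[1:] ** 3 - edges[:-1] ** 3)
    g = counts / (n_frames * n * rho * shell)               # divide by ideal-gas count
    centers = 0.5 * (edges[1:] + edges[:-1])
    return centers, g
```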

Implementing RDF Analysis: A Practical Guide

How do I calculate a basic Radial Distribution Function?

Solution: Use the InterRDF class in MDAnalysis for average RDFs between two groups of atoms.

Applied to a solute selection and a solvent selection, InterRDF calculates the average RDF between all atoms in the two groups, providing insights into overall solvation structure [28].

What's the difference between InterRDF and InterRDF_s?

Solution: InterRDF calculates average RDFs between groups, while InterRDF_s provides site-specific RDFs for individual atom pairs.

Site-specific RDFs are crucial for understanding detailed interactions in binding pockets and specific solvation sites [28].

Troubleshooting Common RDF Analysis Issues

Why is my RDF plot too noisy or inconsistent?

Problem: Noisy RDF profiles often result from insufficient sampling or incorrect trajectory handling.

Solutions:

- Increase sampling: Ensure your analysis includes enough trajectory frames for statistical significance

- Check system stability: Verify that your simulation has properly equilibrated before analysis

- Adjust bin parameters: Increase the number of bins (nbins) for higher resolution, or decrease it if individual bins hold too few counts

- Validate trajectory loading: Confirm your Universe object properly loads all trajectory frames using print(len(u.trajectory))

How do I calculate coordination numbers from RDF data?

Problem: Researchers often need to extract quantitative coordination numbers from RDF profiles.

Solution: Use the cumulative distribution function available in MDAnalysis.

The position of the first minimum in \(g(r)\) typically defines the first solvation shell radius \(r_1\), and \(N(r_1)\) gives the coordination number [28].
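The cumulative count follows from integrating the RDF, \(N(r) = 4\pi\rho \int_0^r g(s)\, s^2\, ds\), where \(\rho\) is the bulk number density of the neighbor species. Below is a plain-Python sketch using the trapezoidal rule, together with a simple helper that locates the first minimum after the first peak. On noisy data, smooth \(g(r)\) before calling that helper. For site-specific RDFs, MDAnalysis's InterRDF_s also offers a get_cdf() method for cumulative counts.

```python
import math

def running_coordination(r, g, rho):
    """Cumulative coordination number N(r) = 4*pi*rho * integral_0^r g(s) s^2 ds.

    r: increasing bin centers; g: RDF values; rho: neighbor number density.
    Trapezoidal rule, starting from N(r[0]) = 0.
    """
    n_of_r = [0.0]
    for k in range(1, len(r)):
        f0 = g[k - 1] * r[k - 1] ** 2
        f1 = g[k] * r[k] ** 2
        n_of_r.append(n_of_r[-1] + 4.0 * math.pi * rho * 0.5 * (f0 + f1) * (r[k] - r[k - 1]))
    return n_of_r

def first_shell_minimum(g):
    """Index of the first local minimum that follows the first local maximum of g."""
    k = 1
    while k < len(g) - 1 and not (g[k] >= g[k - 1] and g[k] > g[k + 1]):
        k += 1          # climb to the first peak
    while k < len(g) - 1 and not (g[k] <= g[k - 1] and g[k] < g[k + 1]):
        k += 1          # descend to the following trough
    return k
```

Evaluating running_coordination at the index returned by first_shell_minimum gives \(N(r_1)\).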

How do I analyze specific molecular orientations in RDF calculations?

Problem: Standard RDF analysis doesn't account for molecular orientation effects.

Solution: Implement vector-based analysis for oriented systems, as demonstrated in dye-polymer systems.

This approach helps analyze how molecular orientation affects distribution functions in anisotropic systems [29].

Experimental Protocols and Methodologies

Protocol 1: Solvation Shell Analysis for Transition Metal Complexes

Application: Analyzing solvation shells of electrochemically active redox couples in aqueous solution [30].

Methodology:

- System Preparation: Run ab initio molecular dynamics using the PBE functional, with and without Tkatchenko-Scheffler van der Waals corrections

- Trajectory Analysis: Calculate RDFs between metal centers and solvent oxygen atoms

- Key Systems: Analyze Cr²⁺/³⁺, V²⁺/³⁺, Ru(NH₃)₆²⁺/³⁺, Sn²⁺/⁴⁺, Cu¹⁺/²⁺, ferrocene methanol, Fe²⁺/³⁺, and ferrocene

- Data Interpretation: Identify solvation shell boundaries from RDF minima and calculate coordination numbers

Expected Results: Distinct solvation shells with different coordination numbers for various oxidation states, providing insights into solvation structure changes during redox processes.

Protocol 2: Binding Site Analysis for Drug Discovery

Application: Characterizing ligand binding sites and interaction patterns in protein-ligand systems.

Methodology:

- Trajectory Preparation: Run MD simulation of protein-ligand complex in explicit solvent

- Site Identification: Select key binding site residues and ligand atoms

- Site-Specific RDF: Use InterRDF_s to calculate pairwise RDFs between binding site atoms and ligand atoms

- Residence Time Analysis: Calculate survival probabilities for solvent molecules in the binding site

- Competitive Binding Assessment: Analyze RDFs between binding site and water/co-solvent molecules

Interpretation: Sharp, high peaks in site-specific RDFs indicate strong, specific interactions, while broad peaks suggest transient or weak interactions.
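For the residence-time step, the continuous survival probability \(SP(\tau)\) is the fraction of molecules present in the site at time \(t\) that remain there without interruption until \(t+\tau\), averaged over time origins. The plain-Python sketch below assumes you have already determined, frame by frame, which molecule IDs are inside the site. How to define "inside" (e.g., a distance cutoff to binding-site atoms) is a choice you must make. A residence time can then be estimated by integrating or exponentially fitting \(SP(\tau)\).

```python
def survival_probability(occupancy, max_tau):
    """Continuous survival probability SP(tau) for tau = 0..max_tau (in frames).

    occupancy: list of sets; occupancy[t] holds IDs of molecules in the site at frame t.
    Averages over all time origins t at which the site is non-empty.
    """
    n = len(occupancy)
    sp = []
    for tau in range(max_tau + 1):
        ratios = []
        for t in range(n - tau):
            start = occupancy[t]
            if not start:
                continue
            survivors = set(start)
            for s in range(t + 1, t + tau + 1):
                survivors &= occupancy[s]       # must stay continuously
            ratios.append(len(survivors) / len(start))
        sp.append(sum(ratios) / len(ratios) if ratios else float("nan"))
    return sp
```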

RDF Analysis Workflow Visualization

RDF Analysis Workflow

Key Parameters for RDF Analysis

Table 1: Critical RDF Calculation Parameters and Their Effects

| Parameter | Default Value | Effect on Results | Recommendation |
|---|---|---|---|
| nbins | 75 | Higher values increase resolution but may introduce noise | Adjust based on system size (75-150) |
| range | (0.0, 15.0) | Defines distance range for analysis | Set maximum to half box size to avoid PBC artifacts |
| exclusion_block | None | Excludes bonded neighbors from same molecule | Use (n, n) for molecules with n atoms each |
| density | False | When True, returns true density \(n_{ab}(r)\) instead of \(g_{ab}(r)\) | Use False for standard RDF, True for coordination numbers |

Table 2: Common RDF Analysis Issues and Solutions

| Problem | Possible Causes | Solutions |
|---|---|---|
| Noisy RDF | Insufficient sampling, small bin size | Increase trajectory frames, adjust nbins |
| Unphysical peaks | PBC artifacts, incorrect atom selection | Check PBC handling, validate atom groups |
| Flat RDF | Incorrect group selection, system not equilibrated | Verify group definitions, check equilibration |
| Memory errors | Large systems, too many frames | Use step parameter in run(), analyze subsets |

The Scientist's Toolkit: Essential Research Reagents and Software

Table 3: Essential Tools for RDF Analysis in Molecular Dynamics

| Tool/Resource | Function | Application Context |
|---|---|---|
| MDAnalysis | Python toolkit for MD trajectory analysis | Loading trajectories, RDF calculation, custom analysis [31] |
| InterRDF class | Calculates average RDF between atom groups | General solvation structure analysis [28] |
| InterRDF_s class | Calculates site-specific RDFs | Binding site analysis, specific interaction studies [28] |
| GROMACS | MD simulation package | Trajectory generation, system preparation [32] |
| BioExcel Building Blocks | Workflow library for MD setup and run | Streamlined simulation setup and analysis [32] |

Advanced RDF Applications and Methodologies

How do I interpret RDFs for ionic liquid systems?

Context: Analysis of imidazolium-based ionic liquids in ethylene glycol requires special consideration of cation-anion and ion-solvent interactions [33].

Interpretation Guide:

- First peak position: Indicates contact ion pair distance

- Peak height: Reflects interaction strength and specificity

- Second shell structure: Reveals medium-range ordering and solvation patterns

- Chain length effects: Longer alkyl chains (C4mim+, C6mim+) show solvophobic solvation and structure-making tendencies [33]

Methodology:

- Combine RDF analysis with volumetric properties and conductivity measurements

- Calculate ion association constants from coordination number analysis

- Use molecular dynamics simulations to complement experimental RDF data

How do I validate RDF results for publication?

Quality Control Checklist:

- Verify statistical convergence by comparing RDFs from trajectory subsets

- Check for PBC artifacts by ensuring the RDF range does not exceed half the shortest box length

- Validate atom selections by visualizing representative frames

- Confirm appropriate normalization by checking RDF approaches 1 at large r

- Compare with known experimental data where available

- Report all parameters (nbins, range, exclusion_block) in methods section
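Several of these checks are easy to automate. The sketch below compares RDFs computed separately from the two halves of a trajectory, reports how close the tail is to 1, and flags a range that exceeds half the box length. It assumes both halves were binned identically. The function deliberately leaves to you the judgment of what counts as an acceptable difference or tail deviation.

```python
def rdf_convergence_report(r, g_first_half, g_second_half, box_length, tail_width=2.0):
    """Basic publication checks on two RDFs computed from trajectory halves."""
    g_mean = [(a + b) / 2 for a, b in zip(g_first_half, g_second_half)]
    # Statistical convergence: largest disagreement between the two halves
    max_diff = max(abs(a - b) for a, b in zip(g_first_half, g_second_half))
    # Normalization: the combined RDF should level off near 1 at large r
    tail = [g for ri, g in zip(r, g_mean) if ri >= r[-1] - tail_width]
    tail_mean = sum(tail) / len(tail)
    return {"max_half_difference": max_diff,
            "tail_mean": tail_mean,
            "range_exceeds_half_box": r[-1] > box_length / 2}
```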

Integration with Broader Thesis Research

For comprehensive molecular dynamics trajectory analysis in thesis research, RDF analysis should be integrated with:

- Structural analysis: RMSD, RMSF, and radius of gyration calculations

- Energetic analysis: Binding free energy calculations

- Dynamical analysis: Hydrogen bond lifetimes, diffusion constants

- Experimental validation: Comparison with experimental spectroscopy data

This integrated approach provides a complete picture of molecular behavior in solution and binding environments, supporting robust conclusions in drug development research.

Calculating Binding Free Energies Using MM/PBSA and Umbrella Sampling

Frequently Asked Questions (FAQs)

MM/PBSA Methodology

Q1: My MM/PBSA results are unstable and vary significantly between short simulation replicates. What is the likely cause and how can I address this?

A: The likely cause is insufficient sampling. Short molecular dynamics (MD) simulations may give the illusion of convergence but often fail to capture slow conformational transitions that critically affect computed free energies.

- Problem Identification: If repeated short simulations (e.g., 5-10 ns) of the same system yield binding free energies with variations much larger than the expected chemical accuracy (1 kcal/mol), it indicates the underlying conformational ensemble has not been adequately sampled [34].

- Solution: Perform longer simulations or employ enhanced sampling techniques. Statistically, sampling sufficiency is a prerequisite for obtaining reliable results. Do not equate long simulation times with sufficient sampling; instead, use convergence analyses to determine if your simulation time covers the relevant conformational space. Adaptive sampling strategies can help balance efficiency and reliability [34].
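A quick convergence check for per-snapshot MM/PBSA estimates is block averaging. Split the time-ordered values into a few contiguous blocks, treat each block mean as an approximately independent sample, and compare the first and second halves. Below is a plain-Python sketch. The number of blocks is an assumption you should vary, since blocks that are too short underestimate the error for correlated data.

```python
import math

def block_average(values, n_blocks=5):
    """Mean, standard error, and block means from contiguous blocks of a time series.

    values: per-snapshot binding energies (e.g., kcal/mol) in time order.
    """
    block_len = len(values) // n_blocks
    if block_len == 0:
        raise ValueError("not enough snapshots for the requested number of blocks")
    means = [sum(values[b * block_len:(b + 1) * block_len]) / block_len
             for b in range(n_blocks)]
    grand = sum(means) / n_blocks
    var = sum((m - grand) ** 2 for m in means) / (n_blocks - 1)
    return grand, math.sqrt(var / n_blocks), means

def half_difference(values):
    """Mean of the second half minus mean of the first half."""
    h = len(values) // 2
    return sum(values[h:2 * h]) / h - sum(values[:h]) / h
```

A half-to-half difference or standard error well above the 1 kcal/mol target is a sign that more sampling is needed.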

Q2: How should I adapt the MM/PBSA protocol for membrane protein-ligand systems?

A: Standard MM/PBSA protocols, designed for soluble proteins, often fail for membrane systems due to the heterogeneous dielectric environment of the lipid bilayer. Key adaptations include [35]:

- Membrane-aware Dielectric Modeling: The continuum solvent model must account for the low-dielectric membrane region. Use tools like APBSmem or the updated MMPBSA.py in Amber, which provide automated options for calculating membrane placement parameters (thickness, center) directly from the trajectory, ensuring a consistent and physically realistic dielectric environment for the calculation [35].

- Multi-Trajectory Approach: For systems with large ligand-induced conformational changes, a single trajectory might be insufficient. Instead, use a multi-trajectory approach where separate simulations are performed for the unbound protein (e.g., in the apo state) and the protein-ligand complex. This prevents the forcing of an unrealistic conformation in the unbound state [35].

- Entropy Corrections: Incorporate entropy estimates, such as those from Truncated Normal Mode Analysis (NMA), which can be particularly important for capturing the thermodynamic impact of conformational changes in membrane proteins [35].

Q3: The entropic term (-TΔS) in MM/PBSA is computationally expensive and noisy. When is it necessary to include it?

A: The entropic contribution is often small compared to the large, opposing enthalpic and solvation terms. However, it can be critical for achieving accuracy in certain scenarios [36].

- You can often omit it when performing high-throughput ranking of congeneric ligands (very similar compounds), as the relative differences in entropy might be consistent and the ranking is driven by the enthalpy/solvation terms.

- You should include it when calculating absolute binding affinities, when comparing ligands that bind with significantly different modes (e.g., one induces a large conformational change while another does not), or when striving for the highest possible quantitative accuracy. For these cases, methods like normal-mode or quasi-harmonic analysis are used, though they are computationally demanding [36].

Umbrella Sampling Methodology

Q4: How can I objectively determine a good reaction coordinate (RC) for Umbrella Sampling without prior knowledge of the end state?

A: Manually selecting an RC is a major source of error. Automated approaches can now determine effective RCs directly from simulation data [37].

- Protocol: Initiate a non-equilibrated MD simulation from the known native state basin. From this trajectory, use statistical analysis tools, such as time-lagged independent component analysis, to identify the slowest collective degrees of freedom. These can serve as excellent RCs. This is the core idea behind tools like AutoSIM, which automates this process to generate free energy surfaces for functional conformational transitions without requiring a priori information on the RC or the final state [37].
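Time-lagged independent component analysis (TICA) finds linear combinations of input features with the largest autocorrelation at a chosen lag time, that is, the slowest directions. The NumPy sketch below solves the symmetrized generalized eigenproblem \(C(\tau)\,v = \lambda\, C(0)\,v\) by whitening. It is a generic TICA, not AutoSIM's implementation, and it assumes the features are not linearly dependent (otherwise \(C(0)\) is singular). Dedicated libraries such as deeptime provide production-grade estimators.

```python
import numpy as np

def tica(X, lag):
    """Symmetrized TICA.

    X: (T, d) feature array (e.g., distances, dihedral sines/cosines); lag in frames.
    Returns eigenvalues (autocorrelations at `lag`, slowest first) and the
    matching directions in feature space as columns.
    """
    X = np.asarray(X, float)
    X = X - X.mean(axis=0)
    A, B = X[:-lag], X[lag:]
    n = len(A)
    C0 = (A.T @ A + B.T @ B) / (2 * n)          # instantaneous covariance
    Ct = (A.T @ B + B.T @ A) / (2 * n)          # symmetrized time-lagged covariance
    w, V = np.linalg.eigh(C0)
    W = V @ np.diag(1.0 / np.sqrt(w)) @ V.T     # whitening matrix C0^(-1/2)
    lam, U = np.linalg.eigh(W @ Ct @ W)
    order = np.argsort(lam)[::-1]               # largest autocorrelation first
    return lam[order], W @ U[:, order]
```

Projecting the features onto the leading columns gives candidate reaction coordinates.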

Q5: How can I assess and improve the convergence of my Umbrella Sampling simulation?

A: Convergence must be statistically evaluated, not assumed from simulation length [37].

- Convergence Assessment: A robust method is to combine statistics from multiple independent Umbrella Sampling runs. By comparing the free energy profiles from these replicates, you can quantify statistical uncertainty. If the profiles differ significantly, convergence has not been achieved.

- Improving Convergence: If simulations are not converged, systematically increase the sampling within each umbrella window. Furthermore, the automated protocol in AutoSIM allows for combining statistics from multiple runs to systematically assess and improve the quality of the free energy projection along a chosen RC [37].

Troubleshooting Common Problems

MM/PBSA Troubleshooting

| Problem | Possible Causes | Diagnostic Steps | Solutions |
|---|---|---|---|
| Unphysical or wildly fluctuating results | 1. Severe undersampling [34]. 2. Incorrect system setup (e.g., bad contacts, incorrect protonation). 3. Numerical instability in PB solver. | 1. Check for convergence by dividing the trajectory into halves and comparing results [34]. 2. Visually inspect multiple trajectory frames for stability. 3. Check energy logs for crashes. | 1. Extend simulation time or use enhanced sampling [34]. 2. Re-run system minimization and equilibration. 3. Adjust PB grid parameters (density, cutoff). |
| Poor correlation with experimental data | 1. Force field inaccuracies [34]. 2. Lack of conformational sampling [34]. 3. Missing entropic term [36]. 4. Ignored key ligand/protonation states. | 1. Verify force field compatibility with your ligand. 2. Check if simulations sampled all relevant rotamers/poses. 3. Test the impact of including -TΔS. | 1. Use a different/validated force field or charge model. 2. Use longer or replica-exchange simulations. 3. Include entropic correction for final calculation. 4. Test multiple ligand tautomers/protonation states. |
| High computational cost per snapshot | 1. Large system size. 2. Fine PB grid settings. 3. Entropy calculation. | 1. Profile the time taken by different calculation parts. | 1. Use the Generalized Born (GB) approximation for initial screening. 2. Use a distance-dependent dielectric for initial tests. 3. Increase the time between analyzed snapshots. |

Umbrella Sampling Troubleshooting

| Problem | Possible Causes | Diagnostic Steps | Solutions |
|---|---|---|---|
| Poor overlap between umbrella windows | 1. Windows are too far apart along the RC. 2. The harmonic force constant is too weak to hold the system near the window center on steep parts of the PMF, or so strong that each distribution is too narrow. 3. The RC is poorly chosen and couples multiple pathways. | 1. Plot the probability distribution of the RC for each window. 2. Check the histogram of collected data in each window. | 1. Decrease the spacing between windows. 2. Adjust the harmonic force constant (increase it where windows drift downhill, decrease it where distributions are too narrow). 3. Re-evaluate the choice of RC; consider a 2D PMF. |
| Free energy profile not converging | 1. Inadequate sampling within windows [34]. 2. Slow degrees of freedom orthogonal to the RC are not sampled. | 1. Perform block analysis (e.g., with WHAM) to see how the PMF changes with increasing simulation time. 2. Check for collective motions not captured by the RC. | 1. Extend sampling in each window, especially in transition state regions [37]. 2. Use an additional CV in a 2D US simulation or employ replica-exchange US. |
| High energy barriers that are rarely crossed | 1. The RC does not correspond to the natural reaction pathway. 2. The underlying atomistic energy landscape has a genuine high barrier. | 1. Monitor if the system explores the entire RC range as expected. | 1. Use automated tools (e.g., AutoSIM) to find a better RC [37]. 2. Apply a secondary bias along an orthogonal CV or use metadynamics. |

The Scientist's Toolkit: Essential Research Reagents & Software

Table: Key Computational Tools for Binding Free Energy Calculations

| Tool Name | Type/Category | Primary Function | Application Note |
|---|---|---|---|
| GROMACS [38] | MD Simulation Software | High-performance MD engine for running simulations. | Often used for producing the trajectories later analyzed by MM/PBSA or Umbrella Sampling. |
| AMBER [35] | MD Simulation & Analysis Suite | Includes MMPBSA.py for end-state free energy calculations. | The recent Amber24 update includes automated membrane parameters for MMPBSA calculations on membrane proteins [35]. |
| AutoSIM [37] | Automated Sampling Algorithm | Redesigns and automates Umbrella Sampling. | Automatically determines reaction coordinates and assesses convergence, addressing key US limitations [37]. |
| MDAnalysis [39] | Trajectory Analysis Library | Python library for analyzing MD trajectories. | Extensible toolset for in-depth analysis, including RMSD, SASA, and distance calculations; supports streaming analysis. |
| ProLIF [39] | Interaction Analysis Tool | Identifies protein-ligand interactions from MD trajectories. | Useful for complementing MM/PBSA by providing a residue-level interaction fingerprint to explain affinity differences. |

Experimental Protocols & Workflows

Detailed Protocol: MM/PBSA for a Standard Protein-Ligand Complex

This protocol outlines the steps for performing an MM/PBSA calculation on a solvated protein-ligand complex using a single MD trajectory.

Step 1: System Preparation and Equilibration

- Parameterize the Ligand: Use the antechamber program (from AmberTools) with the GAFF force field and AM1-BCC charges to assign atom types and partial charges to the ligand, then parmchk2 to generate any missing parameters.

- Assemble the Complex: Combine the protein (with a standard force field like ff19SB) and ligand parameter files to create the complex. Solvate the complex in a TIP3P water box, ensuring a minimum buffer distance (e.g., 12 Å). Add ions to neutralize the system's charge and achieve a physiological salt concentration (e.g., 150 mM NaCl).

- Energy Minimization: Perform 5000 steps of steepest descent minimization to remove bad contacts.

- System Heating: Gradually heat the system from 0 K to 300 K over 100 ps under an NVT ensemble with positional restraints (e.g., 5 kcal/mol/Å²) on the protein and ligand heavy atoms.

- System Equilibration: Perform at least 1 ns of NPT simulation at 300 K and 1 bar, gradually releasing the positional restraints. Monitor the density and potential energy for stability.

Step 2: Production MD Simulation

- Run an NPT production simulation. The length should be determined by convergence testing, but a common starting point is 100-200 ns. Save trajectory frames every 100 ps for subsequent analysis. The stability of the system should be confirmed by analyzing metrics like the root-mean-square deviation (RMSD) of the protein backbone and ligand [34].

Step 3: MM/PBSA Calculation

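- Extract evenly spaced snapshots from the equilibrated portion of the trajectory for the MM/PBSA calculation. A typical approach uses 500-1000 snapshots, so match the extraction interval to the trajectory length: extracting every 1 ns from a 200 ns run yields only 200 snapshots, whereas extracting every 200 ps yields 1000.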

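- Use the MMPBSA.py script from AmberTools, supplying the solvated-complex, complex, receptor, and ligand topologies, the production trajectory, and a control input file (e.g., mmpbsa.in) that specifies the frame range, sampling interval, and solvation-model settings.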
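As an illustration only, the following Python sketch writes the text of a minimal MMPBSA.py control file with GB and PB sections and assembles the corresponding command line. The file names, frame range, and salt settings are hypothetical and should be adapted. With frames saved every 100 ps, interval=2 analyzes one frame every 200 ps, giving 950 snapshots from frames 101-2000 of a 200 ns run. The flags used (-O, -i, -o, -sp, -cp, -rp, -lp, -y) are standard MMPBSA.py options, but check the AmberTools manual for your version.

```python
import shlex

def mmpbsa_input_text(startframe, endframe, interval, salt_molar=0.150):
    """Minimal MMPBSA.py control file: a title line, then &general, &gb, &pb namelists."""
    return (
        "MM/PBSA and MM/GBSA for a protein-ligand complex\n"
        "&general\n"
        f"  startframe={startframe}, endframe={endframe}, interval={interval},\n"
        "/\n"
        "&gb\n"
        f"  igb=5, saltcon={salt_molar:.3f},\n"
        "/\n"
        "&pb\n"
        f"  istrng={salt_molar:.3f},\n"
        "/\n"
    )

def mmpbsa_command(inp, out, solvated, complex_top, receptor, ligand, traj):
    """Argument list for MMPBSA.py (e.g., for subprocess.run)."""
    return ["MMPBSA.py", "-O", "-i", inp, "-o", out,
            "-sp", solvated, "-cp", complex_top, "-rp", receptor,
            "-lp", ligand, "-y", traj]

# Hypothetical settings: skip the first 100 frames, analyze every 2nd frame
text = mmpbsa_input_text(101, 2000, 2)      # save this text as mmpbsa.in
cmd = mmpbsa_command("mmpbsa.in", "FINAL_RESULTS_MMPBSA.dat", "solvated.prmtop",
                     "complex.prmtop", "receptor.prmtop", "ligand.prmtop", "prod.nc")
print(shlex.join(cmd))
```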

Detailed Protocol: Umbrella Sampling for a Conformational Transition

This protocol describes how to use Umbrella Sampling to calculate the free energy profile (PMF) for a biomolecular conformational transition.

Step 1: Determine the Reaction Coordinate (RC) and Generate Initial Structures

- RC Selection: If the end states are known (e.g., open and closed form of a protein), a simple RC could be the distance between two key Cα atoms or the radius of gyration. For unknown pathways, use an automated tool like AutoSIM to determine the RC from an unbiased MD simulation [37].

- Steered MD (SMD): Perform an SMD simulation to rapidly pull the system from one end state (A) to the other (B) along the chosen RC. This generates a starting path.

Step 2: Set Up Umbrella Sampling Windows

- Extract multiple snapshots (e.g., 20-50) from the SMD trajectory at regular intervals along the RC. These will serve as the initial configurations for each umbrella window.

- For each window, set up an independent simulation with a harmonic biasing potential, \( U_{\text{bias}} = \frac{1}{2} k (\xi - \xi_i)^2 \), where \( k \) is the force constant and \( \xi_i \) is the center of the RC for the i-th window. The force constant should be strong enough to keep the system sampling near \( \xi_i \), even on steep regions of the free energy profile, but not so strong that each window's distribution becomes too narrow to overlap with its neighbors.
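The bias and a first guess at window spacing can be sketched as follows. The rule of thumb encoded in max_spacing_ok is an assumption. On a locally flat free energy surface, a harmonic bias of strength \(k\) gives a distribution of width \(\sigma \approx \sqrt{k_B T / k}\), and adjacent window centers spaced no more than about \(2\sigma\) apart usually overlap. Units must be consistent: here kcal/mol and Å, whereas GROMACS uses kJ/mol and nm.

```python
import math

KB_KCAL = 0.0019872  # Boltzmann constant in kcal/(mol K)

def harmonic_bias(xi, center, k):
    """Umbrella potential U = 0.5 * k * (xi - center)**2."""
    return 0.5 * k * (xi - center) ** 2

def window_centers(xi_start, xi_end, n_windows):
    """Evenly spaced window centers from xi_start to xi_end inclusive."""
    step = (xi_end - xi_start) / (n_windows - 1)
    return [xi_start + i * step for i in range(n_windows)]

def expected_window_width(k, temperature=300.0):
    """Width sqrt(kB*T/k) of a window's distribution if the underlying PMF were flat."""
    return math.sqrt(KB_KCAL * temperature / k)

def max_spacing_ok(centers, k, temperature=300.0, factor=2.0):
    """True if every gap between neighboring centers is <= factor * expected width."""
    sigma = expected_window_width(k, temperature)
    return all(b - a <= factor * sigma for a, b in zip(centers, centers[1:]))
```

For example, five windows spaced 0.5 Å apart pass this check with k = 2 kcal/mol/Å² but not with k = 10 kcal/mol/Å², where each distribution is only about 0.24 Å wide.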

Step 3: Run Equilibrated Simulations and Check Overlap

- Equilibrate each window, then run a production MD simulation. The simulation length must be sufficient to sample the local conformational space. Monitor the RC value in each window to ensure it fluctuates around its center.

- After simulations, check the overlap of the RC distributions between adjacent windows. If there are gaps, you may need to add more windows or increase sampling in existing ones.
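Overlap between neighboring windows can be quantified instead of judged by eye. The plain-Python sketch below histograms each window's RC samples on shared bin edges and computes the overlap coefficient, the shared probability mass (0 for disjoint and 1 for identical distributions). The 0.1 threshold for flagging gaps is a hypothetical default, not a published criterion.

```python
import bisect

def histogram(values, edges):
    """Counts per bin for half-open bins [edges[b], edges[b+1])."""
    counts = [0] * (len(edges) - 1)
    for v in values:
        b = bisect.bisect_right(edges, v) - 1
        if 0 <= b < len(counts):
            counts[b] += 1
    return counts

def overlap_coefficient(samples_a, samples_b, edges):
    """Shared probability mass of two windows' RC histograms."""
    ha, hb = histogram(samples_a, edges), histogram(samples_b, edges)
    na, nb = sum(ha), sum(hb)
    if na == 0 or nb == 0:
        return 0.0
    return sum(min(a / na, b / nb) for a, b in zip(ha, hb))

def flag_gaps(window_samples, edges, threshold=0.1):
    """Indices i where windows i and i+1 overlap less than `threshold`."""
    return [i for i in range(len(window_samples) - 1)
            if overlap_coefficient(window_samples[i], window_samples[i + 1], edges) < threshold]
```

Flagged pairs are where additional windows, or longer sampling in existing ones, are most needed.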

Step 4: Analyze Data and Construct the PMF

- Use the Weighted Histogram Analysis Method (WHAM) to unbias the simulations from all windows and combine the data into a single, continuous PMF. Tools such as gmx wham (GROMACS) or Alan Grossfield's WHAM code can be used.

- Assess convergence by running WHAM separately on the first and second halves of the data from each window and comparing the resulting PMFs. If they differ significantly, extend the simulations.
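To make the WHAM step concrete, here is a minimal one-dimensional WHAM sketch in NumPy. It is not gmx wham or Grossfield's implementation. It assumes harmonic biases of the form above, energies in kJ/mol, and uncorrelated samples; production codes also account for statistical inefficiency and provide error estimates. The code alternates between the two WHAM equations until the window free energies ( f_i ) stop changing: one gives the unbiased probability of each bin from the ( f_i ), and the other gives the ( f_i ) from that probability.

```python
import numpy as np

KB = 0.0083144626  # Boltzmann constant in kJ/(mol K)


def wham_1d(window_samples, centers, k, temperature=300.0, bins=60,
            tol=1e-7, max_iter=100_000):
    """Return (bin_centers, pmf) in kJ/mol from harmonic umbrella windows.

    window_samples: list of 1D arrays of RC values, one per window
    centers, k: window centers xi_i and force constant(s) of U = 0.5 k (xi - xi_i)^2
    """
    beta = 1.0 / (KB * temperature)
    edges = np.linspace(min(s.min() for s in window_samples),
                        max(s.max() for s in window_samples), bins + 1)
    x = 0.5 * (edges[:-1] + edges[1:])
    counts = np.array([np.histogram(s, bins=edges)[0] for s in window_samples], dtype=float)
    n_per_window = counts.sum(axis=1)          # N_i, samples per window
    total_counts = counts.sum(axis=0)          # sum_i n_ij, counts per bin
    centers = np.asarray(centers, dtype=float)
    k = np.broadcast_to(np.asarray(k, dtype=float), centers.shape)
    boltz = np.exp(-beta * 0.5 * k[:, None] * (x[None, :] - centers[:, None]) ** 2)

    f = np.zeros(len(centers))                 # window free energies f_i
    for _ in range(max_iter):
        # P_j = sum_i n_ij / sum_i N_i exp(beta (f_i - w_ij))
        denom = (n_per_window * np.exp(beta * f)) @ boltz
        p = np.divide(total_counts, denom, out=np.zeros_like(total_counts),
                      where=total_counts > 0)
        # exp(-beta f_i) = sum_j P_j exp(-beta w_ij); fix the arbitrary offset with f_0 = 0
        f_new = -np.log(boltz @ p) / beta
        f_new -= f_new[0]
        converged = np.max(np.abs(f_new - f)) < tol
        f = f_new
        if converged:
            break

    with np.errstate(divide="ignore"):
        pmf = -np.log(p / p.sum()) / beta      # empty bins become +inf
    return x, pmf - pmf[np.isfinite(pmf)].min()
```

For the convergence check described above, call wham_1d once on the first half and once on the second half of each window's samples, then compare the two profiles.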

Method Workflows

The following diagrams illustrate the logical workflow for the two primary methods discussed.

MM/PBSA Calculation Workflow

Umbrella Sampling Workflow

Mean Square Displacement (MSD) for Diffusion and Conductivity Studies

Frequently Asked Questions (FAQs)

FAQ 1: What is the fundamental principle behind using Mean Square Displacement (MSD) in diffusion studies?

The Mean Squared Displacement (MSD) is a measure of the deviation of a particle's position from a reference position over time and is the most common measure of the spatial extent of random motion. In statistical mechanics, it quantifies the portion of a system "explored" by a random walker. For a particle undergoing pure Brownian motion in an isotropic medium, the MSD exhibits a linear relationship with time, and the slope of this relationship is used to calculate the self-diffusion coefficient, ( D ), via the Einstein relation. For ( n )-dimensional diffusion, the relation is ( \text{MSD} = 2nDt ), where ( t ) is time [40] [41].
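As a worked example of the Einstein relation with illustrative numbers (not results reported in this guide), suppose a 3D MSD curve has a fitted slope of 1.38 Å²/ps. With ( n = 3 ) and the conversions 1 Å² = 10⁻¹⁶ cm² and 1 ps = 10⁻¹² s:

```latex
D = \frac{\text{slope}}{2n}
  = \frac{1.38\ \text{\AA}^2\,\text{ps}^{-1}}{2 \times 3}
  = 0.23\ \text{\AA}^2\,\text{ps}^{-1}
  = 0.23 \times 10^{-4}\ \text{cm}^2\,\text{s}^{-1}
  = 2.3 \times 10^{-5}\ \text{cm}^2\,\text{s}^{-1}
```

This value is close to the experimental self-diffusion coefficient of liquid water at 25 °C, about 2.3 × 10⁻⁵ cm²/s.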

FAQ 2: Why are my computed diffusion coefficients inconsistent when I use trajectories of different lengths from the same simulation?

This is a common issue rooted in the statistical nature of the MSD calculation. As the lag time ( \tau ) increases, fewer pairs of frames are available to average over, so MSD values at long lag times are noisier. Consequently, the linear segment of the MSD curve used to fit the diffusion coefficient can shift depending on the total trajectory length. A trajectory that is too short may never reach the linear (diffusive) regime at all. For reliable results, use the longest trajectory available and select a linear fitting region that is not contaminated by noise at high lag times [42] [43].

FAQ 3: What does "unwrapped coordinates" mean, and why is it critical for a correct MSD analysis?

In molecular dynamics simulations with periodic boundary conditions (PBC), atoms that move outside the simulation box are "wrapped" back into the primary cell. Using these "wrapped" coordinates for MSD analysis will incorrectly truncate a particle's true displacement, severely underestimating the diffusion coefficient. Unwrapped coordinates are those that have been corrected for PBC jumps, allowing a particle's true path through space to be reconstructed. MSD analysis must always be performed on unwrapped trajectories to obtain physically meaningful diffusion coefficients [41] [1].
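For trajectories that were written wrapped, a common way to reconstruct the continuous path is to accumulate minimum-image displacements between consecutive frames. The NumPy sketch below assumes an orthorhombic box and assumes that no atom moves more than half a box length between saved frames. Triclinic boxes and strongly fluctuating NPT box sizes need more care, and established tools (e.g., gmx trjconv -pbc nojump or CPPTRAJ's unwrap command) are preferable in practice.

```python
import numpy as np


def unwrap(wrapped, box):
    """Rebuild continuous coordinates from wrapped ones.

    wrapped: (n_frames, n_atoms, 3) coordinates folded into an orthorhombic box
    box: box lengths, shape (3,) or (n_frames, 3)
    Assumes no atom moves more than half a box length between consecutive frames.
    """
    wrapped = np.asarray(wrapped, dtype=float)
    box = np.broadcast_to(np.asarray(box, dtype=float), (len(wrapped), 3))
    unwrapped = np.empty_like(wrapped)
    unwrapped[0] = wrapped[0]
    for t in range(1, len(wrapped)):
        step = wrapped[t] - wrapped[t - 1]
        step -= box[t] * np.round(step / box[t])  # minimum-image displacement
        unwrapped[t] = unwrapped[t - 1] + step
    return unwrapped
```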

FAQ 4: How does localization uncertainty affect MSD analysis, and how can I account for it?

In single-particle tracking (SPT) experiments, each measured particle position carries an inherent error, ( \sigma ), due to factors like limited signal-to-noise ratio and finite camera exposure. This localization error adds a constant offset to the theoretical MSD curve, giving ( \text{MSD}(t) = 4Dt + 4\sigma^2 ) for 2D diffusion (in general, ( 2nDt + 2n\sigma^2 ) in ( n ) dimensions). If not accounted for, this offset can lead to a significant overestimation of the diffusion coefficient. The effect is strongest for short trajectories or when the reduced localization error ( x = \sigma^2/(D\Delta t) ) is large, where ( \Delta t ) is the time between frames [43] [44].
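The offset can be checked numerically. This sketch simulates a hypothetical 2D Brownian track with known ( D ) and adds Gaussian localization noise of standard deviation ( \sigma ) per coordinate. It then compares the time-averaged MSDs of the noisy and noise-free tracks; the difference should approach ( 4\sigma^2 ) at every lag. A long track is used only to keep statistical noise small, and all parameter values and units are arbitrary assumptions.

```python
import numpy as np


def time_averaged_msd(track, max_lag):
    """MSD of one (n_frames, n_dims) track for lags 1..max_lag, averaged over time origins."""
    return np.array([np.mean(np.sum((track[lag:] - track[:-lag]) ** 2, axis=1))
                     for lag in range(1, max_lag + 1)])


rng = np.random.default_rng(1)
D, dt, sigma, n_frames = 0.1, 1.0, 0.3, 200_000   # hypothetical values, arbitrary units
# Each coordinate step has variance 2 D dt, so the noise-free MSD is 2 n D t.
true_track = np.cumsum(rng.normal(0.0, np.sqrt(2 * D * dt), size=(n_frames, 2)), axis=0)
noisy_track = true_track + rng.normal(0.0, sigma, size=true_track.shape)

offset = time_averaged_msd(noisy_track, 10) - time_averaged_msd(true_track, 10)
print("mean offset:", offset.mean(), "expected 4*sigma^2:", 4 * sigma ** 2)
```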

FAQ 5: What is the optimal number of points from the MSD curve to use for fitting the diffusion coefficient?

The optimal number of MSD points, ( p_{\text{min}} ), for fitting is a trade-off. Using too few points fails to capture the linear trend, while using too many incorporates noisy, poorly averaged data. The optimal number depends on the reduced localization error, ( x = \sigma^2/(D\Delta t) ), and the total number of points, ( N ), in the trajectory. When ( x \ll 1 ) (negligible error), the first two MSD points may suffice. For ( x \gg 1 ) or larger ( N ), a larger number of points is needed, but it should be limited to the initial linear segment before the curve becomes noisy [43].

Troubleshooting Guides

Issue: Inconsistent or Physically Unreasonable Diffusion Coefficients

Problem Description: The calculated diffusion coefficient ( D ) varies significantly when using different segments of the same trajectory, different fitting ranges, or different analysis software.

Diagnosis and Solutions:

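- Cause: Incorrect trajectory processing (wrapped coordinates).

- Solution: Unwrap the trajectory before computing the MSD (for example, with gmx trjconv -pbc nojump in GROMACS or the unwrap command in CPPTRAJ) so that displacements are not truncated at the box boundaries.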

- Cause: Poor choice of the MSD fitting range.

- Solution: The MSD plot should be visually inspected. The linear fit should be applied to the "middle" segment, avoiding the short-time ballistic regime and the long-time noisy region. A log-log plot can help identify the linear segment, which will have a slope of 1 for normal diffusion [41].

- Recommended Protocol:

- Calculate the MSD up to a maximum lag time of no more than one-quarter of the total trajectory length to ensure reasonable statistics.

- Plot the MSD versus lag time on a linear and a log-log scale.

- Select a linear region from the log-log plot with a slope close to 1.

- Perform a linear regression on this selected region in the linear plot.

- Calculate ( D ) from the slope: ( D = \frac{\text{slope}}{2n} ), where ( n ) is the dimensionality [41] [42].

- Cause: Insufficient sampling or trajectory length.

- Solution: The simulation or experiment may be too short to observe true diffusive behavior. If possible, run longer replicates. When combining multiple replicates, compute the MSD of each replicate separately and then average the curves; naively concatenating trajectories creates artificial jumps between the end of one trajectory and the start of the next [41] [42].
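The log-log inspection and the one-quarter rule from the protocol above can be automated as a first pass. In this sketch, the local slope ( d\ln\text{MSD}/d\ln t ) is computed with numpy.gradient. Lag times are kept only where that slope lies within an assumed tolerance of 1 and the lag does not exceed one quarter of the trajectory length. The tolerance is a hypothetical choice, and the selected range should still be checked visually.

```python
import numpy as np


def local_loglog_slope(lag_times, msd):
    """d ln(MSD) / d ln(t): about 2 in the ballistic regime, about 1 for normal diffusion.
    Lag time 0 must be excluded because its logarithm is undefined."""
    return np.gradient(np.log(msd), np.log(lag_times))


def diffusive_mask(lag_times, msd, total_time, tol=0.1):
    """Lags whose local log-log slope is within `tol` of 1 and that are <= total_time / 4."""
    slope = local_loglog_slope(lag_times, msd)
    return (np.abs(slope - 1.0) < tol) & (lag_times <= 0.25 * total_time)
```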

Issue: High Error in Diffusion Coefficient from Single-Particle Tracking

Problem Description: The estimated diffusion coefficient from a single trajectory has a very large confidence interval, or results vary wildly between trajectories of identical particles.

Diagnosis and Solutions:

- Cause: High localization uncertainty dominating the MSD.

- Solution: Incorporate the localization error, ( \sigma ), into your MSD fitting model. Fit your MSD data to ( \text{MSD}(t) = 2nDt + 2n\sigma^2 ) to estimate the true diffusion coefficient and the error term separately. Improving the optical setup and image analysis to reduce ( \sigma ) is also recommended [43].

- Cause: Using a non-optimal number of MSD points for fitting.

- Solution: Follow the guidance to find the optimal number of points, ( p_{\text{min}} ), which depends on your specific experimental parameters: the number of points ( N ), the localization error ( \sigma ), and the diffusion coefficient ( D ). Using this optimal number minimizes the standard error in the estimated ( D ) [43].
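The offset-aware fit from the first solution above can be sketched with an ordinary least-squares line, whose slope and intercept map onto ( D ) and ( \sigma ). This is a simplification: it weights all MSD points equally and ignores the correlations between them, so treat the result as a first estimate. The function name is illustrative.

```python
import numpy as np


def fit_msd_with_offset(lag_times, msd, n_dims):
    """Fit MSD(t) = 2 n D t + 2 n sigma^2 by least squares; return (D, sigma).

    sigma is NaN if the fitted intercept is negative (e.g. because of noise).
    """
    slope, intercept = np.polyfit(lag_times, msd, 1)
    D = slope / (2 * n_dims)
    sigma_sq = intercept / (2 * n_dims)
    sigma = float(np.sqrt(sigma_sq)) if sigma_sq >= 0 else float("nan")
    return float(D), sigma
```

In practice you would pass only the initial points of the MSD curve, up to the chosen number of fitting points.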

Issue: Abnormally High Ionic Conductivity via the Nernst-Einstein Relation

Problem Description: After calculating ion diffusion coefficients from MSD, the resulting ionic conductivity from the Nernst-Einstein relation is much higher than expected or reported in literature.

Diagnosis and Solutions:

- Cause: Incorrect MSD analysis leading to overestimation of ( D ).

- Solution: Revisit the MSD calculation protocol. The most likely culprits are wrapped coordinates or an incorrect fitting range, as described in the issue "Inconsistent or Physically Unreasonable Diffusion Coefficients" above. Check these steps carefully first [42].

- Cause: Finite-size effects and neglect of ion correlations.

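- Solution: The Nernst-Einstein relation assumes ideal, non-interacting particles. In concentrated ionic systems, correlated ion motion (for example, ion pairing) can significantly reduce the conductivity relative to this ideal estimate. Advanced methods such as the Green-Kubo approach, which integrates the current autocorrelation function, may be required for more accurate results. In addition, self-diffusion coefficients from simulations under periodic boundary conditions depend on box size, so compare results across system sizes or apply a finite-size correction. Note that the T-MSD method has been proposed to improve the accuracy of diffusion coefficient calculation from a single trajectory [45] [42].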
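For reference, the Green-Kubo route computes the conductivity from the total charge current ( \vec{J}(t) = \sum_i q_i \vec{v}_i(t) ) as ( \sigma = \frac{1}{3 V k_B T}\int_0^\infty \langle \vec{J}(0)\cdot\vec{J}(t) \rangle\, dt ). Because ( \vec{J} ) sums over all ions, this includes the cross-correlations that the Nernst-Einstein relation neglects. The sketch below assumes SI units, an isotropic system, and velocities saved at a fine interval dt. The integration cutoff max_lag is a hypothetical parameter that you would place where the autocorrelation has decayed into noise.

```python
import numpy as np

KB = 1.380649e-23  # Boltzmann constant, J/K


def vector_autocorrelation(x):
    """<x(0) . x(t)> averaged over time origins for a (n_frames, 3) series, via zero-padded FFT."""
    n = len(x)
    f = np.fft.rfft(x, n=2 * n, axis=0)
    acf = np.fft.irfft(f * np.conj(f), n=2 * n, axis=0)[:n].sum(axis=1)
    return acf / (n - np.arange(n))  # divide by the number of time origins per lag


def green_kubo_conductivity(velocities, charges, volume, temperature, dt, max_lag):
    """Ionic conductivity in S/m.

    velocities: (n_frames, n_ions, 3) in m/s; charges: (n_ions,) in C;
    volume in m^3; dt (time between frames) in s; max_lag: number of lags to integrate.
    """
    current = np.einsum("i,tij->tj", charges, velocities)   # J(t) = sum_i q_i v_i(t)
    acf = vector_autocorrelation(current)[:max_lag]
    integral = dt * (acf.sum() - 0.5 * (acf[0] + acf[-1]))    # trapezoidal rule
    return integral / (3.0 * volume * KB * temperature)
```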

Experimental Protocols & Data Presentation

Standard Protocol for MSD-Based Diffusion Coefficient Calculation

The following workflow outlines the critical steps for a robust MSD analysis, integrating best practices from the literature.

Detailed Methodology:

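- Preprocess Trajectory: Load the trajectory using unwrapped coordinates. If necessary, remove the net center-of-mass drift of the system (or of a reference group, such as a membrane) so that only relative diffusion is measured. Avoid rotational fitting, which distorts the displacements of particles far from the fitting group. Remove solvent and other components not needed for the analysis to reduce computational cost [41] [1].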

- Calculate MSD: Use a reliable algorithm. The windowed algorithm averages over all available time origins for each lag time. For long trajectories, a Fast Fourier Transform (FFT)-based algorithm gives the same result more efficiently, with ( O(N \log N) ) rather than ( O(N^2) ) scaling [41]. For a discrete trajectory with time step ( \Delta t ), the MSD at lag ( n\Delta t ) is ( \overline{\delta^2(n\Delta t)} = \frac{1}{N-n}\sum_{i=1}^{N-n} \left|\vec{r}_{i+n} - \vec{r}_i\right|^2 ), where ( N ) is the total number of frames and ( n ) is the lag index (not the dimensionality used below) [40].

- Identify Linear Regime: Plot the MSD against lag time. Create a log-log plot to help identify the linear segment, which should have a slope of 1 for normal diffusion. Avoid the non-linear, short-time ballistic regime and the long-time, noisy region where statistics are poor [41].

- Perform Linear Fit: Using the linear segment selected in the previous step, perform a linear regression (e.g., using scipy.stats.linregress or equivalent) of MSD versus lag time. The output is the slope of the line and its standard error [41].

- Calculate Diffusion Coefficient: Extract the self-diffusivity using the Einstein relation: ( D = \frac{\text{slope}}{2n} ), where ( n ) is the dimensionality of the MSD (e.g., ( n=3 ) for 'xyz', ( n=2 ) for 'xy') [41].
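The MSD and fitting steps above can be sketched end to end in Python. The windowed function transcribes the MSD formula given in the list directly, at ( O(N^2) ) cost. The FFT version reproduces it in ( O(N \log N) ) by splitting the MSD into a squared-position term and a position-autocorrelation term. The fit uses scipy.stats.linregress. This is an illustrative sketch for NumPy arrays of already unwrapped coordinates with shape (n_frames, n_particles, n_dims), and the function names are hypothetical. For production work, a tested implementation such as MDAnalysis's EinsteinMSD is preferable.

```python
import numpy as np
from scipy import stats


def msd_windowed(pos):
    """Direct windowed MSD averaged over time origins and particles; pos: (n_frames, n_particles, n_dims)."""
    n = len(pos)
    msd = np.zeros(n)
    for lag in range(1, n):
        msd[lag] = np.mean(np.sum((pos[lag:] - pos[:-lag]) ** 2, axis=-1))
    return msd


def msd_fft(pos):
    """Same quantity in O(N log N), using MSD(m) = S1(m) - 2 * S2(m)."""
    n = len(pos)
    # S2(m) = <r(k) . r(k+m)> over time origins, from a zero-padded FFT autocorrelation
    f = np.fft.rfft(pos, n=2 * n, axis=0)
    s2 = np.fft.irfft(f * np.conj(f), n=2 * n, axis=0)[:n].sum(axis=-1)
    s2 /= (n - np.arange(n))[:, None]
    # S1(m) = <|r(k)|^2 + |r(k+m)|^2> over time origins, built with a running sum
    sq = np.sum(pos ** 2, axis=-1)
    sq = np.vstack([sq, np.zeros((1, sq.shape[1]))])   # sq[-1] and sq[n] are the zero row
    s1 = np.empty((n, sq.shape[1]))
    running = 2.0 * sq.sum(axis=0)
    for m in range(n):
        running = running - sq[m - 1] - sq[n - m]
        s1[m] = running / (n - m)
    return np.mean(s1 - 2.0 * s2, axis=1)


def diffusion_coefficient(lag_times, msd, fit_start, fit_end, n_dims=3):
    """Fit MSD vs lag time on [fit_start, fit_end]; return (D, stderr of D) using D = slope / (2 n)."""
    mask = (lag_times >= fit_start) & (lag_times <= fit_end)
    fit = stats.linregress(lag_times[mask], msd[mask])
    return fit.slope / (2 * n_dims), fit.stderr / (2 * n_dims)
```

The standard error from linregress assumes independent residuals. Because successive MSD points are strongly correlated, it tends to understate the true uncertainty, and comparing independent replicates gives a more honest error bar.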

Table 1: Common parameters and pitfalls in MSD analysis for diffusion studies.

| Parameter / Concept | Description | Impact on MSD & Diffusion Coefficient |
|---|---|---|
| Trajectory Length | Total number of frames, ( N ), or total simulation time. | Shorter trajectories lead to poorer statistics, especially at long lag times, making it difficult to identify the true linear diffusive regime [42]. |
| Fitting Range | The range of lag times, ( \tau ), used for linear regression. | A range that is too short fails to capture diffusion; a range that is too long includes noisy, unreliable data, biasing the result [43]. |
| Localization Error, ( \sigma ) | Uncertainty in determining a particle's position (in SPT). | Adds a constant offset to the MSD, ( 4\sigma^2 ) in 2D, causing overestimation of ( D ) if not modeled in the fit [43] [44]. |
| Dimensionality, ( n ) | The spatial dimensions included in the MSD calculation (e.g., 1D, 2D, 3D). | Directly scales the calculated ( D ). Ensure the correct ( n ) is used in the final calculation: ( D = \frac{\text{slope}}{2n} ) [40] [41]. |
| Periodic Boundary Handling | The use of "wrapped" vs. "unwrapped" atom coordinates. | Using wrapped coordinates is a critical error that severely truncates displacements and underestimates the true diffusion coefficient [41] [1]. |

From Diffusion to Ionic Conductivity: The Nernst-Einstein Relation

The Nernst-Einstein equation provides a link between the microscopic diffusion of ions and the macroscopic ionic conductivity, ( \sigma ):

[ \sigma = \frac{n q^2 D}{k_B T} ]

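where ( n ) is the number density of the charge carrier, ( q ) is its charge, ( D ) is its diffusion coefficient, ( k_B ) is Boltzmann's constant, and ( T ) is the temperature. Here ( \sigma ) denotes conductivity and ( n ) a number density, not the localization error and dimensionality used in the MSD sections above. For systems with multiple ionic species (e.g., cations and anions), the contributions of the individual species are summed [42].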
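The summed form of the relation, ( \sigma = \frac{1}{V k_B T}\sum_i N_i q_i^2 D_i ) with ( n_i = N_i/V ), can be transcribed directly. The sketch below uses SI units. The ion counts, valences, box volume, and diffusion coefficients in the usage line are hypothetical inputs, not values from this guide.

```python
import numpy as np

KB = 1.380649e-23           # Boltzmann constant, J/K
E_CHARGE = 1.602176634e-19  # elementary charge, C


def nernst_einstein_conductivity(counts, valences, diffusivities, volume, temperature):
    """Ideal (uncorrelated) ionic conductivity in S/m.

    counts: number of ions N_i of each species in the box
    valences: z_i (e.g. +1, -1); diffusivities: D_i in m^2/s
    volume: box volume in m^3; temperature in K
    """
    n = np.asarray(counts, dtype=float) / volume       # number densities n_i
    q = np.asarray(valences, dtype=float) * E_CHARGE   # charges q_i
    d = np.asarray(diffusivities, dtype=float)
    return float(np.sum(n * q ** 2 * d) / (KB * temperature))


# Hypothetical example: 100 cations and 100 anions in a (5 nm)^3 box at 300 K
sigma = nernst_einstein_conductivity([100, 100], [+1, -1], [1.3e-9, 2.0e-9],
                                     volume=(5e-9) ** 3, temperature=300.0)
print(f"Nernst-Einstein conductivity: {sigma:.2f} S/m")
```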

Table 2: Troubleshooting the Nernst-Einstein relation for ionic conductivity calculation.

| Symptom | Possible Cause | Verification and Corrective Action |
|---|---|---|
| Conductivity is 2-5x higher than literature/expected values. | 1. Overestimated D from MSD: wrapped coordinates or wrong fitting range. 2. Ignored ion correlations. | 1. Re-check the MSD protocol using this guide's troubleshooting sections. 2. Consider using the Green-Kubo method for a more accurate result that accounts for correlated motion [42]. |
| Conductivity differs between simulation replicates. | 1. Insufficient sampling. 2. Different trajectory lengths used for analysis. | 1. Increase simulation time. 2. Use consistent and long trajectory lengths for all replicates, and ensure the MSD fitting range is standardized [42]. |

The Scientist's Toolkit

Table 3: Essential software and computational tools for MSD analysis.

| Tool Name | Type | Primary Function / Use in MSD Analysis |
|---|---|---|
| MDAnalysis | Python Library | A versatile library for analyzing molecular dynamics trajectories. Its MDAnalysis.analysis.msd.EinsteinMSD class computes MSDs, optionally with FFT acceleration (which requires the tidynamics package). Requires unwrapped coordinates [41]. |
| GROMACS | MD Simulation Suite | Includes the gmx msd tool for calculating MSDs and diffusion coefficients directly from simulation trajectories. Periodic jumps can be removed beforehand with gmx trjconv -pbc nojump [42]. |