Overcoming Teleological Obstacles in Drug Discovery: A Strategic Guide for Research and Development

Abstract

This article addresses the persistent challenge of teleological reasoning (the cognitive bias toward attributing purpose or design to natural phenomena) in scientific research and drug development. It explores how this 'teleological obstacle' contributes to high failure rates in clinical trials by fostering confirmation bias and oversimplified, single-target approaches. We detail foundational concepts, present methodological frameworks for bias mitigation, and provide troubleshooting strategies for common R&D pitfalls. We then support these approaches with evidence from educational interventions and the success of multi-target therapies, offering a resource that scientists and drug development professionals can use to improve research rigor and innovation.

Defining the Teleological Obstacle: Why Purpose-Driven Thinking Disrupts Scientific Progress

Teleological thinking is the human tendency to ascribe purpose to objects and events. This cognitive process is fundamental: early in development, children encounter objects and ask "what is this for?" The same tendency applies to events unfolding around us, where people often ascribe purpose to random occurrences [1].

While this thinking can encourage explanation-seeking and help find meaning in misfortune, it can become maladaptive at its extremes. Excessive teleological thinking is correlated with and can fuel delusion-like ideas and conspiracy theories. The key question for researchers is what drives this transition from helpful explanatory mechanism to harmful cognitive bias [1].

Core Mechanisms: Two Pathways of Causal Learning

Research reveals a fundamental distinction in how humans learn causal relationships, with direct implications for understanding teleological reasoning.

Associative Learning Pathway

This pathway involves largely automatic processes based on prediction errors. Learning occurs when outcomes are surprising; no surprise, no learning. This mechanism is evolutionarily ancient, demonstrated in species from monkeys to crickets [1].

Key Characteristic: When prediction errors are aberrant, this pathway can imbue random events with excessive significance, which may underpin excessive teleology [1].

Propositional Reasoning Pathway

This pathway involves explicit reasoning over rules or "propositions." It represents higher-level cognitive processing where individuals deduce relationships based on learned rules about how the world works [1].

Experimental Dissociation

The modified Kamin blocking paradigm can distinguish these pathways. In causal learning tasks, participants predict allergic reactions to food cues. The critical manipulation involves pre-learning phases that establish different rules [1]:

- Non-additive blocking tests associative learning (prediction error)

- Additive blocking tests propositional reasoning (rule-based deduction)

Table: Experimental Conditions in Kamin Blocking Paradigm

| Phase | Non-Additive Condition | Additive Condition |
|---|---|---|
| Pre-Learning | Basic cue-outcome pairing | Learn additivity rule (e.g., two foods cause stronger allergy together) |
| Learning | Establish single cue predictive power | Establish single cue predictive power |
| Blocking | Compound cues (A1B1+, A2B2+) | Compound cues (A1B1+, A2B2+) |
| Test | Measure responses to blocked cues (B1, B2) | Measure responses to blocked cues (B1, B2) |
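
To make the prediction-error account of blocking concrete, the following minimal sketch simulates the Learning and Blocking phases with the Rescorla-Wagner rule, a standard associative learning model. It is an illustration only and is not the computational model used in [1]; the learning rate `alpha`, the number of repeats, and the comparison compound `C1D1` (trained without a prior single-cue phase) are assumptions introduced here.

```python
def rescorla_wagner(phases, alpha=0.3, n_repeats=20):
    """Return associative strengths after training on each phase in order.

    phases: list of (cues, outcome) pairs; each pair is presented n_repeats times.
    alpha:  learning rate (assumed value, not taken from the source).
    """
    strengths = {}
    for cues, outcome in phases:
        for _ in range(n_repeats):
            # The prediction is the summed strength of all cues present.
            prediction = sum(strengths.get(c, 0.0) for c in cues)
            error = outcome - prediction  # prediction error ("surprise")
            for c in cues:
                strengths[c] = strengths.get(c, 0.0) + alpha * error
    return strengths


# Blocked cue: A1 is trained alone first, then paired with B1.
blocked = rescorla_wagner([(("A1",), 1.0), (("A1", "B1"), 1.0)])

# Comparison cue: the C1D1 compound is trained with no prior single-cue phase.
control = rescorla_wagner([(("C1", "D1"), 1.0)])

print(f"B1 (blocked):    {blocked['B1']:.3f}")
print(f"D1 (comparison): {control['D1']:.3f}")
```

Because A1 already predicts the outcome when the compound appears, the prediction error is close to zero and B1 acquires almost no strength, while D1 settles near half of the outcome value. Anything that inflates the prediction error during the Blocking phase would let B1 gain strength, which corresponds to the reduced blocking discussed in this article.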

Experimental Protocols & Methodologies

Standardized Teleology Assessment

The Belief in the Purpose of Random Events survey serves as a validated measure of teleological thinking. Participants rate the extent to which one event could have "had a purpose" for another, unrelated event (e.g., "a power outage happens during a thunderstorm and you have to do a big job by hand" and "you get a raise") [1].

Kamin Blocking Experimental Protocol

Objective: To dissociate associative versus propositional learning contributions to teleological thinking.

Procedure:

- Participant Training: Instruct participants they will learn about foods that may cause allergic reactions

- Pre-learning Phase (Additive condition only): Train participants on additivity rule using distinct cues (I, J)

- Learning Phase: Establish A cues as allergy predictors

- Blocking Phase: Present compound cues (A1B1+, A2B2+) where B cues are redundant

- Test Phase: Assess responses to previously blocked cues (B1, B2, D1, D2)

Controls: Include neutral cues (UV-, WX-, YZ-) to balance responses and assess baseline responding [1].

Troubleshooting Guide: Common Research Obstacles

FAQ: What constitutes a "blocking failure" and how is it measured?

Blocking failure occurs when participants continue to ascribe predictive power to redundant B cues despite their irrelevance. This is measured by comparing response rates to blocked cues versus genuinely novel cues. Excessive teleological thinkers show reduced blocking effects, learning more from irrelevant cues and overpredicting causal relationships [1].
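
One simple way to express this comparison is a per-participant difference score. The sketch below assumes hypothetical causal ratings on a shared numeric scale; the function name, the scale, and the example numbers are illustrative and are not taken from [1].

```python
from statistics import mean


def blocking_score(blocked_ratings, comparison_ratings):
    """Mean rating for comparison cues minus mean rating for blocked cues.

    A large positive score means the blocked cues were rated as weak
    predictors (intact blocking). A score near zero or below means the
    redundant cues were treated as predictive (blocking failure).
    """
    return mean(comparison_ratings) - mean(blocked_ratings)


# Hypothetical ratings on a 0-10 scale.
intact = blocking_score(blocked_ratings=[2, 3], comparison_ratings=[7, 8])
reduced = blocking_score(blocked_ratings=[6, 7], comparison_ratings=[7, 7])

print(intact, reduced)
```

A difference score keeps the measure independent of each participant's overall tendency to give high or low ratings, which the neutral control cues in the protocol are also meant to address.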

FAQ: Why might teleology measures correlate with delusion-like ideas?

Both phenomena may share roots in aberrant associative learning. Computational modeling suggests the relationship stems from excessive prediction errors that assign undue significance to random events, creating spurious meaningful connections [1].

FAQ: How can we minimize propositional reasoning contamination in associative learning assays?

Use the non-additive blocking paradigm without pre-training on additivity rules. This setup more purely taps into associative mechanisms without engaging higher-order reasoning about rules and propositions [1].

Applications to Drug Development Challenges

Teleological thinking also creates barriers in therapeutic development, where cognitive biases can distort decision-making.

Table: Drug Development Failure Analysis and Cognitive Connections

| Failure Cause | Percentage | Potential Teleological Connection |
|---|---|---|
| Lack of Clinical Efficacy | 40%-50% | Over-ascribing purpose to preclinical results based on spurious associations |
| Unmanageable Toxicity | 30% | Failure to block redundant cues in safety signaling |
| Poor Drug-like Properties | 10%-15% | Misattributing purpose to molecular characteristics without sufficient evidence |
| Commercial/Strategic Issues | 10% | Pattern recognition errors in market assessments |

Expert-Identified Barriers

Drug development professionals report these top challenges [2]:

- Rising clinical trial costs (49%)

- Patient recruitment difficulties (40%)

- Increasing trial complexity

These practical barriers can be exacerbated by teleological biases when researchers:

- Over-ascribe purpose to noisy clinical data

- See meaningful patterns in random trial outcomes

- Persist with unpromising drug candidates based on initial spurious associations

The STAR Framework Solution

The Structure-Tissue Exposure/Selectivity-Activity Relationship (STAR) framework addresses systematic thinking failures by classifying drug candidates more comprehensively [3]:

- Class I: High specificity/potency + high tissue exposure/selectivity (superior efficacy/safety)

- Class II: High specificity/potency + low tissue exposure/selectivity (high toxicity risk)

- Class III: Adequate specificity/potency + high tissue exposure/selectivity (often overlooked)

- Class IV: Low specificity/potency + low tissue exposure/selectivity (should terminate early)

This framework counteracts teleological biases by forcing systematic evaluation across multiple dimensions rather than over-valuing single promising associations.
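
The four classes above can be written as a small lookup, shown here as a hedged sketch. The categorical labels ("high", "adequate", "low") are taken from the class descriptions, but how a team maps measured potency or tissue exposure onto those labels is not specified in this article, so the function accepts labels rather than numbers. Combinations that the list does not define are returned as "unclassified" rather than guessed.

```python
# (specificity/potency, tissue exposure/selectivity) -> STAR class
STAR_CLASSES = {
    ("high", "high"): "I",       # superior efficacy/safety
    ("high", "low"): "II",       # high toxicity risk
    ("adequate", "high"): "III", # often overlooked
    ("low", "low"): "IV",        # should terminate early
}


def star_class(potency, tissue_selectivity):
    """Return the STAR class for a pair of categorical labels.

    Pairs not covered by the four listed classes yield "unclassified".
    """
    return STAR_CLASSES.get((potency, tissue_selectivity), "unclassified")


# Hypothetical candidates for illustration only.
candidates = {
    "cmpd-A": ("high", "high"),
    "cmpd-B": ("high", "low"),
    "cmpd-C": ("adequate", "high"),
    "cmpd-D": ("low", "low"),
}
for name, labels in candidates.items():
    print(name, "-> Class", star_class(*labels))
```

Requiring both labels before a class is assigned mirrors the point of the framework: a candidate cannot be ranked on potency alone.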

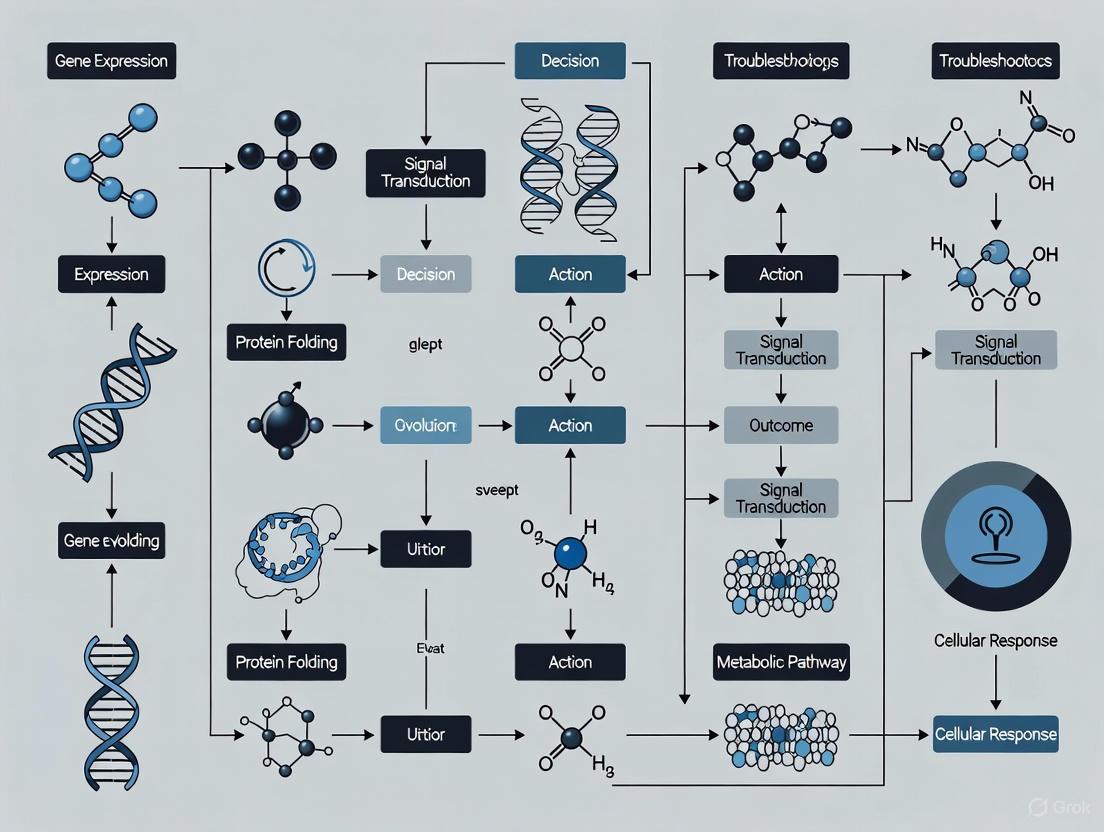

Visualizing Research Pathways

Experimental Workflow for Teleology Research

Two Pathways of Teleological Thinking

The Scientist's Toolkit: Essential Research Materials

Table: Key Research Reagents and Assessments

| Research Tool | Function/Purpose | Application Context |
|---|---|---|
| Belief in Purpose of Random Events Survey | Validated measure of teleological thinking tendency | Baseline assessment for all study participants |
| Kamin Blocking Paradigm (Non-additive) | Assess pure associative learning mechanisms | Isolating prediction error-driven learning |
| Kamin Blocking Paradigm (Additive) | Assess propositional reasoning with rule-learning | Testing explicit reasoning contributions |
| Computational Modeling Tools | Quantify prediction errors and learning parameters | Data analysis phase for mechanism identification |
| Delusion-like Ideation Measures | Assess correlated cognitive tendencies | Establishing connection to clinical phenomena |

Understanding the Teleological Obstacle

What is teleological thinking in scientific research?

Teleological thinking is the tendency to ascribe purpose or goal-directedness to objects and events. In research, this manifests as interpreting phenomena as happening for a reason rather than through natural mechanisms [4]. While natural in human cognition, this default can become an obstacle when it leads researchers to assume purposes where none exist, gather only confirmatory evidence, and fail to properly test null hypotheses [5] [6].

How does confirmation bias reinforce teleological obstacles?

Confirmation bias describes our tendency to seek, interpret, and recall information that confirms our preexisting beliefs while avoiding or dismissing contradictory evidence [7]. In active information acquisition, researchers spend significantly more time examining evidence supporting their initial hypotheses while neglecting disconfirming evidence [7]. This creates a self-reinforcing cycle where teleological assumptions appear increasingly validated through selective evidence gathering.

Troubleshooting Guides & FAQs

FAQ: Critical Research Questions

Q: My experiments keep supporting my initial hypothesis. Should I be concerned?

A: Yes. Consistently supportive results may indicate confirmation bias rather than a robust hypothesis. Actively seek disconfirming evidence through controlled tests and consider alternative explanations. Consistently positive outcomes across multiple experimental iterations should raise concerns about biased design or interpretation [8].

Q: How can I distinguish between legitimate functional explanations and problematic teleology?

A: Functional explanations describe how a mechanism operates within a system, while teleological explanations attribute purpose or design to that mechanism. Proper functional analysis examines actual causal mechanisms without assuming intentional design, even in biological systems [4].

Q: My team strongly believes in our working hypothesis. How can we maintain objectivity?

A: Implement structured challenges through "red team" exercises where members actively attempt to disprove the hypothesis. Create an open research atmosphere where data and experimental design are examined by those not directly involved in the project [8].

Q: What practical steps can I take to minimize teleological bias in experimental design?

A: Before experiments, pre-register your hypotheses, methods, and analysis plans. Define what results would support your hypothesis, what would disprove it, and what would be inconclusive. Design experiments that can genuinely falsify your predictions, not just confirm them [9] [8].

Troubleshooting Common Scenarios

Scenario: Repeated failed attempts to reproduce exciting initial findings

- Diagnosis: Potential HARKing (Hypothesizing After Results are Known) or cherry-picking in original study

- Solution: Implement exact protocol replication with predefined success criteria and sample sizes determined by power analysis

Scenario: Inconsistent results across similar experiments

- Diagnosis: Uncontrolled researcher degrees of freedom or vague hypothesis

- Solution: Apply strong inference approach by developing multiple competing hypotheses and designing crucial experiments to systematically eliminate alternatives

Scenario: Resistance to abandoning an elegant but unsupported hypothesis

- Diagnosis: Teleological commitment to a "beautiful" theory overlooking contradictory evidence

- Solution: Establish predetermined criteria for hypothesis abandonment and regularly review evidence against the hypothesis

Experimental Protocols & Data

Protocol: Testing for Sampling Bias in Information Gathering

This protocol adapts methods from active information sampling research to identify confirmation bias in laboratory settings [7].

Materials:

- Research data with conflicting evidence patterns

- Data logging system to track information search patterns

- Time-tracking software

Procedure:

- Present initial hypothesis to researchers

- Provide access to mixed evidence database (supporting and contradicting hypothesis)

- Allow free exploration of evidence for predetermined period

- Log time spent examining different evidence types

- Analyze sampling bias ratio (time with confirmatory vs. disconfirmatory evidence)

- Compare subsequent hypothesis confidence levels against evidence sampling patterns

Interpretation: A sampling bias ratio >1.5:1 indicates significant confirmation bias in information gathering. Correlate this with confidence ratings to identify overconfidence based on selective exposure [7].
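
The ratio in the interpretation step can be computed directly from the time log. This is a minimal sketch that assumes a hypothetical log format of `(evidence_type, seconds)` pairs; the labels, the example durations, and the function name are illustrative, while the 1.5 threshold is the one stated above.

```python
def sampling_bias_ratio(log):
    """Ratio of time spent on confirmatory vs. disconfirmatory evidence.

    log: iterable of (evidence_type, seconds) pairs, where evidence_type is
         "confirmatory" or "disconfirmatory" (any other label raises KeyError).
    """
    totals = {"confirmatory": 0.0, "disconfirmatory": 0.0}
    for kind, seconds in log:
        totals[kind] += seconds
    if totals["confirmatory"] == 0 and totals["disconfirmatory"] == 0:
        raise ValueError("no viewing time was logged")
    if totals["disconfirmatory"] == 0:
        return float("inf")  # only confirmatory evidence was examined
    return totals["confirmatory"] / totals["disconfirmatory"]


# Hypothetical log for one researcher.
log = [
    ("confirmatory", 120),
    ("disconfirmatory", 40),
    ("confirmatory", 60),
    ("disconfirmatory", 50),
]
ratio = sampling_bias_ratio(log)  # 180 s / 90 s
flagged = ratio > 1.5             # threshold from the interpretation above
print(ratio, flagged)
```

Returning infinity when no disconfirming evidence was viewed keeps the most extreme case above any threshold instead of failing with a division error.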

Protocol: Null Hypothesis Testing Rigor Assessment

This protocol evaluates whether teleological thinking is undermining proper hypothesis testing [5].

Materials:

- Experimental design documents

- Statistical analysis plans

- Previous research reports

Procedure:

- Document all explicit and implicit assumptions in the research design

- Identify whether each assumption has been properly tested or is taken as given

- For each hypothesis, verify that the null hypothesis has been clearly stated

- Check experimental design for ability to reject the null hypothesis

- Review statistical power and effect size calculations

- Assess whether alternative explanations have been adequately considered

Interpretation: Research designs with vague null hypotheses, low power to detect effects, or inadequate controls for alternatives indicate problematic teleological influence [5] [10].

Quantitative Evidence: Teleological Thinking and Research Outcomes

Table 1: Research Practices and Their Impact on Research Waste

| Research Practice | Prevalence in Ecology | Impact on Research Waste | Primary Teleological Link |
|---|---|---|---|
| Selective reporting | 60-85% of studies | High - creates biased evidence base | Confirmation bias in result interpretation |
| HARKing | ~50% in some fields | Medium-high - distorts literature | Teleological narrative construction |
| Incomplete reporting | ~80% of studies | Medium - hinders replication | Oversimplification of complex systems |
| Poor methodological design | 30-50% of studies | High - produces unreliable results | Untested assumptions about mechanisms |
| P-hacking | 25-40% of studies | Medium - inflates false positives | Seeking patterns to support hypotheses |

Source: Adapted from research waste analyses [9]

Table 2: Experimental Findings on Confirmation Bias in Information Sampling

| Experimental Condition | Sampling Bias Ratio | Effect on Confidence | Change-of-Mind Rate |
|---|---|---|---|
| Free sampling (active) | 1.8:1 chosen vs. unchosen | Increased by 23% with biased sampling | Reduced by 35% with high confidence |
| Fixed sampling (passive) | 1:1 (no bias) | No significant change | Appropriate to evidence strength |
| High initial confidence | 2.3:1 chosen vs. unchosen | Further increased by biased sampling | Reduced by 52% |
| Low initial confidence | 1.2:1 chosen vs. unchosen | Moderately increased | Reduced by 18% |

Source: Data from active information sampling experiments [7]

Signaling Pathways & Cognitive Mechanisms

The Teleological Thinking Cognitive Pathway

This diagram illustrates the cognitive mechanisms underlying teleological thinking and how it leads to research bias.

Research Integrity Protection Workflow

This workflow details procedures to safeguard against teleological biases throughout the research process.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Combating Teleological Bias

| Tool/Resource | Primary Function | Application Context | Implementation Notes |
|---|---|---|---|
| Pre-registration platforms | Prevent HARKing and p-hacking | All experimental research | Commit to hypotheses, methods, and analysis plans before data collection |
| Registered Reports | Peer review before results | High-risk hypothesis testing | Journal evaluates methodology rather than results |
| Open science frameworks | Enable transparency and replication | All research stages | Share protocols, data, code, and materials |
| Bias detection protocols | Identify confirmation patterns | Data collection and analysis | Monitor time spent on different evidence types [7] |
| Strong inference methodology | Systematically eliminate alternatives | Hypothesis testing | Develop multiple competing hypotheses [9] |
| Blind analysis procedures | Reduce interpretation bias | Data analysis | Analyze data without knowing experimental conditions |
| Collaboration outside specialty | Introduce alternative perspectives | Study design and interpretation | Counter disciplinary assumptions |
| Cognitive load management | Reduce teleological defaults | Complex reasoning tasks | Teleological thinking increases under time pressure [11] |

Clinical drug development remains a high-risk endeavor, with an estimated 90% of drug candidates failing during clinical phases, despite rigorous preclinical optimization [3]. A significant portion of this failure—40-50%—is attributed to lack of clinical efficacy, while approximately 30% results from unmanageable toxicity [3]. This persistent high attrition rate occurs despite implementation of sophisticated target validation and drug optimization strategies, raising critical questions about potential overlooked factors in current discovery paradigms.

The predominant single-target ("one-drug-one-target") paradigm, while successful for some therapeutic areas, demonstrates fundamental limitations when applied to complex, multifactorial diseases [12]. This reductionist approach often fails to account for the networked nature of biological systems, leading to efficacy failures when compensatory pathways emerge or when on-target toxicity manifests due to insufficient tissue selectivity [3] [12].

Quantitative Analysis of Clinical Attrition

Table 1: Primary Causes of Clinical Development Failure (2010-2017 Data)

| Failure Cause | Percentage | Primary Contributing Factors |
|---|---|---|
| Lack of Clinical Efficacy | 40-50% | Inadequate target validation in human disease; poor tissue exposure; biological redundancy in complex diseases |
| Unmanageable Toxicity | ~30% | On-target toxicity in vital organs; off-target effects; tissue accumulation in sensitive organs |
| Poor Drug-Like Properties | 10-15% | Inadequate pharmacokinetics; poor solubility; metabolic instability |
| Commercial/Strategic Factors | ~10% | Lack of commercial need; poor clinical trial planning |

Table 2: Comparison of Pharmacological Paradigms

| Feature | Traditional Single-Target Pharmacology | Network/Systems Pharmacology |
|---|---|---|
| Targeting Approach | Single-target | Multi-target / network-level |
| Disease Suitability | Monogenic or infectious diseases | Complex, multifactorial disorders |
| Model of Action | Linear (receptor-ligand) | Systems/network-based |
| Risk of Side Effects | Higher (off-target effects) | Lower (network-aware prediction) |
| Failure in Clinical Trials | Higher (60-70%) | Lower due to pre-network analysis |
| Personalized Therapy Potential | Limited | High potential (precision medicine) |

Troubleshooting Guide: Addressing Single-Target Paradigm Limitations

FAQ 1: Why do drug candidates with excellent target potency and selectivity still fail in clinical trials?

Issue: Persistent efficacy failures despite optimal target engagement metrics.

Troubleshooting Guide:

- Problem: Overemphasis on structure-activity relationship (SAR) at the expense of structure-tissue exposure/selectivity relationship (STR)

- Solution: Implement the Structure-Tissue Exposure/Selectivity-Activity Relationship (STAR) framework during candidate selection [3]

- Protocol: Classify drug candidates into four categories based on potency/specificity and tissue exposure/selectivity:

- Class I: High specificity/potency + High tissue exposure/selectivity (Low dose, superior efficacy/safety)

- Class II: High specificity/potency + Low tissue exposure/selectivity (High dose, high toxicity risk)

- Class III: Adequate specificity/potency + High tissue exposure/selectivity (Low dose, manageable toxicity)

- Class IV: Low specificity/potency + Low tissue exposure/selectivity (Terminate early)

- Problem: Inadequate accounting for biological redundancy and network adaptations

- Solution: Employ network pharmacology approaches to identify critical network nodes and potential bypass mechanisms [12]

- Protocol: Construct protein-protein interaction networks using STRING and BioGRID databases; identify hub nodes using centrality measures (degree, betweenness); validate critical nodes through siRNA screening
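
The hub-identification step in the protocol above can be illustrated on a toy network. This sketch uses only the Python standard library and hypothetical node names; in practice the edge list would come from a STRING or BioGRID export, and NetworkX (listed later in the toolkit) provides `degree_centrality` and `betweenness_centrality` for the same measures. The betweenness values here are left unnormalized.

```python
from collections import deque


def build_graph(edges):
    """Build an undirected adjacency map from (node, node) pairs."""
    adj = {}
    for a, b in edges:
        adj.setdefault(a, set()).add(b)
        adj.setdefault(b, set()).add(a)
    return adj


def degree_centrality(adj):
    """Fraction of other nodes each node touches (assumes at least 2 nodes)."""
    n = len(adj)
    return {v: len(nbrs) / (n - 1) for v, nbrs in adj.items()}


def betweenness_centrality(adj):
    """Unnormalized betweenness for an unweighted, undirected graph (Brandes)."""
    score = {v: 0.0 for v in adj}
    for s in adj:
        order, preds = [], {v: [] for v in adj}
        sigma = {v: 0 for v in adj}   # number of shortest paths from s
        dist = {v: -1 for v in adj}   # BFS distance from s
        sigma[s], dist[s] = 1, 0
        queue = deque([s])
        while queue:
            v = queue.popleft()
            order.append(v)
            for w in adj[v]:
                if dist[w] < 0:
                    dist[w] = dist[v] + 1
                    queue.append(w)
                if dist[w] == dist[v] + 1:
                    sigma[w] += sigma[v]
                    preds[w].append(v)
        delta = {v: 0.0 for v in adj}
        for w in reversed(order):     # accumulate from the farthest nodes back
            for v in preds[w]:
                delta[v] += sigma[v] / sigma[w] * (1 + delta[w])
            if w != s:
                score[w] += delta[w]
    # Each unordered pair was counted from both endpoints.
    return {v: b / 2 for v, b in score.items()}


# Hypothetical interaction edges for illustration only.
edges = [("HUB", "P1"), ("HUB", "P2"), ("HUB", "P3"), ("P3", "P4")]
adj = build_graph(edges)
dc = degree_centrality(adj)
bc = betweenness_centrality(adj)
for node in sorted(adj, key=bc.get, reverse=True):
    print(node, round(dc[node], 2), bc[node])
```

Ranking by both measures matters because a node with modest degree (P3 in this toy example) can still sit on many shortest paths, which makes it a plausible bypass route when a hub is inhibited.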

FAQ 2: How can researchers overcome teleological thinking in target validation?

Issue: Unconscious assignment of purpose or intent to biological processes, leading to oversimplified disease models.

Troubleshooting Guide:

- Problem: Teleological explanations in experimental design and interpretation

- Solution: Implement explicit framework challenges to intentionality assumptions in biological processes [13] [14]

- Protocol:

- For each hypothesis, formulate alternative non-teleological explanations

- Actively question "why" versus "how" mechanisms in experimental design

- Incorporate evolutionary perspective to understand trait origins without assigned purpose

- Problem: Essentialist thinking about disease states and drug targets

FAQ 3: What experimental strategies can reduce toxicity-related attrition?

Issue: Unmanageable toxicity accounts for approximately 30% of clinical failures.

Troubleshooting Guide:

- Problem: Tissue accumulation in vital organs leading to toxicity

- Solution: Early assessment of tissue exposure/selectivity relationships [3]

- Protocol: Implement quantitative whole-body autoradiography in preclinical species; correlate with tissue-specific toxicity markers; use PET imaging for human tissue distribution prediction

- Problem: On-target toxicity due to target expression in healthy tissues

- Solution: Multi-target therapeutic approach to enable lower individual target modulation [12]

- Protocol: Identify synergistic target combinations through network analysis of disease modules; design polypharmacological agents with optimized multi-target profiles

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Network Pharmacology Research

| Tool/Category | Specific Resources | Functionality |
|---|---|---|
| Drug Information Databases | DrugBank, PubChem, ChEMBL | Drug structures, targets, pharmacokinetics data |
| Gene-Disease Associations | DisGeNET, OMIM, GeneCards | Disease-linked genes, mutations, gene function |
| Target Prediction Tools | Swiss Target Prediction, PharmMapper, SEA | Predicts protein targets from compound structures |
| Protein-Protein Interactions | STRING, BioGRID, IntAct | High-confidence protein interaction networks |
| Pathway Analysis | KEGG, Reactome | Pathway mapping and functional enrichment |
| Network Construction & Analysis | Cytoscape, NetworkX | Network visualization and topological analysis |
| Machine Learning Frameworks | DeepPurpose, DeepDTnet | Predicts new drug-target interactions |

Experimental Protocols for Paradigm Shift Validation

Protocol 1: Network Pharmacology Workflow for Target Identification

Methodology:

- Data Retrieval and Curation

- Source drug and target data from DrugBank, PubChem, and ChEMBL

- Obtain disease-associated genes from DisGeNET, OMIM, and GeneCards

- Retrieve multi-omics data from GEO, TCGA, and ProteomicsDB

- Standardize identifiers, remove duplicates, and filter based on confidence scores

- Target Prediction and Filtering

- Employ both ligand-based (QSAR modeling, similarity ensemble approaches) and structure-based (molecular docking with AutoDock Vina) predictions

- Filter targets based on binding profiles, disease tissue expression, and Gene Ontology annotations

- Network Construction and Analysis

- Construct drug-target, target-disease, and protein-protein interaction networks using Cytoscape

- Perform topological analysis using graph-theoretical measures (degree centrality, betweenness, closeness)

- Identify functional modules using community detection algorithms (MCODE, Louvain)

- Conduct pathway enrichment analysis using DAVID and g:Profiler

- Validation

- Train machine learning models (SVM, random forests, graph neural networks) on DeepPurpose datasets

- Validate predictions through molecular docking simulations and experimental methods (SPR, qPCR)
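
The curation step at the start of Protocol 1 (standardize identifiers, remove duplicates, filter on confidence scores) can be sketched as follows. The record fields, the 0.7 cutoff, and the use of upper-cased gene symbols as the standardized identifier are all assumptions made for illustration; real pipelines typically map symbols to stable database identifiers, and each source defines its own confidence scale.

```python
def curate(records, min_confidence=0.7):
    """Standardize gene symbols, drop low-confidence rows, and deduplicate.

    records: list of dicts with hypothetical keys "gene", "source", "confidence".
    When a gene appears more than once, the highest-confidence record is kept.
    """
    best = {}
    for rec in records:
        gene = rec["gene"].strip().upper()  # simplistic standardization
        if rec["confidence"] < min_confidence:
            continue
        if gene not in best or rec["confidence"] > best[gene]["confidence"]:
            best[gene] = {**rec, "gene": gene}
    return sorted(best.values(), key=lambda r: r["gene"])


# Hypothetical disease-gene associations for illustration only.
raw = [
    {"gene": "tp53 ", "source": "DisGeNET", "confidence": 0.9},
    {"gene": "TP53", "source": "GeneCards", "confidence": 0.8},
    {"gene": "EGFR", "source": "OMIM", "confidence": 0.5},
    {"gene": "Akt1", "source": "DisGeNET", "confidence": 0.75},
]
curated = curate(raw)
for rec in curated:
    print(rec["gene"], rec["source"], rec["confidence"])
```

Filtering before deduplication means a low-confidence duplicate can never displace a better-supported record for the same gene.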

Protocol 2: STAR Framework Implementation for Candidate Selection

Methodology:

- Compound Classification

- Determine in vitro potency (IC50/Ki) against intended target

- Assess selectivity against related target families (e.g., kinome screening)

- Quantify tissue exposure and selectivity using advanced PK/PD modeling

- Categorize candidates into Class I-IV based on integrated profile

- Dose Optimization

- For Class I compounds: Proceed with low-dose regimens

- For Class II compounds: Evaluate toxicity mitigation strategies or consider termination

- For Class III compounds: Prioritize despite modest potency if therapeutic index favorable

Protocol 3: AI-Enhanced Multi-Target Discovery Platform

Methodology:

- Data Integration

- Aggregate multi-omics data (genomics, transcriptomics, proteomics, metabolomics)

- Incorporate clinical outcomes and real-world evidence

- Apply natural language processing to unstructured biomedical literature

- Predictive Modeling

- Train deep learning models (generative adversarial networks, variational autoencoders) for de novo molecular design

- Implement reinforcement learning for multi-objective optimization (potency, selectivity, ADME properties)

- Use graph neural networks for polypharmacological profile prediction

- Validation

- Conduct high-content phenotypic screening on patient-derived samples

- Utilize organ-on-chip systems for human-relevant toxicity assessment

- Implement microdose clinical trials with PET imaging for human tissue distribution

Emerging Solutions and Future Directions

The integration of artificial intelligence in drug discovery platforms demonstrates potential to overcome single-target paradigm limitations. AI-designed molecules have reached clinical trials in record times, with examples like Insilico Medicine's idiopathic pulmonary fibrosis candidate progressing from target discovery to Phase I in 18 months compared to the typical 3-6 years [15] [16].

The Recursion-Exscientia merger represents a strategic consolidation creating integrated AI-powered platforms combining generative chemistry with extensive phenomic screening data [15]. Such integrated approaches enable simultaneous optimization of multiple parameters, potentially addressing the tissue exposure/selectivity challenges that contribute significantly to clinical attrition.

Network pharmacology, supported by AI and multi-omics data integration, provides a framework for intentional polypharmacology, designing therapeutics that modulate multiple network nodes simultaneously with optimized selectivity profiles [12]. This represents a fundamental shift from the serendipitous polypharmacology often observed with single-target drugs, toward deliberate systems-level therapeutic intervention.

Distinguishing Warranted and Unwarranted Teleology in Experimental Design

FAQs: Navigating Teleological Pitfalls in Your Research

What is the core difference between warranted and unwarranted teleology in experimental design?

Warranted teleology involves a purpose-driven experimental design that is justified by a sound hypothesis, appropriate controls, and a rigorous methodology that can reliably support causal inferences. Unwarranted teleology occurs when researchers claim a purpose or cause-effect relationship that the experimental design cannot support due to fundamental flaws like missing controls, uncontrolled confounding variables, or inadequate sample size [17] [18].

A key experiment failed to produce clear results. How do I troubleshoot the design?

Begin by systematically comparing your implemented design against an ideal, statistically-powered design [17]. Common pitfalls include:

- Inadequate Design: Lack of a clear hypothesis, absence of a control group, or insufficient sample size, which makes it tough to detect real effects [18].

- Confounding Variables: Hidden factors that influence your outcomes, making it hard to tell if your treatment had any real effect [18].

- Data Quality Issues: Poor data collection methods that introduce bias or errors, rendering the results unreliable [18].

How can I prevent biased assumptions from influencing my experimental conclusions?

Promote objectivity within the research team by consciously acknowledging preconceived notions. Clinging to these can cause teams to ignore surprising findings that could be game-changers. A culture that is open to unexpected results is essential for uncovering true insights [18].

My experiment has a major flaw. Is the data still publishable?

It might be, provided you are realistic and conservative in your assessment [17]. First, objectively determine what valid questions your current data can still answer. Then, clearly explain the limitations in your manuscript and detail how the research should be improved in future studies. This honest approach strengthens credibility [17].

Troubleshooting Guides: Resolving Common Experimental Obstacles

Problem: Inconclusive Results from an A/B Test

This guide addresses issues where an experiment fails to show a statistically significant difference between control and treatment groups.

Check Your Sample Size:

- Symptoms: Low statistical power, high variance in results.

- Solution: Pre-determine an adequate sample size using power analysis before starting the experiment. Insufficient sample size is a common pitfall that leaves results unreliable [18].

Verify Control Group Integrity:

- Symptoms: Unable to isolate the effect of your treatment.

- Solution: Ensure your control group is identical to the treatment group in all aspects except for the intervention. Running an experiment without a control group is like trying to measure progress without a starting point [18].

Audit for Confounding Variables:

- Symptoms: Observed effects could be caused by an external factor.

- Solution: Identify and control for potential confounders during the design phase through randomization or statistical controls. Unchecked confounders can invalidate your conclusions [18].
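
The power analysis recommended under "Check Your Sample Size" can be approximated in a few lines. This sketch assumes a two-sided comparison of two group means with equal group sizes and uses the normal approximation; the standardized effect size (Cohen's d) and the default `alpha` and `power` values are assumptions to be replaced with study-specific choices. Exact t-based calculations give slightly larger numbers.

```python
from math import ceil
from statistics import NormalDist


def sample_size_per_group(effect_size, alpha=0.05, power=0.80):
    """Approximate n per group for a two-sided, two-sample comparison of means.

    effect_size: standardized mean difference (Cohen's d), must be positive.
    """
    if effect_size <= 0:
        raise ValueError("effect_size must be positive")
    z = NormalDist()  # standard normal distribution
    z_alpha = z.inv_cdf(1 - alpha / 2)  # critical value for the two-sided test
    z_beta = z.inv_cdf(power)           # quantile for the desired power
    return ceil(2 * ((z_alpha + z_beta) / effect_size) ** 2)


for d in (0.2, 0.5, 0.8):
    print(f"d = {d}: {sample_size_per_group(d)} per group")
```

The required sample size grows with the inverse square of the effect size, so halving the expected effect roughly quadruples the number of participants needed.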

Problem: Failed Correlation Study in Method Comparison

This guide is based on a real consulting example where a new, cheaper measurement method (B) was being tested against a state-of-the-art method (A) [17].

Diagnose the Flaw:

- Background: A client tested 27 participants with both methods over eight days but found no significant within-individual correlation [17].

- Root Cause: The design missed a crucial element: a treatment. Without an intervention (e.g., training), there was no expected within-individual variation for the tests to capture. The design was only measuring noise [17].

Apply Corrective Measures:

- Ideal vs. Reality: The ideal design would include pre- and post-treatment measurements [17].

- Realistic Assessment: Since the current set-up cannot exploit within-individual differences, pivot to analyzing between-individual variation. Use a linear regression with relevant control variables (e.g., gender, age, weight) to see if both methods capture the same inter-subject variation [17].

- Conservative Reporting: Publish the results with a clear explanation of the limitation and a proposal for an improved study with a treatment-based design [17].

Experimental Protocols & Data

| Pitfall Category | Specific Issue | Proposed Solution | Key Reference |

|---|---|---|---|

| Overall Design | Lack of clear hypothesis | Define a focused, testable hypothesis before data collection. | [18] |

| Overall Design | Absence of a control group | Include a control group to establish a baseline for comparison. | [18] |

| Overall Design | Insufficient sample size | Perform a power analysis pre-experiment to determine adequate sample size. | [18] |

| Data Integrity | Uncontrolled confounding variables | Use randomization and statistical controls to account for hidden factors. | [18] |

| Data Integrity | Poor data collection methods | Implement reliable, standardized data collection processes. | [18] |

| Data Integrity | Mishandling of outliers | Investigate the cause of outliers; use Winsorization or robust statistics. | [18] |

| Statistical Analysis | Peeking at interim results | Adhere to pre-defined analysis plans to avoid inflated false positives. | [18] |

| Statistical Analysis | Multiple comparisons problem | Apply statistical corrections (e.g., Bonferroni) to control error rates. | [18] |

| Research Mindset | Biased assumptions | Foster a culture of objectivity and openness to unexpected results. | [18] |

| Research Mindset | Unwarranted causal claims | Be conservative; explain how you addressed causality and let the audience judge. | [17] |

Key Research Reagent Solutions for Drug Discovery

| Reagent / Technology | Primary Function | Key Challenge Addressed | Key Reference |

|---|---|---|---|

| Induced Pluripotent Stem Cells (iPSCs) | Differentiate into human cells to accurately model diseases in vitro. | Overcomes limitations of animal models, which are often poor predictors of human responses. Provides a more accurate disease phenotype. | [19] |

| AI Drug Discovery Platforms | Use machine learning for small molecule discovery, analysis of cellular behaviors, and insights into disease mechanisms. | Tackles rising costs and high failure rates by improving the efficiency and accuracy of hit identification and lead optimization. | [19] |

| Traditional Animal Models | Historically used to predict human toxicity and drug efficacy. | Faces challenges due to inaccurate human response prediction, ethical concerns, and high handling costs. | [19] |

Visualizing Experimental Workflows

Diagram: Warranted vs. Unwarranted Teleology Pathways

Diagram: Troubleshooting an Imperfect Experimental Design

Practical Frameworks for Mitigation: From Hypothesis Generation to Complex Disease Modeling

Troubleshooting Guides

Guide 1: Addressing Common Misinterpretations of "Non-Significant" Results

Problem: Researchers often misinterpret results that fail to reject the null hypothesis (H₀) as evidence for the null hypothesis being true.

Solution:

- Understand that "failing to reject H₀" is not equivalent to "accepting H₀" as true. Your study provides insufficient evidence against the null hypothesis, but doesn't prove it correct [20].

- Use equivalence testing when you need to demonstrate the absence of a meaningful effect. This involves defining a bound of equivalence (a range of values considered clinically irrelevant) and testing whether your confidence interval falls entirely within this bound [21].

- Avoid claiming "no difference" based solely on a non-significant p-value. Instead, report the confidence intervals to show the range of effect sizes compatible with your data [21].

Application Example: In assessing bleeding risk for a drug where the hazard ratio (HR) is 0.86 (95% CI 0.40 to 1.87; p = 0.71), do not conclude "no increase in bleeding risk." Instead, note that the data are compatible with anything from a markedly lower risk (HR = 0.40) to a markedly higher risk (HR = 1.87), so further investigation is required [21].
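
To make the bleeding-risk example concrete, the following sketch back-calculates the standard error and a two-sided p-value from the reported hazard ratio and its 95% CI. It assumes the interval is a Wald interval that is symmetric on the log scale; the function name is illustrative.

```python
import math
from statistics import NormalDist


def p_value_from_hr_ci(hr: float, ci_low: float, ci_high: float, level: float = 0.95) -> float:
    """Back-calculate a two-sided Wald p-value for HR = 1 from a reported CI."""
    z_crit = NormalDist().inv_cdf(0.5 + level / 2)
    # On the log scale the CI is symmetric, so its half-width gives the SE.
    se = (math.log(ci_high) - math.log(ci_low)) / (2 * z_crit)
    z = math.log(hr) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))


hr, ci_low, ci_high = 0.86, 0.40, 1.87  # values quoted in the example above
p = p_value_from_hr_ci(hr, ci_low, ci_high)
spans_benefit_and_harm = ci_low < 1.0 < ci_high
print(f"p = {p:.2f}; CI spans both benefit and harm: {spans_benefit_and_harm}")
```

The recovered p-value should land close to the reported 0.71 (small differences are plausibly due to rounding of the published interval). The more useful output is the flag: the interval covers both a substantial reduction and a substantial increase in risk.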

Guide 2: Correcting False Discovery Rate (FDR) Control in High-Throughput Experiments

Problem: In studies testing hundreds to millions of hypotheses (e.g., genomics), traditional family-wise error rate (FWER) controls are overly conservative, while unadjusted testing yields too many false positives.

Solution:

- For high-throughput experiments where accepting some false positives is tolerable to increase true discoveries, use False Discovery Rate (FDR) control instead of FWER [22].

- Implement modern FDR methods that use informative covariates (e.g., IHW, AdaPT, FDRreg) to increase power. These methods prioritize hypotheses using complementary information while maintaining FDR control [22].

- Ensure covariates used in weighted FDR procedures are independent of p-values under the null hypothesis [23].

Application Example: In an expression quantitative trait loci (eQTL) study, use the genomic distance between polymorphisms and genes as an informative covariate, as cis interactions are more likely significant than trans interactions [22].
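
As a baseline for the covariate-aware methods named above, here is a minimal sketch of the classic Benjamini-Hochberg procedure, which those methods extend. It is not an implementation of IHW, AdaPT, or FDRreg, and the p-values are made up for illustration.

```python
def benjamini_hochberg(p_values: list[float]) -> list[float]:
    """Return BH-adjusted p-values (q-values) in the original order."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down, enforcing monotonicity.
    for rank in range(m, 0, -1):
        idx = order[rank - 1]
        running_min = min(running_min, p_values[idx] * m / rank)
        adjusted[idx] = running_min
    return adjusted


p_values = [0.01, 0.04, 0.03, 0.005]  # made-up p-values for illustration
q_values = benjamini_hochberg(p_values)
fdr_discoveries = [q <= 0.05 for q in q_values]
bonferroni_discoveries = [p <= 0.05 / len(p_values) for p in p_values]
print(q_values, fdr_discoveries, bonferroni_discoveries)
```

On this toy input, FDR control at 5% keeps all four hypotheses while Bonferroni keeps only two, which illustrates the power gain that motivates FDR control in high-throughput settings.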

Guide 3: Proper Application of Multiple Testing Corrections

Problem: Researchers either ignore multiple testing issues or apply inappropriate corrections that eliminate true positives.

Solution:

- For studies with primary and secondary endpoints, use hierarchical testing procedures that reflect the relative importance of endpoints [23].

- Assign weights to hypotheses in FDR control to reflect their relative importance, where rejecting a highly weighted hypothesis carries more importance than a low weighted one [23].

- Consider using gatekeeper procedures that test primary endpoints before secondary ones, controlling the family-wise error rate while maintaining power for logically related hypotheses [23].

Application Example: In clinical trials with one primary and multiple secondary endpoints, use hierarchical weighted FDR procedures that test primary endpoints first, then proceed to secondary endpoints only if the intersection hypothesis for secondaries is rejected [23].
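
The gatekeeping idea can be sketched with the simplest hierarchical scheme, a fixed-sequence procedure: endpoints are tested in a pre-specified order at the full α, and testing stops at the first non-rejection. This is a swapped-in simplification, not the hierarchical weighted FDR procedure of [23]; the endpoint names and p-values are hypothetical.

```python
def fixed_sequence_test(ordered_p_values: list[tuple[str, float]],
                        alpha: float = 0.05) -> dict[str, bool]:
    """Test endpoints in their pre-specified order, each at the full alpha.

    Testing stops at the first endpoint that is not rejected; every later
    endpoint is then left unrejected, whatever its p-value.
    """
    results: dict[str, bool] = {}
    gate_open = True
    for name, p in ordered_p_values:
        gate_open = gate_open and p <= alpha
        results[name] = gate_open
    return results


# Hypothetical endpoints and p-values, listed in order of clinical priority.
endpoints = [("primary", 0.010), ("secondary_1", 0.030),
             ("secondary_2", 0.200), ("secondary_3", 0.001)]
decisions = fixed_sequence_test(endpoints)
print(decisions)
```

Note that `secondary_3` is not rejected despite its very small p-value, because the gate closed at `secondary_2`. That is the price of controlling the family-wise error rate without splitting α.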

Frequently Asked Questions (FAQs)

FAQ 1: What is the difference between a p-value and the probability that the null hypothesis is true?

A p-value is the probability of observing data at least as extreme as yours, assuming the null hypothesis (H₀) is true. It is not the probability that H₀ is itself true [24] [25]. A common misinterpretation is that a p-value of 0.02 means there is a 2% chance the result is due to chance; rather, it means that if H₀ were true, a sample result at least this extreme would occur only 2% of the time [25].
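
A small simulation can make this interpretation tangible. The sketch below repeatedly draws samples from a population in which H₀ is true by construction and records how often a two-sided z-test yields p ≤ 0.02. The sample size, number of experiments, and seed are arbitrary illustrative choices.

```python
import random
from statistics import NormalDist, fmean


def simulate_null_p_values(n_experiments: int, n_per_sample: int, seed: int = 0) -> list[float]:
    """Two-sided z-test p-values for samples drawn with H0 (mean 0, SD 1) true."""
    rng = random.Random(seed)
    std_normal = NormalDist()
    p_values = []
    for _ in range(n_experiments):
        sample = [rng.gauss(0.0, 1.0) for _ in range(n_per_sample)]
        z = fmean(sample) * n_per_sample ** 0.5  # population SD is known to be 1
        p_values.append(2 * (1 - std_normal.cdf(abs(z))))
    return p_values


null_p_values = simulate_null_p_values(n_experiments=10_000, n_per_sample=20)
share_below = sum(p <= 0.02 for p in null_p_values) / len(null_p_values)
print(f"Share of null experiments with p <= 0.02: {share_below:.3f}")
```

The printed share should be close to 0.02. Every one of those "significant" results is a false positive, so the p-value describes how the data behave under H₀, not how likely H₀ is.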

FAQ 2: When we fail to reject the null hypothesis, why can't we say we "accept" it?

Statistical tests are designed to challenge or "falsify" the null hypothesis, not to prove it [20]. Failing to reject H₀ means you didn't find strong enough evidence against it, similar to a court finding a defendant "not guilty" rather than "innocent" [20]. The study may be underpowered to detect a real effect, or the effect might be too small to detect with your sample size [21].

FAQ 3: What is the relationship between statistical significance and practical importance?

Statistical significance (typically p < 0.05) does not necessarily imply practical or clinical importance [24]. With large sample sizes, very small and clinically irrelevant differences can become statistically significant. Always consider the effect size and confidence intervals alongside p-values to assess real-world implications [24].
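
The effect of sample size on significance can be shown with a short calculation. The sketch below applies a two-sample z-test to the same negligible difference (0.02 standard deviations, a hypothetical value) at two very different sample sizes; a known common SD is assumed for simplicity.

```python
from statistics import NormalDist


def two_sample_z_p_value(mean_diff: float, sd: float, n_per_group: int) -> float:
    """Two-sided p-value for a difference in means with a known common SD."""
    standard_error = sd * (2 / n_per_group) ** 0.5
    z = mean_diff / standard_error
    return 2 * (1 - NormalDist().cdf(abs(z)))


# Hypothetical observed difference of 0.02 SD units: a negligible effect size.
p_small_trial = two_sample_z_p_value(mean_diff=0.02, sd=1.0, n_per_group=100)
p_huge_trial = two_sample_z_p_value(mean_diff=0.02, sd=1.0, n_per_group=1_000_000)
print(f"n = 100 per group:       p = {p_small_trial:.3f}")
print(f"n = 1,000,000 per group: p = {p_huge_trial:.3g}")
```

The effect size is identical in both runs; only the sample size changes the verdict. This is why the effect estimate and its confidence interval, not the p-value alone, should drive claims of clinical relevance.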

FAQ 4: When should I use equivalence testing instead of traditional null hypothesis testing?

Use equivalence testing when your research goal is to demonstrate the absence of a meaningful effect, rather than to detect a difference [21]. This involves pre-defining a "bound of equivalence" - a range of effect sizes considered clinically irrelevant - and testing whether your confidence interval falls entirely within this bound [21].

FAQ 5: How do I choose between Family-Wise Error Rate (FWER) and False Discovery Rate (FDR) control?

Use FWER control (e.g., Bonferroni correction) when even one false positive would have serious consequences, such as in confirmatory Phase III clinical trials [23]. Use FDR control when you're willing to tolerate some false positives to increase true discoveries, such as in exploratory research or high-throughput experiments [22].

Data Presentation

Table 1: Types of Statistical Errors in Null Hypothesis Testing

| Error Type | Definition | Consequence | Typical Control Method |

|---|---|---|---|

| Type I Error (False Positive) | Rejecting a true null hypothesis [24] | Concluding an effect exists when it doesn't | Significance level (α), typically set at 0.05 [24] |

| Type II Error (False Negative) | Failing to reject a false null hypothesis [24] | Missing a real effect | Statistical power (1-β), typically 80% or higher [24] |

Table 2: Factors Influencing Statistical Significance

| Factor | Effect on Statistical Significance | Consideration for Experimental Design |

|---|---|---|

| Effect Size | Larger effects more likely significant [25] | Consider minimum clinically important difference |

| Sample Size | Larger samples more likely to detect effects [25] | Conduct power analysis before study |

| Variability | Less variability increases likelihood of significance [25] | Control extraneous sources of variation |

| Significance Level (α) | Higher α (e.g., 0.10) increases significance likelihood | Balance Type I and Type II error risks |

Experimental Protocols

Protocol 1: Implementing Null Hypothesis Significance Testing

Methodology:

- State Hypotheses: Formulate null hypothesis (H₀) and alternative hypothesis (H₁). H₀ typically proposes no effect or no difference [24] [25].

- Collect Data: Design experiment with appropriate controls, randomization, and blinding to reduce bias [24].

- Calculate Test Statistic: Compute appropriate statistic (t-value, F-value, etc.) based on your data and research question.

- Determine P-value: Calculate the probability of obtaining results at least as extreme as those observed, assuming H₀ is true [25].

- Make Decision: If p-value ≤ α (typically 0.05), reject H₀; otherwise, fail to reject H₀ [25].

Key Considerations:

- Report confidence intervals alongside p-values to show precision of estimates [24].

- Consider using S-values alongside p-values for more intuitive interpretation [21].
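
For readers unfamiliar with S-values, the sketch below shows the standard transformation S = −log₂(p), which expresses a p-value as bits of information against the test hypothesis. The example p-values are chosen for illustration.

```python
import math


def s_value(p: float) -> float:
    """Surprisal in bits: S = -log2(p)."""
    if not 0 < p <= 1:
        raise ValueError("p must be in (0, 1]")
    return -math.log2(p)


for p in (0.71, 0.05, 0.005):
    print(f"p = {p}: S = {s_value(p):.1f} bits")
```

A p-value of 0.05 corresponds to about 4.3 bits, roughly as surprising as seeing four heads in a row from a fair coin, which many readers find easier to calibrate than the p-value itself.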

Protocol 2: Implementing Equivalence Testing

Methodology:

- Define Equivalence Bound: Before collecting data, establish the range of effect sizes considered clinically irrelevant (e.g., an HR between 0.9 and 1.1) [21].

- Collect Data: Same as traditional testing.

- Calculate Confidence Interval: Typically a 95% CI for the effect size. Note that the two one-sided tests (TOST) procedure at α = 0.05 corresponds to a 90% CI.

- Make Decision: If entire confidence interval falls within equivalence bound, conclude equivalence; otherwise, cannot conclude equivalence [21].

Application Example: In non-inferiority testing for drug safety, pre-specify a non-inferiority margin (e.g., HR = 1.25). If the upper bound of the confidence interval, and therefore also the point estimate, falls below this margin, conclude non-inferiority [21].
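
The decision rules in this protocol reduce to simple interval comparisons, sketched below. The bounds (0.9 to 1.1) and margin (1.25) are the illustrative values from this protocol; the wide interval is the bleeding-risk CI quoted earlier in this article, and the narrow interval is made up.

```python
def within_equivalence_bounds(ci_low: float, ci_high: float,
                              bound_low: float, bound_high: float) -> bool:
    """True only if the whole confidence interval lies inside the bounds."""
    return bound_low < ci_low and ci_high < bound_high


def non_inferior(ci_high: float, margin: float) -> bool:
    """True if the upper confidence limit lies below the margin."""
    return ci_high < margin


wide_ci = (0.40, 1.87)    # bleeding-risk example from this article
narrow_ci = (0.95, 1.05)  # made-up interval from a hypothetical larger study

wide_equivalent = within_equivalence_bounds(*wide_ci, 0.9, 1.1)
narrow_equivalent = within_equivalence_bounds(*narrow_ci, 0.9, 1.1)
wide_non_inferior = non_inferior(wide_ci[1], margin=1.25)
print(wide_equivalent, narrow_equivalent, wide_non_inferior)
```

Only the narrow interval supports a claim of equivalence. The wide interval supports neither equivalence nor non-inferiority, even though its p-value is far from significant.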

Research Workflow Visualization

Diagram: Null Hypothesis Testing Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Methodological Components for Robust Null Hypothesis Testing

| Component | Function | Application Notes |

|---|---|---|

| P-values | Measure compatibility between data and H₀ [25] | Always report with effect sizes and confidence intervals [24] |

| Confidence Intervals | Show range of plausible effect sizes [21] | More informative than p-values alone for interpretation |

| Equivalence Bounds | Pre-specified range of clinically irrelevant effects [21] | Essential for equivalence or non-inferiority testing |

| Statistical Power | Probability of correctly rejecting false H₀ [24] | Determine sample size needed during planning phase |

| Multiple Testing Correction | Control false positives with multiple comparisons [22] | Choose between FWER and FDR based on research goals |

| Covariate Information | Complementary data to improve power [22] | Used in modern FDR methods; must be independent of p-values under H₀ |

Frequently Asked Questions (FAQs)

FAQ 1: What is the fundamental rationale for shifting from single-target to multi-target drug discovery?

Complex diseases like cancer, Alzheimer's, and major depressive disorder are characterized by multifactorial etiologies, where biological networks and redundant pathways render single-target interventions insufficient [26] [27]. Multi-target drugs are designed to modulate several key nodes within a disease network simultaneously. This approach enhances therapeutic efficacy by addressing disease complexity, reduces the likelihood of the drug resistance common with single-target therapies, and can minimize side effects by rebalancing the entire network rather than hitting a single target in isolation [27].

FAQ 2: My multi-target compound shows high in vitro efficacy but poor in vivo outcomes. What could be the cause?

This common issue often stems from suboptimal Absorption, Distribution, Metabolism, and Excretion (ADME) properties [27]. A molecule optimized for binding multiple targets may have physicochemical properties unsuitable for in vivo environments. Troubleshoot by:

- Profiling the compound's blood-brain barrier penetration (for neurological disorders) and metabolic stability [27].

- Checking if the balanced potency across targets is maintained at the site of action.

- Investigating potential off-target interactions that may cause toxicity or adverse effects, diverting the compound from its intended targets [27].

FAQ 3: How can I validate the multi-target mechanism of action for a new compound?

Employ an integrated workflow combining computational and experimental methods [26]:

- In Silico Profiling: Use molecular docking and virtual screening to predict interactions with multiple predefined targets [27].

- In Vitro Binding Assays: Conduct assays (e.g., kinase panels, receptor binding assays) to quantify affinity for each suspected target.

- Cellular Phenotypic Screening: Confirm that the multi-target engagement translates to the desired phenotypic outcome (e.g., reduced tumor cell viability, suppressed inflammatory response) [26].

- Network Pharmacology Analysis: Map the compound's targets onto disease-associated pathways to understand the system-level impact [27].

FAQ 4: What are the major challenges in the preclinical validation of multi-target drugs?

The primary challenges include [26] [27]:

- Complex Experimental Design: Requires developing reliable model systems that capture the interplay of multiple targets.

- High Development Costs: More extensive validation leads to increased resource investment.

- Balancing Potency and Selectivity: Achieving the right activity level across multiple targets without causing off-target toxicity is difficult.

- Predictive Model Limitations: Current computational and experimental systems struggle to accurately predict multi-target effects and systemic interactions.

FAQ 5: How can AI and machine learning accelerate multi-target drug discovery?

AI addresses key bottlenecks through [28]:

- Deep Generative Models (DGMs): AI can design novel molecular structures with desired multi-target profiles from scratch.

- Reinforcement Learning (RL): This technique allows AI to iteratively optimize lead compounds against multiple objectives (e.g., target affinity, solubility, low toxicity).

- Predictive Modeling: Machine learning models can mine vast chemical and biological datasets to identify promising multi-target candidates or repurpose existing drugs, significantly reducing initial screening time [27].

Troubleshooting Guides

Issue 1: Poor Efficacy Despite Successful Target Engagement

Problem: Your compound confirms binding to multiple intended targets in biochemical assays but shows minimal effect in cellular or animal models of the disease.

| Possible Cause | Diagnostic Experiments | Potential Solution |

|---|---|---|

| Insufficient Pathway Modulation | Measure downstream biomarkers (e.g., phosphorylation levels) to check if target engagement translates to functional pathway inhibition/activation. | Re-optimize compound structure to improve functional potency, not just binding affinity. |

| Pathway Redundancy/Compensation | Use transcriptomics or proteomics to identify other pathways that become activated, compensating for the inhibited targets. | Identify the compensating node and design a triple-target inhibitor, or combine with a second agent. |

| Sub-optimal Dosing Schedule | Perform pharmacokinetic-pharmacodynamic (PK-PD) modeling to understand the relationship between drug concentration and effect over time. | Adjust the dosing regimen (e.g., dose, frequency) to maintain effective concentrations on all targets. |

Issue 2: Undesirable Toxicity or Off-Target Effects

Problem: The multi-target agent causes toxicity in preclinical models, potentially due to unintended interactions.

| Possible Cause | Diagnostic Experiments | Potential Solution |

|---|---|---|

| Interaction with Critical Off-Targets | Run a broad panel screening against common anti-targets (e.g., hERG channel for cardiotoxicity). | Use structural chemistry (e.g., structure-activity relationship, SAR) to refine selectivity and reduce off-target binding. |

| Overly Potent Effects on One Target | Determine the IC50 for each target. A much lower IC50 for one target may lead to excessive pharmacological effects. | Re-balance the compound's potency across the target portfolio to achieve therapeutically desired levels at each node. |

| Reactive Metabolites | Identify and characterize major metabolites using liquid chromatography-mass spectrometry (LC-MS). | Chemically modify the scaffold to block the formation of toxic metabolites while retaining multi-target activity. |

Experimental Protocols for Key Methodologies

Protocol 1: In Silico Screening for Multi-Target Lead Identification

This protocol uses AI-driven molecular docking to identify compounds with potential activity against multiple disease-associated targets [27] [28].

Workflow Description: The process begins with target selection and compound library preparation. AI-powered molecular docking then screens compounds against each target. Results are integrated using multi-objective optimization to identify leads that show strong binding across multiple targets. These prioritized hits are recommended for experimental validation.

- Target Selection & Preparation: Select 2-3 key proteins from the disease pathway (e.g., GSK-3β and BACE-1 for Alzheimer's). Obtain their 3D crystal structures from the Protein Data Bank or generate high-quality homology models [27].

- Compound Library Preparation: Curate a virtual library of compounds (100,000 to 1 million molecules) from commercial vendors or in-house collections. Prepare the structures using molecular modeling software: add hydrogens, assign charges, and minimize energy.

- AI-Driven Molecular Docking: Use automated docking software (e.g., AutoDock Vina, Glide) to screen the library against each prepared target protein. Configure the software to define the binding pocket and run parallelized computations [28].

- Hit Identification & Multi-Objective Optimization: For each compound, collect docking scores (e.g., binding affinity in kcal/mol) for all targets. Use multi-objective optimization algorithms to rank compounds, prioritizing those with strong, balanced affinity across all targets, not just a single one.

- Visual Inspection & Final Selection: Visually inspect the top 100-500 highest-ranking compounds in their predicted binding poses to confirm logical interactions (e.g., hydrogen bonds, hydrophobic contacts). Select the top 20-50 candidates for experimental validation.
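
One simple way to realize the multi-objective ranking step is a Pareto filter over per-target docking scores, sketched below. The compound names and scores are made up for illustration, and the "best worst-case score" tie-breaker is one possible definition of balanced affinity, not a method prescribed by the cited sources.

```python
def dominates(a: tuple[float, ...], b: tuple[float, ...]) -> bool:
    """a dominates b if it is no worse on every target and better on one.

    Scores are docking energies in kcal/mol, so lower (more negative) is better.
    """
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))


def pareto_front(scores: dict[str, tuple[float, ...]]) -> list[str]:
    """Return compounds that no other compound dominates, sorted by name."""
    return sorted(
        name for name, s in scores.items()
        if not any(dominates(other, s) for other_name, other in scores.items()
                   if other_name != name)
    )


# Hypothetical compounds with made-up scores against two targets
# (for instance GSK-3β and BACE-1).
docking_scores = {
    "cmpd_A": (-9.5, -6.0),  # strong on target 1 only
    "cmpd_B": (-8.5, -8.4),  # balanced across both targets
    "cmpd_C": (-6.2, -9.8),  # strong on target 2 only
    "cmpd_D": (-8.0, -8.0),  # worse than cmpd_B on both targets
}
front = pareto_front(docking_scores)
# Among non-dominated compounds, prefer the one whose weakest score is best.
most_balanced = min(front, key=lambda name: max(docking_scores[name]))
print(front, most_balanced)
```

A single-target ranking would put `cmpd_A` or `cmpd_C` first; the Pareto view keeps them as candidates while surfacing `cmpd_B` as the balanced lead.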

Protocol 2: Validating Multi-Target Engagement in Cellular Models

This protocol confirms that a candidate compound interacts with its intended targets in a live-cell context.

Workflow Description: The process starts with treatment of disease-relevant cell lines. Target engagement is measured using techniques like cellular thermal shift assay (CETSA) and phospho-flow cytometry. Downstream phenotypic effects are assessed through cell viability and apoptosis assays, with data integration confirming multi-target mechanism of action.

- Cell Culture & Treatment: Culture disease-relevant cell lines (e.g., SH-SY5Y for neurodegeneration, MCF-7 for breast cancer). Seed cells in 96-well or 6-well plates and treat with your compound at a range of concentrations (e.g., 1 nM - 100 µM) for 2-24 hours. Include controls (vehicle-only and a positive control if available).

- Target Engagement Assay (CETSA):

  - Cell Lysis: For each treatment, lyse cells and divide the lysate into aliquots.

  - Heating: Heat each aliquot to a different temperature (e.g., 37°C - 65°C).

  - Quantification: Centrifuge to remove aggregated protein and use a Western blot or immunoassay to quantify the remaining soluble target protein. A shift in the protein's melting curve indicates compound binding.

- Downstream Pathway Analysis (Phospho-Flow Cytometry):

  - Cell Fixation & Staining: At the end of the treatment period, fix and permeabilize cells. Stain with fluorescently tagged antibodies specific to the phosphorylated (active) forms of downstream proteins (e.g., p-AKT, p-ERK).

  - Analysis: Analyze cells using a flow cytometer. A reduction in fluorescence intensity for multiple pathway components indicates successful multi-target pathway modulation.

- Phenotypic Readout: In parallel, run a cell viability assay (e.g., MTT, CellTiter-Glo) or apoptosis assay (e.g., Caspase-3/7 activation) to link target engagement to a biological effect.

- Data Integration: Correlate the degree of target engagement (from CETSA) and pathway inhibition (from phospho-flow) with the phenotypic outcome. Successful multi-target engagement will show strong correlation across all datasets.
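
The melting-curve shift from the CETSA step can be quantified as a change in apparent melting temperature (Tm). The sketch below estimates Tm by linear interpolation at the 50% soluble fraction. The band intensities are made up, and a sigmoidal curve fit would be the more usual choice for real data.

```python
def melting_temperature(temps_c: list[float], soluble_fraction: list[float]) -> float:
    """Temperature at which 50% of the protein remains soluble.

    Uses linear interpolation between the two measurements that bracket 0.5.
    """
    points = list(zip(temps_c, soluble_fraction))
    for (t0, f0), (t1, f1) in zip(points, points[1:]):
        if f0 >= 0.5 > f1:
            return t0 + (f0 - 0.5) / (f0 - f1) * (t1 - t0)
    raise ValueError("soluble fraction never crosses 0.5")


# Made-up normalized band intensities, for illustration only.
temps = [37, 41, 45, 49, 53, 57, 61, 65]
vehicle = [1.00, 0.98, 0.90, 0.60, 0.30, 0.10, 0.03, 0.00]
treated = [1.00, 0.99, 0.96, 0.85, 0.55, 0.25, 0.08, 0.01]

delta_tm = melting_temperature(temps, treated) - melting_temperature(temps, vehicle)
print(f"Thermal shift: {delta_tm:.1f} °C")
```

Repeating this per target gives one thermal shift per protein, which can then be set against the phospho-flow and viability readouts in the data integration step.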

The Scientist's Toolkit: Key Research Reagent Solutions

| Item Name | Function & Application | Key Consideration |

|---|---|---|

| AI-Based Generative Software (e.g., Deep Generative Models) | De novo generation of novel molecular structures with predefined multi-target activity profiles [28]. | Requires high-quality training data on targets and compounds; expertise in computational chemistry is essential. |

| Kinase/Receptor Panels | Broad in vitro screening to quantify binding affinity and inhibitory potency against dozens to hundreds of targets simultaneously [27]. | Crucial for identifying off-target effects and confirming desired polypharmacology early in development. |

| Proteolysis-Targeting Chimeras (PROTACs) | Bifunctional molecules that recruit a target protein to an E3 ubiquitin ligase, leading to its degradation. Useful for targeting "undruggable" proteins [27]. | Can address multiple disease-relevant proteins; optimization is complex due to the ternary complex formation requirement. |

| Cellular Thermal Shift Assay (CETSA) | Validates direct target engagement in a live-cell context by measuring the thermal stabilization of a protein upon compound binding [26]. | Provides critical proof that the compound interacts with the intended target inside cells, bridging biochemical and cellular assays. |

| Unified Modeling Language (UML) / Business Process Modeling Notation (BPMN) | Visualizes and maps complex biological pathways and drug-target interactions for clearer experimental planning [29]. | Helps in modeling complex disease networks and hypothesizing the effects of multi-target interventions. |

Leveraging Drug Repurposing to Bypass Teleological Traps in Discovery

FAQs & Troubleshooting Guides

Frequently Asked Questions (FAQs)

Q1: What is a "teleological trap" in drug discovery?

A1: A teleological trap is a cognitive bias where researchers persist with a drug candidate based on its initial, intended biological purpose (its teleology), even when faced with significant obstacles or evidence suggesting alternative pathways or repurposing opportunities might be more fruitful. This can lead to wasted resources and hinder innovation.

Q2: How can drug repurposing help overcome these traps?

A2: Drug repurposing actively seeks new therapeutic applications for existing drugs or failed candidates. This approach bypasses teleological traps by decoupling the compound from its original purpose, encouraging researchers to evaluate its efficacy based on new mechanistic data and phenotypic screens rather than preconceived notions of its function.

Q3: What are the first steps in initiating a repurposing screen for an obstructed candidate?

A3: The initial steps involve:

- Comprehensive Data Review: Systematically re-analyzing all existing pre-clinical and clinical data for the candidate, focusing on unexpected or off-target effects.

- Mechanistic Deconstruction: Using high-throughput omics technologies (transcriptomics, proteomics) to map the compound's complete interaction profile within different cellular contexts.

- Phenotypic Screening: Implementing unbiased phenotypic screens against diverse disease models to identify novel activity.

Q4: Our team is resistant to abandoning the original indication for a promising candidate. How can we manage this persistence?

A4: Implement structured, data-driven "gateway" reviews at predefined project milestones. These reviews should mandate the evaluation of repurposing hypotheses alongside the primary indication. Utilizing objective decision-making frameworks that weigh mechanistic evidence for new indications can help depersonalize the process and mitigate bias.

Troubleshooting Common Experimental Issues

Problem: High-Throughput Screen Yields Excessive False Positives in Repurposing Assays

| Potential Cause | Diagnostic Steps | Solution |

|---|---|---|

| Compound interference with assay chemistry (e.g., auto-fluorescence, quenching). | 1. Run counter-screens with known interferents. 2. Re-test hits using an orthogonal assay with a different readout. | 1. Use data analysis algorithms that correct for interference. 2. Prioritize hits confirmed by the orthogonal method. |

| Off-target cytotoxicity causing general cell death, mistaken for specific activity. | Measure cell viability (e.g., ATP levels, membrane integrity) in parallel with the primary screen. | Exclude compounds that show significant cytotoxicity at the screening concentration. |

| Insufficient compound solubility or stability under assay conditions. | Check for precipitate formation microscopically. Re-measure compound concentration after incubation in assay buffer. | Optimize solvent (e.g., DMSO concentration), use different buffering agents, or adjust incubation times. |

Problem: Inconsistent Efficacy in Disease-Relevant Cell Models After Repurposing

| Potential Cause | Diagnostic Steps | Solution |

|---|---|---|

| Inadequate target expression or pathway activity in the chosen cell model. | Quantify target protein/mRNA levels (via Western blot, qPCR) across different cell models. | Validate findings in multiple, well-characterized cell lines or primary cells where the target pathway is known to be active. |

| Differences in pharmacokinetics (PK) not accounted for in vitro (e.g., metabolism, protein binding). | Incorporate human liver microsome stability assays or plasma protein binding studies early in the validation process. | Adjust in vitro dosing regimens or use metabolite testing to identify the active moiety. |

| Insufficient pathway engagement despite compound presence. | Use a cellular thermal shift assay (CETSA) or target phosphorylation assays to confirm direct target engagement in the cellular context. | Titrate compound concentration to establish a clear concentration-response relationship for both target engagement and phenotypic effect. |

Experimental Protocols & Data

Detailed Protocol: Transcriptomic Profiling for Repurposing Clues

This protocol details how to use gene expression data to generate hypotheses for drug repurposing by identifying novel mechanistic pathways.

Methodology:

- Cell Treatment: Treat a disease-relevant cell line with the candidate compound at its IC50 concentration and a vehicle control (e.g., 0.1% DMSO) for 6 and 24 hours. Include at least three biological replicates per condition.

- RNA Extraction: Harvest cells and extract total RNA using a commercial kit (e.g., Qiagen RNeasy). Assess RNA integrity and purity (e.g., RIN > 8.0 via Bioanalyzer).

- Library Prep and Sequencing: Prepare RNA-seq libraries from 1 µg of total RNA using a standardized kit (e.g., Illumina Stranded mRNA Prep). Sequence on an Illumina platform to a depth of at least 25 million paired-end reads per sample.

- Bioinformatic Analysis:

  - Alignment and Quantification: Align sequencing reads to the reference genome (e.g., GRCh38) using STAR aligner and quantify gene-level counts with featureCounts.

  - Differential Expression: Perform differential expression analysis using DESeq2 in R. Identify genes with a significant adjusted p-value (p-adj < 0.05) and absolute log2 fold change > 1.

  - Pathway Analysis: Input the list of significant differentially expressed genes into a pathway enrichment tool (e.g., Ingenuity Pathway Analysis - IPA, or Enrichr) to identify statistically overrepresented biological pathways and upstream regulators.
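
The filtering rule in the differential expression step can be sketched in a few lines once the DESeq2 results have been exported. The example below assumes each row is a dictionary carrying DESeq2's `log2FoldChange` and `padj` columns plus a `gene` identifier; the `gene` key and the rows themselves are made-up placeholders.

```python
import math


def significant_genes(results: list[dict], padj_cutoff: float = 0.05,
                      lfc_cutoff: float = 1.0) -> list[str]:
    """Genes passing both the adjusted p-value and fold-change thresholds."""
    hits = []
    for row in results:
        padj = row["padj"]
        # DESeq2 can report NA for padj (e.g., after independent filtering).
        if padj is None or math.isnan(padj):
            continue
        if padj < padj_cutoff and abs(row["log2FoldChange"]) > lfc_cutoff:
            hits.append(row["gene"])
    return hits


# Made-up rows standing in for an exported DESeq2 results table.
deseq_rows = [
    {"gene": "GENE_1", "log2FoldChange": 2.3, "padj": 0.001},
    {"gene": "GENE_2", "log2FoldChange": -1.8, "padj": 0.02},
    {"gene": "GENE_3", "log2FoldChange": 0.4, "padj": 0.0001},  # tiny effect
    {"gene": "GENE_4", "log2FoldChange": 3.1, "padj": 0.30},    # not significant
    {"gene": "GENE_5", "log2FoldChange": 1.5, "padj": None},    # filtered out
]
hits = significant_genes(deseq_rows)
print(hits)
```

Requiring both thresholds keeps `GENE_3` out of the pathway analysis even though it is highly significant, since its fold change is too small to be of likely biological interest.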

Table 1: Summary of Key Parameters for Transcriptomic Profiling Protocol

| Parameter | Specification | Notes |

|---|---|---|

| Cell Replicates | 3 biological replicates per condition | Essential for statistical power in differential expression analysis. |

| Compound Incubation | 6h and 24h | Captures both immediate-early and secondary transcriptional responses. |

| RNA Quality (RIN) | > 8.0 | Ensures high-quality, non-degraded RNA for reliable sequencing. |

| Sequencing Depth | ≥ 25 million paired-end reads | Standard depth for robust gene-level quantification. |

| Significance Threshold | p-adj < 0.05 and absolute log2FC > 1 | Balances stringency for false discovery rate with biological relevance. |

Visualizations

Diagram: Experimental Workflow for Repurposing

Diagram: Signaling Pathway Deconstruction Logic

The Scientist's Toolkit

Table 2: Research Reagent Solutions for Repurposing Experiments

| Item | Function in Repurposing Context |

|---|---|

| Phenotypic Screening Assays (e.g., cell viability, migration, high-content imaging) | Unbiased functional readouts to detect novel biological activity of a compound without presupposing its mechanism. |

| Transcriptomic/Proteomic Kits (e.g., RNA-seq library prep, proximity ligation assays) | Tools for comprehensive molecular profiling to deconstruct a compound's mechanism of action and identify novel pathway engagement. |

| Cellular Thermal Shift Assay (CETSA) Reagents | Used to confirm direct physical engagement between the drug candidate and its putative protein target(s) in a cellular environment. |

| Disease-Relevant Cell Models (e.g., primary cells, iPSC-derived cells, 3D organoids) | Biologically relevant systems for validating repurposing hypotheses, ensuring the new indication is testable in a pathophysiologically accurate context. |

| Bioinformatics Software (e.g., for pathway analysis, connectivity mapping) | Computational tools to interpret complex omics datasets and connect drug-induced gene signatures to diseases, generating testable repurposing hypotheses. |

Troubleshooting Guide: Common Issues in AI-Driven Target Identification

FAQ 1: How can I prevent algorithmic bias when training models on historical pharmaceutical data?

Problem: The AI model performs well on validation datasets but fails to generalize to novel target classes or diverse patient populations, potentially due to embedded biases in training data.

Diagnosis: Historical drug discovery data often overrepresents certain protein families (e.g., kinases, GPCRs) and underrepresents novel target classes, creating inherent bias in training data.

Solution: Implement a multi-faceted bias mitigation strategy:

- Data Auditing and Augmentation: Use fairness-auditing libraries to check training datasets for representation bias. Augment underrepresented target classes with techniques such as SMOTE (Synthetic Minority Over-sampling Technique) or generative adversarial networks (GANs) [30].

- Algorithmic Debiasing: Integrate fairness constraints directly into the model objective function during training. Employ adversarial debiasing, in which a secondary network attempts to predict protected variables (e.g., specific protein families) from the primary model's predictions. If the adversary succeeds, the predictions still encode that variable, which indicates potential bias; the primary model is then penalized until the adversary can no longer recover it [31].

- Multi-Modal Data Integration: Combine data from diverse sources (genomic, proteomic, structural biology) to create a more holistic representation that reduces reliance on potentially biased single-data modalities [32] [33].

Prevention: Proactively create balanced dataset curation protocols. Document data provenance and representation statistics for all training datasets.
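
As a rough illustration of the auditing and documentation steps above, the sketch below reports the share of each protein family in a training set and then balances the families by random oversampling. Random oversampling is a deliberately simpler stand-in for SMOTE or GAN-based augmentation (it duplicates existing records instead of synthesizing new ones), and the records and family labels are hypothetical.

```python
import random
from collections import Counter

# Hypothetical training records: (target_id, protein_family).
records = [
    ("T1", "kinase"), ("T2", "kinase"), ("T3", "kinase"), ("T4", "kinase"),
    ("T5", "GPCR"), ("T6", "GPCR"), ("T7", "GPCR"),
    ("T8", "ion_channel"),
]


def representation_report(rows):
    """Return the fraction of the dataset contributed by each protein family."""
    counts = Counter(family for _, family in rows)
    total = sum(counts.values())
    return {family: n / total for family, n in counts.items()}


def oversample_to_balance(rows, seed=0):
    """Duplicate randomly chosen records until every family matches the largest one."""
    rng = random.Random(seed)
    by_family = {}
    for row in rows:
        by_family.setdefault(row[1], []).append(row)
    target = max(len(group) for group in by_family.values())
    balanced = []
    for group in by_family.values():
        balanced.extend(group)
        balanced.extend(rng.choice(group) for _ in range(target - len(group)))
    return balanced


report = representation_report(records)
balanced = oversample_to_balance(records)
print(report)
print(Counter(family for _, family in balanced))
```

Storing the report alongside each dataset version is one lightweight way to document representation statistics. Duplicated records should be created only inside the training split, so that copies of the same target never appear in both training and test data.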

FAQ 2: Why does my model achieve high accuracy but fail to identify truly novel "druggable" targets?

Problem: The computational model achieves >90% accuracy in validation but only identifies targets with well-established literature, failing to deliver the promised novelty.

Diagnosis: This "teleological obstacle" often stems from overfitting to historical patterns and from feature spaces or model architectures that add nothing genuinely new. The model may simply be rediscovering known biology rather than predicting new biology [34].

Solution:

- Feature Engineering Review: Move beyond standard molecular descriptors. Incorporate features derived from cutting-edge structural predictions (e.g., from AlphaFold2 or RoseTTAFold) that may reveal previously unexplored binding sites [35].

- Employ Novel Optimization Strategies: Implement advanced frameworks like HSAPSO (Hierarchically Self-Adaptive Particle Swarm Optimization) for hyperparameter tuning. These methods better balance exploration of novel chemical spaces with exploitation of known productive regions, preventing premature convergence to familiar solutions [30].

- Validation Against Negative Examples: Include "non-druggable" targets and negative control cases in training and testing to ensure the model distinguishes true druggability signals from noise [30].

Prevention: Define "novelty" with specific, measurable criteria upfront. Use cross-validation schemes that explicitly test generalization to target classes excluded from training.
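
One way to test generalization to excluded target classes is a leave-one-family-out scheme, in which every fold holds out all targets of a single protein family. The sketch below only generates the index splits; the sample list and family labels are hypothetical, and model training is left out.

```python
def leave_one_family_out(samples):
    """Yield (held_out_family, train_indices, test_indices) for each protein family."""
    families = sorted({family for _, family in samples})
    for held_out in families:
        train = [i for i, (_, fam) in enumerate(samples) if fam != held_out]
        test = [i for i, (_, fam) in enumerate(samples) if fam == held_out]
        yield held_out, train, test


# Hypothetical samples: (target_id, protein_family).
samples = [
    ("T1", "kinase"),
    ("T2", "kinase"),
    ("T3", "GPCR"),
    ("T4", "protease"),
    ("T5", "GPCR"),
]

splits = list(leave_one_family_out(samples))
for family, train_idx, test_idx in splits:
    print(family, train_idx, test_idx)
```

A large gap between ordinary cross-validation accuracy and the accuracy on held-out families suggests the model is recognizing familiar target classes rather than learning transferable druggability signals.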

FAQ 3: How can I address the "black box" problem to build trust in AI-predicted targets?

Problem: The AI model suggests potential targets but provides no interpretable rationale for its predictions, making experimental validation a costly leap of faith.

Diagnosis: Many deep learning architectures (e.g., deep neural networks, stacked autoencoders) are inherently complex and non-transparent, creating adoption barriers in rigorous scientific environments [30].

Solution:

- Implement Explainable AI (XAI) Techniques:

  - SHAP (SHapley Additive exPlanations): Calculate the contribution of each input feature (e.g., specific amino acid residues, molecular properties) to the final prediction.

  - Attention Mechanisms: Use models with built-in attention layers that visually highlight which parts of a protein sequence or structure most influenced the decision.

  - Counterfactual Explanations: Generate examples showing minimal changes to the input that would flip the model's prediction (e.g., "If this binding pocket were 0.5 Å wider, the target would no longer be classified as druggable") [31].

- Model Selection: Consider using intrinsically more interpretable models like Random Forests for initial feasibility studies, where feature importance is readily available.

Prevention: Choose models that balance performance with interpretability. Plan and budget for XAI analysis as a core component of the AI-driven workflow, not an afterthought.
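
To make the idea behind SHAP concrete, the sketch below computes exact Shapley values by brute force for a toy scoring function. It is not the SHAP library, which relies on faster approximations; the brute-force enumeration grows exponentially with the number of features and is only practical for a handful of them. The scoring function, the feature names, and the all-zero baseline are assumptions chosen for illustration.

```python
from itertools import combinations
from math import factorial


def shapley_values(model, x, baseline):
    """Exact Shapley values of each feature in x, relative to a baseline input."""
    n = len(x)

    def value(subset):
        # Features in `subset` take their real value; all others take the baseline value.
        mixed = [x[i] if i in subset else baseline[i] for i in range(n)]
        return model(mixed)

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            # Shapley weight for coalitions of this size that exclude feature i.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi


def toy_druggability_score(features):
    """Hypothetical score with one interaction term (pocket volume x flexibility)."""
    pocket_volume, hydrophobicity, flexibility = features
    return 2.0 * pocket_volume + hydrophobicity + pocket_volume * flexibility


x = [1.0, 3.0, 2.0]          # hypothetical feature values for one target
baseline = [0.0, 0.0, 0.0]   # reference input the explanation is measured against
phi = shapley_values(toy_druggability_score, x, baseline)
print(phi)
```

The contributions sum to the difference between the score for `x` and the score for the baseline, and the interaction term is shared equally between the two features involved. This additive property is what makes Shapley-based explanations straightforward to report alongside a prediction.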

FAQ 4: What can I do when computational predictions and experimental validation consistently disagree?

Problem: A significant disconnect exists between in silico predictions of target druggability and subsequent in vitro experimental results.

Diagnosis: This can arise from multiple factors: the model may not account for cellular context (e.g., solvent effects, protein dynamics), the training data may be biased toward static structural snapshots, or there may be a mismatch between the prediction task and the experimental assay [35].

Solution:

- Cellular Context Integration: Refine models to incorporate data on protein dynamics, allosteric sites, and post-translational modifications rather than relying solely on static structures. Tools like molecular dynamics simulations can provide crucial supplementary data [35].

- Transfer Learning: Fine-tune pre-trained models on smaller, highly relevant experimental datasets from your specific therapeutic area (e.g., oncology, neurology) to bridge the gap between general druggability and context-specific efficacy [32].

- Iterative Feedback Loops: Implement a continuous learning pipeline where experimental results—both positive and negative—are fed back into the model to iteratively improve its performance and alignment with biological reality.

Prevention: Early in the project, ensure alignment between the computational definition of "druggability" (e.g., binding affinity, pocket presence) and the experimental readout (e.g., functional activity in a cell-based assay).

Performance Comparison of Computational Frameworks

The table below summarizes the quantitative performance of various computational frameworks for drug target identification, highlighting the trade-offs between accuracy, computational cost, and interpretability.

Table 1: Performance Metrics of AI-Based Target Identification Frameworks

| Framework/Method | Reported Accuracy | Key Strength | Computational Complexity | Interpretability | Primary Use Case |
|---|---|---|---|---|---|
| optSAE + HSAPSO [30] | 95.52% | High accuracy & stability; adaptive optimization | Low (0.010 s/sample) | Low (Black-box) | High-throughput classification of druggable targets |
| SVM/XGBoost Ensembles [30] | 89.98% - 93.78% | Good performance on structured data | Medium | Medium (Feature importance) | Benchmarking and initial screening |
| Graph-Based Deep Learning [30] | ~95% (est.) | Captures complex relational data in sequences | High | Low (Black-box) | Analyzing protein sequences and interaction networks |
| 3D Convolutional Neural Networks [30] | N/A | Superior for spatial, structural data (e.g., binding sites) | Very High | Low (Black-box) | Structure-based target identification |

Experimental Protocol: Implementing the optSAE + HSAPSO Framework

This protocol provides a step-by-step methodology for implementing a state-of-the-art Stacked Autoencoder (SAE) optimized with Hierarchically Self-Adaptive Particle Swarm Optimization (HSAPSO) for drug classification and target identification, as referenced in [30].

Data Curation and Preprocessing

- Data Sources: Download drug and target data from curated public databases such as DrugBank and Swiss-Prot.

- Feature Extraction: Compute a comprehensive set of molecular descriptors for each compound (e.g., molecular weight, logP, topological indices) and protein features for each target (e.g., amino acid composition, sequence-derived motifs, physicochemical properties).

- Data Cleaning:

  - Handle missing values using imputation or removal.

  - Remove duplicate entries and correct misannotated data points based on primary literature.

- Data Normalization: Apply Z-score normalization or min-max scaling to all numerical features to ensure stable model training.

- Data Partitioning: Split the processed dataset into training (70%), validation (15%), and hold-out test (15%) sets, ensuring stratified sampling to maintain class distribution (e.g., druggable vs. non-druggable).
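
The normalization and partitioning steps above can be sketched with the standard library alone. The example assumes a binary label (1 = druggable, 0 = non-druggable) and a single made-up numeric feature. It also estimates the Z-score statistics on the training split only and reuses them for the other splits, which is a common precaution against information leakage and not something the protocol itself specifies.

```python
import random
from statistics import mean, pstdev


def stratified_split(labels, fractions=(0.70, 0.15, 0.15), seed=0):
    """Split indices into train/validation/test while preserving class ratios."""
    rng = random.Random(seed)
    by_class = {}
    for idx, label in enumerate(labels):
        by_class.setdefault(label, []).append(idx)
    train, val, test = [], [], []
    for indices in by_class.values():
        rng.shuffle(indices)
        n_train = round(fractions[0] * len(indices))
        n_val = round(fractions[1] * len(indices))
        train += indices[:n_train]
        val += indices[n_train:n_train + n_val]
        test += indices[n_train + n_val:]  # the remainder becomes the hold-out set
    return train, val, test


def fit_zscore(values):
    """Estimate mean and standard deviation; use 1.0 for constant features."""
    mu = mean(values)
    sigma = pstdev(values) or 1.0
    return mu, sigma


def apply_zscore(values, mu, sigma):
    """Scale values with previously fitted statistics."""
    return [(v - mu) / sigma for v in values]


# Hypothetical data: 40 druggable and 60 non-druggable targets, one feature each.
rng = random.Random(42)
labels = [1] * 40 + [0] * 60
feature = [rng.uniform(150.0, 600.0) for _ in labels]

train_idx, val_idx, test_idx = stratified_split(labels)

# Fit the scaler on the training split only, then reuse it for the other splits.
mu, sigma = fit_zscore([feature[i] for i in train_idx])
train_scaled = apply_zscore([feature[i] for i in train_idx], mu, sigma)
test_scaled = apply_zscore([feature[i] for i in test_idx], mu, sigma)

print(len(train_idx), len(val_idx), len(test_idx))
```

With real data, a library routine such as scikit-learn's stratified splitters would normally replace the hand-written split; the manual version is shown only to make the logic explicit.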

Model Initialization and Architecture

- Stacked Autoencoder (SAE) Setup:

  - Design the SAE architecture with multiple encoding and decoding layers. A typical structure might be: Input layer -> 512 neurons -> 256 neurons -> 128 neurons (bottleneck) -> 256 neurons -> 512 neurons -> Output layer.

  - Initialize weights using a Xavier or He initialization scheme.

  - Use activation functions like ReLU or SELU for hidden layers and a linear/sigmoid activation for the output layer depending on the task.

- Pre-training: Perform unsupervised, greedy layer-wise pre-training of the SAE to learn efficient feature representations from the input data. This initializes the weights to a sensible starting point before fine-tuning.
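
Before committing to a deep learning framework, it can help to write the example architecture down as a plain list of layer sizes and check how large the model is. The sketch below does this and shows Xavier (Glorot) uniform initialization for one layer. The input dimension of 1024 features is an assumption, and the code does not train anything; a real implementation would use TensorFlow or PyTorch layers.

```python
import math
import random


def layer_sizes(n_inputs):
    """Symmetric SAE layout from the protocol: the output layer reconstructs the input."""
    return [n_inputs, 512, 256, 128, 256, 512, n_inputs]


def count_parameters(sizes):
    """Weights plus biases for a chain of fully connected layers."""
    return sum(fan_in * fan_out + fan_out for fan_in, fan_out in zip(sizes, sizes[1:]))


def xavier_uniform(fan_in, fan_out, rng):
    """Xavier/Glorot uniform init: draw weights from U(-limit, limit)."""
    limit = math.sqrt(6.0 / (fan_in + fan_out))
    return [[rng.uniform(-limit, limit) for _ in range(fan_out)] for _ in range(fan_in)]


N_INPUTS = 1024  # assumed number of input features (not given in the protocol)
sizes = layer_sizes(N_INPUTS)
n_params = count_parameters(sizes)

# Initialize only the 256 -> 128 bottleneck layer as an example.
rng = random.Random(0)
bottleneck_weights = xavier_uniform(256, 128, rng)

print(sizes)
print(n_params)
```

Comparing the parameter count with the number of training samples gives an early indication of how much regularization (dropout, L2) the hyperparameter search is likely to need.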

Hierarchically Self-Adaptive PSO (HSAPSO) Optimization

- Parameter Encoding: Define a particle in the swarm where its position vector represents the hyperparameters of the SAE to be optimized (e.g., learning rate, number of units per layer, L2 regularization parameter, dropout rate).

- Fitness Function: Design a fitness function for HSAPSO to maximize. This is typically the accuracy or F1-score on the validation set after training the SAE with the particle's suggested hyperparameters.

- HSAPSO Execution:

  - Initialize a swarm of particles with random positions and velocities within predefined bounds for each hyperparameter.

  - For each iteration:

    - For each particle, configure and train the SAE with its current position (hyperparameters).

    - Evaluate the trained model on the validation set to compute the fitness.

    - Update the particle's personal best and the swarm's global best.

    - Adaptively update each particle's velocity and position using the hierarchical self-adaptive mechanism, which dynamically adjusts PSO's inertia weight and acceleration coefficients for a better balance between exploration and exploitation [30].

  - Terminate after a fixed number of iterations or when convergence is achieved (i.e., the global best fitness stabilizes).
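
The loop described above can be sketched as a plain particle swarm optimizer. Two simplifications are assumed here and should not be read as the method of [30]: the inertia weight and acceleration coefficients follow a simple linear schedule instead of the hierarchical self-adaptive mechanism, and a cheap surrogate function stands in for "train the SAE and measure validation accuracy". The hyperparameter names, bounds, and surrogate optimum are hypothetical.

```python
import random


def surrogate_validation_score(params):
    """Stand-in for SAE training; peaks at log10(lr) = -3 and dropout = 0.3."""
    log_lr, dropout = params
    return 1.0 - (log_lr + 3.0) ** 2 / 10.0 - (dropout - 0.3) ** 2


def pso_maximize(fitness, bounds, n_particles=12, n_iters=40, seed=0):
    """Maximize `fitness` inside box `bounds` with time-varying PSO coefficients."""
    rng = random.Random(seed)
    dim = len(bounds)
    pos = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(n_particles)]
    vel = [[0.0] * dim for _ in range(n_particles)]
    pbest = [p[:] for p in pos]
    pbest_fit = [fitness(p) for p in pos]
    g = max(range(n_particles), key=lambda i: pbest_fit[i])
    gbest, gbest_fit = pbest[g][:], pbest_fit[g]

    for it in range(n_iters):
        frac = it / max(1, n_iters - 1)
        w = 0.9 - 0.5 * frac    # inertia: explore early, settle late
        c1 = 2.5 - 2.0 * frac   # pull toward the particle's own best
        c2 = 0.5 + 2.0 * frac   # pull toward the swarm's best
        for i in range(n_particles):
            for d, (lo, hi) in enumerate(bounds):
                r1, r2 = rng.random(), rng.random()
                v = (w * vel[i][d]
                     + c1 * r1 * (pbest[i][d] - pos[i][d])
                     + c2 * r2 * (gbest[d] - pos[i][d]))
                v_max = 0.5 * (hi - lo)  # clamp velocity to half the search range
                vel[i][d] = max(-v_max, min(v_max, v))
                pos[i][d] = max(lo, min(hi, pos[i][d] + vel[i][d]))
            fit = fitness(pos[i])
            if fit > pbest_fit[i]:
                pbest[i], pbest_fit[i] = pos[i][:], fit
                if fit > gbest_fit:
                    gbest, gbest_fit = pos[i][:], fit
    return gbest, gbest_fit


# Search space: log10(learning rate) in [-5, -1], dropout rate in [0, 0.8].
bounds = [(-5.0, -1.0), (0.0, 0.8)]
best_params, best_fit = pso_maximize(surrogate_validation_score, bounds)
print(best_params, best_fit)
```

In a real run, each fitness evaluation trains a model, so the swarm size and iteration count directly set the compute budget; caching results for repeated positions and stopping when the global best stops improving both reduce that cost.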

Model Training and Evaluation

- Final Model Training: Train the SAE model on the combined training and validation dataset using the optimal hyperparameters discovered by HSAPSO.

- Performance Assessment: Evaluate the final model on the held-out test set. Report standard metrics: Accuracy, Precision, Recall, F1-Score, and Area Under the ROC Curve (AUC-ROC).

- Robustness Check: Perform multiple runs with different random seeds to ensure the stability of the results (e.g., reported standard deviation of ±0.003 [30]).
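
The metrics listed above can be computed directly from predictions, as in the sketch below. The labels, scores, the 0.5 decision threshold, and the per-seed accuracies are made-up values for illustration; AUC-ROC is computed from its pairwise-ranking definition, which is adequate for small examples but slower than library implementations on large test sets.

```python
from statistics import mean, stdev


def classification_metrics(y_true, y_pred):
    """Accuracy, precision, recall, and F1 for binary labels (1 = druggable)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {
        "accuracy": (tp + tn) / len(y_true),
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }


def auc_roc(y_true, scores):
    """Probability that a random positive outscores a random negative (ties count 0.5)."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Hypothetical hold-out labels and model scores.
y_true = [1, 1, 1, 0, 0, 0, 1, 0]
scores = [0.9, 0.8, 0.4, 0.3, 0.6, 0.1, 0.7, 0.2]
y_pred = [1 if s >= 0.5 else 0 for s in scores]

metrics = classification_metrics(y_true, y_pred)
auc = auc_roc(y_true, scores)

# Hypothetical test accuracies from repeated runs with different random seeds.
accuracy_per_seed = [0.951, 0.955, 0.949, 0.957, 0.953]
print(metrics, auc)
print(mean(accuracy_per_seed), stdev(accuracy_per_seed))
```

Reporting the mean together with the standard deviation across seeds makes it easier to judge whether a difference between two frameworks exceeds run-to-run variation.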

Workflow Visualization: AI-Driven Target Identification

The diagram below outlines the logical workflow and iterative feedback loop for a robust, bias-resistant AI-driven target identification pipeline.

Diagram 1: Bias-Resistant AI Target Identification Workflow. This workflow emphasizes iterative learning and bias auditing to overcome teleological obstacles.

Research Reagent Solutions

Table 2: Essential Computational Tools & Datasets for AI-Driven Target Identification

| Resource Name | Type | Primary Function | Key Application in Workflow |
|---|---|---|---|
| DrugBank Database [30] | Chemical/Biological Database | Provides comprehensive drug, target, and interaction data. | Serves as a primary source of labeled data for training and benchmarking models. |
| AlphaFold Protein Structure Database [32] [35] | Structural Database | Provides highly accurate predicted 3D protein structures for targets with unknown experimental structures. | Enables structure-based feature extraction and target analysis where crystal structures are unavailable. |
| SWISS-MODEL [35] | Homology Modeling Tool | Provides automated protein structure homology modeling. | Generates reliable 3D models for target proteins to inform feature generation. |
| SHAP (SHapley Additive exPlanations) | Explainable AI Library | Explains the output of any machine learning model by quantifying feature importance. | Interprets "black-box" model predictions to build trust and generate biological hypotheses. |
| Python Scikit-learn | Machine Learning Library | Offers simple and efficient tools for data mining and analysis, including classic ML algorithms (SVM, Random Forest). | Useful for creating baseline models and performing standard data preprocessing tasks. |
| TensorFlow/PyTorch | Deep Learning Framework | Provides flexible ecosystems of tools, libraries, and community resources for building and deploying deep learning models. | Used to implement complex architectures like Stacked Autoencoders (SAEs) and Graph Neural Networks. |