Phylogenetic Congruence: Validating Theories for the Origin and Evolution of the Genetic Code

Abstract

This article synthesizes cutting-edge research on the origin of the genetic code, focusing on the use of phylogenetic congruence as a robust validation framework for competing theories. We explore the foundational principles of the stereochemical, coevolution, and error-minimization theories, detailing how modern phylogenomic methodologies are applied to test their predictions. For researchers and drug development professionals, the content provides critical insights into troubleshooting phylogenetic conflicts and optimizing analytical pipelines. A comparative analysis demonstrates how congruence with independent data sources, such as biogeography and dipeptide evolution in proteomes, is used to evaluate and corroborate these theories, with significant implications for synthetic biology and the engineering of novel genetic systems.

The Genetic Code Enigma: Foundational Theories and the Quest for an Evolutionary Timeline

The genetic code, the near-universal dictionary that maps nucleotide triplets to amino acids, is a fundamental pillar of life. Its structure is highly non-random: codons that differ by a single nucleotide tend to encode amino acids with similar physicochemical properties [1]. This arrangement reduces the impact of point mutations and translational errors. For decades, scientists have sought to explain how the code originated and evolved, leading to three dominant theories: the stereochemical theory, which posits direct chemical affinity between amino acids and their codons; the coevolution theory, which suggests the code expanded alongside amino acid biosynthesis pathways; and the error-minimization theory, which argues the code was shaped by natural selection to reduce the deleterious effects of translation errors [1] [2]. This guide provides an objective comparison of these theories, evaluating their core principles, supporting experimental data, and methodological approaches within the modern framework of phylogenetic congruence research.

Core Principles and Comparative Analysis

The table below summarizes the foundational hypotheses, strengths, and challenges associated with each of the three main theories.

| Theory | Core Principle | Proposed Evolutionary Driver | Key Supporting Evidence | Major Challenges |
|---|---|---|---|---|
| Stereochemical [1] [3] | Direct physicochemical affinity (e.g., hydrogen bonding, hydrophobic interactions) between amino acids and their specific codons or anticodons. | Initial assignment of codons based on molecular complementarity. | RNA aptamer experiments show binding sites for amino acids like Arg, Ile, and Tyr are enriched in their cognate codons/anticodons [3]. Molecular modeling studies propose specific structural fits. | Demonstrated for only a subset of amino acids (e.g., 3-7 of 20) [3] [4]. Lack of consistent, strong interactions for all amino acid-codon pairs. |
| Coevolution [1] [5] | The genetic code structure reflects the evolutionary expansion of amino acid biosynthesis pathways. New amino acids were assigned to codons previously used by their metabolic precursors. | Addition of new, biosynthetically derived amino acids into the existing code framework. | Observed codon sharing between biosynthetically related amino acids (e.g., Serine -> Tryptophan) [5]. Historical plausibility; aligns with a code that started with a small subset of prebiotic amino acids. | Requires a complex, pre-existing metabolic network. Does not fully explain the code's overall error-minimizing structure. |
| Error-Minimization [1] [6] | The code's arrangement was shaped by selective pressure to minimize the functional disruption caused by point mutations or translational misreading. | Natural selection acting to buffer organisms against the harmful effects of genetic errors. | Computational comparisons show the standard code is more robust than the vast majority of randomly generated alternative codes [1] [6]. Neighbouring codons typically code for physicochemically similar amino acids. | Difficult to evolve via codon reassignment in a mature, complex proteome (the "frozen accident" problem) [1]. |

A critical synthesis of these theories suggests they are not mutually exclusive. The modern genetic code is likely the product of a combination of factors: initial stereochemical interactions, stepwise expansion via coevolution, and progressive refinement through selection for error minimization [1] [2]. This integrated view is increasingly tested through phylogenetic congruence, which seeks convergent timelines from independent data sources like tRNA, protein domains, and dipeptide sequences [7].

Experimental Protocols for Theory Validation

Researchers employ distinct methodological approaches to gather evidence for each theory. The protocols below detail key experiments cited in the field.

Protocol for Testing Stereochemical Theory via RNA Aptamer Selection

This method tests whether random RNA sequences that bind specific amino acids are enriched with that amino acid's cognate codons [3].

- Objective: To empirically determine if there is a statistical association between an amino acid and its coding triplets within experimentally selected RNA binding sites (aptamers).

- Materials:

- Synthetic Random RNA Library: A large pool of single-stranded RNA molecules with a randomized region (e.g., 40-60 nucleotides).

- Target Amino Acid: The amino acid of interest (e.g., Arginine), often immobilized on a solid resin.

- Binding Buffer: To control pH and ionic strength.

- RT-PCR Reagents: For reverse transcription and polymerase chain reaction to amplify selected RNA sequences.

- High-Throughput Sequencing Platform: To sequence the enriched RNA pools.

- Procedure:

- Incubation: The random RNA library is incubated with the immobilized amino acid.

- Partitioning: Unbound RNA molecules are washed away, while RNA molecules with affinity for the amino acid are retained.

- Elution: The bound RNA is eluted from the column.

- Amplification: The eluted RNA is reverse-transcribed to DNA and amplified by PCR.

- Iteration: The incubation, partitioning, elution, and amplification steps are repeated for several rounds to enrich strongly binding sequences.

- Sequencing & Analysis: The final enriched pool is sequenced. The frequency of all nucleotide triplets in the binding sites is compared to their frequency in the initial random library. A statistically significant over-representation of the biological codons or anticodons for the target amino acid is considered supporting evidence for the stereochemical theory [3].
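The final analysis step can be sketched in a few lines of Python. This is a minimal illustration, not the pipeline used in [3]: the function names, the pseudocount, and the toy sequence pools are all assumptions made for the example, and a real analysis would restrict counting to the mapped binding sites and apply a proper significance test.

```python
from collections import Counter

def triplet_frequencies(sequences):
    """Relative frequency of every overlapping triplet across a pool of RNA sequences."""
    counts = Counter()
    for seq in sequences:
        for i in range(len(seq) - 2):
            counts[seq[i:i + 3]] += 1
    total = sum(counts.values())
    return {triplet: n / total for triplet, n in counts.items()}

def enrichment(selected, initial, triplets, pseudo=1e-6):
    """Ratio of selected-pool to initial-pool frequency for each triplet of interest.

    The pseudocount (an assumption of this sketch) avoids division by zero
    when a triplet is absent from the initial pool.
    """
    f_sel = triplet_frequencies(selected)
    f_ini = triplet_frequencies(initial)
    return {t: (f_sel.get(t, 0.0) + pseudo) / (f_ini.get(t, 0.0) + pseudo)
            for t in triplets}

# Hypothetical toy pools, for illustration only (not experimental data).
ARG_CODONS = ["CGU", "CGC", "CGA", "CGG", "AGA", "AGG"]
initial_pool = ["ACGUACGUAC", "UUGCAUGCAA", "GGAUCCAUGA"]
selected_pool = ["AGGCGAAGGA", "CGGAGACGGU", "UAGGCGGAGG"]

ratios = enrichment(selected_pool, initial_pool, ARG_CODONS)
for codon, ratio in sorted(ratios.items()):
    print(codon, f"{ratio:.2f}")
```

A ratio well above 1 for the cognate triplets would be the pattern the stereochemical theory predicts; the same function can be called with anticodon triplets to test the anticodon variant of the hypothesis.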

Protocol for Simulating Code Evolution via Error-Minimization

This in silico protocol tests how well the standard genetic code minimizes errors compared to alternatives [1] [6].

- Objective: To quantify the error-minimization level of the standard genetic code against a large sample of random or evolved alternative codes.

- Materials:

- Computational Resource: A high-performance computer or cluster.

- Amino Acid Similarity Matrix: A quantitative matrix (e.g., based on polarity, volume, or chemical properties) defining the "cost" of substituting one amino acid for another.

- Genetic Code Simulation Software: Custom scripts (e.g., in Python or R) to generate and evaluate genetic codes.

- Procedure:

- Define Cost Function: A cost function (Φ) is defined, representing the average "cost" of an error. For each codon, the cost of its being misread as each of its neighbouring codons (differing by a single nucleotide) is calculated using the similarity matrix and summed or averaged [6].

- Calculate Native Code Cost: The cost function (Φ) is calculated for the standard genetic code.

- Generate Alternative Codes: Millions of alternative genetic codes are generated by randomly assigning amino acids to codons.

- Statistical Comparison: The cost of the standard code is compared to the distribution of costs from the random codes. The result is often expressed as a percentile or p-value (e.g., the standard code is better than 99.99% of random codes) [1].

- Evolutionary Simulation (Optional): Codes can be evolved from a simple state by sequentially adding amino acids, with selection based on the cost function, to test if error-minimization can arise neutrally [6].
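The core of this procedure fits in a short script. The sketch below is a simplified illustration under stated assumptions: it uses the Kyte-Doolittle hydropathy scale as the similarity measure (published studies often use other properties such as polar requirement), weights all single-nucleotide errors equally, and generates random codes by shuffling amino acids among the synonymous codon blocks of the standard code while keeping stop codons fixed. The function names are hypothetical.

```python
import itertools
import random

BASES = "UCAG"
# Standard genetic code, codons ordered UUU, UUC, UUA, UUG, UCU, ... ("*" = stop).
AAS = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODONS = ["".join(c) for c in itertools.product(BASES, repeat=3)]
STANDARD = dict(zip(CODONS, AAS))

# Kyte-Doolittle hydropathy values (choice of property is an assumption of this sketch).
HYDROPATHY = {
    "A": 1.8, "R": -4.5, "N": -3.5, "D": -3.5, "C": 2.5, "Q": -3.5, "E": -3.5,
    "G": -0.4, "H": -3.2, "I": 4.5, "L": 3.8, "K": -3.9, "M": 1.9, "F": 2.8,
    "P": -1.6, "S": -0.8, "T": -0.7, "W": -0.9, "Y": -1.3, "V": 4.2,
}

def code_cost(code):
    """Mean squared hydropathy change over all single-nucleotide sense-to-sense errors."""
    total, n = 0.0, 0
    for codon, aa in code.items():
        if aa == "*":
            continue
        for pos in range(3):
            for base in BASES:
                if base == codon[pos]:
                    continue
                neighbour = codon[:pos] + base + codon[pos + 1:]
                aa2 = code[neighbour]
                if aa2 == "*":
                    continue  # errors to stop codons are ignored in this sketch
                total += (HYDROPATHY[aa] - HYDROPATHY[aa2]) ** 2
                n += 1
    return total / n

def random_code(rng):
    """Shuffle the 20 amino acids among the standard code's synonymous blocks."""
    amino_acids = sorted(set(AAS) - {"*"})
    shuffled = amino_acids[:]
    rng.shuffle(shuffled)
    mapping = dict(zip(amino_acids, shuffled))
    mapping["*"] = "*"  # stop codons stay where they are
    return {codon: mapping[aa] for codon, aa in STANDARD.items()}

def fraction_better(n_codes, seed=0):
    """Fraction of random codes with a lower cost than the standard code."""
    rng = random.Random(seed)
    standard_cost = code_cost(STANDARD)
    better = sum(code_cost(random_code(rng)) < standard_cost for _ in range(n_codes))
    return better / n_codes

if __name__ == "__main__":
    print("standard code cost:", round(code_cost(STANDARD), 3))
    print("fraction of random codes that are better:", fraction_better(10_000))
```

The reported fraction plays the role of the percentile or p-value in the statistical comparison step. Changing `HYDROPATHY` to another property table, or weighting transitions and transversions differently inside `code_cost`, changes the result, which is why published estimates of the code's robustness vary.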

Protocol for Investigating Coevolution via Phylogenetic Congruence

This bioinformatics protocol tests whether independent molecular fossils converge on a consistent evolutionary timeline for the code's expansion [7].

- Objective: To reconstruct the historical order of amino acid incorporation into the genetic code and test for congruence between different phylogenetic datasets.

- Materials:

- Genomic/Proteomic Datasets: Large, curated databases of protein sequences and structures across the tree of life (e.g., from Archaea, Bacteria, Eukarya).

- Phylogenetic Analysis Software: Tools for building evolutionary trees (e.g., based on protein domains, tRNA sequences, or dipeptide composition).

- Statistical Packages: For performing congruence tests and data analysis.

- Procedure:

- Data Collection: Assemble datasets for different molecular features:

- tRNA Phylogeny: Build an evolutionary tree of tRNA molecules.

- Protein Domain Evolution: Map the evolution of structural units in proteins.

- Dipeptide Chronology: Track the evolutionary appearance of all 400 possible dipeptide pairs [7].

- Independent Timeline Reconstruction: Use each dataset to infer the relative order in which amino acids entered the genetic code. For example, amino acids found in more ancient protein domains or dipeptides are considered "early."

- Congruence Testing: Statistically compare the evolutionary timelines derived from the tRNA, protein domain, and dipeptide data. Significant congruence (i.e., all three sources telling the same story) provides strong, independent support for a coevolutionary expansion of the code [7].
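One simple way to quantify agreement between two reconstructed timelines is a rank correlation with a permutation test. The sketch below is an illustration only: the source does not specify which congruence statistic was used in [7], the function names are hypothetical, and the two amino acid orderings are invented toy data rather than published results.

```python
import random

def ranks(order):
    """Map each amino acid to its position (1 = earliest) in a timeline."""
    return {aa: i for i, aa in enumerate(order, start=1)}

def spearman_rho(order_a, order_b):
    """Spearman rank correlation between two tie-free orderings of the same items."""
    ra, rb = ranks(order_a), ranks(order_b)
    if set(ra) != set(rb):
        raise ValueError("both timelines must contain the same amino acids")
    n = len(ra)
    d2 = sum((ra[aa] - rb[aa]) ** 2 for aa in ra)
    return 1 - 6 * d2 / (n * (n * n - 1))

def permutation_p_value(order_a, order_b, n_perm=2000, seed=0):
    """One-sided p-value: how often a shuffled timeline agrees at least as well."""
    rng = random.Random(seed)
    observed = spearman_rho(order_a, order_b)
    shuffled = list(order_b)
    hits = 0
    for _ in range(n_perm):
        rng.shuffle(shuffled)
        if spearman_rho(order_a, shuffled) >= observed:
            hits += 1
    return (hits + 1) / (n_perm + 1)

# Hypothetical timelines (earliest first), for illustration only.
timeline_trna = ["Tyr", "Ser", "Leu", "Val", "Ala", "Trp"]
timeline_domains = ["Ser", "Tyr", "Leu", "Ala", "Val", "Trp"]

rho = spearman_rho(timeline_trna, timeline_domains)
p = permutation_p_value(timeline_trna, timeline_domains)
print(f"rho = {rho:.3f}, permutation p = {p:.4f}")
```

With three data sources, the same comparison would be run for each pair of timelines (tRNA versus domains, tRNA versus dipeptides, domains versus dipeptides), and congruence would be claimed only if all pairs agree.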

The table below lists key materials and computational tools used in experimental and theoretical research on the genetic code.

| Item/Tool Name | Function/Application | Relevance to Theory |
|---|---|---|
| Immobilized Amino Acids | Serves as a fixed ligand for selecting specific RNA aptamers from a random pool. | Stereochemical Theory: Core reagent for affinity selection experiments [3]. |
| Random RNA Library | A diverse pool of RNA sequences used as the starting material for in vitro selection (SELEX). | Stereochemical Theory: Provides the molecular diversity to discover RNA binders. |
| Amino Acid Similarity Matrix | A quantitative table that assigns a "cost" to substituting one amino acid for another based on physicochemical properties. | Error-Minimization: The foundational metric for calculating the cost of translational errors [6]. |
| High-Performance Computing Cluster | Provides the computational power to generate and evaluate millions of simulated genetic codes. | Error-Minimization: Essential for robust statistical comparison against the standard code. |
| Phylogenetic Software (e.g., MEGA, RAxML) | Reconstructs evolutionary histories and timelines from molecular sequence data. | Coevolution & Congruence: Used to build trees of tRNAs, protein domains, etc., to infer the order of amino acid recruitment [7]. |
| Curated Proteome Databases | Provides the raw protein sequence data from diverse organisms for phylogenetic analysis. | Coevolution & Congruence: The primary data source for tracking the evolution of dipeptides and protein domains. |

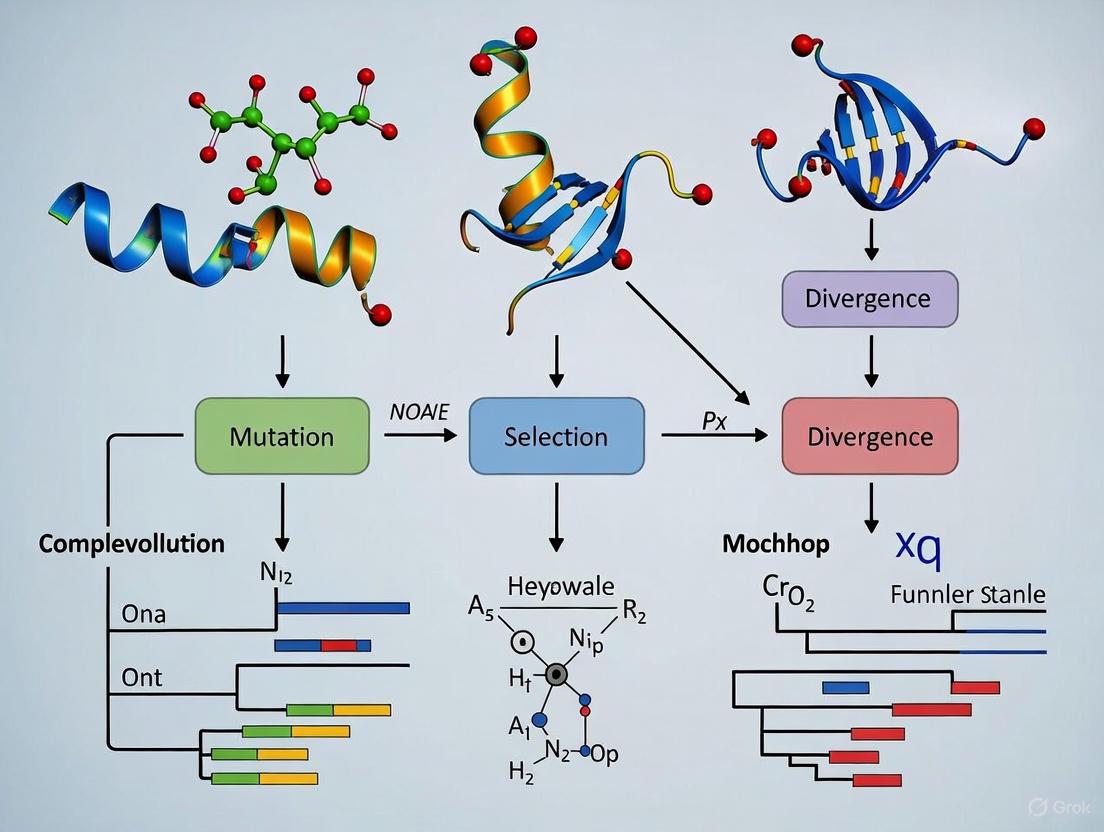

Visualizing Theoretical and Experimental Workflows

The following diagrams map the logical relationships within each theory and the key experimental workflow for phylogenetic congruence.

Stereochemical Theory Logic Map

This diagram illustrates the core premise and challenge of the stereochemical theory.

Coevolution Theory Expansion Pathway

This flowchart outlines the stepwise expansion of the genetic code as proposed by the coevolution theory.

Error-Minimization Code Robustness

This conceptual diagram shows how the standard genetic code minimizes the impact of point mutations.

Phylogenetic Congruence Research Workflow

This flowchart details the experimental protocol for testing phylogenetic congruence in genetic code evolution.

The "Frozen Accident" theory, proposed by Francis Crick in 1968, posited that the standard genetic code (SGC) became universal because any change in codon assignment after its establishment would be lethal, effectively freezing its structure [8] [9]. This perspective suggested the code's fundamental properties were largely historical accidents, preserved not due to optimality but through evolutionary inertia. For decades, this viewpoint shaped understanding of the code's invariance across life. However, contemporary research now challenges this premise, revealing a more dynamic evolutionary narrative. Evidence from comparative genomics, phylogenetic analyses, and the discovery of variant codes across diverse organisms demonstrates that the genetic code is not entirely frozen. While the core structure remains remarkably conserved, several lineages have undergone successful codon reassignments, providing a natural experimental framework to test the boundaries of code evolution and its functional constraints. This guide objectively compares the frozen accident perspective with emerging evidence, providing researchers with methodological insights and data to navigate this evolving paradigm and its implications for synthetic biology and drug development.

Theoretical Frameworks of Genetic Code Evolution

The evolution of the genetic code is explained by several non-mutually exclusive theories, which range from emphasizing historical contingency to adaptive forces. The following table provides a comparative overview of the principal theoretical frameworks.

Table 1: Core Theories of Genetic Code Evolution

| Theory | Core Principle | Key Predictions | Supporting Evidence |
|---|---|---|---|
| Frozen Accident [8] [9] | The code is universal because any change after its initial establishment would be highly deleterious, freezing a potentially arbitrary assignment. | Extreme universality of the code; variant codes are non-viable or highly constrained. | Near-universality of the SGC; computational models showing "freezing" dynamics [10]. |
| Stereochemical [1] [9] | Codon assignments are dictated by physicochemical affinities between amino acids and their cognate codons or anticodons. | Direct, measurable interactions between specific amino acids and nucleotide triplets. | Some experimental evidence for weak affinities; remains an active area of research [8]. |
| Coevolution [1] | The code's structure coevolved with amino acid biosynthesis pathways. New amino acids were assigned codons related to their biosynthetic precursors. | Patterns of codon reassignments between biosynthetically related amino acids. | Contiguous areas in the code table for related amino acids (e.g., serine family: Ser, Trp) [1]. |
| Error Minimization [8] [1] | The code was selected for robustness to minimize the adverse effects of point mutations and translation errors. | The SGC is significantly more robust than random alternative codes. | Quantitative analyses show the SGC is robust, though not optimal, with a probability of < 10⁻⁶ to reach its level by chance [8]. |

These theories provide a scaffold for interpreting empirical data. The frozen accident does not preclude a role for initial selective pressures but emphasizes the immutability of the code once a critical threshold of complexity is crossed [8]. In contrast, the discovery of variant codes provides a strong test case for evaluating these theories, particularly the strictest interpretation of the frozen accident.

Empirical Evidence: Variant Genetic Codes and Their Drivers

The discovery of variant genetic codes across diverse life forms provides direct, empirical counterpoints to a strictly "frozen" code. These variants are not random but follow predictable patterns and mechanisms.

Table 2: Variant Genetic Codes and Their Evolutionary Mechanisms

| Variant Type | Mechanism of Reassignment | Biological Context | Example |
|---|---|---|---|
| Sense-to-Sense Codon Reassignment | Ambiguous Intermediate: A codon is decoded by multiple tRNAs before the original tRNA is lost [1]. | Widespread in mitochondria and bacteria with reduced genomes. | Reassignment of the CUG codon from leucine to serine in the fungus Candida zeylanoides [1]. |
| Stop-to-Sense Codon Reassignment | Codon Capture: A codon disappears from a genome due to mutational pressure, then reappears and is captured by a mutant tRNA [1]. | Common in organelles and parasitic bacteria. | Reassignment of the stop codon UGA to tryptophan in many mycoplasmas and mitochondria [8] [1]. |
| Incorporation of Non-Canonical Amino Acids | Specialized machinery that overrides the standard interpretation of a codon. | Limited to specific lineages; requires complex auxiliary factors. | Selenocysteine: Encoded by UGA with a specific regulatory element [8] [1]. Pyrrolysine: Encoded by UAG in some archaea [8] [1]. |

A critical insight from studying these variants is that they are almost exclusively "minor" deviations, involving one or two reassignments and typically affecting rare amino acids or stop codons [8]. This supports a modified frozen accident view: while the core structure of the SGC is locked in due to the deleteriousness of large-scale change, its peripheries are susceptible to evolutionary tweaking, especially in genomes where the cost of reassignment is low (e.g., small genomes with reduced proteomes) [8] [1]. This demonstrates that the code is evolvable, but within strict constraints.

Experimental Workflow for Analyzing Code Variants

The following diagram illustrates the general workflow for identifying and validating a variant genetic code, integrating genomic, phylogenetic, and experimental data.

Diagram 1: Workflow for identifying and validating variant genetic codes.
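The genomic screening stage of such a workflow can be illustrated with a simple heuristic: if annotated coding sequences, read with the standard code, repeatedly contain the same in-frame "stop" codon before their true end, that codon is a candidate for stop-to-sense reassignment. The sketch below is an assumption-laden illustration, not a published method; the function names, the 0.5 threshold, and the toy sequences are hypothetical, and any candidate would still need the phylogenetic and mass spectrometry validation described in this guide.

```python
from collections import Counter

STANDARD_STOPS = {"TAA", "TAG", "TGA"}

def internal_stops(cds):
    """Count standard stop codons found in frame before the final codon of a CDS."""
    cds = cds.upper().replace("U", "T")
    usable = len(cds) - len(cds) % 3  # ignore a trailing partial codon
    codons = [cds[i:i + 3] for i in range(0, usable, 3)]
    return Counter(c for c in codons[:-1] if c in STANDARD_STOPS)

def flag_candidates(cds_list, min_fraction=0.5):
    """Return stop codons that occur internally in at least min_fraction of the CDSs."""
    genes_with_hit = Counter()
    for cds in cds_list:
        for codon in internal_stops(cds):
            genes_with_hit[codon] += 1
    return {codon for codon, n in genes_with_hit.items()
            if n / len(cds_list) >= min_fraction}

# Hypothetical toy coding sequences, for illustration only.
toy_genes = ["ATGTGATGGTAA", "ATGAAATGATAA", "ATGGGGTAA"]
print(flag_candidates(toy_genes))
```

In this toy set, TGA appears in frame inside two of three genes, so it is flagged; a genome using the standard code would be expected to return an empty set apart from annotation errors and pseudogenes.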

Phylogenetic Congruence as a Validation Tool

Phylogenetic congruence—the agreement between evolutionary histories inferred from different data sources—is a powerful tool for testing evolutionary hypotheses, including the history of the genetic code [11] [12]. The principle is that if the genetic code is truly universal and frozen, then phylogenies built from different genes should be largely congruent, reflecting a single, shared evolutionary history. Incongruence, however, can signal specific evolutionary events, including codon reassignments.

Methodological Protocol: Testing for Phylogenetic Congruence

Objective: To determine whether molecular and morphological data partitions, or genes from different organelles, evolved under a single evolutionary history (tree topology) or show significant conflict.

Key Experimental Steps:

Data Partitioning: Compile sequence alignments for the taxa of interest. Partitions can be defined by data type (e.g., molecular versus morphological characters) or by genomic compartment (e.g., chloroplast versus mitochondrial genes).

Phylogenetic Inference: Reconstruct phylogenetic trees for each data partition independently using model-based methods (e.g., Maximum Likelihood or Bayesian Inference in software like MrBayes [11]). For morphological data, a Bayesian implementation of the Mk model is commonly used [11].

Incongruence Testing:

- Bayes Factor Combinability Test: This test compares the marginal likelihoods of two models [11]:

- Model 1 (M1): Assumes each data partition has an independent tree topology and branch lengths.

- Model 2 (M2): Assumes all partitions share a single tree topology but have independent branch lengths.

- If M2 is significantly better supported, the data are considered "combinable," meaning they are best explained by a common evolutionary history (congruent). If not, significant incongruence exists [11].
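Once the two marginal log-likelihoods have been estimated, the comparison itself is simple arithmetic. The sketch below assumes the marginal likelihoods are supplied as natural-log values and interprets the statistic 2 ln(BF) with the widely used Kass and Raftery (1995) thresholds; that choice of thresholds, the function names, and the example numbers are assumptions of this illustration rather than details taken from [11].

```python
def two_ln_bayes_factor(ln_ml_shared, ln_ml_independent):
    """2 ln(BF) in favour of the shared-topology model (M2) over independent topologies (M1)."""
    return 2.0 * (ln_ml_shared - ln_ml_independent)

def interpret(stat):
    """Verbal interpretation of 2 ln(BF) using Kass and Raftery's thresholds (2, 6, 10)."""
    strength = abs(stat)
    favoured = "shared topology (M2)" if stat > 0 else "independent topologies (M1)"
    if strength <= 2:
        return "no meaningful preference"
    if strength <= 6:
        label = "positive"
    elif strength <= 10:
        label = "strong"
    else:
        label = "very strong"
    return f"{label} support for {favoured}"

# Hypothetical marginal log-likelihoods, for illustration only.
stat = two_ln_bayes_factor(ln_ml_shared=-1000.0, ln_ml_independent=-1010.0)
print(stat, interpret(stat))
```

A positive value favours combining the partitions on one tree, while a strongly negative value indicates significant incongruence between them.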

Topological Comparison: Visually and statistically compare the resulting trees from each partition to identify specific, well-supported conflicting relationships (e.g., using consensus networks or metrics like Robinson-Foulds distance) [11] [13].
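The Robinson-Foulds distance counts the bipartitions (splits) that appear in one tree but not the other. The sketch below is a minimal pure-Python illustration that represents trees as nested tuples of leaf names and treats them as unrooted; the representation and function names are assumptions of this example, and production analyses would normally rely on an established phylogenetics library.

```python
def leaf_sets(tree, collected):
    """Return the leaf set of a nested-tuple tree, recording each internal node's leaf set."""
    if isinstance(tree, str):
        return frozenset([tree])
    leaves = frozenset().union(*(leaf_sets(child, collected) for child in tree))
    collected.append(leaves)
    return leaves

def splits(tree):
    """Non-trivial bipartitions of an unrooted tree, each stored as the side lacking a reference leaf."""
    clades = []
    all_leaves = leaf_sets(tree, clades)
    reference = min(all_leaves)
    result = set()
    for clade in clades:
        side = all_leaves - clade if reference in clade else clade
        if 2 <= len(side) <= len(all_leaves) - 2:  # skip trivial splits
            result.add(side)
    return result

def robinson_foulds(tree_a, tree_b):
    """Number of splits present in exactly one of the two trees."""
    return len(splits(tree_a) ^ splits(tree_b))

# Hypothetical partition trees over the same five taxa, for illustration only.
chloroplast_tree = ((("A", "B"), "C"), ("D", "E"))
mitochondrial_tree = ((("A", "C"), "B"), ("D", "E"))
print(robinson_foulds(chloroplast_tree, mitochondrial_tree))
```

A distance of 0 means the two partitions support identical unrooted topologies; larger values point to conflicting relationships, which can then be inspected for node support before concluding that the conflict is real.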

Application to Genetic Code Evolution: This methodology can be applied to test if a group of organisms with a suspected variant code forms a monophyletic clade in all gene trees, or if the reassignment event creates incongruence due to misannotation or convergent evolution. Studies on organelle genomes have shown that while chloroplast and mitochondrial topologies are largely congruent, specific, well-supported conflicts exist, revealing their independent evolutionary trajectories [13].

The Scientist's Toolkit: Key Research Reagents and Solutions

Advancing research in genetic code evolution and phylogenetic congruence requires a specific set of computational and experimental tools.

Table 3: Essential Research Reagents and Tools for Code Evolution Studies

| Category / Reagent | Specific Tool / Database | Primary Function in Analysis |
|---|---|---|
| Genomic Databases | NCBI GenBank, RefSeq | Source of primary genomic and organellar sequence data for identifying variant codes [13]. |
| Sequence Alignment | MAFFT, VSEARCH | Multiple sequence alignment and clustering of orthologous gene sequences [13]. |
| Phylogenetic Software | MrBayes, PartitionFinder2 | Bayesian phylogenetic inference and selection of best-fit evolutionary models for data partitions [11]. |
| Incongruence Testing | Stepping Stone Analysis (in MrBayes) | Calculating marginal likelihoods for Bayes Factor combinability tests [11]. |
| Synthetic Biology Tools | Engineered Aminoacyl-tRNA Synthetases | Key reagents for incorporating non-canonical amino acids, demonstrating code malleability [1]. |
| Validation Technology | Mass Spectrometry (MS) | Experimental validation of protein sequences to confirm codon reassignments [1]. |

The collective evidence from variant codes, phylogenetic analyses, and synthetic biology leads to a consensus view that supersedes the strictest interpretation of the Frozen Accident. The genetic code is best understood as a "thawing" or "evolvable" accident [14]. Its core structure is remarkably robust and difficult to change, justifying Crick's original insight into the deleteriousness of major reassignments. However, its peripheries are malleable under specific evolutionary pressures, such as genome reduction [1]. This revised understanding is crucial for researchers in drug development and synthetic biology. It implies that the code can be engineered, but success depends on understanding the complex, co-evolved modules that maintain its fidelity [14]. The future of genetic code research lies in leveraging phylogenetic and comparative methods to map these constraints, guiding the rational design of orthogonal translation systems for developing novel therapeutics.

The study of molecular clocks is fundamental to understanding the tempo and mode of biological evolution. This guide compares phylogenetic timelines derived from three core components of the translation machinery: transfer RNA (tRNA), protein structural domains, and aminoacyl-tRNA synthetases (AARS). By examining congruence across these evolutionary records, we validate theories about genetic code origin and expansion. The integration of these temporal signals provides a robust framework for reconstructing deep evolutionary history, with direct implications for molecular dating in biomedical and synthetic biology research. Experimental data from phylogenomic analyses reveal consistent timelines that trace back to the last universal common ancestor (LUCA) and inform the stepwise expansion of the amino acid alphabet.

The molecular clock hypothesis proposes that biomolecules evolve at rates that are approximately constant over time, providing a foundation for dating evolutionary divergences. For the genetic code's components—tRNA, protein domains, and AARS—this principle allows reconstruction of evolutionary events spanning billions of years. AARS enzymes are particularly significant as they constitute the operational interface between nucleic acids and proteins, directly implementing the genetic code by catalyzing the attachment of amino acids to their cognate tRNAs [15]. Their deep evolutionary history predates the root of the universal phylogenetic tree, making them invaluable molecular fossils for tracing life's early evolution [16].

The central thesis of phylogenetic congruence research posits that independent evolutionary records should yield consistent timelines. Recent studies have demonstrated striking congruence between the evolutionary histories of protein domains, tRNAs, and dipeptide sequences, providing compelling evidence for a coordinated expansion of the genetic code [7]. This guide systematically compares the phylogenetic timelines derived from these three systems, evaluates methodological approaches for their analysis, and presents experimental data validating their congruence, thereby offering researchers a comprehensive framework for investigating molecular evolution.

Comparative Analysis of Phylogenetic Timelines

Timeline of Aminoacyl-tRNA Synthetase Evolution

AARS enzymes are organized into two structurally distinct classes (Class I and Class II) that likely descended from complementary strands of a single ancestral bidirectional gene [17]. These enzymes emerged before LUCA and have undergone complex evolutionary trajectories including gene duplications, functional divergences, and horizontal gene transfers. The evolutionary chronology of AARS reveals a structured addition of amino acids to the genetic code, with simpler amino acids appearing earlier and more complex ones incorporated later [7].

Table 1: Evolutionary Chronology of Aminoacyl-tRNA Synthetases and Associated Amino Acids

| Evolutionary Group | Amino Acids | Evolutionary Features | AARS Class Association |
|---|---|---|---|
| Group 1 (Oldest) | Tyrosine, Serine, Leucine | Associated with origin of editing functions and early operational code | Both Class I and Class II |
| Group 2 | 8 additional amino acids | Linked to editing mechanisms and code refinement | Both Class I and Class II |
| Group 3 (Youngest) | Remaining amino acids | Derived functions related to standard genetic code | Both Class I and Class II |

Class I AARS specify 11 amino acids when the class I lysyl-tRNA synthetase is counted (Met, Val, Ile, Leu, Cys, Glu, Gln, Lys, Arg, Trp, Tyr), while Class II synthetases specify 10 amino acids (Ala, His, Pro, Thr, Ser, Gly, Phe, Asp, Asn, Lys) [16]. Lysine appears in both lists because the class rule was broken by the discovery of a class I version of lysyl-tRNA synthetase in archaea, which illustrates the complex evolutionary history of these enzymes [16]. The timeline of AARS evolution is characterized by functional bifurcations in which ancestral enzymes with broader specificity differentiated into highly specific modern synthetases through both subfunctionalization and neofunctionalization events [17].

tRNA Phylogenetic Timeline

The evolutionary history of tRNA yields a timeline that complements the AARS record. Phylogenetic analysis of tRNA sequences has enabled researchers to categorize amino acids into three temporal groups based on their entry into the genetic code [7]. The oldest amino acids (Group 1, including tyrosine, serine, and leucine) and a second group of eight additional amino acids (Group 2) were associated with the origin of editing functions in synthetase enzymes and the establishment of an early operational code [7]. The congruence between tRNA and AARS phylogenies provides strong evidence for their co-evolution alongside the expanding genetic code.

Recent analyses of dipeptide sequences across 1,561 proteomes have revealed synchronous appearance of complementary dipeptide pairs (e.g., alanine-leucine and leucine-alanine), suggesting that dipeptides arose encoded in complementary strands of nucleic acid genomes that interacted with primordial synthetase enzymes [7]. This duality in dipeptide appearance provides a remarkable connection between tRNA evolution and the structural constraints of early proteins.

Protein Domain Timeline

The phylogenetic timeline of protein structural domains, derived from structural alignments and comparative genomics, provides a third independent record of molecular evolution. Protein structure is more highly conserved than sequence, allowing researchers to glimpse evolutionary events that predate the root of the universal phylogenetic tree [16]. Structural alignments of AARS catalytic domains have enabled reconstruction of their deep evolutionary history, revealing that the Rossmann fold of Class I AARS and the unique mixed α+β fold of Class II AARS represent ancient structural solutions to the challenge of aminoacylation.

The congruence between protein domain evolution, tRNA histories, and dipeptide sequences provides robust validation of the reconstructed timeline of genetic code expansion [7]. All three sources of evolutionary information reveal the same progression of amino acids being added to the genetic code in a specific order, supporting the hypothesis that the modern genetic code emerged through a stepwise process of alphabet expansion and refinement.

Methodological Framework for Phylogenetic Timeline Analysis

Molecular Clock Models and Their Applications

Molecular dating relies on various clock models that accommodate different evolutionary patterns:

Table 2: Molecular Clock Models for Phylogenetic Analysis

| Clock Model | Key Assumptions | Best Applications | Software Implementation |
|---|---|---|---|
| Strict Clock | Constant evolutionary rate across all branches | Shallow divergences, closely related sequences | BEAST [18] |
| Relaxed Clock (Uncorrelated) | Each branch has independent rate drawn from probability distribution | Deep divergences with rate variation | BEAST (log-normal, exponential, gamma distributions) [18] |
| Random Local Clock | Limited number of rate changes across tree | Intermediate between strict and relaxed clocks | BEAST [18] |
| Fixed Local Clock | Pre-specified clades have different but constant rates | Testing rate variation in known lineages | BEAST [18] |

The uncorrelated relaxed clock models implemented in BEAST allow each branch to have its own evolutionary rate drawn from an underlying probability distribution (log-normal, exponential, or gamma) [18]. These models are particularly valuable for analyzing deep divergences where evolutionary rates may vary significantly across lineages.
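The idea behind an uncorrelated log-normal clock can be illustrated by simulating it directly: every branch receives its own rate, drawn independently from one shared log-normal distribution. The sketch below is a conceptual illustration and does not reproduce BEAST's implementation; the function names, parameter values, and branch durations are assumptions chosen for the example.

```python
import math
import random

def sample_branch_rates(n_branches, mean_rate, sigma, seed=None):
    """Draw one independent rate per branch from a log-normal with the given mean.

    sigma is the standard deviation on the log scale; mu is shifted so that
    the expected rate equals mean_rate. sigma = 0 recovers a strict clock.
    """
    rng = random.Random(seed)
    mu = math.log(mean_rate) - 0.5 * sigma ** 2
    return [rng.lognormvariate(mu, sigma) for _ in range(n_branches)]

def expected_substitutions(rates, durations):
    """Expected substitutions per site on each branch: rate multiplied by elapsed time."""
    return [rate * time for rate, time in zip(rates, durations)]

# Hypothetical branch durations (arbitrary time units), for illustration only.
durations = [10.0, 10.0, 25.0, 25.0, 40.0]
rates = sample_branch_rates(len(durations), mean_rate=0.01, sigma=0.5, seed=42)
for rate, length in zip(rates, expected_substitutions(rates, durations)):
    print(f"rate = {rate:.4f}  branch length = {length:.3f}")
```

Because the rates are drawn independently, two sister branches of equal duration can end up with quite different lengths in substitutions, which is the behaviour that distinguishes this model from a strict clock.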

Temporal Signal Assessment with TempEst

Prior to molecular dating, it is essential to assess the temporal signal and "clocklikeness" of molecular sequence data. TempEst software provides tools for investigating the relationship between root-to-tip genetic distances and sampling dates [19]. The software can identify outliers, evaluate clocklike evolution, and suggest optimal rooting positions compatible with a molecular clock assumption. TempEst supports analysis of both contemporaneous trees and dated-tip trees where sequences have been collected at different times [19].
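For dated-tip data, the underlying check is an ordinary least-squares regression of root-to-tip distance on sampling date: the slope estimates the substitution rate, the x-intercept estimates the age of the root, and R² summarizes how clocklike the data are. The sketch below reimplements that regression in plain Python as an illustration; it is not TempEst's code, and the function name and example values are assumptions.

```python
def root_to_tip_regression(dates, distances):
    """Least-squares fit of root-to-tip distance against sampling date.

    Returns (rate, root_date, r_squared), where rate is the slope and
    root_date is the x-intercept of the fitted line.
    """
    n = len(dates)
    mean_x = sum(dates) / n
    mean_y = sum(distances) / n
    sxx = sum((x - mean_x) ** 2 for x in dates)
    syy = sum((y - mean_y) ** 2 for y in distances)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(dates, distances))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    r_squared = (sxy * sxy) / (sxx * syy)
    root_date = -intercept / slope
    return slope, root_date, r_squared

# Hypothetical sampling years and root-to-tip distances, for illustration only.
years = [2000, 2005, 2010, 2015]
dists = [0.0102, 0.0148, 0.0203, 0.0249]
rate, root_year, r2 = root_to_tip_regression(years, dists)
print(f"rate = {rate:.5f} subs/site/year, root ~ {root_year:.1f}, R^2 = {r2:.3f}")
```

A low R² or a negative slope would indicate weak temporal signal, in which case a dated analysis is unlikely to be reliable. This regression only applies to trees with tips sampled at different times; it cannot calibrate trees built entirely from contemporaneous sequences.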

Ancestral State Reconstruction and Visualization

Ancestral state reconstruction methods enable researchers to infer historical character states at internal nodes of phylogenetic trees. Stochastic mapping approaches implemented in phytools allow simulation of evolutionary histories under continuous-time Markov models [20]. The resulting ancestral state probabilities can be visualized on phylogenies using color-coded branches or node symbols, providing intuitive displays of evolutionary trajectories [20]. For discrete characters, these methods can reconstruct the evolution of genetic elements, functional states, or biogeographic distributions.

Figure 1: Molecular dating workflow for phylogenetic timeline reconstruction.

Experimental Validation of Timeline Congruence

Phylogenomic Analysis of Dipeptide Evolution

A recent large-scale study analyzed 4.3 billion dipeptide sequences across 1,561 proteomes representing Archaea, Bacteria, and Eukarya to reconstruct dipeptide evolution [7]. The researchers constructed phylogenetic trees and compared them to established timelines of protein domain and tRNA evolution. Strikingly, they found congruence across all three data sources, with each revealing the same progression of amino acids being added to the genetic code [7]. This congruence provides strong evidence for the coordinated expansion of the genetic code and validates the use of multiple molecular systems for deep evolutionary reconstruction.

The study also discovered synchronous appearance of complementary dipeptide pairs (e.g., AL and LA), suggesting that dipeptides were encoded in complementary strands of nucleic acid genomes that interacted with primordial synthetase enzymes [7]. This finding connects the evolution of tRNA with the structural constraints of early proteins and provides mechanistic insight into how the genetic code might have expanded.

Urzyme Studies and Ancestral AARS Reconstruction

Urzymes (catalytically active fragments of modern AARS) provide experimental models for ancestral stages of AARS evolution. These 120-130 residue constructs retain approximately 60% of the transition state stabilization free energy of modern AARS and offer insights into early stages of genetic code evolution [21]. Recent studies have used deep learning algorithms (ProteinMPNN and AlphaFold2) to redesign optimized LeuAC urzymes derived from leucyl-tRNA synthetase, resulting in variants with enhanced solubility and catalytic proficiency [21].

Urzyme studies have demonstrated that Class I urzymes are functionally competent even when apparently "modern" amino acids (histidine and lysine) are replaced with simpler alanine side chains, supporting the hypothesis that early genetic coding operated with a restricted amino acid alphabet [17]. This experimental approach provides direct biochemical validation of inferences derived from phylogenetic timelines.

Bayesian Phylogenetic Framework with Reduced Alphabet Models

Standard amino acid substitution models assume a constant 20-amino acid alphabet over evolutionary time, making them inappropriate for analyzing ancient proteins that originated when the genetic code was still expanding. To address this limitation, researchers have developed substitution models that account for evolutionary changes in coding alphabet size, implementing them in a Bayesian phylogenetic framework [17].

These models strongly support the two-alphabet hypothesis (19 states in a past epoch to 20 now) for "old" proteins like AARS that originated before LUCA, but reject it for "young" eukaryotic proteins [17]. The application of these models to AARS phylogenies provides slightly more realistic divergence estimates that are more consistent with Earth's history, while also revealing that standard methods overestimate divergence ages for proteins that originated under reduced coding alphabets.

Figure 2: Congruence validation across independent evolutionary records.

Research Reagent Solutions for Molecular Evolutionary Studies

Table 3: Essential Research Reagents and Computational Tools

| Reagent/Tool | Application | Key Features | Reference |
|---|---|---|---|
| BEAST Software | Bayesian evolutionary analysis | Molecular clock dating, relaxed phylogenetics | [18] |
| TempEst | Temporal signal analysis | Root-to-tip regression, outlier detection | [19] |
| Phytools R Package | Ancestral state reconstruction | Stochastic mapping, tree visualization | [20] |
| ProteinMPNN | Protein sequence redesign | Deep learning-based protein optimization | [21] |
| Reduced Alphabet Models | Ancient protein phylogenetics | Accounts for expanding genetic code | [17] |
| AARS Urzyme Constructs | Experimental evolution studies | Minimal catalytic domains of synthetases | [21] [17] |

The congruence of phylogenetic timelines derived from tRNA, protein domains, and AARS provides robust validation for current theories of genetic code expansion. The coordinated evolutionary histories of these three systems reveal a stepwise process where the genetic code expanded from a simpler form to the current 20-amino acid alphabet, with structural constraints of early proteins playing a formative role in this process. Methodological advances in molecular clock modeling, ancestral state reconstruction, and experimental analysis of urzymes have created a powerful toolkit for investigating deep evolutionary history.

For researchers in drug development and synthetic biology, these findings have practical implications. Understanding the evolutionary constraints on AARS and the genetic code informs efforts to engineer expanded genetic codes for novel amino acid incorporation [22]. The deep evolutionary perspective provided by phylogenetic timeline analysis highlights fundamental constraints and opportunities in genetic code engineering, enabling more rational design of synthetic biological systems. As machine learning approaches continue to enhance our ability to model and engineer ancestral proteins [21], the integration of phylogenetic insights with protein design promises to accelerate progress in both basic research and biotechnology applications.

The structure of the genetic code, the fundamental rules governing how nucleotide sequences are translated into proteins, has profound implications for molecular biology and bioengineering. Two prominent theories attempt to explain its organization: the Coevolution Theory and the Phylogenetic Congruence Theory. The coevolution theory posits that the genetic code expanded alongside amino acid biosynthetic pathways, with newer "product" amino acids inheriting codons from their biosynthetic "precursors" [23]. In contrast, the phylogenetic congruence theory proposes that amino acids were incorporated into the code in an order driven by structural demands of emerging proteins, as revealed through evolutionary timelines reconstructed from modern proteomes [24] [7]. This guide objectively compares experimental evidence supporting these theories, providing researchers with methodological frameworks and datasets for ongoing investigations into genetic code evolution.

Theoretical Frameworks and Supporting Evidence

The Coevolution Theory: Biosynthetic Linkages

The coevolution theory suggests the genetic code preserves a fossil record of amino acid biosynthesis evolution. Its original statistical support came from analyzing precursor-product pairs defined by known metabolic relationships [23].

Core Principle: The theory postulates that the earliest genetic code utilized a small set of prebiotically synthesized amino acids, then expanded as novel derivatives of these primordial amino acids were incorporated through evolving metabolic pathways [23]. A central tenet is that product amino acids synthesized from precursors usurped codons previously assigned to these precursors [23].

Defined Precursor-Product Pairs: The theory specifically defines biochemically justified precursor-product relationships, excluding those based on α-transaminations due to metabolic nonspecificity. The original formulation identified 13 such pairs:

- Glu → Gln, Glu → Pro, Glu → Arg

- Asp → Asn, Asp → Thr, Asp → Lys

- Ser → Cys, Ser → Trp

- Thr → Ile, Thr → Met

- Val → Leu

- Phe → Tyr

- Gln → His [23]

Statistical Foundation: Initial statistical analysis using the hypergeometric distribution indicated a very low probability (P = 0.00015) that the observed clustering of precursor-product amino acids in codon space could occur by chance, providing seemingly strong support for the theory [23].

The Phylogenetic Congruence Theory: Evolutionary Timelines

The phylogenetic congruence approach reconstructs evolutionary histories using comparative analysis of biological data across diverse organisms, revealing temporal relationships in genetic code development [24] [7].

Core Principle: This theory suggests the genetic code emerged through a coordinated process between operational RNA elements and structural demands of early proteins, with amino acids incorporated sequentially based on protein folding requirements rather than biosynthetic relationships [7].

Dipeptide Chronology: Research analyzing 4.3 billion dipeptide sequences across 1,561 proteomes revealed distinct chronological patterns in amino acid incorporation. The earliest dipeptides contained Leu, Ser, and Tyr, followed by those containing Val, Ile, Met, Lys, Pro, and Ala [24]. This timeline aligns with the emergence of an operational RNA code in the acceptor arm of tRNA before implementation of the standard genetic code in the anticodon loop [24].

Duality Discovery: A remarkable finding was the synchronous appearance of dipeptide-antidipeptide pairs (e.g., AL and LA) along the evolutionary timeline, suggesting an ancestral duality of bidirectional coding operating at the proteome level [7]. This synchronicity indicates dipeptides arose encoded in complementary strands of nucleic acid genomes [7].

Table 1: Key Experimental Evidence Supporting Each Theory

| Theory | Supporting Data | Analysis Method | Key Findings |
|---|---|---|---|
| Coevolution | Precursor-product amino acid pairs in codon space | Hypergeometric distribution & Fisher's method | Clustering of 13 precursor-product pairs in codon space (combined P = 0.00015) |
| Phylogenetic Congruence | 4.3 billion dipeptide sequences across 1,561 proteomes | Phylogenomic reconstruction & timeline mapping | Amino acids incorporated in specific order: Leu/Ser/Tyr → Val/Ile/Met/Lys/Pro/Ala |
| Phylogenetic Congruence | Evolutionary histories of tRNA and protein domains | Phylogenetic tree construction & congruence analysis | Synchronous appearance of dipeptide/anti-dipeptide pairs supporting bidirectional coding |

Experimental Protocols and Methodologies

Protocol 1: Testing Coevolution Theory Statistics

Objective: Quantitatively evaluate the statistical significance of precursor-product amino acid relationships within the genetic code structure.

Methodology:

- Define Precursor-Product Pairs: Identify biochemically justified precursor-product amino acid relationships from conserved metabolic pathways, excluding nonspecific transformations [23].

- Calculate Hypergeometric Probabilities: For each precursor-product pair, compute the probability that random codon assignment would place product codons near precursor codons using the equation $P(X \ge x) = 1 - \sum_{i=0}^{x-1} \frac{\binom{a}{i}\binom{b}{n-i}}{\binom{a+b}{n}}$, where $a$ = codons one mutation from the precursor, $b$ = other codons, $x$ = product codons near the precursor, and $n$ = total product codons [23].

- Combine Probabilities: Apply Fisher's method to combine individual probabilities: $\chi^2 = -2\sum_{i=1}^{k} \ln P_i$ with $2k$ degrees of freedom ($k$ = number of pairs) [23].

- Sensitivity Analysis: Test statistical robustness by removing potentially problematic pairs (e.g., Val→Leu, Gln→His) and recalculating significance [23].

Applications: This protocol enables rigorous testing of biosynthetic relationships within genetic code organization and can be extended to evaluate alternative precursor-product definitions [23].
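
The two statistical steps of this protocol can be sketched with the standard library alone. The hypergeometric tail follows the equation given above, and Fisher's combined statistic is converted to a P value with the closed-form chi-square survival function that holds for even degrees of freedom. The per-pair counts `(a, b, n, x)` below are placeholders invented for illustration, not values from [23].

```python
from math import comb, exp, log


def hypergeom_tail(a, b, n, x):
    """P(X >= x): n product codons drawn from a 'near' and b 'other' codons."""
    total = comb(a + b, n)
    below = sum(comb(a, i) * comb(b, n - i) for i in range(x))
    return 1 - below / total


def fisher_combined(p_values):
    """Fisher's method: chi2 = -2 * sum(ln p_i) with 2k degrees of freedom.
    For even df = 2k, P(chi2_2k > c) = exp(-c/2) * sum_{j<k} (c/2)^j / j!."""
    k = len(p_values)
    chi2 = -2 * sum(log(p) for p in p_values)
    half = chi2 / 2
    term, series = 1.0, 1.0
    for j in range(1, k):
        term *= half / j
        series += term
    return chi2, exp(-half) * series


# Placeholder counts (assumed): one (a, b, n, x) tuple per precursor-product pair.
toy_pairs = [(9, 52, 2, 2), (18, 43, 4, 3), (9, 52, 6, 2)]
pair_p_values = [hypergeom_tail(*counts) for counts in toy_pairs]
chi2, combined_p = fisher_combined(pair_p_values)
```

The sensitivity analysis in the protocol then amounts to dropping entries from the list of pairs and calling `fisher_combined` again.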

Protocol 2: Phylogenetic Congruence Analysis

Objective: Reconstruct the chronological incorporation of amino acids into the genetic code using evolutionary relationships.

Methodology:

- Dataset Compilation: Assemble a comprehensive set of proteomes (1,561 proteomes representing Archaea, Bacteria, and Eukarya) and extract all dipeptide sequences (4.3 billion dipeptides in the foundational study) [24] [7].

- Phylogenetic Tree Construction: Build evolutionary trees from the dipeptide composition data.

- Timeline Reconstruction: Determine the chronological appearance of amino acids and dipeptides using phylogenetic placement [24].

- Congruence Testing: Verify consistency between timelines derived from dipeptides, protein domains, and tRNA evolution [7].

- Duality Analysis: Identify synchronous appearance of dipeptide/anti-dipeptide pairs by mapping complementary sequences to the evolutionary timeline [7].

Applications: This approach reveals fundamental evolutionary patterns in genetic code development and connects code evolution to protein structural requirements [24].
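
One simple way to quantify the congruence-testing step is a rank correlation between the order in which amino acids appear in two timelines. The source does not state which statistic was used, so Spearman's coefficient is swapped in here as a common choice, and the ages below are invented (0 = oldest, 1 = most recent).

```python
def average_ranks(values):
    """1-based ranks, with tied values sharing their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = shared_rank
        i = j + 1
    return ranks


def spearman(timeline_a, timeline_b):
    """Spearman rank correlation over the items present in both timelines."""
    shared = sorted(set(timeline_a) & set(timeline_b))
    ra = average_ranks([timeline_a[key] for key in shared])
    rb = average_ranks([timeline_b[key] for key in shared])
    n = len(shared)
    mean_a, mean_b = sum(ra) / n, sum(rb) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(ra, rb))
    var_a = sum((x - mean_a) ** 2 for x in ra)
    var_b = sum((y - mean_b) ** 2 for y in rb)
    return cov / (var_a * var_b) ** 0.5


# Hypothetical relative ages for a few amino acids in two independent timelines.
dipeptide_timeline = {"Leu": 0.05, "Ser": 0.08, "Tyr": 0.10, "Val": 0.30, "Lys": 0.35, "Trp": 0.90}
trna_timeline = {"Leu": 0.10, "Ser": 0.07, "Tyr": 0.12, "Val": 0.40, "Lys": 0.45, "Trp": 0.80}
rho = spearman(dipeptide_timeline, trna_timeline)
```

Values of `rho` near 1 indicate that the two records order the amino acids similarly; a full analysis would also need a significance test and all three data sources.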

Figure 1: Phylogenetic Congruence Analysis Workflow. This diagram illustrates the experimental protocol for reconstructing genetic code evolution through phylogenetic analysis of dipeptide sequences, protein domains, and tRNA molecules.

Comparative Evaluation: Statistical Rigor and Predictive Power

Critical Analysis of Coevolution Theory

Recent reappraisals of coevolution theory have identified significant methodological concerns that undermine its statistical support:

Biochemical Flaws: The theory's definition of precursor-product pairs requires energetically unfavorable reversal of steps in extant metabolic pathways to achieve desired relationships [23]. This biochemical implausibility challenges the fundamental premise of the theory.

Statistical Limitations: When correcting for problematic pair definitions and accounting for post hoc assumptions about primordial codon assignments, the probability that apparent patterns resulted from chance increases dramatically—from the originally reported 0.00015 to 0.23, or even 0.62 under more conservative corrections [23].

Methodological Criticism: The theory neglects important biochemical constraints when calculating the probability that chance could assign precursor-product amino acids to contiguous codons [23]. Alternative analytical approaches using randomized code simulations have shown substantially diminished significance, with probabilities as high as 34% that randomly generated codes would show stronger biosynthetic relationships [23].
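
A randomized-code test of this kind can be sketched as follows: amino acid identities are shuffled among the synonymous codon sets of the standard code, and each shuffled code is scored by how many precursor-product pairs have codons one point mutation apart. The scoring rule, the shuffling scheme, and the number of trials are simplifying assumptions for illustration and are not the procedure used in [23]; the pairs are drawn from the list given earlier in this article.

```python
import random
from itertools import product

BASES = "TCAG"
# Standard genetic code in TCAG order; '*' marks stop codons.
AA = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODONS = ["".join(c) for c in product(BASES, repeat=3)]
STANDARD = dict(zip(CODONS, AA))

# Precursor -> product pairs (one-letter codes).
PAIRS = [("E", "Q"), ("E", "P"), ("E", "R"), ("D", "N"), ("D", "T"), ("D", "K"),
         ("S", "C"), ("S", "W"), ("T", "I"), ("T", "M"), ("V", "L"), ("F", "Y"),
         ("Q", "H")]


def neighbors(codon):
    """All codons that differ from codon at exactly one position."""
    for pos in range(3):
        for base in BASES:
            if base != codon[pos]:
                yield codon[:pos] + base + codon[pos + 1:]


def score(code, pairs):
    """Count pairs whose product has a codon one mutation from a precursor codon."""
    by_aa = {}
    for codon, aa in code.items():
        by_aa.setdefault(aa, set()).add(codon)
    return sum(
        any(n in by_aa[prod] for c in by_aa[pre] for n in neighbors(c))
        for pre, prod in pairs
    )


def shuffled_code(rng):
    """Permute amino acid identities among codon sets; stop codons stay fixed."""
    aas = sorted(set(AA) - {"*"})
    permuted = aas[:]
    rng.shuffle(permuted)
    mapping = dict(zip(aas, permuted), **{"*": "*"})
    return {codon: mapping[aa] for codon, aa in STANDARD.items()}


observed = score(STANDARD, PAIRS)
rng = random.Random(1)
trials = 2000
fraction = sum(score(shuffled_code(rng), PAIRS) >= observed for _ in range(trials)) / trials
```

Here `fraction` estimates how often a random code matches or exceeds the standard code under this particular score; different scoring rules or shuffling constraints can change the answer substantially, which is the crux of the criticism described above.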

Supporting Evidence for Phylogenetic Congruence

The phylogenetic congruence approach demonstrates strong consistency across multiple independent data sources:

Tripartite Congruence: Evolutionary timelines derived from dipeptide sequences show remarkable consistency with those reconstructed from protein domains and tRNA molecules, providing robust cross-validation [7]. This congruence across different molecular entities strengthens the validity of the reconstructed timeline.

Operational Code Evidence: The dipeptide chronology supports the early emergence of an operational RNA code in the acceptor arm of tRNA prior to implementation of the standard genetic code in the anticodon loop [24]. This history likely originated in peptide-synthesizing urzymes driven by molecular co-evolution and recruitment [24].

Structural Rationale: The phylogenetic approach connects code evolution to structural demands of protein folding, explaining the early incorporation of amino acids like Leu, Ser, and Tyr that play critical roles in protein structure and function [24] [7].

Table 2: Methodological Comparison of Theoretical Approaches

| Evaluation Criteria | Coevolution Theory | Phylogenetic Congruence Theory |
|---|---|---|
| Statistical Significance | P=0.00015 (original); P=0.23-0.62 (corrected) [23] | Congruence across 3 data sources (dipeptides, domains, tRNA) [7] |
| Biochemical Plausibility | Requires metabolically unfavorable pathway reversals [23] | Aligns with protein structural demands and folding requirements [24] |
| Evolutionary Mechanism | Code expansion via biosynthetic pathway evolution [23] | Operational RNA code preceding standard code [24] |
| Novel Predictions | Specific precursor-product codon relationships [23] | Dipeptide-antidipeptide synchronous appearance [7] |
| Experimental Validation | Statistical analysis of codon assignments [23] | Phylogenetic analysis of 1,561 proteomes [24] |

Advanced computational tools and comprehensive databases are essential for researching genetic code evolution and biosynthetic pathway design.

Table 3: Essential Research Resources for Biosynthetic and Evolutionary Analysis

| Resource Category | Specific Databases/Tools | Research Application |
|---|---|---|
| Compound Databases | PubChem, ChEBI, ChEMBL, ZINC, ChemSpider [25] | Chemical structure and property information for metabolic analysis |
| Reaction/Pathway Databases | KEGG, MetaCyc, Rhea, Reactome, BKMS-react [25] | Access to known biochemical pathways and reaction mechanisms |
| Enzyme Information | UniProt, BRENDA, PDB, AlphaFold DB [25] | Enzyme function, structure, and mechanistic data |
| Pathway Design Tools | SubNetX algorithm [26] | Extraction and ranking of biosynthetic pathways for target compounds |
| Molecular Alignment | SMILES Alignment algorithm [27] | Comparing small organic molecules based on chemical similarity |
| Protein Generation Evaluation | COMPSS framework [28] | Computational metrics for predicting functionality of generated enzymes |

Research Applications and Future Directions

Practical Applications in Synthetic Biology

Understanding genetic code evolution directly informs synthetic biology and metabolic engineering:

Pathway Design: Computational tools like SubNetX leverage evolutionary principles to design balanced biosynthetic pathways for complex natural and non-natural compounds [26]. This approach combines constraint-based optimization with retrobiosynthesis to identify feasible pathways that integrate into host metabolism [26].

Enzyme Engineering: Evaluation frameworks like COMPSS (Composite Metrics for Protein Sequence Selection) use evolutionary insights to predict functionality of computationally generated enzymes, improving experimental success rates by 50-150% [28].

Gene Synthesis Optimization: Analysis of genetic code evolution informs codon optimization strategies for heterologous expression, enabling researchers to source genes from more genetically distant organisms in the tree of life [29].

Emerging Research Frontiers

Integration with Cheminformatics: New algorithms for molecular alignment and similarity assessment enable more precise analysis of biochemical transformations in evolutionary contexts [27]. These tools facilitate tracing structural changes through metabolic pathways.

Advanced Generative Models: Neural network approaches for protein generation, combined with rigorous experimental validation, are creating new opportunities for exploring sequence-function relationships relevant to code evolution [28].

Expanded Biosynthetic Design: Tools like SubNetX demonstrate how combining evolutionary principles with computational design can produce complex secondary metabolites through balanced pathways rather than simple linear approaches [26].

Figure 2: Theoretical Comparison and Research Applications. This diagram illustrates the supporting evidence, critiques, and practical applications of the two major theories of genetic code evolution.

The comparative analysis reveals that while the coevolution theory offers an intuitively appealing explanation for genetic code organization, its statistical support diminishes significantly when accounting for biochemical constraints and methodological limitations. The phylogenetic congruence theory, supported by consistent evolutionary timelines across dipeptide sequences, protein domains, and tRNA molecules, provides a more robust framework connecting code evolution to structural demands of emerging proteins. This theoretical foundation directly enables advanced biosynthetic pathway design, enzyme engineering, and heterologous expression optimization—critical capabilities for pharmaceutical development and metabolic engineering. Future research integrating evolutionary principles with computational design promises to further expand our ability to engineer biological systems for biomedical and industrial applications.

The origin of the genetic code represents one of the most fundamental mysteries in evolutionary biology. For decades, scientists have debated whether RNA-based enzymatic activity or protein interactions emerged first in the development of life's coding systems. Recent research has leveraged phylogenomic reconstruction and the principle of phylogenetic congruence to test these competing theories, providing a robust empirical framework for understanding code evolution [30]. Phylogenetic congruence refers to the phenomenon where independent phylogenetic datasets recover similar evolutionary relationships, thereby providing strong corroborating evidence for those relationships [12]. This methodological approach has been particularly transformative for studying deep evolutionary events where traditional fossil evidence is unavailable.

The emerging consensus from congruence-based studies indicates that the genetic code did not emerge suddenly but rather evolved through a gradual process of molecular co-evolution and recruitment. Life on Earth began approximately 3.8 billion years ago, but current evidence suggests genes and the genetic code did not emerge until roughly 800 million years later [30]. This timeline has prompted sophisticated investigations into the transitional phases of code development, with particular focus on dipeptides—the simplest protein units consisting of two amino acids linked by a peptide bond. These elementary structures provide a unique window into primordial evolutionary processes precisely because of their structural simplicity and fundamental nature in protein architecture.

Methodology: Phylogenomic Reconstruction of Dipeptide Evolution

Phylogenetic Congruence as a Validation Framework

The study of genetic code origins has been revolutionized by phylogenomic approaches that systematically compare evolutionary histories derived from different biological data sources. The fundamental principle underlying this research is that congruence between independent phylogenetic datasets—such as protein domains, transfer RNA (tRNA) molecules, and dipeptide sequences—provides strong evidence for shared evolutionary history [12]. When these distinct molecular records tell the same story despite their different biochemical nature and evolutionary constraints, researchers can reconstruct ancient evolutionary events with greater confidence.

In practice, researchers apply both taxonomic congruence (separate analysis of different data partitions with subsequent comparison of resulting trees) and character congruence (combined analysis of all data in a simultaneous approach) to cross-validate findings [12]. The agreement between evolutionary chronologies derived from these different approaches significantly strengthens hypotheses about the emergence sequence of amino acids and their coding systems. This methodological framework is particularly valuable for studying events that occurred billions of years ago, where direct physical evidence is extremely limited.
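
Taxonomic congruence, in which separately inferred trees are compared, is often summarized with a topological distance. The sketch below computes a rooted, clade-based Robinson-Foulds distance between trees written as nested tuples. The tree encoding, the taxon labels, and the choice of this metric are assumptions for illustration; the source does not specify how trees were compared.

```python
def clades(tree):
    """Return (leaf_set, nontrivial_clades) for a rooted nested-tuple tree."""
    found = set()

    def walk(node):
        if isinstance(node, str):
            return frozenset([node])
        leaves = frozenset().union(*(walk(child) for child in node))
        found.add(leaves)
        return leaves

    all_leaves = walk(tree)
    found.discard(all_leaves)  # the root clade is shared by every tree
    return all_leaves, found


def rf_distance(tree_a, tree_b):
    """Number of clades present in exactly one of the two trees."""
    leaves_a, clades_a = clades(tree_a)
    leaves_b, clades_b = clades(tree_b)
    if leaves_a != leaves_b:
        raise ValueError("trees must share the same leaf set")
    return len(clades_a ^ clades_b)


# Hypothetical trees inferred from three independent data partitions.
tree_domains = ((("t1", "t2"), "t3"), "t4")
tree_trna = ((("t2", "t1"), "t3"), "t4")       # same topology, different order
tree_dipeptides = ((("t1", "t3"), "t2"), "t4")  # one conflicting grouping

print(rf_distance(tree_domains, tree_trna))        # 0
print(rf_distance(tree_domains, tree_dipeptides))  # 2
```

A distance of zero means the partitions recover identical groupings; larger values point to the clades where the data sources disagree.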

Experimental Protocol for Dipeptide Chronology Reconstruction

The foundational study illuminating the dipeptide connection to genetic code evolution employed a rigorous multi-stage protocol [24] [30] [31]:

Step 1: Proteome Dataset Curation: Researchers compiled 1,561 proteomes spanning the three superkingdoms of life—Archaea, Bacteria, and Eukarya. This comprehensive taxonomic sampling ensured broad representation across the tree of life.

Step 2: Dipeptide Enumeration and Quantification: The team extracted and analyzed 4.3 billion dipeptide sequences from the curated proteomes, cataloging the abundance and distribution of all 400 possible canonical dipeptide combinations across organisms.

Step 3: Phylogenetic Tree Reconstruction: Using the dipeptide composition data, researchers constructed phylogenetic trees that described the evolutionary relationships between organisms based on their dipeptide profiles. Specialized algorithms were employed to infer ancestral states and evolutionary timelines.

Step 4: Chronological Mapping: The team mapped the appearance of specific dipeptides onto the evolutionary timeline, noting the sequence in which different dipeptides emerged and their relationship to the development of the genetic code.

Step 5: Congruence Assessment: The resulting dipeptide chronology was compared to previously established evolutionary timelines for tRNA molecules and protein domains to test for phylogenetic congruence across these independent data sources.

This systematic protocol enabled the researchers to reconstruct the evolutionary history of dipeptides and their relationship to the developing genetic code with unprecedented resolution.
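
The enumeration in Step 2 can be sketched in a few lines: slide a window of two residues along each protein and tally the 400 canonical combinations. How the original study handled ambiguous or non-canonical residues is not stated; skipping any pair that contains one is an assumption made here, and the sequences are toy inputs.

```python
from collections import Counter
from itertools import product

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"
AMINO_SET = set(AMINO_ACIDS)
ALL_DIPEPTIDES = ["".join(pair) for pair in product(AMINO_ACIDS, repeat=2)]  # 400


def count_dipeptides(proteins):
    """Tally overlapping dipeptides across an iterable of protein sequences."""
    counts = Counter()
    for seq in proteins:
        for i in range(len(seq) - 1):
            first, second = seq[i], seq[i + 1]
            # Assumption: pairs with non-canonical residues (e.g. 'X') are skipped.
            if first in AMINO_SET and second in AMINO_SET:
                counts[first + second] += 1
    return counts


def dipeptide_profile(proteins):
    """Relative abundance of each of the 400 dipeptides, in a fixed order."""
    counts = count_dipeptides(proteins)
    total = sum(counts.values())
    return [counts[d] / total if total else 0.0 for d in ALL_DIPEPTIDES]


# Toy proteome (assumed): real inputs would be whole-proteome sequence sets.
toy_proteome = ["MALALSY", "MSYXLA"]
profile = dipeptide_profile(toy_proteome)
```

One fixed-length profile per proteome gives a character matrix from which trees can be built in Step 3.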

Research Reagent Solutions for Phylogenomic Analysis

Table 1: Essential Research Materials and Computational Tools for Phylogenomic Dipeptide Analysis

| Category | Specific Tool/Database | Primary Function |
|---|---|---|
| Data Resources | 1,561 Organism Proteomes [24] | Source of 4.3 billion dipeptide sequences for comparative analysis |
| Computational Infrastructure | Blue Waters Supercomputer System [30] | High-performance computing for large-scale phylogenomic calculations |
| Analytical Framework | Phylogenomic Reconstruction Algorithms [24] | Building evolutionary trees from dipeptide composition data |
| Reference Databases | Structural Classification of Proteins (SCOP) [32] | Protein domain classification and evolutionary analysis |
| Validation Tools | Congruence Assessment Methods [12] | Testing agreement between independent phylogenetic datasets |

Results: Deciphering the Chronology of Code Emergence

Amino Acid Entry into the Genetic Code

The phylogenomic analysis of dipeptide sequences revealed a clear temporal sequence in which amino acids were incorporated into the developing genetic code. Researchers categorized amino acids into three distinct groups based on their evolutionary appearance, with the chronology strongly supporting the early emergence of an operational RNA code in the acceptor arm of tRNA prior to the implementation of the standard genetic code in the anticodon loop [24] [30].

Table 2: Chronological Groups of Amino Acids Based on Dipeptide Evolution

| Temporal Group | Amino Acids | Relationship to Genetic Code Development |
|---|---|---|
| Group 1 (Most Ancient) | Tyrosine, Serine, Leucine [30] | Associated with origin of editing mechanisms in synthetase enzymes |
| Group 2 (Intermediate) | Valine, Isoleucine, Methionine, Lysine, Proline, Alanine [24] | Supported early operational code and established specificity rules |
| Group 3 (Most Recent) | Remaining amino acids [30] | Linked to derived functions and standardization of genetic code |

This chronological pattern emerged from the statistical analysis of dipeptide distributions across the evolutionary tree. The early-appearing amino acids consistently formed the core of the most ancient dipeptides, while later-appearing amino acids were incorporated into dipeptides that emerged more recently in evolutionary history. This timeline aligns with and strengthens previous proposals about amino acid recruitment based on tRNA and synthetase evolution [30].

Dipeptide-Antidipeptide Synchronicity and Bidirectional Coding

A particularly remarkable finding from the dipeptide analysis was the synchronous appearance of complementary dipeptide pairs along the evolutionary timeline [24] [30]. For each dipeptide combination (e.g., alanine-leucine, "AL"), researchers observed that the reverse combination (leucine-alanine, "LA")—termed an "anti-dipeptide"—emerged at approximately the same evolutionary period.

This synchronicity suggests these dipeptide pairs arose encoded in complementary strands of nucleic acid genomes, supporting the existence of an ancestral duality of bidirectional coding operating at the proteome level [24]. The research indicates that these complementary pairs likely interacted with minimalistic tRNA molecules and primordial synthetase enzymes, forming the foundation of the emerging coding system. This finding provides a potential mechanism for how early genetic information could have been stored and expressed in complementary strands of primitive nucleic acids.
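
Given a relative age for each dipeptide, the synchronicity of dipeptide and anti-dipeptide pairs can be examined by pairing every dipeptide with its reverse and measuring the gap between their ages. The ages below are invented placeholders on a 0 to 1 scale, and the helper name is hypothetical; this is only a sketch of the comparison, not the study's analysis.

```python
def paired_age_gaps(ages):
    """Map each (dipeptide, anti-dipeptide) pair to the absolute age gap.
    Each unordered pair is visited once; self-reversed dipeptides such as
    'AA' have no distinct partner and are skipped."""
    gaps = {}
    for dipeptide, age in ages.items():
        anti = dipeptide[::-1]
        if dipeptide < anti and anti in ages:
            gaps[(dipeptide, anti)] = abs(age - ages[anti])
    return gaps


# Hypothetical relative ages (0 = oldest, 1 = most recent).
toy_ages = {"AL": 0.10, "LA": 0.12, "SY": 0.05, "YS": 0.05,
            "GW": 0.80, "WG": 0.60, "AA": 0.20}
gaps = paired_age_gaps(toy_ages)
mean_gap = sum(gaps.values()) / len(gaps)
```

Small gaps relative to the spread of ages across all dipeptides would be consistent with synchronous appearance; a proper test would compare `mean_gap` against gaps between randomly paired dipeptides.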

Workflow for Dipeptide-Based Evolutionary Reconstruction

The following diagram illustrates the integrated workflow for reconstructing evolutionary history from dipeptide sequences, highlighting how phylogenetic congruence between different data sources validates the resulting chronology:

Diagram 1: Workflow for Dipeptide-Based Evolutionary Reconstruction. This schematic illustrates the systematic process from data collection through phylogenetic analysis to validation via congruence testing.

Discussion: Implications for Evolutionary Biology and Beyond

Resolving the Operational vs. Standard Genetic Code Debate

The dipeptide chronology provides compelling evidence for a staged development of coding systems, beginning with an operational code that later evolved into the standard genetic code. The early emergence of dipeptides containing Group 1 and Group 2 amino acids supports the hypothesis that an operational RNA code first developed in the acceptor arm of tRNA molecules, establishing initial rules of specificity through interactions between primitive tRNAs, amino acids, and early synthesizing enzymes [24] [31].

This operational code likely functioned as a molecular recognition system that ensured basic fidelity in amino acid selection and peptide bond formation. Only later did the more familiar standard genetic code develop in the anticodon loop of tRNA, enabling the triplet-based coding system that characterizes modern life [24]. The dipeptide record suggests this transition was gradual, with overlapping phases of molecular co-evolution, editing mechanisms, and recruitment processes that collectively promoted protein folding and functional flexibility.

Thermostability as a Late Evolutionary Development

An important corollary finding from the dipeptide analysis concerns the evolutionary timing of protein thermostability. By tracing the appearance of dipeptides associated with thermal adaptation across the evolutionary timeline, researchers determined that protein thermostability was a late evolutionary development [24] [31]. This finding challenges earlier hypotheses that proposed high-temperature origins of life and instead supports the emergence of proteins in the relatively mild environments typical of the Archaean eon.

The chronological data indicate that early proteins functioned adequately under moderate temperature conditions, with specialized thermostability mechanisms developing later as life diversified into more extreme environments. This temporal sequence has significant implications for understanding both the environmental conditions of early life and the evolutionary pressures that shaped protein structure and function.

Applications in Synthetic Biology and Genetic Engineering

The evolutionary insights gleaned from dipeptide analysis have practical implications for contemporary biotechnology. Synthetic biology efforts aimed at engineering novel genetic codes or creating artificial organisms can benefit from understanding the natural constraints and historical patterns that shaped the standard genetic code [30] [33]. The resilience and resistance to change observed in ancient biological components highlight their fundamental importance, suggesting that genetic engineering efforts that work with rather than against these deep evolutionary patterns may prove more successful.

Furthermore, the recognition that dipeptides represent primordial structural elements suggests they could serve as useful building blocks for designing novel proteins with specific structural or functional properties [30]. The synchronous appearance of dipeptide-antidipeptide pairs indicates that complementary coding strategies might be productively incorporated into synthetic biological systems to enhance stability or functionality.

The phylogenomic investigation of dipeptide sequences has unveiled previously hidden connections between a primordial protein code—arising from the structural demands of emerging proteins—and an early operational RNA code shaped by co-evolution, editing, catalysis, and specificity [24]. The congruence between dipeptide chronologies, tRNA evolution, and protein domain history provides robust, multi-source validation for this reconstructed evolutionary narrative.

This research demonstrates that the genetic code preserves molecular fossils of its evolutionary history in the form of dipeptide abundance and distribution patterns across modern proteomes. Through phylogenomic analyses that leverage the principle of phylogenetic congruence, researchers can extract these deep-time signals to reconstruct key events in the development of life's coding systems. The resulting chronology reveals a stepwise evolutionary process that began with simple dipeptide structures and progressively built the complex, precise genetic coding apparatus that characterizes all contemporary life.

The dipeptide connection thus provides not only a window into primordial code evolution but also a powerful methodological framework for continuing to investigate life's deepest historical origins, with potential applications ranging from fundamental evolutionary biology to applied genetic engineering and synthetic biology.

Methodologies for Decoding History: Phylogenomic Pipelines and Congruence Testing

Building Phylogenetic Trees from Molecular and Morphological Data

The reconstruction of evolutionary history through phylogenetic trees is a cornerstone of biological research, relying on two primary data types: molecular sequences and morphological characters. The interplay between these data sources provides not only a practical framework for tree-building but also a critical testing ground for broader evolutionary theories, including the origin and development of the genetic code itself. Recent phylogenomic studies have revealed that the history of the genetic code is closely linked to the dipeptide composition of proteomes, suggesting that an early operational RNA code preceded the standard genetic code [7] [24]. Inferences this deep in time depend on the reliability of the underlying trees, which is why congruence between molecular and morphological data partitions serves as a vital indicator of phylogenetic accuracy: when independent data sources converge on similar tree topologies, confidence in the reconstructed evolutionary relationships increases substantially.

However, the practical integration of these data types presents significant methodological challenges. Molecular and morphological data often exhibit pervasive topological incongruence, yielding different trees regardless of inference methods [11]. Understanding the sources of this conflict—whether biological phenomena like convergent evolution or methodological issues—is essential for advancing phylogenetic inference and, by extension, our understanding of fundamental evolutionary processes. This guide systematically compares the performance of molecular and morphological data in phylogenetic reconstruction, providing researchers with evidence-based protocols for maximizing phylogenetic accuracy within the broader context of validating genetic code theories.

Performance Comparison: Molecular vs. Morphological Data

Quantitative Performance Metrics

Direct comparisons of molecular and morphological data partitions across multiple studies reveal consistent patterns in their phylogenetic performance. The table below summarizes key quantitative differences:

Table 1: Performance comparison between morphological and molecular data partitions

| Performance Metric | Morphological Data | Molecular Data | Comparative Analysis |
|---|---|---|---|
| Convergence Rate | 0.026 convergences/character (quartet analysis) [34] | 0.0085 convergences/character (quartet analysis) [34] | Morphological characters experience 3x more convergence |
| Consistency Index (ci) | Significantly lower values [34] | Significantly higher values [34] | Molecular data exhibits less homoplasy |
| Number of Character States | 75.2% binary; median 2 states/character [34] | 12.4% binary; median 5 states/character (amino acids) [34] | Molecular characters have significantly more states |
| Monophyletic Preservation | 50.0% (gene order) [35] | 78.8% (concatenated PCGs) [35] | Concatenated protein-coding genes outperform gene order |
| Primary Strength | Fossil incorporation; independent evolutionary signal [11] | High resolution; extensive character sampling [35] | Complementary utility |

Congruence and Combinability Analysis

Empirical studies demonstrate that significant incongruence between morphological and molecular partitions is widespread. A meta-analysis of 32 combined datasets across Metazoa revealed that these data partitions frequently yield different trees [11]. Comparable topological conflict appears in barnacle phylogenies, where Robinson-Foulds distances of 0.55 to 0.92 indicate substantial differences between trees [35]. Bayes factor combinability tests further show that morphological and molecular partitions are not consistently combinable; that is, the partitions are not always best explained under a single evolutionary process [11].
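To make the Robinson-Foulds metric concrete, the following is a minimal pure-Python sketch, not the implementation used in the cited studies. It assumes fully resolved trees on the same taxon set, written as plain Newick strings without branch lengths. The function names and the six-taxon toy trees are hypothetical. The distance counts bipartitions found in one tree but not the other, and the normalized value divides by the maximum of 2(n - 3) for n taxa.

```python
def parse_newick(text):
    """Parse a simple Newick string (no branch lengths) into nested tuples."""
    text = "".join(text.split()).rstrip(";")
    pos = 0

    def node():
        nonlocal pos
        if text[pos] == "(":
            pos += 1  # skip "("
            children = [node()]
            while text[pos] == ",":
                pos += 1
                children.append(node())
            pos += 1  # skip ")"
            return tuple(children)
        start = pos
        while pos < len(text) and text[pos] not in ",()":
            pos += 1
        return text[start:pos]

    return node()


def leaf_set(tree):
    """Return all leaf names below a node as a frozenset."""
    if isinstance(tree, str):
        return frozenset([tree])
    return frozenset().union(*(leaf_set(child) for child in tree))


def splits(tree):
    """Collect the non-trivial bipartitions of a tree, ignoring root position."""
    all_taxa = leaf_set(tree)
    reference = min(all_taxa)  # fixed taxon used to pick a canonical side
    found = set()

    def walk(node):
        if isinstance(node, str):
            return frozenset([node])
        clade = frozenset().union(*(walk(child) for child in node))
        other = all_taxa - clade
        if len(clade) > 1 and len(other) > 1:
            # Store the side that does not contain the reference taxon.
            found.add(other if reference in clade else clade)
        return clade

    walk(tree)
    return found


def rf_distance(newick_a, newick_b):
    """Return (raw RF distance, normalized RF distance) for two trees."""
    tree_a, tree_b = parse_newick(newick_a), parse_newick(newick_b)
    taxa = leaf_set(tree_a)
    if taxa != leaf_set(tree_b):
        raise ValueError("Both trees must contain the same taxa")
    raw = len(splits(tree_a) ^ splits(tree_b))
    max_rf = 2 * (len(taxa) - 3)
    return raw, (raw / max_rf if max_rf > 0 else 0.0)


# Hypothetical toy trees that differ only in the placement of B and C.
TREE_1 = "(((A,B),C),(D,(E,F)));"
TREE_2 = "(((A,C),B),(D,(E,F)));"
print(rf_distance(TREE_1, TREE_2))
```

On this scale, the reported range of 0.55 to 0.92 means that more than half of the possible bipartitions differ between the compared trees.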

Despite this incongruence, combined analyses often yield unique trees not sampled by either partition individually, revealing "hidden support" for novel relationships [11]. This synergy demonstrates that studies analyzing only one data type are unlikely to provide the complete evolutionary picture, particularly for groups with complex evolutionary histories like marine invertebrates [35] and mammals [34].

Figure 1: Experimental workflow for assessing phylogenetic congruence and combinability between molecular and morphological data partitions

Experimental Protocols for Phylogenetic Comparison

Mitochondrial Genome Phylogenetics Protocol

The relative performance of phylogenetic methods can be systematically evaluated using complete mitochondrial genomes, as demonstrated in studies of barnacle evolution [35]:

Sample Collection and Genome Sequencing:

- Collect specimens from defined geographical locations with precise coordinates

- Extract genomic DNA using commercial kits (e.g., DNeasy Blood & Tissue DNA Kit)

- Perform next-generation sequencing (Illumina NovaSeq 6000 system)

- Assemble mitochondrial genomes using de novo assembly combined with reference-based mapping (MitoZ v3.5 with genetic_code 5 and clade Arthropoda parameters)

- Polish assemblies using Polypolish v0.5.0 to correct sequence errors

- Annotate genomes to identify 13 protein-coding genes, 22 tRNAs, and 2 rRNAs

Phylogenetic Tree Construction:

- Compile dataset of complete mitochondrial genomes (e.g., 34 genomes with ingroup and outgroup taxa)

- Apply three parallel phylogenetic approaches to the same dataset:

  - Gene order analysis: Use Maximum Likelihood for Gene-Order (MLGO) with 1,000 bootstrap replicates

  - Concatenated protein-coding genes: Align 13 PCGs using CLUSTAL Omega, construct tree with raxmlGUI 2.0 (GTR model, 1,000 bootstrap replicates)

  - Universal marker region: Extract and align COX1 marker regions (658 bp LCO1490/HCO2198), analyze with identical parameters

- Calculate Robinson-Foulds distances between resulting trees using "phangorn" package in R

- Assess monophyletic preservation of established taxonomic groups using "ape" package
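The monophyly-preservation step in the list above can be sketched as follows. This is an illustrative Python outline, not the "ape" workflow used in the cited study. It assumes a rooted tree given as nested tuples, and the taxon names and group assignments are hypothetical. A group counts as preserved when its members exactly match the leaf set of some clade.

```python
def clade_leaf_sets(tree):
    """Return the leaf set of every clade in a rooted nested-tuple tree."""
    found = set()

    def walk(node):
        if isinstance(node, str):
            leaves = frozenset([node])
        else:
            leaves = frozenset().union(*(walk(child) for child in node))
        found.add(leaves)
        return leaves

    walk(tree)
    return found


def monophyly_preservation(tree, groups):
    """Report which taxonomic groups form a clade, plus the preserved fraction."""
    clades = clade_leaf_sets(tree)
    recovered = {name: frozenset(members) in clades
                 for name, members in groups.items()}
    rate = sum(recovered.values()) / len(recovered)
    return recovered, rate


# Hypothetical rooted tree and taxonomic groups, for illustration only.
TOY_TREE = ((("sp1", "sp2"), "sp3"), (("sp4", "sp5"), "sp6"))
TOY_GROUPS = {
    "GroupA": {"sp1", "sp2"},
    "GroupB": {"sp1", "sp2", "sp3"},
    "GroupC": {"sp4", "sp5"},
    "GroupD": {"sp3", "sp6"},  # members fall in different clades
}
status, rate = monophyly_preservation(TOY_TREE, TOY_GROUPS)
print(status, rate)
```

Running the same check on trees from each of the three approaches gives directly comparable preservation percentages.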

Morphological-Molecular Congruence Testing Protocol

A standardized meta-analytical approach for assessing congruence between data partitions [11]:

Data Selection and Curation:

- Survey published phylogenetic analyses containing both molecular and morphological partitions

- Apply inclusion criteria: minimum molecular data (parsimony-informative characters ≥10× taxon number), sequences from ≥3 genes, ≥10 taxa after editing, morphological data (informative characters ≥1.5× taxon number)

- Remove taxa lacking either partition and all fossil taxa to balance missing data

- Eliminate parsimony-uninformative morphological characters and apply the parsimony-informative ascertainment bias model in MrBayes

- Select best-fitting molecular models using PartitionFinder 2.1.1 (AICc criterion, MrBayes models, greedy schemes)
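The inclusion criteria listed above translate into a simple filter. The sketch below is one possible Python encoding, assuming each candidate dataset has already been summarized by its counts. The `Dataset` fields and the example records are hypothetical, not drawn from the meta-analysis.

```python
from dataclasses import dataclass


@dataclass
class Dataset:
    """Summary counts for one published combined dataset (hypothetical fields)."""
    name: str
    n_taxa: int             # taxa remaining after editing
    n_genes: int            # genes contributing sequence data
    mol_informative: int    # parsimony-informative molecular characters
    morph_informative: int  # parsimony-informative morphological characters


def passes_inclusion(d):
    """Apply the four thresholds stated in the protocol."""
    return (
        d.n_taxa >= 10
        and d.n_genes >= 3
        and d.mol_informative >= 10 * d.n_taxa
        and d.morph_informative >= 1.5 * d.n_taxa
    )


# Hypothetical candidates, for illustration only.
CANDIDATES = [
    Dataset("study_a", n_taxa=24, n_genes=5, mol_informative=900, morph_informative=60),
    Dataset("study_b", n_taxa=30, n_genes=2, mol_informative=700, morph_informative=80),
    Dataset("study_c", n_taxa=12, n_genes=4, mol_informative=100, morph_informative=40),
]
KEPT = [d.name for d in CANDIDATES if passes_inclusion(d)]
print(KEPT)
```

Here `study_b` fails on gene count and `study_c` fails the molecular threshold (100 is below 10 × 12).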

Phylogenetic Analysis and Congruence Assessment:

- Conduct parallel Bayesian analyses using MrBayes 3.2.6 (2 runs of 4 chains, sample 10,000 trees, 25% burnin)

- Assess convergence using Tracer 1.7 (ESS scores >200, stationarity)

- Perform parsimony analyses of morphological data using TNT 1.5 (new technology searches with tree-drifting, tree-fusing, sectorial searches)

- Apply implied weighting parsimony (k=3) alongside equal weights parsimony

- Conduct Bayes factor combinability test using stepping stone analysis in MrBayes to compare:

  - M1: Independent branch lengths and topologies between partitions

  - M2: Independent branch lengths only (topology shared between partitions)

- Calculate congruence metrics: Robinson-Foulds distances, monophyly preservation rates, hidden support quantification
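Once stepping-stone analysis has produced a marginal log-likelihood for each model, the combinability comparison reduces to a short calculation. The Python sketch below assumes the common 2 ln BF scale with the Kass and Raftery interpretation bands. The input values are hypothetical, and reading support for M1 as evidence against combinability is an interpretation of the protocol rather than a quoted rule.

```python
def two_ln_bayes_factor(lnl_m1, lnl_m2):
    """Return 2 ln BF for M1 over M2 from marginal log-likelihoods."""
    return 2.0 * (lnl_m1 - lnl_m2)


def interpret(two_ln_bf):
    """Label the strength of evidence using Kass and Raftery bands."""
    favoured = "M1" if two_ln_bf > 0 else "M2"
    strength = abs(two_ln_bf)
    if strength < 2:
        return "no meaningful preference"
    if strength < 6:
        return f"positive support for {favoured}"
    if strength < 10:
        return f"strong support for {favoured}"
    return f"very strong support for {favoured}"


# Hypothetical stepping-stone estimates, for illustration only.
LNL_M1 = -10250.0  # independent topologies and branch lengths
LNL_M2 = -10262.5  # independent branch lengths only
value = two_ln_bayes_factor(LNL_M1, LNL_M2)
print(value, interpret(value))
```

Under this reading, strong support for M1 suggests the partitions prefer different topologies, whereas support for M2 suggests they can reasonably be combined.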

Comparative Analysis of Phylogenetic Inference Methods

Method-Specific Performance Characteristics

Different phylogenetic approaches exhibit distinct strengths and limitations, making them differentially suitable for various research contexts:

Table 2: Performance characteristics of different phylogenetic inference methods

| Method | Optimal Application Context | Relative Performance | Key Limitations |
|---|---|---|---|
| Gene Order Analysis | Deep evolutionary relationships; lineage-specific rearrangement patterns [35] | Identifies genome rearrangement hotspots; lower monophyletic preservation (50.0%) [35] | Limited character sampling; unsuitable for recently diverged lineages |
| Concatenated Protein-Coding Genes | Most phylogenetic studies requiring robust resolution [35] | Highest monophyletic preservation (78.8%); strong branch support [35] | Model misspecification risk; ignores incomplete lineage sorting |
| Single Marker (COX1) | Species identification; DNA barcoding; rapid assessment [35] | Effective for species-level discrimination; limited deeper phylogenetic signal [35] | Inadequate for resolving deeper relationships; single-gene limitations |
| Combined Morphological-Molecular | Fossil incorporation; total evidence approaches [11] | Reveals hidden support; unique topologies not in separate analyses [11] | Frequent incongruence; potential signal swamping |
| Morphology-Only Parsimony | Fossil-rich matrices; morphological phylogenetics [11] [34] | Historical usage; conceptual simplicity [11] | Higher convergence; limited model sophistication |
| Morphology-Only Bayesian (Mk model) | Probabilistic morphology inference; combined analyses [11] | Increasingly preferred over parsimony in simulation studies [11] | Simple assumptions; questionable fit to empirical evolution |

Convergence and Homoplasy Analysis

A critical limitation in morphological phylogenetics is the higher prevalence of homoplasy (convergent evolution) compared to molecular data. Analysis of mammalian phylogeny using 3,414 morphological characters and 5,722 amino acid sites revealed that morphological characters exhibit 1.7 times more convergences per character than molecular characters [34]. The convergence-to-divergence (Cv/Dv) ratio is 4.0 times higher for morphological characters, indicating substantially more homoplasy [34].

Crucially, this disparity appears to be driven primarily by the smaller number of states in morphological characters (75.2% binary) compared with molecular characters (median 5 states for amino acids), rather than by intrinsic differences in susceptibility to convergence [34]. When controlling for the number of character states, morphological characters show similar Cv/Dv ratios to molecular characters (0.89:1), suggesting that state space limitation rather than adaptive convergence explains the difference [34].
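A toy calculation illustrates why a smaller state space inflates apparent convergence. The Python sketch below is not the analysis from [34]. It assumes an equal-rates model in which two lineages each change once from the same ancestral state, with every derived state equally likely. Under that assumption, the chance that both lineages land on the same derived state is 1/(k - 1) for a character with k states.

```python
import random


def same_state_probability(k):
    """Analytic chance that two independent changes reach the same derived state."""
    if k < 2:
        raise ValueError("A character needs at least two states")
    return 1.0 / (k - 1)


def simulate(k, trials=20000, seed=0):
    """Estimate the same quantity by simulation (state 0 is ancestral)."""
    rng = random.Random(seed)
    derived = list(range(1, k))
    hits = sum(rng.choice(derived) == rng.choice(derived) for _ in range(trials))
    return hits / trials


# Binary character, median amino acid site from the text, and all 20 amino acids.
for k in (2, 5, 20):
    print(k, same_state_probability(k), simulate(k))
```

For a binary character, any two changes from the ancestral state necessarily coincide, whereas for a five-state character they coincide only a quarter of the time under this simplified model.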

Figure 2: Logical relationship explaining morphological convergence and mitigation strategy

Successful phylogenetic analysis requires both laboratory reagents for data generation and computational tools for analysis and visualization:

Table 3: Essential research reagents and computational tools for phylogenetic analysis

| Category | Specific Tools/Reagents | Primary Function | Application Context |
|---|---|---|---|
| DNA Extraction & Sequencing | DNeasy Blood & Tissue DNA Kit (Qiagen) [35] | High-quality DNA extraction | Mitochondrial genome sequencing |
| | NovaSeq 6000 system (Illumina) [35] | High-throughput sequencing | Genome-scale data generation |
| Sequence Assembly & Annotation | MitoZ v3.5 [35] | Mitochondrial genome assembly | Taxonomic applications with genetic_code parameter |
| | Polypolish v0.5.0 [35] | Assembly error correction | Improving assembly quality |
| | Geneious Prime [36] | Genome annotation and analysis | Plastome and mitogenome studies |
| Sequence Alignment | CLUSTAL Omega [35] | Multiple sequence alignment | Protein-coding gene datasets |
| | MAFFT v7.221 [36] | Advanced sequence alignment | Complex or large datasets |
| Phylogenetic Inference | MrBayes 3.2.6 [11] | Bayesian phylogenetic inference | Combined morphological-molecular analyses |
| | RAxML v8.2.8 [36] | Maximum likelihood inference | Large molecular datasets |
| | TNT v1.5 [11] | Parsimony analysis | Morphological data analysis |
| Tree Visualization & Annotation | ggtree R package [37] [38] | Advanced tree visualization and annotation | Publication-quality figures |
| | FigTree v1.4.2 [36] | Tree visualization | Quick viewing and basic editing |
| | iTOL [36] | Online tree visualization | Collaborative work and sharing |