Protein Engineering Strategies: A Comparative Analysis of Directed Evolution and Rational Design

Abstract

This article provides a comprehensive analysis of the two dominant protein engineering strategies, directed evolution and rational design, for researchers and drug development professionals. It explores their foundational principles, core methodologies, and practical applications in therapeutic and industrial contexts. The content details common experimental challenges and optimization techniques, including the rise of semi-rational and AI-hybrid approaches. A direct comparative analysis equips scientists to select the optimal strategy for their specific project goals, and the article concludes with an examination of future directions driven by artificial intelligence and de novo design.

Core Principles of Protein Engineering: From Natural Evolution to Computational Blueprints

Protein engineering has emerged as a transformative discipline in modern biotechnology, enabling breakthroughs in therapeutics, industrial biocatalysis, and basic scientific research. This field rests on two fundamental methodological pillars: rational design and directed evolution. These approaches embody distinct philosophies for manipulating biomolecules. Rational design operates as a precision architect, leveraging detailed knowledge of protein structure and function to make calculated, targeted changes. In contrast, directed evolution functions as a Darwinian experiment, mimicking natural selection through iterative rounds of mutagenesis and screening to discover improved variants without requiring prior structural knowledge.

The profound impact of both methodologies has been recognized by two Nobel Prizes in Chemistry: Frances Arnold was honored in 2018 for pioneering the directed evolution of enzymes, while the 2024 prize celebrated advances in computational protein design that are fundamental to rational approaches [1]. This technical guide provides an in-depth analysis of both paradigms, examining their underlying principles, methodological workflows, applications, and limitations within the context of contemporary protein engineering research and drug development.

Core Principles and Methodologies

Rational Design: The Precision Architect

The rational design approach is predicated on a deep understanding of the sequence-structure-function relationship in proteins. It requires detailed, high-resolution knowledge of the protein's three-dimensional structure, active site architecture, and catalytic mechanism to make informed decisions about which amino acid substitutions to introduce.

Key Methodological Components:

Structure-Based Design: This foundational element utilizes X-ray crystallography, NMR, or cryo-EM structures, along with computational homology modeling, to identify key residues for mutation. The growing number of protein structures in databases like the PDB has greatly empowered this approach [2]. Critical regions for modification often include active site residues, substrate access tunnels, and domain interfaces that influence stability or allostery [2].

Computational Predictive Algorithms: Modern rational design employs sophisticated computational tools including molecular dynamics (MD) simulations, quantum mechanics/molecular mechanics (QM/MM) calculations, and rotamer library analyses to predict the energetic impact of amino acid substitutions on protein structure and stability [2] [3]. These tools help evaluate conformational variations and model backbone reorganization.

Evolution-Guided Atomistic Design: This strategy combines structural information with evolutionary data from multiple sequence alignments (MSAs) of homologous proteins. By analyzing natural sequence diversity, researchers can identify evolutionarily conserved positions and permissible substitutions, filtering out mutations likely to cause misfolding or instability before proceeding to atomistic design calculations [3].

Experimental Protocol for Structure-Based Enzyme Redesign:

- Target Identification: Obtain high-resolution structure of target protein (e.g., from PDB) or generate via homology modeling.

- Functional Mapping: Identify catalytic residues, substrate-binding pockets, and allosteric networks through structural analysis and literature review.

- Computational Screening: Use protein design software (e.g., Rosetta) to perform in silico mutagenesis and calculate folding free energy changes (ΔΔG) for proposed mutations.

- Variant Selection: Select a limited set of promising mutations (typically 10-50 variants) based on computational predictions.

- Gene Synthesis: Construct selected variants via site-directed mutagenesis or gene synthesis.

- Experimental Validation: Express and purify variants for biochemical characterization of activity, stability, and specificity.
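The computational screening and variant selection steps above can be sketched as a simple filter over predicted stability changes. This is a minimal illustration, not the output of any particular design package: the mutation names and ΔΔG values are hypothetical, and the sign convention (ΔΔG = ΔG of the mutant minus ΔG of the wild type, so negative values are stabilizing) is an assumption that should be checked against the tool actually used.

```python
from typing import Dict, List, Tuple


def select_variants(
    ddg_by_mutation: Dict[str, float],
    max_ddg: float = -0.5,
    max_variants: int = 50,
) -> List[Tuple[str, float]]:
    """Keep mutations predicted to be stabilizing, most stabilizing first.

    Assumes ddG = dG(mutant) - dG(wild type) in kcal/mol, so a more
    negative value means a more stable predicted variant.
    """
    stabilizing = [(mut, ddg) for mut, ddg in ddg_by_mutation.items() if ddg <= max_ddg]
    stabilizing.sort(key=lambda pair: pair[1])
    return stabilizing[:max_variants]


# Hypothetical in silico predictions (kcal/mol), for illustration only.
predicted_ddg = {"A45V": -1.2, "G102P": 0.8, "S77T": -0.6, "L12F": -0.1, "K130E": 2.3}
shortlist = select_variants(predicted_ddg)
print(shortlist)
```

The `max_variants` cap mirrors the small panel size (typically 10-50 variants) that the protocol carries forward to gene synthesis.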

Directed Evolution: The Darwinian Experiment

Directed evolution harnesses the principles of natural evolution—genetic diversification followed by selection of improved variants—in an accelerated laboratory timeframe. This approach does not require detailed structural knowledge of the target protein, instead relying on high-throughput screening to identify beneficial mutations that would be difficult to predict computationally [4].

Key Methodological Components:

Random Mutagenesis: This involves introducing random mutations throughout the gene encoding the protein of interest. The most common method is error-prone PCR (epPCR), which utilizes reaction conditions that reduce polymerase fidelity—typically employing polymerases lacking proofreading activity, manganese ions (Mn²⁺), and unbalanced dNTP concentrations—to achieve mutation rates of 1-5 base substitutions per kilobase [5]. Alternative methods include mutator strains and orthogonal replication systems for in vivo mutagenesis [6].
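To get a feel for what a rate of 1-5 substitutions per kilobase means for a library, the number of mutations per gene copy can be approximated with a Poisson distribution. The sketch below assumes that mutations occur independently and uniformly along the gene, which is a simplification given the biases of epPCR; the function names are illustrative.

```python
import math


def expected_mutations(rate_per_kb: float, gene_length_bp: int) -> float:
    """Mean number of base substitutions per gene copy."""
    return rate_per_kb * gene_length_bp / 1000.0


def mutation_count_probability(k: int, mean: float) -> float:
    """Poisson probability that a gene copy carries exactly k substitutions."""
    return math.exp(-mean) * mean ** k / math.factorial(k)


# Example: a 1,000 bp gene mutated at 2 substitutions per kilobase.
mean = expected_mutations(rate_per_kb=2.0, gene_length_bp=1000)
unmutated_fraction = mutation_count_probability(0, mean)
for k in range(5):
    print(k, round(mutation_count_probability(k, mean), 3))
```

Under these assumptions, the fraction of unmutated clones is exp(-mean), which is one reason the mutation rate is usually tuned to the gene length.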

Recombination-Based Methods: Techniques like DNA shuffling (also known as sexual PCR) mimic natural recombination by fragmenting homologous genes with DNase I and reassembling them through a primer-free PCR reaction, creating chimeric genes from parental sequences [4] [5]. Family shuffling extends this concept by recombining homologous genes from different species, accessing nature's standing variation for accelerated improvement [5].

Semi-Rational Approaches: Modern directed evolution often incorporates limited rational elements through semi-rational design. This involves creating focused libraries at specific "hotspot" residues identified from previous evolution rounds or structural analysis, enabling more efficient exploration of sequence space [2]. Site-saturation mutagenesis comprehensively explores all 19 possible amino acid substitutions at targeted positions, providing deeper interrogation than achievable with purely random methods [5].
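The size of a site-saturation library, and the number of clones that must be screened to sample it, can be estimated with a short calculation. The sketch below assumes NNK degenerate codons (32 codons per position, a common choice that is not specified in the text above) and equal representation of every codon combination; coverage is taken as the probability that a given variant appears at least once among the screened clones.

```python
import math

NNK_CODONS = 32  # N = A/C/G/T (4) x N (4) x K = G/T (2)


def library_size(n_positions: int, codons_per_position: int = NNK_CODONS) -> int:
    """Number of distinct codon combinations for n randomized positions."""
    return codons_per_position ** n_positions


def clones_for_coverage(n_variants: int, coverage: float = 0.95) -> int:
    """Clones to screen so that a given variant is sampled with probability `coverage`.

    Solves 1 - (1 - 1/V)^T >= coverage for T, assuming uniform sampling.
    """
    if n_variants == 1:
        return 1
    return math.ceil(math.log(1.0 - coverage) / math.log(1.0 - 1.0 / n_variants))


for n in (1, 2, 3):
    size = library_size(n)
    print(n, size, clones_for_coverage(size))
```

The required screening effort grows by a factor of 32 with each added position, which is why saturation mutagenesis is usually restricted to a handful of residues.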

Experimental Protocol for Directed Evolution:

- Library Construction: Generate genetic diversity via epPCR, DNA shuffling, or saturation mutagenesis.

- Expression System: Clone variant library into appropriate expression vector and transform into host cells (e.g., E. coli, yeast).

- Screening/Selection: Apply high-throughput screen or selection to identify improved variants:

  - For screening: Use microtiter plate assays with colorimetric/fluorometric substrates

  - For selection: Implement systems where desired function couples to host survival/replication

- Hit Identification: Isolate top-performing variants and sequence to identify mutations.

- Iterative Cycling: Use best hits as templates for subsequent rounds of diversification and screening.

- Characterization: Express and biochemically characterize final lead variants.
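The iterative logic of the protocol above (diversify, screen, pick the best hit, repeat) can be illustrated with a toy in silico simulation. This is only a sketch: the "assay" is a made-up fitness function that counts matches to a hidden target sequence, and the sequences, library size, and one-mutation-per-variant rule are all hypothetical choices rather than a model of any real experiment.

```python
import random
from typing import List, Tuple

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"


def fitness(seq: str, target: str) -> int:
    """Toy stand-in for a screen: number of positions matching a hidden target."""
    return sum(a == b for a, b in zip(seq, target))


def mutate(seq: str, rng: random.Random, n_mutations: int = 1) -> str:
    """Replace n randomly chosen positions with random residues (may be silent)."""
    residues = list(seq)
    for pos in rng.sample(range(len(seq)), n_mutations):
        residues[pos] = rng.choice(AMINO_ACIDS)
    return "".join(residues)


def directed_evolution(
    parent: str, target: str, rounds: int = 10, library_size: int = 200, seed: int = 0
) -> Tuple[str, List[int]]:
    """Run rounds of diversification and screening, keeping the best variant."""
    rng = random.Random(seed)
    history = [fitness(parent, target)]
    for _ in range(rounds):
        library = [mutate(parent, rng) for _ in range(library_size)]
        library.append(parent)  # retain the parent so fitness never decreases
        parent = max(library, key=lambda s: fitness(s, target))
        history.append(fitness(parent, target))
    return parent, history


target = "MKTAYIAKQR"  # hypothetical optimum, unknown to the "experimenter"
start = "MATGYLAKNR"   # hypothetical starting variant
best, history = directed_evolution(start, target)
print(best, history)
```

Because only the single best hit seeds each new round, this sketch performs a greedy uphill walk; real campaigns often carry several hits forward or recombine them to avoid local optima.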

Table 1: Core Principles of Protein Engineering Paradigms

| Aspect | Rational Design | Directed Evolution |
|---|---|---|
| Philosophical Basis | Precision architecture based on first principles | Empirical Darwinian experiment |
| Knowledge Requirement | High (structure, mechanism, dynamics) | Low to moderate (sequence sufficient) |
| Mutation Strategy | Targeted, specific changes | Random or semi-random diversification |
| Primary Strength | Precise control over modifications; avoids large libraries | Discovers non-intuitive solutions; no structural knowledge needed |
| Key Limitation | Limited by accuracy of structure-function predictions | High-throughput screening bottleneck; can be resource-intensive |
| Theoretical Foundation | Inverse folding problem, thermodynamic hypothesis | Population genetics, natural selection |

Comparative Analysis: Advantages and Limitations

Strategic Advantages of Each Approach

Rational Design excels when detailed structural and mechanistic information is available, allowing for precise engineering of specific properties. Key advantages include:

- Precision Control: Enables targeted modifications of specific structural elements such as active sites, ligand-binding pockets, or protein-protein interfaces [1].

- Small Library Sizes: Typically requires testing of only tens to hundreds of variants, significantly reducing experimental burden compared to large-scale screening [2].

- Intellectual Framework: Provides mechanistic insights and testable hypotheses about structure-function relationships, contributing to fundamental scientific knowledge [2].

- De Novo Capabilities: Empowers creation of entirely new protein scaffolds and functions not found in nature, as demonstrated by computational design of novel protein folds and enzymes [7] [3].

Directed Evolution offers distinct advantages for optimizing complex phenotypes or when structural information is limited:

- Bypasses Knowledge Gaps: Does not require complete understanding of protein structure or mechanism, making it applicable to poorly characterized systems [5].

- Discovers Non-Intuitive Solutions: Can identify beneficial mutations that would not be predicted by current computational models, including long-range interactions and allosteric effects [5].

- Optimizes Complex Traits: Effective for improving multifactorial properties like thermostability, organic solvent tolerance, and altered substrate specificity, which typically depend on mutations distributed across the protein [6].

- Proven Industrial Track Record: Has generated numerous commercially successful enzymes and therapeutics, validating its practical utility [8].

Technical Limitations and Challenges

Rational Design faces several significant challenges:

- Structure-Function Prediction Gap: Accurately predicting the functional consequences of mutations remains difficult, particularly for conformational changes, dynamics, and allosteric regulation [3] [1].

- Limited by Available Structures: Requires high-quality structural data, which may be unavailable for many targets, especially membrane proteins and large complexes [1].

- Negative Design Problem: Ensuring the desired state has significantly lower energy than all possible misfolded or alternative states is computationally challenging [3].

- Restricted Exploration: Tends to explore conservative variations near known functional sites, potentially missing beneficial mutations in distal regions.

Directed Evolution confronts its own set of limitations:

- Screening Bottleneck: The requirement to test large variant libraries represents the primary bottleneck, especially for properties not amenable to high-throughput assays [5].

- Methodological Biases: Random mutagenesis methods like epPCR have inherent biases (e.g., favoring transitions over transversions) that constrain accessible sequence space [5].

- Combinatorial Explosion: The number of possible variants expands exponentially with each additional mutation site, making comprehensive sampling impossible for full proteins.

- Limited De Novo Potential: Generally requires a starting protein with at least basal level of the desired activity, unlike rational approaches that can design entirely new folds.
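The combinatorial explosion noted above is easy to quantify. The following sketch counts how many distinct variants carry exactly k amino acid substitutions in a protein of a given length, using the 19 alternative residues available at each position; the 300-residue length is an arbitrary example.

```python
import math


def variants_with_k_substitutions(length: int, k: int) -> int:
    """Distinct sequences differing from the parent at exactly k positions."""
    return math.comb(length, k) * 19 ** k


def full_sequence_space(length: int) -> int:
    """All possible sequences of the given length over 20 amino acids."""
    return 20 ** length


protein_length = 300  # arbitrary example length
for k in range(1, 5):
    print(k, variants_with_k_substitutions(protein_length, k))
```

Single mutants of a 300-residue protein number 5,700, but double mutants already exceed 16 million, which is why exhaustive sampling quickly becomes impractical beyond a few simultaneous substitutions.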

Table 2: Practical Implementation Considerations

| Consideration | Rational Design | Directed Evolution |
|---|---|---|
| Typical Library Size | 10-10³ variants [2] | 10⁴-10¹⁴ variants [6] |
| Time Investment | Weeks to months (primarily computational) | Months to years (multiple iterative cycles) |
| Equipment Needs | High-performance computing, structural biology | High-throughput screening robotics, FACS |
| Expertise Required | Computational biology, biophysics, structural biology | Molecular biology, microbiology, assay development |
| Success Rate | Variable; highly dependent on target and accuracy of predictions | More consistent; improves with library quality and screening power |

Emerging Synergies: Integrated Approaches

The historical distinction between rational design and directed evolution is increasingly blurring as researchers develop integrated strategies that leverage the strengths of both approaches [2]. These hybrid methodologies represent the cutting edge of modern protein engineering:

Semi-Rational Design and Smart Libraries

This approach uses computational and bioinformatic analyses to identify promising target sites for randomization, creating "smart libraries" that are smaller but enriched in functional variants [2] [1]. Key implementations include:

- Sequence-Based Redesign: Using multiple sequence alignments and phylogenetic analysis to identify evolutionarily variable positions that are more tolerant to mutation [2].

- Hotspot Identification: Computational tools like HotSpot Wizard analyze catalytic residues, tunnels, and gates to pinpoint residues critical for function [2].

- FRESCO Protocol: Framework combining computational prediction of stabilizing mutations with experimental testing of small numbers of variants [3].
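Sequence-based identification of variable positions, as described in the list above, is often approximated by scoring each alignment column with Shannon entropy. The sketch below is a minimal illustration that assumes a gap-free, pre-aligned set of sequences; the toy alignment and the 1-bit threshold are hypothetical.

```python
import math
from collections import Counter
from typing import List


def column_entropy(column: str) -> float:
    """Shannon entropy (bits) of residue frequencies in one alignment column."""
    counts = Counter(column)
    total = len(column)
    return -sum((n / total) * math.log2(n / total) for n in counts.values())


def variable_positions(alignment: List[str], min_entropy: float = 1.0) -> List[int]:
    """Zero-based indices of columns whose entropy meets the threshold."""
    columns = ("".join(col) for col in zip(*alignment))
    return [i for i, col in enumerate(columns) if column_entropy(col) >= min_entropy]


# Hypothetical gap-free alignment of four homologs.
toy_msa = ["MKLV", "MRLI", "MKLA", "MQLV"]
print(variable_positions(toy_msa))
```

Columns with zero entropy are fully conserved and are usually left untouched, while high-entropy columns are candidates for inclusion in a smart library.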

Machine Learning-Enhanced Protein Engineering

The integration of machine learning represents a powerful convergence of both paradigms:

- AlphaFold2 and RFdiffusion: AlphaFold2 has dramatically improved protein structure prediction, while RFdiffusion generates novel protein backbones de novo; together, these AI-powered platforms provide critical inputs for rational design [1] [8].

- Large Language Models (LLMs): Protein language models trained on evolutionary sequence data can predict functional sequences and guide library design [3].

- Autonomous Platforms: Systems like SAMPLE (Self-driving Autonomous Machines for Protein Landscape Exploration) combine AI-based protein design with automated robotic experimentation, creating closed-loop optimization systems [1].

Applications in Research and Therapeutics

Both rational design and directed evolution have demonstrated significant impact across biotechnology and pharmaceutical development:

Therapeutic Applications

- Antibody Engineering: Rational design has created bispecific antibodies, antibody-drug conjugates, and Fc-engineered antibodies with enhanced effector functions [8]. Directed evolution through phage display has generated high-affinity therapeutic antibodies such as adalimumab (Humira) [8].

- Enzyme Replacement Therapies: Both approaches have been used to engineer enzymes with improved catalytic activity, stability, and reduced immunogenicity for treating lysosomal storage disorders [8].

- Vaccine Development: Rational design of immunogens based on structural biology principles has produced stabilized prefusion viral proteins for vaccines against RSV and SARS-CoV-2 [3].

Industrial and Environmental Applications

- Biocatalyst Engineering: Directed evolution has created industrial enzymes operating under extreme conditions (high temperature, organic solvents) for biofuel production, food processing, and textile manufacturing [5] [8].

- Metabolic Pathway Engineering: Both approaches have been applied to optimize enzymes in biosynthetic pathways for pharmaceutical precursors, biofuels, and biodegradable plastics [4] [8].

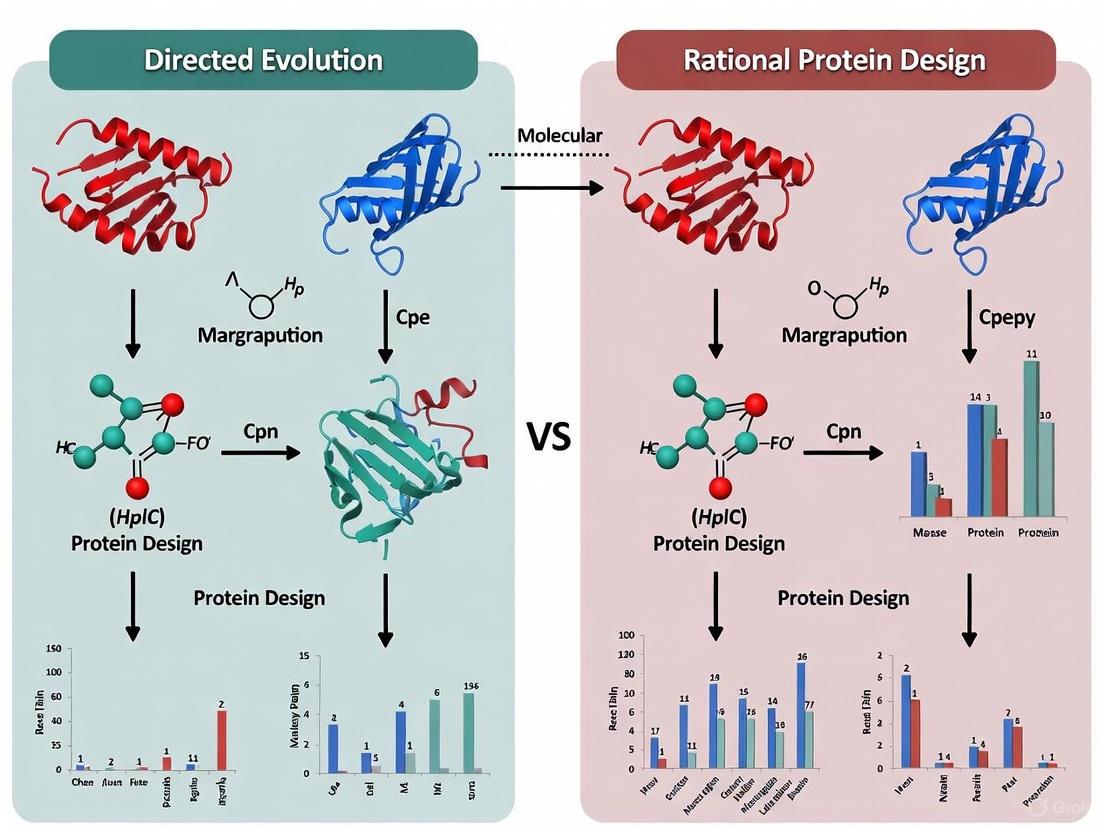

Visualizing Methodological Workflows

Directed Evolution Workflow

Rational Design Workflow

The Scientist's Toolkit: Essential Research Reagents and Methods

Table 3: Key Research Reagents and Methods in Protein Engineering

| Tool Category | Specific Examples | Function in Protein Engineering |
|---|---|---|
| Mutagenesis Methods | Error-prone PCR, DNA shuffling, Site-saturation mutagenesis | Introduce genetic diversity for directed evolution or specific changes for rational design |
| Structural Biology Tools | X-ray crystallography, Cryo-EM, NMR spectroscopy | Provide high-resolution protein structures for rational design efforts |
| Computational Platforms | Rosetta, AlphaFold2, RFdiffusion, Molecular Dynamics | Predict protein structures, design novel sequences, and model protein dynamics |
| Screening Technologies | FACS, Microfluidic droplet sorting, Phage/yeast display | Enable high-throughput identification of improved variants from large libraries |
| Expression Systems | E. coli, P. pastoris, HEK293 cells, Cell-free systems | Produce protein variants for functional characterization and screening |

Rational design and directed evolution represent complementary rather than competing paradigms in protein engineering. Rational design offers precision and deep mechanistic insight but requires extensive structural knowledge and accurate computational models. Directed evolution provides a powerful empirical approach for optimizing complex traits without requiring complete structural understanding but faces challenges in screening throughput and methodological biases.

The future of protein engineering lies in integrated strategies that combine the predictive power of computational design with the exploratory strength of evolutionary methods. Advances in artificial intelligence, structural biology, and high-throughput screening continue to bridge the gap between these approaches, enabling more efficient engineering of proteins for therapeutic applications, industrial biocatalysis, and fundamental scientific research. As both methodologies continue to evolve and converge, they will undoubtedly drive further innovations in biotechnology and drug development.

Protein engineering has been fundamentally transformed by the development of directed evolution, a method that mimics natural selection in laboratory settings to steer proteins toward user-defined goals [9]. This approach stands in contrast to rational design, which relies on precise, knowledge-based structural modifications. The journey from early in vitro evolution experiments to Nobel Prize-winning methodologies represents a paradigm shift in how scientists engineer biocatalysts, antibodies, and therapeutic proteins [6]. This whitepaper traces the historical trajectory of directed evolution, examining its technical foundations, methodological evolution, and current convergence with computational approaches, all within the broader context of comparing its advantages and limitations against rational protein design.

Historical Foundations: From Preliminary Experiments to Methodological Establishment

The First In Vitro Evolution Experiments

The conceptual origins of directed evolution can be traced to Sol Spiegelman's pioneering work in 1967, which constituted the first documented Darwinian evolution experiment in a test tube [6]. Spiegelman and colleagues evolved RNA molecules through iterative rounds of replication using Qβ bacteriophage RNA polymerase, selecting for variants with increased replication efficiency [6] [9]. This groundbreaking "Spiegelman's Monster" experiment demonstrated that biomolecules could be evolved toward specific properties outside living organisms, establishing the core principle that would later underpin directed evolution methodologies.

Expansion to Application-Driven Approaches

Throughout the 1980s, directed evolution experiments shifted toward practical applications, most notably with the development of phage display by George P. Smith [6] [1]. This technology enabled the display of exogenous peptides on filamentous phage surfaces, allowing affinity-based selection of binding variants [9]. Gregory Winter later adapted phage display for antibody engineering, creating a powerful platform for developing therapeutic antibodies [1]. These early methodologies established the critical genotype-phenotype linkage essential for efficient directed evolution, where a protein's function (phenotype) could be directly traced back to its genetic code (genotype) [9].

Fundamental Principles and Methodological Framework

Directed evolution mimics natural evolution through an iterative cycle of three fundamental processes: diversification, selection, and amplification [9]. This section details the experimental protocols and methodologies that operationalize these principles.

Library Generation Methods

Random Mutagenesis Techniques

Error-Prone PCR (epPCR): This foundational method introduces random point mutations throughout the gene of interest by manipulating PCR conditions to reduce polymerase fidelity. Manganese ions are added to the reaction buffer, and nucleotide concentrations are skewed to promote misincorporation [6] [9]. The mutation rate can be controlled by adjusting template concentration, cycle number, and magnesium concentration [10]. A key limitation is biased mutagenesis distribution and a high frequency of deleterious mutations, especially in large genes [10].
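One commonly described form of epPCR bias is a preference for transitions (purine to purine, or pyrimidine to pyrimidine) over transversions. A sequenced sample of library clones can be checked for this with a few lines of code. The sketch below is illustrative only: the parent and variant sequences are hypothetical and are assumed to be equal-length DNA strings containing substitutions but no insertions or deletions.

```python
from typing import Iterable, Tuple

PURINES = {"A", "G"}
PYRIMIDINES = {"C", "T"}
BASES = PURINES | PYRIMIDINES


def classify_substitution(ref: str, alt: str) -> str:
    """Label a single-base change as a transition or a transversion."""
    ref, alt = ref.upper(), alt.upper()
    if ref not in BASES or alt not in BASES or ref == alt:
        raise ValueError(f"not a valid substitution: {ref!r} -> {alt!r}")
    same_class = {ref, alt} <= PURINES or {ref, alt} <= PYRIMIDINES
    return "transition" if same_class else "transversion"


def count_ts_tv(parent: str, variants: Iterable[str]) -> Tuple[int, int]:
    """Count transitions and transversions across variants of one parent."""
    transitions = transversions = 0
    for variant in variants:
        for ref, alt in zip(parent, variant):
            if ref != alt:
                if classify_substitution(ref, alt) == "transition":
                    transitions += 1
                else:
                    transversions += 1
    return transitions, transversions


# Hypothetical parent and three sequenced clones.
parent_dna = "ATGGCC"
clones = ["ATGACC", "ATGGCT", "CTGGCC"]
ts_tv_counts = count_ts_tv(parent_dna, clones)
print(ts_tv_counts)
```

Each base has one possible transition and two possible transversions, so an unbiased process would give a transition-to-transversion ratio near 1:2; a markedly higher ratio in sequenced clones points to a transition bias.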

Mutator Strains: These utilize engineered E. coli strains with defective DNA repair machinery (mutD, mutT, mutS) to achieve in vivo random mutagenesis [6]. While simple to implement, this approach lacks control over mutation rates and cannot target specific genes.

Orthogonal Replication Systems: Recent advancements employ engineered DNA polymerases (e.g., Pol I) or orthogonal replication systems (pGKL1/2, Ty1, T7RNAP) that can be coupled with CRISPR-Cas9 to restrict mutagenesis to target sequences, though mutation frequency remains relatively low [6].

Recombination-Based Methods

DNA Shuffling: Developed in the 1990s, this method mimics natural homologous recombination [9]. Parental genes are fragmented with DNase I, and fragments with sufficient homology reassemble via primerless PCR [6] [10]. This approach allows beneficial mutations from different parents to combine, potentially accelerating functional improvement.
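The outcome of shuffling, chimeric genes stitched together from blocks of different parents, can be mimicked with a toy model. The sketch below does not simulate DNase I fragmentation or homology-dependent reassembly; it simply assumes pre-aligned parents of equal length and places a fixed number of crossovers at random positions. All sequences and parameters are hypothetical.

```python
import random
from typing import List


def shuffle_chimera(parents: List[str], n_crossovers: int, rng: random.Random) -> str:
    """Build one chimera by switching parents at random crossover points."""
    if len(parents) < 2:
        raise ValueError("need at least two parents to recombine")
    length = len(parents[0])
    if any(len(p) != length for p in parents):
        raise ValueError("toy model assumes pre-aligned parents of equal length")
    breakpoints = sorted(rng.sample(range(1, length), n_crossovers))
    current = rng.randrange(len(parents))
    segments = []
    start = 0
    for end in breakpoints + [length]:
        segments.append(parents[current][start:end])
        # Switch to a different parent at every crossover.
        current = rng.choice([i for i in range(len(parents)) if i != current])
        start = end
    return "".join(segments)


rng = random.Random(1)
# Homopolymer "parents" make the origin of each block easy to see.
toy_parents = ["AAAAAAAAAAAA", "CCCCCCCCCCCC", "GGGGGGGGGGGG"]
chimeras = [shuffle_chimera(toy_parents, n_crossovers=3, rng=rng) for _ in range(5)]
for chimera in chimeras:
    print(chimera)
```

In a real experiment crossovers occur preferentially in regions of high sequence identity, so the uniform breakpoint placement used here overstates the diversity that shuffling of divergent parents would produce.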

Staggered Extension Process (StEP): A simplified recombination method where short annealing/extension cycles during PCR continually switch templates, generating recombined products [6].

RACHITT (Random Chimeragenesis on Transient Templates): This method increases crossover frequency compared to traditional DNA shuffling and removes parental sequences from the final library [6].

Advanced and Specialized Methods

Recent innovations address limitations in traditional approaches, particularly for large proteins:

Segmental Error-Prone PCR (SEP): Large genes are divided into smaller fragments that undergo independent epPCR before reassembly in Saccharomyces cerevisiae, ensuring more even mutation distribution [10].

Directed DNA Shuffling (DDS): Selectively amplifies mutated fragments from positive SEP variants for reassembly, cumulatively combining beneficial mutations [10].

ITCHY (Incremental Truncation for the Creation of Hybrid enzYmes) and SCRATCHY: Enable recombination of sequences with low homology by creating comprehensive libraries of N-terminal and C-terminal fragment fusions [6].

The following workflow summarizes the key methodological decision points in designing a directed evolution experiment:

Selection and Screening Methodologies

The success of directed evolution critically depends on effectively identifying improved variants from libraries:

In Vivo Selection: Directly couples protein function to host survival, such as by making enzyme activity necessary for antibiotic resistance or nutrient synthesis [9]. In vivo selection offers extremely high throughput (limited only by transformation efficiency), but developing such systems is challenging and the results are prone to artifacts [9].

Phage Display: An in vitro selection technique where protein variants are displayed on phage surfaces, exposed to immobilized target molecules, and binders are isolated after washing [6] [9]. This method is particularly powerful for engineering binding proteins and antibodies.

Fluorescence-Activated Cell Sorting (FACS): Enables high-throughput screening of cell-surface displayed libraries using fluorescent labeling [6] [1]. Recent advancements include product entrapment strategies that expand application scope to enzymatic activities [6].

Microplate-Based Screening: Individual variants are expressed and assayed in multi-well plates, typically using colorimetric or fluorogenic substrates [6]. While lower in throughput, this approach provides detailed quantitative data on each variant.

The Nobel Prize Recognition and Methodological Maturation

The year 2018 marked a significant milestone for directed evolution, with the Nobel Prize in Chemistry awarded to three pioneers in the field:

Frances H. Arnold received half the prize "for the directed evolution of enzymes" [1]. Her work demonstrated that iterative random mutagenesis and screening could rapidly improve enzyme properties such as stability, activity, and solvent tolerance, even without structural knowledge [6].

George P. Smith and Sir Gregory P. Winter shared the other half for "the phage display of peptides and antibodies" [1]. Their methodology enabled the evolution of antibody affinity and specificity, leading to breakthrough therapeutics like adalimumab, the first fully human antibody approved for clinical use [1].

This recognition cemented directed evolution as an essential protein engineering strategy and highlighted its complementary relationship with rational design approaches.

Quantitative Comparison of Directed Evolution Techniques

The table below summarizes the key methodologies, their advantages, limitations, and representative applications:

| Technique | Purpose | Advantages | Disadvantages | Application Examples |
|---|---|---|---|---|
| Error-prone PCR [6] [10] | Insertion of point mutations across whole sequence | Easy to perform; no prior knowledge needed | Biased mutagenesis; high frequency of deleterious mutations | Subtilisin E; Glycolyl-CoA carboxylase |
| DNA Shuffling [6] [10] | Random sequence recombination | Combines beneficial mutations from multiple parents | Requires high homology (>70%) between sequences | Thymidine kinase; Non-canonical esterase |
| SEP & DDS [10] | Evolution of large proteins | Even mutation distribution; reduces reverse mutations | Additional steps for fragment handling | β-glucosidase activity & organic acid tolerance |
| Site-Saturation Mutagenesis [6] | Focused mutagenesis of specific positions | In-depth exploration of chosen positions; smart library design | Limited to few positions; libraries can become very large | Widely applied to enzyme evolution |
| Orthogonal Systems [6] | In vivo random mutagenesis | Mutagenesis restricted to target sequence | Low mutation frequency; sequence size limitations | β-Lactamase; Dihydrofolate reductase |
| Phage Display [6] [9] | Selection of binding proteins | Extremely high throughput; well-established | Limited to binding functions; not directly applicable to enzymes | Antibodies; Fbs1 glycan-binding protein |
| FACS-Based Methods [6] | Screening of variants | High throughput (up to 10⁹ variants/day) | Requires fluorescence coupling; specialized equipment | Sortase; Cre recombinase; β-galactosidase |

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful directed evolution experiments require carefully selected biological reagents and materials:

| Reagent/Material | Function/Purpose | Examples/Notes |
|---|---|---|
| Gene of Interest | Template for diversification | Wild-type or parent variant with baseline activity [10] |
| Mutagenesis Polymerases | Introduce random mutations | Error-prone polymerases with reduced fidelity [6] |
| Host Organisms | Expression of variant libraries | E. coli (prokaryotic proteins), S. cerevisiae (eukaryotic proteins, high recombination) [10] |
| Selection Agents | Apply evolutionary pressure | Antibiotics, toxic metabolites, or nutrient limitations [9] |
| Fluorescent Substrates | Enable high-throughput screening | Colorimetric/fluorogenic proxies for actual activity [6] |
| Display Scaffolds | Genotype-phenotype linkage | M13 phage (phage display), yeast surface display [6] [9] |
| Microfluidic Devices | Ultra-high-throughput screening | Emulsion-based compartmentalization [9] |

Contemporary Advancements and Future Directions

Semi-Rational and Hybrid Approaches

The distinction between directed evolution and rational design has blurred with the emergence of semi-rational approaches that combine their strengths [2] [9]. These methods use evolutionary information, structural data, or computational predictions to create "smart libraries" focused on promising protein regions, dramatically reducing library size while increasing functional content [2]. Key strategies include:

Sequence-Based Design: Using multiple sequence alignments and phylogenetic analysis to identify evolutionarily variable positions likely to tolerate mutation [2].

Structure-Based Design: Targeting residues near active sites, domain interfaces, or hinge regions based on three-dimensional structural knowledge [2].

Hotspot Identification: Computational tools like HotSpot Wizard identify positions with high probability of functional improvement [2].

AI-Driven Protein Design

Recent years have witnessed a paradigm shift with the integration of artificial intelligence and machine learning:

Structure Prediction Tools: AlphaFold2 and RoseTTAFold provide accurate protein structure predictions, enabling better-informed library design [11] [3].

Generative Models: ProteinMPNN (for inverse folding) and RFdiffusion (for de novo backbone generation) allow computational creation of novel protein sequences and structures [11].

Unified Workflows: Systematic frameworks now connect database searching, structure prediction, function prediction, sequence/structure generation, and virtual screening into coherent protein engineering pipelines [11].

Continuous Directed Evolution

Novel platforms enable continuous evolution without discrete rounds of mutagenesis and selection [10]. These systems enhance mutation rates in vivo by engineering DNA replication and repair mechanisms, though challenges remain in controlling evolutionary trajectories and ensuring reproducibility [10].

Directed evolution has progressed remarkably from Spiegelman's initial in vitro RNA evolution to sophisticated Nobel Prize-winning methodologies that routinely engineer proteins for therapeutic, industrial, and research applications. While the core principles of diversification, selection, and amplification remain unchanged, methodological innovations have dramatically expanded capabilities. The field continues to evolve through integration with computational approaches, creating powerful hybrid methodologies that leverage the strengths of both directed evolution and rational design. As protein engineering advances, this historical trajectory suggests that the most productive future lies not in choosing between directed evolution and rational design, but in developing integrated strategies that combine their complementary advantages to address the growing challenges in biotechnology and medicine.

Rational protein design is a powerful biotechnological process that focuses on creating new enzymes or proteins and improving the functions of existing ones by deliberately manipulating their amino acid sequences based on a deep understanding of their structure-function relationships [1]. This approach stands in contrast to directed evolution, which mimics natural selection by generating random mutations and screening for desired traits without requiring prior structural knowledge [12]. The foundational principle of rational design is that proteins adopt specific three-dimensional structures determined by their amino acid sequences, and these structures directly dictate their biological functions [13] [14]. Scientists utilizing rational design act as protein architects, employing detailed structural knowledge to create specific, targeted changes in a protein's amino acid sequence to achieve predefined functional enhancements [12].

This methodology relies heavily on computational models and existing structural data to predict how precise modifications will impact protein performance, enabling targeted alterations that can enhance stability, specificity, or catalytic activity [12]. The precision of rational design is its greatest advantage, allowing researchers to move beyond random exploration to intentional engineering. However, this approach necessitates a comprehensive understanding of the protein in question, including its three-dimensional architecture and the mechanistic role of key residues, information that is not always available, especially for complex or poorly characterized proteins [12] [1].

The Sequence-Structure-Function Paradigm

The sequence-structure-function paradigm is a central tenet in structural biology, stating that a protein's amino acid sequence determines its folded three-dimensional structure, which in turn dictates its specific biological function [14]. This linear relationship provides the theoretical foundation for rational design. The function of a protein is strongly dependent on its structure, and during evolution, proteins acquire new functions through mutations that alter the amino-acid sequence [13].

Understanding the underlying relations between sequence, structure, and function has been an active research topic in molecular biology for decades [13]. With the advent of powerful structure prediction tools like AlphaFold2 and RoseTTAFold, the field is now better equipped to explore this relationship on a large scale [14]. These advances have revealed that the structural space is continuous and largely saturated, highlighting the need for a shift in focus from merely obtaining structures to putting them into functional context [14].

In rational design, this paradigm is leveraged in reverse: scientists start with a desired function, hypothesize a structural configuration that would enable that function, and then design a sequence predicted to fold into that target structure. This approach requires sophisticated computational models to accurately predict how specific amino acid substitutions will affect the protein's fold and, consequently, its functional capabilities.

Fundamental Mechanisms of Mutation Effects

Mutations introduced through rational design can affect protein function through several distinct structural mechanisms. The replacement of an amino acid in the sequence—a mutation—can have structural consequences on the resulting protein and thus has a potential effect on its function [13]. Understanding these mechanisms is crucial for designing effective mutations.

Position-Dependent Effects and Structural Sensitivity

Research has demonstrated that functional change due to mutation is strongly position-dependent, notwithstanding the chemical properties of mutant and mutated amino acids [13]. This indicates that structural properties of a given position are potentially responsible for the functional relevance of a mutation. Studies analyzing the relationship between structure and function using amino acid networks have found that:

- Structural sensitivity to mutations is position-dependent [13]

- Strong structural change correlates with functional loss [13]

- Positions with functional gain due to mutations tend to be structurally robust [13]

These findings suggest that not all positions in a protein are equally amenable to mutation. Some positions (structurally robust positions) can tolerate substitutions with minimal functional consequence, while others (structurally sensitive positions) are critical for maintaining structure and function.

Network-Based Analysis of Mutation Effects

Network science has been successfully used to model protein structure, where amino acids are represented by nodes connected if they are within a specific distance threshold [13]. This approach allows researchers to quantify the structural perturbation caused by a mutation by comparing the 3D structure of the original protein and its mutant. Key metrics for measuring structural change include:

- Perturbation network size: The number of amino acids affected by the mutation

- Edge changes: The number of structural contacts between amino acids that are altered

- Weighted sum: The number of atomic pairs that moved closer together or further apart relative to a chosen distance threshold [13]

This methodology enables researchers to measure structural change computationally and correlate it with experimentally observed functional changes, creating predictive models for determining which mutations will produce desired functional outcomes.
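
The first two metrics above can be sketched in a few lines of Python. This is a minimal illustration, not the method of [13]: it assumes one representative 3D point per residue (for example a Cα position), a hypothetical 5 Å cutoff, and made-up coordinates. The names `contact_edges` and `perturbation_network` are illustrative only, and the atom-level weighted sum is omitted.

```python
from itertools import combinations
from math import dist

def contact_edges(coords, cutoff=5.0):
    """Return residue index pairs (i, j) whose points lie within `cutoff` of each other."""
    return {
        (i, j)
        for (i, a), (j, b) in combinations(enumerate(coords), 2)
        if dist(a, b) <= cutoff
    }

def perturbation_network(wild_type, mutant, cutoff=5.0):
    """Count contacts gained or lost on mutation and the residues they involve."""
    wt_edges = contact_edges(wild_type, cutoff)
    mut_edges = contact_edges(mutant, cutoff)
    changed = wt_edges ^ mut_edges  # symmetric difference: contacts gained or lost
    nodes = {i for edge in changed for i in edge}
    return {"nodes": len(nodes), "edges": len(changed)}

# Hypothetical coordinates (angstroms): the last residue moves away in the mutant.
wild_type = [(0, 0, 0), (3, 0, 0), (6, 0, 0), (9, 0, 0)]
mutant = [(0, 0, 0), (3, 0, 0), (6, 0, 0), (12, 0, 0)]
print(perturbation_network(wild_type, mutant))
```

In this toy case a single contact is lost, so the perturbation network contains one edge and two residues. A real analysis would work from full atomic coordinates of the wild-type and mutant structures.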

Methodological Framework for Rational Design

Implementing a successful rational design strategy requires a systematic approach that integrates structural analysis, computational modeling, and experimental validation. The following workflow outlines the key steps in this process.

Structural Analysis and Target Identification

The initial phase involves comprehensive structural analysis to identify promising targets for mutagenesis. This process includes:

- Obtaining high-resolution structural data through X-ray crystallography, NMR, or cryo-EM, or retrieving structures from databases such as the Protein Data Bank (experimentally determined) or the AlphaFold Protein Structure Database (predicted) [1]

- Identifying key functional regions such as active sites, binding pockets, allosteric sites, or protein-protein interaction interfaces [1]

- Analyzing evolutionary conservation to pinpoint residues critical for structure and function [13]

- Mapping stability determinants including hydrophobic cores, hydrogen bonding networks, and salt bridges that maintain structural integrity

Computational Modeling and In Silico Mutagenesis

Once target regions are identified, computational tools are employed to model the effects of potential mutations:

- Molecular dynamics simulations to assess structural flexibility and conformational changes

- Energy calculations to evaluate the thermodynamic stability of mutant proteins [13]

- Docking studies for predicting changes in substrate binding or protein-protein interactions

- Amino acid network analysis to predict perturbation effects of mutations [13]
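
Before any of these tools can score a mutation, the candidate variants have to be enumerated. The sketch below shows one simple way to do that for single substitutions at chosen positions. The function name, the 1-based numbering, and the three-residue example sequence are illustrative assumptions, not part of any specific modeling package.

```python
AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"  # the 20 canonical residues, one-letter codes

def single_point_mutants(sequence, positions):
    """Yield (label, mutant_sequence) for every substitution at the given 1-based positions."""
    for pos in positions:
        wild_type = sequence[pos - 1]
        for aa in AMINO_ACIDS:
            if aa != wild_type:
                label = f"{wild_type}{pos}{aa}"  # conventional notation, e.g. K2A
                yield label, sequence[:pos - 1] + aa + sequence[pos:]

# Hypothetical example: all 19 substitutions at position 2 of a toy sequence.
mutants = dict(single_point_mutants("MKT", [2]))
print(len(mutants), mutants["K2A"])
```

Each labeled sequence could then be passed to whichever stability, docking, or network calculation the project uses.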

Table 1: Correlation Between Structural Perturbation and Functional Change

| Perturbation Measure | Mean Spearman Correlation (ρ) with Functional Change | Statistical Significance (mean p-value) |
|---|---|---|
| Nodes (affected residues) | -0.56 ± 0.12 | 3.6 × 10⁻⁴ ± 6.2 × 10⁻³ |
| Edges (structural contacts) | -0.53 ± 0.1 | 3.6 × 10⁻⁴ ± 6.2 × 10⁻³ |
| Weighted sum | -0.51 ± 0.1 | 3.6 × 10⁻⁴ ± 6.2 × 10⁻³ |
| Diameter | -0.3 ± 0.11 | 1.6 × 10⁻² ± 5.3 × 10⁻² |

Data derived from network analysis of five proteins with deep mutational scanning data [13]
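
For readers unfamiliar with the statistic in Table 1, the following sketch computes a Spearman rank correlation from scratch: values are converted to ranks (ties share their average rank) and a Pearson correlation is taken on the ranks. The input numbers are invented for illustration and are not data from [13].

```python
def average_ranks(values):
    """Return 1-based ranks, assigning tied values the mean of their rank positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1  # average rank of the tied block
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks

def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sd_x = sum((a - mean_x) ** 2 for a in x) ** 0.5
    sd_y = sum((b - mean_y) ** 2 for b in y) ** 0.5
    return cov / (sd_x * sd_y)

def spearman(x, y):
    """Spearman rho: Pearson correlation computed on ranks."""
    return pearson(average_ranks(x), average_ranks(y))

# Hypothetical data: larger perturbation networks paired with lower functional scores.
perturbation_size = [2, 5, 9, 14]
functional_score = [0.9, 0.7, 0.4, 0.1]
print(spearman(perturbation_size, functional_score))
```

A negative ρ, as in Table 1, means that mutations causing larger structural perturbations tend to score lower on function.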

Experimental Validation and Iterative Optimization

After computational predictions, proposed mutations must be experimentally validated:

- Site-directed mutagenesis to introduce specific point mutations, insertions, or deletions in the coding sequence [1]

- High-throughput screening of mutant libraries using methods like fluorescence-activated cell sorting (FACS) or phage display [1]

- Biophysical characterization to assess structural integrity, stability, and conformational changes

- Functional assays to quantify catalytic efficiency, binding affinity, or other relevant parameters

Diagram 1: Rational Protein Design Workflow

Key Techniques and Reagent Solutions

Rational protein design employs a diverse toolkit of experimental and computational methods. The table below outlines essential reagents and techniques used in typical rational design experiments.

Table 2: Research Reagent Solutions for Rational Protein Design

| Category | Specific Reagents/Methods | Function in Rational Design |
|---|---|---|
| Mutagenesis | Site-directed mutagenesis kits, synthetic genes | Introduces specific, targeted changes into protein coding sequences [1] |
| Structural Analysis | X-ray crystallography, NMR, cryo-EM, AlphaFold2 predictions | Provides 3D structural information essential for target identification [1] [14] |
| Computational Modeling | Rosetta, DMPfold, molecular dynamics software | Predicts effects of mutations on protein structure and stability [13] [14] |
| Expression Systems | Recombinant DNA vectors, bacterial/yeast/mammalian hosts | Produces mutant protein variants for experimental characterization [1] |
| Quantitative Assays | Fluorescence-based assays, mass spectrometry, calorimetry | Measures functional properties and binding affinities of designed variants [15] |
| Stability Assessment | Differential scanning calorimetry, circular dichroism | Evaluates thermodynamic stability of mutant proteins |

Applications and Case Studies

Rational design has been successfully applied to engineer proteins for diverse applications across biotechnology, medicine, and industrial processes. The precision of this approach makes it particularly valuable when specific, targeted alterations are required.

Industrial Enzyme Engineering

In industrial settings, enzymes often need to function under non-physiological conditions such as extreme temperatures, pH levels, or organic solvents. Rational design has been used to enhance important properties of industrially relevant enzymes:

- Thermostability: Engineering enhanced thermal stability in α-amylase for food processing applications through site-directed mutagenesis [1]

- Alkaline stability: Improving alkaline protease activity at high pH and low temperatures for detergent applications [1]

- Catalytic efficiency: Optimizing active site residues to enhance kinetic properties of enzymes like 5-enolpyruvylshikimate-3-phosphate synthase for agricultural applications [1]

Therapeutic Protein Engineering

Rational design has revolutionized the development of therapeutic proteins with enhanced properties:

- Insulin analogs: Creating fast-acting monomeric insulin through site-directed mutagenesis to improve diabetes treatment [1]

- Therapeutic antibodies: Engineering antibody affinity, specificity, and stability for improved therapeutic efficacy [12]

- Protein-based vaccines: Designing stabilized antigen constructs with improved immunogenicity [1]

Diagram 2: Structure-Function Relationship in Rational Design

Comparison with Directed Evolution

While this section focuses on rational design, understanding its strengths and limitations relative to directed evolution provides valuable context for researchers selecting a protein engineering strategy.

Advantages of Rational Design

Rational design offers several distinct advantages for protein engineering:

- Precision: Allows for targeted alterations at specific positions known to influence particular functions [12]

- Efficiency: Can achieve desired outcomes with fewer variants compared to the large libraries required for directed evolution [1]

- Mechanistic insight: Provides deeper understanding of structure-function relationships that can inform future engineering efforts [13]

- Speed: When structural information is available, rational design can be more straightforward and less time-consuming than extensive screening processes [12]

Limitations and Challenges

Despite its advantages, rational design faces several significant challenges:

- Structural knowledge dependency: Requires detailed, high-quality structural information that may not be available for all proteins [12] [1]

- Prediction accuracy: The complex relationship between sequence, structure, and function makes it difficult to accurately predict all effects of mutations [13] [1]

- Conformational dynamics: Challenges in predicting protein conformational changes that occur during binding with other molecules [1]

- Epistatic effects: Difficulties in accounting for non-additive interactions between mutations [13]

Table 3: Performance Metrics for Predicting Functionally Sensitive Positions

| Prediction Metric | Performance Score | Interpretation |
|---|---|---|
| Mean Precision | 74.7% | Percentage of predicted sensitive positions that are truly functional |
| Mean Recall | 69.3% | Percentage of all true functional positions that are identified |
| Area Under ROC Curve | 0.83 ± 0.04 | Overall ability to separate sensitive from non-sensitive positions (1.0 = perfect, 0.5 = random) |

Performance of computational method predicting functionally sensitive positions using structural change across five proteins [13]
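
The precision and recall definitions in Table 3 translate directly into code. The sketch below uses hypothetical residue positions purely for illustration; it does not reproduce the evaluation in [13].

```python
def precision_recall(predicted, actual):
    """Return (precision, recall) for predicted versus experimentally sensitive positions."""
    predicted, actual = set(predicted), set(actual)
    true_positives = len(predicted & actual)
    precision = true_positives / len(predicted) if predicted else 0.0
    recall = true_positives / len(actual) if actual else 0.0
    return precision, recall

# Hypothetical residue numbers: 4 positions predicted, 6 truly sensitive, 3 in common.
predicted_sensitive = {10, 25, 40, 77}
truly_sensitive = {10, 25, 40, 52, 90, 101}
print(precision_recall(predicted_sensitive, truly_sensitive))
```

Here three of the four predictions are correct (precision 0.75), but only three of six sensitive positions are found (recall 0.5), which shows why both numbers are reported together.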

Future Directions and Emerging Technologies

The field of rational protein design continues to evolve with advances in computational methods, structural biology, and artificial intelligence. Several emerging technologies are poised to address current limitations and expand the capabilities of rational design.

Artificial Intelligence and Machine Learning

Machine learning approaches are dramatically enhancing rational design capabilities:

- Structure prediction: AI systems like AlphaFold2 and RoseTTAFold have revolutionized protein structure prediction, providing high-quality models for proteins without experimentally determined structures [1] [14]

- Function prediction: Tools like DeepFRI use graph convolutional networks to provide residue-specific functional annotations from structural data [14]

- Sequence design: Generative models and diffusion probabilistic models are being applied to design novel protein sequences that fold into target structures [1]

Hybrid and Semirational Approaches

Recognizing the complementary strengths of different protein engineering strategies, researchers are increasingly adopting hybrid approaches:

- Semirational design: Combining rational and directed evolution methods by using computational modeling to identify promising target regions for focused mutagenesis and screening [12] [1]

- Autonomous protein engineering: Platforms like SAMPLE (Self-driving Autonomous Machines for Protein Landscape Exploration) that combine AI-based design with fully automated robotic experimentation [1]

These integrated approaches leverage the precision of rational design with the explorative power of directed evolution, potentially overcoming the limitations of either method used in isolation. As these technologies mature, they promise to accelerate the design of novel proteins with tailored functions for diverse applications in medicine, industry, and biotechnology.

Directed evolution is a powerful protein engineering method that mimics the process of natural selection in a laboratory setting to steer proteins or nucleic acids toward a user-defined goal [9]. This approach fundamentally relies on an iterative cycle of creating genetic diversity (random mutagenesis) and identifying improved variants through high-throughput selection or screening [6] [16]. Since its early origins in the 1960s with Spiegelman's evolution of RNA molecules, directed evolution has transformed into a robust biotechnology platform, recognized by the 2018 Nobel Prize in Chemistry awarded to Frances Arnold for the directed evolution of enzymes and to George Smith and Gregory Winter for phage display [6] [9]. The method's principal advantage lies in its ability to improve protein properties—such as stability, catalytic activity, or substrate specificity—without requiring prior structural knowledge or mechanistic understanding of the target protein [9] [12]. This stands in contrast to rational design approaches that depend on comprehensive structural and functional information to make calculated mutations [12] [1]. By harnessing random mutagenesis and high-throughput selection, researchers can explore vast sequence spaces to discover beneficial mutations that might not be predictable through rational means alone [9].

Core Principles and Methodologies

The Directed Evolution Cycle

Directed evolution functions through an iterative Darwinian cycle comprising three essential stages: diversification, selection, and amplification [9]. The process begins with the introduction of random mutations into the gene of interest, creating a library of genetic variants. This library is then expressed, and the resulting protein variants are subjected to selection or screening pressures to identify individuals with improved functional properties. The genes encoding these improved variants are amplified to serve as templates for subsequent rounds of evolution, enabling stepwise enhancements through multiple iterations [6] [9]. The probability of success in directed evolution experiments correlates directly with total library size, as evaluating more mutants increases the likelihood of discovering variants with desired properties [9]. This fundamental framework has been successfully applied to engineer diverse protein properties, including enhanced thermostability for industrial applications, improved binding affinity for therapeutic antibodies, and altered substrate specificity for novel biocatalytic functions [9].
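
The three stages can be illustrated with a toy in silico loop. This is a deliberately simplified sketch: the fitness function (number of residues matching an arbitrary target string), the mutation rate, and the library size are all hypothetical stand-ins for a real assay and real library parameters.

```python
import random

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"

def mutate(sequence, rate, rng):
    """Replace each residue with a random amino acid with probability `rate`."""
    return "".join(
        rng.choice(AMINO_ACIDS) if rng.random() < rate else residue
        for residue in sequence
    )

def evolve(parent, fitness, rounds=5, library_size=200, rate=0.05, seed=0):
    """Run diversification, selection, and amplification for a fixed number of rounds."""
    rng = random.Random(seed)  # seeded so the toy run is reproducible
    history = [fitness(parent)]
    for _ in range(rounds):
        library = [mutate(parent, rate, rng) for _ in range(library_size)]  # diversification
        best = max(library + [parent], key=fitness)  # selection; parent kept as a fallback
        parent = best  # amplification: the winner templates the next round
        history.append(fitness(parent))
    return parent, history

TARGET = "MKTAYIAKQR"  # arbitrary target, standing in for an unknown optimum

def toy_fitness(sequence):
    """Hypothetical assay readout: count of positions matching TARGET."""
    return sum(a == b for a, b in zip(sequence, TARGET))

final, history = evolve("A" * len(TARGET), toy_fitness)
print(final, history)
```

Because the parent is retained as a fallback in each round, fitness in this sketch can only stay level or improve, mirroring the stepwise enhancement described above. Real campaigns face noisy assays and rugged landscapes that this toy ignores.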

Random Mutagenesis Strategies

The generation of genetic diversity represents the foundational step in any directed evolution experiment. Multiple molecular biology techniques have been developed to create mutant libraries, each offering distinct advantages and limitations.

Table 1: Common Mutagenesis Methods in Directed Evolution

| Method | Principle | Advantages | Disadvantages | Application Examples |
|---|---|---|---|---|
| Error-prone PCR (epPCR) | Random point mutations through low-fidelity PCR amplification [6] | Easy to perform; no prior knowledge required [6] | Reduced sampling of mutagenesis space; mutagenesis bias [6] | Subtilisin E [6] |
| DNA Shuffling | In vitro recombination of homologous genes [9] | Recombines beneficial mutations from multiple parents [9] | Requires high sequence homology (>70%) [9] | Thymidine kinase [6] |
| RAISE | Random insertion and deletion of short sequences [6] | Enables random indels across sequence [6] | Introduces frameshifts; limited to few nucleotides [6] | β-Lactamase [6] |
| Mutator Strains | In vivo mutagenesis using engineered bacterial strains [6] | Simple system; continuous evolution possible [6] | Biased and uncontrolled mutagenesis spectrum; mutagenesis not restricted to target [6] | Vitamin K epoxide reductase [6] |
| Orthogonal Replication Systems | In vivo targeted mutagenesis using specialized polymerases [6] | Mutagenesis restricted to target sequence [6] | Relatively low mutation frequency; target size limitations [6] | β-Lactamase, Dihydrofolate reductase [6] |

Figure 1: The Iterative Directed Evolution Cycle. This workflow illustrates the repetitive process of diversification, selection, and amplification that enables stepwise protein improvement.

High-Throughput Selection and Screening Technologies

Selection Systems

Selection methodologies directly couple desired protein function to host organism survival or gene replication, enabling efficient screening of extremely large libraries (up to 10¹⁵ variants) [9]. Phage display represents a prominent selection technique where variant proteins are expressed on phage surfaces, exposed to immobilized target molecules, and non-binders are washed away while bound phages are collected and amplified [9]. Survival-based selection represents another powerful approach where enzyme activity is made essential for cell viability, either through production of vital metabolites or detoxification of harmful compounds [9]. While selection systems offer exceptional throughput and require fewer resources than screening approaches, they can be challenging to engineer and may not provide detailed information on the range of activities present in the library [9].

Screening Methodologies

Screening systems involve the individual assessment of each variant using quantitative assays, typically based on colorimetric, fluorogenic, or other detectable signals [6]. Although generally lower in throughput than selection methods, screening provides detailed functional characterization of each variant and enables the identification of intermediate improvements [9]. Fluorescence-activated cell sorting (FACS) has emerged as a particularly powerful screening technology, capable of analyzing up to 10⁸ cells per hour based on fluorescent signals [6]. Recent advances in biosensor development and microfluidic technologies have further enhanced screening capabilities, enabling continuous evolution systems and more sophisticated phenotypic selections [16].

Table 2: High-Throughput Selection and Screening Methods

| Method | Principle | Throughput | Advantages | Disadvantages |
|---|---|---|---|---|
| Phage Display | Binding selection with phenotype-genotype linkage [9] | Very High (10¹⁰-10¹¹) | Efficient for binding molecules; direct genotype-phenotype link [9] | Limited to binding functions; not directly applicable to catalysis [9] |
| FACS | Flow-cytometric sorting of individual cells based on fluorescence [6] | High (10⁸ cells/hour) | Quantitative; multi-parameter analysis possible [6] | Requires fluorescent reporter; instrument access needed [6] |
| In Vitro Compartmentalization | Water-in-oil emulsion droplets link gene and product [9] | Very High (10¹⁰) | Compartments function as artificial cells; protects library DNA [9] | Requires specialized expertise; not all enzymes compatible [9] |
| Microtiter Plate Screening | Individual culture assay in multi-well plates [6] | Medium (10³-10⁶) | Quantitative; adaptable to various assay types [6] | Labor-intensive; lower throughput than other methods [6] |
| mRNA Display | Covalent linkage between mRNA and encoded protein [9] | Very High (10¹³) | Larger libraries than cellular systems; direct physical linkage [9] | In vitro translation limitations; non-natural conditions [9] |

Figure 2: High-Throughput Screening Workflow. This diagram outlines the key stages in screening variant libraries, with associated detection platforms indicated.

Experimental Protocols for Directed Evolution

Standard Protocol for Error-Prone PCR and Screening

This foundational protocol describes a complete cycle of directed evolution using error-prone PCR for mutagenesis and microtiter plate screening for identification of improved variants [6].

Materials Required:

- Template DNA (gene of interest in expression vector)

- Error-prone PCR kit (commercial kits with optimized mutation rates)

- Expression host (typically E. coli strains suitable for protein production)

- Screening reagents (substrate-specific detection system)

Procedure:

- Library Generation: Perform error-prone PCR on target gene using conditions that yield 1-3 amino acid substitutions per gene copy. Use manganese ions and unequal dNTP concentrations to promote polymerase errors [6].

- Cloning and Transformation: Digest PCR product and vector with appropriate restriction enzymes. Ligate and transform into expression host, aiming for library size of 10⁴-10⁶ variants. Plate transformed cells and incubate overnight.

- Protein Expression: Inoculate individual colonies into deep-well plates containing growth medium. Induce protein expression at optimal conditions for host system.

- High-Throughput Screening: Transfer aliquots of cell culture or lysate to assay plates containing specific substrates. Incubate under desired reaction conditions and measure activity using plate reader appropriate for detection method (absorbance, fluorescence, etc.).

- Hit Identification: Rank variants by desired activity metric. Select top performers (typically 0.1-1% of library) for sequence analysis and validation.

- Iterative Rounds: Use best variant as template for subsequent rounds of mutagenesis and screening until desired improvement is achieved.
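
The hit identification step can be sketched as a simple ranking over plate-reader values. The well IDs and activities below are invented, and the extra requirement that a hit must outperform the parent control is an assumption added for illustration rather than part of the protocol above.

```python
def select_hits(activities, parent_activity, top_fraction=0.01):
    """Return well IDs in the top fraction of the library that also exceed the parent."""
    ranked = sorted(activities.items(), key=lambda item: item[1], reverse=True)
    n_keep = max(1, round(len(ranked) * top_fraction))  # always keep at least one candidate
    return [well for well, value in ranked[:n_keep] if value > parent_activity]

# Hypothetical readings, normalized so that the parent enzyme scores 1.0.
plate = {"A1": 0.8, "A2": 1.9, "A3": 1.1, "A4": 0.2}
print(select_hits(plate, parent_activity=1.0, top_fraction=0.5))
```

With a real library the `top_fraction` argument would typically sit in the 0.1-1% range quoted in the procedure, and selected wells would be re-assayed in replicate before sequencing.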

Advanced Protocol: Phage Display for Binding Affinity Maturation

This protocol specializes in improving binding affinity of protein scaffolds through phage display technology [9].

Materials Required:

- Phage display library (target gene fused to coat protein gene)

- Immobilized target molecule (on beads or plate surface)

- Elution buffers (varying pH or containing competitive ligand)

- E. coli host for phage propagation

Procedure:

- Library Preparation: Create phage library displaying protein variants through fusion to minor coat protein (pIII). Library diversity typically ranges from 10⁸-10¹¹ unique members.

- Panning Rounds: Incubate phage library with immobilized target. Wash extensively to remove non-specific binders. Elute bound phages using low pH buffer (0.1 M glycine-HCl, pH 2.2) or competitive ligand.

- Amplification: Infect log-phase E. coli with eluted phages to amplify selected variants for subsequent rounds.

- Characterization: After 3-5 rounds of selection, isolate individual clones for binding affinity measurement using ELISA, surface plasmon resonance, or similar techniques.

- Sequence Analysis: Identify consensus mutations and key residues contributing to improved binding.

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Research Reagent Solutions for Directed Evolution

| Reagent/Resource | Function | Application Context | Examples/Specifications |
|---|---|---|---|
| Error-Prone PCR Kits | Introduces random mutations during amplification [6] | Initial library generation for any gene target | Commercial kits with optimized mutation rates (e.g., 1-15 mutations/kb) |
| Mutator Strains | In vivo mutagenesis through defective DNA repair [6] | Continuous evolution without library construction | XL1-Red, Mutator S (Epicentre) |
| Phage Display Vectors | Links genotype to phenotype for selection [9] | Engineering binding proteins and antibodies | M13-based vectors (pIII or pVIII fusion) |
| Fluorescent Substrates | Enables FACS-based screening [6] | High-throughput activity screening | Fluorogenic esters (for esterases), coumarin derivatives |
| Microfluidic Devices | Compartmentalization for single-cell analysis [16] | Ultra-high-throughput screening | Water-in-oil emulsion systems; commercial droplet generators |
| Biosensor Systems | Reports on intracellular metabolite levels [16] | In vivo selection for metabolic engineering | Transcription factor-based reporters for specific metabolites |

Comparative Analysis: Directed Evolution vs. Rational Design

Directed evolution and rational design represent complementary approaches in the protein engineering toolkit, each with distinct advantages and limitations [12]. Directed evolution excels in situations where structural information is limited or the relationship between sequence and function is poorly understood [9]. By mimicking natural evolutionary processes, it can discover unexpected solutions and complex mutational synergies that would be difficult to predict computationally [12]. However, the method requires significant resources for library creation and screening, and success depends heavily on the availability of robust high-throughput assays [9]. Rational design, conversely, employs detailed structural knowledge and computational modeling to make specific, targeted mutations [17] [12]. This approach is more efficient when the structural basis of function is well-characterized but can be limited by gaps in our understanding of protein structure-function relationships [12]. Semi-rational approaches have emerged as powerful hybrids, using computational and bioinformatic analyses to identify promising regions for randomization, thereby creating smaller, higher-quality libraries that combine the benefits of both strategies [2] [18] [1].

Directed evolution has established itself as a cornerstone methodology in protein engineering, enabling remarkable advances in biocatalyst development, therapeutic protein optimization, and fundamental studies of protein function [6] [16]. The continuing development of more sophisticated mutagenesis methods, high-throughput screening technologies, and automated experimental platforms promises to further expand the capabilities of this powerful approach [1] [16]. Emerging trends include the integration of machine learning algorithms to analyze rich datasets generated by screening experiments, which can provide insights into sequence-function relationships and guide more intelligent library design [16] [19]. The recent development of fully autonomous protein engineering systems, such as the Self-driving Autonomous Machines for Protein Landscape Exploration (SAMPLE) platform, represents the cutting edge of this field, combining artificial intelligence with robotic experimentation to accelerate the protein design process [1]. As these technologies mature, directed evolution will continue to be an indispensable tool for harnessing the power of random mutagenesis and high-throughput selection to solve complex challenges in biotechnology, medicine, and basic science.

Protein engineering stands as a formidable frontier in modern biotechnology, aiming to create and optimize proteins for applications ranging from therapeutic development to industrial biocatalysis. The field is fundamentally governed by the relationship between a protein's amino acid sequence, its three-dimensional structure, and its resulting biological function. However, researchers face a central, overwhelming challenge: the unimaginable vastness of the protein sequence-function universe. For a mere 100-residue protein, the number of possible amino acid arrangements reaches 20^100 (approximately 1.27 × 10^130), a figure that exceeds the estimated number of atoms in the observable universe (~10^80) by more than fifty orders of magnitude [20]. Within this astronomically large sequence space, the subset of sequences that fold into stable, functional proteins is vanishingly small. This creates a proverbial "needle in a haystack" problem, where identifying or designing functional proteins through unguided exploration is profoundly inefficient and often impossible [20].
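
The arithmetic behind these figures is easy to check with exact integer math. The short sketch below recomputes the size of sequence space for a 100-residue protein and its gap to the ~10^80 atom estimate quoted above; the function name is illustrative.

```python
from math import log10

def sequence_space(length, alphabet_size=20):
    """Number of distinct sequences of a given length over the amino acid alphabet."""
    return alphabet_size ** length

n = sequence_space(100)
exponent = int(log10(n))           # order of magnitude of 20^100
mantissa = n / 10 ** exponent      # leading digits as a float
print(f"20^100 is about {mantissa:.2f} x 10^{exponent}")
print(f"Orders of magnitude above 10^80: {exponent - 80}")
```

Because 20^100 equals 2^100 × 10^100, the result is a 131-digit integer, consistent with the ~1.27 × 10^130 value cited in the text.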

This challenge is further compounded by the constraints of natural evolution. Despite their functional richness, natural proteins are products of evolutionary pressures for biological fitness in specific niches, not optimized for human utility. This "evolutionary myopia" means that known natural proteins represent only a tiny fraction of the diversity that the protein functional universe can theoretically produce [20]. Furthermore, evidence suggests that the known natural fold space may be approaching saturation, with recent functional innovations arising predominantly from domain rearrangements rather than the emergence of genuinely novel folds [20]. Consequently, conventional protein engineering strategies, which often rely on modifying natural templates, are inherently limited in their ability to access the vast, uncharted regions of functional potential. Navigating this immense and complex landscape requires sophisticated strategies that combine computational power, biological insight, and high-throughput experimental validation.

Quantitative Dimensions of the Challenge

The scale of the protein sequence-structure-function landscape is difficult to comprehend. The theoretical "protein functional universe" encompasses all possible protein sequences, structures, and the biological activities they can perform [20]. Public databases, while massive, capture only an infinitesimal fraction of this theoretical space. For context, resources like the MGnify Protein Database (nearly 2.4 billion non-redundant sequences) and the AlphaFold Protein Structure Database (~214 million models) represent an exceptionally small and biased sample, shaped by evolutionary history and assayability rather than functional potential [20].

The table below quantifies the disparity between known biological data and the theoretical possibilities.

Table 1: The Scale of the Protein Sequence-Structure-Function Universe

| Aspect | Known Biological Data (Databases) | Theoretical Possibility | Implication for Protein Engineering |
|---|---|---|---|
| Sequence Space | ~2.4 billion sequences (MGnify DB) [20] | 20^100 for a 100-residue protein (~1.27x10^130) [20] | Unguided random screening is infeasible. |
| Structure Space | ~214 million models (AlphaFold DB) [20] | A near-infinite fold space beyond natural saturation [20] | New functions may require novel, non-natural scaffolds. |
| Functional Space | Functions optimized for natural fitness [20] | Vast potential for novel catalysts, binders, and materials [20] | Engineering must transcend natural evolutionary pathways. |

This quantitative disparity underscores a fundamental point: systematic exploration of the protein functional universe demands approaches that go beyond simple modification of existing biological templates [20].
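To make the scale concrete, the short calculation below reproduces the sequence-space figure from Table 1 and compares it with the MGnify count quoted above. It is a back-of-the-envelope sketch: the function name is illustrative, and comparing a database of mixed-length sequences with the space of 100-residue proteins is only meaningful as an order-of-magnitude statement.

```python
import math

def log10_sequence_space(length: int, alphabet_size: int = 20) -> float:
    """Return log10 of the number of possible sequences of the given length."""
    return length * math.log10(alphabet_size)

# Figures quoted in the text.
KNOWN_SEQUENCES = 2.4e9   # approximate MGnify non-redundant sequence count
LENGTH = 100              # residues, the example length used in Table 1

# Work in log space so the numbers stay readable.
log_total = log10_sequence_space(LENGTH)
exponent = math.floor(log_total)
mantissa = 10 ** (log_total - exponent)

# log10 of the fraction of the space that the known sequences could cover.
log_fraction = math.log10(KNOWN_SEQUENCES) - log_total

print(f"20^{LENGTH} is about {mantissa:.2f}e{exponent}")
print(f"Known sequences correspond to roughly 10^{log_fraction:.0f} of that space")
```

Even under the generous assumption that every catalogued sequence is unique and 100 residues long, the sampled fraction is vanishingly small. That is the quantitative argument behind the "unguided random screening is infeasible" entry in the table.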

Methodological Frameworks for Navigation

To overcome the challenge of scale, protein scientists have developed three primary methodological frameworks, each with distinct strategies for navigating the sequence-function landscape.

Directed Evolution: Harnessing Darwinian Principles

Directed evolution mimics natural selection in a laboratory setting. It involves iterative cycles of random mutagenesis and selection to improve a protein's function without requiring prior structural knowledge [12] [5]. Its strategic advantage is the ability to discover non-intuitive, highly effective solutions that computational models or human intuition might miss [5].

Experimental Protocol:

- Diversification: Create a library of gene variants. Common methods include:

  - Error-Prone PCR (epPCR): A modified PCR protocol that uses low-fidelity polymerases and manganese ions to introduce random point mutations across the entire gene, typically at a rate of 1-5 mutations per kilobase [5].

  - DNA Shuffling: Homologous genes from different species are fragmented with DNase I and then reassembled in a primer-less PCR reaction. This "sexual PCR" recombines beneficial mutations from multiple parents, accelerating improvement [5].

- Screening/Selection: Identify improved variants from the library. This is often the major bottleneck.

  - Screening: Individual variants are assayed for activity (e.g., using colorimetric or fluorometric substrates in microtiter plates). Throughput is typically limited to 10^3–10^4 variants [5].

  - Selection: A system where the desired function is coupled to the host organism's survival or replication, allowing for the evaluation of much larger libraries (e.g., phage display) [1] [5].

- Amplification: The genes of the top-performing variants are isolated and used as the template for the next round of evolution, allowing beneficial mutations to accumulate [5].
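The three-step cycle above can be illustrated with a toy simulation. This is a minimal sketch under loud assumptions, not a model of any real campaign: fitness is defined as similarity to a hidden target sequence (in the laboratory it would be an assay readout), mutations are applied directly at the amino acid level, and the per-residue `rate`, `library_size`, and all function names are arbitrary illustrative choices rather than calibrated epPCR parameters.

```python
import random

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"

def fitness(seq: str, target: str) -> int:
    """Toy fitness: number of positions that match a hidden target sequence."""
    return sum(a == b for a, b in zip(seq, target))

def mutate(seq: str, rate: float, rng: random.Random) -> str:
    """Diversification: resample each residue independently with probability `rate`.

    The resampled residue may equal the original, so the effective
    substitution rate is slightly below `rate`.
    """
    return "".join(
        rng.choice(AMINO_ACIDS) if rng.random() < rate else aa
        for aa in seq
    )

def evolve(parent, target, rounds=20, library_size=500, rate=0.02, seed=0):
    """Run iterative rounds of diversification, screening, and amplification."""
    rng = random.Random(seed)
    history = [fitness(parent, target)]
    for _ in range(rounds):
        # Diversification: build a library of variants from the current parent.
        library = [mutate(parent, rate, rng) for _ in range(library_size)]
        # Screening: assay every variant and pick the best performer.
        best = max(library, key=lambda s: fitness(s, target))
        # Amplification: the winner seeds the next round if it is no worse.
        if fitness(best, target) >= fitness(parent, target):
            parent = best
        history.append(fitness(parent, target))
    return parent, history

if __name__ == "__main__":
    rng = random.Random(1)
    target = "".join(rng.choice(AMINO_ACIDS) for _ in range(50))
    start = "".join(rng.choice(AMINO_ACIDS) for _ in range(50))
    final, history = evolve(start, target)
    print("fitness by round:", history)
```

Because only the best variant of each round is carried forward, the recorded fitness never decreases. The same greedy design also means the walk can stall on a local optimum, which is one reason real campaigns often carry several winners forward or recombine them.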

Rational Design: The Computational Architect

In contrast, rational design operates like an architect. It uses detailed knowledge of protein structure and function to make specific, targeted changes to the amino acid sequence [12] [1]. This approach is precise but requires high-resolution structural data and a deep understanding of sequence-structure-function relationships.

Experimental Protocol:

- Structural Analysis: Obtain a high-resolution 3D structure of the target protein via X-ray crystallography or cryo-EM, or generate a reliable computational model (e.g., with AlphaFold) [1].

- Computational Modeling: Identify key residues for mutation (e.g., in the active site or a stability hotspot) using molecular dynamics simulations or energy calculations [1] [3].

- Site-Directed Mutagenesis: Implement specific point mutations, insertions, or deletions in the gene using precise molecular biology techniques like PCR-based mutagenesis [1].

- Validation: Express and purify the mutant protein, then characterize its biophysical and functional properties to confirm the predicted improvements [1].
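Before any primers are ordered, the planned substitutions from the protocol above are usually written down and applied to the sequence in silico. The helper below is a small sketch of that bookkeeping step. The notation it parses (wild-type residue, 1-based position, new residue, e.g. `T3S`) is a widely used convention rather than something specified in this article, and the function name and example sequence are hypothetical.

```python
import re

# Matches substitutions such as "T3S": wild-type residue, position, new residue.
MUTATION_PATTERN = re.compile(r"^([A-Z])(\d+)([A-Z])$")

def apply_point_mutation(sequence: str, mutation: str) -> str:
    """Return `sequence` with a single substitution applied.

    Raises ValueError if the notation is malformed, the position is out of
    range, or the stated wild-type residue does not match the sequence.
    """
    match = MUTATION_PATTERN.match(mutation)
    if match is None:
        raise ValueError(f"Unrecognized mutation format: {mutation!r}")
    wild_type, new_residue = match.group(1), match.group(3)
    index = int(match.group(2)) - 1  # convert 1-based position to 0-based index
    if not 0 <= index < len(sequence):
        raise ValueError(f"Position out of range in {mutation!r}")
    if sequence[index] != wild_type:
        raise ValueError(
            f"{mutation!r} expects {wild_type} at that position, "
            f"found {sequence[index]}"
        )
    return sequence[:index] + new_residue + sequence[index + 1:]

if __name__ == "__main__":
    # Made-up eight-residue sequence, used only to show the call.
    print(apply_point_mutation("MKTAYIAK", "T3S"))  # prints MKSAYIAK
```

Checking the stated wild-type residue against the sequence catches off-by-one numbering errors, a common source of mistakes when structure numbering and construct numbering differ.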

Hybrid and Next-Generation Approaches

Recognizing the limitations of pure strategies, the field has increasingly moved towards hybrid and advanced computational methods.

- Semi-Rational Design: This approach combines the strengths of both directed evolution and rational design. Researchers use computational or bioinformatic modeling to identify promising target regions for mutation, then create focused, high-quality libraries that require screening of only a small number of variants (e.g., under 1000) [1] [2]. Techniques include Site-Saturation Mutagenesis, where a specific codon is randomized to encode all 19 possible alternative amino acids, thoroughly exploring a residue's functional role [5].

- AI-Driven De Novo Design: This represents a paradigm shift, using artificial intelligence to design entirely new proteins from scratch. Generative models, trained on vast biological datasets, learn the high-dimensional mappings between sequence, structure, and function [20]. These models can then create proteins with customized folds and functions that are not found in nature, fundamentally expanding the explorable protein universe [20] [3]. Fully autonomous platforms like SAMPLE (Self-driving Autonomous Machines for Protein Landscape Exploration) combine AI-based protein design with robotic systems to perform and analyze experiments in a closed loop, dramatically accelerating the discovery process [1].
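The appeal of focused libraries in semi-rational design becomes clear once library size is compared with screening effort. The sketch below counts the amino-acid-level variants produced by saturating several positions at once, then estimates how many clones must be sampled for a given variant to appear at least once with a chosen probability. It assumes every variant is equally likely, which real codon-randomized libraries are not, so the result is best read as a lower bound. The function names are illustrative.

```python
import math

def library_size(positions: int, variants_per_position: int = 20) -> int:
    """Distinct protein variants when `positions` sites are saturated together."""
    return variants_per_position ** positions

def clones_to_screen(num_variants: int, coverage: float = 0.95) -> int:
    """Clones to sample so a given variant is seen at least once.

    Assumes uniform, independent sampling. Solves
    1 - (1 - 1/V)^T >= coverage for the smallest integer T.
    """
    if num_variants <= 1:
        return 1
    return math.ceil(math.log(1 - coverage) / math.log(1 - 1 / num_variants))

if __name__ == "__main__":
    for k in (1, 2, 3, 4):
        size = library_size(k)
        print(f"{k} position(s): {size} variants, "
              f"about {clones_to_screen(size)} clones for 95% coverage")
```

For large libraries the expression reduces to roughly three times the library size at 95% coverage. Saturating three positions simultaneously already yields 8,000 distinct variants, which is why semi-rational campaigns that aim to screen under about 1,000 clones restrict randomization to one or two carefully chosen sites.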

The following diagram illustrates the logical workflow and decision process for selecting a protein engineering strategy:

Figure 1: Decision workflow for selecting a protein engineering methodology.

The Scientist's Toolkit: Essential Research Reagent Solutions

The experimental execution of protein engineering strategies relies on a suite of key reagents and tools. The following table details essential materials and their functions in a typical protein engineering workflow.

Table 2: Key Research Reagent Solutions for Protein Engineering

| Reagent / Material | Function in Protein Engineering |
|---|---|
| Error-Prone PCR Kit | A pre-mixed system containing low-fidelity polymerase (e.g., Taq), biased dNTP pools, and MnCl₂ for introducing random mutations during gene amplification [5]. |
| Phage Display Library | A collection of filamentous phage particles displaying a vast diversity of peptides or proteins on their coat, used for high-throughput selection of binders [1]. |
| Site-Directed Mutagenesis Kit | An optimized kit (often based on PCR or inverse PCR) with high-fidelity polymerase and DpnI enzyme for efficiently introducing specific point mutations into a plasmid [1]. |
| Fluorogenic/Chromogenic Substrate | A chemical compound that produces a fluorescent or colored signal upon enzymatic conversion, enabling high-throughput screening of enzyme activity in microtiter plates [5]. |
| Expression Vector & Host Cells | A plasmid (e.g., pET vector) and compatible microbial host (e.g., E. coli BL21) for the high-level expression and production of recombinant protein variants [5]. |
| Protein Purification Resin | Chromatography media (e.g., Ni-NTA resin for immobilized metal affinity chromatography of His-tagged proteins) for rapid purification of recombinant proteins from cell lysates [21]. |

The central challenge of navigating the vast protein sequence-function universe has driven the development of increasingly sophisticated engineering strategies. While directed evolution and rational design offer powerful, complementary paths forward, the future lies in integrated and autonomous approaches. The combination of semi-rational design, AI-driven de novo creation, and self-driving laboratories represents a transformative leap [1] [20] [3]. These paradigms fuse computational power with experimental validation, systematically unlocking the immense latent functional potential within the uncharted protein universe. This progress brings us closer to a future where bespoke proteins with tailored functionalities can be designed on demand to address pressing challenges in medicine, sustainability, and technology.

Methodologies in Action: Techniques and Real-World Applications for Drug Development and Biocatalysis

Directed evolution is a powerful, forward-engineering process that harnesses the principles of Darwinian evolution—iterative cycles of genetic diversification and selection—within a laboratory setting to tailor proteins for specific, human-defined applications [5]. Its profound impact was formally recognized with the 2018 Nobel Prize in Chemistry awarded to Frances H. Arnold for establishing it as a cornerstone of modern biotechnology [5]. The primary strategic advantage of directed evolution lies in its capacity to deliver robust solutions—such as enhanced stability, novel catalytic activity, or altered substrate specificity—without requiring detailed a priori knowledge of a protein's three-dimensional structure or its catalytic mechanism [5]. This capability allows it to bypass the inherent limitations of rational design, which relies on a predictive understanding of sequence-structure-function relationships that is often incomplete [5] [1].

This technical guide details the core directed evolution workflow, framing it within the broader context of protein engineering strategies. It provides researchers and drug development professionals with an in-depth analysis of the cycle's phases, supported by current methodologies, quantitative data, and emerging technologies that are reshaping this dynamic field.

The Core Iterative Cycle of Directed Evolution

At its heart, the directed evolution workflow functions as a two-part iterative engine, driving a population of protein variants toward a desired functional goal [5]. This process compresses geological timescales of natural evolution into weeks or months by intentionally accelerating the rate of mutation and applying an unambiguous, user-defined selection pressure [5]. The following diagram illustrates this continuous, iterative process.

Phase 1: Generating Genetic Diversity – The Library as the Search Space

The creation of a diverse library of gene variants is the foundational step that defines the boundaries of the explorable sequence space [5]. The quality, size, and nature of this diversity directly constrain the potential outcomes of the entire evolutionary campaign [5]. The table below summarizes the primary methods used for library generation.

| Method | Key Principle | Typical Library Size & Diversity | Key Advantages | Primary Limitations |
|---|---|---|---|---|
| Error-Prone PCR (epPCR) [5] | Reduced fidelity PCR introduces random point mutations. | 1-5 mutations/kb; explores ~5-6 of 19 possible amino acids per position [5]. | Simple, widely applicable; requires no structural data. | Mutational bias toward transitions; limited amino acid diversity accessed. |
| DNA Shuffling [5] | Fragmented genes reassembled via homologous recombination. | Varies; combines mutations from multiple parents. | Recombines beneficial mutations; mimics sexual evolution. | Requires high sequence homology (>70-75%) for efficient reassembly. |
| Site-Saturation Mutagenesis [5] [1] | Targeted codon randomization for all 20 amino acids. | Focused library of 20 variants per position. | Comprehensive exploration of a specific residue; high-quality, small library. | Requires prior knowledge to identify target sites. |
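The limited amino acid diversity listed for epPCR follows from the structure of the genetic code: a single nucleotide change can turn a codon into only nine neighbors, many of which are synonymous or encode the same replacement. The sketch below enumerates those neighbors for every sense codon using the standard genetic code, as a way to check the "~5-6 of 19" figure in the table. It treats all single-base substitutions as equally likely, which is an assumption; the transition bias noted in the table would narrow the accessible set further in practice. Function and variable names are illustrative.

```python
from itertools import product

BASES = "TCAG"
# Standard genetic code with codons ordered TTT, TTC, TTA, TTG, TCT, ..., GGG.
CODE = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {
    "".join(codon): aa for codon, aa in zip(product(BASES, repeat=3), CODE)
}

def accessible_amino_acids(codon: str) -> set:
    """Amino acids reachable from `codon` by exactly one nucleotide substitution.

    Synonymous changes and stop codons are excluded.
    """
    original = CODON_TABLE[codon]
    reachable = set()
    for pos in range(3):
        for base in BASES:
            if base == codon[pos]:
                continue
            neighbor = codon[:pos] + base + codon[pos + 1:]
            aa = CODON_TABLE[neighbor]
            if aa != "*" and aa != original:
                reachable.add(aa)
    return reachable

# Count accessible substitutions for each of the 61 sense codons.
sense_codons = [c for c, aa in CODON_TABLE.items() if aa != "*"]
counts = [len(accessible_amino_acids(c)) for c in sense_codons]
mean_accessible = sum(counts) / len(counts)

if __name__ == "__main__":
    print(f"mean accessible substitutions per codon: {mean_accessible:.1f}")
    print(f"range across codons: {min(counts)} to {max(counts)}")
```

Site-saturation mutagenesis sidesteps this constraint by randomizing the whole codon, at the cost of needing to know in advance which positions are worth targeting.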

Phase 2: Selection and Amplification – Identifying the Fittest

Once a diverse library is created, the central challenge is identifying the rare improved variants—a process widely recognized as the primary bottleneck in directed evolution [5]. The success of a campaign is dictated by the axiom, "you get what you screen for" [5]. A key distinction exists between screening and selection [5]:

- Screening involves the individual evaluation of every library member for the desired property. While lower in throughput, it guarantees that every variant is tested and provides quantitative data on its performance. Examples include microtiter plate-based assays using colorimetric or fluorometric substrates [5].

- Selection establishes a system where the desired function is directly coupled to the survival or replication of the host organism, automatically eliminating non-functional variants. Selections can handle much larger libraries (e.g., >10^9 variants) but are often difficult to design and can be prone to artifacts [5].

The genes encoding the identified "winners" are isolated and serve as the template for the next round of diversification and selection, allowing beneficial mutations to accumulate over successive generations [5].

Advanced and Emerging Methodologies

Mammalian Cell Directed Evolution: The PROTEUS Platform

Traditional directed evolution platforms are primarily prokaryotic or yeast-based, but evolving proteins directly in mammalian cells can provide a more relevant physiological context. The PROTEUS platform addresses this need by using chimeric virus-like vesicles (VLVs) to enable extended mammalian directed evolution campaigns without loss of system integrity [22]. The workflow, detailed below, is designed to maintain a tight link between the activity of the evolved transgene and viral propagation fitness.

Key Experimental Protocol for PROTEUS [22]:

- Vector Construction: Clone the target transgene (e.g., tetracycline-controlled transactivator, tTA) into the pSFV-DE replicon vector, which encodes attenuated SFV non-structural proteins.

- VLV Production: Co-transfect BHK-21 host cells with the replicon vector and a pCMV_VSVG plasmid constitutively expressing the VSVG envelope protein to produce chimeric VLVs.