Protein Folding Showdown: Evolutionary Algorithms vs. Machine Learning in Structural Biology

Abstract

This article provides a comparative analysis for researchers and drug development professionals on two dominant computational approaches for predicting protein tertiary structure: classical evolutionary algorithms and modern machine learning. We explore the foundational principles of each method, from the global optimization strategies of evolutionary algorithms like USPEX to the deep learning architectures of AI systems such as AlphaFold2, ESMFold, and RoseTTAFold. The scope includes a critical examination of their methodological applications, a troubleshooting guide for inherent limitations like force field accuracy and dynamic conformation modeling, and a validation framework using established metrics like pLDDT and GDT_TS. By synthesizing current capabilities and challenges, this review aims to guide the selection and future development of computational tools for structural biology and drug discovery.

The Theoretical Battlefield: Core Principles of Protein Structure Prediction

Anfinsen's Dogma and the Thermodynamic Hypothesis of Folding

The thermodynamic hypothesis of protein folding, better known as Anfinsen's dogma, is one of the most fundamental principles in molecular biology. Formulated by Nobel laureate Christian B. Anfinsen on the basis of his research on ribonuclease A, the postulate states that, at least for a small globular protein in its standard physiological environment, the native three-dimensional structure is determined solely by the protein's amino acid sequence [1]. Anfinsen's conclusions, drawn from experimental observations that denatured RNase A could spontaneously refold and regain its native activity, posited that the native conformation is a unique, stable, and kinetically accessible minimum of the free energy [1] [2]. This theory established the conceptual foundation for understanding how linear polypeptides self-assemble into functional biological machines and has influenced decades of subsequent research in structural biology.

The significance of Anfinsen's dogma extends far beyond its original formulation, providing the theoretical basis for computational protein structure prediction and design. If the native structure is indeed encoded in the sequence, then it should be possible, in principle, to compute this structure from first principles. This review examines Anfinsen's dogma through a modern lens, exploring how its core principles have shaped the development of both evolutionary algorithms and contemporary machine learning approaches in protein folding research. We will investigate how recent technological advances are testing the boundaries of this fundamental hypothesis while simultaneously leveraging its insights to revolutionize computational structural biology and drug discovery.

Core Principles of Anfinsen's Dogma

The Thermodynamic Hypothesis and Its Experimental Foundation

Anfinsen's dogma emerged from a series of elegant experiments on bovine pancreatic ribonuclease A (RNase A) in the 1950s and 1960s. The foundational experiments demonstrated that the enzyme, when denatured using reducing agents and high concentrations of urea, could spontaneously refold upon removal of denaturing conditions, regaining both its native structure and catalytic activity [1] [2]. This observation led to the seminal conclusion that all information necessary to specify the three-dimensional structure of a protein resides in its amino acid sequence, and that the native state corresponds to the global minimum of Gibbs free energy under physiological conditions [1] [3].

The original RNase A refolding experiments involved two key observations that supported the thermodynamic hypothesis. First, Anfinsen and colleagues demonstrated that a completely reduced and denatured RNase A could regain significant enzymatic activity upon re-oxidation, suggesting that the polypeptide chain could find its way back to the native conformation without external guidance [2]. Second, they showed that RNase A with randomly scrambled disulfide bridges could, in the presence of trace amounts of β-mercaptoethanol, reorganize its disulfide bonds to the native pattern with concomitant recovery of function, indicating that the native state is thermodynamically favored over misfolded states [1].

According to the formal statement of Anfinsen's dogma, the native structure must satisfy three essential conditions [1]:

- Uniqueness: The sequence must not have any other configuration with comparable free energy; the free energy minimum must be unchallenged.

- Stability: Small changes in environmental conditions must not produce changes in the minimum configuration; the free energy surface around the native state must be steep and high.

- Kinetic accessibility: The folding pathway from unfolded to folded state must be reasonably smooth without requiring highly complex conformational changes.
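The first two conditions can be written compactly. The notation below is introduced here purely for illustration and does not come from the cited sources: $G(x; s)$ is taken to be the free energy of conformation $x$ for sequence $s$ under fixed conditions, and $x^{*}(s)$ the native conformation.

```latex
% Thermodynamic hypothesis: the native state is the global free energy minimum
x^{*}(s) = \arg\min_{x} G(x; s)

% Uniqueness and stability: every conformation outside the native basin
% lies well above the minimum relative to thermal energy
G(x; s) - G(x^{*}(s); s) \gg k_{B} T
\quad \text{for all } x \text{ outside the native basin}
```

Kinetic accessibility is a statement about the paths across $G$ rather than about its minimum, so it has no equally simple expression in this notation.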

Quantitative Analysis of RNase A Refolding Experiments

Table 1: Experimental Conditions and Activity Recovery in RNase A Refolding Studies

| Experimental Condition | Temperature | Protein Concentration | Copper (Cu²⁺) | Time to Oxidation | Activity Recovery |
|---|---|---|---|---|---|
| rRNase I (no additives) | 37°C | 14 µM | - | 49 hours | 23% |
| rRNase I (no additives) | 25°C | 14 µM | - | 49.6 hours | 47% |
| rRNase I + trace Cu²⁺ | 25°C | 14 µM | 0.3 µM | 8.3 hours | 41% |
| rRNase I + β-ME | 25°C | 14 µM | 0.3 µM | 19.7 hours | 82% |
| rRNase I + high Cu²⁺ | 25°C | 14 µM | 10 µM | 1 hour | 9% |

Recent reassessments of Anfinsen's original experiments have revealed intriguing nuances often overlooked in textbook descriptions. Contemporary recreations of the RNase A refolding experiments demonstrate that spontaneous re-oxidation of fully reduced RNase A typically yields only 20-30% recovery of native activity, contrary to the near-complete recovery often cited [2]. Only under specific conditions, including the presence of catalytic amounts of β-mercaptoethanol (enabling disulfide reshuffling) or trace metal ions, does activity recovery approach 80-100% [2]. These findings suggest that while the native state is indeed thermodynamically favored, kinetic accessibility to this state may require specific environmental conditions or molecular assistance.

Biophysical analyses of refolded RNase A further illuminate these limitations. Circular dichroism spectroscopy shows that spontaneously re-oxidized RNase I exhibits reduced β-sheet and turn structures compared to the native enzyme (22.5% strand vs. 27.5% in native; 18.0% turn vs. 20.6% in native) [2]. Similarly, intrinsic fluorescence measurements indicate that tyrosine residues in re-oxidized RNase I reside in altered microenvironments, suggesting non-native tertiary structures despite complete disulfide formation [2]. These observations underscore that while the native state represents an energy minimum, kinetic traps can yield alternative, stable conformations with non-native disulfide pairings.

Challenges and Exceptions to the Dogma

Protein Misfolding and Amyloid Formation

The thermodynamic hypothesis faces significant challenges from the phenomenon of protein misfolding and amyloid formation, processes implicated in numerous neurodegenerative diseases. Although Anfinsen's dogma posits that the native state represents the global free energy minimum, many proteins can access alternative stable states—amyloid fibrils—that are associated with pathological conditions [4]. This apparent contradiction can be resolved through the concept of supersaturation barriers that separate the folding and misfolding universes [4].

Recent research demonstrates that many globular proteins capable of reversible unfolding under thermal denaturation can be induced to form amyloid fibrils when agitation is applied at high temperatures [4]. For example, hen egg white lysozyme (HEWL) shows reversible unfolding upon heating but forms amyloid fibrils when stirred at high temperatures under acidic conditions. Similarly, wild-type transthyretin (TTR) forms amyloid fibrils upon incubation with stirring at 50°C and pH 2.0, while maintaining a native-like conformation without agitation [4]. This suggests that proteins often exist in states that are supersaturated with respect to amyloid formation, with agitation providing the perturbation needed to overcome the kinetic barrier to aggregation.

The table below summarizes the conditions under which various proteins transition from folded to amyloid states:

Table 2: Experimental Conditions for Amyloid Formation in Various Proteins

| Protein | Conditions for Amyloid Formation | Agitation Required | Key Experimental Observations |
|---|---|---|---|
| Immunoglobulin VL domain | pH 7.0, 65°C | Yes | ThT fluorescence increase at ~65°C only with stirring |
| Hen egg white lysozyme | pH 2.0 | Yes | Amyloid formation requires stirrer agitation at high temperatures |
| Transthyretin (wild-type) | pH 2.0, 50°C, 50-150 mM NaCl | Yes | Forms seeding-competent fibrils only with agitation |
| Ribonuclease A | pH 5.0, 1.0 M NaCl | Yes | Essential stirring for amyloid formation; exhibits seeding activity |
| Aβ40 peptide | pH 7.0 | Yes | No amyloid formation without agitation during heating experiments |
| α-Synuclein | pH 7.0, 1.0 M NaCl | Yes | Amyloid formation starts at ~60°C only under stirring conditions |

Biological Complexities: Chaperones, Alternative Folds, and Disordered Proteins

Cellular protein folding presents additional challenges to the simplistic formulation of Anfinsen's dogma. Molecular chaperones assist many proteins in attaining their native conformations, seemingly contradicting the principle of spontaneous folding [1]. However, chaperones primarily function to prevent aggregation during folding rather than directing the structural outcome, and thus do not fundamentally violate the thermodynamic hypothesis [1].

More significantly, certain proteins exhibit fold-switching behavior, adopting different stable conformations under varying cellular conditions. The KaiB protein in cyanobacteria, for instance, switches its fold throughout the day as part of a biological clock mechanism [1]. Recent estimates suggest that 0.5-4% of proteins in the Protein Data Bank may undergo such fold-switching behavior, driven by ligand interactions, post-translational modifications, or environmental changes [1]. These alternative structures may represent kinetically trapped local minima rather than the global free energy minimum.

Intrinsically disordered proteins (IDPs) represent another significant exception to Anfinsen's dogma, as they lack a stable tertiary structure altogether yet remain functional [4]. Proteins such as α-synuclein, associated with Parkinson's disease, exist as dynamic ensembles of conformations rather than unique folded structures, challenging the fundamental premise of a single native state [4].

Computational Protein Structure Prediction: From Physics-Based Models to Machine Learning

Early Approaches: Molecular Dynamics and Fragment Assembly

The computational pursuit of protein structure prediction has evolved through distinct methodological eras, all grounded in Anfinsen's fundamental insight. Early physics-based approaches attempted to simulate the folding process using molecular dynamics (MD) and related techniques, directly implementing the thermodynamic hypothesis by searching for low-energy states [5] [6]. Methods like the United Residue (UNRES) technique simplified the complex energy landscape by representing amino acid residues as interacting points, enabling the prediction of larger protein structures [5]. However, these approaches suffered from the inaccuracy of force fields and the immense computational resources required to explore conformational space [6].

The Levinthal paradox highlighted the fundamental challenge of these approaches: the conformational space available to a polypeptide chain is astronomically large, yet proteins fold on biologically feasible timescales [5]. This suggested that proteins do not randomly sample all possible conformations but follow funneled energy landscapes that guide them to the native state [6]. To address this, fragment assembly methods like ROSETTA emerged, combining knowledge-based potentials with local structure fragments from known proteins to efficiently navigate the energy landscape [5]. These methods demonstrated that atomic-level accuracy could be achieved for small proteins (<100 residues), representing significant progress toward realizing Anfinsen's hypothesis in silico [5].

The Machine Learning Revolution: AlphaFold and Beyond

The past decade has witnessed a paradigm shift in protein structure prediction with the emergence of deep learning approaches. AlphaFold, developed by DeepMind, marked a watershed moment during the CASP13 competition by combining co-evolutionary analysis with deep neural networks to predict inter-residue distance distributions from multiple sequence alignments [3] [6]. Its successor, AlphaFold2, further revolutionized the field by achieving accuracy comparable to experimental methods in many cases [7] [3] [6].
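Co-evolutionary analysis rests on the observation that residues in spatial contact tend to mutate together across an alignment. The sketch below illustrates the idea with plain mutual information between two alignment columns. It is a deliberately simple stand-in, not the procedure used by AlphaFold or any other tool named here, and the four-sequence alignment is invented for illustration.

```python
import math
from collections import Counter


def column_mutual_information(msa: list[str], i: int, j: int) -> float:
    """Mutual information (in bits) between alignment columns i and j."""
    n = len(msa)
    col_i = Counter(seq[i] for seq in msa)
    col_j = Counter(seq[j] for seq in msa)
    pairs = Counter((seq[i], seq[j]) for seq in msa)
    mi = 0.0
    for (a, b), count in pairs.items():
        p_ab = count / n
        # Compare the observed pair frequency with the product of the
        # single-column frequencies expected under independence.
        mi += p_ab * math.log2(p_ab / ((col_i[a] / n) * (col_j[b] / n)))
    return mi


# Invented toy alignment: columns 1 and 3 change together (K with E, R with D),
# while columns 0 and 2 are fully conserved.
toy_msa = ["AKLE", "AKLE", "ARLD", "ARLD"]

covarying = column_mutual_information(toy_msa, 1, 3)
conserved = column_mutual_information(toy_msa, 0, 1)
print(f"MI(col 1, col 3) = {covarying:.2f} bits")
print(f"MI(col 0, col 1) = {conserved:.2f} bits")
```

A fully conserved column carries no covariation signal, which is one reason shallow or low-diversity alignments limit methods that depend on this information.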

These machine learning methods differ fundamentally from earlier approaches. Rather than simulating physical folding processes, they learn the relationship between sequence and structure from the vast corpus of known protein structures and sequences. AlphaFold2 employs a novel architecture that integrates both physical and biological knowledge within a dual-track framework, processing multiple sequence alignments and pairwise residue features to directly predict atomic coordinates [3]. Related methods like RoseTTAFold and ESMFold similarly leverage deep learning to achieve unprecedented accuracy [7] [3].

Despite their remarkable success, these approaches face limitations. They struggle with proteins lacking evolutionary information and cannot reliably predict multiple conformations or folding pathways [7] [6]. Additionally, they do not explicitly model the physical forces driving folding, instead learning statistical relationships from existing data [7]. This represents a departure from the first-principles implementation of Anfinsen's hypothesis, though the end result—accurate structure prediction—validates its fundamental premise.

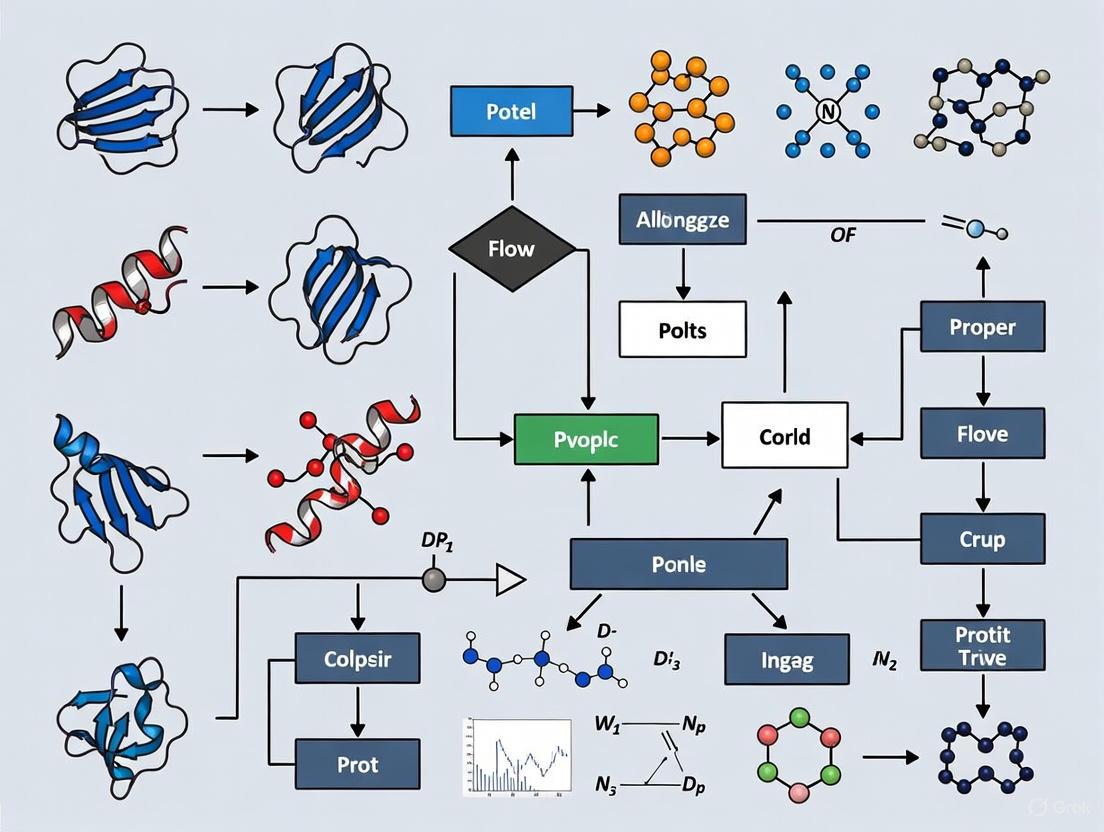

Diagram 1: The computational evolution of Anfinsen's dogma from physics-based simulations to modern machine learning approaches, highlighting methodological transitions and persistent limitations.

Experimental and Computational Methodologies

Key Experimental Protocols in Protein Folding Research

Oxidative Refolding of RNase A

The foundational protocol for demonstrating spontaneous refolding involves the oxidative refolding of reduced RNase A [2]:

Reduction and Denaturation: Native RNase A is fully reduced using thioglycolic acid or β-mercaptoethanol in 8M urea, breaking all four disulfide bonds and unfolding the polypeptide chain.

Denaturant Removal: The reducing agent and urea are removed via gel filtration (Sephadex G-25) or dialysis. Notably, gel filtration produces faster separation and different refolding outcomes compared to slow dialysis.

Re-oxidation: The reduced protein is exposed to air oxidation at pH 8.0-8.5 and temperatures between 25-37°C. Trace metal ions (particularly Cu²⁺ at 0.3 µM) catalyze disulfide formation, while sub-stoichiometric β-mercaptoethanol (11 µM) enables disulfide reshuffling.

Activity Assessment: Regained enzymatic activity is measured using specific RNase assays, with optimal conditions yielding 80-100% activity recovery.

Structural Validation: Refolded structures are analyzed using circular dichroism spectroscopy, intrinsic fluorescence measurements, and mass spectrometry to confirm native disulfide pairing.

Agitation-Induced Amyloid Formation

The protocol for probing the supersaturation barrier between folding and misfolding involves [4]:

Sample Preparation: Proteins are dissolved at appropriate concentrations (typically 10-50 µM) in buffers ranging from pH 2.0 to 7.0, with varying ionic strength.

Thermal Denaturation with Agitation: Samples are heated (typically 50-90°C) with continuous magnetic stirring at defined speeds. Control experiments are performed without agitation.

Amyloid Detection: Fibril formation is monitored in real-time using thioflavin T (ThT) fluorescence (excitation 440 nm, emission 480 nm), with increases indicating amyloid formation.

Aggregation Monitoring: Light scattering at 350 nm measures total aggregate formation independently of amyloid structure.

Structural Characterization: Circular dichroism spectroscopy assesses secondary structure changes, while transmission electron microscopy visualizes fibril morphology.

Seeding Experiments: The self-templating activity of aggregates is tested by adding pre-formed fibrils to native protein solutions.

Computational Pipelines for Structure Prediction

Modern computational approaches employ sophisticated pipelines for protein structure prediction [6]:

Multiple Sequence Alignment Generation:

- Tool: DeepMSA

- Method: Sensitive homology search using HHblits and JackHMMER against UniRef, UniClust, and metagenomic databases

- Output: Profile Hidden Markov Models and covariance information

Distance Distribution Prediction:

- Tool: trRosetta or AlphaFold2

- Input: MSA profiles

- Output: Distance distributions (distograms) and orientation distributions for residue pairs

Structure Generation:

- Method: Energy minimization with distance restraints

- Force Field: Knowledge-based or physics-based potentials (e.g., AWSEM)

- Output: Ensemble of candidate structures

Model Selection and Validation:

- Method: Clustering by RMSD and energy scoring

- Output: Lowest energy structures from each cluster
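The model selection step above can be sketched as a greedy clustering over a precomputed pairwise RMSD matrix. This is one simple way to obtain the lowest-energy structure from each cluster, not necessarily the procedure used by the cited pipelines. The function name, the 2.0 Å cutoff, and the example values are assumptions made for illustration.

```python
def select_cluster_representatives(rmsd, energies, cutoff=2.0):
    """Greedy leader clustering of candidate models.

    Models are visited from lowest to highest energy. A model joins the first
    cluster whose representative lies within `cutoff` (in Å); otherwise it
    founds a new cluster. Each representative is therefore the lowest-energy
    member of its cluster.
    """
    order = sorted(range(len(energies)), key=lambda k: energies[k])
    clusters = {}  # representative index -> list of member indices
    for k in order:
        for rep in clusters:
            if rmsd[k][rep] <= cutoff:
                clusters[rep].append(k)
                break
        else:
            clusters[k] = [k]
    return clusters


# Hypothetical data: four candidate models forming two structural families.
pairwise_rmsd = [
    [0.0, 1.0, 5.0, 5.5],
    [1.0, 0.0, 5.2, 5.1],
    [5.0, 5.2, 0.0, 0.8],
    [5.5, 5.1, 0.8, 0.0],
]
model_energies = [-10.0, -12.0, -8.0, -9.0]

clusters = select_cluster_representatives(pairwise_rmsd, model_energies)
for rep, members in clusters.items():
    print(f"representative {rep} (E = {model_energies[rep]}), members {members}")
```

Visiting models in energy order keeps the sketch short; production pipelines often cluster first and score afterwards, which can give different groupings.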

Table 3: Research Reagent Solutions for Protein Folding Studies

| Reagent/Tool | Type | Primary Function | Application Context |
|---|---|---|---|
| β-mercaptoethanol | Chemical reagent | Disulfide reduction and reshuffling | RNase A refolding experiments |
| Thioflavin T (ThT) | Fluorescent dye | Amyloid fibril detection | Aggregation studies |
| Urea | Denaturant | Protein unfolding | Denaturation/renaturation studies |
| DeepMSA | Computational tool | Multiple sequence alignment generation | Template-free structure prediction |
| trRosetta | Software suite | Residue distance prediction | Deep learning-based structure prediction |
| AWSEM | Force field | Energy calculation for protein structures | Physics-based structure prediction |
| AlphaFold2 | AI system | End-to-end structure prediction | High-accuracy model generation |
| ProteinMPNN | Neural network | Sequence design for structures | Inverse folding and protein design |

Implications for Drug Discovery and Therapeutic Development

The principles derived from Anfinsen's dogma have profound implications for understanding and treating protein misfolding diseases. Neurodegenerative disorders including Alzheimer's, Parkinson's, and prion diseases involve the accumulation of misfolded proteins as amyloid fibrils [3] [4]. These pathological aggregates represent stable alternative states to the native protein conformation, effectively escaping the quality control mechanisms that normally ensure proper folding [3].

Computational structure prediction has become increasingly valuable in drug discovery, particularly for targets difficult to characterize experimentally. AlphaFold2-predicted structures have been used to study disease-related proteins such as α-synuclein in Parkinson's disease and tau in Alzheimer's disease [3]. For example, computational analyses have identified β-strand segments (β1 and β2) in α-synuclein that mediate interactions within amyloid fibrils, providing potential targets for therapeutic intervention [3]. Similarly, MOVA, a computational method combining AlphaFold2 with variant analysis, has been applied to identify pathogenic mutations in 12 amyotrophic lateral sclerosis (ALS)-causative genes [3].

The inverse folding problem—designing sequences that fold into target structures—has emerged as a powerful application of these principles. Methods like ProteinMPNN and ESM-IF enable the design of novel protein sequences that adopt predetermined folds, with applications in therapeutic protein engineering, enzyme design, and vaccine development [7]. These approaches leverage the fundamental insight of Anfinsen's dogma—that sequence determines structure—while overcoming the combinatorial complexity of the sequence space through machine learning.

Diagram 2: Therapeutic applications of protein folding principles, connecting misfolding mechanisms to disease pathology and computational intervention strategies.

Conclusion

Sixty-five years after its initial formulation, Anfinsen's dogma remains a cornerstone of molecular biology, even as its limitations and nuances have become increasingly apparent. The thermodynamic hypothesis has successfully guided decades of research while adapting to accommodate exceptions such as chaperone-assisted folding, intrinsically disordered proteins, and amyloid formation. The fundamental principle that sequence determines structure has been powerfully validated by the success of deep learning methods like AlphaFold2, which effectively leverage this relationship to predict protein structures with remarkable accuracy.

The evolution from physics-based simulations to modern machine learning represents not an abandonment of Anfinsen's principles but rather a transformation in how they are computationally implemented. While early methods directly simulated the folding process to find energy minima, contemporary approaches learn the sequence-structure relationship from evolutionary data, implicitly capturing the physical constraints that govern folding. This shift has dramatically improved predictive accuracy while raising new questions about the role of physical principles in computational structural biology.

Future research directions will likely focus on integrating these approaches—combining the physical interpretability of molecular dynamics with the predictive power of deep learning. Key challenges include predicting multiple conformational states, modeling folding pathways, understanding the role of cellular environment in folding, and designing proteins with novel functions. As these methods advance, they will continue to transform drug discovery, protein engineering, and our fundamental understanding of biological systems, all built upon the foundational insight that the information specifying a protein's native structure is encoded in its amino acid sequence.

The Levinthal Paradox and the Computational Challenge of Conformational Space

The Levinthal Paradox presents a fundamental conundrum in structural biology: how do proteins fold into their native three-dimensional structures on biologically feasible timescales when the theoretical conformational space is astronomically large? This whitepaper examines this paradox through the dual lenses of evolutionary algorithms, grounded in biophysical principles, and modern machine learning approaches. We provide a comprehensive technical analysis of the computational challenges, compare quantitative performance metrics across methodologies, and detail experimental protocols for validating predicted structures. The discussion is framed within the context of drug discovery and protein engineering, where accurate structure prediction is paramount, and concludes with an assessment of current limitations and future research directions integrating these complementary computational philosophies.

In 1969, molecular biologist Cyrus Levinthal articulated a fundamental paradox that has since shaped computational biology: while a typical protein possesses an astronomical number of possible conformations (~10³⁰⁰ for a 150-residue protein), it reliably folds into its functional native state within microseconds to seconds [8]. Levinthal's calculation demonstrated that a random, brute-force search through this conformational space would require time exceeding the age of the known universe, implying that proteins must follow specific, guided kinetic pathways rather than sampling conformations stochastically [8].
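The scale of the argument is easy to check with a few lines of arithmetic. The sketch below works in base-10 logarithms to avoid overflow. The sampling rate of 10¹³ conformations per second is an assumption chosen for illustration (roughly the timescale of bond vibrations) and is not taken from the cited source, and the age of the universe is rounded to 1.38 × 10¹⁰ years.

```python
import math

SECONDS_PER_YEAR = 365.25 * 24 * 3600


def exhaustive_search_log10_years(log10_conformations: float,
                                  log10_rate_per_second: float = 13.0) -> float:
    """Return log10 of the years needed to visit every conformation once."""
    log10_seconds = log10_conformations - log10_rate_per_second
    return log10_seconds - math.log10(SECONDS_PER_YEAR)


# ~10^300 conformations for a 150-residue protein, as quoted in the text.
search_log10_years = exhaustive_search_log10_years(300.0)
universe_log10_years = math.log10(1.38e10)

print(f"exhaustive search: about 10^{search_log10_years:.0f} years")
print(f"age of universe:   about 10^{universe_log10_years:.0f} years")
```

Even if the assumed sampling rate were wrong by many orders of magnitude, the gap of roughly 270 orders of magnitude would remain, which is why the conclusion does not depend on the exact numbers.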

This paradox establishes the core computational challenge in protein structure prediction. The conformational space that must be navigated is vast both in scale and complexity, requiring sophisticated algorithms that can efficiently identify the native structure—or ensemble of structures—that represents the functional state of the protein. The resolution of this paradox lies in the understanding that protein folding is not a random search but a directed process "speeded and guided by the rapid formation of local interactions which then determine the further folding of the polypeptide" [8]. This insight has inspired two major computational philosophies: evolutionary algorithms based on physical principles and pattern-recognition approaches based on machine learning.

Quantitative Dimensions of the Challenge

The computational challenge posed by the Levinthal Paradox can be quantified across multiple dimensions. The table below summarizes key quantitative aspects of the conformational search space and computational requirements for different prediction approaches.

Table 1: Quantitative Dimensions of Protein Conformational Space and Computational Challenges

| Parameter | Value/Description | Implication |
|---|---|---|
| Theoretical Conformations | ~10³⁰⁰ for a 150-residue protein [8] | Brute-force computation impossible |
| Observed Folding Time | Microseconds to seconds | Guided pathways necessary |
| Energy Barriers (ΔG‡) | ~5 kcal/mol [9] | Small enough to allow conformational flexibility |
| Experimentally Solved Structures (PDB) | ~226,414 (as of 2024) [10] | Limited training data for machine learning |
| Known Protein Sequences (UniProt) | >200 million [10] | Vast sequence space with unsolved structures |
| AlphaFold2 RMSD | 0.8 Å (backbone) [10] | Near-experimental accuracy for single structures |
| AlphaFold2 CASP14 Performance | Total z-score: 244.0 (vs. 90.8 for next best) [10] | Significant performance leap |

The sheer size of the conformational search space necessitates algorithms that incorporate strong inductive biases or heuristics to efficiently locate the native state. As Levinthal inferred, any successful algorithm—whether biological or computational—must employ strategies that dramatically prune the search space by prioritizing local interactions that serve as folding nucleation points [8].

Computational Philosophies: Evolutionary Algorithms vs. Machine Learning

Two dominant computational paradigms have emerged to address the Levinthal challenge: evolutionary algorithms rooted in biophysics and machine learning approaches leveraging pattern recognition.

Evolutionary Algorithms and Physical Principles

The first paradigm is grounded in physicochemical principles. It covers evolutionary algorithms, which search an energy landscape by iteratively varying and selecting candidate structures, and is discussed here together with closely related physics-based techniques such as molecular dynamics (MD) simulations and free energy perturbation. These methods model atomic interactions and energetics, and the simulation-based ones in particular emulate the physical journey a protein undertakes to reach its native state.

- Physical Basis: These algorithms operate on first principles, modeling forces including hydrogen bonding, hydrophobic interactions, electrostatic attractions/repulsions, and dihedral angle preferences [9].

- Search Mechanism: They typically employ sophisticated sampling techniques to navigate the energy landscape, seeking the global free energy minimum that corresponds to the native structure under the thermodynamic hypothesis of protein folding [11].

- Strengths: Capacity to simulate folding pathways, model conformational changes, and provide dynamic information beyond static structures.

- Limitations: Computationally intensive, often restricted to short simulated timescales (microseconds), and challenged by the high dimensionality of the energy landscape [9].
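The search mechanism described above can be illustrated with a generic evolutionary loop: a population of candidate conformations is ranked by energy, the better half is kept, and new candidates are produced by crossover and mutation. The sketch below is a toy under stated assumptions and does not reproduce USPEX, Rosetta, or any other tool named in this article. In particular, `toy_energy` is a hypothetical stand-in for a real force field, and each conformation is reduced to a short list of dihedral-like angles.

```python
import math
import random


def toy_energy(angles, targets):
    """Hypothetical energy: zero when every angle matches its target value."""
    return sum(1.0 - math.cos(a - t) for a, t in zip(angles, targets))


def evolve(targets, population_size=30, generations=200, sigma=0.3, seed=0):
    """Minimise toy_energy with selection, uniform crossover and mutation."""
    rng = random.Random(seed)
    n = len(targets)
    population = [[rng.uniform(-math.pi, math.pi) for _ in range(n)]
                  for _ in range(population_size)]
    history = []  # best energy seen at the start of each generation
    for _ in range(generations):
        # Selection: keep the lower-energy half unchanged (elitism).
        population.sort(key=lambda ind: toy_energy(ind, targets))
        parents = population[: population_size // 2]
        history.append(toy_energy(parents[0], targets))
        # Variation: uniform crossover of two parents, then Gaussian mutation.
        children = []
        while len(parents) + len(children) < population_size:
            mother, father = rng.sample(parents, 2)
            child = [rng.choice(pair) for pair in zip(mother, father)]
            children.append([a + rng.gauss(0.0, sigma) for a in child])
        population = parents + children
    best = min(population, key=lambda ind: toy_energy(ind, targets))
    return best, history


# Hypothetical "native" angles that define the energy minimum.
native_angles = [-1.0, 2.0, 0.5, -2.5, 1.2, 0.0]
best, history = evolve(native_angles)
print(f"best energy in first generation: {history[0]:.3f}")
print(f"best energy after evolution:     {toy_energy(best, native_angles):.3f}")
```

Because the better half of each generation survives unchanged, the best energy can never get worse from one generation to the next. With a realistic force field the landscape is rugged, so the same loop can stall in local minima, which is the limitation noted in the list above.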

Machine Learning and Pattern Recognition

Machine learning approaches, particularly deep neural networks like AlphaFold2, address the paradox through a different philosophy: learning the mapping between sequence and structure from known protein structures in the Protein Data Bank (PDB).

- Data Basis: These models leverage evolutionary information from multiple sequence alignments (MSAs) and known structural templates to infer relationships [12] [10].

- Search Mechanism: Rather than simulating physical folding, they employ pattern recognition to predict spatial relationships between residues (e.g., distances, angles), then reconstruct the 3D structure [10].

- Strengths: Extraordinary accuracy and speed for single-state predictions, capable of predicting structures with near-experimental accuracy in minutes [12] [10].

- Limitations: Heavy dependence on training data from PDB, challenges with orphan proteins lacking homologous sequences, and difficulties capturing conformational heterogeneity [13] [14] [10].

Table 2: Comparison of Computational Approaches to Protein Structure Prediction

| Characteristic | Evolutionary Algorithms/Physical Models | Machine Learning Models |
|---|---|---|
| Theoretical Basis | Thermodynamics, molecular mechanics | Pattern recognition, evolutionary conservation |
| Primary Input | Amino acid sequence, force field parameters | Amino acid sequence, multiple sequence alignments |
| Conformational Search | Energy landscape sampling | Direct coordinate prediction |
| Output | Folding pathway, energy landscape, ensemble | Static structure(s) with confidence metrics |
| Computational Cost | Very high (long simulation times) | Relatively low (rapid prediction) |
| Handling Dynamics | Strong (explicitly models motion) | Weak (typically single conformation) |
| Representative Tools | MODELLER, GROMACS, Rosetta (physics-based) | AlphaFold2, RoseTTAFold, ESMFold |

Experimental Protocols and Validation Methodologies

Validating computational predictions against experimental data is crucial. Several biophysical techniques provide experimental constraints to guide and assess structure prediction algorithms.
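One widely used score for comparing a predicted model with an experimental structure is GDT_TS, the average percentage of Cα atoms lying within 1, 2, 4, and 8 Å of their reference positions. The sketch below is a simplified illustration: it assumes the two structures have already been superimposed and that per-residue Cα deviations are available, whereas the full metric searches over many superpositions and keeps the best count at each cutoff. The function name and the example deviations are hypothetical.

```python
def gdt_ts_from_distances(ca_distances, cutoffs=(1.0, 2.0, 4.0, 8.0)):
    """Simplified GDT_TS (0 to 100) from per-residue C-alpha deviations in Å.

    Assumes a single, fixed superposition; the full metric maximises the
    residue count at each cutoff over many superpositions.
    """
    n = len(ca_distances)
    if n == 0:
        raise ValueError("at least one residue is required")
    fractions = [sum(d <= cutoff for d in ca_distances) / n for cutoff in cutoffs]
    return 100.0 * sum(fractions) / len(cutoffs)


# Hypothetical deviations for a four-residue fragment.
deviations = [0.5, 1.5, 3.0, 9.0]
score = gdt_ts_from_distances(deviations)
print(f"simplified GDT_TS = {score:.2f}")
```

Because only one superposition is considered, this value is a lower bound on the score the full procedure would report for the same pair of structures.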

DEER Spectroscopy with DEERFold Integration

Double Electron-Electron Resonance (DEER) spectroscopy measures distance distributions between spin-labeled sites on a protein, providing information on conformational heterogeneity [15]. The recently developed DEERFold protocol integrates these measurements directly into the AlphaFold2 architecture.

Table 3: Research Reagent Solutions for Structural Validation

| Reagent/Method | Function in Structural Biology |
|---|---|
| DEER Spectroscopy | Measures distance distributions between spin labels to probe conformational ensembles [15] |
| Cross-linking Mass Spectrometry | Identifies spatially proximate amino acids, providing distance constraints [15] |
| Hydrogen-Deuterium Exchange MS | Probes protein flexibility and solvent accessibility [13] |
| Single-molecule FRET | Measures distances between fluorescent labels in single molecules [13] |
| Cryo-Electron Microscopy | Determines high-resolution structures of macromolecular complexes [11] |

DEERFold Experimental Workflow:

- Sample Preparation: Introduce spin labels (e.g., MTSSL) at specific cysteine residues via site-directed mutagenesis and labeling.

- DEER Data Collection: Perform DEER measurements under relevant biochemical conditions to obtain distance distributions between spin label pairs.

- Data Conversion: Transform experimental distance distributions into input representations compatible with the neural network architecture (e.g., distograms).

- Model Fine-tuning: Fine-tune AlphaFold2, using the OpenFold implementation, on structurally diverse proteins so that the network explicitly incorporates DEER distance constraints.

- Structure Prediction: Run DEERFold with experimental constraints to generate conformational ensembles consistent with the measured distances.

- Validation: Compare predicted models with experimental structures (when available) and assess agreement with additional DEER measurements not used in training.

This methodology enables the prediction of alternative conformations for the same protein sequence, addressing a key limitation of standard AlphaFold2 [15].
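The data-conversion step in the workflow above can be illustrated with a minimal sketch. It bins a set of spin-label distances into a normalized histogram over fixed distance bins, which is the general shape of a distogram-style input. The function name, the 2 to 22 Å range, and the 64-bin count are illustrative assumptions, not the DEERFold implementation.

```python
import numpy as np

def distances_to_distogram(distances, d_min=2.0, d_max=22.0, n_bins=64):
    """Bin distances (in angstroms) into a normalized histogram.

    d_min, d_max, and n_bins are assumed values for illustration only.
    Distances outside the range are clipped into the first or last bin.
    """
    distances = np.asarray(distances, dtype=float)
    if distances.size == 0:
        raise ValueError("at least one distance is required")
    edges = np.linspace(d_min, d_max, n_bins + 1)
    clipped = np.clip(distances, d_min, d_max)
    counts, _ = np.histogram(clipped, bins=edges)
    return counts / counts.sum(), edges

# Usage: a synthetic bimodal distribution, as two conformations might produce
rng = np.random.default_rng(0)
samples = np.concatenate([rng.normal(8.0, 0.5, 500), rng.normal(15.0, 0.5, 500)])
probs, edges = distances_to_distogram(samples)
```

A bimodal histogram of this kind is what lets a distance restraint encode more than one conformation, rather than a single averaged distance.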

The FiveFold Approach for Conformational Ensembles

The FiveFold approach addresses conformational heterogeneity through a novel geometric strategy [9]:

- Protein Folding Shape Code (PFSC) Definition: Develop a library of 27 alphabetic codes representing all possible local folding patterns for pentapeptide segments.

- Local Folding Database Construction: Create the 5AAPFSC database containing all possible folding patterns for each possible five-amino-acid combination.

- Protein Folding Variation Matrix (PFVM) Generation: For a target sequence, assemble a matrix detailing all possible local folding variants along the entire sequence.

- Conformational Sampling: Generate massive numbers of possible conformations by optimizing combinations of PFSC letters from the PFVM.

- Ensemble Construction: Build 3D structures for predominant conformational states by screening against a PDB-PFSC database using high-throughput homology modeling.

This method explicitly addresses the Levinthal Paradox by demonstrating how an astronomical number of conformations can be systematically sampled and reduced to a manageable ensemble of biologically relevant structures [9].

Visualization of Computational Strategies

The following diagrams illustrate the core concepts and workflows discussed in this article.

The Levinthal Paradox and Solution Pathways

Diagram 1: Levinthal Paradox and Solution Pathways. The paradox contrasts the impossibility of random search with biologically feasible guided folding.

DEERFold Experimental and Computational Workflow

Diagram 2: DEERFold Integrated Workflow. Combines experimental distance constraints with neural network prediction to generate conformational ensembles.

Limitations and Fundamental Challenges

Despite remarkable progress, current computational approaches face persistent challenges rooted in the fundamental nature of proteins:

Static vs. Dynamic Structures: AI systems like AlphaFold predict single static models, while proteins exist as dynamic ensembles of interconverting conformations [13] [14]. This limitation is particularly problematic for intrinsically disordered proteins and regions that lack fixed structures [9] [10].

Environmental Dependence: Protein structures are sensitive to their thermodynamic environment—including pH, solvent, temperature, and binding partners—but current AI models are typically trained on structures determined under non-physiological conditions (e.g., crystal structures) [13].

Measurement Perturbation (Quantum-Like Analogy): Some researchers propose that the protein folding problem embodies a quantum-like paradox, in which determining the structure inevitably disrupts the thermodynamic environment that controls that structure, analogous to the Heisenberg Uncertainty Principle [13].

Orphan Protein Challenge: Proteins with few evolutionary relatives (orphan proteins) remain challenging for MSA-dependent methods like AlphaFold, which rely on deep multiple sequence alignments for accurate prediction [10].

The Levinthal Paradox continues to shape computational approaches to protein structure prediction, presenting both a theoretical challenge and a practical framework for algorithm development. Physics-based approaches, including evolutionary algorithms, and machine learning approaches offer complementary strengths: physics-based models better capture dynamics and folding pathways, whereas machine learning models predict static structures with higher accuracy and at lower computational cost.

Future progress will likely involve hybrid approaches that integrate physical principles with data-driven learning. Methods like DEERFold that incorporate experimental constraints represent a promising direction for capturing conformational heterogeneity. Similarly, approaches like FiveFold that explicitly model the complete conformational space address the fundamental challenge posed by Levinthal's calculation.

For drug discovery professionals, understanding these computational philosophies and their limitations is crucial. While current AI tools have transformed structural biology, recognizing their inability to fully represent protein dynamics and environmental sensitivity is essential for proper application in therapeutic development. The next frontier in computational structural biology will involve moving beyond single-structure prediction to modeling complete conformational landscapes under physiological conditions—ultimately providing a more comprehensive solution to the challenge first articulated by Levinthal over half a century ago.

Evolutionary computation (EC) represents a class of population-based global optimization algorithms inspired by biological evolution, operating on principles of natural selection and genetics to solve complex optimization problems [16]. These metaheuristic algorithms possess stochastic optimization characteristics that enable them to seek approximate globally optimal solutions without requiring the objective function to be continuous, differentiable, or unimodal [16]. In the context of protein folding research—a domain challenged by the astronomical complexity of conformational space—evolutionary algorithms (EAs) offer distinct advantages over gradient-based methods by maintaining population diversity and exploring multiple potential solutions simultaneously.

The fundamental analogy between biological evolution and computational optimization is straightforward: an initial set of candidate solutions constitutes a population where each solution represents heritable traits [16]. Through iterative processes, suboptimal solutions are eliminated while random changes are introduced to create new generations, mirroring evolutionary pressure in nature [16]. The objective function in EAs serves as the computational equivalent of biological fitness, driving selection toward increasingly optimal solutions. This framework proves particularly valuable in protein structure prediction, where the search space encompasses approximately 10³⁰⁰ possible configurations for a typical-length protein [12], presenting a formidable challenge for conventional optimization approaches.

Core Principles and Mechanism of Evolutionary Algorithms

Fundamental Components and Processes

Evolutionary algorithms operate through a structured process that mimics Darwinian evolution, with each component serving a specific biological analogue:

Initialization: The process begins with randomly generating an initial population of candidate solutions, ensuring diversity within defined problem constraints [16].

Fitness Evaluation: Each solution undergoes assessment through an objective function that quantifies its quality relative to the optimization target [16].

Selection: Individuals are selected based on fitness values, with higher-fit solutions preferentially retained—implementing the "survival of the fittest" principle [16].

Variation Operators: The selected individuals undergo transformation through crossover (recombination) and mutation operators to create new offspring solutions [16].

Generational Replacement: Newly created offspring replace part or all of the previous population, forming a new generation for continued optimization [16].

This iterative process continues until termination conditions are satisfied, typically reaching either a maximum number of generations or achieving a predetermined fitness threshold [16].
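The five steps above can be sketched as a short loop. This is a minimal illustration, not a production folding code: `toy_energy` is a hypothetical stand-in for a force field, and the population size, mutation width, and truncation selection are arbitrary assumptions.

```python
import numpy as np

def toy_energy(angles):
    """Hypothetical stand-in for a force field: minimal when every angle is -60 degrees."""
    target = np.deg2rad(-60.0)
    return float(np.sum(1.0 - np.cos(angles - target)))

def evolve(n_angles=10, pop_size=40, generations=200, mutation_sigma=0.2, seed=0):
    rng = np.random.default_rng(seed)
    # Initialization: random torsion angles in [-pi, pi)
    population = rng.uniform(-np.pi, np.pi, size=(pop_size, n_angles))
    for _ in range(generations):
        # Fitness evaluation: lower energy means higher fitness
        energies = np.array([toy_energy(ind) for ind in population])
        # Selection: keep the better half as parents (truncation selection)
        parents = population[np.argsort(energies)[: pop_size // 2]]
        # Variation: one-point crossover followed by Gaussian mutation
        offspring = []
        while len(offspring) < pop_size - len(parents):
            a, b = parents[rng.choice(len(parents), size=2, replace=False)]
            cut = rng.integers(1, n_angles)
            child = np.concatenate([a[:cut], b[cut:]])
            child += rng.normal(0.0, mutation_sigma, size=n_angles)
            child = (child + np.pi) % (2 * np.pi) - np.pi  # wrap into [-pi, pi)
            offspring.append(child)
        # Generational replacement: parents survive, offspring fill the rest
        population = np.vstack([parents, np.array(offspring)])
    energies = np.array([toy_energy(ind) for ind in population])
    best = int(np.argmin(energies))
    return population[best], float(energies[best])

best_angles, best_energy = evolve()
```

Termination here is a fixed generation count; a fitness threshold could be checked inside the loop instead.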

Key Variation Operators

Variation operators serve as the primary mechanism for introducing diversity and exploring new regions of the search space in evolutionary algorithms:

Crossover (Recombination): This operator combines genetic information from two parent solutions to produce offspring, typically by exchanging subsequences of their encoded representations [16]. Common implementations include one-point, two-point, and uniform crossover, each affecting the mixing of parental traits differently.

Mutation: The mutation operator introduces random changes to individual solutions, typically with low probability, helping maintain population diversity and prevent premature convergence [16]. In protein folding applications, mutation might alter torsion angles or side-chain conformations to explore alternative structural arrangements.

Hybrid Operators: Advanced EA implementations often incorporate domain-specific variation operators. In protein structure prediction, these might include fragment replacement, local conformational sampling, or knowledge-based structural perturbations that respect biochemical constraints.
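As a minimal sketch of the generic operators named above, the functions below act on a vector of torsion angles. The function names, the 0.5 mixing probability, and the mutation rate and width are illustrative assumptions. The domain-specific hybrid operators are not shown.

```python
import numpy as np

def one_point_crossover(a, b, rng):
    """Take the head of parent a and the tail of parent b."""
    cut = rng.integers(1, len(a))
    return np.concatenate([a[:cut], b[cut:]])

def two_point_crossover(a, b, rng):
    """Copy parent a and replace one interior segment with parent b (needs len >= 3)."""
    i, j = sorted(rng.choice(np.arange(1, len(a)), size=2, replace=False))
    child = a.copy()
    child[i:j] = b[i:j]
    return child

def uniform_crossover(a, b, rng):
    """Pick each position independently from either parent."""
    mask = rng.random(len(a)) < 0.5
    return np.where(mask, a, b)

def gaussian_mutation(angles, rng, rate=0.1, sigma=0.3):
    """Perturb each angle with probability `rate`, then wrap into [-pi, pi)."""
    mutate = rng.random(len(angles)) < rate
    perturbed = angles + mutate * rng.normal(0.0, sigma, size=len(angles))
    return (perturbed + np.pi) % (2 * np.pi) - np.pi
```

One-point crossover preserves long contiguous stretches from each parent, while uniform crossover mixes positions independently. For a chain molecule the former tends to keep local structural motifs intact.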

Evolutionary Algorithms in Protein Folding: Methodologies and Applications

Addressing Multimodal Optimization in Protein Conformational Space

Protein folding represents a quintessential multimodal optimization problem (MMOP), where multiple distinct structural configurations may represent viable energy minima [17]. Evolutionary algorithms excel in such environments through specialized niching techniques that maintain population diversity and enable simultaneous identification of multiple optimal solutions [17]. The DADE (Diversity-based Adaptive Differential Evolution) algorithm exemplifies this approach, incorporating a diversity-based niching method that partitions populations into appropriately sized subpopulations at different search stages [17]. This adaptive partitioning allows thorough exploration of the entire fitness landscape during early stages while facilitating sufficient local exploitation during later stages.

For intrinsically disordered proteins (IDPs)—which represent a significant challenge for deep learning methods like AlphaFold [18]—evolutionary algorithms offer particular advantages. Unlike deep learning approaches trained predominantly on structured proteins with single "ground truth" configurations [18], EAs can natively handle the conformational ensembles and fluctuating configurations characteristic of IDPs by maintaining diverse populations representing multiple possible states.

Constraint Handling and Feasibility Maintenance

A critical challenge in protein structure prediction involves handling biochemical constraints including steric clashes, torsion angle limits, and thermodynamic requirements. Evolutionary algorithms address this through specialized constraint-handling techniques:

Penalty Functions: Traditional approaches incorporate penalty terms into the fitness function to discourage constraint violations, though these methods face challenges in balancing exploration and exploitation [19].

Feasibility Criteria: Advanced implementations like the hybrid multi-operator EA employ feasibility criteria to explicitly eliminate infeasible solutions while making trade-offs between exploration and exploitation [19].

Repair Operators: Domain-specific repair mechanisms can transform infeasible solutions into valid conformations by resolving constraint violations while preserving beneficial traits.
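The first two techniques can be contrasted in a short sketch. The clash measure, the 3.0 Å threshold, and the penalty weight are illustrative assumptions. The comparison function follows a widely used feasibility-rule scheme (feasible beats infeasible, then lower energy, then lower violation), swapped in here for illustration; the criteria used in the cited hybrid EA [19] may differ.

```python
import numpy as np

def clash_violation(coords, min_dist=3.0):
    """Total overlap (angstroms) over non-adjacent pairs closer than min_dist."""
    coords = np.asarray(coords, dtype=float)
    diff = coords[:, None, :] - coords[None, :, :]
    dist = np.linalg.norm(diff, axis=-1)
    i, j = np.triu_indices(len(coords), k=2)  # skip self and chain neighbors
    return float(np.sum(np.clip(min_dist - dist[i, j], 0.0, None)))

def penalized_fitness(energy, violation, weight=10.0):
    """Penalty approach: fold the violation into a single objective."""
    return energy + weight * violation

def better(a, b):
    """Feasibility rules on (energy, violation) pairs; True if a is preferred."""
    (ea, va), (eb, vb) = a, b
    if va == 0 and vb == 0:
        return ea < eb      # both feasible: lower energy wins
    if va == 0 or vb == 0:
        return va == 0      # feasible beats infeasible
    return va < vb          # both infeasible: smaller violation wins
```

The penalty form needs a tuned weight, whereas the rule-based comparison has no weight but never lets an infeasible candidate outrank a feasible one.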

Hybrid Multi-Operator Frameworks

Recent advances in evolutionary computation for protein folding emphasize hybrid methodologies that combine multiple optimization strategies. The hybrid multi-operator evolutionary algorithm described in Scientific Reports integrates genetic algorithm (GA), differential evolution (DE), and particle swarm optimization (PSO) to address multiperiod large-scale optimization problems [19]. This approach leverages complementary strengths of different algorithms: GA provides robust exploration through crossover and mutation, DE offers efficient local search through difference vectors, and PSO enables effective information sharing across the population.

Such hybrid frameworks demonstrate particular efficacy for dynamic optimization scenarios involving changing environmental conditions—analogous to varying cellular environments in protein folding—by adapting search strategies throughout the optimization process [19]. The implementation of representative constraint handling techniques further enhances performance by maintaining feasible solutions while navigating complex constraint landscapes.

Comparative Analysis: Evolutionary Algorithms vs. Deep Learning Approaches

Performance Metrics and Quantitative Comparison

Table 1: Performance comparison of optimization approaches for biological structures

| Metric | Evolutionary Algorithms | Deep Learning (AlphaFold) |

|---|---|---|

| Solution Diversity | Multiple diverse solutions maintained simultaneously [17] | Single "best" structure prediction [18] |

| Structured Proteins | Good performance with sufficient computational budget | Near-experimental accuracy (0.8Å RMSD) [10] |

| Intrinsically Disordered Proteins | Native handling of conformational ensembles [17] | Low-confidence predictions or unrealistic stable forms [18] |

| Data Requirements | Moderate (fitness function only) | Extensive (large labeled datasets) [18] [10] |

| Computational Demand | High for every target (each prediction is a full optimization run; no training phase) | High during training, moderate during inference |

| Constraint Handling | Explicit constraint incorporation [19] | Implicit through training data |

| Interpretability | Transparent optimization process | Black-box predictions |

Experimental Protocols for Protein Structure Optimization

Diversity-Based Adaptive Differential Evolution (DADE) Protocol

The DADE methodology for multimodal protein optimization involves three key components [17]:

Diversity-Based Niching:

- Initialize population of candidate structures

- Calculate pairwise diversity metrics using modified diversity measurement

- Partition population into niches based on adaptive thresholding

- Reduce niche size progressively as the iterations proceed

Mutation Selection Scheme:

- Monitor diversity within each niche

- Select mutation operators based on problem dimensionality and population diversity

- Balance exploration and exploitation within each subpopulation

Local Optima Processing:

- Identify prematurely convergent subpopulations (diversity consistently below threshold)

- Reinitialize stagnant individuals while leveraging tabu archive

- Avoid rediscovery of previously identified optima
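A simplified sketch of the ingredients listed above follows. It is not the DADE algorithm itself: the diversity measure (mean distance to the centroid), the greedy nearest-neighbor partition, and the DE/rand/1 mutation are generic choices assumed for illustration, and all function names are hypothetical.

```python
import numpy as np

def diversity(pop):
    """Mean Euclidean distance of individuals to the population centroid."""
    return float(np.mean(np.linalg.norm(pop - pop.mean(axis=0), axis=1)))

def make_niches(pop, niche_size):
    """Greedy partition: seed a niche with an unassigned individual and
    add its closest unassigned neighbors until the niche is full."""
    unassigned = list(range(len(pop)))
    niches = []
    while unassigned:
        seed = unassigned[0]
        d = np.linalg.norm(pop[unassigned] - pop[seed], axis=1)
        members = [unassigned[k] for k in np.argsort(d)[:niche_size]]
        niches.append(members)
        unassigned = [u for u in unassigned if u not in members]
    return niches

def de_rand_1(pop, members, rng, f=0.5):
    """DE/rand/1 mutant from three distinct members of one niche
    (the niche must contain at least three individuals)."""
    r1, r2, r3 = rng.choice(members, size=3, replace=False)
    return pop[r1] + f * (pop[r2] - pop[r3])

rng = np.random.default_rng(0)
pop = rng.normal(size=(12, 4))
niches = make_niches(pop, niche_size=4)
mutant = de_rand_1(pop, niches[0], rng)
```

Restricting the difference vector to one niche keeps each subpopulation searching around its own optimum. A niche whose `diversity` stays below a threshold would be a candidate for the reinitialization step.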

Hybrid Multi-Operator EA Protocol for Dynamic Environments

For time-dependent protein folding scenarios (e.g., folding pathways), the hybrid multi-operator approach implements [19]:

Multi-Operator Integration:

- Maintain parallel populations for GA, DE, and PSO variants

- Implement periodic migration between subpopulations

- Select operators adaptively based on recent performance

Feasibility-Driven Search:

- Evaluate constraint violations for each candidate

- Apply feasibility criteria to eliminate invalid solutions

- Balance exploration of novel regions with exploitation of promising areas

Dynamic Adaptation:

- Adjust search parameters based on changing conditions (e.g., solvation effects)

- Modify fitness function to reflect temporal constraints

- Implement memory mechanisms to retain previously successful strategies
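Adaptive operator selection can be sketched with probability matching, a standard scheme in which each operator is chosen in proportion to a running estimate of its recent payoff. This is an illustrative stand-in, not the selection rule of the cited hybrid EA [19]; the class name, `p_min`, and `decay` are assumptions.

```python
import numpy as np

class AdaptiveOperatorSelector:
    """Probability matching over a fixed set of operator names."""

    def __init__(self, names, p_min=0.1, decay=0.8, seed=0):
        self.names = list(names)
        self.p_min = p_min          # floor so no operator is starved
        self.decay = decay          # weight on the previous quality estimate
        self.quality = np.ones(len(self.names))
        self.rng = np.random.default_rng(seed)

    def probabilities(self):
        k = len(self.names)
        share = self.quality / max(self.quality.sum(), 1e-12)
        return self.p_min + (1.0 - k * self.p_min) * share

    def choose(self):
        idx = self.rng.choice(len(self.names), p=self.probabilities())
        return self.names[idx]

    def reward(self, name, improvement):
        """Update the running quality with the fitness gain the operator produced."""
        i = self.names.index(name)
        gain = max(improvement, 0.0)
        self.quality[i] = self.decay * self.quality[i] + (1.0 - self.decay) * gain

selector = AdaptiveOperatorSelector(["GA", "DE", "PSO"])
op = selector.choose()
selector.reward(op, improvement=0.5)
```

The floor `p_min` matters in changing environments: an operator that performed poorly earlier still gets occasional trials and can regain probability if conditions shift.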

Table 2: Essential resources for evolutionary algorithm research in protein folding

| Resource Category | Specific Tools | Function and Application |

|---|---|---|

| Optimization Frameworks | PyGAD, EvoJAX [20] | Genetic algorithm library (PyGAD); hardware-accelerated neuroevolution toolkit (EvoJAX) |

| Structure Evaluation | Rosetta, FoldX | Energy function calculation and fitness evaluation |

| Conformational Sampling | MODELLER, GROMACS | Comparative modeling (MODELLER) and molecular dynamics (GROMACS) for local search operators |

| Benchmark Datasets | CEC2013 MMOP Suite [17] | Multimodal benchmark functions for algorithm validation |

| Analysis and Visualization | UCSF Chimera, PyMOL | Solution quality assessment and structural analysis |

| Constraint Libraries | PDB, UniProt [10] | Structural constraints and biological knowledge bases |

Evolutionary algorithms represent a powerful paradigm for global optimization in protein folding research, particularly for problems characterized by multimodality, complex constraints, and dynamic environments. Their ability to maintain diverse solution populations and explicitly handle constraints complements the strengths of deep learning approaches like AlphaFold, suggesting promising directions for hybrid methodologies.

Future research should focus on tightly integrated evolutionary-deep learning frameworks where EAs handle conformational sampling and constraint satisfaction while deep learning models provide rapid energy estimation and structural scoring. Such approaches could leverage the exploratory power of evolutionary methods with the pattern recognition capabilities of deep learning, potentially addressing current limitations in both paradigms, particularly for challenging protein classes like intrinsically disordered proteins and multi-state folding systems.

The continued development of evolutionary algorithms for protein folding will likely emphasize adaptive operator selection, knowledge-informed variation operators, and multi-fidelity evaluation strategies that balance computational expense with solution quality. As demonstrated by recent advances in hybrid multi-operator EAs and diversity-based approaches, evolutionary methods remain at the forefront of computational methodology for tackling the complex optimization challenges inherent in biological systems.

The prediction of a protein's three-dimensional structure from its amino acid sequence—the classic "protein folding problem"—has been one of the most challenging and enduring problems in computational biology for over 50 years [21] [22]. Understanding protein structure is fundamental to elucidating biological function, with profound implications for drug discovery and therapeutic development. The problem's computational complexity arises from the astronomical number of possible conformations a protein chain could adopt; as noted in Levinthal's 1969 paradox, a protein cannot possibly sample all configurations to find its native state, suggesting the existence of a more direct folding pathway [22].

Two complementary computational approaches have emerged to address this challenge: evolutionary algorithms rooted in physical and chemical principles, and machine learning methods leveraging patterns in biological data. Evolutionary algorithms treat protein folding as a global optimization problem, searching for the lowest-energy conformation according to physicochemical force fields [23] [24]. In contrast, machine learning approaches, particularly deep neural networks and transformers, have demonstrated remarkable success by learning structural patterns from vast repositories of known protein structures and sequences [25] [21]. This technical guide examines the core architectures, methodologies, and performance of these competing paradigms, with particular focus on their applications, limitations, and future directions in structural biology.

Machine Learning Foundations: From Deep Neural Networks to Transformers

Historical Development of Deep Learning in Biology

The application of machine learning to biological problems has evolved significantly alongside advancements in computational architecture and training methodologies. The conceptual foundations date to 1943 with the McCulloch-Pitts neuron model, but meaningful progress began with key developments: Rosenblatt's perceptron (1958), backpropagation (1974), LeNet for handwriting recognition (1990), and Long Short-Term Memory networks (1997) [25]. The modern deep learning revolution accelerated in 2012 with AlexNet's breakthrough in image recognition, demonstrating the power of deep convolutional neural networks [25].

Biological applications progressed through several phases. In the 1990s, early machine learning applications in biology used neural networks to analyze gene expression data [25]. In 2015, DeepBind demonstrated the potential of deep learning to predict the sequence specificities of DNA- and RNA-binding proteins [25]. However, the transformational breakthrough came with DeepMind's AlphaFold2 in 2020, which achieved unprecedented accuracy in protein structure prediction during the CASP14 assessment [21] [22].

Table: Evolution of Deep Learning for Biological Sequences

| Date | Development | Significance for Protein Science |

|---|---|---|

| 1990 | LeNet (CNN) | Established convolutional pattern recognition, later applied to motifs in biological sequences |

| 1997 | LSTM Networks | Allowed modeling of long-range dependencies in sequences |

| 2015 | DeepBind | Demonstrated deep learning could identify protein-binding sites |

| 2017 | Transformer Architecture | Introduced attention mechanism for global sequence relationships |

| 2020 | AlphaFold2 (Evoformer) | Combined transformers with biological insights for state-of-the-art structure prediction |

| 2022-2023 | Protein Language Models (ESMFold) | Enabled structure prediction without multiple sequence alignments |

Core Architectural Components

Convolutional Neural Networks (CNNs)

CNNs apply sliding filters (kernels) across input sequences to detect local patterns and motifs. In protein sequence analysis, CNNs excel at identifying conserved regions, binding sites, and local structural features through their hierarchical feature extraction capabilities [25].

Recurrent Neural Networks (RNNs) and LSTMs

RNNs process sequential data through time-step connections, making them suitable for biological sequences where context matters. Long Short-Term Memory (LSTM) networks address the vanishing gradient problem in traditional RNNs, enabling learning of long-range dependencies in protein sequences [25].

Transformer Architecture and Attention Mechanism

The transformer architecture, introduced in 2017, represents a fundamental shift through its self-attention mechanism, which computes pairwise relationships between all positions in a sequence regardless of distance [26] [25]. This capability is particularly valuable for protein folding, where residues distant in sequence may be proximate in the folded structure.

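The attention mechanism operates through query, key, and value vectors computed for each token (amino acid) in the sequence. In the standard scaled dot-product form:

$$\mathrm{Attention}(Q, K, V) = \mathrm{softmax}\!\left(\frac{QK^{\top}}{\sqrt{d_k}}\right)V$$

where $Q$, $K$, and $V$ stack the query, key, and value vectors as rows and $d_k$ is the dimension of the key vectors.

This allows each position to attend to all other positions, capturing global dependencies more effectively than sequential models [26].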
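A minimal single-head sketch of this computation (scaled dot-product attention) in NumPy is shown below. The projection matrices are random placeholders and the dimensions are arbitrary assumptions. Real models add multiple heads, learned weights, and positional information.

```python
import numpy as np

def scaled_dot_product_attention(x, w_q, w_k, w_v):
    """x: (L, d_model) token embeddings; w_*: (d_model, d_k) projections.

    Returns the attended values (L, d_k) and the attention weights (L, L).
    """
    q, k, v = x @ w_q, x @ w_k, x @ w_v
    scores = q @ k.T / np.sqrt(k.shape[-1])        # pairwise similarity, all positions
    scores -= scores.max(axis=-1, keepdims=True)   # shift for numerical stability
    weights = np.exp(scores)
    weights /= weights.sum(axis=-1, keepdims=True) # softmax over each row
    return weights @ v, weights

rng = np.random.default_rng(0)
x = rng.normal(size=(5, 8))                        # 5 residues, 8-dim embeddings
w_q, w_k, w_v = (rng.normal(size=(8, 4)) for _ in range(3))
out, attn = scaled_dot_product_attention(x, w_q, w_k, w_v)
```

Row `i` of `attn` gives how strongly residue `i` attends to every other residue, independent of their separation in the sequence.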

Machine Learning Approaches to Protein Structure Prediction

AlphaFold2: Integrated Evolutionary and Structural Reasoning

AlphaFold2 represents a watershed in computational biology, achieving a median backbone accuracy of 0.96 Å RMSD (competitive with experimental methods) in the CASP14 assessment [21]. Its architecture integrates two key information sources through novel neural network components:

Evoformer Block: The Evoformer is a novel neural network module that jointly processes multiple sequence alignments (MSAs) and residue-pair representations [21]. It operates through attention-based mechanisms that exchange information between the MSA representation (showing evolutionary relationships) and the pair representation (capturing spatial relationships between residues) [21]. The triangular self-attention and multiplicative update operations encourage the pair representation to be geometrically consistent (for example, with the triangle inequality on distances), which supports physically plausible structures [21].

Structure Module: This component generates atomic coordinates from the Evoformer's representations using an equivariant attention architecture that respects rotational and translational symmetry [21]. It initializes all residues at the origin and iteratively refines their positions and orientations across its layers. Separately, the full network feeds its outputs back in as inputs for several passes, a process called "recycling" [21].

Protein Language Models: Beyond Multiple Sequence Alignments

While AlphaFold2 relies on evolutionary information from multiple sequence alignments (MSAs), protein language models (PLMs) like ESMFold represent an alternative approach that learns structural principles directly from sequences [26]. ESM-2, an encoder-only transformer architecture, is pretrained on millions of protein sequences to learn evolutionary patterns, eliminating dependence on MSAs for structure prediction [26]. This is particularly valuable for orphan proteins with few homologs or rapidly evolving proteins where MSAs are sparse [26].

Performance Benchmarks: CASP15 Assessment

The Critical Assessment of Structure Prediction (CASP) provides blind tests for objectively evaluating prediction methods. Recent CASP15 results demonstrate the current performance landscape:

Table: CASP15 Performance Metrics for Single-Chain Proteins (n=69 targets)

| Method | Approach Type | Mean GDT-TS | Topology Accuracy (TM-score >0.5) | Side-Chain Accuracy (GDC-SC) | MSA Dependence |

|---|---|---|---|---|---|

| AlphaFold2 | MSA-based Transformer | 73.06 | ~80% | <50 | Moderate |

| RoseTTAFold | MSA-based 3-Track | Not Reported | ~70% | Lower than PLMs | High |

| ESMFold | Protein Language Model | 61.62 | Lower than MSA-based | Higher than RoseTTAFold | None |

| OmegaFold | Protein Language Model | Lower than ESMFold | Lower than MSA-based | Higher than RoseTTAFold | None |

GDT-TS: Global Distance Test-Total Score; TM-score: Template Modeling Score; GDC-SC: Global Distance Calculation for Side-Chains [26]

The benchmarking reveals several key insights: AlphaFold2 maintains superior overall accuracy, MSA-based methods achieve better overall topology prediction, and protein language models have closed the gap significantly while offering independence from MSAs [26]. All methods show declining accuracy with increasing protein size, particularly for multidomain proteins where domain packing presents challenges [26].
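To make the GDT-TS numbers concrete, the sketch below computes a simplified score: the average, over cutoffs of 1, 2, 4, and 8 Å, of the fraction of Cα atoms within that cutoff of the reference. It assumes the two structures are already superimposed and residue-matched. The full GDT procedure searches over many superpositions to maximize each fraction, so this sketch can underestimate the official score.

```python
import numpy as np

def gdt_ts(pred_ca, ref_ca, cutoffs=(1.0, 2.0, 4.0, 8.0)):
    """Simplified GDT-TS (0 to 100) for pre-superimposed C-alpha coordinates."""
    pred_ca = np.asarray(pred_ca, dtype=float)
    ref_ca = np.asarray(ref_ca, dtype=float)
    if pred_ca.shape != ref_ca.shape:
        raise ValueError("coordinate arrays must have the same shape")
    dist = np.linalg.norm(pred_ca - ref_ca, axis=1)   # per-residue deviation
    fractions = [np.mean(dist <= c) for c in cutoffs]
    return 100.0 * float(np.mean(fractions))

# Usage: four residues displaced by 0.5, 1.5, 3.0, and 10.0 angstroms
ref = np.zeros((4, 3))
pred = np.array([[0.5, 0, 0], [1.5, 0, 0], [3.0, 0, 0], [10.0, 0, 0]])
score = gdt_ts(pred, ref)
```

Because each cutoff contributes equally, a single badly placed residue lowers the score by a bounded amount, unlike RMSD, where large outliers dominate.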

Evolutionary Algorithms: Physicochemical Optimization Approaches

Foundation and Methodology

Evolutionary algorithms address protein folding as a global optimization problem, searching for the conformation that minimizes the free energy according to physicochemical force fields [23] [24]. Unlike machine learning approaches that leverage patterns in known structures, evolutionary methods rely on first principles of molecular physics and chemistry.

The USPEX (Universal Structure Predictor: Evolutionary Xtallography) algorithm exemplifies this approach, using evolutionary operators to explore conformational space [24]. Its workflow includes:

- Initialization: Generate a population of random protein conformations.

- Fitness Evaluation: Calculate the energy of each structure using force fields (AMBER, CHARMM, OPLS-AA/L) or scoring functions (Rosetta's REF2015) [24].

- Selection: Preserve low-energy structures for reproduction.

- Variation: Apply mutation and crossover operators to create new conformations.

- Iteration: Repeat the process until convergence to low-energy states [24].

Performance and Limitations

USPEX testing on proteins up to 100 residues demonstrates its ability to find deep energy minima, in some cases discovering structures with lower energy than Rosetta's Abinitio approach [24]. However, the accuracy of evolutionary algorithms is fundamentally limited by the quality of available force fields rather than search efficiency [24]. Current force fields lack sufficient accuracy for reliable blind prediction without experimental validation [24].

Evolutionary algorithms face significant computational challenges due to the high-dimensionality of protein conformational space. Even simplified models like the 2D Hydrophobic-Polar (HP) model have been proven NP-complete, necessitating heuristic approaches [23].
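For readers unfamiliar with the HP model, the sketch below scores one conformation on a 2D square lattice using the usual convention: each pair of hydrophobic (H) residues that are lattice neighbors but not adjacent in the chain contributes -1. The move encoding (`U`, `D`, `L`, `R`) and the function name are assumptions made for illustration.

```python
def hp_energy(sequence, moves):
    """Energy of a 2D HP lattice conformation, or None if the walk overlaps itself.

    sequence: string of 'H' and 'P'; moves: len(sequence) - 1 lattice steps.
    """
    steps = {"U": (0, 1), "D": (0, -1), "L": (-1, 0), "R": (1, 0)}
    if len(moves) != len(sequence) - 1:
        raise ValueError("need exactly len(sequence) - 1 moves")
    positions = [(0, 0)]
    for m in moves:
        dx, dy = steps[m]
        x, y = positions[-1]
        positions.append((x + dx, y + dy))
    if len(set(positions)) != len(positions):
        return None  # not self-avoiding, so not a valid conformation
    index = {pos: i for i, pos in enumerate(positions)}
    energy = 0
    for i, (x, y) in enumerate(positions):
        if sequence[i] != "H":
            continue
        for dx, dy in ((1, 0), (0, 1)):  # look right and up so each pair counts once
            j = index.get((x + dx, y + dy))
            if j is not None and sequence[j] == "H" and abs(i - j) > 1:
                energy -= 1
    return energy

folded = hp_energy("HPPH", "RUL")     # chain bent into a square
extended = hp_energy("HPPH", "RRR")   # fully extended chain
```

Scoring a single conformation is cheap. The hardness lies in the number of self-avoiding walks, which grows exponentially with chain length, and that is why heuristic search is used.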

Comparative Analysis: Strengths, Limitations, and Integration

Performance Under Different Conditions

Table: Method Performance Across Protein Categories

| Protein Category | Machine Learning Approach | Evolutionary Algorithm Approach | Key Challenges |

|---|---|---|---|

| Well-Folded Single Domain | High accuracy (GDT-TS >90 for small proteins) [26] | Good accuracy for small proteins (<100 residues) [24] | Limited primarily by force field accuracy [24] |

| Multidomain Proteins | Accurate domains but poor domain packing [26] | Computationally intractable for large proteins | Domain orientation and flexibility |

| Intrinsically Disordered Proteins | Fundamental limitation; forces single structure [18] | Potentially suitable with ensemble modeling | Heterogeneous, dynamic ensembles [18] [27] |

| Orphan Proteins (Few Homologs) | MSA-based methods struggle; PLMs perform better [26] | Unaffected by evolutionary information | Limited evolutionary constraints |

Limitations of Machine Learning Approaches

Machine learning methods face several fundamental limitations. For intrinsically disordered proteins (IDPs) and regions (IDRs), which exist as dynamic structural ensembles rather than single conformations, AlphaFold's single-structure prediction is inherently mismatched [18]. When encountering disorder, AlphaFold either outputs low-confidence predictions or forces unrealistic stable conformations [18]. This limitation stems from training on the Protein Data Bank, which is biased toward structured, crystallizable proteins [18].

Additionally, side-chain positioning remains challenging for all methods, with even AlphaFold2 achieving mean GDC-SC scores below 50 on the 0 to 100 scale [26]. Stereochemical quality also varies, with PLM-based methods showing physically unrealistic local regions [26].

Emerging Hybrid Approaches

Recent research explores hybrid methodologies that combine machine learning predictions with physicochemical simulations. AlphaFold-Metainference uses AlphaFold-predicted distances as restraints in molecular dynamics simulations to model structural ensembles of disordered proteins [27]. This approach successfully predicts conformational properties of both ordered and disordered proteins, demonstrating the synergistic potential of combining data-driven and physics-based methods [27].

Experimental Protocols and Research Applications

Standardized Evaluation Protocol (CASP)

The Critical Assessment of Structure Prediction (CASP) provides the gold-standard evaluation framework, conducting blind tests using recently solved structures not yet publicly available [21] [22]. The standard protocol includes:

- Target Selection: Recently determined experimental structures withheld from public databases

- Sequence-Only Input: Participants receive only amino acid sequences

- Timed Prediction: Limited timeframe for structure prediction

- Standardized Metrics: Quantitative evaluation using GDT-TS, lDDT, TM-score, and MolProbity [26] [21]

Research Reagent Solutions

Table: Essential Computational Tools for Protein Structure Prediction

| Tool/Resource | Type | Function | Application Context |

|---|---|---|---|

| Protein Data Bank (PDB) | Data Repository | Experimental protein structures | Training data for ML; validation for all methods |

| AlphaFold2 | MSA-based Transformer | End-to-end structure prediction | High-accuracy prediction for proteins with evolutionary information |

| ESMFold | Protein Language Model | Structure prediction without MSAs | Orphan proteins; rapid prototyping |

| USPEX | Evolutionary Algorithm | Global structure optimization | Physicochemical studies; force field development |

| Rosetta | Physics-based Modeling | Structure prediction and design | Comparative modeling; protein design |

| Tinker | Molecular Dynamics | Force field calculations | Structure relaxation; ensemble generation |

The machine learning revolution, particularly through transformer-based architectures, has dramatically advanced protein structure prediction capabilities. AlphaFold2 and related methods have achieved accuracies competitive with experimental approaches for many well-folded proteins [21] [22]. However, significant challenges remain for multidomain proteins, intrinsically disordered regions, and precise side-chain positioning [18] [26].

Evolutionary algorithms continue to provide value for studying folding physics and optimizing structures according to physicochemical principles, though they remain limited by force field accuracy and computational complexity [23] [24]. The emerging convergence of these approaches—using machine learning predictions to guide physics-based simulations—represents a promising frontier [27].

Future progress will likely require developments in several key areas: improved modeling of protein dynamics and flexibility, better integration of experimental data, more accurate force fields for evolutionary algorithms, and architectures capable of modeling large macromolecular complexes. As these computational methods mature, their integration into drug discovery pipelines promises to accelerate target identification, lead optimization, and personalized medicine development [28] [25].

The transformation of protein science by machine learning demonstrates the power of specialized neural architectures applied to fundamental biological problems. Rather than representing an endpoint, these advances have opened new research directions while highlighting the enduring complexity of biological systems and the continued need for interdisciplinary approaches combining computational and experimental methods.

The Critical Assessment of protein Structure Prediction (CASP) is a community-wide experiment, held every two years since 1994, that objectively tests protein structure prediction methods [29]. CASP operates as a rigorous blind test, giving the research community and software users an independent assessment of the state of the art in protein structure modeling [30] [29]. Its core principle is fully blinded testing: predictors receive the amino acid sequences of proteins whose structures have been experimentally determined but not yet publicly released, and must submit their predicted three-dimensional structures before the experimental results are revealed [30]. This ensures that no predictor has prior information about a target's structure, creating a level playing field for evaluating methodological capabilities [29].

The fundamental importance of protein structure prediction stems from the fact that experimental structures were available for less than 1/1000th of the proteins with known sequences at the time of CASP's founding [30]. Modeling therefore plays a crucial role in providing structural information for a wide range of biological problems. When proteins fold incorrectly, diseases such as Alzheimer's or Parkinson's can develop, while understanding precise protein structures significantly enhances drug development and research into protein function [31].

The CASP Experimental Framework

Target Selection and Categorization

CASP employs a meticulous target selection process to ensure unbiased evaluation. Targets are either structures that are about to be solved by X-ray crystallography or NMR spectroscopy, or structures that have recently been solved but are kept on hold by the Protein Data Bank [29]. This double-blind approach guarantees that neither predictors nor organizers know the target structures during the prediction period.

Target proteins are systematically categorized based on prediction difficulty, with two primary classifications:

- Template-Based Modeling (TBM): Targets where a relationship to one or more experimentally determined structures can be identified, providing at least one modeling template [30].

- Free Modeling (FM): Targets where there are no usefully related structures, or the relationship is so distant it cannot be detected [30].

As fewer new folds were being discovered experimentally, CASP introduced CASP ROLL in December 2011, a continuous mechanism for soliciting and evaluating FM targets that ensures adequate data for assessing template-free methods [30].

Evaluation Methodology and Metrics

CASP assesses prediction accuracy primarily by comparing α-carbon positions in the predicted model with those in the target structure [29]. The key quantitative metric is the Global Distance Test - Total Score (GDT_TS), which reports the percentage of well-modeled residues in the prediction relative to the target [29]. In its standard form, GDT_TS averages the percentage of α-carbons that lie within 1, 2, 4, and 8 Å of their target positions after superposition, so scores range from 0 to 100.
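The GDT_TS calculation can be sketched in a few lines. This is a minimal illustration, assuming the model has already been superposed onto the target and that residues correspond one to one; production implementations also search over many superpositions to maximize the count at each cutoff. The function name `gdt_ts` and the toy coordinates are hypothetical.

```python
import math

def gdt_ts(model, target, cutoffs=(1.0, 2.0, 4.0, 8.0)):
    """GDT_TS (0-100) for pre-superposed, residue-aligned C-alpha traces."""
    n = len(target)
    distances = [math.dist(m, t) for m, t in zip(model, target)]
    # Fraction of residues within each distance cutoff (in angstroms).
    fractions = [sum(d <= c for d in distances) / n for c in cutoffs]
    return 100.0 * sum(fractions) / len(cutoffs)

# Four residues displaced by 0, 1.5, 3.0, and 9.0 angstroms respectively.
target = [(3.8 * i, 0.0, 0.0) for i in range(4)]
model = [(0.0, 0.0, 0.0), (3.8, 1.5, 0.0), (7.6, 3.0, 0.0), (11.4, 9.0, 0.0)]
print(gdt_ts(model, target))  # 56.25
```

In this toy case one residue passes the 1 Å cutoff, two pass 2 Å, and three pass both 4 Å and 8 Å, giving (25 + 50 + 75 + 75) / 4 = 56.25.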

Table: CASP Evaluation Categories and Metrics

| Category | Evaluation Method | Key Metrics | First Introduced |
|---|---|---|---|
| Tertiary Structure Prediction | Comparison of α-carbon positions | GDT_TS, RMSD | CASP1 (1994) |
| Model Quality Assessment | Estimation of model accuracy | Local Distance Difference Test (lDDT) | CASP7 |
| Model Refinement | Improvement of initial models | GDT_TS improvement | CASP7 |
| Contact Prediction | Residue-residue contact identification | Precision, Recall | CASP4 |
| Disordered Regions | Identification of unstructured regions | AUC, Precision | CASP5 |

Evaluation extends beyond tertiary structure to include multiple specialized categories that have evolved over CASP experiments. These include residue-residue contact prediction (starting CASP4), disordered regions prediction (starting CASP5), function prediction (starting CASP6), model quality assessment (starting CASP7), and model refinement (starting CASP7) [29].
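Unlike GDT_TS, the lDDT metric listed in the table above needs no superposition, because it compares distances within each structure rather than positions between them. The following is a simplified C-alpha-only sketch, assuming the commonly used 15 Å inclusion radius and 0.5, 1, 2, and 4 Å tolerance thresholds; the full metric works on all atoms and also reports per-residue values. The function name `ca_lddt` is hypothetical.

```python
import math

def ca_lddt(model, target, radius=15.0, thresholds=(0.5, 1.0, 2.0, 4.0)):
    """Simplified global lDDT on C-alpha atoms (0-1), superposition-free."""
    n = len(target)
    preserved = 0
    considered = 0
    for i in range(n):
        for j in range(i + 1, n):
            ref = math.dist(target[i], target[j])
            if ref >= radius:
                continue  # Only local contacts in the reference are scored.
            diff = abs(math.dist(model[i], model[j]) - ref)
            # Count how many tolerance thresholds this distance satisfies.
            preserved += sum(diff < t for t in thresholds)
            considered += len(thresholds)
    return preserved / considered if considered else 0.0

# A rigidly translated copy preserves every internal distance.
target = [(3.8 * i, (i % 2) * 1.0, 0.0) for i in range(6)]
translated = [(x + 5.0, y - 3.0, z + 2.0) for x, y, z in target]
collapsed = [(0.0, 0.0, 0.0)] * 6
print(ca_lddt(translated, target))
print(ca_lddt(collapsed, target))
```

The translated copy scores as a perfect match even though no atom sits at its target position, which is the property that makes lDDT useful for multidomain proteins whose domains may be individually correct but differently oriented.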

Methodological Evolution Through CASP Milestones

Early CASP Experiments: Knowledge-Based and Evolutionary Approaches

The initial CASP experiments (1994-2004) were dominated by knowledge-based methods and evolutionary approaches leveraging the growing database of known protein structures. In CASP1 (1994), only 229 unique protein folds were known, making homology modeling applicable to relatively few targets [30]. Early methods heavily relied on:

- Comparative Modeling: Using structures of homologous proteins as templates [29]

- Protein Threading: Fold recognition using scoring functions even without significant sequence similarity [29]

- Fragment Assembly: Constructing models from fragments of known structures [29]

During this period, the accuracy of homology models improved dramatically through a combination of improved methods, larger databases of structure and sequence, and feedback from the CASP process [30].

Rise of Machine Learning and Deep Learning

The period from CASP5 to CASP12 (2002-2016) saw the gradual integration of machine learning approaches. A significant milestone came in 2014 at CASP11, when deep learning was first introduced for protein structure prediction [31]. In the CASP11 results summarized in [31], the leading teams reached only about 75 points while most teams scored below 25, which illustrates how difficult accurate prediction remained at that stage [31].

Machine learning methods that emerged during this period included:

- Deep Learning Networks: For improving protein fold recognition [31]

- Contact Prediction Methods: Using co-evolutionary analysis and neural networks [30]

- Multi-Task Learning: Simultaneously learning several related endpoints [32]

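Table: Performance Evolution in CASP Experiments (1994-2020)

| CASP Edition | Year | Leading Method | Approximate Score (points) | Methodological Approach |
|---|---|---|---|---|
| CASP1 | 1994 | Comparative Modeling | ~40 | Knowledge-based, Homology |
| CASP5 | 2002 | Threading + Fragment Assembly | ~60 | Hybrid Methods |
| CASP11 | 2014 | Deep Learning Introduction | ~75 | Early Neural Networks |
| CASP13 | 2018 | AlphaFold1 | ~120 | Distance-based CNN |
| CASP14 | 2020 | AlphaFold2 | ~240 | Transformers, Evoformer |

Scores are the approximate points discussed in the text and attributed to [31]. Because GDT_TS is bounded between 0 and 100, values above 100 cannot be GDT_TS percentages; they are best read as cumulative ranking scores and should not be compared directly with per-target GDT_TS values.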

The AlphaFold Revolution and Transformer Era

The most dramatic methodological shift occurred with the introduction of AlphaFold in CASP13 (2018) and its successor AlphaFold2 in CASP14 (2020). AlphaFold1 reached approximately 120 points in the scoring reported in [31], substantially surpassing previous methods [31]. It used convolutional neural networks (CNNs) and recast 3D structural information as 2D feature maps: pairwise inter-residue distances (described in [31] as C-alpha distances; the published AlphaFold1 model predicts C-beta distances) were arranged into image-like 2D matrices that the network could analyze [31].
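The 2D representation described above can be made concrete with a short sketch that converts 3D coordinates into a pairwise distance matrix and then discretizes it into bins, the kind of image-like input or output a CNN can process. This is an illustration only, not AlphaFold1 code; the function names and the default bin range (2 to 22 Å in 64 bins) are assumptions chosen for the example.

```python
import math

def distance_map(coords):
    """Return the symmetric matrix of pairwise Euclidean distances."""
    n = len(coords)
    return [[math.dist(coords[i], coords[j]) for j in range(n)] for i in range(n)]

def to_bins(dmap, min_d=2.0, max_d=22.0, n_bins=64):
    """Discretize each distance into an integer bin index, clipping at both ends."""
    width = (max_d - min_d) / n_bins
    binned = []
    for row in dmap:
        binned.append([min(n_bins - 1, max(0, int((d - min_d) / width))) for d in row])
    return binned

# Four hypothetical residue positions, the last one far from the rest.
coords = [(0.0, 0.0, 0.0), (3.8, 0.0, 0.0), (3.8, 3.8, 0.0), (30.0, 0.0, 0.0)]
dmap = distance_map(coords)
image = to_bins(dmap)
for row in image:
    print(row)
```

Each row and column of the resulting matrix corresponds to a residue, so spatial proximity between sequence-distant residues shows up as off-diagonal structure in the "image", independent of how the molecule is rotated or translated.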

AlphaFold2 marked a far larger advance, scoring approximately 240 points in CASP14, a level that far exceeded both the competing teams and its predecessor AlphaFold1 [31]. The key methodological innovations in AlphaFold2 included:

- Sequence-Based Learning: Moving beyond predetermined distance information to utilize sequence information directly, including Multiple Sequence Alignment (MSA) and pair representation [31]

- Evoformer Architecture: A modified Transformer algorithm that enabled powerful attention mechanisms for understanding sequence characteristics [31]

- End-to-End Learning: The ability to learn complex relationships directly from sequences without heavy reliance on finished structures [31]

CASP's Impact on Methodological Paradigms

Shift from Physics-Based to Knowledge-Driven Approaches

CASP experiments documented a significant transition from physics-based to knowledge-driven methodologies. Early expectations that "physics methods, together with a better understanding of the process by which proteins fold, would lead to a solution" gradually gave way to data-driven approaches [30]. The CASP10 experiment (2012) noted: "Physics and knowledge of the protein folding process have not played a major role in these advances" regarding ab initio methods [30].

This paradigm shift became increasingly pronounced with the success of deep learning methods. Traditional molecular dynamics and energy minimization approaches were supplemented, and in many cases supplanted, by pattern recognition from existing structural databases and evolutionary information.

Experimental Workflow and Method Evolution

Figure: Evolution of methodological approaches in protein structure prediction as driven by CASP experiments

Table: Key Research Reagent Solutions in Protein Structure Prediction

| Resource Type | Specific Tools | Function | CASP Impact |
|---|---|---|---|
| Template Databases | Protein Data Bank (PDB), Structural Classification of Proteins (SCOP) | Provide known structures for comparative modeling | Foundation for early CASP progress |
| Sequence Analysis | HHsearch, HHblits, BLAST | Detect remote homology and evolutionary relationships | Critical for template-based modeling |
| Deep Learning Frameworks | TensorFlow, PyTorch | Enable neural network architecture development | Essential for modern AlphaFold-style approaches |
| Structure Evaluation | MolProbity, PROCHECK, QMEAN | Validate geometric and stereochemical quality | Standardization of model assessment |
| Specialized Servers | I-TASSER, Rosetta, MODELLER | Automated structure prediction pipelines | Democratized access to advanced methods |

Implications for Drug Discovery and Development

The methodological evolution driven by CASP has profound implications for pharmaceutical research and development. Accurate protein structure prediction enables:

- Structure-Based Drug Design: Precise identification of binding pockets and interaction sites [32]

- Target Validation: Better understanding of protein function and disease relevance [31]

- Polypharmacology Optimization: Designing compounds fitting specific pharmacological profiles [32]

The integration of AI-driven structure prediction with experimental validation has accelerated drug discovery timelines. For example, the ML-driven discovery of SARS-CoV-2 PLpro inhibitors identified a lead compound active in a mouse model in less than eight months [32]. Similarly, the discovery of the MALT1 inhibitor SGR-1505 used a computational pipeline that needed only 10 months and 78 synthesized compounds to reach a clinical candidate [32].

CASP has served as the principal catalyst for methodological evolution in protein structure prediction for nearly three decades. From its inception in 1994 through the AlphaFold revolution of 2020, CASP's rigorous blind testing framework has objectively documented the transition from knowledge-based methods through hybrid approaches to the current deep learning paradigm. The experiment has not only driven competition and innovation but has provided crucial standardized evaluation metrics that enable direct comparison of diverse methodological approaches.

The dramatic acceleration in prediction accuracy, particularly through transformer-based architectures and end-to-end learning, demonstrates how community-wide benchmarking challenges can accelerate scientific progress. As CASP continues to evolve, it will likely continue to shape methodological developments at the intersection of computational biology, artificial intelligence, and structural bioinformatics, with profound implications for basic research and therapeutic development.

Architectures in Action: How EA and ML Build Protein Models