Random Mutagenesis vs. Semi-Rational Design: A Comparative Analysis for Modern Protein Engineering and Drug Discovery

Abstract

This article provides a comprehensive comparative analysis of random mutagenesis and semi-rational design strategies for protein engineering. Tailored for researchers, scientists, and drug development professionals, it explores the foundational principles of both approaches, from the exploratory power of error-prone PCR to the targeted efficiency of site-saturation mutagenesis. It delves into advanced methodologies and real-world applications across industrial enzymes, DNA polymerases, and therapeutic protein engineering. The content further addresses critical troubleshooting and optimization challenges, including managing library size and leveraging machine learning. Finally, it synthesizes validation strategies and comparative performance metrics, offering a decisive guide for selecting the optimal protein engineering strategy to accelerate biocatalyst and therapeutic development.

The Evolutionary Engine vs. The Rational Blueprint: Core Principles of Mutagenesis

Protein engineering, the biotechnological process of creating new or improved enzymes and proteins, heavily relies on Darwinian principles of mutation and selection [1]. Directed evolution stands as a primary method, deliberately mimicking natural evolution in laboratory settings to tailor biocatalysts for specific industrial and therapeutic applications [2] [1]. This approach iteratively generates molecular diversity and identifies improved variants through high-throughput screening or selection. Traditional directed evolution often depends on random mutagenesis methods, such as error-prone PCR (EP-PCR), to create vast libraries of protein variants [1]. However, this method samples only a tiny fraction of the possible sequence space, and its efficiency can be limited by library size and screening capacity [2].

Over the last two decades, advances in understanding protein structure and function have empowered scientists to develop more efficient strategies [2]. This has led to the emergence of semi-rational design, a hybrid approach that combines the exploratory power of directed evolution with predictive, knowledge-based methods [3] [1]. By utilizing information on protein sequence, structure, and function, semi-rational design creates smaller, functionally rich "smart" libraries that are more likely to yield positive results, significantly streamlining the engineering process [2] [3]. This guide provides a comparative analysis of these methodologies, focusing on their protocols, performance, and applications in modern drug and enzyme development.

Experimental Protocols and Workflows

Directed Evolution and Random Mutagenesis

The classical directed evolution workflow is an iterative cycle of two main steps: diversity generation and screening [2].

- Diversity Generation via Random Mutagenesis: The process typically begins with the creation of a large library of gene variants. Error-Prone PCR (EP-PCR) is a commonly used technique, which uses conditions that reduce the fidelity of the DNA polymerase, introducing random mutations throughout the gene sequence [1]. Alternative methods include DNA shuffling, which involves fragmenting and reassembling homologous genes to create chimeric proteins [2].

- Screening and Selection: The resulting library of mutant genes is expressed, and the corresponding protein variants are subjected to high-throughput screening or selection for the desired property (e.g., enzymatic activity, thermostability, binding affinity). Techniques such as Fluorescence-Activated Cell Sorting (FACS) and phage display are often employed to efficiently examine large libraries [1].

- Iteration: The best-performing variants from one round are used as templates for the next round of mutagenesis and screening, gradually accumulating beneficial mutations [1].

This method requires no prior structural knowledge but relies on the ability to screen or select for improved function from a vast number of candidates [1].
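The three-step cycle above (diversity generation, screening, iteration) can be sketched in silico. The toy below is only an illustration under loud assumptions. The "fitness" is a hypothetical score that counts matches to an arbitrary target sequence. Mutation is uniform random substitution at a per-residue rate, and "screening" simply keeps the best variant from each library. It is not a model of any real protein, and `directed_evolution` and `toy_fitness` are illustrative names.

```python
import random

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"

def toy_fitness(seq: str, target: str) -> int:
    """Hypothetical fitness: number of positions matching an arbitrary target."""
    return sum(a == b for a, b in zip(seq, target))

def random_mutant(parent: str, rate: float, rng: random.Random) -> str:
    """Random-mutagenesis stand-in: each residue is replaced with probability `rate`."""
    return "".join(rng.choice(AMINO_ACIDS) if rng.random() < rate else aa for aa in parent)

def directed_evolution(parent, target, rounds=5, library_size=200, rate=0.02, seed=0):
    rng = random.Random(seed)
    history = [toy_fitness(parent, target)]
    for _ in range(rounds):
        # Diversity generation: a library of random mutants of the current parent.
        library = [random_mutant(parent, rate, rng) for _ in range(library_size)]
        # Screening/selection: keep the best variant. The parent is included,
        # so the best fitness never decreases between rounds.
        parent = max(library + [parent], key=lambda s: toy_fitness(s, target))
        history.append(toy_fitness(parent, target))
    return parent, history

# Example run on a made-up 50-residue starting sequence and target.
rng = random.Random(1)
start = "".join(rng.choice(AMINO_ACIDS) for _ in range(50))
target = "".join(rng.choice(AMINO_ACIDS) for _ in range(50))
best, history = directed_evolution(start, target)
print(history)
```

The sketch also shows the bottleneck described in the text. Progress per round depends on how many mutants can be evaluated, so raising `library_size` is the in-silico analogue of higher screening capacity.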

Semi-Rational Design

Semi-rational approaches reduce reliance on massive libraries by incorporating prior knowledge to target mutations to specific regions [2] [3]. The key steps include:

- Target Identification: Computational and bioinformatic tools are used to identify "hot spots", specific amino acid residues that are likely to influence the protein's function. Common analytical methods include:

  - Multiple Sequence Alignments (MSAs) and phylogenetic analysis to identify evolutionarily variable and conserved positions [2].

  - Structure-based analysis using molecular modeling and molecular dynamics (MD) simulations to identify residues critical for substrate access, stability, or catalysis [2].

  - Specialized software like the HotSpot Wizard and 3DM database, which integrate sequence and structure data to create mutability maps for a target protein [2].

- Focused Library Construction: Instead of mutating the entire gene, techniques like site-saturation mutagenesis are applied to the pre-selected target positions. This allows all possible amino acids to be tested at a specific site, but because only a few sites are targeted, the resulting library remains small (often fewer than 1,000 variants) [2].

- Evaluation: The small, focused library is then screened. The high functional content of these libraries often means that a higher proportion of variants show improvements, and the smaller scale can enable the use of more informative, lower-throughput assays [2].
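Focused libraries stay small only when few positions are randomized. You can check this by counting codon combinations and estimating how many transformants are needed to sample them. The sketch below assumes NNK codons (32 per site). It uses the common oversampling estimate T = -V ln(1 - F), which treats all V codon variants as equally likely. Real libraries carry primer and codon biases, so read the results as lower bounds.

```python
import math

def codon_variants(n_sites: int, codons_per_site: int = 32) -> int:
    """Distinct codon combinations when `n_sites` positions are randomized (NNK = 32 codons)."""
    return codons_per_site ** n_sites

def clones_for_coverage(n_variants: int, coverage: float = 0.95) -> int:
    """Transformants needed so that, on average, a fraction `coverage` of
    equally likely variants is sampled at least once: T = -V * ln(1 - F)."""
    return math.ceil(-n_variants * math.log(1.0 - coverage))

for sites in (1, 2, 3):
    v = codon_variants(sites)
    print(f"{sites} NNK site(s): {v} codon variants, ~{clones_for_coverage(v)} clones for 95% coverage")
```

Under these assumptions, a single NNK site needs about 96 clones for 95% coverage. Three sites already need close to 10^5. This is why focused libraries usually randomize only a handful of positions at a time.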

Emerging Machine Learning-Guided Approaches

Recent advances integrate deep learning to further accelerate protein evolution. The DeepDE algorithm exemplifies this trend [4].

- Library Construction and Training: A compact initial library of approximately 1,000 protein mutants is experimentally created and characterized.

- Iterative Learning and Design: A deep learning model is trained on this data to predict protein function from sequence. The model then designs a new set of protein sequences, often using triple mutants as building blocks to explore a broader sequence space efficiently.

- Experimental Feedback: The top-predicted variants are synthesized and tested, and their experimental performance data is fed back into the model to refine subsequent design rounds. This closed-loop system achieved a 74.3-fold increase in GFP activity over just four rounds, dramatically outperforming traditional directed evolution for this application [4].
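The design, test, and learn loop above can be illustrated without a neural network. The toy below is **not** DeepDE. It swaps in a deliberately simple additive model: each single mutation gets a learned effect, and a triple mutant is scored as the sum of its three effects. The model proposes the top-scoring triple mutants at three distinct positions, "measures" them with a hypothetical oracle, and moves the effects toward the measured values. All mutations, numbers, and helper names are hypothetical. Enumerating every triple is only feasible for the tiny candidate set used here.

```python
import itertools

# A mutation is a (position, amino_acid) tuple; a variant is a tuple of mutations.

def predict(effects, variant):
    """Additive model: predicted fitness change is the sum of per-mutation effects."""
    return sum(effects.get(m, 0.0) for m in variant)

def propose_triples(effects, top_n):
    """Rank all triple mutants whose three mutations fall at distinct positions."""
    ranked = sorted(effects, key=effects.get, reverse=True)
    triples = [
        combo for combo in itertools.combinations(ranked, 3)
        if len({pos for pos, _ in combo}) == 3
    ]
    triples.sort(key=lambda v: predict(effects, v), reverse=True)
    return triples[:top_n]

def update(effects, measured, lr=0.5):
    """Least-squares style update: spread each residual evenly over the variant's mutations."""
    for variant, value in measured.items():
        residual = value - predict(effects, variant)
        for m in variant:
            effects[m] = effects.get(m, 0.0) + lr * residual / len(variant)
    return effects

def closed_loop(effects, measure, rounds=4, top_n=3):
    for _ in range(rounds):
        batch = propose_triples(effects, top_n)       # design
        measured = {v: measure(v) for v in batch}     # build and test (hypothetical oracle)
        effects = update(effects, measured)           # learn
    return effects

# Hypothetical starting estimates and a hidden "true" landscape used as the oracle.
initial = {(1, "A"): 1.0, (1, "G"): 0.5, (2, "K"): 0.8, (3, "L"): 0.3, (4, "V"): -0.2}
hidden = {(1, "A"): 0.2, (1, "G"): 1.5, (2, "K"): 0.8, (3, "L"): 0.3, (4, "V"): 0.9}
learned = closed_loop(dict(initial), measure=lambda v: predict(hidden, v))
print(propose_triples(learned, 1))
```

An additive model ignores epistasis. Capturing interactions between mutations is what a deep model such as DeepDE's is meant to add on top of this loop structure.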

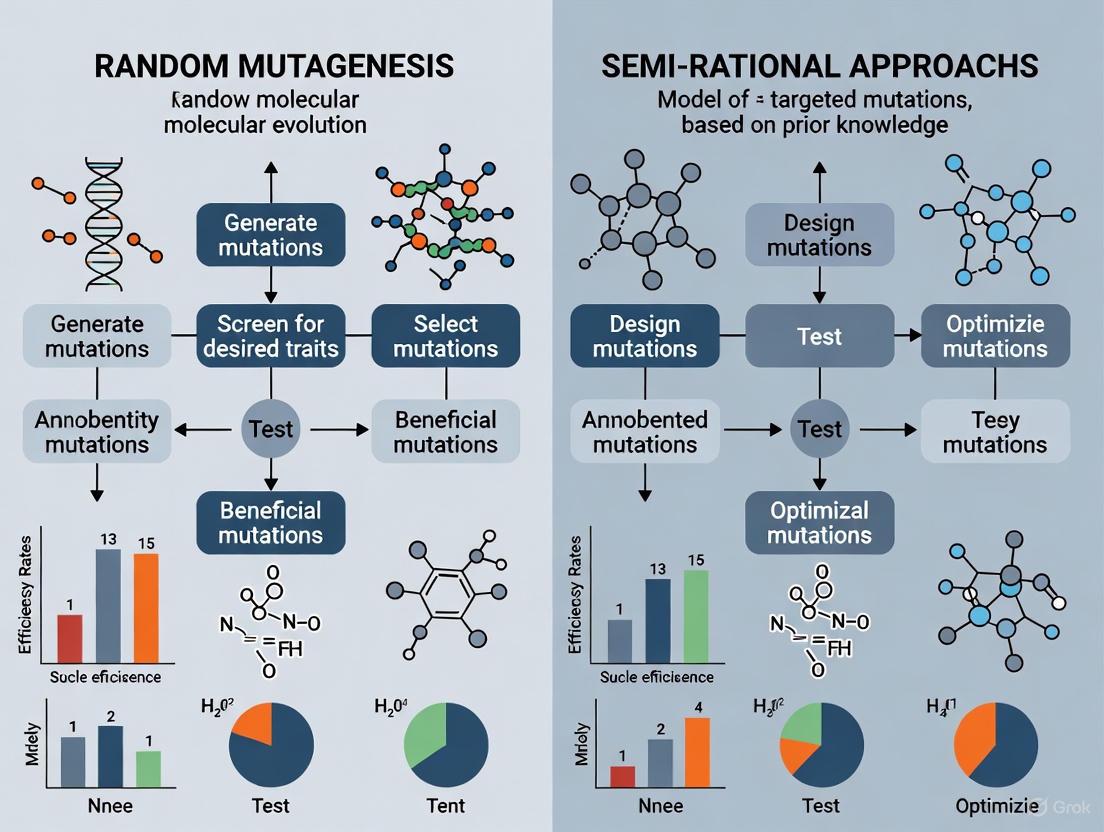

Diagram Title: Comparative Experimental Workflows

Comparative Performance Analysis

Quantitative Comparison of Engineering Approaches

The table below summarizes key characteristics and experimental outcomes of different protein engineering strategies, highlighting differences in library size, efficiency, and typical applications.

Table 1: Performance and Characteristics of Protein Engineering Methods

| Engineering Method | Typical Library Size | Key Mutagenesis Techniques | Screening Requirement | Primary Knowledge Requirement | Reported Experimental Outcome |
|---|---|---|---|---|---|
| Directed Evolution / Random Mutagenesis | Very Large (millions) | Error-prone PCR, DNA shuffling [2] [1] | High-throughput screening/selection [1] | None essential | Iterative improvements over multiple rounds; success depends on screening capacity [1]. |
| Semi-Rational Design | Small (often < 1,000 variants) [2] | Site-saturation mutagenesis at targeted positions [2] | Lower-throughput evaluation possible [2] | Protein sequence, structure, and/or mechanism [2] [3] | 200-fold activity and 20-fold enantioselectivity improvement in Pseudomonas fluorescens esterase [2]. 32-fold activity improvement in Rhodococcus rhodochrous haloalkane dehalogenase [2]. |
| Machine Learning-Guided | Compact (~1,000 for training) [4] | In silico design of triple mutants [4] | Limited screening of selected variants [4] | Large, high-quality training data | 74.3-fold activity increase in GFP in four rounds [4]. |

Analysis of Comparative Advantages

- Functional Efficiency: Semi-rational design consistently demonstrates that smaller, knowledge-driven libraries can yield significant functional gains. For instance, targeting just four specific amino acid positions in Pseudomonas fluorescens esterase based on 3DM superfamily analysis led to variants with a 200-fold improvement in activity and a 20-fold enhancement in enantioselectivity [2]. This highlights the high "functional content" of smart libraries.

- Exploration of Sequence Space: A key limitation of traditional directed evolution is the sparse sampling of possible protein sequences. Machine learning-guided methods like DeepDE address this by using triple mutants as building blocks, enabling more efficient exploration of sequence space with a limited experimental budget [4].

- Iterative Performance: In a direct application for enhancing GFP activity, the DeepDE algorithm achieved a 74.3-fold increase over just four rounds, far surpassing the benchmark superfolder GFP. This demonstrates the power of combining iterative deep learning with affordable experimental screening to mitigate data sparsity problems [4].

The Scientist's Toolkit: Key Research Reagents & Solutions

Successful implementation of these engineering strategies relies on a suite of specialized reagents and computational tools.

Table 2: Essential Research Reagents and Tools for Protein Engineering

| Reagent / Solution / Tool | Function / Description | Relevance to Method |
|---|---|---|
| Error-Prone PCR Kits | Commercial kits designed to introduce random mutations during gene amplification by reducing polymerase fidelity [1]. | Directed Evolution |
| Site-Directed/Site-Saturation Mutagenesis Kits | Kits enabling precise codon changes at specific positions in a gene sequence (e.g., to test all 20 amino acids at a hotspot) [2]. | Semi-Rational Design |
| HotSpot Wizard | An internet-based computational tool that creates a mutability map for a target protein by combining data from sequence and structure databases [2]. | Semi-Rational Design |
| 3DM Database System | A commercial database that integrates protein superfamily sequence and structure data, allowing searches for evolutionary features like correlated mutations [2]. | Semi-Rational Design |
| Fluorescence-Activated Cell Sorter (FACS) | A high-throughput technology used to screen vast libraries of cell-surface displayed proteins or enzymes based on fluorescent signals [1]. | Directed Evolution |
| Robotic Liquid Handling Systems | Automation systems that enable the setup and screening of large numbers of assays with high precision and speed. | Directed Evolution |
| Molecular Dynamics (MD) Simulation Software | Computational tools for simulating physical movements of atoms and molecules, used to study tunnel dynamics and allosteric effects [2]. | Semi-Rational Design |

The field of protein engineering has progressively moved from discovery-based random exploration towards more hypothesis-driven, knowledge-rich strategies. While directed evolution with random mutagenesis remains a powerful and general-purpose tool, its requirement for large-scale screening poses a significant bottleneck [2] [1]. The comparative analysis confirms that semi-rational design effectively addresses this by leveraging computational tools and bioinformatic insights to create small, high-quality libraries, leading to efficient identification of superior biocatalysts without the need for massive screening efforts [2] [3].

The emerging integration of machine learning and deep learning represents a further evolution of these Darwinian principles. By using data from compact but well-designed experimental libraries to train predictive models, these approaches enable a more intelligent and rapid navigation of the fitness landscape, as evidenced by dramatic performance improvements achieved in a few iterative rounds [4]. The future of harnessing Darwinian principles for protein engineering lies in increasingly sophisticated cycles of computational prediction and experimental validation, streamlining the path from concept to optimized enzyme or therapeutic.

In the pursuit of engineering superior biocatalysts and biomolecules, directed evolution has emerged as a transformative technology, harnessing the principles of Darwinian evolution in a laboratory setting to tailor proteins for specific applications [5]. At the heart of any directed evolution campaign lies a critical first step: the generation of genetic diversity. Among the most powerful and widely used methods for creating this diversity are Error-Prone PCR (epPCR) and DNA Shuffling [5] [6]. These techniques represent a "mechanism of chance," exploring vast sequence landscapes through random mutagenesis and recombination. While semi-rational design approaches, which rely on structural and computational data, are gaining traction, random mutagenesis remains indispensable for exploring novel sequence solutions that defy intuitive prediction [7] [6]. This guide provides a comparative analysis of epPCR and DNA Shuffling, detailing their mechanisms, protocols, and applications to inform strategic decisions in research and drug development.

Core Principles and Comparative Mechanics

Error-Prone PCR and DNA Shuffling operate on distinct principles, leading to different types and distributions of genetic diversity.

Error-Prone PCR (epPCR)

epPCR is a modified polymerase chain reaction designed to introduce point mutations randomly throughout the amplified gene [8] [5]. This is achieved by creating reaction conditions that reduce the fidelity of the DNA polymerase. Key strategies include:

- Using Low-Fidelity Polymerases: Employing polymerases like Taq polymerase that lack 3' to 5' proofreading exonuclease activity [5].

- Altering dNTP Concentrations: Creating an imbalance in the concentrations of the four deoxynucleotide triphosphates (dNTPs) [5].

- Adding Manganese Ions: The inclusion of Mn²⁺ is a critical factor in reducing polymerase fidelity and increasing the error rate [8] [5].

A significant limitation of epPCR is its inherent mutational bias. DNA polymerases favor transition mutations (purine-to-purine or pyrimidine-to-pyrimidine) over transversion mutations (purine-to-pyrimidine or vice versa) [5]. epPCR also rarely changes more than one nucleotide within the same codon. Because of the structure of the genetic code, a single-nucleotide change can reach only a subset of amino acids. As a result, epPCR can access an average of only 5–6 of the 19 possible alternative amino acids at any given position, which constrains the explorable sequence space [5].
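The codon-level constraint can be checked directly against the genetic code. The sketch below builds the standard genetic code from its compact 64-letter TCAG-ordered string. For a given codon, it lists the amino acids reachable by one nucleotide substitution, optionally restricted to transitions to mimic the polymerase bias. Stop codons and the original residue are excluded. The function names are illustrative. The printed averages over the 61 sense codons can be compared with the 5–6 figure quoted above.

```python
BASES = "TCAG"
# Standard genetic code in TCAG order (first base varies slowest); '*' marks stop codons.
AA_TABLE = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {
    a + b + c: AA_TABLE[16 * i + 4 * j + k]
    for i, a in enumerate(BASES)
    for j, b in enumerate(BASES)
    for k, c in enumerate(BASES)
}
TRANSITION = {"A": "G", "G": "A", "C": "T", "T": "C"}

def reachable_aas(codon: str, transitions_only: bool = False) -> set:
    """Amino acids reachable from `codon` by one nucleotide substitution,
    excluding the original residue and stop codons."""
    original = CODON_TABLE[codon]
    reached = set()
    for pos, base in enumerate(codon):
        alternatives = TRANSITION[base] if transitions_only else [b for b in BASES if b != base]
        for alt in alternatives:
            aa = CODON_TABLE[codon[:pos] + alt + codon[pos + 1:]]
            if aa not in ("*", original):
                reached.add(aa)
    return reached

sense_codons = [c for c, aa in CODON_TABLE.items() if aa != "*"]
for label, flag in (("all single substitutions", False), ("transitions only", True)):
    mean = sum(len(reachable_aas(c, flag)) for c in sense_codons) / len(sense_codons)
    print(f"{label}: {mean:.2f} of 19 alternatives on average")
```

For example, the tryptophan codon TGG reaches only R, G, S, L, and C by any single change. With transitions alone, it reaches only R.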

DNA Shuffling

DNA Shuffling, also known as "sexual PCR," is a recombination-based method that mimics natural homologous recombination [5] [9]. Instead of introducing solely new point mutations, its primary power lies in recombining existing beneficial mutations from multiple parent genes. The process involves:

- Random Fragmentation: The starting gene or a pool of related genes is randomly fragmented using an enzyme like DNase I [5] [9].

- Reassembly: The small fragments are reassembled in a primerless PCR reaction. During the annealing step, homologous fragments from different templates can overlap and prime each other, resulting in template switching and crossovers [5]. This creates a library of chimeric genes containing novel combinations of sequences from the parent pool.

- Introduction of New Mutations: The reassembly process itself can introduce additional point mutations, typically at a rate of about 0.7% [9].

A powerful extension is Family Shuffling, which recombines homologous genes from different species, providing access to a broader and more functionally relevant region of sequence space than mutating a single gene [5]. A key requirement for efficient DNA shuffling is that the parental genes must share sufficient sequence homology (typically >70-75%) for correct reassembly [5].
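The homology requirement can be made concrete by measuring identity between two parents and listing where a template switch could happen. The sketch below makes simplifying assumptions. The parents are already aligned and of equal length (no gaps). A crossover is allowed only inside a perfectly identical window of `min_overlap` bases, which stands in for the annealing overlap between fragments. The sequences and helper names are made up for illustration.

```python
import random

def percent_identity(a: str, b: str) -> float:
    """Percent identity of two pre-aligned, equal-length sequences."""
    if len(a) != len(b):
        raise ValueError("sequences must be aligned to equal length")
    return 100.0 * sum(x == y for x, y in zip(a, b)) / len(a)

def crossover_sites(a: str, b: str, min_overlap: int) -> list:
    """Start indices of windows where both parents are identical for
    `min_overlap` bases, i.e. where a fragment could anneal to either template."""
    return [
        i for i in range(len(a) - min_overlap + 1)
        if a[i:i + min_overlap] == b[i:i + min_overlap]
    ]

def single_crossover_chimera(a: str, b: str, min_overlap: int, rng: random.Random) -> str:
    """Toy chimera: copy parent `a` up to a shared window, then continue on parent `b`."""
    sites = crossover_sites(a, b, min_overlap)
    if not sites:
        return a  # no shared window, so no crossover in this simplified model
    cut = rng.choice(sites)
    return a[:cut] + b[cut:]

p1, p2 = "ACGTACGTAA", "ACGTTCGTAA"
print(percent_identity(p1, p2), crossover_sites(p1, p2, 4))
```

As identity drops, shared windows become rarer and cluster in conserved regions. This matches the bias toward crossovers in regions of high identity.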

Comparative Analysis: epPCR vs. DNA Shuffling

The table below summarizes the fundamental differences between these two techniques.

Table 1: Fundamental Comparison of Error-Prone PCR and DNA Shuffling

| Feature | Error-Prone PCR (epPCR) | DNA Shuffling |
|---|---|---|
| Core Principle | Random point mutagenesis via low-fidelity amplification [5] | Recombination of homologous gene fragments [5] [9] |
| Primary Outcome | Library of point mutants | Library of chimeric genes |
| Mutation Rate | Tunable, typically 1–5 base mutations/kb [5] | Point mutation rate ~0.7%; recombines existing variation [9] |
| Key Advantage | Simple, requires no prior sequence information [6] | Rapidly combines beneficial mutations; can access large functional leaps [5] |
| Inherent Bias | Biased toward transition mutations and limited amino acid substitutions [5] | Requires sequence homology; crossovers favored in regions of high identity [5] |
| Ideal Use Case | Initial exploration of sequence space from a single parent gene | Optimizing and recombining mutations from multiple leads or homologous genes [5] |
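The tunable epPCR mutation rate translates into a distribution of mutations per clone. A common first approximation is to treat mutations as independent events. The count per gene is then Poisson-distributed with mean λ = rate × gene length. This approximation is an assumption introduced here, not a claim from the cited sources. The sketch shows how the fractions of unmutated (wild-type) and singly mutated clones shift across the 1–5 mutations/kb range for a hypothetical 1.5 kb gene.

```python
import math

def poisson_pmf(k: int, lam: float) -> float:
    """Probability of exactly k events for a Poisson distribution with mean `lam`."""
    return math.exp(-lam) * lam ** k / math.factorial(k)

def mutation_spectrum(rate_per_kb: float, gene_length_bp: int, max_k: int = 4) -> dict:
    """Fraction of clones carrying k mutations (k = 0..max_k) under a Poisson model."""
    lam = rate_per_kb * gene_length_bp / 1000.0
    return {k: poisson_pmf(k, lam) for k in range(max_k + 1)}

for rate in (1, 3, 5):
    spectrum = mutation_spectrum(rate, 1500)
    print(f"{rate} mut/kb: wild-type {spectrum[0]:.1%}, single mutants {spectrum[1]:.1%}")
```

Raising the rate cuts the wasted wild-type fraction but increases multiply mutated clones. Those clones are more likely to carry a deleterious hit alongside any beneficial one.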

Experimental Protocols and Workflows

The practical application of these techniques involves standardized, yet optimizable, laboratory protocols.

Error-Prone PCR Protocol

The following protocol, adapted from standard methodologies, outlines the key steps for performing epPCR [8] [5].

- Reaction Assembly: On ice, combine the following in a PCR tube:

  - 100 ng template DNA.

  - 10 µL of 10× Polymerase buffer.

  - 10 µL of 2 mM dNTP Mix.

  - 1 µL of 100 µM Forward primer.

  - 1 µL of 100 µM Reverse primer.

  - 1 µL of 5 U/µL Polymerase (e.g., non-proofreading Taq polymerase).

  - Nuclease-free H₂O to a final volume of 100 µL [8].

- Initial Amplification: Run 10 cycles of a standard PCR program (e.g., 94°C for 30 s, 55-65°C for 30 s, 72°C for 30 s/kb) to generate a large pool of template DNA [8].

- Mutagenic Amplification: Add 1 µL of 500 mM MnCl₂ to the reaction, mix well, and run an additional 30 cycles using the same PCR program. The addition of manganese is crucial for reducing fidelity and introducing errors [8].

- Cloning and Screening: The resulting PCR product is purified, subcloned into an appropriate expression vector, and transformed into a host organism (e.g., E. coli). Individual colonies are then screened for the desired functional improvements [8].
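Several components in the protocol are given as stock volumes. A quick C₁V₁ = C₂V₂ check makes the final reaction concentrations explicit. The sketch below applies that dilution formula to the volumes listed above. It assumes that the 2 mM dNTP stock is 2 mM of each nucleotide. It also assumes that the MnCl₂ is added to the full 100 µL reaction, making the final volume 101 µL.

```python
def final_concentration(stock: float, volume_added_ul: float, total_volume_ul: float) -> float:
    """C1 * V1 = C2 * V2, solved for C2 (result is in the same units as `stock`)."""
    return stock * volume_added_ul / total_volume_ul

reaction_ul = 100.0
print("dNTPs (uM each):", final_concentration(2000, 10, reaction_ul))  # assumes 2 mM of each dNTP
print("each primer (uM):", final_concentration(100, 1, reaction_ul))
print("template (ng/uL):", 100 / reaction_ul)
# MnCl2 is added after the first 10 cycles, so the volume grows to 101 uL.
print("MnCl2 (mM):", round(final_concentration(500, 1, reaction_ul + 1), 2))
```

Because MnCl₂ concentration is the main lever on mutation frequency, any change to its stock or added volume should be recomputed this way before it is compared with published conditions.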

DNA Shuffling Protocol

This protocol, based on established kits and literature, describes the process for single-gene shuffling [9].

- DNase I Fragmentation: In a total volume of 50 µL, combine 0.5-2 µg of the starting DNA(s) with 5 µL of 10× Digestion Buffer and 0.1 units of DNase I per µg of DNA. Incubate at 37°C for a short period (e.g., 1-8 minutes) to generate random fragments of the desired size (e.g., 70-280 bp). The reaction is stopped with a Stop Solution and heat-inactivated [9].

- Fragment Purification: The digested DNA fragments are separated by agarose gel electrophoresis, and fragments of the target size range are excised and purified from the gel.

- Primerless Reassembly PCR: In a 50 µL reaction, combine the purified fragments (10-20 ng/µL) with 5 µL of 10× Shuffling Buffer, 1 µL of dNTP mix, and 2.5 units of Taq Polymerase. Run 30-45 cycles of PCR (e.g., 94°C for 90 s, 55°C for 30 s, 72°C for 30 s). In this step, the fragments prime each other, leading to recombination as the polymerase extends overlapping regions [9].

- Full-Length Gene Amplification: Use 2 µL of the reassembly PCR product as the template in a standard PCR reaction containing gene-specific primers. This amplifies the full-length, reassembled genes. The final product is then cloned and screened [9].
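The fragmentation step depends on tuning digestion time so that fragments fall in the 70–280 bp window. A crude model shows why. It treats DNase I cleavage as uniformly random cuts whose number grows with digestion time. This is an assumption for illustration only, and it ignores any sequence preferences of the enzyme. The sketch reports what fraction of the DNA mass would survive gel size selection for a hypothetical 1.5 kb gene. The helper names are illustrative.

```python
import random

def fragment(length_bp: int, n_cuts: int, rng: random.Random) -> list:
    """Cut a linear molecule at `n_cuts` distinct, uniformly random positions."""
    cuts = sorted(rng.sample(range(1, length_bp), n_cuts))
    edges = [0] + cuts + [length_bp]
    return [end - start for start, end in zip(edges, edges[1:])]

def mass_in_window(fragments: list, lo: int = 70, hi: int = 280) -> float:
    """Fraction of total DNA mass in fragments of lo..hi bp (the gel-excised range)."""
    total = sum(fragments)
    kept = sum(f for f in fragments if lo <= f <= hi)
    return kept / total

rng = random.Random(0)
for n_cuts in (2, 10, 40, 150):  # more cuts ~ longer digestion (hypothetical scaling)
    fractions = [mass_in_window(fragment(1500, n_cuts, rng)) for _ in range(200)]
    print(n_cuts, "cuts:", round(sum(fractions) / len(fractions), 2))
```

Both under- and over-digestion leave little material in the target window. This is consistent with the protocol's short, carefully timed incubation.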

Workflow Visualization

The diagram below illustrates the logical workflow and key differences between the two techniques.

Performance and Experimental Data in Practice

The true test of any protein engineering method lies in its practical outcomes. Both epPCR and DNA shuffling have proven highly effective in enhancing key enzyme properties such as product specificity, thermostability, and activity across a broad pH range.

Enhancing Product Specificity and pH Range

A landmark study on a γ-cyclodextrin glucanotransferase (CGTase) from Bacillus sp. shows the two techniques applied in sequence [10]. Researchers performed two rounds of low-frequency epPCR followed by DNA shuffling to evolve variants with higher product specificity for γ-cyclodextrin (CD8) and a broader pH activity profile.

Table 2: Experimental Outcomes from Directed Evolution of CGTase [10]

| Variant | Technique(s) Used | Key Amino Acid Substitutions | Improved Property | Performance Data |
|---|---|---|---|---|
| S54 | epPCR + DNA Shuffling | N187D, A248V, V252E, H352L, D465G, E560V, E687G | Product Specificity | 1.2-fold increase in CD8-synthesizing activity; product ratio (CD7:CD8) shifted to 1:7 from wild-type's 1:3. |
| S35 | epPCR + DNA Shuffling | E39K, T66S, L71P, I101L, S461G, E472G, V605A, N606K, R684H | pH Activity Range | Active in pH 4.0–10.0 (vs. wild-type inactive below pH 6.0); retained 70% activity at pH 4.0. |
| S80 | epPCR + DNA Shuffling | S184G, Y662F, N670D | pH Activity Range | Active between pH 4.0 and 9.5; retained 14% activity at pH 4.0. |

This study highlights a critical strategic insight: while epPCR can identify beneficial point mutations, DNA shuffling is exceptionally effective at combining these mutations from different lineages to achieve synergistic effects and novel properties not present in any single parent [10].

Industrial Application of DNA Shuffling

The power of DNA shuffling is further demonstrated in industrial-scale metabolic engineering. The gene aveC, which modulates the production ratio of the anthelmintic drug doramectin to a less desirable analog (CHC-B2), was subjected to iterative rounds of "semi-synthetic" DNA shuffling [11]. The best-evolved aveC variant, containing 10 amino acid mutations, conferred a final CHC-B2:doramectin ratio of 0.07:1, a 23-fold improvement over the wild-type gene [11]. The evolved gene was then introduced into a high-titer production strain. The result was a commercially viable process that reduces by-product formation and provides significant cost savings [11].

Essential Research Reagent Solutions

Successful implementation of these techniques relies on a core set of reagents and kits.

Table 3: Key Reagents for Random Mutagenesis Experiments

| Reagent / Kit | Function | Specific Example / Note |
|---|---|---|
| Non-Proofreading DNA Polymerase | Catalyzes DNA amplification with reduced fidelity in epPCR. | Taq polymerase is commonly used [5]. |
| Manganese Chloride (MnCl₂) | Critical additive to reduce polymerase fidelity and increase mutation rate in epPCR [8] [5]. | Concentration is optimized to tune mutation frequency. |
| Unbalanced dNTP Mix | Creates nucleotide pool imbalance, contributing to polymerase errors in epPCR [5]. | |
| DNase I | Enzyme used to randomly fragment DNA for the shuffling process [9]. | Digestion time is carefully controlled to achieve desired fragment size. |
| DNA Shuffling Kit | Provides optimized, ready-to-use reagents for the entire shuffling workflow. | JBS DNA-Shuffling Kit includes DNase I, dedicated buffers, stop solution, and polymerase [9]. |

Error-Prone PCR and DNA Shuffling are foundational tools in the directed evolution arsenal. epPCR excels in the initial exploration of the sequence space surrounding a single parent gene, while DNA Shuffling is unparalleled in its ability to recombine beneficial mutations to achieve synergistic improvements and access large functional leaps [10] [5].

The most successful protein engineering campaigns often employ these methods not in isolation, but as complementary, sequential steps [5] [6]. A common strategy involves using an initial round of epPCR to identify "hotspots" for improvement, followed by DNA shuffling to recombine the best mutations from different variants. This combined approach can effectively navigate the fitness landscape of a protein, mitigating the individual limitations of each method and accelerating the path to a high-performance enzyme. For researchers embarking on optimizing proteins for drug development or industrial biocatalysis, a strategic integration of these "mechanisms of chance" remains a powerfully effective route to discovery.

For decades, directed evolution—iterative rounds of random mutagenesis and screening—served as the cornerstone of protein engineering, enabling the tailoring of enzymes for industrial and synthetic applications without requiring intricate structural knowledge [12] [2]. However, this approach faces significant limitations, primarily the necessity to screen excessively large libraries, often encompassing millions of variants, to identify beneficial mutations [12] [2]. The burgeoning availability of protein structural information and powerful computational tools has catalyzed a paradigm shift toward more informed design strategies. This guide objectively compares these methodologies, focusing on the rising implementation of semi-rational design, which synergistically combines the exploratory power of random mutagenesis with the predictive precision of structure-based reasoning [12] [13]. By targeting diversity to specific, functionally rich regions, semi-rational approaches create "smart" libraries that drastically reduce screening burdens and increase the likelihood of success, offering a powerful alternative to traditional methods [12] [2].

Methodology Comparison: Core Principles and Workflows

Traditional Directed Evolution (Random Mutagenesis)

- Core Principle: This method introduces random mutations throughout the entire gene of interest, mimicking natural evolution in an accelerated time frame. It does not require prior structural knowledge of the protein [12] [2].

- Typical Workflow: The process involves iterative cycles of (1) creating a diverse library of gene variants via random mutagenesis techniques (e.g., error-prone PCR), (2) expressing these variants, and (3) screening or selecting for improved phenotypes using high-throughput methods [2].

- Key Limitation: Its primary bottleneck is the enormous screening burden, as the vast majority of random mutations are neutral or deleterious. This necessitates robust high-throughput screening assays capable of evaluating millions of clones [12].

Semi-Rational and Rational Design

- Core Principle: These approaches utilize prior knowledge of protein sequence, structure, and function to make informed decisions about which residues to mutate [2] [13].

- Semi-Rational Design: Identifies "hotspot" residues based on evolutionary analysis (e.g., using 3DM, HotSpot Wizard) or structural inspection. These targeted positions are then randomized to create focused libraries [2] [13].

- Rational Design: Relies on detailed mechanistic and structural understanding to predict specific amino acid substitutions that will confer a desired function. This often involves computational docking and molecular dynamics simulations [13].

- Typical Workflow: The process is more targeted: (1) Analyze the protein using sequence alignment and structural data to identify key residues, (2) Create a focused library via saturation mutagenesis of these hotspots, and (3) Screen the resulting, smaller library for improved variants [2] [14].

The diagram below illustrates the typical workflow for a semi-rational design campaign, from target analysis to final variant validation.

Performance Data: A Quantitative Comparison

The following tables summarize experimental data from a comparative study of random mutagenesis and semi-rational design. They also give a general comparison of the resources each approach requires.

Table 1: Comparative Engineering of Cytochrome P450 BM3 [15]

| Engineering Approach | Library Size | Fraction of Functional Variants | Key Outcome |
|---|---|---|---|
| Random Mutagenesis | Not Specified | Lower | Baseline for comparison |
| Semi-Rational (CSSM) | 343-1028 | Higher | Propane-hydroxylating variants identified; >75% of library folded |
| Semi-Rational (CRAM) | 343-1028 | Highest | 16,800 propane turnovers; highest number of active variants |

Table 2: General Workflow and Resource Comparison

| Parameter | Random Mutagenesis | Semi-Rational Design |
|---|---|---|
| Required Prior Knowledge | Low | High (Structure/Sequence data) |
| Typical Library Size | Very Large (10^6–10^9) | Focused (10^2–10^4) [2] |
| Screening Throughput | Must be very high | Can be medium-to-low |
| Iterations to Success | Often many | Fewer [2] |
| Capital Investment | High (for automation) | Shifted to computational resources |

Experimental Protocols in Practice

Protocol for Semi-Rational Library Construction

This protocol outlines the creation of a diversified gene or promoter library using overlap extension PCR, a common semi-rational technique [14].

- Step 1: Library Design and Oligo Synthesis. Identify target codons for randomization based on sequence or structural data. Design forward and reverse primers containing degenerate codons (e.g., NNK, where N=A/T/C/G and K=G/T) for the target sites, flanked by 15-20 base pairs of homologous sequence.

- Step 2: Primary PCR - Fragment Generation. Perform the first PCR to generate DNA fragments. The reaction mix includes: template DNA (e.g., 100 ng), forward and reverse degenerate primers (0.5 µM each), dNTPs (200 µM), high-fidelity polymerase, and corresponding buffer. Use the following cycling conditions: initial denaturation at 98°C for 30 sec; 25 cycles of [98°C for 10 sec, 55-65°C for 15 sec, 72°C for 15 sec/kb]; final extension at 72°C for 5 min.

- Step 3: Secondary PCR - Fragment Assembly. Purify the PCR fragments from Step 2. Use these fragments as overlapping megaprimers in a second PCR assembly reaction with minimal additional cycles (e.g., 10-15 cycles) to build the full-length, diversified gene.

- Step 4: Library Transformation. Purify the assembled DNA product and transform it into a suitable expression host (e.g., E. coli) via electroporation to create the library. The resulting library diversity can range from 10^4 to 10^7 variants [14].
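Step 1 (primer design with degenerate codons) can be sketched as simple string manipulation. The helpers below are hypothetical and not taken from any specific tool. They replace one in-frame codon of a template with NNK and add `flank` bases of homology on each side (18 here, within the 15–20 bp range above). They also return the complementary primer using IUPAC complements (K pairs with M) and expand a degenerate codon to confirm what NNK encodes. The template sequence is made up.

```python
import itertools

IUPAC = {"A": "A", "C": "C", "G": "G", "T": "T", "N": "ACGT", "K": "GT", "M": "AC",
         "S": "CG", "W": "AT", "R": "AG", "Y": "CT"}
COMPLEMENT = {"A": "T", "T": "A", "G": "C", "C": "G", "N": "N", "K": "M", "M": "K",
              "S": "S", "W": "W", "R": "Y", "Y": "R"}
BASES = "TCAG"
# Standard genetic code in TCAG order; '*' marks stop codons.
AA_TABLE = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {a + b + c: AA_TABLE[16 * i + 4 * j + k]
               for i, a in enumerate(BASES) for j, b in enumerate(BASES) for k, c in enumerate(BASES)}

def reverse_complement(seq: str) -> str:
    """Reverse complement, including IUPAC degenerate bases."""
    return "".join(COMPLEMENT[b] for b in reversed(seq))

def saturation_primers(template: str, codon_index: int, flank: int = 18, degenerate: str = "NNK"):
    """Complementary mutagenic primers randomizing codon `codon_index` (0-based, in frame)."""
    start = 3 * codon_index
    left = template[max(0, start - flank):start]
    right = template[start + 3:start + 3 + flank]
    forward = left + degenerate + right
    return forward, reverse_complement(forward)

def expand(degenerate_codon: str) -> list:
    """All concrete codons encoded by a degenerate codon."""
    return ["".join(p) for p in itertools.product(*(IUPAC[b] for b in degenerate_codon))]

codons = expand("NNK")
encoded = {CODON_TABLE[c] for c in codons}
print(len(codons), "codons;", len(encoded - {"*"}), "amino acids;",
      [c for c in codons if CODON_TABLE[c] == "*"], "stop")
```

The expansion shows why NNK is a popular choice. It covers all 20 amino acids with 32 codons and only one stop codon (TAG), versus three stops for NNN.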

Protocol for Screening via Fluorescence-Activated Cell Sorting (FACS)

For phenotypes that can be linked to a fluorescent reporter, FACS provides an ultra-high-throughput screening method [14].

- Step 1: Reporter System Construction. Engineer a host cell where the activity of the engineered protein or promoter directly regulates the expression of a fluorescent protein (e.g., GFP).

- Step 2: Cell Preparation and Sorting. Grow the library of transformed cells under inducing conditions. Harvest cells during mid-log phase and resuspend in a suitable buffer for FACS analysis. Use a FACS instrument to sort the cell population based on fluorescence intensity, gating for cells displaying the desired signal (e.g., high fluorescence for gain-of-function mutants).

- Step 3: Iteration and Validation. Typically, 3-5 rounds of positive and negative sorting are performed to enrich for the best variants. After the final sort, spread cells on agar plates to isolate single clones. Pick these colonies for sequencing and subsequent validation assays to confirm the improved phenotype [14].
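The benefit of repeated sorting rounds can be estimated with a simple enrichment model, assumed here for illustration. Each positive sort multiplies the odds of picking an improved clone by a constant enrichment factor E. The starting frequency and E below are hypothetical. In real sorts, enrichment varies with gate stringency, expression noise, and cell recovery.

```python
def enriched_fraction(initial_fraction: float, enrichment: float, rounds: int) -> float:
    """Fraction of improved clones after `rounds` sorts, assuming each round
    multiplies their odds (improved : other) by `enrichment`."""
    odds = initial_fraction / (1.0 - initial_fraction)
    odds *= enrichment ** rounds
    return odds / (1.0 + odds)

# Hypothetical library: 1 improved clone per 10,000, 20-fold enrichment per sort.
for r in range(6):
    print(r, f"{enriched_fraction(1e-4, 20, r):.3%}")
```

Under these assumptions, the improved fraction stays small for the first rounds and then rises steeply. This is one way to read the 3–5 rounds typically used before plating single clones.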

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 3: Key Reagents for Semi-Rational Design and Screening

| Reagent / Solution | Function | Example Use Case |
|---|---|---|
| Degenerate Primers | Introduces controlled diversity at specific codons during PCR. | Saturation mutagenesis of active site residues [14]. |
| High-Fidelity PCR Mix | Amplifies DNA fragments with minimal error rates. | Constructing large, high-quality gene libraries [14]. |
| Fluorescent Reporter Plasmid | Serves as a biosensor for the target activity. | FACS-based screening of promoter or enzyme libraries [14]. |
| 3DM / HotSpot Wizard | Bioinformatics platforms for evolutionary analysis. | Identifying mutable "hotspot" residues from protein superfamilies [2]. |
| CAVER Software | Analyzes tunnels and channels in protein structures. | Engineering substrate access tunnels in enzymes like haloalkane dehalogenase [2] [13]. |
| Rosetta Software Suite | Models protein structures and designs sequences. | De novo enzyme design and optimizing active sites [13]. |

Recent Advances and Future Perspectives

The field of semi-rational design is being profoundly transformed by the integration of artificial intelligence (AI) and more sophisticated computational models. Generative AI models, including variational autoencoders (VAEs) and diffusion models, are now being used to navigate chemical and proteomic spaces, proposing novel protein sequences and bioactive small molecules with predefined properties [16] [17]. These tools can predict how mutations affect folding and function, further reducing the experimental burden [17].

Furthermore, the convergence of advanced experimental techniques like NMR-driven structure-based drug discovery (NMR-SBDD) is helping to overcome limitations of traditional methods like X-ray crystallography. NMR can provide dynamic structural information in solution and reveal critical details about hydrogen bonding and protein-ligand dynamics, offering richer data for the rational design process [18]. The future of protein engineering lies in the tight integration of these powerful computational and experimental methodologies, creating closed-loop systems that accelerate the design-build-test cycle for developing next-generation biocatalysts and therapeutics [16] [17].

Protein engineering relies on mutagenesis techniques to alter gene sequences, thereby creating novel proteins with improved or entirely new functions. Within this field, site-saturation mutagenesis (SSM) and combinatorial mutagenesis represent two powerful, yet distinct, strategies. SSM is a focused approach that systematically randomizes a single codon or a defined set of codons to generate all possible amino acid substitutions at a specific position [19] [20]. In contrast, combinatorial mutagenesis randomizes multiple positions simultaneously, creating vast libraries of variants that explore the functional potential of interactions between distant sites in a protein structure [21]. These methodologies occupy different points on the spectrum of protein engineering, with SSM often being a tool for semi-rational design based on structural or evolutionary data, and combinatorial mutagenesis enabling a broader, more exploratory search of sequence space. This guide provides a comparative analysis of these two key methods, framing them within the broader context of random versus semi-rational mutagenesis approaches.

Principles and Methodologies

Site-Saturation Mutagenesis (SSM)

Core Principle: SSM is designed to answer a specific question: which amino acid is optimal at a single, pre-determined position in a protein? It involves replacing a specific codon with a degenerate codon, that is, a codon synthesized with nucleotide mixtures at one or more positions so that it encodes all or most of the 20 standard amino acids [19] [20]. This method is ideal for probing the functional role of a particular residue, such as one in an active site, or for creating a limited, "saturated" library around a known beneficial region.

Key Methodological Details: The most critical aspect of SSM is the choice of the degenerate codon. A fully random 'NNN' codon (where N represents an equimolar mixture of A, T, G, and C) generates 64 possible codons, covering all 20 amino acids but also including three stop codons. To improve efficiency, alternative codon schemes are preferred [19].

Table 1: Common Degenerate Codons Used in SSM

| Degenerate Codon | No. of Codons | No. of Amino Acids | No. of Stops | Amino Acids Encoded |
|---|---|---|---|---|
| NNN | 64 | 20 | 3 | All 20 amino acids |
| NNK / NNS | 32 | 20 | 1 | All 20 amino acids |
| NDT | 12 | 12 | 0 | R, N, D, C, G, H, I, L, F, S, Y, V |
| DBK | 18 | 12 | 0 | A, R, C, G, I, L, M, F, S, T, W, V |

As shown in Table 1, codons like NNK (where K is G or T) or NNS (where S is G or C) reduce the codon set to 32, encoding all 20 amino acids with only one stop codon [19]. For even more focused libraries, codons like NDT or DBK can be used to create a restricted set of 12 amino acids that cover a range of biophysical properties (e.g., charged, hydrophobic, polar) while completely eliminating stop codons [19].
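The counts in Table 1 follow directly from expanding each IUPAC ambiguity code and translating the result with the standard genetic code. The sketch below is an illustrative check, and the helper names are hypothetical. It reproduces the codon, amino acid, and stop counts for each scheme.

```python
from itertools import product

# IUPAC nucleotide ambiguity codes used in degenerate primers
IUPAC = {
    "A": "A", "C": "C", "G": "G", "T": "T",
    "R": "AG", "Y": "CT", "S": "CG", "W": "AT", "K": "GT", "M": "AC",
    "B": "CGT", "D": "AGT", "H": "ACT", "V": "ACG", "N": "ACGT",
}

# Standard genetic code; codons enumerated in T, C, A, G order ('*' = stop)
BASES = "TCAG"
AA = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODE = {"".join(c): AA[n] for n, c in enumerate(product(BASES, repeat=3))}

def expand(degenerate):
    """List every concrete codon encoded by a degenerate codon such as 'NNK'."""
    return ["".join(p) for p in product(*(IUPAC[ch] for ch in degenerate.upper()))]

def summarize(degenerate):
    """Return (codons, distinct amino acids, stop codons, amino acid letters)."""
    codons = expand(degenerate)
    residues = [CODE[c] for c in codons]
    amino_acids = sorted(set(residues) - {"*"})
    return len(codons), len(amino_acids), residues.count("*"), "".join(amino_acids)

for scheme in ("NNN", "NNK", "NNS", "NDT", "DBK"):
    print(scheme, summarize(scheme))
```

The same function can be used to evaluate custom codon mixtures before ordering primers.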

Experimentally, SSM is commonly performed using PCR-based methods. A prominent one-step technique uses partially overlapping primers containing the degenerate codon for site-directed mutagenesis [22]. For "difficult-to-randomize" genes—those with high GC-content, secondary structures, or contained in large plasmids—a two-step megaprimer PCR method has proven superior. This method first amplifies a short gene fragment using one mutagenic and one non-mutagenic primer. The purified fragment is then used as a megaprimer in a second PCR to amplify the entire plasmid, leading to higher-quality libraries with less parental template contamination [22].

Combinatorial Mutagenesis

Core Principle: Combinatorial mutagenesis aims to explore the synergistic effects of mutations across multiple amino acid positions. Instead of focusing on one site, it creates libraries where multiple residues are randomized at the same time, either fully randomly or from a defined set of possibilities at each position [21]. The size of such a library grows exponentially with the number of randomized positions (e.g., 20ⁿ for n positions with all 20 amino acids), making comprehensive experimental screening often impossible [19] [21].

Key Methodological Details: The traditional approach involves designing primers with degenerate codons at multiple target sites. However, the immense size of the resulting sequence space is a major bottleneck. For example, a library targeting just 8 positions would contain 20⁸ (over 25 billion) theoretical variants, far beyond the screening capacity of most laboratories [21].
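The exponential growth can be made concrete with a short calculation. The sketch below reports protein-level and codon-level library sizes and estimates how many sites fit a given screening budget. It assumes threefold oversampling (about 95% coverage for an equiprobable library), and the function names are illustrative.

```python
def library_sizes(n_positions, aa_per_site=20, codons_per_site=32):
    """Theoretical protein-level and DNA-level library sizes for n randomized sites.
    Defaults correspond to full saturation with an NNK/NNS codon (32 codons)."""
    return aa_per_site ** n_positions, codons_per_site ** n_positions

def max_sites(screening_capacity, codons_per_site=32, oversampling=3.0):
    """Largest n such that oversampling * codons_per_site**n fits the screening budget.
    The 3x oversampling default is an assumption (~95% coverage, equiprobable variants)."""
    n = 0
    while oversampling * codons_per_site ** (n + 1) <= screening_capacity:
        n += 1
    return n

proteins, codons = library_sizes(8)
print(f"8 sites: {proteins:,} protein variants, {codons:,} NNK codon combinations")
print("Sites screenable with 10^4 clones (NNK):", max_sites(10**4))
print("Sites screenable with 10^4 clones (NDT):", max_sites(10**4, codons_per_site=12))
```

The gap between 20⁸ protein variants and 32⁸ codon combinations shows why reduced codon sets such as NDT are attractive when several sites must be randomized at once.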

To overcome this, machine learning (ML)-coupled combinatorial mutagenesis has emerged as a powerful strategy. In this approach:

- A focused combinatorial library is designed, typically based on structural information, targeting several key residues.

- A relatively small, but diverse, subset of this library (e.g., thousands of variants) is experimentally screened for the desired activity [21].

- The resulting data is used to train a machine learning model (e.g., random forests, neural networks) to predict the fitness of all other variants in the virtual library.

- The model prioritizes the top-performing predicted variants for further experimental validation, dramatically reducing the screening burden [21].

This ML-coupled approach has been shown to reduce experimental screening by as much as 95% while enriching for top-performing variants by approximately 7.5-fold compared to random screening [21].
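The train-then-rank loop above can be sketched without any ML library. This is a deliberately simple substitute, not the random forests or neural networks named in the cited work. It fits an additive per-site model by backfitting (iterated per-site means of partial residuals), scores every unscreened member of the virtual library, and returns the top candidates. An additive model ignores epistasis, which is a real limitation for the multi-site interactions discussed here. The data below are a toy example and the helper names are hypothetical.

```python
from itertools import product
from statistics import mean

def fit_additive(data, n_sites, sweeps=500):
    """Fit y ~ c + sum_i e_i[residue_i] by backfitting (iterated per-site means)."""
    c = mean(y for _, y in data)
    effects = [dict() for _ in range(n_sites)]
    for _ in range(sweeps):
        for i in range(n_sites):
            partial = {}
            for seq, y in data:
                others = sum(effects[j].get(seq[j], 0.0) for j in range(n_sites) if j != i)
                partial.setdefault(seq[i], []).append(y - c - others)
            effects[i] = {aa: mean(r) for aa, r in partial.items()}
    return c, effects

def predict(model, seq):
    """Predicted fitness; residues never seen in training contribute 0."""
    c, effects = model
    return c + sum(e.get(aa, 0.0) for e, aa in zip(effects, seq))

def rank_unscreened(model, allowed, screened, top_k=3):
    """Score every variant of the virtual library that was not screened."""
    virtual = ("".join(v) for v in product(*allowed))
    candidates = [v for v in virtual if v not in screened]
    return sorted(candidates, key=lambda v: predict(model, v), reverse=True)[:top_k]

allowed = ["AV", "LF", "ST"]  # residues allowed at each of 3 targeted sites (toy)
data = [("ALS", 0.0), ("VLS", 2.0), ("AFS", 1.0), ("ALT", 0.5)]  # toy screened subset
model = fit_additive(data, n_sites=3)
print(rank_unscreened(model, allowed, {s for s, _ in data}))
```

Swapping in a nonlinear learner would let the model capture pairwise interactions, at the cost of needing more training variants.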

Direct Comparison of SSM and Combinatorial Mutagenesis

The choice between SSM and combinatorial mutagenesis is dictated by the research goal, available structural information, and screening capacity. The following table outlines their core distinctions.

Table 2: Comparative Analysis of SSM and Combinatorial Mutagenesis

| Feature | Site-Saturation Mutagenesis (SSM) | Combinatorial Mutagenesis |
|---|---|---|
| Philosophy | Semi-rational, focused exploration | Broad, exploratory search of sequence space |
| Sequence Space | Limited and linear (scales with number of sites done iteratively) | Vast and exponential (20ⁿ for n sites) |
| Key Application | Identify key residues, study active sites, fine-tune specific properties | Engineer complex traits involving long-range interactions, multi-domain optimization |
| Structural Input | Requires prior knowledge (e.g., from structure, evolution) to pick sites | Can be applied with or without high-resolution structural data |
| Screening Burden | Manageable (hundreds to thousands of clones) | Extremely high without computational aid; manageable with ML-coupling |
| Best For | "Hot-spot" identification, mechanistic studies, initial functional mapping | Global optimization, discovering unpredictable epistatic interactions |

Workflow and Context: The decision path for employing these tools often depends on the initial state of knowledge. SSM is frequently employed in an Iterative Saturation Mutagenesis (ISM) strategy, where beneficial "hot spots" are identified in initial rounds of SSM and then combined or further optimized in subsequent rounds [19] [13]. Combinatorial mutagenesis, especially when coupled with machine learning, is leveraged when the functional landscape is too complex to navigate with iterative single-site changes, such as optimizing the DNA-binding affinity and specificity of CRISPR-Cas9, which involves residues across multiple domains [21].

The following diagram illustrates the typical workflows for both SSM and ML-enhanced combinatorial mutagenesis, highlighting their key differences in process and scale.

Key Research Reagent Solutions

Successful execution of SSM and combinatorial mutagenesis experiments relies on a suite of specialized reagents and tools. The following table catalogues essential solutions for constructing high-quality mutagenesis libraries.

Table 3: Essential Research Reagents and Tools for Mutagenesis

| Reagent / Tool | Function / Description | Example Use Case |
|---|---|---|
| KOD Hot Start Polymerase | High-fidelity DNA polymerase used in PCR for SSM library construction, minimizes spurious mutations [22]. | Two-step megaprimer PCR for difficult templates like P450-BM3 [22]. |
| Degenerate Oligonucleotides | Primers containing NNK, NNS, or other degenerate codons; serve as the mutagenic primers in SSM [19] [22]. | Saturation of a single active site residue to determine optimal amino acid [20]. |
| DpnI Restriction Enzyme | Digests the methylated parental DNA template post-PCR, enriching for newly synthesized mutated plasmids [22]. | Standard step in QuikChange and related mutagenesis protocols to reduce background. |
| Machine Learning Software | Algorithms (e.g., Random Forests, Neural Networks) for predicting variant fitness from limited data [21]. | Predicting high-activity Cas9 variants from a screened subset of a combinatorial library [21]. |
| CRISPR-Cas9 System | Enables genome-wide screening and targeted integration of variants in a cellular context [23] [24]. | Creating knock-out cell lines as a platform for functional assays of variants [23]. |
| Next-Generation Sequencing (NGS) | High-throughput sequencing for analyzing library diversity and enrichment in functional screens [21] [23]. | Quantifying variant abundance in sorted cell populations from a deep mutational scan. |

Performance and Experimental Data

Efficiency and Effectiveness of SSM

The performance of SSM is highly dependent on the experimental protocol. A comparative study on the challenging cytochrome P450-BM3 gene demonstrated that a two-step PCR megaprimer method significantly outperformed the traditional one-step, partially overlapping primer method. Evaluation through massive sequencing revealed that the two-step method consistently produced higher-quality libraries with more comprehensive coverage of the desired mutations and a lower percentage of undigested parental template, making it the preferred method for recalcitrant genes [22].

Performance of ML-Coupled Combinatorial Mutagenesis

The integration of machine learning with combinatorial mutagenesis dramatically enhances its efficiency. Research on engineering CRISPR-Cas9 activities provides robust quantitative data on this improvement [21]. In this study, using only 5-20% of the empirical combinatorial library data to train the ML model was sufficient to generate accurate predictions. The model's performance was measured using metrics like the Normalized Discounted Cumulative Gain (NDCG) and enrichment score, which reflect its ability to identify the top-performing variants from the vast sequence space [21]. This approach led to a 95% reduction in the experimental screening burden and a ~7.5-fold enrichment for high-performing variants compared to a null model, demonstrating a profound acceleration of the protein engineering cycle [21].
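The two evaluation metrics can be sketched as follows. NDCG below uses the standard log2 rank discount with measured fitness as the relevance score, which assumes non-negative fitness values with at least one positive. The fold-enrichment definition (hit rate among the model's top k picks divided by the hit rate of random picks) is an assumption for illustration and may differ from the exact formula used in the cited study.

```python
import math

def dcg(relevances):
    """Discounted cumulative gain: sum of rel_i / log2(i + 1), ranks starting at 1."""
    return sum(rel / math.log2(rank + 1) for rank, rel in enumerate(relevances, start=1))

def ndcg_at_k(predicted_order, true_fitness, k):
    """NDCG@k of a model's ranking, using measured (non-negative) fitness as relevance."""
    gains = [true_fitness[v] for v in predicted_order[:k]]
    ideal = sorted(true_fitness.values(), reverse=True)[:k]
    return dcg(gains) / dcg(ideal)

def fold_enrichment(predicted_order, true_top, k):
    """Hit rate of true top variants among the model's k picks, divided by the hit
    rate expected from k random picks (assumed definition; predicted_order must
    rank the whole library)."""
    hits = sum(v in true_top for v in predicted_order[:k])
    random_rate = len(true_top) / len(predicted_order)
    return (hits / k) / random_rate

fitness = {"a": 3.0, "b": 2.0, "c": 1.0, "d": 0.0}  # toy measured fitness values
print(ndcg_at_k(["b", "a", "c", "d"], fitness, k=2))
print(fold_enrichment(["b", "a", "c", "d"], true_top={"a"}, k=2))
```

An NDCG of 1 means the model ordered the variants exactly as measured. A fold enrichment above 1 means its picks contain more true top variants than random sampling would.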

Site-saturation mutagenesis and combinatorial mutagenesis are complementary pillars of modern protein engineering. SSM is a precise, semi-rational tool ideal for deep functional analysis of specific residues and is most powerful when used iteratively or with prior structural knowledge. Its efficiency is heavily influenced by the choice of degenerate codon and the molecular biology protocol, with newer two-step methods offering superior performance for difficult genes. Combinatorial mutagenesis, particularly when augmented with machine learning, is a powerful strategy for tackling complex engineering goals that involve interactions between multiple amino acids. The data-driven ML approach effectively navigates the intractably large sequence space, making it possible to discover highly optimized variants with minimal experimental effort. The choice between these tools is not mutually exclusive; a robust protein engineering campaign will often leverage the targeted power of SSM to identify hot spots before using combinatorial approaches and machine learning to achieve a globally optimized final variant.

In the field of protein engineering, the creation of improved or novel enzymes and biocatalysts is primarily driven by two powerful methodologies: random mutagenesis and semi-rational design. Random mutagenesis relies on the introduction of untargeted genetic changes across the protein sequence, leveraging high-throughput screening to identify beneficial variants through an iterative, exploratory process. In contrast, semi-rational design utilizes available information on protein structure, function, and evolutionary history to make informed decisions about which residues to mutate, creating smaller, more focused libraries. This guide provides an objective comparison of these strategies, examining their performance characteristics, optimal applications, and practical implementation to inform selection for specific research and development goals in drug development and biotechnology.

Core Principles and Methodologies

Random Mutagenesis: Unleashing Exploratory Power

Random mutagenesis mimics natural evolution in a laboratory setting by introducing random mutations throughout the gene of interest without requiring prior structural knowledge. The most common technique is Error-Prone PCR (epPCR), a modified polymerase chain reaction that reduces replication fidelity through factors such as manganese ions and unbalanced nucleotide concentrations to achieve a typical mutation rate of 1-5 base changes per kilobase [5]. This approach generates highly diverse libraries, allowing researchers to explore a vast sequence space and discover non-intuitive, beneficial mutations that might not be predicted by rational design. However, epPCR is not truly random; it exhibits biases toward transition mutations and can only access approximately 5-6 of the 19 possible alternative amino acids at any given position due to genetic code degeneracy [5]. DNA Shuffling represents another random method, which involves fragmenting homologous genes and reassembling them to create chimeric proteins, effectively recombining beneficial mutations from multiple parents [25] [5].
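The genetic-code limitation of epPCR can be checked by enumerating every single-nucleotide substitution of a codon and collecting the new amino acids it produces. This minimal sketch ignores the transition bias and multiple hits within one codon, so it describes what one point mutation can reach at best. The helper names are illustrative.

```python
from itertools import product

# Standard genetic code; codons enumerated in T, C, A, G order ('*' = stop)
BASES = "TCAG"
AA = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODE = {"".join(c): AA[n] for n, c in enumerate(product(BASES, repeat=3))}

def single_step_neighbours(codon):
    """Amino acids reachable from `codon` by one point mutation,
    excluding synonymous changes and stop codons."""
    original = CODE[codon]
    reachable = set()
    for pos in range(3):
        for base in BASES:
            if base != codon[pos]:
                mutant = codon[:pos] + base + codon[pos + 1:]
                reachable.add(CODE[mutant])
    return reachable - {original, "*"}

sense = [c for c, aa in CODE.items() if aa != "*"]
average = sum(len(single_step_neighbours(c)) for c in sense) / len(sense)
print("Trp (TGG) can reach:", sorted(single_step_neighbours("TGG")))
print(f"Mean distinct amino acids reachable per sense codon: {average:.2f}")
```

Averaged over all sense codons, the result lands far below the 19 possible alternatives, which is why saturation methods are used to reach the rest.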

Semi-Rational Design: The Path to Targeted Efficiency

Semi-rational design employs computational and bioinformatic tools to target specific protein regions for mutagenesis, creating smaller, smarter libraries with a higher probability of containing improved variants. Key techniques include:

- Site-Saturation Mutagenesis (SSM): Systematically replaces a single amino acid position with all 19 other natural amino acids, enabling comprehensive exploration of a residue's functional role [25] [5].

- Combinatorial Site-Saturation Mutagenesis: Extends SSM by simultaneously targeting multiple pre-identified residues, often with a reduced amino acid alphabet to maintain manageable library sizes [15].

- Structure-Guided Design: Utilizes protein structural data and computational algorithms (e.g., molecular dynamics, docking) to identify mutational hotspots affecting substrate binding, catalytic efficiency, or stability [2] [26].

- Evolutionary-Guided Design: Leverages multiple sequence alignments and phylogenetic analysis of homologous proteins to identify conserved or functionally important residues amenable to mutagenesis [2].

Direct Performance Comparison: Experimental Data

Library Characteristics and Functional Output

Comparative studies reveal distinct differences in library size, functional content, and screening efficiency between the two approaches, as summarized in Table 1.

Table 1: Comparative Library Characteristics and Functional Output

| Parameter | Random Mutagenesis | Semi-Rational Design |
|---|---|---|
| Typical Library Size | Very Large (10⁴-10⁸ variants) [25] | Small to Medium (10²-10⁴ variants) [15] [2] |
| Amino Acid Diversity | Broad but biased (avg. 1-2 substitutions/variant) [5] | Focused and comprehensive at target sites (2-7 substitutions/variant) [15] |
| Fraction of Functional Variants | Low (<1% common) [15] | High (≥75% properly folded in optimized libraries) [15] |
| Screening Throughput Requirement | Very High | Moderate to Low |
| Key Advantage | Explores vast, unexpected sequence space; requires no prior knowledge | High efficiency; reduced screening burden; provides mechanistic insights |

A direct comparative study on engineering cytochrome P450 BM3 demonstrated the efficiency advantages of semi-rational libraries. While random mutagenesis libraries contained mostly non-functional variants, semi-rational approaches—including Combinatorial Site-Saturation Mutagenesis (CSSM), C(orbit), and CRAM libraries—achieved ≥75% properly folded variants despite higher average amino acid substitution levels (2.6-7.5 substitutions per variant) [15]. These libraries were "enriched with respect to the fraction functional and maximal activities," yielding propane- and ethane-hydroxylating variants with as few as two amino acid substitutions [15].

Catalytic Efficiency and Engineering Outcomes

The ultimate success of protein engineering campaigns can be measured by catalytic improvements and the number of iterations required to achieve them, with both approaches demonstrating distinct strengths.

Table 2: Representative Engineering Outcomes Across Protein Classes

| Protein Engineered | Approach | Key Mutations | Catalytic Improvement | Reference |
|---|---|---|---|---|
| Cytochrome P450 BM3 | Semi-rational (CRAM) | Not Specified | 16,800 propane turnovers (36% coupling) | [15] |
| KOD DNA Polymerase | Semi-rational | D141A, E143A, L408I, Y409A, A485E + 6 others | >20-fold improvement in modified nucleotide incorporation | [26] |
| α-L-Rhamnosidase (MlRha4) | Combined (Random + Semi-rational) | K89R, K70R, E475D | 70.6% increase in enzyme activity; enhanced alkalinity tolerance | [27] |
| Pseudomonas fluorescens Esterase | Semi-rational (3DM analysis) | 4 active site positions | 200-fold improved activity; 20-fold improved enantioselectivity | [2] |

Semi-rational design often produces significant catalytic improvements in fewer rounds of screening. For example, engineering of a KOD DNA polymerase through semi-rational approaches yielded an 11-mutation variant with over 20-fold improvement in enzymatic activity for incorporating modified nucleotides [26]. Similarly, semi-rational design of Pseudomonas fluorescens esterase using 3DM database analysis generated variants with 200-fold improved activity and significantly enhanced enantioselectivity from a library of approximately 500 variants [2].

Random mutagenesis, while more laborious, can identify beneficial mutations distant from the active site that would be difficult to predict. However, its true strength emerges when combined with semi-rational approaches. In the engineering of α-L-rhamnosidase, an initial round of random mutagenesis identified beneficial regions, followed by semi-rational design to refine these hits, culminating in a triple mutant with 70.6% increased activity and improved tolerance to alkaline conditions [27].

Experimental Protocols and Workflows

Random Mutagenesis Workflow

Step 1: Library Generation via Error-Prone PCR

- Set up PCR reactions with: template DNA (10-100 ng), Taq polymerase (lacks proofreading), unbalanced dNTP concentrations (e.g., 0.2 mM dATP/dGTP, 1 mM dCTP/dTTP), 0.5 mM Mn²⁺, and primers targeting the gene of interest [5].

- Run thermocycling with standard parameters (25-30 cycles).

- Clone the amplified variant genes into an expression vector and transform them into host cells (e.g., E. coli) to create the variant library.
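The mutation rate chosen for epPCR sets the mix of unmutated, single-mutant, and multiple-mutant clones in the library. The sketch below assumes that mutations are independent and uniformly distributed, so the number per gene copy is approximately Poisson with mean λ = rate × length. The 1.5 kb gene length is a hypothetical example.

```python
import math

def mutation_distribution(rate_per_kb, gene_length_bp, max_k=5):
    """Poisson probabilities of carrying 0..max_k mutations per gene copy,
    with mean lambda = rate (per kb) * gene length (kb)."""
    lam = rate_per_kb * gene_length_bp / 1000
    return [math.exp(-lam) * lam ** k / math.factorial(k) for k in range(max_k + 1)]

# Typical epPCR rates of 1-5 base changes per kb, hypothetical 1.5 kb gene
for rate in (1, 2, 5):
    p = mutation_distribution(rate, gene_length_bp=1500)
    print(f"{rate}/kb: unmutated {p[0]:.1%}, single mutants {p[1]:.1%}, "
          f">=2 mutations {1 - p[0] - p[1]:.1%}")
```

Low rates waste screening effort on parental clones, while high rates load most variants with several, often deleterious, mutations. This trade-off is one reason the rate is tuned through the Mn²⁺ and dNTP conditions.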

Step 2: High-Throughput Screening

- Plate transformed cells on agar plates for colony formation or culture in 96/384-well microtiter plates [5].

- Assay for desired activity using colorimetric, fluorometric, or survival-based selection. For thermostability engineering, heat pre-treatment followed by activity measurement identifies stabilized variants [5].

- Isolate top-performing variants (typically 0.1-1% of library) for characterization.

Step 3: Iterative Improvement

- Use best variants as templates for subsequent epPCR rounds.

- Accumulate beneficial mutations over 3-10 generations until desired performance achieved.

Semi-Rational Design Workflow

Step 1: Target Identification

- Perform multiple sequence alignment of homologous proteins to identify conserved or variable positions (using tools like 3DM or HotSpot Wizard) [2].

- Analyze protein structure (experimental or homology model) to identify active site residues, substrate access tunnels, or flexible regions.

- Select 3-10 target residues for mutagenesis based on evolutionary conservation, structural location, and functional importance.
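One simple way to flag conserved versus variable positions in an alignment is per-column Shannon entropy. This generic conservation score is shown only as an illustration. Tools such as 3DM and HotSpot Wizard use their own, more elaborate scoring. The alignment below is a toy example, not real data.

```python
import math
from collections import Counter

def column_entropy(column):
    """Shannon entropy (bits) of one alignment column, ignoring gaps ('-').
    0 = fully conserved; higher values = more variable."""
    residues = [r for r in column if r != "-"]
    counts = Counter(residues)
    total = len(residues)
    return -sum((n / total) * math.log2(n / total) for n in counts.values())

def rank_positions(alignment):
    """Return (position, entropy) pairs, most variable first; positions are 1-based."""
    columns = zip(*alignment)
    scores = [(i + 1, column_entropy(col)) for i, col in enumerate(columns)]
    return sorted(scores, key=lambda s: s[1], reverse=True)

msa = ["MKTAYIA", "MKSAHIA", "MRTAFLA", "MKEAYVA"]  # toy alignment, equal-length rows
for pos, h in rank_positions(msa):
    print(pos, round(h, 2))
```

Highly variable positions are often tolerant of substitution, whereas fully conserved ones may be catalytic or structural. Entropy should therefore be combined with structural location before targets are chosen.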

Step 2: Focused Library Construction

- For Site-Saturation Mutagenesis: Design degenerate primers (e.g., NNK codons) targeting selected residues, where N represents any nucleotide and K represents G or T, covering all 20 amino acids with 32 codons [25].

- Perform PCR with high-fidelity polymerase to minimize background mutations.

- For combinatorial libraries, use overlap extension PCR or gene synthesis for multiple simultaneous mutations.
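Building the mutagenic primer amounts to replacing the target codon with the degenerate codon and keeping flanking sequence for annealing. The minimal sketch below makes several assumptions: the helper names are hypothetical, the 15-base flank is an illustrative default, and the gene is a toy sequence. Real primer design also balances melting temperature, GC content, and overlap length.

```python
# Complement table that also handles the IUPAC codes used here (N<->N, K<->M, S<->S)
COMPLEMENT = str.maketrans("ACGTNKMS", "TGCANMKS")

def reverse_complement(seq):
    """Reverse complement of a (possibly degenerate) DNA sequence."""
    return seq.translate(COMPLEMENT)[::-1]

def nnk_primer(gene, codon_number, flank=15, codon="NNK"):
    """Forward mutagenic primer with `codon` replacing codon `codon_number` (1-based),
    flanked by up to `flank` template bases on each side."""
    start = (codon_number - 1) * 3
    if start < 0 or start + 3 > len(gene):
        raise ValueError("codon_number is outside the coding sequence")
    left = gene[max(0, start - flank):start]
    right = gene[start + 3:start + 3 + flank]
    return left + codon + right

gene = "ATGGCTAGCAAAGGTGAAGAACTGTTCACC"  # toy coding sequence
fwd = nnk_primer(gene, codon_number=4, flank=6)
print(fwd, reverse_complement(fwd))
```

The reverse complement carries MNN at the mutated site, which is what a complementary or partially overlapping reverse primer would need.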

Step 3: Screening and Validation

- Screen smaller libraries (typically 100-5,000 variants) using moderate-throughput methods (96/384-well plates).

- Characterize best hits with detailed kinetic analysis (KM, kcat), stability assays (Tm), and substrate specificity profiling.

- Use computational validation (molecular dynamics, docking) to rationalize improvements and guide further optimization.
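For the kinetic characterization step, KM and kcat can be estimated from initial-rate data. This sketch uses the Lineweaver-Burk (double-reciprocal) linearization because it needs only ordinary least squares. With real, noisy data, direct nonlinear fitting of the Michaelis-Menten equation is generally preferred, since the reciprocal transform distorts errors at low substrate concentration. Units are assumed consistent (e.g., rates in µM/s and enzyme in µM, giving kcat in s⁻¹).

```python
def lineweaver_burk_fit(substrate, rates):
    """Estimate (Vmax, KM) by linear regression of 1/v on 1/[S]:
    1/v = (KM/Vmax) * (1/[S]) + 1/Vmax."""
    xs = [1 / s for s in substrate]
    ys = [1 / v for v in rates]
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    slope = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sum((x - mx) ** 2 for x in xs)
    intercept = my - slope * mx
    vmax = 1 / intercept
    km = slope * vmax
    return vmax, km

def kcat_and_efficiency(vmax, km, enzyme_conc):
    """kcat = Vmax / [E]; catalytic efficiency = kcat / KM."""
    kcat = vmax / enzyme_conc
    return kcat, kcat / km

# Noise-free toy data generated with Vmax = 10 and KM = 2 (arbitrary units)
S = [0.5, 1, 2, 4, 8, 16]
v = [10 * s / (2 + s) for s in S]
vmax, km = lineweaver_burk_fit(S, v)
print(vmax, km, kcat_and_efficiency(vmax, km, enzyme_conc=0.01))
```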

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Reagents and Resources for Implementation

| Reagent/Resource | Function | Application Notes |
|---|---|---|
| Taq Polymerase | Low-fidelity PCR amplification | Essential for error-prone PCR; lacks 3'→5' proofreading [5] |
| Mn²⁺ Ions | Reduces polymerase fidelity | Critical component in epPCR buffers to increase mutation rate [5] |
| NNK Primers | Codon saturation | Encodes all 20 amino acids and one stop codon (TAG) in 32 codons; less redundancy than NNN [25] |
| 3DM Database | Protein superfamily analysis | Identifies evolutionarily allowed substitutions; guides library design [2] |
| Rosetta Software | Protein design calculations | Predicts stabilizing mutations and enzyme specificity changes [2] |
| HotSpot Wizard | Mutability mapping | Identifies functional hotspots from sequence/structure data [2] |
| Microtiter Plates | High-throughput screening | 96-well or 384-well format for colony screening and assays [5] |

Strategic Application Guidelines

When to Prefer Random Mutagenesis

- Limited Structural/Mechanistic Information: When high-resolution structures or detailed mechanistic understanding is unavailable [5].

- Exploratory Function Discovery: When seeking entirely new functions or non-intuitive solutions (e.g., altering substrate specificity without active site knowledge) [25].

- High-Throughput Capacity: When access to ultra-high-throughput screening (FACS, microfluidics) enables screening of >10⁶ variants [25].

- Stability Engineering: When targeting global protein properties like thermostability that can be improved by mutations throughout the structure.

When to Prefer Semi-Rational Design

- Focused Functional Changes: When engineering specific properties like substrate specificity, enantioselectivity, or cofactor preference [2].

- Limited Screening Resources: When practical constraints limit screening to 10²-10⁴ variants [15] [2].

- Structural Information Available: When crystal structures or reliable homology models enable target identification [26].

- Iterative Refinement: When initial random approaches have identified "hotspots" requiring comprehensive exploration [27].

Hybrid Approaches for Maximum Impact

The most successful protein engineering campaigns often combine both strategies sequentially: using random mutagenesis for broad exploration followed by semi-rational design for focused optimization [27]. This hybrid approach leverages the exploratory breadth of random methods with the targeted efficiency of rational design, accelerating the engineering process while mitigating the limitations of each individual method.

From Theory to Bench: Practical Applications in Enzyme and Therapeutic Engineering

Enzymes, as biological catalysts, are pivotal in industrial processes, from pharmaceutical manufacturing to food and beverage production. Their catalytic efficiency, specificity, and ability to function under mild conditions make them superior to traditional chemical catalysts. However, natural enzymes often lack the desired properties for industrial application, necessitating optimization. The field of enzyme engineering has evolved significantly, primarily driven by two philosophies: random mutagenesis (directed evolution) and semi-rational design. This guide provides a comparative analysis of these approaches through detailed case studies on two industrially relevant enzymes: α-L-Rhamnosidase and Cytochrome P450 BM3 (CYP102A1). We will dissect the experimental protocols, quantify improvements, and present the data for direct comparison, providing a framework for selecting an optimal engineering strategy.

The core distinction between these methods lies in the source of genetic diversity and the prior knowledge required.

Random Mutagenesis, or directed evolution, mimics natural evolution in a laboratory setting. It involves creating a large library of enzyme variants through random changes to the gene sequence using methods like error-prone PCR. This library is then subjected to high-throughput screening to identify variants with improved properties. The major advantage is that it requires no prior structural knowledge of the enzyme. However, its primary limitation is the immense screening burden, as beneficial mutations are rare within a vast sequence space [15] [12].

Semi-Rational Design bridges the gap between purely random methods and fully rational design. It utilizes available structural and functional information—such as crystal structures, sequence alignments, or computational predictions—to target specific residues for mutagenesis. Techniques include Combinatorial Site-Saturation Mutagenesis (CSSM), where a reduced set of amino acids is tested at targeted positions, and computational design using algorithms like C(orbit) and CRAM. This approach creates "smarter," smaller libraries that are enriched with functional variants, drastically reducing the number of clones that need to be screened [15] [12].

The following workflow illustrates how these strategies can be integrated into a modern enzyme optimization pipeline.

Case Study 1: Optimization of α-L-Rhamnosidases

α-L-Rhamnosidase (EC 3.2.1.40) is a glycoside hydrolase that cleaves terminal α-linked L-rhamnose sugars from natural compounds. It has significant applications in the food industry for debittering citrus juices and in the pharmaceutical industry for producing high-value compounds like icariin, which has anti-osteoporosis and neuroprotective effects [28] [29].

Optimization Objectives and Challenges

The primary industrial challenge is that the native enzyme often has low catalytic efficiency, insufficient thermostability, or narrow substrate specificity for the desired application. For instance, in the bioconversion of epimedin C to the more valuable icariin, a highly specific and efficient α-L-rhamnosidase is required to hydrolyze the α-1,2 glycosidic bond [28]. Furthermore, natural enzyme production from fungi like Aspergillus niger can be inefficient and costly [29].

The table below summarizes key experimental data from optimization studies on α-L-Rhamnosidase.

Table 1: Comparative Performance of Engineered α-L-Rhamnosidases

| Enzyme / Variant | Engineering Approach | Key Mutations / Features | Kinetic Parameters | Specific Activity | Key Improvement |
|---|---|---|---|---|---|
| Papiliotrema laurentii ZJU-L07 [28] | Random Mutagenesis (Strain improvement via γ-rays & nitrosoguanidine) | Not specified (Whole-cell mutagenesis) | Km: 1.38 mM (pNPR); 3.28 mM (epimedin C) | 29.89 U·mg⁻¹ (purified enzyme) | Icariin yield from epimedin C increased from 61% to >83% |
| N12-Rha (from Aspergillus niger) [29] | Semi-Rational (Codon optimization & engineered strain) | Codon-optimized gene for P. pastoris | Not explicitly stated | 7,240 U/mL (hesperidin); 945 U/mL (naringin) | 10.63x higher activity than native enzyme; stable at pH 3–6 & 40–60°C |
| AK-rRha (from A. kawachii) [30] | Native (Comparative study) | Native sequence (92% identity to AT-Rha) | kcat: 0.67 s⁻¹ (on naringin) | 0.816 U/mg (on naringin) | Baseline for comparison |
| AT-rRha (from A. tubingensis) [30] | Native (Comparative study) | Native sequence (naturally evolved) | kcat: 4.89 x 10⁴ s⁻¹ (on naringin) | 125.142 U/mg (on naringin) | 73,000x higher kcat than AK-rRha, illustrating impact of subtle sequence differences |

Experimental Protocols for α-L-Rhamnosidase

1. Strain Improvement via Random Mutagenesis [28]:

- Mutagenesis: The wild-type yeast Papiliotrema laurentii was treated with gamma rays (⁶⁰Co γ, 250-1000 Gy) and the chemical mutagen nitrosoguanidine (0.5-2 M).

- Screening: Mutagenized cells were first plated on LB medium containing p-nitrophenyl-α-L-rhamnopyranoside (pNPR), a chromogenic substrate. Active clones (forming yellow halos due to p-nitrophenol release) were selected for secondary screening in a medium containing epimedin C.

- Analysis: The conversion of epimedin C to icariin was quantified using High-Performance Liquid Chromatography (HPLC).

2. Semi-Rational Gene Optimization and Expression [29]:

- Gene Synthesis: The native α-L-rhamnosidase gene from Aspergillus niger JMU-TS528 was optimized for codon usage in Pichia pastoris and chemically synthesized.

- Expression: The synthesized gene was cloned into the pPIC9K vector and transformed into P. pastoris GS115.

- Fermentation & Screening: Engineered strains were cultured in buffered glycerol-complex medium (BMGY), then induced with methanol. High-expression clones (like N12) were selected based on activity assays using rutin as a substrate.

- Purification: The enzyme was purified from the culture supernatant using ammonium sulfate precipitation and nickel-affinity chromatography (due to a His-tag).

Case Study 2: Optimization of Cytochrome P450 BM3

Cytochrome P450 BM3 (CYP102A1) from Bacillus megaterium is a self-sufficient monooxygenase that catalyzes the oxidation of unactivated C-H bonds, a valuable reaction for synthesizing pharmaceuticals and fine chemicals. Its fused nature (heme and reductase domains in one polypeptide) and high native activity make it an attractive engineering target [31] [32].

Optimization Objectives and Challenges

Key challenges for industrial use include limited substrate scope (native enzyme prefers long-chain fatty acids), low operational stability, and a dependency on the expensive cofactor NADPH. Engineering goals often focus on expanding substrate range, improving thermostability and solvent tolerance, and enhancing activity with the cheaper cofactor NADH [31] [32].

The table below consolidates quantitative data from various P450 BM3 engineering studies.

Table 2: Comparative Performance of Engineered Cytochrome P450 BM3 Variants

| Enzyme / Variant | Engineering Approach | Key Mutations / Features | Cofactor Used | Total Turnover Number (TON) / Activity | Key Improvement |
|---|---|---|---|---|---|
| Wild-Type (WT) BM3 [32] | Baseline | Native sequence | NADPH | 4,918 (pNP/CYP, 10-pNCA substrate) | Baseline |
| | | | NADH | 1,313 (pNP/CYP, 10-pNCA substrate) | Baseline |
| DE Variant [32] | Experimental Evolution (oleic acid adaptation) | 34 mutations (5 in heme, 5 in linker, 24 in reductase domain) | NADPH | 6,060 (pNP/CYP) | 1.23× TON vs. WT |
| | | | NADH | 2,316 (pNP/CYP) | 1.76× TON vs. WT; increased cosolvent tolerance |
| E32 Variant [15] | Semi-Rational (CRAM algorithm library) | Targeted 10 active site residues to reduce pocket size | Not specified | 16,800 turnovers (propane) | Rivals activity from 10-12 rounds of directed evolution |
| NTD5/6 Variants [31] | Consensus-Guided Evolution | A769S, S847G, S850R, E852P, V978L (reductase domain) | NBAH | 5.24× total product output vs. parent (R966D/W1046S) | Enhanced use of inexpensive cofactors NADH/NBAH |
| | | | NADH | 2.3× total product output vs. parent (R966D/W1046S) | |
| Ginkgo Bioworks AI-Engineered [33] | AI/Machine Learning (Owl model) | Mutations predicted by AI across 4 iterative rounds | Not specified | 10× improvement in kcat/KM (catalytic efficiency) | Met customer's economic target |
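The TON values in Table 2 are expressed as moles of p-nitrophenolate (pNP) released per mole of P450 (pNP/CYP), and the fold changes are simple ratios. The sketch below shows both calculations. The molar absorptivity of 13,200 M⁻¹ cm⁻¹ at 410 nm is an assumed value often quoted for 10-pNCA assays; it depends on buffer and pH, so treat it as a placeholder to check against the protocol you follow. The absorbance and enzyme concentration in the example are hypothetical.

```python
# Minimal sketch: TON (pNP/CYP) from an endpoint absorbance, plus fold changes.
# EPSILON_410 is an assumed molar absorptivity for p-nitrophenolate; verify for your assay.
EPSILON_410 = 13_200.0  # M^-1 cm^-1 (assumption)


def pnp_uM(a410: float, path_cm: float = 1.0, epsilon: float = EPSILON_410) -> float:
    """Beer-Lambert: c = A / (epsilon * l), converted from M to µM."""
    return a410 / (epsilon * path_cm) * 1e6


def turnover_number(a410: float, p450_uM: float, path_cm: float = 1.0) -> float:
    """Moles of pNP released per mole of P450 (dimensionless)."""
    return pnp_uM(a410, path_cm) / p450_uM


# TON values reported in Table 2 for the 10-pNCA assay [32].
TON = {
    ("WT", "NADPH"): 4918, ("WT", "NADH"): 1313,
    ("DE", "NADPH"): 6060, ("DE", "NADH"): 2316,
}


def fold_vs_wt(variant: str, cofactor: str) -> float:
    """Ratio of a variant's TON to the wild-type TON with the same cofactor."""
    return TON[(variant, cofactor)] / TON[("WT", cofactor)]


if __name__ == "__main__":
    print(f"hypothetical TON: {turnover_number(0.66, p450_uM=0.01):.0f}")  # 5000
    for cof in ("NADPH", "NADH"):
        print(f"DE vs. WT with {cof}: {fold_vs_wt('DE', cof):.2f}x")  # 1.23x, then 1.76x
```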

Experimental Protocols for Cytochrome P450 BM3

1. Experimental Evolution [32]:

- Evolution Pressure: Bacillus megaterium was cultured in progressively higher concentrations of oleic acid (from 2.5 µM to 300 µM), which is toxic and induces BM3 expression.

- Selection: Growth under these conditions selected for mutants whose enhanced BM3 activity enabled them to detoxify oleic acid.

- Variant Isolation: The BM3 gene from the evolved strain was sequenced, revealing the DE variant with 34 mutations.
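The protocol gives only the endpoints of the oleic acid ramp (2.5 µM to 300 µM). As a hypothetical illustration of how such a stepwise schedule might be planned, the sketch below generates a geometric series with a fixed fold increase per passage, capped at the final concentration. The twofold step and the function names are assumptions, not details from [32].

```python
import math
from typing import List


def geometric_ramp(start_uM: float, end_uM: float, fold: float) -> List[float]:
    """Concentrations for successive passages, each `fold` times the last, capped at end_uM."""
    if fold <= 1.0:
        raise ValueError("fold must be > 1 or the ramp never reaches end_uM")
    levels = [float(start_uM)]
    while levels[-1] < end_uM:
        levels.append(min(levels[-1] * fold, float(end_uM)))
    return levels


def steps_needed(start_uM: float, end_uM: float, fold: float) -> int:
    """Number of increases required: ceil(log(end/start) / log(fold))."""
    return math.ceil(math.log(end_uM / start_uM) / math.log(fold))


if __name__ == "__main__":
    ramp = geometric_ramp(2.5, 300, fold=2.0)
    print(ramp)  # [2.5, 5.0, 10.0, 20.0, 40.0, 80.0, 160.0, 300.0]
    print(steps_needed(2.5, 300, 2.0))  # 7
```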

2. Semi-Rational Designed Libraries [15]:

- Library Design: Three semi-rational libraries were constructed:

  - CSSM: Combinatorial site-saturation mutagenesis of active site residues with a reduced amino acid set.

  - C(orbit) & CRAM: Computational algorithms used to design focused libraries targeting up to 10 active site residues to re-specialize the enzyme for small alkanes.

- Screening: These small libraries (343–1,028 variants) were screened for demethylation of dimethyl ether and hydroxylation of propane and ethane. The CRAM library, designed to reduce the active site size, was most effective.
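Library size drives screening effort, and two standard calculations make this concrete. The theoretical size of a combinatorial library is the product of the number of options at each position; 343 equals 7³, which would be consistent with, for example, three positions each varied over a seven-residue set (an illustration, not a design detail stated here). The number of clones needed to sample a given fraction of an equally represented library follows the common Poisson approximation, coverage = 1 − exp(−T/V), for T clones screened from V variants. The sketch assumes uniform representation, which real libraries rarely achieve, so its screening numbers are lower bounds.

```python
import math
from typing import Sequence


def theoretical_size(options_per_position: Sequence[int]) -> int:
    """Number of distinct variants when each position varies independently."""
    return math.prod(options_per_position)


def expected_coverage(clones_screened: int, library_size: int) -> float:
    """Expected fraction of distinct variants seen at least once (Poisson approximation)."""
    return 1.0 - math.exp(-clones_screened / library_size)


def clones_for_coverage(library_size: int, coverage: float = 0.95) -> int:
    """Clones to screen so that the expected coverage reaches `coverage`."""
    return math.ceil(-library_size * math.log(1.0 - coverage))


if __name__ == "__main__":
    aa_level = theoretical_size([7, 7, 7])       # 343 protein variants
    nnk_level = theoretical_size([32, 32, 32])   # codon combinations with three NNK positions
    print(aa_level, nnk_level)
    print(clones_for_coverage(aa_level))         # roughly 3x oversampling for 95% coverage
    print(clones_for_coverage(nnk_level))
```

The contrast between 343 amino-acid combinations and 32,768 NNK codon combinations is one reason reduced amino acid sets keep semi-rational libraries screenable.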

3. Consensus-Guided Evolution [31]:

- Sequence Analysis: The reductase domain of BM3 was aligned with homologous domains from other enzymes to identify conserved amino acid positions.

- Mutagenesis: Residues in the reductase domain were mutated to match the consensus sequence, aiming to improve stability and cofactor usage.

- Assay: Product output was measured using the substrate 10-pNCA, with NADH and N-benzyl-1,4-dihydronicotinamide (NBAH) as cofactors.
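The consensus step amounts to finding, at each aligned position, the residue most common among homologs and proposing it wherever the parent differs. The sketch below does this for a pre-computed alignment; the threshold, function names, and toy sequences are hypothetical, and practical consensus design often also weights sequences to reduce redundancy bias and filters positions using structural information, both omitted here.

```python
from collections import Counter
from typing import List, Optional


def consensus(homologs: List[str], min_fraction: float = 0.5) -> List[Optional[str]]:
    """Most common non-gap residue per column, or None if it falls below min_fraction."""
    length = len(homologs[0])
    if any(len(seq) != length for seq in homologs):
        raise ValueError("sequences must be aligned to the same length")
    result: List[Optional[str]] = []
    for i in range(length):
        column = [seq[i] for seq in homologs if seq[i] != "-"]
        if not column:
            result.append(None)
            continue
        residue, count = Counter(column).most_common(1)[0]
        result.append(residue if count / len(column) >= min_fraction else None)
    return result


def consensus_mutations(parent: str, homologs: List[str], min_fraction: float = 0.5,
                        first_residue_number: int = 1) -> List[str]:
    """Parent positions that differ from the consensus, named like 'A2S' (parent numbering)."""
    cons = consensus(homologs, min_fraction)
    if len(parent) != len(cons):
        raise ValueError("parent must be aligned to the homologs")
    mutations = []
    number = first_residue_number - 1
    for parent_res, cons_res in zip(parent, cons):
        if parent_res == "-":
            continue  # gap in the parent: no residue number to assign
        number += 1
        if cons_res is not None and cons_res != parent_res:
            mutations.append(f"{parent_res}{number}{cons_res}")
    return mutations


if __name__ == "__main__":
    parent = "MAK-EV"  # toy aligned parent
    homologs = ["MSKGEL", "MSR-EL", "MSKGQL", "MTKGEL"]
    print(consensus_mutations(parent, homologs))  # ['A2S', 'V5L']
```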

The Scientist's Toolkit: Essential Research Reagents

This table lists key reagents and materials used in the cited enzyme engineering studies, which are fundamental for designing similar experiments.

Table 3: Key Research Reagents and Their Applications in Enzyme Engineering

| Reagent / Material | Function / Application | Example Use in Case Studies |
|---|---|---|
| pNPR (p-nitrophenyl-α-L-rhamnopyranoside) | Chromogenic substrate for high-throughput screening of α-L-rhamnosidase activity. | Used in initial screening of mutagenized P. laurentii [28]. |
| Epimedin C / Icariin | Natural substrate/product pair for assessing bioconversion performance. | Used as the target reaction for bioconversion by P. laurentii α-L-rhamnosidase [28]. |
| Rutin, Naringin, Hesperidin | Natural flavonoid glycosides; substrates for enzyme specificity and activity assays. | Used to characterize the substrate range and kinetic parameters of α-L-rhamnosidases [29] [30]. |
| 10-pNCA (p-nitrophenoxydecanoic acid) | Model chromogenic substrate for assaying P450 BM3 hydroxylation activity. | Used to measure the total product output and TON of BM3 variants [31] [32]. |
| NADPH / NADH / NBAH | Cofactors for redox enzymes; engineering target for cost reduction. | Used to assay and engineer improved cofactor usage in P450 BM3 variants [31] [32]. |
| Oleic Acid | Fatty acid inducer of BM3 expression and agent for experimental evolution. | Applied as a selective pressure to evolve more robust P450 BM3 in B. megaterium [32]. |
| Pichia pastoris GS115 & pPIC9K | Eukaryotic expression system for high-yield recombinant enzyme production. | Host and vector for expressing recombinant α-L-rhamnosidases [28] [29]. |

Integrated Engineering Workflow: From Data to Design

Modern enzyme engineering, as demonstrated by companies like Ginkgo Bioworks, increasingly relies on an iterative cycle that integrates massive data generation with machine learning. This approach leverages the strengths of both random and semi-rational methods.

In this workflow, initial small-scale experiments (using either semi-rational or random methods) generate the first dataset. These data train a machine learning model (e.g., Ginkgo's "Owl"), which then predicts which mutations or combinations are most likely to be beneficial. These predictions guide the design of the next library, creating a feedback loop. For example, Ginkgo used this method to achieve a 10-fold improvement in the catalytic efficiency (kcat/KM) of a central carbon metabolism enzyme in four rounds, a result the company reports as surpassing decades of traditional research [33].
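The data-to-design loop can be sketched with a deliberately crude model. The example below is not Ginkgo's Owl model, whose internals are not described here; it substitutes a simple additive estimate in which each mutation's effect is the mean fitness of measured variants carrying it minus the mean of those without it, then ranks untested combinations by their summed effects to choose the next batch. Mutation labels and fitness values are hypothetical, and real campaigns generally use richer models that capture epistasis and prediction uncertainty.

```python
from itertools import combinations
from statistics import mean
from typing import Dict, FrozenSet, List, Tuple

Variant = FrozenSet[str]


def marginal_effects(data: List[Tuple[Variant, float]]) -> Dict[str, float]:
    """Crude additive estimate: mean fitness with a mutation minus mean fitness without it."""
    all_mutations = set().union(*(muts for muts, _ in data))
    effects = {}
    for m in all_mutations:
        with_m = [y for muts, y in data if m in muts]
        without_m = [y for muts, y in data if m not in muts]
        effects[m] = mean(with_m) - mean(without_m) if without_m else mean(with_m)
    return effects


def propose_next_round(data: List[Tuple[Variant, float]], max_order: int = 2,
                       batch_size: int = 3) -> List[Variant]:
    """Rank untested combinations (up to max_order mutations) by summed estimated effect."""
    effects = marginal_effects(data)
    tested = {muts for muts, _ in data}
    candidates = []
    for k in range(1, max_order + 1):
        for combo in combinations(sorted(effects), k):
            variant = frozenset(combo)
            if variant not in tested:
                candidates.append((sum(effects[m] for m in combo), combo, variant))
    candidates.sort(key=lambda c: (-c[0], c[1]))  # best score first; ties broken by name
    return [variant for _, _, variant in candidates[:batch_size]]


if __name__ == "__main__":
    # Fitness as log fold-change vs. parent (hypothetical measurements).
    round_1 = [
        (frozenset(), 0.0),
        (frozenset({"A"}), 1.0),
        (frozenset({"B"}), 0.5),
        (frozenset({"C"}), -0.5),
        (frozenset({"A", "C"}), 0.4),
    ]
    for variant in propose_next_round(round_1):
        print(sorted(variant))
```

Measuring the proposed batch, appending the results to the dataset, and calling `propose_next_round` again closes the loop.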

The case studies of α-L-Rhamnosidase and Cytochrome P450 BM3 demonstrate that both random mutagenesis and semi-rational design are powerful strategies for industrial enzyme optimization.

- Semi-rational design excels when structural or sequence data is available, allowing researchers to make "large jumps in sequence space" with high efficiency and reduced screening burden [15] [12]. The success of consensus-guided evolution [31] and computationally designed libraries [15] underscores this advantage.

- Random mutagenesis and experimental evolution remain highly valuable, particularly when structural information is lacking or when seeking complex, multi-factorial traits like overall fitness and solvent tolerance, as seen in the P450 BM3 DE variant [32].

The future of enzyme engineering lies in the synergistic integration of these approaches, amplified by machine learning. By generating high-quality data from well-designed initial libraries, whether random or targeted, researchers can build predictive models that substantially accelerate optimization. The choice of strategy depends on the specific enzyme, the desired property, and the available resources. However, these case studies suggest that a hybrid, data-driven approach is often the most effective path to achieving industrial biocatalysis goals.

DNA polymerases are fundamental tools in biotechnology, enabling DNA replication, sequencing, and amplification. However, natural DNA polymerases often incorporate modified nucleotides inefficiently, even though such nucleotides are crucial for advancing synthetic biology, DNA sequencing, and therapeutic aptamer development. To overcome this limitation, protein engineers have employed both random mutagenesis and semi-rational design to create DNA polymerases with enhanced capabilities. This guide provides a comparative analysis of these engineering approaches, focusing on their success in generating polymerases that incorporate non-canonical nucleotides, with supporting experimental data and methodologies to inform researchers and drug development professionals.

Engineering Approaches: A Comparative Analysis

Engineering DNA polymerases for new functions borrows techniques from general enzyme engineering, which fall primarily into two categories: random mutagenesis and semi-rational design. A comparative study on engineering cytochrome P450 BM3 provides quantitative data that can, by analogy, inform the choice between these strategies for polymerases [15].

Table 1: Comparison of Polymerase Engineering Approaches

| Engineering Approach | Methodology Description | Typical Library Size | Key Advantages | Key Limitations |
|---|---|---|---|---|
| Random Mutagenesis | Introduction of mutations randomly throughout the gene, often via error-prone PCR. | Very large (10,000+ variants) | Requires no prior structural knowledge; can discover unexpected beneficial mutations. | Vast sequence space to screen; high proportion of non-functional variants. |
| Semi-Rational Design | Mutagenesis targeted to specific residues chosen based on structural or phylogenetic data. | Small to medium (343–1,028 variants) [15] | Higher probability of success; more efficient screening; fewer non-functional variants [15]. | Requires high-quality structural and/or functional data. |
| Combinatorial Site-Saturation Mutagenesis (CSSM) | A semi-rational method in which targeted residues are mutated to a reduced set of amino acids [15]. | Small (e.g., 343 variants) [15] | Enriches for functional folds; balances diversity with library practicality [15]. | Depends on accurate residue selection. |

The choice of engineering strategy often depends on how well the polymerase's structure-function relationships are understood. For polymerases with well-characterized active sites, semi-rational designs such as Combinatorial Site-Saturation Mutagenesis (CSSM) have proven highly effective. In the P450 BM3 comparison, semi-rational libraries were significantly enriched in functional variants relative to a random mutagenesis library, with at least 75% of library members properly folded despite carrying multiple amino acid substitutions [15].
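A standard back-of-the-envelope model explains why random libraries carry many non-functional members. If error-prone PCR introduces a Poisson-distributed number of mutations per gene with mean m, and each mutation independently inactivates the protein with probability p, the expected active fraction is Σₖ P(k)(1 − p)ᵏ = e^(−mp), while a fraction e^(−m) of clones carry no mutation at all. The sketch below checks this numerically; m and p are hypothetical, and real mutational effects are neither independent nor uniform across positions. Within this simplified model, restricting mutations to pre-selected positions, as semi-rational libraries do, acts like lowering the effective p, which is consistent with the enrichment reported in [15].

```python
import math


def poisson_pmf(k: int, m: float) -> float:
    """Probability that a gene carries exactly k mutations when the mean is m."""
    return m ** k * math.exp(-m) / math.factorial(k)


def active_fraction(m: float, p_inactivating: float, k_max: int = 60) -> float:
    """Sum over mutation counts of P(k) * (1 - p)^k; converges to exp(-m * p)."""
    return sum(poisson_pmf(k, m) * (1.0 - p_inactivating) ** k for k in range(k_max + 1))


def mutated_and_active(m: float, p_inactivating: float) -> float:
    """Clones that carry at least one mutation and still function: exp(-m*p) - exp(-m)."""
    return math.exp(-m * p_inactivating) - math.exp(-m)


if __name__ == "__main__":
    m, p = 2.0, 0.3  # hypothetical mutation load and per-mutation inactivation probability
    print(f"active (series):      {active_fraction(m, p):.4f}")
    print(f"active (closed form): {math.exp(-m * p):.4f}")
    print(f"unmutated parent:     {poisson_pmf(0, m):.4f}")
    print(f"mutated and active:   {mutated_and_active(m, p):.4f}")
```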

Engineered DNA Polymerases and Their Applications

Successful engineering efforts, using both random and semi-rational strategies, have yielded several notable DNA polymerases with tailored properties for biotechnology.

Therminator DNA Polymerase: A Case Study in Semi-Rational Design

Therminator DNA Polymerase is a prominent example of successful protein engineering. It is derived from the family B DNA polymerase of Thermococcus sp. 9°N and was created through a semi-rational approach [34]. The wild-type enzyme was modified with three key mutations: D141A/E143A, which inactivate the 3′→5′ exonuclease proofreading activity, and A485L, the mutation primarily responsible for enhanced incorporation of modified nucleotides [34]. A485L lies on the O-helix of the fingers domain. Although it does not directly contact the incoming nucleotide, it is hypothesized to enhance incorporation indirectly, either by reducing steric barriers or by shifting the equilibrium between the open and closed states during the polymerization conformational change [34]. This single substitution enables the polymerase to incorporate a wide range of modified substrates.

Table 2: Engineered DNA Polymerases and Their Applications in Biotechnology

| Engineered Polymerase | Key Mutation(s)/Design | Application in Biotechnology | Performance Data / Key Feature |
|---|---|---|---|
| Therminator (9°N mutant) | D141A, E143A, A485L [34] | Incorporation of dye-labeled dNTPs, ribonucleotides (rNTPs), and other modified nucleotides [34]. | Incorporates up to 20 consecutive ribonucleotides; incorporates rhodamine-dye nucleotides more efficiently than cyanine-dye nucleotides [34]. |
| Tgo exo⁻ mutant | Y409G, A485L, E665K [34] | Synthesis of long RNA products. | Enables synthesis of A-form RNA:DNA up to 1.7 kb in length [34]. |
| KlenTaq | Not specified (point mutations) [35] | "Hot-start" PCR; forensic and ancient DNA amplification [35]. | Reduced mispriming at non-specific sites at ambient temperature. |
| A485L-equivalent in Vent Pol | A488L [34] | Mechanistic study of rNTP incorporation. | Increased rCTP incorporation efficiency: KD = 360 µM, kpol = 0.7 s⁻¹ (vs. WT: KD = 1100 µM, kpol = 0.160 s⁻¹) [34]. |
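In pre-steady-state kinetics, incorporation efficiency is usually summarized by the specificity constant kpol/KD. Applying it to the Vent A488L values in the table shows how the two parameters combine: kpol rises about 4.4-fold and KD falls about 3.1-fold, so efficiency improves roughly 13-fold. The function name below is illustrative; the kinetic values are those reported in the table [34].

```python
def specificity_constant(kpol_per_s: float, kd_uM: float) -> float:
    """Incorporation efficiency kpol/KD in µM^-1 s^-1."""
    return kpol_per_s / kd_uM


# rCTP incorporation by Vent polymerase, values from the table above [34].
wt = specificity_constant(kpol_per_s=0.160, kd_uM=1100.0)
a488l = specificity_constant(kpol_per_s=0.7, kd_uM=360.0)

print(f"WT:    {wt:.2e} µM^-1 s^-1")
print(f"A488L: {a488l:.2e} µM^-1 s^-1")
print(f"fold improvement: {a488l / wt:.1f}")  # 13.4
```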

Advanced Engineering Methods

Beyond single point mutations, advanced engineering methods have been developed to evolve polymerases with novel functions:

- Compartmentalized Self-Replication (CSR): This method involves isolating single polymerase clones in water-in-oil emulsions, where each clone amplifies its own encoding gene. The resulting PCR products are then used for the next round of evolution, effectively selecting for polymerases with improved PCR performance [35]. CSR has been used to engineer polymerases for robust activity in CSR itself and for amplifying ancient DNA samples [35].

- Droplet-based Optical Polymerase Sorting (DrOPS): A more recent high-throughput technique where single cells carrying polymerase variants are encapsulated in droplets along with activity assay reagents. This method allows for the screening of extremely large libraries with minimal quantities of precious substrates, such as synthetic dNTPs [35].
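The selective principle behind CSR, in which each polymerase amplifies only its own gene, can be illustrated with a toy model. Assuming one clone per droplet, no cross-contamination between droplets, and exponential amplification at a per-cycle efficiency that reflects the variant's activity, each gene's share after a round is proportional to its starting frequency times (1 + efficiency) raised to the number of cycles. Real PCR saturates, so this overstates enrichment; the efficiencies, starting frequencies, and function names below are hypothetical.

```python
from typing import Dict, List


def csr_round(freqs: Dict[str, float], efficiency: Dict[str, float],
              cycles: int) -> Dict[str, float]:
    """One round: each gene is amplified only by its own polymerase, then pooled."""
    weights = {v: f * (1.0 + efficiency[v]) ** cycles for v, f in freqs.items()}
    total = sum(weights.values())
    return {v: w / total for v, w in weights.items()}


def simulate_csr(freqs: Dict[str, float], efficiency: Dict[str, float],
                 cycles: int = 20, rounds: int = 3) -> List[Dict[str, float]]:
    """Gene frequencies before selection and after each round of CSR."""
    history = [dict(freqs)]
    for _ in range(rounds):
        freqs = csr_round(freqs, efficiency, cycles)
        history.append(freqs)
    return history


if __name__ == "__main__":
    start = {"parent": 0.999, "improved": 0.001}
    eff = {"parent": 0.5, "improved": 0.9}
    for i, f in enumerate(simulate_csr(start, eff)):
        print(f"round {i}: improved variant = {f['improved']:.3f}")
```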

Experimental Protocols and Validation