Sequential Mutagenesis Strategies: Engineering Complex Traits for Next-Generation Therapeutics and Crops

Abstract

This article provides a comprehensive overview of sequential mutagenesis as a powerful strategy for engineering complex polygenic traits. Aimed at researchers and drug development professionals, it explores the foundational principles of overcoming genetic redundancy and the polygenic nature of many agronomic and biomedical traits. The content delves into advanced methodological toolkits, including multiplex CRISPR editing, combinatorial library design, and base editing, highlighting their applications in trait stacking, de novo domestication, and protein engineering. A strong emphasis is placed on practical troubleshooting, optimizing editing efficiency, and minimizing unintended effects. Finally, the article covers rigorous validation frameworks, comparing emerging technologies like base editing with established methods such as deep mutational scanning to ensure accurate variant annotation and functional characterization, thereby bridging the gap between laboratory innovation and real-world application.

The Polygene Challenge: Foundational Concepts in Engineering Complex Traits

Understanding Genetic Redundancy and Polygenic Traits in Eukaryotes

Core Concepts FAQ

What is genetic redundancy and why is it a challenge in research? Genetic redundancy describes a situation where two or more genes perform the same biochemical function, so that inactivation of one of these genes has little or no effect on the biological phenotype [1] [2]. For researchers, this is problematic because when studying gene function through loss-of-function mutants (e.g., knockouts), redundant genes can obscure phenotypic screening or analysis—a mutated gene may show no obvious phenotype because its homologue compensates for its loss [3].

How does genetic redundancy relate to polygenic traits? While genetic redundancy involves multiple genes performing overlapping functions, polygenic traits are influenced by many genetic variants across the genome, each with small effects [4] [5]. Both concepts illustrate how biological systems distribute function across multiple genetic elements rather than relying on single genes. The key difference is that redundant genes often perform identical or highly similar functions, whereas polygenic traits emerge from the combined effects of genes that may participate in different biological processes [3] [4].

Why is genetic redundancy evolutionarily stable? The persistence of genetic redundancy represents an evolutionary paradox because truly redundant genes should not be protected against accumulation of deleterious mutations [1]. However, several mechanisms explain its stability:

- Gene dosage benefits: Increased dosage of a gene product may be advantageous in certain environmental or genetic contexts [3]

- Subfunctionalization: Duplicated genes split the functions of their ancestor [3]

- Neofunctionalization: One duplicate acquires mutations that support new functional roles [3]

- Distributed robustness: Independent systems evolve to overlap in function, providing adaptability [3]

What experimental approaches can overcome redundancy challenges? To circumvent issues caused by genetic redundancy, researchers must generate mutants harboring mutations in most, if not all, homologous genes within a family [3]. Sequential mutagenesis strategies using technologies like CRISPR-Cas9 enable systematic targeting of multiple redundant genes to reveal their collective function [6].

Troubleshooting Guide: Experimental Challenges with Redundant Gene Systems

Problem: No Observable Phenotype in Loss-of-Function Mutants

Potential Causes and Solutions

| Problem Cause | Diagnostic Clues | Recommended Solutions |

|---|---|---|

| Complete redundancy | No phenotype in single mutant; homologs expressed in same tissues | Generate higher-order mutants; target entire gene family using sequential CRISPR [3] |

| Partial redundancy | Subtle or context-dependent phenotypes; requires specific conditions | Implement sensitized genetic screens; apply environmental stressors [3] |

| Insufficient genetic background variation | Phenotype visible only in specific genetic backgrounds | Cross mutants into diverse genetic backgrounds; use outbred populations [4] |

| Transcriptional compensation | Upregulation of homologous genes in mutant | Perform transcriptomic analysis to detect compensatory mechanisms [3] |

Problem: High Experimental Variability in Phenotypic Measurements

Potential Causes and Solutions

| Problem Cause | Diagnostic Clues | Recommended Solutions |

|---|---|---|

| Genetic background effects | Phenotype severity varies across strains | Use controlled genetic backgrounds; employ advanced intercross lines [5] |

| Environmental modulation | Phenotype appears or changes only under certain conditions | Standardize environmental conditions; explicitly test environmental interactions [3] [4] |

| Epistatic interactions | Phenotype depends on combination of alleles at other loci | Perform genetic interaction mapping; use systems genetics approaches [4] |

Problem: Difficulty Identifying Causal Genes in Polygenic Traits

Potential Causes and Solutions

| Problem Cause | Diagnostic Clues | Recommended Solutions |

|---|---|---|

| Small effect sizes | Many loci with minimal individual contribution | Increase sample size; use advanced intercross lines to enhance recombination [5] |

| Linkage disequilibrium | Causal variants linked to multiple genes | Use fine-mapping populations; employ multi-omics data integration [5] |

| Regulatory vs. coding variants | GWAS signals in non-coding regions | Integrate eQTL, chromatin accessibility, and epigenetic data [4] [5] |

Experimental Protocols for Sequential Mutagenesis

Sequential CRISPR-Cas9 Protocol for Redundant Gene Families

Methodology Details:

- Gene Family Identification: Use genomic databases to identify all homologous genes through sequence similarity and domain architecture analysis [3]

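- Guide RNA Design: Design CRISPR gRNAs with minimal off-target potential using a dedicated guide design tool; oligo analysis tools such as IDT's OligoAnalyzer can then be used to check properties like melting temperature and secondary structure [7]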

- Sequential Mutagenesis: Generate single mutants first, then systematically cross them to create double, triple, and higher-order mutants [3]

- Phenotypic Screening: Implement high-throughput phenotyping across multiple environments and developmental stages [6]

- Multi-omics Validation: Integrate transcriptomic, proteomic, and metabolomic data to understand compensatory mechanisms [4]
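The Sequential Mutagenesis step above can be planned by listing every mutant combination before any crosses are made. The following is a minimal Python sketch of that enumeration. The gene names are hypothetical placeholders, and the function only lists genotypes; it does not plan the crosses needed to obtain them.

```python
from itertools import combinations

def mutant_series(genes):
    """Yield (order, genotype) for every single, double, ... full-family mutant."""
    for order in range(1, len(genes) + 1):
        for combo in combinations(genes, order):
            yield order, combo

# Hypothetical three-member gene family.
family = ["geneA", "geneB", "geneC"]
for order, combo in mutant_series(family):
    print(order, "/".join(combo))
```

For a family of n genes this gives 2^n - 1 genotypes, so larger families usually need a prioritized subset rather than the full series.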

Systems Genetics Approach for Polygenic Trait Dissection

Methodology Details:

- Population Design: Use advanced intercross lines (AIL) or other mapping populations to enhance recombination and mapping resolution [5]

- Multi-Omics Data Collection: Generate transcriptomic, proteomic, and metabolomic datasets from relevant tissues [4]

- Molecular QTL Mapping: Identify loci controlling molecular traits (eQTLs, pQTLs) and relate them to clinical/physiological traits [4] [5]

- Causal Inference Testing: Use mediation analysis and Mendelian randomization to establish causal relationships [4]

- Network Integration: Build networks connecting DNA variation to molecular and physiological traits [4]
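The Causal Inference Testing step can be illustrated with a simple product-of-coefficients mediation model, in which a genotype affects a trait partly through a molecular mediator such as a transcript level. The sketch below is a simplified illustration in plain Python, not the method of the cited studies. The function names and the toy data are assumptions, and it reports point estimates only; a real analysis would add significance testing (for example, by bootstrapping) and covariates.

```python
from statistics import mean

def slope(y, x):
    """Ordinary least-squares slope of y on a single predictor x."""
    mx, my = mean(x), mean(y)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    sxx = sum((xi - mx) ** 2 for xi in x)
    return sxy / sxx

def residuals(y, x):
    """Residuals of y after regressing out x (with intercept)."""
    b, mx, my = slope(y, x), mean(x), mean(y)
    return [yi - (my + b * (xi - mx)) for xi, yi in zip(x, y)]

def mediation(genotype, transcript, trait):
    """Split the genotype effect on a trait into direct and mediated parts."""
    a = slope(transcript, genotype)  # genotype -> mediator
    # Mediator -> trait, adjusted for genotype (partial regression slope).
    b = slope(residuals(trait, genotype), residuals(transcript, genotype))
    total = slope(trait, genotype)
    indirect = a * b
    return {"total": total, "indirect": indirect, "direct": total - indirect}

# Toy data (hypothetical): the trait depends on genotype only via the transcript.
genotype = [0, 0, 1, 1, 2, 2]
transcript = [-1, 1, 1, 3, 3, 5]
trait = [3 * t for t in transcript]
print(mediation(genotype, transcript, trait))
```

When the indirect effect accounts for most of the total effect, the data are consistent with the molecular trait mediating the genetic effect, although mediation alone does not rule out confounding.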

The Scientist's Toolkit: Essential Research Reagents

Key Research Reagent Solutions

| Reagent/Category | Function in Research | Application Notes |

|---|---|---|

| High-fidelity polymerases (e.g., Q5) | Accurate amplification with low error rates | Essential for mutagenesis; reduces background mutations [8] [9] |

| CRISPR-Cas9 systems | Targeted genome editing | Sequential mutagenesis of redundant gene families [6] [3] |

| Diverse genetic backgrounds | Context for gene function analysis | Reveals phenotypic effects masked in single backgrounds [4] |

| Methylation-sensitive enzymes | Epigenomic analysis | Identifies regulatory variants in non-coding regions [4] |

| Competent cell strains (recA-) | Stable plasmid propagation | Prevents recombination; maintains construct integrity [8] |

| Phosphatases/kinases (e.g., T4 PNK) | DNA end modification | Controls ligation efficiency; critical for cloning [8] |

| Advanced intercross lines | High-resolution genetic mapping | Enhances recombination; improves QTL mapping precision [5] |

Advanced Technical Notes

Interpreting Negative Results in Genetic Screens

When single-gene mutations produce no observable phenotype, consider these investigative steps before concluding genetic redundancy:

- Verify mutant generation: Confirm frameshift mutations and protein truncation through sequencing [7]

- Assess compensatory regulation: Check for upregulated expression of homologous genes via qRT-PCR [3]

- Test condition-specific phenotypes: Challenge mutants with environmental stressors, pathogens, or dietary variations [3]

- Quantitative phenotyping: Implement sensitive measurements that may detect subtle phenotypic changes [5]
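The compensatory-regulation check by qRT-PCR is commonly quantified with the 2^-ΔΔCt method. The sketch below shows that arithmetic in Python. It assumes roughly 100% amplification efficiency for both the homolog and the reference gene, and the Ct values in the example are hypothetical.

```python
def fold_change(ct_target_mut, ct_ref_mut, ct_target_wt, ct_ref_wt):
    """Relative expression of a homolog in mutant vs. wild type (2^-ddCt)."""
    delta_mut = ct_target_mut - ct_ref_mut  # normalize to reference gene in mutant
    delta_wt = ct_target_wt - ct_ref_wt     # normalize to reference gene in wild type
    return 2 ** -(delta_mut - delta_wt)

# Hypothetical Ct values: the homolog crosses threshold two cycles earlier in the mutant.
print(fold_change(22, 18, 24, 18))
```

A fold change well above 1 for a homolog in the mutant background would point toward compensation rather than a true absence of function.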

Integrating Functional Genomics Data

Modern approaches to studying redundant systems require multi-layered data integration [4] [5]:

- Combine genomic, transcriptomic, and proteomic datasets to identify compensation mechanisms

- Use mediation analysis to determine if molecular traits (e.g., transcript levels) mediate genetic effects on complex traits

- Apply network models to identify hub genes and functional modules within redundant systems

The continued development of sequential mutagenesis strategies, coupled with systems genetics approaches, provides powerful frameworks for dissecting the contributions of redundant genes to polygenic traits, ultimately enabling more effective strategies for complex trait improvement in eukaryotic organisms.

The Limitation of Single-Gene Editing and the Case for Sequential Approaches

Troubleshooting Guides

Guide 1: Addressing Incomplete Phenotypes After Single-Gene Knockout

Problem: After a successful single-gene knockout, the expected strong phenotypic change is not observed, or the phenotype is weaker than anticipated.

Explanation: This is a common indication that the trait you are studying is complex and polygenic, meaning it is influenced by multiple genes. Knocking out a single gene may not be sufficient to cause a strong phenotype due to genetic redundancy or compensatory mechanisms within the biological network [4].

Solution: Employ a sequential mutagenesis strategy.

- Confirm Knockout: First, validate that your initial knockout was successful at both the genomic (e.g., via Sanger sequencing and ICE analysis) and protein levels (e.g., via western blot) [10].

- Identify Candidate Genes: Use systems genetics data (e.g., from transcriptomics or proteomics studies) to identify other genes that are co-expressed or function in the same pathway as your initial target [4].

- Sequential Editing: Design gRNAs for these additional candidate genes. Introduce these edits sequentially into your already-modified cell line or organism.

- Phenotypic Re-assessment: After each sequential edit, re-evaluate the phenotype to determine if the desired complex trait is progressively enhanced.

Guide 2: Managing Structural Variations and Genomic Instability

Problem: CRISPR editing, especially when using strategies to enhance homology-directed repair (HDR), can lead to large, unintended structural variations (SVs) like megabase-scale deletions or chromosomal translocations, which compromise genomic integrity [11].

Explanation: Double-strand breaks (DSBs) induced by CRISPR-Cas9 can be misrepaired by cellular mechanisms. The use of certain HDR-enhancing agents, such as DNA-PKcs inhibitors, can drastically increase the frequency of these dangerous SVs [11].

Solution: Adopt safer editing practices and rigorous validation.

- Avoid High-Risk Enhancers: Be cautious when using DNA-PKcs inhibitors (e.g., AZD7648) to promote HDR, as they are strongly linked to increased genomic aberrations [11].

- Use High-Fidelity Cas9 Variants: Utilize engineered Cas9 proteins like eSpCas9(1.1) or SpCas9-HF1 to reduce off-target activity [12] [11].

- Long-Range Genotyping: Do not rely solely on short-read amplicon sequencing, which can miss large deletions. Use methods like CAST-Seq or LAM-HTGTS that are capable of detecting SVs to fully validate your edited lines [11].

Frequently Asked Questions (FAQs)

FAQ 1: Why would I use sequential editing instead of a multiplexed approach where I edit all genes at once?

While multiplexing can save time, it can also overwhelm the cellular repair machinery and increase the risk of complex genomic rearrangements and cell death [11]. A sequential approach allows you to:

- Monitor phenotypic changes at each step.

- Identify which genetic combination yields the optimal trait.

- Reduce cellular stress by introducing one genetic perturbation at a time, which is crucial for studying subtle, polygenic traits [4].

FAQ 2: My single-gene knockout was successful, but western blot shows a truncated protein is still being expressed. What happened?

This often occurs because the guide RNA was designed to target an exon that is not present in all protein-coding isoforms of your gene [10]. Due to alternative splicing, a truncated but still functional protein isoform may be expressed.

- Solution: Redesign your gRNA to target an early exon that is common to all prominent isoforms of the gene to ensure a complete knockout [10].
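Choosing an exon shared by all isoforms is a set-intersection problem once the exon composition of each isoform is known. The sketch below is one way to express it in Python. The isoform and exon identifiers are hypothetical, and "early" is defined here simply by the exon order of the first isoform listed; a real design would also take each isoform's coding start into account.

```python
def shared_early_exon(isoforms):
    """Return the earliest exon present in every isoform, or None.

    `isoforms` maps an isoform name to its exon IDs in 5'->3' order.
    Exon order is taken from the first isoform in the mapping.
    """
    common = set.intersection(*(set(exons) for exons in isoforms.values()))
    reference = next(iter(isoforms.values()))
    for exon in reference:
        if exon in common:
            return exon
    return None

# Hypothetical annotation: exon E1 is skipped or replaced in two isoforms.
isoforms = {
    "iso1": ["E1", "E2", "E3", "E4"],
    "iso2": ["E2", "E3", "E4"],
    "iso3": ["E1b", "E2", "E4"],
}
print(shared_early_exon(isoforms))
```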

FAQ 3: What are the key limitations of single-gene editing when studying complex traits?

The primary limitations are:

- Genetic Redundancy: Multiple genes can perform overlapping functions. Disrupting one may not cause a phenotype.

- Modifier Genes: The effect of a mutation can be strongly influenced by the genetic background. A knockout in one strain may have a different phenotype in another [4].

- Network Effects: Biological systems are highly interconnected. A single perturbation can be buffered by the network.

- Oversimplification: Complex traits like yield, stress resistance, or disease susceptibility are controlled by many genes, making single-gene edits insufficient [4].

FAQ 4: How can systems genetics inform a sequential editing strategy?

Systems genetics integrates data on natural genetic variation with intermediate molecular phenotypes (e.g., RNA, protein levels) [4]. This allows you to:

- Identify Causal Genes: Pinpoint which genes in a locus are actually driving a trait.

- Reveal Networks: Discover entire pathways and networks of genes that co-vary with your trait of interest.

- Prioritize Targets: Generate a ranked list of the most promising genes to target sequentially for complex trait improvement.

Quantitative Data on Editing Outcomes and Risks

The table below summarizes key quantitative findings on CRISPR editing outcomes, which are critical for planning sequential experiments.

| Editing Parameter | Reported Value or Frequency | Context and Implications |

|---|---|---|

| Nonsense Mutation Prevalence | ~30% of rare diseases [13] | Highlights a large patient population that could benefit from a universal editing approach like PERT, which uses a prime editor to install a suppressor tRNA. |

| Large Structural Variations (SVs) | Kilobase- to megabase-scale deletions [11] | A critical safety risk; frequency can be increased by using DNA-PKcs inhibitors. |

| Impact of DNA-PKcs Inhibitors | Up to thousand-fold increase in translocation frequency [11] | These HDR-enhancing compounds can severely aggravate genomic aberrations. |

| Therapeutic Protein Restoration | 20-70% of normal enzyme activity (cell models); ~6% (mouse model) [13] | Even low levels of restored protein function can be sufficient to alleviate disease symptoms. |

Experimental Protocol for a Sequential Mutagenesis Workflow

This protocol outlines a general workflow for sequentially introducing multiple edits to study a complex trait.

1. Target Identification and gRNA Design:

- Identify Gene Network: Use systems genetics resources (e.g., gene co-expression networks from databases like GTEx for humans or BXD panels for mice) to define a list of candidate genes involved in your complex trait [4].

- Design gRNAs: For each candidate gene, design highly specific gRNAs using online design tools.

2. Initial Cell Line Modification and Validation:

- Transfection/Electroporation: Introduce the CRISPR components (Cas9 + gRNA #1) into your target cells using an appropriate method (e.g., electroporation for immune cells, lipofection for immortalized lines) [10] [14].

- Clonal Isolation: After editing, use limiting dilution or FACS to isolate single cells and expand them into clonal populations [10].

- Genotypic Validation: Genotype clonal lines using Sanger sequencing and a tool like ICE to confirm the intended edit and ensure a bi-allelic knockout [10].

- Phenotypic Baseline: Establish a baseline measurement of your target complex trait (e.g., growth rate, metabolite production, stress resistance).

3. Sequential Editing and Phenotyping:

- Repeat Transfection: Using the validated clone from the previous step, introduce the CRISPR components for the second target gene (gRNA #2).

- Isolate and Validate: Again, isolate clonal lines and confirm the presence of the new edit via genotyping.

- Intermediate Phenotyping: Re-measure your complex trait. This step helps you understand the additive or synergistic contribution of each gene.

4. Final Validation and Safety Check:

- Off-Target Screening: For your final, multi-gene edited line, perform a genome-wide method to check for off-target mutations.

- Structural Variation Screening: Use long-read sequencing or specialized assays (e.g., CAST-Seq) to check for large, unintended deletions or rearrangements, especially if HDR-enhancing chemicals were used [11].

- Functional Assay: Conduct a definitive functional assay to confirm that the combined edits have successfully and robustly enhanced the complex trait.
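The Intermediate Phenotyping step asks whether each added edit contributes additively or synergistically. One simple way to frame this is to compare the double mutant with the sum of the single-mutant effects. The sketch below does that in Python under an additive model on the measured scale. The phenotype values are hypothetical, and a multiplicative model or a log-transformed scale may be more appropriate for some traits.

```python
def interaction(wt, single_a, single_b, double):
    """Deviation of the double mutant from the additive expectation.

    Zero means the two edits combine additively; a non-zero value
    indicates an interaction (synergy or buffering, depending on sign
    and on the direction of the trait).
    """
    expected = wt + (single_a - wt) + (single_b - wt)
    return double - expected

# Hypothetical trait values: each single edit has a small effect,
# but the double mutant drops far more than the sum of the two.
print(interaction(wt=100, single_a=90, single_b=85, double=40))
```

Replicate measurements and a statistical test on the deviation would be needed before calling an interaction real.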

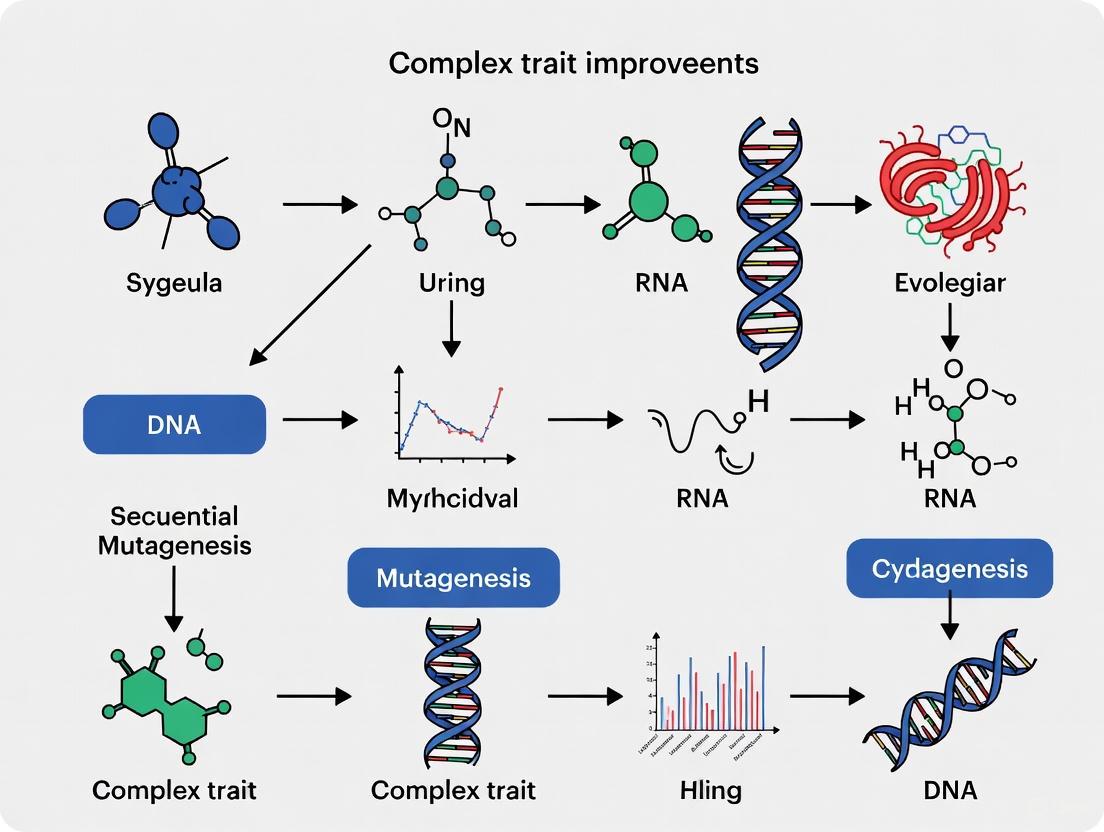

Experimental Workflow and Biological Network Diagrams

Sequential Mutagenesis Workflow

Single-Gene vs. Sequential Editing Outcomes

Research Reagent Solutions

The table below lists key reagents and their applications for sequential editing workflows.

| Reagent / Tool | Function in Sequential Editing |

|---|---|

| High-Fidelity Cas9 (e.g., SpCas9-HF1) | Reduces off-target effects during each editing round, crucial for maintaining genomic integrity in multi-gene edits [12] [11]. |

| Prime Editor (for PERT approach) | Installs a universal suppressor tRNA to overcome nonsense mutations across many genes, a disease-agnostic strategy [13]. |

| DNA-PKcs Inhibitor (e.g., AZD7648) | Use with Caution. Enhances HDR but can drastically increase structural variations and translocations [11]. |

| AAV or Lentiviral Vectors | Delivery of CRISPR components; note AAV has limited capacity, which may require split systems or smaller Cas proteins [14]. |

| CAST-Seq Assay | A specialized method for detecting structural variations and chromosomal translocations in edited cells, essential for final safety validation [11]. |

| Systems Genetics Datasets (e.g., GTEx, BXD) | Provides unbiased data to identify networks of candidate genes for sequential targeting, moving beyond single-gene hypotheses [4]. |

FAQs: Understanding Functional Redundancy in MLO Genes

What is functional redundancy in gene families and why does it complicate research? Functional redundancy occurs when multiple genes in a genome perform the same or overlapping functions, so that disrupting a single gene has minimal phenotypic impact because other genes can compensate. This is particularly common in gene families that arose through duplication events. In MLO gene families, this means that mutating a single MLO gene often fails to confer desired traits like powdery mildew resistance because paralogous genes maintain the susceptibility function [15] [16].

Which MLO genes typically show functional redundancy across species? Research across multiple plant species has consistently identified redundancy among specific clades of MLO genes. In Arabidopsis, three clade V genes (AtMLO2, AtMLO6, and AtMLO12) show functional redundancy in powdery mildew susceptibility, requiring triple mutants for complete resistance [17] [16]. Similarly, in grapevine, VvMLO3, 4, 13, and 17 demonstrate overlapping functions, with quadruple mutants needed for near-complete resistance [18]. This pattern persists in strawberry, where multiple FaMLO orthologs must be targeted [16].

What are the most effective strategies to overcome MLO redundancy? Sequential or simultaneous targeting of multiple redundant genes has proven most effective. This can be achieved through:

- Higher-order mutagenesis: Creating double, triple, or quadruple mutants using conventional breeding or crosses between single mutants [18]

- CRISPR-Cas9 with multiple gRNAs: Using systems that target several redundant paralogs simultaneously [18]

- TILLING populations: Screening large mutant libraries for individuals with mutations in multiple target genes [19]

- Virus-Induced Gene Silencing: Temporarily knocking down multiple gene family members [20]

Troubleshooting Guide: Common Experimental Challenges

Problem: Incomplete phenotypic effect after targeting a single MLO gene

Solution: Identify and co-target redundant paralogs through phylogenetic analysis. Members of the same phylogenetic clade often share redundant functions. For powdery mildew susceptibility in dicots, focus on clade V genes and target all members within this clade [16] [18].

Problem: Pleiotropic effects when targeting multiple MLO genes

Solution: Implement tissue-specific or inducible CRISPR/Cas9 systems to limit editing to specific tissues or developmental stages. Alternatively, screen for edited lines with minimal off-target effects and normal growth phenotypes, as editing efficiency varies between guide RNAs [18].

Problem: Difficulty identifying all redundant family members in non-model species

Solution: Conduct comprehensive genome-wide identification using conserved MLO domains (PF03094) and phylogenetic analysis with related species. In octoploid strawberry, 68 MLO genes were identified across 28 chromosomes, requiring systematic characterization [16].

Experimental Protocols & Data

Table 1: MLO Family Size and Redundant Members Across Plant Species

| Species | Total MLO Genes | Redundant Susceptibility Genes | References |

|---|---|---|---|

| Arabidopsis thaliana | 15 | AtMLO2, AtMLO6, AtMLO12 | [17] [16] |

| Rice (Oryza sativa) | 12 | OsMLO1, OsMLO3, OsMLO8 (diurnal expression) | [17] |

| Grapevine (Vitis vinifera) | 17+ | VvMLO3, VvMLO4, VvMLO13, VvMLO17 | [18] |

| Strawberry (Fragaria × ananassa) | 68 | 12 FaMLO orthologs of FveMLO10, 17, 20 | [16] |

| Legumes (various species) | 13-20 | Clade V members across species | [21] |

Table 2: Efficiency of Higher-Order MLO Mutants in Powdery Mildew Resistance

| Species | Genotype | Infection Reduction | Pleiotropic Effects | References |

|---|---|---|---|---|

| Grapevine | Single mutants (mlo3, mlo4, mlo13, mlo17) | 8-50% | Minimal | [18] |

| Grapevine | Double mutants (mlo3/4, mlo3/13, mlo13/17) | 60-90% | Variable | [18] |

| Grapevine | Triple mutant (mlo3/13/17) | ~90% | More pronounced | [18] |

| Grapevine | Quadruple mutant (mlo3/4/13/17) | Near complete resistance | Significant pleiotropy | [18] |

| Arabidopsis | Single mutant (Atmlo2) | Partial resistance | Minimal | [16] |

| Arabidopsis | Triple mutant (Atmlo2/6/12) | Complete resistance | Some developmental effects | [17] [16] |

Protocol 1: Identification of Redundant MLO Family Members

- Genome-wide identification: Use known MLO protein sequences (e.g., AtMLO1: AT4G02600) as BLAST queries against your target genome [16]

- Phylogenetic analysis: Construct a neighbor-joining tree with MEGA5, including MLOs from related species [17]

- Clade assignment: Group sequences into phylogenetic clades (I-VIII), noting that powdery mildew susceptibility genes typically cluster in clade IV (monocots) or V (dicots) [16] [21]

- Expression analysis: Integrate tissue-specific expression data to identify genes with overlapping expression patterns that may function redundantly [17]

- Synteny analysis: Check for conserved genomic blocks containing potential redundant paralogs [21]

Protocol 2: Designing CRISPR/Cas9 Systems for Multiple MLO Targeting

- Guide RNA design: Create gRNAs targeting conserved regions across redundant MLO genes

- Multiplex vector construction: Assemble several gRNAs into one construct for Cas9, or use an alternative system such as CRISPR/Cas12a, for efficient editing of multiple targets [19]

- Transformation and screening: Identify lines with mutations in all target genes through sequencing

- Phenotypic analysis: Assess both desired traits (e.g., disease resistance) and potential pleiotropic effects [18]

- Segregation: Backcross to eliminate off-target mutations and separate transgene from edited loci [18]
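The guide RNA design step above relies on finding target sites that are conserved across paralogs. The sketch below illustrates the idea in Python by collecting 20-nt protospacers followed by an NGG PAM (the SpCas9 PAM) and intersecting them across sequences. It is a deliberately minimal illustration: it scans only the given strand, requires exact 20-nt identity, and does no off-target or efficiency scoring. The sequences and gene names are made up.

```python
import re

def protospacers(seq, length=20):
    """Return all `length`-nt protospacers followed by an NGG PAM on this strand."""
    seq = seq.upper()
    # A lookahead keeps overlapping candidate sites from being skipped.
    pattern = r"(?=([ACGT]{%d})[ACGT]GG)" % length
    return {m.group(1) for m in re.finditer(pattern, seq)}

def shared_protospacers(paralogs, length=20):
    """Protospacers found in every sequence of `paralogs` (name -> sequence)."""
    sets = [protospacers(s, length) for s in paralogs.values()]
    return set.intersection(*sets)

# Hypothetical paralog fragments sharing one conserved target site.
site = "ACGTACGTACGTACGTACGT"
paralogs = {
    "mloA": "TT" + site + "TGG" + "AAAA",
    "mloB": "GGCC" + site + "AGG" + "TT",
}
print(shared_protospacers(paralogs))
```

A single guide matching all paralogs reduces construct complexity, but where no shared site exists, separate guides per paralog are needed.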

Research Reagent Solutions

Table 3: Essential Research Reagents for MLO Redundancy Studies

| Reagent/Tool | Function/Application | Examples/Specifications |

|---|---|---|

| CRISPR-Cas Systems | Simultaneous targeting of multiple redundant genes | Cas9, Cas12a for multiplex editing [18] |

| TILLING Populations | Reverse genetics screening for multiple mutations | EMS-mutagenized libraries [19] |

| Phylogenetic Analysis Tools | Identifying redundant paralogs in gene families | MEGA5, ClustalX [17] |

| Virus-Induced Gene Silencing (VIGS) | Transient knockdown of multiple gene family members | TRV-based vectors [20] |

| RNAi Constructs | Stable silencing of redundant gene subsets | Hairpin vectors targeting conserved domains [16] |

| Multiplex gRNA Vectors | Targeting several MLO paralogs simultaneously | Golden Gate or tRNA-based systems [18] |

MLO Gene Redundancy Conceptual Framework

[Figure not shown: MLO Redundancy Workflow]

Sequential Mutagenesis Experimental Design

[Figure not shown: Sequential Mutagenesis Design]

Frequently Asked Questions

Q1: What is the primary advantage of combinatorial mutagenesis over single-point mutagenesis? Combinatorial mutagenesis allows you to test multiple user-defined mutations at defined positions in a single experiment. This is crucial for evaluating epistasis (gene interactions), recapitulating processes like antibody affinity maturation, and combining beneficial mutations from directed evolution campaigns into a single library. It moves beyond studying mutations in isolation to understanding their combined effects [22].

Q2: My combinatorial library has a high percentage of wild-type sequences. What is the most likely cause? High wild-type carry-over is often due to inefficient oligonucleotide incorporation during the synthesis step. This can be caused by primers with an excessive number of mismatches to the template or insufficient homology arms. Ensure your mutagenic oligonucleotides are designed with ~30bp homology arms where possible and limit the number of mismatches per primer to maintain even mutation incorporation [22].

Q3: What is the practical limit on the number of positions I can mutate in a single combinatorial library? The described nicking mutagenesis protocol is empirically limited to mutating about eight different positions using a single parental plasmid. For libraries with more positions (up to 14 have been demonstrated), you must use two different parental plasmids (e.g., Sequence A as starting, Sequence B with the complete set of mutations) and perform sequential rounds of nicking mutagenesis [22].
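The position limits above translate directly into library size, which in turn sets how many transformants are needed. The sketch below works through that arithmetic in Python. It assumes every variant is equally represented in the library, which real libraries only approximate, and the choice of two options per position (parental or mutant) is an illustrative assumption.

```python
from math import ceil, log, prod

def library_size(options_per_position):
    """Number of distinct variants given the option count at each position."""
    return prod(options_per_position)

def clones_for_coverage(size, probability=0.95):
    """Clones needed so that any given variant is sampled with `probability`,
    assuming all variants are equally likely."""
    return ceil(log(1 - probability) / log(1 - 1 / size))

# Two options (parental or mutant) at 8 and at 14 positions.
for n_positions in (8, 14):
    size = library_size([2] * n_positions)
    print(n_positions, size, clones_for_coverage(size))
```

The required number of clones grows roughly in proportion to library size, so each added position about doubles the screening effort in this two-option case.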

Q4: How do I choose between a vector-based genomic library and a transposon mutagenesis approach?

- Choose genomic vector libraries (like SCALEs or CoGEL) when you want to identify genes or multi-gene fragments that confer a trait through overexpression. This is ideal for finding genes that improve tolerance to stress or restore metabolic function [23].

- Choose transposon mutagenesis (like Tn-Seq) when you need to study gene function through disruption or knockout. This is widely used to assess gene fitness under different media conditions, study pathogenesis, or biofilm formation [23].

Q5: How can I map genotype to phenotype for complex traits that involve many genes? Complex traits are best studied using a systems genetics approach that combines both forward and reverse genetics. Forward genetics starts with a variable phenotype to identify upstream causal genetic variants. Reverse genetics starts with a gene of interest to determine its downstream phenotypic impact. Using Genetic Reference Populations (GRPs) allows for the high-resolution mapping of these complex interactions in a controlled setting [24].

Troubleshooting Guides

Issue 1: Low Library Diversity or Incomplete Coverage

Problem: Your synthesized library does not contain the full spectrum of planned variants, missing many potential combinations.

| Possible Cause | Diagnostic Questions | Solution |

|---|---|---|

| Inefficient primer annealing [22] | Are mutations that lie <30 bp apart split across separate primers? Do primers have long homology arms (ideally 30 bp)? | Redesign primers to have 30 bp homology arms. Group close-together mutations into a single oligonucleotide. |

| Low oligonucleotide-to-template ratio [22] | What molar ratio of primers to template was used? | Use a 5:1 molar ratio of mutagenic oligonucleotides to ssDNA template to ensure multiple primers anneal simultaneously. |

| Using a single parental plasmid for large libraries [22] | Are you mutating more than 8 positions? | For libraries with >8 mutated positions, use two parental plasmids and perform two sequential rounds of nicking mutagenesis. |

Issue 2: Poor Transformation Efficiency After Library Synthesis

Problem: After the mutagenesis reaction, you get very few colonies upon transforming the library into your bacterial host.

| Possible Cause | Diagnostic Questions | Solution |

|---|---|---|

| Incomplete template degradation [22] | Was the nicking enzyme step performed correctly? | Ensure the plasmid template is freshly prepared from a dam+ bacterial strain. Confirm that all BbvCI sites in the plasmid are in the same orientation for efficient nicking. |

| Toxicity of the mutated sequences | Could some combinatorial variants be toxic to the host cells? | Use a tightly inducible promoter to control expression of your library until screening. Consider using a different bacterial strain. |

| Carryover of nicking enzymes or exonucleases [22] | Was a cleanup step (e.g., AMPure XP beads) performed post-synthesis? | Always include a post-reaction cleanup step, such as using AMPure XP beads, to purify the synthesized dsDNA plasmid before transformation. |

Issue 3: High False Discovery Rate in Target Identification

Problem: Targets identified in pre-clinical models (cells, animal models) fail to show efficacy in later-stage experiments or human trials.

| Possible Cause | Diagnostic Questions | Solution |

|---|---|---|

| Poor external validity of pre-clinical models [25] | Are you relying solely on 2D cell cultures or animal models? | Transition to Complex In Vitro Models (CIVMs) like organoids or organ-on-a-chip technology. These 3D models better mimic human in vivo conditions and improve predictive accuracy [26]. |

| Inherently high false discovery rate (FDR) in pre-clinical science [25] | What false-positive rate (α) and power (1-β) is your study designed for? | Increase statistical rigor: use a more stringent false-positive rate (e.g., α < 0.01) and ensure high statistical power through larger sample sizes to reduce FDR. |

| Ignoring human genomic evidence [25] | Are you using human genomics for target validation? | Use human genome-wide association studies (GWAS) for primary target identification. Genetic evidence in humans is a stronger predictor of clinical success because it mimics the randomized design of a randomized controlled trial (RCT). |
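The link between the false-positive rate (α), power (1-β), and the false discovery rate can be made concrete with a short calculation. The sketch below uses the standard relationship FDR = α(1-π) / (α(1-π) + (1-β)π), where π is the fraction of tested hypotheses that are truly positive. The value of π used here is a hypothetical illustration, not a figure from the cited work.

```python
def false_discovery_rate(alpha, power, prior):
    """Expected share of 'significant' findings that are false positives.

    alpha: false-positive rate per test
    power: probability of detecting a true effect (1 - beta)
    prior: fraction of tested hypotheses that are truly positive
    """
    false_pos = alpha * (1 - prior)
    true_pos = power * prior
    return false_pos / (false_pos + true_pos)

# Hypothetical setting: 10% of candidate targets are real, 80% power.
for alpha in (0.05, 0.01):
    print(alpha, round(false_discovery_rate(alpha, power=0.8, prior=0.1), 3))
```

Lowering α or raising power both reduce the FDR, and the effect is largest when true targets are rare among the candidates tested.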

Experimental Protocols

Protocol 1: Combinatorial Mutagenesis via Nicking Mutagenesis

This protocol is for generating a combinatorial library with user-defined mutations at multiple positions, adapted from an established method [22].

1. Preparation of Parental DNA Plasmid(s)

- Template Requirement: The parental plasmid(s) must contain a BbvCI nicking site (CCTCAGC for Nt.BbvCI; GCTGAGG for Nb.BbvCI). If needed, add this site via site-directed mutagenesis. Multiple sites are acceptable only if all are in the same orientation [22].

- Template Preparation: Isolate plasmid from a dam+ bacterial strain using a commercial miniprep kit. You will need 0.76 pmol (typically 2–3 μg) of dsDNA plasmid for each parental sequence. Using freshly prepared template is critical for success [22].
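The two template requirements above can be checked in silico before starting. The sketch below counts BbvCI motifs on the top strand of a circular plasmid, treating CCTCAGC and GCTGAGG as the two orientations, and converts 0.76 pmol into micrograms. The function names are hypothetical, and the 650 g/mol per base pair figure is a common approximation for dsDNA, not a value taken from the protocol.

```python
NT_SITE = "CCTCAGC"  # motif listed for Nt.BbvCI in the protocol
NB_SITE = "GCTGAGG"  # reverse complement, listed for Nb.BbvCI


def bbvci_orientations(plasmid: str) -> dict:
    """Count BbvCI motifs in each orientation on a circular plasmid."""
    seq = plasmid.upper()
    # Append the first bases so that sites spanning the origin are found
    circ = seq + seq[: len(NT_SITE) - 1]

    def count(site: str) -> int:
        return sum(1 for i in range(len(seq)) if circ.startswith(site, i))

    return {"forward": count(NT_SITE), "reverse": count(NB_SITE)}


def sites_usable(plasmid: str) -> bool:
    """True if at least one site exists and all sites share one orientation."""
    c = bbvci_orientations(plasmid)
    has_site = c["forward"] + c["reverse"] >= 1
    return has_site and (c["forward"] == 0 or c["reverse"] == 0)


def plasmid_mass_ug(pmol: float, length_bp: int,
                    g_per_mol_per_bp: float = 650.0) -> float:
    """Approximate dsDNA mass in micrograms (650 g/mol/bp is an assumption)."""
    return pmol * 1e-12 * length_bp * g_per_mol_per_bp * 1e6


if __name__ == "__main__":
    print(round(plasmid_mass_ug(0.76, 5000), 2), "ug for a 5 kb plasmid")
```

For a 5 kb plasmid this gives roughly 2.5 μg, which falls in the 2–3 μg range quoted above.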

2. Design of Mutagenic Oligonucleotides

- Identify Mutations: Align sequences to identify all codon positions to be varied.

- Primer Design Rules:

- Group residues that are <30bp apart into a single oligonucleotide.

- For residues ≥30bp apart, use separate primers.

- Design primers with ~30bp homology arms on each side of the mutagenic site.

- Encode diversity using degenerate codons (e.g., NNK) that include both the parental and the desired mutant residues.

- The total oligo length should not exceed 100 nucleotides [22].
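The grouping and length rules above can be sketched as a small planning helper. This is only an illustration: it assumes that "distance apart" is measured between codon start coordinates (0-based, on the template), that each oligo carries 30 nt arms on both sides, and the function names are hypothetical.

```python
def group_mutation_sites(codon_starts, max_gap=30):
    """Group codon start positions that lie <max_gap bp from the previous site."""
    groups = []
    for pos in sorted(codon_starts):
        if groups and pos - groups[-1][-1] < max_gap:
            groups[-1].append(pos)   # close enough: same oligo
        else:
            groups.append([pos])     # >= max_gap away: new oligo
    return groups


def plan_oligos(codon_starts, arm=30, max_len=100, codon_len=3):
    """Return start/end/length for each oligo and flag those over max_len."""
    plans = []
    for group in group_mutation_sites(codon_starts):
        start = group[0] - arm
        end = group[-1] + codon_len + arm   # exclusive end coordinate
        length = end - start
        plans.append({"sites": group, "start": start, "end": end,
                      "length": length, "ok": length <= max_len})
    return plans


if __name__ == "__main__":
    for plan in plan_oligos([120, 135, 300, 345, 360]):
        print(plan)
```

A chain of closely spaced sites can push a single oligo past 100 nt. In that case the `ok` flag is False and the design needs manual adjustment, for example shorter arms or splitting the group.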

3. Nicking Mutagenesis Reaction

- Phosphorylation: In a PCR tube, combine mutagenic primers and a single non-mutagenic control primer with T4 Polynucleotide Kinase (PNK) in 1x PNK buffer with ATP. Incubate at 37°C for 30 minutes, then heat-inactivate at 65°C for 20 minutes [22].

- Annealing and Synthesis:

- To the phosphorylated oligos, add ssDNA template, Taq DNA ligase buffer, DpnI enzyme, dNTPs, NAD+, and nicking enzymes (Nb.BbvCI and Nt.BbvCI).

- Run the following thermal cycler program:

- 95°C for 2 min (denaturation)

- Ramp down to 58°C over 10 min

- 58°C for 5 min (annealing)

- Ramp down to 45°C over 10 min

- 45°C for 90 min (synthesis/ligation)

- 37°C for 90 min (nicking/degradation)

- 80°C for 20 min (heat inactivation) [22]

- Cleanup: Purify the synthesized dsDNA using a PCR cleanup kit (e.g., Monarch PCR & DNA Cleanup Kit) [22].

4. Transformation and Library Validation

- Transformation: Transform the purified DNA into high-efficiency electrocompetent E. coli (e.g., XL1-Blue). Plate on large (245 mm x 245 mm) bioassay dishes with selective antibiotic [22].

- Validation: Isolate plasmid from multiple colonies and sequence the mutated regions to confirm library diversity and evenness of mutation incorporation.
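When planning the transformation scale, it helps to estimate the theoretical library size and the number of transformants needed to sample it. The sketch below assumes every variant is equally represented and that sampling is approximately Poisson, so the chance of seeing a given variant among N transformants is 1 - exp(-N / library size). Real libraries are usually biased, so treat the result as a lower bound.

```python
import math

NNK_CODONS = 4 * 4 * 2  # N = A/C/G/T, K = G/T, giving 32 codons per NNK site


def library_size(codons_per_site):
    """Theoretical number of DNA variants for independent degenerate sites."""
    return math.prod(codons_per_site)


def transformants_needed(size, completeness=0.95):
    """Transformants required so a given variant is sampled with the stated
    probability, assuming equal representation and Poisson sampling."""
    return math.ceil(-size * math.log(1.0 - completeness))


if __name__ == "__main__":
    size = library_size([NNK_CODONS] * 3)   # three NNK positions
    print(size, transformants_needed(size))
```

For 95% completeness this works out to roughly three times the library size, which is one reason large bioassay dishes are used for plating.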

Protocol 2: Genomic Vector Library Enrichment (SCALEs)

This method identifies genes or gene fragments that confer a desired phenotype through overexpression [23].

1. Library Construction

- Fragmentation: Purify genomic DNA from your organism of interest and fragment it physically or enzymatically.

- Cloning: Clone the fragmented DNA into a suitable plasmid backbone.

- Transformation: Transform the library into a host strain to create a pool of variants.

2. Selection and Enrichment

- Apply Selective Pressure: Grow the library under the condition of interest (e.g., presence of an antimicrobial, specific carbon source).

- Harvest Enriched Variants: Isolate plasmids from the population that survives or grows best under selection.

3. Identification of Enriched Fragments

- Sequence: Identify the inserted genomic fragments in the enriched pool using next-generation sequencing.

- Microarray: Alternatively, identify fragments by hybridizing them to a whole-genome microarray [23].
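As a rough illustration of step 3, enrichment can be expressed as the log2 ratio of a fragment's relative abundance after selection to its abundance before selection. The sketch below is a simplified read-count comparison with a pseudocount; it is not the published SCALEs analysis, and the fragment identifiers and counts are hypothetical.

```python
import math


def log2_enrichment(before, after, pseudocount=1.0):
    """Log2 fold change in relative abundance for each fragment.

    before/after: dicts mapping fragment ID -> read count.
    A pseudocount avoids division by zero for fragments absent in one pool.
    """
    ids = set(before) | set(after)
    total_before = sum(before.values()) + pseudocount * len(ids)
    total_after = sum(after.values()) + pseudocount * len(ids)
    scores = {}
    for frag in ids:
        freq_before = (before.get(frag, 0) + pseudocount) / total_before
        freq_after = (after.get(frag, 0) + pseudocount) / total_after
        scores[frag] = math.log2(freq_after / freq_before)
    return scores


if __name__ == "__main__":
    pre = {"fragA": 100, "fragB": 100}    # hypothetical counts
    post = {"fragA": 300, "fragB": 100}
    for frag, score in sorted(log2_enrichment(pre, post).items()):
        print(frag, round(score, 2))
```

Fragments with positive scores increased in relative abundance under selection and are candidates for follow-up.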

The Scientist's Toolkit: Research Reagent Solutions

| Reagent / Material | Function in Experiment |

|---|---|

| Plasmid with BbvCI site [22] | Serves as the template for nicking mutagenesis. The nicking enzyme site is essential for degrading the parental strand. |

| Mutagenic Oligonucleotides [22] | Designed with degenerate bases to encode the desired combinatorial mutations. They anneal to the template and serve as primers for new strand synthesis. |

| Nicking Enzymes (Nt.BbvCI, Nb.BbvCI) [22] | Create single-strand nicks in the parental DNA template at specific sites, enabling its selective degradation. |

| Taq DNA Ligase [22] | Joins the newly synthesized DNA fragments, creating a closed circular dsDNA plasmid. |

| Exonuclease III [22] | Degrades the nicked parental DNA strand after nicking enzyme treatment, leaving the newly synthesized mutagenic strand intact. |

| Electrocompetent E. coli (e.g., XL1-Blue) [22] | Used for high-efficiency transformation of the synthesized mutagenic library to amplify the variant pool. |

| Complex In Vitro Models (CIVMs) [26] | Advanced 3D cell models (e.g., organoids, organ-on-a-chip) that provide a more physiologically relevant context for screening variants or validating targets than 2D cultures. |

| Genetic Reference Populations (GRPs) [24] | Populations of genetically unique but reproducible individuals (e.g., BXD mice) used for high-resolution mapping of complex traits. |

Workflow & Concept Diagrams

Diagram 1: From Gene Duplication to Trait Stacking

Diagram 2: Combinatorial Mutagenesis Workflow

Diagram 3: Mutagenic Primer Design

Core Concept Definitions

Multiplex Editing is an advanced genome engineering approach that enables the simultaneous targeting of multiple genes, regulatory elements, or chromosomal regions in a single transformation event. This CRISPR-Cas based technology is particularly effective for dissecting gene family functions, addressing genetic redundancy, engineering polygenic traits, and accelerating trait stacking. Its applications now extend beyond standard gene knockouts to include epigenetic and transcriptional regulation, chromosomal engineering, and transgene-free editing [27] [28].

Combinatorial Mutagenesis refers to the systematic creation and analysis of multiple genetic perturbations in combination. This approach is essential for understanding complex trait architecture where phenotypes emerge from interactions between multiple genes. It allows researchers to explore epistatic relationships and identify synthetic lethal interactions that would be missed through single-gene approaches [27] [29].

De Novo Domestication is a novel crop breeding strategy that involves selecting elite foundation materials from wild or semi-wild plant species and rapidly introducing domestication-related traits using genetic tools while retaining their desirable wild features. This approach creates new crops with beneficial traits compared to current cultivars and is particularly valuable for incorporating climate resilience and sustainability traits from wild relatives [30] [31].

Technical Support & Troubleshooting Guides

Frequently Asked Questions

Q: What are the main technical challenges in implementing multiplex editing workflows? A: The primary challenges include complex construct design, genetic instability of repetitive elements in bacterial intermediates, somatic chimerism, and the need for robust, scalable mutation detection methods. For polyploid species, the challenge is compounded by the need to edit multiple homologous copies [27].

Q: How can we minimize off-target effects in multiplex CRISPR editing? A: Using Cas9 nickases that create single-strand breaks rather than double-strand breaks significantly reduces off-target effects. Programming two nickases to target opposite DNA strands mediates efficient on-target editing with minimal off-target activity [28].

Q: What strategies exist for achieving high-efficiency multiplex editing in plants with long generation times? A: Focus on optimizing vector architecture through promoter and scaffold engineering. Experimentally validated inducible or tissue-specific promoters are highly desirable for achieving spatiotemporal control. Additionally, leveraging high-throughput sequencing technologies, including long-read platforms, improves resolution of complex editing outcomes [27].

Q: How can we overcome linkage drag when introducing beneficial traits from wild relatives? A: Genome editing provides a solution by enabling precise introduction of specific alleles without associated deleterious genes. An alternative strategy is to engineer meiotic recombination by increasing recombination events and altering their genomic locations through temperature control, epigenetic factors, or regulating genes that control meiotic recombination [32].

Q: What are the practical limits for the number of simultaneous targets in multiplex editing? A: While efficiency varies by system, studies have successfully demonstrated 10-plex gene editing in mammalian cell lines using modular assembly methods. The practical limit depends on the delivery system, cellular repair mechanisms, and the specific CRISPR platform employed [28].
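One way to reason about practical limits is to estimate how often all targets are edited in the same line. The sketch below multiplies per-target efficiencies, which assumes that editing events at different loci are independent. That is a simplifying assumption, since edits within one cell are often correlated. It then estimates how many independent lines must be screened to recover at least one fully edited line.

```python
import math


def prob_all_edited(efficiencies):
    """Probability that every target is edited, assuming independence."""
    return math.prod(efficiencies)


def lines_to_screen(p_success, confidence=0.95):
    """Lines needed to find at least one success with the given confidence."""
    if not 0.0 < p_success < 1.0:
        raise ValueError("p_success must be strictly between 0 and 1")
    return math.ceil(math.log(1.0 - confidence) / math.log(1.0 - p_success))


if __name__ == "__main__":
    p = prob_all_edited([0.8] * 10)   # ten targets at 80% each (illustrative)
    print(f"P(all edited) = {p:.3f}; screen about {lines_to_screen(p)} lines")
```

Even with 80% efficiency per target, only about one line in ten is expected to carry all ten edits under this model, so screening capacity becomes a limiting factor.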

Troubleshooting Common Experimental Issues

| Problem | Possible Causes | Solutions |

|---|---|---|

| Low editing efficiency across multiple targets | gRNA design issues, inefficient delivery, nuclease exhaustion | Use optimized gRNA scaffolds; validate gRNA efficiency individually; consider Cas9 protein or mRNA delivery |

| Somatic chimerism in primary transformations | Incomplete editing in early cell divisions | Conduct sequential regeneration; use tissue-specific promoters; advance generations through selfing |

| Unexpected structural variations | Simultaneous DSBs at repetitive or tandemly spaced loci | Incorporate long-read sequencing in genotyping; increase distance between target sites |

| Bacterial instability during vector assembly | Repetitive elements in gRNA expression cassettes | Use heterogeneous promoters; incorporate tRNA or ribozyme sequences between gRNAs |

| Inconsistent phenotypes despite confirmed edits | Genetic compensation, epistatic interactions | Create multiple independent lines; conduct complementation tests; analyze intermediate generations |

Experimental Protocols & Methodologies

Multiplex Editing Workflow for Polygenic Trait Engineering

Key Methodology: High-Efficiency Multiplex Vector Construction

The Golden Gate assembly method enables efficient construction of multiplex CRISPR cassettes. This protocol utilizes type IIS restriction enzymes that cut outside their recognition sequences, allowing for seamless assembly of multiple gRNA expression units [28] [33].

Step-by-Step Protocol:

- gRNA Design: Select 20-nt target sequences with high on-target efficiency scores and minimal off-target potential. Include appropriate PAM sequences for your Cas nuclease (e.g., NGG for SpCas9).

- Oligo Design: Design complementary oligonucleotides with 5' and 3' overhangs compatible with your Golden Gate assembly system.

- Assembly Reaction: Set up Golden Gate reaction with BsaI-HFv2 or similar type IIS enzyme, T4 DNA ligase, and assembled fragments.

- Vector Construction: Clone the assembled gRNA array into your destination vector containing Cas9 expression cassette.

- Validation: Verify construct by Sanger sequencing across all junctions and restriction digest analysis.

Critical Notes: Use heterogeneous Pol III promoters (e.g., U6, U3) or incorporate self-cleaving elements (tRNA, ribozymes) between gRNAs to prevent recombination in bacterial hosts [27].
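The first step of the protocol, locating 20-nt targets next to an NGG PAM, can be sketched as a simple sequence scan. This minimal example only enumerates candidate sites on both strands; it does not compute on-target or off-target scores, which would require a dedicated design tool. The function names are hypothetical.

```python
import re

_COMPLEMENT = str.maketrans("ACGT", "TGCA")


def revcomp(seq: str) -> str:
    """Reverse complement of an uppercase DNA string."""
    return seq.translate(_COMPLEMENT)[::-1]


def find_spcas9_targets(seq: str):
    """List (strand, start, protospacer, PAM) for every 20-nt + NGG site.

    Start positions on the '-' strand refer to the reverse-complemented
    sequence, not to the input coordinates.
    """
    seq = seq.upper()
    # Lookahead allows overlapping candidate sites to be reported
    pattern = re.compile(r"(?=([ACGT]{20})([ACGT]GG))")
    hits = []
    for strand, s in (("+", seq), ("-", revcomp(seq))):
        for m in pattern.finditer(s):
            hits.append((strand, m.start(), m.group(1), m.group(2)))
    return hits


if __name__ == "__main__":
    for hit in find_spcas9_targets("GATTACAGATTACAGATTACTGGAAAA"):
        print(hit)
```

Candidates returned by such a scan would still need to be ranked with an efficiency and specificity predictor before ordering oligos.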

De Novo Domestication Protocol for Wild Species

Research Reagent Solutions

| Reagent Type | Specific Examples | Function & Application Notes |

|---|---|---|

| Cas Nucleases | SpCas9, LbCas12a, Cas9 nickases | SpCas9 most widely validated; Cas12a processes crRNA arrays natively; nickases reduce off-targets [28] |

| gRNA Expression Systems | Pol III promoters (U6, U3), tRNA-gRNA, ribozyme-gRNA | Heterogeneous promoters prevent recombination; tRNA and ribozyme systems enable polycistronic processing [27] |

| Assembly Systems | Golden Gate, PCR-on-ligation | Golden Gate most widely used for multiplex constructs; PCR-on-ligation enables modular assembly [33] |

| Delivery Vectors | Lentiviral, Agrobacterium, particle bombardment | Choice depends on host system; Agrobacterium most common for plants [27] [28] |

| Detection Tools | Long-read sequencers, amplicon sequencing, ddPCR | Long-read platforms essential for detecting structural variations [27] |

Advanced Applications & Integration Frameworks

Machine Learning-Assisted Combinatorial Mutagenesis

Recent advances in machine learning-assisted directed evolution (MLDE) have demonstrated improved efficiency in identifying high-fitness protein variants across diverse combinatorial landscapes. The most significant advantages are observed on landscapes that are challenging for conventional directed evolution, particularly when focused training is combined with active learning [29].

Implementation Framework:

- Landscape Analysis: Quantify navigability using multiple attributes including epistasis, ruggedness, and neutrality.

- Strategy Selection: Choose appropriate MLDE strategy based on landscape characteristics.

- Focused Training: Combine zero-shot predictors leveraging evolutionary, structural, and stability knowledge.

- Active Learning: Iteratively refine models with experimental data.
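For the landscape analysis step, pairwise epistasis is commonly quantified as the deviation of the double mutant from the additive expectation: ε = f_AB - f_A - f_B + f_WT. The sketch below applies this definition and assumes fitness values are on a scale where independent effects add, such as log-transformed fitness. The classification labels are illustrative.

```python
def pairwise_epistasis(f_wt: float, f_a: float, f_b: float, f_ab: float) -> float:
    """Deviation of the double mutant from the additive expectation."""
    return f_ab - f_a - f_b + f_wt


def classify_epistasis(f_wt, f_a, f_b, f_ab, tol=1e-9):
    """Label the interaction as additive, positive, or negative."""
    eps = pairwise_epistasis(f_wt, f_a, f_b, f_ab)
    if abs(eps) <= tol:
        return "additive"
    return "positive" if eps > 0 else "negative"


if __name__ == "__main__":
    # Hypothetical log-fitness values relative to wild type
    print(classify_epistasis(0.0, 1.0, 0.5, 1.5))   # matches additive expectation
    print(classify_epistasis(0.0, 1.0, 0.5, 2.0))   # better than expected
    print(classify_epistasis(0.0, 1.0, 0.5, 0.2))   # worse than expected
```

Averaging the magnitude of ε over many site pairs gives one simple summary of how strongly a landscape departs from additivity.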

Integration with Omics and AI Technologies

The integration of genome editing with omics technologies, artificial intelligence, and robotics is creating powerful new paradigms for crop improvement. AI-driven decision support systems can analyze high-throughput omics and phenomics data to prioritize targets for multiplex editing, while robotics enables automated workflow implementation [32].

Quantitative Data & Performance Metrics

Multiplex Editing Efficiency Across Systems

| Species/System | Target Number | gRNA Architecture | Efficiency Range | Key Factors |

|---|---|---|---|---|

| Arabidopsis thaliana | 3-12 genes | Individual Pol III, tRNA | 0-94% | gRNA design, target accessibility [27] |

| Human cell lines | Up to 10 targets | Golden Gate assembly | Variable by target | Delivery efficiency, nuclease concentration [28] |

| Cucumis sativus | 3 genes | tRNA processing | High for disease resistance | Selection strategy, regeneration protocol [27] |

| Tomato de novo domestication | Multiple loci | CRISPR-Cas9 | Successful trait integration | Knowledge of domestication genes [31] |

De Novo Domestication Timeframe Comparison

| Approach | Traditional Breeding | Genome Editing | Key Advantages |

|---|---|---|---|

| Time to new cultivar | Decades | Years to decades | Knowledge-based, precise [31] |

| Trait integration | Limited by reproductive barriers | Overcomes species barriers | Access to diverse gene pools |

| Genetic load | Linkage drag inevitable | Minimal linkage drag | Precision editing |

| Regulatory path | Established but lengthy | Evolving framework | Potential for streamlined approval |

Advanced Toolkits: Methodologies and Real-World Applications of Sequential Mutagenesis

Multiplex CRISPR-Cas systems represent a transformative approach in genome engineering, enabling researchers to perform simultaneous edits at multiple genetic loci. For scientists investigating complex traits—often governed by polygenic networks and requiring sequential mutagenesis—these technologies provide an essential tool for sophisticated genetic manipulation. Unlike single-guide systems, multiplexed configurations allow for coordinated gene knockouts, large chromosomal deletions, and combinatorial genetic perturbations that can unravel complex genetic interactions and accelerate trait improvement strategies [34] [35].

The core advantage of multiplex CRISPR lies in its ability to express numerous guide RNAs (gRNAs) alongside CRISPR-associated (Cas) proteins, facilitating parallel targeting of multiple genomic sites [34]. This capability is particularly valuable for metabolic pathway engineering, functional genomic screening, and modeling complex diseases where multiple genetic elements interact to produce phenotypic outcomes [35] [28]. As these technologies advance, they offer unprecedented opportunities for analyzing and improving complex traits through systematic, multi-locus genome modifications.

Technical Guide: gRNA Architectures for Multiplex Editing

gRNA Expression and Processing Architectures

Implementing effective multiplex CRISPR editing requires selecting appropriate genetic architectures for gRNA expression and processing. The table below summarizes the primary strategies developed for this purpose:

Table 1: gRNA Expression Architectures for Multiplex CRISPR Systems

| Architecture | Mechanism | Key Features | Organisms Demonstrated | Key References |

|---|---|---|---|---|

| Individual Promoters | Each gRNA expressed from separate Pol III promoters (U6, tRNA) | High fidelity, simpler cloning but limited scalability | Mammalian cells, yeast, plants | [34] [36] |

| Native CRISPR Array Processing | gRNAs processed from single transcript by Cas proteins (Cas12a) or accessory factors (tracrRNA/RNase III) | Leverages natural processing; efficient for large arrays | Human cells, plants, yeast, bacteria | [34] |

| Ribozyme Processing | gRNAs flanked by self-cleaving Hammerhead and hepatitis delta virus ribozymes | Compatible with Pol II/III transcription; modular | Multiple organisms | [34] |

| Csy4 Processing | gRNAs separated by Csy4 endonuclease recognition sites | High processing efficiency; requires Csy4 co-expression | Mammalian cells, yeast, bacteria | [34] |

| tRNA Processing | gRNAs flanked by pre-tRNA sequences processed by RNases P and Z | Uses endogenous tRNA processing; no additional enzymes needed | Human cells, plants, citrus | [34] [36] |

Figure 1: gRNA Expression and Processing Workflow. This diagram illustrates the two-stage process for generating functional gRNAs in multiplexed systems: transcription from Pol II or Pol III promoters, followed by processing via various mechanisms to yield individual guide RNAs.

Vector Assembly Methods for Multiplex Constructs

Constructing vectors capable of expressing multiple gRNAs presents technical challenges due to repetitive sequences. The following table compares common assembly methods:

Table 2: Vector Assembly Methods for Multiplex CRISPR Systems

| Method | Principle | Maximum gRNAs Demonstrated | Advantages | Limitations |

|---|---|---|---|---|

| Golden Gate Assembly | Type IIS restriction enzymes create unique overhangs for directional assembly | 7-10 gRNAs | Modular, efficient, directional cloning | Requires specialized vectors and enzymes |

| Gibson Assembly | Isothermal assembly using 5' exonuclease and DNA polymerase | Varies | No restriction sites needed; seamless | Potential incorrect assemblies with repeats |

| PCR-on-Ligation | Combinatorial PCR assembly of gRNA modules | 10 gRNAs | High multiplexing capacity | Complex optimization required |

Golden Gate assembly has emerged as a particularly efficient method for constructing multiplex CRISPR vectors. Sakuma et al. demonstrated the assembly of a single CRISPR-Cas9 cassette with seven gRNAs using this approach [35] [28]. Further optimization by Zuckermann et al. enabled 10-plex gene editing in HEK293T cells through a "PCR-on-ligation" step that allows modular assembly of multiple gRNAs [35] [28].

Experimental Protocols

All-in-One Vector Construction for Multiplex Editing

The following protocol describes the creation of all-in-one vectors for multiplex genome engineering, based on the system developed by Sakuma et al. (2014) [37]:

Materials:

- pX330 or similar CRISPR vector backbone

- BpiI restriction enzyme (Thermo Scientific)

- Quick ligase (New England Biolabs)

- Oligonucleotides for gRNA target sequences

- Competent E. coli cells

Method:

- Design and anneal oligonucleotides: Synthesize sense and antisense oligonucleotides for each target site. Anneal in buffer containing 40 mM Tris-HCl (pH 8.0), 20 mM MgCl₂, and 50 mM NaCl.

- Initial cloning: Insert annealed oligonucleotides into individual pX330A/S vectors using BpiI digestion and ligation in a single-tube reaction.

- Golden Gate assembly: Assemble multiple gRNA expression cassettes using Golden Gate cloning with BsaI restriction sites.

- Screen clones: Identify correctly assembled clones by colony PCR.

- Verify constructs: Sequence final all-in-one vectors using high-quality plasmid DNA. Add DMSO to sequencing reactions (5% final concentration) to improve results when encountering difficult sequences [38].

This system has been validated for simultaneous targeting of up to seven genomic loci in human cells with efficiencies comparable to single gRNA vectors [37].

tRNA-gRNA Array System for Plant Genome Editing

For plant systems, tRNA-gRNA arrays have proven particularly effective. The following protocol is adapted from studies in citrus and oilseed rape [36] [39]:

Materials:

- Plant codon-optimized Cas9 (zCas9i for citrus)

- UBQ10 or RPS5a promoter for Cas9 expression

- Pol III promoters (U6-26) or Pol II promoters (UBQ10, ES8Z) for gRNA arrays

- Arabidopsis thaliana tRNA sequences (GCC anticodon)

- Agrobacterium tumefaciens strain EHA105 for plant transformation

Method:

- Design tRNA-gRNA array: Synthesize arrays of sgRNAs separated by tRNA sequences (e.g., Arabidopsis thaliana tRNA with GCC anticodon).

- Clone into binary vector: Insert the tRNA-gRNA array and Cas9 expression cassette into binary vectors using Golden Gate cloning.

- Transform plants: For citrus, use epicotyls from etiolated seedlings for Agrobacterium-mediated transformation with appropriate selection.

- Screen mutants: Use polyacrylamide gel electrophoresis (PAGE) based screening to identify mutations. In oilseed rape, plants with obvious heteroduplexed PAGE bands showed 96.8-100% editing frequency versus 0-60.8% in those without clear bands [39].

Promoter Selection: Optimal promoter combinations significantly enhance editing efficiency. In citrus, the Arabidopsis UBQ10 or RPS5a promoters driving zCas9i, combined with Pol III promoters or the ES8Z Pol II promoter for gRNA arrays, achieved efficient multiplex editing [36].

Troubleshooting Guide

Common Experimental Challenges and Solutions

Table 3: Troubleshooting Multiplex CRISPR Experiments

| Problem | Possible Causes | Recommended Solutions | Supporting References |

|---|---|---|---|

| Low editing efficiency | Poor gRNA expression; insufficient Cas9; inaccessible chromatin | Optimize promoter choice; use intron-containing Cas9 variants; apply heat stress to improve chromatin accessibility | [36] [38] |

| No cleavage bands detected | Transfection efficiency too low; nucleases cannot access target | Optimize transfection protocol; design new targeting strategy at nearby sequences; use kit control templates to verify components | [38] |

| Unintended mutations (off-target effects) | gRNA homology with non-target sites; high nuclease concentration | Use double nickase strategy (Cas9 D10A mutant); design gRNAs with minimal off-target potential; validate with Genomic Cleavage Detection Kit | [40] [41] |

| PCR artifacts in cleavage detection | Lysate too concentrated; GC-rich regions | Dilute lysate 2-4 fold; add GC enhancer (1-10 μL in 50 μL reaction); redesign primers for 18-22 bp, 45-60% GC content | [38] |

| Vector assembly failures | Oligos designed incorrectly; repetitive sequence recombination | Verify cloning overhangs (CACC on 5' end, AAAC on 3' end); use different promoters for each gRNA; apply Gibson or Golden Gate assembly | [38] [35] |

FAQs: Addressing Key Technical Questions

Q1: Should I use wildtype Cas9 or double nickase for multiplex experiments?

A1: The choice depends on your priority. Wildtype Cas9 with optimized chimeric gRNA typically shows high efficiency but potentially higher off-target effects. The double nickase system (using Cas9 D10A mutant) requires two gRNAs per target but demonstrates comparable efficiency with significantly reduced off-target effects. For multiplex applications where specificity is crucial, the double nickase approach is recommended [41].

Q2: How should I design oligos for cloning into CRISPR vectors?

A2: When using vectors with U6 promoters, add a 'G' nucleotide to the 5' end of the target sequence if it does not already start with G, because the U6 promoter initiates transcription most efficiently at a G. Do not include the PAM (NGG) sequence in the oligo: it must be present in the genomic target but not in the oligo itself. Standard oligo design should include the appropriate overhangs (e.g., CACC on the 5' end for top strand) for directional cloning [41].
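The oligo rules in A2 can be sketched as a short helper. It takes a 20-nt protospacer without the PAM, prepends a G when needed, and adds cloning overhangs. The CACC top-strand overhang comes from the answer above. The AAAC overhang on the 5' end of the bottom-strand oligo is an assumption based on common type IIS cloning vectors, so check it against your vector's manual.

```python
_COMPLEMENT = str.maketrans("ACGT", "TGCA")


def revcomp(seq: str) -> str:
    """Reverse complement of an uppercase DNA string."""
    return seq.translate(_COMPLEMENT)[::-1]


def design_guide_oligos(protospacer: str, add_g: bool = True):
    """Return (top, bottom) oligos for a 20-nt protospacer given WITHOUT its PAM.

    Overhangs: CACC (top strand, from the text) and AAAC (bottom strand,
    an assumption that depends on the vector in use).
    """
    p = protospacer.upper()
    if len(p) != 20 or set(p) - set("ACGT"):
        raise ValueError("expected a 20-nt A/C/G/T protospacer without PAM")
    # Prepend G only if the guide does not already start with one
    insert = p if (p.startswith("G") or not add_g) else "G" + p
    top = "CACC" + insert
    bottom = "AAAC" + revcomp(insert)
    return top, bottom


if __name__ == "__main__":
    top, bottom = design_guide_oligos("ATTACAGATTACAGATTACA")
    print("top:   ", top)
    print("bottom:", bottom)
```

The function cannot tell whether a PAM was accidentally included in the input, so that check remains with the designer.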

Q3: What are the key considerations for homologous recombination templates?

A3: For small changes (<50 bp), use single-stranded DNA oligos with 50-80 bp homology arms. For larger insertions (>100 bp), use plasmid donors with ~800 bp homology arms. Critical: mutate the PAM sequence in the HR template (e.g., change NGG to NGT) to prevent Cas9 cleavage of the donor DNA. The double-strand break should be within 10 bp of the desired modification for optimal efficiency [41].
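The donor design rules in A3 can be sketched as simple checks. The example assumes a guide on the top strand, 0-based coordinates, and SpCas9 cutting 3 bp upstream of the PAM. The function names are hypothetical. Edit sizes between 50 and 100 bp are reported as unspecified because the guidance above does not cover them.

```python
def choose_donor(edit_len_bp: int) -> str:
    """Donor type suggested by the size thresholds in the text."""
    if edit_len_bp < 50:
        return "ssODN"        # 50-80 bp homology arms
    if edit_len_bp > 100:
        return "plasmid"      # ~800 bp homology arms
    return "unspecified"      # range not covered by the guidance


def cut_index(pam_start: int) -> int:
    """Index of the first base 3' of the cut (top-strand guide assumed)."""
    return pam_start - 3


def edit_within_window(pam_start: int, edit_pos: int, max_dist: int = 10) -> bool:
    """True if the planned edit lies within max_dist bp of the cut site."""
    return abs(edit_pos - cut_index(pam_start)) <= max_dist


def mutate_pam(donor: str, pam_start: int) -> str:
    """Change NGG to NGT in the donor so Cas9 no longer recognizes it."""
    donor = donor.upper()
    if donor[pam_start + 1:pam_start + 3] != "GG":
        raise ValueError("no NGG PAM at the given position")
    return donor[:pam_start + 2] + "T" + donor[pam_start + 3:]


if __name__ == "__main__":
    print(choose_donor(12), edit_within_window(30, 25))
    print(mutate_pam("AAAATGGCCCC", 4))
```

If the PAM lies in a coding region, the substitution should also be checked to confirm it is silent. This sketch does not perform that check.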

Q4: How can I achieve single-allelic editing when targeting both alleles?

A4: Even when the target sequence is present in both alleles, it is possible to obtain single-allelic edits. After CRISPR treatment and single-cell cloning, genotype individual colonies. Single-allelic modifications typically comprise the majority of edited cells unless targeting efficiency is exceptionally high [41].

Research Reagent Solutions

Table 4: Essential Reagents for Multiplex CRISPR Research

| Reagent Category | Specific Examples | Function & Application Notes | Key References |

|---|---|---|---|

| Cas9 Variants | SpCas9, SaCas9, FnCas9, dCas9, Cas9 nickase (D10A) | Nucleases with different PAM requirements; dCas9 for transcriptional control; nickase for reduced off-targets | [40] [41] |

| Promoters for gRNAs | U6 (Pol III), tRNA (Pol III), ES8Z (Pol II) | Drive gRNA expression; Pol III for high fidelity, Pol II for flexibility and inducibility | [34] [36] |

| Promoters for Cas9 | UBQ10, RPS5a, 35S | Constitutive high-expression promoters for Cas9 in plants; species-specific optimization needed | [36] |

| Processing Systems | tRNA-Gly, Csy4, Ribozymes (HH/HDV), Cas12a | Process polycistronic gRNA arrays into individual functional gRNAs | [34] [36] |

| Assembly Systems | Golden Gate MoClo toolkit, Gibson Assembly | Modular cloning systems for efficient vector construction | [36] [35] |

| Detection Kits | Genomic Cleavage Detection Kit | Verify cleavage efficiency and detect mutations at endogenous loci | [38] |

Figure 2: Multiplex CRISPR Experimental Workflow and Optimization Points. This diagram outlines the key stages in implementing multiplex CRISPR systems, highlighting critical optimization points that significantly impact experimental success.

Multiplex CRISPR-Cas systems have revolutionized approaches to complex trait improvement by enabling simultaneous, coordinated genetic modifications. The gRNA architectures and vector design strategies detailed in this technical resource provide scientists with robust frameworks for implementing these powerful tools in their research. As the field advances, further optimization of promoter systems, processing efficiency, and delivery methods will continue to enhance the precision and scalability of multiplex genome editing.

For researchers investigating polygenic traits, these technologies offer unprecedented opportunities to model and engineer complex genetic networks. By applying the troubleshooting guidelines and experimental protocols outlined here, scientists can overcome common technical challenges and leverage multiplex CRISPR systems to accelerate discoveries in functional genomics and trait improvement research.

Sequential mutagenesis, the process of introducing multiple genetic alterations in a stepwise manner, is a powerful technique for studying complex biological processes such as cancer evolution and organismal development, and for engineering crops with improved traits [19] [42]. The ability to precisely control the order of genetic events is crucial, as certain phenotypes only manifest with specific temporal sequences of mutations [42]. This technical support center provides detailed protocols and troubleshooting guides for three methods (LFEAP, OE-PCR, and Gibson Assembly) that enable researchers to make large and multiple genetic changes efficiently.

The table below summarizes the core characteristics, advantages, and limitations of each mutagenesis strategy.

Table 1: Comparison of Mutagenesis Strategies for Large and Multiple Changes

| Method | Key Principle | Best For | Maximum Simultaneous Changes Demonstrated | Key Advantage | Primary Limitation |

|---|---|---|---|---|---|

| LFEAP Mutagenesis [43] | Ligation of Fragment Ends After PCR; uses inverse PCR and sticky-end assembly. | Introducing multiple point mutations, insertions, and deletions in large plasmids. | 15 changes in a single reaction [43] | High efficiency and fidelity for complex, multi-site alterations. | Requires multiple PCR and enzymatic steps. |

| Overlap Extension PCR (OE-PCR) [44] | Gene fusion by splicing DNA fragments with overlapping ends. | Fusing multiple DNA fragments or introducing mutations via PCR. | Varies with template difficulty; long/multi-fragment PCR can be inefficient. | No restriction enzymes required; can assemble multiple fragments. | Low efficiency for long genes and multi-fragment fusion. |

| Gibson Assembly [45] | Single-tube, isothermal reaction using exonuclease, polymerase, and ligase. | Seamless assembly of multiple DNA fragments (e.g., plasmid construction, CRISPR vectors). | Up to 6 fragments in a single reaction [45] | Seamless, flexible, and fast assembly of multiple fragments without scarring. | Optimal overlap length must be carefully designed (20-40 bp). |

The following diagram illustrates the core workflow for the LFEAP mutagenesis method:

Detailed Experimental Protocols

LFEAP Mutagenesis Protocol

The LFEAP method is highly versatile for introducing a wide array of mutations into plasmid DNA [43].

- Primer Design: For each mutation site, design four primers. Forward Primer 1 (Fw1) and Reverse Primer 1 (Rv1) should flank the "overhang" region and contain the desired mutations at their 5' ends. Forward Primer 2 (Fw2) and Reverse Primer 2 (Rv2) are designed to have additional overhang sequences (6-10 nucleotides are optimal [43]) at their 5' ends.

- First-Round PCR: Perform an inverse PCR on the target plasmid using the Fw1 and Rv1 primer pair. This generates linearized DNA fragments containing the desired mutations. Use a high-fidelity DNA polymerase to minimize errors.

- Product Purification: Gel purify the PCR products from the first round to remove primers and the original template.

- Second-Round PCR: Use the gel-purified DNA from the previous step as the template in two separate single-primer PCRs. One reaction uses only Fw2, and the other uses only Rv2. This generates complementary single-stranded DNA fragments with the designed 5' overhangs.

- Phosphorylation and Annealing: Treat the second-round PCR products with Polynucleotide Kinase (PNK) to ensure the 5' ends are phosphorylated. Subsequently, mix and anneal the complementary single-stranded DNA fragments to form double-stranded DNA with compatible sticky ends.

- Ligation and Transformation: Ligate the annealed products using DNA ligase to form a circular, mutagenized plasmid. Transform the ligation reaction into competent E. coli cells and screen colonies for the desired mutations.
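Because every PCR step in this protocol depends on primer quality, a quick automated check of the template-annealing portion of each primer can catch problems early. The sketch below applies the general primer guidelines from the troubleshooting table in this guide (15-30 bases, 40-60% GC, Tm values within 5°C). It estimates Tm with the Wallace rule, Tm = 2(A+T) + 4(G+C), which is a rough approximation chosen here for simplicity and not a method specified by the LFEAP protocol.

```python
def gc_percent(primer: str) -> float:
    """Percentage of G and C bases in the primer."""
    p = primer.upper()
    return 100.0 * (p.count("G") + p.count("C")) / len(p)


def wallace_tm(primer: str) -> int:
    """Rough melting temperature in °C (Wallace rule, an approximation)."""
    p = primer.upper()
    return 2 * (p.count("A") + p.count("T")) + 4 * (p.count("G") + p.count("C"))


def check_primer_pair(fwd: str, rev: str):
    """Return a list of guideline violations; an empty list means none found."""
    issues = []
    for name, primer in (("forward", fwd), ("reverse", rev)):
        if not 15 <= len(primer) <= 30:
            issues.append(f"{name}: length {len(primer)} outside 15-30 nt")
        gc = gc_percent(primer)
        if not 40.0 <= gc <= 60.0:
            issues.append(f"{name}: GC {gc:.0f}% outside 40-60%")
    if abs(wallace_tm(fwd) - wallace_tm(rev)) > 5:
        issues.append("Tm difference between primers exceeds 5 °C")
    return issues


if __name__ == "__main__":
    print(check_primer_pair("ATGCATGCATGCATGCATGC", "GCTAGCTAGCTAGCTAGCTA"))
    print(check_primer_pair("ATATATATAT", "GCTAGCTAGCTAGCTAGCTA"))
```

For primers that carry mutations or added overhangs, pass only the portion that anneals to the template, since the non-annealing tail does not contribute to the initial priming.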

Gibson Assembly Protocol

Gibson Assembly is a popular method for seamless DNA assembly, useful for building complex constructs from multiple fragments [45].

- Fragment Preparation: Obtain DNA fragments with 20-40 base pair overlapping ends. The overlap should have a high GC content to promote stable annealing. Fragments can be generated by PCR (using a high-fidelity polymerase) or by restriction enzyme digestion. Linearize your vector via PCR or restriction digest.

- Gibson Reaction Assembly: In a single tube, combine the linearized vector and DNA fragments with the Gibson Assembly master mix, which contains an exonuclease, a DNA polymerase, and a DNA ligase. The typical reaction time is 15-60 minutes at 50°C.

- Transformation and Screening: Transform the entire assembly reaction into high-efficiency competent E. coli cells. Plate on selective media and screen resulting colonies by colony PCR, restriction digest, or sequencing.
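
The overlap requirement in the fragment preparation step can be checked before ordering primers. The sketch below is a minimal illustration, not part of the cited protocol: it finds the sequence shared between the end of one fragment and the start of the next, then tests it against the 20-40 bp length window and a 50°C melting temperature target. The function names and the simple GC-based Tm formula are assumptions chosen for brevity; a nearest-neighbor Tm calculator would be more accurate.

```python
def rough_tm(seq: str) -> float:
    """Coarse GC-based Tm estimate (assumption: adequate only for a first-pass check)."""
    seq = seq.upper()
    gc = seq.count("G") + seq.count("C")
    return 64.9 + 41.0 * (gc - 16.4) / len(seq)


def shared_overlap(upstream: str, downstream: str, max_len: int = 60) -> str:
    """Return the longest suffix of `upstream` that is also a prefix of `downstream`."""
    upstream, downstream = upstream.upper(), downstream.upper()
    for n in range(min(max_len, len(upstream), len(downstream)), 0, -1):
        if upstream[-n:] == downstream[:n]:
            return upstream[-n:]
    return ""


def check_overlap(upstream: str, downstream: str,
                  min_len: int = 20, max_len: int = 40,
                  min_tm: float = 50.0) -> dict:
    """Test one fragment junction against the length and Tm guidelines."""
    overlap = shared_overlap(upstream, downstream)
    return {
        "overlap": overlap,
        "length_ok": min_len <= len(overlap) <= max_len,
        "tm_ok": bool(overlap) and rough_tm(overlap) > min_tm,
    }


# Hypothetical toy fragments sharing a 26-nt junction.
junction = "ATGCGTACCGGATCCGTTAGCAGGCT"
fragment_1 = "TTTTAAAACCCC" + junction
fragment_2 = junction + "GGGGAAAATTTT"
print(check_overlap(fragment_1, fragment_2))
```

Running the check on every adjacent pair, including the two vector junctions, catches overlaps that are too short before any PCR is set up.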

Enhanced OE-PCR with Gibson Assembly Interposition

For difficult overlap extension PCR involving long DNA or multiple fragments, a hybrid approach can significantly improve efficiency [44].

- Fragment Amplification: Amplify each individual DNA fragment via PCR, ensuring they contain overlapping ends with adjacent fragments.

- Gibson Assembly Interposition: Instead of proceeding directly to the fusion PCR, mix the purified fragments in equal proportion and perform a Gibson Assembly reaction. This facilitates the formation of complete gene templates at a moderate temperature.

- Fusion PCR Amplification: Use the assembled mixture from the previous step as a high-quality template for the second round of PCR to amplify the full-length, fused product.

Troubleshooting Guides

Common Issues and Solutions for LFEAP and OE-PCR

Table 2: Troubleshooting LFEAP and Overlap Extension PCR Methods

| Problem | Possible Cause | Solution |
|---|---|---|
| Few or no colonies after transformation. | Inefficient ligation due to short overhangs. | For LFEAP, ensure overhangs are 6-10 nucleotides long for optimal efficiency [43]. |
| Few or no colonies after transformation. | Low purity of DNA fragments. | Gel purify PCR products to remove primers, enzymes, and salts that may inhibit downstream steps [46]. |
| No PCR product in initial amplification. | Suboptimal primer design. | Redesign primers ensuring they are 15-30 bases, have 40-60% GC content, and similar Tm values (within 5°C) [47]. |
| No PCR product in initial amplification. | Complex template (e.g., high GC-content). | Use a PCR additive like DMSO (1-10%), formamide (1.25-10%), or Betaine (0.5-2.5 M) to help denature GC-rich templates [46] [47]. |
| Mutations not present in final construct. | Low-fidelity DNA polymerase. | Use a high-fidelity DNA polymerase to reduce misincorporation of nucleotides [46] [48]. |
| Mutations not present in final construct. | Unbalanced dNTP concentrations. | Ensure equimolar concentrations of dATP, dCTP, dGTP, and dTTP in the PCR [46]. |
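
The primer guidelines in the table (15-30 bases, 40-60% GC, Tm values within 5°C of each other) are easy to check programmatically. The following sketch is illustrative only: the function names, the example primer sequences, and the basic GC-based Tm estimate are assumptions, and a dedicated Tm calculator should be preferred for final designs.

```python
def gc_percent(primer: str) -> float:
    """Percentage of G and C bases in the primer."""
    p = primer.upper()
    return 100.0 * (p.count("G") + p.count("C")) / len(p)


def rough_tm(primer: str) -> float:
    """Coarse GC-based Tm estimate (assumption: first-pass screening only)."""
    p = primer.upper()
    gc = p.count("G") + p.count("C")
    return 64.9 + 41.0 * (gc - 16.4) / len(p)


def check_primer_pair(fw: str, rv: str, max_tm_gap: float = 5.0) -> dict:
    """Apply the length, GC-content, and Tm-matching guidelines to a primer pair."""
    report = {}
    for name, primer in (("fw", fw), ("rv", rv)):
        report[name + "_length_ok"] = 15 <= len(primer) <= 30
        report[name + "_gc_ok"] = 40.0 <= gc_percent(primer) <= 60.0
    report["tm_gap_ok"] = abs(rough_tm(fw) - rough_tm(rv)) <= max_tm_gap
    return report


# Hypothetical primer sequences used only to exercise the checks.
forward = "ATGGCTAGCAAGGACGAGCTG"
reverse = "TTACTTGTACAGCTCGTCCATGC"
print(check_primer_pair(forward, reverse))
```

A pair that fails any check is a candidate for redesign before troubleshooting the PCR conditions themselves.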

Common Issues and Solutions for Gibson Assembly

Table 3: Troubleshooting Gibson Assembly Cloning

| Problem | Possible Cause | Solution |
|---|---|---|
| High background (empty vector). | Incomplete digestion of the vector backbone. | If using a restriction enzyme, confirm digestion is complete by gel electrophoresis. For PCR-linearized vectors, use DpnI treatment to digest the methylated parental template [45]. |
| Incorrect assembly. | Short or misdesigned overlaps. | Design overlaps to be 20-40 bp with a Tm >50°C. Use software to verify design [45]. |
| Low assembly efficiency. | Too many fragments at once. | While up to 6 fragments can be assembled, efficiency may drop. Consider a hierarchical assembly strategy for very complex constructs [45]. |
| Low assembly efficiency. | Incorrect fragment stoichiometry. | Use a molar ratio of 1:1 to 1:3 (vector:insert) for each fragment. Adjust ratios for larger inserts [45]. |
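
The vector:insert molar ratios in the table are specified in moles, while DNA is usually quantified in nanograms. The sketch below converts between the two using the common approximation of 650 Da per base pair of double-stranded DNA. The function names and the example amounts are assumptions for illustration.

```python
DALTONS_PER_BP = 650.0  # average mass of one dsDNA base pair (approximation)


def ng_to_pmol(mass_ng: float, length_bp: int) -> float:
    """Convert a dsDNA mass in ng to pmol for a fragment of the given length."""
    return mass_ng * 1000.0 / (length_bp * DALTONS_PER_BP)


def pmol_to_ng(amount_pmol: float, length_bp: int) -> float:
    """Convert pmol of a dsDNA fragment back to a mass in ng."""
    return amount_pmol * length_bp * DALTONS_PER_BP / 1000.0


def insert_ng_for_ratio(vector_ng: float, vector_bp: int,
                        insert_bp: int, insert_per_vector: float = 2.0) -> float:
    """Mass of insert needed for a 1:N vector:insert molar ratio."""
    vector_pmol = ng_to_pmol(vector_ng, vector_bp)
    return pmol_to_ng(vector_pmol * insert_per_vector, insert_bp)


# Example: 100 ng of a 5,000 bp vector with a 1,000 bp insert at a 1:2 ratio.
print(round(insert_ng_for_ratio(100.0, 5000, 1000, 2.0), 1), "ng of insert")
```

Because the ratio is molar, a short insert needs far less mass than the vector, which is why adding equal nanogram amounts often gives a large excess of insert.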

Frequently Asked Questions (FAQs)

Q1: How do I decide between Golden Gate Assembly and Gibson Assembly for my cloning project?

- Choose Golden Gate Assembly for highly precise, repetitive cloning tasks using type IIS restriction enzymes. Choose Gibson Assembly when you need to seamlessly assemble a larger number of DNA fragments simultaneously or work with fragments that lack convenient restriction sites [45].

Q2: What is the single most critical factor for successful LFEAP mutagenesis?

- The length of the overhang sequence is critical. An overhang of 6-10 nucleotides results in maximum efficiency and fidelity (~100%). Overhangs shorter than 4 nucleotides or longer than 20 nucleotides lead to a significant drop in performance [43].
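
As a design-time guard, the overhang thresholds quoted above can be encoded in a small helper. This is a sketch under stated assumptions: the function name and category labels are hypothetical, and lengths of 4-5 or 11-20 nucleotides are labeled "intermediate" only because the text above does not characterize them.

```python
def classify_overhang(length_nt: int) -> str:
    """Map an LFEAP overhang length to the performance ranges quoted in the text."""
    if length_nt < 1:
        raise ValueError("overhang length must be a positive integer")
    if 6 <= length_nt <= 10:
        return "optimal"
    if length_nt < 4 or length_nt > 20:
        return "poor"
    # Assumption: the source gives no figure for these lengths.
    return "intermediate"


for n in (3, 5, 8, 15, 25):
    print(n, classify_overhang(n))
```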

Q3: My OE-PCR fails for long or multi-fragment assemblies. What can I do?

- Insert a Gibson Assembly step between the two PCR rounds. After amplifying each fragment with overlaps, mix the fragments and run a Gibson Assembly reaction. This facilitates template formation at a moderate temperature, and the assembled product then serves as the template for the final fusion PCR, greatly improving efficiency [44].

Q4: How can I speed up my Gibson Assembly workflow?

- You can shorten the reaction time, use unpurified PCR products directly in the assembly (if yield and specificity are high), or employ a rapid transformation protocol that omits extended heat-shock or recovery steps [45].

The Scientist's Toolkit: Key Research Reagents

Table 4: Essential Reagents for Mutagenesis and Assembly Techniques

| Reagent / Kit | Function | Application Notes |
|---|---|---|
| High-Fidelity DNA Polymerase (e.g., Q5, Platinum SuperFi II) | Amplifies DNA fragments with extremely low error rates. | Essential for all methods to prevent unwanted mutations in the final construct [46] [45]. |
| Gibson Assembly Master Mix | Pre-mixed blend of exonuclease, polymerase, and ligase enzymes. | Simplifies and standardizes the Gibson Assembly protocol for seamless fragment assembly [45]. |
| Polynucleotide Kinase (PNK) | Adds a phosphate group to the 5' end of DNA. | Critical for the LFEAP protocol to ensure the DNA fragments can be ligated [43]. |
| T4 DNA Ligase | Joins DNA fragments by forming phosphodiester bonds. | Used in the final step of LFEAP to circularize the mutagenized plasmid [43]. |
| DpnI Restriction Enzyme | Cleaves methylated DNA. | Used to digest the parental, methylated plasmid template after PCR, reducing background in transformations [45]. |
| One Shot TOP10 Competent E. coli | High-efficiency chemically competent cells. | Used for transforming assembled DNA constructs to obtain a high number of correct clones [45]. |

Troubleshooting Guides & FAQs

FAQ: Core Concepts and Strategic Planning

Q1: What is the primary advantage of using computational design over fully random mutagenesis methods like error-prone PCR?

Synthetic combinatorial libraries limit mutations to defined regions at precise frequencies, unlike conventional methods that incorporate many unwanted background mutations. This focuses diversity on functionally important areas, dramatically reducing the number of non-functional variants and saving significant screening time and cost. [49]

Q2: How do I strategically balance the competing objectives of library quality and novelty?

The OCoM framework explicitly evaluates this trade-off. You can explore this balance by treating library design as a multi-objective optimization problem, using a parameter (λ) to weight the importance of predicted fitness against sequence diversity. This generates a Pareto frontier of optimal solutions where neither quality nor novelty can be improved without compromising the other. [50] [51]
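
The trade-off described above can be illustrated with a toy Pareto analysis. The sketch below is not the OCoM or MODIFY implementation: the candidate libraries, their scores, and the convex weighting `lam * quality + (1 - lam) * novelty` are assumptions chosen to show how a single parameter λ selects different points on the frontier.

```python
from typing import List, Tuple

# (library name, predicted quality, novelty/diversity); all values are hypothetical.
Candidate = Tuple[str, float, float]


def dominates(a: Candidate, b: Candidate) -> bool:
    """True if `a` is at least as good as `b` on both objectives and better on one."""
    return a[1] >= b[1] and a[2] >= b[2] and (a[1] > b[1] or a[2] > b[2])


def pareto_front(candidates: List[Candidate]) -> List[Candidate]:
    """Keep only candidates that no other candidate dominates."""
    return [c for c in candidates if not any(dominates(o, c) for o in candidates)]


def pick_for_lambda(candidates: List[Candidate], lam: float) -> Candidate:
    """Select the candidate maximizing a weighted sum of quality and novelty."""
    return max(candidates, key=lambda c: lam * c[1] + (1.0 - lam) * c[2])


libraries = [
    ("A", 0.9, 0.10),
    ("B", 0.7, 0.50),
    ("C", 0.4, 0.75),
    ("D", 0.5, 0.40),  # dominated by B
    ("E", 0.3, 0.30),  # dominated by B
]

front = pareto_front(libraries)
print([name for name, _, _ in front])
for lam in (0.0, 0.5, 1.0):
    print(lam, pick_for_lambda(front, lam)[0])
```

Sweeping λ from 0 to 1 moves the selection from the most novel library to the highest-quality one, which is how a single weight exposes the frontier to the experimenter.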

Q3: My project involves engineering a new-to-nature enzyme function with no existing fitness data. What is the best "cold-start" approach?

Machine learning algorithms like MODIFY are designed for this "cold-start" challenge. They use pre-trained protein language models to make zero-shot fitness predictions based on evolutionary patterns in natural protein sequences, then co-optimize expected fitness and diversity to design effective starting libraries without requiring experimentally characterized mutants. [51]

Q4: For a typical protein engineering project, what library size is considered manageable and effective?

A manageable library is usually far smaller than the theoretical sequence space, because design focuses on specific regions. For example, one study exploring a 17-residue combinatorial space (theoretically 196,608 variants) successfully identified improved mutants by testing only about 0.08% of the sequence space (152 data points) using machine learning guidance. [52] Commercial synthetic libraries can contain up to 10¹¹ variants when multiple codons are randomized simultaneously. [49]
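
The figures quoted above can be reproduced with a few lines of arithmetic. In the sketch below, the per-position option counts are an assumption: sixteen two-way positions and one three-way position is simply one factorization that yields 196,608, not the study's stated design.

```python
import math

# Assumption: one possible way 17 positions could multiply out to 196,608 variants.
options_per_position = [2] * 16 + [3]

theoretical_size = math.prod(options_per_position)
tested = 152
fraction_percent = 100.0 * tested / theoretical_size

print(theoretical_size)            # size of the combinatorial space
print(round(fraction_percent, 2))  # percentage of the space actually tested
```

The same calculation is a quick sanity check on any planned library: multiply the allowed residues at each position, then compare the product with realistic screening capacity.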

Troubleshooting Guide: Common Experimental Challenges

Problem: Poor functional hit rate in synthesized library.

- Potential Cause: Library diversity is too broad and includes too many destabilizing mutations.

- Solution: Implement structural filters. Use tools like SOCoM to incorporate structure-based energy calculations, or apply computational stability predictions to filter out variants that are unlikely to fold stably. Focus randomization on regions known from crystal structures, conserved motifs, or homologs. [49] [53]

Problem: Inability to effectively screen large libraries due to low-throughput assays.

- Potential Cause: Library size exceeds screening capacity.

- Solution: Adopt a machine learning-guided iterative approach. Start with a smaller, rationally designed library (e.g., using OCoM or MODIFY) to generate initial sequence-function data. Use this data to train a model that predicts higher-fitness regions of sequence space, then focus subsequent screening on these enriched, smaller subsets. [51] [52]
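
One round of the iterative approach described above can be sketched with a deliberately simple surrogate model. This is an assumption-laden illustration, not the regression models used in the cited studies: it estimates each mutation's effect from single-mutant measurements, predicts combinations additively, and proposes the top-ranked combinations for the next screening round. The mutation names and fitness values are hypothetical.

```python
from itertools import combinations
from typing import Dict, List, Tuple


def fit_additive(wt_fitness: float, singles: Dict[str, float]) -> Dict[str, float]:
    """Estimate each mutation's effect as its single-mutant fitness minus wild type."""
    return {mut: fit - wt_fitness for mut, fit in singles.items()}


def position(mut: str) -> int:
    """Extract the residue number from a label such as 'A12V'."""
    return int(mut[1:-1])


def propose_next_round(wt_fitness: float, singles: Dict[str, float],
                       order: int, top_k: int) -> List[Tuple[Tuple[str, ...], float]]:
    """Rank all `order`-fold combinations by predicted fitness and keep the best."""
    effects = fit_additive(wt_fitness, singles)
    scored = []
    for combo in combinations(sorted(effects), order):
        # Skip combinations that place two mutations at the same position.
        if len({position(m) for m in combo}) < order:
            continue
        scored.append((combo, wt_fitness + sum(effects[m] for m in combo)))
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:top_k]


# Hypothetical first-round data (relative fitness, wild type = 1.0).
single_mutants = {"A12V": 1.4, "G45S": 0.8, "L78F": 1.3, "T90I": 1.1}
for combo, predicted in propose_next_round(1.0, single_mutants, order=2, top_k=2):
    print(combo, round(predicted, 2))
```

After the proposed variants are measured, the new data would be added to the training set and the model refit, which is the loop that lets a small assay budget cover a large sequence space.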

Problem: ML model predictions do not correlate well with experimental results.

- Potential Cause 1: Training data is insufficient or lacks higher-order mutants.

- Solution: Ensure your initial training library includes combinatorial mutations, not just single mutants. Studies show ML models can predict higher-order mutant fitness from lower-order mutant data. [52]

- Potential Cause 2: Model does not account for epistatic effects.

- Solution: Utilize models that incorporate two-body interactions or more advanced ensemble methods that capture non-additive effects between mutations. [50] [51]
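
A quick diagnostic for whether epistasis is likely to undermine an additive model is to compare measured double mutants against the additive expectation. The sketch below uses the standard pairwise definition on an additive scale (for example, log fitness or a free-energy change); the function names, tolerance, and example values are assumptions.

```python
def pairwise_epistasis(f_wt: float, f_a: float, f_b: float, f_ab: float) -> float:
    """Deviation of the double mutant from the sum of the two single-mutant effects."""
    return f_ab - f_a - f_b + f_wt


def classify_epistasis(eps: float, tol: float = 0.05) -> str:
    """Label the interaction; `tol` is a hypothetical noise threshold."""
    if eps > tol:
        return "positive"
    if eps < -tol:
        return "negative"
    return "approximately additive"


# Hypothetical measurements on an additive scale.
examples = {
    "additive pair": (1.0, 1.4, 1.3, 1.7),
    "synergistic pair": (1.0, 1.4, 1.3, 2.2),
    "antagonistic pair": (1.0, 1.4, 1.3, 1.2),
}
for label, values in examples.items():
    eps = pairwise_epistasis(*values)
    print(label, round(eps, 2), classify_epistasis(eps))
```

If many measured pairs fall outside the tolerance, that is a signal to add two-body terms or switch to a model class that can represent non-additive effects.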

Problem: Need for high-quality, sequence-defined variant libraries without cumbersome cloning.

- Potential Cause: Traditional site-saturation mutagenesis with degenerate primers can be inefficient.

- Solution: Implement a cell-free protein synthesis pipeline. Use PCR-based mutagenesis followed by cell-free DNA assembly and direct expression via linear DNA templates. This avoids transformation and cloning bottlenecks, enabling rapid generation of thousands of sequence-defined mutants in parallel. [54]

Table 1: Performance Comparison of Combinatorial Library Design Algorithms

| Algorithm | Core Approach | Optimization Method | Key Output | Reported Efficiency |
|---|---|---|---|---|
| OCoM [50] | Sequence potentials (one- & two-body) | Dynamic programming, Integer programming | Library variants balancing quality & novelty | Designed 18-mutation library (10⁷ variants of 443-residue P450) in 1 hour |
| SOCoM [53] | Structure-based energy scoring + evolutionary acceptability | Not specified | Libraries optimized along structure-sequence trade-off continuum | Incorporates known beneficial mutations while providing novel combinations |
| MODIFY [51] | Ensemble ML (protein language + sequence density models) | Pareto optimization | Library with co-optimized fitness and diversity | Outperformed baselines in zero-shot fitness prediction on 34/87 ProteinGym datasets |
| ML-guided (Pectin Lyase Study) [52] | Regression models trained on low-order mutants | Iterative DBTL | Enriched libraries of higher-order mutants | Enriched stable mutants by testing 0.08% of sequence space (152 of 196,608 variants) |

Table 2: Experimental Outcomes from ML-Guided Combinatorial Mutagenesis

| Study / System | Library & Screening Scale | Key Experimental Results | Structural & Functional Insights |
|---|---|---|---|
| Pectin Lyase Thermostability [52] | 17 residues targeted; a model trained on 152 low-order mutants was used to predict the 196,608-variant space. | Best mutant P36: 67x longer half-life at 75°C; 2.1x increased activity. | Molecular dynamics revealed enhanced rigidity and stronger interaction networks. |
| New-to-Nature Cytochrome c [51] | MODIFY-designed library for C–B and C–Si bond formation. | Identified generalist biocatalysts 6 mutations away from previous designs with superior/comparable activity. | Altered loop dynamics contributed to new catalytic activity. |
| Amide Synthetase Engineering [54] | 1,217 enzyme variants tested in 10,953 reactions for ML training. | ML-predicted variants showed 1.6x to 42x improved activity for 9 pharmaceuticals. | Cell-free platform enabled parallel mapping of fitness landscapes for multiple reactions. |

Experimental Protocols

Protocol 1: OCoM-Based Library Design for Sequence-Quality-Novelty Balance

This protocol is adapted from the OCoM (Optimization of Combinatorial Mutagenesis) methodology for designing libraries that balance variant quality and novelty. [50]

Key Reagents & Inputs:

- Target Protein Sequence: The wild-type or parent sequence for the design.

- Mutation Positions: A set of residue positions targeted for randomization.

- Sequence Potentials Data: One-body and two-body statistical potentials derived from evolutionary data.

- Construction Constraints: Specifications for library synthesis (e.g., degenerate codon options, library size limits).
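
To make the role of these inputs concrete, the sketch below scores a small library by averaging one-body and two-body potentials over every variant it encodes. It is a brute-force illustration under stated assumptions, not the OCoM algorithm: the data structures, the toy potentials, and the convention that higher values are better are all hypothetical, and enumeration is only feasible for tiny libraries.

```python
from itertools import combinations, product
from typing import Dict, List, Tuple


def library_quality(choices: Dict[int, List[str]],
                    one_body: Dict[Tuple[int, str], float],
                    two_body: Dict[Tuple[int, str, int, str], float]) -> Tuple[float, int]:
    """Return (average potential per variant, number of variants) for a library.

    choices maps each mutation position to the residues allowed there.
    Missing potential entries are treated as 0.0 (an assumption).
    """
    positions = sorted(choices)
    total, count = 0.0, 0
    for residues in product(*(choices[p] for p in positions)):
        variant = dict(zip(positions, residues))
        score = sum(one_body.get((p, variant[p]), 0.0) for p in positions)
        score += sum(two_body.get((p, variant[p], q, variant[q]), 0.0)
                     for p, q in combinations(positions, 2))
        total += score
        count += 1
    return total / count, count


# Hypothetical two-position library with toy potentials.
toy_choices = {10: ["A", "V"], 25: ["L", "F"]}
toy_one_body = {(10, "A"): 1.0, (10, "V"): 0.5, (25, "L"): 0.8, (25, "F"): 0.2}
toy_two_body = {(10, "V", 25, "F"): 1.0}

average, n_variants = library_quality(toy_choices, toy_one_body, toy_two_body)
print(n_variants, round(average, 3))
```

In practice the construction constraints determine which residue sets are allowed at each position, and an optimizer searches over those sets rather than enumerating every variant.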

Methodology:

- Define Optimization Objectives: Formally define the objective function to maximize the average one-body and two-body sequence potentials over all library variants (quality) while incorporating a penalty for simply recapitulating known natural sequences (novelty). [50]

- Algorithm Selection: