Site-Saturation Mutagenesis: A Strategic Guide to Building Focused Libraries for Protein Engineering

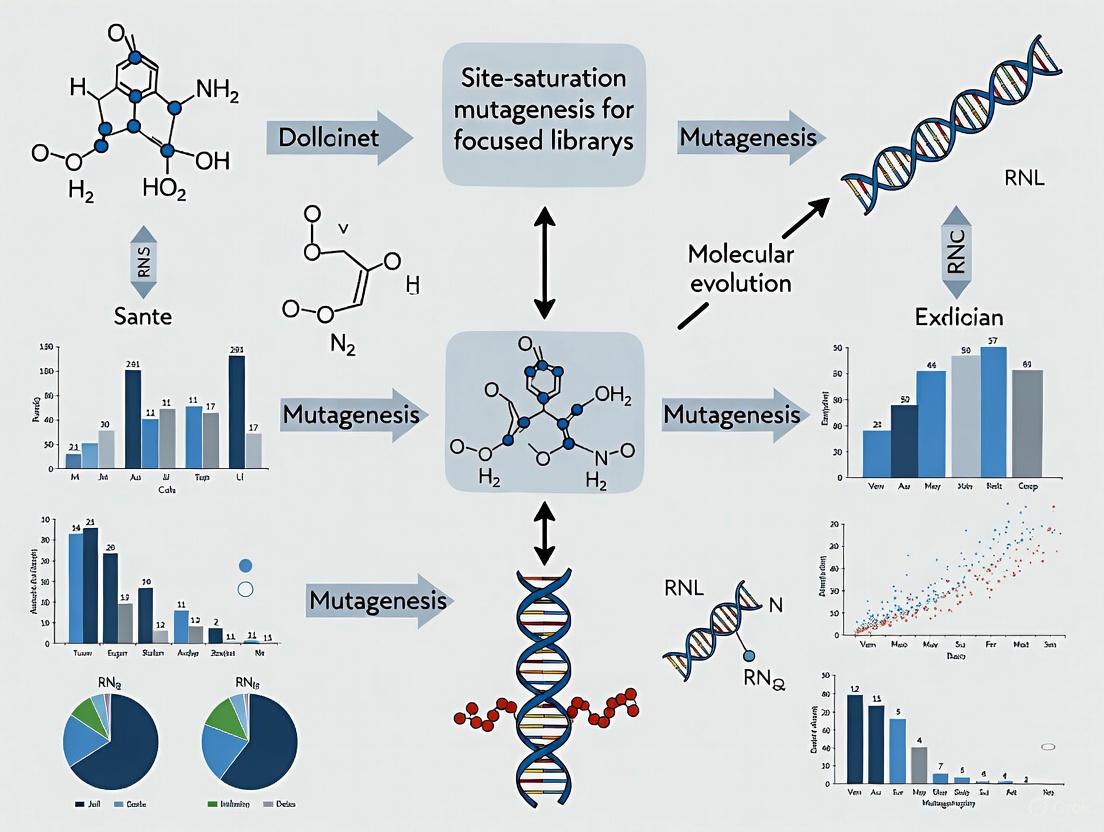


Abstract

This article provides a comprehensive guide to site-saturation mutagenesis (SSM) for constructing focused mutant libraries, a cornerstone technique in modern protein engineering and directed evolution. Tailored for researchers and drug development professionals, it covers foundational principles—contrasting SSM with random mutagenesis—and delves into advanced methodologies like CAST/ISM and FRISM for creating 'smarter', smaller libraries. The scope extends to practical troubleshooting of common experimental pitfalls, the application of computational tools for in silico library design and validation, and a comparative analysis of SSM's performance against other techniques. By synthesizing current methods, optimization strategies, and validation frameworks, this resource aims to equip scientists with the knowledge to efficiently engineer proteins with enhanced properties such as stability, activity, and selectivity.

What is Site-Saturation Mutagenesis? Building a Foundational Understanding for Focused Library Design

Site-saturation mutagenesis (SSM) is a protein engineering technique that systematically replaces a specific amino acid residue within a protein with each of the other 19 canonical amino acids. This approach enables researchers to comprehensively explore the functional and structural contributions of individual residues without a priori assumptions about which substitutions might be beneficial. Unlike random mutagenesis methods that scatter mutations throughout a gene, SSM creates focused libraries that concentrate diversity at predetermined positions, enabling more efficient investigation of structure-function relationships. SSM has become a standard tool for probing protein stability, enzyme activity, ligand binding, and allosteric regulation, providing residue-by-residue functional maps that illuminate the mechanistic basis of protein function [1].

The fundamental premise of SSM is that by systematically testing all possible amino acid substitutions at a given site, researchers can identify "hot-spot" residues critical for function and distinguish them from positions tolerant to variation. This methodology has been revolutionized by advances in high-throughput DNA synthesis, next-generation sequencing, and robotic screening platforms, which now enable the simultaneous analysis of hundreds of thousands of variants in a single experiment. Recent large-scale applications demonstrate the remarkable scalability of SSM approaches, with one study quantifying the effects of over 500,000 missense variants on the abundance of more than 500 human protein domains, revealing that approximately 60% of pathogenic missense variants reduce protein stability [2].

Key Applications and Impact

SSM enables diverse applications across basic research and biotechnology development, each leveraging the comprehensive nature of saturated amino acid substitution.

Table 1: Key Applications of Site-Saturation Mutagenesis

| Application Area | Specific Use Cases | Key Outcomes |
|---|---|---|
| Protein Stability Analysis | Mapping stability determinants; Identifying stabilizing mutations | Quantification of ΔΔG changes; Identification of residues where mutations to proline are most detrimental [2] |
| Functional Site Mapping | Active site characterization; Binding interface analysis | Discrimination between active site residues (where mutations have large effects) and buried residues (primarily affecting folding) [1] |
| Protein Engineering | Enzyme optimization; Therapeutic antibody maturation | Enhanced stability, activity, and altered specificity relative to wild-type [1] |
| Disease Mechanism Elucidation | Functional characterization of genetic variants; Pathogenicity assessment | Revealed 60% of pathogenic missense variants reduce protein abundance [2] |
| Computational Method Validation | Testing protein stability predictions; Benchmarking variant effect predictors | Provides experimental data for training and validating algorithms like ThermoMPNN (ρ = 0.50-0.57) [2] |

The data generated from SSM experiments provides unprecedented insights into protein fitness landscapes. By comparing experimentally quantified stability to evolutionary fitness, researchers have demonstrated that protein stability accounts for a median of 30% of the variance in protein fitness across domains, with variation across protein families: 40% for all-beta domains compared to 25% for all-alpha domains [2]. This quantitative understanding of stability-activity relationships accelerates the rational design of proteins with customized properties.

Experimental Design and Workflow

The following diagram illustrates the core workflow for a typical SSM experiment, from library design through functional analysis:

Library Design Strategies

Central to SSM is the design of oligonucleotides that introduce diversity at the target codon. Traditional methods used NNK or NNN degeneracy (where N = A/T/G/C, K = G/T). Both encode all 20 amino acids but with different redundancy: NNK spans 32 codons including one stop codon (TAG), whereas NNN spans all 64 codons including three stop codons. Modern approaches instead employ customized degenerate codons that minimize library size while maintaining coverage of the desired amino acids. Computational tools like DYNAMCC_D help design minimal degenerate codon sets based on user-defined parameters including target organism codon usage, desired amino acid subsets, and Hamming distance from the wild-type codon [3].
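To make the redundancy of a degenerate codon concrete, the following minimal sketch expands an IUPAC degenerate codon and counts the codons, distinct amino acids, and stop codons it encodes. It assumes the standard genetic code; the helper names `expand` and `summarize` are illustrative and are not part of DYNAMCC or any other tool cited here.

```python
from itertools import product

# Standard genetic code (NCBI translation table 1), bases ordered T, C, A, G.
BASES = "TCAG"
AMINO = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {a + b + c: aa
               for (a, b, c), aa in zip(product(BASES, repeat=3), AMINO)}

# IUPAC degenerate nucleotide symbols (subset sufficient for common SSM schemes).
IUPAC = {"A": "A", "C": "C", "G": "G", "T": "T",
         "N": "ACGT", "K": "GT", "S": "CG", "M": "AC", "D": "AGT", "B": "CGT"}

def expand(degenerate_codon):
    """List every concrete codon covered by a degenerate codon such as 'NNK'."""
    return ["".join(p) for p in product(*(IUPAC[s] for s in degenerate_codon))]

def summarize(degenerate_codon):
    """Return (codon count, distinct amino acids, stop codons)."""
    encoded = [CODON_TABLE[c] for c in expand(degenerate_codon)]
    amino_acids = set(encoded) - {"*"}
    return len(encoded), len(amino_acids), encoded.count("*")

for scheme in ("NNK", "NNN"):
    print(scheme, summarize(scheme))
```

The same function can be applied to any other scheme built from the symbols in `IUPAC` to compare library size against amino acid coverage before ordering primers.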

The Hamming distance (the number of nucleotide changes between the wild-type and mutant codon) significantly impacts library diversity and functional outcomes. Restricting libraries to single-nucleotide polymorphisms (SNPs), which occur most frequently in nature, accesses only 9 possible codons from any given wild-type codon. In contrast, allowing two or three base changes accesses up to 54 codons (27 at distance 2 and 27 at distance 3) and enables exploration of amino acids with more diverse chemical properties, which is often necessary for dramatic functional enhancements in protein engineering [3].
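The codon counts quoted above follow directly from enumeration. The sketch below groups all alternative codons by Hamming distance from a wild-type codon; the function name and the example codon GCT are arbitrary choices for illustration and are not taken from DYNAMCC_D.

```python
from itertools import product

def codons_by_distance(wild_type):
    """Group the 63 alternative codons by Hamming distance from wild_type."""
    groups = {1: [], 2: [], 3: []}
    for bases in product("ACGT", repeat=3):
        codon = "".join(bases)
        # Hamming distance = number of positions that differ from wild type.
        distance = sum(a != b for a, b in zip(codon, wild_type))
        if distance:  # skip the wild-type codon itself (distance 0)
            groups[distance].append(codon)
    return groups

groups = codons_by_distance("GCT")  # GCT (Ala) is an arbitrary example codon
counts = {distance: len(codons) for distance, codons in groups.items()}
print(counts)
print("codons reachable with two or three changes:", counts[2] + counts[3])
```

The counts are the same for every wild-type codon; what differs between codons is which amino acids the reachable codons encode.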

Primer Design and Library Construction

An efficient one-step method for site-directed and site-saturation mutagenesis improves upon commercial protocols like the QuikChange system by modifying primer design to minimize primer dimerization and to favor primer-template annealing over primer-primer annealing. In this approach, primers complement each other at the 5′-terminus rather than the 3′-terminus, which prevents self-extension and enables successful introduction of multiple mutations (up to 7 bases) in vectors ranging from 4-12 kb [4].

For saturation mutagenesis, primers are designed with degenerate codons at the target positions:

- Forward: 5′-CTGTGCCATCNNKTATGGTAACTTCTTTGACTACTGG-3′

- Reverse: 5′-GAAGTTACCATAMNNGATGGCACAGTAATAGACTGCAGAG-3′

Here N represents any nucleotide (A/T/G/C), K represents G/T, and M represents A/C, so that MNN on the reverse primer is the reverse complement of NNK on the forward primer [4]. This design strategy has been shown to produce libraries without sequence-specific selection bias, with each base occurring at roughly average frequency at the randomized positions.
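As a quick sanity check on a primer pair of this kind, the sketch below computes the reverse complement with degenerate symbols and measures how many 5′ bases of the two primers pair with each other. The helper names are hypothetical, and degenerate symbols are compared as literal characters (N with N, K with M), which is sufficient for checking that the randomized codons line up.

```python
# Complement map including the degenerate symbols used in the primers above.
COMPLEMENT = {"A": "T", "T": "A", "G": "C", "C": "G",
              "N": "N", "K": "M", "M": "K"}

def reverse_complement(seq):
    return "".join(COMPLEMENT[base] for base in reversed(seq))

def five_prime_overlap(forward, reverse):
    """Longest 5' stretch of `reverse` that pairs with the 5' end of `forward`."""
    rc_forward = reverse_complement(forward)
    for k in range(min(len(forward), len(reverse)), 0, -1):
        # The last k bases of rc_forward are the complement of forward's 5' end.
        if reverse[:k] == rc_forward[-k:]:
            return k
    return 0

forward = "CTGTGCCATCNNKTATGGTAACTTCTTTGACTACTGG"
reverse = "GAAGTTACCATAMNNGATGGCACAGTAATAGACTGCAGAG"

overlap = five_prime_overlap(forward, reverse)
print("5' overlap (nt):", overlap)
print("forward 3' overhang (nt):", len(forward) - overlap)
print("reverse 3' overhang (nt):", len(reverse) - overlap)
```

For this pair the complementary region sits at the 5′ ends and contains the randomized codon, while each primer keeps a non-overlapping 3′ tail that anneals only to the template.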

Essential Reagents and Research Tools

Successful implementation of SSM requires carefully selected molecular biology reagents and computational tools.

Table 2: Essential Research Reagents and Solutions for SSM

| Reagent/Tool Category | Specific Examples | Function in SSM Protocol |
|---|---|---|
| Polymerase Systems | Expand High Fidelity PCR system; Q5 high-fidelity DNA polymerase | Amplification of mutant libraries with high fidelity and efficiency [4] |
| Cloning & Assembly | NEBuilder HiFi DNA assembly master mix; T4 DNA ligase; BsmBI-v2; BsaI-HFv2 | Assembly of mutant libraries into expression vectors [5] |
| Competent Cells | Endura electrocompetent cells; XL1-Blue chemo-competent cells; TOP10 competent cells | Transformation of mutant libraries for propagation and analysis [5] [4] |
| Selection Markers | Blasticidin S HCl; Puromycin dihydrochloride | Selection of cells expressing mutant libraries [5] |
| Sequencing Platforms | Illumina MiSeq; Roche 454 pyrosequencing | High-throughput sequencing of variant libraries before and after selection [6] [2] |
| Computational Tools | DYNAMCC_D; SONAR suite; partis | Library design, sequence analysis, and germline gene assignment [6] [3] |

Detailed Protocol: Saturation Mutagenesis-Reinforced Functional (SMuRF) Assay

The SMuRF assay represents a recent advancement applying SSM to characterize genetic variants in disease-related genes. This protocol enables generation of functional scores for small-sized variants (SNVs, indels) through the following steps [5]:

Cell Line Platform Establishment (25-30 days)

CRISPR RNP nucleofection creates a clean background by knocking out the endogenous gene of interest (GOI):

- Design and synthesize sgRNA targeting early exons of the GOI to generate frameshift variants (e.g., spacer sequence: GTTCGAGGCATTTGACAACG for FKRP gene)

- Prepare ribonucleoprotein complexes by combining:

  - 18 μL supplemented SE Cell Line Nucleofector Solution

  - 6 μL 30 μM sgRNA

  - 1 μL 20 μM SpCas9 2NLS Nuclease

- Incubate at room temperature for 10 minutes

- Prepare cells for nucleofection according to manufacturer's instructions

- Isolate monoclonal cell lines and validate knockout efficiency through sequencing and functional assays [5]

Programmed Allelic Series with Common Procedures (PALS-C) Cloning

This method simplifies saturation mutagenesis library construction:

- Design oligonucleotide pools containing all desired mutations flanked by homology arms

- Perform PCR amplification using high-fidelity polymerase under the following conditions:

  - Initial denaturation: 94°C for 3 minutes

  - 16 cycles of: 94°C for 1 minute, 52°C for 1 minute, 68°C for 8-24 minutes (depending on template size)

  - Final extension: 68°C for 1 hour

- Treat PCR products with DpnI to digest methylated parental DNA template

- Purify PCR products using QIAquick PCR purification kit

- Transform into competent cells and inoculate on selective media [5] [4]

Functional Screening and Sequencing

- Delivery of variant plasmid pools into platform cell lines via nucleofection

- Fluorescence-activated cell sorting based on functional signaling (e.g., IIH6C4 antibody detection of α-dystroglycan glycosylation levels)

- Extract genomic DNA from sorted populations using PureLink genomic DNA mini kit

- Prepare sequencing libraries and perform high-throughput sequencing

- Analyze sequence data to determine variant enrichment in functional populations [5]

Data Analysis and Interpretation

Analysis of SSM data involves comparing variant frequencies before and after selection to calculate enrichment scores that reflect each mutation's functional impact. The relative enrichment or depletion of each mutant serves as a quantitative measure of its contribution to the screened property [1]. For protein stability studies, researchers typically observe that mutations in buried core regions are more detrimental than surface mutations, with mutations to proline generally being most destabilizing, particularly in beta strands and helices [2].
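One common way to express such enrichment is a log2 ratio of each variant's frequency after versus before selection, normalized to wild type. The sketch below illustrates that convention only; the function name, the pseudocount of 0.5, and the read counts are all hypothetical and do not reproduce the scoring used in [1] or [2].

```python
import math

def enrichment_scores(pre_counts, post_counts, wild_type="WT", pseudocount=0.5):
    """Wild-type-normalized log2 enrichment for each variant.

    score = log2(post_variant / post_WT) - log2(pre_variant / pre_WT)
    A pseudocount keeps the ratio finite for variants with zero reads.
    """
    def log_ratio(counts, variant):
        return math.log2((counts.get(variant, 0) + pseudocount)
                         / (counts.get(wild_type, 0) + pseudocount))

    return {variant: log_ratio(post_counts, variant) - log_ratio(pre_counts, variant)
            for variant in pre_counts}

# Hypothetical read counts before and after selection (illustration only).
pre = {"WT": 10000, "A45G": 950, "A45P": 1020, "A45V": 980}
post = {"WT": 12000, "A45G": 2300, "A45P": 60, "A45V": 1150}

scores = enrichment_scores(pre, post)
for variant, score in sorted(scores.items(), key=lambda item: item[1]):
    print(f"{variant}: {score:+.2f}")
```

Under this convention wild type scores zero, enriched variants score positive, and depleted variants score negative; real pipelines typically add replicate-based error estimates on top of this.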

Advanced analysis integrates stability measurements with evolutionary fitness predictions from protein language models like ESM1v. Sigmoidal curves model the relationship between protein abundance and evolutionary fitness, with residuals identifying mutations with larger effects on fitness than can be accounted for by stability changes alone—potentially indicating residues involved in specific molecular interactions rather than structural integrity [2].

Site-saturation mutagenesis represents a powerful methodological framework for comprehensively exploring amino acid substitution space at targeted protein positions. Through carefully designed library construction, high-throughput functional screening, and sophisticated sequence analysis, SSM provides unprecedented insights into protein structure-function relationships, enables engineering of improved biocatalysts and therapeutics, and facilitates characterization of disease-associated genetic variants. As DNA synthesis and sequencing technologies continue to advance, SSM approaches will likely expand to encompass larger protein segments and multiple simultaneous mutations, further illuminating the complex relationships between protein sequence, structure, stability, and function.

In the field of protein engineering and directed evolution, the choice of mutagenesis strategy is pivotal to the success of research and development projects. Site-saturation mutagenesis (SSM) and random mutagenesis represent two fundamentally distinct approaches, each with characteristic advantages and limitations. SSM is a semi-rational technique that enables researchers to substitute specific amino acid residues with all possible amino acids, allowing comprehensive exploration of function and stability at predetermined positions [1] [7]. In contrast, traditional random mutagenesis methods introduce mutations throughout the entire genome or gene segment without precise positional control [8]. For researchers and drug development professionals requiring focused investigation of structural or functional regions—such as enzyme active sites, ligand-binding pockets, or protein-protein interaction interfaces—SSM provides unparalleled precision that random approaches cannot match. This application note delineates the strategic advantages of SSM, presents optimized protocols for library construction, and provides quantitative frameworks for experimental design and evaluation.

Strategic Advantages of Site-Saturation Mutagenesis

Precision Targeting for Focused Library Design

SSM enables researchers to concentrate diversity on specific residues identified through structural knowledge or previous functional studies. This focused approach dramatically reduces library size and screening effort compared to random methods while maximizing the probability of identifying beneficial mutations [1]. By targeting individual codons for randomization, SSM allows comprehensive functional characterization of every possible amino acid substitution at protein hotspots, providing deep insight into residue-specific contributions to stability, activity, and specificity [1]. This precision is particularly valuable for drug development applications where understanding structure-activity relationships is critical.

Controlled Diversity Through Rational Codon Design

A key advantage of SSM over random mutagenesis is the ability to control chemical diversity through intelligent codon design. Traditional NNK degeneracy (N = A/C/G/T; K = G/T) encodes all 20 amino acids with only 32 codons, reducing redundancy and stop codons compared to NNN degeneracy (64 codons) [9]. Advanced algorithms like DYNAMCC further optimize this process by generating minimal degenerate codon sets that eliminate unwanted elements (stop codons, redundancy) while considering organism-specific codon usage patterns [3]. For investigations requiring specific mutational biases, the DYNAMCC_D tool allows library design based on Hamming distance from the wild-type codon, enabling either exploration of conservative single-nucleotide polymorphisms (SNPs) or more radical multi-base changes that access chemically diverse amino acids [3].

Table 1: Comparison of Site-Saturation and Random Mutagenesis Approaches

| Parameter | Site-Saturation Mutagenesis | Random Mutagenesis |
|---|---|---|
| Targeting Precision | Specific, user-defined residues | Entire gene or genome |
| Library Size | Controlled (grows exponentially with the number of randomized sites) | Large, unpredictable |
| Amino Acid Coverage | Comprehensive at chosen positions | Sparse across sequence |
| Screening Burden | Manageable with focused diversity | High, requiring extensive resources |
| Structural Insight | Direct residue-function relationships | Indirect, correlation-based |
| Optimal Application | Active site engineering, stability determinants | Discovery without structural knowledge |

SSM Library Design and Optimization

Codon Design Strategies for Focused Diversity

The design of degenerate codons fundamentally determines library quality and screening efficiency. While NNK degeneracy has been widely adopted, recent computational tools enable more sophisticated design strategies:

Codon Compression Algorithms: The DYNAMCC suite selects minimal degenerate codon sets according to user-defined parameters including target organism, saturation type, and codon usage levels [3]. This approach significantly reduces screening effort. For example, for three-site saturation the number of transformants that must be screened for 95% library coverage falls from 98,164 (NNK, 32 codons per site) to 23,966 (compressed set, 20 codons per site) [3].

Distance-Based Design: DYNAMCC_D incorporates Hamming distance (number of base changes from wild-type) into library design [3]. Single-base change libraries (distance=1) access 9 codons and are optimal for recapitulating natural evolutionary paths or studying conservative substitutions. Multi-base change libraries (distance≥2) access 54 codons and enable exploration of more dramatic chemical transformations, often necessary for achieving novel enzyme functions [3].
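The three-site screening figures quoted above (98,164 for NNK and 23,966 for compressed codons) are consistent with the widely used oversampling estimate T = −V ln(1 − F), where V is the number of codon combinations and F the desired coverage. The sketch below assumes that formula and equal representation of every combination; the function name is illustrative.

```python
import math

def transformants_for_coverage(codons_per_site, sites, coverage=0.95):
    """Estimate clones to screen so that a fraction `coverage` of all codon
    combinations is expected to be sampled, assuming each combination is
    equally likely: T = -V * ln(1 - coverage)."""
    library_size = codons_per_site ** sites
    return round(-library_size * math.log(1 - coverage))

for label, codons in (("NNK", 32), ("compressed", 20)):
    print(label, transformants_for_coverage(codons, sites=3))
```

At 95% coverage the factor −ln(0.05) is close to 3, which is the origin of the common rule of thumb of roughly threefold oversampling. Real libraries carry primer and amplification bias, so this estimate is best treated as a lower bound.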

Table 2: Library Coverage and Screening Requirements for Different SSM Strategies

| Saturation Strategy | Codons per Site | Transformants for 95% Coverage (3 Sites) | Amino Acid Diversity | Best Application |
|---|---|---|---|---|
| NNK Degeneracy | 32 | 98,164 | All 20 amino acids, redundant | General purpose |
| NNN Degeneracy | 64 | 785,313 | All 20 amino acids, highly redundant | Non-selective screening |
| Codon Compression | 20 | 23,966 | All 20 amino acids, non-redundant | High-efficiency screening |
| Single-Base Changes | 9 | 2,184 | 5-8 amino acids, conservative | Natural mutation studies |
| Multi-Base Changes | 54 | 471,720 | Broad chemical diversity | Novel function engineering |

Quantitative Library Evaluation

Robust assessment of SSM library quality is essential before committing to resource-intensive screening. The Q-value metric enables quantitative evaluation directly from sequencing electropherograms of pooled plasmids [9]. This method analyzes peak amplitudes at randomized positions to calculate library degeneracy, allowing early rejection of substandard libraries. Implementation of this quality control measure has demonstrated consistent performance across systems, with optimized protocols yielding 27.4 ± 3.0 of 32 possible codons from a pool of 95 transformants [9].
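To put the reported 27.4 of 32 codons in context, it helps to know how many distinct codons an ideal, perfectly unbiased NNK library would be expected to show in a sample of 95 transformants. The sketch below computes that expectation; it is a simple occupancy calculation under an equal-frequency assumption, not the Q-value method of [9], and the function name is hypothetical.

```python
def expected_distinct_codons(n_codons, n_clones):
    """Expected number of distinct codons seen when n_clones are drawn
    independently from n_codons equally likely codons."""
    probability_missed = (1 - 1 / n_codons) ** n_clones
    return n_codons * (1 - probability_missed)

expected = expected_distinct_codons(n_codons=32, n_clones=95)
print(f"expected distinct codons in an ideal library: {expected:.1f} of 32")
```

Under these assumptions even a perfect library is expected to show only about 30 of the 32 codons in 95 clones, so an observed value in the high twenties indicates modest rather than severe bias.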

Experimental Protocols for SSM Library Construction

Two-Step PCR Megaprimer Method for Challenging Templates

For difficult-to-randomize genes—such as those with high AT-content, strong secondary structure, or cloned in large plasmids—a robust two-step PCR protocol has demonstrated superior performance compared to traditional one-step methods [10]:

Step 1: Mutagenic Fragment Amplification

- Prepare PCR mixture containing: 30 μL water, 5 μL KOD hot start polymerase buffer (10×), 3 μL dNTPs (2 mM each), 3 μL MgSO₄ (25 mM), 2.5 μL forward mutagenic primer (10 μM), 2.5 μL reverse non-mutagenic primer (10 μM), 1 μL template plasmid (50 ng), and 1 μL KOD hot start DNA polymerase [10].

- Amplify with thermocycler parameters: 95°C for 2 min; 28 cycles of 95°C for 20 s, 55-68°C for 10 s, 70°C for 30 s/kb; final extension at 70°C for 5 min [10].

- Purify the resulting mutagenic fragment using standard PCR purification kits.

Step 2: Whole-Plasmid Amplification with Megaprimer

- Use the purified fragment from step 1 as megaprimer in a second PCR with similar reaction composition but without additional primers.

- Thermocycler parameters: 95°C for 2 min; 24 cycles of 95°C for 20 s, 55-68°C for 30 s, 70°C for 2-4 min (depending on plasmid size); final extension at 70°C for 5 min [10].

- Digest PCR product with DpnI (6 hours at 37°C) to eliminate parental template [10].

- Transform into an appropriate E. coli strain (e.g., BL21(DE3)) and harvest the library.

This method has demonstrated significant improvement over partially overlapping primer approaches, particularly for challenging templates like cytochrome P450-BM3 (3.3 kb with AT-rich regions), with deep-sequencing verification showing superior library completeness [10].

Overlap Extension PCR for Promoter and Multi-Region Engineering

For applications requiring simultaneous mutagenesis of non-adjacent regions (e.g., promoter -35/-10 boxes and ribosomal binding sites), overlap extension PCR provides a flexible solution:

- Design degenerate oligonucleotides targeting each region of interest with 15-20 bp overlapping homologous sequences.

- Perform initial PCR to generate individual mutagenic fragments.

- Combine fragments in overlap extension PCR without external primers for 10-15 cycles to allow hybridization and extension.

- Add external primers and amplify full-length product for 20-25 cycles.

- Clone into appropriate vector and transform [11].

This approach efficiently creates combinatorial libraries of 10⁴–10⁷ variants, enabling simultaneous optimization of multiple regulatory elements [11].

Figure: SSM Experimental Workflow

High-Throughput Screening and Analysis

Fluorescence-Activated Cell Sorting for Rapid Screening

When SSM libraries are coupled with a suitable fluorescent reporter in a whole-cell system, fluorescence-activated cell sorting (FACS) enables rapid screening of 10⁵–10⁷ variants within days [11]. Through iterative positive and negative sorting based on reporter response, libraries rapidly converge to optimal variants with desired phenotypes. This approach is particularly powerful for engineering biosensors, optimizing metabolic pathways, and altering substrate specificity [11].

Deep Sequencing for Comprehensive Functional Analysis

Next-generation sequencing of SSM libraries before and after selection enables quantitative measurement of each mutant's enrichment, providing residue-specific contributions to protein fitness [1]. This "mutational scanning" approach identifies hot-spot residues, stability determinants, and specificity constraints, generating datasets that can be used to test computational predictions and guide further protein design [1].

Research Reagent Solutions

Table 3: Essential Research Reagents for SSM Library Construction and Screening

| Reagent/Category | Specific Examples | Function in SSM Workflow |
|---|---|---|
| Polymerase Systems | KOD Hot Start, Phusion Hot Start II | High-fidelity amplification in PCR steps |
| Degenerate Primers | NNK, NNN, DYNAMCC-optimized codons | Introducing controlled diversity at target sites |
| Template Elimination | DpnI restriction enzyme | Selective digestion of methylated parental plasmid |
| Cloning Systems | pRSFDuet-1, other expression vectors | Variant expression and maintenance |
| Host Strains | E. coli BL21(DE3), ElectroTen-Blue | Library transformation and propagation |
| Screening Tools | FACS instrumentation, deep sequencing platforms | Variant identification and characterization |
| Analysis Software | mutagenesis_visualization Python package | Data processing, visualization, and statistical analysis |

Application Scenarios in Drug Development and Protein Engineering

Enzyme Engineering for Therapeutic Applications

SSM has proven particularly valuable for optimizing therapeutic enzymes, where precise control over activity, specificity, and stability is paramount. By focusing diversity on active site residues and stability-determining regions, SSM generates focused libraries that efficiently explore sequence-function relationships while minimizing screening burden [1]. This approach has successfully engineered enzymes with altered stereoselectivity, enhanced thermostability, and novel catalytic activities [9].

Antibiotic Resistance Mechanism Investigation

In antimicrobial resistance research, SSM has elucidated how specific mutations in resistance enzymes confer protection against next-generation therapeutics. Recent investigations of KPC β-lactamase variants revealed how tandem repeat-mediated mutagenesis generates structural changes that confer resistance to ceftazidime-avibactam, informing the design of subsequent inhibitor generations [12]. Such studies demonstrate how SSM can illuminate evolutionary pathways in clinical pathogens.

Figure: SSM in Protein Engineering Cycle

Site-saturation mutagenesis represents a powerful paradigm for targeted protein engineering that balances rational design with comprehensive diversity exploration. Through precise codon-level control and focused library design, SSM enables researchers to answer specific questions about residue function while managing screening resources efficiently. The continued development of optimized protocols for challenging templates, sophisticated codon design algorithms, and high-throughput screening methodologies ensures that SSM will remain a cornerstone technique for protein engineers and drug development professionals seeking to establish clear relationships between protein sequence and function.

Site-saturation mutagenesis (SSM) has established itself as a powerful semi-rational approach in the molecular toolbox of protein engineering. This technique transforms protein modification from educated guesswork into a comprehensive investigation by systematically substituting every possible amino acid at specific positions within a defined region of a DNA sequence [13]. The method's precision enables researchers to address fundamental questions about protein function, structure, and stability that are often intractable through random mutagenesis alone. By providing a controlled means to explore sequence-function relationships, SSM plays two primary roles: identifying individual amino acid residues that are critical for protein function or stability, and creating focused mutant libraries for directed evolution campaigns aimed at improving or altering enzyme properties [13] [14]. This application note details the core advantages of SSM through quantitative data comparisons, standardized protocols, and practical resource guidance to support researchers in implementing these methods effectively.

Core Advantages and Quantitative Comparisons

Strategic Advantages Over Alternative Methods

Site-saturation mutagenesis offers distinct strategic benefits compared to random mutagenesis approaches, particularly when research objectives require precision and systematic analysis [13].

- Precision and Systematic Analysis: Unlike random mutagenesis which introduces mutations throughout the entire sequence, SSM allows researchers to focus on specific regions of interest, avoiding unnecessary mutations in non-essential areas that can complicate results interpretation [13].

- Identification of Key Residues: The systematic nature of SSM makes it particularly effective in pinpointing critical amino acid residues or nucleotides essential for protein activity, stability, or binding through comprehensive analysis of mutation effects at each targeted position [13].

- Focused Mutagenesis for Protein Engineering: When engineering proteins for specific improvements such as enhanced enzymatic activity, altered substrate specificity, or improved thermal stability, SSM provides a targeted approach that facilitates screening and selection of variants with desired traits [13].

Quantitative Data from Large-Scale Applications

Recent large-scale studies demonstrate the power of SSM in generating comprehensive functional datasets. A landmark study published in Nature (2025) performed site-saturation mutagenesis on more than 500 human protein domains, quantifying the effects of 563,534 missense variants on cellular abundance [2].

Table 1: Large-Scale SSM Dataset Statistics from Human Domainome Study

| Parameter | Scale | Significance |
|---|---|---|
| Protein Domains Analyzed | 522 domains (503 human) | Covers 2.0% of all unique domain families in human proteome |
| Missense Variants Quantified | 563,534 | Nearly 5-fold increase in stability measurements for human protein variants |
| Measurement Reproducibility | Median Pearson's r = 0.85 | High reproducibility between biological replicates |
| Pathogenic Variant Analysis | 60% reduce stability | Establishes stability loss as major disease mechanism |
| Domain Family Coverage | 127 different families | Enables comparative studies across diverse structural classes |

The data revealed that 60% of pathogenic missense variants reduce protein stability, establishing this as a primary disease mechanism [2]. Furthermore, the study demonstrated that mutational effects on stability are largely conserved in homologous domains, enabling accurate stability prediction across entire protein families.

Table 2: Performance Comparison of Mutagenesis Approaches

| Characteristic | Site Saturation Mutagenesis | Random Mutagenesis |
|---|---|---|
| Mutation Control | Targeted to specific positions/regions | Genome-wide or gene-wide random distribution |
| Library Quality | High - covers all amino acid substitutions at chosen sites | Variable - may miss important single mutations |
| Screening Effort | Reduced due to focused library size | Large - requires extensive screening |
| Functional Insights | Direct residue-level functional mapping | Global identification without positional precision |
| Best Applications | Critical residue identification, protein engineering | Broad phenotypic selection, unknown targets |

Experimental Protocols and Methodologies

Core SSM Workflow and Visualization

The following diagram illustrates the generalized experimental workflow for site-saturation mutagenesis, from target selection through to functional analysis:

Key Methodological Approaches

Oligonucleotide-Based Saturation Mutagenesis

This standard approach utilizes mutagenic primers containing degenerate codons to introduce diversity at specific positions [13] [14].

Protocol Details:

- Primer Design: Design oligonucleotides containing degenerate codons (e.g., NNK or NNS) at the target positions, where N = A/T/C/G, K = G/T, S = G/C. These provide all 20 amino acids with only one stop codon [14] [15].

- PCR Amplification: Perform polymerase chain reaction using the mutagenic primers and template DNA. The mutagenic primer incorporates the degenerate codon while flanking sequences ensure specific binding.

- Template Removal: Digest the methylated template DNA using DpnI restriction enzyme, which specifically cleaves adenine-methylated GATC sites (Gm6ATC) and therefore leaves the unmethylated PCR product intact.

- Transformation: Transform the amplified, nicked vector into competent E. coli cells for repair and propagation.

Critical Considerations: The choice of degenerate codon significantly impacts library quality. While NNK/NNS (32 codons) encode all 20 amino acids with minimal stop codons, more restricted schemes like NDT (12 codons) can reduce library size while maintaining chemical diversity [14] [3].

Two-Step PCR for Difficult-to-Randomize Genes

For genes that are challenging to randomize using standard methods, a two-step PCR approach can significantly improve efficiency [16].

Protocol Details:

- First PCR: Amplify a short DNA fragment using a mutagenic primer and a non-mutagenic (silent) primer. This fragment is purified and used as a megaprimer.

- Second PCR: Use the megaprimer to amplify the entire plasmid in a second PCR reaction.

- Advantages: This method demonstrated distinct superiority over one-step approaches for "difficult-to-randomize" genes like cytochrome P450-BM3, providing better library coverage and quality at both DNA and amino acid levels [16].

Advanced Applications: SMuRF Protocol for Variant Functionalization

The Saturation Mutagenesis-Reinforced Functional (SMuRF) assay protocol enables high-throughput functional interpretation of disease-related genetic variants [17].

Protocol Highlights:

- PALS-C Cloning: Programmed Allelic Series with Common Procedures cloning introduces small-sized variants into a gene of interest.

- Cell Line Preparation: Nucleofection establishes cell line platforms for functional testing.

- FACS Analysis: Fluorescence-activated cell sorting enriches variants based on functional signaling.

- NGS and Scoring: Next-generation sequencing coupled with functional score generation enables variant effect quantification.

This approach allows functional annotation of thousands of variants in disease-related genes, addressing a critical challenge in clinical genetics [17].

Research Reagent Solutions

Successful implementation of site-saturation mutagenesis requires specific reagents and tools optimized for creating high-quality mutant libraries.

Table 3: Essential Research Reagents for Site-Saturation Mutagenesis

| Reagent/Tool | Function/Purpose | Examples/Alternatives |
|---|---|---|
| Degenerate Primers | Introduce random mutations at specific codons | NNK (32 codons), NDT (12 codons), DBK (18 codons) [14] [3] |
| High-Fidelity DNA Polymerase | Accurate amplification with low error rates | Phusion, Q5, Pfu polymerases |
| DpnI Restriction Enzyme | Selective digestion of methylated template DNA | Thermo Scientific FastDigest DpnI |
| Competent E. coli Cells | Transformation and propagation of mutant libraries | DH5α, XL1-Blue, BL21(DE3) strains |
| Codon Compression Tools | Optimize degenerate codon design for reduced redundancy | DYNAMCC web tool [3] |
| Vector Systems | Clone and express variant libraries | pET, pBAD, yeast display vectors |

Degenerate Codon Strategy Selection

The choice of degenerate codon strategy represents a critical experimental design consideration that significantly impacts library size and quality:

- NNK/NNS: Provides all 20 amino acids using 32 codons with single stop codon; balanced but redundant [14]

- NDT: Encodes 12 amino acids using 12 codons with no stop codons; reduced chemical diversity but smaller library [14]

- DBK: Encodes 12 amino acids using 18 codons with no stops; covers main biophysical types [14]

- Codon Compression Algorithms: Computational tools like DYNAMCC optimize degenerate codon selection based on user-defined parameters including target organism, saturation type, and codon usage levels [3]
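The codon, amino acid, and stop codon counts listed above can be checked by expanding each degenerate codon against the standard genetic code. The following is a minimal sketch rather than part of any cited protocol; the helper names `expand` and `summarize` are illustrative assumptions.

```python
from itertools import product

# IUPAC nucleotide codes mapped to the bases they represent
IUPAC = {
    "A": "A", "C": "C", "G": "G", "T": "T",
    "R": "AG", "Y": "CT", "S": "CG", "W": "AT", "K": "GT", "M": "AC",
    "B": "CGT", "D": "AGT", "H": "ACT", "V": "ACG", "N": "ACGT",
}

# Standard genetic code with codons enumerated in TCAG order; "*" marks a stop
BASES = "TCAG"
AMINO = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {"".join(c): aa for c, aa in zip(product(BASES, repeat=3), AMINO)}


def expand(degenerate):
    """Return every concrete codon encoded by a degenerate codon such as 'NNK'."""
    return ["".join(c) for c in product(*(IUPAC[b] for b in degenerate))]


def summarize(degenerate):
    """Count codons, distinct amino acids, and stop codons for one scheme."""
    translated = [CODON_TABLE[c] for c in expand(degenerate)]
    return {
        "codons": len(translated),
        "amino_acids": len(set(translated) - {"*"}),
        "stops": translated.count("*"),
    }


for scheme in ("NNK", "NNS", "NDT", "DBK"):
    print(scheme, summarize(scheme))
```

The same function can be pointed at any other IUPAC triplet when evaluating a candidate scheme before ordering primers.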

Discussion and Technical Considerations

Integration with Directed Evolution Frameworks

Site-saturation mutagenesis serves as a foundational element in advanced directed evolution strategies. Iterative Saturation Mutagenesis (ISM) applies systematic cycles of SSM at rationally chosen sites, dramatically reducing screening efforts while efficiently exploring protein sequence space [18]. In one application, ISM significantly enhanced the thermostability of Bacillus subtilis lipase by targeting sites with high B-factors from crystallographic data [18].

The Focused Rational Iterative Site-specific Mutagenesis (FRISM) strategy represents a further refinement, where molecular docking identifies key mutation sites and a highly focused library is created by mutating hotspots to 3-5 specific amino acids [15]. This approach successfully engineered Candida antarctica lipase B into four stereo-complementary variants by screening fewer than 25 variants per evolutionary route [15].

Technical Implementation Challenges

While powerful, SSM presents several technical challenges that require consideration:

- Library Size Management: As the number of saturated sites increases, library size grows exponentially. For example, saturating three sites with NNK requires screening ~98,000 clones for 95% coverage [3]. Strategic use of restricted amino acid sets and codon compression algorithms can mitigate this challenge.

- Sequence Bias: The genetic code's structure means that single-base changes often produce chemically similar amino acids. Focusing on codons with Hamming distances >1 from wild-type enables access to more diverse amino acid properties [3].

- Functional Assay Requirements: SSM generates specific amino acid substitutions whose functional characterization requires appropriate high-throughput assays, such as protein abundance measurements [2] or growth-based selections [17].

Site-saturation mutagenesis provides an indispensable methodological foundation for both basic protein science and applied biotechnology. Its core advantages in identifying critical residues and enabling efficient directed evolution stem from the unique combination of systematic exploration and focused investigation. The quantitative data, standardized protocols, and reagent solutions presented in this application note demonstrate how SSM enables researchers to move beyond random mutagenesis toward more predictive protein engineering. As large-scale studies increasingly illuminate the relationships between protein sequence, structure, and function [2], and advanced algorithms optimize library design [3], SSM continues to evolve as a precision tool for resolving biological mechanisms and creating novel biocatalysts.

Site-saturation mutagenesis (SSM) serves as a cornerstone technique in protein engineering and directed evolution, enabling researchers to systematically explore the function of individual amino acid positions within proteins. This approach relies heavily on the use of degenerate primers—synthetically designed oligonucleotides that contain mixtures of nucleotides at specific codon positions, thereby encoding a diverse library of amino acid substitutions. The power of SSM lies in its capacity to create "focused libraries" where every possible amino acid replacement is represented at targeted sites, facilitating deep investigation into structure-function relationships without requiring prior structural knowledge.

The design of these primers is framed within the fundamental concept of codon degeneracy, a property of the genetic code where most amino acids are encoded by multiple nucleotide triplets. This redundancy means that transitioning from a single specific codon to all possible amino acids at a position requires strategic primer design. While the NNK degenerate codon (where N represents any nucleotide and K represents G or T) has emerged as a popular standard, it represents just one of several strategies available to researchers. The choice of degeneracy scheme directly impacts critical experimental parameters including library size, amino acid coverage, screening efficiency, and ultimately, the success of protein engineering campaigns [19] [20].

This application note provides a comprehensive framework for understanding and implementing degenerate primer strategies, with particular emphasis on moving beyond basic NNK approaches to leverage advanced methods that minimize bias and maximize practical screening efficiency. We present quantitative comparisons of degeneracy schemes, detailed experimental protocols validated through large-scale studies, and visual guides to experimental design—all contextualized within the rigorous demands of modern focused library research for drug development and basic science.

Understanding Codon Degeneracy and Common Schemes

The Foundation: Genetic Code Redundancy

The degeneracy of the genetic code originates from the fact that 61 nucleotide triplets encode only 20 standard amino acids, with the remaining three codons serving as stop signals. This redundancy means that most amino acids are encoded by multiple codons—a property that directly impacts degenerate primer design. For example, the amino acid leucine can be encoded by six different codons (TTA, TTG, CTT, CTC, CTA, CTG), while tryptophan is encoded by only one (TGG). This uneven distribution presents both challenges and opportunities when designing primers for saturation mutagenesis [20] [21].

The primary goal of employing degenerate codons in primer design is to control the representation of amino acids in the resulting mutant library. An ideal scheme would provide equal representation of all 20 amino acids with minimal redundancy and no stop codons. In practice, however, trade-offs between these objectives are inevitable. The genetic code's structure makes it impossible to achieve perfect representation using a single degenerate codon, necessitating strategic selection based on experimental priorities [19].

Common Degenerate Codon Schemes

Table 1: Comparison of Common Degenerate Codon Schemes

| Codon Scheme | Degeneracy | Amino Acids Encoded | Stop Codons | Key Characteristics |
|---|---|---|---|---|
| NNN | 64-fold | All 20 | 3 (TAA, TAG, TGA) | Maximum diversity but includes all stop codons; high screening burden |
| NNK | 32-fold | All 20 | 1 (TAG) | Reduced redundancy; only one stop codon; most popular balanced approach |
| NNS | 32-fold | All 20 | 1 (TAG) | Similar to NNK but different base composition (S = G or C) |
| NDT | 12-fold | 12 (F,L,I,V,Y,H,N,D,C,R,S,G) | 0 | No stop codons; limited but diverse amino acid set |
| NNT | 16-fold | 15 (excludes W,Q,M,K,E) | 0 | No stop codons; excludes several polar and charged residues |
| NNG | 16-fold | 13 (excludes F,Y,C,H,I,N,D) | 1 (TAG) | Includes the TAG stop codon; excludes several hydrophobic and polar residues |

The NNK codon (where N = A/C/G/T and K = G/T) has emerged as a particularly popular choice for saturation mutagenesis. This scheme offers a balanced approach with 32 possible codons covering all 20 amino acids with only a single stop codon (TAG). The reduction from 64 (NNN) to 32 possible codons significantly decreases the screening burden while maintaining complete amino acid coverage. However, NNK still introduces substantial bias in amino acid representation due to the genetic code's inherent structure. Specifically, serine, arginine, and leucine are each encoded by three of the 32 codons (9.4% occurrence each) and are therefore overrepresented, while amino acids encoded by a single codon, such as methionine (3.1%) and tryptophan (3.1%), appear less frequently [19] [20].
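The per-amino-acid representation in an NNK mixture can be tallied directly from the standard genetic code. This is a small illustrative sketch that assumes equimolar base incorporation at every degenerate position, which real primer syntheses only approximate.

```python
from collections import Counter
from itertools import product

# Standard genetic code with codons enumerated in TCAG order; "*" marks a stop
BASES = "TCAG"
AMINO = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {"".join(c): aa for c, aa in zip(product(BASES, repeat=3), AMINO)}

# NNK = any base at positions 1 and 2, G or T at position 3
nnk_codons = [codon for codon in CODON_TABLE if codon[2] in "GT"]
counts = Counter(CODON_TABLE[codon] for codon in nnk_codons)
frequencies = {aa: n / len(nnk_codons) for aa, n in counts.items()}

# Print the most frequent residues first
for aa, freq in sorted(frequencies.items(), key=lambda kv: (-kv[1], kv[0])):
    print(f"{aa}: {counts[aa]} codon(s), {freq:.1%}")
```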

For researchers specifically interested in exploring single-nucleotide polymorphisms (SNPs), specialized library designs focusing on a Hamming distance of 1 (single base changes from the wild-type codon) can be employed. Each wild-type codon has 9 such neighbors (three alternative bases at each of its three positions). The number of unique amino acids they encode is codon-dependent (typically between 5 and 8), with the remaining neighbors representing synonymous changes or stop codons. This approach dramatically reduces library size and is particularly valuable for studying naturally occurring mutations or when exploring immediate evolutionary neighborhoods of existing sequences [3].
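To see what a Hamming-distance-1 library contains for a particular wild-type codon, the single-base neighbors can be enumerated and translated. The sketch below is illustrative only; `hamming1_neighbors` and `summarize_neighbors` are hypothetical helper names, and the two example codons were chosen arbitrarily.

```python
from itertools import product

# Standard genetic code with codons enumerated in TCAG order; "*" marks a stop
BASES = "TCAG"
AMINO = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {"".join(c): aa for c, aa in zip(product(BASES, repeat=3), AMINO)}


def hamming1_neighbors(codon):
    """Return the codons that differ from `codon` at exactly one position."""
    return [
        codon[:i] + alt + codon[i + 1:]
        for i in range(3)
        for alt in BASES
        if alt != codon[i]
    ]


def summarize_neighbors(codon):
    """Classify the single-base neighbors of a wild-type codon."""
    wild_type = CODON_TABLE[codon]
    translated = [CODON_TABLE[c] for c in hamming1_neighbors(codon)]
    return {
        "new_amino_acids": sorted(set(translated) - {wild_type, "*"}),
        "synonymous": translated.count(wild_type),
        "stops": translated.count("*"),
    }


for wt_codon in ("GCT", "TGG"):  # alanine and tryptophan examples
    print(wt_codon, summarize_neighbors(wt_codon))
```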

Advanced Strategies: Moving Beyond NNK

Limitations of Conventional NNK Approaches

While NNK offers a reasonable balance between completeness and practical screening requirements, several significant limitations persist. The approach still generates substantial amino acid bias, a critical concern when screening capacity is limited. For example, in an NNK library, the amino acids leucine, arginine, and serine are each encoded by three codons, making them three times more likely to be sampled than tryptophan or methionine, which are encoded by single codons. This bias compounds multiplicatively in combinatorial saturation mutagenesis at multiple sites: at two NNK sites, a Leu-Leu pair is nine times more frequent than a Trp-Trp pair, so certain amino acid combinations may be severely underrepresented despite their potential functional importance [19].

Additionally, the presence of a stop codon (TAG) in NNK libraries means that a portion of all clones will be non-functional, unnecessarily consuming screening resources. For large libraries targeting multiple positions, this wasted screening capacity can become substantial. These limitations have motivated the development of more sophisticated degeneracy strategies that offer better control over library composition [19] [3].

Reduced-Codon Strategies: The 22c-Trick and Small-Intelligent Methods

Two particularly notable methods have emerged as solutions to NNK's limitations: the "22c-trick" and "small-intelligent" approaches. These methods utilize carefully selected mixtures of degenerate codons to achieve more balanced amino acid representation while eliminating stop codons.

The 22c-trick employs a combination of three codons: NDT (12 codons encoding 12 amino acids), VHG (9 codons encoding 9 amino acids), and TGG (encoding tryptophan). Together these 22 codons cover all 20 canonical amino acids, with only leucine and valine encoded twice, giving dramatically reduced bias compared to NNK. This approach significantly improves library quality by eliminating stop codons and reducing the overrepresentation of certain amino acids. However, it requires using multiple primers with different codon schemes, adding complexity to library construction [19].

The small-intelligent method represents a further refinement, utilizing an optimized set of codons that collectively cover all 20 amino acids without redundancy: each of the 20 amino acids is represented by exactly one codon. The result is a library with uniform amino acid representation at the design level, so screening effort is distributed evenly across the entire amino acid space. While theoretically optimal, this method requires the most complex primer design and synthesis [19].
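The composition of a primer mixture such as the 22c-trick can be verified by pooling the expansions of its component codons. This is a hedged sketch that assumes the three primers are mixed in proportion to the number of codons each encodes (12:9:1); `pooled_summary` is an illustrative name, not a published tool.

```python
from collections import Counter
from itertools import product

# IUPAC nucleotide codes mapped to the bases they represent
IUPAC = {
    "A": "A", "C": "C", "G": "G", "T": "T",
    "R": "AG", "Y": "CT", "S": "CG", "W": "AT", "K": "GT", "M": "AC",
    "B": "CGT", "D": "AGT", "H": "ACT", "V": "ACG", "N": "ACGT",
}

# Standard genetic code with codons enumerated in TCAG order; "*" marks a stop
BASES = "TCAG"
AMINO = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {"".join(c): aa for c, aa in zip(product(BASES, repeat=3), AMINO)}


def expand(degenerate):
    """Return every concrete codon encoded by a degenerate codon."""
    return ["".join(c) for c in product(*(IUPAC[b] for b in degenerate))]


def pooled_summary(schemes):
    """Summarize the combined codon set of several degenerate codons."""
    codons = [codon for scheme in schemes for codon in expand(scheme)]
    per_residue = Counter(CODON_TABLE[codon] for codon in codons)
    stops = per_residue.pop("*", 0)
    return {
        "codons": len(codons),
        "amino_acids": len(per_residue),
        "stops": stops,
        "encoded_twice": sorted(aa for aa, n in per_residue.items() if n == 2),
    }


print(pooled_summary(["NDT", "VHG", "TGG"]))
```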

Table 2: Advanced Degenerate Codon Strategies for Reduced Bias

| Strategy | Codons Employed | Amino Acid Coverage | Stop Codons | Best Application Context |
|---|---|---|---|---|
| 22c-Trick | NDT, VHG, TGG | All 20 | 0 | General purpose protein engineering |
| Small-Intelligent | Custom optimized set | All 20 (uniform) | 0 | Maximum diversity with limited screening capacity |
| DYNAMCC Algorithms | Varies by parameters | User-defined | User-controlled | High-throughput with specific organism preferences |
| Single-Base Change (Hamming Distance = 1) | 9 neighboring codons per wild-type codon | 5-8 unique amino acids | Possible | Studying natural mutations and evolutionary neighbors |

Computational Tools for Library Design

Modern library design has been significantly enhanced through computational tools that optimize codon selection based on specific experimental parameters. The DYNAMCC (Dynamic Management of Codon Compression) algorithm family represents a particularly advanced approach to this challenge. These web-accessible tools (available at http://www.dynamcc.com/) enable researchers to design optimized degenerate codon schemes based on multiple parameters including:

- Target organism codon usage bias: Optimizing for the preferred codons of the expression host to maximize protein expression levels

- Amino acid subset selection: Restricting to specific amino acid groups based on chemical properties or known functional constraints

- Wild-type amino acid inclusion/exclusion: Controlling whether the original amino acid is included in the library

- Hamming distance restrictions: Limiting to specific numbers of base changes from the wild-type codon

The DYNAMCC tools output a minimal list of compressed codons using IUPAC nucleic acid notation that covers the desired amino acid space with maximum efficiency. This approach balances the simplicity of using a single degenerate codon (like NNK) against the impracticality of synthesizing all 64 codons individually [3].

Experimental Protocol: Site-Saturation Mutagenesis Using Degenerate Primers

Primer Design and Synthesis

The following protocol adapts and enhances established methodologies for high-success-rate site-saturation mutagenesis [22], incorporating best practices from large-scale mutagenesis studies [23] [2].

Step 1: Codon Selection and Primer Design

- Select the appropriate degenerate codon scheme based on experimental goals (refer to Tables 1 and 2)

- Design forward and reverse primers containing the degenerate codon at the target position

- Ensure flanking regions of 15-20 nucleotides on each side of the degenerate codon with optimal G/C content (40-60%)

- Include at least 5 non-degenerate nucleotides at the 3' end to ensure specific binding during PCR

- Check primers for secondary structure formation and primer-dimer potential using tools like NetPrimer (http://www.premierbiosoft.com/netprimer/)

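- For the QuikChange method, the two primers must be complementary to each other; 5' phosphorylation is not required, because the nicked product is repaired in E. coli after transformation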
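The design rules above can be sketched as a short script that places a degenerate codon between fixed flanks and derives the complementary reverse primer. This is an illustration under stated assumptions: the template is a made-up 63-nt fragment, the 18-nt flank length is one choice within the 15-20 nt range, and `design_primers` is a hypothetical helper, not the method of any cited kit.

```python
# Complements for IUPAC codes so degenerate positions are handled correctly
COMPLEMENT = {
    "A": "T", "C": "G", "G": "C", "T": "A",
    "R": "Y", "Y": "R", "S": "S", "W": "W", "K": "M", "M": "K",
    "B": "V", "V": "B", "D": "H", "H": "D", "N": "N",
}


def reverse_complement(seq):
    """Reverse complement of a sequence that may contain IUPAC codes."""
    return "".join(COMPLEMENT[base] for base in reversed(seq))


def gc_fraction(seq):
    """Fraction of G and C bases in a non-degenerate sequence."""
    return sum(base in "GC" for base in seq) / len(seq)


def design_primers(template, codon_start, degenerate="NNK", flank=18):
    """Build a complementary primer pair with the degenerate codon centered.

    codon_start is the 0-based index of the first base of the target codon.
    """
    left = template[max(codon_start - flank, 0):codon_start]
    right = template[codon_start + 3:codon_start + 3 + flank]
    if len(left) < flank or len(right) < flank:
        raise ValueError("target codon is too close to the template end")
    forward = left + degenerate + right
    return forward, reverse_complement(forward), gc_fraction(left), gc_fraction(right)


# Hypothetical coding fragment used only for illustration
TEMPLATE = "ATGGCTAGCAAAGGTGAAGAACTGTTTACCGGTGTTGTGCCGATTCTGGTTGAACTGGATGGC"
forward, reverse, gc_left, gc_right = design_primers(TEMPLATE, codon_start=30)
print("Forward:", forward)
print("Reverse:", reverse)
print(f"Flank GC content: {gc_left:.0%} (5' flank), {gc_right:.0%} (3' flank)")
```

Note that the reverse primer carries MNN, the reverse complement of NNK. Secondary structure and primer-dimer checks are not covered here and still need a dedicated tool.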

Step 2: Primer Synthesis and Quality Control

- Standard desalted primers are generally sufficient without need for HPLC purification [22]

- Dissolve primers in 0.1X TE buffer (1 mM Tris, 0.1 mM EDTA, pH 8.0) or nuclease-free water

- Dilute to working concentration of 2 μM for PCR reactions

- Validate degenerate primer quality by sequencing the pooled library before screening when possible [23]

PCR Amplification and Library Construction

Step 3: Mutagenesis PCR Reaction

- Assemble 25 μL reaction containing:

  - 1X reaction buffer (e.g., Pfu buffer: 10 mM KCl, 10 mM (NH₄)₂SO₄, 20 mM Tris-HCl pH 8.8, 2 mM MgSO₄, 0.1% Triton X-100)

  - 20 ng plasmid template DNA

  - 6 pmol of each degenerate primer (forward and reverse)

  - 200 μM of each dNTP

  - 1 unit of high-fidelity DNA polymerase (e.g., PfuTurbo, KAPA HiFi HotStart, or Platinum SuperFi II [23])
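As a bench aid, the pipetting volumes for this 25 μL reaction can be derived from the target amounts. The sketch below is only an example: apart from the 2 μM primer working stock from Step 2, every stock concentration is an assumption that should be replaced with the values for your own reagents.

```python
# Target amounts taken from the reaction list above
TOTAL_UL = 25.0
PRIMER_PMOL = 6.0
DNTP_FINAL_UM = 200.0
TEMPLATE_NG = 20.0
POLYMERASE_UNITS = 1.0

# Stock concentrations (assumptions for illustration, except the primer stock)
PRIMER_STOCK_UM = 2.0         # working dilution from Step 2; 1 uM = 1 pmol/uL
DNTP_STOCK_UM = 10_000.0      # assumed 10 mM of each dNTP
BUFFER_STOCK_X = 10.0         # assumed 10X reaction buffer
TEMPLATE_STOCK_NG_UL = 20.0   # assumed plasmid concentration
POLYMERASE_STOCK_U_UL = 2.5   # assumed enzyme concentration

volumes = {
    "buffer": TOTAL_UL / BUFFER_STOCK_X,
    "template": TEMPLATE_NG / TEMPLATE_STOCK_NG_UL,
    "forward_primer": PRIMER_PMOL / PRIMER_STOCK_UM,
    "reverse_primer": PRIMER_PMOL / PRIMER_STOCK_UM,
    "dNTP_mix": TOTAL_UL * DNTP_FINAL_UM / DNTP_STOCK_UM,
    "polymerase": POLYMERASE_UNITS / POLYMERASE_STOCK_U_UL,
}
# Water makes up the remainder of the reaction volume
volumes["water"] = TOTAL_UL - sum(volumes.values())

for component, volume in volumes.items():
    print(f"{component:>15}: {volume:5.2f} uL")
```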

Step 4: Thermal Cycling Conditions

- Initial denaturation: 95°C for 2 minutes

- 16 cycles of:

  - Denaturation: 95°C for 30 seconds

  - Annealing: 55°C for 1 minute

  - Extension: 68°C for 1-2 minutes per kb of plasmid length

- Final extension: 68°C for 10 minutes

- Hold at 4°C
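Because the extension step scales with plasmid length, the programmed run time is easy to estimate before booking a thermocycler. This sketch simply sums the hold times listed above; ramp times are ignored, and the 5 kb plasmid is a hypothetical example.

```python
def program_minutes(plasmid_kb, minutes_per_kb=2.0, cycles=16):
    """Sum of programmed hold times in minutes, excluding ramping and the 4 C hold."""
    initial_denaturation = 2.0
    denaturation = 0.5
    annealing = 1.0
    extension = plasmid_kb * minutes_per_kb
    final_extension = 10.0
    per_cycle = denaturation + annealing + extension
    return initial_denaturation + cycles * per_cycle + final_extension


# Hypothetical 5 kb plasmid at both ends of the 1-2 min/kb range
for rate in (1.0, 2.0):
    print(f"{rate:.0f} min/kb: {program_minutes(5.0, rate):.0f} min")
```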

Studies comparing polymerase performance have demonstrated that KAPA HiFi HotStart, Platinum SuperFi II, and Hot-Start Pfu DNA Polymerase show superior amplification efficiency with lower chimera formation rates, making them preferred choices for quality library construction [23].

Step 5: Template Digestion and Transformation

- Digest parental (methylated) template DNA by adding 5 units of DpnI directly to PCR reaction

- Incubate at 37°C for 1 hour

- Transform 5 μL of reaction into 50 μL of chemically competent E. coli cells (e.g., TOP10)

- Heat shock at 42°C for 30 seconds, recover in SOC medium at 37°C for 1 hour

- Plate onto selective agar plates and incubate overnight at 37°C

- Expect 100-500 colonies per transformation for typical plasmids

This protocol has demonstrated success rates exceeding 95% for creating high-quality saturation libraries when properly optimized [22].

Quality Assessment and Troubleshooting

Library Quality Validation

Rigorous quality assessment is essential for successful saturation mutagenesis experiments. The following approaches should be employed to validate library quality:

Sequence Verification:

- Pick 10-20 random clones for Sanger sequencing to verify mutation rate and diversity

- Alternatively, use next-generation sequencing to comprehensively analyze library composition for large projects [23]

- Expect approximately 5-10% of clones to contain the wild-type sequence when using NNK degeneracy
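A handful of Sanger reads can be summarized with a short script that reports wild-type carryover, stop codons, and codons that do not fit the intended scheme. The sketch below assumes an NNK library and uses invented codon calls; `summarize_clones` is a hypothetical helper.

```python
from itertools import product

# Standard genetic code with codons enumerated in TCAG order; "*" marks a stop
BASES = "TCAG"
AMINO = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {"".join(c): aa for c, aa in zip(product(BASES, repeat=3), AMINO)}


def summarize_clones(observed_codons, wild_type_codon):
    """Summarize the target-site codons read from randomly picked clones."""
    translated = [CODON_TABLE[codon] for codon in observed_codons]
    return {
        "clones": len(observed_codons),
        "wild_type_fraction": observed_codons.count(wild_type_codon) / len(observed_codons),
        "distinct_amino_acids": len(set(translated) - {"*"}),
        "stops": translated.count("*"),
        # NNK allows only G or T at the third position
        "off_scheme": [codon for codon in observed_codons if codon[2] not in "GT"],
    }


# Invented codon calls from ten clones; the wild-type codon GCA is not an NNK codon,
# so any GCA read points to template carryover rather than library diversity
reads = ["TTG", "GCA", "AAT", "CGT", "TGG", "TAG", "GAT", "GCA", "ATG", "CCT"]
report = summarize_clones(reads, wild_type_codon="GCA")
print(report)
```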

Library Coverage Assessment:

- Calculate theoretical library coverage based on degeneracy scheme and screening capacity

- For NNK (32 codons), screening about 95 clones gives 95% coverage, meaning that any given codon has a 95% probability of being sampled at least once

- For more complex multi-site libraries, use the formula $T = \ln(1-p)/\ln(1-1/V)$, where T is the number of clones to screen, p is the desired coverage (e.g., 0.95), and V is the number of possible variants [3]
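The coverage formula can be evaluated for any scheme and number of sites with a few lines of code. This sketch assumes every variant is equally likely, which ignores primer synthesis and PCR bias, so real libraries usually need more oversampling than the numbers it prints.

```python
import math


def clones_to_screen(variants, coverage=0.95):
    """Clones needed so that any given variant is sampled with probability `coverage`."""
    return math.ceil(math.log(1 - coverage) / math.log1p(-1 / variants))


# Codons per site for three schemes discussed in this note
SCHEMES = {"NNK": 32, "22c-trick": 22, "NDT": 12}

for name, codons_per_site in SCHEMES.items():
    for sites in (1, 2, 3):
        variants = codons_per_site ** sites
        print(f"{name:>9}, {sites} site(s): {variants:>6} variants, "
              f"{clones_to_screen(variants):>6} clones for 95% coverage")
```

For three NNK sites this gives roughly 98,000 clones, which illustrates why restricted schemes such as NDT are attractive for multi-site libraries.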

Functional Assessment:

- Include positive and negative controls in screening assays

- Monitor the percentage of functional clones as an indicator of library quality

- Expect lower functional percentages for libraries targeting structurally critical residues

Troubleshooting Common Issues

Table 3: Troubleshooting Guide for Degenerate Primer-Based Mutagenesis

| Problem | Potential Causes | Solutions |
|---|---|---|
| Few or no colonies after transformation | Insufficient PCR product, poor cell competence | Confirm amplification on a gel, increase PCR cycles, use highly competent cells |
| High wild-type background | Incomplete primer binding, insufficient DpnI digestion | Optimize annealing temperature, extend DpnI digestion, try different polymerase |
| Biased amino acid representation | Primer synthesis errors, PCR bias, poor primer design | Verify primer quality, optimize PCR conditions, consider alternative degenerate schemes |
| Low mutation rate | Primers not phosphorylated (ligation-based protocols only), insufficient cycling, polymerase with proofreading | Ensure primer phosphorylation where the protocol requires it, increase cycle number, verify polymerase compatibility |
| Library size mismatch | Theoretical vs. practical degeneracy, transformation issues | Sequence validate library, optimize transformation protocol, adjust primer degeneracy |

Research Reagent Solutions

Table 4: Essential Reagents for Degenerate Primer-Based Mutagenesis

| Reagent Category | Specific Products | Function and Application Notes |
|---|---|---|
| High-Fidelity DNA Polymerases | KAPA HiFi HotStart, Platinum SuperFi II, Hot-Start Pfu, PfuTurbo | PCR amplification with minimal bias and error rates; critical for library quality [23] [22] |
| Mutagenesis Kits | QuikChange Site-Directed Mutagenesis Kit | Streamlined protocol for single-site saturation mutagenesis [22] |
| Cloning Strains | TOP10, XL1-Blue, DH5α | High-efficiency transformation with standard plasmid propagation |
| Template Digestion Enzymes | DpnI | Selective digestion of methylated parental template DNA |
| Primer Synthesis Services | Custom degenerate oligos from suppliers like GenScript, Operon | Supply of degenerate primers with controlled mixing; quality varies by supplier [23] |
| Computational Design Tools | DYNAMCC web tools (http://www.dynamcc.com/) | Optimized degenerate codon selection based on multiple parameters [3] |
| Quality Control | NGS services, Sanger sequencing | Library validation and diversity assessment [23] [2] |

Degenerate primers represent a fundamental tool in the construction of saturation mutagenesis libraries for focused protein engineering. While the NNK codon scheme offers a practical balance for many applications, advanced strategies like the 22c-trick, small-intelligent method, and computational design tools like DYNAMCC provide powerful alternatives that minimize bias and maximize screening efficiency. The experimental protocol outlined here, incorporating high-fidelity polymerases and optimized cycling conditions, has demonstrated success rates exceeding 95% in large-scale studies. As site-saturation mutagenesis continues to enable deep functional characterization of proteins across basic research and drug development applications, the strategic selection and implementation of degenerate codon schemes remains an essential consideration for designing efficient and comprehensive focused libraries.

Application Notes

Site-saturation mutagenesis (SSM) is a powerful protein engineering technique that systematically substitutes each amino acid in a target protein region. This enables comprehensive exploration of sequence-function relationships, driving advances in enzyme engineering, drug development, and evolutionary studies [13].

Application in Enzyme Engineering

In enzyme engineering, SSM improves catalytic properties like activity, stability, and substrate specificity [24] [13]. It has been successfully applied to engineer amide synthetases, enhancing their capability for pharmaceutical synthesis. Machine-learning guided SSM of the amide bond-forming enzyme McbA evaluated 1,217 variants, creating models that predicted specialized variants with 1.6- to 42-fold improved activity for producing nine small-molecule pharmaceuticals [25].

Application in Drug Development

SSM identifies critical residues for drug binding and elucidates mechanisms of genetic diseases [13]. A large-scale study of over 500,000 missense variants across 500+ human protein domains revealed that 60% of pathogenic missense variants reduce protein stability [2]. This understanding is crucial for diagnosing disease mechanisms and developing targeted therapies. High-throughput functional assays like the Saturation Mutagenesis-Reinforced Functional (SMuRF) framework help interpret unresolved variants in disease-related genes such as FKRP and LARGE1 [5].

Application in Evolutionary Studies

SSM provides insights into evolutionary constraints and the flexibility of protein sequences [13]. Comparing stability measurements with evolutionary fitness from protein language models shows that protein stability accounts for a median of 30% of the variance in protein fitness, varying across domain families [2]. This helps annotate functional sites and understand divergence in enzyme families, where studies show most evolutionary changes occur at the level of substrate specificity rather than reaction type [26].

Table 1: Key Quantitative Findings from Major Site-Saturation Mutagenesis Studies

| Study Focus | Scale of Variants/Proteins | Key Quantitative Finding | Implication |
|---|---|---|---|
| Human Protein Domain Stability [2] | >500,000 variants; 522 protein domains | 60% of pathogenic missense variants reduce protein stability. | Establishes stability loss as a major disease mechanism. |
| Machine-Learning Guided Enzyme Engineering [25] | 1,217 enzyme variants; 9 pharmaceutical compounds | Predicted variants showed 1.6- to 42-fold improved activity. | Demonstrates the power of ML to accelerate directed evolution. |
| Contribution of Stability to Fitness [2] | >500,000 variants across >500 domains | Protein stability accounts for a median of 30% of fitness variance. | Highlights the role of other biophysical properties in evolution. |

Experimental Protocols

High-Throughput SSM for Functional Scoring (SMuRF Protocol)

This protocol details the Saturation Mutagenesis-Reinforced Functional (SMuRF) assay for generating functional scores of small-sized variants in disease-related genes [5].

Protocol Workflow

The diagram below outlines the major steps for a high-throughput SMuRF assay.

Detailed Methodological Steps

Step 1: Develop a High-Throughput Functional Assay

- Identify a molecular phenotype of interest linked to the disease.

- Establish a robust assay compatible with high-throughput flow cytometry, such as using the IIH6C4 antibody to quantify α-DG glycosylation levels for muscular dystrophy-related genes [5].

Step 2: Establish Cell Line Platforms via CRISPR RNP Nucleofection

- Design and synthesize sgRNA: Design sgRNA to target the gene's early coding region for frameshift mutations (e.g., spacer: GTTCGAGGCATTTGACAACG for FKRP) [5].

- Prepare RNP complexes: Combine 18 µL nucleofector solution, 6 µL of 30 µM sgRNA, and 1 µL of 20 µM SpCas9 2NLS nuclease. Incubate at room temperature for 10 minutes [5].

- Perform nucleofection: Deliver RNP complexes into cells using a 4D-Nucleofector system. Isolate and validate monoclonal knockout cell lines [5].

Step 3: Programmed Allelic Series with Common Procedures (PALS-C) Cloning

- Use PALS-C as a cost-effective method to introduce saturation mutagenesis variant plasmid pools into the gene of interest [5].

Step 4: Functional Screening and Sequencing

- Nucleofection and sorting: Deliver variant plasmid pools into the engineered cell line. Perform Fluorescence-Activated Cell Sorting (FACS) based on the functional signal (e.g., IIH6C4 fluorescence) [5].

- Next-Generation Sequencing (NGS): Sequence sorted cell populations to determine variant frequencies in different functional groups [5].

- Generate functional scores: Analyze NGS data to calculate enrichment scores for each variant, representing their functional impact [5].
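One common way to turn sorted-population read counts into a per-variant score is a log2 enrichment ratio normalized to wild type. The sketch below illustrates that general idea only; it is not the published SMuRF scoring procedure, and the function name, pseudocount, variant labels, and read counts are all assumptions.

```python
import math


def enrichment_scores(high_counts, low_counts, reference="WT", pseudocount=0.5):
    """log2 enrichment in the high-signal bin versus the low-signal bin.

    Scores are shifted so that the reference variant scores 0.
    """
    total_high = sum(high_counts.values())
    total_low = sum(low_counts.values())

    def raw(variant):
        # The pseudocount avoids division by zero for variants missing from a bin
        freq_high = (high_counts.get(variant, 0) + pseudocount) / total_high
        freq_low = (low_counts.get(variant, 0) + pseudocount) / total_low
        return math.log2(freq_high / freq_low)

    baseline = raw(reference)
    variants = set(high_counts) | set(low_counts)
    return {variant: raw(variant) - baseline for variant in variants}


# Hypothetical read counts from two FACS-sorted populations
high = {"WT": 1000, "variant_A": 100, "variant_B": 10}
low = {"WT": 1000, "variant_A": 100, "variant_B": 1000}
scores = enrichment_scores(high, low)
for variant in sorted(scores):
    print(f"{variant}: {scores[variant]:+.2f}")
```

In this toy data set, variant_A behaves like wild type (score near 0), while variant_B is depleted from the high-signal population and receives a strongly negative score.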

Chip-Based Oligonucleotide Synthesis for Mutagenesis Library Construction

This protocol describes a high-throughput method for constructing precisely controlled mutagenesis libraries using chip-synthesized oligonucleotides [23].

Protocol Workflow

The workflow for constructing a high-quality mutagenesis library is as follows.

Detailed Methodological Steps

Step 1: Library Design

- Divide the target gene coding sequence into sub-libraries. For PSMD10 (226 amino acids), it was divided into ten sub-libraries, P1–P9 covering 24 amino acids each and P10 covering the C-terminal region [23].

- Design oligonucleotides with 16–19 bp homologous arms for Gibson assembly. Each sub-library consists of oligonucleotides to introduce a specific mutation (e.g., TAG codon) at every amino acid position [23].
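The sub-library layout described for PSMD10 can be reproduced by splitting the residue positions into fixed-size blocks. This is a minimal sketch of the partitioning step only; the function name is hypothetical, and oligonucleotide design with homology arms is not shown.

```python
def sub_library_ranges(protein_length=226, block_size=24):
    """Split residue positions 1..protein_length into consecutive blocks.

    Returns (first_residue, last_residue) tuples; the last block holds the remainder.
    """
    return [
        (start, min(start + block_size - 1, protein_length))
        for start in range(1, protein_length + 1, block_size)
    ]


for index, (first, last) in enumerate(sub_library_ranges(), start=1):
    print(f"P{index}: residues {first}-{last} ({last - first + 1} positions)")
```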

Step 2: Oligonucleotide Pool Synthesis and Amplification

- Synthesize the variant oligonucleotide library as a custom oligo pool using high-density CMOS chip-based technology [23].

- Amplify the oligo pool using a high-fidelity DNA polymerase. Evaluation of five polymerases showed KAPA HiFi HotStart, Platinum SuperFi II, and Hot-Start Pfu DNA Polymerase offer higher amplification efficiency and lower chimera formation rates [23].

Step 3: Gene Assembly and Cloning

- Use Gibson assembly to incorporate the amplified, mutated fragments into a plasmid vector [23].

Step 4: Quality Control and Validation

- Validate the library via Next-Generation Sequencing (NGS). This method achieved 93.75% mutation coverage for a full-length PSMD10 amber codon scanning library [23].

- Analyze unmapped reads to identify and troubleshoot common issues like oligonucleotide synthesis errors and chimeric sequence formation during PCR [23].

The Scientist's Toolkit

Table 2: Essential Research Reagents and Materials for Site-Saturation Mutagenesis

| Item Name | Function/Application | Specific Examples & Notes |
|---|---|---|
| High-Fidelity DNA Polymerase | Amplifies mutagenic libraries with low error and bias rates. | KAPA HiFi HotStart, Platinum SuperFi II, Hot-Start Pfu DNA Polymerase are recommended for low chimera rates [23]. |
| CRISPR-Cas9 System | Creates knockout cell lines for functional assays. | SpCas9 2NLS nuclease and synthetic sgRNA are used for RNP nucleofection [5]. |
| Nucleofection System | Efficiently delivers RNP complexes or plasmid libraries into cells. | Lonza 4D-Nucleofector system with SE Cell Line Nucleofector Solution [5]. |
| Fluorescence-Activated Cell Sorter (FACS) | Sorts cell populations based on functional phenotypes for enrichment analysis. | Used in SMuRF assays with antibodies like IIH6C4 to sort based on glycosylation levels [5]. |
| Chip-Synthesized Oligo Pools | Provides the source of designed mutations for library construction. | GenTitan Oligo Pools synthesized via CMOS-based technology enable high-throughput, precise mutagenesis [23]. |
| DNA Assembly Master Mix | Assembles multiple DNA fragments into a vector seamlessly. | NEBuilder HiFi DNA assembly master mix used in Gibson assembly protocols [5] [23]. |
| Next-Generation Sequencer | Essential for variant coverage analysis and functional score calculation. | Used for quality control of libraries and deep sequencing of sorted populations [5] [23]. |

Building Smarter Libraries: Advanced SSM Strategies and Practical Applications

Site-saturation mutagenesis (SSM) serves as a cornerstone technique in protein engineering and functional genomics, enabling the systematic replacement of amino acids at specific positions to create focused variant libraries. This application note details a standardized experimental workflow for implementing SSM, framed within the context of advanced library research for drug development. We provide comprehensive protocols from initial primer design through final variant screening, incorporating both traditional and high-throughput methodologies to meet the diverse needs of research scientists.

Key Research Reagent Solutions

The following table summarizes essential reagents and their specific functions in site-saturation mutagenesis workflows:

| Reagent/Resource | Function in SSM Workflow | Examples & Notes |
|---|---|---|
| High-Fidelity DNA Polymerase | Amplifies target gene with minimal error introduction during PCR | KAPA HiFi HotStart, Platinum SuperFi II, and Hot-Start Pfu DNA Polymerase are preferred for high efficiency and low chimera rates [23]. |
| Type IIS Restriction Enzymes | Enables seamless assembly of DNA fragments in Golden Gate cloning | BsaI and BbsI cut outside their recognition sites, creating unique 4 bp overhangs for fragment assembly [27]. |
| DNA Ligase | Joins DNA fragments with compatible overhangs | T4 DNA Ligase is commonly used in one-pot restriction-ligation setups [27]. |
| Cloning & Expression Vectors | Hosts the mutated gene library for propagation and protein expression | Vectors should be Golden Gate-compatible for efficient assembly (e.g., pAGM22082_CRed) [27]. |
| Competent Cells | Used for transformation and library propagation | E. coli strains like BL21(DE3) pLysS allow for controlled T7 promoter-based expression of potentially toxic proteins [27]. |
| Degenerate Primers | Introduces randomized codons at target amino acid positions | NNK codons (N=A/C/G/T; K=G/T) encode all 20 amino acids with a single stop codon (TAG) [10]. |

Primer Design Strategies

Core Principles and Codon Degeneracy

Effective primer design is the critical first step in SSM. The fundamental goal is to create primers that replace a specific codon with a degenerate mixture representing all 20 canonical amino acids.

- Codon Selection: The NNK degeneracy is widely recommended, where N represents all four nucleotides and K represents G or T. This combination reduces the codon set from 64 to 32 possibilities while still encoding all 20 amino acids and including only one stop codon (TAG) [10]. This significantly lowers the screening burden compared to a full NNN (64 codons) mixture.

- Primer Structure: For a standard whole-plasmid site-directed mutagenesis approach, a pair of primers, at least one of which contains the desired degenerate codon, is designed so that the two primers anneal to opposite strands of the plasmid template at the target site. Each primer is extended from its 3' end, so the 3' ends should point away from the mutation site and around the plasmid [28].

- Minimizing Secondary Structures: Primer design should minimize primer-dimer formation and ensure that primer-template annealing is favored over primer self-pairing during PCR. Using partially overlapping primers instead of fully complementary ones can significantly improve amplification efficiency [4].
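The codon counts quoted for NNK can be checked directly against the standard genetic code. The sketch below is illustrative only: the helper name `summarize` and the compact encoding of the codon table are choices made here, not part of any cited protocol.

```python
from itertools import product

# Standard genetic code, written in the conventional T, C, A, G ordering.
BASES = "TCAG"
AMINO_ACIDS = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {
    "".join(codon): aa
    for codon, aa in zip(product(BASES, repeat=3), AMINO_ACIDS)
}


def summarize(third_position_bases):
    """Return (codon count, amino acid count, stop codons) for an NNx scheme.

    The first two positions are fully degenerate (N); the third position is
    restricted to the bases given in `third_position_bases`.
    """
    codons = [c for c in CODON_TABLE if c[2] in third_position_bases]
    amino_acids = {CODON_TABLE[c] for c in codons} - {"*"}
    stops = sorted(c for c in codons if CODON_TABLE[c] == "*")
    return len(codons), len(amino_acids), stops


nnn = summarize("ACGT")  # NNN: any base at the third position
nnk = summarize("GT")    # NNK: G or T at the third position

print("NNN:", nnn)  # (64, 20, ['TAA', 'TAG', 'TGA'])
print("NNK:", nnk)  # (32, 20, ['TAG'])
```

Restricting only the third position halves the codon set while keeping every amino acid, because the two stop codons that end in A (TAA, TGA) are excluded.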

Specialized Methods: Golden Mutagenesis

For multi-site saturation mutagenesis, the Golden Gate cloning technique offers a robust solution. In this method, primers are designed with the following structure [27]:

- 5' Type IIS Recognition Site: (e.g., for BsaI or BbsI)

- Specified Four Base Pair Overhang: Ensures correct fragment ordering.

- Randomization Site: Contains the NNK or other degenerate codon.

- Template Binding Sequence: The gene-specific portion of the primer.

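This design allows PCR fragments to be assembled seamlessly into a vector in a single-tube reaction. Because Type IIS enzymes cut outside their recognition sequence, digestion removes the recognition sites from the fragments, so the correctly ligated product can no longer be cut and accumulates during the reaction.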
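The four-part primer layout listed above can be sketched as a simple string assembly. Everything in this example is an illustrative assumption: the function name `golden_gate_primer`, the 5' padding bases, the overhang, and the template-binding sequence are hypothetical. The only fixed facts used are the BsaI recognition sequence (GGTCTC) and that BsaI cuts one nucleotide downstream of it, which is why a single spacer base precedes the 4 bp overhang. Real designs may place the randomized codon differently relative to the overhang.

```python
def golden_gate_primer(overhang, binding, degenerate_codon="NNK",
                       site="GGTCTC", spacer="A", pad="TT"):
    """Concatenate the primer parts in 5'->3' order.

    pad      : a few extra 5' bases so the enzyme can bind near the DNA end
               (assumed value)
    site     : Type IIS recognition site (BsaI by default)
    spacer   : one base between the BsaI site and its cut position
    overhang : the 4 bp overhang that fixes fragment order
    binding  : gene-specific sequence that anneals to the template
    """
    overhang = overhang.upper()
    if len(overhang) != 4 or set(overhang) - set("ACGT"):
        raise ValueError("overhang must be exactly four unambiguous bases")
    return pad + site + spacer + overhang + degenerate_codon + binding.upper()


# Hypothetical overhang and binding sequence, for illustration only.
primer = golden_gate_primer("AATG", "GCTAGCAAAGGAGAAG")
print(primer)  # TTGGTCTCAAATGNNKGCTAGCAAAGGAGAAG
```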

PCR and Library Construction Methods

Several PCR strategies can be employed for SSM, each with distinct advantages. The table below compares the key methodologies.

| Method | Principle | Advantages | Considerations |
|---|---|---|---|
| One-Step PCR (Partially Overlapping Primers) | Uses a pair of primers with partial overlaps to amplify the entire plasmid in a single PCR [4]. | Simple, single-step protocol. | Can yield low amplicon quantities and high parental background for "difficult" templates [10]. |
| Two-Step PCR (Megaprimer) | Step 1: A short DNA fragment is generated using one mutagenic and one non-mutagenic primer. Step 2: The purified fragment serves as a megaprimer for whole-plasmid amplification [10]. | Superior for "difficult-to-randomize" genes (e.g., long, high AT/GC content). Higher quality libraries with lower parental carryover. | Requires two PCR steps and an intermediate purification. |
| Golden Gate Cloning | PCR fragments with Type IIS ends are assembled directly into a linearized vector in a one-pot restriction-ligation [27]. | Highly efficient for multi-site mutagenesis. Seamless assembly without extra bases. | Requires specialized vector and primer design. |
| High-Throughput Oligo Pool Synthesis | Diversified oligonucleotides are synthesized on a chip, amplified, and assembled into full-length genes via methods like Gibson assembly [23]. | Ideal for large-scale, customized libraries (e.g., full-length amber codon scanning). Extremely precise and scalable. | Higher cost; requires specialized facilities and expertise. |

Recommended Protocol: Two-Step Megaprimer PCR

For challenging templates, a two-step PCR approach is highly effective [10].

First PCR – Generate Megaprimer:

- Reaction Mix: 50 μL containing 1x polymerase buffer, 100 ng plasmid template, 200 μM dNTPs, 0.4-2 μM of each primer (one mutagenic, one non-mutagenic flanking primer), and 2 U of a high-fidelity DNA polymerase.

- Cycling Conditions: Initial denaturation at 94°C for 3 min; 28 cycles of (94°C for 1 min, 52°C for 1 min, 68°C for 1 min/kb of fragment length); final extension at 68°C for 5 min.

- Purification: Analyze the product on an agarose gel and purify the correct-sized DNA fragment using a PCR cleanup kit.

Second PCR – Whole-Plasmid Amplification:

- Reaction Mix: 50 μL containing 1x polymerase buffer, 50-100 ng of the original plasmid template, 200 μM dNTPs, the purified megaprimer (using ~1:1 molar ratio of megaprimer:template), and 2 U of high-fidelity DNA polymerase.

- Cycling Conditions: Initial denaturation at 94°C for 3 min; 24 cycles of (94°C for 1 min, 52°C for 1 min, 68°C for 1-2 min/kb of plasmid length); final extension at 68°C for 1 hour.

- Template Digestion: Treat the PCR product with DpnI restriction enzyme (37°C for 6 hours) to digest the methylated parental plasmid template.
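Two quantities in this protocol depend on fragment length: the mass of megaprimer that corresponds to a ~1:1 molar ratio with the template, and the extension time per cycle. The sketch below shows the arithmetic under the assumption that both molecules are double-stranded DNA, so that molar amount scales with mass divided by length. The function names and the example plasmid and megaprimer sizes are hypothetical.

```python
def megaprimer_mass_ng(template_ng, plasmid_bp, megaprimer_bp, molar_ratio=1.0):
    """Mass of megaprimer giving the requested megaprimer:template molar ratio.

    For double-stranded DNA, moles are proportional to mass / length,
    so equal moles means mass scaled by the length ratio.
    """
    return template_ng * molar_ratio * megaprimer_bp / plasmid_bp


def extension_seconds(length_bp, min_per_kb):
    """Extension time for a product of `length_bp` at `min_per_kb` minutes/kb."""
    return length_bp * min_per_kb * 60 / 1000


# Hypothetical example: 6 kb plasmid, 600 bp megaprimer, 50 ng template.
mass = megaprimer_mass_ng(template_ng=50, plasmid_bp=6000, megaprimer_bp=600)
first_pcr = extension_seconds(600, min_per_kb=1)        # megaprimer synthesis
second_pcr_min = extension_seconds(6000, min_per_kb=1)  # lower bound, 1 min/kb
second_pcr_max = extension_seconds(6000, min_per_kb=2)  # upper bound, 2 min/kb

print(f"megaprimer: {mass:.1f} ng")
print(f"first PCR extension: {first_pcr:.0f} s")
print(f"second PCR extension: {second_pcr_min:.0f}-{second_pcr_max:.0f} s")
```

For this example the 1:1 ratio corresponds to 5 ng of megaprimer, and the whole-plasmid extension step runs for 6 to 12 minutes per cycle.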

Diagram 1: Two-step megaprimer PCR workflow for SSM.

Transformation and Library Analysis

Transformation and Library Harvesting

Following PCR amplification and DpnI treatment, the product is transformed into a suitable E. coli strain.

- Transformation: Use 1-4 μL of the DpnI-treated PCR product to transform 50-100 μL of chemically competent cells. After heat shock and recovery, plate the cells on LB agar containing the appropriate antibiotic [4].

- Library Harvesting: For library-level analysis, instead of picking individual colonies, the transformation mixture can be directly inoculated into a liquid culture. The plasmid DNA is then prepared from this pooled culture for sequencing to assess library quality [4].

Quality Control and Functional Screening

Rigorous quality control is essential to ensure a successful SSM library.

- Sequencing-Based Quality Control: Sequence a pooled plasmid library (or a large number of individual clones) to assess the distribution of mutations. This verifies that all intended amino acids are represented without significant bias. Automated web tools can assist in analyzing sequencing results and visualizing nucleobase distributions [27].

- Functional Assays: The ultimate goal is to link genotype to phenotype. Functional assays are highly gene-specific but can include:

  - Growth-Based Selections: Using an abundance protein fragment complementation assay (aPCA), where protein stability influences the growth rate of cells, allowing for quantification of variant effects [2].

  - Fluorescence-Activated Cell Sorting (FACS): For surface proteins or enzymes affecting a fluorescent output, FACS can be used to sort cells based on function and quantify variant enrichment via next-generation sequencing [17] [5].
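The sequencing-based quality control step described above amounts to tallying which amino acids appear at the randomized position and flagging any that are absent. The following is a minimal sketch, assuming the codon at the target position has already been extracted from each sequenced clone or read. The function name `amino_acid_coverage` and the example clone list are hypothetical, and this is not the web tool referenced in [27].

```python
from collections import Counter
from itertools import product

# Standard genetic code in the conventional T, C, A, G ordering.
BASES = "TCAG"
AMINO_ACIDS = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {
    "".join(codon): aa
    for codon, aa in zip(product(BASES, repeat=3), AMINO_ACIDS)
}


def amino_acid_coverage(observed_codons):
    """Count amino acids (and stops, '*') seen at the randomized position.

    Returns the per-residue counts and a sorted list of the canonical
    amino acids that were not observed at all.
    """
    counts = Counter(CODON_TABLE[c.upper()] for c in observed_codons)
    missing = sorted(set(AMINO_ACIDS) - {"*"} - set(counts))
    return counts, missing


# Hypothetical codons read from six sequenced clones.
clones = ["GCT", "GCG", "TAG", "AAG", "TGG", "GCT"]
counts, missing = amino_acid_coverage(clones)

print("observed:", dict(counts))
print("stop codon fraction:", counts["*"] / len(clones))
print("missing amino acids:", "".join(missing))
```

With only six clones most amino acids are missing, which illustrates why pooled deep sequencing or a large number of individual clones is needed before judging library bias.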

Diagram 2: Functional screening workflow for variant library.

This application note outlines a complete and robust workflow for constructing and analyzing focused libraries via site-saturation mutagenesis. By selecting the appropriate primer design and PCR strategy—such as the highly effective two-step megaprimer method for difficult templates—researchers can generate high-quality variant libraries. Coupling this with modern cloning techniques like Golden Mutagenesis and high-throughput functional screening (SMuRF assays) provides a powerful framework for advancing research in protein engineering, functional genomics, and drug development.

Site-saturation mutagenesis serves as a fundamental methodology in protein engineering, enabling researchers to systematically replace specific amino acid positions with all or a subset of natural or non-canonical amino acids. This approach is particularly valuable for exploring structure-function relationships and optimizing protein properties such as catalytic efficiency, stability, and binding affinity [29] [22]. However, a significant challenge emerges when targeting multiple sites simultaneously, as library size increases exponentially, creating substantial screening burdens. For example, when saturating just three sites using a conventional NNK codon (where N = A/C/G/T, K = G/T), the number of variants required to achieve 95% library coverage reaches 98,164 [3]. This exponential expansion severely limits the number of sites that can be practically explored, especially when using screening methods rather than selection for desired phenotypes [3].
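The 98,164 figure is consistent with the widely used oversampling estimate, in which the number of clones T needed to sample a fraction P of V equally likely variants is T = -V ln(1 - P). The sketch below reproduces that arithmetic; the function name is hypothetical, and the estimate assumes every codon combination is equally represented, which real libraries only approximate.

```python
import math


def transformants_for_coverage(n_variants, coverage=0.95):
    """Clones to screen so that `coverage` of equally likely variants is sampled."""
    return round(-n_variants * math.log(1 - coverage))


# Three NNK sites: 32 codons per site.
nnk_three_sites = transformants_for_coverage(32 ** 3)

# Three sites with one codon per amino acid: 20 codons per site.
nonredundant_three_sites = transformants_for_coverage(20 ** 3)

print(nnk_three_sites)           # 98164
print(nonredundant_three_sites)  # 23966
```

At 95% coverage the multiplier is about 3, so each additional NNK site raises the screening effort by a factor of 32.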

The foundation of this challenge lies in the structure of the genetic code and conventional mutagenesis approaches. The standard NNK codon encodes 32 possible codons covering all 20 amino acids but with significant redundancy and including one stop codon [3]. This redundancy means researchers must screen numerous identical amino acid variants, wasting valuable resources on functionally identical clones. Furthermore, as protein engineering efforts grow more ambitious—targeting larger protein regions or multiple simultaneous mutations—these limitations become increasingly prohibitive. Codon compression algorithms address these fundamental limitations through sophisticated bioinformatic approaches that minimize redundancy while maintaining desired amino acid diversity, thereby dramatically reducing screening efforts while maximizing information content [3].

Theoretical Foundation of Codon Compression

Principles of Genetic Code Compression

Codon compression algorithms operate on the principle of selecting minimal sets of degenerate codons according to user-defined parameters to achieve efficient saturation of target sites. These algorithms strategically use International Union of Pure and Applied Chemistry (IUPAC) nucleic acid notation to represent multiple codons through single degenerate sequences, a process termed "codon compression" [3]. The inverse operation—deriving individual codons from a compressed codon—is known as "codon explosion" [3]. This approach enables researchers to eliminate several undesirable elements from saturation libraries, including stop codons, wild-type amino acids (when not desired), and redundant coverage of the same amino acid by multiple codons [3].
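Codon explosion is straightforward to express in code, since each IUPAC symbol stands for a fixed set of bases. The sketch below is an independent illustration, not the DYNAMCC implementation. The function names `explode` and `is_exact_compression` are hypothetical.

```python
from itertools import product

# IUPAC nucleotide ambiguity codes.
IUPAC = {
    "A": "A", "C": "C", "G": "G", "T": "T",
    "R": "AG", "Y": "CT", "S": "CG", "W": "AT", "K": "GT", "M": "AC",
    "B": "CGT", "D": "AGT", "H": "ACT", "V": "ACG", "N": "ACGT",
}


def explode(degenerate_codon):
    """Expand a degenerate codon into the individual codons it represents."""
    choices = (IUPAC[base] for base in degenerate_codon.upper())
    return ["".join(codon) for codon in product(*choices)]


def is_exact_compression(codons, degenerate_codon):
    """True if the degenerate codon encodes exactly the given codon set."""
    return set(explode(degenerate_codon)) == {c.upper() for c in codons}


print(len(explode("NNK")))  # 32
print(explode("TKG"))       # ['TGG', 'TTG']
print(is_exact_compression(["TGG", "TTG"], "TKG"))  # True
```

Compression runs in the opposite direction and is harder: a set of codons can be merged into one degenerate codon only when it forms a complete combination of per-position choices, which is why several degenerate codons are often needed per site.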

A key innovation in advanced codon compression involves considering the Hamming distance—the number of positional differences in nucleotide sequence—between wild-type and library codons [3]. This consideration recognizes that different biological questions require different mutational spectra. Studies of naturally occurring mutations benefit from focusing on single-nucleotide polymorphisms (SNPs), as random mutations rarely achieve more than a single-base change within a codon [3]. In contrast, protein engineering often requires larger Hamming distances (2-3 base changes) to access greater chemical diversity, as single-base changes have approximately a 40% chance of producing identical or chemically similar amino acids due to the structure of the genetic code [3].
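The Hamming distance between codons is simple to compute, and counting codons at each distance from a wild-type codon shows how much sequence space a distance restriction removes. This is a minimal sketch with hypothetical function names and an arbitrarily chosen wild-type codon (GCT).

```python
from collections import Counter
from itertools import product


def hamming(a, b):
    """Number of positions at which two equal-length sequences differ."""
    if len(a) != len(b):
        raise ValueError("sequences must have the same length")
    return sum(x != y for x, y in zip(a, b))


def codons_by_distance(wild_type):
    """Count the 64 codons by their Hamming distance from `wild_type`."""
    all_codons = ("".join(p) for p in product("ACGT", repeat=3))
    return Counter(hamming(wild_type, codon) for codon in all_codons)


distances = codons_by_distance("GCT")
print(hamming("GCT", "GAT"))      # 1
print(sorted(distances.items()))  # [(0, 1), (1, 9), (2, 27), (3, 27)]
```

Every codon has exactly 9 single-nucleotide neighbors, so a library restricted to distance 1 can contain at most 9 codons per site, while 54 of the 63 alternative codons require two or three base changes.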

The DYNAMCC Tool Suite

The DYNAMCC (Dynamic Management of Codon Compression) web tools make codon compression algorithms accessible to researchers without a computational background. The suite includes three tools, each with distinct optimization parameters:

- DYNAMCC_0: Focuses on removing redundancy, stop codons, and wild-type amino acids while considering codon usage values for the target organism [3].

- DYNAMCC_R: Designed for experiments where redundant space is relevant, including every codon for selected amino acids when synonymous mutations might affect mRNA stability, protein expression, folding, or function [3].

- DYNAMCC_D: The most recently developed tool. It incorporates distance metrics between wild-type and library codons, allowing researchers to restrict libraries to specific Hamming distances depending on their experimental goals [3].

These tools are accessible at http://www.dynamcc.com/ and support user-uploaded codon usage tables for non-model organisms, providing flexibility across diverse experimental systems [3]. The underlying algorithms were written in Python 2.7 and are freely available under the BSD 3-clause license, enabling modification and customization for specific research needs [3].

Quantitative Impact of Codon Compression

The practical value of codon compression becomes evident when examining the quantitative reduction in library size across various scenarios. The following table summarizes the dramatic efficiency improvements achievable through strategic codon compression:

Table 1: Library Size Comparison Between Conventional and Compressed Approaches

| Mutagenesis Scenario | Conventional Approach | Library Size with Compression | Size Reduction | Coverage |

|---|---|---|---|---|

| 3-site saturation (NNK) | 98,164 variants [3] | 23,966 variants [3] | 75.6% | 95% |

| Single-site SNP library | 32 codons (NNK) [3] | 9 codons [3] | 71.9% | Varies by wild-type codon |