Transforming Drug Development Education: A Conceptual Change Framework for Curriculum Modernization

Abstract

This article addresses the critical need for conceptual change in the education of drug development professionals. It explores the foundational theories of how experts learn and overcome deeply held misconceptions, provides methodological strategies for modernizing curricula with models like Kotter's change process and STEAM integration, offers solutions for overcoming common implementation challenges like curricular overload and resistance, and presents validation frameworks for assessing educational outcomes. Designed for researchers, scientists, and professionals in pharmaceuticals, this guide synthesizes current educational research and industry trends to provide a roadmap for developing a more agile, integrated, and effective training ecosystem that can keep pace with the rapid evolution of medicine development.

The Science of Learning: Understanding Conceptual Change in Drug Development Expertise

Troubleshooting Guide & FAQs: Addressing Conceptual Hurdles in Medical Research

This section provides targeted support for common conceptual challenges encountered during experiments in medical education and conceptual change research.

Table 1: Troubleshooting Guide for Conceptual Change Experiments

| Problem Area | Specific Issue | Proposed Solution & Underlying Rationale |
|---|---|---|
| Identifying Misconceptions | Failure to detect robust, prevalent student/researcher misconceptions. | Use diagnostic questions and concept inventories before instruction [1]. This proactively maps the landscape of incorrect mental models instead of assuming known conceptual hurdles. |
| Assessment Design | Assessments validate rote memorization, not genuine conceptual understanding or transfer. | Develop evaluation tools that are reliable indicators of understanding, asking: "Does this item provide clear evidence the concept has been understood and can be applied?" [1] |
| Curriculum Design | Learning activities do not lead to the desired enduring understandings. | Adopt a backward design framework: 1) identify desired results and enduring understandings; 2) design evidence-based assessments; 3) then, and only then, plan learning activities [1]. |
| Promoting Transfer | Learners understand a concept in one context but fail to apply it in a new, meaningful scenario. | The learning program's goal should be to use the corrected idea in a new setting. Frame assessment items to check if a cleared misconception can be applied to a different context [1]. |
| Mental Model Resistance | Learners revert to previous misconceptions after instruction. | Instructional material should break down the mental formation of a concept into simple steps to ensure the misconception is not formed. Confront and dismantle the misconception directly [1]. |
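The diagnostic step in the first row of the table can be sketched as a simple tally of misconception-linked answer choices. This is a minimal illustration, not a validated instrument: the item IDs, the distractor-to-misconception mapping, and the labels `M1` and `M2` are all hypothetical placeholders.

```python
from collections import Counter

# Hypothetical mapping: for each inventory item, which answer choice
# signals which misconception. Labels M1/M2 are placeholders.
DISTRACTOR_MAP = {
    "Q1": {"B": "M1", "C": "M2"},
    "Q2": {"A": "M1"},
}

def tally_misconceptions(responses, distractor_map=DISTRACTOR_MAP):
    """Count how many learners selected at least one distractor tied to each misconception."""
    counts = Counter()
    for learner_answers in responses:
        flagged = set()
        for item, choice in learner_answers.items():
            misconception = distractor_map.get(item, {}).get(choice)
            if misconception is not None:
                flagged.add(misconception)
        counts.update(flagged)  # each learner counts once per misconception
    return counts

# Hypothetical pre-instruction responses from three learners.
responses = [
    {"Q1": "B", "Q2": "A"},
    {"Q1": "A", "Q2": "A"},
    {"Q1": "C", "Q2": "D"},
]
prevalence = tally_misconceptions(responses)
for misconception, n in prevalence.most_common():
    print(f"{misconception}: {n}/{len(responses)} learners")
```

Counting each learner once per misconception, rather than once per item, keeps the tally interpretable as prevalence in the cohort.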

Experimental Protocols for Studying Conceptual Change

This section outlines detailed methodologies for key experiments in conceptual change research, framed within curriculum modification.

Protocol: Applying the Understanding by Design (UbD) Framework for Curriculum Development

This protocol provides a structured, backward-design methodology for modifying curricula to explicitly target conceptual change.

1. Research Question: How can a curriculum be structured to effectively identify and alter specific misconceptions, leading to enduring scientific understanding in a medical domain?

2. Principal Materials:

- Subject Population: Medical students or professionals.

- Key Conceptual Domain: A foundational topic prone to misconceptions (e.g., physiological principles, pharmacokinetics).

- Tools: Access to curriculum design software, concept mapping tools, and assessment platforms.

3. Detailed Methodology:

Step 1: Identify Desired Results (Define the Conceptual Endpoint)

- Action: Establish the "enduring understandings" and "transfer goals" for the module. These are the long-term, conceptual takeaways and the ability to apply learning in new contexts [1].
- Guiding Questions:
  - What should participants ultimately understand and be able to use?
  - What are the common, persistent misconceptions in this topic? [1]
  - Why is this topic critical for medical practice?
- Output: A list of core concepts and a mapped set of known misconceptions.

Step 2: Determine Acceptable Evidence (Design Diagnostic and Assessment Tools)

- Action: Develop assessment instruments before designing learning activities. These tools must reliably differentiate between rote recall and genuine conceptual understanding [1].
- Guiding Questions:
  - What constitutes valid evidence of understanding and the ability to transfer knowledge?
  - How will we consistently assess application, interpretation, and perspective? [1]
- Output: Validated pre-/post-assessment items, including multiple-choice questions designed to reveal misconceptions and performance tasks requiring application in novel scenarios [1].

Step 3: Plan Learning Experiences and Instruction (Design the Intervention)

- Action: With the end goal and assessments defined, create instructional materials and activities specifically engineered to address misconceptions and build correct mental models [1].
- Guiding Questions:
  - What knowledge and skills are needed for participants to succeed in the assessments?
  - What learning activities will effectively confront and dismantle the identified misconceptions?
  - What is the appropriate balance between direct instruction and self-construction of concepts? [1]
- Output: A structured learning module, which may include direct instruction, inductive questioning, case-based learning, and simulations.

4. Data Analysis:

- Quantitative: Compare pre- and post-assessment scores using statistical tests (e.g., paired t-test) to measure significant changes in conceptual understanding.

- Qualitative: Analyze open-ended responses and concept maps for evidence of more sophisticated mental models and the absence of pre-existing misconceptions.

Protocol: Integrating Simulation for Conceptual Change in Clinical Reasoning

This protocol leverages simulation-based medical education to create a safe environment for exposing and correcting clinical misconceptions.

1. Research Question: To what extent does high-fidelity simulation, followed by deliberate feedback, remediate misconceptions in clinical management and procedural knowledge?

2. Principal Materials:

- Simulation Platform: High-fidelity patient simulator or virtual reality clinical environment.

- Assessment Rubrics: Validated tools for assessing clinical performance, decision-making, and technical skills.

- Scenario: A standardized clinical scenario designed to trigger a specific known misconception (e.g., misdiagnosis due to cognitive bias, incorrect procedure sequence).

3. Detailed Methodology:

Step 1: Pre-briefing and Baseline Assessment

- Administer a conceptual knowledge test targeting the scenario's key concepts to identify pre-existing misconceptions.

Step 2: Simulation Exercise

- The participant manages the scenario in the simulated environment. The session is recorded for analysis.

Step 3: Debriefing and Feedback (The Conceptual Change Engine)

- A structured debriefing session, facilitated by an expert, is conducted. This is the critical phase where performance is reviewed, and misconceptions are explicitly confronted with evidence from the simulation and underlying scientific principles [2].

Step 4: Re-assessment and Consolidation

- The participant may repeat the simulation or a parallel scenario to demonstrate integration of the corrected concept.

4. Data Analysis:

- Performance scores from the initial and repeat simulations are compared.

- Pre- and post-simulation conceptual tests are analyzed for statistical significance.

- Thematic analysis of debriefing transcripts can reveal moments of conceptual shift.

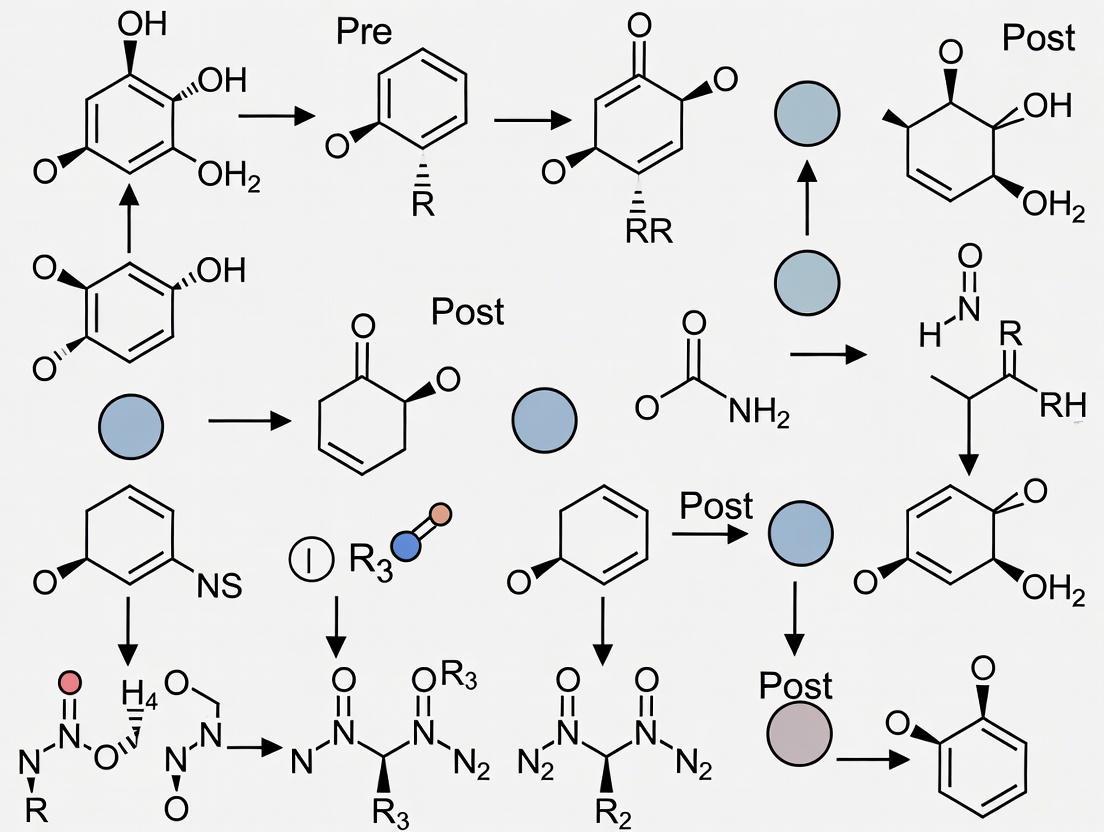

Visualizing the Conceptual Change Workflow in Curriculum Design

The diagram below illustrates the logical workflow for designing a curriculum to foster conceptual change, based on the Understanding by Design (UbD) framework and principles of diagnosing misconceptions.

The Scientist's Toolkit: Research Reagent Solutions for Conceptual Change

Table 2: Essential Materials and Frameworks for Conceptual Change Research

| Research Reagent / Tool | Function in Conceptual Change Experiments |
|---|---|
| Concept Inventories | Validated, multiple-choice assessments specifically designed to identify deep-seated misconceptions. They are the diagnostic assay for faulty mental models [1]. |
| Understanding by Design (UbD) Framework | A foundational "reagent" for curriculum development. It provides the structured protocol (Backward Design) for ensuring all learning activities are aligned with the goal of enduring understanding [1]. |
| Simulation-Based Learning | Creates a controlled, low-risk environment (the "in vitro" setting) where learners can apply concepts, make errors based on misconceptions, and receive immediate feedback, facilitating conceptual change [2]. |
| Crosscutting Concepts | A set of overarching ideas (e.g., cause and effect, structure and function) that have explanatory value across science. Using these provides a common language to help learners connect and transfer knowledge across domains [3]. |
| Deliberate Practice with Feedback | The core "catalyst" for change. It involves repetitive engagement in structured tasks with expert feedback, which is essential for replacing a misconception with an accurate scientific construct [2]. |

The process of scientific research in biomedicine is not merely the accumulation of facts but a continual process of conceptual refinement and revision. Researchers, scientists, and drug development professionals regularly encounter situations where entrenched misconceptions—whether simple false beliefs, fundamentally flawed mental models, or incorrect ontological categorizations—impede experimental progress and interpretation. The conceptual change approach has been identified as a powerful framework for addressing these tenacious and inaccurate prior conceptions, which pose a significant challenge to achieving accurate scientific understanding [4]. Within complex fields like biomedicine, moving beyond these misconceptions requires targeted interventions that go beyond traditional teaching methods, promoting genuine knowledge restructuring over simple knowledge enrichment [4].

This technical support center is framed within a broader thesis on modifying curricula for conceptual change research. It applies these principles directly to the practical, daily challenges faced in the laboratory. By structuring troubleshooting guides around the underlying types of misconceptions, we aim not only to solve immediate experimental problems but also to foster the conceptual shifts necessary for robust and reproducible research practices. The following sections provide a structured framework for diagnosing and resolving these issues, grounded in educational theory and practical laboratory experience.

A Framework for Misconceptions and Intervention Strategies

Biomedical misconceptions can be categorized into three distinct levels, each requiring a different intervention strategy. The table below outlines these categories, their characteristics, and the appropriate conceptual change approach for each.

Table 1: Levels of Misconceptions and Corresponding Intervention Strategies

| Level of Misconception | Definition & Characteristics | Example in Biomedicine | Recommended Intervention Strategy |
|---|---|---|---|
| 1. False Beliefs | Isolated, incorrect factual knowledge that is not integrated into a larger conceptual framework. | Believing that all enzymes have the same optimal pH for activity. | Refutational Texts: Directly state the false belief, refute it, and present the correct scientific explanation [4]. |
| 2. Flawed Mental Models | An internally consistent but incorrect framework for understanding a system or process. | Visualizing cellular signal transduction as a simple, linear pathway rather than a complex network with feedback loops. | Model-Based Reasoning: Use visual diagrams and guided inquiry to expose the flaw in the existing model and demonstrate the predictive power of the correct model. |
| 3. Ontological Shifts | Mis-categorizing a concept into a fundamentally wrong ontological category (e.g., seeing a process as a substance). | Conceptualizing "gene regulation" as a thing that can be directly observed, rather than a dynamic, relational process. | Conceptual Conflict & Analogy: Create cognitive conflict through discrepant events, then use bridging analogies to guide the shift to the correct category. |

The Scientist's Toolkit: Essential Research Reagent Solutions

A core principle of conceptual change is making implicit knowledge explicit. The following table details key reagents, demystifying their functions and addressing common misconceptions about their use.

Table 2: Research Reagent Solutions and Their Functions

| Reagent | Primary Function & Mechanism | Common Misconception | Conceptual Clarification |
|---|---|---|---|
| MTT Reagent | A tetrazolium salt reduced by metabolically active cells to a purple formazan product, serving as a proxy for cell viability [5]. | That it directly measures cell number or proliferation. | MTT measures metabolic activity. Confounding factors like changes in mitochondrial function or cell cycle status can skew results without a change in cell number. |
| Fetal Bovine Serum (FBS) | A complex, undefined mixture of growth factors, hormones, and proteins added to cell culture media to support cell growth and proliferation. | That it is a standardized and consistent component. | FBS is a source of significant experimental variability. Its undefined nature can mask or confound the specific effects of a tested compound. |
| Primary vs. Secondary Antibodies | Primary antibodies bind specifically to the target antigen. Secondary antibodies, conjugated to detection moieties, bind to the primary antibody. | That the secondary antibody is non-specific and does not require careful selection. | Secondary antibodies introduce specificity through their target species and isotype. Using the wrong secondary can lead to false negatives or high background. |
| PCR Primers | Short, single-stranded DNA sequences designed to bind complementary sequences flanking a target DNA region, providing a starting point for DNA polymerase. | That any complementary sequence will work efficiently. | Primer design is critical. Specificity, melting temperature (Tm), GC content, and the absence of self-complementarity (hairpins) or primer-dimer potential are essential for success. |
| Quorum Sensing Molecules | Small signaling molecules produced by bacteria that regulate gene expression in a cell-density-dependent manner [5]. | That they are simply "waste products" or have no function in controlled laboratory cultures. | These molecules are central to coordinated group behavior (e.g., biofilm formation, virulence). Their accumulation is a dynamic process, not a passive one. |
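The primer design criteria named in the PCR Primers row (Tm, GC content, self-complementarity) can be made concrete with a few quick checks. This is a rough sketch under stated assumptions. It uses the Wallace rule, Tm ≈ 2(A+T) + 4(G+C), which is only a first approximation for short oligonucleotides. The 3' self-complementarity test is a deliberately crude heuristic, and the example sequence is invented. Dedicated primer design software uses more accurate nearest-neighbor models.

```python
def gc_content(primer):
    """Fraction of G and C bases in the primer (0.0 to 1.0)."""
    seq = primer.upper()
    return (seq.count("G") + seq.count("C")) / len(seq)

def wallace_tm(primer):
    """Rough melting temperature in degrees C: 2*(A+T) + 4*(G+C)."""
    seq = primer.upper()
    at = seq.count("A") + seq.count("T")
    gc = seq.count("G") + seq.count("C")
    return 2 * at + 4 * gc

def reverse_complement(seq):
    """Reverse complement of a DNA sequence containing only A, C, G, T."""
    return seq.upper().translate(str.maketrans("ACGT", "TGCA"))[::-1]

def has_3prime_self_complementarity(primer, window=4):
    """Crude check: can the last `window` bases pair with any stretch of the
    same primer sequence (a hint of hairpin or self-dimer potential)?"""
    seq = primer.upper()
    tail = seq[-window:]
    return reverse_complement(tail) in seq

primer = "AGCGTACCTGAAGTCTGCAA"  # hypothetical 20-mer, for illustration only
print(f"GC content: {gc_content(primer):.0%}")
print(f"Wallace Tm: {wallace_tm(primer)} C")
print(f"3' self-complementarity flagged: {has_3prime_self_complementarity(primer)}")
```

A flagged primer is not necessarily unusable, and an unflagged one is not guaranteed to work. The checks only turn the design criteria into something explicit and inspectable.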

Troubleshooting Guides & FAQs: A Conceptual Change Approach

This section employs a structured, question-and-answer format based on the "Pipettes and Problem Solving" methodology [5]. This initiative teaches troubleshooting skills by presenting scenarios with unexpected outcomes, forcing researchers to articulate their mental models and propose diagnostic experiments to identify the source of the problem.

FAQ 1: My MTT Cell Viability Assay Shows High Variance and Inconsistent Results. What is the Source of Error?

The Underlying Misconception: A flawed mental model of the assay as a simple "count" of cells, ignoring the technical nuances that affect the chemical reaction.

Q1: What are the appropriate positive and negative controls for this experiment?

- A: A robust assay requires both a positive control (a known cytotoxic compound like staurosporine to define minimum viability) and a negative control (cells with no treatment to define maximum viability) [5]. Omitting a proper positive control reflects the false belief that any signal can be interpreted without reference points.

Q2: Could the cell culture conditions themselves be a factor?

- A: Yes. The group troubleshooting this scenario identified that the specific cell line had dual adherent/non-adherent properties. A flawed mental model of all cells behaving uniformly in culture can lead to this oversight.

Q3: I have the right controls and my cells are healthy. What specific technical step could cause high variance?

- A: The key technical error often occurs during the wash steps. The MTT-containing supernatant must be aspirated with extreme care to avoid removing or disturbing the cells at the bottom of the well; losing cells at this step directly causes high sample-to-sample variance [5]. This requires an ontological shift: viewing the wash step not as a simple cleaning process but as a critical, skill-dependent manipulation.

Experimental Protocol for Resolution:

- Include Controls: Set up wells with a negative control (cells, medium, MTT, no drug) and a positive control (cells, medium, MTT, with a known cytotoxic compound).

- Refine Technique: For the wash steps, use a pipette to carefully aspirate the supernatant from the side of the well, ensuring the tip does not touch the cell layer. Tilting the plate slightly can aid in this.

- Validate: Perform the assay with the test compound and both controls, using the refined washing technique. The variance within control replicates should decrease significantly.
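The role of the two controls and the "variance should decrease" criterion can be sketched numerically. The absorbance values below are invented, and the function names are assumptions for illustration. The sketch scales each reading between the mean of the positive (cytotoxic) control and the mean of the negative (untreated) control, and reports replicate spread as a coefficient of variation.

```python
import statistics

def percent_viability(sample_abs, negative_ctrl_abs, positive_ctrl_abs):
    """Scale absorbance so untreated cells = 100% and the cytotoxic control = 0%."""
    top = statistics.mean(negative_ctrl_abs)     # untreated cells: maximum viability
    bottom = statistics.mean(positive_ctrl_abs)  # cytotoxic control: minimum viability
    if top <= bottom:
        raise ValueError("controls do not define a usable assay window")
    return [100 * (a - bottom) / (top - bottom) for a in sample_abs]

def coefficient_of_variation(values):
    """Replicate spread as a percentage of the mean."""
    return 100 * statistics.stdev(values) / statistics.mean(values)

# Hypothetical absorbance readings (arbitrary units), three replicates each.
negative_ctrl = [1.00, 1.10, 0.90]
positive_ctrl = [0.10, 0.12, 0.08]
treated = [0.55, 0.46, 0.64]

viability = percent_viability(treated, negative_ctrl, positive_ctrl)
print("viability (%):", [round(v, 1) for v in viability])
print(f"negative-control CV: {coefficient_of_variation(negative_ctrl):.1f}%")
```

Comparing the control CV before and after refining the aspiration technique gives a direct, quantitative check on whether the wash step was the source of variance.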

FAQ 2: My Gibson Assembly is Failing. I've Checked the Protocol, So is it Just Bad Luck?

The Underlying Misconception: An ontological misconception that molecular cloning is a deterministic, recipe-like process rather than a probabilistic biochemical reaction.

Q1: Have you verified the quality and concentration of your DNA fragments?

- A: This is the most common issue. A false belief is that any PCR product or digested plasmid is suitable. You must run the fragments on a gel to confirm that each is an intact, single band, and use a fluorometer to measure concentrations accurately so that the fragment stoichiometry can be calculated.

Q2: What is the evidence that the assembly reaction itself is functional?

- A: A flawed mental model trusts the kit reagents unconditionally. Always include a positive control assembly provided in the kit or a well-characterized set of your own fragments. If the positive control fails, the assembly master mix or T5 exonuclease is likely inactive.

Q3: Could the problem be mundane?

- A: Yes. "Bad luck" is often a euphemism for unaccounted-for variables. Researchers are encouraged to consider seemingly mundane sources of error like a malfunctioning thermocycler, expired dNTPs in the PCR step, or a single contaminated reagent [5].

Experimental Protocol for Resolution:

- Diagnose: Run an analytical gel of your purified insert and vector fragments. Confirm their sizes and purity.

- Control: Set up the Gibson Assembly reaction with the kit's positive control DNA. If it fails, the enzyme mix is the issue.

- Systematic Check: If the positive control works, carefully recalculate the molar ratios of your insert(s) to vector for your test assembly. Test different ratios (e.g., 2:1, 3:1) in parallel reactions.
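Recalculating molar ratios is a common stumbling point because mass and moles diverge as fragment lengths differ. The sketch below uses the widely used approximation of about 650 daltons per base pair of double-stranded DNA. The fragment sizes and masses are hypothetical, and the function names are illustrative assumptions.

```python
AVG_DALTONS_PER_BP = 650  # common approximation for double-stranded DNA

def ng_to_pmol(mass_ng, length_bp):
    """Convert a dsDNA mass in nanograms to picomoles."""
    return mass_ng * 1000 / (length_bp * AVG_DALTONS_PER_BP)

def insert_mass_ng(vector_ng, vector_bp, insert_bp, ratio):
    """Insert mass that gives `ratio` moles of insert per mole of vector."""
    return vector_ng * ratio * insert_bp / vector_bp

# Hypothetical assembly: 50 ng of a 5000 bp vector with a 1000 bp insert.
vector_ng, vector_bp, insert_bp = 50, 5000, 1000
for ratio in (2, 3):
    needed = insert_mass_ng(vector_ng, vector_bp, insert_bp, ratio)
    print(f"{ratio}:1 insert:vector -> {needed:.1f} ng insert "
          f"({ng_to_pmol(needed, insert_bp):.3f} pmol)")
```

Because the insert here is one fifth the length of the vector, a 2:1 molar ratio needs less insert mass than vector mass. This is the kind of result the "recipe" mental model tends to get wrong.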

FAQ 3: My ELISA Shows High Background Signal Across All Wells, Including Blanks.

The Underlying Misconception: A false belief that high background is a monolithic problem with a single cause, rather than a symptom with a differential diagnosis.

Q1: Was the wash buffer prepared correctly and used abundantly?

- A: Insufficient washing is a primary cause. A false belief is that a quick rinse is sufficient. ELISA requires rigorous and repeated washing with a properly buffered solution (e.g., PBS with Tween-20) to remove unbound proteins and antibodies.

Q2: Is there non-specific binding occurring?

- A: Yes. A flawed mental model assumes antibody specificity is absolute. The solution is to include a blocking step with a protein like BSA or non-fat dry milk to occupy non-specific binding sites on the plate well surface.

Q3: Could the detection antibody be binding to something other than the primary antibody?

- A: Absolutely. This requires an ontological shift to view the secondary antibody as an active reagent that must be matched to the host species of the primary antibody. Using a secondary antibody that cross-reacts with proteins in the sample (e.g., from serum) will cause widespread background.

Experimental Protocol for Resolution:

- Optimize Washing: Ensure at least 3-5 wash cycles with a sufficient volume of wash buffer (e.g., 300 µL per well) with adequate soaking time.

- Validate Blocking: Extend the blocking step to at least 1-2 hours at room temperature. Test different blocking agents.

- Check Specificity: Confirm the host species of your primary antibody and ensure your secondary antibody is specific to that species' immunoglobulin. Re-run the assay with careful attention to these parameters.

Experimental Workflow Visualizations

The following diagrams map the logical workflow for effective troubleshooting and experimental design, bridging the gap between a flawed mental model and a correct one.

Diagram 1: The Conceptual Change Troubleshooting Loop.

Diagram 2: Direct ELISA Signal Detection Pathway.

Frequently Asked Questions (FAQs)

Q1: What is the most common blockage in translating basic science discoveries to clinical applications?

A1: The T1 blockage, which occurs between basic science discovery and the design of prospective clinical studies, is a primary impediment. Mitigating this blockage is a key focus of initiatives like the NIH's Clinical and Translational Science Award (CTSA) program [6].

Q2: Why are my students' pre-existing conceptions of biological processes so resistant to change?

A2: Students' alternative conceptions are often formed long before formal education and are "amazingly tenacious and resistant to extinction." Conceptual change requires students to become dissatisfied with their existing views and find new scientific conceptions intelligible, plausible, and useful [7].

Q3: What are the key informatics challenges in managing modern biomedical research data?

A3: The primary challenges are: 1) managing multi-dimensional and heterogeneous data sets from sources like EHRs and high-throughput instrumentation; 2) applying knowledge-based systems for high-throughput hypothesis generation; and 3) facilitating data-analytic pipelines for in-silico research programs [6].

Q4: How does involving clinical professionals in research strengthen the resulting knowledge?

A4: Professionals act as mediators of context-specific knowledge. Their practical wisdom (phronesis) provides unique insight into patterns that may not be apparent to external researchers, leading to more relevant research questions and outcomes that are easier to implement in practice [8].

Q5: What is the role of a troubleshooting guide in a research setting?

A5: A user-friendly troubleshooting guide enhances satisfaction, reduces support costs, fosters self-reliance, and improves overall product or protocol quality. It empowers users to resolve issues independently, which is crucial in fast-paced research environments [9].

Troubleshooting Common Experimental Workflows

Issue: Low Data Integration Efficiency in Translational Studies

Problem: Difficulty integrating large-scale, multi-dimensional clinical phenotype and bio-molecular data sets.

Solution:

- Implement a Knowledge-Based System: Deploy an intelligent agent that uses a computationally tractable knowledge repository to reason upon data in your specific domain [6].

- Ensure Semantic Interoperability: Use informatics-based approaches to map among various data representations, ensuring the semantics of the data are well understood [6].

- Apply Data-Analytic Pipelines: Utilize pipelining tools (e.g., caGrid middleware) to support data extraction, integration, and analysis workflows across multiple sources. This captures intermediate steps and ensures reproducible, high-quality results [6].

Prevention: Adopt a common theoretical framework for core knowledge types and reasoning operations at the beginning of a research program to prevent the formation of data and knowledge "silos" [6].
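The ideas of semantic mapping and a pipeline that records its intermediate steps can be illustrated with a toy example. This sketch does not use caGrid or any real middleware. The field names, the shared vocabulary in `FIELD_MAP`, and the record contents are all hypothetical.

```python
# Hypothetical mapping from source-specific field names to shared terms.
FIELD_MAP = {
    "ehr": {"pt_id": "subject_id", "sbp_mmHg": "systolic_bp"},
    "assay": {"sample_subject": "subject_id", "il6_pg_ml": "il6"},
}

def harmonize(records, source):
    """Rename mapped fields to the shared vocabulary; drop unmapped fields."""
    mapping = FIELD_MAP[source]
    return [{mapping[k]: v for k, v in rec.items() if k in mapping} for rec in records]

def integrate(ehr_records, assay_records):
    """Join two harmonized sources on subject_id, logging each step."""
    provenance = []  # (step name, number of records produced)
    ehr = harmonize(ehr_records, "ehr")
    provenance.append(("harmonize:ehr", len(ehr)))
    assay = harmonize(assay_records, "assay")
    provenance.append(("harmonize:assay", len(assay)))
    by_subject = {rec["subject_id"]: rec for rec in ehr}
    merged = []
    for rec in assay:
        match = by_subject.get(rec["subject_id"])
        if match is not None:
            merged.append({**match, **rec})
    provenance.append(("join:subject_id", len(merged)))
    return merged, provenance

ehr_records = [{"pt_id": "S1", "sbp_mmHg": 128, "note": "free text"},
               {"pt_id": "S2", "sbp_mmHg": 141}]
assay_records = [{"sample_subject": "S1", "il6_pg_ml": 3.2},
                 {"sample_subject": "S3", "il6_pg_ml": 5.0}]
merged, provenance = integrate(ehr_records, assay_records)
print(merged)
print(provenance)
```

The provenance log makes record loss visible: two records enter from each source but only one survives the join. Silent loss of this kind is harder to detect once data sits in separate silos.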

Issue: Conceptual Resistance in Training Researchers

Problem: Experienced researchers or students hold on to alternative frameworks that conflict with established scientific concepts.

Solution:

- Identify Preconceptions: Before instruction, use brainstorming sessions or surveys to actively ascertain students' or trainees' existing ideas and explanations [7] [8].

- Create Conceptual Conflict: Design activities that allow users to investigate the soundness of their own ideas and experience a conflict with their expectations [7].

- Facilitate Exchange: Provide a structured environment for users to compare their ideas with those of others, including the scientific perspective, and to talk through the implications of their observations [7].

- Enable Application: Create opportunities for users to apply the new scientific conceptions in familiar settings and realistic research scenarios [7].

Prevention: Adopt a "less is more" approach, decreasing the amount of new material introduced to allow more time for in-depth conceptual engagement and restructuring [7].

Issue: Challenges in Collaborating with Healthcare Professionals

Problem: A gap persists between researchers and healthcare professionals (practitioners, managers, decision-makers), leading to research that is not applicable in practice.

Solution:

- Involve Professionals Early: Engage professionals in the research process itself, ensuring the research is conducted with them and not on them to facilitate knowledge co-creation [8].

- Acknowledge Different Knowledge Types: Explicitly value both scientific knowledge (episteme) from researchers and the practical, context-specific knowledge (phronesis) from professionals [8].

- Use Participatory Methods: Employ structured methods like Group Concept Mapping (GCM) to conceptualize research questions and outcomes from the professionals' perspectives, ensuring their voices are heard [8].

Prevention: Establish clear communication channels and shared goals from the outset of a project, recognizing that strengthening practice and strengthening research are highly correlated goals (r = 0.92) [8].

Quantitative Data on Knowledge Integration and Support

Table 1: Impact of Self-Service Troubleshooting Guides in Research Environments

| Metric | Impact | Data Source |
|---|---|---|
| User Preference for Self-Service | 81% of customers prefer to find answers on their own before contacting support [9]. | Harvard Business Review |
| Cost of Support | Live agent interaction: ~$1 per minute; Self-service guide: a few cents per use [9]. | Mashable |
| Response Expectation | 90% of consumers consider an immediate response to support questions essential [9]. | Zendesk |
| Consequence of Poor Experience | 80% of customers would stop doing business with a company due to a bad experience [9]. | Zendesk |

Table 2: Conceptual Areas and Outcomes of Professional Involvement in Research

| Conceptual Area (Cluster) | Key Outcome for Research & Practice |
|---|---|
| Knowledge Integration | Professionals' context-specific knowledge and researchers' scientific knowledge combine, leading to more useful and applicable outcomes [8]. |
| Development of Practice | Professionals learn through involvement, which directly contributes to the evolution and improvement of healthcare practices [8]. |
| Challenges for Professionals | Complexities such as time constraints and differing values between the research and practice worlds must be managed [8]. |

Experimental Protocols & Workflows

Protocol 1: Implementing a Conceptual Change Teaching Strategy

This protocol is based on the Generative Learning Model (GLM) for modifying curricula [7].

Methodology:

- Focus Prompt: Define the core scientific concept to be taught (e.g., "What distinguishes living from nonliving things?").

- Ascertain Preconceptions: Before instruction, have students articulate their initial ideas through brainstorming sessions, written explanations, or discussions [7] [8].

- Motivating Experience: Engage students with a motivating activity or problem related to the concept that challenges their pre-existing views (e.g., asking if a virus is alive) [7].

- Compare and Contrast Ideas: Facilitate a structured session where students compare their ideas, the ideas of others, and the scientific perspective. Encourage evaluation of the evidence for each viewpoint [7].

- Apply in a New Context: Provide a new scenario in a familiar setting where students must use the scientific concept to solve a problem or explain a phenomenon, reinforcing the conceptual change [7].

Protocol 2: Group Concept Mapping (GCM) for Collaborative Research Design

This mixed-method protocol is used to conceptualize a research area from the perspective of all involved stakeholders [8].

Methodology:

- Preparation: Develop a focus prompt (e.g., "What does professional involvement in our research project lead to?"). Recruit professionals with relevant experience [8].

- Brainstorming: Conduct qualitative brainstorming sessions where participants generate statements in response to the prompt. Aim for a comprehensive set of ideas [8].

- Sorting and Rating: Participants individually sort all generated statements into groups based on conceptual similarity. They then rate each statement based on predefined questions (e.g., "How much does this strengthen practice?") [8].

- Quantitative Analysis: Use multidimensional scaling and hierarchical cluster analysis on the sorting data to create a visual cluster map representing the conceptual structure of the group's thinking [8].

- Interpretation: The research team, ideally including the professional participants, interprets the cluster map and uses the rating data to identify patterns and priorities for the research project [8].
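The step from individual sorts to a group-level structure can be sketched as a co-occurrence count: two statements are "similar" to the extent that participants placed them in the same pile. The sketch below builds that matrix and the corresponding distance matrix, which is the usual input to multidimensional scaling and hierarchical clustering (not shown here, as those normally come from a statistics package). It also computes a Pearson correlation between two sets of mean statement ratings. The sorts, ratings, and function names are hypothetical.

```python
import math
from itertools import combinations

def cooccurrence_matrix(sorts, n_statements):
    """sorts: one list of piles per participant; each pile lists statement indices.
    matrix[i][j] = number of participants who put statements i and j together."""
    matrix = [[0] * n_statements for _ in range(n_statements)]
    for piles in sorts:
        for pile in piles:
            for i in pile:
                matrix[i][i] += 1
            for i, j in combinations(pile, 2):
                matrix[i][j] += 1
                matrix[j][i] += 1
    return matrix

def to_distance(matrix, n_participants):
    """Convert co-occurrence counts to distances in [0, 1]."""
    return [[1 - count / n_participants for count in row] for row in matrix]

def pearson_r(x, y):
    """Pearson correlation between two equal-length rating lists."""
    mean_x, mean_y = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / math.sqrt(var_x * var_y)

# Hypothetical data: three participants sorting four statements (indices 0-3).
sorts = [
    [[0, 1], [2, 3]],
    [[0, 1, 2], [3]],
    [[0], [1], [2, 3]],
]
similarity = cooccurrence_matrix(sorts, n_statements=4)
distance = to_distance(similarity, n_participants=len(sorts))

# Hypothetical mean ratings per statement on two rating questions.
practice_rating = [4.5, 4.0, 3.0, 2.5]
research_rating = [4.0, 4.2, 2.8, 2.0]
r = pearson_r(practice_rating, research_rating)
print(similarity)
print(f"r = {r:.2f}")
```

Statements that no participant grouped together end up at distance 1, so they land far apart on the resulting cluster map.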

Visualizations of Workflows and Relationships

Diagram: Translational Research Cycle with Conceptual Integration

Diagram: Generative Learning Model for Conceptual Change

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Components for a Knowledge Integration Framework

| Item / Solution | Function in the 'Experiment' |
|---|---|
| Knowledge-Based System | An intelligent agent that employs a knowledge base to reason upon data and reproduce expert-level performance on complex tasks, increasing reproducibility and scalability [6]. |
| Semantic Interoperability Tools | Methods and technologies that ensure the meaning of data is well understood across different systems, allowing for valid integration of heterogeneous datasets [6]. |
| Data-Analytic Pipelining Tools | Platforms (e.g., caGrid) that support automated data extraction, integration, and analysis workflows, capturing metadata to ensure research reproducibility [6]. |
| Group Concept Mapping (GCM) | A mixed-method participatory approach that combines qualitative brainstorming with quantitative analysis to conceptualize complex research areas from a group's perspective [8]. |
| Conceptual Change Assessment | Protocols based on the Generative Learning Model to identify and address alternative frameworks and misconceptions in students and trainees [7]. |

FAQs: Understanding and Diagnosing Learning Barriers

This section addresses frequently asked questions about the conceptual hurdles researchers and professionals encounter when studying or designing interventions for complex biological systems.

FAQ 1: What are the primary categories of barriers that hinder the effective learning and application of knowledge in complex systems?

Research identifies several ordered barriers that can impede learning and implementation. These are particularly relevant when modifying curricula for conceptual change, as they highlight areas requiring intervention [10]:

- First-Order Barriers (External/Technological): These are extrinsic issues related to resources and access. In modern learning, this includes unstable or limited internet connectivity, lack of access to necessary devices or software, and insufficient technological tools for effective engagement. Both learners and educators can suffer from these limitations, which directly hinder the learning process [10].

- Second-Order Barriers (Internal/Beliefs): These are intrinsic barriers, rooted in the personal beliefs, pedagogical philosophies, and willingness to change of both educators and learners. For instance, a teacher's belief about how technology should be integrated, or a student's mindset about their ability to understand a complex system, can significantly support or hinder the learning process [10].

- Third-Order Barriers (Design Thinking): This barrier concerns the capacity to redesign lessons and devise creative activities that cater to different learners' needs. It moves beyond simply using technology to fundamentally rethinking how a subject like the cardiovascular system can be taught to foster deeper conceptual understanding [10].

FAQ 2: How does student information-seeking behavior in digital environments impact deep learning in complex subjects?

Studies show that students often exhibit "skittering" behavior—relying on easily accessible, non-scholarly sources—rather than engaging in deep, critical evaluation of information. This tendency toward "surface searching" can lead to a deficiency in discovery, integration, and application of knowledge, which is critical for mastering complex systems. The design of courses and assessments significantly influences whether students adopt deep or surface learning strategies [11].

FAQ 3: What is the relationship between confusion and deep learning of conceptually difficult content?

Contrary to folk wisdom, research indicates that confusion is not always detrimental to learning. When learners face an impasse or contradictory information, it can trigger a state of cognitive disequilibrium and confusion. If this confusion is successfully resolved, it can create opportunities for deep learning and better comprehension of conceptually challenging material, such as the mechanisms of the cardiovascular system [12].

FAQ 4: From a health systems perspective, what barriers hinder the progress of cardiovascular disease prevention and control programs?

A 2025 qualitative study on cardiovascular disease (CVD) prevention in Iran, using the WHO health system building blocks framework, identified six systemic barrier themes [13]:

- Governance and Leadership: Lack of inter-sectoral collaboration and insufficient prioritization of CVD in national agendas.

- Human Resources: Issues with workforce stability, training, and motivation.

- Service Delivery: Fragmented care and ineffective public health campaigns.

- Financing: Inadequate funding and inefficient resource allocation.

- Health Information Systems: Poor data quality and ineffective use of information for decision-making.

- Access to Essential Supplies: Irregular access to medications and equipment.

Troubleshooting Guide: Overcoming Conceptual and Experimental Hurdles

This guide provides a structured approach to diagnosing and resolving common issues in learning and research related to complex systems.

Troubleshooting Guide Template

| Problem Statement | Symptoms & Error Indicators | Possible Causes (Barrier Order) | Step-by-Step Resolution Process | Escalation Path |

|---|---|---|---|---|

| Ineffective Knowledge Synthesis | Inability to connect system components; reliance on superficial facts; "skittering" research behavior [11]. | Surface-level information searches; lack of critical evaluation skills (Second-Order: Beliefs). | 1. Define the Task: Use the Big6 model for information problem-solving [11]. 2. Seek Scholarly Sources: Move beyond the first page of search results. 3. Synthesize & Evaluate: Critically combine information from multiple, high-quality sources. | Integrate dedicated modules on digital fluency and critical source evaluation into the curriculum. |

| Failure to Resolve Conceptual Confusion | Learner disengagement; frustration; inability to progress past a known impasse [12]. | Confusion is not being productively managed or resolved (Second & Third-Order). | 1. Induce Impasses: Use challenging problems or contradictory information to trigger cognitive disequilibrium [12]. 2. Provide Scaffolding: Offer targeted hints or guidance. 3. Facilitate Resolution: Create opportunities for learners to resolve confusion through problem-solving. | Implement advanced learning technologies (e.g., Intelligent Tutoring Systems) designed to monitor and respond to affective states. |

| Barriers to Implementing a New Curriculum | Low adoption by educators; resistance to new teaching methods; failure to improve learning outcomes. | Combination of First-Order (lack of access, time), Second-Order (beliefs), and Third-Order (design) barriers [10]. | 1. Radical Acceptance: Intentionally focus on accepting the change to stay open to possibilities [14]. 2. Find Flexibility: Search for alignment between new requirements and effective prior practices [14]. 3. Search for Engagement: Add elements of movement, conversation, or games to prescribed lessons [14]. | Provide adequate training, resources, and institutional support to address all three orders of barriers simultaneously. |

Experimental Protocols & Data

Quantitative Data on Learning Barriers

Table 1: Barrier Types and Their Impact on Learning and Implementation

| Barrier Order | Category | Description | Example in Cardiovascular Learning | Impact Level |

|---|---|---|---|---|

| First-Order | External / Technological | Lack of adequate access, time, training, or institutional support [10]. | Unstable internet hindering access to online simulations of blood flow. | High - Prevents basic access |

| Second-Order | Internal / Beliefs | Teachers' and learners' pedagogical beliefs, willingness to change [10]. | A belief that memorizing anatomy is sufficient, versus understanding integrated physiology. | Medium-High - Affects engagement |

| Third-Order | Design Thinking | Ability to redesign lessons for creative, learner-centered activities [10]. | Inability to design activities that help students model the heart as a dynamic pump rather than a static diagram. | High - Limits depth of understanding |

| 2.5th Order | Classroom Management | Management challenges specific to the learning environment [10]. | Difficulty managing student groups during a complex, multi-stage lab experiment on blood pressure. | Medium - Disrupts workflow |

Research Reagent Solutions

Table 2: Essential Toolkit for Cardiovascular Conceptual Change Research

| Item | Function in Research | Brief Explanation |

|---|---|---|

| Big6 / IPS-I Model | Information Problem-Solving Framework | A systematic, six-step model (task definition, information-seeking strategies, location & access, use of information, synthesis, evaluation) to guide learners in effective research [11]. |

| WHO Health System Building Blocks | Analytical Framework for Systemic Barriers | A framework (Leadership, HR, Financing, etc.) to diagnose barriers in implementing real-world health programs, such as CVD prevention [13]. |

| Intelligent Tutoring System (e.g., AutoTutor) | Confusion Induction & Management | A computer learning environment that can naturally induce productive confusion through challenging problems and vague hints, fostering deep learning [12]. |

| "Portrait of a Graduate" Framework | Curriculum Design and Goal Setting | A comprehensive framework used by states and districts to define and measure multidimensional student learning, including critical thinking and durable skills [15]. |

| Decolonised Curriculum Strategy | Inclusive Pedagogical Approach | An approach that, combined with eLearning, encourages engagement with higher-quality literature review methods and diverse perspectives [11]. |

System Diagrams & Workflows

Diagram: Relationship Between Learning Barriers and Outcomes

Diagram: Information Problem-Solving Workflow

Troubleshooting Guides and FAQs

Frequently Asked Questions

Q1: What are the most common formulation challenges in drug development and how can they be addressed? Common formulation challenges include poor drug solubility, stability concerns, excipient incompatibility, and variability during manufacturing scale-up. Systematic troubleshooting involves root cause analysis using Quality by Design (QbD) principles, comprehensive stability testing, and formulation optimization through techniques like particle size optimization or nano-formulation to enhance bioavailability [16].

Q2: How can contamination and cross-contamination be effectively controlled in pharmaceutical facilities? Contamination control requires a multi-pronged approach: proper facility design with segregated areas, validated cleaning procedures, rigorous personnel training, and routine environmental monitoring. Adopting ISO 14644 cleanroom standards has proven to significantly reduce contamination risks. A WHO report indicates that 75% of contamination cases result from improper facility design and poor sanitation practices [17].

Q3: What analytical techniques are most effective for investigating particulate contamination in manufacturing? For particle contamination, a combination of physical and chemical methods is most effective. Initial investigation typically uses non-destructive techniques like Scanning Electron Microscopy with Energy Dispersive X-ray Spectroscopy (SEM-EDX) for inorganic compounds and Raman spectroscopy for organic particles. If solubility allows, advanced structure elucidation methods such as LC-HRMS (Liquid Chromatography-High Resolution Mass Spectrometry) and NMR (Nuclear Magnetic Resonance) provide detailed characterization [18].

Q4: How does the evolving pharmaceutical medicine curriculum address the competency gap in medicines development? The curriculum has evolved from traditional classroom education to competency-based vocational training that emphasizes demonstrated outcomes over time-bound learning. This approach covers seven specialist domains including Medicines Regulation, Clinical Pharmacology, Clinical Development, and Drug Safety & Surveillance, supplemented by interpersonal and management skills. This mix of academic preparation and workplace-acquired competencies best meets industry demands for flexible, adaptable professionals [19].

Troubleshooting Common Pharmaceutical Manufacturing Challenges

Table 1: Common Manufacturing Challenges and Resolution Strategies

| Challenge Category | Specific Examples | Recommended Troubleshooting Strategies |

|---|---|---|

| Raw Material Quality | Inconsistent API quality, excipient variability, substandard purity [17] | Supplier qualification audits; strict incoming material testing; well-defined specifications; secondary sourcing for critical materials [17]. |

| Process-Related Issues | Batch inconsistencies; granulation & compression problems (capping, sticking); dissolution variability [16] [17] | Process validation to identify Critical Process Parameters (CPPs); real-time In-Process Controls (IPCs); automation using Process Analytical Technology (PAT) [17]. |

| Equipment & Facility | Equipment malfunctions; unplanned downtime; contamination & cross-contamination [17] | Scheduled preventive maintenance; real-time equipment monitoring; proper facility layout; validated cleaning procedures; environmental monitoring [17]. |

| Stability & Shelf-Life | Drug degradation; reduced potency; shortened shelf-life [16] [17] | Accelerated stability testing; optimal storage conditions; formulation optimization with stabilizers; advanced protective packaging [17]. |

Experimental Protocols for Root Cause Analysis

Protocol 1: Systematic Root Cause Analysis for Quality Defects

This methodology provides a structured approach for investigating quality deviations in pharmaceutical manufacturing [18].

- Problem Definition: Document the precise nature of the problem, including the batch affected, manufacturing step, and initial observations.

- Information Gathering: Collect all relevant data, including:

  - Temporal information (when the incident occurred)

  - Personnel involvement

  - Materials and equipment used

  - Environmental conditions

- Analytical Strategy Development: Based on the gathered information, design a parallel analytical approach using complementary techniques to maximize information yield while minimizing downtime.

- Hypothesis Testing: Use analytical data to test potential root causes and localize the affected manufacturing step.

- Cause Identification: Determine the circumstances leading to the incident and identify the fundamental reason(s) it occurred.

- Preventive Measures: Define and implement corrective and preventive actions (CAPA) to avoid recurrence.

Protocol 2: Conceptual Change Assessment in Educational Research

This protocol measures the effectiveness of educational interventions aimed at addressing misconceptions, relevant to curriculum modification research in PM/MDS [20] [4].

- Pre-Assessment: Administer a research-based concept inventory or diagnostic test to identify students' prior knowledge and potential misconceptions at the beginning of a course or intervention.

- Intervention Implementation: Implement targeted teaching strategies designed to promote conceptual change. The most effective single type is refutational text, which directly addresses and corrects common misconceptions [4]. Other approaches include case-based learning and simulation.

- Post-Assessment: Administer the same or equivalent concept inventory after the intervention.

- Data Analysis:

  - Quantitatively, compare pre- and post-test scores to calculate effect sizes. A meta-analysis in biology education showed conceptual change interventions can produce large effects compared to traditional teaching [4].

  - Qualitatively, analyze open-ended responses or case tasks to identify changes in understanding and persistence of specific misconceptions [20].
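As a rough illustration of the quantitative comparison, the sketch below computes two commonly used summaries for matched pre/post scores: the standardized mean gain (mean gain divided by the standard deviation of the gains) and the class-average normalized gain. The protocol itself does not prescribe a specific effect-size measure, so the choice of these two is an assumption, and the scores are invented.

```python
from statistics import mean, stdev


def standardized_mean_gain(pre, post):
    """Mean of the paired gains divided by the sample SD of those gains."""
    gains = [after - before for before, after in zip(pre, post)]
    return mean(gains) / stdev(gains)


def normalized_gain(pre, post, max_score):
    """Fraction of the available improvement achieved: (post - pre) / (max - pre), using class means."""
    pre_mean, post_mean = mean(pre), mean(post)
    return (post_mean - pre_mean) / (max_score - pre_mean)


# Hypothetical concept-inventory scores (out of 20) for five matched learners.
pre_scores = [8, 10, 12, 9, 11]
post_scores = [14, 15, 16, 12, 18]

effect = standardized_mean_gain(pre_scores, post_scores)
gain = normalized_gain(pre_scores, post_scores, max_score=20)
print(f"standardized mean gain = {effect:.2f}, normalized gain = {gain:.2f}")
```

With real data, the same concept inventory (or an equated form) should underlie both score lists, and a comparison group is needed before attributing the gain to the intervention.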

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Analytical Techniques for Troubleshooting Manufacturing Issues

| Tool/Technique | Primary Function | Application Example |

|---|---|---|

| SEM-EDX (Scanning Electron Microscopy with Energy Dispersive X-ray Spectroscopy) | Chemical identification of inorganic compounds; analysis of surface topography and particle size [18]. | Identifying metallic contaminations, such as equipment abrasion or rust particles [18]. |

| Raman Spectroscopy | Non-destructive identification of organic compounds by comparing spectral data to reference databases [18]. | Analysis of organic particulate matter, such as polymers from seals or single-use equipment [18]. |

| LC-HRMS (Liquid Chromatography-High Resolution Mass Spectrometry) | Separation and high-precision identification of individual components in a mixture; structure elucidation [18]. | Characterizing unknown impurities or degradation products in a drug product or substance [18]. |

| NMR (Nuclear Magnetic Resonance) | Detailed molecular structure determination and confirmation [18]. | Final confirmation of the chemical structure of an isolated contaminant or impurity [18]. |

| Refutational Text | A specific instructional material designed to directly address, challenge, and correct common misconceptions [4]. | Used in educational interventions within a modified PM/MDS curriculum to facilitate robust conceptual change among students [4]. |

Workflow and Conceptual Diagrams

Diagram 1: Conceptual change education model.

Diagram 2: Root cause analysis workflow.

Blueprint for Change: Methodologies for Designing and Implementing Modern Curricula

Curriculum revision is a complex, multi-dimensional process that demands not only content updates but also strategic management to achieve effective and lasting transformation. In higher education and research-focused environments, these changes often face substantial institutional and administrative challenges, including faculty resistance, bureaucratic hurdles, and lack of stakeholder engagement [21]. Kotter's 8-Step Change Management Model provides a structured, leadership-oriented framework that has been successfully applied to curriculum reform initiatives across diverse educational settings [22] [21] [23]. This guide outlines how researchers and educational professionals can systematically apply Kotter's principles to navigate the complexities of curricular revision within conceptual change research contexts.

Kotter's 8-Step Process: Detailed Experimental Protocol

Step 1: Create a Sense of Urgency

Objective: Inspire stakeholders to act with passion and purpose by recognizing the critical need for immediate curricular change [24].

Methodology:

- Conduct a comprehensive gap analysis comparing current curriculum outcomes with evolving educational demands and workforce needs [22] [21]

- Gather and present data-driven evidence of performance deficiencies through stakeholder surveys, employer feedback, and benchmarking against global educational standards [22] [21]

- Host town hall meetings and forums with key invested partners to discuss relevant issues and results from initial needs assessments [22]

- Communicate the risks of maintaining the status quo, including potential declines in student outcomes, employability, and institutional accreditation [21]
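One simple way to structure the gap analysis described above is to compare the competency level each domain requires with the level the current curriculum delivers. The sketch below is only an illustration: the 0 to 3 rating scale, the function name, and the ratings are assumptions, and the domain names are borrowed from the specialist domains mentioned earlier in this article.

```python
def gap_analysis(required, current):
    """Return domains where the current level falls short, largest shortfall first."""
    gaps = {
        domain: level - current.get(domain, 0)
        for domain, level in required.items()
        if current.get(domain, 0) < level
    }
    # Sort by shortfall (descending), then alphabetically for a stable report.
    return dict(sorted(gaps.items(), key=lambda item: (-item[1], item[0])))


# Hypothetical ratings on a 0-3 scale (0 = not covered, 3 = workplace-ready).
required_levels = {
    "Medicines Regulation": 3,
    "Clinical Pharmacology": 2,
    "Clinical Development": 3,
    "Drug Safety & Surveillance": 2,
}
current_levels = {
    "Medicines Regulation": 1,
    "Clinical Pharmacology": 2,
    "Clinical Development": 2,
}

gaps = gap_analysis(required_levels, current_levels)
for domain, shortfall in gaps.items():
    print(f"{domain}: short by {shortfall} level(s)")
```

A ranked list like this can be presented alongside survey and employer-feedback data when making the case for change.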

Technical Support FAQ:

- Q: How can I demonstrate urgency when the need for change isn't universally recognized?

Step 2: Build a Guiding Coalition

Objective: Assemble a powerful, influential team of diverse stakeholders to lead and guide the change initiative [24] [25].

Methodology:

- Identify and recruit influential change leaders across departments and hierarchy levels, including faculty champions, administrators, and external partners [22] [26]

- Form representative oversight and curriculum committees with representation from all relevant academic units [22]

- Establish regular communication structures to inform and update all stakeholders throughout the change process [22] [23]

- Ensure emotional and strategic commitment among coalition members through honest dialogue and shared responsibility [26] [27]

Technical Support FAQ:

- Q: What if key department leaders resist joining the coalition?

Step 3: Form a Strategic Vision and Initiatives

Objective: Clarify how the future will be different from the past and develop strategic initiatives to achieve this vision [24].

Methodology:

- Collaborate with guiding coalition to create a clear, compelling vision statement that articulates the desired future state of the curriculum [22]

- Develop specific, measurable goals aligned with the vision, such as enhancing specific competencies or increasing graduate employability [22] [21]

- Create a strategic roadmap highlighting key milestones and implementation timelines [26]

- Ensure the vision is aspirational yet actionable, tied directly to measurable educational outcomes [26]

Technical Support FAQ:

- Q: How specific should our strategic vision be?

Step 4: Enlist a Volunteer Army

Objective: Rally massive numbers of people around the common opportunity and motivate them to contribute actively [24].

Methodology:

- Recruit subject matter experts (SMEs) and content developers to create and review new curriculum materials [22]

- Engage diverse reviewers including students, industry representatives, and special population representatives (e.g., Indigenous groups, Francophones) to ensure content accuracy and relevance [22]

- Communicate the vision consistently across multiple channels and embed it into daily operations and decision-making processes [26] [28]

- Empower volunteers by involving them in decision-making and problem-solving activities [28]

Step 5: Enable Action by Removing Barriers

Objective: Eliminate obstacles that impede progress and empower stakeholders to execute the vision [24].

Methodology:

- Conduct regular audits of institutional processes, tools, and structures to identify alignment issues with the new vision [26] [28]

- Provide necessary support resources including instructional designers, educational developers, and technical staff to transform content into deliverable formats [22]

- Implement a yearly quality improvement process to continuously identify and address emerging barriers [22]

- Restructure systems, policies, and compensation models that conflict with the desired changes [26]

- Address resistance through one-on-one conversations, additional training, or reallocation of resources [26] [28]

Technical Support FAQ:

- Q: What are the most common barriers in curriculum revision, and how can I address them?

- A: Common barriers include:

  - Outdated processes: Audit and revise teaching allocation, assessment methods, and approval workflows [26] [29].

  - Resistant faculty: Engage them in the process, provide support, and highlight benefits [26] [21].

  - Insufficient resources: Allocate budget for course releases, summer work, and technical support [22] [29].

  - Lack of technical skills: Provide training and instructional design support [22] [30].


Step 6: Generate Short-Term Wins

Objective: Create, recognize, and celebrate visible, unambiguous successes to build momentum and energize participants [24].

Methodology:

- Plan for and achieve low-risk, high-reward projects that showcase tangible progress [26]

- Publicly recognize and reward individuals and teams who contribute significantly to early victories [26] [28]

- Share results of pilot evaluations and positive feedback with all committees and institutional leadership [22]

- Communicate wins frequently through multiple channels, turning successes into case studies that fuel continued excitement [26]

Step 7: Sustain Acceleration

Objective: Maintain momentum by leveraging credibility from early wins to tackle larger challenges and embed changes deeper [24].

Methodology:

- Analyze each success to identify best practices and areas for improvement in subsequent phases [26] [27]

- Bring in new change agents and fresh perspectives to reinvigorate the guiding coalition [26]

- Consult with licensing and accreditation bodies to ensure changes are reflected in required examinations [22]

- Continue initiating changes until the vision becomes a reality, resisting declarations of victory too early [24] [26]

Step 8: Institute Change

Objective: Articulate connections between new behaviors and organizational success until they become strong enough to replace old habits [24].

Methodology:

- Integrate new curriculum into official university requirements and transcripts [22] [23]

- Develop and implement faculty development tools to support instructors in delivering the new curriculum effectively [22]

- Modify hiring processes, training programs, and performance metrics to reinforce the new approaches [26]

- Include change principles in new employee onboarding and ongoing professional development [26]

- Embed outcomes-based evaluation processes to continuously assess whether the curriculum meets its intended outcomes [22]

Table 1: Kotter's 8-Step Implementation Metrics for Curriculum Change

| Step | Key Performance Indicators | Data Collection Methods | Reported Success Factors |

|---|---|---|---|

| Create Urgency | Stakeholder perception surveys; gap analysis completion; town hall participation rates | Pre-implementation surveys; curriculum mapping; attendance records | 75% leadership buy-in needed [26]; data on performance gaps [21] |

| Build Coalition | Coalition diversity index; department representation; meeting participation consistency | Stakeholder analysis; attendance tracking; engagement metrics | Cross-functional teams [28]; inclusion of influencers [25] |

| Form Vision | Vision clarity surveys; goal alignment metrics; strategy document completion | Post-communication surveys; document analysis; leadership interviews | Simple, memorable vision [26]; tied to measurable outcomes [26] |

| Enlist Volunteers | Volunteer recruitment numbers; SME participation rates; communication reach metrics | Registration records; feedback mechanisms; communication analytics | Large-scale mobilization [24]; diverse reviewer inclusion [22] |

| Enable Action | Barriers identified/resolved; training completion rates; resource allocation efficiency | Barrier logs; training records; budget tracking | Systematic barrier removal [26]; ongoing quality improvement [22] |

| Generate Wins | Short-term goal achievement; pilot evaluation results; recognition frequency | Project milestones; assessment data; recognition logs | Early, visible successes [26]; celebration of contributors [28] |

| Sustain Acceleration | Momentum maintenance index; change initiative scalability; continued leadership engagement | Progress reviews; initiative expansion tracking; leadership surveys | Continuous improvement culture [26]; fresh perspectives [26] |

| Institute Change | Policy integration level; cultural adoption metrics; long-term sustainability measures | Policy documentation; cultural assessments; longitudinal studies | Integration into systems and culture [26]; reinforcement through HR practices [26] |

Visualization: Kotter's Change Process Workflow

Kotter's 8-Step Change Process Workflow

Research Reagent Solutions: Change Management Toolkit

| Tool Category | Specific Tools & Methods | Primary Function | Implementation Context |

|---|---|---|---|

| Urgency Creation Tools | Gap analysis templates; stakeholder survey instruments; competitive benchmarking data | Demonstrate compelling need for change through data-driven assessment | Initial phase; ongoing reinforcement [22] [21] |

| Coalition Building Resources | Stakeholder analysis matrix; cross-functional team charters; communication platform access | Identify and engage influential champions across organizational silos | Early implementation; periodic refresh [22] [26] |

| Vision Crafting Frameworks | Strategic planning templates; vision statement guidelines; outcome mapping software | Articulate clear, compelling future state and implementation roadmap | Early implementation; revision cycles [26] [28] |

| Communication Systems | Multi-channel communication plans; feedback collection mechanisms; progress dashboard tools | Ensure consistent, transparent message delivery and feedback collection | Ongoing throughout process [26] [28] |

| Barrier Removal Mechanisms | Process audit checklists; resistance management protocols; resource reallocation procedures | Identify and eliminate structural, cultural, and resource obstacles | Middle implementation phases [22] [26] |

| Success Metrics Framework | Short-term win identification; recognition and reward systems; progress celebration events | Build momentum through visible, celebrated achievements | Middle to late implementation [26] [27] |

| Sustainability Instruments | Policy revision templates; integration checklists; long-term evaluation plans | Embed changes into institutional culture and operations | Late implementation and beyond [22] [26] |

Troubleshooting Common Implementation Challenges

Technical Support FAQ:

- Q: The change process is losing momentum after initial implementation. How can I regain traction?

- Q: How can I ensure the changes become permanent and not just another temporary initiative?

- Q: What if our institutional culture is particularly resistant to change?

- Q: How do we adapt Kotter's business model for an academic environment with shared governance?

- A: Balance the top-down leadership emphasis with academic collaboration by ensuring broad representation in your guiding coalition, respecting faculty governance processes, and emphasizing voluntary participation alongside administrative support [23].

This technical support center is designed to facilitate conceptual change for researchers, scientists, and drug development professionals. It is built on Merrill's Principles of Instruction, an evidence-based framework that promotes effective, problem-centered learning [31] [32]. The content moves beyond simple information delivery: it is crafted to help you actively confront and restructure misconceptions, thereby building more accurate mental models of complex scientific processes [33]. The guides and FAQs are framed as real-world problem-solving scenarios that mirror the challenges you face in the laboratory, so that learning is not just theoretical but directly applicable to your experimental work.

Theoretical Foundations: Merrill's Principles and Conceptual Change

Core Principles for Effective Learning

Merrill's Principles of Instruction provide a robust framework for creating learning experiences that are engaging and effective. The following table summarizes the five core principles [31] [32] [34]:

| Principle | Core Concept | Application in Research Support |

|---|---|---|

| Problem-Centered | Learning starts with authentic, real-world tasks [31] [34]. | Guides are built around specific, common experimental challenges. |

| Activation | Learning is promoted when existing knowledge is activated as a foundation [31] [32]. | FAQs prompt recall of fundamental concepts before introducing new solutions. |

| Demonstration | Learning is promoted when instruction demonstrates what is to be learned [31] [32]. | Protocols show expert performance of techniques and expected outcomes. |

| Application | Learning is promoted when learners apply new knowledge [31] [32]. | Guides require active problem-solving and decision-making. |

| Integration | Learning is promoted when new knowledge is integrated into the learner's world [31] [34]. | Solutions encourage discussing findings and applying them to novel contexts. |

Overcoming Misconceptions in Science

Conceptual change is the process of reorganizing one's existing knowledge structures, which sometimes requires abandoning deeply held misconceptions [33]. In professional development, these misconceptions can be categorized to better address them:

- False Beliefs: These are single, incorrect ideas that can be stated in a single proposition (e.g., "All cell culture media components are interchangeable") [33]. These are relatively easy to rectify through belief revision.

- Flawed Mental Models: These are coherent but incorrect analog representations of a system or process that consist of multiple interrelated propositions (e.g., a single-loop model of a complex metabolic pathway instead of the correct multi-branched model) [33]. Rectifying these requires a more profound mental model transformation.

The troubleshooting guides below are designed to trigger and support this often-challenging process of conceptual change.

Troubleshooting Guide: Liver-Chip Toxicity Assays

This guide follows a problem-centered approach, helping you diagnose and resolve common issues with Micro-Physiological Systems (MPS), specifically Liver-Chips, which are critical for predictive toxicology in drug development [35].

Issue 1: Inconsistent Cytotoxicity Readouts Between Liver-Chip Donors

Problem Statement: When testing the same drug compound across Liver-Chips seeded with cells from different human donors, the cytotoxicity readouts (e.g., ALT release, Caspase 3/7 activity) are highly inconsistent, making the results difficult to interpret [35].

Symptoms & Error Indicators:

- Statistically significant variation in ALT release between donor chips for the same drug dose.

- Variable levels of Caspase 3/7 activation.

- One donor chip may indicate toxicity while another does not.

Possible Causes:

- Underlying genetic or metabolic differences between the human donors.

- Inconsistencies in hepatocyte seeding or functional maturation between chips (or in differentiation, where stem-cell-derived hepatocytes are used instead of primary cells).

- Variations in the baseline metabolic function (e.g., albumin production) of the hepatocytes from different donors.

Step-by-Step Resolution Process:

- Validate Baseline Function: Before drug exposure, confirm that all chips meet pre-defined functional criteria. Check that albumin and urea production rates are within an acceptable, comparable range for all donors [35].

- Benchmark with Control Drugs: Run a set of standard compounds with known toxic or non-toxic profiles on all donor chips. This establishes each chip's response baseline and confirms the model's performance against the IQ MPS consortium guidelines [35].

- Normalize Data: Instead of relying solely on absolute values, normalize the cytotoxicity readouts (like ALT release) to the baseline metabolic activity of each specific chip (e.g., albumin production rate).

- Adjust for Drug-Protein Binding: Account for relevant biological interactions. Re-analyze the drug's free (unbound) concentration in the culture medium, as this can significantly impact toxicity predictions and reduce inter-donor variability [35].
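The normalization step above can be sketched in a few lines of Python. This is a minimal illustration, not the method used in [35]: the readout values, the units, and the choice of a simple ALT-to-albumin ratio are all assumptions made for the example.

```python
# Hypothetical per-chip readouts for one drug dose. Values and units are
# illustrative only; substitute whatever your assays report.
chips = [
    {"donor": "A", "alt": 40.0, "albumin": 20.0},
    {"donor": "B", "alt": 90.0, "albumin": 45.0},
    {"donor": "C", "alt": 30.0, "albumin": 10.0},
]

def normalize_alt(chip):
    """Return ALT release per unit of baseline albumin production.

    Assumption: a simple ratio is an acceptable normalization. Other
    schemes (e.g., fold change over vehicle control) may suit your data better.
    """
    if chip["albumin"] <= 0:
        raise ValueError(f"Donor {chip['donor']}: albumin must be positive")
    return chip["alt"] / chip["albumin"]

# Map each donor to its normalized cytotoxicity readout.
normalized = {chip["donor"]: normalize_alt(chip) for chip in chips}

for donor, value in normalized.items():
    print(f"Donor {donor}: normalized ALT = {value:.2f}")
```

In this made-up data set, donors A and B differ more than twofold in absolute ALT release but give the same normalized value, which is the kind of apparent inter-donor variability that normalization is meant to remove.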

Escalation Path: If high inter-donor variability persists after normalization and protocol refinement, escalate to the MPS core facility or the Liver-Chip manufacturer to investigate potential technical issues with the chip platform itself.

Validation Step: Confirm that after implementing the above steps, control drugs consistently produce the expected results (toxic vs. non-toxic) across chips from different donors, with variability falling within pre-specified statistical limits.
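One way to make "pre-specified statistical limits" concrete is a coefficient-of-variation (CV) check on a control drug's normalized readout across donors. The sketch below assumes CV is the chosen variability metric and that a limit such as 20% was fixed before the experiment; both are illustrative choices, not values taken from [35].

```python
from statistics import mean, stdev

def coefficient_of_variation(values):
    """Sample standard deviation divided by the mean."""
    m = mean(values)
    if m == 0:
        raise ValueError("Mean is zero; CV is undefined")
    return stdev(values) / m

def within_limit(values, max_cv):
    """True if inter-donor variability is at or below the pre-specified CV."""
    return coefficient_of_variation(values) <= max_cv

# Hypothetical normalized readouts for one control drug, one value per donor.
control_response = [2.0, 2.2, 1.8]
MAX_CV = 0.20  # assumed acceptance limit, defined before the experiment

cv = coefficient_of_variation(control_response)
print(f"CV = {cv:.1%}, acceptable = {within_limit(control_response, MAX_CV)}")
```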

Issue 2: Poor Prediction of Human Drug-Induced Liver Injury (DILI)

Problem Statement: The Liver-Chip model fails to correctly identify drugs that are known to cause liver injury in humans, or it falsely labels safe drugs as toxic, leading to inaccurate predictions [35].

Symptoms & Error Indicators:

- A drug known to be hepatotoxic in humans shows no toxicity in the chip.

- A drug with a clean clinical safety record causes cytotoxicity in the chip model.

- The model's sensitivity and specificity for predicting human DILI are low.

Possible Causes:

- The model lacks critical non-parenchymal cell types (like Kupffer cells) that contribute to drug toxicity in vivo.

- The media flow rates do not accurately mimic human physiological shear stress.

- The endpoints being measured (e.g., only ALT) are insufficient to capture the full spectrum of DILI.

- The duration of drug exposure is too short to manifest certain types of toxicity.

Step-by-Step Resolution Process:

- Verify Model Physiology: Use advanced microscopy (confocal, electron) to confirm the development of three-dimensional tissue structures and proper cell-to-cell interactions that are physiologically relevant [35].

- Incorporate Additional Endpoints: Move beyond standard markers. Include multiplexed assays for oxidative stress, mitochondrial membrane potential, and biomarkers of specific DILI mechanisms (e.g., bile acid accumulation).

- Extend Exposure Duration: For drugs suspected of causing idiosyncratic toxicity, consider longer-term exposure studies (e.g., 14 days) in the chip to capture delayed responses.

- Use the Economic Model for Context: Weigh the cost of additional testing against the cost of a missed hepatotoxin. A Liver-Chip with 87% sensitivity and 100% specificity can prevent costly late-stage drug failures, saving billions in R&D [35]. Use this to justify broader endpoint panels and longer exposure studies.

Escalation Path: For persistent poor prediction, consider adopting a more complex multi-organ-chip that includes liver, intestine, and kidney tissues to model systemic drug metabolism and toxicity.

Validation Step: The chip should correctly identify at least 87% of known hepatotoxic drugs and should not falsely label any known safe drugs as toxic, as demonstrated in foundational validation studies [35].

Frequently Asked Questions (FAQs)

Q1: Our team is new to MPS. How can we quickly build confidence in using Liver-Chip data for critical decisions like lead optimization?

A1: Begin with a structured verification process [35]:

- Internal Benchmarking: Start by replicating the study by Ewart et al. using the same panel of drugs with known DILI outcomes. This activates your team's prior knowledge of these compounds while demonstrating the chip's capabilities.

- Blinded Testing: Progress to a blinded set of 5-10 internal compounds. This provides a safe environment for application and builds confidence in the model's predictive power.

- Economic Analysis: Frame your results within the economic value model. Demonstrating how even a small increase in predictive accuracy can save millions of dollars in downstream costs helps integrate this new technology into the company's decision-making fabric.

Q2: We are experiencing a high rate of technical failures with our Liver-Chips, such as bubble formation or contamination. Where should we focus our efforts?

A2: This is a common hurdle at the activation stage, when a team is still building its working knowledge of the platform. Focus on standardization and training:

- Demonstration: Ensure every team member has observed an expert-led, step-by-step demonstration of the entire chip priming, seeding, and dosing protocol, and has then performed it under supervision.

- Troubleshooting Guide: Create an internal, visual troubleshooting guide (a simple decision tree) specifically for these technical issues. This guide should list symptoms (e.g., "bubble in main channel"), possible causes (e.g., "temperature fluctuation," "priming rate too high"), and corrective actions.

- Application and Integration: Establish a shared lab log where team members document every failure and its resolution. This collective knowledge base turns individual problems into organizational learning, fostering a conceptual shift towards a culture of systematic problem-solving.
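The internal troubleshooting guide described above can start life as a simple lookup table before it is drawn as a decision tree. The sketch below is a hypothetical structure: the symptom and causes are the examples given above, while the corrective actions are illustrative placeholders that should be replaced with your site's validated procedures.

```python
# Hypothetical troubleshooting table: symptom -> list of (cause, action).
# Causes are taken from the examples in the text; actions are placeholders.
TROUBLESHOOTING = {
    "bubble in main channel": [
        ("temperature fluctuation",
         "equilibrate medium and chips to incubator temperature before priming"),
        ("priming rate too high",
         "reduce the priming flow rate and re-prime"),
    ],
}

def lookup(symptom):
    """Return the (cause, action) pairs recorded for a symptom, or []."""
    return TROUBLESHOOTING.get(symptom.strip().lower(), [])

def format_guide(symptom):
    """Render one symptom's entry as plain text for the lab log."""
    entries = lookup(symptom)
    if not entries:
        return f"No entry for '{symptom}'; log it in the shared lab record."
    lines = [f"Symptom: {symptom}"]
    for cause, action in entries:
        lines.append(f"  Possible cause: {cause} -> Action: {action}")
    return "\n".join(lines)

print(format_guide("bubble in main channel"))
```

Keeping the table as data rather than prose makes it easy to extend from the shared lab log: each newly documented failure becomes one more entry.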

Q3: How does this problem-centered approach to training differ from simply reading a protocol or manual?

A3: The difference lies in promoting conceptual change versus information transfer. A protocol provides a series of steps (information). A problem-centered guide, built on Merrill's principles, forces you to:

- Activate your existing mental model of the system.

- Observe demonstrations of correct outcomes and procedures.

- Apply your knowledge to diagnose issues and make decisions, often revealing flaws in your mental model (e.g., a misunderstanding of how flow rates affect cell function).

- Integrate the corrected concept into your understanding, leading to a deeper, more robust knowledge that can be applied to novel situations, ultimately transforming your expertise [33].

Research Reagent Solutions & Essential Materials

The following table details key materials used in advanced Liver-Chip platforms for predictive toxicology, as referenced in foundational studies [35].

| Research Reagent / Material | Function in the Experiment |

|---|---|

| Primary Human Hepatocytes | The core functional cell type responsible for drug metabolism and the primary target for toxicity studies. Sourced from multiple donors to assess variability [35]. |

| Emulate Liver-Chip | A specific Micro-Physiological System (MPS) that provides a 3D microenvironment with fluid flow, mimicking the structure and function of the human liver lobe [35]. |

| Characterized Drug Panel | A set of drugs with well-established clinical DILI outcomes (both toxic and safe) used to benchmark, validate, and demonstrate the predictive performance of the chip model [35]. |

| Cell Culture Medium with Defined Protein Concentration | Supports cell viability and function. The specific protein concentration is critical for accurate drug-protein binding calculations, which can significantly refine toxicity predictions [35]. |

| ALT (Alanine Aminotransferase) Assay Kit | A standard clinical marker for liver damage. The release of ALT from damaged hepatocytes into the culture medium is a key quantitative endpoint for measuring drug-induced cytotoxicity [35]. |

| Albumin & Urea Assay Kits | Functional markers used to confirm the health and metabolic competency of the hepatocytes before and during drug exposure. Serves as a quality control check [35]. |

| Caspase 3/7 Apoptosis Assay | A marker for programmed cell death. Used to differentiate the mechanism of toxicity (apoptosis vs. necrosis) and provide a more nuanced understanding of the drug's cytotoxic effect [35]. |

Experimental Protocol: Validating a Human Liver-Chip for Predictive Toxicology

This detailed protocol is based on the methodology that demonstrated an 87% sensitivity and 100% specificity in predicting human DILI [35].

Objective: To systematically assess the performance of a human Liver-Chip model in predicting drug-induced liver injury (DILI) and to integrate it into the lead optimization workflow.

Background: This protocol is designed to trigger conceptual change by directly comparing the chip's predictions with known human outcomes, challenging potential misconceptions held from reliance on traditional animal models or simple cell culture.

Materials:

- Refer to the "Research Reagent Solutions" table above.

- A panel of at least 18 drugs (15 known hepatotoxins, 3 known safe drugs), blinded.

- Liver-Chip system with associated perfusion controllers and imaging setup.

Procedure:

Chip Seeding and Functional Maturation:

- Seed primary human hepatocytes from at least three different donors into the Liver-Chips following the manufacturer's protocol.

- Maintain the chips under physiological flow conditions for 7-14 days to allow for formation of stable, 3D tissue structures and functional maturation.

- Quality Control (Activation): Monitor albumin and urea production in the effluent medium to ensure the cells have reached a stable, functional state before proceeding. This activates the researcher's knowledge of hepatocyte function.

Pre-Experimental Quality Control (Demonstration):

- Randomly select chips for structural analysis using confocal and electron microscopy to demonstrate the development of physiologically relevant architectures (e.g., bile canaliculi).

- Confirm that baseline levels of ALT release are low, indicating healthy cells.

Drug Dosing and Exposure (Problem-Centered Application):

- After the maturation phase, expose the chips to the panel of blinded drugs. Run each drug in replicate chips per donor.

- Use multiple, clinically relevant concentrations of each drug (e.g., Cmax and 10x Cmax).

- Include vehicle controls and known toxic/non-toxic control drugs in each experimental run.

- Critical Consideration: Record the free (unbound) drug concentration in the medium, as adjusting for drug-protein binding can increase the model's sensitivity from 80% to 87% [35].
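The dosing levels and the free-concentration adjustment can be sketched as follows. This assumes the simplest binding model, in which the free concentration equals the total concentration multiplied by a fraction unbound measured in the culture medium. The function names, the Cmax value, and the fraction unbound are hypothetical and chosen only for illustration.

```python
def dosing_levels(cmax, multiples=(1, 10)):
    """Return nominal dosing concentrations as multiples of Cmax."""
    return [cmax * m for m in multiples]

def free_concentration(total_conc, fraction_unbound):
    """Free (unbound) concentration under a simple linear binding model.

    Assumption: fraction_unbound is constant over the concentration range,
    which may not hold when binding proteins approach saturation.
    """
    if not 0.0 <= fraction_unbound <= 1.0:
        raise ValueError("fraction_unbound must be between 0 and 1")
    return total_conc * fraction_unbound

cmax_um = 5.0      # hypothetical clinical Cmax, in micromolar
fu_medium = 0.2    # hypothetical fraction unbound in the chip medium

levels = dosing_levels(cmax_um)
free_levels = [free_concentration(c, fu_medium) for c in levels]

for total, free in zip(levels, free_levels):
    print(f"Total {total:.1f} uM -> free {free:.1f} uM")
```

Recording both columns lets the later analysis compare toxicity thresholds against free rather than nominal exposure.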

Endpoint Analysis (Integration):

- Following 3-7 days of exposure, collect effluent medium for analysis.

- Quantify ALT release and Caspase 3/7 activity as primary markers of cytotoxicity.

- Assess changes in albumin production as a marker of metabolic function.

- Perform high-content imaging to evaluate changes in cellular morphology.

Data Analysis and Model Validation:

- Unblind the drug panel.

- For each drug, classify the outcome as "Predicted Toxic" or "Predicted Safe" based on pre-defined thresholds for the cytotoxicity markers.

- Compare the chip's predictions to the known human DILI outcomes for the drug panel.

- Calculate Performance Metrics: Sensitivity = True Positives / All Human Hepatotoxins (target: ≥87%); Specificity = True Negatives / All Human Safe Drugs (target: 100%).

- Compare these results to historical data from animal models and 3D spheroids to demonstrate the chip's superior predictive power, fostering a conceptual shift towards human-relevant models [35].
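The classification and metric calculations in this step can be sketched as below. The drug labels, fold-change values, and the single ALT threshold are hypothetical; a real analysis would apply the pre-defined thresholds for every cytotoxicity marker in the protocol.

```python
def classify(alt_fold_change, threshold):
    """Label a drug from one marker; real protocols may combine several."""
    return "Predicted Toxic" if alt_fold_change >= threshold else "Predicted Safe"

def sensitivity_specificity(predictions, truth):
    """Compute (sensitivity, specificity).

    predictions: drug -> "Predicted Toxic" or "Predicted Safe"
    truth:       drug -> True if a known human hepatotoxin, else False
    """
    n_toxic = sum(1 for is_toxic in truth.values() if is_toxic)
    n_safe = len(truth) - n_toxic
    if n_toxic == 0 or n_safe == 0:
        raise ValueError("Panel needs at least one toxic and one safe drug")
    tp = sum(1 for d, t in truth.items() if t and predictions[d] == "Predicted Toxic")
    tn = sum(1 for d, t in truth.items() if not t and predictions[d] == "Predicted Safe")
    return tp / n_toxic, tn / n_safe

# Hypothetical unblinded panel: ALT fold change over vehicle, and known outcome.
alt_fold = {"drug_1": 3.0, "drug_2": 2.5, "drug_3": 1.1, "drug_4": 1.0}
truth = {"drug_1": True, "drug_2": True, "drug_3": True, "drug_4": False}
THRESHOLD = 2.0  # assumed pre-defined cut-off

predictions = {d: classify(v, THRESHOLD) for d, v in alt_fold.items()}
sens, spec = sensitivity_specificity(predictions, truth)
print(f"Sensitivity = {sens:.0%}, Specificity = {spec:.0%}")
```

In this toy panel one hepatotoxin (drug_3) is missed, so sensitivity falls to two out of three while specificity stays at 100%, which illustrates how a single false negative moves the metric on a small panel.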

Technical Support & Troubleshooting Guides

FAQ: Addressing Common Interdisciplinary Research Challenges

Q1: How can we overcome communication barriers and terminology differences between team members from starkly different disciplines (e.g., artists and engineers)?

A: Effective interdisciplinary collaboration requires deliberate strategies to bridge communication gaps. Research indicates that a shared conceptual framework (CF) can act as a "boundary object," providing a common functional structure that integrates diverse disciplinary perspectives [36]. A proven methodology involves a three-phase, iterative process:

- Phase 1: Define Boundary Concepts. Collaboratively identify and define key terms that are central to the project but may have different interpretations across disciplines. This creates a shared vocabulary [36].

- Phase 2: Develop a CF as a Boundary Object. Co-create a visual framework that maps the relationships between these concepts. This framework should be adaptable to different disciplinary needs while maintaining a common core identity [36].