Troubleshooting Molecular Clock Model Selection: A Practical Guide for Biomedical Researchers

Abstract

Selecting an appropriate molecular clock model is a critical, yet often challenging, step in Bayesian phylogenetic analysis for studying pathogen evolution, drug resistance, and disease origins. This article provides a comprehensive, practical framework for researchers and drug development professionals to navigate this process. It covers the foundational principles of strict, relaxed, and random local clock models; outlines methodological approaches for implementation in modern software like BEAST X; details troubleshooting strategies for common pitfalls like lack of temporal signal; and explains rigorous validation and comparative techniques using marginal likelihoods and Bayesian evaluation of temporal signal (BETS). The guide synthesizes recent methodological advances to empower robust and reliable divergence time estimation in biomedical research.

Understanding the Molecular Clock: From Strict Assumptions to Relaxed Models

The molecular clock hypothesis, first postulated by Zuckerkandl and Pauling in the 1960s, proposed that evolutionary changes at the molecular level accumulate at a roughly constant rate over time [1] [2]. This foundational insight established that genetic distance—the number of evolutionary changes between sequences—could serve as a measure of evolutionary time, much like the steady radioactive decay of atoms allows geologists to date rock formations [3] [2].

Over subsequent decades, this simple concept has evolved into a sophisticated statistical framework. Modern molecular clock modeling no longer assumes a single, universal rate but incorporates complex patterns of rate variation across lineages using Bayesian inference methods [3] [1]. This progression from a simple hypothesis to computationally intensive Bayesian methods represents a fundamental shift in how evolutionary biologists convert genetic divergence into estimates of absolute time, enabling investigations into questions ranging from viral emergence to deep evolutionary history [1] [4].

The Evolution of Clock Models: From Strict to Relaxed Clocks

The initial "strict" molecular clock model assumed that every branch in a phylogenetic tree evolves according to the same substitution rate [5]. While mathematically straightforward, this assumption proved biologically unrealistic for most datasets, as evolutionary rates can vary considerably among species due to factors like generation time, metabolic rate, population size, and the efficacy of DNA repair [3].

A Hierarchy of Relaxed Clock Models

To address the limitations of the strict clock, researchers developed "relaxed" molecular clock models that accommodate rate variation among lineages [3] [5]. These models differ primarily in how they handle the relationship between evolutionary rates on adjacent branches in a phylogeny.

Table: Major Molecular Clock Model Types

| Model Type | Key Assumption | Rate Variation | Best Application Context |
|---|---|---|---|
| Strict Clock | Single evolutionary rate across all branches [5] | None | Closely related populations with similar biology; validation of clock-like behavior [6] |
| Uncorrelated Relaxed Clock | Each branch has an independent rate drawn from a shared distribution (e.g., lognormal, exponential) [5] | High; rates can change abruptly between branches | Datasets with substantial, unpredictable rate variation across lineages [5] [7] |
| Autocorrelated Relaxed Clock | Evolutionary rates change gradually; descendant branches have rates correlated with ancestors [3] | Moderate; neighboring branches have similar rates | Deep evolutionary timescales where rates evolve gradually [3] |
| Random Local Clock | Limited number of rate changes at specific points in the tree [5] | Flexible; more variation than a strict clock but less than a fully relaxed clock | Situations where specific clades are known to evolve at different rates [5] |
| Time-Dependent Rate | Evolutionary rate varies systematically with time scale [1] | Rate decay toward present | Pathogens with different short-term vs. long-term rates; ancient DNA [1] |

The development of these models represents a fundamental trade-off in statistical modeling: as models gain parameters to better fit biological reality (accuracy), they also increase statistical uncertainty and computational demands (complexity) [3]. The optimal model should therefore have enough parameters to adequately explain the data—but no more [3].
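To make the rate-variation assumptions of the strict, uncorrelated, and autocorrelated clocks concrete, the following sketch draws branch rates under each one. It is an illustration only: the function name `branch_rates`, the `parents` encoding of the tree, and the parameter values are assumptions, and the autocorrelated case is simplified (real implementations typically scale the variance by branch duration).

```python
import math
import random

def branch_rates(parents, model, mean_rate=1e-3, sigma=0.5, rng=None):
    """Draw one rate per branch under a simplified clock model.

    parents[i] is the index of the parent branch of branch i, or None for
    a branch attached to the root. Parents must precede their children.
    """
    rng = rng or random.Random(0)
    rates = []
    for parent in parents:
        if model == "strict":
            # One shared rate for every branch.
            rates.append(mean_rate)
        elif model == "uncorrelated":
            # Independent lognormal draw with expectation mean_rate.
            mu = math.log(mean_rate) - sigma ** 2 / 2
            rates.append(rng.lognormvariate(mu, sigma))
        elif model == "autocorrelated":
            # Lognormal draw centered on the parent branch's rate
            # (simplification: variance does not depend on branch duration).
            center = mean_rate if parent is None else rates[parent]
            mu = math.log(center) - sigma ** 2 / 2
            rates.append(rng.lognormvariate(mu, sigma))
        else:
            raise ValueError(f"unknown model: {model}")
    return rates

# Hypothetical six-branch tree: two root branches, each with two children.
parents = [None, None, 0, 0, 1, 1]
strict = branch_rates(parents, "strict")
uncorrelated = branch_rates(parents, "uncorrelated", rng=random.Random(1))
autocorrelated = branch_rates(parents, "autocorrelated", rng=random.Random(1))
```

Under the strict clock all six rates are identical, whereas the two relaxed variants differ in whether a child's rate depends on its parent's.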

Troubleshooting Molecular Clock Model Selection

Selecting an appropriate molecular clock model represents a critical step in phylogenetic dating analysis. The following troubleshooting guide addresses common challenges researchers encounter.

Frequently Asked Questions

Q: My root-to-tip regression in TempEst shows a low R² value. Does this mean my dataset lacks temporal signal and is unsuitable for molecular clock dating?

A: Not necessarily. A low R² value from root-to-tip regression does not definitively indicate a lack of temporal signal [6]. Root-to-tip regression assumes a strict molecular clock, and a poor fit may indicate substantial among-branch rate variation rather than complete absence of temporal signal [6]. In such cases, a relaxed clock model may be more appropriate. The recommended approach is to formally test for temporal signal using Bayesian Evaluation of Temporal Signal (BETS), which compares marginal likelihoods between models with and without tip dates [6].
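As a rough illustration of what a root-to-tip regression computes, the sketch below fits root-to-tip distance against sampling date by ordinary least squares. The function name and the data values are hypothetical, and this is not TempEst's own implementation; it only shows how the slope (rate), x-intercept (root date), and R² arise.

```python
from statistics import mean

def root_to_tip_regression(dates, distances):
    """Least-squares fit of root-to-tip distance on sampling date."""
    x_bar, y_bar = mean(dates), mean(distances)
    sxx = sum((x - x_bar) ** 2 for x in dates)
    syy = sum((y - y_bar) ** 2 for y in distances)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(dates, distances))
    slope = sxy / sxx                    # substitutions/site/year under a strict clock
    intercept = y_bar - slope * x_bar
    r_squared = sxy ** 2 / (sxx * syy)
    # The x-intercept is the date at which the fitted distance is zero,
    # i.e. a rough estimate of the root date. Only meaningful for slope > 0.
    root_date = -intercept / slope if slope > 0 else None
    return slope, root_date, r_squared

# Hypothetical sampling dates (years) and root-to-tip distances (subs/site).
dates = [2000.0, 2005.0, 2010.0, 2015.0, 2020.0]
distances = [0.010, 0.016, 0.019, 0.026, 0.030]
slope, root_date, r_squared = root_to_tip_regression(dates, distances)
```

A non-positive slope returns no root date, which mirrors the point above: the regression is an exploratory check under a strict-clock assumption, not a formal test.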

Q: How can I determine which molecular clock model is best for my dataset?

A: Model selection should be performed using statistical comparison of marginal likelihoods, typically through nested sampling or path sampling [6]. Create and compare four models: strict and relaxed clock models, each with and without tip dates [6]. This approach simultaneously tests temporal signal (dated vs. undated) and clock model fit (strict vs. relaxed). If BETS provides evidence for temporal signal, you can proceed with dating analyses even if root-to-tip regression shows poor fit [6].

Q: What is the impact of calibration strategy on molecular clock dating?

A: Calibration strategy significantly impacts divergence time estimates [4] [8]. Multiple calibrations generally produce more reliable estimates than single calibrations [4]. Deeper calibrations (closer to the root) are preferred over shallow calibrations, as they capture a larger proportion of the overall genetic variation and provide more accurate timescale estimates [4]. The use of well-justified calibration priors that account for fossil uncertainty is crucial, as arbitrary parameters in minimum-bound calibrations can strongly impact divergence time estimates [8].

Q: How can I assess whether my chosen molecular clock model is actually adequate for my data, rather than just being the best of the available options?

A: Use posterior predictive simulations to assess model adequacy [9]. This method involves: (1) conducting a Bayesian molecular clock analysis of your empirical data; (2) using parameters from the posterior distribution to simulate new datasets; (3) comparing branch length estimates between the empirical data and simulated data [9]. An inadequate model will show significant discrepancies between empirical and posterior predictive branch lengths, indicating model misspecification [9].

Experimental Protocol: Bayesian Evaluation of Temporal Signal (BETS)

Purpose: To formally test for the presence of sufficient temporal signal in dated molecular sequences for molecular clock dating [6].

Procedure:

- Model Specification: Set up four separate analyses in BEAST or similar Bayesian phylogenetic software:

- Strict clock model with tip dates

- Strict clock model without tip dates

- Uncorrelated relaxed clock model with tip dates

- Uncorrelated relaxed clock model without tip dates

Parameter Settings: For undated models, fix the substitution rate at 1.0 or at the order of magnitude assumed for your taxon based on literature values [6].

Marginal Likelihood Estimation: For each model, estimate the log marginal likelihood using nested sampling, path sampling, or generalized stepping-stone sampling [6].

Bayes Factor Calculation: Calculate log Bayes factors between dated and undated models for both strict and relaxed clocks. A positive Bayes factor favoring dated models indicates presence of temporal signal [6].

Interpretation: If Bayes factors strongly favor models with tip dates over corresponding models without tip dates, this indicates sufficient temporal signal exists in your data to proceed with molecular clock dating [6].
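The Bayes factor step of this protocol reduces to simple arithmetic on the four log marginal likelihoods. The sketch below assumes those values have already been estimated; the numbers and the function name `bets_log_bayes_factors` are hypothetical.

```python
def bets_log_bayes_factors(log_ml):
    """Log Bayes factor (dated minus undated) for each clock model.

    log_ml maps (clock_model, has_tip_dates) -> log marginal likelihood.
    """
    return {
        clock: log_ml[(clock, True)] - log_ml[(clock, False)]
        for clock in ("strict", "relaxed")
    }

# Hypothetical log marginal likelihoods for the four BETS analyses.
log_ml = {
    ("strict", True): -5230.4,
    ("strict", False): -5262.9,
    ("relaxed", True): -5221.7,
    ("relaxed", False): -5259.3,
}
log_bf = bets_log_bayes_factors(log_ml)

# The model with the highest log marginal likelihood is the best supported.
best_model = max(log_ml, key=log_ml.get)
```

In this made-up example both log Bayes factors are positive, so the dated models are favored, and the relaxed clock with tip dates has the highest marginal likelihood overall.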

Essential Research Reagents and Computational Tools

Table: Key Resources for Molecular Clock Analysis

| Resource Type | Specific Tools | Primary Function |
|---|---|---|
| Bayesian Dating Software | BEAST2 [5] [7], RevBayes [10], MCMCTree [8] | Bayesian phylogenetic inference with molecular clock models |
| Model Selection | Nested Sampling (BEAST2) [6], Path Sampling/Generalized Stepping-Stone Sampling [6] | Marginal likelihood estimation for model comparison |
| Temporal Signal Assessment | TempEst [6], BactDating [6] | Root-to-tip regression to explore temporal signal |
| Fossil Calibration | Fossil calibration priors implemented in BEAST2, MCMCTree, RevBayes [10] [8] | Incorporating fossil information to calibrate the molecular clock |
| Result Analysis & Visualization | Tracer [7], TreeAnnotator [7], FigTree [7] | Analyzing MCMC output, summarizing tree distributions, and visualizing time trees |

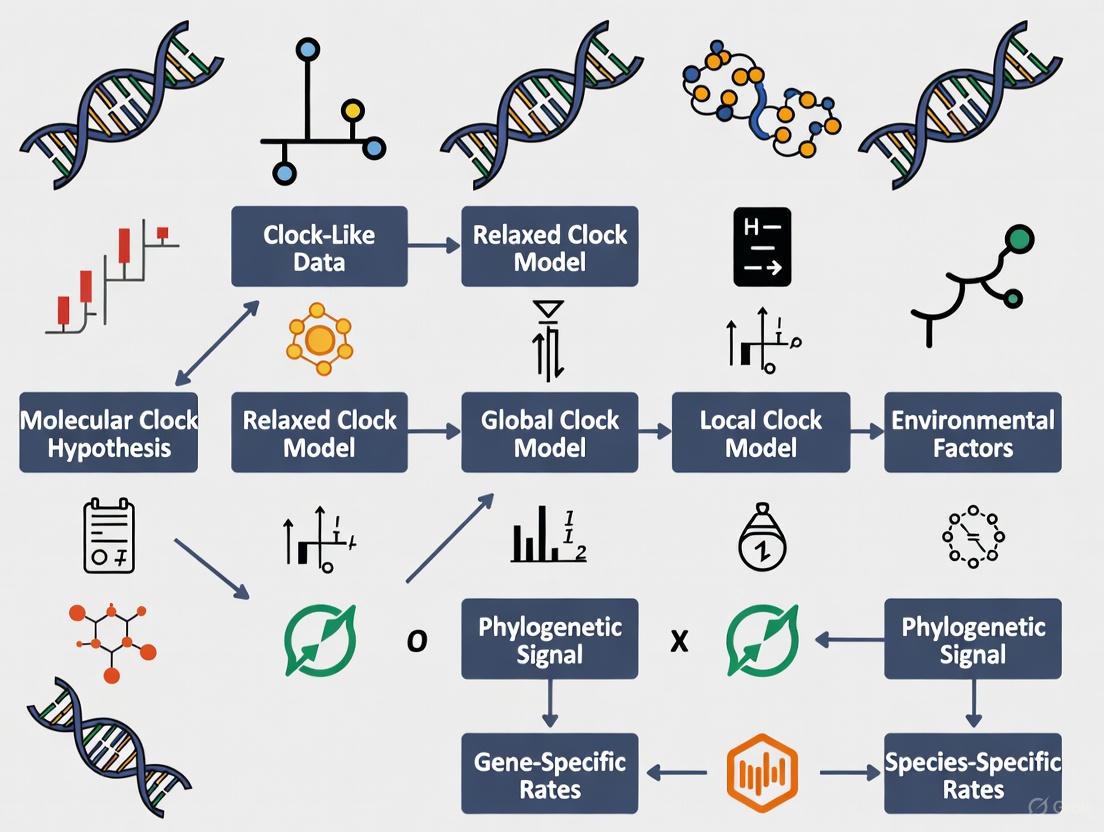

Molecular Clock Model Selection Workflow

The following diagram illustrates the logical workflow for molecular clock model selection, from initial data assessment to final model validation:

The strict molecular clock model is a foundational concept in molecular evolution, providing a framework for estimating evolutionary timescales from genetic data. This model assumes that genetic mutations accumulate at a constant rate across all lineages in a phylogenetic tree, serving as a "clock" that measures evolutionary time. While its simplicity offers computational advantages, the model's core assumption also defines its specific applications and limitations. This technical support center article guides researchers through the troubleshooting and appropriate implementation of the strict clock model within their phylogenetic analyses.

Core Concepts and Theoretical Foundation

Key Assumptions of the Strict Clock Model

The strict clock model operates on several fundamental assumptions:

- Rate Constancy: The model assumes that the evolutionary rate (the number of substitutions per site per time unit) is identical across all branches of the phylogenetic tree [5] [11]. This is its defining characteristic.

- Poisson Process: It often assumes that molecular evolution follows a Poisson process, where the mean and variance of the number of substitutions are equal [12].

- Neutral Theory Foundation: The concept is theoretically underpinned by the neutral theory of evolution, which posits that most evolutionary changes at the molecular level are due to the fixation of neutral mutations [11].

Mathematical and Statistical Formulation

In practice, the strict clock is a one-parameter model [5]. This single parameter represents the fixed evolutionary rate, which converts branch lengths (measured in expected substitutions per site) into evolutionary time. In Bayesian software like BEAST, this parameter is typically equipped with a proper CTMC reference prior to facilitate estimation [5]. The fundamental calculation for a strict clock often relies on the formula \(d = 2rt\), where \(d\) is the genetic distance between two sequences, \(r\) is the fixed rate, and \(t\) is the time since divergence [11]. The factor of 2 reflects that both lineages accumulate substitutions independently after they split.
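As a worked example of the strict clock relationship between distance, rate, and time, the sketch below solves it for the divergence time. The function name and input values are hypothetical, and the result is only as good as the assumption of a single constant rate.

```python
def divergence_time(distance, rate):
    """Time since divergence under a strict clock, from d = 2 * r * t."""
    if rate <= 0:
        raise ValueError("rate must be positive")
    return distance / (2.0 * rate)

# Hypothetical pairwise distance of 0.04 substitutions/site and a rate of
# 1e-3 substitutions/site/year give a divergence time of about 20 years.
t = divergence_time(0.04, 1e-3)
```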

Troubleshooting Guide: Common Issues and Solutions

Researchers often encounter specific challenges when applying the strict clock model. The following table addresses frequent issues and provides recommended actions.

| Common Issue | Symptoms | Underlying Cause | Solution |
|---|---|---|---|
| Clock Violation | Poor model fit in posterior predictive checks [9]; Significant rate heterogeneity detected in preliminary tests [13] [12]. | True variation in evolutionary rates among lineages due to factors like generation time or metabolic rate [14]. | Test clock adequacy [9]; Switch to a relaxed clock model (e.g., uncorrelated lognormal) [5] [13]. |
| Poor MCMC Mixing | Low Effective Sample Size (ESS) values for key parameters; inability to achieve convergence. | Model misspecification, including an inappropriate strict clock when rates are variable. | Run multiple chains to check consistency [15]; Re-evaluate tree prior compatibility with sampling scheme [15]. |
| Biased Time Estimates | Divergence time estimates are significantly older or younger than fossil or biogeographic evidence. | Model over-simplification forcing an incorrect average rate across all lineages. | Use multiple, reliable calibrations [16]; Compare results with a relaxed clock analysis [15]. |
| Intraspecific Data | Extremely high rate variance (e.g., ucld.stdev >> 1) if a relaxed clock is forced; poor fit for population-level trees. | Coalescent processes and intraspecific sampling create trees that conflict with species-level tree priors [15]. | Consider a multi-species coalescent model (*BEAST) [15]; Use a random local clock [15]. |

Frequently Asked Questions (FAQs)

Q1: When should I use a strict clock instead of a relaxed clock? Use a strict clock when analyzing very closely related species or populations with similar generation times and life history traits, where the assumption of rate constancy is biologically plausible [11]. It is also the preferred model when sequence data is limited, as it is computationally efficient and less parameter-rich, reducing the risk of overfitting [13].

Q2: How can I statistically test if my data violates the strict clock assumption? You can perform a Likelihood Ratio Test (LRT) to compare the fit of a model with an enforced clock to one without it [12]. For Bayesian analyses, you can assess model adequacy using posterior predictive simulations, which test whether the data generated under the strict clock model resemble your empirical data [9]. An overdispersed molecular clock (index of dispersion >> 1) also indicates violation [12].
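The index of dispersion mentioned in this answer is the ratio of the variance to the mean of the number of substitutions across lineages; under a Poisson process it is expected to be close to 1. A minimal sketch, assuming you already have substitution counts for lineages that span the same amount of time (the counts below are hypothetical):

```python
from statistics import mean, variance

def index_of_dispersion(substitution_counts):
    """Variance-to-mean ratio of substitution counts across lineages."""
    return variance(substitution_counts) / mean(substitution_counts)

# Hypothetical substitution counts on five lineages of equal duration.
counts = [12, 30, 8, 25, 15]
dispersion = index_of_dispersion(counts)  # well above 1 for these counts
```

Here `variance` is the sample variance, so at least two lineages are required.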

Q3: My strict clock analysis runs fine, but Bayes Factors strongly favor a relaxed clock. Which model should I trust? This is a common scenario. If a relaxed clock is strongly favored but has poor MCMC mixing (low ESS), it can indicate a conflict between your data, the tree prior, and the clock model [15]. A strict clock with good convergence might be more reliable than a non-convergent relaxed clock. Investigate if your tree prior (e.g., Yule vs. Coalescent) is appropriate for your taxon sampling [15]. A random local clock can sometimes be a good middle-ground option [5] [15].

Q4: What are the best practices for calibrating a strict clock analysis? Calibrations are essential for converting relative time to absolute time. Use multiple, reliable calibration points where possible. Sources include:

- Fossils: Provide minimum age constraints for nodes [16] [17] [11].

- Biogeographic Events: Known geological events like continental drift or island formation can constrain divergence times [16] [11].

- Ancient DNA: Tip-dating of historically sampled specimens can provide very precise calibrations [17] [11].

Always consider the uncertainty associated with your calibrations and use appropriate priors to represent this uncertainty in your analysis.

Experimental Protocol: Model Selection and Adequacy Assessment

This protocol provides a step-by-step guide for comparing the strict clock against alternative models and evaluating its performance, drawing on established methodologies [13] [9].

Workflow for Molecular Clock Model Selection

The following diagram outlines the logical decision process for selecting and validating a molecular clock model.

Step-by-Step Methodology

- Sequence Data Preparation: Begin with a high-quality, multiple sequence alignment. Assess and account for rate heterogeneity across sites (e.g., using a Gamma model) [13].

- Preliminary Tree Inference: Reconstruct an initial phylogenetic tree using a clock-free method to understand the basic relationships.

- Initial Clock Test: Perform a likelihood ratio test to compare a model enforcing a molecular clock to an unconstrained model. A significant result indicates rejection of the strict clock [12].

- Bayesian MCMC Analysis:

- Strict Clock Analysis: Configure your analysis (e.g., in BEAUti) using the strict clock model [5]. Use appropriate priors, such as the CTMC reference prior for the clock rate.

- Relaxed Clock Analysis: Run a parallel analysis using a relaxed clock model, such as the Uncorrelated Lognormal (UCLN) clock [5] [13].

- Model Comparison: Calculate Bayes Factors from the marginal likelihoods of the strict and relaxed clock analyses to determine which model provides a better fit to your data [9].

- Model Adequacy Assessment:

- Take a sample of posterior parameters (rates, times, tree) from your strict clock analysis.

- Simulate new sequence alignments based on these parameters.

- Re-estimate phylograms from these simulated datasets using a clock-free method.

- Compare the branch lengths of the empirical phylogram to the distribution of branch lengths from the simulated data. A high proportion of empirical branches falling outside the 95% quantile of the simulated distribution indicates the strict clock model is inadequate [9].
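The final comparison in the adequacy assessment can be sketched as follows. This assumes the empirical and simulated phylograms share one topology so that branches can be matched by index; the function names and the toy data are hypothetical, and the simulation and tree-estimation steps themselves are outside the scope of the sketch.

```python
def quantile(sorted_values, q):
    """Linear-interpolation quantile of an already sorted list."""
    pos = q * (len(sorted_values) - 1)
    lo = int(pos)
    hi = min(lo + 1, len(sorted_values) - 1)
    return sorted_values[lo] + (pos - lo) * (sorted_values[hi] - sorted_values[lo])

def proportion_outside(empirical, simulated, level=0.95):
    """Fraction of empirical branch lengths outside the central interval.

    empirical[i] is the length of branch i in the empirical phylogram;
    simulated[i] lists the lengths of branch i across simulated datasets.
    """
    tail = (1.0 - level) / 2.0
    outside = 0
    for emp, sims in zip(empirical, simulated):
        ordered = sorted(sims)
        lower, upper = quantile(ordered, tail), quantile(ordered, 1.0 - tail)
        if emp < lower or emp > upper:
            outside += 1
    return outside / len(empirical)

# Toy data: three branches, each with 101 simulated lengths from 0.00 to 1.00.
simulated = [[i / 100 for i in range(101)] for _ in range(3)]
empirical = [0.5, 0.99, 0.01]
p_outside = proportion_outside(empirical, simulated)  # two of three branches
```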

The Scientist's Toolkit: Essential Research Reagents and Software

The following table details key software tools and conceptual "reagents" essential for conducting strict clock analyses.

| Item Name | Type | Function | Key Considerations |
|---|---|---|---|
| BEAST/BEAST2 | Software Package | A cross-platform program for Bayesian evolutionary analysis using MCMC. It implements strict, relaxed, and random local clock models for estimating rooted, time-measured phylogenies [5] [14]. | The primary software for Bayesian molecular clock dating. Uses BEAUti for configuration. |
| Strict Clock Model | Analytical Model | The one-parameter model that assumes a single, constant evolutionary rate across all tree branches, converting branch lengths to time [5] [18]. | Use when clock-likeness is not rejected. Computationally efficient. |
| CTMC Reference Prior | Statistical Prior | A proper prior used in BEAST for the clock rate parameter under the strict clock model, which helps in obtaining valid posterior estimates [5]. | Default prior in BEAST; generally recommended. |
| Tracer | Software Tool | Used to analyze the output of BEAST (and other MCMC programs). It assesses convergence (via ESS), compares model fit (Bayes Factors), and summarizes parameter estimates [13] [15]. | Critical for diagnosing MCMC performance and model selection. |
| Posterior Predictive Simulation | Analytical Method | A technique to assess model adequacy by simulating data under the fitted model and comparing it to the empirical data. Used to identify branches where the clock model fits poorly [9]. | Final step for validating that the model is a plausible description of the data. |
| Fossil Calibrations | Data Input | Dated fossil evidence used to assign absolute times to specific nodes in the tree, converting relative branch lengths to years [16] [17]. | The most common source of calibration information. Requires careful placement and uncertainty specification. |
| r8s / treePL | Software Package | Implements penalized likelihood methods for divergence time estimation on a fixed tree topology. Can implement strict and relaxed clocks and is faster for very large datasets [11]. | An alternative to Bayesian methods for large-scale phylogenies. |

Molecular clocks are fundamental to evolutionary biology, providing a framework for dating species divergences. The strict molecular clock model, which assumes a constant evolutionary rate across all lineages, is often violated by real biological data. Relaxed clock models address this limitation by allowing evolutionary rates to vary across the tree, providing more realistic and accurate estimates of divergence times. This guide provides troubleshooting and methodological support for researchers selecting and implementing these models in their phylogenetic analyses.

Frequently Asked Questions (FAQs)

1. What is the fundamental difference between a strict clock and a relaxed clock model? A strict clock model assumes every branch in the phylogenetic tree evolves at the same, constant rate, making it a one-parameter model. In contrast, relaxed clock models permit variation in evolutionary rates across different branches, with various statistical frameworks to describe how this variation occurs [5].

2. When should I use an uncorrelated relaxed clock versus a random local clock? The uncorrelated relaxed clock model allows each branch to have its own independent evolutionary rate, drawn from an underlying distribution (e.g., log-normal, exponential). This is suitable when you expect rate changes to be sudden and unrelated between ancestor and descendant lineages. The random local clock model is a compromise, allowing for a limited number of rate changes across the tree, which is useful when you expect some lineages to share similar rates but want to avoid the complexity of a fully relaxed clock [5].

3. My Bayesian relaxed clock analysis is taking too long. What can I do? High computational burden is a common challenge, especially with large phylogenomic datasets. If using Bayesian methods like BEAST is infeasible, consider a composite or approximate approach. One method involves using the bag of little bootstraps to generate a set of plausible phylogenies, dating each one with a faster method like RelTime, and then summarizing the results to incorporate phylogenetic uncertainty into your final time estimates [19].

4. How does phylogenetic uncertainty affect my divergence time estimates? When you first infer a phylogeny and then scale it to time in a sequential analysis (SA), you risk overconfidence in your divergence time estimates (i.e., overly narrow confidence/credibility intervals) if the phylogeny is not well-resolved. A joint analysis (JA), which co-infers the phylogeny and divergence times, incorporates this topological uncertainty and can provide more reliable confidence intervals, especially for nodes with low support [19].

5. Are my relaxed clock time estimates accurate if I use the wrong model of rate variation? Simulation studies show that accuracy depends on whether your assumed model matches the true, unknown process. If your analysis uses an autocorrelated model but rates actually evolved randomly (and vice-versa), the frequency with which the true time falls within the 95% credibility interval can drop significantly (e.g., to around 83%). To enhance robustness, one strategy is to build composite credibility intervals from analyses run under both autocorrelated and random rate models [20].
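One way to build the composite credibility interval mentioned in the last answer is to take the widest bounds across the analyses. The sketch below assumes that construction (minimum of the lower bounds, maximum of the upper bounds); the function name and the interval values are hypothetical.

```python
def composite_interval(intervals):
    """Widest interval spanning a set of (lower, upper) credibility intervals."""
    lowers, uppers = zip(*intervals)
    return min(lowers), max(uppers)

# Hypothetical 95% CrIs (in Ma) for one node under two rate models.
autocorrelated_cri = (42.0, 58.5)
uncorrelated_cri = (39.5, 55.0)
composite = composite_interval([autocorrelated_cri, uncorrelated_cri])
```

The composite interval is wider than either input by design, trading precision for robustness to the choice of rate model.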

Troubleshooting Common Experimental Issues

Poor Chain Mixing in MCMC Analyses

- Problem: Low Effective Sample Size (ESS) for key parameters like the clock rate or tree prior.

- Solution: Adjust your MCMC proposal mechanisms. Increase the tuning frequency for moves on the tree topology (e.g., `mvNNI`) and node times (e.g., `mvNodeTimeSlideUniform`). Consider using a `mvTreeScale` move to propose scaling the entire tree, which can improve mixing of the root age and overall branch rates [10].
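To see why poor mixing lowers the ESS, the sketch below estimates it from the autocorrelation of a sampled trace. This is a simplified estimator (it truncates the autocorrelation sum at the first non-positive lag) and is an assumption for illustration, not the algorithm used by Tracer or any other specific tool; both traces are simulated.

```python
import random

def effective_sample_size(samples):
    """Crude ESS estimate: n / (1 + 2 * sum of positive autocorrelations)."""
    n = len(samples)
    mu = sum(samples) / n
    centered = [s - mu for s in samples]
    var = sum(c * c for c in centered) / n
    if var == 0:
        return float(n)
    rho_sum = 0.0
    for lag in range(1, n):
        acov = sum(centered[i] * centered[i + lag] for i in range(n - lag)) / n
        rho = acov / var
        if rho <= 0:          # truncate at the first non-positive lag
            break
        rho_sum += rho
    return n / (1.0 + 2.0 * rho_sum)

rng = random.Random(1)
# Well-mixed trace: independent draws.
iid_trace = [rng.gauss(0, 1) for _ in range(2000)]
# Poorly mixed trace: each sample depends strongly on the previous one.
sticky_trace = [0.0]
for _ in range(1999):
    sticky_trace.append(0.95 * sticky_trace[-1] + rng.gauss(0, 1))

ess_iid = effective_sample_size(iid_trace)
ess_sticky = effective_sample_size(sticky_trace)
```

Both traces contain 2000 samples, but the strongly autocorrelated one carries far fewer effectively independent draws.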

Infeasibly Long Computation for Large Datasets

- Problem: Bayesian analysis of a phylogenomic dataset with tens of thousands to millions of sites will not finish in a reasonable time.

- Solution: Implement a joint inference pipeline using maximum likelihood and RelTime. The workflow involves generating multiple bootstrap replicate alignments, inferring an ML tree for each, dating each tree with RelTime, and then summarizing the node ages and confidence intervals from the resulting set of timetrees [19].

Unrealistically Wide or Narrow Credibility Intervals

- Problem: The estimated credibility intervals for divergence times seem biologically implausible.

- Solution:

- Wide Intervals: This may be due to high uncertainty in the tree topology. Ensure you are using a sufficient amount of phylogenetic signal (e.g., more genes/longer alignments) and consider a joint analysis to properly account for this uncertainty [19].

- Narrow Intervals: This could indicate overconfidence, potentially from using an incorrect relaxed clock model. Run your analysis under both autocorrelated and random rate models and consider reporting composite credibility intervals [20]. Re-evaluate your fossil calibrations, as overly informative, incorrect priors can also lead to biased, overprecise estimates.

Experimental Protocols & Workflows

Protocol 1: Bayesian Comparison of Relaxed Clock Models in RevBayes

This protocol outlines the steps for comparing different relaxed clock models in a Bayesian framework [10].

1. Specify the Birth-Death Model:

- Create a Rev script (e.g., `m_BDP_bears.Rev`) to define the tree prior.

- Specify priors for diversification (`diversification ~ dnExponential(10.0)`) and turnover (`turnover ~ dnBeta(2.0, 2.0)`).

- Define deterministic nodes for the birth rate (`birth_rate := diversification / denom`) and death rate.

- Set a prior on the root age, often offset by a known fossil time (e.g., `root_time ~ dnLognormal(mu_ra, stdv_ra, offset=tHesperocyon)`).

- Instantiate the time tree stochastic node: `timetree ~ dnBDP(lambda=birth_rate, mu=death_rate, rho=rho, rootAge=root_time, ...)`.

2. Set up the Clock Models:

- Create separate Rev files for each clock model (e.g., strict, uncorrelated lognormal, autocorrelated).

- For an uncorrelated lognormal clock, define a clock rate and a standard deviation parameter (`ucld_sigma`) that controls the magnitude of rate variation across branches.

- Link the clock model to the tree by creating a vector of branch rates.

3. Specify the Substitution Model:

- Define the nucleotide substitution model (e.g., HKY, GTR) and the site-rate heterogeneity model (e.g., Gamma, Invariant sites).

4. Configure and Run the MCMC:

- In a master Rev script, use the `source()` function to import the model components.

- Create the full model: `mymodel = model(timetree)`.

- Set up monitors and the MCMC sampler (e.g., `mymcmc = mcmc(mymodel, monitors, moves)`).

- Run the MCMC for a sufficient number of generations: `mymcmc.run(generations=10000)`.

5. Analyze Output and Compare Models:

- Use tools like Tracer to assess MCMC convergence (ESS > 200).

- Calculate marginal model likelihoods using stepping-stone sampling or path sampling to determine the best-fitting relaxed clock model.

Protocol 2: Joint Inference of Phylogeny and Divergence Times using RelTime with Bootstrapping

This protocol is designed for large datasets where full Bayesian inference is computationally prohibitive [19].

1. Generate Bootstrap Replicate Alignments:

- For standard bootstrapping (BS): Generate `r` replicate datasets by randomly sampling sites from the original sequence alignment with replacement.

- For large datasets, use the bag of little bootstraps (LBS): Sample `l` sites from the original alignment (where `l` is much smaller than the total alignment length `L`), then create replicate datasets by sampling `L` sites with replacement from this little sample.

2. Infer Maximum Likelihood Phylogenies:

- For each bootstrap replicate alignment (BS or LBS), perform a maximum likelihood phylogenetic analysis to infer the tree topology and branch lengths.

3. Estimate Divergence Times:

- Apply the RelTime method (or another relaxed clock method) to each inferred ML phylogeny, using the provided calibration constraints.

- This step produces a set of `r` timetrees, each with estimated node ages.

4. Summarize Results:

- Infer a consensus phylogeny from the set of bootstrap replicate phylogenies.

- For each node in the consensus tree, summarize the divergence time and confidence intervals from the corresponding nodes in the set of timetrees. This incorporates phylogenetic uncertainty into the final time estimates.
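The resampling and summary steps of this protocol can be sketched as below. The tree inference and RelTime dating steps are not shown; the function names, the toy alignment, and the simple percentile summary are assumptions for illustration, and the little sample is assumed to be drawn without replacement.

```python
import random

def bootstrap_columns(n_sites, rng):
    """Standard bootstrap: draw n_sites column indices with replacement."""
    return [rng.randrange(n_sites) for _ in range(n_sites)]

def little_bootstrap_columns(n_sites, little_size, rng):
    """Little bootstrap: subsample columns, then upsample back to n_sites."""
    little = rng.sample(range(n_sites), little_size)  # assumed without replacement
    return [rng.choice(little) for _ in range(n_sites)]

def resample_alignment(alignment, columns):
    """Build a replicate alignment from the chosen column indices."""
    return {name: "".join(seq[i] for i in columns) for name, seq in alignment.items()}

def summarize_node_ages(ages, level=0.95):
    """Mean and nearest-rank percentile interval of one node's age."""
    ordered = sorted(ages)
    tail = (1.0 - level) / 2.0
    lower = ordered[round(tail * (len(ordered) - 1))]
    upper = ordered[round((1.0 - tail) * (len(ordered) - 1))]
    return sum(ordered) / len(ordered), lower, upper

rng = random.Random(42)
alignment = {"taxon_a": "ACGTACGT", "taxon_b": "TTGGCCAA"}  # toy alignment
bs_replicate = resample_alignment(alignment, bootstrap_columns(8, rng))
lbs_columns = little_bootstrap_columns(8, 3, rng)
lbs_replicate = resample_alignment(alignment, lbs_columns)

# Hypothetical ages of one node across 100 replicate timetrees.
mean_age, lower_age, upper_age = summarize_node_ages(list(range(1, 101)))
```

Each replicate alignment would then be passed to the maximum likelihood and dating steps, and `summarize_node_ages` applied per node of the consensus tree.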

Model Comparison Tables

Table 1: Key Features of Common Relaxed Clock Models

| Model | Rate Variation Assumption | Key Parameters | Best Used When... | Common Software |
|---|---|---|---|---|
| Strict Clock [5] | No variation; a single rate across the entire tree. | Clock rate. | There is strong empirical evidence for rate constancy; as a simple null model. | BEAST, RevBayes, MrBayes |
| Uncorrelated Relaxed Clock [5] | The rate on each branch is drawn independently from a shared distribution (e.g., log-normal). | Clock rate (mean), and a parameter for the variance of rates (e.g., `ucld_sigma`). | You expect rates to change abruptly and independently between lineages. | BEAST, RevBayes |
| Autocorrelated Relaxed Clock | The rate on a branch is correlated with the rate of its ancestral branch. | Clock rate, and a parameter governing the degree of autocorrelation. | You expect evolutionary rates to change gradually over time. | MCMCTree, MrBayes |
| Random Local Clock [5] | A limited number of rate changes occur at specific points on the tree, with local rate constancy. | Number and location of rate changes, and the value of each local rate. | You want a model between the strict and fully relaxed clocks, with some lineages sharing rates. | BEAST |

Table 2: Troubleshooting Guide for Computational Issues

| Problem | Potential Cause | Recommended Solution |
|---|---|---|
| Low ESS for clock model parameters | Poor MCMC mixing | Adjust tuning parameters for tree and clock operators; increase MCMC run length [10]. |
| Analysis will not finish | Dataset is too large for Bayesian methods | Switch to a joint inference method using ML and RelTime with bootstrapping [19]. |
| Credibility intervals too wide | High phylogenetic uncertainty or insufficient data | Use joint analysis to incorporate topology uncertainty; increase sequence data [19]. |
| Credibility intervals too narrow | Incorrect clock model; over-confident calibrations | Test both autocorrelated and random rate models; report composite CrIs; re-check calibration priors [20]. |

Visual Workflows and Diagrams

Diagram 1: Relaxed Clock Model Selection Workflow

This diagram outlines the logical decision process for selecting an appropriate relaxed clock model.

Diagram 2: Joint Inference of Phylogeny & Times

This diagram visualizes the workflow for the joint inference protocol using bootstrapping and RelTime [19].

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software and Analytical Tools

| Tool Name | Type | Primary Function | Key Feature |
|---|---|---|---|
| BEAST / BEAST2 [5] [20] | Software Package | Bayesian evolutionary analysis by sampling trees and parameters. | User-friendly BEAUti interface; implements uncorrelated relaxed clocks and random local clocks. |
| RevBayes [10] | Software Package | Bayesian phylogenetic inference using a probabilistic programming language. | Highly modular and flexible for building custom relaxed clock and tree models. |
| MultiDivTime [20] | Software Package | Estimates divergence times using a Bayesian framework with autocorrelated rates. | Implements the Thorne & Kishino autocorrelated relaxed clock model. |
| RelTime [19] | Algorithm/Method | Estimates relative divergence times and confidence intervals. | Computationally efficient; can be used in joint inference pipelines with bootstrapping. |
| Tracer | Diagnostic Tool | Analyzes MCMC output from Bayesian evolutionary analyses. | Visualizes posterior distributions, ESS, and chain convergence. |
| PAML [20] | Software Package | Phylogenetic analysis by maximum likelihood. | Used for estimating branch lengths and substitution model parameters as input for dating. |

Frequently Asked Questions

1. What are autocorrelated errors and what problems do they cause in my analysis? Autocorrelated errors (or serial correlation) occur when the error terms in your model are correlated over time, violating the assumption of error independence in standard regression analysis. This is common in time series data. When this happens, the estimated regression coefficients, while still unbiased, no longer have the minimum variance property. The Mean Squared Error (MSE) may seriously underestimate the true variance of the errors, and the standard errors of the regression coefficients can become inaccurate. This, in turn, means that statistical confidence intervals and inference procedures are no longer strictly applicable, potentially leading to misleading conclusions [21] [22].

2. How can I test for the presence of autocorrelation in my model's residuals? There are several common methods to test for autocorrelation:

- Durbin-Watson Test: This test is used to detect the presence of autocorrelation at a lag of 1. The test statistic ranges from 0 to 4. A value of 2 indicates no autocorrelation, a value between 0 and 2 suggests positive autocorrelation, and a value between 2 and 4 suggests negative autocorrelation [23] [22].

- Ljung-Box Test: This test has a null hypothesis that the residuals are independently distributed. A p-value below 0.05 typically leads to a rejection of the null hypothesis, indicating the presence of autocorrelation in the time series [22].

- Correlogram (ACF Plot): Plotting the Autocorrelation Function (ACF) helps visualize the correlation between a time series and its lagged values. Non-random data with autocorrelation will show at least one significant lag in the ACF plot that exceeds the significance bounds [24] [22].
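The three diagnostics above reduce to short formulas, sketched below in plain Python. This is an illustrative sketch, not a replacement for a statistics package: the function names are made up for this example, and the Ljung-Box function returns only the Q statistic, which would still need to be compared against a chi-square distribution with `max_lag` degrees of freedom to obtain a p-value.

```python
def durbin_watson(residuals):
    """Sum of squared successive differences divided by the sum of squares.

    Values near 2 suggest no lag-1 autocorrelation, values toward 0 suggest
    positive autocorrelation, and values toward 4 suggest negative autocorrelation.
    """
    num = sum((residuals[t] - residuals[t - 1]) ** 2 for t in range(1, len(residuals)))
    den = sum(e ** 2 for e in residuals)
    return num / den


def acf(series, max_lag):
    """Sample autocorrelation coefficients for lags 0..max_lag."""
    n = len(series)
    mean = sum(series) / n
    centered = [x - mean for x in series]
    c0 = sum(c * c for c in centered)
    return [
        sum(centered[t] * centered[t - k] for t in range(k, n)) / c0
        for k in range(max_lag + 1)
    ]


def ljung_box_q(series, max_lag):
    """Ljung-Box Q statistic: n(n+2) * sum_k r_k^2 / (n-k)."""
    n = len(series)
    r = acf(series, max_lag)
    return n * (n + 2) * sum(r[k] ** 2 / (n - k) for k in range(1, max_lag + 1))


# Residuals that flip sign every step are negatively autocorrelated.
print(durbin_watson([1, -1, 1, -1]))  # 3.0
```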

3. My data has a strong temporal component. What types of models can I use? For time series data, autoregressive models are a common choice. In an autoregressive model, the current value of the series is regressed on its own previous values.

- AR(1): A first-order autoregressive model, where the value at time t is predicted based on the value at t-1: yₜ = β₀ + β₁yₜ₋₁ + εₜ [24].

- AR(k): A k-th order autoregressive model uses the previous k values to predict the current value: yₜ = β₀ + β₁yₜ₋₁ + ... + βₖyₜ₋ₖ + εₜ [24].

The partial autocorrelation function (PACF) plot is particularly useful for identifying the order k of an autoregressive model, as significant spikes indicate which lagged terms are useful predictors [24].
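As a minimal sketch of the AR(1) case, the coefficients β₀ and β₁ can be estimated by ordinary least squares, regressing each value on its predecessor. The function name `fit_ar1` and the toy series are assumptions for illustration; a real analysis would normally use a dedicated time series library and also report standard errors.

```python
def fit_ar1(y):
    """Estimate (beta0, beta1) in y_t = beta0 + beta1 * y_{t-1} + e_t by OLS."""
    prev, curr = y[:-1], y[1:]          # predictor: y_{t-1}; response: y_t
    n = len(prev)
    mean_prev = sum(prev) / n
    mean_curr = sum(curr) / n
    sxx = sum((a - mean_prev) ** 2 for a in prev)
    sxy = sum((a - mean_prev) * (b - mean_curr) for a, b in zip(prev, curr))
    beta1 = sxy / sxx
    beta0 = mean_curr - beta1 * mean_prev
    return beta0, beta1


# Noise-free toy series generated with beta0 = 1 and beta1 = 0.5.
series = [0.0]
for _ in range(10):
    series.append(1.0 + 0.5 * series[-1])

beta0, beta1 = fit_ar1(series)
print(round(beta0, 6), round(beta1, 6))
```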

4. How do I choose the right molecular clock model for my phylogenomic data? Molecular clock models range from strict to increasingly relaxed assumptions. The choice depends on the level of rate variation your data exhibits. The table below summarizes common models [5]:

| Model | Core Assumption | Key Application / Use Case |

|---|---|---|

| Strict Clock | A single, global evolutionary rate applies to all branches in the phylogenetic tree. | Suitable when little to no rate heterogeneity is expected across the tree. Serves as a simple null model [5]. |

| Fixed Local Clock | Pre-defined clades are allowed to have their own constant evolutionary rates, while a constant rate is maintained across the rest of the tree. | Useful when prior knowledge (e.g., from taxonomy or life history) suggests specific lineages evolved at different rates [5]. |

| Uncorrelated Relaxed Clock | Every branch in the tree has its own independent evolutionary rate, drawn from a specified probability distribution (e.g., log-normal). | Applied when evolutionary rates are expected to vary significantly and abruptly across branches without correlation between adjacent branches [5]. |

| Random Local Clock | The model proposes a series of local molecular clocks that extend over subregions of the phylogeny, allowing for a variable number of rate changes. | A middle-ground option that permits more variation than a strict clock but less than a fully relaxed clock, with the number and location of rate shifts informed by the data [5]. |
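To make the core assumptions in the table concrete, the sketch below generates branch rates under three of the models. It is a toy illustration only: the function names, the clade labels, and the numerical rates are hypothetical, and the lognormal parameterisation (choosing the log-scale mean so that the expected rate equals `mean_rate`) is one common convention rather than the definition used by any particular package.

```python
import math
import random


def strict_clock_rates(n_branches, rate):
    """Strict clock: one global rate shared by every branch."""
    return [rate] * n_branches


def fixed_local_clock_rates(branch_clades, background_rate, clade_rates):
    """Fixed local clock: pre-defined clades get their own constant rate."""
    return [clade_rates.get(clade, background_rate) for clade in branch_clades]


def uncorrelated_lognormal_rates(n_branches, mean_rate, sigma, rng):
    """Uncorrelated relaxed clock: each branch draws an independent rate."""
    mu = math.log(mean_rate) - sigma ** 2 / 2  # keeps the expected rate at mean_rate
    return [rng.lognormvariate(mu, sigma) for _ in range(n_branches)]


rng = random.Random(42)
print(strict_clock_rates(4, 1e-3))
print(fixed_local_clock_rates([None, "A", "A", None], 1e-3, {"A": 2e-3}))
print(uncorrelated_lognormal_rates(4, 1e-3, 0.5, rng))
```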

5. What tools can help me visualize and test evolutionary rate variation in phylogenomic data? ClockstaRX is a specialized tool for exploring and testing evolutionary rate signals in phylogenomic data. It uses principal components analysis (PCA) to model the main axes of rate variation across branches and loci. It implements formal statistical tests to:

- Reject the degenerate multiple pacemaker model: A test to determine if there is any predictable component of rate variation in your data.

- Identify the number of significant "pacemakers": Determines how many principal components (axes of variation) significantly describe the data.

- Identify local pacemakers: Tests which specific phylogenetic branches are driving the variation on each significant PC axis [25].

This information is crucial for diagnosing complex rate heterogeneity and can inform decisions on data filtering or model selection for subsequent molecular dating analyses [25].
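The idea of summarising a loci-by-branches rate matrix with principal components can be illustrated with a small, dependency-free sketch. This is not ClockstaRX (which is an R package) and it does not reproduce its statistical tests; it only computes the share of total variance captured by the first principal component, using power iteration on the covariance matrix. The function name and the toy matrices are assumptions.

```python
def pc1_variance_share(rates, n_iter=500):
    """Fraction of total variance explained by the first principal component.

    rates: list of rows (loci), each a list of branch rates (columns).
    """
    n, p = len(rates), len(rates[0])
    means = [sum(row[j] for row in rates) / n for j in range(p)]
    centered = [[row[j] - means[j] for j in range(p)] for row in rates]
    cov = [
        [sum(r[a] * r[b] for r in centered) / (n - 1) for b in range(p)]
        for a in range(p)
    ]
    total = sum(cov[j][j] for j in range(p))
    if total == 0:
        raise ValueError("rate matrix has no variance")
    # Power iteration converges to the leading eigenvector of the covariance.
    v = [1.0 / (j + 1) for j in range(p)]
    for _ in range(n_iter):
        w = [sum(cov[a][b] * v[b] for b in range(p)) for a in range(p)]
        norm = sum(x * x for x in w) ** 0.5
        if norm == 0:
            break
        v = [x / norm for x in w]
    v_norm_sq = sum(x * x for x in v)
    leading = sum(
        v[a] * sum(cov[a][b] * v[b] for b in range(p)) for a in range(p)
    ) / v_norm_sq
    return leading / total


# Loci whose branch rates are scaled copies of one pattern: a single "pacemaker".
one_pacemaker = [[1, 2, 3], [2, 4, 6], [3, 6, 9]]
print(round(pc1_variance_share(one_pacemaker), 6))
```

A share close to 1 corresponds to the situation where a single axis dominates; a low share suggests that several axes are needed to describe the variation.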

Troubleshooting Guides

Issue 1: Detecting and Addressing Autocorrelation in Time Series Regression

- Symptoms: You have fitted a linear model to time series data, and your residuals (the differences between observed and predicted values) are not randomly distributed. A plot of residuals vs. time may show clusters or a cyclical pattern.

- Diagnostic Protocol:

- Plot Residuals vs. Time: Visually inspect for non-random patterns [24].

- Conduct a Formal Test: Perform a Durbin-Watson or Ljung-Box test on the residuals. A significant result (p-value < 0.05 for Ljung-Box) confirms autocorrelation is present [22].

- Analyze ACF and PACF Plots: Use these plots to understand the structure of the autocorrelation. The PACF plot is key for identifying the order p of an autoregressive (AR) process. A significant spike at lag 1 in the PACF suggests an AR(1) model may be appropriate [24].

- Resolution Strategy:

- Model Transformation: Instead of ordinary least squares, use a model that explicitly accounts for the time dependency, such as an autoregressive model (e.g., yₜ = β₀ + β₁yₜ₋₁ + εₜ) or a model with autoregressive errors [21] [24].

- Incorporate Lagged Variables: Use lagged versions of the dependent variable as predictors in your regression analysis to account for the temporal dependency [22].

Issue 2: Selecting a Molecular Clock Model in the Presence of Rate Heterogeneity

- Symptoms: Your molecular dating analysis yields unrealistic or overly uncertain divergence times. A strict clock model feels too simplistic, but you are unsure which relaxed clock model to use.

- Diagnostic Protocol:

- Perform an Initial Rate Variation Analysis: Use a tool like ClockstaRX to analyze your phylogenomic data (a set of gene trees and a species tree). The input is typically inferred gene trees with branch lengths in substitutions per site [25].

- Run ClockstaRX Tests:

- Use the collect.rates function to extract branch rates from gene trees that are concordant with the species tree.

- Use the clock.space function to perform PCA on the matrix of rates (loci x branches).

- Examine the results of the three key tests (pPhi, pPCs, pIL) to understand the global and local structure of rate variation [25].

- Resolution Strategy:

- If a single PC dominates: A simple relaxed clock model where each locus has its own rate may be sufficient.

- If multiple significant PCs are found: There are subsets of loci with distinct rate patterns. Consider partitioning your data or using a more complex clock model that can handle this.

- If specific branches are identified as local pacemakers: A Random Local Clock model or carefully defined Fixed Local Clock model might be the most appropriate choice, as these branches are key drivers of rate heterogeneity [25] [5].

The following workflow diagram outlines the key decision points in this troubleshooting process:

The Scientist's Toolkit: Essential Research Reagents & Materials

The following table details key resources used in the experiments and methodologies cited in this guide.

| Research Reagent / Tool | Primary Function & Application |

|---|---|

| ClockstaRX Software | A flexible R-based platform for exploring, visualizing, and performing hypothesis tests on evolutionary rate variation in phylogenomic data sets. It helps identify groups of loci and branches that contribute significantly to rate heterogeneity patterns [25]. |

| BEAST2 Software Package | A comprehensive Bayesian statistical software platform for phylogenetic analysis, particularly known for its wide array of implemented molecular clock models (strict, relaxed, random local clock) for divergence time estimation [5]. |

| Phylogenomic Data Set | The fundamental input, consisting of an inferred species tree and a set of independent gene trees (e.g., from individual loci or recombination-free regions) with branch lengths representing expected substitutions per site [25]. |

| Autocorrelation Function (ACF) Plot | A graphical diagnostic tool (correlogram) used to visualize the correlation between a time series and its lagged values. It helps identify trends, seasonality, and non-stationarity in time series data and model residuals [24] [23] [22]. |

| Partial Autocorrelation Function (PACF) Plot | A graphical tool that shows the correlation between a time series and its lags after removing the effects of intermediate lags. It is primarily used to identify the order p of an autoregressive (AR) model [24] [22]. |

| Durbin-Watson Test Statistic | A quantitative test used to detect the presence of first-order autocorrelation in the residuals from a regression analysis, providing a single number to guide diagnostics [23] [22]. |

The relationships between these core components in a molecular clock analysis workflow are illustrated below:

Troubleshooting Guides

Guide 1: Resolving Model Selection Uncertainty

Problem: Researchers are uncertain whether their dataset requires a relaxed molecular clock or if a simpler model is sufficient, leading to potential overfitting or underfitting.

Background: The Random Local Clock (RLC) model serves as an intermediate between the overly simplistic strict clock and the parameter-rich uncorrelated relaxed clock. It allows different phylogenetic regions to have different rates, with only a small number of rate changes across the tree [26] [5].

Diagnosis:

- Symptoms: Poor chain convergence in Bayesian MCMC analyses, low effective sample sizes (ESS) for rate parameters, or conflicting time estimates between different clock models.

- Diagnostic Steps:

- Conduct Bayes factor comparison between strict, random local, and uncorrelated relaxed clock models [27] [5].

- Check posterior probability of rate changes in RLC output—if concentrated near zero, a strict clock may be sufficient.

- Examine rate heterogeneity across branches using Tracer or similar software.

Solution:

- Initial Setup: Begin with the RLC model, using Bayesian stochastic search variable selection (BSSVS) to sample over all possible local clock configurations [26].

- MCMC Configuration: Run analysis for adequate generations (typically 10-100 million, depending on dataset size).

- Model Assessment: Check the posterior number of rate changes. If K = 0 with high probability, your data likely supports a strict molecular clock.

- Implementation: The RLC model is implemented in BEAST 1.5.4 and later versions, accessible through the BEAUti interface [26] [5].
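The model assessment step can be sketched as a simple summary of the sampled number of rate changes K after burn-in. The function names are hypothetical, and the prior probability of K = 0 is left as an explicit argument because it depends on the prior you placed on K in your own analysis; it is not assumed here.

```python
from collections import Counter


def rate_change_posterior(k_samples):
    """Posterior probability of each sampled number of rate changes K."""
    counts = Counter(k_samples)
    n = len(k_samples)
    return {k: counts[k] / n for k in sorted(counts)}


def strict_clock_bayes_factor(k_samples, prior_p0):
    """Bayes factor for K = 0 versus K > 0: posterior odds over prior odds.

    prior_p0 is the prior probability of K = 0 under your chosen prior on K.
    """
    post_p0 = sum(1 for k in k_samples if k == 0) / len(k_samples)
    if post_p0 == 1.0:
        return float("inf")  # every sample had K = 0
    posterior_odds = post_p0 / (1.0 - post_p0)
    prior_odds = prior_p0 / (1.0 - prior_p0)
    return posterior_odds / prior_odds


# Hypothetical post-burn-in samples of K from an RLC run.
samples = [0, 0, 0, 1]
print(rate_change_posterior(samples))
print(strict_clock_bayes_factor(samples, prior_p0=0.5))
```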

Prevention: Always conduct model comparison using marginal likelihood estimation before final dating analysis. Use ClockstaR to determine optimal clock partitioning schemes for multigene datasets [27].

Guide 2: Addressing Poor MCMC Mixing in RLC Analyses

Problem: Poor mixing and convergence issues when using Random Local Clock models, characterized by low effective sample sizes (ESS) for rate parameters and tree model statistics.

Background: The RLC model has a complex state space that includes the product of all 2^(2n-2) possible local clock models across all possible rooted trees, which can challenge MCMC sampling [26].

Diagnosis:

- Symptoms: ESS values below 200, poor convergence between independent runs, and multimodal posterior distributions for rate parameters.

- Diagnostic Tools: Use Tracer or similar software to monitor ESS, trace plots, and posterior distributions.
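For intuition about what an ESS value measures, the sketch below estimates it from a single parameter trace as N / (1 + 2 Σ ρ_k), summing autocorrelations until the first non-positive lag. This truncation rule is a simplification chosen for the example and is not claimed to match the estimator used by Tracer; the function name is hypothetical and the quadratic-time loop is only suitable for short traces.

```python
def effective_sample_size(trace):
    """Rough ESS estimate: N / (1 + 2 * sum of positive-lag autocorrelations)."""
    n = len(trace)
    mean = sum(trace) / n
    centered = [x - mean for x in trace]
    variance = sum(c * c for c in centered) / n
    if variance == 0:
        return float(n)
    rho_sum = 0.0
    for lag in range(1, n):
        rho = sum(centered[t] * centered[t - lag] for t in range(lag, n)) / (n * variance)
        if rho <= 0:  # stop at the first non-positive autocorrelation
            break
        rho_sum += rho
    return n / (1.0 + 2.0 * rho_sum)


# A chain stuck in one mode and then another mixes poorly.
sticky_trace = [0.0] * 50 + [1.0] * 50
print(round(effective_sample_size(sticky_trace), 1))
```

A trace of 100 samples that behaves like the sticky example carries the information of only a handful of independent draws, which is why such runs fall far below the ESS threshold of 200 mentioned above.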

Solution:

- Parameter Tuning: Adjust the operator scale factors (tuning parameters) for tree and clock parameter proposals in BEAST.

- Chain Length: Increase chain length substantially—RLC analyses often require longer runs than strict clock models.

- Proposal Weights: Optimize proposal mechanism weights for tree and clock parameters.

- Initialization: Initialize chains with reasonable starting trees rather than random trees.

Verification: Run multiple independent analyses from different starting points to verify convergence to the same posterior distribution [26].

Frequently Asked Questions

Q1: When should I choose a Random Local Clock over other molecular clock models? A1: The RLC model is particularly appropriate when you expect sparse, possibly large-scale rate changes across the phylogeny rather than many small changes on every branch. It approaches the problem of rate variation by proposing a series of local molecular clocks, each extending over a subregion of the full phylogeny [26]. This makes it well-suited for datasets where large subtrees share the same underlying substitution rate, with variation described by the stochastic nature of the evolutionary process [26].

Q2: How does the Random Local Clock model differ from uncorrelated relaxed clock models? A2: Uncorrelated relaxed clocks allow each branch to have its own independent evolutionary rate drawn from an underlying distribution [5]. In contrast, the RLC model permits different regions in the tree to have different rates, but within each region the rate must be the same [26]. The RLC model therefore has an intermediate number of parameters between the single-parameter strict clock and the highly parameterized uncorrelated relaxed clock that has a separate parameter for each branch.

Q3: What are the computational requirements for RLC analysis? A3: RLC analyses are computationally intensive due to the enormous model space—for n sequences, there are 2^(2n-2) possible local clock models to consider [26]. However, the method efficiently samples this space using Markov chain Monte Carlo (MCMC) to implement Bayesian model averaging over random local molecular clocks. Adequate MCMC chain lengths are essential, and multiple runs are recommended to verify convergence.
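The size of the model space quoted above grows very quickly with the number of sequences, as a quick calculation shows. The function name is made up for this illustration; the formula is the 2^(2n-2) count given in the answer.

```python
def n_local_clock_models(n_sequences):
    """Number of local clock configurations, 2^(2n-2), for n sequences."""
    return 2 ** (2 * n_sequences - 2)


for n in (5, 10, 50):
    count = n_local_clock_models(n)
    print(n, count, f"(about 10^{len(str(count)) - 1})")
```

Even at n = 50 the count has about 30 digits, which is why the space is explored by MCMC rather than enumerated.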

Q4: Can I test whether my data supports a strict molecular clock using RLC? A4: Yes, one major advantage of the RLC model is that it conveniently allows a direct test of the strict molecular clock against a large array of alternative local molecular clock models [26]. If the posterior probability strongly supports zero rate changes (K=0), this provides evidence for a strict clock where "one rate rules them all."

Experimental Protocols & Methodologies

Protocol 1: Implementing Random Local Clock Analysis in BEAST

Purpose: To estimate divergence times with a Random Local Clock model when evolutionary rates are expected to vary among lineages but to be shared across contiguous regions of the tree.

Materials:

- Software Requirements: BEAST package (v1.5.4 or later), BEAUti, Tracer [26] [5]

- Data Requirements: Aligned molecular sequences (nucleotide, codon, or amino acid)

- Computational Resources: Adequate memory and processing power for potentially long MCMC runs

Procedure:

Data Preparation:

- Load alignment file into BEAUti

- Select appropriate site model (HKY85, GTR, etc.) with discrete-Gamma distributed rate heterogeneity [26]

Clock Model Configuration:

Tree Model Setup:

- Select appropriate tree prior (Yule, Birth-Death, etc.)

- Configure calibrations if available

MCMC Settings:

- Set chain length appropriate to dataset size (typically 10-100 million generations)

- Configure logging parameters for adequate sampling

Analysis Execution:

- Run BEAST with generated XML file

- Monitor progress for potential issues

Output Analysis:

- Use Tracer to assess convergence and ESS values

- Use TreeAnnotator to generate maximum clade credibility tree

- Visualize results with appropriate software
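If you want to inspect the sampled parameters outside Tracer, a trace log can be read with a few lines of Python. The sketch below assumes a tab-delimited log in which comment lines start with `#` and the first remaining line is a header; the column names in the example (`state`, `posterior`, `rateChanges`) and the function name are hypothetical, so check them against your own file.

```python
import csv
import io


def read_trace_log(text, burnin_fraction=0.1):
    """Parse a tab-delimited trace log into {column: [values]} after burn-in."""
    data_lines = [
        line for line in text.splitlines()
        if line.strip() and not line.startswith("#")  # drop blanks and comments
    ]
    reader = csv.DictReader(io.StringIO("\n".join(data_lines)), delimiter="\t")
    rows = list(reader)
    kept = rows[int(len(rows) * burnin_fraction):]    # discard the initial burn-in rows
    columns = [name for name in reader.fieldnames if name]
    return {name: [float(row[name]) for row in kept] for name in columns}


# Hypothetical log content for illustration only.
example_log = (
    "# example trace log\n"
    "state\tposterior\trateChanges\n"
    "0\t-120.5\t2\n"
    "1000\t-101.2\t1\n"
    "2000\t-100.8\t0\n"
    "3000\t-100.1\t0\n"
    "4000\t-100.4\t1\n"
)
traces = read_trace_log(example_log, burnin_fraction=0.2)
print(traces["rateChanges"])
```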

Troubleshooting Tips:

- If ESS values are low, increase chain length or adjust operator weights

- If convergence is poor, run multiple independent chains to verify results

- For large datasets, consider using BEAGLE library to accelerate computation

Protocol 2: Bayesian Stochastic Search Variable Selection in RLC

Purpose: To efficiently sample the complex model space of possible local clock configurations using the BSSVS approach.

Background: Bayesian stochastic search variable selection traditionally applies to model selection in linear regression, but has been adapted for molecular clock analysis [26].

Procedure:

- Model Augmentation: Augment the model state-space with a set of binary indicator variables δ = (δ₁,...,δ_P) where P is the number of branches [26]

- MCMC Sampling: Sample both the parameter values and model indicators simultaneously

- Posterior Analysis: Calculate posterior probabilities for each local clock configuration

- Model Averaging: Average over all sampled models to account for model uncertainty
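The role of the binary indicators can be illustrated with a simplified sketch: a branch inherits its parent's rate unless its indicator is 1, in which case the inherited rate is multiplied by a branch-specific factor. This is a toy reading of the model for intuition only. The tree encoding, the names `delta` and `phi`, and the function name are assumptions for this example, and any rescaling of rates that a full implementation may apply is omitted.

```python
def rlc_branch_rates(parent, delta, phi, root_rate=1.0):
    """Relative branch rates implied by indicators (delta) and multipliers (phi).

    parent: dict mapping each node to its parent (the root maps to None).
    delta:  dict mapping each non-root node to 0 (inherit) or 1 (rate change).
    phi:    dict mapping each non-root node to its rate multiplier.
    """
    rates = {}

    def rate(node):
        if parent[node] is None:
            return root_rate
        if node not in rates:
            inherited = rate(parent[node])
            rates[node] = inherited * (phi[node] if delta[node] else 1.0)
        return rates[node]

    return {node: rate(node) for node in parent if parent[node] is not None}


# Hypothetical five-node tree with a single rate change on the branch leading to A.
parent = {"root": None, "A": "root", "B": "root", "A1": "A", "A2": "A"}
delta = {"A": 1, "B": 0, "A1": 0, "A2": 0}
phi = {"A": 2.0, "B": 3.0, "A1": 0.5, "A2": 0.5}

print(rlc_branch_rates(parent, delta, phi))
print("number of rate changes K =", sum(delta.values()))
```

Because only the indicator on A is switched on, the whole subtree below A shares one elevated rate while B keeps the root rate, giving two local clocks from a single change.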

Research Reagent Solutions

Table 1: Essential Computational Tools for Random Local Clock Analysis

| Tool/Resource | Function | Implementation Notes |

|---|---|---|

| BEAST Package | Bayesian evolutionary analysis by sampling trees | Primary software for RLC implementation [26] [5] |

| BEAUti | Bayesian evolutionary analysis utility | Graphical interface for configuring RLC analyses [5] |

| Tracer | MCMC output analysis | Diagnosing convergence and ESS values |

| TreeAnnotator | Tree summarization | Generating maximum clade credibility trees |

| ClockstaR | Clock partitioning selection | Determining optimal clock partitions for multigene datasets [27] |

Table 2: Statistical Approaches for Clock Model Comparison

| Method | Application | Interpretation |

|---|---|---|

| Bayes Factors | Model comparison between strict, RLC, and relaxed clocks | BF > 10 indicates strong support for one model over another [27] |

| BSSVS | Stochastic search over rate change locations | Identifies branches with significant rate changes [26] |

| Posterior Model Probabilities | RLC model averaging | Probability of different numbers of rate changes K |

| Marginal Likelihood Estimation | Model selection | Stepping-stone sampling or path sampling approaches |
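Once log marginal likelihoods are available (for example from stepping-stone or path sampling), the Bayes factor comparison in the table is simple arithmetic on the log scale. The sketch below uses made-up log marginal likelihoods and hypothetical function names; the BF > 10 threshold from the table corresponds to ln BF > ln 10, roughly 2.3.

```python
import math


def rank_models(log_marginal_likelihoods):
    """Return the best model and its log Bayes factor against each alternative."""
    best = max(log_marginal_likelihoods, key=log_marginal_likelihoods.get)
    log_bfs = {
        model: log_marginal_likelihoods[best] - value
        for model, value in log_marginal_likelihoods.items()
        if model != best
    }
    return best, log_bfs


def is_strong_support(log_bf, threshold=10.0):
    """True when the Bayes factor exceeds the threshold (default BF > 10)."""
    return log_bf > math.log(threshold)


# Hypothetical log marginal likelihoods, for illustration only.
log_mls = {"strict": -10250.4, "random_local": -10243.1, "uncorrelated": -10245.0}
best, log_bfs = rank_models(log_mls)
for model, log_bf in log_bfs.items():
    print(f"{best} vs {model}: ln BF = {log_bf:.1f}, strong = {is_strong_support(log_bf)}")
```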

Workflow Visualization

RLC Analysis Workflow

Clock Model Comparison

Frequently Asked Questions (FAQs)

Q1: My molecular clock analysis is yielding implausibly ancient divergence times for a clade of small mammals. Could their short generation times be affecting my results? Yes, this is a likely cause. Lineages with shorter generation times often accumulate mutations more rapidly per calendar year. This is because DNA replication, during which most mutations occur, happens more frequently in a given time period. If your model assumes a constant rate but is applied to species with differing generation times, the branch lengths will be overestimated for the faster-evolving lineages, pushing back the inferred divergence times. You should employ a relaxed clock model that can account for this variation among lineages [3].

Q2: I am studying a group of reptiles with vastly different metabolic rates. How can I test if this factor is a significant driver of evolutionary rate variation in my dataset? Early hypotheses suggested that higher metabolic rates could lead to increased mutation rates due to a greater production of oxidative DNA damage [3]. However, a phylogenetically independent comparison study on mammals found no evidence for a correlation between metabolic rate and the rate of molecular evolution after accounting for other factors [28]. To test this in your dataset, you can treat metabolic rate as a continuous trait and use correlation analysis within a phylogenetic framework (e.g., phylogenetic generalized least squares) to see if it explains a significant portion of the variation in branch-specific substitution rates.

Q3: My analysis of a cancer genome dataset reveals unexpected variation in mutation spectra between patients. Could inter-individual differences in DNA repair capacity (DRC) be responsible? Absolutely. Significant inter-individual variation in DNA Repair Capacity (DRC) exists even among healthy individuals, forming a normal distribution within the population [29]. This variation is influenced by common genetic variants (polymorphisms) in DNA repair genes and epigenetic factors. In a cancer context, these inherent differences can affect the baseline mutation rate and the spectrum of mutations that accumulate, influencing both disease susceptibility and the response to DNA-damaging chemotherapies [29] [30].

Q4: When I apply both fossil calibrations and a pedigree-based mutation rate to my species tree, I get conflicting divergence time estimates. Why does this happen, and which should I trust? This discrepancy is an active area of research. The two approaches can yield different estimates because:

- Fossil-calibrated concatenation can be influenced by model misspecification, such as not accounting for incomplete lineage sorting (ILS), which can make divergences appear older [17].

- Mutation-rate calibrated multispecies coalescent (MSC) methods directly estimate species divergence times and are less sensitive to the vagaries of the fossil record. However, they require accurate estimates of generation time and mutation rate [17].

There is no universal answer; the best practice is to compare the results of both methods and be transparent about the discrepancies. Using the MSC method with carefully selected fossil calibrations is often considered a robust approach [17].

Q5: What is the single most important recommendation for avoiding biased results in Bayesian molecular clock analysis? Conduct a prior sensitivity analysis [31]. The choice of prior distributions, especially for the tree model (e.g., birth-death process), can have a profound impact on your posterior estimates of divergence times. You should run your analysis with different, biologically plausible priors to see if your key conclusions about node ages remain stable. Using highly informative priors that are inconsistent with your data can lead to incorrect inferences, a problem that can be diagnosed by checking if the prior generates reasonable expectations for parameters like the root age and evolutionary rate [31].

Troubleshooting Guides

Problem 1: Widespread Incomplete Lineage Sorting (ILS) Biasing Divergence Time Estimates

- Symptoms: Widespread gene tree heterogeneity despite strong support for the species tree; divergence time estimates that are consistently older than fossil evidence suggests.

- Root Cause: Traditional phylogenetic clock models equate sequence divergence time with species divergence time. However, gene coalescence always predates species split in the absence of gene flow, leading to overestimates of species divergence times [17].

- Solution:

- Method Selection: Shift from concatenation-based methods to the Multispecies Coalescent (MSC) model. The MSC jointly estimates the species tree and gene trees, explicitly modeling the coalescent process to separate the time of population divergence (speciation) from the time of gene coalescence [17].

- Software & Workflow:

- Validation: Compare the estimated species tree and divergence times with those from a concatenated analysis. A significant difference, particularly older ages in the concatenated analysis, is indicative of ILS bias.
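A back-of-the-envelope calculation shows how large the gap between gene and species divergence can be. Under the standard coalescent for a diploid ancestral population of constant effective size Ne, two lineages are expected to coalesce 2·Ne generations before the species split. The function name and the input values below are hypothetical, and real data will deviate from these idealised assumptions.

```python
def expected_gene_divergence(species_split_years, ancestral_ne, generation_time_years):
    """Expected gene divergence time: species split plus 2 * Ne generations."""
    coalescent_excess_years = 2 * ancestral_ne * generation_time_years
    return species_split_years + coalescent_excess_years


# Hypothetical values: split 1 million years ago, ancestral Ne = 10,000, 5-year generations.
split = 1_000_000
gene_time = expected_gene_divergence(split, ancestral_ne=10_000, generation_time_years=5)
print(gene_time, f"({(gene_time - split) / split:.0%} older than the species split)")
```

The relative bias is largest for recent splits and large ancestral populations, which is where concatenation and MSC estimates are expected to differ most.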

Problem 2: Incorrect Detection of Temporal Signal in Bayesian Analysis

- Symptoms: A Bayesian Evaluation of Temporal Signal (BETS) strongly supports the presence of a temporal signal even when date-randomization should show there is none. Subsequent molecular clock analyses produce unreliable time estimates.

- Root Cause: A statistical artefact known as "tree extension," often caused by using an overly informative tree prior that is inconsistent with the data. This forces the model to explain genetic diversity by extending branch lengths, mimicking the signal of evolutionary time [31].

- Solution:

- Perform Prior Predictive Simulations: Before analyzing your real data, run your model with the chosen priors (but no data) to generate simulated datasets. Check if the priors produce biologically plausible values for key parameters like the root age and evolutionary rate [31].

- Conduct Prior Sensitivity Analysis: Re-run your BETS and dating analysis with different tree prior settings (e.g., varying the birth rate in a birth-death prior). If your results and model selection are highly sensitive to the prior choice, your findings are not robust [31].

- Model Selection: Ensure you are using a molecular clock model (strict or relaxed) that is appropriate for your data. Using an overly complex "no-clock" model when rates are fairly constant can increase uncertainty [3].
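The spirit of a prior predictive check can be sketched by drawing from the priors alone and asking whether the implied quantities look plausible. The example below is deliberately simplified: it samples a clock rate and a root age from independent, hypothetical priors (lognormal and uniform) rather than from a full tree prior, and reports the implied root-to-tip divergence. In practice you would sample from the prior of your actual model in your phylogenetic software.

```python
import math
import random


def prior_predictive(n_draws, rate_median, rate_sigma, root_age_low, root_age_high, seed=1):
    """Draw (rate, root_age, expected_divergence) tuples from the priors only."""
    rng = random.Random(seed)
    draws = []
    for _ in range(n_draws):
        rate = rng.lognormvariate(math.log(rate_median), rate_sigma)  # subs/site/year
        root_age = rng.uniform(root_age_low, root_age_high)           # years
        draws.append((rate, root_age, rate * root_age))
    return draws


def quantile(values, q):
    """Simple empirical quantile by sorting."""
    ordered = sorted(values)
    return ordered[min(len(ordered) - 1, int(q * len(ordered)))]


# Hypothetical priors for a fast-evolving virus sampled over decades.
draws = prior_predictive(1000, rate_median=1e-3, rate_sigma=0.5,
                         root_age_low=10.0, root_age_high=100.0)
divergence = [d[2] for d in draws]
print("95% prior interval for root-to-tip divergence (subs/site):",
      quantile(divergence, 0.025), quantile(divergence, 0.975))
```

If the prior interval implies far more or far less divergence than is observed in the alignment, the priors may be driving the result.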

Quantitative Data on Drivers of Rate Variation

Table 1: Summary of Key Biological Drivers of Molecular Rate Variation

| Driver | Proposed Mechanism | Evidence from Comparative Studies | Key Statistical Method |

|---|---|---|---|

| Generation Time | More rounds of DNA replication per unit time in shorter-lived organisms lead to more replication errors and a higher mutation rate per year [3]. | A negative correlation between generation time and substitution rate at fourfold degenerate sites in mammalian protein sequences [28]. | Phylogenetically independent comparisons and correlation analysis [28]. |

| Metabolic Rate | Higher metabolic activity generates more reactive oxygen species (ROS), leading to increased oxidative DNA damage and a higher mutation rate [3]. | A study on 61 mammal species found no evidence for an effect of metabolic rate on the rate of sequence evolution after accounting for other factors [28]. | Correlation analysis of genetic distances against differences in body mass and metabolic rate [28]. |

| DNA Repair Capacity (DRC) | The efficiency of multiple DNA repair pathways (NER, BER, MMR, etc.) determines the fraction of DNA lesions that become fixed mutations. Inter-individual and inter-species variation in DRC directly affects mutation rate [29] [30]. | Functional assays show significant inter-individual variation in DRC in human populations, and this variation is associated with disease susceptibility (e.g., a 2000-fold higher skin cancer risk in Xeroderma Pigmentosum patients with defective NER) [29] [30]. | Functional DNA repair assays (e.g., comet assay, host-cell reactivation) on cell lines or blood samples from different individuals [29]. |

Experimental Protocols for Key Assays

Protocol 1: Host-Cell Reactivation (HCR) Assay for Measuring Nucleotide Excision Repair (NER) Capacity

Purpose: To functionally measure an individual's cellular proficiency in repairing bulky DNA adducts, such as those induced by UV light, which is primarily handled by the NER pathway [29].

- Sample Collection: Isolate lymphocytes from fresh whole blood samples of study participants.

- Plasmid Preparation: Use a reporter plasmid (e.g., expressing luciferase or GFP) that has been damaged in vitro by a defined dose of UV-C light.

- Cell Transfection: Co-transfect the damaged reporter plasmid and a control undamaged plasmid (e.g., expressing Renilla luciferase) into the isolated lymphocytes.

- Incubation and Repair: Allow cells to incubate for a set period (e.g., 24 hours) to permit repair of the damaged plasmid.

- Reporter Gene Activity Measurement: Lyse the cells and measure the activity of the reporter gene (e.g., luminescence). The activity of the repaired plasmid is normalized to the activity of the control plasmid.

- Data Analysis: The normalized reporter activity is directly proportional to the cell's NER capacity. Results are typically expressed as a percentage of repair activity relative to a reference standard [29].
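The normalization in the last two steps can be written out as a short calculation. This is a sketch under stated assumptions: the function names are made up, the readings are invented luminescence values, and the "reference standard" is treated here as a reference sample measured with the same two plasmids.

```python
def normalized_activity(reporter_signal, control_signal):
    """Reporter activity divided by the co-transfected control plasmid activity."""
    return reporter_signal / control_signal


def percent_repair(sample_reporter, sample_control, reference_reporter, reference_control):
    """Repair activity of a sample as a percentage of the reference standard."""
    sample = normalized_activity(sample_reporter, sample_control)
    reference = normalized_activity(reference_reporter, reference_control)
    return 100.0 * sample / reference


# Hypothetical luminescence readings (arbitrary units).
print(percent_repair(sample_reporter=500, sample_control=1000,
                     reference_reporter=2000, reference_control=1000))  # 25.0
```

Normalizing to the control plasmid corrects for differences in transfection efficiency between samples before the comparison with the reference is made.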

Protocol 2: Phylogenetically Independent Contrasts for Testing Correlations with Life History Traits

Purpose: To test for a correlation between a life history trait (e.g., generation time) and the rate of molecular evolution while accounting for the non-independence of species due to shared evolutionary history [28].

- Data Compilation:

- Genetic Data: Assemble a sequence alignment for a set of genes from multiple species.

- Trait Data: Collect data on the trait of interest (e.g., body mass, generation time) for the same species.

- Phylogeny: Obtain a well-supported, time-calibrated phylogenetic tree for the species.

- Genetic Distance Calculation: Calculate the pairwise genetic distances for specific sequence types (e.g., fourfold degenerate sites) for each pair of species.

- Independent Contrasts: Using specialized software (e.g., functions in R packages like ape or caper), compute phylogenetically independent contrasts for both the genetic distances and the trait values.

- Correlation Analysis: Perform a correlation analysis (e.g., linear regression) on the independent contrasts, not the raw species values. A significant correlation indicates a relationship between the trait and evolutionary rate that is independent of phylogeny [28].
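For readers who want to see what the contrast calculation involves, here is a compact Python sketch of Felsenstein's independent contrasts on a small bifurcating tree, followed by a regression through the origin on the contrasts. It is an illustration, not a substitute for the R packages named above: the tree encoding, the trait values, and the use of a per-species rate value (rather than pairwise distances) are assumptions made for this example.

```python
import math


def independent_contrasts(tree, traits):
    """Standardized contrasts for a bifurcating tree.

    tree: a leaf name (str) or a tuple (left, left_length, right, right_length).
    traits: dict mapping leaf names to trait values.
    """
    contrasts = []

    def visit(node):
        if isinstance(node, str):
            return traits[node], 0.0
        left, v_left, right, v_right = node
        x_left, extra_left = visit(left)
        x_right, extra_right = visit(right)
        v_left += extra_left    # lengthen branches to reflect uncertainty
        v_right += extra_right  # in the estimated ancestral values
        contrasts.append((x_left - x_right) / math.sqrt(v_left + v_right))
        ancestral = (x_left / v_left + x_right / v_right) / (1 / v_left + 1 / v_right)
        return ancestral, v_left * v_right / (v_left + v_right)

    visit(tree)
    return contrasts


def slope_through_origin(x_contrasts, y_contrasts):
    """Regression of y contrasts on x contrasts, forced through the origin."""
    return sum(a * b for a, b in zip(x_contrasts, y_contrasts)) / sum(a * a for a in x_contrasts)


# Hypothetical three-species tree ((A:1, B:1):1, C:2) with made-up values.
tree = (("A", 1.0, "B", 1.0), 1.0, "C", 2.0)
generation_time = {"A": 1.0, "B": 3.0, "C": 5.0}
rate = {"A": 2.0, "B": 6.0, "C": 10.0}

cx = independent_contrasts(tree, generation_time)
cy = independent_contrasts(tree, rate)
print([round(c, 4) for c in cx], round(slope_through_origin(cx, cy), 4))
```

Contrasts have an expected value of zero, which is why the regression is fitted without an intercept.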

Pathway and Workflow Diagrams

Biological Drivers of Mutation Rate

Molecular Clock Troubleshooting Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Software for Molecular Clock and DNA Repair Studies

| Item Name | Type | Primary Function / Application |

|---|---|---|

| BEAST 2 / RevBayes | Software Package | Bayesian evolutionary analysis software. Used for phylogenetic reconstruction, molecular dating, and population dynamics under strict and relaxed clock models [32] [10]. |

| StarBEAST2 | Software Package | A specialized package for BEAST 2 that implements the Multispecies Coalescent (MSC) model to infer species trees from multi-locus data while accounting for incomplete lineage sorting [17]. |

| Reporter Plasmid (e.g., Luciferase) | Molecular Biology Reagent | A plasmid containing a reporter gene used in functional DNA repair assays (e.g., HCR). The plasmid is damaged in vitro, and its functional recovery after transfection into host cells quantifies repair capacity [29]. |

| Comet Assay Kit | Laboratory Assay Kit | A kit for the single-cell gel electrophoresis assay. Used to measure DNA strand breaks at the level of individual cells, providing a direct snapshot of DNA damage and repair capacity [29]. |

| PAML (e.g., MCMCTree) | Software Package | A package of programs for phylogenetic analysis by maximum likelihood. The MCMCTree program is used for Bayesian estimation of divergence times using relaxed molecular clock models [32]. |

The Critical Impact of Model Choice on Divergence Time and Evolutionary Rate Estimates

Frequently Asked Questions

What is the most common source of error in molecular clock dating? Molecular clock model misspecification is a significant source of estimation error. Using an incorrect model of rate variation among lineages (e.g., applying a strict clock when rates vary substantially) can lead to inaccurate divergence time estimates, even with otherwise good data and calibrations [4].

How does calibration strategy impact date estimates? The position and number of calibrations strongly influence results. Shallow calibrations (close to the tips) can cause the overall timescale to be underestimated by up to three orders of magnitude. An effective strategy is to include multiple calibrations and prefer those close to the root of the phylogeny [4] [33].

What is the practical difference between strict and relaxed molecular clocks? Strict molecular clocks assume a constant rate of evolution across all lineages, while relaxed clocks allow rates to vary. Relaxed clocks include uncorrelated models (independent rates per branch) and autocorrelated models (rates change gradually along lineages) [11]. Strict clocks are simpler but often biologically unrealistic for distantly related species.

How can I assess the reliability of my divergence time estimates? In Bayesian analysis, examine the posterior distributions of node ages and their credible intervals. Conduct sensitivity analyses by testing alternative calibration schemes, prior distributions, or clock models. Wide credible intervals, or major shifts in estimates under different assumptions, indicate that the estimates are uncertain or sensitive to modeling choices [11].

Which software is best for divergence time estimation? The choice depends on your data and needs. BEAST focuses on time-calibrated phylogenies with various relaxed clock models. MCMCTree (in PAML) is significantly faster for large datasets while producing comparable estimates. r8s and treePL use penalized likelihood approaches suitable for large phylogenies [11] [34].
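The strict versus relaxed distinction described in this FAQ can be made concrete with a small simulation. The sketch below uses only the Python standard library and assigns rates to a toy chain of five branches under three assumptions: one shared rate (strict), independent lognormal draws per branch (uncorrelated), and a rate that drifts from parent to child (autocorrelated). The function names, the mean rate, and the spread parameters are illustrative assumptions, and the autocorrelated version is simplified to a single lineage rather than a full tree; it is not the parameterization of any particular software package.

```python
import math
import random


def strict_rates(n_branches, mean_rate):
    """Strict clock: every branch shares one rate."""
    return [mean_rate] * n_branches


def uncorrelated_rates(n_branches, mean_rate, sigma, rng):
    """Uncorrelated relaxed clock: each branch rate is an independent
    lognormal draw whose expected value equals mean_rate."""
    mu = math.log(mean_rate) - 0.5 * sigma ** 2
    return [rng.lognormvariate(mu, sigma) for _ in range(n_branches)]


def autocorrelated_rates(n_branches, root_rate, sigma, rng):
    """Autocorrelated relaxed clock along one lineage: each branch rate
    is a lognormal perturbation of the rate on its parent branch."""
    rates, current = [], root_rate
    for _ in range(n_branches):
        current *= math.exp(rng.gauss(0.0, sigma))
        rates.append(current)
    return rates


rng = random.Random(1)  # fixed seed so the sketch is reproducible
strict = strict_rates(5, 1e-3)
ucln = uncorrelated_rates(5, 1e-3, 0.5, rng)   # hypothetical spread
acln = autocorrelated_rates(5, 1e-3, 0.1, rng)  # hypothetical drift

for name, rates in (("strict", strict), ("uncorrelated", ucln),
                    ("autocorrelated", acln)):
    print(name, ["%.2e" % r for r in rates])
```

Under the uncorrelated model, neighbouring branches can have very different rates, whereas under the autocorrelated model each rate stays close to its parent's, which is why the two families can give different date estimates on the same data.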

Troubleshooting Guides

Problem: Inconsistent Results with Different Clock Models

Issue: Divergence time estimates vary substantially when using different molecular clock models.

Diagnosis and Solution:

- Check Rate Variation: Test if your data violates the strict clock assumption using likelihood ratio tests in packages like PAML [11].

- Compare Models: Run analyses under both autocorrelated and uncorrelated relaxed clock models. Use composite credibility intervals that combine results from both approaches for more robust uncertainty estimates [34].

- Validate with Simulations: If possible, simulate data under different clock models to understand how model misspecification affects your specific dataset [4].

Prevention: Always report results from multiple clock models and conduct formal model selection (e.g., AIC/BIC for likelihood-based fits, or marginal-likelihood comparisons in Bayesian analyses) to justify your choice [11].
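The likelihood ratio test mentioned under "Check Rate Variation" can be sketched in a few lines once the two log-likelihoods are available (for example, from a clock-constrained and an unconstrained maximum likelihood fit). The sketch below assumes the standard setup in which the strict clock is nested in the unconstrained model with n − 2 fewer parameters for n taxa; the log-likelihood values and taxon count are hypothetical, and the chi-square tail is computed with the standard library rather than a statistics package.

```python
import math


def chi2_sf(x, df):
    """Upper-tail probability of a chi-square variable with integer df >= 1."""
    if x <= 0:
        return 1.0
    if df % 2 == 0:
        # Even df: exp(-x/2) * sum_{k < df/2} (x/2)^k / k!
        term, total = 1.0, 1.0
        for k in range(1, df // 2):
            term *= (x / 2.0) / k
            total += term
        return math.exp(-x / 2.0) * total
    # Odd df: normal tail plus a finite series in chi = sqrt(x)
    chi = math.sqrt(x)
    tail = math.erfc(chi / math.sqrt(2.0))
    pdf = math.exp(-x / 2.0) / math.sqrt(2.0 * math.pi)
    term, total = chi, 0.0
    for r in range(1, (df - 1) // 2 + 1):
        if r > 1:
            term *= x / (2 * r - 1)
        total += term
    return tail + 2.0 * pdf * total


def strict_clock_lrt(lnl_clock, lnl_free, n_taxa):
    """Return (statistic, df, p-value) for the strict-clock LRT."""
    stat = 2.0 * (lnl_free - lnl_clock)
    df = n_taxa - 2
    return stat, df, chi2_sf(stat, df)


# Hypothetical log-likelihoods for a 10-taxon alignment
stat, df, p_value = strict_clock_lrt(lnl_clock=-5234.7, lnl_free=-5220.1,
                                     n_taxa=10)
print("2*dlnL = %.2f, df = %d, p = %.2e" % (stat, df, p_value))
```

A small p-value indicates that the strict clock is rejected for the dataset, which is a reason to move to a relaxed clock rather than a statement about which relaxed model is best.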

Problem: Unrealistically Old or Young Divergence Times

Issue: Estimated divergence times conflict with established fossil evidence or biogeographic events.

Diagnosis and Solution:

- Audit Calibrations: Ensure calibrations use appropriate probability distributions rather than fixed points. Compare user-specified priors with marginal priors by running analyses without sequence data [4].

- Reposition Calibrations: Move calibrations closer to the root rather than using only shallow calibrations. Deeper calibrations capture a larger proportion of overall genetic variation [4] [33].

- Use Multiple Calibrations: Incorporate several well-justified calibrations to reduce averaging error and improve precision across the tree [4].

Prevention: Consult fossil experts or biogeographic literature for appropriate calibration uncertainties. Use complex geological histories rather than single time points when calibrating with biogeographic events [35].
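To illustrate what a calibration expressed as a probability distribution looks like in practice, the sketch below summarises an offset-lognormal prior, a common shape for fossil calibrations in which the offset acts as a hard minimum age and the lognormal tail allows older ages. The parameterization (offset plus a lognormal defined by `mu` and `sigma` on the log scale) and the numerical values are assumptions for illustration; individual programs may parameterize the same distribution differently.

```python
import math
from statistics import NormalDist


def offset_lognormal_quantile(p, offset, mu, sigma):
    """Quantile of offset + LogNormal(mu, sigma), with mu and sigma
    defined on the log scale."""
    z = NormalDist().inv_cdf(p)
    return offset + math.exp(mu + sigma * z)


def calibration_summary(offset, mu, sigma):
    """Return the 2.5%, 50% and 97.5% quantiles of the calibration prior."""
    return tuple(offset_lognormal_quantile(p, offset, mu, sigma)
                 for p in (0.025, 0.5, 0.975))


# Hypothetical calibration: minimum age 66 Myr from a fossil,
# with most prior mass within a few Myr above that minimum
lower, median, upper = calibration_summary(offset=66.0, mu=1.5, sigma=0.8)
print("95%% prior interval: %.1f to %.1f Myr (median %.1f)"
      % (lower, upper, median))
```

Printing the interval in this way makes it easy to compare the prior you intended with the marginal prior obtained by running the analysis without sequence data.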

Problem: Computationally Prohibitive Run Times

Issue: Bayesian molecular clock analyses take too long to complete or fail to converge.

Diagnosis and Solution:

- Software Selection: For large datasets, consider MCMCTree, which can be much faster than BEAST or MultiDivTime while producing comparable estimates [34].

- Reduce Complexity: Use a fixed topology instead of estimating it simultaneously with divergence times. Start with simpler models before progressing to complex ones [34].

- Computational Optimization: Use parallel processing, lengthen chains incrementally until effective sample sizes are adequate, and check MCMC convergence diagnostics before interpreting results [11].

Prevention: For very large phylogenies (thousands of taxa), consider faster penalized likelihood methods in r8s or treePL for initial explorations [11].
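The convergence checks mentioned above rest on the effective sample size (ESS), which discounts the number of MCMC samples by their autocorrelation. The sketch below is a minimal estimator that sums positive autocorrelations up to the first non-positive lag; it is an assumption-laden illustration, not the estimator used by any specific trace-analysis tool, and the two simulated traces are synthetic.

```python
import random


def effective_sample_size(trace):
    """Estimate ESS as N / (1 + 2 * sum of autocorrelations), truncating
    the sum at the first non-positive autocorrelation."""
    n = len(trace)
    mean = sum(trace) / n
    centered = [x - mean for x in trace]
    var = sum(c * c for c in centered) / n
    if var == 0.0:
        raise ValueError("constant trace: ESS is undefined")
    rho_sum = 0.0
    for lag in range(1, n):
        acov = sum(centered[i] * centered[i + lag]
                   for i in range(n - lag)) / n
        rho = acov / var
        if rho <= 0.0:
            break
        rho_sum += rho
    return n / (1.0 + 2.0 * rho_sum)


rng = random.Random(42)
n = 2000

# Well-mixed chain: independent draws
iid_trace = [rng.gauss(0.0, 1.0) for _ in range(n)]

# Poorly mixed chain: AR(1) process with strong autocorrelation
ar_trace, x = [], 0.0
for _ in range(n):
    x = 0.9 * x + rng.gauss(0.0, 1.0)
    ar_trace.append(x)

ess_iid = effective_sample_size(iid_trace)
ess_ar = effective_sample_size(ar_trace)
print("ESS (independent): %.0f of %d" % (ess_iid, n))
print("ESS (autocorrelated): %.0f of %d" % (ess_ar, n))
```

Both traces contain the same number of samples, but the autocorrelated one carries far less independent information, which is why chain length alone is not a convergence criterion.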

Experimental Protocols and Data

Protocol: Bayesian Molecular Clock Analysis with Multiple Calibrations

This protocol provides a framework for robust divergence time estimation using Bayesian methods [4] [36]:

Sequence Alignment and Quality Control

- Align sequences using MAFFT with guidance from tools like GUIDANCE2 to account for alignment uncertainty

- Remove unreliable alignment columns and assess alignment quality

Model Selection

- Select appropriate substitution models using ProtTest (for protein alignments) or MrModeltest (for nucleotide alignments) based on AIC/BIC criteria

- Compare different clock models (strict, uncorrelated relaxed, autocorrelated relaxed) using model selection tests

Calibration Strategy

- Identify multiple calibration points, prioritizing deeper nodes closer to the root

- Use probability distributions for calibrations rather than fixed points to incorporate uncertainty

- Compare user-specified priors with marginal priors by running analysis without sequence data

Bayesian Analysis

- Run MCMC analyses with at least 50,000 generations as a bare minimum, and extend the chain for larger datasets until convergence criteria are met

- Monitor convergence using effective sample sizes (ESS > 200) and trace plots

- Repeat under different clock models to assess robustness

Sensitivity Analysis

- Test impact of different calibration schemes

- Evaluate effect of alternative prior distributions

- Compare results across different clock models
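The cross-model comparison in the sensitivity step can be summarised numerically. The sketch below assumes that a composite credibility interval is formed by taking the smallest lower bound and the largest upper bound across the relaxed clock models, and it reports how far the median estimates move between models. The node-age values are hypothetical and the helper names are illustrative.

```python
def composite_interval(intervals):
    """Union-style composite: smallest lower bound, largest upper bound."""
    if not intervals:
        raise ValueError("need at least one interval")
    return (min(lo for lo, _ in intervals), max(hi for _, hi in intervals))


def relative_shift(estimates):
    """Range of point estimates divided by their mean."""
    mean = sum(estimates) / len(estimates)
    return (max(estimates) - min(estimates)) / mean


# Hypothetical summaries for one node (Myr): (lower 95%, median, upper 95%)
results = {
    "strict": (48.0, 52.0, 56.5),
    "uncorrelated": (44.2, 55.1, 68.3),
    "autocorrelated": (46.9, 53.4, 61.0),
}

relaxed = [(results[m][0], results[m][2])
           for m in ("uncorrelated", "autocorrelated")]
composite = composite_interval(relaxed)
shift = relative_shift([r[1] for r in results.values()])

print("Composite interval: %.1f to %.1f Myr" % composite)
print("Relative shift in medians across models: %.1f%%" % (100 * shift))
```

Reporting the composite interval alongside the per-model intervals shows readers how much of the stated uncertainty comes from the choice of clock model itself.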

Quantitative Comparison of Relaxed-Clock Methods

Table 1: Performance metrics for relaxed-clock methods based on simulation studies [34]

| Software | Computational Speed | Accuracy (Model Match) | Accuracy (Model Violation) | Best Use Case |

|---|---|---|---|---|

| MCMCTree | Fastest (<2 minutes for small datasets) | 7.5% average difference from true time | 9.4% average difference from true time | Large datasets, exploratory analyses |

| MultiDivTime | Moderate (3x slower than MCMCTree) | 5.1% average difference from true time | 8.9% average difference from true time | Small to medium datasets |

| BEAST | Slowest (orders of magnitude slower) | Similar to MultiDivTime | Similar to MultiDivTime | Complex models, co-estimation of topology and times |

Table 2: Impact of calibration strategy on time estimation accuracy [4]

| Calibration Strategy | Number of Calibrations | Position in Phylogeny | Effect on Time Estimates | Recommendation |

|---|---|---|---|---|

| Single calibration | 1 | Shallow (close to tips) | Underestimation by up to 3 orders of magnitude | Avoid |

| Single calibration | 1 | Deep (close to root) | Moderate accuracy | Acceptable when multiple not possible |

| Multiple calibrations | 3+ | Mixed, including deep nodes | Highest accuracy | Strongly recommended |

| Multiple calibrations | 3+ | Primarily shallow | Moderate to poor accuracy | Avoid |

Workflow Visualization

[Figure: Molecular Clock Analysis Workflow]

The Scientist's Toolkit

Table 3: Essential research reagents and computational tools for molecular clock analysis [11] [37] [36]

| Tool/Resource | Type | Primary Function | Key Features |

|---|---|---|---|

| BEAST | Software Package | Bayesian evolutionary analysis | Implements various relaxed clock models, co-estimation of topology and times |

| MCMCTree | Software Program | Bayesian divergence time estimation | Fast computation, uses approximate likelihood, good for large datasets |

| PAML | Software Package | Phylogenetic analysis by maximum likelihood | Includes MCMCTree, codon models, ancestral reconstruction |

| r8s | Software Program | Divergence time estimation | Penalized likelihood, fast for large trees, requires fixed topology |

| MrBayes | Software Package | Bayesian phylogenetic inference | General-purpose, flexible priors, can implement simple clock models |

| MAFFT | Algorithm | Multiple sequence alignment | Fast and accurate, handles large datasets, multiple alignment strategies |

| ProtTest/MrModeltest | Software Tools | Evolutionary model selection | Automates best-fit model identification using AIC/BIC criteria |

| GUIDANCE2 | Server/Tool | Alignment confidence assessment | Scores alignment reliability, identifies unreliable regions |

Troubleshooting Inaccurate Time Estimates

Implementing Clock Models: A Step-by-Step Guide with BEAST X and Beyond

BEAST X represents a significant advancement in the BEAST (Bayesian Evolutionary Analysis Sampling Trees) platform, providing cross-platform, open-source software for Bayesian phylogenetic, phylogeographic, and phylodynamic inference. This latest version introduces substantial improvements in flexibility and scalability for evolutionary analyses, with particular emphasis on pathogen genomics. The software combines molecular phylogenetic reconstruction with complex trait evolution, divergence-time dating, and coalescent demographics in an efficient statistical inference engine [38].

Two primary themes characterize BEAST X's advancements: the development of state-of-science, high-dimensional models spanning multiple biological and public health domains, and the implementation of novel computational algorithms that significantly accelerate inference across complex, highly structured models. These developments build upon BEAST's established success in integrating sequence, phenotypic, and epidemiological data along time-scaled phylogenetic trees, which has proven crucial for understanding the epidemiology and evolutionary dynamics of rapidly evolving pathogens such as Ebola virus, SARS-CoV-2, and mpox virus [38].

Technical Specifications: Substitution and Clock Models

Advanced Substitution Models

BEAST X incorporates several extensions to existing substitution processes that model additional features affecting sequence changes [38]:

Markov-Modulated Models (MMMs): These constitute a class of mixture models that allow the substitution process to change across each branch and for each site independently within an alignment. MMMs are composed of K substitution models (nucleotide, amino acid, or codon models) of dimension S to construct a KS × KS instantaneous rate matrix used in calculating the observed sequence data likelihood [38].

Random-Effects Substitution Models: These extend standard continuous-time Markov chain (CTMC) models by incorporating additional rate variation, representing the original model as fixed-effect parameters while allowing random effects to capture deviations from the simpler process. This enables more appropriate characterization of underlying substitution processes while retaining the basic structure of biologically or epidemiologically motivated base models [38].
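The KS × KS rate matrix described for Markov-modulated models can be illustrated with a generic construction: K substitution matrices of dimension S sit on the block diagonal, and switching rates between models connect the same character state across blocks. The sketch below uses two Jukes-Cantor-style matrices (K = 2, S = 4) with hypothetical rates. It shows the general Markov-modulated structure only; how BEAST X parameterizes and normalizes the matrix internally is not covered here.

```python
def jc69(rate, size=4):
    """Jukes-Cantor-style rate matrix: total leaving rate `rate`,
    split equally among the other states."""
    off = rate / (size - 1)
    return [[-rate if i == j else off for j in range(size)]
            for i in range(size)]


def markov_modulated_matrix(models, switch):
    """Build a KS x KS generator from K models (each S x S) and a
    K x K table of switching rates between models."""
    k, s = len(models), len(models[0])
    dim = k * s
    q = [[0.0] * dim for _ in range(dim)]
    # Block diagonal: substitutions within each model
    for a in range(k):
        for i in range(s):
            for j in range(s):
                q[a * s + i][a * s + j] = models[a][i][j]
    # Off-diagonal blocks: switch model, keep the same character state
    for a in range(k):
        for b in range(k):
            if a != b:
                for i in range(s):
                    q[a * s + i][b * s + i] = switch[a][b]
                    q[a * s + i][a * s + i] -= switch[a][b]
    return q


slow, fast = jc69(0.5), jc69(2.0)          # hypothetical rates
switch = [[0.0, 0.1], [0.3, 0.0]]          # hypothetical switching rates
q_mmm = markov_modulated_matrix([slow, fast], switch)

print("dimension:", len(q_mmm), "x", len(q_mmm[0]))
print("max |row sum|:", max(abs(sum(row)) for row in q_mmm))
```

Because the state space grows from S to KS, the likelihood calculation becomes correspondingly more expensive, which is consistent with the high computational demand noted for these models.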

Table 1: Key Substitution Models in BEAST X

| Model Type | Key Features | Applications | Computational Considerations |

|---|---|---|---|

| Markov-Modulated Models (MMMs) | Site- and branch-specific heterogeneity; Integrates over candidate substitution processes | Captures different selective pressures over site and time; Bacterial, viral, and plastid genome evolution | High computational demand due to augmented dimensionality; Mitigated through BEAGLE library |

| Random-Effects Substitution Models | Extends CTMC models; Captures additional rate variation; Uses shrinkage priors for regularization | Studies nonreversibility in substitution processes; SARS-CoV-2 sequence analysis | Overparameterization addressed with shrinkage priors; Enables model selection for random effects |

Enhanced Molecular Clock Models

BEAST X provides significant enhancements to molecular clock modeling, moving beyond the traditional strict clock assumption to accommodate various sources of rate heterogeneity [38]:

Time-Dependent Evolutionary Rate Model: Accommodates variation in evolutionary rate through time, a pattern that is particularly prevalent in rapidly evolving viruses with long-term transmission histories. This model uses phylogenetic epoch modeling to specify unique substitution processes throughout evolutionary history, with discretized time intervals determined by boundaries at times T₀ < T₁ < … < Tₘ₋₁ < Tₘ [38].

Relaxed Clock Models: BEAST X improves upon classic uncorrelated relaxed clock models with several new approaches, including a continuous random-effects clock model, a more general mixed-effects relaxed clock model, and an enhanced shrinkage-based local clock model that provides tractable and interpretable inference [38].
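The epoch structure of the time-dependent rate model can be illustrated with a simplified calculation: if each interval between consecutive boundaries carries its own rate, the expected number of substitutions per site along a branch is the sum of rate times duration over the epochs the branch overlaps. The sketch below assumes a single scalar rate per epoch and time measured backward from the present; the boundaries and rates are hypothetical, and the full model allows a distinct substitution process, not just a rate, in each epoch.

```python
def expected_substitutions(t_start, t_end, boundaries, rates):
    """Expected substitutions per site on a branch spanning
    [t_start, t_end], where rates[i] applies between boundaries[i]
    and boundaries[i + 1]."""
    if len(rates) != len(boundaries) - 1:
        raise ValueError("need exactly one rate per epoch")
    if t_end < t_start:
        raise ValueError("t_end must not precede t_start")
    total = 0.0
    for i, rate in enumerate(rates):
        overlap = min(t_end, boundaries[i + 1]) - max(t_start, boundaries[i])
        if overlap > 0:
            total += rate * overlap
    return total


# Hypothetical epochs (years before present) with rates that decline
# with age (substitutions per site per year)
boundaries = [0.0, 10.0, 100.0, 1000.0]
rates = [1e-3, 5e-4, 1e-4]

# A branch running from 5 to 50 years before present crosses two epochs
length = expected_substitutions(5.0, 50.0, boundaries, rates)
print("expected substitutions per site: %.4f" % length)
```

In this example the branch spends 5 years in the first epoch and 40 years in the second, so the same elapsed time contributes differently depending on where in the timescale it falls.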

Table 2: Molecular Clock Models in BEAST X

| Clock Model | Rate Variation Assumption | Key Features | Best Applications |

|---|---|---|---|

| Strict Clock | Constant rate across all branches | Single evolutionary rate parameter; Computationally efficient | Datasets with minimal rate variation; Preliminary analyses |

| Time-Dependent Model | Rate varies through time across all lineages | Epoch-based structure with rate shifts at specified boundaries; Improves node height estimation | Pathogens with long-term transmission history; Foamy virus co-speciation |

| Uncorrelated Relaxed Clock | Rates vary independently among branches | No assumption of rate correlation between branches; Accommodates significant rate heterogeneity | Datasets with substantial among-lineage rate variation |