Validating Adaptive Introgression: A Population Genomics Guide for Biomedical Research

Abstract

Adaptive introgression, the process by which beneficial genetic material is transferred between species or populations, is increasingly recognized as a key mechanism for rapid adaptation. For researchers and drug development professionals, validating these events is crucial for identifying evolutionarily tested functional variants with potential biomedical significance. This article provides a comprehensive guide to the population genetic analyses used to validate adaptive introgression. We cover foundational concepts, current methodological tools, strategies for troubleshooting and optimization, and robust frameworks for statistical validation and comparative analysis. By synthesizing recent advances, this resource aims to equip scientists with the knowledge to confidently identify and interpret adaptive introgression in genomic data, thereby uncovering novel biological insights and potential therapeutic targets.

The What and Why: Defining Adaptive Introgression and Its Evolutionary Impact

In evolutionary genomics, introgression describes the transfer of genetic material from one species into the gene pool of another through repeated backcrossing of hybrids. A critical challenge for researchers is determining whether introgressed sequences provide adaptive advantages or represent neutral gene flow. Adaptive introgression occurs when these foreign alleles confer a fitness benefit and are subsequently favored by natural selection, while neutral introgression involves sequences that have no measurable effect on fitness and whose dynamics are shaped primarily by genetic drift [1].

Distinguishing between these processes is methodologically complex, as both can produce similar genomic signatures in the early stages of lineage sorting. However, accurate identification is crucial for understanding how hybridization contributes to adaptation, species resilience, and evolutionary innovation across diverse taxa, from bacteria and plants to humans [1] [2] [3]. This guide compares the leading population genetic methods and analytical frameworks used to validate adaptive introgression, providing researchers with a practical toolkit for experimental design and data interpretation.

Comparative Frameworks: Methods and Their Applications

Key Methodological Approaches

Table 1: Core Methodological Approaches for Detecting Introgression

| Method Category | Key Methods/Statistics | Underlying Principle | Strengths | Limitations |
|---|---|---|---|---|
| Summary Statistics | D-statistics (ABBA-BABA), FST, dXY, RNDmin, Gmin [4] [5] [6] | Quantifies allele frequency patterns and sequence divergence to detect excess allele sharing between species. | Computationally efficient; good for initial genome scans; some tests (e.g., D-statistic) can use unphased data. | Can be confounded by incomplete lineage sorting (ILS) and variation in mutation rate; less precise in pinpointing exact introgressed loci. |
| Probabilistic Modeling | bgc, IM/IMa2/IMa3, G-PhoCS [4] [5] | Uses explicit population genetic models with coalescent theory to infer demographic history and migration rates. | Can jointly estimate parameters like divergence time and migration rate; more robust to confounding factors like ILS. | Computationally intensive; requires accurate model specification; performance depends on prior distributions. |
| Supervised Learning | Machine Learning Frameworks [4] | Treats introgression detection as a classification problem, trained on simulated genomic data. | High power to detect introgression in complex evolutionary scenarios; can integrate multiple genomic features. | Requires extensive training data and simulations; model generalizability can be a challenge. |

Statistical Tests for Adaptive Introgression

Once introgression is detected, distinguishing adaptive from neutral events requires testing for signatures of natural selection on the introgressed segments.

Table 2: Key Tests for Differentiating Adaptive from Neutral Introgression

| Test | Measurement | Interpretation for Adaptation | Typical Data Requirement |
|---|---|---|---|
| Site Frequency Spectrum (SFS) tests (e.g., via dadi) [5] | Allele frequency distribution. | A skewed SFS in the introgressed region compared to the genomic background suggests selection. | Population allele frequencies. |
| Extended Haplotype Homozygosity (EHH, XP-EHH) [3] | Length of haplotypes without recombination. | Longer haplotypes around the introgressed allele than expected under neutrality indicate a recent selective sweep. | Phased haplotypes. |
| Population Branch Statistic (PBS) [5] | Relative divergence (FST) between populations. | High PBS values in an introgressed region in the recipient population indicate locus-specific rapid differentiation, often due to selection. | Genotype data from two populations and an outgroup. |
| Association with Environmental Variables (e.g., BayPass) [5] | Correlation between allele frequency and environmental factors. | Introgressed alleles whose frequency is strongly associated with specific environmental variables suggest local adaptation. | Genotypes and environmental data (e.g., temperature, altitude). |
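To make the haplotype-based test in Table 2 more concrete, the following is a minimal Python sketch of extended haplotype homozygosity (EHH): the probability that two randomly chosen chromosomes carrying a given core allele are identical over the interval from the core site to a chosen flanking site. The function name `ehh`, the string encoding of haplotypes, and the toy data are illustrative assumptions, not the interface of any published tool.

```python
from collections import Counter
from math import comb

def ehh(haplotypes, core_idx, core_allele, end_idx):
    """EHH for chromosomes carrying `core_allele` at `core_idx`.

    haplotypes: phased haplotypes as equal-length strings of '0'/'1'.
    Returns the fraction of carrier pairs that are identical across
    every site from the core site to `end_idx` (inclusive).
    """
    carriers = [h for h in haplotypes if h[core_idx] == core_allele]
    n = len(carriers)
    if n < 2:
        return 0.0
    lo, hi = sorted((core_idx, end_idx))
    # Group carriers by their extended haplotype over the interval.
    counts = Counter(h[lo:hi + 1] for h in carriers)
    return sum(comb(c, 2) for c in counts.values()) / comb(n, 2)

# Toy phased data (hypothetical): the core site is at index 2.
toy_haplotypes = [
    "01110", "01110", "01110", "01111",  # carry the derived allele '1'
    "10010", "00011", "11000",           # carry the ancestral allele '0'
]

ehh_derived = ehh(toy_haplotypes, core_idx=2, core_allele="1", end_idx=4)
ehh_ancestral = ehh(toy_haplotypes, core_idx=2, core_allele="0", end_idx=4)
```

In this toy example the derived-allele carriers remain more homozygous than the ancestral-allele carriers at the same distance, which is the qualitative pattern a sweep on an introgressed haplotype would be expected to leave. Real analyses would integrate EHH over distance and standardize against the genomic background.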

Experimental Protocols for Validation

A Standard Workflow for Validation

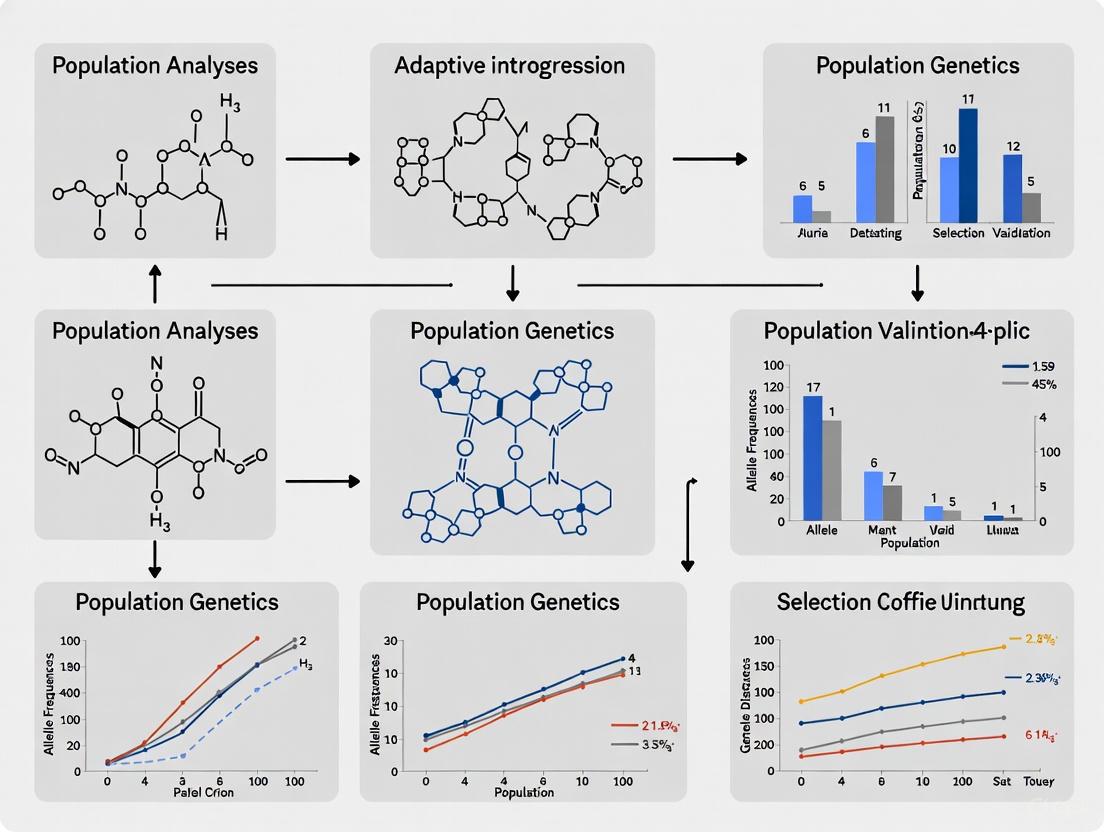

Validating adaptive introgression requires a multi-stage workflow that moves from broad detection to functional confirmation. The diagram below outlines this sequential process.

Detailed Methodological Breakdown

Step 1: Genome-Wide Scan for Introgression. Begin by applying summary statistics such as D-statistics (ABBA-BABA) to genome-wide data to test for a significant signal of introgression between the target species or populations. Following a significant result, use statistics such as fd or RNDmin to scan the genome and identify specific candidate introgressed regions [6]. For a more model-based approach, software such as bgc can simultaneously infer interspecific admixture and identify introgressed genomic blocks [5].
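As a rough illustration of the genome-wide test in Step 1, the sketch below computes Patterson's D from per-site derived-allele frequencies in three ingroup taxa (P1, P2, P3) and an outgroup, then estimates a Z-score with a delete-one-block jackknife. All function names, the block count, and the simulated frequencies are assumptions made for this example; in practice a dedicated tool such as Dsuite would be used on real variant calls.

```python
import math
import random

def d_statistic(sites):
    """Patterson's D = (sum ABBA - sum BABA) / (sum ABBA + sum BABA).

    sites: iterable of (p1, p2, p3, o) derived-allele frequencies.
    """
    abba = baba = 0.0
    for p1, p2, p3, o in sites:
        abba += (1 - p1) * p2 * p3 * (1 - o)
        baba += p1 * (1 - p2) * p3 * (1 - o)
    total = abba + baba
    return (abba - baba) / total if total > 0 else 0.0

def block_jackknife(sites, n_blocks=10):
    """Return (D, standard error, Z) using equal-sized contiguous blocks."""
    size = len(sites) // n_blocks
    blocks = [sites[i * size:(i + 1) * size] for i in range(n_blocks)]
    d_full = d_statistic([s for b in blocks for s in b])
    # Recompute D with each block left out in turn.
    leave_one_out = [
        d_statistic([s for j, b in enumerate(blocks) if j != i for s in b])
        for i in range(n_blocks)
    ]
    mean = sum(leave_one_out) / n_blocks
    variance = (n_blocks - 1) / n_blocks * sum(
        (d - mean) ** 2 for d in leave_one_out
    )
    se = math.sqrt(variance)
    z = d_full / se if se > 0 else float("inf")
    return d_full, se, z

# Hypothetical toy data: P2 frequencies are shifted toward P3,
# mimicking gene flow between P3 and P2.
random.seed(1)
toy_sites = []
for _ in range(2000):
    p3 = random.random()
    p1 = random.random() * 0.5
    p2 = min(1.0, p1 + 0.2 * p3)
    toy_sites.append((p1, p2, p3, 0.0))

d_value, d_se, d_z = block_jackknife(toy_sites)
```

A positive D with a large Z-score indicates excess allele sharing between P2 and P3. Blocks should be large enough to span linkage disequilibrium, so the equal-sized site blocks used here stand in for the fixed-length genomic windows a real analysis would use.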

Step 2: Testing for Signatures of Selection. Apply tests for natural selection to the candidate introgressed regions. These include:

- Haplotype-based tests (e.g., XP-EHH): To detect unusually long haplotypes indicative of a recent selective sweep [3].

- Differentiation-based tests (e.g., FST, PBS): To identify loci with exceptionally high divergence between populations, suggesting local adaptation [7].

- Frequency-based tests: To detect shifts in the site frequency spectrum that deviate from neutral expectations.
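The differentiation-based tests listed above can be sketched as follows. This example computes Hudson's FST as a ratio of averages across sites and combines three pairwise FST values into a Population Branch Statistic, PBS = (T_AB + T_AC - T_BC) / 2 with T = -ln(1 - FST), for a focal population A, a related population B, and an outgroup C. The function names, sample sizes, and allele frequencies are hypothetical and chosen only for illustration.

```python
import math

def hudson_fst(p1, p2, n1, n2):
    """Hudson's FST estimator, summed over sites (ratio of averages).

    p1, p2: per-site allele frequencies in the two populations.
    n1, n2: number of sampled chromosomes in each population.
    """
    num = den = 0.0
    for a, b in zip(p1, p2):
        num += (a - b) ** 2 - a * (1 - a) / (n1 - 1) - b * (1 - b) / (n2 - 1)
        den += a * (1 - b) + b * (1 - a)
    return num / den if den > 0 else 0.0

def branch_length(fst, eps=1e-9):
    """Transform FST into an additive divergence measure T = -ln(1 - FST)."""
    fst = min(max(fst, 0.0), 1.0 - eps)  # clip negative estimates and FST = 1
    return -math.log(1.0 - fst)

def pbs(fst_ab, fst_ac, fst_bc):
    """Branch length specific to population A."""
    return (branch_length(fst_ab) + branch_length(fst_ac)
            - branch_length(fst_bc)) / 2.0

# Hypothetical allele frequencies for one candidate window (3 SNPs),
# with 40 sampled chromosomes per population.
freq_a = [0.90, 0.80, 0.85]   # focal (recipient) population
freq_b = [0.10, 0.20, 0.15]   # closely related population
freq_c = [0.10, 0.10, 0.20]   # outgroup population
n = 40

fst_ab = hudson_fst(freq_a, freq_b, n, n)
fst_ac = hudson_fst(freq_a, freq_c, n, n)
fst_bc = hudson_fst(freq_b, freq_c, n, n)

pbs_a = pbs(fst_ab, fst_ac, fst_bc)   # branch leading to A
pbs_b = pbs(fst_ab, fst_bc, fst_ac)   # branch leading to B
```

A window with a much larger PBS for A than for B is a candidate for population-specific change. Significance would normally be judged against the genome-wide distribution of PBS rather than any fixed cutoff.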

Step 3: Functional Annotation and Phenotypic Validation. Annotate the candidate regions for genes and regulatory elements using databases and tools like ANNOVAR [5]. Look for enrichment in functional categories related to environmental adaptation (e.g., immunity, metabolism, reproduction) [3]. The strongest evidence comes from directly linking the introgressed allele to a fitness-enhancing phenotype, such as through genotype-phenotype association studies or experimental validation in model systems [7].

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 3: Key Software and Analytical Tools

| Tool Name | Primary Function | Application in Introgression Studies |
|---|---|---|
| PLINK [5] | Whole-genome association analysis. | Quality control (QC) of SNP data, basic population structure analysis. |
| ADMIXTURE [5] | Estimates individual ancestries from SNP data. | Inferring global ancestry proportions and identifying potential admixed individuals. |
| Dsuite [5] | Calculates D-statistics and related metrics. | Performing ABBA-BABA tests to detect genome-wide signal of introgression. |
| bgc [5] | Bayesian estimation of genomic clines. | Modeling the probability of introgression along the genome and estimating hybrid index. |
| ANGSD [5] | Analysis of next-generation sequencing data. | Working with NGS data (e.g., low-coverage sequencing) to estimate allele frequencies and perform selection scans. |
| HybPiper [5] | Extracts target sequences from HTS data. | Assembling specific genes (e.g., from hybrid capture data) for phylogenetic analysis in hybrid zones. |
| Relate [3] | Inferring ancestral recombination graphs. | Dating introgression events and detecting selection on specific haplotypes. |

Genomic Landscapes and Evolutionary Implications

The co-occurrence of introgression and divergence forces, such as autosomal introgression alongside islands of differentiation in sex-linked chromosomes, demonstrates that these processes are not mutually exclusive [1]. Adaptive introgression can facilitate evolutionary leaps, allowing species to rapidly acquire complex traits that would take much longer to evolve through de novo mutation [1]. Documented cases include:

- Immunity and adaptation to high altitude in modern humans from archaic hominins like Denisovans and Neanderthals [3].

- Environmental stress tolerance in pines, where adaptive introgression enables survival in marginal peat bog habitats [7].

- Core genome evolution in bacteria, where introgression, though not blurring species borders, substantially contributes to diversification [2] [8].

Understanding these dynamics is paramount for applications in conservation biology, where managed gene flow could potentially aid species adaptation in rapidly changing environments, and in biomedical research, where archaic introgression in genes related to reproduction can influence modern human disease risk [1] [3].

Adaptation is a cornerstone of evolutionary biology, but the sources of genetic variation that fuel it can vary significantly. While de novo mutations—new genetic variations arising within a population—have long been the focus of adaptive theory, growing genomic evidence reveals that standing genetic variation and adaptive introgression often provide faster evolutionary pathways. Standing genetic variation refers to pre-existing alleles segregating within a population, while adaptive introgression involves the incorporation of beneficial alleles from closely related species or populations through hybridization.

These alternative sources bypass the waiting time for new mutations to arise and can start at higher initial frequencies, dramatically accelerating adaptive processes [9]. This review synthesizes evidence from population genetics, experimental evolution, and genomic analyses to compare the efficacy, dynamics, and outcomes of adaptation from these distinct genetic sources, providing a framework for researchers investigating evolutionary mechanisms in natural populations, model organisms, and biomedical contexts.

Comparative Analysis of Adaptive Mechanisms

The table below summarizes the key characteristics of the three primary sources of adaptive variation.

Table 1: Comparative Analysis of Adaptive Genetic Variation Sources

| Feature | De Novo Mutation | Standing Genetic Variation | Adaptive Introgression |
|---|---|---|---|
| Origin of Alleles | New mutations arising within the population [9] | Pre-existing neutral or mildly deleterious alleles in the population [9] | Alleles introduced via hybridization from related species/populations [1] |
| Initial Frequency (p₀) | Very low (1/2N) [9] | Can be intermediate [9] | Variable, can be moderate depending on hybridization rate [1] |
| Waiting Time for Variant | Can be long, limiting the speed of adaptation [9] | No waiting time; alleles are immediately available [9] | No waiting time for novel variants; immediate acquisition [1] |
| Fixation Probability | Lower, especially for recessive alleles (Haldane's Sieve) [9] | Higher, less affected by dominance [10] [9] | Higher, facilitated by higher initial frequency and selective advantage [1] |
| Typical Genetic Signature | "Hard" selective sweep [9] | "Soft" sweep [9] | "Soft" sweep or identifiable introgressed haplotype [1] [3] |
| Phenotypic Effect Size | Often involves alleles of larger effect [10] | Proceeds by fixation of more alleles of small effect [10] | Can involve alleles of large effect, enabling evolutionary leaps [1] |

Advantages of Standing Variation and Introgression Over De Novo Mutation

The Critical Role of Initial Frequency and Population Size

The evolutionary trajectory of an adaptive allele is profoundly shaped by its starting point. De novo mutations originate at a frequency of just 1/2N (where N is the population size), making them highly vulnerable to loss by genetic drift, even when beneficial [9]. In contrast, alleles from standing genetic variation may have drifted to intermediate frequencies before the selection pressure changes, while introgressed alleles can enter a population at moderate frequencies depending on hybridization rates. This higher initial frequency provides a crucial buffer against stochastic loss and allows natural selection to act more efficiently [1] [9].
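The effect of starting frequency can be illustrated with Kimura's diffusion approximation for the fixation probability of an allele under genic (additive) selection, u(p₀) = (1 - e^(-4·Ne·s·p₀)) / (1 - e^(-4·Ne·s)). The sketch below is a simplified illustration: it assumes Ne equals the census size N, a constant selection coefficient s, and arbitrary example values for N, s, and the starting frequencies.

```python
import math

def fixation_probability(p0, s, ne):
    """Kimura's diffusion approximation for genic selection.

    p0: initial allele frequency; s: selection coefficient;
    ne: effective population size (diploid).
    """
    if s == 0:
        return p0  # neutral case: fixation probability equals frequency
    # expm1 keeps precision when the exponent is close to zero.
    return math.expm1(-4 * ne * s * p0) / math.expm1(-4 * ne * s)

N = 10_000          # assumed population size (Ne = N for simplicity)
s = 0.01            # assumed selective advantage

p_de_novo = fixation_probability(1 / (2 * N), s, N)   # single new copy
p_introgressed = fixation_probability(0.01, s, N)     # 1% after hybridization
p_standing = fixation_probability(0.05, s, N)         # 5% standing variant

for label, prob in [("de novo", p_de_novo),
                    ("introgressed", p_introgressed),
                    ("standing", p_standing)]:
    print(f"{label:>12}: P(fix) = {prob:.4f}")
```

Under these assumed values, a single new copy fixes with probability close to 2s, whereas an allele that starts at even a few percent is almost certain to fix. This is the buffer against stochastic loss described above.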

The effective population size (Ne) further modulates this dynamic. Populations with large Ne generate more mutations and are less affected by genetic drift, which increases the efficacy of selection on weakly beneficial alleles. Small populations, by contrast, have limited mutational input and stronger drift, so they are particularly reliant on standing variation or introgression for rapid adaptation: they cannot afford the long waiting times for new beneficial mutations [9].
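A back-of-the-envelope calculation shows why waiting time matters most in small populations. In a diploid population of size N, beneficial mutations at a locus arise at a rate of about 2·N·μ_b per generation, and each escapes loss by drift with probability of roughly 2s, so the expected wait for a successful mutation is about 1 / (4·N·μ_b·s) generations. The sketch below applies this approximation. The beneficial mutation rate `mu_b`, the selection coefficient, and the population sizes are assumed values, and the 2s establishment probability holds only for small s in large populations.

```python
def expected_wait_generations(n, mu_b, s):
    """Approximate generations until a beneficial mutation that escapes drift arises.

    n: diploid population size (assumed equal to Ne);
    mu_b: beneficial mutation rate per locus per generation;
    s: selective advantage (establishment probability taken as ~2s).
    """
    return 1.0 / (4.0 * n * mu_b * s)

mu_b = 1e-8   # assumed beneficial mutation rate at the locus
s = 0.01      # assumed selective advantage

waits = {n: expected_wait_generations(n, mu_b, s)
         for n in (1_000, 100_000, 1_000_000)}

for n, generations in waits.items():
    print(f"N = {n:>9,}: ~{generations:,.0f} generations")
```

With these assumptions the expected wait shrinks in proportion to population size, from millions of generations at N = 1,000 to a few thousand at N = 1,000,000. An allele supplied by standing variation or introgression skips this wait entirely.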

Speed and Evolutionary Potential

The most significant advantage of pre-existing variation is its potential for faster adaptation.

- No Waiting Time: Populations do not need to wait for a beneficial mutation to randomly occur; the adaptive alleles are already present [9].

- Larger Phenotypic Trajectories: Research on quantitative traits shows that populations adapting from standing genetic variation can traverse larger distances in phenotype space, especially when the rate of environmental change is fast [10].

- Evolutionary Leaps: Adaptive introgression can introduce entirely new functional variants that were not present in the recipient population's gene pool, allowing for evolutionary leaps that bypass intermediate steps [1]. This is exemplified by the adaptation of modern humans to new environments outside Africa through archaic introgression [3] and the acquisition of stress resilience genes in spruce trees [11].

Genetic Architecture and Pleiotropy

The genetic basis of adaptation differs markedly between these sources. Adaptive-walk models focusing on de novo mutations often predict the fixation of alleles with relatively large phenotypic effects. In contrast, adaptation from standing variation typically proceeds by the fixation of more alleles of small effect [10]. This polygenic architecture may allow for finer-tuning to complex environmental gradients. Furthermore, standing variation provides a pool of alleles that have already been "tested" in the genomic background, potentially filtering out variants with severe deleterious pleiotropic effects that might be common among new random mutations [9].

Experimental Evidence and Methodologies

Experimental Evolution with Microbial Models

Microbial evolution experiments provide a powerful window into real-time adaptive dynamics. A long-term experiment with Escherichia coli evolving under glucose limitation vividly demonstrated the phenomenon of extreme parallelism and clonal interference when mutational input is high [12]. Researchers used chemostats to maintain a constant selective pressure (glucose limitation) for 300-500 generations. Through whole-genome, whole-population sequencing every 50 generations and deep sequencing of 96 clones at peak diversity, they cataloged 3346 de novo mutations.

- Findings: Mutations enhancing glucose uptake (e.g., in operons such as mal and lamB) rose first.

- Observation: Despite many independent mutations targeting the same small set of genes, very few reached fixation due to competition between lineages carrying different beneficial mutations [12].

- Interpretation: This illustrates a scenario where de novo mutations are abundant but their path to fixation is slow and inefficient compared to a scenario where a pre-adapted allele is already available at a higher frequency.

Table 2: Key Research Reagents and Analytical Tools for Studying Adaptation

| Reagent / Tool | Function/Application |
|---|---|
| Chemostat/Culture System | Maintains constant selective pressure (e.g., nutrient limitation) for long-term experimental evolution [12]. |
| Whole-Genome, Whole-Population Sequencing | Identifies and tracks the frequency of de novo mutations across a population over time [12]. |
| Clonal Sequencing (e.g., 96+ clones) | Determines haplotype phase and reveals which mutations co-occur on the same lineage [12]. |
| Population Genetic Statistics (FST, EHH) | Detects genomic signatures of selection, such as localized reduction of diversity or extended haplotypes [3] [9]. |
| Ancestral Recombination Graph (ARG) Software | Reconstructs the genealogical history of haplotypes to infer past selection and introgression events [3]. |
| SPrime / map_arch | Identifies and characterizes segments of archaic introgression in modern genomes [3]. |

Population Genomic Analyses of Introgression

Population genomic analyses in natural populations provide compelling evidence for adaptive introgression. The workflow for detecting and validating these events typically involves a multi-step process, as illustrated in the diagram below.

A study on archaic introgression in modern humans followed this logic. Researchers identified 47 archaic segments overlapping reproduction-associated genes that were present at frequencies over 40% in certain populations—far higher than the genome-wide average of <2% Neanderthal ancestry [3]. They then refined these to 11 core haplotypes and applied selection tests (EHH, FST, Relate). Three of these, including the AHRR gene, showed strong signals of positive selection [3]. Functional annotation revealed that many archaic alleles were expression quantitative trait loci (eQTLs) regulating genes in reproductive tissues, linking the introgressed variation to phenotypic consequences.

Quantitative Genetic Models of Standing Variation

Theoretical models studying the evolution of a quantitative trait under a gradually changing environment (e.g., a moving optimum) quantify the advantages of standing variation. These models use analytical approximations and individual-based simulations to track the distribution of phenotypic effects of alleles fixed during adaptation [10]. The key finding is that compared to a scenario reliant solely on new mutations, adaptation from standing variation involves a greater number of fixed alleles with smaller individual effects. This allows the population to maintain a smaller lag behind the optimum and achieve a greater total adaptive change, especially under rapid environmental change [10]. The diagram below visualizes this core concept.

Implications for Drug Development and Disease Research

Understanding these evolutionary mechanisms has practical implications for disease research and therapeutic development.

- Antibiotic and Antiviral Resistance: Pathogen populations often leverage standing genetic variation to rapidly evolve resistance under drug pressure. Similarly, the identification of adaptively introgressed genes in humans, such as those linked to immune function [3] or reproductive phenotypes [3], can reveal novel disease mechanisms and drug targets.

- Cancer Therapeutics: Tumor evolution mirrors evolutionary processes, with chemotherapy acting as a strong selective pressure. The presence of pre-existing resistant clones (standing variation) in a heterogeneous tumor can lead to faster relapse than resistance arising from new mutations during treatment. This underscores the need for combination therapies that target multiple pathways simultaneously.

- Identifying Drug Targets: Archaic alleles identified through introgression scans have been linked to conditions like prostate cancer (protective effects) and endometriosis [3]. These natural "experiments" highlight genes and pathways with critical biological functions, validating them as promising candidates for therapeutic intervention.

The growing body of evidence from theoretical models, experimental evolution, and population genomics solidifies the conclusion that standing genetic variation and adaptive introgression provide a faster, more efficient path to adaptation than de novo mutations alone. The higher initial frequency of pre-existing alleles, their availability without a waiting period, and their tendency to have fewer deleterious pleiotropic effects collectively enable more rapid evolutionary responses, especially in small populations or under fast-changing environmental conditions. For researchers in drug development, acknowledging these pathways is crucial for understanding the evolution of drug resistance and for identifying critically important genes through the analysis of natural genomic variation. Future research will continue to refine our ability to detect these signals and unravel the complex interplay of evolutionary forces that shape adaptive outcomes.

Adaptive introgression, the natural process by which beneficial genetic material is transferred from one species or population to another through interbreeding and backcrossing, serves as a crucial mechanism for rapid evolution [1]. This phenomenon allows recipient populations to acquire advantageous traits more quickly than through de novo mutation, providing a genetic toolkit for adapting to new environmental pressures, pathogens, and climatic challenges [1] [13]. Although introgression was once regarded as a maladaptive process that could hinder divergence, molecular evidence has established adaptive introgression as a significant driver of evolutionary innovation across diverse taxa, from bacteria to mammals [1]. This review synthesizes key biological examples of adaptive introgression, comparing its functional roles in human immunity and crop resistance, while detailing the experimental approaches and research tools that enable scientists to validate and harness this evolutionary phenomenon.

Documented Examples Across Biological Systems

Adaptive Introgression in Human Evolution

Research has uncovered compelling evidence of adaptive introgression from archaic hominins into modern human populations, with several introgressed alleles contributing to immune function and reproductive fitness.

Table 1: Examples of Adaptive Introgression in Human Populations

| Introgressed Gene/Region | Archaic Source | Functional Role | Population | Key Evidence |
|---|---|---|---|---|
| Immune-related genes | Neanderthal/Denisovan | Pathogen defense | Non-Africans | Statistical excess of archaic ancestry in immune loci [1] [3] |
| EPAS1 | Denisovan | High-altitude adaptation | Tibetans | High-frequency haplotype with demonstrated fitness advantage [14] |
| PGR | Neanderthal | Fertility enhancement | Europeans | Haplotype associated with reduced miscarriages and pregnancy bleeding [3] |
| AHRR | Neanderthal | Reproductive function | Finnish | Multiple selection tests (EHH, FST, Relate) indicate positive selection [3] |
| FLT1 | Neanderthal | Reproductive function | Peruvian | Signature of positive selection in core haplotype [3] |

The EPAS1 gene represents a paradigmatic example, where a variant introgressed from Denisovans into the ancestors of modern Tibetans reached high frequency through positive selection for survival at high altitudes [14]. Parallel evolution is observed in Tibetan Mastiffs, which acquired a different high-frequency EPAS1 variant through introgression from Tibetan wolves, demonstrating convergent evolutionary adaptation to the same environmental challenge [14].

Recent research has also revealed significant archaic introgression in genes associated with human reproduction. A 2025 study identified 47 archaic segments overlapping reproductive genes that reached frequencies 20 times higher than typical introgressed archaic DNA, with three core haplotypes (PNO1-PPP3R1, AHRR, and FLT1) showing strong signatures of positive selection in specific human populations [3]. Notably, the AHRR region contained 10 variants in the top 1% of the genome-wide distribution for selection statistics, representing the strongest candidate for adaptive introgression in this gene set [3].

Crop Resistance and Environmental Adaptation

In agricultural systems, adaptive introgression from wild relatives has provided cultivated species with crucial resistance traits and environmental resilience.

Table 2: Examples of Adaptive Introgression in Crop Species

| Crop System | Wild Relative | Trait Acquired | Key Genes/Regions | Application |
|---|---|---|---|---|
| Chinese Wingnuts (Pterocarya) | P. hupehensis, P. macroptera | Environmental adaptation | TPLC2, CYCH;1, LUH, bHLH112, GLX1 | Adaptation to heterogeneous mountain environments [15] |
| Various crops | Crop wild relatives | Disease resistance | Multiple R genes and QTLs | Breeding for disease resistance [13] |
| Various crops | Crop wild relatives | Abiotic stress tolerance | Multiple genomic regions | Climate-resilient varieties [13] |

Research on three species of Chinese wingnuts (Pterocarya) revealed how past introgression between P. hupehensis and P. macroptera promoted environmental adaptation to different elevational niches in the Qinling-Daba Mountains [15]. The introgressed regions were found to contain lower genetic load and higher genetic diversity compared to the rest of the genome, while being situated in areas of minimal genetic divergence with elevated recombination rates [15]. Candidate genes within these introgressed regions included TPLC2, CYCH;1, LUH, bHLH112, GLX1, TLP-3, and ABC1, all related to environmental adaptation [15].

The strategic value of wild relatives as sources of adaptive alleles is particularly high for domesticated species, which typically harbor reduced genetic diversity compared to their wild counterparts due to domestication bottlenecks and intensive breeding [13]. For example, wheat has lost more than 70% of the diversity present in its wild progenitor, wild emmer, which carries significant diversity for biotic and abiotic resistances [13].

Methodological Approaches for Detection and Validation

Genomic Detection Frameworks

The detection of adaptive introgression requires distinguishing regions that have introgressed and undergone positive selection from those that introgressed through neutral processes. Several computational approaches have been developed for this purpose:

Figure 1: Workflow for Detecting Adaptive Introgression

Population Genomic Scans utilize summary statistics that compare allele frequencies and haplotype patterns between populations. Methods like f4-ratio and D-statistics identify excess ancestry from donor populations, while haplotype-based methods (EHH, iHS) detect signatures of recent positive selection [14] [3]. These approaches successfully identified the adaptively introgressed EPAS1 haplotype in Tibetans and numerous immune genes in non-African populations [14].

Convolutional Neural Networks (CNNs) represent a more recent innovation that leverages machine learning to identify adaptive introgression. The genomatnn framework uses CNNs trained on simulated genomic data to distinguish regions evolving under adaptive introgression from those evolving neutrally or experiencing selective sweeps without introgression [14]. This method demonstrates 95% accuracy on simulated data, with only moderate decreases in accuracy when analyzing unphased genomes or in the presence of heterosis [14].

Multi-method validation strengthens conclusions about adaptive introgression. For example, the study of archaic introgression in human reproductive genes applied EHH, FST, and Relate selection tests to identify the strongest candidates, with AHRR showing the highest number of variants at the top 1% of the genome-wide distribution for Relate's statistic [3].
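The "top 1% of the genome-wide distribution" criterion can be expressed as a simple empirical outlier count. The sketch below takes a genome-wide list of selection scores, finds the score that marks the top 1%, and counts how many variants in a candidate region meet or exceed it. The function names and the synthetic scores are assumptions for illustration and do not reproduce the cited study's data or exact procedure.

```python
def empirical_threshold(genome_scores, top_fraction=0.01):
    """Smallest score that still falls within the top `top_fraction` genome-wide."""
    ranked = sorted(genome_scores, reverse=True)
    k = max(1, int(len(ranked) * top_fraction))
    return ranked[k - 1]

def count_outliers(region_scores, genome_scores, top_fraction=0.01):
    """Number of region variants scoring at or above the genome-wide cutoff."""
    cutoff = empirical_threshold(genome_scores, top_fraction)
    return sum(1 for score in region_scores if score >= cutoff)

# Synthetic example: 1,000 genome-wide scores and one candidate region.
genome_scores = list(range(1000))          # stand-in for a selection statistic
candidate_region = [995, 990, 500, 999]    # hypothetical variants in one haplotype

cutoff = empirical_threshold(genome_scores)
n_outliers = count_outliers(candidate_region, genome_scores)
print(f"cutoff = {cutoff}, outlier variants in region = {n_outliers}")
```

Running the same count for each statistic (for example EHH-based, FST-based, and genealogy-based scores) and comparing candidate regions gives a transparent way to rank loci, although an empirical cutoff by itself does not establish statistical significance.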

Experimental Protocols for Validation

Population Genetic Analysis Protocol:

- Sample Collection: Collect genomic data from recipient population, putative donor population(s), and outgroup species

- Introgression Scan: Identify putative introgressed regions using methods like SPrime with multiple validation sources [3]

- Selection Testing: Apply multiple selection tests (EHH, FST, Relate) to identify signatures of positive selection [3]

- Core Haplotype Definition: Filter to regions where maximum archaic allele frequency variants overlap genes of interest [3]

- Functional Annotation: Identify potential functional consequences of introgressed variants (eQTL mapping, protein-coding changes) [3]

- Phenotypic Correlation: Examine associations between introgressed alleles and phenotypic traits in biobank data [3]

Cross-Species Validation examines parallel evolution across taxonomic groups. The independent adaptive introgression of EPAS1 in both humans and dogs provides compelling evidence for its role in high-altitude adaptation [14]. Similarly, detecting the same introgressed haplotype in multiple populations subjected to similar selective pressures strengthens the case for adaptation.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Resources for Studying Adaptive Introgression

| Resource Category | Specific Tools/Resources | Application | Key Features |
|---|---|---|---|
| Genomic Data | 1000 Genomes Project, gnomAD, EBI Biobanks | Population frequency analysis | Diverse population representation, extensive phenotyping |
| Archaic Genomes | Altai Neanderthal, Vindija Neanderthal, Denisova | Reference donor genomes | High-coverage sequences from multiple archaic individuals [3] |
| Analysis Tools | SPrime, map_arch, Relate, genomatnn | Introgression detection and selection testing | Specialized for archaic introgression analysis [3] [14] |
| Simulation Frameworks | stdpopsim, SLiM | Training data generation, demographic modeling | Forward-time simulation with selection modules [14] |
| Visualization | Saliency maps, Ancestral Recombination Graphs | Feature interpretation, evolutionary history | Highlights regions driving CNN predictions [14] |

The genomatnn pipeline represents a particularly advanced tool, providing pre-trained CNNs as well as a framework for training new networks on specific evolutionary scenarios [14]. The pipeline interfaces with a selection module in the stdpopsim framework, using the forward-time simulator SLiM to generate training data [14].

For crop researchers, multi-omics integration using machine learning approaches shows promise for predicting disease resistance mechanisms by combining genomic, transcriptomic, epigenomic, proteomic, and metabolomic data [16]. These approaches can capture the nonlinear relationships prevalent in high-dimensional data better than traditional statistical methods [16].

Comparative Analysis and Research Implications

Commonalities and Divergences Across Systems

Despite fundamental biological differences between human and crop systems, the patterns and processes of adaptive introgression show remarkable parallels:

Shared Characteristics:

- Balancing Selection Forces: Adaptive introgression frequently co-occurs with forces of divergence, as when autosomal introgression is found alongside islands of differentiation in sex-linked chromosomes [1]

- Environmental Mediation: Environmental conditions frequently shape the path of evolutionary processes regardless of structural complexity [1]

- Genomic Distribution: Introgressed regions often show distinct genomic distributions, with deserts of introgression in some regions and concentrated ancestry in others [3]

System-Specific Considerations:

- Human Research leverages naturally occurring archaic introgression with precise dating of admixture events (40,000-60,000 years ago) [3]

- Crop Science often combines analysis of natural introgression with intentional breeding interventions, accelerating the utilization of wild relative diversity [13]

- Timescales differ substantially, with human examples unfolding over tens of thousands of years, while crop examples may span centuries or less

Research Applications and Future Directions

Understanding adaptive introgression has direct applications across multiple domains:

Human Health Implications: Identifying adaptively introgressed variants informs our understanding of disease risk and evolutionary medicine. For example, archaic alleles overlapping an introgressed segment on chromosome 2 were found to be protective against prostate cancer [3]. Similarly, introgressed immune genes may influence susceptibility to infectious diseases and autoimmune disorders [1] [17].

Agricultural Innovation: Harnessing adaptive introgression from crop wild relatives provides a pathway for developing climate-resilient varieties. Screening wild introgression already present in cultivated gene pools represents an effective strategy for uncovering diversity relevant for crop adaptation [13]. This approach is particularly valuable for addressing challenges like drought tolerance, disease resistance, and nutritional enhancement.

Conservation Biology: Understanding how gene flow facilitates adaptation to changing environments informs species management strategies, particularly for endangered species facing rapid climate change [15].

Methodological Advances: Future research will benefit from improved integration of multi-omics data, machine learning approaches, and functional validation. The application of CNNs and other deep learning methods represents just the beginning of AI-driven approaches to evolutionary genetics [14] [16].

The quest for novel, functionally validated alleles represents a central challenge in modern biomedical research and therapeutic development. Within the wild gene pools of closely related species and populations lies a vast, untapped reservoir of genetic variation, shaped by millennia of natural selection. Adaptive introgression—the process by which beneficial genetic material is transferred between species or populations through hybridization and backcrossing—serves as a powerful evolutionary mechanism that pre-filters this variation for functional relevance [1]. This process can introduce entire functional gene modules with established phenotypic effects, bypassing the need to traverse intermediate evolutionary stages and effectively performing "natural gene editing" on a population scale [1].

The biomedical imperative to harness these wild alleles stems from several critical advantages they offer over synthetic approaches or de novo mutations. Introgressed alleles often arrive as complete haplotypes with established epistatic relationships, having been tested in complex genetic backgrounds similar to those of modern humans. Evidence increasingly indicates that beneficial alleles introgress more readily than neutral ones, providing a curated set of variants with enhanced potential for therapeutic applications [1]. Furthermore, adaptive introgression can drive evolutionary leaps by facilitating rapid adaptation to environmental pressures, a property with significant implications for understanding genetic resilience and susceptibility factors in human populations [1].

This guide provides a comprehensive comparison of the experimental and computational frameworks essential for validating and leveraging these naturally occurring functional alleles, with direct relevance to drug target identification, disease model development, and therapeutic innovation.

Comparative Analysis of Adaptive Introgression Detection Methods

The accurate identification of adaptively introgressed genomic regions requires sophisticated computational methods that can distinguish genuine signals from background noise. The table below compares the performance characteristics of three established detection frameworks when applied to evolutionary scenarios with varying divergence times and migration histories, as evaluated in a 2025 performance assessment [18].

Table 1: Performance comparison of adaptive introgression detection methods across different evolutionary scenarios

| Method | Core Approach | Reported Precision | Optimal Application Context | Key Limitations |

|---|---|---|---|---|

| Genomatnn [14] [18] | Convolutional Neural Network (CNN) analyzing genotype matrices | >95% on simulated data (phased); >88% (unphased); moderately affected by heterosis [14] | Scenarios with reference data from donor, recipient, and unadmixed sister populations [14] | Performance decreases with unphased data; requires specialized training [14] |

| VolcanoFinder [18] | Composite-likelihood test for site frequency spectrum distortions around the introgressed sweep | Variable; highly dependent on demographic scenario | Evolutionary histories with strong population-specific differentiation | Higher false positive rates in certain complex demographic scenarios [18] |

| MaLAdapt [18] | Machine learning classification | Variable; highly dependent on demographic scenario | Scenarios with sufficient training data across target demographics | Performance varies significantly across different divergence/migration time combinations [18] |

| Q95(w,y) statistic [18] | Standalone summary statistic measuring haplotype sharing | Competitive power for exploratory analysis | Initial genome-wide scans for adaptive introgression candidates | Limited as standalone evidence; requires complementary validation [18] |

Performance evaluations highlight that method effectiveness varies significantly across different evolutionary contexts. The same 2025 study found that divergence time and migration history profoundly impact detection accuracy, with methods optimized for human evolutionary studies (like Genomatnn) potentially underperforming when applied to species with different demographic histories without proper recalibration [18]. Reducing false positives depends on accounting for the hitchhiking effect of adaptively introgressed mutations on flanking regions: candidate regions should be compared against immediately adjacent neutral sequences, not only against unlinked genomic regions [18].

Experimental Frameworks for Functional Allele Validation

High-Throughput Gene Editing for Functional Validation

CRISPR-Cas-based gene editing has emerged as a transformative technology for bridging the gap between genetic association and functional validation [19]. This approach enables researchers to move beyond correlation to establish causality by directly testing the functional impact of introgressed alleles in relevant biological contexts.

Table 2: Comparative analysis of gene editing approaches for allele functional validation

| Editing Approach | Key Capabilities | Throughput Potential | Applications in Allele Validation |

|---|---|---|---|

| CRISPR-Cas9 Knockout | Gene disruption via indels; multiplexed targeting | High with robotic automation [19] | Essential gene function screening; validation of loss-of-function alleles [19] |

| Base Editing | Precise single nucleotide changes without double-strand breaks | Moderate to high with optimized delivery | Functional testing of specific SNP effects; modeling human disease variants [19] |

| Prime Editing | Targeted insertions, deletions, and all base-to-base conversions | Moderate with current technologies | Recapitulating exact introgressed haplotypes; allele replacement studies [19] |

| Allele Replacement | Precise swap of endogenous sequence with donor template | Lower throughput but high fidelity | Direct functional comparison of ancestral vs. introgressed alleles [19] |

The validation workflow typically begins with the creation of Near-Isogenic Lines (NILs) that isolate the introgressed haplotype in a controlled genetic background, followed by precise gene editing to confirm causal variants [19]. Emerging techniques such as protoplast isolation and in planta transformation using developmental regulatory genes promise to further increase throughput, potentially enabling genome-scale functional validation efforts [19].

Phenotypic Screening Platforms

Rigorous phenotypic characterization remains essential for establishing the biomedical relevance of introgressed alleles. The SHEPHERD framework represents a novel approach to this challenge, employing few-shot learning for phenotype-driven diagnosis even with limited case numbers—a common scenario when working with rare alleles or specialized adaptations [20]. This method operates by generating mathematical representations (embeddings) of patient phenotypes and genotypes in a latent space where individuals cluster based on shared functional biology rather than superficial similarity [20].

Validated platforms for high-throughput phenotyping include:

- Whole genome/exome sequencing coupled with Expression Quantitative Trait Loci (eQTL) analysis to identify SNPs influencing gene expression within specific functional modules [21]

- Knowledge-grounded deep learning that incorporates known phenotype-gene-disease associations through graph neural networks [20]

- Adaptive simulation approaches that generate realistic rare disease patients with varying numbers of phenotype terms and candidate genes for training diagnostic algorithms [20]

Visualization of Research Workflows

Adaptive Introgression Detection and Validation Pipeline

The following diagram illustrates the integrated computational and experimental workflow for identifying and validating adaptively introgressed alleles, from initial detection through functional characterization.

Gene Editing Workflow for Functional Validation

This diagram details the key steps in the CRISPR-Cas gene editing process for functionally validating candidate introgressed alleles, from target selection through to phenotypic characterization.

Table 3: Essential research reagents and computational resources for adaptive introgression studies

| Resource Category | Specific Tools/Reagents | Function/Application | Key Considerations |

|---|---|---|---|

| Computational Tools | Genomatnn [14] [18], VolcanoFinder [18], MaLAdapt [18] | Identification of putative adaptive introgression signals | Performance varies by demographic scenario; requires appropriate reference populations [18] |

| Gene Editing Systems | CRISPR-Cas9 [19], Base editors [19], Prime editors [19] | Functional validation of candidate alleles through precise genome modification | Throughput limitations addressed via protoplast systems and robotic automation [19] |

| Reference Datasets | 3000 Rice Genomes Project [19], WHEALBI barley exome collection [22], stdpopsim [14] | Baseline variation for allele mining and simulation frameworks | Critical for determining allele rarity and evolutionary context [19] [22] |

| Simulation Frameworks | SLiM [14], stdpopsim [14], msprime [18] | Demographic model testing and training data generation for machine learning approaches | Essential for validating method performance under diverse evolutionary scenarios [14] [18] |

| Phenotypic Screening Platforms | SHEPHERD [20], Exomiser [20], Human Phenotype Ontology [20] | Linking genetic variation to phenotypic outcomes through structured ontologies | Few-shot learning capabilities critical for rare allele characterization [20] |

The systematic sourcing of functionally validated alleles from wild gene pools represents a powerful strategy for biomedical discovery, combining the efficiency of natural selection with the precision of modern molecular technologies. Success in this emerging field requires the integrated application of population genetics, computational biology, and functional genomics, as no single approach provides sufficient evidence for claiming functional validation.

The most robust research frameworks combine multiple detection methods to mitigate the limitations of individual tools, with Genomatnn providing high accuracy in suitable contexts and summary statistics like Q95(w,y) offering efficient initial screening [18]. Crucially, computational predictions require rigorous experimental validation through gene editing and phenotypic assessment, with emerging technologies like base editing and prime editing dramatically accelerating this process [19]. Furthermore, the validation of introgressed alleles must consider their effect within functional modules rather than in isolation, as their biomedical impact often emerges through complex epistatic interactions within biochemical pathways and cellular systems [21].

As these technologies mature, the biomedical community stands to gain unprecedented access to nature's repository of functionally optimized genetic variation, with profound implications for therapeutic development, disease modeling, and understanding human genetic resilience.

Introgression

Introgression is the process by which genetic material moves from one species or population into the gene pool of another through hybridization and repeated backcrossing; when the introgressed material is favored by selection in the recipient, the process is called adaptive introgression. Introgression can be a key source of genetic variation, introducing pre-adapted haplotypes that enable rapid evolution and niche expansion [23]. In humans, it is now widely accepted that admixture occurred between modern humans and archaic hominin groups such as Neanderthals and Denisovans [24]. Non-African modern human populations possess approximately 1.5–2.1% of DNA from Neanderthals, while some Oceanic populations derive 3–6% of their ancestry from Denisovans [24]. This introgressed archaic DNA has been shown to have both beneficial and deleterious consequences for the recipient modern human populations.

Table 1: Key Concepts in Introgression

| Concept | Definition | Example |

|---|---|---|

| Archaic Introgression | The flow of genetic material from an extinct hominin group into modern humans. | Neanderthal DNA in non-African populations [24]. |

| Introgression Desert | A genomic region where archaic segments have been systematically removed, suggesting negative selection. | Regions purged of Neanderthal ancestry, potentially linked to male sterility [3]. |

| Ancestral Introgression | Introgression that occurred in the distant past, with the introduced ancestry subsequently being sorted by selection over generations. | Ancient mexicana ancestry in maize that is shared among geographically distant populations [23]. |

| Structural Variant (SV) Introgression | The introgression of large DNA segments (≥50 base pairs), which can include complex variations. | Introgressed SVs enriched in genes, including centromeres, found in Papua New Guinea genomes [25]. |

Selective Sweep

A selective sweep is a distinct genomic signature left by recent positive natural selection, whereby a beneficial mutation rapidly increases in frequency in a population, dragging along linked neutral variants (genetic hitchhiking) before recombination can separate them [26]. This process reduces genetic diversity around the selected locus. The observed pattern depends on the source of the genetic variation upon which selection acts.

Table 2: Types of Selective Sweeps

| Sweep Type | Defining Characteristic | Genetic Signature |

|---|---|---|

| Hard Sweep | Selection on a single, new beneficial mutation or a very rare allele. | A classic, strong reduction in diversity around the selected locus [26]. |

| Soft Sweep | Selection on standing genetic variation already present at an appreciable frequency or on multiple recurrent mutations. | A more complex signature than a hard sweep [26]. |

| Sweep from Introgression | Selection on a variant introduced by gene flow from a related population or species. | A distinct 'volcano' pattern with peaks of increased genetic diversity around the selected target in the recipient population [26]. |
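
The diversity signatures in the table above (a trough for a classic sweep, flanking peaks for the 'volcano' pattern) are usually inspected by computing nucleotide diversity in windows along the chromosome. The following is a minimal sketch, assuming biallelic sites coded 0/1 in a haplotype matrix; the function name `windowed_pi` and its arguments are illustrative and not taken from any of the cited tools.

```python
import numpy as np

def windowed_pi(haplotypes, positions, window_size, seq_length):
    """Per-base nucleotide diversity (pi) in non-overlapping windows.

    haplotypes: (n_haplotypes, n_sites) array of 0/1 alleles.
    positions:  (n_sites,) base-pair coordinates of the sites.
    Returns (window_starts, pi_per_window).
    """
    haplotypes = np.asarray(haplotypes)
    positions = np.asarray(positions)
    n = haplotypes.shape[0]
    derived = haplotypes.sum(axis=0)
    # Expected number of pairwise differences contributed by each site.
    per_site = 2.0 * derived * (n - derived) / (n * (n - 1))
    edges = np.arange(0, seq_length + window_size, window_size)
    sums, _ = np.histogram(positions, bins=edges, weights=per_site)
    # Dividing by window length assumes every base in the window was callable.
    return edges[:-1], sums / window_size
```

Plotting the returned values against window start makes a local drop or a pair of flanking peaks visible, though any such pattern still needs to be compared with a neutral expectation for the same demography.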

Archaic Ancestry

Archaic ancestry refers to the segments of DNA present in the genomes of modern individuals that were inherited from now-extinct hominin species. The primary sources of this ancestry are Neanderthals and Denisovans. The level of archaic ancestry varies among modern human populations, reflecting their distinct demographic histories and interactions with these archaic groups [24]. While some archaic sequences were removed by purifying selection, others were retained and reached high frequencies due to their adaptive benefits, a process known as archaic adaptive introgression [24] [3].

Experimental Protocols for Validation

Detecting Introgressed Fragments

A primary challenge is distinguishing true introgression from shared ancestral genetic variation (Incomplete Lineage Sorting, or ILS). Several statistical methods have been developed to address this:

- Patterson's D Statistic (ABBA-BABA Test): This method tests whether a candidate donor (e.g., Neanderthal) shares more derived alleles with one of two ingroup populations (e.g., European and Asian) than with the other, using an outgroup (e.g., chimpanzee) to determine which allele is ancestral [24]. A significant deviation from symmetry provides evidence for introgression. Significance is evaluated using a block-bootstrap or block-jackknife method applied to genome-wide data.

- SPrime: This method identifies introgressed segments by detecting haplotypes that are highly divergent from the background of modern human variation but closely match an archaic genome [3]. It is used to create maps of archaic ancestry.

- Tract Length and Linkage Disequilibrium (LD): The length of an introgressed haplotype depends on the time since introgression, with older introgression events resulting in shorter tracts due to recombination. Analyzing tract lengths and patterns of LD can help distinguish recent introgression from ILS [24].

- Phylogenetic Information & Sequence Divergence: An introgressed haplotype will typically show a very recent time to the most recent common ancestor (TMRCA) with the archaic source population but a much older TMRCA with other modern human haplotypes. Specific assumptions about demography are required to test this formally [24].
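
To make the ABBA-BABA logic in the list above concrete, the sketch below computes D from derived-allele frequencies and attaches a delete-one-block jackknife Z-score. It assumes alleles are already polarized against the outgroup and that sites are in genomic order so contiguous blocks approximate independent regions; the function name and the equal-size blocks are simplifying assumptions, and production analyses typically use blocks defined in physical or genetic distance.

```python
import numpy as np

def patterson_d(p1, p2, p3, n_blocks=10):
    """Patterson's D and a block-jackknife Z-score.

    p1, p2: derived-allele frequencies in the two ingroup populations.
    p3:     derived-allele frequency in the candidate donor.
    All sites are assumed polarized so the outgroup is ancestral.
    """
    p1, p2, p3 = (np.asarray(a, dtype=float) for a in (p1, p2, p3))
    abba = (1 - p1) * p2 * p3
    baba = p1 * (1 - p2) * p3
    d = (abba - baba).sum() / (abba + baba).sum()

    # Recompute D with each contiguous block of sites left out in turn.
    blocks = np.array_split(np.arange(p1.size), n_blocks)
    loo = []
    for block in blocks:
        keep = np.ones(p1.size, dtype=bool)
        keep[block] = False
        loo.append((abba[keep] - baba[keep]).sum() / (abba[keep] + baba[keep]).sum())
    loo = np.array(loo)
    se = np.sqrt((n_blocks - 1) / n_blocks * ((loo - loo.mean()) ** 2).sum())
    return d, d / se
```

A positive D indicates excess sharing between the donor and the second ingroup population, a negative D between the donor and the first; swapping `p1` and `p2` flips the sign.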

Detecting and Dating Selective Sweeps

Different methods are powerful for detecting selection over different timeframes.

- Extended Haplotype Homozygosity (EHH) and Derivatives (e.g., XP-EHH): These methods identify haplotypes that are longer and more frequent than expected under neutrality, indicating that a variant has risen to high frequency too quickly for recombination to break down the haplotype [3]. They are powerful for detecting ongoing or recent selection.

- The Relate Method: This method uses ancestral recombination graphs to infer the time to the most recent common ancestor along the genome for all haplotypes in a sample. It can be used to detect selective sweeps by identifying loci where many individuals share a recent common ancestor [3].

- FST and Locus-Specific Branch Length (LSBL): These metrics measure population differentiation. Unusually high FST at a locus can indicate local adaptation, while LSBL uses an archaic genome as an outgroup to detect lineage-specific divergence [3].

- Extended Lineage Sorting (ELS): This method is designed to detect very ancient selective sweeps on the modern human lineage that occurred after the split from Neanderthals and Denisovans. It scans for extended genomic regions where the archaic lineages fall outside the present-day human variation. Long such regions suggest a lineage reached fixation rapidly due to strong positive selection, dragging large linked segments with it [27].
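
The differentiation metrics in the list above can be sketched in a few lines. The example uses Hudson's FST estimator in its ratio-of-averages form and the standard three-population LSBL formula; the function names are illustrative, and the inputs are assumed to be per-site allele frequencies with sample sizes counted in chromosomes.

```python
import numpy as np

def hudson_fst(p_a, p_b, n_a, n_b):
    """Hudson's FST (ratio of averages) across the supplied sites.

    p_a, p_b: per-site allele frequencies in populations A and B.
    n_a, n_b: number of sampled chromosomes in each population.
    """
    p_a = np.asarray(p_a, dtype=float)
    p_b = np.asarray(p_b, dtype=float)
    num = ((p_a - p_b) ** 2
           - p_a * (1 - p_a) / (n_a - 1)
           - p_b * (1 - p_b) / (n_b - 1))
    den = p_a * (1 - p_b) + p_b * (1 - p_a)
    return num.sum() / den.sum()

def lsbl(fst_ab, fst_ac, fst_bc):
    """Locus-specific branch length for population A in an (A, B, C) trio."""
    return (fst_ab + fst_ac - fst_bc) / 2.0
```

Applied window by window, an unusually long branch for one population relative to the genome-wide distribution flags a candidate locus; the estimate can be slightly negative when populations are nearly identical.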

Functional Validation of Introgressed Alleles

Once an introgressed haplotype under selection is identified, downstream analyses aim to determine its functional consequences.

- Expression Quantitative Trait Locus (eQTL) Mapping: This technique tests for associations between the introgressed archaic allele and the expression levels of nearby genes. For example, a 2025 study found that over 300 archaic variants were eQTLs regulating 176 genes, with 81% of these archaic eQTLs overlapping a core haplotype region and affecting genes expressed in reproductive tissues [3].

- Phenome-Wide Association Study (PheWAS): This approach tests for associations between an introgressed archaic allele and a wide array of clinical and quantitative traits in large biobanks to understand its phenotypic impact [3].
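
As a rough illustration of the eQTL test described above, the sketch below regresses expression on archaic-allele dosage (0, 1, or 2 copies) and obtains a p-value by permutation. It is deliberately simplified: real eQTL pipelines adjust for covariates such as ancestry components and technical factors, and the function name and defaults here are assumptions for illustration only.

```python
import numpy as np

def eqtl_permutation_test(dosage, expression, n_perm=10_000, seed=0):
    """Slope of expression on allele dosage with a two-sided permutation p-value."""
    dosage = np.asarray(dosage, dtype=float)
    expression = np.asarray(expression, dtype=float)
    rng = np.random.default_rng(seed)

    def slope(x, y):
        xc = x - x.mean()
        return (xc * (y - y.mean())).sum() / (xc ** 2).sum()

    observed = slope(dosage, expression)
    # Shuffling expression breaks any genotype-expression relationship.
    null = np.array([slope(dosage, rng.permutation(expression))
                     for _ in range(n_perm)])
    p_value = (1 + np.sum(np.abs(null) >= abs(observed))) / (n_perm + 1)
    return observed, p_value
```

The same per-variant test, repeated across many traits instead of one gene's expression, is the basic shape of a PheWAS, with multiple-testing correction applied across traits.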

Workflow for Validating Adaptive Introgression

Essential Research Reagents and Tools

Table 3: Key Research Reagent Solutions

| Reagent / Tool | Function in Analysis |

|---|---|

| High-Coverage Archaic Genomes (e.g., Vindija Neanderthal, Altai Neanderthal, Denisova) | Serves as the reference source population for identifying introgressed fragments in modern human genomes [3]. |

| Phased Genome Assemblies | High-quality, phased assemblies (e.g., from Papua New Guinea genomes) are critical for accurately detecting introgressed structural variants (SVs) and complex haplotypes [25]. |

| Reference Panels of Diverse Ancestry (e.g., 1000 Genomes Project) | Provides the context of modern human genetic variation necessary to distinguish archaic sequences from modern diversity and to perform population-specific analyses [3] [27]. |

| Ancestral Allele State Reconstruction | Using an outgroup (e.g., chimpanzee), this allows for the determination of the derived vs. ancestral state of a variant, which is fundamental for statistics like Patterson's D and ELS [24] [27]. |

| Recombination Maps | Provide the local recombination rate, which is essential for modeling the expected length of introgressed tracts and for interpreting signals of selective sweeps [24] [23]. |

The Toolbox: Genomic Scans and Statistical Methods for Detection

The detection of adaptive introgression (AI)—the process by which genetically distinct populations exchange beneficial alleles through hybridization and backcrossing—has become a central focus in modern evolutionary genetics. AI plays a crucial role in local adaptation across diverse species, from archaic hominins to crops, facilitating rapid evolutionary responses to environmental pressures [1] [13]. While several statistical methods have been developed to identify AI loci, their performance varies significantly across different evolutionary scenarios, selection strengths, and genomic contexts. This guide provides an objective comparison of three primary AI detection methods—VolcanoFinder, Genomatnn, and MaLAdapt—based on recent performance evaluations and methodological studies. We summarize experimental data on their statistical power, precision, and operational requirements to help researchers select appropriate tools for validating adaptive introgression in population genomic analyses.

The table below summarizes the core characteristics, underlying principles, and relative performance of the three primary AI detection methods based on recent comparative studies.

Table 1: Core Characteristics and Performance of Primary AI Detection Methods

| Method | Underlying Approach | Primary Input Data | Key Advantage | Reported Power | Key Limitation |

|---|---|---|---|---|---|

| VolcanoFinder [28] | Composite-likelihood model based on site frequency spectrum (SFS) distortions | Polymorphism data from recipient species only | Does not require donor reference genome; detects "volcano-shaped" diversity patterns | High for recent, strong sweeps; power decreases for older or softer sweeps [18] | Vulnerable to false positives from demographic history and background selection [28] |

| Genomatnn [14] | Convolutional Neural Network (CNN) analyzing haplotype patterns as images | Genotype matrices from donor, recipient, and unadmixed outgroup | Exceptional accuracy (>95%) with phased data; robust spatial feature detection [14] | 95% accuracy on simulated data (phased); ~88% precision; moderate decrease with heterosis or unphased data [14] | Computationally intensive; requires donor genome data; complex model interpretation [18] [14] |

| MaLAdapt [29] | Extra-Trees Classifier (ETC) machine learning combining multiple summary statistics | Genome-wide sequencing data from populations | Robust to demographic misspecification and confounding selection; powerful for mild selective effects [29] | High power for mild beneficial effects and standing archaic variation; robust to false positives from heterosis [29] | Requires training data; performance depends on feature selection [18] [29] |

Key Performance Insights from Comparative Studies

A comprehensive 2025 performance evaluation tested these methods using datasets simulated under various evolutionary scenarios inspired by human, wall lizard (Podarcis), and bear (Ursus) lineages [18]. This study examined the impact of divergence times, migration rates, population sizes, selection coefficients, and recombination hotspots on method performance. The findings revealed that:

- Method performance is highly scenario-dependent. The relative effectiveness of each method varies significantly based on the specific evolutionary parameters, particularly divergence time and selection strength [18].

- Adjacent windows critically impact discrimination. The study highlighted the importance of including adjacent genomic windows in training data to correctly identify the precise window containing the adaptive mutation, due to the hitchhiking effect on flanking regions [18] [30].

- Q95-based approaches show exploratory utility. Methods based on the Q95 summary statistic were found to be particularly efficient for initial exploratory studies of AI [18] [30].
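
For readers who want to try the Q95 statistic mentioned above, a minimal sketch follows. It assumes the usual definition: within a window, take sites where the derived allele is rare (below `w`) in an unadmixed population and at frequency `y` in the donor, then report the 95th percentile of derived-allele frequency in the target population. The function and argument names are illustrative.

```python
import numpy as np

def q95(freq_unadmixed, freq_target, freq_donor, w=0.01, y=1.0):
    """Q95(w, y) for one genomic window.

    Each argument is a per-site derived-allele frequency array.
    Returns NaN when no site in the window meets the conditions.
    """
    f_un = np.asarray(freq_unadmixed, dtype=float)
    f_tar = np.asarray(freq_target, dtype=float)
    f_don = np.asarray(freq_donor, dtype=float)
    # Sites rare in the unadmixed panel and at frequency y in the donor.
    mask = (f_un < w) & np.isclose(f_don, y)
    if not mask.any():
        return float("nan")
    return float(np.quantile(f_tar[mask], 0.95))
```

High values mark windows where donor-like alleles have reached high frequency in the target population, which is why the statistic suits a first-pass genome scan before applying heavier methods.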

Experimental Protocols and Workflows

Standardized Evaluation Framework

Recent comparative analyses have established rigorous protocols for benchmarking AI detection methods. The primary experimental workflow involves:

Table 2: Key Experimental Steps for AI Method Validation

| Step | Description | Key Parameters |

|---|---|---|

| 1. Data Simulation | Using coalescent-based simulators (e.g., msprime) or forward-time simulators (e.g., SLiM) to generate genomic data under various AI scenarios [18] [14] | Divergence time, migration rate, population size, selection coefficient, recombination rate |

| 2. Scenario Design | Creating diverse evolutionary contexts including different combinations of divergence and migration times inspired by natural systems [18] | Human, wall lizard, and bear evolutionary histories; recent vs. ancient introgression |

| 3. Method Application | Running each detection method on simulated datasets with standardized genomic window sizes (typically 50-200kb) [18] [14] | Window size: 50kb (MaLAdapt), 100kb (Genomatnn), 200kb (VolcanoFinder); overlapping windows (50kb overlap) |

| 4. Power Calculation | Measuring the proportion of true AI loci correctly identified by each method | True positive rate across selection coefficients (s = 0.005 - 0.1) |

| 5. False Positive Assessment | Evaluating specificity using three negative control window types: neutral introgression, adjacent to AI, and unlinked neutral [18] | False positive rate under neutral evolution; robustness to heterosis and background selection |
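
Steps 4 and 5 of the table above reduce to counting calls at a chosen score threshold. The sketch below assumes each simulated window carries a classifier score and a label, with `"AI"` marking true adaptive introgression and any other label naming a negative-control class; the label strings and function name are hypothetical.

```python
import numpy as np

def power_and_fpr(scores, labels, threshold):
    """Power on AI windows and false positive rate per negative-control class."""
    scores = np.asarray(scores, dtype=float)
    labels = np.asarray(labels)
    called = scores >= threshold
    power = float(called[labels == "AI"].mean())
    fpr = {str(name): float(called[labels == name].mean())
           for name in np.unique(labels) if name != "AI"}
    return power, fpr
```

Reporting the false positive rate separately for neutral-introgression, adjacent, and unlinked windows shows which kind of negative control a method confuses with true signal, rather than hiding it in a single pooled rate.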

Method-Specific Workflows

VolcanoFinder Implementation: Applies a composite-likelihood ratio test to detect the characteristic "volcano-shaped" pattern of excess intermediate-frequency polymorphism flanking the adaptively introgressed locus [28]. The method scans pre-defined genomic windows, comparing the likelihood of the observed SFS under neutral and AI models.

Genomatnn Processing: Constructs genotype matrices (100kb windows) with individuals sorted by similarity to the donor population [14]. The CNN architecture uses consecutive convolution layers with 2×2 step size (instead of pooling) to extract increasingly abstract features from the spatial arrangement of alleles, finally passing these through fully connected layers for classification.
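
The row-sorting step can be illustrated with a small sketch. This is not genomatnn's own code: it assumes 0/1 haplotype matrices and uses Euclidean distance to the donor's mean haplotype as the similarity measure, which is an illustrative choice rather than a confirmed detail of the pipeline.

```python
import numpy as np

def sort_by_donor_similarity(recipient, donor):
    """Order recipient haplotypes from most to least donor-like.

    recipient: (n_recipient, n_sites) 0/1 array.
    donor:     (n_donor, n_sites) 0/1 array.
    Returns (sorted_recipient, order), where order indexes the input rows.
    """
    recipient = np.asarray(recipient, dtype=float)
    donor_mean = np.asarray(donor, dtype=float).mean(axis=0)
    # Distance of every recipient haplotype to the average donor haplotype.
    dist = np.linalg.norm(recipient - donor_mean, axis=1)
    order = np.argsort(dist, kind="stable")
    return recipient[order], order
```

Sorting gives the matrix a consistent vertical structure, so that introgressed haplotypes cluster in the same rows across training examples and the convolution filters can learn spatial patterns that do not depend on arbitrary sample order.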

MaLAdapt Workflow: Employs a feature extraction approach calculating multiple population genetic statistics across genomic windows, then uses an ensemble of randomized decision trees (Extra-Trees Classifier) to distinguish AI from neutral regions based on the composite signature [29]. Feature importance can be retrieved to provide biological interpretability.

The following diagram illustrates the conceptual relationships and primary analytical approaches of these three methods:

Diagram 1: Conceptual workflow of the three primary AI detection methods, showing their analytical approaches and output characteristics.

Successful application of these AI detection methods requires specific computational resources and reference datasets. The table below outlines key research reagents and their functions in AI detection studies.

Table 3: Essential Research Reagents and Resources for AI Detection Studies

| Resource Type | Specific Examples | Function in AI Research |

|---|---|---|

| Genomic Simulators | msprime [18], SLiM [14], stdpopsim [29] | Generating simulated genomic data under realistic evolutionary scenarios with known AI loci for method validation |

| Reference Genomes | 1000 Genomes Project [29], Denisovan/Neanderthal genomes [31], crop wild relatives [13] | Providing empirical reference data for method application and comparative analyses |

| AI Detection Software | VolcanoFinder [28], Genomatnn [14], MaLAdapt [29] | Implementing specialized algorithms for detecting signatures of adaptive introgression |

| Population Genetic Statistics | Fst, D-statistics, SFS-based metrics [29] [28] | Quantifying patterns of genetic variation, differentiation, and allele frequency distributions |

| Visualization Tools | Saliency maps (Genomatnn) [14], haplotype plotting [31] | Interpreting results and identifying features driving method predictions |

The comparative analysis of VolcanoFinder, Genomatnn, and MaLAdapt reveals a trade-off between methodological complexity, data requirements, and detection power across different evolutionary scenarios. VolcanoFinder provides an efficient approach when donor genomes are unavailable but shows sensitivity to complex demographic histories. Genomatnn offers exceptional accuracy with phased data but requires substantial computational resources and donor reference genomes. MaLAdapt demonstrates robust performance for detecting subtle selection signals and is less vulnerable to confounding factors like heterosis and demographic misspecification. Researchers should select methods based on their specific study systems, data availability, and evolutionary questions. For comprehensive AI detection, a complementary approach using multiple methods may provide the most reliable inference, particularly for complex adaptive events involving polygenic selection or ancient introgression.

Leveraging Extended Haplotype Homozygosity (EHH) and Site-Frequency Spectra

In the field of population genetics, detecting signatures of natural selection is fundamental to understanding how species adapt to new environments and evolutionary pressures. Two powerful statistical frameworks for this purpose are based on Extended Haplotype Homozygosity (EHH) and the Site Frequency Spectrum (SFS). While both can be used to identify selection, they capture fundamentally different signals: EHH-based methods are highly effective at detecting recent or ongoing positive selection through the preservation of long-range haplotypes, whereas SFS-based methods are often better suited for identifying completed selective sweeps and inferring demographic history [32]. In the specific context of validating adaptive introgression—the process by which beneficial genetic material is transferred between species or populations through hybridization—these tools provide complementary evidence. This guide objectively compares the performance, data requirements, and applications of EHH and SFS methodologies, providing researchers with a clear framework for selecting the appropriate tool for their investigations.

Methodological Foundations and Comparison

Core Principles of EHH and SFS

Extended Haplotype Homozygosity (EHH) measures the decay of linkage disequilibrium (LD) with distance from a core variant or focal marker. Conceptually, it calculates the probability that two randomly chosen chromosomes carrying the same core allele remain identical (homozygous) across a surrounding genomic region. Under neutral evolution, LD decays predictably due to recombination over generations. However, when a beneficial allele undergoes rapid positive selection, it rises in frequency so quickly that there is insufficient time for recombination to break down the ancestral haplotype on which it arose. This results in an unexpectedly long-range and high-frequency haplotype, manifesting as a slower decay of EHH [32] [33]. The integrated Haplotype Score (iHS) is a widely used within-population statistic derived from EHH, which compares the integrated EHH (iHH) of ancestral and derived alleles at a polymorphic site [32].
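
To make the definition concrete, the following is a minimal sketch of the EHH calculation on a small phased haplotype matrix. The function name `ehh_decay`, its arguments, and the 0/1 allele coding are illustrative assumptions and do not reflect the interface of rehh or Selscan; the sketch also walks marker by marker rather than by physical or genetic distance.

```python
import numpy as np
from math import comb


def ehh_decay(haps, core_idx, core_allele, direction=1):
    """EHH among chromosomes carrying `core_allele`, marker by marker.

    haps: (n_chromosomes, n_snps) array of phased 0/1 alleles (assumed coding).
    direction: +1 walks downstream of the core SNP, -1 walks upstream.
    Returns a list of EHH values, starting at the core itself (EHH = 1).
    """
    haps = np.asarray(haps)
    carriers = haps[haps[:, core_idx] == core_allele]
    n = carriers.shape[0]
    if n < 2:
        raise ValueError("need at least two chromosomes carrying the core allele")
    total_pairs = comb(n, 2)
    stop = haps.shape[1] if direction == 1 else -1
    values = []
    for end in range(core_idx, stop, direction):
        lo, hi = sorted((core_idx, end))
        # Group carriers by their extended haplotype between the core and `end`.
        _, counts = np.unique(carriers[:, lo:hi + 1], axis=0, return_counts=True)
        # EHH = fraction of carrier pairs that are identical over the interval.
        identical_pairs = sum(comb(int(c), 2) for c in counts)
        values.append(identical_pairs / total_pairs)
    return values


# Hypothetical toy data: 5 chromosomes, 4 SNPs, core SNP in column 0.
toy = np.array([
    [1, 0, 0, 1],
    [1, 0, 0, 1],
    [1, 0, 1, 0],
    [1, 1, 1, 0],
    [0, 1, 0, 1],
])
print(ehh_decay(toy, core_idx=0, core_allele=1))
```

On real data the same quantity is evaluated against physical or genetic map positions, and a slowly decaying curve for one core allele relative to the other is the pattern of interest.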

The Site Frequency Spectrum (SFS), in contrast, is a histogram of allele frequencies in a sample. It summarizes the distribution of derived (mutant) allele frequencies across numerous polymorphic sites. The unfolded SFS uses knowledge of the ancestral allele state, while the folded SFS relies on the minor allele frequency when the ancestral state is unknown [34]. The shape of the SFS is strongly influenced by population demographic history and natural selection. Neutral evolution in a population of constant size predicts an L-shaped distribution dominated by low-frequency variants, with the expected number of sites carrying i copies of the derived allele proportional to 1/i. Deviations from this expectation, such as an excess of intermediate-frequency alleles or a skew towards either high- or low-frequency variants, can signal demographic events like bottlenecks or expansions, or the action of natural selection [35] [34].
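
As an illustration of these definitions, the sketch below builds an unfolded SFS from per-site derived allele counts, folds it, and returns the standard neutral expectation in which the class with i derived copies is proportional to 1/i. The function names and the input format (one integer count per site, with called genotypes) are assumptions made for the example.

```python
import numpy as np


def unfolded_sfs(derived_counts, n):
    """Number of sites with derived allele count 0..n (n = sampled chromosomes)."""
    return np.bincount(np.asarray(derived_counts), minlength=n + 1)


def fold_sfs(sfs):
    """Fold an unfolded SFS: counts i and n - i share the same minor allele count."""
    sfs = np.asarray(sfs)
    n = len(sfs) - 1
    folded = np.zeros(n // 2 + 1, dtype=sfs.dtype)
    for i, count in enumerate(sfs):
        folded[min(i, n - i)] += count
    return folded


def neutral_expectation(n, theta=1.0):
    """Expected counts for classes 1..n-1 under constant-size neutrality: theta / i."""
    return theta / np.arange(1, n)


# Hypothetical derived allele counts at 7 sites in a sample of 6 chromosomes.
counts = [1, 1, 1, 2, 5, 3, 1]
sfs = unfolded_sfs(counts, n=6)
print(sfs)            # unfolded spectrum, classes 0..6
print(fold_sfs(sfs))  # folded spectrum, minor allele counts 0..3
```

Comparing an observed spectrum against `neutral_expectation` is only a first look; formal inference relies on the summary statistics and demographic models discussed later in this section.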

Direct Performance Comparison

The table below summarizes the objective differences in the application and performance of EHH-based and SFS-based methods.

Table 1: Performance and Application Comparison of EHH-based and SFS-based Methods

| Feature | EHH-Based Methods (e.g., iHS, rEHH) | SFS-Based Methods (e.g., Tajima's D, Fay & Wu's H) |
|---|---|---|
| Primary Strength | High power to detect recent/ongoing selective sweeps that are not yet fixed [32]. | Effective at detecting completed selective sweeps and inferring demographic history [32]. |
| Temporal Sensitivity | Focused on very recent selection; signals decay after the selective sweep finishes [32]. | Can detect older selection events and is highly sensitive to long-term population size changes [34]. |
| Typical Output | Scores per SNP (e.g., iHS), identifying specific core haplotypes under selection [32]. | Summary statistic for a genomic region or whole genome (e.g., Tajima's D) [32]. |
| Key Advantage | Directly ties the selection signal to a specific haplotype and core allele [32]. | Computationally fast and easy to apply for initial genomic scans [32]. |
| Main Challenge | Requires accurate phased haplotype data for robust estimation [32]. | Highly vulnerable to confounding effects of demography and population structure [32]. |

Application in Validating Adaptive Introgression

Adaptive introgression describes the process where beneficial alleles from a donor species are introduced into the gene pool of a recipient species via hybridization and backcrossing, and then increase in frequency due to natural selection [1] [13]. This process can provide an "evolutionary leap," allowing a population to adapt faster than would be possible through de novo mutation alone [36].

EHH and SFS analyses contribute to validating adaptive introgression in distinct but complementary ways:

- EHH-based approaches can pinpoint specific introgressed haplotypes that show strong evidence of recent positive selection in the recipient population. A long, high-frequency haplotype of foreign origin is a classic signature of a rapid selective sweep following introgression.

- SFS-based approaches can reveal the impact of introgression on the genome-wide pattern of variation and help rule out demographic confounding. For instance, a genome-wide SFS might indicate a general bottleneck, while a skewed SFS in a specific region could point to selection on an introgressed allele.

Table 2: Suitability of Methods for Detecting Adaptive Introgression Signatures

| Analysis Method | Role in Validating Adaptive Introgression | Key Interpretative Consideration |
|---|---|---|
| EHH / rEHH | Identifies long, high-frequency haplotypes as candidates for recent selective sweeps, which may be of introgressed origin [33]. | The introgressed haplotype must have risen to high frequency quickly enough to retain its haplotype structure. |
| iHS | Detects ongoing selection on segregating (unfixed) haplotypes, which can include introgressed segments [32]. | Requires the selected allele to still be segregating and that both ancestral and derived (or introgressed) states are present. |
| Tajima's D | A negative value in a specific region can indicate a selective sweep, potentially driven by an introgressed allele [32]. | A genome-wide negative value is more indicative of population expansion, confounding the identification of local introgression. |
| Joint / 2D SFS | Can be used to compare allele frequency distributions between the recipient and donor populations, highlighting shared polymorphisms due to gene flow [34]. | Useful for inferring the history of gene flow and divergence, but not a direct test for selection on introgressed loci. |

Experimental Protocols and Data Requirements

Standard Workflow for EHH Analysis

The following diagram illustrates the generalized workflow for conducting an EHH-based analysis to detect selection signatures, such as those arising from adaptive introgression.

[Figure: EHH Analysis Workflow]

Detailed Protocol:

- Data Quality Control (QC): Begin with genotype data (e.g., from SNP arrays or sequencing). Perform standard QC: remove individuals and SNPs with high missingness, exclude variants with low minor allele frequency (e.g., < 5%), and check for Hardy-Weinberg equilibrium deviations [33].

- Haplotype Phasing: This is a critical step. Use computational phasing algorithms (e.g., fastPHASE, SHAPEIT, Beagle) to infer the haplotype phase of heterozygous genotypes. Phasing accuracy is indispensable for reliable EHH estimation, especially for sample sizes that are not very large [32] [33].

- Identify Core Haplotypes: Scan the phased haplotypes chromosome-by-chromosome to define short, non-overlapping "core haplotypes." These are typically regions in strong LD, often limited to a range of 3 to 20 SNPs [33].

- Calculate EHH Decay: For each core haplotype, calculate the EHH value, which is the probability that two randomly chosen haplotypes carrying the core allele are homozygous (identical) for the entire extended interval from the core to a given distance, t. EHH is calculated independently upstream and downstream [32].

- Integrate EHH (iHH): Compute the integrated Haplotype Homozygosity (iHH) by numerically integrating the area under the EHH decay curve until EHH drops below a set cutoff (e.g., 0.05) [32]. This can be conceptualized as the average length of shared haplotypes among all pairs of chromosomes carrying the core allele.

- Standardize (iHS): For each core SNP with both ancestral and derived alleles, calculate the unstandardized iHS as the log-ratio of iHH for the ancestral and derived alleles: uniHS = ln(iHH_A / iHH_D) [32]. This score is then standardized across the genome in bins of similar derived allele frequency to produce the final iHS score, which is approximately normally distributed.

- Identify Significant Regions: Core haplotypes with extreme iHS values (e.g., |iHS| > 2) or significant rEHH values (e.g., empirical p-value < 0.05) are considered candidates for recent positive selection. These regions can be cross-referenced with introgression maps to validate adaptive introgression.
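
The integration and standardization steps of this protocol can be sketched as follows. This is a simplified illustration and not the implementation used by rehh or Selscan: the helper names, the trapezoidal rule, the default of 20 frequency bins, and the use of the population standard deviation are all assumptions. In practice the upstream and downstream areas are computed separately and summed to give iHH for each allele.

```python
import numpy as np


def integrate_ehh(positions, ehh, cutoff=0.05):
    """Trapezoidal area under one side of an EHH curve.

    Integration stops at the first marker where EHH falls below `cutoff`.
    """
    positions = np.asarray(positions, dtype=float)
    ehh = np.asarray(ehh, dtype=float)
    below = np.nonzero(ehh < cutoff)[0]
    end = below[0] if below.size else len(ehh)
    x, y = positions[:end], ehh[:end]
    return float(np.sum(np.abs(np.diff(x)) * (y[:-1] + y[1:]) / 2.0))


def unstandardized_ihs(ihh_ancestral, ihh_derived):
    """uniHS = ln(iHH_A / iHH_D), following the sign convention in the text."""
    return float(np.log(ihh_ancestral / ihh_derived))


def standardize_in_bins(scores, derived_freqs, n_bins=20):
    """Z-score uniHS values within bins of similar derived allele frequency."""
    scores = np.asarray(scores, dtype=float)
    freqs = np.asarray(derived_freqs, dtype=float)
    bins = np.minimum((freqs * n_bins).astype(int), n_bins - 1)
    out = np.full(scores.shape, np.nan)
    for b in np.unique(bins):
        mask = bins == b
        sd = scores[mask].std()
        # Bins with a single SNP or no variance are left as NaN.
        if mask.sum() > 1 and sd > 0:
            out[mask] = (scores[mask] - scores[mask].mean()) / sd
    return out


# Hypothetical one-sided EHH curve sampled at four positions (bp).
area = integrate_ehh([0, 10, 20, 30], [1.0, 0.5, 0.1, 0.0])
print(area)
```

Standardizing within frequency bins matters because the expected haplotype length depends on allele frequency, so raw uniHS values from SNPs at very different frequencies are not directly comparable.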

Standard Workflow for SFS Estimation and Analysis

The estimation and use of the Site Frequency Spectrum, particularly with low-coverage data, involves a specific workflow to avoid bias.

[Figure: SFS Estimation Workflow]

Detailed Protocol:

- Calculate Genotype Likelihoods: For each individual at each genomic site, use tools like samtools/bcftools, GATK, or ANGSD to calculate genotype likelihoods, p(X|G), which model the probability of the observed sequencing data (X) given each possible underlying genotype (G) [35]. This is crucial for handling uncertainty in low-coverage data.

- Compute Site Allele Frequency (SAF) Likelihoods: For each population and site, calculate the likelihood of the data given every possible derived allele count (j = 0, 1, ..., 2N_k). This is computed from the genotype likelihoods using a dynamic programming algorithm [35].

- Estimate SFS via EM Algorithm: The SFS (φ) is the vector of probabilities that a random site has a given derived allele count. The maximum likelihood estimate of φ is found using an Expectation-Maximization (EM) algorithm or its stochastic variant, which iteratively maximizes the likelihood of the data given the SAF likelihoods [35].

- Fold SFS (Optional): If the ancestral allele state is unknown, the SFS can be folded. This involves binning alleles by their minor allele frequency instead of their derived allele frequency [34].

- Analyze SFS Shape: Use the estimated SFS for downstream inference.

- Calculate Summary Statistics: Compute statistics like Tajima's D, which compares the number of segregating sites (S) to the average pairwise nucleotide diversity (π). A significantly negative D indicates an excess of low-frequency variants, consistent with positive selection or population expansion. Fay & Wu's H compares π to an estimator based on high-frequency derived alleles, and a negative value can signal an excess of high-frequency derived alleles, a signature of positive selection [32] [34].

- Demographic Inference: Use the observed SFS to fit demographic models (e.g., bottlenecks, expansions) by comparing it to the expected SFS under different historical scenarios [36] [34].
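
The summary statistics named above can be computed directly from an unfolded SFS. The sketch below uses the standard textbook formulas for π, Watterson's estimator, Tajima's D, and the unnormalized Fay & Wu's H; the function name `sfs_statistics` and the input layout (an array of site counts for derived allele counts 0 to n) are assumptions for illustration.

```python
import numpy as np


def sfs_statistics(sfs):
    """Return (pi, theta_W, Tajima's D, Fay & Wu's H) from an unfolded SFS.

    sfs[i] = number of sites with derived allele count i, for i = 0..n.
    Only segregating classes 1..n-1 contribute to the statistics.
    """
    sfs = np.asarray(sfs, dtype=float)
    n = len(sfs) - 1
    i = np.arange(1, n)
    xi = sfs[1:n]
    S = xi.sum()                                  # number of segregating sites
    pairs = n * (n - 1) / 2.0
    pi = np.sum(xi * i * (n - i)) / pairs         # mean pairwise differences
    theta_h = np.sum(xi * i**2) / pairs           # weights high-frequency derived alleles
    a1 = np.sum(1.0 / i)
    a2 = np.sum(1.0 / i**2)
    theta_w = S / a1                              # Watterson's estimator
    # Variance constants from Tajima (1989).
    b1 = (n + 1) / (3.0 * (n - 1))
    b2 = 2.0 * (n**2 + n + 3) / (9.0 * n * (n - 1))
    c1 = b1 - 1.0 / a1
    c2 = b2 - (n + 2) / (a1 * n) + a2 / a1**2
    e1 = c1 / a1
    e2 = c2 / (a1**2 + a2)
    var = e1 * S + e2 * S * (S - 1)
    D = (pi - theta_w) / np.sqrt(var) if var > 0 else float("nan")
    H = pi - theta_h                              # unnormalized Fay & Wu's H
    return pi, theta_w, D, H


# Hypothetical window with only singletons in a sample of 5 chromosomes:
# an excess of rare variants, so Tajima's D is negative.
print(sfs_statistics([0, 10, 0, 0, 0, 0]))
```

In a genome scan the same function would be applied to the SFS of each window, and windows would be judged against the genome-wide distribution or simulated null models, not against a fixed threshold.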

The Scientist's Toolkit: Essential Research Reagents and Software

Successful implementation of EHH and SFS analyses requires a suite of specialized software and a clear understanding of data requirements.

Table 3: Key Research Reagents and Software Solutions

| Tool / Resource | Function | Application Notes |
|---|---|---|
| rehh (R package) | Calculates EHH, iHS, and cross-population EHH statistics (XP-EHH, Rsb) [32]. | A comprehensive and user-friendly tool for conducting EHH-based scans within R. Handles both phased and unphased data, though phased is recommended. |
| Selscan | Efficiently computes iHS, XP-EHH, and other selection statistics on a genome-wide scale [32]. | Known for its high computational efficiency, making it suitable for very large datasets. |
| ANGSD | Analyzes next-generation sequencing data without requiring called genotypes. Calculates genotype likelihoods, SAF likelihoods, and estimates the SFS [35]. | Essential for accurate SFS construction from low-coverage sequencing data. |
| fastPHASE / Beagle | Performs haplotype phasing from genotype data [33]. | Accurate phasing is a critical pre-processing step for EHH analysis. Beagle is widely used for its accuracy and speed. |
| Phased Haplotypes | The primary input data for EHH analysis. | Can be obtained experimentally (costly) or computationally. Quality of phasing directly impacts EHH results [32]. |
| Polarized Variants (Ancestral State) | Information on which allele is ancestral vs. derived at a polymorphic site. | Required for the unfolded SFS and statistics like Fay & Wu's H. Less critical for some EHH statistics but needed for iHS [32]. |
| Structured Coalescent Simulators (e.g., SISiFS) | Simulates the expected SFS under complex demographic models, including population structure [36]. | Used to generate null models for hypothesis testing, helping to distinguish selection from demography. |

Both Extended Haplotype Homozygosity and the Site Frequency Spectrum are powerful, yet distinct, tools in the population geneticist's toolkit for detecting selection and validating adaptive introgression. The choice between them is not a matter of which is superior, but which is more appropriate for the specific biological question and data available.

- Use EHH-based methods like iHS when your goal is to detect very recent or ongoing selective sweeps and you have access to well-phased haplotype data. They excel at pinpointing the exact haplotype under selection, making them ideal for characterizing introgressed segments that have recently swept through a population.

- Use SFS-based methods like Tajima's D when performing an initial genome scan for selection and demography, especially when working with low-coverage data or when the selection event is older. They are computationally efficient but require careful interpretation to avoid confounding by demography.

For the most robust validation of adaptive introgression, a combined approach is highly recommended. A candidate introgressed region identified by an EHH sweep can be further supported by SFS-based tests showing a skew in allele frequencies, and vice-versa. This multi-faceted analytical strategy provides converging lines of evidence, strengthening the conclusion that a piece of introgressed genome has indeed been adaptive.
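
One simple way to combine lines of evidence is to intersect candidate sweep regions with independently inferred introgressed tracts. The sketch below assumes both are available as lists of (chromosome, start, end) tuples with half-open coordinates; the function name and the input format are hypothetical and would need adapting to the output of whichever tools produced the intervals.

```python
def overlapping_segments(sweep_regions, introgressed_tracts):
    """Return the (chrom, start, end) pieces shared by both interval lists.

    Both inputs are iterables of (chrom, start, end) with half-open coordinates.
    """
    shared = []
    for chrom_a, start_a, end_a in sweep_regions:
        for chrom_b, start_b, end_b in introgressed_tracts:
            if chrom_a != chrom_b:
                continue
            start, end = max(start_a, start_b), min(end_a, end_b)
            if start < end:  # keep only non-empty intersections
                shared.append((chrom_a, start, end))
    return sorted(shared)


# Hypothetical candidate sweeps and introgressed tracts.
sweeps = [("chr1", 100, 500), ("chr2", 0, 50)]
tracts = [("chr1", 400, 900), ("chr2", 60, 80), ("chr1", 0, 150)]
print(overlapping_segments(sweeps, tracts))
```

The pairwise loop is quadratic in the number of intervals, which is adequate for short candidate lists; genome-wide comparisons would normally use a dedicated interval library or a sorted sweep.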

Identifying Selection Signatures within Introgressed 'Core Haplotypes'