Validating Directed Evolution Outcomes: A Comprehensive Guide to NGS Coverage Analysis


Abstract

Directed evolution is a powerful protein engineering tool, but its success hinges on accurately identifying enriched variants from complex libraries. Next-Generation Sequencing (NGS) has become the cornerstone for this analysis; however, the reliability of the data is fundamentally dependent on appropriate sequencing depth and coverage. This article provides researchers, scientists, and drug development professionals with a complete framework for validating directed evolution outcomes through robust NGS coverage analysis. We cover foundational principles, detailed methodological workflows, strategies for troubleshooting and optimizing sequencing parameters, and finally, methods for the statistical validation and comparative analysis of enriched variants. By establishing clear guidelines for NGS coverage, this guide aims to enhance the efficiency and success rate of directed evolution campaigns for therapeutic and biotechnological applications.

The Pillars of Success: Understanding Directed Evolution and NGS Fundamentals

Directed Evolution as an Adaptive Walk on a Fitness Landscape

Directed evolution is a powerful protein engineering method that mimics natural selection in a laboratory setting to steer proteins or nucleic acids toward a user-defined goal [1]. The process consists of iterative rounds of mutagenesis (creating a library of variants), selection (isolating members with the desired function), and amplification [1]. This approach circumvents our profound ignorance of how a protein's sequence encodes its function by using iterative rounds of random mutation and artificial selection to discover new and useful proteins [2].

The conceptual framework of directed evolution is best understood as an adaptive walk on a high-dimensional fitness landscape [2] [3]. In this analogy, first articulated by John Maynard Smith, all possible protein sequences of length L are arranged such that sequences differing by one amino acid mutation are neighbors [2]. Each sequence is assigned a "fitness" value—in artificial selection, this is defined by the experimenter based on desired properties like enzymatic activity, thermostability, or binding affinity [2] [3]. The vastness of this sequence space is incomprehensible; for a small protein of 100 amino acids, there are 20¹⁰⁰ (∼10¹³⁰) possible sequences [2].
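The size of this sequence space is easy to check numerically. The short sketch below (the function name is illustrative, not taken from any cited work) computes the base-10 exponent for a protein of a given length, assuming the standard 20-letter amino acid alphabet.

```python
import math

def log10_sequence_space(length: int, alphabet_size: int = 20) -> float:
    """Return log10 of the number of distinct sequences of the given length."""
    return length * math.log10(alphabet_size)

# A 100-residue protein: 20^100 possible sequences.
exponent = log10_sequence_space(100)
print(f"20^100 is roughly 10^{exponent:.1f}")

# Python integers are arbitrary precision, so the digit count can be checked directly.
print(f"20^100 has {len(str(20 ** 100))} decimal digits")
```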

Protein evolution can then be envisioned as a walk on this fitness landscape, where regions of higher elevation represent more desirable proteins [2]. The structure of this landscape profoundly influences the effectiveness of evolutionary search strategies [2]. Landscapes range from smooth, single-peaked 'Fujiyama' landscapes to rugged, multi-peaked 'Badlands' landscapes [2]. The rougher the landscape, the harder it is for evolution to climb, as local optima create traps that evolution cannot escape unless temporary decreases in fitness are permitted or multiple simultaneous mutations enable jumps to new peaks [2].
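The trapping effect of local optima can be illustrated with a toy model. The sketch below is a deliberately simplified assumption, not a model from the cited literature: it uses a two-letter alphabet, three-position sequences, and two hand-written fitness functions (`smooth` and `rugged`) to show a greedy single-mutation walk reaching the peak on an additive landscape but stalling on an epistatic one.

```python
from typing import Callable

ALPHABET = "AB"  # toy two-letter alphabet (assumption; real proteins use 20 amino acids)

def single_mutants(seq: str) -> list[str]:
    """All sequences that differ from seq at exactly one position."""
    return [
        seq[:i] + letter + seq[i + 1:]
        for i in range(len(seq))
        for letter in ALPHABET
        if letter != seq[i]
    ]

def adaptive_walk(start: str, fitness: Callable[[str], float]) -> list[str]:
    """Greedy uphill walk: step to the fittest neighbor until none improves fitness."""
    path = [start]
    while True:
        current = path[-1]
        best = max(single_mutants(current), key=fitness)
        if fitness(best) <= fitness(current):
            return path  # local (or global) optimum reached
        path.append(best)

def smooth(seq: str) -> float:
    """Additive, single-peaked landscape: each 'B' adds one unit of fitness."""
    return float(seq.count("B"))

def rugged(seq: str) -> float:
    """Toy epistatic landscape: 'BBB' is the global peak, but every intermediate is worse than 'AAA'."""
    if seq == "BBB":
        return 2.0
    if seq == "AAA":
        return 1.0
    return 0.5

print(adaptive_walk("AAA", smooth))  # climbs one mutation at a time to 'BBB'
print(adaptive_walk("AAA", rugged))  # stays at 'AAA', a local optimum
```

On the rugged toy landscape, reaching the higher peak would require either accepting a temporary fitness loss or introducing several mutations at once, which is the situation described above.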

Quantitative Comparison of Directed Evolution Strategies

Directed evolution methodologies have diversified significantly, from traditional iterative approaches to modern machine-learning assisted platforms. The table below summarizes the performance characteristics of these different strategies based on experimental data.

Table 1: Performance Comparison of Directed Evolution Strategies

| Strategy | Typical Library Size | Key Advantages | Limitations | Reported Fitness Gain | Optimal Application Context |
|---|---|---|---|---|---|
| Traditional DE [2] [1] | 10³-10⁶ variants | Simple implementation; no prior structural knowledge needed; proven success across many proteins | Resource-intensive screening; susceptible to local optima; multiple rounds required | Varies by protein (e.g., >40°C thermostability increase in lipase A [2]) | Smoother landscapes with fewer local optima; when high-throughput screening is available |
| Machine Learning-Assisted DE (MLDE) [4] | 10⁴-10⁵ training variants | More efficient exploration of sequence space; better navigation of epistatic landscapes; can predict high-fitness variants in silico | Requires initial training data; model performance depends on landscape structure | Consistently matches or exceeds traditional DE across 16 diverse protein landscapes [4] | Landscapes with significant epistasis and local optima |
| Focused Training MLDE (ftMLDE) [4] | 10⁴-10⁵ variants | Enhanced training set quality using zero-shot predictors; leverages evolutionary, structural, and stability knowledge | Dependent on quality of zero-shot predictors | Outperforms random sampling for both binding and enzyme activities [4] | Landscapes with challenging attributes (fewer active variants, more local optima) |
| Continuous Evolution (T7-ORACLE) [5] | Effectively unlimited over time | Extremely rapid (a round with each cell division); no manual intervention between rounds; 100,000x higher mutation rate than normal | Technical complexity of system setup; currently limited to E. coli host | Evolved antibiotic resistance up to 5,000x higher in less than a week [5] | When extremely rapid evolution is needed; for exploring vast sequence spaces |

Table 2: Effect of Selection Parameters on Directed Evolution Outcomes in Polymerase Engineering [3]

| Selection Parameter | Impact on Recovery Yield | Impact on Variant Enrichment | Impact on Variant Fidelity | Optimization Recommendation |
|---|---|---|---|---|
| Mg²⁺/Mn²⁺ Concentration | Significant impact | Crucial for shaping polymerase activity | Influences polymerase/exonuclease equilibrium | Requires careful titration to balance activity and fidelity |
| Nucleotide Chemistry | Affects background noise | Directly determines selective pressure | Impacts mechanism of incorporation | Should match desired substrate specificity |
| Selection Time | Influences parasite recovery | Affects stringency | Longer times may favor proofreading | Optimize to minimize false positives while maintaining diversity |
| Additives | Can improve or suppress yield | Modifies enzyme kinetics | Can stabilize specific conformations | Screen common PCR additives systematically |

Experimental Protocols for Key Directed Evolution Approaches

Traditional Directed Evolution Workflow

The standard directed evolution protocol involves iterative cycles of diversification, selection, and amplification [1]. The first step generates genetic diversity in a parental sequence through random mutagenesis techniques such as error-prone PCR or DNA shuffling [6]. Error-prone PCR can be performed using standard PCR protocols with modified conditions, including increased magnesium concentration, addition of manganese, unequal dNTP concentrations, and the use of Taq polymerase, which lacks proofreading activity [6]. This generates a library of variant genes with point mutations across the entire sequence.

The library is then subjected to selection or screening based on the desired function [1]. For binding proteins, phage display is commonly employed, where the target molecule is immobilized on a solid support, the library of variant proteins is flowed over it, poor binders are washed away, and the remaining bound variants are recovered [1]. For enzymatic activities, screening systems individually assay each variant using colorimetric or fluorogenic substrates [6]. High-throughput screening via fluorescence-activated cell sorting (FACS) can achieve throughput of up to 10⁸ variants per day when the evolved property can be linked to a change in fluorescence [6].

The selected variants are amplified, either by PCR or through bacterial hosts, and the process is repeated for multiple rounds [1]. The entire process typically requires 1-2 weeks per round, with 3-6 rounds needed to achieve significant improvements [5].

Machine Learning-Assisted Directed Evolution Protocol

MLDE enhances traditional directed evolution by incorporating machine learning models to predict high-fitness variants [4]. The protocol begins with creating an initial training library of 10⁴-10⁵ variants, which should be randomly sampled from the full combinatorial space [4]. Each variant in this library is experimentally characterized to determine its fitness value.

The sequence-fitness data is used to train supervised machine learning models, such as Gaussian process regression or neural networks, which capture non-additive epistatic effects [4]. For focused training (ftMLDE), the training set quality is enriched using zero-shot predictors that leverage evolutionary, structural, or stability knowledge to selectively sample variants that avoid low-fitness regions [4]. The trained model then predicts fitness across the entire sequence space, identifying high-fitness variants for experimental validation [4].

In active learning DE (ALDE), this process becomes iterative—the model's predictions guide the selection of additional variants for experimental testing, which are then incorporated into the training set to refine the model [4]. This approach is particularly advantageous on rugged landscapes rich in epistasis, where it provides greater benefits compared to traditional DE [4].

Emulsion-Based Selection for Polymerase Engineering

Emulsion-based selection platforms enable the directed evolution of DNA polymerases with novel functions [3]. The protocol involves creating water-in-oil emulsions where individual aqueous droplets serve as microreactors, each containing a single cell expressing a unique polymerase variant, along with substrates and products [3]. This compartmentalization minimizes cross-reactivity and cross-catalysis, allowing partitioning of libraries based on the enzyme function of individual variants [3].

Key steps include:

- Library design: Creating focused libraries targeting specific residues, such as metal-coordinating residues and their neighbors in DNA polymerase [3].

- Emulsion formation: Using microfluidics (which yields monodisperse droplets) or vigorous mixing (which yields polydisperse droplets) to generate emulsion droplets that co-compartmentalize cells, substrates, and products [3].

- Selection: Applying specific selection pressures through factors like nucleotide concentration, nucleotide chemistry, selection time, and divalent metal ion concentration [3].

- Recovery and analysis: Breaking the emulsion, recovering selected variants, and using next-generation sequencing to identify enriched mutants [3].

This method has successfully isolated polymerase variants with improved thermostability, altered substrate specificity, and reverse transcription activity [3].

Validating Directed Evolution Outcomes with NGS Coverage Analysis

Next-generation sequencing has become an indispensable tool for analyzing directed evolution outcomes, enabling comprehensive characterization of variant libraries and their enrichment patterns. Adequate sequencing coverage is critical for accurate identification of significantly enriched mutants [3].

The optimal sequencing coverage depends on the specific goals of the analysis. For identifying enriched variants in selection outputs, a threshold of 50-100x coverage per variant provides precise and accurate identification of active mutants [3]. This coverage is significantly lower than required for genome assembly but sufficient for variant identification in directed evolution contexts [3].
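As a rough planning aid, a per-variant coverage target can be turned into a read budget. The sketch below is a back-of-the-envelope estimate under stated assumptions: variants are evenly represented, and `usable_fraction` is a hypothetical parameter for the share of reads that survive quality filtering and map to the library. Real selection outputs are usually skewed, so the result is better read as a lower bound.

```python
import math

def reads_required(n_variants: int, coverage_per_variant: float,
                   usable_fraction: float = 1.0) -> int:
    """Estimate total reads needed so each variant is seen coverage_per_variant times on average.

    Assumes evenly represented variants; usable_fraction (0-1] is the assumed
    share of raw reads that remain after filtering.
    """
    if not 0 < usable_fraction <= 1:
        raise ValueError("usable_fraction must be in (0, 1]")
    return math.ceil(n_variants * coverage_per_variant / usable_fraction)

# Example: a focused library of 10,000 variants at the 50-100x range quoted above.
for target in (50, 100):
    print(target, reads_required(10_000, target))
```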

For clinical applications or high-stakes validation, more extensive coverage is recommended. One study on gastrointestinal cancer detection achieved >99% sensitivity for single-nucleotide variants at allele frequencies above 10%, with detection remaining consistent down to that 10% threshold [7]. The same study demonstrated 97.2% sensitivity and 99.2% specificity in formalin-fixed, paraffin-embedded specimens [7].

NGS analysis enables not only identification of enriched variants but also assessment of selection quality through metrics like:

- Variant enrichment: Measuring the proportional increase of specific variants after selection [3]

- Recovery yield: The percentage of input variants recovered after selection [3]

- Variant fidelity: For polymerases, the balance between synthesis efficiency and accuracy [3]

- Parasite identification: Detection of variants enriched through non-desired functions [3]
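Of the metrics above, variant enrichment is the most straightforward to compute from read counts. The following is a minimal sketch, assuming counts are already tallied per variant for the pre- and post-selection pools; the log2 ratio and the `pseudocount` (used to avoid division by zero for unobserved variants) are common conventions rather than the specific scoring used in [3].

```python
import math

def enrichment_scores(pre_counts: dict[str, int], post_counts: dict[str, int],
                      pseudocount: float = 0.5) -> dict[str, float]:
    """Return log2(post-selection frequency / pre-selection frequency) per variant."""
    variants = set(pre_counts) | set(post_counts)
    n = len(variants)
    # Add the pseudocount to every variant in both pools so frequencies still sum to 1.
    pre_total = sum(pre_counts.values()) + pseudocount * n
    post_total = sum(post_counts.values()) + pseudocount * n
    scores = {}
    for variant in variants:
        f_pre = (pre_counts.get(variant, 0) + pseudocount) / pre_total
        f_post = (post_counts.get(variant, 0) + pseudocount) / post_total
        scores[variant] = math.log2(f_post / f_pre)
    return scores

# Hypothetical counts for illustration only.
pre = {"WT": 900, "D141A": 50, "E143K": 50}
post = {"WT": 500, "D141A": 450, "E143K": 50}
for variant, score in sorted(enrichment_scores(pre, post).items(), key=lambda kv: -kv[1]):
    print(f"{variant}: {score:+.2f}")
```

Positive scores indicate variants that increased in frequency through selection; scores near zero indicate no change relative to the pool.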

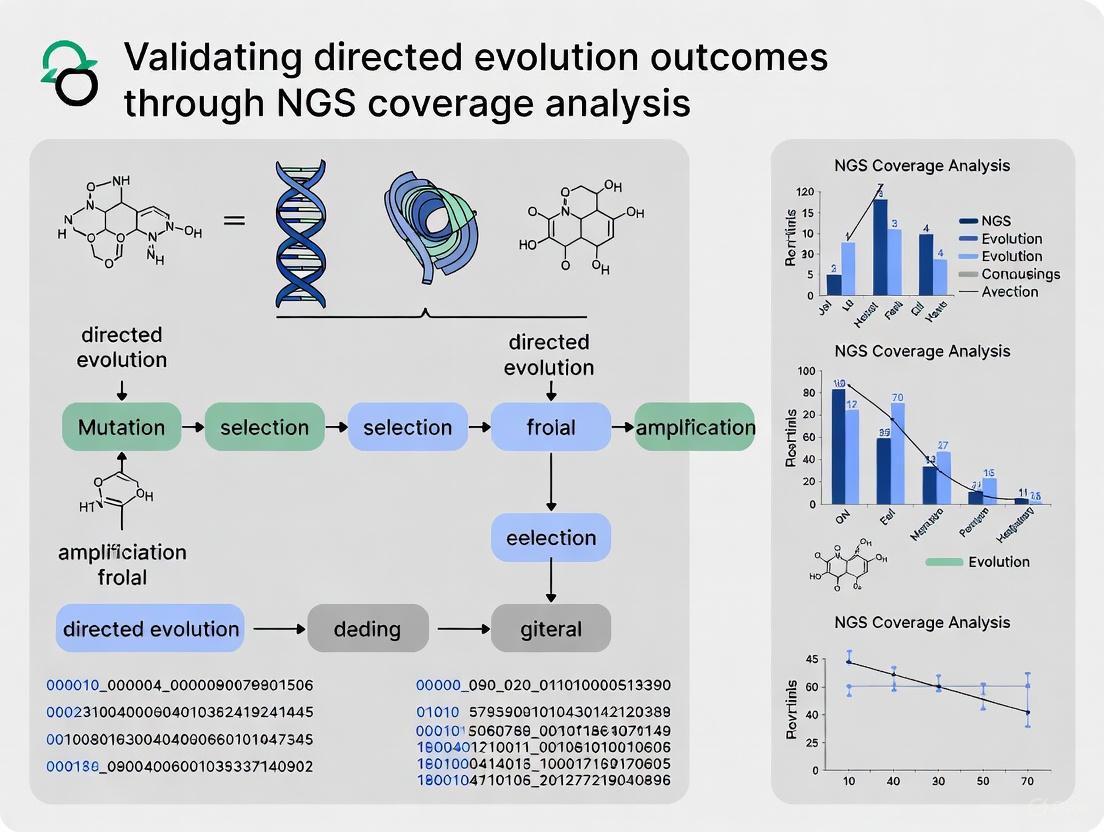

Diagram 1: Directed Evolution Workflow with NGS Validation. This flowchart illustrates the iterative process of directed evolution, highlighting the integration of machine learning and NGS coverage analysis for validation.

The Scientist's Toolkit: Essential Research Reagents and Platforms

Table 3: Essential Research Reagents and Platforms for Directed Evolution

| Tool Category | Specific Products/Platforms | Primary Function | Key Applications |
|---|---|---|---|
| Directed Evolution Platforms | T7-ORACLE [5], OrthoRep [5], EcORep [5] | Continuous evolution systems enabling rapid protein optimization | Evolving therapeutic proteins, antibiotic resistance, enzyme engineering |
| Specialized DNA Polymerases | KAPA HiFi DNA Polymerase [8], KOD DNA Polymerase variants [3] | High-fidelity amplification, XNA synthesis, reverse transcription | NGS library preparation, xenobiotic nucleic acid processing |
| Library Preparation Kits | KAPA HyperPrep Kit [8], KAPA RNA HyperPrep Kit [8] | Efficient construction of sequencing libraries from limited input | RNA-seq, whole transcriptome analysis, NGS workflow optimization |
| Screening Technologies | Phage Display [1] [6], FACS-based methods [6], Emulsion platforms [3] [8] | High-throughput identification of variants with desired properties | Antibody engineering, enzyme evolution, binding protein optimization |
| NGS Validation Solutions | Custom NGS panels [7], Targeted sequencing assays [3] | Validation of directed evolution outcomes, variant enrichment analysis | Gastrointestinal cancer profiling, polymerase variant characterization |

Diagram 2: Landscape Ruggedness Determines Optimal Evolution Strategy. This diagram illustrates how fitness landscape structure influences the choice between traditional and machine learning-assisted directed evolution.

Directed evolution represents a powerful experimental framework for protein engineering, conceptualized as an adaptive walk on a fitness landscape. The efficacy of different evolution strategies—from traditional iterative approaches to modern machine-learning assisted platforms—varies significantly based on landscape characteristics, with MLDE providing particular advantages on rugged landscapes rich in epistasis. The integration of NGS coverage analysis has become indispensable for validating directed evolution outcomes, with optimal coverage thresholds enabling accurate identification of enriched variants. As the field advances, continuous evolution platforms like T7-ORACLE and sophisticated MLDE approaches are dramatically accelerating our ability to engineer proteins with novel functions, opening new frontiers in therapeutic development, industrial biocatalysis, and fundamental evolutionary science.

The Indispensable Role of NGS in Decoding Evolutionary Outcomes

Next-Generation Sequencing (NGS) has revolutionized our ability to decode evolutionary outcomes by providing unprecedented resolution for analyzing genetic changes over time. This transformative technology enables researchers to move beyond theoretical models to empirical validation of evolutionary processes, from directed evolution experiments in laboratory settings to natural population studies in diverse ecosystems. The capacity of NGS to simultaneously sequence millions of DNA fragments in a high-throughput, cost-effective manner has established it as an indispensable tool for modern evolutionary biology [9]. By capturing comprehensive genetic information across entire genomes, NGS provides the quantitative data necessary to validate evolutionary hypotheses, track adaptive trajectories, and understand the complex interplay between selection, genetic drift, and other evolutionary forces.

In directed evolution specifically, NGS serves as a critical validation tool that connects experimental design with functional outcomes. Where traditional methods might only identify a handful of optimized variants, NGS reveals the complete spectrum of mutations underlying improved function, providing insights into the sequence-function relationships that govern protein evolution [10]. This detailed perspective enables researchers to move beyond simply observing that evolution occurred to understanding how it occurred at a molecular level: which mutations arose, how they interacted, and which evolutionary pathways were navigated to reach functional optima.

NGS Technology Platforms for Evolutionary Studies

Comparative Analysis of Sequencing Platforms

The selection of an appropriate NGS platform is fundamental to designing effective evolutionary studies, as each technology offers distinct advantages for specific applications. The table below summarizes the key characteristics of major sequencing platforms relevant to evolutionary research:

Table 1: Comparison of NGS Platforms for Evolutionary Studies

| Platform | Technology | Read Length | Key Strengths | Limitations | Best Applications in Evolutionary Studies |
|---|---|---|---|---|---|
| Illumina | Sequencing-by-synthesis | 36-300 bp | High accuracy (∼99.9%), low cost per base | Short reads limit structural variant detection | Variant calling in populations, tracking mutation trajectories [9] |
| PacBio SMRT | Single-molecule real-time sequencing | 10,000-25,000 bp | Excellent for structural variants, haplotype resolution | Higher cost, lower throughput | Resolving complex genomic regions, detecting recombination events [9] |
| Oxford Nanopore | Nanopore sensing | 10,000-30,000 bp | Ultra-long reads, real-time analysis, portable | Higher error rate (∼5-15%) | Field applications, complete genome assembly [9] |
| Ion Torrent | Semiconductor sequencing | 200-400 bp | Fast run times, simple workflow | Homopolymer errors | Rapid screening of mutant libraries [9] |

Platform Selection Considerations

Choosing the optimal NGS platform requires balancing multiple factors specific to evolutionary research questions. For directed evolution experiments where tracking specific mutations across rounds of selection is paramount, Illumina platforms provide the cost-effective, high-accuracy sequencing needed to identify enriched variants [10]. For studies of population history and divergence dating in natural populations, long-read technologies like PacBio SMRT sequencing enable more complete assembly of genomic regions and better resolution of structural variants that often underlie adaptive evolution [11]. Each platform's characteristics directly influence the evolutionary inferences that can be drawn from the resulting data, making platform selection a critical first step in experimental design.

NGS in Directed Evolution: Experimental Framework

Workflow for Validating Directed Evolution Outcomes

Directed evolution mimics natural selection in laboratory settings to engineer biomolecules with improved or novel functions. NGS integrates throughout this pipeline, both informing selection strategies and validating outcomes.

Experimental Protocol for NGS-Based Validation

The following detailed methodology enables comprehensive analysis of directed evolution outcomes:

Library Preparation for NGS: For protein engineering studies, extract plasmid DNA from pre-selection and post-selection populations. Amplify target genes using barcoded primers to enable multiplexing. For Illumina platforms, use tagmentation-based library preparation (Nextera) or amplification-based methods (TruSeq). For studies requiring maximum accuracy, consider hybrid capture-based approaches that minimize allele dropout [12].

Sequencing Depth Optimization: Determine appropriate sequencing coverage based on library diversity. For typical directed evolution libraries containing 10⁶-10⁹ variants, ensure sufficient depth to detect pre-selection variants at ≥10x coverage. As demonstrated in polymerase engineering studies, cost-effective identification of enriched variants is achievable even at moderate coverages (50-100x), though higher coverage (200x) improves accuracy for low-frequency variants [10].
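One way to reason about these depth targets is to ask how likely a variant is to be observed at least a given number of times. The sketch below assumes read counts per variant follow a Poisson distribution around the mean depth, which is a simplifying assumption (real libraries are typically more dispersed), and the function name is illustrative.

```python
import math

def prob_at_least(k: int, mean_depth: float) -> float:
    """P(X >= k) for X ~ Poisson(mean_depth): chance a variant is read at least k times."""
    below_k = sum(
        math.exp(-mean_depth) * mean_depth ** i / math.factorial(i)
        for i in range(k)
    )
    return 1.0 - below_k

# Probability of seeing a variant at least 10 times at several mean depths.
for depth in (10, 20, 50, 100):
    print(f"mean depth {depth:>3}x -> P(count >= 10) = {prob_at_least(10, depth):.4f}")
```

Under this model, a mean depth equal to the threshold leaves a substantial share of variants below it, which is one reason to plan for a mean depth well above the minimum count required per variant.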

Variant Calling and Filtering: Process raw sequencing data through standardized bioinformatics pipelines. After demultiplexing, align reads to reference sequences using BWA-MEM or similar aligners [11]. Call variants using GATK Best Practices or specialized tools for engineered libraries. Filter based on quality scores, strand bias, and mapping quality. For critical clinical applications, confirm NGS-identified variants using Sanger sequencing, which remains a best practice for validation [13].

Enrichment Calculation and Statistical Analysis: Calculate enrichment scores for variants by comparing frequencies between pre-selection and post-selection populations. Apply statistical frameworks (such as Fisher's exact test with multiple testing correction) to identify significantly enriched mutations. For polymerase engineering studies, this approach has successfully identified mutations that confer improved activity toward xenobiotic nucleic acids (XNAs) [10].
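The statistical step can be sketched with the standard library alone. The example below is an illustrative implementation, not the pipeline used in [10]: it computes a one-sided Fisher's exact p-value for enrichment from a 2x2 table of read counts and then applies the Benjamini-Hochberg procedure as one possible multiple testing correction. Exact integer arithmetic keeps it simple but makes it suitable only for modest read counts.

```python
from math import comb

def fisher_enrichment_p(var_post: int, total_post: int,
                        var_pre: int, total_pre: int) -> float:
    """One-sided Fisher's exact test: P(seeing >= var_post variant reads post-selection by chance)."""
    k_total = var_post + var_pre       # all reads of this variant, both pools
    n_total = total_post + total_pre   # all reads, both pools
    upper = min(k_total, total_post)
    numerator = sum(
        comb(k_total, x) * comb(n_total - k_total, total_post - x)
        for x in range(var_post, upper + 1)
    )
    return numerator / comb(n_total, total_post)

def benjamini_hochberg(pvalues: list[float]) -> list[float]:
    """Return BH-adjusted p-values in the same order as the input."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down, enforcing monotonicity.
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, pvalues[i] * m / rank)
        adjusted[i] = running_min
    return adjusted

# Hypothetical counts: (variant reads post, total post, variant reads pre, total pre).
tables = {"V1": (40, 200, 5, 200), "V2": (12, 200, 10, 200), "V3": (3, 200, 9, 200)}
names = list(tables)
raw = [fisher_enrichment_p(*tables[name]) for name in names]
for name, p, q in zip(names, raw, benjamini_hochberg(raw)):
    print(f"{name}: p={p:.3g}, adjusted={q:.3g}")
```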

NGS in Natural Population Evolution Studies

Tracking Evolutionary Forces in Wild Populations

Beyond laboratory evolution, NGS enables detailed investigation of evolutionary processes in natural populations. By applying various sequencing strategies to population samples, researchers can reconstruct demographic history, identify signatures of selection, and quantify gene flow:

Table 2: NGS Approaches for Studying Natural Population Evolution

| Method | Key Features | Data Output | Evolutionary Insights | Example Application |
|---|---|---|---|---|
| Whole Genome Sequencing | Comprehensive genomic coverage | High-density SNPs, structural variants | Demographic history, selective sweeps, local adaptation | Rhodomyrtus tomentosa population history [11] |
| RAD-Seq | Reduced representation, cost-effective | Thousands of SNPs across many individuals | Population structure, gene flow, outlier loci for selection | Genetic diversity assessment across multiple populations [11] |
| Hybrid Capture | Targeted sequencing of specific regions | Sequence data for loci of interest | Evolution of gene families, phylogenetic relationships | Comparative genomics of adaptive traits [14] |

Experimental Protocol for Population Evolutionary Studies

Implementing NGS in population genetics requires specialized methodological considerations:

Sample Collection and Preservation: For population studies like the Rhodomyrtus tomentosa investigation, collect tissue samples (leaves for plants, blood/tissue for animals) from multiple individuals across geographical ranges, ensuring adequate spatial sampling to resolve population structure. Immediately preserve samples using silica gel, liquid nitrogen, or appropriate preservatives to prevent DNA degradation [11].

Library Preparation for Population Sequencing: Extract high-quality DNA using modified CTAB or commercial kits. For RAD-seq, digest genomic DNA with appropriate restriction enzymes (e.g., MseI and EcoRI), ligate with barcoded adapters, size-select fragments (300-500bp), and amplify with indexing primers. Sequence on Illumina platforms (2×150bp recommended) [11]. For WGS, use mechanical shearing or transposase-based fragmentation followed by library preparation with platform-specific adapters.

Variant Calling and Filtering for Population Data: Process raw sequencing data through quality control (FastQC), demultiplex using process_radtags in Stacks, align to reference genome using BWA-MEM, and call variants using Stacks populations program or similar pipelines. Apply rigorous filtering: remove samples with >20% missing data, exclude SNPs with minor allele count <10, filter based on Hardy-Weinberg equilibrium (p<10⁻⁷), and prune for linkage disequilibrium if needed for specific analyses [11].

Population Genetic Analyses: Calculate standard diversity statistics (π, FIS), population differentiation (FST), and structure (PCA, ADMIXTURE). Test for isolation-by-distance using Mantel tests. For demographic inference, apply PSMC methods to whole-genome data or coalescent-based approaches to SNP data. Identify regions under selection using outlier approaches (e.g., BayPass) or environmental association analyses (RDA) [11].
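To make the differentiation statistic concrete, the sketch below computes Wright's FST for a single biallelic SNP in two equally weighted populations, using the textbook definition (HT - HS) / HT. This is an illustration only; published analyses such as [11] typically rely on sample-size-corrected estimators implemented in dedicated software.

```python
def fst_two_populations(p1: float, p2: float) -> float:
    """Wright's FST at one biallelic locus from allele frequencies in two populations.

    Assumes equal population weights and treats the frequencies as known
    (no correction for finite sample size).
    """
    p_bar = (p1 + p2) / 2
    h_total = 2 * p_bar * (1 - p_bar)                            # expected heterozygosity, pooled
    h_within = (2 * p1 * (1 - p1) + 2 * p2 * (1 - p2)) / 2       # mean within-population value
    if h_total == 0:
        return 0.0  # locus is monomorphic overall; no differentiation to measure
    return (h_total - h_within) / h_total

print(fst_two_populations(0.5, 0.5))  # identical frequencies: no differentiation
print(fst_two_populations(0.9, 0.1))  # strongly differentiated
print(fst_two_populations(1.0, 0.0))  # fixed differences: complete differentiation
```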

Essential Research Reagent Solutions

Successful implementation of NGS in evolutionary studies requires specific reagents and tools optimized for particular applications:

Table 3: Key Research Reagents for NGS-Based Evolutionary Studies

| Reagent/Tool Category | Specific Examples | Function in Evolutionary Studies | Performance Considerations |
|---|---|---|---|
| High-Fidelity Polymerases | KAPA HiFi DNA Polymerase, Q5 High-Fidelity DNA Polymerase | Library amplification with minimal errors, inverse PCR for library construction | Industry-leading fidelity for accurate representation of variant frequencies [8] |
| Library Preparation Kits | KAPA HyperPrep Kits, KAPA RNA HyperPrep Kits | Efficient library construction from diverse input materials | Higher library yields, reduced duplicates, improved coverage uniformity [8] |
| Directed Evolution Enzymes | Evolved polymerases for XNA synthesis | Enable novel substrate incorporation for expanded functional selection | Engineered through directed evolution for specialized activities not found in nature [8] [10] |
| Variant Calling Pipelines | Stacks, GATK, BWA-MEM, SAMtools | Identify genetic variants from raw sequencing data | Critical for accurate mutation tracking in directed evolution and population studies [11] |
| Selection Reagents | Custom nucleotide analogs (2′F-rNTPs), specialized cofactors | Create selective environments for specific enzyme functions | Concentration optimization crucial for success of directed evolution campaigns [10] |

Data Analysis and Coverage Considerations

Determining Optimal Sequencing Coverage

A critical aspect of NGS experimental design for evolutionary studies is determining appropriate sequencing coverage, which varies significantly based on research goals.

Addressing Technical Challenges in NGS-Based Evolutionary Studies

Despite its transformative potential, NGS implementation in evolutionary studies presents specific technical challenges that require strategic solutions:

Error Rate Management: All NGS platforms exhibit characteristic error profiles that can be misconstrued as evolutionary mutations. For Illumina platforms, error rates typically range from 0.1-1% [9]. Effective error mitigation includes using unique molecular identifiers (UMIs) to distinguish true biological variants from sequencing artifacts, implementing duplicate removal, and applying Bayesian statistical approaches that model error probabilities during variant calling.
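The idea behind UMI-based error suppression can be shown in a few lines. The sketch below is a simplified illustration under stated assumptions: reads sharing a UMI are taken to derive from the same original molecule, UMIs are matched exactly (no UMI error correction), and reads within a group are already trimmed to the same length. A per-position majority vote then removes isolated sequencing errors.

```python
from collections import Counter, defaultdict

def umi_consensus(reads: list[tuple[str, str]]) -> dict[str, str]:
    """Collapse (umi, sequence) pairs into one majority-vote consensus sequence per UMI."""
    groups: dict[str, list[str]] = defaultdict(list)
    for umi, seq in reads:
        groups[umi].append(seq)

    consensus = {}
    for umi, seqs in groups.items():
        length = len(seqs[0])
        # Keep only reads matching the first read's length (assumption: pre-trimmed input).
        same_length = [s for s in seqs if len(s) == length]
        consensus[umi] = "".join(
            Counter(s[i] for s in same_length).most_common(1)[0][0]
            for i in range(length)
        )
    return consensus

# Hypothetical reads: the third read carries a single sequencing error (G -> C).
reads = [("AAT", "ACGT"), ("AAT", "ACGT"), ("AAT", "ACCT"), ("GGC", "TTTT")]
print(umi_consensus(reads))
```

Counting consensus sequences rather than raw reads also removes PCR duplicates, so variant frequencies reflect distinct input molecules.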

Tumor Purity Considerations in Somatic Evolution: For cancer evolution studies, accurate variant detection requires careful assessment of tumor purity. Pathologist review of hematoxylin and eosin-stained slides enables estimation of tumor cell fraction, which is critical for interpreting mutant allele frequencies and copy number alterations [12]. Conservative estimation is recommended, as inflammatory infiltrates are easily overlooked and can lead to overestimation of tumor proportion.
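The link between tumor purity and observed allele frequency follows from simple mixing arithmetic. The sketch below assumes a clonal mutation, a known tumor copy number at the locus, and diploid normal cells; the function and parameter names are illustrative.

```python
def expected_vaf(purity: float, tumor_copy_number: int = 2,
                 mutant_copies: int = 1, normal_copy_number: int = 2) -> float:
    """Expected variant allele fraction for a clonal somatic mutation in a tumor/normal mixture."""
    if not 0 <= purity <= 1:
        raise ValueError("purity must be between 0 and 1")
    # Total allele copies at the locus, weighted by the cell-type proportions.
    total_copies = purity * tumor_copy_number + (1 - purity) * normal_copy_number
    return purity * mutant_copies / total_copies

# A heterozygous mutation in a diploid region at different purities.
for purity in (0.2, 0.4, 0.8, 1.0):
    print(f"purity {purity:.0%} -> expected VAF {expected_vaf(purity):.2f}")
```

At 20% purity, for example, a heterozygous clonal mutation is expected near 10% allele frequency, which is why the purity estimate directly constrains the sequencing depth needed for reliable detection.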

Bioinformatics Challenges: The enormous data volumes generated by NGS necessitate sophisticated computational infrastructure and analytical approaches [15]. Beyond standard variant calling, specialized algorithms are required for detecting copy number alterations (CNAs) and structural variants (SVs) in cancer evolution studies, or for identifying introgression and selective sweeps in population genomic datasets [12].

Next-Generation Sequencing has fundamentally transformed our ability to decode evolutionary outcomes across biological scales, from single proteins to entire ecosystems. By providing comprehensive, high-resolution genetic data, NGS enables researchers to move beyond inference to direct observation of evolutionary processes. In directed evolution, NGS reveals the complex mutational patterns underlying functional optimization, guiding protein engineering efforts. In natural populations, NGS illuminates the historical demographic events and selective pressures that shape contemporary biodiversity. As sequencing technologies continue to advance, becoming more accessible and cost-effective, their integration with evolutionary studies will undoubtedly yield deeper insights into the fundamental mechanisms driving biological change. The continued development of specialized analytical frameworks and experimental approaches will further enhance our ability to extract meaningful evolutionary understanding from the vast datasets generated by these powerful technologies.

In the field of next-generation sequencing (NGS), particularly when validating outcomes from directed evolution experiments, a precise understanding of sequencing depth and coverage is non-negotiable. These two metrics form the bedrock of data quality and reliability, directly influencing the confidence with which scientists can call genetic variants, assemble genomes, and interpret functional selections. Although the terms are often used interchangeably, depth and coverage describe distinct, complementary aspects of sequencing data. Sequencing depth (or read depth) refers to the number of times a specific nucleotide is read during the sequencing process, providing a measure of confidence at individual base positions [16]. Sequencing coverage, by contrast, describes the proportion of the target genome or region that has been sequenced at least once, indicating the completeness of the data [16] [17].

The confusion between these terms is pervasive, yet overcoming it is critical for rigorous NGS experimental design, especially in applied fields like directed evolution where identifying enriched mutants amidst a diverse library depends entirely on data completeness and accuracy [10]. This guide objectively compares these core metrics, outlines their practical implications, and provides a framework for their application in validating directed evolution outcomes, supported by current experimental data and protocols.

Defining the Metrics: A Comparative Analysis

Sequencing Depth: The Measure of Confidence

- Definition: Sequencing depth is a localized metric, defined as the number of sequencing reads that align to a specific genomic coordinate [16]. For example, if a single nucleotide is sequenced 30 times, the depth at that position is 30x.

- Purpose and Importance: Depth underpins the statistical confidence of variant calls. A higher depth means more independent observations of a base, making it easier to distinguish a true genetic variant from a random sequencing error. This is paramount in directed evolution for detecting low-frequency variants within a heterogeneous pool or for confirming a mutation with high certainty [16] [18].

- Measurement: It is typically expressed as an average across the entire target region (e.g., "The library was sequenced to 100x depth") [16].

Sequencing Coverage: The Measure of Completeness

- Definition: Sequencing coverage is a global metric, referring to the percentage of the target region (e.g., a genome, exome, or gene panel) that is represented by at least one sequencing read [16] [17].

- Purpose and Importance: Coverage ensures the experiment captures the entire region of interest. Low coverage results in "gaps"—genomic segments with no data—which can lead to missed variants and an incomplete picture of the genetic landscape. In directed evolution, low coverage could mean entire mutant sequences are absent from the data, severely biasing the interpretation of selection outcomes [16].

- Measurement: It is usually expressed as a percentage (e.g., "95% of the exome was covered at 1x") [16].

Table 1: Core Differences Between Sequencing Depth and Coverage

| Feature | Sequencing Depth | Sequencing Coverage |

|---|---|---|

| Definition | Number of times a nucleotide is sequenced [16] | Proportion of the target region sequenced [16] |

| Answers the Question | "How confident can I be in this base call?" | "How much of my target has been sequenced?" |

| Primary Role | Confidence & accuracy for variant calling [16] | Completeness & comprehensiveness of data [16] |

| Impact of Low Values | Inability to call variants reliably; false positives/negatives | Gaps in data; entire variants missed |

| Typical Unit | Multiplier (e.g., 30x, 100x) | Percentage (e.g., 95%) |

The Interrelationship and Trade-offs

Depth and coverage are intrinsically linked but are not the same. It is possible to have high depth but low coverage if a small subset of the genome is sequenced an enormous number of times while other regions are missed entirely. Conversely, one can have high coverage but low depth if every region is sequenced, but only once or twice, providing little confidence in the base calls [16].
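To make the distinction concrete, the following minimal sketch computes both metrics from a list of per-position read depths. The function names and the two ten-position example targets are hypothetical, chosen only to illustrate how the same mean depth can hide very different coverage.

```python
from statistics import mean

def mean_depth(per_base_depth):
    """Average number of reads covering each position of the target."""
    return mean(per_base_depth)

def breadth_of_coverage(per_base_depth, min_depth=1):
    """Fraction of target positions covered by at least `min_depth` reads."""
    covered = sum(1 for d in per_base_depth if d >= min_depth)
    return covered / len(per_base_depth)

# Hypothetical targets with the same total number of aligned bases.
skewed = [150, 150, 0, 0, 0, 0, 0, 0, 0, 0]  # high local depth, low coverage
even = [30] * 10                              # same mean depth, full coverage

for name, depths in (("skewed", skewed), ("even", even)):
    print(name, mean_depth(depths), breadth_of_coverage(depths))
```

Both targets report the same mean depth, but only the second has every position represented, which is why the two metrics are best reported together.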

The relationship is often described by the Lander/Waterman equation: C = LN / G, where C is coverage, L is read length, N is the number of reads, and G is the haploid genome length [18] [17]. Note that C in this equation is the average number of times each base is sequenced (a mean depth, such as 30x), not the percentage-of-target metric defined above. The equation shows that for a fixed amount of sequencing capacity (LN), a larger genome (G) yields lower coverage. In practice, achieving both high depth and high breadth of coverage requires a careful balance and is often constrained by cost and sequencing resources [16] [18].

Figure 1: The Interdependent Relationship Between Sequencing Goals and Resource Allocation. Achieving high depth and coverage are both key objectives, but they compete for finite sequencing resources, requiring researchers to prioritize based on their primary experimental goal.

Quantitative Guidelines and Technology Comparisons

Established Coverage Recommendations

Required depth and coverage are not one-size-fits-all; they are dictated by the specific application and the biological question. The following table summarizes standard recommendations for common NGS methods.

Table 2: Recommended Sequencing Coverage for Common NGS Applications [17]

| Sequencing Method | Recommended Coverage | Rationale |

|---|---|---|

| Whole Genome Sequencing (WGS) | 30x - 50x for human | Balances cost with high-confidence variant calling across the genome. |

| Whole-Exome Sequencing (WES) | 100x | Higher depth needed as exomes capture only 1-2% of the genome, focusing on protein-coding regions of high interest. |

| RNA Sequencing | Often calculated in millions of reads | Detecting lowly expressed genes requires greater sampling (depth). |

| ChIP-Seq | 100x | Needed to confidently identify transcription factor binding sites. |

Recent technological advances are reshaping these guidelines. Pacific Biosciences highlights that with their high-fidelity long-read (HiFi) technology, a 20x human genome can achieve over 99% of the variant detection performance (F1 score) of a 30x genome for single nucleotide variants (SNVs) and structural variants (SVs), and over 98% for indels [19]. This demonstrates that read accuracy is as important as raw depth.

The Critical Role of Coverage Uniformity

A key factor in this efficiency is coverage uniformity—how evenly reads are distributed across the target [19]. Two datasets with the same average depth (e.g., 30x) can have vastly different scientific value. One may have poor uniformity, with depths ranging from 0x in some areas to 60x in others, creating gaps and over-sampled regions. The other, with high uniformity (e.g., most bases covered between 25x-35x), provides reliable information genome-wide [19]. Hybridization-capture methods generally offer better uniformity than amplicon-based approaches, which can suffer from dropout due to primer mismatches [12].
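One simple way to quantify the uniformity contrast described above is to ask what fraction of positions falls inside a chosen depth band. The sketch below assumes per-position depths are already available as a list. The band limits (25x to 35x) mirror the example in the text, and both datasets are hypothetical.

```python
def fraction_within_band(per_base_depth, lower, upper):
    """Fraction of positions whose depth lies in the closed range [lower, upper]."""
    inside = sum(1 for d in per_base_depth if lower <= d <= upper)
    return inside / len(per_base_depth)

# Two hypothetical datasets, both with a mean depth of 30x.
poor_uniformity = [0, 60, 0, 60, 30, 30, 5, 55, 10, 50]
high_uniformity = [28, 31, 30, 29, 32, 30, 27, 33, 30, 30]

for name, depths in (("poor", poor_uniformity), ("high", high_uniformity)):
    mean_depth = sum(depths) / len(depths)
    print(name, mean_depth, fraction_within_band(depths, 25, 35))
```

Other uniformity summaries exist and may be preferred by a given pipeline; this band-based fraction is only an illustration of the idea.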

Application in Directed Evolution: A Case Study

Directed evolution mimics natural selection to engineer proteins with improved properties. Validating these experiments requires NGS to identify which mutants are enriched after selection, placing unique demands on depth and coverage.

Experimental Protocol for Variant Enrichment Analysis

A 2024 study on polymerase engineering provides a robust methodological framework [10]:

- Library Construction & Selection: Create a focused saturation mutagenesis library targeting specific active-site residues (e.g., a 5-point library targeting residues L403, D404, F405, L408, Y409). Subject the library to emulsion-based compartmentalization for activity-based selection (e.g., Compartmentalized Self-Replication - CSR) under different selective pressures (varying nucleotide chemistry, metal cofactors, time).

- NGS Library Prep and Sequencing: Isolate DNA from pre- and post-selection populations. Prepare sequencing libraries (e.g., using Illumina kits) from both to track enrichment. Sequence to a depth that ensures comprehensive sampling of the variant library.

- Variant Identification and Analysis: Map reads to a reference sequence. Count the frequency of each unique variant in the pre- and post-selection libraries. Calculate fold-enrichment for variants that survive selection. The study confirmed that cost-effective, precise, and accurate identification of active variants is possible even at low coverages, though the specific threshold must be determined empirically [10].
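The counting and fold-enrichment step can be sketched as follows. This is an illustrative calculation, not the cited study's pipeline: the variant labels (five-letter strings for the five targeted residues), the read counts, and the pseudocount of 0.5 are all assumptions made for the example.

```python
def fold_enrichment(pre_counts, post_counts, pseudocount=0.5):
    """Ratio of post-selection to pre-selection frequency for every variant.

    A pseudocount is added to each count so that variants absent from one
    population do not produce a division by zero (an assumed convention).
    """
    variants = set(pre_counts) | set(post_counts)
    pre_total = sum(pre_counts.values()) + pseudocount * len(variants)
    post_total = sum(post_counts.values()) + pseudocount * len(variants)
    enrichment = {}
    for variant in variants:
        pre_freq = (pre_counts.get(variant, 0) + pseudocount) / pre_total
        post_freq = (post_counts.get(variant, 0) + pseudocount) / post_total
        enrichment[variant] = post_freq / pre_freq
    return enrichment

# Hypothetical read counts per variant before and after selection.
pre = {"LDFLY": 100, "LDYLY": 100, "MDFLY": 100}
post = {"LDFLY": 50, "LDYLY": 400, "MDFLY": 10}

for variant, value in sorted(fold_enrichment(pre, post).items()):
    print(variant, round(value, 2))
```

Values above 1 indicate variants that gained frequency during selection. With `pseudocount=0` the function returns raw frequency ratios but fails for any variant missing from the pre-selection counts.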

The Scientist's Toolkit: Key Reagents for NGS in Directed Evolution

Table 3: Essential Research Reagents for Directed Evolution NGS Workflows

| Reagent / Material | Function in the Workflow |

|---|---|

| Saturation Mutagenesis Library | Provides the genetic diversity for selection; the starting point of the experiment [10]. |

| Emulsion Reagents (Oil, Surfactants) | Creates water-in-oil droplets for compartmentalizing individual variants and linking genotype to phenotype [10]. |

| NGS Library Prep Kit (e.g., Illumina) | Prepares the genetic material from selected variants for sequencing by fragmenting and adding platform-specific adapters [12]. |

| Selection Substrates (e.g., 2'F-rNTPs) | The challenging substrate or condition that defines the selective pressure for enriching functional mutants [10]. |

| High-Fidelity DNA Polymerase | Used for accurate amplification steps during library construction and PCR validation [10]. |

Optimizing Depth and Coverage for Your Experiment

Selecting the right metrics is a strategic decision. The following flowchart provides a logical framework for determining optimal sequencing depth, tailored to different research goals.

Figure 2: A Strategic Framework for Determining Optimal Sequencing Depth and Technology Selection. This decision tree guides researchers in prioritizing sequencing parameters based on their primary experimental objective.

A Step-by-Step Selection Guide

- Define Study Objectives: The primary goal is the most important factor [16]. Are you searching for rare variants in a mixed population (requiring high depth) or ensuring you miss no variants in a clinical gene panel (requiring high coverage)?

- Consider Sample Characteristics: The quality and quantity of input DNA/RNA can limit achievable coverage. Formalin-fixed, paraffin-embedded (FFPE) samples, for instance, yield degraded nucleic acids that often require higher depth to achieve confident base calling [20].

- Understand Target Variation: Different variant types have different detection requirements. Single Nucleotide Variants (SNVs) can be called at lower depths than insertions/deletions (indels) or complex structural variants [12] [19].

- Account for Technology: As comparative studies show, highly accurate long-read technologies (e.g., PacBio HiFi) can achieve high confidence at lower nominal depths than earlier short-read technologies, due to their longer read lengths and high per-read accuracy [19].

- Balance with Resources: Finally, depth and coverage must be traded off against cost and data storage capabilities [16] [18]. Deeper sequencing generates more data, increasing expenses and computational burdens.

In next-generation sequencing, "depth" and "coverage" are not synonymous; they are distinct, critical metrics for data quality and completeness. Sequencing depth dictates confidence in base calls, while sequencing coverage ensures no part of the genomic target is missed. For researchers validating directed evolution outcomes, a deliberate strategy that prioritizes both sufficient depth to identify low-frequency, enriched mutants and sufficient coverage to ensure the entire mutant library is sampled is essential for drawing meaningful, accurate conclusions. By applying the frameworks, guidelines, and experimental precedents outlined here, scientists can design more efficient, reliable, and cost-effective NGS experiments, fully leveraging the power of sequencing to decode complex biological selections.

In the rigorous validation of directed evolution experiments, next-generation sequencing (NGS) coverage is not merely a technical metric; it is the foundational determinant of statistical confidence. The precise identification of enriched protein variants—the very outcome of a successful directed evolution campaign—hinges on the depth and breadth of sequencing data. Coverage threshold refers to the minimum number of times a specific nucleotide base must be sequenced to ensure a variant call is accurate and reproducible. Within the context of directed evolution, where distinguishing true beneficial mutations from background noise is paramount, applying appropriate coverage thresholds transforms NGS from a simple sequencing tool into a powerful engine for functional discovery. This guide examines the critical link between coverage thresholds and variant discovery confidence, providing a framework for researchers to validate directed evolution outcomes with statistical rigor.

Understanding Coverage and Depth in NGS

Core Definitions and Distinctions

Although often used interchangeably, "coverage" and "depth" describe distinct but related concepts in NGS data analysis. Precise understanding of these terms is essential for experimental design and data interpretation.

- Sequencing Depth (Read Depth): This metric describes the number of times a specific nucleotide is read during sequencing. It is expressed as an average across the genome or target region (e.g., 100x) and directly impacts base-calling accuracy. Higher depth provides redundancy, enabling error correction and confident variant calling [16].

- Sequencing Coverage: This refers to the percentage of the target genome or region that has been sequenced at least once. It is typically expressed as a percentage (e.g., 95% coverage) and indicates the comprehensiveness of the sequencing effort. Gaps in coverage mean certain regions are completely missing from the data [16].

These metrics are closely linked but not interchangeable. While increasing sequencing depth generally improves the likelihood of achieving comprehensive coverage, biases in library preparation or genomic complexities can still leave some regions under-represented or entirely missing despite high overall depth [16].

The Lander/Waterman Equation: Predicting Coverage

The theoretical foundation for understanding sequencing coverage was established by the Lander/Waterman equation, which predicts genome coverage based on known parameters [17]:

C = LN / G

Where:

- C = Coverage

- L = Read length

- N = Number of reads

- G = Haploid genome length

This equation provides a statistical framework for experimental planning, allowing researchers to calculate the sequencing effort required to achieve a desired coverage level for their specific target, whether it's a full genome, exome, or a custom directed evolution library.
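As a planning aid, the equation can be rearranged to estimate how many reads a run needs. The sketch below is a direct transcription of C = LN / G. The read length, target size, and desired coverage in the example are hypothetical inputs, and C is interpreted as average fold coverage.

```python
import math

def expected_coverage(read_length, n_reads, target_length):
    """Lander/Waterman average fold coverage: C = L * N / G."""
    return read_length * n_reads / target_length

def reads_required(desired_coverage, read_length, target_length):
    """Smallest whole number of reads N such that L * N / G >= C."""
    return math.ceil(desired_coverage * target_length / read_length)

# Hypothetical plan: 30x over a 3,000,000,000 bp target with 150 bp reads.
n_reads = reads_required(30, 150, 3_000_000_000)
print(n_reads, expected_coverage(150, n_reads, 3_000_000_000))
```

The result is an idealized average: it assumes every read maps to the target and ignores duplicates, off-target reads, and uneven coverage, so real runs generally need a margin on top of it.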

Coverage Requirements Across NGS Applications

Coverage requirements vary significantly across different NGS applications, reflecting their distinct biological questions and technical considerations. The following table summarizes recommended coverage thresholds for common applications:

| Sequencing Method | Recommended Coverage | Primary Rationale | Key Considerations |

|---|---|---|---|

| Whole Genome Sequencing (Human) | 30× to 50× [17] | Balance of comprehensive mapping & cost | Dependent on application and statistical model; sufficient for variant calling in diploid genomes |

| Whole-Exome Sequencing | 100× [17] | Focus on protein-coding regions | Enables reliable detection of heterozygous variants in critical regions |

| RNA Sequencing | Varies (often 20-50 million reads) [17] | Capture dynamic expression range | Depth requirements increase for detection of rare transcripts and splice variants |

| ChIP-Seq | 100× [17] | Identify protein-DNA binding sites | Must account for antibody efficiency and background signal |

| Directed Evolution Libraries | Varies by design [10] | Distinguish enriched variants from background | Must cover full library diversity; higher depth for rare variants |

Special Considerations for Directed Evolution

In directed evolution experiments, coverage requirements extend beyond standard genomic applications. The primary goal is to confidently identify enriched variants resulting from functional selection pressures. A recent study demonstrated that cost-effective, precise identification of active variants is possible even at relatively low coverages with appropriate statistical support [10]. However, the optimal coverage depends on multiple factors:

- Library Diversity: Larger libraries require greater sequencing depth to ensure all variants are adequately represented.

- Selection Stringency: Highly stringent selections with few surviving variants may require deeper sequencing to reliably detect enriched sequences.

- Variant Frequency: Detection of rare variants (e.g., present at <0.1% frequency) demands significantly higher coverage than common variants.
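The effect of library diversity on required sequencing effort can be estimated with a simple sampling model. The sketch below assumes an idealized library in which every variant is equally abundant and reads are drawn independently, so the chance that a given variant is seen at least once is approximately 1 - exp(-N/V) for N reads and V variants. Real libraries are skewed, so these numbers are best treated as lower bounds. The library size of 20^5 is a hypothetical protein-level count for five fully randomized positions.

```python
import math

def expected_fraction_observed(n_reads, library_size):
    """Expected fraction of variants seen at least once (uniform library)."""
    return 1.0 - math.exp(-n_reads / library_size)

def reads_for_fraction(target_fraction, library_size):
    """Reads needed so the expected observed fraction reaches the target."""
    return math.ceil(-library_size * math.log(1.0 - target_fraction))

library_size = 20 ** 5  # hypothetical: five positions, 20 amino acids each
for multiple in (1, 3, 10):
    n_reads = multiple * library_size
    print(multiple, round(expected_fraction_observed(n_reads, library_size), 4))
print(reads_for_fraction(0.95, library_size))
```

Under this model, sequencing as many reads as there are variants still leaves roughly a third of the library unobserved, which illustrates why oversampling is needed for larger libraries.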

Research indicates that establishing a systematic pipeline for optimizing selection parameters, including coverage requirements, can significantly enhance the efficiency of directed evolution strategies for polymerase engineering and other enzyme optimization projects [10].

Experimental Protocols for Coverage Analysis

Establishing Minimum Coverage Thresholds

Determining appropriate coverage thresholds requires a methodical approach that considers the specific goals of the directed evolution experiment:

- Define Variant Detection Goals: Establish the minimum variant frequency that must be detected with confidence (e.g., variants present at 0.1% frequency post-selection).

- Calculate Theoretical Requirements: Use statistical models (e.g., Poisson distribution) to calculate the depth needed to detect variants at the desired frequency with 95% confidence.

- Account for Technical Variation: Include buffer for technical variation, sequencing errors, and amplification biases that may require additional coverage.

- Validate Empirically: Use control samples with known variants at various frequencies to empirically verify detection sensitivity at different coverage levels.
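The theoretical-requirement step can be sketched with a Poisson model. The functions below are an assumption-laden illustration: reads are treated as independent draws, sequencing error is ignored, and `min_reads` (the number of supporting reads demanded before a variant counts as detected) is a hypothetical parameter that each lab would set for itself.

```python
import math

def poisson_detection_probability(depth, frequency, min_reads):
    """P(at least `min_reads` variant reads) when the count is Poisson(depth * frequency)."""
    lam = depth * frequency
    term = math.exp(-lam)  # P(X = 0)
    below = 0.0
    for i in range(min_reads):
        below += term
        term *= lam / (i + 1)  # P(X = i + 1) from P(X = i)
    return 1.0 - below

def depth_for_detection(frequency, min_reads=1, confidence=0.95):
    """Smallest depth at which the detection probability reaches `confidence`."""
    low, high = 1, 1
    while poisson_detection_probability(high, frequency, min_reads) < confidence:
        high *= 2
    while low < high:  # binary search; the probability rises with depth
        mid = (low + high) // 2
        if poisson_detection_probability(mid, frequency, min_reads) >= confidence:
            high = mid
        else:
            low = mid + 1
    return low

# Variant present at 0.1% frequency, 95% confidence.
print(depth_for_detection(0.001, min_reads=1))
print(depth_for_detection(0.001, min_reads=5))
```

Requiring several supporting reads rather than one raises the necessary depth substantially, which is one reason the buffer for technical variation in the third step matters.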

For clinical NGS applications, the Association for Molecular Pathology and College of American Pathologists have established best practice guidelines that emphasize an error-based approach, identifying potential sources of errors throughout the analytical process and addressing these through test design and validation [12]. While directed evolution experiments may not require clinical-grade validation, these principles provide a robust framework for establishing confidence in variant calls.

Coverage-Based Variant Calling Methodology

The following workflow illustrates the process of establishing and applying coverage thresholds in directed evolution experiments:

Experimental Workflow for Coverage-Based Variant Calling

This workflow emphasizes that applying coverage thresholds (Step 5) is a critical gatekeeping step that occurs after initial data processing but before final variant calling. This ensures that only positions with sufficient data quality contribute to the identification of putative enriched variants.
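The gatekeeping idea can be illustrated with a small filter that separates positions meeting a minimum depth from those that should be masked before variant calling. The function name, the position numbers, and the threshold of 20 reads are hypothetical. This is a sketch of the concept rather than any specific tool's behavior.

```python
def apply_coverage_threshold(site_depths, min_depth):
    """Split positions into those passing the depth threshold and those masked."""
    passed = {pos: depth for pos, depth in site_depths.items() if depth >= min_depth}
    masked = sorted(pos for pos, depth in site_depths.items() if depth < min_depth)
    return passed, masked

# Hypothetical per-position depths for five targeted residues.
depths = {403: 120, 404: 8, 405: 64, 408: 19, 409: 250}
passed, masked = apply_coverage_threshold(depths, min_depth=20)
print(sorted(passed), masked)
```

Reporting the masked positions alongside the calls makes it explicit where the absence of a variant reflects missing data rather than a negative result.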

Validation Using Orthogonal Methods

Even with appropriate coverage thresholds, orthogonal validation of key variants remains essential for high-stakes applications. Sanger sequencing has traditionally served as the gold standard for variant confirmation, though studies now show >99% concordance between NGS and Sanger sequencing for single nucleotide variants (SNVs) in high-complexity regions [21]. For directed evolution outcomes, functional validation of enriched variants through individual expression and characterization provides the most biologically relevant confirmation.

Emerging approaches include machine learning models that can classify variants into high or low-confidence categories based on multiple quality metrics including read depth, allele frequency, sequencing quality, and mapping quality [21]. These models can significantly reduce the burden of confirmatory testing while maintaining high precision and specificity.

Quantitative Data on Coverage and Variant Detection

Coverage Requirements for Different Variant Types

The relationship between sequencing coverage and variant detection confidence varies significantly by variant type and context. The following table summarizes key findings from empirical studies:

| Variant Type | Minimum Recommended Coverage | Detection Confidence | Application Context |

|---|---|---|---|

| Single Nucleotide Variants (SNVs) | 20-30× [12] | >99% [21] | Germline variants in clinical testing |

| Heterozygous SNVs | 30-50× [22] | High with balanced allele ratio | Diploid genomes |

| Rare Somatic Variants | 100-1000× [12] | Varies with variant allele frequency | Cancer genomics (5-10% VAF) |

| Insertions/Deletions (Indels) | 50-100× [12] | Lower than SNVs due to alignment issues | Complex regions require higher depth |

| Gene Amplifications | 50-100× [23] | Strong correlation with FISH (ρ=0.847) [23] | Copy number variation in cancer |

| Directed Evolution Variants | Varies by library size | Enables identification of significantly enriched mutants [10] | Functional screening outputs |

Coverage Impact on False Positive and Negative Rates

The relationship between coverage and variant calling accuracy follows a predictable statistical pattern. In a study evaluating germline genetic variants, researchers found that integrating machine learning models with quality metrics achieved 99.9% precision and 98% specificity in identifying true positive heterozygous SNVs [21]. This highlights how appropriate coverage thresholds combined with quality filters can dramatically improve variant calling accuracy.

For copy number variation, a 2025 study demonstrated that NGS fold changes correlated strongly with FISH metrics (Spearman's ρ = 0.847 for gene copy number) when detecting MET and HER2 amplifications in non-small cell lung cancer [23]. The researchers established a fold change cutoff of 2.0 to effectively distinguish amplified from non-amplified cases, demonstrating how coverage-based metrics can reliably predict molecular events previously requiring orthogonal confirmation.

Research Reagent Solutions for Coverage Optimization

Successful NGS library preparation and coverage analysis depend on specialized reagents and systems. The following table details key solutions referenced in the literature:

| Reagent/Solution | Manufacturer | Primary Function | Application in Directed Evolution |

|---|---|---|---|

| KAPA HiFi DNA Polymerase | Roche [8] | High-fidelity library amplification | Maintains sequence integrity during library prep |

| KAPA HyperPrep Kits | Roche [8] | Library preparation efficiency | Higher library yields, reduced duplicates |

| KAPA HyperPlus Reagents | Roche [21] | Enzymatic fragmentation & library prep | Automated workflow compatibility |

| Twist Target Enrichment | Twist Bioscience [21] [23] | Hybridization-based capture | Custom panel design for specific targets |

| NovaSeq Sequencing System | Illumina [21] [23] | High-throughput sequencing | Enables deep coverage for large libraries |

| BL21 (DE3) Expression Strain | NEB [10] | Protein expression for functional testing | Validation of enriched variant function |

The Relationship Between Coverage and Statistical Confidence

Statistical Models for Coverage and Variant Calling

Confidence in variant calling rises with coverage depth, but with diminishing returns as depth increases. At low coverage (10-15x), base calling uncertainties lead to higher false negative rates, particularly for heterozygous variants. As coverage increases to 30x, the probability of missing a heterozygous variant drops significantly, approaching the level commonly treated as the standard for germline variant detection [22].
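A binomial model gives a rough feel for how depth affects the chance of missing a heterozygous variant. The sketch assumes each read samples the variant allele independently with probability 0.5, ignores sequencing error and allelic bias, and uses a hypothetical calling rule that requires at least three variant-supporting reads.

```python
from math import comb

def prob_missed_het(depth, min_alt_reads=3, alt_fraction=0.5):
    """P(fewer than `min_alt_reads` variant reads) under Binomial(depth, alt_fraction)."""
    return sum(
        comb(depth, k) * alt_fraction ** k * (1 - alt_fraction) ** (depth - k)
        for k in range(min_alt_reads)
    )

for depth in (10, 15, 30):
    print(depth, prob_missed_het(depth))
```

Under these idealized assumptions the miss probability falls by several orders of magnitude between 10x and 30x. Real data carry additional error sources, so empirical validation is still needed.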

For directed evolution applications, the optimal coverage represents a balance between statistical confidence and practical constraints. A recent study noted that establishing a sequencing coverage threshold for accurate identification of significantly enriched mutants allowed researchers to streamline selection processes using smaller libraries and more cost-effective NGS sequencing [10]. This approach demonstrates how understanding coverage thresholds can improve the efficiency of directed evolution pipelines.

Impact of Coverage Uniformity

While average coverage provides a useful summary metric, coverage uniformity across the target region significantly impacts variant discovery confidence. The Inter-Quartile Range (IQR) metric quantifies statistical variability, reflecting the non-uniformity of coverage across the entire data set [17]. A high IQR indicates high variation in coverage, meaning some regions are significantly under-covered while others are over-covered, potentially leading to gaps in variant detection.
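The IQR of per-position depth can be computed with the Python standard library, as in the sketch below. Quartile definitions differ slightly between tools. Here `statistics.quantiles` is used with its default method, and both depth lists are hypothetical.

```python
from statistics import quantiles

def depth_iqr(per_base_depth):
    """Inter-quartile range (Q3 - Q1) of per-position depth."""
    q1, _median, q3 = quantiles(per_base_depth, n=4)
    return q3 - q1

# Two hypothetical datasets with the same mean depth (30x).
uneven = [0, 60, 0, 60, 30, 30, 5, 55, 10, 50]
uniform = [28, 31, 30, 29, 32, 30, 27, 33, 30, 30]

print("uneven IQR:", depth_iqr(uneven))
print("uniform IQR:", depth_iqr(uniform))
```

A markedly larger IQR at the same mean depth signals that some positions are over-sampled at the expense of others.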

In a directed evolution context, uneven coverage could lead to preferential detection of variants in high-coverage regions while missing potentially beneficial variants in low-coverage regions. Methods to improve uniformity include optimized probe design for hybrid capture-based approaches, PCR optimization to minimize amplification bias, and utilizing molecular barcodes to accurately quantify unique molecules [12].

In directed evolution and other applications requiring high-confidence variant discovery, coverage thresholds serve as the critical link between raw sequencing data and biologically meaningful conclusions. The evidence consistently demonstrates that appropriate coverage thresholds—tailored to the specific variant type, application, and required confidence level—directly dictate the reliability of variant discovery. As NGS technologies continue to evolve and directed evolution libraries increase in complexity, the principles of coverage optimization remain foundational. By applying the systematic approaches outlined here—from experimental design using the Lander/Waterman equation to implementing coverage thresholds in variant calling pipelines—researchers can significantly enhance the statistical rigor and biological relevance of their variant discovery efforts, ultimately accelerating the development of novel enzymes and therapeutics through directed evolution.

Directed evolution serves as a powerful protein engineering tool, mimicking natural selection to optimize enzymes and receptors for industrial and therapeutic applications. For researchers in drug development, validating the outcomes of these experiments is paramount. This guide examines the critical success metrics and compares different analytical approaches, with a specific focus on how Next-Generation Sequencing (NGS) coverage analysis provides the foundation for rigorous, data-driven validation of your directed evolution campaigns.

Core Success Metrics in Directed Evolution

The success of a directed evolution experiment is multi-faceted, quantified through a combination of performance, stability, and functional output measurements. The table below summarizes the key metrics used for a comprehensive assessment.

Table 1: Key Success Metrics for Directed Evolution Experiments

| Metric Category | Specific Metric | Description | Measurement Methods |

|---|---|---|---|

| Functional Production | Functional Protein Yield | Quantity of properly folded, active protein produced [24]. | Spectrophotometry (e.g., Bradford assay), functional activity assays |

| Stability | Thermostability (Tm) | Melting temperature; indicator of protein's resistance to heat denaturation. | Differential Scanning Fluorimetry (DSF), Thermofluor assays |

| Stability | Soluble Expression | Level of protein expressed in soluble fraction versus insoluble aggregates [24]. | SDS-PAGE, Western Blot of soluble vs. insoluble fractions |

| Binding & Kinetics | Binding Affinity (Kd) | Dissociation constant; measures strength of ligand binding. | Surface Plasmon Resonance (SPR), Isothermal Titration Calorimetry (ITC) |

| Binding & Kinetics | Catalytic Efficiency (kcat/Km) | Specificity constant for enzyme activity. | Kinetic assays with varying substrate concentrations |

| Sequencing Outcomes | Variant Enrichment | Significant increase in frequency of beneficial mutants over selection rounds [3]. | Next-Generation Sequencing (NGS) |

| Sequencing Outcomes | Mutation Load | Average number of mutations per variant in the final enriched pool [3]. | NGS data analysis |

Experimental Protocols for Key Assays

Protocol for Assessing Functional Production and Stability

This protocol is adapted from methods used to engineer stable GPCRs and polymerases [24] [3].

Materials:

- Expression Vector: Contains gene library of protein variants.

- Host Cells: E. coli (e.g., BL21(DE3)) or eukaryotic cells (e.g., S. cerevisiae).

- Lysis Buffer: Suitable for the target protein and host cell.

- Purification Resin: e.g., Ni-NTA resin for His-tagged proteins.

- Analytical Equipment: Spectrophotometer, SDS-PAGE gel system, real-time PCR machine for DSF.

Method:

- Library Transformation: Transform the mutagenic library into the appropriate expression host cell line.

- Protein Expression: Induce expression in small-scale cultures. For stability screening, cultures can be grown at different temperatures.

- Cell Lysis and Fractionation: Lyse cells and separate soluble and insoluble fractions via centrifugation.

- Analysis:

- Functional Yield: Purify the protein from the soluble fraction and quantify the yield.

- Thermostability: Use Differential Scanning Fluorimetry (DSF). Mix purified protein with a fluorescent dye (e.g., SYPRO Orange) that binds hydrophobic patches exposed upon unfolding. Run a thermal ramp in a real-time PCR machine and calculate the melting temperature (Tm) from the resulting fluorescence curve.
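The Tm calculation in the thermostability step is often approximated as the temperature at which fluorescence rises most steeply. The sketch below implements that first-derivative approach on a synthetic two-state curve. The data, the 0.5 °C step, and the function name are hypothetical. Real DSF traces are noisy and typically need smoothing or curve fitting, which is omitted here.

```python
import math

def melting_temperature(temps, fluorescence):
    """Estimate Tm as the temperature where dF/dT (central difference) is largest."""
    best_tm, best_slope = None, float("-inf")
    for i in range(1, len(temps) - 1):
        slope = (fluorescence[i + 1] - fluorescence[i - 1]) / (temps[i + 1] - temps[i - 1])
        if slope > best_slope:
            best_tm, best_slope = temps[i], slope
    return best_tm

# Synthetic unfolding curve: sigmoid with its midpoint placed at 55.0 degrees C.
temps = [25 + 0.5 * i for i in range(141)]  # 25.0 to 95.0 in 0.5 degree steps
signal = [1.0 / (1.0 + math.exp(-(t - 55.0) / 2.0)) for t in temps]

print(melting_temperature(temps, signal))
```

Comparing the estimated Tm of each variant with that of the parent protein gives a simple stability ranking for the screened pool.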

Protocol for NGS-Based Variant Enrichment Analysis

This protocol is critical for validating directed evolution outcomes as described in modern pipelines [3] [25].

Materials:

- DNA Library: Plasmid DNA from the initial library and from populations after each selection round.

- PCR Reagents: High-fidelity DNA polymerase (e.g., KAPA HiFi Polymerase, developed via directed evolution for superior performance [8]), NGS library preparation kit.

- Sequencing Platform: e.g., Illumina, PacBio.

- Bioinformatics Tools: Software for sequence alignment (e.g., BWA) and variant calling (e.g., GATK).

Method:

- Library Preparation: Amplify the target gene region from the population DNA using barcoded primers for multiplexing.

- Sequencing: Sequence the prepared libraries on an NGS platform. Long-read technologies (e.g., PacBio) can be valuable for haplotyping, but short-read Illumina is common [24].

- Bioinformatic Analysis:

- Demultiplexing and Quality Control: Separate sequences by sample and remove low-quality reads.

- Variant Calling: Align reads to a reference sequence and identify mutations.

- Variant Enrichment Analysis: Calculate the frequency of each mutation or haplotype in each selection round. Identify variants that show a statistically significant increase in frequency over successive rounds. Studies show that a sequencing coverage of 50-100x per variant in the library can be sufficient for accurate identification of enriched mutants [3].
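One way to judge whether a frequency increase between two rounds is statistically significant is a one-sided two-proportion z-test, sketched below. This is an illustrative choice rather than the method of the cited studies. It treats reads as independent observations, the counts are hypothetical, and a real analysis of many variants would also need a multiple-testing correction.

```python
import math

def enrichment_z_test(count_before, total_before, count_after, total_after):
    """One-sided two-proportion z-test for an increase in variant frequency.

    Returns (z, p_value), where p_value = P(Z >= z) under the null hypothesis
    of equal frequencies. Both rounds together must contain at least one read
    of the variant and one read of something else, or the standard error is zero.
    """
    freq_before = count_before / total_before
    freq_after = count_after / total_after
    pooled = (count_before + count_after) / (total_before + total_after)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / total_before + 1 / total_after))
    z = (freq_after - freq_before) / std_err
    p_value = 0.5 * math.erfc(z / math.sqrt(2))
    return z, p_value

# Hypothetical variant: 50 of 100,000 reads before, 500 of 100,000 after selection.
z, p = enrichment_z_test(50, 100_000, 500, 100_000)
print(round(z, 1), p)
```

Because the test depends on absolute read counts, the same fold change is more convincing at higher depth, which links the significance of enrichment directly to coverage.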

Visualizing the Directed Evolution Workflow and Success Metrics

The following diagram illustrates the integrated stages of a directed evolution campaign and where key success metrics are applied.

Directed Evolution Workflow and Metrics

The Scientist's Toolkit: Essential Research Reagents

The quality of reagents is critical for reproducibility. The table below details essential solutions used in modern directed evolution experiments, including commercially engineered options.

Table 2: Key Research Reagent Solutions for Directed Evolution

| Reagent / Solution | Critical Function | Example & Key Feature |

|---|---|---|

| High-Fidelity DNA Polymerase | Amplifies mutant libraries for sequencing and cloning with minimal errors. | KAPA HiFi DNA Polymerase: Engineered via directed evolution for ultra-high fidelity and robust amplification, ideal for NGS library prep [8]. |

| NGS Library Preparation Kit | Prepares the genetic library for high-throughput sequencing. | KAPA HyperPrep Kit: Designed for high efficiency and reduced duplicates, improving sequencing data quality [8]. |

| Emulsion Reagents | Creates microreactors for high-throughput screening, linking genotype to phenotype. | Water-in-oil emulsion systems enable compartmentalization for functional screening of millions of variants in parallel [3] [8]. |

| Stable Cell Line | Expresses challenging proteins like GPCRs for functional assays. | Engineered eukaryotic (e.g., HEK293) or prokaryotic (e.g., E. coli BL21) cells optimized for membrane protein expression [24]. |

Defining success in directed evolution requires a multi-parametric approach. While traditional metrics like binding affinity and thermostability remain fundamental, the integration of NGS-based analysis provides an unparalleled, quantitative view of the evolutionary process. By combining these metrics—functional production, biophysical stability, and NGS-driven variant enrichment—researchers can move beyond simple functional screens to a comprehensive understanding of their evolved proteins. This rigorous validation, powered by high-quality engineered reagents, is essential for advancing robust candidates in the drug development pipeline.

From Library to Data: A Practical NGS Workflow for Directed Evolution

In directed evolution, the goal is to mimic natural selection in the laboratory to develop proteins with enhanced functions, such as improved thermostability, specific activity, or resistance to inhibitors [8]. The success of these campaigns hinges on accurately identifying beneficial protein variants through next-generation sequencing (NGS). Library preparation serves as the foundational bridge, transforming the protein variants of interest—via their coding nucleic acids—into sequencable DNA libraries. This process converts a diverse pool of DNA sequences into a format compatible with NGS platforms, ensuring that the resulting data truly represents the underlying genetic diversity created during directed evolution. The fidelity of this step is therefore paramount; any introduction of bias or error can obscure the identification of genuinely improved variants, compromising the entire validation process [26].

Core Technologies and Methodologies for DNA Library Construction

The process of NGS library preparation involves a series of molecular steps designed to fragment DNA, repair the ends, attach universal adapters, and often amplify the final construct. The following workflow diagram outlines the two primary methodological pathways for constructing sequencing libraries.

Detailed Breakdown of Library Preparation Steps

- Fragmentation: DNA is fragmented into manageable sizes (e.g., 200–600 bp) through mechanical or enzymatic methods. Mechanical shearing (e.g., acoustic shearing) offers minimal sequence bias, while enzymatic methods (e.g., tagmentation) are automation-friendly and suitable for lower input amounts [26].

- End Repair & A-Tailing: The fragmented DNA ends are blunted and phosphorylated. A single adenine (A) nucleotide is then added to the 3' ends to facilitate ligation to thymine (T)-overhang adapters [26].

- Adapter Ligation: Sequencing adapters containing flow-cell binding sites, barcodes (indexes), and primer binding sequences are ligated to the fragments. These adapters are essential for cluster generation and sequencing on platforms like Illumina [26].

- Cleanup & Size Selection: Libraries are purified to remove adapter dimers, enzymes, and buffer components. Size selection ensures fragments are within the optimal size range for sequencing, improving data quality [26].

- Library Amplification: A limited-cycle PCR amplifies the adapter-ligated DNA to generate sufficient material for sequencing. High-fidelity polymerases minimize amplification bias and errors, which matters particularly in directed evolution, where polymerase-introduced mutations can be mistaken for true variants [8] [26].
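The effect of polymerase fidelity during library amplification can be made concrete with a rough calculation. The sketch below is illustrative only: the function name, the error rates, the amplicon length, and the cycle number are assumptions chosen for the example, not values from the cited sources. It also simplifies by treating every final molecule as having been copied once per cycle, so it behaves like an upper-bound estimate.

```python
def fraction_with_errors(error_rate: float, amplicon_bp: int, cycles: int) -> float:
    """Approximate fraction of molecules carrying at least one polymerase error.

    error_rate  -- assumed errors per base per duplication (hypothetical value)
    amplicon_bp -- length of the amplified region in base pairs
    cycles      -- number of PCR cycles

    Simplification: each final molecule is treated as having been copied
    `cycles` times, which overestimates the true average copy history.
    """
    copied_bases = amplicon_bp * cycles
    # Probability that no base was miscopied, subtracted from 1.
    return 1.0 - (1.0 - error_rate) ** copied_bases


if __name__ == "__main__":
    # Hypothetical error rates for illustration; consult the enzyme's
    # documentation for measured values.
    polymerases = {
        "standard polymerase": 1e-4,
        "high-fidelity polymerase": 1e-6,
    }
    for name, rate in polymerases.items():
        frac = fraction_with_errors(rate, amplicon_bp=900, cycles=12)
        print(f"{name}: {frac:.2%} of molecules with at least one error")
```

Under these assumed numbers, the lower-fidelity enzyme leaves a large share of molecules carrying an artifactual mutation, which is the background against which true enriched variants must be distinguished.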

Comparison of Major Library Preparation Approaches

Two principal methods are employed for targeted sequencing, each with distinct advantages for specific applications in validating directed evolution experiments.

Table 1: Comparison of Targeted Library Preparation Methods

| Feature | Hybrid Capture-Based | Amplification-Based (Amplicon) |
|---|---|---|
| Principle | Solution-based hybridization of biotinylated probes to genomic regions of interest, followed by pull-down [12]. | PCR amplification using primers designed for specific genomic targets [12]. |
| Primary Input | DNA [12]. | DNA or RNA (cDNA) [12]. |
| Variant Detection | SNVs, Indels, Copy Number Alterations (CNAs), Structural Variants (SVs) [12]. | Excellent for SNVs and small Indels [12]. |
| Key Advantage | High flexibility in panel design; less prone to allele dropout; suitable for detecting a wide range of variant types [12]. | High sensitivity and specificity for targeted regions; fast and cost-effective for smaller gene sets [12]. |
| Consideration | Requires more input DNA and longer workflow [12]. | Prone to allele-specific dropout if primers overlap with variants [12]. |

Quantitative Performance Comparison of Library Preparation Systems

Selecting an appropriate library preparation system requires a careful evaluation of its performance characteristics. The following data, compiled from independent studies, provides a quantitative basis for this decision-making process.

Table 2: Performance Metrics of Commercial Library Preparation Systems

| System / Kit | Evaluation Context | Key Quantitative Findings | Implication for Directed Evolution |
|---|---|---|---|
| Tecan MagicPrep NGS [27] | Clinical microbial WGS vs. Illumina Nextera DNA Flex | Hands-on time: 5 hours less per run; Concordance: 100% with reference method; Library output: higher concentration and molarity | Improves workflow efficiency for high-throughput screening of microbial enzyme variants. |
| Collibri PS DNA Library Prep Kit [28] | General NGS workflows on Illumina systems | Workflow time: ~1.5 hours for PCR-free protocol; Feature: visual feedback for reagent mixing | Rapid protocol with built-in QC checks reduces preparation errors. |
| Four Exome Capture Platforms [29] | WES on DNBSEQ-T7 sequencer (BOKE, IDT, Nanodigmbio, Twist) | Reproducibility: comparable across platforms; Accuracy: superior technical stability and detection accuracy on DNBSEQ-T7; Uniformity: achieved with a standardized hybridization workflow | A robust, platform-agnostic capture workflow ensures consistent performance for exome-level variant detection. |

Experimental Protocols for Key Applications

Protocol 1: Automated Library Preparation for High-Throughput Screening

This protocol is adapted from the evaluation of the Tecan MagicPrep NGS system [27] and is ideal for processing large numbers of samples from directed evolution campaigns, such as screening microbial libraries for enzyme variants.

- Sample Input: Use 50-100 ng of genomic DNA from bacterial or fungal clones in a 96-well plate format.

- Automated Processing: Transfer the plate to the automated liquid handling system. The system executes:

  - Tagmentation: Fragments DNA and adds adapter sequences in a single-step reaction.

  - PCR Amplification: Performs a limited-cycle PCR (e.g., 12-15 cycles) with indexed primers to amplify the library and add unique sample barcodes.

  - Normalization and Pooling: Normalizes the concentrations of the individual libraries and pools them into a single tube for sequencing.

- QC Check: Quantify the final library pool using fluorometry (e.g., Qubit) and assess the size distribution and integrity via a fragment analyzer (e.g., Bioanalyzer, TapeStation) [27] [26].

- Sequencing: Dilute the pool to the appropriate molarity and load it onto the sequencer.

Supporting Data: A study implementing this automated approach reported a 5-hour reduction in hands-on time per run while maintaining 100% concordance with the reference method, supporting its reliability for high-throughput variant validation [27].
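The QC and dilution steps in this protocol rely on converting a fluorometric mass concentration into molarity. A minimal sketch of that arithmetic follows, using the common approximation of 660 g/mol per base pair of double-stranded DNA. The function names and the example values (10 ng/µL, 500 bp, 4 nM target) are assumptions for illustration, and the loading concentration actually required depends on the sequencer.

```python
def library_molarity_nM(conc_ng_per_ul: float, mean_fragment_bp: float) -> float:
    """Convert a dsDNA library concentration to nanomolar.

    Uses the approximation of 660 g/mol per base pair:
    nM = (ng/uL * 1e6) / (660 * mean fragment length in bp)
    """
    return conc_ng_per_ul * 1e6 / (660.0 * mean_fragment_bp)


def dilution_volumes(stock_nM: float, target_nM: float, final_ul: float) -> tuple[float, float]:
    """Return (stock volume, diluent volume) in uL to reach target_nM in final_ul."""
    if target_nM > stock_nM:
        raise ValueError("Target concentration exceeds stock concentration")
    stock_ul = target_nM * final_ul / stock_nM
    return stock_ul, final_ul - stock_ul


if __name__ == "__main__":
    # Hypothetical QC readout: 10 ng/uL by fluorometry, 500 bp mean size.
    stock = library_molarity_nM(conc_ng_per_ul=10.0, mean_fragment_bp=500)
    stock_ul, diluent_ul = dilution_volumes(stock, target_nM=4.0, final_ul=20.0)
    print(f"Pool molarity: {stock:.1f} nM")
    print(f"Mix {stock_ul:.2f} uL pool with {diluent_ul:.2f} uL buffer for 4 nM")
```

Because the conversion depends on the mean fragment length, the fragment analyzer trace and the fluorometric reading are both needed before diluting.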

Protocol 2: A Standardized Hybridization-Capture Workflow for Exome Sequencing

This protocol, derived from a comparative study of exome capture platforms, ensures uniform performance across different probe sets, which is critical for comprehensive variant discovery in all protein-coding regions [29].

- Library Construction: Prepare pre-capture libraries from 50 ng of sheared genomic DNA using a universal library prep kit. Include unique dual indexes (UDIs) during the PCR amplification step (e.g., 8 cycles).

- Pre-Capture Pooling: Quantify the pre-capture libraries and pool 8 libraries equimolarly for a multiplexed capture reaction, with a total input of 2000 ng.

- Target Enrichment: Perform hybridization capture using a consistent set of reagents and a 1-hour hybridization time, regardless of the commercial exome probe panel used (e.g., from Twist, IDT, etc.).

- Post-Capture Amplification: Amplify the captured libraries with 12 cycles of PCR.

- Quality Control: Quantify the final yield and validate enrichment efficiency before sequencing on the desired platform (e.g., DNBSEQ-T7 or Illumina systems) [29].

Supporting Data: This standardized workflow was applied to four commercial exome panels and gave comparable, uniform performance across all of them, which supports the reliability of variant calling for directed evolution studies [29].
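The pre-capture pooling step (8 libraries, equimolar, 2000 ng total) reduces to a short calculation. The sketch below assumes each library is described by a measured concentration and mean fragment length; the function name, library names, and values are hypothetical. Equal moles means each library's mass share scales with its fragment length, so libraries of identical size each contribute 250 ng.

```python
def equimolar_pool(libraries: dict[str, tuple[float, float]], total_ng: float) -> dict[str, tuple[float, float]]:
    """Plan an equimolar pool with a fixed total mass.

    libraries -- name -> (concentration in ng/uL, mean fragment length in bp)
    total_ng  -- total DNA mass of the pool (e.g. 2000 ng for capture input)

    Returns name -> (mass in ng, volume in uL) to add for each library.
    """
    total_len = sum(bp for _, bp in libraries.values())
    plan = {}
    for name, (conc, bp) in libraries.items():
        # Equal moles per library: mass is proportional to fragment length.
        mass_ng = total_ng * bp / total_len
        plan[name] = (mass_ng, mass_ng / conc)
    return plan


if __name__ == "__main__":
    # Hypothetical pre-capture libraries: (ng/uL, mean bp).
    libs = {f"lib{i}": (25.0 + i, 400.0) for i in range(1, 9)}
    for name, (mass, vol) in equimolar_pool(libs, total_ng=2000.0).items():
        print(f"{name}: {mass:.0f} ng in {vol:.2f} uL")
```

If the required volume for any library exceeds what is available, the total input or the number of pooled libraries would need to be adjusted before capture.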

The Scientist's Toolkit: Essential Reagents and Materials

Table 3: Key Research Reagent Solutions for NGS Library Preparation

| Item | Function in Workflow | Key Considerations |
|---|---|---|
| High-Fidelity DNA Polymerase [8] | Amplifies adapter-ligated fragments with minimal errors. | Essential for accurate representation of variant libraries; enzymes engineered via directed evolution (e.g., KAPA HiFi) offer superior fidelity and robustness [8]. |
| Sequencing Adapters & Barcodes [26] | Attaches fragments to the flow cell and allows sample multiplexing. | Proper design and ligation efficiency are critical for library complexity and avoiding index hopping. |
| Magnetic Beads (e.g., AMPure XP) [26] | Purifies nucleic acids between steps and performs size selection. | The ratio of beads to sample determines the size cutoff, crucial for selecting the ideal insert size. |
| Targeted Enrichment Probes [12] [29] | Hybridizes to and enriches specific genomic regions of interest. | Probe design (e.g., for hybrid capture) impacts coverage uniformity and the ability to detect all variant types without bias. |
| Universal Library Prep Kits [29] | Provides all core reagents for end repair, A-tailing, and ligation in an optimized buffer system. | Using a single kit for all samples, as in the standardized exome workflow, reduces batch effects and improves reproducibility [29]. |

The transition from protein variants to sequenceable DNA through meticulous library preparation is a critical determinant of success in NGS-based validation of directed evolution. As demonstrated, the choice between automated and manual systems, or between hybrid-capture and amplicon-based approaches, carries significant implications for data accuracy, throughput, and operational efficiency. The quantitative data and standardized protocols provided here offer a framework for building robust, reproducible NGS workflows. By selecting the appropriate library preparation strategy and implementing rigorous quality control, scientists can ensure that the variant information encoded in their directed evolution libraries is accurately captured and translated into reliable sequencing data, thereby accelerating the development of novel enzymes and therapeutics.

Next-Generation Sequencing (NGS) has become an indispensable tool for validating the outcomes of directed evolution experiments. The choice between short-read and long-read sequencing technologies profoundly impacts the accuracy, depth, and scope of the analysis researchers can perform, making platform selection a critical methodological step in NGS coverage analysis. This guide provides an objective comparison of these technologies, focusing on their performance in analyzing complex variant libraries, supported by experimental data and detailed protocols to inform researchers and drug development professionals.

Technology Comparison: Core Methodologies and Specifications

The two dominant NGS approaches differ fundamentally in their chemistry and output, leading to distinct advantages and limitations.

Short-Read Sequencing Technologies

Short-read technologies, often termed "second-generation sequencing," generate reads typically ranging from 50 to 300 base pairs (bp). They sequence millions of fragments in a massively parallel fashion.

- Illumina: This dominant technology uses sequencing-by-synthesis. Single-stranded library fragments bind to oligonucleotides on a flow cell and are clonally amplified by bridge amplification, followed by the cyclic addition of fluorescently labelled nucleotides. The emitted light determines the base identity [30].

- Ion Torrent: This semiconductor-based method uses emulsion PCR for clonal amplification. It detects incorporated nucleotides through the release of a hydrogen ion, which causes a measurable change in pH, rather than via optics [30].

- Element Biosciences AVITI System: This platform employs avidity sequencing chemistry. Fluorescently labelled multivalent "avidites" bind transiently to the DNA synthesis complex for imaging but are not permanently incorporated; an unlabelled nucleotide then extends the strand, with reported high accuracy (Q40+) [30].

- MGI DNBSEQ Platforms: Utilizing DNA nanoball (DNB) technology, this method is a significant player, particularly in Asia. It involves rolling circle amplification to create DNBs which are then sequenced combinatorially [30].

A key challenge for ensemble-based short-read sequencing is the multi-step library preparation, which includes DNA fragmentation, end repair, adapter ligation, and amplification. This process can introduce biases and is a noted burden [30].

Long-Read Sequencing Technologies

Long-read, or third-generation, sequencing platforms directly sequence single DNA molecules, producing reads that can span thousands to hundreds of thousands of base pairs.

- Pacific Biosciences (PacBio): This technology uses Single Molecule Real-Time (SMRT) sequencing. A DNA polymerase bound to a single template molecule is immobilized at the bottom of a zero-mode waveguide, and the fluorescence from each nucleotide incorporation is detected in real time. The HiFi method sequences a circularized template multiple times and builds a consensus, generating highly accurate long reads (Q30-Q40+) [30].

- Oxford Nanopore Technologies (ONT): ONT threads a single DNA strand through a biological nanopore. As nucleotides pass through the pore, they cause characteristic disruptions to an ionic current, which are decoded to determine the sequence. This technology is capable of producing extremely long reads, theoretically up to millions of base pairs [30].

- Roche Sequencing by Expansion (SBX): An emerging technology currently in early access, SBX uses a combination of nanopores and expandable nucleotides. It is designed to provide high-throughput, accurate mid-length single-molecule sequencing and is expected to be commercially available in 2026 [30].

The following table provides a direct, quantitative comparison of the key specifications of these platforms.

Table 1: Technical Specifications of Major Sequencing Platforms

| Platform (Technology) | Read Length | Accuracy | Key Strengths | Common Applications in Directed Evolution |
|---|---|---|---|---|
| Illumina (Short-read) | 50-300 bp | Very High (Q30+) [30] | High throughput, low per-base cost | Deep variant calling, high-coverage population analysis |
| PacBio HiFi (Long-read) | 5,000 - 20,000 bp | Very High (Q30-Q40+) [30] | Long, accurate reads; detects structural variants | Phasing mutations, resolving complex haplotypes |
| Oxford Nanopore (Long-read) | Up to ~1 Mb | Moderate (improving with depth) [30] | Ultra-long reads, real-time analysis, portable | Sequencing entire plasmids/gene clusters, rapid feedback |
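The accuracy column above uses Phred quality scores, where a score Q corresponds to a per-base error probability of 10^(-Q/10). The sketch below converts the quoted Q values into error probabilities and estimates the chance that a read of a given length contains no errors. The function names and the 1,500 bp example length are assumptions for illustration, and the calculation assumes a uniform quality score across the read, which real reads do not have.

```python
def phred_to_error_prob(q: float) -> float:
    """Per-base error probability for a Phred quality score Q."""
    return 10 ** (-q / 10)


def prob_read_error_free(q: float, read_len: int) -> float:
    """Probability that a read of read_len bases has no errors,
    assuming every base has the same quality Q (a simplification)."""
    return (1.0 - phred_to_error_prob(q)) ** read_len


if __name__ == "__main__":
    # Compare the Q values quoted in the table for a hypothetical
    # 1,500 bp variant gene read end to end.
    for q in (30, 40):
        p_err = phred_to_error_prob(q)
        p_clean = prob_read_error_free(q, read_len=1500)
        print(f"Q{q}: per-base error {p_err:.0e}, "
              f"P(error-free 1,500 bp read) = {p_clean:.1%}")
```

This is one reason per-read accuracy matters more as read length grows: when whole-gene reads are used to phase mutations, a small per-base error rate still leaves many reads with at least one miscalled base.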

Performance Analysis in Directed Evolution and Metagenomics