Validating Enzyme Thermostability Improvements: A Comprehensive Guide for Bioprocess and Biomedical Research

Abstract

This article provides a systematic framework for researchers and drug development professionals to validate engineered enzyme thermostability—a critical determinant of biocatalyst efficacy in industrial and biomedical applications. We explore foundational principles linking enzyme structure to thermal resilience, detail cutting-edge computational and experimental methodologies, address common troubleshooting scenarios in stability-activity trade-offs, and present rigorous validation protocols for comparative analysis. By integrating machine learning, high-throughput screening, and multi-parameter characterization, this guide bridges computational design with experimental confirmation to accelerate the development of robust, industrially viable enzymes.

The Pillars of Enzyme Thermostability: From Molecular Principles to Industrial Necessity

In enzyme engineering, validating improvements in thermostability is a critical step following directed evolution or rational design. Three key metrics—melting temperature (T~m~), half-life (t~1/2~), and optimal temperature (T~opt~)—provide complementary insights into an enzyme's thermal performance. This guide objectively compares these metrics, detailing their experimental determination and relevance for researchers and scientists in drug development and industrial biotechnology.

Metric Comparison at a Glance

The table below summarizes the core characteristics, strengths, and limitations of each key thermostability metric.

| Metric | Definition | Measurement Technique | Key Information Provided | Industrial Relevance |
|---|---|---|---|---|
| Melting Temperature (T~m~) | The temperature at which 50% of the enzyme molecules are unfolded [1]. | Differential scanning calorimetry (DSC), circular dichroism (CD) spectroscopy, or fluorimetry with a thermal denaturation curve. | A measure of an enzyme's intrinsic thermodynamic resistance to unfolding [1]. | High; predicts stability during storage and formulation. |
| Half-Life (t~1/2~) | The time required for an enzyme to lose 50% of its initial activity at a specific temperature [2]. | Incubating the enzyme at a target temperature and measuring residual activity over time. | A measure of an enzyme's kinetic stability under operational conditions [2]. | Critical; directly informs process design and operational lifespan. |
| Optimal Temperature (T~opt~) | The temperature at which the enzyme exhibits its highest catalytic activity [3]. | Measuring initial reaction rates across a range of temperatures [4]. | A practical balance between reaction rate acceleration and thermal inactivation [3] [5]. | Direct; used to set the reaction temperature in industrial processes. |

Experimental Protocols for Metric Determination

Determining Melting Temperature (T~m~)

The T~m~ is a thermodynamic parameter reflecting the intrinsic stability of the enzyme's folded structure. An increase in T~m~ after engineering, such as the 12°C jump observed in a chimeric α-amylase, confirms enhanced structural robustness [1].

Protocol: Fluorimetric Assay with Sypro Orange Dye

- Principle: The dye binds to hydrophobic regions of the protein that become exposed during unfolding, resulting in a fluorescence increase.

- Procedure:

- Sample Preparation: Mix purified enzyme with Sypro Orange dye in a suitable buffer.

- Thermal Ramp: Load the sample into a real-time PCR instrument or a fluorimeter with a thermal controller.

- Data Collection: Increase the temperature gradually (e.g., 1°C per minute) from 25°C to 95°C while continuously monitoring fluorescence.

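- Data Analysis: Plot the first derivative of fluorescence, d(RFU)/dT, against temperature. Because Sypro Orange fluorescence rises as the protein unfolds, the maximum of this curve marks the T~m~; instruments that report -d(RFU)/dT show the same point as a minimum.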
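
The derivative analysis in the last step can be sketched in a few lines. This is a minimal illustration, not the procedure used in reference [1]: the function name `estimate_tm`, the synthetic sigmoidal melt curve, and the use of central differences are all assumptions, and real instrument software typically smooths the trace before differentiating.

```python
import math


def estimate_tm(temps, rfu):
    """Return the temperature where d(RFU)/dT is largest (a T_m estimate)."""
    if len(temps) != len(rfu) or len(temps) < 3:
        raise ValueError("need at least three paired temperature/RFU readings")
    best_temp, best_slope = None, float("-inf")
    # Central differences on interior points approximate d(RFU)/dT.
    for i in range(1, len(temps) - 1):
        slope = (rfu[i + 1] - rfu[i - 1]) / (temps[i + 1] - temps[i - 1])
        if slope > best_slope:
            best_temp, best_slope = temps[i], slope
    return best_temp


# Hypothetical melt curve: 25-95 degC in 1 degC steps, sigmoid centred at 62 degC.
demo_temps = list(range(25, 96))
demo_rfu = [1000 + 9000 / (1 + math.exp((62 - t) / 2.0)) for t in demo_temps]
demo_tm = estimate_tm(demo_temps, demo_rfu)
print(f"Estimated T_m: {demo_tm} degC")
```

The resolution of this estimate is limited to the temperature step of the ramp; fitting a Boltzmann sigmoid to the transition is a common alternative when finer resolution is needed.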

Determining Half-Life (t~1/2~) at a Target Temperature

The t~1/2~ measures operational stability, directly indicating how long an enzyme remains active under process conditions. For instance, a D223G/L278M mutant of Candida antarctica lipase B showed a 13-fold increase in half-life at 48°C [2].

Protocol: Residual Activity Measurement

- Principle: The enzyme is subjected to a prolonged heat challenge, and its remaining activity is assessed at intervals.

- Procedure:

- Heat Challenge: Incubate multiple aliquots of the enzyme solution at a constant, elevated temperature (e.g., 60°C).

- Sampling: At predetermined time intervals, remove an aliquot and immediately place it on ice.

- Activity Assay: Measure the residual activity of each aliquot using a standard activity assay under optimal conditions (e.g., at 37°C).

- Data Analysis: Plot the natural logarithm of residual activity versus time. For first-order inactivation the points fall on a straight line, and the half-life is t~1/2~ = ln(2) / k, where k is the inactivation rate constant (the negative of the regression slope).
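
As a worked illustration of this analysis, the sketch below fits ln(residual activity) against time by ordinary least squares and converts the slope to a half-life. It assumes first-order inactivation; the function name `half_life` and the time course are hypothetical, generated so that the true half-life is 30 minutes.

```python
import math


def half_life(times, residual_activity):
    """Fit ln(activity) vs time; return (k, t_half) for first-order decay."""
    if len(times) != len(residual_activity) or len(times) < 2:
        raise ValueError("need at least two paired time/activity readings")
    ys = [math.log(a) for a in residual_activity]
    n = len(times)
    mean_t = sum(times) / n
    mean_y = sum(ys) / n
    sxx = sum((t - mean_t) ** 2 for t in times)
    sxy = sum((t - mean_t) * (y - mean_y) for t, y in zip(times, ys))
    k = -(sxy / sxx)  # inactivation rate constant = negative slope
    return k, math.log(2) / k


# Hypothetical heat-challenge time course (minutes, % residual activity).
demo_times = [0, 10, 20, 30, 40, 60]
demo_activity = [100 * 0.5 ** (t / 30) for t in demo_times]
demo_k, demo_t_half = half_life(demo_times, demo_activity)
print(f"k = {demo_k:.4f} per min, t1/2 = {demo_t_half:.1f} min")
```

If the semi-log plot is visibly curved, the single-exponential assumption does not hold and the half-life should be read from the data directly or from a more detailed inactivation model.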

Determining Optimal Temperature (T~opt~)

The T~opt~ is a kinetic parameter representing the trade-off between the Arrhenius-type acceleration of the reaction rate and the temperature-driven inactivation of the enzyme [3] [5]. A new mathematical model that accounts for this trade-off and enzyme deactivation kinetics can be used for accurate determination [4].

Protocol: Initial Reaction Rate Profiling

- Principle: The enzyme's initial activity is measured across a broad temperature spectrum to find the peak.

- Procedure:

- Substrate Preparation: Prepare a substrate solution and pre-incubate it at a series of different temperatures (e.g., from 20°C to 90°C in 5°C increments).

- Reaction Initiation: Add a fixed amount of enzyme to each substrate tube and incubate for a specific, short period.

- Reaction Stop & Measurement: Stop the reaction and quantify the amount of product formed.

- Data Analysis: Plot the initial reaction rate (e.g., µmol product/min) against temperature. The temperature corresponding to the highest rate is the T~opt~.

Conceptual Workflow for Thermostability Validation

The following diagram illustrates the logical relationship between the three metrics and the core trade-off in enzyme engineering.

The Scientist's Toolkit: Essential Reagent Solutions

The table below lists key reagents and their functions for conducting the described thermostability experiments.

| Research Reagent / Material | Function in Experiment |
|---|---|
| Purified Enzyme Sample | The core subject of analysis; requires high purity to avoid interference in assays. |
| Fluorescent Dye (e.g., Sypro Orange) | Binds hydrophobic patches in unfolding proteins, enabling T~m~ determination. |
| Specific Substrate | The molecule the enzyme acts upon; essential for activity-based assays (t~1/2~ and T~opt~). |
| Detection Reagent (Spectrophotometric/Fluorimetric) | Quantifies product formation or substrate depletion to measure reaction rates. |
| Controlled-Temperature Incubator/Block | Provides a stable thermal environment for heat challenge (t~1/2~) and activity assays (T~opt~). |
| Real-Time PCR Instrument or Spectrofluorometer | Precisely controls temperature ramp and monitors fluorescence changes for T~m~ analysis. |
| Buffers with Optimal pH | Maintains constant pH to ensure activity and stability measurements are not confounded by pH effects. |

The pursuit of enzyme thermostability is a central challenge in biotechnology and pharmaceutical development. Enhanced thermal stability improves enzyme reusability, extends half-life under industrial conditions, and can increase reaction rates at higher temperatures [6]. At the molecular level, this stability is governed by a complex, cooperative network of non-covalent interactions, primarily hydrophobic interactions, hydrogen bonds, and salt bridges [7] [8]. These determinants do not function in isolation; their energetic contributions are highly context-dependent and non-additive, influenced by the structural background and solvation effects of the protein [7] [8]. Understanding and quantifying these interactions provides the foundational knowledge required to validate stability improvements in engineered enzymes, moving beyond simple thermal shift measurements to a deeper thermodynamic understanding.

Quantitative Comparison of Molecular Determinants

The table below summarizes the typical energy contributions and key characteristics of the three primary molecular determinants of protein stability.

Table 1: Quantitative Energetic and Structural Profile of Key Molecular Interactions

| Molecular Determinant | Typical Energy Contribution (kcal/mol) | Primary Role in Stability | Optimal Distance | Key Structural Features |
|---|---|---|---|---|
| Hydrophobic Interaction | ~0.7 [9] | Thermodynamic (burial of apolar surface) [7] | N/A | Burial of non-polar surfaces; major driver of protein folding |
| Hydrogen Bond | ~1 (can range 1-40 with reinforcement) [9] | Structural integrity & specificity | 2.6 - 3.1 Å [9] | Directional; requires desolvation; strength depends on donor/acceptor pair |
| Salt Bridge | ~2 (highly variable) [9] | Structural stabilization & network formation | < 4 Å [8] | Combines ionic & H-bonding; large desolvation penalty; highly geometry-dependent |

The data reveals that salt bridges, while potentially offering the largest per-interaction energy gain, are also the most variable and context-sensitive. Their net contribution is a delicate balance between stabilizing interactions and the destabilizing cost of desolvating charged groups [8] [9]. Hydrogen bonds provide moderate, directionally specific stabilization. In contrast, hydrophobic interactions, while individually weak, collectively provide a major driving force for proper folding and core stability through the burial of apolar surface area [7].

Experimental Protocols for Validation

Validating the role of these interactions in engineered thermostable enzymes requires a multi-faceted experimental approach. The following protocols are standard for deconvoluting their individual and cooperative contributions.

Assessing Global Thermodynamic Stability

Differential Scanning Calorimetry (DSC) directly measures the thermal stability of a protein by determining the midpoint of thermal unfolding (Tm) and the enthalpy change (ΔH) associated with the process [8].

- Procedure: Prepare a purified protein sample in a suitable buffer. Ramp temperature while measuring the heat capacity difference between the sample and reference cells. The resulting thermogram is fitted to a model (e.g., a non-two-state equilibrium model) to extract Tm and ΔH values.

- Data Interpretation: An increase in Tm in a mutant compared to the wild-type enzyme indicates improved thermal stability. Changes in ΔH reflect alterations in the enthalpic contributions to stability, often linked to hydrogen bonding and van der Waals contacts [8].

Chemical Denaturation using agents like urea or guanidine hydrochloride assesses conformational stability at a fixed temperature.

- Procedure: Incubate the protein in a series of denaturant concentrations. Monitor the unfolding transition using intrinsic fluorescence (IF) or circular dichroism (CD) spectroscopy. Fit the data to determine the free energy of unfolding (ΔG) and the denaturant concentration at the midpoint (D~1/2~) [8].

- Data Interpretation: An increase in ΔG or D~1/2~ signifies enhanced conformational stability, which can be correlated with introduced hydrophobic core packing or salt bridges [8].
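
The source does not say which fitting model is used, so the sketch below shows one common choice, the two-state linear extrapolation method, as an assumption. Within the transition region, ΔG at each denaturant concentration is computed from the fraction unfolded, ΔG = -RT ln(f / (1 - f)), and fitted to ΔG = ΔG(water) - m[D]; the midpoint is then ΔG(water) / m. The function name, the 0.1 to 0.9 window, the 298.15 K default, and the synthetic data are all illustrative.

```python
import math

R_KCAL = 1.987e-3  # gas constant in kcal mol^-1 K^-1


def linear_extrapolation(denaturant_m, fraction_unfolded, temp_k=298.15):
    """Two-state fit of dG([D]) = dG_water - m*[D]; returns (dG_water, m, D_half)."""
    # Keep only transition-region points, where dG is well determined.
    points = [
        (d, -R_KCAL * temp_k * math.log(f / (1.0 - f)))
        for d, f in zip(denaturant_m, fraction_unfolded)
        if 0.1 <= f <= 0.9
    ]
    if len(points) < 2:
        raise ValueError("need at least two points in the transition region")
    n = len(points)
    mean_d = sum(d for d, _ in points) / n
    mean_g = sum(g for _, g in points) / n
    sxx = sum((d - mean_d) ** 2 for d, _ in points)
    sxy = sum((d - mean_d) * (g - mean_g) for d, g in points)
    slope = sxy / sxx
    dg_water = mean_g - slope * mean_d  # intercept at zero denaturant
    m_value = -slope
    return dg_water, m_value, dg_water / m_value


# Synthetic curve generated from dG_water = 5.0 kcal/mol and m = 2.0 kcal/mol/M.
demo_conc = [0.25 * i for i in range(21)]
demo_frac = []
for d in demo_conc:
    k_unfold = math.exp(-(5.0 - 2.0 * d) / (R_KCAL * 298.15))
    demo_frac.append(k_unfold / (1.0 + k_unfold))
demo_dg, demo_m, demo_d_half = linear_extrapolation(demo_conc, demo_frac)
print(f"dG_water = {demo_dg:.2f} kcal/mol, m = {demo_m:.2f}, D1/2 = {demo_d_half:.2f} M")
```

Comparing wild-type and mutant with this kind of fit is only meaningful when both unfold reversibly and in an apparent two-state manner; otherwise the midpoint alone is the safer quantity to report.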

Probing Local Interactions and Structural Details

X-ray Crystallography provides atomic-resolution structures essential for validating the structural basis of stability improvements.

- Procedure: Crystallize the wild-type and engineered enzyme variants. Collect X-ray diffraction data and solve the structures. Analyze the electron density maps for new interactions [8].

- Data Interpretation: Look for the structural hallmarks of engineered determinants: shortened hydrogen bond distances (2.6-3.1 Å), formation of new salt bridge networks (oppositely charged residues <4 Å apart), and improved hydrophobic packing with filled cavities [8] [6]. This confirms that designed mutations have formed the intended interactions.

Molecular Dynamics (MD) Simulations capture the dynamic behavior of enzymes, complementing static crystal structures.

- Procedure: Simulate the wild-type and mutant enzymes in solvated systems for hundreds of nanoseconds. Analyze root-mean-square fluctuation (RMSF) of residues and interaction distances over the simulation trajectory [6] [10].

- Data Interpretation: Reduced RMSF in mutated regions indicates rigidification. Stable salt bridge and hydrogen bond distances throughout the simulation confirm the persistence of designed interactions. Simulations can reveal how a mutation in a rigid, short-loop region can rigidify a distant flexible region [6].
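
To make the RMSF metric concrete, the sketch below computes it from a list of coordinate frames: for each atom, the square root of the time-averaged squared deviation from its mean position. It assumes the frames have already been aligned to a common reference to remove overall rotation and translation. The function name and toy trajectory are hypothetical; in practice trajectory tools such as the `gmx rmsf` command in GROMACS perform this calculation.

```python
import math


def rmsf(frames):
    """Per-atom RMSF from aligned frames; frames[t][i] is an (x, y, z) tuple."""
    n_frames = len(frames)
    n_atoms = len(frames[0])
    result = []
    for i in range(n_atoms):
        # Mean position of atom i over the trajectory.
        mean = [sum(f[i][ax] for f in frames) / n_frames for ax in range(3)]
        # Mean squared deviation from that mean position.
        msd = sum(
            sum((f[i][ax] - mean[ax]) ** 2 for ax in range(3)) for f in frames
        ) / n_frames
        result.append(math.sqrt(msd))
    return result


# Toy trajectory: atom 0 is static, atom 1 oscillates by +/-1 along x.
demo_frames = [
    [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)],
    [(0.0, 0.0, 0.0), (-1.0, 0.0, 0.0)],
    [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)],
    [(0.0, 0.0, 0.0), (-1.0, 0.0, 0.0)],
]
demo_rmsf = rmsf(demo_frames)
print(demo_rmsf)
```

Subtracting the wild-type profile from the mutant profile, residue by residue, gives a simple view of where a mutation has rigidified or loosened the structure.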

Visualizing Engineering Strategies and Determinants

The following diagrams illustrate the core concepts and workflows for engineering and validating enzyme thermostability.

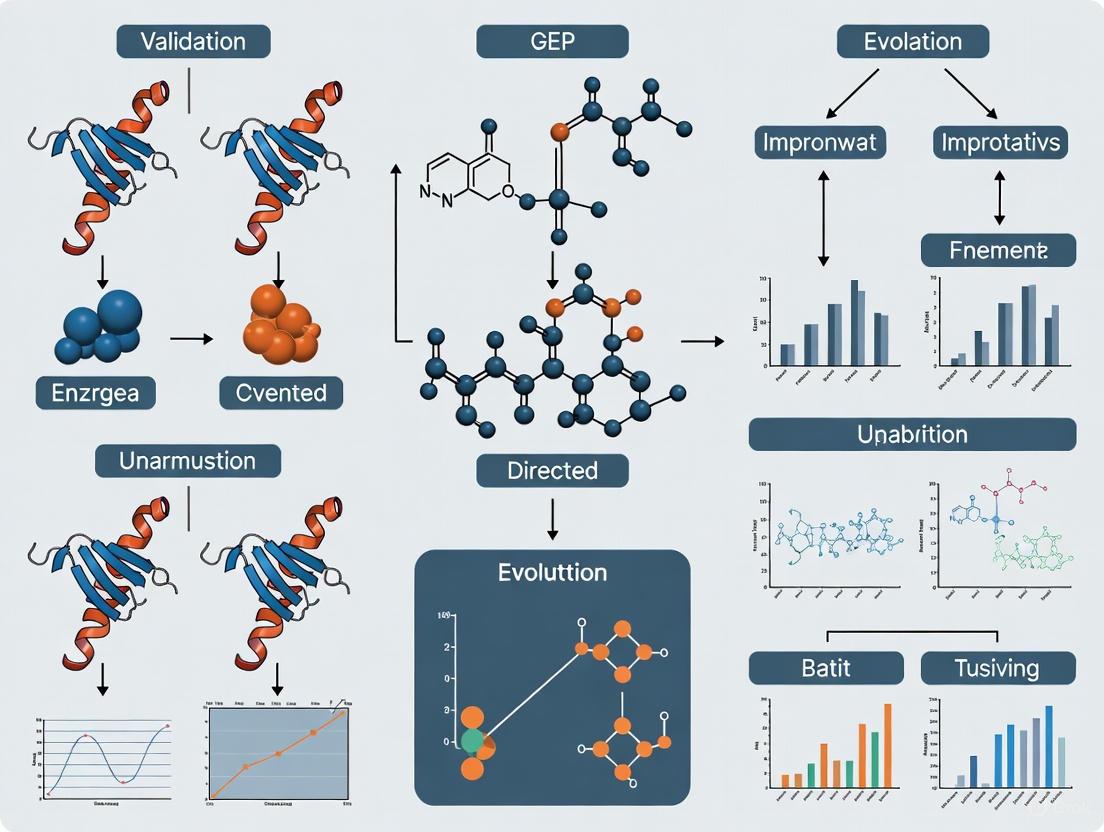

Conceptual Framework for Engineering Stability

Figure 1: A conceptual workflow for improving enzyme thermostability by targeting different molecular determinants, from initial analysis to experimental validation.

Experimental Validation Workflow

Figure 2: An integrated experimental workflow for validating the mechanistic role of molecular determinants in engineered thermostability.

The Scientist's Toolkit: Essential Research Reagents

The following table lists key reagents and computational tools essential for researching the molecular determinants of enzyme stability.

Table 2: Essential Reagents and Tools for Investigating Molecular Determinants of Stability

| Tool / Reagent | Category | Primary Function in Research |
|---|---|---|
| Urea / Guanidine HCl | Chemical Denaturant | Unfolds protein to measure conformational stability (ΔG) via CD or fluorescence [8]. |
| Differential Scanning Calorimeter (DSC) | Biophysical Instrument | Directly measures thermal unfolding midpoint (Tm) and enthalpy (ΔH) [8]. |
| Circular Dichroism (CD) Spectrometer | Spectroscopic Instrument | Probes secondary structure content and monitors thermal/chemical denaturation [8]. |
| Crystallization Screens | Laboratory Reagent | Enables growth of protein crystals for X-ray diffraction studies [8]. |
| Rosetta | Software Suite | Models protein structures, designs mutations, and predicts changes in folding free energy (ΔΔG) [8] [6]. |
| FoldX | Software Plugin | Rapidly calculates the effect of mutations on protein stability (ΔΔG) [6]. |
| GROMACS / AMBER | MD Software | Performs molecular dynamics simulations to analyze conformational dynamics and interaction persistence [10]. |

The successful engineering of enzyme thermostability relies on a nuanced understanding of hydrophobic interactions, hydrogen bonds, and salt bridges. Individually, their energetic contributions are modest and context-dependent, but when strategically combined, they can lead to highly stable, cooperative protein architectures [7] [8]. Validation is not complete with a single increased Tm value; it requires a convergent methodology linking thermodynamic measurements with structural insights from crystallography and dynamics simulations. This rigorous, multi-pronged approach transforms the art of enzyme engineering into a predictive science, enabling the creation of robust biocatalysts for the next generation of biomedical and industrial applications.

The evolutionary adaptation of enzymes to high-temperature environments presents a complex biophysical puzzle. While increased structural rigidity was historically considered a hallmark of thermophilic enzymes, contemporary research reveals a more nuanced reality where strategic flexibility is equally critical. This guide systematically compares the architectural principles distinguishing thermophilic and mesophilic enzymes, synthesizing structural, dynamic, and computational evidence. We objectively evaluate competing theories on enzyme thermostability, present quantitative structural data, and detail experimental methodologies for probing protein dynamics. The analysis reveals that thermal adaptation employs multiple synergistic strategies rather than a single universal mechanism, with implications for rational enzyme design in industrial biocatalysis and therapeutic development.

Proteins from thermophilic organisms exhibit exceptional resilience, maintaining structural integrity and catalytic function at temperatures that would denature most mesophilic proteins. The prevailing hypothesis suggests that thermophilic enzymes achieve this stability through enhanced structural rigidity, particularly at ambient temperatures [11]. This rigidity is thought to reduce catalytic efficiency at lower temperatures, creating an apparent trade-off between stability and activity [12] [11]. However, emerging evidence challenges this simplistic dichotomy, revealing that thermophilic enzymes do not merely represent "rigidified" versions of their mesophilic counterparts but have undergone sophisticated architectural optimization [13] [14]. This guide comprehensively compares these architectural differences, providing researchers with a structured framework for analyzing enzyme thermostability.

The adaptive strategies employed by thermophilic enzymes are of considerable practical interest beyond fundamental science. In industrial biocatalysis, thermostable enzymes offer advantages including reduced contamination risk, increased substrate solubility, and higher reaction rates [15]. In pharmaceutical development, understanding structural stability informs drug design targeting pathogen-specific enzymes. This analysis synthesizes findings from structural bioinformatics, molecular dynamics simulations, and biophysical measurements to provide a multifaceted perspective on enzyme architectural adaptation.

Quantitative Structural Comparison

Statistical analysis of structural databases reveals distinct trends in the molecular features of thermophilic versus mesophilic enzymes. The table below summarizes key structural parameters derived from comparative studies of homologous enzyme pairs.

Table 1: Structural Parameters in Thermophilic and Mesophilic Enzymes

| Structural Feature | Thermophilic Trend | Mesophilic Trend | Statistical Significance | Primary Reference |
|---|---|---|---|---|
| Ion Pairs/Salt Bridges | Increased number, especially surface-exposed and in networks | Fewer ion pairs | Highly significant (p<0.01) | [16] [17] |
| Side-Chain Burial | Increased burial in transmembrane domains | Reduced burial | Significant (p=0.026) | [18] |
| Cavities/Voids | Fewer and smaller cavities | More numerous cavities | Significant in specific families | [15] |
| Hydrogen Bonds | No consistent increase | Variable | Not significant | [16] |
| Hydrophobicity | More hydrophobic core | Less hydrophobic core | Significant | [17] |
| Polar Surface Area | Reduced polarity in buried surfaces | More polar buried surfaces | Significant in extreme thermophiles | [16] |
| Loop Length | Slightly shorter loops | Longer loops | Not significant in membrane proteins | [18] |
| Amino Acid Composition | More Ile, Glu, Arg; Less Asn, Gln, Cys | Opposite trends | Proteome-wide significance | [17] |

Analysis of 64 mesophilic and 29 thermophilic protein subunits revealed that different protein families adapt to higher temperatures using different combinations of structural devices [16]. The only universally observed rule is an increase in ion pairs with increasing growth temperature. Other parameters show trends within specific protein families but lack universal application. For instance, extreme thermophiles demonstrate distinct preferences compared to moderately thermophilic proteins regarding cavity number, surface polarity, and secondary structure composition [16].

In membrane proteins, thermophilic adaptations include increased hydrophobicity of transmembrane helices, possibly reflecting more stringent partitioning requirements at high temperatures [18]. Thermophilic membrane proteins also show significant depletion of thermally sensitive residues (Cys, Asn, Gln) and most strongly polar residues (Asp, Glu, Arg, Gln), suggesting evolutionary pressure to eliminate destabilizing amino acids [18].

Experimental Methodologies for Probing Structural Dynamics

Biophysical Approaches for Flexibility Measurement

Researchers employ multiple experimental techniques to quantify protein flexibility and its relationship to thermal stability:

Hydrogen-Deuterium (H/D) Exchange: Measures the rate at which backbone amide protons exchange with deuterium in solvent. Slower exchange rates indicate reduced flexibility and greater structural protection. Studies using H/D exchange have found that thermophilic enzymes often exhibit slower exchange kinetics, suggesting enhanced rigidity at room temperature [11].

Incoherent Neutron Scattering: Probes picosecond-nanosecond dynamics of hydrogen atoms, providing direct measurement of internal protein flexibility. Surprisingly, this method has revealed higher conformational freedom in some thermophilic enzymes compared to their mesophilic counterparts at room temperature [14].

Fluorescence Spectroscopy: Uses quenching of tryptophan fluorescence to monitor conformational flexibility and solvent exposure of aromatic residues. Thermophilic enzymes often show reduced fluorescence quenching, indicating decreased flexibility [11].

Limited Proteolysis: Explores surface flexibility by measuring susceptibility to proteolytic enzymes. Thermophilic proteins generally demonstrate reduced proteolytic degradation rates, consistent with increased structural rigidity [11].

X-ray Crystallography B-Factor Analysis: Derives flexibility information from atomic displacement parameters (B-factors) in crystal structures. While some thermophilic enzymes show lower B-factors, this correlation is not universal [17].

Computational and Modeling Approaches

Computational methods provide atomic-level insights into enzyme dynamics and stability:

Molecular Dynamics (MD) Simulations: Track atomic movements over time, revealing differences in flexibility and resilience between thermophilic and mesophilic enzymes. Advanced MD approaches can separate internal dynamics from overall molecular diffusion [13].

Rigidity Analysis (FIRST Algorithm): Uses graph theory to analyze the network of constraints in protein structures, identifying rigid and flexible regions. Studies applying rigidity analysis to citrate synthases found increased structural rigidity in thermophilic versions [19].

Delaunay Tessellation: Decomposes protein structures into tetrahedral simplices based on α-carbon positions, enabling quantitative analysis of packing efficiency and residue contacts. This approach has identified improved atomic packing in thermophilic enzymes [17].

iCASE Strategy (Isothermal Compressibility-Assisted Dynamic Squeezing Index Perturbation Engineering): A recently developed computational approach that combines dynamics measurements with machine learning to predict mutation effects on stability and activity. This method constructs hierarchical modular networks for enzymes of varying complexity and has been validated across multiple enzyme classes [12].

Table 2: Experimental Methods for Analyzing Enzyme Flexibility and Stability

| Method | Time Resolution | Spatial Resolution | Information Gained | Limitations |
|---|---|---|---|---|
| H/D Exchange | Seconds to hours | Single residues | Local flexibility/stability | Limited to exchangeable protons |
| Neutron Scattering | Picoseconds to nanoseconds | Global and domain motions | Internal dynamics | Requires specialized facilities |
| Fluorescence Quenching | Nanoseconds to seconds | Local environment of fluorophores | Solvent exposure and mobility | Limited to regions with fluorophores |
| Molecular Dynamics | Femtoseconds to microseconds | Atomic | Atomic-level dynamics and interactions | Computationally intensive, timescale limitations |
| Rigidity Analysis | Static structure | Atomic | Structural rigidity/flexibility | Based on static structure only |
| Limited Proteolysis | Minutes to hours | Surface loops and domains | Surface accessibility and flexibility | Limited to protease-accessible regions |

Visualization of Structural Adaptation Mechanisms

The following diagram illustrates the key structural differences between thermophilic and mesophilic enzymes and their functional consequences.

Diagram 1: Structural and functional distinctions between thermophilic and mesophilic enzymes. While thermophilic enzymes exhibit distinct structural adaptations that enhance stability, both enzyme types require strategic flexibility for catalytic function.

The Scientist's Toolkit: Essential Research Reagents and Methods

This section details critical experimental resources for investigating enzyme thermostability and flexibility.

Table 3: Essential Research Reagents and Methods for Enzyme Stability Studies

| Reagent/Method | Function/Application | Example Use Cases | Key References |
|---|---|---|---|
| p-Aminomethylbenzene-sulphonamide Agarose | Affinity chromatography resin for carbonic anhydrase purification | Purification of carbonic anhydrase from psychrophilic and mesophilic sources | [20] |
| Rosetta 3.13 Software | Predicting changes in free energy (ΔΔG) upon mutation | Computational screening of stabilizing mutations in protein engineering | [12] |
| D₂O (Deuterium Oxide) | Solvent for hydrogen-deuterium exchange experiments | Probing protein flexibility and dynamics through amide proton exchange rates | [14] [11] |
| Molecular Dynamics Software (GROMACS, AMBER) | Simulating protein dynamics and flexibility | Comparing resilience and internal motions of thermophilic-mesophilic enzyme pairs | [13] |
| FIRST Rigidity Analysis Software | Identifying rigid and flexible regions in protein structures | Comparing structural rigidity in mesophilic and extremophilic citrate synthases | [19] |
| Incoherent Neutron Scattering | Measuring picosecond-nanosecond dynamics | Revealing unexpected flexibility in thermophilic α-amylase | [14] |
| Ionic Liquids & Denaturants | Probing stability-flexibility relationship | Enzyme activation studies at low denaturant concentrations | [11] |
| Thermostable Proteases | Limited proteolysis experiments | Assessing surface flexibility and structural rigidity | [11] |

The architectural comparison between thermophilic and mesophilic enzymes reveals a sophisticated evolutionary optimization process that extends beyond simple rigidification. While thermophilic enzymes frequently exhibit structural features that enhance stability—including increased ion pairs, improved hydrophobic packing, and reduced cavities—they maintain strategic flexibility essential for catalytic function [16] [15] [17]. The emerging paradigm recognizes that thermophilic enzymes demonstrate "resilience" rather than mere rigidity, maintaining optimal dynamics at their functional temperatures [13] [14].

These insights have profound implications for enzyme engineering and drug development. Rational design strategies must account for both stability and flexibility requirements, recognizing that excessive rigidification can compromise catalytic efficiency [12] [11]. Advanced computational approaches combining molecular dynamics with machine learning, such as the iCASE strategy, offer promising avenues for navigating the stability-activity trade-off [12]. For pharmaceutical researchers, understanding these architectural principles enables more effective targeting of pathogen-specific enzymes, particularly from thermophilic microorganisms. As structural biology and computational methods continue to advance, our ability to precisely engineer enzyme stability and function will undoubtedly transform both industrial biocatalysis and therapeutic development.

The stability-activity trade-off represents a fundamental constraint in enzyme engineering where mutations that enhance an enzyme's thermal stability often come at the expense of its catalytic activity, and vice versa. This phenomenon arises because structural modifications that increase rigidity for thermal stability frequently reduce the molecular flexibility required for efficient catalysis, particularly at lower temperatures [21] [22]. Conversely, mutations that increase flexibility to boost activity at lower temperatures often compromise structural integrity, leading to reduced thermostability [12]. This trade-off presents a significant challenge in industrial enzyme development, where both high stability under processing conditions and high catalytic efficiency are desirable attributes that often appear mutually exclusive.

Understanding and overcoming this trade-off is particularly crucial for industrial applications, where enzymes must function under non-physiological conditions including extreme temperatures, pH variations, and organic solvents [12]. Natural enzymes, evolved for physiological conditions, frequently fail to meet industrial demands, necessitating engineering approaches that can balance or circumvent this fundamental constraint. Recent advances in computational modeling, machine learning, and experimental evolution are providing new pathways to navigate this trade-off, enabling the development of engineered enzymes that maintain both stability and activity across diverse operational environments [23] [12].

Molecular Mechanisms Underlying the Trade-Off

The molecular basis of the stability-activity trade-off primarily involves balancing structural rigidity and functional flexibility. Thermophilic enzymes typically exhibit increased structural rigidity through strengthened intramolecular interactions—including hydrophobic interactions, hydrogen bonds, salt bridges, and disulfide bonds—that enhance thermal stability but can limit the conformational dynamics necessary for substrate binding and transition state stabilization [22]. Conversely, psychrophilic enzymes employ structural flexibility, particularly around active sites, to maintain catalytic efficiency at low temperatures, but this comes at the cost of reduced stability as increased flexibility predisposes the structure to denaturation under thermal stress [21].

Experimental evolution studies on Pyrococcus furiosus ornithine carbamoyltransferase (OTCase) demonstrate this trade-off clearly. Mutants selected for activity at low temperatures (15-30°C) showed dramatically improved catalytic turnover (kcat) but substantially reduced thermal stability [21]. For instance, the double mutant Y227C+E277G exhibited a 6-fold higher kcat at 30°C compared to the wild-type enzyme, but its half-life at 75°C decreased from >10 hours to just 1 minute (Table 1). This inverse relationship highlights the compromise between achieving catalytic efficiency and maintaining structural integrity. Molecular dynamics simulations suggest that cold-adapted mutants achieve higher activity through increased active-site flexibility, which facilitates substrate binding and product release but simultaneously destabilizes the protein structure against thermal denaturation [21] [22].

Experimental Approaches and Quantitative Comparisons

Experimental Evolution and Mutant Characterization

Directed evolution and experimental selection approaches have successfully generated enzyme variants that illuminate the stability-activity trade-off. A key study used the E. coli XL1-Red mutator strain (deficient in mutS, mutT, and mutD DNA repair pathways) to introduce random mutations into the Pyrococcus furiosus OTCase gene [21]. Mutants were selected in a Saccharomyces cerevisiae host strain (12S16) lacking native OTCase activity, with selection based on complementation at low temperatures (30°C and 15°C). This approach identified double mutants (dm1: Y227C+E277G and dm2: A240D+E277G) that shared the E277G substitution located in the ornithine-binding domain.

Purified mutant enzymes were characterized kinetically between 22 and 55°C, with key parameters compared against the wild-type enzyme (Table 1). The experimental protocol included:

- Gene mutagenesis: Using XL1-Red mutator strain to generate random mutations

- Selection: Complementation of yeast Δarg3 strain at 30°C and 15°C

- Protein purification: Sequential chromatography using monoQ and arginine-Sepharose columns

- Enzyme kinetics: Measuring citrulline production at various temperatures

- Thermal stability: Determining half-life (t1/2) at 75°C and 95°C

- Structural analysis: Size-exclusion chromatography to verify quaternary structure [21]
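The half-life determination in the protocol above can be sketched in a few lines. This is a minimal illustration that assumes simple first-order (single-exponential) inactivation, which the source does not state explicitly. The function name and the synthetic time course are hypothetical and are not data from the cited study.

```python
import math

def half_life_first_order(times_min, residual_fraction):
    """Fit ln(residual activity) vs. time by least squares.

    Assumes first-order inactivation, A(t) = A0 * exp(-k * t).
    Returns (k in min^-1, half-life in min).
    """
    xs = list(times_min)
    ys = [math.log(a) for a in residual_fraction]
    n = len(xs)
    x_mean = sum(xs) / n
    y_mean = sum(ys) / n
    sxx = sum((x - x_mean) ** 2 for x in xs)
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    k = -sxy / sxx                      # decay constant is the negative slope
    return k, math.log(2) / k

# Synthetic, noise-free time course generated for a 14 min half-life
# (illustrative only; not raw data from the study).
times = [0, 5, 10, 20, 40]
k_true = math.log(2) / 14.0
activity = [math.exp(-k_true * t) for t in times]

k_fit, t_half = half_life_first_order(times, activity)
```

With real measurements the residual activities would carry noise, and a poor fit of the log-linear model is itself a sign that inactivation is not first order.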

Table 1: Kinetic and Stability Parameters of Wild-type and Mutant P. furiosus OTCases

| Enzyme | Temperature | Km,app Orn (mM) | kcat (s⁻¹) | kcat/Km (s⁻¹ mM⁻¹) | t1/2 at 75°C |
|---|---|---|---|---|---|
| Wild-type | 55°C | 0.1 | 500 | 5,000 | >10 h |
| Wild-type | 30°C | 0.1 | 370 | 3,700 | - |
| dm1 (Y227C+E277G) | 55°C | 1.6 | 3,500 | 2,200 | 1 min |
| dm1 (Y227C+E277G) | 30°C | 0.8 | 2,200 | 2,750 | - |
| dm2 (A240D+E277G) | 55°C | 13 | 4,300 | 330 | 14 min |
| dm2 (A240D+E277G) | 30°C | 2 | 2,900 | 1,450 | - |
| m3 (E277G) | 55°C | 1.4 | 1,600 | 1,140 | 10 min |
| m3 (E277G) | 30°C | 0.5 | 560 | 1,120 | - |
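The derived columns of Table 1 can be recomputed from the Km and kcat values, which is a useful sanity check when transcribing kinetic data. The sketch below uses the values from Table 1. The dictionary layout and function names are illustrative choices, not part of the cited study.

```python
# Values transcribed from Table 1: (apparent Km for ornithine in mM, kcat in s^-1).
OTCASE = {
    ("wild-type", 55): (0.1, 500.0),
    ("wild-type", 30): (0.1, 370.0),
    ("dm1", 55): (1.6, 3500.0),
    ("dm1", 30): (0.8, 2200.0),
    ("dm2", 55): (13.0, 4300.0),
    ("dm2", 30): (2.0, 2900.0),
    ("m3", 55): (1.4, 1600.0),
    ("m3", 30): (0.5, 560.0),
}

def catalytic_efficiency(enzyme, temp_c):
    """kcat/Km in s^-1 mM^-1."""
    km_mM, kcat = OTCASE[(enzyme, temp_c)]
    return kcat / km_mM

def kcat_fold_change(enzyme, temp_c, reference="wild-type"):
    """kcat of a variant relative to the reference enzyme at the same temperature."""
    return OTCASE[(enzyme, temp_c)][1] / OTCASE[(reference, temp_c)][1]

dm1_fold_30 = kcat_fold_change("dm1", 30)        # about 6-fold, as quoted in the text
dm1_eff_30 = catalytic_efficiency("dm1", 30)     # 2,750 s^-1 mM^-1
wt_eff_30 = catalytic_efficiency("wild-type", 30)
```

Note that although dm1 turns over about six times faster than the wild type at 30°C, its higher Km leaves its kcat/Km below the wild-type value, so the gain applies mainly at saturating ornithine concentrations.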

Machine Learning-Assisted Engineering

The iCASE (isothermal compressibility-assisted dynamic squeezing index perturbation engineering) strategy represents a recent machine learning approach designed to address the stability-activity trade-off [12]. This method constructs hierarchical modular networks for enzymes of varying complexity by analyzing fluctuations in isothermal compressibility (βT) to identify regions amenable to mutation. The protocol involves:

- Identifying high-fluctuation regions: Based on isothermal compressibility calculations

- Dynamic squeezing index (DSI) analysis: Selecting residues with DSI > 0.8 (top 20%) as mutation candidates

- Free energy prediction: Using Rosetta 3.13 to calculate changes in free energy (ΔΔG)

- Experimental validation: Screening mutants for activity and stability
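The two computational filters in this protocol can be combined into a simple shortlist step. The sketch below is not the iCASE implementation. It assumes that DSI and ΔΔG values have already been computed elsewhere and that a negative ΔΔG indicates stabilization. The mutation labels and numbers are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Candidate:
    mutation: str   # e.g. "A10V" (hypothetical label)
    dsi: float      # dynamic squeezing index, precomputed
    ddg: float      # predicted ddG in kcal/mol, precomputed (negative = stabilizing, assumed)

def shortlist(candidates, dsi_cutoff=0.8, ddg_cutoff=0.0):
    """Keep candidates with DSI above the cutoff and ddG below the cutoff,
    ordered from most to least stabilizing."""
    kept = [c for c in candidates if c.dsi > dsi_cutoff and c.ddg < ddg_cutoff]
    return sorted(kept, key=lambda c: c.ddg)

# Hypothetical inputs for illustration only.
pool = [
    Candidate("A10V", dsi=0.91, ddg=-1.3),
    Candidate("S22P", dsi=0.85, ddg=0.7),    # fails the ddG filter
    Candidate("K35R", dsi=0.62, ddg=-2.0),   # fails the DSI filter
    Candidate("T41I", dsi=0.88, ddg=-0.4),
]

selected = [c.mutation for c in shortlist(pool)]
```

Only the shortlisted variants would then proceed to the experimental screening step.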

When applied to protein-glutaminase (PG), this approach identified single-point mutants (H47L, M49E, M49L) with 1.42-fold, 1.29-fold, and 1.82-fold improvements in specific activity, respectively, while maintaining or slightly increasing thermal stability [12]. For the more complex TIM barrel structure of xylanase (XY), the best triple-point mutant (R77F/E145M/T284R) exhibited a 3.39-fold increase in specific activity with a 2.4°C increase in melting temperature (Tm), demonstrating that machine learning-guided approaches can sometimes mitigate the trade-off rather than merely balance it.

Table 2: Performance of iCASE-Engineered Enzyme Variants

| Enzyme | Variant | Specific Activity (Fold Change) | Thermal Stability | Trade-off Assessment |
|---|---|---|---|---|
| Protein-glutaminase (PG) | H47L | 1.42× | Slight increase | Balanced |
| Protein-glutaminase (PG) | M49L | 1.82× | Slight increase | Balanced |
| Protein-glutaminase (PG) | K48R/M49E | 1.74× | Nearly unchanged | Balanced |
| Xylanase (XY) | R77F/E145M/T284R | 3.39× | Tm +2.4°C | Mitigated |
| Glutamate decarboxylase (GADA) | Not specified | Improved | Improved | Balanced |

Computational and AI-Driven Strategies

Physics-Based Modeling

Physics-based modeling approaches, including molecular mechanics (MM) and quantum mechanics (QM), provide theoretical frameworks for understanding and predicting the stability-activity trade-off [23]. These methods enable researchers to simulate enzyme dynamics, calculate activation energies, and predict the effects of mutations on both structural stability and catalytic efficiency. Electrostatic preorganization—how well an enzyme's active site stabilizes transition states through pre-organized electric fields—has been identified as a key factor influencing catalytic efficiency [23]. Mutations that optimize these electric fields for transition state stabilization may simultaneously destabilize the native protein structure, creating the observed trade-off.

Molecular dynamics simulations can quantify flexibility differences between thermophilic and psychrophilic enzyme variants, helping identify specific residues where modifications might optimize the balance between stability and activity [23]. Ancestral sequence reconstruction (ASR) studies add an evolutionary perspective: ancient enzymes often exhibited both high thermostability and broader substrate promiscuity, suggesting that modern specialized enzymes may have undergone evolutionary optimization that intensified the stability-activity trade-off for specific ecological niches [22].

Ancestral Sequence Reconstruction

Ancestral sequence reconstruction (ASR) has emerged as a powerful tool for exploring evolutionary solutions to the stability-activity trade-off [22]. This approach involves:

- Sequence collection: Gathering homologous protein sequences from diverse organisms

- Multiple sequence alignment: Using algorithms like MAFFT with manual correction

- Phylogenetic tree construction: Building molecular phylogenetic trees from alignments

- Ancestral inference: Using statistical models (e.g., maximum likelihood, Bayesian inference) to reconstruct ancestral sequences

- Experimental characterization: Synthesizing and testing reconstructed ancestral proteins
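To make the ancestral inference step concrete, the sketch below reconstructs a single alignment column on a toy tree. It deliberately swaps in Fitch maximum parsimony (bottom-up pass only) as a simpler stand-in for the maximum likelihood and Bayesian methods named above, which require a substitution model and branch lengths. The tree, species names, and residues are hypothetical.

```python
def fitch_root_states(tree, leaf_states):
    """Bottom-up pass of Fitch parsimony for one alignment column.

    tree: nested 2-tuples whose leaves are species names (strings).
    leaf_states: dict mapping species name -> observed residue.
    Returns (set of most-parsimonious root residues, minimum number of changes).
    """
    changes = 0

    def visit(node):
        nonlocal changes
        if isinstance(node, str):              # leaf: observed residue
            return {leaf_states[node]}
        left, right = (visit(child) for child in node)
        common = left & right
        if common:                             # children agree on at least one residue
            return common
        changes += 1                           # disagreement costs one substitution
        return left | right

    return visit(tree), changes

# Hypothetical four-taxon tree and one alignment column.
toy_tree = (("sp1", "sp2"), ("sp3", "sp4"))
column = {"sp1": "A", "sp2": "A", "sp3": "G", "sp4": "A"}

root_set, n_changes = fitch_root_states(toy_tree, column)
```

Repeating this per column yields a candidate ancestral sequence. Production ASR pipelines instead report posterior probabilities per site, which also flag ambiguous positions worth testing experimentally.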

ASR studies suggest that ancient enzymes often displayed superior stability-activity profiles compared to their modern counterparts, with reconstructed ancestral enzymes frequently exhibiting both high thermostability and substantial catalytic activity across temperature ranges [22]. For adenylate kinase (Adk), ASR experiments demonstrated that maintenance of kcat was a critical determinant of organismal fitness during enzyme evolution, with ancestral variants achieving different solutions to the stability-activity trade-off compared to modern specialized enzymes [22].

Visualization of Concepts and Workflows

Stability-Activity Trade-off Relationship

Stability-Activity Trade-off Concept: This diagram illustrates the fundamental relationship where thermophilic enzymes prioritize structural rigidity (red) for stability at high temperatures but sacrifice activity, while psychrophilic enzymes prioritize flexibility (blue) for catalytic activity at low temperatures but sacrifice stability. Enzyme engineering aims to achieve an optimal balance (green) between these competing constraints.

iCASE Strategy Workflow

iCASE Engineering Workflow: This diagram outlines the machine learning-assisted iCASE strategy for addressing the stability-activity trade-off, progressing from initial identification of flexible regions through computational screening to experimental validation and model refinement.

Research Reagent Solutions Toolkit

Table 3: Essential Research Reagents and Tools for Studying Stability-Activity Trade-Offs

| Reagent/Tool | Function/Application | Example Use |

|---|---|---|

| E. coli XL1-Red Mutator Strain | Random mutagenesis through defective DNA repair pathways | Generating mutant libraries of P. furiosus OTCase [21] |

| S. cerevisiae 12S16 (Δarg3) | Selection host for complementation assays | Selecting cold-active OTCase mutants at 30°C and 15°C [21] |

| pYX111/pYX112 Shuttle Vectors | E. coli/S. cerevisiae expression with different promoter strengths | Controlling expression levels for selection experiments [21] |

| MonoQ & Arginine-Sepharose Columns | Enzyme purification | Purifying wild-type and mutant OTCases for kinetic analysis [21] |

| Rosetta 3.13 Software | Predicting changes in free energy (ΔΔG) upon mutation | Screening mutation effects in iCASE strategy [12] |

| Molecular Dynamics Software | Simulating enzyme flexibility and dynamics | Analyzing structural basis of stability-activity trade-off [23] [22] |

| MAFFT Algorithm | Multiple sequence alignment for ASR | Aligning homologous sequences for ancestral reconstruction [22] |

The stability-activity trade-off remains a central challenge in enzyme engineering, but emerging technologies are providing new pathways to navigate this constraint. Experimental evolution studies continue to reveal the molecular mechanisms underlying this trade-off, while computational approaches—particularly machine learning and ancestral sequence reconstruction—offer powerful strategies for designing enzymes that optimize both stability and activity [21] [12] [22]. The integration of high-throughput screening with physics-based modeling creates a virtuous cycle where experimental data improves computational predictions, which in turn guide more focused experimental efforts [23] [24].

Future advances will likely come from several directions: improved molecular dynamics simulations that can more accurately predict flexibility-function relationships; machine learning models trained on larger datasets of engineered enzymes; and hybrid approaches that combine ancestral insights with contemporary engineering strategies [23] [12] [22]. As these methods mature, enzyme engineers may increasingly overcome the stability-activity trade-off, designing biocatalysts that maintain high catalytic efficiency across broader temperature ranges for diverse industrial applications. The ongoing development of synzymes (synthetic enzyme mimics) further expands the toolbox, offering alternative scaffolds that may circumvent the constraints inherent to natural enzyme structures [25].

In industrial bioprocessing, enzyme thermostability is not merely a beneficial trait but a critical economic driver. This guide objectively compares the performance of thermostable and mesophilic enzymes, demonstrating how enhanced thermal stability directly translates to superior bioprocess efficiency, reduced operational costs, and improved product yields. Framed within the context of validating enzyme improvements post-evolutionary research, we present synthesized experimental data and standardized protocols to equip researchers and drug development professionals with robust tools for evaluating biocatalyst performance under industrially relevant conditions.

Enzyme catalysis is a cornerstone of modern industrial processes, spanning the food, textile, detergent, pharmaceutical, and biofuel sectors [26]. Naturally occurring enzymes, however, often lack the robustness required for harsh industrial conditions, particularly elevated temperatures. Thermostability—an enzyme's ability to maintain structural integrity and catalytic function at high temperatures—has emerged as a pivotal engineering target because it directly influences several key economic parameters.

Industrial bioprocesses frequently operate at elevated temperatures to increase substrate solubility, reduce microbial contamination, and enhance reaction rates. Enzymes that deactivate rapidly under these conditions necessitate frequent replenishment, drive up production costs, and limit process continuity. The global enzyme market, projected to surpass USD 7.1 billion, reflects this demand, with thermostable variants commanding significant commercial interest [26]. This guide provides a comparative analysis of thermostable versus conventional enzymes, validating performance through experimental data and established testing methodologies relevant to post-evolutionary research.

Performance Comparison: Thermostable vs. Mesophilic Enzymes

The following tables synthesize quantitative data from published studies, enabling direct comparison of key performance indicators between thermostable and mesophilic enzyme systems.

Table 1: Comparative Performance in Cell-Free Biocatalytic Pathways [27]

| Performance Indicator | Mesophilic Pathway (Classical Mevalonate) | Thermostable Pathway (Archaea I Mevalonate) | Improvement |

|---|---|---|---|

| Operating Lifetime at 22°C | Baseline | 6x longer | +500% |

| Limonene Yield | Baseline | 1.7x higher | +70% |

| Solvent Tolerance (Ethanol/Isoprenol) | Low activity retention | High activity retention | Significant improvement |

| Optimal Temperature Range | ~37°C | Up to 60°C | Expanded operational window |

Table 2: Engineered Enzyme Variants with Enhanced Thermostability [28]

| Enzyme | Mutation(s) | Impact on Specific Activity | Impact on Thermal Stability (Tm) |

|---|---|---|---|

| Protein-glutaminase (PG) | H47L | 1.42-fold increase | Slight increase |

| Protein-glutaminase (PG) | M49L | 1.82-fold increase | Slight increase |

| Xylanase (XY) | R77F/E145M/T284R | 3.39-fold increase | Increase of +2.4 °C |

Experimental Protocols for Validating Thermostability

Rigorous validation is essential for confirming engineered improvements in enzyme thermostability. The following protocols are standard in the field.

Thermal Stability Assay via Residual Activity Measurement

This method assesses an enzyme's functional stability after heat exposure [27].

- Sample Preparation: Dilute the purified enzyme to a standardized concentration (e.g., 1 mg/mL) in a suitable buffer (e.g., 50 mM HEPES, pH 7.5).

- Heat Treatment: Aliquot the enzyme solution into separate tubes. Incubate these aliquots for a fixed duration (e.g., 1 hour) across a temperature gradient (e.g., from 4°C to 70°C) using a thermal cycler or water baths.

- Activity Measurement: Cool the heated samples on ice. Assay the residual enzymatic activity of each aliquot under standard optimal conditions (e.g., substrate, pH, temperature).

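- Data Analysis: Calculate the percentage of residual activity for each temperature point relative to an unheated control. Fit the resulting curve to a suitable model (e.g., a sigmoidal function) to determine the half-inactivation temperature (T50), the temperature at which 50% of the initial activity remains after the fixed incubation. T50 reflects loss of function after heat exposure; the melting temperature (Tm) is measured separately by monitoring unfolding directly, for example with differential scanning fluorimetry.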
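The data analysis step can be sketched as follows. For simplicity this example estimates T50 by linear interpolation between the two temperature points that bracket 50% residual activity, rather than by fitting a full sigmoidal model. The function name and the data are hypothetical.

```python
def t50_by_interpolation(temps_c, residual_pct):
    """Estimate the temperature at which residual activity crosses 50%.

    Uses linear interpolation between the bracketing data points;
    residual_pct is expressed relative to the unheated control (100%).
    """
    points = sorted(zip(temps_c, residual_pct))
    for (t_lo, a_lo), (t_hi, a_hi) in zip(points, points[1:]):
        if a_lo >= 50.0 >= a_hi and a_lo != a_hi:
            return t_lo + (a_lo - 50.0) * (t_hi - t_lo) / (a_lo - a_hi)
    raise ValueError("residual activity does not cross 50% in the tested range")

# Hypothetical residual activities after a 1 h incubation.
temps = [4, 30, 40, 50, 60, 70]
residual = [100, 98, 90, 60, 20, 5]

t50 = t50_by_interpolation(temps, residual)
```

Comparing T50 values of wild-type and variant enzymes measured under identical incubation times gives a direct, functional readout of the stability gain.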

Structure-Based Engineering and Machine Learning Validation

Advanced engineering efforts combine computational and wet-lab approaches [28] [29].

- Identify Target Residues: Use computational strategies like iCASE (isothermal compressibility-assisted dynamic squeezing index perturbation engineering) to identify flexible protein regions and residues critical for stability and activity. This involves analyzing dynamics and constructing hierarchical modular networks [28].

- In Silico Screening: Employ machine learning models (e.g., VenusREM, a retrieval-enhanced protein language model) to predict the fitness (stability and activity) of thousands of single and multi-point mutants. These models integrate sequence, structure, and evolutionary information for robust prediction [29].

- Wet-Lab Verification: Synthesize a limited set of top-ranking mutant genes, then express and purify the variant proteins. Characterize the purified variants using the residual-activity thermal stability assay described above and specific activity assays to confirm the predicted improvements.

Workflow Visualization

Integrated Engineering and Validation Workflow: This diagram illustrates the integrated computational and experimental workflow for engineering and validating thermostable enzymes.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Reagents for Enzyme Thermostability Research

| Reagent/Material | Function in Research | Example from Literature |

|---|---|---|

| Cloning & Expression Vector | Heterologous gene expression for enzyme production. | pET28 backbone vector for E. coli expression [27]. |

| Expression Host | Recombinant protein production system. | E. coli BL21(DE3) cells [27]. |

| Affinity Chromatography Resin | Rapid purification of recombinant enzymes. | Ni-NTA resin for His-tagged protein purification [27]. |

| Thermostable Enzyme Standards | Positive controls for stability assays. | dUTPase P45 from Pyrococcus furiosus [30]. |

| Activity Assay Substrates | Quantifying enzymatic activity and kinetics. | Specific substrates for target enzyme (e.g., xylan for xylanase) [28]. |

| Thermal Cycler | Precise temperature control for stability assays. | BioRad C1000 Touch Thermal Cycler for heat treatment [27]. |

The empirical data and comparative analysis presented confirm that thermostability is a linchpin for efficient and cost-effective bioprocesses. Engineered thermostable enzymes demonstrate unequivocal advantages, including extended operational lifetimes, higher product yields, and superior resilience under challenging production conditions. As enzyme engineering evolves, integrating sophisticated computational models like iCASE and VenusREM with robust experimental validation protocols provides a powerful framework for developing next-generation biocatalysts. This synergy between computational design and empirical testing will continue to drive innovation, reducing costs and enhancing sustainability across the bioprocessing industry.

Advanced Workflows for Engineering and Testing Thermostable Enzymes

The pursuit of enzyme variants with enhanced thermostability is a central goal in biotechnology and pharmaceutical development. Validating these improvements requires robust computational methods to predict how mutations will affect protein stability and function. The scientific community primarily employs two complementary paradigms: physics-based models and data-driven, machine learning (ML) approaches. Physics-based methods like Rosetta and FoldX use energy functions to simulate atomic interactions and calculate the change in folding free energy (ΔΔG) upon mutation. In contrast, data-driven methods leverage patterns in vast biological datasets to predict stability changes, often with dramatically increased speed. This guide provides an objective comparison of these approaches, detailing their performance, underlying protocols, and practical applications in enzyme engineering.
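Because sign conventions for ΔΔG differ between tools and papers, it helps to write the definition out. The block below states the folding-based convention, under which a negative value is stabilizing (as in the FoldX-based workflow in this guide), and its relation to the unfolding-based quantity. The subscripts "fold" and "u" are notation introduced here for illustration.

```latex
\begin{align}
\Delta\Delta G_{\mathrm{fold}} &= \Delta G_{\mathrm{fold}}^{\mathrm{mut}} - \Delta G_{\mathrm{fold}}^{\mathrm{wt}},
  & \Delta\Delta G_{\mathrm{fold}} < 0 &\;\Rightarrow\; \text{stabilizing} \\
\Delta\Delta G_{\mathrm{u}} &= \Delta G_{\mathrm{u}}^{\mathrm{mut}} - \Delta G_{\mathrm{u}}^{\mathrm{wt}}
  = -\,\Delta\Delta G_{\mathrm{fold}},
  & \Delta\Delta G_{\mathrm{u}} > 0 &\;\Rightarrow\; \text{stabilizing}
\end{align}
```

When comparing predictions from different tools against experimental values, confirm which of the two conventions each source uses before computing correlations.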

Performance Comparison at a Glance

The table below summarizes the key performance characteristics of representative physics-based and data-driven models as reported in recent literature.

Table 1: Comparative Performance of Physics-Based and Data-Driven Predictive Models

| Model Name | Type | Key Performance Metrics | Computational Speed | Key Advantages |

|---|---|---|---|---|

| Rosetta | Physics-Based | Widely used for ΔΔG calculation; accuracy depends on system and sampling [31] | Slower; requires extensive conformational sampling [31] | Provides atomistic interpretability; no training data required [32] |

| FoldX | Physics-Based | Used for virtual saturation mutagenesis to identify stabilizing mutations [6] | Faster than Rosetta, but slower than ML [31] | Fast enough for single-site saturation scans; integrates with visualization tools [6] |

| Pythia | Data-Driven (Self-Supervised GNN) | State-of-the-art zero-shot ΔΔG prediction; validated in thermostabilizing mutations [33] | Up to 10^5 times faster than some force-field methods [33] | Exceptional speed for large-scale analysis; zero-shot requires no experimental stability data [33] |

| Pythia-PPI | Data-Driven (Multitask Learning) | Pearson's correlation: 0.7850 on SKEMPI dataset (binding affinity) [31] | >10,000 predictions per minute [31] | High accuracy for protein-protein binding affinity changes [31] |

| iCASE (ML-Assisted) | Data-Driven (Supervised ML) | Successfully improved activity (up to 3.39-fold) and stability of multiple enzymes [12] | N/R | Integrates conformational dynamics with ML for synergistic stability-activity improvement [12] |

| XGBoost/SHAP | Data-Driven (Traditional ML) | Cross-Validation MAE: 6.016 ± 0.116 (for Tm prediction) [34] | N/R | High interpretability; identifies key features like serine fraction and pH [34] |

Experimental Protocols for Model Validation

Physics-Based Workflow: Virtual Saturation Mutagenesis with FoldX

The short-loop engineering strategy provides a canonical example of using physics-based tools for predicting stabilizing mutations.

Objective: Identify "sensitive residues" within rigid short-loop regions that can be mutated to hydrophobic residues with large side chains to fill cavities and enhance thermal stability [6].

Procedure:

- Structure Preparation: Obtain a high-resolution crystal structure of the target enzyme (e.g., Lactate dehydrogenase from Pediococcus pentosaceus).

- Site Selection: Identify short-loop regions (e.g., a 6-residue loop: Asn96-Val97-Pro98-Ala99-Tyr100-Ser101).

- Virtual Saturation Mutagenesis: Use FoldX to perform in silico mutagenesis at each position in the short loop, calculating the change in folding free energy (ΔΔG) for all 19 possible amino acid substitutions.

- Analysis: Identify candidate sites where multiple mutations yield a negative ΔΔG (stabilizing effect). For example, Ala99 was identified as a promising site because 14 mutant variants showed ΔΔG < 0 [6].

- Experimental Validation: Construct a saturation mutagenesis library at the candidate site (e.g., Ala99). Express and purify variants, and measure thermal stability indicators such as half-life at elevated temperatures. The successful variant A99Y showed a 9.5-fold increase in half-life compared to the wild type [6].
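The analysis step of this procedure, counting how many substitutions at each position are predicted to be stabilizing, can be sketched as below. The code does not call FoldX; it assumes the ΔΔG values have already been exported into a dictionary. All numbers are hypothetical and cover only a few substitutions per site, whereas a real scan would include all 19.

```python
# Hypothetical ddG values (kcal/mol) per position and substituted residue.
SCAN = {
    "N96": {"A": 0.4, "D": 0.9, "L": -0.1},
    "A99": {"Y": -1.2, "F": -0.9, "L": -0.6, "G": 0.8},
    "S101": {"A": 0.2, "T": 0.1, "V": 0.5},
}

def stabilizing_counts(scan, cutoff=0.0):
    """Number of substitutions per site with predicted ddG below the cutoff."""
    return {
        site: sum(1 for ddg in subs.values() if ddg < cutoff)
        for site, subs in scan.items()
    }

def rank_sites(scan, cutoff=0.0):
    """Sites ordered by how many substitutions are predicted to be stabilizing."""
    counts = stabilizing_counts(scan, cutoff)
    return sorted(counts, key=lambda site: counts[site], reverse=True)

counts = stabilizing_counts(SCAN)
ranking = rank_sites(SCAN)
```

The top-ranked site is then the natural target for an experimental saturation mutagenesis library.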

Data-Driven Workflow: Zero-Shot Prediction with Pythia

Pythia exemplifies a modern, self-supervised learning approach for ultrafast stability prediction.

Objective: Predict mutation-driven changes in protein stability (ΔΔG) in a zero-shot manner, without requiring experimentally derived stability data for training [33].

Procedure:

- Input Representation: Transform the local structure of a protein into a graph representation. Each amino acid is a node, connected to its 32 nearest neighbors based on Euclidean distance of C-alpha atoms.

- Feature Encoding: Node features include one-hot encoded amino acid type and sine/cosine transformations of backbone dihedral angles (φ, ψ, ω). Edge features include distances between backbone atoms (C-alpha, C, N, O, C-beta), sequence position, and chain information [31].

- Graph Neural Network (GNN): A pre-trained structure graph encoder (with three Attention Message-Passing Layers) processes the graph to generate hidden embeddings and amino acid probabilities [31].

- ΔΔG Prediction: The model leverages the amino acid probabilities and structural context to compute ΔΔG. The core principle is that the model learns a physical relationship between the native structure and the likelihood of an amino acid appearing in that context, which correlates with stability [33].

- Experimental Validation: Select top-predicted stabilizing mutations for a target enzyme (e.g., limonene epoxide hydrolase). Experimental results confirmed that Pythia achieved a higher success rate for identifying thermostabilizing mutations compared to previous predictors [33].
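The input representation and feature encoding steps can be illustrated with a small sketch. This is not Pythia's code. It only shows, under simplified assumptions, how a k-nearest-neighbor graph over C-alpha coordinates and sine/cosine dihedral features might be built. The function names and coordinates are hypothetical.

```python
import math

def knn_edges(ca_coords, k=32):
    """Map each residue index to its k nearest residues by C-alpha distance.

    ca_coords: list of (x, y, z) tuples, one per residue.
    If the protein has fewer than k + 1 residues, all other residues are returned.
    """
    edges = {}
    for i, ci in enumerate(ca_coords):
        dists = sorted(
            (math.dist(ci, cj), j) for j, cj in enumerate(ca_coords) if j != i
        )
        edges[i] = [j for _, j in dists[:k]]
    return edges

def dihedral_features(phi, psi, omega):
    """Sine/cosine encoding of backbone dihedrals (radians) as a 6-element list."""
    return [f(angle) for angle in (phi, psi, omega) for f in (math.sin, math.cos)]

# Toy coordinates on a line (hypothetical), with k reduced for readability.
coords = [(0, 0, 0), (1, 0, 0), (3, 0, 0), (7, 0, 0)]
graph = knn_edges(coords, k=2)
features = dihedral_features(0.0, 0.0, math.pi)
```

The sine/cosine encoding avoids the discontinuity at ±180° that raw angle values would introduce, which is presumably why such transformations are used for dihedral features.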

Integrated Workflow: Combining Dynamics and Supervised ML with iCASE

The iCASE strategy demonstrates how molecular dynamics and supervised machine learning can be integrated for multi-property enzyme engineering.

Objective: Synergistically improve both enzyme thermostability and catalytic activity, overcoming the common stability-activity trade-off [12].

Procedure:

- Identify Fluctuating Regions: Calculate the isothermal compressibility (βT) across the enzyme structure to identify high-fluctuation regions (e.g., specific loops and alpha-helices).

- Calculate Dynamic Squeezing Index (DSI): Combine dynamics with activity modification by calculating a DSI metric coupled to the active center. Residues with a DSI > 0.8 are selected as candidate mutation sites [12].

- Initial Filtering with Physics-Based Tools: Use Rosetta to predict the change in free energy (ΔΔG) for candidate mutations to filter for stabilizing variants [12].

- Train Predictive ML Model: Establish a dynamic response predictive model using structure-based supervised machine learning. This model is trained on data from prior steps and experimental results to predict enzyme function and fitness, including epistatic interactions in multi-mutant variants [12].

- Experimental Validation: Test model-predicted single and combination mutants. For example, a triple-point mutant (R77F/E145M/T284R) of xylanase showed a 3.39-fold increase in specific activity and a 2.4 °C increase in melting temperature (Tm) [12].

Figure 1: Comparative Workflows for Enzyme Thermostability Prediction. This diagram illustrates the distinct and integrated workflows of physics-based, data-driven, and hybrid computational approaches for predicting enzyme thermostability.

Successful implementation of computational predictions requires a suite of software tools and databases. The following table lists essential resources for researchers in this field.

Table 2: Essential Computational Tools and Databases for Enzyme Thermostability Research

| Resource Name | Type | Primary Function | Key Features / Application Context |

|---|---|---|---|

| Rosetta | Software Suite | Protein structure modeling & design | ΔΔG calculations, protein folding, and design; provides atomistic insights [31]. |

| FoldX | Software Tool | Quick energy calculations & mutagenesis | Virtual saturation mutagenesis; prioritizes mutations for experimental testing [6]. |

| Pythia / Pythia-PPI | Web Server / Model | Zero-shot & supervised ΔΔG prediction | Ultrafast stability and binding affinity change prediction [31] [33]. |

| Pro-PRIME | Large Language Model | Protein sequence fitness prediction | Scores single and multi-point mutations for properties like thermostability [35]. |

| FireProtDB | Database | Curated protein stability data | High-quality dataset of mutant thermal stability for ML model training [31] [32]. |

| ThermoMutDB | Database | Manually curated mutation data | Collection of thermodynamic data for missense mutants [32]. |

| BRENDA | Database | Enzyme function and properties | Extensive data on enzyme optimal temperature and stability [32]. |

| SHAP (SHapley Additive exPlanations) | Analysis Tool | Model interpretability | Explains ML model predictions, e.g., identifies key amino acid fractions for stability [34]. |

Both physics-based and data-driven computational models are powerful tools for predicting enzyme thermostability, yet they offer different strengths. Physics-based methods provide deep, interpretable insights into the structural mechanisms of stabilization but are often computationally expensive. Data-driven ML models offer unparalleled speed and are increasingly achieving state-of-the-art accuracy, especially as the volume of biological data grows. The choice between them depends on the specific research context: the availability of high-quality structural data, the need for interpretability, computational resources, and the desired throughput. The emerging trend of integrating both approaches, as seen in strategies like iCASE, holds great promise for efficiently navigating the complex fitness landscape of enzymes to develop robust biocatalysts for industrial and therapeutic applications.

The pursuit of industrial biocatalysts is often hampered by the intrinsic instability of natural enzymes under process conditions. A central challenge in enzyme evolution is reliably validating thermostability improvements in engineered variants. While the melting temperature (Tm) serves as a key experimental indicator, computational predictions are essential for accelerating the design cycle. This guide objectively compares two distinct stability prediction approaches, the physics-based digzyme Score and the machine learning-driven SPIRED model, by examining their performance in published case studies. The analysis focuses on their operational methodologies, accuracy in predicting stability changes, and practical utility for researchers running directed evolution campaigns.

The following table summarizes the core characteristics of the digzyme Score and the SPIRED (Structure-based Supervised Machine Learning) model, situating them within the broader landscape of stability prediction tools.

Table 1: Comparative Overview of Enzyme Thermostability Prediction Tools

| Feature | digzyme Score | SPIRED Model | Classical Tools (e.g., FoldX, Rosetta) | Language Models (e.g., PRIME) |

|---|---|---|---|---|

| Core Methodology | Physics-based energy calculation from 3D structures [36] | Structure-based supervised machine learning [28] | Empirical force fields & statistical potentials [36] [37] | Masked language modeling trained on protein sequences & host OGT [37] |

| Primary Input | Predicted or experimental 3D enzyme structure [36] | Enzyme structure and dynamic properties [28] | Protein 3D structure [36] | Protein amino acid sequence [37] |

| Typical Output | Stability score correlating with Tm (for relative comparison) [36] | Prediction of function, fitness, and epistasis [28] | Predicted change in folding free energy (ΔΔG) [36] [38] | Mutant score and predicted optimal growth temperature (OGT) [37] |

| Key Strength | No requirement for prior experimental mutagenesis data; versatility across protein families [36] | Designed to handle stability-activity trade-offs and predict epistasis [28] | Well-established; provides a physical interpretation of stability [36] | Zero-shot prediction without need for structural data; high success rate in practice (>30%) [37] |

The digzyme Score: A Physics-Based Approach in Practice

Methodology and Experimental Workflow

The digzyme Score employs a physics-based approach that uses three-dimensional structural information of enzymes to compute a score correlated with the melting temperature (Tm) [36]. The method is founded on molecular mechanics and statistical mechanics approximations that make the complex calculations of atomic interactions feasible [36]. In a typical enzyme engineering project, the score is computed from a predicted or experimental 3D structure of each candidate, and candidates are then compared by their relative scores [36].

Case Study: Prediction of Frataxin Mutant Stability

In a blind competition to predict the change in unfolding free energy (ΔΔGu) for eight mutants of the enzyme frataxin, the digzyme Score achieved a Pearson correlation coefficient of 0.87 with experimental values [36]. This performance was slightly better than the established physics-based tool FoldX and competitive with a machine learning model (Kim Lab) that had been trained on the Protherm database of mutant thermal stability [36].

Table 2: Performance Comparison in Frataxin Mutant Stability Prediction

| Method | Category | Pearson Correlation (r) with Experiment | Key Requirement |

|---|---|---|---|

| digzyme Score | Physics-based | 0.87 [36] | 3D Protein Structure |

| FoldX | Physics-based | Slightly lower than digzyme [36] | 3D Protein Structure |

| Kim Lab Model | Machine Learning | 0.89 [36] | Training data from Protherm database |

| Pal Lab MD Approach | Molecular Dynamics | Moderate correlation (attributed to chance) [36] | Extensive computational resources |
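The Pearson correlation used to score these predictors is straightforward to compute. The sketch below implements it with the standard library only. The predicted and measured values are hypothetical placeholders, not the frataxin data.

```python
import math

def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    n = len(xs)
    mx = sum(xs) / n
    my = sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)

# Hypothetical predicted vs. measured stability changes for eight mutants.
predicted = [0.5, 1.8, -0.3, 2.4, 1.1, 0.0, 3.0, 0.9]
measured = [0.8, 1.5, 0.1, 2.9, 0.7, 0.4, 2.2, 1.3]

r = pearson_r(predicted, measured)
```

With only eight data points, as in the frataxin challenge, the confidence interval on r is wide, so small differences between methods should be interpreted cautiously.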

Performance with Diverse Enzyme Populations

A more rigorous test involves predicting stability across proteins that share a function but have low sequence identity. In a benchmark using the NanoMelt dataset of nanobody Tm values, the digzyme Score produced a weak but significant correlation (r = 0.411) with experimental melting temperatures [36]. Under the same conditions, FoldX failed to produce a correlated prediction, and the sequence-based protein language models AntiBERTy and ESM-2 showed weaker correlations (r = 0.168 and 0.338, respectively) [36]. These results suggest that the digzyme Score handles sequence-diverse populations better than the other structure-based and sequence-based tools tested.

The SPIRED Model: A Machine Learning Framework

Methodology and Workflow

The SPIRED (Structure-based Supervised Machine Learning) model represents a different paradigm, integrating multi-dimensional conformational dynamics to guide enzyme evolution [28]. It employs an isothermal compressibility-assisted dynamic squeezing index perturbation engineering (iCASE) strategy to construct hierarchical modular networks for enzymes of varying complexity [28]. The model is trained to learn the relationship between structural dynamics and functional fitness.

Experimental Validation and Results

The SPIRED model was validated on multiple enzymes with different structures and catalytic types. For the monomeric enzyme protein-glutaminase (PG), the model successfully identified single-point mutants (H47L, M49E, M49L) that showed 1.29- to 1.82-fold improvements in specific activity with slightly increased thermal stability compared to wild-type [28]. Among the double mutants subsequently constructed, the best variant (K48R/M49E) exhibited a 1.74-fold increase in specific activity with maintained stability [28].

For the more complex TIM barrel structure of xylanase (XY), the model identified a triple-point mutant (R77F/E145M/T284R) that demonstrated a 3.39-fold increase in specific activity and a 2.4°C increase in Tm [28]. This highlights the model's ability to handle enzymes of varying complexity and to synergistically improve both stability and activity, directly addressing the common trade-off between these properties.

Essential Research Reagents and Experimental Protocols

Successful validation of computational predictions requires carefully controlled experimental protocols. The following table details key reagents and methods used in the cited studies.

Table 3: Key Research Reagents and Experimental Protocols for Thermostability Validation

| Reagent/Method | Function in Validation | Example Usage in Case Studies |

|---|---|---|

| Differential Scanning Fluorimetry (DSF) | High-throughput measurement of protein melting temperature (Tm) [36] | Used in NanoMelt dataset for nanobody thermostability screening [36] |

| p-nitrophenolate-based Assay Systems | Spectrophotometric activity measurement of hydrolytic enzymes [39] | Used for activity prediction of thiolase-like enzyme OleA [39] |

| N-Succinyl-Ala-Ala-Pro-Phe p-nitroanilide (Suc-AAPF-pNA) | Synthetic substrate for specific activity determination of proteases [38] | Used to measure nattokinase activity in Co-MdVS strategy validation [38] |

| Fibrin Plate Degradation Assay | Functional activity measurement for fibrinolytic enzymes [38] | Validation of nattokinase mutant efficiency [38] |

| Ammonium Sulfate Precipitation | Protein purification and concentration [38] | Used for purification of nattokinase variants [38] |

Experimental Protocol: Melting Temperature Determination

A standard protocol for validating predicted thermostability involves determining the enzyme's melting temperature using differential scanning fluorimetry:

- Protein Purification: Express and purify the wild-type and mutant enzymes using affinity chromatography and subsequent buffer exchange [38].

- Sample Preparation: Mix the protein with a fluorescent dye (e.g., SYPRO Orange) that binds to hydrophobic regions exposed upon unfolding.

- Thermal Ramp: Incubate the sample in a real-time PCR instrument while gradually increasing the temperature (e.g., from 25°C to 95°C at a rate of 1°C per minute).

- Fluorescence Monitoring: Measure the fluorescence intensity throughout the thermal ramp.

- Data Analysis: Plot fluorescence intensity against temperature and determine the Tm as the midpoint of the protein unfolding transition curve [36].
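The data-analysis step can be sketched as follows. This is an illustrative approach under simple assumptions, not the analysis used in the cited studies: Tm is taken as the temperature at which fluorescence crosses the halfway point between the pre-transition minimum and the peak, found by linear interpolation. The function name and the synthetic melt curves are hypothetical.

```python
import math

def estimate_tm(temps_c, fluorescence):
    """Estimate Tm as the half-maximum crossing of the rising unfolding transition."""
    if len(temps_c) != len(fluorescence) or len(temps_c) < 3:
        raise ValueError("need matching temperature and fluorescence series")
    # Peak signal; anything after it (e.g. aggregation-driven decay) is ignored.
    i_max = max(range(len(fluorescence)), key=fluorescence.__getitem__)
    # Lowest signal before the peak approximates the folded baseline.
    i_min = min(range(i_max + 1), key=fluorescence.__getitem__)
    if i_min == i_max:
        raise ValueError("no rising transition found")
    half = (fluorescence[i_min] + fluorescence[i_max]) / 2.0
    for i in range(i_min, i_max):
        f0, f1 = fluorescence[i], fluorescence[i + 1]
        if f0 <= half <= f1 and f1 > f0:
            frac = (half - f0) / (f1 - f0)  # linear interpolation between points
            return temps_c[i] + frac * (temps_c[i + 1] - temps_c[i])
    raise ValueError("half-maximum crossing not found")

def synthetic_melt(tm_c, temps_c, slope=2.0):
    """Idealized two-state (Boltzmann sigmoid) melt curve, for demonstration only."""
    return [100.0 + 900.0 / (1.0 + math.exp((tm_c - t) / slope)) for t in temps_c]

temps = [float(t) for t in range(25, 96)]  # 25 to 95 degC in 1 degC steps
tm_wild_type = estimate_tm(temps, synthetic_melt(55.0, temps))
tm_mutant = estimate_tm(temps, synthetic_melt(57.4, temps))
delta_tm = tm_mutant - tm_wild_type
print(f"Tm(WT) = {tm_wild_type:.1f} C, Tm(mutant) = {tm_mutant:.1f} C, dTm = {delta_tm:+.1f} C")
```

In practice, many laboratories instead fit a Boltzmann sigmoid or take the maximum of the first derivative; for a clean two-state transition these approaches should give similar values.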

Experimental Protocol: Specific Activity Assessment

For functional validation alongside stability:

- Substrate Preparation: Prepare the appropriate substrate in optimal buffer conditions (e.g., Suc-AAPF-pNA for proteases in Tris-HCl buffer with CaCl₂) [38].

- Reaction Setup: Combine enzyme sample with substrate in a spectrophotometer-compatible microplate.

- Kinetic Measurement: Monitor the change in absorbance (e.g., at 405 nm for p-nitroaniline release) over time at the desired assay temperature.

- Calculation: Determine the initial reaction velocity and calculate specific activity as units of enzyme activity per milligram of enzyme (e.g., μmol of product formed per minute per mg of enzyme) [38].
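The calculation step can be illustrated with a short sketch based on the Beer-Lambert law. The extinction coefficient, path length, reaction volume, and enzyme amount below are assumed placeholder values that should be replaced with figures determined for the actual assay; the function names are hypothetical.

```python
def linear_slope(xs, ys):
    """Least-squares slope of ys versus xs."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("time points must not all be identical")
    return sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys)) / sxx

def specific_activity(times_min, absorbance, enzyme_mg,
                      reaction_volume_l, epsilon_per_m_cm, path_cm):
    """Specific activity in umol product per minute per mg enzyme (U/mg)."""
    slope_abs_per_min = linear_slope(times_min, absorbance)
    # Beer-Lambert: A = epsilon * c * l, so dc/dt = (dA/dt) / (epsilon * l)
    rate_molar_per_min = slope_abs_per_min / (epsilon_per_m_cm * path_cm)
    umol_per_min = rate_molar_per_min * reaction_volume_l * 1e6
    return umol_per_min / enzyme_mg

# Hypothetical initial-rate data: absorbance at 405 nm rising linearly.
times = [0.0, 0.5, 1.0, 1.5, 2.0]
a405 = [0.05 + 0.096 * t for t in times]

sa = specific_activity(
    times, a405,
    enzyme_mg=0.001,           # assumed: 1 ug enzyme in the well
    reaction_volume_l=200e-6,  # assumed: 200 uL reaction
    epsilon_per_m_cm=9600.0,   # assumed placeholder; verify for your buffer
    path_cm=1.0,               # assumed; microplate path length varies with volume
)
print(f"Specific activity = {sa:.2f} U/mg")
```

Only the linear, initial portion of the progress curve should be passed to the slope calculation, so that the result reflects the initial velocity.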

The case studies demonstrate that both the digzyme Score and SPIRED model provide valuable, complementary approaches for predicting enzyme thermostability in evolution research.

The digzyme Score offers a significant advantage in scenarios where prior experimental mutagenesis data is unavailable. Its robust physics-based approach delivers reliable predictions for both single-site mutations and diverse enzyme populations, making it particularly suitable for the early stages of enzyme engineering or when exploring entirely new protein families [36].

The SPIRED model excels in handling the stability-activity trade-off and navigating complex epistatic interactions in multi-site mutants [28]. Its structure-based supervised learning framework is powerful for optimizing enzymes when some initial functional data is available to inform the model.

For researchers, the choice between these tools depends on the project context. Initial explorations of sequence-diverse enzyme families or targeted single-site mutagenesis without training data benefit from the digzyme Score's versatility. In contrast, comprehensive engineering campaigns aiming for multi-property optimization, especially with some existing activity data, may achieve better results with the SPIRED model's capacity to predict fitness and epistasis. Ultimately, both tools represent significant advances over traditional methods, providing researchers with powerful capabilities to validate and guide enzyme thermostability improvements in evolutionary experiments.

In enzyme engineering, the pursuit of improved stability and activity is often hampered by the stability-activity trade-off, where mutations that enhance one property frequently diminish the other [12] [40]. This challenge is compounded by the fact that a significant proportion of random mutations are either neutral or deleterious, making the identification of beneficial variants a resource-intensive process [41]. Consequently, library design strategies that can preemptively filter out destabilizing mutations have emerged as powerful tools to accelerate directed evolution campaigns. By enriching libraries with functionally competent and structurally robust variants, these strategies enable a more efficient exploration of fitness landscapes. This guide objectively compares two prominent approaches—computational stability filtering and dynamic flexibility analysis—detailing their experimental protocols, performance outcomes, and practical implementation for validating enzyme thermostability.

Comparative Analysis of Library Design Strategies

The following table summarizes the core methodologies, key outcomes, and primary applications of the two featured library design strategies.

Table 1: Comparison of Library Design Strategies for Filtering Destabilizing Mutations

| Strategy Name | Core Methodology | Key Outcome/Performance | Primary Application / Enzyme Type Demonstrated |

|---|---|---|---|

| Computational Stability Filtering [41] | Uses Rosetta's Cartesian ΔΔG protocol to calculate free energy changes (ΔΔG) for all possible single-point mutations; filters out variants whose ΔΔG exceeds a set threshold (e.g., retaining only ΔΔG < -0.5 REU). | • Identified that ~49% of possible single-site mutations could be filtered out without losing beneficial variants. • Achieved a >450-fold activity improvement in a Kemp eliminase (HG3.R5) in only 5 rounds of evolution. • Resulted in a variant with a kcat of 702 ± 79 s⁻¹ and a kcat/Km of 1.7 × 10⁵ M⁻¹ s⁻¹. | De novo designed enzymes (Kemp eliminase). |

| Dynamic Flexibility Analysis (iCASE) [12] | Identifies high-fluctuation regions via isothermal compressibility (βT) and residue dynamic squeezing index (DSI > 0.8). Coupled with Rosetta ΔΔG predictions to select mutations. | • For a monomeric enzyme (PG): Generated a double mutant (K48R/M49E) with a 1.74-fold increase in specific activity. • For a TIM barrel enzyme (XY): Generated a triple mutant (R77F/E145M/T284R) with a 3.39-fold increase in specific activity and a ΔTm of +2.4 °C. | Enzymes of varying complexity (monomeric PG, TIM barrel xylanase, hexameric GADH). |

Detailed Experimental Protocols

Protocol A: Computational Stability Filtering with Rosetta

This protocol, derived from the work on Kemp eliminase HG3, leverages computational predictions to exclude destabilizing mutations from library design [41].

- Identify Target Residues: Define the region for mutagenesis. This can be a radius around the substrate (e.g., within 6 Å of the bound ligand) or residues lining the access tunnel to the active site.

- Generate Single-Point Mutations: Compute all possible single amino acid substitutions for the targeted residues.

- Calculate Stability Changes: For each mutation, calculate the predicted change in folding free energy (ΔΔG) using the Cartesian ΔΔG protocol within the Rosetta Protein Modeling Suite.

- Apply Energy Threshold: Filter out all mutations with a predicted ΔΔG above a chosen destabilization threshold. In the referenced study, a threshold of -0.5 Rosetta Energy Units (REU) was used, which filtered out ~70% of possible mutations, leaving the top 30% for experimental testing.

- Library Construction and Screening: Synthesize the gene library using customized DNA oligonucleotide pools and assemble via overlap extension PCR. Express the variant library in a suitable host (e.g., E. coli) and screen for the desired activity or stability.

- Iterative Evolution: Combine beneficial mutations from one round to create the parent for the next cycle of computation, design, and screening.
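The enumeration and filtering steps of Protocol A can be sketched as below. This is a simplified illustration, not the referenced study's pipeline: the ΔΔG values are assumed to have been computed beforehand (for example with Rosetta) and are supplied as a plain dictionary. The sequence, positions, scores, and the inclusive treatment of the threshold are all hypothetical.

```python
AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"  # the 20 canonical residues

def enumerate_single_mutants(sequence, positions):
    """List every single substitution (e.g. 'K2A') at the given 1-based positions."""
    mutants = []
    for pos in positions:
        wild_type = sequence[pos - 1]
        for aa in AMINO_ACIDS:
            if aa != wild_type:  # 19 substitutions per position
                mutants.append(f"{wild_type}{pos}{aa}")
    return mutants

def filter_by_ddg(ddg_by_mutant, threshold_reu=-0.5):
    """Keep mutations whose predicted ddG is at or below the threshold.

    Whether the cutoff is inclusive is an assumption made for this sketch.
    """
    return {m: ddg for m, ddg in ddg_by_mutant.items() if ddg <= threshold_reu}

# Hypothetical toy sequence and target positions.
library = enumerate_single_mutants("MKTAY", positions=[2, 4])
print(len(library), "candidate single mutants")

# Hypothetical precomputed scores in Rosetta Energy Units (REU).
predicted_ddg = {"K2A": -1.2, "K2D": 0.8, "A4G": -0.5, "A4W": 3.1}
retained = filter_by_ddg(predicted_ddg)
print("Retained for synthesis:", sorted(retained))
```

Because each position contributes 19 substitutions, the library size grows linearly with the number of targeted residues, which is what makes an up-front energy filter worthwhile.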

Protocol B: iCASE Strategy for Multi-Scale Enzyme Engineering

The iCASE strategy employs conformational dynamics to identify key mutation sites, applicable to enzymes of varying structural complexity [12].

- Identify High-Fluctuation Regions: Calculate the isothermal compressibility (βT) across the enzyme's structure to pinpoint highly flexible regions (e.g., specific loops and α-helices).

- Calculate Dynamic Squeezing Index (DSI): Compute the DSI for residues, which measures dynamic coupling to the active center. Select candidate residues with a DSI > 0.8 (top 20%).

- Prioritize with Free Energy Calculations: Predict the ΔΔG for mutations at the candidate sites using Rosetta to further prioritize mutations that are not highly destabilizing.

- Wet-Lab Validation: Express and purify the selected single-point mutants. Measure specific activity and thermal stability (e.g., melting temperature, Tm).

- Generate Combinatorial Variants: Combine beneficial single-point mutations to create combinatorial variants and measure the synergistic effects on activity and stability.
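The computational selection steps of Protocol B can be sketched in a few lines. The snippet assumes that per-residue DSI values and per-mutation ΔΔG predictions are already available; it does not compute either quantity. The residue numbers, mutation labels, scores, and the `max_ddg` cutoff are hypothetical, since the source only specifies that highly destabilizing mutations are deprioritized.

```python
def select_candidate_residues(dsi_by_residue, dsi_cutoff=0.8):
    """Return residue numbers whose DSI exceeds the cutoff, in ascending order."""
    return sorted(res for res, dsi in dsi_by_residue.items() if dsi > dsi_cutoff)

def prioritize_mutations(ddg_by_mutation, candidates, max_ddg):
    """Keep mutations at candidate residues with ddG <= max_ddg, most stabilizing first."""
    allowed = set(candidates)
    kept = [
        (label, ddg)
        for (residue, label), ddg in ddg_by_mutation.items()
        if residue in allowed and ddg <= max_ddg
    ]
    return sorted(kept, key=lambda item: item[1])

# Hypothetical per-residue DSI values.
dsi = {10: 0.91, 11: 0.85, 12: 0.88, 50: 0.42}

# Hypothetical predicted ddG values keyed by (residue number, mutation label).
ddg = {
    (10, "A10V"): -0.6,
    (12, "L12E"): 0.3,
    (12, "L12W"): 4.2,   # strongly destabilizing, should be dropped
    (50, "G50A"): -1.0,  # stabilizing, but residue fails the DSI cutoff
}

candidates = select_candidate_residues(dsi)
ranked = prioritize_mutations(ddg, candidates, max_ddg=1.0)
print("Candidate residues:", candidates)
print("Mutations for wet-lab validation:", ranked)
```

Applying the DSI filter first keeps the search focused on residues dynamically coupled to the active center, while the ΔΔG step only removes clearly destabilizing substitutions.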

Workflow Visualization

Figure: Logical sequence of the iCASE strategy, integrating computational analysis and experimental validation.

Research Reagent Solutions

Successful implementation of these strategies relies on specific computational and experimental tools. The table below lists key reagents and their functions.

Table 2: Essential Research Reagents and Tools for Library Design and Validation

| Reagent / Tool Name | Function in Experiment | Specific Example / Notes |

|---|---|---|

| Rosetta Protein Modeling Suite [41] [12] | Computational prediction of the change in free energy (ΔΔG) upon mutation to filter destabilizing variants. | The Cartesian ΔΔG protocol was used to assess all 5,757 single-point mutations in Kemp eliminase [41]. |

| FoldX Force Field [40] | An alternative algorithm for rapid computational estimation of mutation effects on protein stability (ΔΔG). | Applied in a large-scale analysis to compare the stability effects of function-altering versus neutral mutations [40]. |

| Dynamic Squeezing Index (DSI) [12] | A metric to identify residues with high dynamic coupling to the active center, used for activity engineering. | Residues with a DSI > 0.8 (top 20%) were selected as candidates for mutagenesis in the iCASE strategy [12]. |

| Overlap Extension PCR [41] | A molecular biology technique for assembling full-length genes from pools of synthetic oligonucleotides. | Enabled the physical construction of complex gene libraries from customized oligo fragments for Kemp eliminase evolution [41]. |

| 6-Nitrobenzotriazole [41] | A transition state analog (TSA) used in X-ray crystallography to study substrate binding and active site architecture. | Used to determine the 1.5 Å crystal structure of the evolved Kemp eliminase HG3.R5 (PDB 8RD5) [41]. |

The comparative data show that both computational stability filtering and dynamic flexibility analysis are effective strategies for designing smart mutant libraries. The choice between them can be guided by the specific engineering goals and the system at hand.

Computational stability filtering excels in its simplicity and high efficiency for rapidly traversing fitness landscapes, as evidenced by the dramatic acceleration of Kemp eliminase evolution [41]. Its primary strength lies in filtering out a large fraction of non-productive mutations, thereby concentrating resources on a smaller, stability-enriched library.

In contrast, the iCASE strategy offers a more integrated approach to overcome the stability-activity trade-off [12]. By explicitly targeting residues that govern conformational dynamics, it provides a rational framework for synergistically improving both activity and stability, which is particularly valuable for engineering complex, multi-domain enzymes.

In conclusion, the strategic pre-filtering of destabilizing mutations is no longer an optional refinement but a cornerstone of modern, efficient enzyme engineering. By leveraging these sophisticated library design strategies, researchers can significantly accelerate the development of robust biocatalysts essential for advancing applications in biomedicine, chemical manufacturing, and sustainable technologies.