Validating Evolutionary Predictions: A Practical Guide to Experimental Evolution for Biomedical Research

Abstract

This article provides a comprehensive framework for using experimental evolution to validate evolutionary predictions, a capability increasingly critical in medicine and drug development. We explore the scientific foundations of evolutionary forecasting, detail established and emerging methodological approaches, and address key challenges in predictability and optimization. A central focus is comparative validation strategies, arguing for a shift from the concept of single 'validation' to ongoing 'corroboration' using orthogonal methods. Designed for researchers and drug development professionals, this guide synthesizes current knowledge to enhance the design, execution, and interpretation of evolution experiments, ultimately aiming to improve the prediction and management of pathogen resistance, cancer evolution, and therapeutic efficacy.

The Scientific Basis for Predicting Evolution: From Theory to Testable Hypotheses

Evolutionary biology is undergoing a profound transformation from a historical, descriptive science to a quantitative, predictive discipline. This paradigm shift represents a fundamental change in how researchers approach evolutionary processes, moving from reconstructing life's history to forecasting its future trajectories. Where evolution was once considered too slow and complex to permit experimental study or prediction, researchers now successfully develop and test precise evolutionary forecasts across diverse fields including medicine, agriculture, biotechnology, and conservation biology [1].

This transformation is powered by the integration of Darwin's foundational theory of evolution by natural selection with sophisticated mathematical models, high-throughput experimental techniques, and computational tools. The emerging predictive framework enables scientists to address critical questions such as which pathogen strains will dominate future epidemics, how cancer cells evolve resistance to therapies, and how species might adapt to rapidly changing environments [1]. This article examines the methodological advances, experimental validation, and practical applications driving this paradigm shift, with particular focus on how experimental evolution research serves to validate evolutionary predictions.

The Historical Perspective: Evolution as a Descriptive Science

For much of its history, evolutionary biology focused primarily on reconstructing and interpreting past events. The comparative method—analyzing similarities and differences between species—dominated evolutionary research, supplemented by fossil evidence that provided glimpses into life's historical trajectory. This retrospective approach stemmed from the widespread belief that evolutionary processes operated on time scales far too extended for direct human observation or experimentation [2].

The Modern Synthesis of the early 20th century unified Darwinian natural selection with Mendelian genetics, creating a robust theoretical framework for understanding evolutionary mechanisms. However, this synthesis primarily employed population genetics models to explain patterns of variation and adaptation retrospectively rather than prospectively. Throughout this period, authoritative voices in evolutionary biology maintained a clear separation between evolutionary and developmental biology, with some explicitly stating that "problems concerned with the orderly development of the individual are unrelated to those of the evolution of organisms through time" [3]. This perspective constrained evolutionary biology to interpreting events that had already occurred rather than predicting those to come.

The Emerging Predictive Framework: Core Concepts and Methodologies

Theoretical Foundations for Evolutionary Prediction

The emerging predictive framework in evolutionary biology rests on several interconnected theoretical foundations that enable quantitative forecasting:

Quantitative Genetics Models: Tools like the breeder's equation and genomic selection allow predictions of how quantitative traits will respond to selection pressures, forming the basis for selective breeding programs in agriculture [1].

Population Genetic Forecasting: Explicit population genetic models incorporate forces such as random genetic drift, migration, recombination, and mutation to predict allele frequency changes, accounting for stochastic elements that distort expected selection impacts [1].

Fitness Landscape Theory: By conceptualizing evolutionary adaptation as navigation through multidimensional fitness landscapes, researchers can predict which genetic paths populations are likely to follow when subjected to specific selective pressures [1].

These theoretical approaches share a common predictive structure defined by their scope (what population variables are predicted), time scale (from hours to years), and precision (the specificity of predictions) [1]. This structure enables researchers to tailor predictive frameworks to specific biological questions and practical applications.
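The interplay of selection and drift described under population genetic forecasting can be illustrated with a minimal sketch. It assumes a haploid Wright-Fisher population with one beneficial allele; the function names, the selection coefficient `s`, and all parameter values are hypothetical choices for illustration and are not taken from the cited work.

```python
import random

def deterministic_forecast(p0, s, generations):
    """Expected frequency of a beneficial allele under selection alone (no drift)."""
    p = p0
    for _ in range(generations):
        p = p * (1 + s) / (1 + s * p)
    return p

def wright_fisher(p0, s, pop_size, generations, rng):
    """One stochastic trajectory: a selection step followed by binomial sampling (drift)."""
    p = p0
    trajectory = [p]
    for _ in range(generations):
        p_sel = p * (1 + s) / (1 + s * p)  # frequency after selection
        carriers = sum(rng.random() < p_sel for _ in range(pop_size))  # drift
        p = carriers / pop_size
        trajectory.append(p)
        if p == 0.0 or p == 1.0:  # allele lost or fixed, nothing more can change
            break
    return trajectory

if __name__ == "__main__":
    rng = random.Random(42)  # fixed seed so the replicate set is reproducible
    finals = [wright_fisher(0.05, 0.05, 200, 300, rng)[-1] for _ in range(20)]
    print("deterministic forecast:", round(deterministic_forecast(0.05, 0.05, 300), 3))
    print("replicates fixed:", sum(f == 1.0 for f in finals))
    print("replicates lost:", sum(f == 0.0 for f in finals))
```

Comparing the deterministic forecast with the spread of replicate outcomes gives a rough sense of how much drift can distort the expected response to selection in small populations.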

Experimental Evolution as a Validation Tool

Experimental evolution has emerged as a powerful methodology for validating evolutionary predictions under controlled laboratory conditions. This approach leverages microorganisms' short generation times and large population sizes to observe evolutionary adaptation in real time, compressing time scales that would require millennia in larger organisms [2].

Table 1: Key Advantages of Microbial Experimental Evolution

| Advantage | Application in Predictive Validation | Practical Benefit |
|---|---|---|
| Rapid generation times | Observation of hundreds to thousands of generations in manageable timeframes | Enables high-replication, statistically powerful experiments |
| Large population sizes | Observation of rare mutations and evolutionary trajectories | Provides comprehensive data on evolutionary potential |
| Cryopreservation capability | Creation of "frozen fossil records" for direct comparison across time points | Allows exact measurement of evolutionary change through replay experiments |
| Genomic tools | Detailed mapping of genotype-phenotype relationships | Enables mechanistic understanding of evolutionary changes |

These technical advantages make experimental evolution an ideal testing ground for evolutionary predictions, allowing researchers to directly compare expected and observed outcomes under controlled conditions [2]. The ability to preserve population samples indefinitely in frozen "time vaults" enables particularly powerful validation through "replay experiments," where evolution can be restarted from any point to test the repeatability of evolutionary trajectories [2].

Validating Predictions: Key Experimental Approaches

Laboratory Evolution and the Prediction of Adaptive Trajectories

Laboratory evolution experiments provide the most direct method for validating evolutionary predictions. In a compelling demonstration of evolutionary forecasting, researchers used an E. coli strain incapable of utilizing lactose (due to a frameshift mutation) to test predictions about evolutionary bias toward different carbon sources [4]. When introduced into medium containing sodium acetate and lactose, populations consistently evolved reverse mutations enabling lactose utilization (lac+), whereas populations in glucose-lactose medium maintained their ancestral glucose utilization (lac-) [4].

Table 2: Experimental Evolution Outcomes Under Different Selective Regimes

| Experimental Condition | Carbon Source Availability | Predicted Evolutionary Outcome | Observed Evolutionary Outcome | Fitness Gain Rationale |
|---|---|---|---|---|
| L-medium | Sodium acetate + lactose | Evolution toward lactose utilization | All populations evolved lac+ phenotype | Lactose utilization offers higher fitness than acetate utilization |
| G-medium | Glucose + lactose | Maintenance of glucose utilization | No transition to lactose utilization | Glucose utilization offers higher fitness than lactose utilization |

This elegant experiment demonstrated that evolutionary bias emerges from differential fitness gains available from alternative evolutionary paths, with high-fitness directions competitively excluding lower-fitness alternatives [4]. The experimental design enabled clear, quantitative validation of predictions about evolutionary trajectories based on metabolic theory and previous measurements of growth rates on different carbon sources.

Predicting Evolutionary Dynamics in Complex Environments

Beyond simple selective regimes, researchers have successfully predicted evolutionary dynamics in more complex scenarios:

Spatiotemporal Environmental Variation: The nature of the selective environment profoundly impacts evolutionary dynamics and outcomes. Changing environments often select for generalists, while structured environments with multiple niches can foster and maintain diversity through mechanisms like negative frequency-dependent selection [2].

Clonal Interference: In large asexual populations, multiple beneficial mutations may arise simultaneously and compete for fixation, slowing the overall rate of adaptation—a phenomenon predicted theoretically and confirmed through experimental evolution [2].

Epistatic Interactions: Nonlinear interactions between mutations create historical contingencies that can make evolutionary trajectories less predictable, though statistical predictions remain possible when accounting for these interactions [2].

These validated predictions demonstrate the increasing sophistication of evolutionary forecasting, incorporating realistic biological complexity rather than simplified models.

The Scientist's Toolkit: Essential Reagents and Methodologies

Table 3: Essential Research Reagents and Methodologies for Experimental Evolution

| Reagent/Method | Function/Application | Experimental Example |
|---|---|---|
| Defined minimal media (e.g., M9) | Provides controlled selective environment with specific nutritional limitations | Carbon source evolution experiments [4] |
| Blue-white screening | Visual identification of lac+ vs. lac- phenotypes through X-gal hydrolysis | Monitoring evolutionary transitions in lactose utilization [4] |
| Fluorescent genetic labels | Tracking strain dynamics in mixed populations | Competition assays between evolved and ancestral lineages [2] |
| Cryopreservation reagents | Maintaining frozen "fossil records" of evolutionary time points | Replay experiments and direct fitness comparisons [2] |
| Continuous culture devices (chemostats) | Maintaining constant selective pressures in microbial populations | Studying adaptation to steady nutrient limitation [2] |
| Serial transfer protocols | Propagating populations through growth cycles | Long-term evolution experiments [2] [4] |

Research Applications: From Prediction to Intervention

Public Health and Disease Management

Evolutionary predictions have transformed public health approaches to infectious diseases:

Influenza Vaccine Strain Selection: Predictive models incorporating antigenic drift measurements successfully forecast which influenza strains will dominate upcoming seasons, informing annual vaccine development [1].

Antibiotic Resistance Management: Evolutionary forecasting guides the development of antibiotic cycling protocols and combination therapies that suppress resistance evolution in bacterial pathogens [1].

Pandemic Preparedness: Predictive models of viral evolution help anticipate variants of concern during emerging outbreaks, enabling proactive public health responses [1].

Evolutionary Control: Directing Evolutionary Outcomes

Beyond mere prediction, evolutionary biology now enables evolutionary control—the deliberate alteration of evolutionary processes for specific purposes [1]. This represents the most advanced application of predictive evolutionary frameworks:

Suppressing Undesirable Evolution: Treatment strategies can be designed to minimize the evolution of drug resistance in pathogens or cancer cells, either through combination therapies that create evolutionary trade-offs or by steering evolution toward less-fit genotypes [1].

Promoting Beneficial Evolution: Conservation biologists use evolutionary predictions to facilitate adaptation in endangered species facing rapid environmental change, while biotechnologists direct microbial evolution toward improved industrial performance [1].

The successful application of evolutionary control demonstrates the maturity of predictive frameworks, moving from passive observation to active management of evolutionary processes.

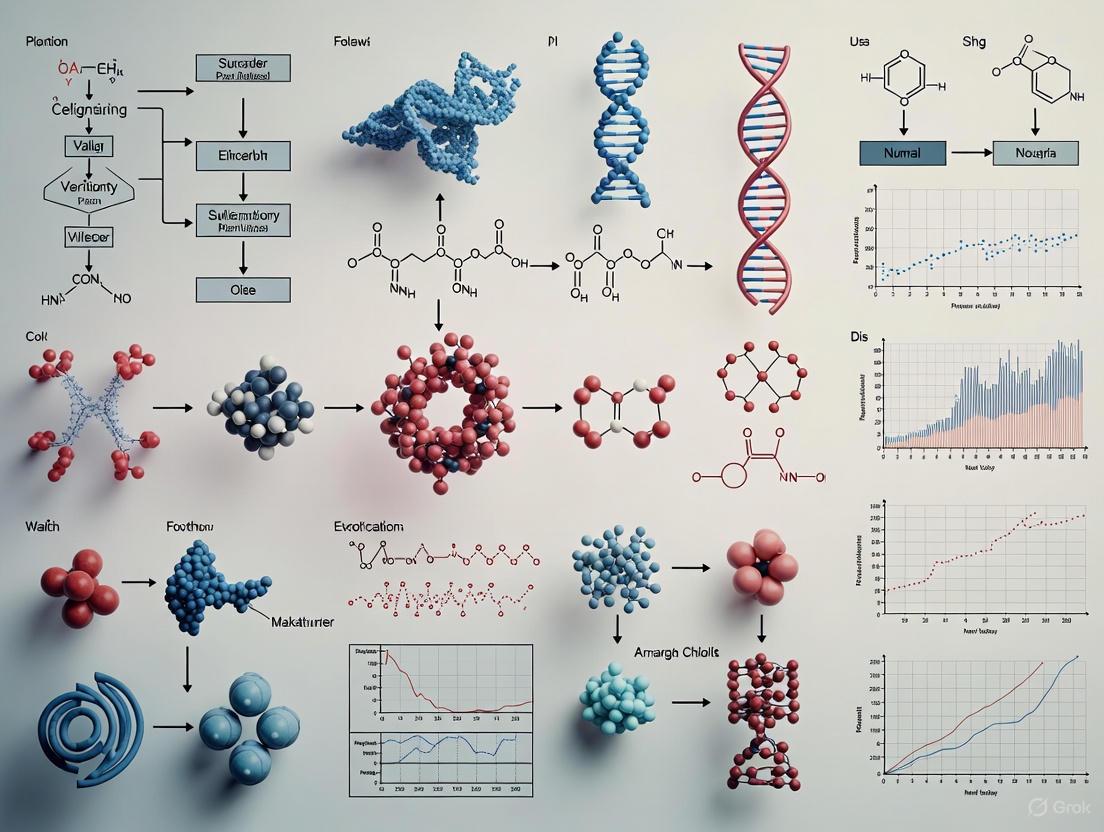

Visualizing Predictive Experimental Evolution

The diagram below illustrates the core workflow for validating evolutionary predictions through experimental evolution:

The transformation of evolutionary biology from a historical to a predictive science represents a genuine paradigm shift in biological research. This transition has been validated through rigorous experimental evolution studies that demonstrate our growing capacity to forecast evolutionary outcomes across diverse biological systems. The emerging predictive framework enables not just passive forecasting but active management of evolutionary processes through evolutionary control strategies with significant applications in medicine, biotechnology, and conservation.

As technological advances continue to enhance our ability to monitor evolutionary change in real time and at molecular resolution, the predictive power of evolutionary biology will continue to grow. The integration of mechanistic models with machine learning approaches promises to further refine evolutionary forecasts, while single-cell technologies and high-throughput phenotyping will provide unprecedented resolution for observing evolutionary processes. This paradigm shift positions evolutionary biology to address some of the most pressing challenges facing humanity, from combating evolving pathogens to facilitating ecological resilience in a rapidly changing world.

The core principles of evolution—natural selection, heritable variation, and fitness landscapes—provide the foundational framework for understanding how populations adapt over time. While these concepts have been established for decades, a revolutionary shift has occurred through the integration of experimental evolution, which allows researchers to directly test and validate evolutionary predictions in controlled settings. This approach has transformed evolutionary biology from a historically descriptive science into a predictive one, enabling researchers to replay the "tape of life" under controlled conditions to determine which outcomes are deterministic and which are stochastic [1] [5]. This guide compares how different experimental systems and methodologies perform in validating key evolutionary concepts, providing researchers with objective data to select appropriate models for their specific research questions.

Experimental evolution functions as a bridging discipline, connecting theoretical predictions with empirical validation across diverse biological systems. By subjecting populations of molecules, microbes, or other model organisms to controlled selective pressures and monitoring their genomic and phenotypic changes in real-time, researchers can quantitatively test fundamental questions about evolutionary predictability [1]. The growing emphasis on experimental validation in computational predictions has further elevated the importance of these approaches, as evidenced by increasing requirements from leading journals for robust verification of modeling results [6].

Theoretical Foundations and Their Experimental Tests

The Core Principles Framework

Evolutionary theory rests on three interconnected principles that can be individually tested and validated through experimental evolution:

Natural Selection: The process by which populations become better adapted to their environment through the differential survival and reproduction of individuals with advantageous traits. Experimental evolution tests this principle by applying controlled selective pressures and measuring adaptive responses [1].

Heritable Variation: The raw material for evolution, provided by genetic mutations and recombination that create diversity upon which selection can act. Modern experimental evolution quantifies this variation through high-throughput sequencing [7].

Fitness Landscapes: Conceptual maps representing the relationship between genotypes and their reproductive success. These landscapes determine the accessibility of evolutionary paths and the predictability of adaptation [8] [9].

The interplay between these principles determines the fundamental question in evolutionary biology: Is evolution predictable? Experimental evolution provides the methodological framework to answer this question by quantifying the deterministic and stochastic components of evolutionary processes [8] [5].

The Fitness Landscape Concept

The topography of fitness landscapes directly influences evolutionary predictability. Smooth landscapes with strong correlations between genotypic similarity and fitness allow more predictable evolutionary trajectories, while rugged landscapes with many fitness peaks and valleys create evolutionary dead-ends and multiple potential outcomes [8]. Quantitative measures of landscape topography include:

- Roughness: The local variation in fitness between neighboring genotypes

- Accessibility: The fraction of possible monotonic fitness paths to a peak

- Sign epistasis: When the sign of a mutation's fitness effect (beneficial versus deleterious) depends on its genetic background

Experimental studies consistently reveal that biologically relevant fitness landscapes are significantly smoother than random null models, suggesting that fundamental constraints such as protein folding physics introduce predictability into evolutionary processes [8].
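The topography measures listed above can be made concrete on a toy landscape. The following is a minimal sketch, assuming a complete two-locus biallelic landscape with genotypes written as tuples of 0/1; the fitness values and all function names are hypothetical and chosen only so that each measure gives a non-trivial answer.

```python
from itertools import permutations

def neighbors(g):
    """All genotypes exactly one mutation away from genotype g."""
    return [g[:i] + (1 - g[i],) + g[i + 1:] for i in range(len(g))]

def local_peaks(fitness):
    """Genotypes that are fitter than every one-mutation neighbor."""
    return [g for g, w in fitness.items()
            if all(w > fitness[n] for n in neighbors(g))]

def accessible_fraction(fitness, start, end):
    """Fraction of direct mutational paths along which fitness rises at every step."""
    sites = [i for i in range(len(start)) if start[i] != end[i]]
    total = accessible = 0
    for order in permutations(sites):
        g, monotonic = start, True
        for i in order:
            nxt = g[:i] + (end[i],) + g[i + 1:]
            if fitness[nxt] <= fitness[g]:
                monotonic = False
                break
            g = nxt
        total += 1
        accessible += monotonic
    return accessible / total

def sites_with_sign_epistasis(fitness):
    """Sites whose 0->1 mutation is beneficial in some backgrounds, deleterious in others."""
    n = len(next(iter(fitness)))
    flagged = []
    for i in range(n):
        effects = [fitness[g[:i] + (1,) + g[i + 1:]] - fitness[g]
                   for g in fitness if g[i] == 0]
        if min(effects) < 0 < max(effects):
            flagged.append(i)
    return flagged

# Hypothetical fitness values for illustration only.
toy = {(0, 0): 1.0, (1, 0): 0.8, (0, 1): 1.2, (1, 1): 1.5}
print(local_peaks(toy))                          # single peak at (1, 1)
print(accessible_fraction(toy, (0, 0), (1, 1)))  # one of the two paths is monotonic
print(sites_with_sign_epistasis(toy))            # site 0 changes sign across backgrounds
```

In this toy case the mutation at site 0 is deleterious on the ancestral background but beneficial once site 1 has mutated, which closes one of the two paths to the peak.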

Table 1: Quantitative Measures of Fitness Landscape Topography

| Measure | Definition | Biological Significance | Experimental Range |
|---|---|---|---|
| Mean Path Divergence | Degree to which starting/ending points determine evolutionary paths | Predictability of evolution; Higher in smooth landscapes | Significantly greater in biological vs. random landscapes [8] |
| Deviation from Additivity | Sum of squared differences between actual fitness and additive model | Landscape roughness; Epistasis | Model-derived and experimental landscapes significantly smoother than random [8] |
| Peak Density | Number of local fitness optima | Constraint on adaptive potential; Evolutionary dead-ends | Substantial deficit in model-derived landscapes compared to random [8] |
| Sign Epistasis Incidence | Frequency of fitness effect reversals between genetic backgrounds | Ruggedness; Contingency in evolutionary paths | Widespread in empirical landscapes but less than in random models [8] |
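As one illustration of the "Deviation from Additivity" row, the sketch below fits an additive model to a complete biallelic landscape and sums the squared residuals. It assumes every genotype has been measured (a full factorial design), in which case the least-squares additive fit reduces to per-site mean differences. The fitting procedure, function name, and fitness values are assumptions for illustration; the cited study may define its additive model differently.

```python
def deviation_from_additivity(fitness):
    """Sum of squared residuals from the least-squares additive model.

    Assumes a complete landscape: every 0/1 genotype of length n is present.
    For such balanced data the additive effect of a site is simply the mean
    fitness with the mutation minus the mean fitness without it.
    """
    genotypes = list(fitness)
    n = len(genotypes[0])
    grand_mean = sum(fitness.values()) / len(genotypes)
    effects = []
    for i in range(n):
        with_mut = [fitness[g] for g in genotypes if g[i] == 1]
        without = [fitness[g] for g in genotypes if g[i] == 0]
        effects.append(sum(with_mut) / len(with_mut) - sum(without) / len(without))
    total = 0.0
    for g in genotypes:
        # Centered coding (g_i - 0.5) keeps the intercept equal to the grand mean.
        predicted = grand_mean + sum(e * (g[i] - 0.5) for i, e in enumerate(effects))
        total += (fitness[g] - predicted) ** 2
    return total

# Hypothetical landscapes for illustration only.
additive = {(0, 0): 1.0, (1, 0): 1.1, (0, 1): 1.3, (1, 1): 1.4}
epistatic = {(0, 0): 1.0, (1, 0): 0.8, (0, 1): 1.2, (1, 1): 1.5}
print(deviation_from_additivity(additive))   # close to zero: effects simply add up
print(deviation_from_additivity(epistatic))  # positive: mutations interact
```

A larger value indicates a rougher landscape; comparing it against the same statistic on shuffled fitness values would be one way to build the kind of random null model mentioned in the table.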

Comparative Experimental Systems and Methodologies

Model Systems in Experimental Evolution

Different model systems offer distinct advantages for testing specific evolutionary principles, varying in generation time, scalability, and relevance to applied contexts:

- Proteins: Ideal for mapping genotype-phenotype relationships and studying the molecular basis of adaptation [7] [10]

- RNA Molecules: Enable comprehensive exploration of sequence space due to shorter functional lengths [9]

- Pathogenic Fungi: Model systems for studying drug resistance evolution with clinical relevance [11]

- Bacteria: Workhorses for studying microbial adaptation with excellent scalability [12]

The choice of experimental system involves important tradeoffs between realism, precision, and generality—concepts formalized in Levins' triangle [1]. No single system optimizes all three dimensions, requiring researchers to select models based on their specific research questions.

Table 2: Comparison of Experimental Evolution Model Systems

| System | Generation Time | Scalability | Key Advantages | Limitations |
|---|---|---|---|---|
| Proteins (PACE) | Continuous (hours) | High | Direct genotype-phenotype mapping; Controlled mutation rates [7] | Requires specialized continuous evolution equipment |
| RNA Molecules | Minutes-hours | Very high | Comprehensive sequence space mapping; Relevance to early evolution [9] | Limited biological complexity; Less clinical relevance |
| Pathogenic Fungi | Hours-days | Moderate | Clinical relevance; Eukaryotic biology; Sexual reproduction studies [11] | Slower evolution; More complex genetics |
| Bacteria | 20-60 minutes | Very high | Established genetics; Ecological relevance; Antibiotic resistance models [12] | Prokaryote-specific biology; Limited translational relevance for eukaryotic traits |

Key Experimental Protocols and Workflows

Phage-Assisted Continuous Evolution (PACE)

PACE represents a technological breakthrough that enables hundreds of generations of protein evolution with minimal intervention [7]. The methodology links protein activity to phage propagation through a carefully engineered host system:

PACE Experimental Workflow: Connecting Protein Function to Phage Survival

The PACE system enables precise control of two key evolutionary parameters: mutation rate (through arabinose induction of the mutagenesis plasmid, MP) and selection stringency (through engineering of the accessory plasmid, AP). This controlled environment has demonstrated that specific combinations of these parameters reproducibly result in different evolutionary outcomes, including characteristic mutational signatures [7].

3Dseq: Protein Structure Determination Through Experimental Evolution

The 3Dseq methodology represents an innovative application of experimental evolution to structural biology, generating sufficient sequence variation to infer residue interactions and 3D structures [10]:

3Dseq: Experimental Evolution for Protein Structure Determination

This approach has successfully determined the structures of β-lactamase PSE1 and acetyltransferase AAC6, confirming that genetic encoding of structural constraints emerges rapidly during experimental evolution and can be extracted through evolutionary coupling analysis [10].

Antimicrobial Resistance Evolution Models

Experimental evolution provides critical insights into the dynamics of drug resistance development, a major challenge in clinical medicine [11] [12]. Standardized protocols include:

- Serial Batch Transfer: Populations are periodically transferred to fresh medium containing antimicrobial agents, allowing resistance evolution over dozens to hundreds of generations

- Continuous Culture: Maintains populations in steady-state growth with constant drug exposure

- Gradient Plates: Spatial concentration gradients of antimicrobials create heterogeneous selective environments

These approaches have revealed fundamental principles of resistance evolution, including fitness trade-offs (resistance often comes at a cost in drug-free environments) and collateral sensitivity (resistance to one drug can increase sensitivity to another) [11].
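When planning a serial batch transfer experiment, it helps to estimate how many generations each transfer cycle contributes. The sketch below assumes the population regrows to the same final density before every transfer, so the number of doublings per cycle equals the base-2 logarithm of the dilution factor. The function names and the example values are hypothetical.

```python
import math

def generations_per_transfer(dilution_factor):
    """Doublings needed to regrow after a 1:dilution_factor transfer.

    Assumes the culture returns to its pre-transfer density each cycle.
    """
    return math.log2(dilution_factor)

def transfers_needed(target_generations, dilution_factor):
    """Smallest number of transfer cycles that reaches the target generation count."""
    return math.ceil(target_generations / generations_per_transfer(dilution_factor))

# Example: a 1:100 daily dilution (hypothetical protocol).
print(round(generations_per_transfer(100), 2))  # about 6.64 generations per cycle
print(transfers_needed(500, 100))               # cycles needed to pass 500 generations
```

Stronger dilutions yield more generations per cycle but also impose tighter bottlenecks, which changes the balance between selection and drift.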

The Scientist's Toolkit: Essential Research Reagents and Solutions

Successful experimental evolution requires carefully selected biological materials, selection systems, and analytical tools. The table below details key reagents and their applications across different experimental systems.

Table 3: Essential Research Reagents for Experimental Evolution

| Reagent Category | Specific Examples | Function/Purpose | Application Notes |
|---|---|---|---|
| Selection Systems | T7 RNA Polymerase promoter specificity [7], β-lactamase antibiotic resistance [10] | Links molecular function to selectable phenotype | Critical for PACE; Must have quantitative dynamic range |
| Mutagenesis Tools | Arabinose-inducible mutagenesis plasmid (MP) [7], Error-prone PCR | Controls mutation rates and types | Tunable mutation rates essential for parameter studies |
| Fluorescent Markers | GFP, RFP [11] | Enables tracking of population dynamics via flow cytometry | Minimal fitness impact crucial for long-term evolution |
| Selection Markers | Nourseothricin (NTC), Hygromycin B (HYG) resistance [11] | Distinguishes strains in competitive fitness assays | Multiple markers enable complex experimental designs |
| Host Systems | E. coli S109 [7], S. cerevisiae | Provides cellular machinery for gene expression | Genetically stable hosts prevent co-evolution confounding |
| Sequencing Tools | DNA barcodes [11], High-throughput sequencing | Tracks subpopulation frequencies and evolutionary dynamics | Essential for quantifying parallel evolution |

Data Presentation: Quantitative Comparisons of Evolutionary Outcomes

Determinants of Evolutionary Predictability

Experimental evolution has revealed key factors that influence the predictability of evolutionary outcomes:

- Landscape Smoothness: Significantly higher in biological landscapes than random models, enhancing predictability [8]

- Genetic Constraints: Physical constraints like protein folding create reproducible evolutionary patterns [8]

- Evolvability-Enhancing Mutations: Specific mutations that increase the potential for future adaptation exist in both protein and RNA landscapes [13]

The existence of evolvability-enhancing mutations (EE mutations) represents a particularly significant finding, as these mutations simultaneously increase fitness while expanding the potential for future adaptation. In experimental landscapes, these mutations comprise a small fraction of all mutations but significantly shift the distribution of fitness effects of subsequent mutations toward less deleterious variants [13].

Comparative Analysis of Experimental Evolution Applications

Table 4: Performance Comparison of Experimental Evolution Applications

| Application Domain | Key Measurable Outcomes | Typical Experimental Duration | Predictive Power Validation |
|---|---|---|---|
| Protein Engineering | Altered substrate specificity, thermostability, expression levels | Days-weeks (PACE) [7] | High: Direct functional selection enables precise engineering goals |
| Antimicrobial Resistance | MIC increases, resistance mutations, fitness cost quantification | Weeks-months [11] [12] | Moderate-High: Recapitulates clinical resistance mechanisms |
| Evolutionary Forecasting | Identification of high-frequency adaptive mutations, genotype-phenotype maps | Months-years [1] | Moderate: Improves short-term predictions but limited by contingency |
| Protein Structure Determination | Accuracy of residue contact predictions, structural similarity to known folds | Weeks (3Dseq) [10] | High: Evolutionary couplings accurately reflect structural constraints |

Experimental evolution has transformed from a niche methodology to an essential approach for validating evolutionary predictions across diverse biological systems. The core principles of natural selection, heritable variation, and fitness landscapes provide the conceptual framework, while technologies like PACE, 3Dseq, and high-throughput sequencing provide the methodological toolkit for rigorous experimental testing.

For researchers designing evolution studies, key considerations include:

- System Selection: Balance between scalability, relevance, and biological complexity

- Parameter Control: Precisely tune mutation rates and selection stringency

- Monitoring Resolution: Implement high-frequency sampling with deep sequencing

- Validation Approaches: Include competitive fitness assays and functional characterization
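For the competitive fitness assays mentioned above, one widely used convention expresses relative fitness as the ratio of the two competitors' realized growth rates over the assay. The sketch below assumes that convention and assumes cell counts (for example from marker-based plating or flow cytometry) at the start and end of a head-to-head competition. The function name and the counts are hypothetical, and other fitness definitions exist.

```python
import math

def relative_fitness(evolved_initial, evolved_final, ancestor_initial, ancestor_final):
    """Ratio of realized growth rates of the evolved and ancestral competitors.

    Each growth rate is the natural log of the fold increase in cell number
    over the competition. A value above 1 means the evolved strain outgrew
    the ancestor under the assay conditions.
    """
    growth_evolved = math.log(evolved_final / evolved_initial)
    growth_ancestor = math.log(ancestor_final / ancestor_initial)
    return growth_evolved / growth_ancestor

# Hypothetical counts: evolved strain grows 200-fold, ancestor 100-fold.
w = relative_fitness(1e5, 2e7, 1e5, 1e7)
print(round(w, 3))  # roughly 1.15
```

Running the assay in replicate and swapping the markers between competitors helps separate a genuine fitness difference from any cost of the marker itself.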

The integration of experimental evolution with computational predictions creates a powerful feedback loop for advancing evolutionary theory while addressing practical challenges in medicine, biotechnology, and fundamental biology. As these methods continue to mature, they offer increasingly sophisticated approaches to predicting and influencing evolutionary processes across biological scales from molecules to ecosystems.

Evolutionary biology has traditionally been viewed as a historical science, with predictions about future evolutionary trajectories long considered near impossible [1] [14]. However, the development of high-throughput sequencing and sophisticated data analysis technologies has challenged this perspective, enabling researchers to make increasingly accurate evolutionary forecasts [1] [14]. Experimental evolution, in which populations of organisms are maintained in controlled environments while changes in genotype and phenotype are monitored over time, has emerged as a powerful approach for testing evolutionary predictions with unprecedented precision [15]. This methodology has brought novel insights into evolutionary processes, allowing researchers to generate a "fossil record" for later study and to test the predictability of evolution across replicate populations [15]. The main goal of this review is to demonstrate how experimental evolution addresses fundamental questions about mutation rates, fitness effects, and pleiotropy, thereby establishing a framework for validating evolutionary predictions across research fields.

Investigating Mutation Rate Evolution

Fundamental Concepts and Measurement Approaches

Mutation rates represent the probability of changes in genome sequence between parent and offspring, resulting from unrepaired DNA damage, polymerase errors, intragenomic recombination events, transposable element movements, and other molecular processes [15]. A critical distinction exists between the mutation rate (the probability of sequence changes per replication) and the substitution rate (the rate at which changes accumulate in surviving lineages) [15]. Understanding this distinction is essential for interpreting evolutionary dynamics.

Experimental evolution employs mutation accumulation (MA) experiments to estimate intrinsic rates and effects of new mutations. In MA designs, populations are repeatedly forced through bottlenecks of one or a few randomly chosen individuals, minimizing selection and allowing most mutations (except lethal ones) to accumulate at rates close to their underlying mutation rates [15]. This approach has revealed that spontaneous mutation rates are generally very low, with single-base mutation rates in bacteria and single-celled eukaryotes ranging from 10⁻¹⁰ to 10⁻⁹ per base pair per replication [15].
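The arithmetic behind an MA estimate can be sketched as follows. The example assumes that every line was propagated for the same number of generations and that the same number of sites could be reliably called in each line; the function name and all input values are hypothetical and do not come from the cited studies.

```python
def mutation_rate_per_bp(total_mutations, n_lines, generations_per_line, callable_sites):
    """Point estimate of the per-site, per-generation mutation rate from an MA experiment.

    The denominator is the total number of site-generations observed:
    lines x generations per line x callable sites per genome.
    """
    site_generations = n_lines * generations_per_line * callable_sites
    return total_mutations / site_generations

# Hypothetical experiment: 50 lines, 1,000 generations each, 4 Mb of callable genome,
# 100 single-nucleotide mutations detected in total.
rate = mutation_rate_per_bp(100, 50, 1000, 4_000_000)
print(f"{rate:.1e}")  # on the order of 1e-10 per bp per generation
```

Because the mutation count is small relative to the number of site-generations, real analyses usually report a confidence interval alongside the point estimate.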

Table 1: Mutation Rates Across Organisms from MA Experiments

| Organism | Point Mutation Rate (per bp per replication unless noted) | Notes | Citation |
|---|---|---|---|
| E. coli (wild-type) | ~3.5 × 10⁻¹⁰ | | [16] |
| E. coli (MMR-) | ~2.4 × 10⁻⁸ | Mismatch repair deficient | [16] |
| S. cerevisiae | 10⁻¹⁰ to 10⁻⁹ range | Single-celled eukaryote | [15] |
| Multicellular eukaryotes | 0.05-1.0 per generation across protein-coding genome | Includes multiple germline divisions | [15] |

Experimental Evidence for Mutation Rate Evolution

Mutation rates are not static but can evolve rapidly in response to environmental and population-genetic challenges. A 2022 experimental evolution study with E. coli demonstrated that mutation rates could undergo substantial bidirectional shifts in as few as 59 generations in response to demographic and environmental changes [16]. The most extreme evolutionary changes occurred in populations cultivated with intermediate resource-replenishment cycles (L10 treatment), where wild-type clones showed 121.4-fold increases in single-nucleotide mutation rates and 77.3-fold increases in small-indel mutation rates compared to ancestral values [16].

The evolution of mutation rates follows predictable patterns based on population genetic theory. Under the drift-barrier hypothesis, populations with reduced effective population size (Nₑ) experience less efficient selection against mildly deleterious mutations, including those affecting replication fidelity [16]. This principle was demonstrated in experiments with different transfer schemes: populations experiencing stronger bottlenecks (S1: 1/10⁷ dilution) showed significant reductions in mutation rates for mismatch-repair-deficient backgrounds, supporting the idea that overly high mutation rates can be deleterious [16].

Table 2: Mutation Rate Changes Under Different Evolution Schemes

| Evolution Scheme | Dilution/Transfer | Key Findings | Mutation Rate Change | Citation |

|---|---|---|---|---|

| L10 | 1/10 every 10 days | Extreme increases in WT clones | 121.4-fold SNM increase | [16] |

| S1 (WT background) | 1/10⁷ daily | Significant increases despite bottleneck | 1.5-fold SNM increase | [16] |

| S1 (MMR- background) | 1/10⁷ daily | Reduction from ancestral hypermutator | 41.6% SNM decrease | [16] |

| Mutator S. cerevisiae | Various | 4/8 lines evolved reduced rates after ~6,700 generations | Decreased from hypermutator state | [17] |

Mutation Bias and Its Evolutionary Consequences

Beyond the overall mutation rate, the mutation spectrum - describing relative frequencies of different mutation types - significantly influences evolutionary outcomes. Wild-type E. coli exhibits a transition bias, with approximately 54% of single-nucleotide mutations being transitions (compared to the unbiased expectation of 33%) [18]. Experimental manipulation of DNA repair genes can create strains with mutation biases ranging from 97% transitions to 98% transversions [18].
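
The 33% unbiased expectation follows from counting: of the 12 possible single-nucleotide substitutions, 4 are transitions (purine to purine or pyrimidine to pyrimidine). A minimal sketch of that count, with hypothetical helper names, might look like this:

```python
from itertools import permutations

PURINES = {"A", "G"}
PYRIMIDINES = {"C", "T"}


def is_transition(ref: str, alt: str) -> bool:
    """True if ref -> alt stays within purines or within pyrimidines."""
    if ref == alt:
        raise ValueError("ref and alt must differ")
    pair = {ref, alt}
    return pair <= PURINES or pair <= PYRIMIDINES


def transition_fraction(mutations) -> float:
    """Fraction of (ref, alt) substitutions that are transitions."""
    mutations = list(mutations)
    if not mutations:
        raise ValueError("no mutations supplied")
    return sum(is_transition(r, a) for r, a in mutations) / len(mutations)


# All 12 ordered substitutions, each weighted equally (the "unbiased" case).
ALL_SUBSTITUTIONS = list(permutations("ACGT", 2))
unbiased = transition_fraction(ALL_SUBSTITUTIONS)

if __name__ == "__main__":
    print(f"Unbiased transition fraction: {unbiased:.3f}")
```

The same `transition_fraction` function could be applied to a list of observed substitutions from an MA experiment to compare against this baseline.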

Recent research demonstrates that shifting mutation bias alters the distribution of fitness effects (DFE). Strains opposing the ancestral bias (strong transversion bias) have DFEs with the highest proportion of beneficial mutations, while strains exacerbating the ancestral transition bias have up to 10-fold fewer beneficial mutations [18]. This occurs because populations gradually deplete beneficial mutations in well-sampled mutational classes, making previously underexplored mutation types more likely to be beneficial [18].

Figure 1: Impact of Mutation Bias Shifts on Adaptive Potential. Reversing ancestral mutation bias increases access to beneficial mutations in underexplored genetic space.

Quantifying Fitness Effects and Evolutionary Outcomes

Distribution of Fitness Effects (DFE)

The distribution of fitness effects describes the spectrum of fitness consequences of new mutations, representing a key parameter for predicting adaptation rates, trajectories, and population fates [18] [19]. The DFE determines the number and proportion of beneficial mutations available to a population and is influenced by genetic background, environment, effective population size, and prior adaptation history [18].

Experimental evolution enables direct measurement of DFEs through competition experiments. In one approach, researchers generated hybrid Bacillus subtilis libraries through transformation with DNA from donor species (B. vallismortis and B. spizizenii) and measured selection coefficients for each hybrid strain [19]. This method revealed that cross-species transfer has strong potential to enhance fitness, with some transfers showing significantly beneficial effects [19].

Experimental Protocols for Fitness Effect Measurement

Protocol 1: Competition Assays for Selection Coefficients

- Strain Preparation: Use recipient strain (e.g., B. subtilis Bs166) and a fluorescent reporter strain (e.g., Bs175 with GFP)

- Competition Setup: Compete each hybrid strain against the reporter strain in relevant growth media

- Sampling: Measure strain fractions at start (t₀) and end (t) using flow cytometry

- Calculation: Compute the selection coefficient as $s_{i,RS} = \frac{t_g}{t - t_0} \ln\left[\frac{x_i(t)/x_{RS}(t)}{x_i(t_0)/x_{RS}(t_0)}\right]$, where $t_g$ is the recipient generation time in the respective medium [19]
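
The calculation step of Protocol 1 can be sketched in a few lines. The function and argument names are hypothetical, and the example numbers are invented for illustration rather than taken from [19].

```python
import math


def selection_coefficient(x_i_t0: float, x_rs_t0: float,
                          x_i_t: float, x_rs_t: float,
                          t0: float, t: float, t_g: float) -> float:
    """Per-generation selection coefficient of strain i against the reference.

    Implements s = (t_g / (t - t0)) * ln[(x_i(t)/x_RS(t)) / (x_i(t0)/x_RS(t0))],
    where t_g is the recipient generation time in the same units as t and t0.
    """
    if t <= t0:
        raise ValueError("t must be later than t0")
    if min(x_i_t0, x_rs_t0, x_i_t, x_rs_t) <= 0:
        raise ValueError("strain fractions must be positive")
    ratio_start = x_i_t0 / x_rs_t0
    ratio_end = x_i_t / x_rs_t
    return (t_g / (t - t0)) * math.log(ratio_end / ratio_start)


if __name__ == "__main__":
    # Hypothetical assay: 50:50 at the start, 60:40 after 8 h,
    # with an assumed generation time of 0.5 h.
    s = selection_coefficient(0.5, 0.5, 0.6, 0.4, t0=0.0, t=8.0, t_g=0.5)
    print(f"s = {s:.4f} per generation")
```

A positive value indicates that the hybrid strain gained ground on the reference over the assay; an unchanged ratio gives exactly zero.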

Protocol 2: Mutation Accumulation (MA) Experiments

- Lineage Establishment: Start with clonal or inbred populations for homogeneous genetic background

- Population Bottlenecks: Repeatedly impose bottlenecks of one or few randomly chosen individuals

- Mutation Identification: Sequence genomes after known generations to count accumulated changes

- Rate Calculation: Estimate spontaneous mutation rate from number of changes across independent lineages [15]
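
The rate calculation in the last step of Protocol 2 amounts to dividing the total number of observed mutations by the total number of site-generations surveyed. The sketch below assumes every line was propagated for the same number of generations and has the same number of callable sites; the names and example counts are hypothetical.

```python
from typing import Sequence


def ma_mutation_rate(mutation_counts: Sequence[int],
                     generations: float,
                     callable_sites: float) -> float:
    """Estimate the mutation rate per site per generation from MA lines.

    mutation_counts: mutations observed in each independent line.
    generations:     generations each line was propagated (assumed equal).
    callable_sites:  sites per genome where a mutation could be detected.
    """
    if not mutation_counts:
        raise ValueError("at least one MA line is required")
    if generations <= 0 or callable_sites <= 0:
        raise ValueError("generations and callable_sites must be positive")
    site_generations = len(mutation_counts) * generations * callable_sites
    return sum(mutation_counts) / site_generations


if __name__ == "__main__":
    # Hypothetical data: four lines, 1,000 generations, 1 Mbp callable genome.
    rate = ma_mutation_rate([2, 3, 1, 2], generations=1_000, callable_sites=1_000_000)
    print(f"Estimated rate: {rate:.2e} per site per generation")
```

Pooling across lines in this way treats mutations as independent events; real analyses would usually also report a confidence interval, which this sketch omits.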

Factors Influencing Evolutionary Repeatability

Evolutionary repeatability - the independent evolution of similar genotypes or phenotypes - exists on a quantifiable continuum rather than as a binary trait [14]. Experimental evolution has revealed that both parallel evolution (similar evolution in related species) and convergent evolution (similar evolution in distantly related species) demonstrate evolutionary repeatability [14].

Several factors influence repeatability:

- Selection pressure: Strong directional selection increases repeatability of adaptive outcomes

- Genetic constraints: Highly conserved genes show higher parallelism across lineages

- Population size: Larger populations explore more mutational pathways

- Environmental consistency: Stable environments promote repeated evolutionary solutions

Studies with E. coli have revealed general rules of microbial adaptation, including: (i) faster fitness improvement in maladapted genotypes, (ii) large beneficial mutation supply leading to multiple beneficial mutations coexisting, (iii) concentration of large-effect mutations in few genes creating high evolutionary convergence, (iv) low occurrence rates for large-benefit mutations, and (v) selection for altered mutation rates during adaptation [1].

Pleiotropic Effects and Evolutionary Trade-Offs

Documenting Pleiotropic Costs of Adaptation

Pleiotropy occurs when a single mutation affects multiple phenotypes, frequently creating evolutionary trade-offs. Seminal experimental work by Lenski (1988) demonstrated this phenomenon in E. coli mutants resistant to virus T4 [20]. Each resistant mutant exhibited maladaptive pleiotropic effects, but with highly significant variation in competitive fitness among different resistant mutants [20].

The degree of fitness reduction was strongly associated with cross-resistance to virus T7 and the inferred position of the mutated gene in a complex metabolic pathway [20]. This variation in competitive fitness enables refinement of the resistant phenotype through selection among resistant genotypes, complementing refinement through epistatic modifiers of maladaptive pleiotropic effects [20].

Carbon Source Utilization and Evolutionary Bias

Recent experimental evolution reveals how pleiotropic constraints influence evolutionary bias. When a lactose-deficient E. coli strain was introduced into two different culture media (L: sodium acetate and lactose; G: glucose and lactose), populations exhibited biased evolution toward carbon sources providing higher fitness gains [4].

All L-populations underwent parallel evolution through reverse mutation to utilize lactose (lac+), gaining higher fitness than acetate utilization provided [4]. In contrast, all G-populations maintained glucose utilization rather than transitioning to lactose, as glucose provided higher fitness gains than lactose [4]. When lac+ and lac- strains were co-cultured in L medium, lac- individuals were completely eliminated, demonstrating competitive exclusion of low-fitness-gain directions [4].

Figure 2: Evolutionary Bias Toward Higher Fitness Gains. E. coli populations consistently evolve toward carbon sources providing superior fitness returns.

Research Reagent Solutions for Experimental Evolution

Table 3: Essential Research Reagents and Their Applications

| Reagent/Strain | Function | Experimental Application | Citation |

|---|---|---|---|

| E. coli K-12 GM4792 | Asexual, lactose-deficient model | Reverse mutation studies | [4] |

| Blue-white screening (X-gal/IPTG) | Phenotype detection | Identification of lac+ clones | [4] |

| Mismatch repair mutants (ΔmutS, ΔmutL, ΔmutH) | Alter mutation spectrum | Mutation bias studies | [18] |

| Bacillus subtilis Bs166 | Competent recipient | Cross-species transformation DFE | [19] |

| Donor genomic DNA (B. vallismortis, B. spizizenii) | Horizontal gene transfer source | Transformation fitness effects | [19] |

Experimental evolution has transformed evolutionary biology from a predominantly historical science to a predictive one. The methods and findings summarized here demonstrate how key questions regarding mutation rates, fitness effects, and pleiotropy can be addressed through controlled experimentation. Quantitative frameworks now enable prospective evolutionary forecasting across different timescales: trait-based models project phenotypic responses over ~5-20 generations; allele-based analyses model frequency dynamics over ~20-100 generations; and composite adaptation scores support projections over 100 or more generations under novel environments [21].

The validated insights from experimental evolution provide critical guidance for applied fields including medicine (battling antibiotic resistance and emerging pathogens), agriculture (developing climate-resilient crops), biotechnology (engineering stable production strains), and conservation biology (protecting endangered species) [1] [14]. By quantifying and propagating uncertainty, experimental evolution establishes a rigorous foundation for predictive evolutionary practice, shifting the field from descriptive synthesis toward forecasting evolutionary outcomes with explicit confidence intervals [21].

The quest to understand and predict evolutionary processes is a fundamental pursuit in biology, with critical applications in medicine, agriculture, and conservation. Model systems, ranging from microbial populations to cultured cell lines, provide indispensable tools for studying evolutionary dynamics in controlled settings. These systems enable researchers to test hypotheses about evolutionary trajectories that would be impossible to investigate in natural populations due to temporal and spatial constraints. Within this context, Long-Term Evolution Experiments (LTEE) represent a powerful approach for directly observing evolutionary processes in real-time, bridging the gap between theoretical predictions and empirical validation [22]. The utility of these model systems lies in their ability to generate reproducible data, enable high-throughput experimentation, and provide insights into the complex interplay between evolutionary processes and outcomes.

For evolutionary predictions to be scientifically valid, they must be testable. Model systems offer this testing ground, allowing researchers to examine the core principles of evolutionary theory, including the roles of natural selection, genetic drift, mutation, and environmental factors. As stated by researchers in the field, "Evolution can be predicted in the short term from a knowledge of selection and inheritance. However, in the long term, evolution is unpredictable because environments, which determine the directions and magnitudes of selection coefficients, fluctuate unpredictably" [22]. This review systematically compares the utility of different model systems—microbes, cell lines, and LTEEs—in validating evolutionary predictions, providing researchers with a framework for selecting appropriate experimental approaches for specific evolutionary questions.

Comparative Analysis of Model Systems

Key Characteristics and Applications

Table 1: Comparative overview of model systems in evolutionary biology

| System Feature | Microbial Models | Cell Line Models | Long-Term Evolution Experiments (LTEE) |

|---|---|---|---|

| Generational Time | Very short (hours) | Short (days) | Extended (years to decades) |

| Environmental Control | High | Very high | Moderate to high |

| Phenotypic Complexity | Low | Moderate (2D) to High (3D) | Low to moderate |

| Genetic Tractability | High | Moderate to high | High |

| Evolutionary Timescale | Short-term microevolution | Short-term microevolution | Long-term macroevolutionary patterns |

| Key Applications | Experimental evolution, fitness measurements | Disease mechanisms, drug screening | Evolutionary trajectories, innovation events |

| Limitations | Ecological simplicity | Reduced physiological context | Resource-intensive |

Quantitative Comparison of Experimental Outputs

Table 2: Experimental data output and reproducibility across model systems

| Parameter | Microbial Models | 2D Cell Cultures | 3D Cell Cultures | LTEE |

|---|---|---|---|---|

| Time for Culture Formation | Minutes to hours | Minutes to hours | Hours to days | Years to decades |

| Reproducibility | High | High | Moderate | High |

| In Vivo Imitation | Limited | Does not mimic natural tissue structure | Closely mimics natural tissue structure | Natural evolution in controlled setting |

| Cost Efficiency | High | High | Moderate | Resource-intensive |

| Adaptive Evolution Tracking | Hundreds to thousands of generations | Limited | Limited | Tens of thousands of generations |

| Examples of Key Discoveries | Evolutionary bias toward higher fitness gains [4] | Drug response mechanisms | Tumor biology and drug penetration | Citrate utilization innovation [23] |

Microbial Model Systems in Evolutionary Research

Experimental Protocols and Methodologies

Microbial model systems, particularly Escherichia coli, have provided fundamental insights into evolutionary processes due to their short generation times and genetic tractability. A standard protocol for experimental evolution with E. coli involves several key steps:

Strain Selection and Preparation: Researchers often begin with defined genetic variants, such as the E. coli K-12 GM4792 strain which contains a 212-bp deletion in the lactose operon, rendering it unable to utilize lactose (lac-) [4]. This provides a clear phenotypic marker for evolutionary changes.

Experimental Evolution Setup: Multiple replicate populations are established in controlled environments. For example, in studies of evolutionary bias, lac- E. coli are introduced into different culture media: one containing sodium acetate and lactose (L medium), and another containing glucose and lactose (G medium) [4].

Population Transfer and Maintenance: Daily transfers of 1% of each population into fresh medium maintain constant growth conditions and allow for continuous adaptation. This protocol is similar to the LTEE approach where populations are transferred daily into fresh glucose medium [23].

Monitoring and Analysis: Regular sampling every 5 days with blue-white screening allows researchers to detect the emergence of lac+ mutants capable of utilizing lactose. Population samples are preserved at -80°C at regular intervals, creating a "frozen fossil record" for future analysis [4].

This methodology enables direct observation of evolutionary dynamics, including the trajectory of adaptive mutations and the factors influencing evolutionary repeatability. The high replication possible with microbial systems allows researchers to distinguish between deterministic and stochastic evolutionary processes.

Research Reagent Solutions for Microbial Evolution Studies

Table 3: Essential research reagents for microbial evolution experiments

| Reagent/Equipment | Function | Example Application |

|---|---|---|

| E. coli K-12 GM4792 | Model organism with defined genetic markers | Studying re-evolution of lactose utilization [4] |

| M9 Minimal Medium | Defined growth medium with specific carbon sources | Controlling nutritional environment for selection experiments [4] |

| IPTG and X-gal | Detection reagents for lac operon expression | Blue-white screening for identifying lac+ mutants [4] |

| Glycerol Storage Solution | Cryopreservation of bacterial samples | Creating frozen fossil record for longitudinal studies [23] |

| Biosafety Cabinet | Maintaining sterile working environment | Preventing contamination during daily transfers [24] |

Cell Line Model Systems: 2D vs 3D Cultures

Experimental Approaches in Cell Culture Evolution

Cell culture models provide a bridge between simple microbial systems and complex multicellular organisms. The methodology for utilizing cell lines in evolutionary studies varies significantly between traditional 2D and more advanced 3D systems:

Two-Dimensional (2D) Cell Culture Protocol:

- Cell Seeding: Cells are seeded as a monolayer attached to the plastic surface of culture flasks or flat Petri dishes [25].

- Maintenance: Cultures are maintained in controlled incubators (typically 37°C, 5% CO2) with regular medium changes [24].

- Passaging: Cells are regularly passaged using trypsin-EDTA to detach them from the surface when they reach confluence.

- Experimental Manipulation: Introduction of selective pressures (e.g., chemotherapeutic agents for cancer cells) to study adaptive evolution.

Three-Dimensional (3D) Cell Culture Protocol:

- Matrix Selection: Choice of appropriate scaffold material such as Matrigel, collagen, or synthetic polymers [25].

- Cell Embedding: Single cells are suspended in the matrix material and allowed to form spheroids or organoids.

- Culture Conditions: 3D cultures are maintained with special attention to nutrient and oxygen diffusion limitations that more closely mimic in vivo conditions [25].

- Analysis: Monitoring of spheroid growth, morphology, and functional characteristics through microscopy and molecular assays.

The transition from 2D to 3D culture systems represents a significant advancement in cell culture technology, better replicating the architectural and functional complexity of living tissues. As noted in comparative analyses, "2D cultured cells do not mimic the natural structures of tissues or tumours... In this culture method, cell-cell and cell-extracellular environment interactions are not represented as they would be in the tumour mass" [25].

Research Reagent Solutions for Cell Culture Studies

Table 4: Essential materials for cell culture-based evolution research

| Reagent/Equipment | Function | Application Context |

|---|---|---|

| Primary Cell Lines | Directly isolated from donor tissue | Maintaining genetic features of original tissue [25] |

| Established Cell Lines | Commercially available characterized models | High reproducibility across laboratories [24] |

| Matrigel | Extracellular matrix substitute | 3D culture formation and tissue modeling [25] |

| Class II Biosafety Cabinet | Sterile work environment | Aseptic cell culture maintenance [24] |

| Humid CO2 Incubator | Physiological growth conditions | Maintaining optimal pH and temperature [24] |

Long-Term Evolution Experiments (LTEE)

The LTEE Methodology and Experimental Design

The Long-Term Evolution Experiment (LTEE), initiated by Richard Lenski in 1988, represents one of the most comprehensive efforts to study evolutionary dynamics in real-time. The core methodology involves:

Founding Populations: Twelve initially identical populations of E. coli B were established from a single ancestral clone [23].

Daily Transfer Protocol: Each day, 1% of each population is transferred to fresh Davis Mingioli medium containing glucose as the limiting resource (25 μg/mL). The remaining 99% of the population is effectively eliminated, creating a repeated population bottleneck [22].

Sample Preservation: Every 500 generations (approximately 75 days), samples from each population are frozen at -80°C with glycerol as a cryoprotectant. This creates a complete "frozen fossil record" that enables researchers to resurrect and study ancestors and compare them to their descendants [23].

Monitoring and Analysis: Regular measurements of fitness, mutation rates, and phenotypic characteristics are conducted. Genome sequencing of populations at various time points provides insight into genetic changes underlying adaptation.

This simple but powerful experimental design has been maintained for over 75,000 generations (as of 2025), providing unprecedented insight into long-term evolutionary dynamics [22]. The LTEE methodology has proven so robust that the experiment was successfully transferred from Michigan State University to the University of Texas at Austin in 2022, and then back to MSU in 2025, without disruption to the evolving populations [23].
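
The link between the 1% daily transfer and the "500 generations in approximately 75 days" figure can be checked with a short calculation. The sketch assumes that each population regrows to its pre-dilution density before the next transfer, so a 100-fold dilution corresponds to log2(100) doublings per day; the function names are hypothetical.

```python
import math


def generations_per_transfer(dilution_factor: float) -> float:
    """Doublings needed to regrow after a 1-in-`dilution_factor` dilution."""
    if dilution_factor <= 1:
        raise ValueError("dilution_factor must exceed 1")
    return math.log2(dilution_factor)


def days_for_generations(target_generations: float,
                         dilution_factor: float = 100,
                         transfers_per_day: float = 1) -> float:
    """Days of serial transfer needed to reach `target_generations`."""
    per_day = generations_per_transfer(dilution_factor) * transfers_per_day
    return target_generations / per_day


if __name__ == "__main__":
    print(f"Generations per daily transfer: {generations_per_transfer(100):.2f}")
    print(f"Days to reach 500 generations: {days_for_generations(500):.1f}")
```

With a 100-fold dilution this gives about 6.6 generations per day, which is consistent with the 75-day freezing interval described above.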

Key Workflow and Signaling Pathways in LTEE

Figure: Evolutionary workflow in the LTEE.

Research Reagent Solutions for LTEE-Style Experiments

Table 5: Key materials for long-term evolution studies

| Reagent/Equipment | Function | LTEE Application |

|---|---|---|

| E. coli B Strain | Model organism with defined genetics | Founding ancestor for all LTEE populations [23] |

| Davis Mingioli Medium | Defined minimal growth medium | Controlled nutritional environment with glucose limitation [23] |

| Glycerol (Cryoprotectant) | Preservation of viable samples | Creating frozen fossil record at -80°C [23] |

| Glucose | Primary carbon source and limiting nutrient | Selective pressure for improved metabolic efficiency [23] |

| Citrate | Alternative carbon source | Evolutionary innovation after 31,000 generations [23] |

Case Studies: Validating Evolutionary Predictions

Predicting Evolutionary Innovations

The LTEE has provided remarkable insights into the predictability of evolutionary innovations. A landmark case occurred after approximately 31,000 generations, when one of the twelve E. coli populations evolved the ability to utilize citrate as an energy source under aerobic conditions [23]. This was a significant innovation because E. coli cannot normally consume citrate in the presence of oxygen. The emergence of this trait demonstrated that:

- Historical Contingency: The citrate-using (Cit+) variant evolved in only one population, suggesting a role for rare mutations or specific historical sequences of mutations.

- Parallel Evolution: Despite this unique innovation, many other traits evolved in parallel across the populations, including increases in cell size and improvements in glucose transport efficiency.

- Fitness Trade-offs: The Cit+ variants initially struggled to metabolize citrate efficiently, as their metabolic networks were optimized for glucose consumption.

This case study highlights both the predictable and unpredictable elements of evolutionary trajectories. While general features of adaptation (e.g., fitness increases) were highly repeatable across populations, specific major innovations were rare and contingent on prior evolutionary history.

Experimental Evidence of Evolutionary Bias

Recent experimental evolution studies with E. coli have directly tested hypotheses about evolutionary bias. When lac- E. coli were introduced into media containing both lactose and alternative carbon sources, populations consistently evolved toward utilizing the carbon source that provided higher fitness gains [4]. Specifically:

- In L medium (lactose + sodium acetate), all populations evolved to utilize lactose through reverse mutations (lac+), as this provided higher fitness than utilizing acetate.

- In G medium (lactose + glucose), no populations switched to lactose utilization, as glucose provided higher fitness than lactose.

This research demonstrates that "species tend to evolve with a bias towards directions that offer higher fitness gains, partly because high-fitness-gain directions competitively exclude low-fitness-gain directions" [4]. These findings support predictive models of evolution based on relative fitness benefits across different selective environments.

Figure: Experimental design for evolutionary bias studies.

The comparative analysis of microbial models, cell lines, and long-term evolution experiments reveals a complementary relationship among these systems for validating evolutionary predictions. Microbial systems offer unparalleled generational depth and replication, cell culture models provide insights into multicellular complexity, and LTEEs bridge microevolutionary and macroevolutionary timescales. Together, these approaches demonstrate that evolutionary processes contain both predictable, deterministic elements and unpredictable, contingent factors.

The future of evolutionary prediction lies in integrating data from these diverse model systems with advanced computational approaches and theoretical frameworks. As noted by researchers, "Evolutionary predictions are increasingly being developed and used in medicine, agriculture, biotechnology and conservation biology" [1]. The continued development and refinement of these model systems will enhance our ability to forecast evolutionary trajectories, potentially leading to applications in antibiotic resistance management, cancer treatment optimization, and species conservation efforts. Ultimately, model systems provide the essential empirical foundation for testing, refining, and validating evolutionary predictions across biological scales and timeframes.

Forecasting evolution, once considered a scientific impossibility, is now an emerging reality with profound implications for medicine, biotechnology, and conservation biology [1]. The predictive scope in evolutionary biology is fundamentally divided into two distinct temporal domains: short-term and long-term forecasts. This division is not merely a matter of timescale but encompasses fundamental differences in predictability, underlying mechanisms, and appropriate methodological approaches. Short-term predictions often leverage known, high-frequency data and deterministic selective pressures, while long-term forecasts must contend with increasing uncertainty, the emergence of novel mutations, and complex eco-evolutionary feedback loops [1] [5]. This guide objectively compares these two forecasting paradigms, framing the analysis within the critical context of experimental validation, a cornerstone for establishing predictive credibility in evolutionary science [6].

Core Concepts and Theoretical Frameworks

The predictability of evolution is governed by a core tension between stochastic forces, such as random mutation and genetic drift, and deterministic forces, primarily natural selection [5]. In a perfectly predictable system, evolution would consistently arrive at the same phenotypic or genotypic endpoint when populations experience identical environmental challenges. While perfect predictability is unattainable, varying degrees of evolutionary convergence indicate a level of determinism that can be harnessed for forecasting [5].
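
One way to make this tension concrete is a Wright-Fisher style simulation, in which each generation applies a deterministic selection step followed by random sampling (drift). The sketch below is a generic textbook model rather than a method from the cited studies; the haploid selection scheme, the parameter values, and the function names are all assumptions chosen for illustration.

```python
import random


def wright_fisher(pop_size: int, s: float, p0: float,
                  generations: int, rng: random.Random) -> float:
    """Return the final frequency of a variant with selective advantage s."""
    p = p0
    for _ in range(generations):
        # Deterministic force: selection shifts the expected frequency.
        p_selected = p * (1 + s) / (1 + p * s)
        # Stochastic force: drift, modelled as binomial sampling of pop_size offspring.
        count = sum(rng.random() < p_selected for _ in range(pop_size))
        p = count / pop_size
        if p in (0.0, 1.0):  # variant lost or fixed
            break
    return p


def fixation_fraction(pop_size: int, s: float, p0: float, generations: int,
                      replicates: int, seed: int = 0) -> float:
    """Fraction of replicate populations in which the variant fixed."""
    rng = random.Random(seed)
    finals = [wright_fisher(pop_size, s, p0, generations, rng)
              for _ in range(replicates)]
    return sum(f == 1.0 for f in finals) / replicates


if __name__ == "__main__":
    for s in (0.0, 0.1):
        frac = fixation_fraction(pop_size=200, s=s, p0=0.05,
                                 generations=200, replicates=20)
        print(f"s = {s}: variant fixed in {frac:.0%} of replicates")
```

Comparing the neutral case with the selected case across replicates gives a rough feel for how selection strength changes the repeatability of outcomes.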

The conceptual framework for understanding predictive scope can be visualized as a continuum from short-term, high-precision forecasts to long-term, big-picture projections. The diagram below illustrates the core factors that define this scope.

Direct Comparison of Forecasting Approaches

Short-term and long-term evolutionary forecasting differ across multiple dimensions, including their fundamental timeframes, primary goals, and the nature of the predictions they generate. These differences dictate their respective applications in research and industry.

Table 1: Defining the Scope of Short-Term vs. Long-Term Evolutionary Forecasts

| Aspect | Short-Term Forecasting | Long-Term Forecasting |

|---|---|---|

| Time Scale | Hours to ~100 generations [26] [1] | 100 to billions of generations [26] [21] |

| Primary Goal | Predict precise genotypic/phenotypic changes; optimize immediate outcomes [1] | Identify major adaptive trends and potential for innovation [26] [1] |

| Typical Prediction | "Which influenza strain will dominate next season?" [1] | "Will fitness continue to improve indefinitely in a constant environment?" [26] |

| Level of Detail | High resolution (e.g., specific mutations, allele frequencies) [1] | Lower resolution (e.g., composite adaptation scores, trait means) [21] |

| Key Application | Guiding vaccine design, anticipating drug resistance [1] | Informing conservation strategies, planning for climate change [21] |

The biological basis for this divergence in scope is rooted in the dynamics of adaptation. The following diagram outlines the generalized workflow for generating and validating an evolutionary forecast, highlighting how the process differs for short versus long-term horizons.

Quantitative Dynamics of Adaptation

Experimental evolution provides the critical data needed to quantify and compare the dynamics of adaptation over different timescales. Seminal work, such as the Long-Term Evolution Experiment (LTEE) with E. coli, has been instrumental in revealing these dynamics [26].

Table 2: Quantitative Dynamics of Adaptation from the E. coli LTEE

| Metric | Short-Term Dynamics (0-2,000 generations) | Long-Term Dynamics (Up to 60,000 generations) |

|---|---|---|

| Fitness Gain | Rapid increase of ~30% in relative fitness [26] | Continued increase, reaching ~70% faster growth than ancestor, but rate of improvement slows [26] |

| Pattern of Change | Step-like dynamics dominated by selective sweeps of large-effect mutations [26] | Better fit by a power-law model with no upper bound, predicting continued slow improvement [26] |

| Genetic Basis | Beneficial mutations in a few key genes; high convergence at gene level [1] | Accumulation of many mutations; potential for emergence of new ecotypes and complex traits [26] |

| Predictive Model | Clonal interference model accounting for competition between beneficial mutations [26] | Power-law model (no asymptote) outperforms hyperbolic model (with asymptote) for long-term prediction [26] |
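
The contrast between the two long-term models in Table 2 can be illustrated with commonly used functional forms: a hyperbolic curve that saturates and a power law that does not. The exact equations are not given in the text, so the forms below are an assumption, and the parameter values are arbitrary illustrations rather than fitted estimates.

```python
def hyperbolic_fitness(t: float, a: float, b: float) -> float:
    """Hyperbolic model: w = 1 + a*t / (t + b); approaches the asymptote 1 + a."""
    return 1 + a * t / (t + b)


def power_law_fitness(t: float, a: float, b: float) -> float:
    """Power-law model: w = (b*t + 1)**a; keeps increasing without bound."""
    return (b * t + 1) ** a


if __name__ == "__main__":
    # Illustrative parameters only (not estimates from the LTEE).
    hyp = dict(a=0.7, b=5_000.0)
    pw = dict(a=0.1, b=0.005)
    for t in (0, 2_000, 60_000, 1_000_000):
        print(f"t = {t:>9,}: hyperbolic = {hyperbolic_fitness(t, **hyp):.3f}, "
              f"power law = {power_law_fitness(t, **pw):.3f}")
```

Both curves start at a relative fitness of 1 and decelerate, but only the hyperbolic form imposes a ceiling, which is why the two models diverge most clearly at long horizons.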

Methodologies and Experimental Validation

Robust evolutionary forecasting relies on a suite of methodological approaches, each with its own strengths, data requirements, and appropriate applications for short-term versus long-term predictions.

Table 3: Forecasting Methods and Experimental Validation Protocols

| Methodology | Best for Scope | Core Protocol | Key Experimental Validation |

|---|---|---|---|

| Trait-Based Models | Short-Term (~5-20 generations) [21] | Use multivariate quantitative-genetic equations (e.g., breeder's equation) to project phenotypic change [1] [21] | Reciprocal Transplants: Measure performance of evolved populations in natural or semi-natural environments to validate predicted fitness outcomes [21]. |

| Allele-Frequency Models | Short-to-Medium Term (~20-100 generations) [21] | Model frequency dynamics of identified loci under selection strong enough to outpace drift [21] | Laboratory Evolution: Replay evolution from frozen fossil samples under controlled lab conditions; sequence to validate predicted allele frequency changes [26] [1]. |

| Genomic Vulnerability/Adaptation Scores | Long-Term (100+ generations) [21] | Aggregate many small-effect loci to project adaptation under novel environments [21] | Historical Series Analysis: Use archived samples (e.g., herbarium specimens, frozen stocks) to compare long-term genetic changes against past forecasts [21]. |
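
For the trait-based row of Table 3, the univariate breeder's equation, R = h²S, gives the simplest version of the projection. The sketch below assumes that heritability and the selection differential stay constant over the forecast window, which is a simplification that becomes less tenable as the horizon lengthens; the names and numbers are hypothetical.

```python
from typing import List


def breeders_response(h2: float, selection_differential: float) -> float:
    """Per-generation response to selection, R = h^2 * S."""
    if not 0.0 <= h2 <= 1.0:
        raise ValueError("heritability must lie between 0 and 1")
    return h2 * selection_differential


def project_trait_mean(z0: float, h2: float, selection_differential: float,
                       generations: int) -> List[float]:
    """Trait mean at generations 0..n, assuming constant h^2 and S."""
    response = breeders_response(h2, selection_differential)
    return [z0 + response * g for g in range(generations + 1)]


if __name__ == "__main__":
    # Hypothetical trait: mean 10.0, h^2 = 0.5, S = 2.0 trait units per generation.
    print(project_trait_mean(10.0, 0.5, 2.0, generations=5))
```

A multivariate treatment would replace the scalar h² with a genetic covariance matrix, but the bookkeeping follows the same pattern.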

A critical component of forecasting is experimental validation, which acts as a reality check for computational models [6]. The gold-standard protocol is the laboratory evolution experiment, as exemplified by the LTEE [26]. The core workflow is as follows:

- Founding & Propagation: A genetically defined ancestor is used to found replicate populations in a controlled environment (e.g., flasks with minimal glucose medium). Populations are propagated via serial dilution for hundreds to thousands of generations [26].

- Archiving: Samples from each population are frozen at regular intervals (e.g., every 500 generations), creating a frozen "fossil record" [26].

- Competition Assays: To measure fitness, evolved bacteria from a specific generation are competed against a differentially marked ancestral strain in the same environment. Relative fitness is calculated as the ratio of their realized growth rates [26].

- Genomic Analysis: Whole-genome sequencing of evolved clones identifies the genetic basis of adaptation and tests predictions about which genes are mutational targets [26] [1].
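
The fitness calculation in the competition-assay step can be sketched as follows. The code assumes that each competitor's realized growth rate is the natural log of its fold increase over the assay, a common convention for this kind of experiment; the function names and cell counts are hypothetical.

```python
import math


def realized_growth_rate(initial_count: float, final_count: float) -> float:
    """Realized growth rate over one assay: ln(final / initial)."""
    if initial_count <= 0 or final_count <= 0:
        raise ValueError("counts must be positive")
    return math.log(final_count / initial_count)


def relative_fitness(evolved_initial: float, evolved_final: float,
                     ancestor_initial: float, ancestor_final: float) -> float:
    """Ratio of the evolved strain's realized growth rate to the ancestor's."""
    ancestor_rate = realized_growth_rate(ancestor_initial, ancestor_final)
    if ancestor_rate == 0:
        raise ValueError("ancestor showed no net growth; ratio is undefined")
    return realized_growth_rate(evolved_initial, evolved_final) / ancestor_rate


if __name__ == "__main__":
    # Hypothetical colony counts (scaled to cells/mL) from marker-based plating.
    w = relative_fitness(5e5, 6e7, 5e5, 4e7)
    print(f"Relative fitness of evolved strain: {w:.3f}")
```

A value above 1 means the evolved competitor grew faster than the marked ancestor under the assay conditions; a value of exactly 1 indicates no difference.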

The Scientist's Toolkit: Key Research Reagents

The following reagents and resources are fundamental for conducting experiments aimed at testing and validating evolutionary forecasts.

Table 4: Essential Research Reagents for Experimental Evolution

| Reagent / Resource | Function in Forecasting Research |

|---|---|

| Genetically Tractable Model Organisms (e.g., E. coli, yeast, Drosophila) | Enable controlled, replicated evolution experiments with rapid generations, allowing real-time observation of evolutionary dynamics [26] [27]. |

| Frozen Fossil Record (Archived population samples) | Allows researchers to resurrect ancestral and intermediate genotypes from different time points to measure trajectories of change and directly compete lineages from different eras [26]. |

| Neutral Genetic Markers (e.g., Ara+, Ara-) | Provide a phenotypic means to distinguish competing strains during fitness assays without affecting fitness themselves, enabling precise measurement of relative fitness [26]. |

| Defined Growth Media (e.g., DM25 glucose-limited medium) | Creates a simple, reproducible selective environment that minimizes complex ecological feedbacks, enhancing the transitivity of fitness measurements and simplifying modeling [26]. |

| Public Data Repositories (e.g., Cancer Genome Atlas, MorphoBank, PubChem) | Provide essential comparative and experimental data for validating forecasts when new experiments are too time-consuming or ethically challenging [6]. |

The dichotomy between short-term and long-term evolutionary forecasting is a fundamental framework that dictates methodological choice, defines the limits of predictability, and sets expectations for validation. Short-term forecasts achieve high precision by leveraging deterministic selection on standing variation, while long-term forecasts embrace a probabilistic view of trends, acknowledging the role of stochasticity and evolutionary innovation [26] [1] [5]. The unifying thread across all predictive scopes is the indispensable role of experimental evolution in providing the rigorous, empirical validation needed to transform evolutionary biology from a historical science into a predictive one [26] [6]. As methods from genomic selection to machine learning continue to advance, the integration of sophisticated models with robust experimental testing will be the cornerstone of reliable evolutionary forecasting in both basic and applied research.

A Methodological Toolkit: Designing Evolution Experiments for Biomedical Insights

In experimental evolution, researchers observe evolutionary change in real time to test evolutionary hypotheses and understand adaptive processes. The choice of culturing system (chemostat, turbidostat, or serial batch culture) profoundly shapes the selective pressures on microbial populations, thereby influencing the trajectory and outcome of evolution. These systems differ fundamentally in how they manage nutrient availability, population density, and growth rate, creating distinct evolutionary environments. This guide provides an objective comparison of these core methodologies, detailing their operational principles, experimental applications, and suitability for testing specific evolutionary predictions.

The table below summarizes the core operational characteristics and applications of the three major experimental evolution systems.

| Feature | Chemostat | Turbidostat | Serial Batch Culture |

|---|---|---|---|

| Core Principle | Continuous culture with fixed dilution rate; growth rate controlled by limiting nutrient concentration [28]. | Continuous culture with fixed cell density; dilution rate adjusts automatically to maintain turbidity [29]. | Cyclical culture with periodic manual transfer of an inoculum to fresh medium [30] [31]. |

| Growth Rate Control | Set by the researcher via the dilution rate (D); μ = D at steady state [28]. | Set by the organism's maximum capacity; system maintains maximum growth rate [32] [29]. | Uncontrolled and dynamic; cycles through lag, exponential, and stationary phases [33]. |

| Nutrient Environment | Constant, nutrient-limited. Steady-state substrate concentration is low and stable [32] [28]. | Constant, nutrient-rich. Substrate concentration is kept high [32]. | Dynamic and fluctuating. Nutrients are high after transfer and become depleted [32] [33]. |

| Population Density | Constant at steady state [28]. | Constant, set by the researcher's turbidity set-point [29]. | Fluctuates dramatically with each growth cycle [33]. |

| Key Experimental Parameters | Dilution rate (D), concentration of the growth-limiting nutrient [28]. | Turbidity/OD set-point [29]. | Transfer interval, inoculum size (transfer volume), culture volume [34] [30]. |

| Primary Selective Pressure | Optimization of substrate affinity and uptake under scarcity [35]. | Optimization of maximum growth rate (μmax) in rich conditions [32]. | Adaptation to feast-famine cycles, including stationary phase survival [33]. |

| Typical Experimental Duration | Weeks to months for hundreds of generations [33]. | Weeks to months for hundreds of generations [32]. | Months to years for thousands of generations (e.g., LTEE) [34] [36]. |

| Relative Cost & Technical Demand | High (requires specialized bioreactor equipment) [33]. | High (requires specialized bioreactor with feedback control) [33]. | Low (can be performed with basic labware like flasks) [33] [30]. |

Experimental Protocols and Methodologies

Chemostat Cultivation

- Objective: To maintain microbial populations in a steady state of constant growth rate and environmental conditions under nutrient limitation [28].

- Procedure:

  - Setup: A bioreactor of working volume V is inoculated with the microbial strain. Fresh medium, containing an excess of all nutrients except one (the growth-limiting factor, e.g., carbon, nitrogen), is fed at a constant flow rate F [28].

  - Dilution Rate: The key parameter is the dilution rate, D = F/V, which determines the steady-state growth rate [28].

  - Equilibration: The culture is allowed to reach a steady state, where the cell density and limiting nutrient concentration stabilize. This can take 5-7 volume changes [28].

  - Evolution: The culture is maintained continuously for hundreds to thousands of generations. Samples are periodically taken for fitness assays, omics analysis, or archiving [36].

- Key Parameter Calculation: The steady-state growth rate (μ) is equal to the dilution rate (D) [28].
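The chemostat relationships above (D = F/V, and μ = D at steady state) translate directly into planning arithmetic. The sketch below is illustrative only: the flow rate, working volume, and run length are assumed values, and the helper names are hypothetical. It relies on the standard result that a population growing at rate μ doubles every ln(2)/μ time units.

```python
import math

def dilution_rate(flow_rate_ml_per_h, working_volume_ml):
    """D = F / V, in units of 1/h."""
    if flow_rate_ml_per_h <= 0 or working_volume_ml <= 0:
        raise ValueError("flow rate and volume must be positive")
    return flow_rate_ml_per_h / working_volume_ml

def doubling_time_h(d_per_h):
    """At steady state mu = D, so the doubling time is ln(2) / D."""
    return math.log(2) / d_per_h

def generations_elapsed(d_per_h, hours):
    """Generations accumulated at steady state over a given run time."""
    return d_per_h * hours / math.log(2)

def hours_for_volume_changes(d_per_h, n_changes):
    """Time needed to exchange the working volume n_changes times."""
    return n_changes / d_per_h

# Assumed example: 20 mL/h feed into a 100 mL working volume, run for 30 days.
D = dilution_rate(20.0, 100.0)
print(f"D = {D:.2f} per hour")
print(f"doubling time = {doubling_time_h(D):.2f} h")
print(f"5 to 7 volume changes take {hours_for_volume_changes(D, 5):.0f} "
      f"to {hours_for_volume_changes(D, 7):.0f} h")
print(f"generations in 30 days = {generations_elapsed(D, 30 * 24):.0f}")
```

Under these assumed settings, a month of continuous operation yields on the order of a couple of hundred generations, which is consistent with the durations listed in the comparison table.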

Turbidostat Cultivation

- Objective: To maintain a microbial population at a constant, high cell density in nutrient-rich conditions, allowing it to grow at its maximum intrinsic rate [32] [29].

- Procedure:

  - Setup: A bioreactor is equipped with a turbidity (optical density, OD) sensor connected to a feedback control system. The culture is inoculated and allowed to grow [37].

  - Feedback Control: A turbidity set-point is defined. When the culture turbidity exceeds this set-point due to growth, a pump is activated to add fresh, nutrient-rich medium, simultaneously diluting the culture and lowering the turbidity [29].

  - Effluent Removal: The addition of fresh medium displaces an equal volume of culture, maintaining a constant working volume.

  - Evolution: The culture evolves under constant nutrient abundance, with the dilution rate automatically adjusting to match the population's increasing growth rate over evolutionary time [32].
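The feedback loop described above can be illustrated with a toy simulation. This is a deliberately idealized sketch, not a model of any particular device: it assumes purely exponential growth at a fixed rate, a noise-free OD reading, and a controller that dilutes the culture exactly back to the set-point at each time step. All names and parameter values are hypothetical.

```python
import math

def simulate_turbidostat(mu_per_h, od_setpoint, hours, dt_h=0.01, od_start=None):
    """Return (final OD, mean dilution rate while the controller was active)."""
    od = 0.1 * od_setpoint if od_start is None else od_start
    log_dilution = 0.0     # cumulative ln(dilution factor) applied by the pump
    controlled_time = 0.0  # time during which the set-point was being enforced
    for _ in range(int(round(hours / dt_h))):
        od *= math.exp(mu_per_h * dt_h)      # nutrient-replete exponential growth
        if od > od_setpoint:                 # sensor reading exceeds the set-point
            log_dilution += math.log(od / od_setpoint)
            od = od_setpoint                 # fresh medium dilutes culture back
            controlled_time += dt_h
    mean_d = log_dilution / controlled_time if controlled_time > 0 else 0.0
    return od, mean_d

# Assumed values: an ancestor growing at 0.5 per hour and a faster evolved type.
_, d_ancestor = simulate_turbidostat(0.5, od_setpoint=0.4, hours=24.0)
_, d_evolved = simulate_turbidostat(0.8, od_setpoint=0.4, hours=24.0)
print(f"mean dilution rate, ancestor: {d_ancestor:.3f} per hour")
print(f"mean dilution rate, evolved:  {d_evolved:.3f} per hour")
```

In this toy model the realized dilution rate settles at the population's growth rate, which is why logging pump activity in a turbidostat offers an indirect readout of growth-rate evolution.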

Serial Batch Culture

- Objective: To propagate populations through repeated cycles of growth and starvation, mimicking a dynamic environment [33] [30].

- Procedure:

  - Inoculation: A small inoculum (e.g., 1% v/v) is transferred from a stationary or late-log phase culture into a fresh batch of medium [30] [36].

  - Growth Cycle: The population progresses through lag, exponential, and stationary phases. The duration of the cycle is typically fixed (e.g., 24 hours) [33].

  - Repetition: At the end of each cycle, a sample is used to inoculate the next fresh medium batch. This process is repeated daily for weeks, months, or even years [36].

  - Monitoring and Archiving: Population samples are periodically frozen (e.g., with 15-25% glycerol) to create a "fossil record" for later analysis [30] [36].

- Key Parameter Calculation: The number of generations per transfer can be estimated using the formula: Number of generations = log₂(Final cell density / Initial cell density) [30] [31].
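As a worked example of the formula above, the sketch below computes generations per transfer and the number of transfers needed to reach a target generation count. It assumes the population regrows to the same final density in every cycle, so that the density ratio equals the dilution factor; the function names and the 2,000-generation target are illustrative.

```python
import math

def generations_per_transfer(initial_density, final_density):
    """Number of generations = log2(final density / initial density)."""
    if initial_density <= 0 or final_density <= 0:
        raise ValueError("densities must be positive")
    return math.log2(final_density / initial_density)

def transfers_needed(target_generations, dilution_fold):
    """Transfers required if each cycle regrows the full dilution factor."""
    per_cycle = generations_per_transfer(1.0, dilution_fold)
    return math.ceil(target_generations / per_cycle)

# A 1% v/v inoculum is a 100-fold dilution.
per_cycle = generations_per_transfer(1.0, 100.0)
print(f"generations per transfer = {per_cycle:.2f}")   # about 6.64
print(f"daily transfers for 2000 generations = {transfers_needed(2000, 100.0)}")
```

With daily 1% transfers, each cycle contributes roughly 6.6 generations, so thousands of generations require on the order of a year or more of continuous propagation.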

System Workflow and Selection

The following diagram illustrates the logical decision process for selecting and applying these core experimental systems.

Essential Research Reagents and Materials

The table below details key reagents and materials essential for setting up and maintaining experiments in these systems.

| Item | Function | Application Notes |

|---|---|---|

| Bioreactor | Provides a controlled environment (temperature, mixing, aeration) for cultivation. | Essential for chemostat/turbidostat; can be jar-based or modern mini-bioreactors (e.g., Chi.Bio) [37]. For batch culture, simple flasks suffice [33]. |

| Peristaltic Pumps | Precisely control the inflow of fresh medium and outflow of spent culture. | Critical for continuous culture systems (chemostat/turbidostat) to maintain constant volume and dilution rate [28]. |

| Optical Density (OD) Sensor | Measures microbial cell density in real-time. | Standard in turbidostats for feedback control [37] [29]. Also used for monitoring in chemostats and batch cultures. |

| Defined Growth Medium | Supplies essential nutrients for growth. | For chemostats, one nutrient (e.g., carbon) is strictly limited [28]. Turbidostats typically use nutrient-rich medium; serial batch cultures may use rich or minimal medium (e.g., glucose-limited DM25). |

| Cryopreservation Agent (Glycerol/DMSO) | Protects cells during freezing to create a permanent stock. | Used to archive population samples at -80°C over the course of long-term evolution experiments for retrospective analysis [30] [36]. |

| Antifoaming Agents | Suppresses foam formation in aerated bioreactors. | Prevents overflow and contamination in continuous cultures, ensuring stable operation [28]. |

The selection of a culturing system is a foundational decision in experimental evolution that directly shapes the evolutionary trajectory of a population. Chemostats excel in probing adaptations to nutrient scarcity and enabling precise physiological studies at submaximal growth rates. Turbidostats are ideal for investigating the optimization of maximum growth rate under resource abundance. In contrast, serial batch culture, with its dynamic environment, captures the complexity of feast-famine cycles and can lead to the emergence of adaptations absent in constant environments, such as advanced stationary-phase survival. The choice among them should be guided by the specific evolutionary question, whether it involves testing the optimality of metabolic strategies, understanding trade-offs in fluctuating environments, or simply maximizing the rate of adaptation for biotechnological applications.

Validating evolutionary predictions requires precise measurement of how populations change over time. Researchers now have an advanced toolkit to track these dynamics with unprecedented resolution, moving from bulk population measurements to the fate of individual lineages. Fitness assays provide the essential quantitative measure of reproductive success, while lineage tracking reveals the genealogical relationships among cells, and DNA barcoding enables high-throughput, parallel monitoring of thousands of lineages simultaneously. This guide objectively compares the performance, applications, and experimental requirements of these core methodologies that form the foundation of modern experimental evolution research.

# Comparative Analysis of Methodologies

The table below provides a direct comparison of the three primary methodologies for measuring evolutionary dynamics.

| Methodology | Key Performance Metrics | Resolution | Key Applications | Quantitative Precision | Experimental Throughput |

|---|---|---|---|---|---|

| Competitive Fitness Assays | Relative growth rate, Selection coefficient (s) | Population-average fitness | Long-term adaptation studies [38]; measuring fitness trade-offs [39] | High for bulk fitness; ~0.1-1% error in barcoded versions [40] | Low for pairwise; High for barcoded pools |

| Imaging-Based Lineage Tracking | Cell fate decisions, Division kinetics, Spatial organization | Single-cell within a limited field of view | Developmental biology [41]; cancer cell lineage relationships | High spatial resolution; Limited for deep lineage history | Low to medium (limited by microscopy) |

| DNA Barcoding & Lineage Tracking | Relative lineage frequency, Fitness inference, Clonal dynamics | Individual lineage within a massive population | High-resolution evolutionary trajectories [42] [43]; quantifying clonal interference | High-frequency resolution; Enables fitness estimation for thousands of lineages [43] | Very High (thousands to millions of lineages in parallel) |
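To make the "fitness inference" entry concrete, the sketch below estimates a per-generation selection coefficient from a lineage's frequency trajectory. It uses a simple textbook approach rather than the inference pipelines of the cited studies: the log ratio of a lineage's frequency to that of a reference lineage in the same pool changes linearly with generations, and the slope is the selection coefficient relative to that reference. Sequencing noise and drift are ignored, and all names and numbers are hypothetical.

```python
import math

def selection_coefficient(generations, lineage_freqs, reference_freqs):
    """Least-squares slope of ln(lineage / reference) against generations."""
    if not (len(generations) == len(lineage_freqs) == len(reference_freqs)):
        raise ValueError("all inputs must have the same length")
    if len(generations) < 2:
        raise ValueError("need at least two time points")
    y = [math.log(f / r) for f, r in zip(lineage_freqs, reference_freqs)]
    n = len(generations)
    mean_x = sum(generations) / n
    mean_y = sum(y) / n
    sxx = sum((x - mean_x) ** 2 for x in generations)
    sxy = sum((x - mean_x) * (v - mean_y) for x, v in zip(generations, y))
    return sxy / sxx

# Synthetic two-lineage example with a true advantage of 0.05 per generation.
true_s = 0.05
gens = [0, 8, 16, 24, 32]
ratios = [0.01 * math.exp(true_s * g) for g in gens]   # lineage : reference
lineage = [r / (1 + r) for r in ratios]
reference = [1 / (1 + r) for r in ratios]
print(f"estimated s = {selection_coefficient(gens, lineage, reference):.4f}")
```

Taking the ratio to a reference lineage within the same pool means that changes in population mean fitness cancel out, which is one reason pooled barcode assays commonly include neutral reference barcodes.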

# Experimental Protocols and Workflows

Competitive Fitness Assays

The foundation of measuring evolutionary fitness is the competitive assay, where a strain of interest is competed against a reference strain under defined conditions [38].

Detailed Protocol: Bulk Competitive Fitness with Barcoded Yeast [40]

- Culture Initiation: Inoculate a population of barcoded yeast into a starter culture of YPD medium. The initial inoculum must contain at least 1,000 times as many cells as there are unique barcodes in order to maintain population diversity.

- Experimental Passaging: Once saturated, passage the cells into the experimental growth media (e.g., containing a drug or nutrient stress). The dilution factor is typically between 1/32 and 1/1024. Maintain at least two parallel cultures as technical replicates.