Validating Evolutionary Predictions with Environmental DNA: From Theory to Clinical Applications

Abstract

This article explores the transformative role of environmental DNA (eDNA) in validating and informing evolutionary predictions, a field rapidly moving from theoretical science to practical application. We cover the foundational principles that make eDNA a powerful tool for forecasting evolutionary trajectories, such as pathogen adaptation and drug resistance. For researchers and drug development professionals, we detail cutting-edge methodological pipelines—from sample collection and metagenomic sequencing to bioinformatic analysis of biosynthetic gene clusters. The article critically addresses troubleshooting and optimization challenges, including contamination control and inhibitor removal. Finally, we present a rigorous validation framework, comparing eDNA efficacy against conventional methods across diverse use cases, from antibiotic discovery to conservation biology, synthesizing key takeaways for biomedical research and clinical innovation.

The New Science of Forecasting Evolution: eDNA as a Predictive Lens

Evolutionary science is undergoing a profound transformation, shifting from a historically descriptive discipline to a predictive one. For decades, predicting evolutionary processes was considered nearly impossible due to the inherent stochasticity of mutation, reproduction, and environmental change [1]. However, convergent advances across computational biology, molecular monitoring techniques, and theoretical frameworks have now made evolutionary forecasting an achievable reality with significant applications in public health, conservation, and biotechnology [1]. This paradigm shift is particularly crucial for addressing urgent challenges such as antimicrobial resistance, pathogen evolution, and biodiversity loss in fragile ecosystems.

The validation of these evolutionary predictions has been dramatically enhanced by the emergence of environmental DNA (eDNA) technologies. eDNA provides a non-invasive, highly sensitive method for detecting genetic traces left by organisms in their environment, enabling researchers to monitor evolutionary changes in real-time with minimal ecosystem disturbance [2] [3]. This technological advancement, combined with sophisticated modeling approaches, creates a powerful feedback loop where predictions can be tested and refined against empirical data collected from natural systems.

Theoretical Foundations of Evolutionary Forecasting

The Scientific Basis for Prediction

Evolutionary predictions share a common structure described by three key parameters: predictive scope (what aspect of evolution is being predicted), time scale (over what timeframe), and precision (the required accuracy) [1]. The scientific basis for these predictions rests on Darwin's theory of evolution by natural selection, extended by quantitative population genetics frameworks that account for forces such as random genetic drift, migration, recombination, and mutation [1].

Three primary factors now enable evolutionary forecasting where it was previously impossible:

Quantitative Models of Selection: Modern population genetics has developed precise mathematical frameworks, such as the breeder's equation and genomic selection models, that quantify how traits respond to selective pressures [1].

Computational Power: Advanced computing resources enable the simulation of complex evolutionary scenarios that incorporate multiple selective pressures, population structures, and eco-evolutionary feedback loops [1] [4].

Empirical Validation Methods: eDNA and other molecular tools provide high-resolution data for testing and refining predictions against real-world evolutionary changes [5] [2] [3].
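As a small illustration of the first factor, the breeder's equation predicts the per-generation response to selection R from narrow-sense heritability h² and the selection differential S, as R = h² × S. The sketch below uses hypothetical values, and its function names are illustrative rather than taken from the cited work. It assumes h² and S stay constant across generations, which is a strong simplification.

```python
def response_to_selection(heritability: float, selection_differential: float) -> float:
    """Breeder's equation: R = h^2 * S."""
    if not 0.0 <= heritability <= 1.0:
        raise ValueError("narrow-sense heritability must lie in [0, 1]")
    return heritability * selection_differential


def project_trait_mean(initial_mean: float, heritability: float,
                       selection_differential: float, generations: int) -> list[float]:
    """Project the trait mean forward, assuming constant h^2 and S (hypothetical scenario)."""
    means = [initial_mean]
    for _ in range(generations):
        step = response_to_selection(heritability, selection_differential)
        means.append(means[-1] + step)
    return means


# Hypothetical values chosen only for illustration.
trajectory = project_trait_mean(initial_mean=10.0, heritability=0.5,
                                selection_differential=2.0, generations=3)
```

A forecast of this kind becomes testable once repeated field measurements of the trait mean are available for comparison with the projected trajectory.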

The predictability of evolution depends largely on the strength of selection and the ruggedness of the fitness landscape. More rugged landscapes arising from strong selective constraints can increase predictability because they limit the number of accessible evolutionary paths [4]. In contrast, neutral evolution, in which all variants are equally fit, shows minimal repeatability and remains difficult to forecast.

Methodological Approaches: From Traditional to Machine Learning

Forecasting methodologies span a continuum from traditional statistical approaches to advanced machine learning techniques, each with distinct advantages for different evolutionary questions:

Table 1: Comparison of Evolutionary Forecasting Methodologies

| Method Type | Key Techniques | Strengths | Ideal Applications |

|---|---|---|---|

| Traditional Statistical | Linear regression, ARIMA, Exponential smoothing, Holt-Winters filtering [6] [7] | High explainability, computationally efficient, transparent workflows [6] | Short-term predictions with limited variables, univariate time series data [6] |

| Machine Learning | Neural networks, random forest, support vector regression, Gaussian processes [6] | Handles complex multivariate datasets, identifies non-linear patterns, superior accuracy with large feature spaces [6] | Pathogen evolution, complex trait prediction, ecosystems with numerous interacting factors [6] |

| Mechanistic Models | Birth-death population models, structurally constrained substitution models [4] | Incorporates biological constraints, provides mechanistic insights, higher generality [4] | Protein evolution forecasting, antibiotic resistance development, evolutionary trajectories with structural constraints [4] |

In business applications, ML forecasting models have demonstrated superior performance compared to traditional methods, with one study showing ML achieving a mean absolute percentage error of 11.61% compared to 15.17% for traditional ARIMAX models [6]. Similar advantages are emerging in biological forecasting, particularly for complex evolutionary scenarios with multiple interacting factors.
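For readers unfamiliar with the metric quoted above, mean absolute percentage error (MAPE) can be computed as in the following sketch. The observed and forecast values are hypothetical and unrelated to the cited study.

```python
def mape(actual: list[float], predicted: list[float]) -> float:
    """Mean absolute percentage error, in percent."""
    if not actual or len(actual) != len(predicted):
        raise ValueError("inputs must be non-empty and of equal length")
    if any(a == 0 for a in actual):
        raise ValueError("MAPE is undefined when an observed value is zero")
    total = sum(abs((a - p) / a) for a, p in zip(actual, predicted))
    return 100.0 * total / len(actual)


# Hypothetical observed vs. forecast values.
observed = [100.0, 200.0, 400.0]
forecast = [110.0, 180.0, 400.0]
error_percent = mape(observed, forecast)
```

Because MAPE divides by the observed value, it is poorly suited to series that contain zeros, such as sparse eDNA detection counts. Other error measures may be preferable in that setting.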

Experimental Validation with Environmental DNA

eDNA Protocol for Monitoring Species of Conservation Concern

Environmental DNA protocols provide a powerful method for validating evolutionary predictions about species distribution and population changes. The following protocol was developed for monitoring endemic Asian spiny frogs in the Himalayan region but offers an adaptable framework for various taxa [3]:

Table 2: Key Steps in eDNA-Based Species Monitoring

| Protocol Step | Technical Specifications | Application in Evolutionary Studies |

|---|---|---|

| Primer Design & Validation | Target ~550 bp region of mitochondrial 16S rRNA gene; design multiple primer sets (5-14) per species; validate specificity against sympatric species [3] | Enables species-specific detection even in cryptic species complexes; provides data for phylogenetic predictions |

| Field Sampling | Collect water samples from targeted habitats; implement contamination controls; filter immediately or preserve with Longmire's solution [3] | Allows longitudinal monitoring to test predictions about range shifts and population changes |

| Laboratory Processing | Extract DNA using commercial kits; employ quantitative PCR with species-specific primers; include negative controls [3] | Provides presence/absence data with detection probabilities superior to visual surveys |

| Occupancy Modeling | Use multi-season occupancy models; incorporate environmental covariates; estimate detection probability and site occupancy [3] | Statistically robust framework for testing predictions about habitat use and population trends |

This protocol yielded significantly higher detection probabilities than traditional visual encounter surveys for both Hazara Torrent Frogs (Allopaa hazarensis) and Murree Hills Frogs (Nanorana vicina) [3]. The gain was substantial for A. hazarensis, highlighting the method's sensitivity for rare and elusive species, where evolutionary changes might be most critical.
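The occupancy-modeling step can be made concrete with the likelihood of a detection history. The sketch below uses the simpler single-season form rather than the multi-season models named in the table, with occupancy probability `psi` and per-survey detection probability `p`. All numeric values are hypothetical, and the function names are illustrative.

```python
import math


def site_likelihood(history: list[int], psi: float, p: float) -> float:
    """Likelihood of one site's detection history (1 = detected, 0 = not detected)."""
    detections = sum(history)
    surveys = len(history)
    occupied = psi * p ** detections * (1 - p) ** (surveys - detections)
    if detections > 0:
        return occupied  # a detection implies the site is occupied
    # All-zero history: occupied but missed every time, or truly unoccupied.
    return occupied + (1 - psi)


def log_likelihood(histories: list[list[int]], psi: float, p: float) -> float:
    """Joint log-likelihood across independent sites."""
    return sum(math.log(site_likelihood(h, psi, p)) for h in histories)


def cumulative_detection(p: float, surveys: int) -> float:
    """Probability of at least one detection at an occupied site."""
    return 1 - (1 - p) ** surveys


# Hypothetical histories from three sites, two eDNA replicates each.
example_ll = log_likelihood([[1, 0], [0, 0], [1, 1]], psi=0.5, p=0.5)
```

In practice the parameters would be estimated by maximizing this likelihood, usually with dedicated occupancy software, and covariates would enter through link functions on `psi` and `p`.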

eDNA Metabarcoding for Community-Level Forecasting

For community-level evolutionary predictions, eDNA metabarcoding provides a comprehensive approach:

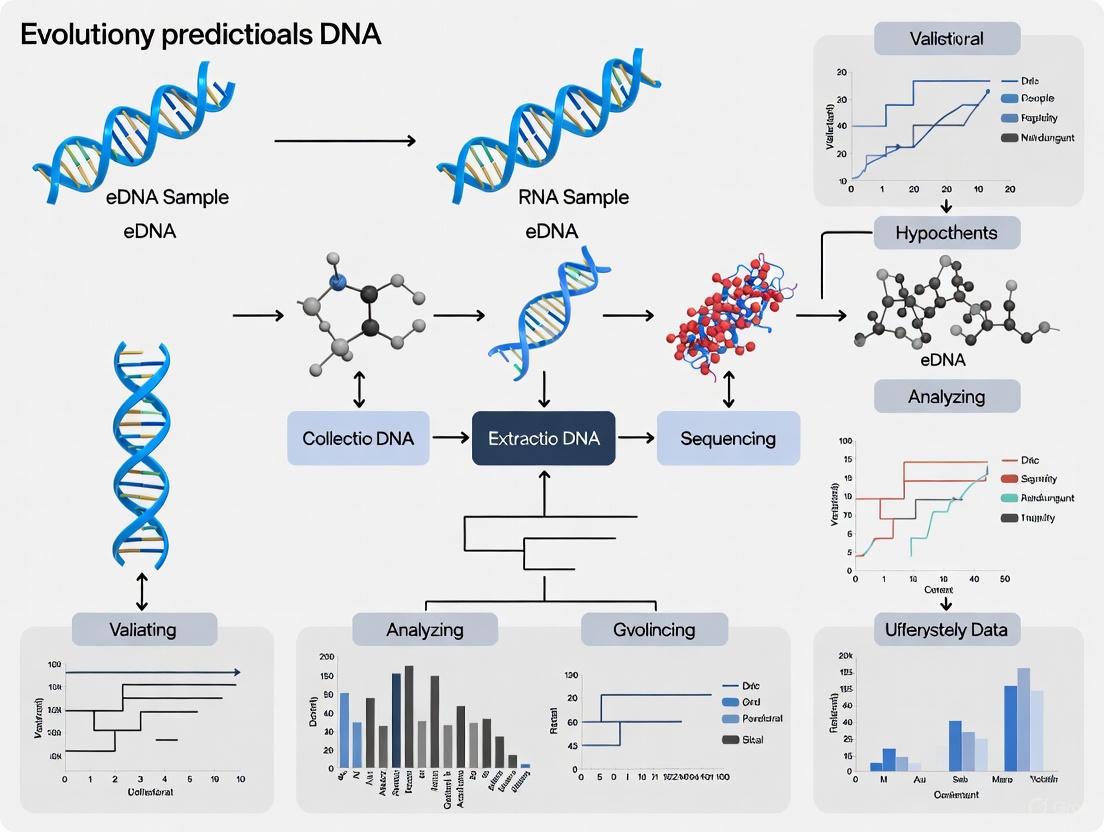

Figure 1: eDNA Metabarcoding Workflow for Community-Level Forecasting

This approach has demonstrated remarkable efficacy in marine ecosystems. A Black Sea study comparing eDNA metabarcoding with traditional trawling found that eDNA identified 23 fish species during autumn surveys compared to only 15 species detected by trawling [8]. Similarly, in summer expeditions, eDNA detected 12 species versus 9 species with trawling methods [8]. The enhanced sensitivity of eDNA is particularly valuable for detecting rare and migratory species that may be indicators of evolutionary responses to environmental change.

The integration of Bayesian regression and Generalized Additive Models (GAMs) with eDNA data allows for robust quantification of uncertainty in predictions—a critical component for evolutionary forecasting where stochastic processes play significant roles [8]. These statistical frameworks can capture nonlinear relationships between environmental DNA signals, environmental gradients, and population abundance, providing more accurate validation of evolutionary predictions.

Advanced Applications in Microbial and Molecular Evolution

Forecasting Protein Evolution

At the molecular level, forecasting protein evolution represents one of the most sophisticated applications of evolutionary prediction. A recently developed method integrates birth-death population models with structurally constrained substitution (SCS) models to predict protein evolutionary trajectories [4]:

Figure 2: Protein Evolution Forecasting Framework

This approach addresses a critical limitation of traditional population genetics methods, which simulate evolutionary history and molecular evolution as separate processes [4]. By integrating these components and incorporating structural constraints on protein folding stability, the method provides more biologically realistic forecasts of molecular evolution, particularly for viral proteins under strong selective pressures [4].
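To give a feel for the demographic half of such a framework, the following sketch simulates a linear birth-death process with a Gillespie-style algorithm. It is a generic illustration and does not call or reproduce ProteinEvolver. The structurally constrained substitution component is omitted entirely, and all rates are hypothetical.

```python
import random


def simulate_birth_death(n0: int, birth_rate: float, death_rate: float,
                         t_max: float, seed: int = 0) -> list[tuple[float, int]]:
    """Return (time, population size) pairs for a linear birth-death process."""
    rng = random.Random(seed)
    t, n = 0.0, n0
    trajectory = [(t, n)]
    per_capita = birth_rate + death_rate
    while n > 0 and per_capita > 0:
        # Waiting time to the next event is exponential with the total event rate.
        t += rng.expovariate(n * per_capita)
        if t > t_max:
            break
        # Choose the event type in proportion to its rate.
        if rng.random() < birth_rate / per_capita:
            n += 1
        else:
            n -= 1
        trajectory.append((t, n))
    return trajectory


# Hypothetical per-capita rates; births exceed deaths, so growth is expected on average.
path = simulate_birth_death(n0=10, birth_rate=1.0, death_rate=0.5, t_max=2.0)
```

In an integrated method of the kind described above, birth and death rates would depend on the fitness of each protein variant instead of being fixed constants.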

The implementation of this method in the ProteinEvolver framework (freely available at https://github.com/MiguelArenas/proteinevolver) enables researchers to forecast protein evolution under different selective scenarios, with applications in vaccine design and therapeutic development against rapidly evolving pathogens [4].

Predicting Microbial Evolutionary Dynamics

Microbial systems present unique opportunities for evolutionary forecasting due to their rapid generation times and large population sizes. The predictable aspects of microbial adaptation include:

- Faster fitness improvement in maladapted genotypes [1]

- Large beneficial mutation supply leading to competition between multiple beneficial mutations [1]

- High evolutionary convergence at the gene level in most environments [1]

- Selection for mutator phenotypes during adaptation [1]

These predictable patterns enable forecasts of microbial responses to antibiotics, environmental changes, and industrial biotechnology applications. Research initiatives such as the "Understanding and Predicting Microbial Evolutionary Dynamics 2025" conference highlight the growing importance of this field for addressing global challenges including antimicrobial resistance and ecosystem functioning [9].

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Research Reagents and Resources for Evolutionary Forecasting

| Resource Category | Specific Examples | Function in Evolutionary Forecasting |

|---|---|---|

| Laboratory Reagents | Longmire's solution (eDNA preservation), commercial DNA extraction kits, metabarcoding primers (e.g., MiFish-U for fish 12S), qPCR reagents [3] [8] | Enable high-quality sample preservation, DNA extraction, and species-specific detection for validation studies |

| Bioinformatics Tools | ProteinEvolver framework, occupancy modeling software, Bayesian regression packages, sequence alignment tools [3] [4] | Provide computational infrastructure for developing models and analyzing validation data |

| Reference Databases | MITOS database for mitochondrial genomes, protein structure databases, taxonomic reference libraries [5] [8] | Essential for taxonomic assignment and structural constraints in evolutionary models |

| Sequencing Platforms | Nanopore sequencing (e.g., for epigenetic clock development), Illumina platforms for metabarcoding, Sanger sequencing for validation [5] [3] | Generate molecular data for testing predictions across different biological scales |

The emerging discipline of evolutionary forecasting represents a paradigm shift in how we understand and interact with biological systems. By combining theoretical models from population genetics with advanced computational approaches and empirical validation through eDNA and other molecular tools, researchers can now make testable predictions about evolutionary trajectories across biological scales—from protein sequences to ecosystems.

The integration of prediction and validation creates a virtuous cycle where models inform monitoring efforts and empirical data refine predictive frameworks. This approach has profound implications for addressing pressing challenges in public health, conservation, and biotechnology, enabling proactive rather than reactive strategies for managing evolutionary processes.

As the field advances, key priorities will include improving the granularity of spatiotemporal predictions, better incorporating eco-evolutionary dynamics, and developing more accessible tools for researchers and practitioners. The continued refinement of evolutionary forecasting promises to transform our relationship with the biological world, moving from passive observation to active engagement with the processes that shape life on Earth.

Environmental DNA (eDNA) is the genetic material that organisms continually shed into their surroundings in the form of skin cells, scales, mucus, feces, and gametes [10]. Once released into aquatic or terrestrial ecosystems, this material can persist in substrates such as water, soil, and sediment for varying durations. The analysis of this eDNA provides a powerful lens through which to observe and validate evolutionary processes occurring across spatial and temporal scales. Unlike traditional genetic approaches that require direct observation or capture of organisms, eDNA sampling captures the genetic footprints of entire communities, thereby recording both ecological and evolutionary changes [11]. This allows researchers to access the raw material for evolution (the genetic variation within populations) without disruptive sampling methods, enabling studies of how populations adapt to environmental changes, how species interactions drive evolutionary dynamics, and how biodiversity responds to long-term pressures.

The application of eDNA to evolutionary studies is particularly valuable because it can provide continuous temporal data over long time periods, ranging from recent changes to millennial-scale shifts [11]. Sediment cores, for instance, can archive eDNA for thousands of years, creating a temporal record that allows scientists to hindcast evolutionary responses to historical environmental changes and validate models predicting future evolutionary trajectories [11]. By recovering genetic sequences from different time periods, researchers can directly observe genetic variation shifting in response to selection pressures, documenting evolution in action. Furthermore, eDNA enables the study of eco-evolutionary dynamics—the mutual feedback between evolutionary and ecological processes occurring on similar timescales [11]. As communities change in composition and as populations adapt to new conditions, they modify their environments, which in turn creates new selection pressures. Environmental DNA provides a means to track these interrelated processes across entire ecosystems.

Theoretical Framework: eDNA as the Raw Material for Evolutionary Studies

The genetic variation captured through eDNA sampling constitutes the fundamental substrate upon which evolutionary forces act. This variation, when distributed across populations and through time, provides the essential data needed to investigate evolutionary mechanisms including natural selection, genetic drift, gene flow, and mutation. Environmental DNA delivers temporal data that are unidirectional, meaning environmental changes must occur before their impacts become visible in genetic records, thus providing robust opportunities for identifying causal relationships in evolutionary dynamics [11]. This temporal dimension is crucial for distinguishing between short-term fluctuations and long-term evolutionary trends.

Environmental DNA archives, particularly those preserved in stable environments such as lake sediments, ice cores, and permafrost, can span hundreds to thousands of years, enabling researchers to reconstruct evolutionary timelines with unprecedented resolution [11]. These archives allow scientists to address fundamental evolutionary questions such as how populations genetically adapted to past climate shifts, how colonization events shaped genomic diversity, and how human activities have accelerated evolutionary changes in recent centuries. The ability to simultaneously track multiple taxa across these timeframes further enables community-level evolutionary studies, revealing how evolutionary processes interact across trophic levels and among interacting species.

Table: Key Evolutionary Questions Addressable with eDNA Time Series

| Evolutionary Process | eDNA Application | Temporal Scale |

|---|---|---|

| Natural Selection | Tracking allele frequency changes in response to documented environmental shifts | Decades to centuries |

| Adaptation | Identifying genetic variants associated with specific environmental conditions | Centuries to millennia |

| Speciation | Reconstructing colonization routes and subsequent genetic divergence | Millennia |

| Eco-evolutionary Dynamics | Correlating genetic changes with community-level shifts | Decades to centuries |

| Extinction | Dating population declines and identifying associated genetic bottlenecks | Centuries to millennia |
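The first row of the table, tracking allele frequency change, can be illustrated with a simple estimator. Under a continuous-time model of genic selection, the log-odds of an allele's frequency changes linearly with time at rate s, so two dated frequency estimates give a rough selection coefficient. This sketch ignores drift, migration, and eDNA sampling error, and its input values are hypothetical.

```python
import math


def logit(p: float) -> float:
    """Log-odds of an allele frequency strictly between 0 and 1."""
    if not 0.0 < p < 1.0:
        raise ValueError("frequency must lie strictly between 0 and 1")
    return math.log(p / (1 - p))


def selection_coefficient(p_start: float, p_end: float, generations: float) -> float:
    """Estimate s from logit(p_t) = logit(p_0) + s * t."""
    return (logit(p_end) - logit(p_start)) / generations


def project_frequency(p_start: float, s: float, generations: float) -> float:
    """Forecast the allele frequency after a number of generations under the same model."""
    return 1.0 / (1.0 + math.exp(-(logit(p_start) + s * generations)))


# Hypothetical frequencies recovered from two dated sediment layers.
s_hat = selection_coefficient(p_start=0.1, p_end=0.5, generations=100)
forecast = project_frequency(p_start=0.1, s=s_hat, generations=100)
```

An estimate from early layers can be used to forecast the frequency in a later layer, and the forecast can then be checked against the observed value.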

Methodological Principles: From Sampling to Data Interpretation

The process of capturing evolutionary raw material through eDNA involves a series of critical methodological steps, each requiring careful optimization to ensure the genetic data accurately represent the biological communities from which they originate.

Sample Collection and Filtration

The initial stage of any eDNA study involves collecting environmental samples and concentrating the genetic material through filtration. The choice of filter pore size represents a crucial decision that significantly impacts the taxonomic profile and subsequent evolutionary inferences. For studies targeting macroorganisms such as fish or mammals, larger pore size filters (e.g., 5 µm) are often more effective than smaller pores (e.g., 0.45 µm) because they selectively capture larger tissue fragments and cells shed by vertebrates while excluding much of the microbial DNA that would otherwise dominate the sample [12]. This enrichment for target DNA increases the ratio of amplifiable target DNA to total DNA, thereby enhancing detection probability for evolutionary studies focused on specific taxa.

The volume of water filtered similarly influences detection sensitivity. Larger volumes (e.g., 3 L versus 1 L) typically increase the absolute amount of target DNA recovered, thereby improving the probability of detecting rare species or genetic variants [12]. However, this relationship must be balanced against practical constraints including filter clogging, particularly in turbid waters, and the potential for increased co-concentration of PCR inhibitors. In estuarine and other challenging environments, glass fiber filters have demonstrated superior performance by filtering rapidly (2.32 ± 0.08 minutes) while maintaining high DNA yield percentages (0.00107 ± 0.00013) even in high-turbidity conditions [13].

DNA Extraction and Preservation

DNA extraction methods must be selected to maximize yield while preserving the integrity of the genetic material for evolutionary analyses. Commercial extraction kits typically provide a balance of efficiency, consistency, and inhibitor removal, though phenol-chloroform-isoamyl extractions may maximize total DNA recovery in some circumstances [12]. A critical consideration for evolutionary studies is that maximizing total DNA yield does not always correlate with improved target detection, as increased co-extraction of off-target DNA and inhibitors can sometimes reduce effective sensitivity for the taxa of interest.

The preservation method employed immediately after sample collection significantly impacts DNA quality for subsequent analyses. Common approaches include freezing at -20°C or using commercial preservatives such as Longmire's buffer. The optimal choice depends on field conditions, storage duration, and transportation requirements, with the overarching goal of minimizing DNA degradation that could bias evolutionary inferences.

Table: Optimized eDNA Protocol Parameters for Evolutionary Studies

| Protocol Step | Recommended Parameters for Macroorganisms | Effect on Evolutionary Data Quality |

|---|---|---|

| Filter Pore Size | 5 µm | Increases target-to-total DNA ratio for vertebrate DNA [12] |

| Water Volume | 3 L | Increases probability of detecting rare species/alleles [12] |

| Filter Material | Glass fiber | Resilient to turbidity; faster filtration times [13] |

| DNA Extraction | Commercial kits vs. phenol-chloroform | Balances yield, inhibitor removal, and practicality [12] |

| Inhibitor Removal | Context-dependent | May be necessary in humic-rich environments [13] |

Experimental Protocols for Evolutionary eDNA Studies

Protocol 1: Detecting Terrestrial Invasive Snakes

A recent study developing eDNA methods for detecting the invasive California kingsnake (Lampropeltis californiae) on the Canary Islands provides a robust protocol applicable to evolutionary studies of terrestrial species [14]. This protocol addresses the challenge of detecting elusive terrestrial snakes, which are typically characterized by exceptionally low detection rates using conventional methods.

Sample Collection:

- Deploy artificial cover objects (ACOs) made of different materials (e.g., metal, wood) in suitable habitats.

- Collect swab samples from underneath ACOs using sterile swabs.

- Collect soil samples from beneath ACOs and from random locations for comparative analysis.

- Include samples from researchers' boots to assess human-mediated dispersal.

DNA Extraction and Primer Design:

- Extract genomic DNA using commercial kits (e.g., E.Z.N.A. Tissue DNA Kit).

- Design species-specific primers targeting a short fragment (≈654 bp) of the cytochrome c oxidase I (COI) gene to maximize detection probability.

- Validate primer specificity against co-occurring endemic reptile species.

qPCR Amplification:

- Perform reactions using 300 nM of each primer and SYBR Green Supermix in 15 µL reaction volumes.

- Use thermal cycling conditions: initial denaturation at 95°C for 10 min, followed by 40 cycles of 95°C for 15 s, 57°C for 20 s, and 72°C for 30 s.

- Analyze melting curves to confirm amplification specificity.
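The amplification settings above can be captured as a small configuration object, which makes it easy to check reaction setup arithmetic. In this sketch the 10 µM primer stock is an assumption not stated in the protocol. The hold-time total also excludes ramp times and the melt-curve stage, so real run times will be longer.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CyclingProgram:
    """Thermal cycling parameters taken from the protocol text (times in seconds)."""
    initial_denaturation_s: int = 600  # 95 °C for 10 min
    cycles: int = 40
    denaturation_s: int = 15           # 95 °C
    annealing_s: int = 20              # 57 °C
    extension_s: int = 30              # 72 °C

    def hold_time_s(self) -> int:
        """Sum of programmed hold times, ignoring ramping and melt-curve analysis."""
        per_cycle = self.denaturation_s + self.annealing_s + self.extension_s
        return self.initial_denaturation_s + self.cycles * per_cycle


def primer_volume_ul(final_nm: float, reaction_ul: float, stock_um: float) -> float:
    """Volume of primer stock needed, from C1 * V1 = C2 * V2."""
    return final_nm * reaction_ul / (stock_um * 1000.0)


program = CyclingProgram()
total_seconds = program.hold_time_s()
# Assumed 10 µM working stock (hypothetical); 300 nM final in a 15 µL reaction.
volume_per_primer = primer_volume_ul(final_nm=300, reaction_ul=15, stock_um=10)
```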

This protocol successfully detected L. californiae eDNA in 9.31% of swab samples, 2.22% of soil samples under ACOs, and 2.56% of boot samples, demonstrating its utility for monitoring elusive species for evolutionary studies [14].

Protocol 2: Estuarine eDNA Optimization for Salmon

For aquatic environments, particularly challenging estuaries with high turbidity and PCR inhibitors, an optimized protocol for Chinook salmon (Oncorhynchus tshawytscha) detection provides a framework for evolutionary studies in these ecosystems [13].

Sample Processing:

- Filter 500 mL to 1 L of estuarine water through glass fiber filters to balance DNA capture with practical constraints.

- Apply a secondary inhibitor removal step when necessary to counteract PCR inhibition from humic substances.

DNA Extraction and Amplification:

- Extract DNA using methods compatible with inhibitor removal (e.g., magnetic bead-based systems).

- Use quantitative PCR (qPCR) with species-specific primers for sensitive detection.

- Include appropriate controls (field blanks, extraction blanks, positive controls) to validate results.

This protocol emphasizes the balance between time, cost, and DNA yield, prioritizing sensitivity for realistic scenarios while maintaining scalability for large-scale evolutionary studies [13].

Data Interpretation and Analysis Framework

Transforming eDNA data into evolutionary insights requires specialized analytical approaches that account for the unique characteristics of environmental genetic information.

Accounting for Technical and Biological Variation

A critical challenge in eDNA studies is distinguishing true biological variation from technical artifacts introduced during sampling and processing. Biological replicates (replicate water samples/filters from the same environment) capture inherent spatial and temporal heterogeneity in eDNA distribution, while technical replicates (replicate molecular analyses from the same sample) quantify methodological consistency [12]. Studies have shown that homogenizing source water before filtering removes much of the biological variation, allowing clearer attribution of observed differences to methodological variables rather than inherent heterogeneity [12].

For evolutionary studies seeking to track genetic changes over time, this distinction is paramount. False negatives (missing a species that is present) can lead to incorrect conclusions about local extinctions or population declines, while false positives (detecting a species that is absent) can suggest persistence or range expansions that have not occurred [10]. Statistical models that explicitly incorporate both technical and biological variance components provide more robust estimates of the population parameters essential for evolutionary inference.

Integrating Data from Different Methodological Approaches

Evolutionary studies often require combining data from multiple sampling efforts or adapting protocols over time as methodologies advance. A flexible statistical framework allows for the responsible integration of data collected using different approaches [12]. This can be achieved through linear modeling that accounts for protocol-specific effects, enabling researchers to extend datasets across methodological boundaries while maintaining analytical rigor.

The equation describing how each protocol step influences recovered eDNA can be expressed as:

$$
Y \sim Y_f \times Y_{e(f)} - I_f \times I_e \times
\begin{cases}
0 & \text{if secondary inhibitor removal is used} \\
1 & \text{otherwise}
\end{cases}
$$

where $Y$ is the ratio of input eDNA amplified by qPCR, $Y_f$ is the ratio of input eDNA that binds to the filter, $Y_{e(f)}$ is the ratio of filter-bound eDNA isolated by the extraction method, $I_f$ is filter inhibitor carryover, and $I_e$ is extraction method inhibitor carryover [13]. This quantitative framework helps researchers understand how methodological choices impact downstream evolutionary inferences.
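The relationship above translates directly into a function that compares protocol variants. The sketch below takes the filter retention, extraction yield, and the two inhibitor carryover terms as plain numbers. The parameter names and example values are hypothetical and are not estimates from the cited study.

```python
def amplifiable_fraction(filter_retention: float, extraction_yield: float,
                         filter_inhibition: float, extraction_inhibition: float,
                         secondary_inhibitor_removal: bool) -> float:
    """Expected fraction of input eDNA amplified by qPCR under the stated model."""
    # The inhibition penalty is switched off when secondary inhibitor removal is used.
    inhibition_switch = 0.0 if secondary_inhibitor_removal else 1.0
    recovered = filter_retention * extraction_yield
    penalty = filter_inhibition * extraction_inhibition * inhibition_switch
    return recovered - penalty


# Hypothetical parameter values for one filter and extraction combination.
without_cleanup = amplifiable_fraction(0.8, 0.5, 0.2, 0.5, secondary_inhibitor_removal=False)
with_cleanup = amplifiable_fraction(0.8, 0.5, 0.2, 0.5, secondary_inhibitor_removal=True)
```

As written, the model treats secondary inhibitor removal as costless. Any DNA lost during cleanup would have to be folded into the extraction yield term.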

The Scientist's Toolkit: Essential Research Reagents and Materials

Table: Key Research Reagent Solutions for eDNA Evolutionary Studies

| Reagent/Material | Function | Application in Evolutionary Studies |

|---|---|---|

| Glass Fiber Filters | Captures eDNA from water samples while resisting clogging | Optimal for turbid environments; improves DNA yield for population genetics [13] |

| Species-Specific Primers | Amplifies target species DNA from complex mixtures | Enables tracking of specific populations for evolutionary monitoring [14] |

| Commercial DNA Extraction Kits | Isolates DNA from filters while removing inhibitors | Provides consistent yield for comparative analyses across temporal samples [12] |

| Inhibitor Removal Reagents | Reduces PCR inhibition from environmental compounds | Critical for accurate detection in inhibitor-rich environments like soils [14] |

| Artificial Cover Objects (ACOs) | Non-invasive sampling of terrestrial eDNA | Enables detection of elusive species for distribution studies [14] |

| qPCR Master Mixes | Quantitative amplification of target DNA | Provides sensitive detection for tracking population changes [14] |

Visualizing eDNA Workflows for Evolutionary Studies

Figure: eDNA to Evolutionary Inference Workflow

Figure: eDNA in Evolutionary Studies Logic Model

Application Note

Environmental DNA (eDNA) and environmental RNA (eRNA) methodologies have emerged as powerful tools for predicting and monitoring critical evolutionary and ecological processes. This application note details how these approaches, framed within a One Health perspective, can be leveraged to validate predictions concerning pathogen spread, the propagation of antibiotic resistance genes (ARGs), and species adaptation in a rapidly changing world. By detecting genetic traces shed by organisms into their environment, researchers can conduct non-invasive, broad-scale surveillance that provides early warning signals for emerging threats to public and ecosystem health [15]. The protocols below outline standardized methods for targeting these key predictive markers in aquatic and terrestrial environments.

Predictive Target 1: Pathogen Spread and Disease Ecology

Protocol 1.1: Aquatic Pathogen Surveillance via eDNA/eRNA Metabarcoding

Principle: Filter water samples to capture genetic material from waterborne pathogens and parasites. Subsequent genetic analysis identifies a broad spectrum of pathogenic organisms without the need for direct host sampling, which is often stressful, destructive, or inefficient [15].

Key Workflow Steps:

- Sample Collection: Collect water samples from the target aquatic environment (e.g., near wastewater discharge points, aquaculture facilities, or natural waterways).

- Filtration: Filter a defined volume of water (typically 1-2 liters) through sterile membrane filters (e.g., 0.22 µm pore size) to capture particulate matter and eDNA.

- Nucleic Acid Extraction: Extract total environmental DNA/RNA from the filters using commercial kits designed for environmental samples, incorporating steps to inhibit degradation.

- Metabarcoding PCR: Amplify target genetic regions using broad-range or group-specific primers. For eukaryotic parasites, the 18S rRNA gene is a common target [15].

- Sequencing and Bioinformatic Analysis: Perform high-throughput sequencing of the amplicons. Process the resulting sequences using bioinformatic pipelines (e.g., PR2 database for protists) to assign taxonomic identities and determine pathogen presence and diversity [15].
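After taxonomic assignment, read counts are usually screened before a pathogen is reported as present. The sketch below shows one simple screen: subtract the reads seen in a negative control, then apply a minimum read threshold. Both the screen and the threshold of 10 reads are assumptions for illustration, not part of the cited protocol, and the read counts are invented.

```python
def call_detections(sample_reads: dict[str, int], blank_reads: dict[str, int],
                    min_reads: int = 10) -> dict[str, int]:
    """Return taxa whose blank-corrected read count meets the threshold."""
    detections = {}
    for taxon, count in sample_reads.items():
        corrected = count - blank_reads.get(taxon, 0)
        if corrected >= min_reads:
            detections[taxon] = corrected
    return detections


# Invented read counts for one water sample and its field blank.
sample = {"Cryptosporidium": 120, "Giardia": 8, "Saprolegnia": 40}
field_blank = {"Saprolegnia": 35}
present = call_detections(sample, field_blank)
```

Stricter or more lenient thresholds trade false positives against false negatives, so the chosen value should be reported alongside the results.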

Figure: Pathogen eDNA/eRNA Continuum for Risk Assessment

Predictive Target 2: Antibiotic Resistance Gene (ARG) Propagation

Protocol 2.1: Quantifying ARG Risk and Connectivity in Soil Resistomes

Principle: Use metagenomic sequencing of soil samples to track the abundance and mobility of high-risk ARGs, assessing their connectivity to human pathogens. This helps predict the environmental drivers of clinical antibiotic resistance [16].

Key Workflow Steps:

- Soil Sampling: Collect composite soil samples from various land-use types (agricultural, urban, pristine).

- Metagenomic Sequencing: Extract total genomic DNA and perform shotgun metagenomic sequencing to capture the entire genetic content, including ARGs.

- ARG Profiling and Risk Ranking: Annotate sequences against ARG databases (e.g., SARG database) using tools like ARGs-OAP. Categorize identified ARGs by risk; Rank I ARGs are defined as those with documented host pathogenicity, high gene mobility, and enrichment in human-associated environments [16].

- Source Tracking and Connectivity Analysis: Use computational tools like FEAST (Fast Expectation-maximization for Microbial Source Tracking) to attribute the origins of soil ARGs (e.g., human feces, livestock, wastewater) [16]. Calculate a "connectivity" metric based on sequence similarity and phylogenetic analysis to quantify gene flow between soil bacteria and clinical isolates (e.g., E. coli) [16].

Quantitative Data on Soil ARG Risk and Connectivity:

Table 1: Key Findings from Global Soil ARG Metagenomic Analysis [16]

| Metric | Finding | Temporal Trend (2008-2021) | Statistical Significance |

|---|---|---|---|

| Relative Abundance of Rank I ARGs | 1.5 copies per 1000 cells in soil | Significant increase (r = 0.89) | p < 0.001 |

| Source Attribution of Soil Rank I ARGs | Human feces (75.4%), Chicken feces (68.3%), WWTP effluent (59.1%) | Not Reported | N/A |

| Genetic Overlap with Clinical E. coli | Increased connectivity over time | Significant increase | p < 0.001 |

| Correlation with Clinical Resistance | R² = 0.40 – 0.89 with regional clinical AMR data | Not Reported | p < 0.001 |
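The temporal trend and correlation entries in the table are Pearson-type statistics. A minimal sketch of how such a trend could be computed is given here; the function name `temporal_trend` and the yearly abundance values are hypothetical placeholders, not data from [16].

```python
import numpy as np

def temporal_trend(years, abundance):
    """Return Pearson r and R^2 between sampling year and ARG abundance."""
    years = np.asarray(years, dtype=float)
    abundance = np.asarray(abundance, dtype=float)
    r = float(np.corrcoef(years, abundance)[0, 1])
    return r, r ** 2

# Hypothetical, illustrative values only (not data from [16]).
years = [2008, 2010, 2012, 2014, 2016, 2018, 2021]
rank1_abundance = [0.6, 0.8, 0.7, 1.0, 1.2, 1.3, 1.5]  # copies per 1000 cells
r, r2 = temporal_trend(years, rank1_abundance)
print(f"r = {r:.2f}, R^2 = {r2:.2f}")
```

A significance test (for example a permutation test on r) would be needed before reporting a p-value; that step is omitted here.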

Protocol 2.2: Single Plasmid Analysis of ARGs using CRISPR/Cas9 and Optical DNA Mapping

Principle: This method directly visualizes and identifies specific ARGs on individual plasmid molecules, providing rapid characterization of mobile genetic elements responsible for the horizontal spread of resistance [17].

Key Workflow Steps:

- Plasmid Extraction: Isolate intact plasmids from bacterial isolates.

- CRISPR/Cas9 Cleavage: Incubate plasmids with the wild-type Cas9 enzyme complexed with a guide RNA (gRNA) designed to be complementary to a specific ARG (e.g., blaCTX-M-15, blaNDM). This linearizes plasmids carrying the target gene at a specific site.

- Optical DNA Mapping: Stain the DNA molecules with a fluorescent dye (YOYO-1) and the AT-binding molecule netropsin. This creates a sequence-dependent intensity barcode along the DNA.

- Nanofluidic Stretching and Imaging: Introduce the sample into a nanofluidic channel device to stretch the DNA molecules linearly. Image them using fluorescence microscopy to obtain their barcodes and lengths.

- Analysis: Molecules linearized by Cas9 will show a consistent break point at the same location on the barcode, confirming the presence of the target ARG on a plasmid of a specific size [17].
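The logic of the analysis step can be sketched as follows: each linear molecule is treated as a circular shift of the plasmid's reference barcode, the best-matching shift gives its break point, and Cas9-specific cleavage shows up as many molecules sharing one break point. This is a simplified sketch, not the analysis used in [17]. It assumes equal-length barcodes, ignores molecule orientation and stretching differences, and uses simulated barcodes; `cut_position`, `consistent_cut_fraction`, and the tolerance of 2 pixels are hypothetical.

```python
import numpy as np

def cut_position(reference, molecule):
    """Circular shift of the reference barcode that best matches a linear molecule."""
    scores = [np.corrcoef(np.roll(reference, -s), molecule)[0, 1]
              for s in range(len(reference))]
    return int(np.argmax(scores))

def consistent_cut_fraction(reference, molecules, tolerance=2):
    """Return the modal cut position and the fraction of molecules cut near it."""
    n = len(reference)
    cuts = np.array([cut_position(reference, m) for m in molecules])
    mode = int(np.bincount(cuts, minlength=n).argmax())
    # Distance is measured around the circle, so positions 0 and n-1 are neighbours.
    dist = np.minimum((cuts - mode) % n, (mode - cuts) % n)
    return mode, float(np.mean(dist <= tolerance))

rng = np.random.default_rng(0)
reference = rng.normal(size=200)   # simulated circular plasmid barcode
true_cut = 57                      # hypothetical Cas9 target position
cas9_cut = [np.roll(reference, -true_cut) + rng.normal(0, 0.2, 200)
            for _ in range(10)]
mode, fraction = consistent_cut_fraction(reference, cas9_cut)
print(f"modal cut position = {mode}, fraction at that site = {fraction:.2f}")
```

Molecules broken at random positions would spread across many shifts, so a low fraction at the modal site would argue against the target gene being present.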

Visualization of Single Plasmid ARG Identification Workflow:

Predictive Target 3: Species Adaptation and Range Shifts

Protocol 3.1: Tracking Invasive Species with eDNA Metabarcoding

Principle: Detect the presence and range expansion of invasive species in vulnerable ecosystems (e.g., the warming Arctic) by identifying their unique eDNA signatures in water samples, providing an early warning before established populations are visually confirmed [18].

Key Workflow Steps:

- Strategic Water Sampling: Collect water samples from strategic locations, such as along shipping routes and in vulnerable ports, to maximize the chance of detecting nascent invasions.

- Filtration and eDNA Extraction: Filter water to capture genetic material and extract eDNA.

- Metabarcoding PCR: Amplify a standardized, taxonomically informative gene region (a "barcode") using primers that can detect a wide array of species.

- High-Throughput Sequencing and Analysis: Sequence the amplicons and compare the resulting sequences to reference databases (e.g., BOLD, GenBank) to identify the species present. This method confirmed the presence of the invasive bay barnacle (Amphibalanus improvisus) in the Canadian Arctic for the first time [18].

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents and Kits for eDNA/eRNA-based Predictive Studies

| Reagent / Kit / Tool | Primary Function | Application Example |

|---|---|---|

| Sterile Membrane Filters (0.22 µm) | Capture of particulate matter and eDNA from water samples during filtration. | Pathogen surveillance in wastewater; invasive species detection in marine water [15] [18]. |

| Commercial eDNA/eRNA Extraction Kits | Isolation of high-quality, inhibitor-free nucleic acids from complex environmental matrices (soil, water, sediment). | All protocols requiring downstream molecular analysis (metabarcoding, metagenomics) [15]. |

| Broad-Range PCR Primers (e.g., 18S rRNA, COI) | Amplification of diagnostic gene regions from diverse taxonomic groups for metabarcoding. | Detection of eukaryotic pathogens and parasites; biodiversity assessment in water samples [15]. |

| SARG Database & ARGs-OAP Pipeline | Reference database and bioinformatic tool for annotating and risk-classifying ARGs from metagenomic data. | Profiling the soil antibiotic resistome and identifying high-risk Rank I ARGs [16]. |

| FEAST Source Tracking Tool | Computational tool for estimating the proportional contributions of source environments to a sink microbial community. | Attributing the origins of ARGs found in soil to human, livestock, or other environmental sources [16]. |

| Cas9 Nuclease & Custom gRNA | Programmable enzyme and guide RNA for targeted cleavage of DNA at sequences complementary to the gRNA. | Linearizing plasmids at the location of specific ARGs (e.g., blaCTX-M, blaKPC) for optical mapping [17]. |

| Nanofluidic Channel Device | Micro-fabricated device for linear stretching of single DNA molecules for microscopy. | Generating optical barcodes of plasmids for sizing and ARG localization [17]. |

The emerging paradigm of predictive evolutionary biology seeks to move beyond retrospective analysis to forecast biological change across measurable timeframes. This framework integrates theoretical models with empirical data—particularly from environmental DNA (eDNA)—to generate testable predictions about evolutionary trajectories. The predictive scope encompasses time scales from contemporary (ecological) to long-term (macroevolutionary) dynamics, with precision determined by the interplay of model selection, data quality, and variable specification. For evolutionary predictions to achieve scientific rigor and practical utility, researchers must clearly define three core components: the temporal domain over which predictions apply, the expected precision of quantitative forecasts, and the evolutionary variables targeted for prediction. This application note establishes protocols for defining this predictive scope within eDNA research, providing a standardized approach for validating evolutionary predictions across diverse biological systems.

Theoretical Foundations: Time Scales and Predictive Windows

Evolutionary forecasting operates across distinct temporal windows defined by detectability limits and parameter stability. Different analytical approaches are optimized for specific time horizons based on the stability of evolutionary parameters and the detectability of signal against background variation.

Table 1: Time Scales and Corresponding Predictive Frameworks in Evolutionary Forecasting

| Time Scale (Generations) | Predictive Framework | Key Evolutionary Variables | Primary Data Sources | Limitations & Considerations |

|---|---|---|---|---|

| Short-term (5-20) | Trait-based models | Phenotypic traits, polygenic scores | Common garden experiments, reciprocal transplants | Assumes stable G-matrix; measures correlated phenotypic responses [19] |

| Medium-term (20-100) | Allele-frequency models | Identifiable loci under selection | Genomic time-series, eDNA metabarcoding | Requires selection to outpace genetic drift and sampling error [19] |

| Long-term (100+) | Composite adaptation scores | Aggregate polygenic scores | Paleogenomics, ancient eDNA, phylogenetic comparison | Projects under novel environments; aggregates many small-effect loci [19] |

| Cross-scale | Macrogenetics | Genetic diversity indices, allele frequencies | Georeferenced genetic databases, eDNA | Links patterns to anthropogenic drivers; enables spatial predictions [20] |

The Ornstein-Uhlenbeck (OU) process provides a unifying quantitative framework for modeling evolutionary trajectories across these time scales. This stochastic process models change in a trait (e.g., gene expression level) across time as: dX_t = σdB_t + α(θ - X_t)dt, where σ represents the rate of drift (Brownian motion), α parameterizes the strength of selection pulling traits toward an optimal value θ, and dB_t denotes random fluctuations [21]. The OU process accurately captures the saturation of expression differences between mammalian species with increasing evolutionary time, reflecting the balance between drift and stabilizing selection [21].
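The saturation behaviour follows from the equation itself: under the OU process the trait variance approaches σ²/(2α) rather than growing without bound. A minimal Euler-Maruyama simulation illustrates this; the function name `simulate_ou`, the step size, and all parameter values are illustrative assumptions, not estimates from [21].

```python
import numpy as np

def simulate_ou(x0, theta, alpha, sigma, dt, n_steps, n_paths, seed=0):
    """Simulate dX_t = sigma*dB_t + alpha*(theta - X_t)*dt; return X at the final time."""
    rng = np.random.default_rng(seed)
    x = np.full(n_paths, float(x0))
    for _ in range(n_steps):
        # Brownian increment over dt has standard deviation sqrt(dt).
        dB = rng.normal(0.0, np.sqrt(dt), size=n_paths)
        x = x + alpha * (theta - x) * dt + sigma * dB
    return x

# Illustrative parameters (assumptions): optimum 2.0, selection 1.0, drift 1.0.
theta, alpha, sigma = 2.0, 1.0, 1.0
final = simulate_ou(x0=0.0, theta=theta, alpha=alpha, sigma=sigma,
                    dt=0.01, n_steps=1000, n_paths=20000)
print(f"simulated mean      = {final.mean():.3f} (optimum theta = {theta})")
print(f"simulated variance  = {final.var():.3f}")
print(f"stationary variance = {sigma**2 / (2 * alpha):.3f}")
```

Setting α = 0 in the same function recovers pure Brownian motion, whose variance keeps growing linearly with time.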

Essential Variables and Quantitative Frameworks

Core Evolutionary Variables for Prediction

The predictive capacity of evolutionary models depends on selecting appropriate response variables that capture meaningful biological change:

- Genetic Diversity Metrics: Allelic richness, heterozygosity, and genetic differentiation (F_ST) serve as essential indicators of evolutionary potential. The mutation-area relationship (MAR) provides a power-law framework for predicting genetic diversity loss with habitat reduction, analogous to species-area relationships [20].

- Allele Frequency Dynamics: Changes at specific loci under selection provide the most direct measurement of contemporary evolution. Studies in Mimulus guttatus demonstrate that allele frequency changes can be quantitatively predicted from fitness measurements, with male selection in one generation predicting allele frequency changes in the next [22].

- Gene Expression Profiles: Expression levels evolve under stabilizing and directional selection, with the OU model parameterizing the distribution of optimal expression levels [21]. Comparative expression data across species enables detection of deleterious expression in clinical samples and identification of lineage-specific adaptations.

- Effective Population Size (N_e): Determines the relative strength of selection versus drift and directly influences the rate of adaptive evolution [20].
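Two of the diversity metrics listed above can be computed directly from allele frequencies. The sketch below uses the standard expected heterozygosity 2p(1 - p) and the heterozygosity-based definition F_ST = (H_T - H_S) / H_T for a single biallelic locus. It assumes equal-sized subpopulations and applies no sample-size correction; the function names and example frequencies are illustrative.

```python
def expected_heterozygosity(p):
    """Expected heterozygosity at a biallelic locus with allele frequency p."""
    return 2.0 * p * (1.0 - p)

def fst(subpop_freqs):
    """F_ST = (H_T - H_S) / H_T for one biallelic locus, equal-sized subpopulations."""
    k = len(subpop_freqs)
    p_bar = sum(subpop_freqs) / k
    h_t = expected_heterozygosity(p_bar)        # total-population heterozygosity
    if h_t == 0.0:
        return 0.0                              # locus is fixed everywhere
    h_s = sum(expected_heterozygosity(p) for p in subpop_freqs) / k
    return (h_t - h_s) / h_t

# Hypothetical allele frequencies in two subpopulations.
print(f"F_ST = {fst([0.2, 0.8]):.2f}")
```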

Precision Metrics and Validation Approaches

The precision of evolutionary predictions must be quantified using standardized metrics:

Table 2: Precision Metrics for Evolutionary Predictions

| Prediction Type | Validation Approach | Precision Metrics | Application Examples |

|---|---|---|---|

| Allele Frequency Change | Correlation between predicted and observed Δp | R², mean squared error, confidence interval coverage | Prediction of allele frequency changes in Mimulus guttatus populations (R² = 0.63 for male selection SNPs) [22] |

| Genetic Diversity Loss | Comparison of observed versus predicted heterozygosity | Absolute error, proportional deviation | Macrogenetic predictions of 6% genetic diversity loss since the Industrial Revolution [20] |

| Species Presence/Absence | eDNA detection versus traditional surveys | Sensitivity, specificity, F1 score | Marine non-indigenous species (NIS) detection with fine mesh tow nets (92% detection rate) [23] |

| Expression Level Optimization | Comparison to clinical outcomes | ROC curves, likelihood ratios | Identification of deleterious expression levels in patient data using optimal distributions from OU models [21] |
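Several of the precision metrics in the table reduce to a few lines of arithmetic. The sketch below computes R² (here taken as the squared Pearson correlation) and mean squared error for quantitative predictions, and sensitivity, specificity, and F1 for presence/absence calls. Treating the traditional survey as the reference standard is an assumption, and the function names are illustrative.

```python
import numpy as np

def regression_metrics(predicted, observed):
    """R^2 (squared Pearson correlation) and mean squared error."""
    predicted = np.asarray(predicted, dtype=float)
    observed = np.asarray(observed, dtype=float)
    r = np.corrcoef(predicted, observed)[0, 1]
    mse = np.mean((predicted - observed) ** 2)
    return {"r2": float(r ** 2), "mse": float(mse)}

def detection_metrics(edna_detected, survey_detected):
    """Sensitivity, specificity, and F1 of eDNA calls against a reference survey."""
    e = np.asarray(edna_detected, dtype=bool)
    s = np.asarray(survey_detected, dtype=bool)
    tp = int(np.sum(e & s))
    fp = int(np.sum(e & ~s))
    fn = int(np.sum(~e & s))
    tn = int(np.sum(~e & ~s))
    nan = float("nan")
    return {
        "sensitivity": tp / (tp + fn) if tp + fn else nan,
        "specificity": tn / (tn + fp) if tn + fp else nan,
        "f1": 2 * tp / (2 * tp + fp + fn) if 2 * tp + fp + fn else nan,
    }

# Hypothetical calls for five species (1 = detected, 0 = not detected).
print(detection_metrics([1, 1, 0, 0, 1], [1, 0, 0, 1, 1]))
```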

Experimental Protocols for eDNA-Based Evolutionary Forecasting

Protocol: Aquatic eDNA Sampling for Biodiversity Monitoring

Purpose: Standardized collection of aquatic eDNA samples for biodiversity monitoring and temporal tracking of evolutionarily relevant parameters.

Materials:

- Hollow-membrane filtration cartridges (e.g., RKS laboratories systems)

- Sterivex filters (as industry standard comparison)

- Programmable pump controller with flow meter

- Air pump and ozone generator for decontamination

- 8-filter manifold for parallel processing

- DNA preservation buffer (e.g., Longmire's buffer)

- Cold chain maintenance equipment (-20°C storage)

Procedure:

- Site Selection: Choose sampling locations representing habitats of interest (e.g., 12 locations across 4 geographic areas as in Irish coastal waters study [23]).

- Filtration Setup: Assemble the filtration system with up to 8 hollow-membrane cartridges. These allow a six-fold increase in filtration volume and a three-fold increase in filtration speed compared to Sterivex filters [24].

- Water Processing: Filter 1L to 10L of water per sample, depending on turbidity. Record filtration volume and time precisely.

- Sample Preservation: Immediately after filtration, add DNA preservation buffer to cartridges and store at -20°C.

- Field Controls: Include field blanks (purified water processed identically to samples) to monitor contamination.

- Metadata Collection: Document GPS coordinates, temperature, salinity, UV exposure, and sediment composition, as these parameters influence eDNA recovery [23].

Validation: Conduct a workshop with technical staff who have no prior eDNA experience to evaluate ease of deployment and the success of independent sample collection [24].

Protocol: Temporal Sampling for Allele Frequency Tracking

Purpose: Direct measurement of allele frequency changes (Δp) across generations to validate evolutionary predictions.

Materials:

- Reduced-representation sequencing supplies (MSG-RADseq)

- 187 full genome sequences from reference panel [22]

- Low-coverage whole genome sequencing platform

- Haplotype matching computational pipeline

Procedure:

- Baseline Sampling: In Generation 1 (2013), sample flowering adults (n=1936) and genotype them using MSG-RADseq reduced-representation sequencing [22].

- Progeny Sampling: Collect and genotype random progeny from each adult to infer male gamete allele frequencies.

- Next Generation Sampling: In Generation 2 (2014), sample three cohorts: (i) germinated but non-reproductive individuals, (ii) successfully flowering adults, and (iii) their progeny [22].

- Genotype Inference: Apply "haplotype matching" technique—aligning variants to 187 full genome sequences from the population—to derive genotype probabilities for SNPs within 15,360 genic regions [22].

- Selection Estimation: Use likelihood-based selection component models generalized to accommodate uncertain genotype calls to estimate male selection differentials.

- Prediction Validation: Test correlation between male selection in 2013 and observed allele frequency changes in 2014.

Validation: Method successfully predicted allele frequency changes at 587 SNPs with p < 10^-5 in Mimulus guttatus [22].
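The link between male selection and next-generation allele frequency can be made explicit with a simple selection-components calculation. Under Mendelian inheritance, the offspring allele frequency is the average of the frequencies in successful male and female gametes. The sketch below is a simplification rather than the likelihood model of [22]: it assumes no selection through female function unless a female gamete frequency is supplied, and it ignores viability selection and drift. Function names and frequencies are hypothetical.

```python
def predicted_next_gen_frequency(p_adults, p_male_gametes, p_female_gametes=None):
    """Offspring allele frequency as the mean of male and female gamete frequencies."""
    if p_female_gametes is None:
        # Assumption: female gametes carry the allele at the adult frequency.
        p_female_gametes = p_adults
    return 0.5 * (p_male_gametes + p_female_gametes)

def predicted_delta_p(p_adults, p_male_gametes, p_female_gametes=None):
    """Predicted allele frequency change from adults to the next generation."""
    return predicted_next_gen_frequency(
        p_adults, p_male_gametes, p_female_gametes) - p_adults

# Hypothetical SNP: frequency 0.40 in adults, 0.46 among successful male gametes.
print(f"predicted delta p = {predicted_delta_p(0.40, 0.46):+.3f}")
```

Because only half of each offspring genome is paternal, a male selection differential of 0.06 translates into a predicted change of 0.03 in this simplified setting.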

Visualization Frameworks

Predictive Workflow Integration

Time Scale Windows for Prediction

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents and Platforms for Evolutionary Forecasting

| Reagent/Platform | Function | Application Example | Performance Metrics |

|---|---|---|---|

| Hollow-membrane filtration cartridges | eDNA concentration from aquatic environments | Modular water sampling systems for diverse environments | 6× increased filtration volume, 3× faster filtration vs. Sterivex [24] |

| MSG-RADseq reagents | Reduced-representation genome sequencing | Genotyping of 1936 experimental plants for allele frequency estimation [22] | Cost-effective genome-wide SNP discovery without full genome sequencing |

| Haplotype matching pipeline | Genotype inference from low-coverage sequencing | Alignment to 187 full genome references for improved prediction accuracy [22] | Essential for accurate Δp prediction in natural populations |

| Ornstein-Uhlenbeck model framework | Parameterization of expression evolution | Quantifying stabilizing selection on gene expression across 17 mammalian species [21] | Models both drift (σ) and selective strength (α) toward optimum (θ) |

| Fine mesh tow nets (60μm) | Marine organism collection for NIS detection | Biodiversity monitoring in Irish coastal waters [23] | Most cost-efficient for large-scale eDNA metabarcoding surveys |

| Genetic Essential Biodiversity Variables (EBVs) | Standardized genetic diversity metrics | Tracking progress toward Kunming-Montreal Global Biodiversity Framework targets [20] | Scalable metrics for global genetic diversity assessment |

The predictive scope in evolutionary biology is expanding from theoretical possibility to practical application through integrated approaches that define explicit time scales, precision expectations, and target variables. The protocols and frameworks presented here establish a foundation for validating evolutionary predictions using eDNA methodologies. Critical to this endeavor is the recognition that different predictive windows require distinct modeling approaches—from trait-based forecasts over 5-20 generations to allele-frequency projections across 20-100 generations and composite adaptation scores for century-scale predictions [19]. The integration of macrogenetic patterns with process-based models will enable more accurate forecasting of biodiversity responses to global change [20], while technological advances in eDNA sampling increase the spatial and temporal resolution of monitoring [24] [23]. As validation studies demonstrate increasingly accurate prediction of allele frequency changes [22] and expression evolution [21], evolutionary biology transitions from a historical science to a predictive one, with profound implications for conservation, medicine, and fundamental biological understanding.

Environmental DNA (eDNA) analysis has emerged as a transformative tool for ecological monitoring, yet its application as a rigorous instrument for validating evolutionary predictions remains an emerging frontier. This protocol details an integrated workflow from sample collection to bioinformatic analysis, specifically designed to generate high-quality data suitable for testing evolutionary hypotheses. By incorporating recent advances in sampling technology, inhibition removal, and high-fidelity amplification, we present a standardized methodology that enables researchers to move beyond biodiversity snapshots to capture the molecular signals of evolutionary processes in action.

The power of eDNA analysis extends far beyond species inventories. When applied within a temporal framework, eDNA becomes a potent tool for observing evolutionary dynamics directly, allowing researchers to test predictions about population adaptation to environmental change. Sediment cores containing preserved eDNA serve as natural archives, enabling the reconstruction of population genomic histories over extended timescales [25]. This paleogenomic approach provides an unprecedented opportunity to identify adaptive mutations, trace allele frequency changes, and determine whether adaptive responses originate from new mutations or standing genetic variation, which are key predictions in evolutionary models [25]. The protocols detailed herein establish the technical foundation for these investigations, with particular emphasis on methods that maximize DNA yield, minimize contamination, and ensure data reproducibility for temporal comparisons.

Experimental Protocols & Workflows

Sample Collection and Filtration

Modular Water Sampling Systems: For marine and freshwater environments, employ modular sampling systems that utilize hollow-membrane (HM) filtration cartridges. These systems typically combine pumps, a programmable controller, and multiple filters for parallel processing [24].

- Procedure:

- Deploy the filtration system in the target aquatic environment (from creeks to open ocean)

- Filter water through HM filtration cartridges, which allow a six-fold increase in filtration volume and a three-fold increase in filtration speed compared to standard Sterivex filters [24]

- In turbid waters, implement pre-filtration through polypropylene filters with pore sizes of 840, 200, 50, or 10 μm to prevent clogging and reduce PCR inhibitors [26]

- Preserve filters with appropriate preservation buffers (e.g., containing benzalkonium chloride) [26]

- Store filters at -20°C until DNA extraction

Temporal Sampling for Evolutionary Studies: For studies investigating evolutionary processes, incorporate sediment coring to access historical DNA archives. Date sediment layers using established methods such as 210Pb and 137Cs isotope analysis or 14C dating for older samples [25].
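For recent sediments, 210Pb dating rests on radioactive decay of unsupported (excess) 210Pb with depth. A minimal sketch of the simplest age model is shown here. It assumes a constant initial 210Pb concentration at the sediment surface, so that age = ln(A_surface / A_layer) / λ, with a half-life of about 22.3 years. The function name and activity values are hypothetical, and real cores usually need more elaborate models and validation against 137Cs markers.

```python
import math

PB210_HALF_LIFE_YEARS = 22.3
DECAY_CONSTANT = math.log(2) / PB210_HALF_LIFE_YEARS  # per year

def layer_age_years(surface_activity, layer_activity):
    """Age of a sediment layer from unsupported 210Pb activities (same units)."""
    if surface_activity <= 0 or layer_activity <= 0:
        raise ValueError("activities must be positive")
    return math.log(surface_activity / layer_activity) / DECAY_CONSTANT

# Hypothetical activities (e.g., Bq/kg): a layer with a quarter of the surface value.
print(f"estimated age = {layer_age_years(100.0, 25.0):.1f} years")
```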

DNA Extraction and Inhibition Removal

Bead-Based Extraction Protocol:

- Extract DNA using automated magnetic bead-based systems (e.g., KingFisher system) for high-throughput processing [27]

- Process samples with inhibitor removal kits (e.g., Zymo OneStep PCR Inhibitor Removal Kit) when working with complex samples from turbid or humic-rich environments [27]

- Quantify DNA concentration using fluorometric methods, with expected yields typically ranging from 1.84 ng/μL to 25.8 ng/μL for estuarine samples [27]

Validation: Compare extraction efficiency between bead-based and silica-column-based methods (e.g., QIAGEN kits) to ensure consistent performance across sample types [27].

PCR Amplification and Target Enrichment

PCR Setup for Challenging Samples:

- Polymerase Selection: Use high-fidelity, inhibitor-resistant DNA polymerases (e.g., Platinum SuperFi II) for improved specificity and reduction of off-target amplification [27]

- Primer Selection: For fish communities, employ multiplexed MiFish primer sets (MiFish-U and MiFish-E) [27]. For fungal communities, carefully select ITS primers based on taxonomic focus, as different primers show biases toward specific taxonomic groups (e.g., ITS1-F biases toward basidiomycetes) [28]

- PCR Protocol: Implement touchdown programs and consider primer multiplexing to enhance specificity and coverage [27]
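A touchdown program lowers the annealing temperature by a fixed step each cycle until it reaches the final temperature, then holds there for the remaining cycles. The sketch below generates such a schedule. The function name and the temperatures, step, and cycle counts are illustrative assumptions, not values from [27]; actual settings depend on the primers and polymerase used.

```python
import math

def touchdown_schedule(start_temp, final_temp, step, plateau_cycles):
    """Annealing temperature (degrees C) for each cycle of a touchdown program."""
    if step <= 0 or start_temp < final_temp:
        raise ValueError("need step > 0 and start_temp >= final_temp")
    # Number of cycles run above the final temperature (small epsilon guards
    # against floating-point overshoot in the division).
    n_touchdown = math.ceil((start_temp - final_temp) / step - 1e-9)
    touchdown = [round(start_temp - i * step, 2) for i in range(n_touchdown)]
    plateau = [float(final_temp)] * plateau_cycles
    return touchdown + plateau

# Hypothetical program: 65 C down to 55 C in 1 C steps, then 25 cycles at 55 C.
schedule = touchdown_schedule(start_temp=65.0, final_temp=55.0,
                              step=1.0, plateau_cycles=25)
print(len(schedule), "cycles:", schedule[:12], "...")
```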

Mitigating PCR Biases: For fungal ITS amplification, analyze different primer combinations or multiple ITS subregions in parallel to account for taxonomic biases introduced by primer mismatches [28].

Sequencing and Data Analysis

Library Preparation and Sequencing:

- For metabarcoding approaches, utilize a two-step tailed PCR approach for library preparation [26]

- Incorporate dual-indexed sequences for sample multiplexing

- Sequence on appropriate platforms (e.g., Illumina MiSeq for short fragments; nanopore sequencing for epigenetic modifications) [26] [5]

Age Estimation via Epigenetic Analysis: For age structure analysis—critical for evolutionary studies of population dynamics—leverage third-generation sequencing to detect DNA methylation patterns in eDNA:

- Perform amplification-free nanopore sequencing to detect various modification types (e.g., cytosine and adenosine methylation) across the genome [5]

- Develop species-specific epigenetic clocks using mitochondrial genome methylation patterns, which have demonstrated accuracy of 2.6 days (Median Absolute Error) in fish larvae [5]
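An epigenetic clock is, at its simplest, a regression of known age on methylation levels at selected sites, evaluated by the median absolute error on held-out individuals. The sketch below fits an ordinary least-squares clock to simulated data. The number of sites, slopes, and noise level are hypothetical and are not taken from [5]; published clocks typically use penalized regression over many more sites.

```python
import numpy as np

def fit_clock(methylation, ages):
    """Least-squares fit of age on methylation fractions (with intercept)."""
    X = np.column_stack([np.ones(len(ages)), methylation])
    coef, *_ = np.linalg.lstsq(X, ages, rcond=None)
    return coef

def predict_age(coef, methylation):
    X = np.column_stack([np.ones(methylation.shape[0]), methylation])
    return X @ coef

def median_absolute_error(true_ages, predicted_ages):
    diff = np.asarray(true_ages, float) - np.asarray(predicted_ages, float)
    return float(np.median(np.abs(diff)))

# Simulated data: 60 larvae, 5 sites whose methylation drifts linearly with age.
rng = np.random.default_rng(1)
ages = rng.uniform(0, 30, size=60)                       # age in days
slopes = np.array([0.010, 0.012, 0.015, 0.018, 0.020])   # hypothetical
methylation = 0.2 + np.outer(ages, slopes) + rng.normal(0, 0.02, size=(60, 5))

coef = fit_clock(methylation[:40], ages[:40])            # train on 40 individuals
mae = median_absolute_error(ages[40:], predict_age(coef, methylation[40:]))
print(f"held-out median absolute error = {mae:.2f} days")
```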

Data Presentation

Quantitative Comparison of eDNA Filtration Methods

Table 1: Performance comparison of filtration methodologies for eDNA studies

| Filtration Method | Max Filtration Volume | Filtration Speed | Ideal Application | Limitations |

|---|---|---|---|---|

| Hollow-Membrane Cartridges | 6x Sterivex | 3x Sterivex | Large-volume marine sampling | Higher initial equipment cost |

| Sterivex Filters | 1x (baseline) | 1x (baseline) | Standard freshwater applications | Limited volume for clear water |

| Pre-filtration + Glass Microfiber | Varies with pre-filter | Reduced clogging | Turbid waters, high inhibitor environments | Additional processing step |

PCR Optimization Strategies for Complex Samples

Table 2: Approaches for overcoming PCR inhibition in environmental samples

| Method | Protocol | Effectiveness | Cost Consideration |

|---|---|---|---|

| Column-based Inhibitor Removal | Zymo OneStep PCR Inhibitor Removal Kit | High removal of humic substances | Moderate additional cost |

| Polymerase Selection | Platinum SuperFi II | Improved specificity, reduced off-target | Higher reagent cost |

| Pre-filtration | Polypropylene filters (10-840 μm) | Reduces turbidity and inhibitors | Low additional cost |

| Touchdown PCR | Progressive annealing temperature reduction | Enhanced specificity for mixed templates | No additional cost |

Evolutionary Analysis Applications

Table 3: Evolutionary insights from temporal eDNA analysis

| Analysis Type | Molecular Target | Evolutionary Insight | Technical Requirements |

|---|---|---|---|

| Paleogenomics | Whole mitochondrial genome | Historical demographic changes | Sediment cores, dating capabilities |

| Adaptive Trajectory Analysis | Nuclear SNPs under selection | Allele frequency changes over time | Whole genome sequencing, temporal samples |

| Epigenetic Aging | Methylation patterns | Population age structure | Nanopore sequencing, reference genomes |

| Community Shifts | Multi-taxa barcodes | Response to environmental change | Metabarcoding, reference databases |

Visualization

Workflow Diagram: From eDNA Collection to Evolutionary Insight

PCR Optimization Decision Diagram

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential reagents and materials for eDNA-based evolutionary studies

| Item | Function | Application Notes |

|---|---|---|

| Hollow-Membrane Filtration Cartridges | High-volume eDNA concentration | Enables 6x filtration volume of standard methods [24] |

| Magnetic Bead-Based Extraction Kits | High-throughput DNA purification | Compatible with robotic systems; reduces cross-contamination [27] |

| PCR Inhibitor Removal Kits | Removal of humic substances and inhibitors | Critical for turbid water and sediment samples [26] [27] |

| Platinum SuperFi II DNA Polymerase | High-fidelity amplification | Reduces off-target amplification in complex samples [27] |

| MiFish Primer Sets | Universal fish metabarcoding | Multiplex versions available for enhanced coverage [27] |

| ITS Primers (Various) | Fungal community analysis | Select based on taxonomic focus due to primer biases [28] |

| Zymo OneStep PCR Inhibitor Removal Kit | Column-based inhibition removal | Effective for estuarine samples with known inhibition [27] |

| DNeasy Blood and Tissue Kit | Standardized DNA extraction | Well-established protocol for eDNA filters [26] |

| Agencourt AMPure XP Beads | PCR purification | Cleanup prior to library preparation [26] |

The integrated workflow presented here provides a robust framework for employing eDNA analysis as a validated tool for testing evolutionary predictions. By addressing technical challenges from sample collection through data analysis, these protocols enable researchers to generate reproducible, high-quality data suitable for investigating microevolutionary processes across temporal scales. The convergence of improved sampling methodologies, sensitive molecular techniques, and temporal sampling designs positions eDNA analysis as a powerful approach for bridging the historical gap between theoretical predictions and empirical validation in evolutionary biology.

From Sample to Sequence: eDNA Methodologies for Evolutionary Analysis

Environmental DNA (eDNA) analysis has revolutionized our ability to validate evolutionary predictions by providing a non-invasive tool to monitor biodiversity, track species distributions, and reconstruct historical ecosystems. This genetic material, shed by organisms into their environment through skin cells, feces, mucus, and other biological debris, offers a powerful lens through which to test hypotheses about evolutionary relationships, adaptive radiation, and biogeographical patterns [2]. The reliability of these scientific inquiries, however, is fundamentally contingent upon the initial steps of field collection and preservation, which ensure the integrity and representativeness of the DNA obtained from various matrices. This document provides detailed application notes and protocols for the collection and preservation of eDNA from water, soil, air, and other unique matrices, framed within the context of a broader thesis on validating evolutionary predictions with environmental DNA research.

Water eDNA Collection and Preservation

Sampling Strategies and Filtration Optimization

The detection of aquatic taxa, including fish and amphibians, via eDNA is highly dependent on effective filtration strategies. The choice of filter pore size and sampling approach directly impacts the volume of water processed and the subsequent yield of target DNA, which is critical for robust evolutionary analyses.

Table 1: Comparison of eDNA Filtration Strategies for Aquatic Monitoring

| Filter Pore Size | Sample Volume | Target Organisms | Key Advantages | Key Limitations | Suitability for Evolutionary Studies |

|---|---|---|---|---|---|

| 0.22 µm [29] | Small volumes (e.g., ≤ 1L) | Microbes, general community DNA | Captures very small particles; standard for microbial studies. | Prone to clogging in turbid water; processes smaller volumes. | High for microbial evolution and paleogenomics. |

| 0.45 µm [12] | ~1L (common standard) | General community DNA, some macroorganisms | Widespread use allows for meta-study comparisons. | Can co-capture excessive microbial DNA, diluting macro-fauna target. | Moderate, but potential for off-target amplification. |

| 5 µm [12] [29] | Large volumes (e.g., 3L) | Macroorganisms (e.g., fish, amphibians) | Maximizes target-to-total DNA ratio for vertebrates; enables sample pooling. | May miss smaller DNA fragments or very small organisms. | High for vertebrate evolutionary studies (e.g., fish, frogs). |

| 64 µm [29] | Very large volumes (>3000 L) | Large macroorganisms, rare species | Can detect rare species by filtering immense volumes. | Specialized equipment required; not suitable for all environments. | Specific applications for detecting rare/elusive species. |

Research demonstrates that for vertebrate taxa like anurans (frogs and toads), using a 5 µm filter pore size significantly increases the likelihood of detection compared to smaller pore sizes (e.g., 0.22 µm) [29]. This is because larger pore sizes are less susceptible to clogging from suspended particulates, allowing for a greater volume of water to be filtered and thereby increasing the probability of capturing trace amounts of vertebrate eDNA. Furthermore, a larger pore size selectively captures the larger DNA particles typically associated with macroorganisms, thereby improving the target-to-total DNA ratio and reducing the co-extraction of overwhelming quantities of non-target microbial DNA [12]. This is particularly advantageous for evolutionary studies focusing on specific vertebrate lineages.

Detailed Protocol: Water eDNA Collection for Vertebrate Studies

Application: This protocol is optimized for detecting vertebrate species (e.g., fish, amphibians) in freshwater ecosystems such as wetlands, streams, and lakes to map biodiversity and test phylogeographic hypotheses [3] [29] [27].

Experimental Workflow:

Materials:

- Field Equipment: GPS unit, sterile sampling bottles, waterproof datasheets.

- Filtration System: Peristaltic pump or manual syringe system; 5 µm filter membranes (e.g., Smith-Root filter); filter housings.

- Preservation Supplies: Silica gel desiccant packets or Longmire's preservation buffer; sterile forceps; 2 mL cryovials.

- Personal Protective Equipment (PPE): Nitrile gloves, safety glasses.

Methodology:

- Site Selection & Replication: Based on the evolutionary hypothesis (e.g., testing for the presence of a predicted endemic lineage), select sampling sites. To account for eDNA heterogeneity, collect water from multiple locations (e.g., 5) within each site [29].

- Sample Collection: Don clean nitrile gloves. Collect water from just below the surface (< 20 cm). For a pooled strategy, combine sub-samples from the multiple locations into a single container.

- Filtration: Using a pump or syringe, pass the water sample (target volume: 3L) through a 5 µm filter membrane. Record the final volume filtered. Change gloves between sites to prevent cross-contamination.

- Preservation: Using sterile forceps, carefully remove the filter from the housing. Place the filter in a preservation tube containing either silica gel or Longmire's buffer. Ensure the tube is tightly sealed.

- Documentation & Transport: Label all samples with unique IDs, date, and location. Store samples in a cool, dark container for transport to the lab. For silica-preserved filters, freezing at -20°C is recommended for long-term storage.
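The pooling and documentation steps above lend themselves to a small bookkeeping sketch. The Python below is illustrative only: the `WaterSample` record, its field names, and the `issues` checks are hypothetical, and the thresholds simply restate the protocol's values (3 L target volume, collection within 20 cm of the surface, silica or Longmire's preservation).

```python
from dataclasses import dataclass
from typing import List

# Values restated from the protocol text; adjust for your own study design.
TARGET_VOLUME_L = 3.0   # target volume to filter per pooled sample
MAX_DEPTH_CM = 20.0     # water is collected just below the surface (< 20 cm)
PRESERVATIVES = {"silica", "longmire"}


def subsample_volume_l(n_locations: int, target_l: float = TARGET_VOLUME_L) -> float:
    """Volume to take at each location so the pooled sample reaches the target."""
    if n_locations < 1:
        raise ValueError("need at least one sampling location")
    return target_l / n_locations


@dataclass
class WaterSample:
    """Hypothetical field record for one filtered water sample."""
    sample_id: str
    site: str
    date: str                 # e.g. "2024-05-17"
    depth_cm: float
    volume_filtered_l: float  # final volume actually filtered
    preservative: str         # "silica" or "longmire"

    def issues(self) -> List[str]:
        """Return deviations from the protocol as readable strings (empty if none)."""
        problems = []
        if not 0 <= self.depth_cm < MAX_DEPTH_CM:
            problems.append("depth outside the 0-20 cm surface layer")
        if self.volume_filtered_l < TARGET_VOLUME_L:
            problems.append("filtered volume below the 3 L target")
        if self.preservative not in PRESERVATIVES:
            problems.append("unknown preservative")
        return problems


if __name__ == "__main__":
    # Per-location volume when five sub-samples are pooled into one 3 L sample.
    print(subsample_volume_l(5))
    sample = WaterSample("W-001", "Pond A", "2024-05-17", 10.0, 2.1, "silica")
    print(sample.issues())
```

Recording the volume actually filtered, rather than assuming the target was met, keeps later detection comparisons between sites honest when filters clog early.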

Soil eDNA Collection and Preservation

Sampling Design and Contaminant Management

Soil is a complex matrix rich in microbial and invertebrate life, but it also contains PCR inhibitors like humic and fulvic acids that can compromise downstream genetic analyses [30] [31]. A structured sampling design is therefore critical for obtaining representative data.

Table 2: Soil eDNA Sampling Techniques for Biodiversity Studies

| Technique | Description | Spatial Coverage | Key Benefit | Application in Evolutionary Studies |
|---|---|---|---|---|
| Grid Sampling [30] | Divides area into uniform grids; samples collected at intersections. | High within a defined area. | Captures ~80% of spatial variability; ideal for fine-scale genetic structure. | Testing local adaptation and microevolution in soil fauna/microbiomes. |
| Transect Sampling [30] | Samples collected at intervals along a straight line. | Linear, good for gradients. | Detects ~15% more variation than random points; excellent for ecotones. | Studying genetic clines across environmental gradients (e.g., altitude, salinity). |
| Stratified Sampling [30] | Area divided into strata (e.g., by soil type); each stratum is sampled separately. | Targeted across distinct sub-areas. | Improves accuracy by ~20% in heterogeneous environments. | Comparing evolutionary histories of conspecific populations in different habitats. |
| Composite Sampling [30] | Combines 10-15 sub-samples from an area into one representative sample. | Broad, composite of an area. | Reduces analysis costs by 30% while maintaining ~90% accuracy. | Broad-scale biogeographical studies and metabarcoding for community phylogenetics. |
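To make the first three designs in Table 2 concrete, the sketch below generates sampling coordinates for a rectangular plot. It is a simplified illustration, not a method from the cited work: the function names, the metre-based coordinate system, and the representation of strata as bounding boxes are all assumptions made for the example.

```python
import random
from typing import Dict, List, Tuple

Point = Tuple[float, float]
Box = Tuple[float, float, float, float]  # (xmin, ymin, xmax, ymax) in metres


def grid_points(width_m: int, height_m: int, spacing_m: int) -> List[Point]:
    """Grid sampling: one point at every grid intersection."""
    if spacing_m <= 0:
        raise ValueError("spacing must be positive")
    return [(float(x), float(y))
            for x in range(0, width_m + 1, spacing_m)
            for y in range(0, height_m + 1, spacing_m)]


def transect_points(start: Point, end: Point, n_points: int) -> List[Point]:
    """Transect sampling: evenly spaced points along a straight line."""
    if n_points < 2:
        raise ValueError("a transect needs at least two points")
    (x0, y0), (x1, y1) = start, end
    step = 1.0 / (n_points - 1)
    return [(x0 + (x1 - x0) * i * step, y0 + (y1 - y0) * i * step)
            for i in range(n_points)]


def stratified_points(strata: Dict[str, Box], per_stratum: int,
                      seed: int = 0) -> Dict[str, List[Point]]:
    """Stratified sampling: random points drawn separately inside each stratum."""
    rng = random.Random(seed)  # fixed seed so a field plan is reproducible
    return {
        name: [(rng.uniform(xmin, xmax), rng.uniform(ymin, ymax))
               for _ in range(per_stratum)]
        for name, (xmin, ymin, xmax, ymax) in strata.items()
    }


if __name__ == "__main__":
    print(len(grid_points(100, 50, 25)))           # number of grid intersections
    print(transect_points((0.0, 0.0), (100.0, 0.0), 5))
    print(stratified_points({"clay": (0, 0, 50, 50), "sand": (50, 0, 100, 50)}, 3))
```

Real strata are rarely rectangular; in practice the boxes would be replaced by polygons from a soil or habitat map.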

Detailed Protocol: Soil eDNA Collection for Metagenomic Studies

Application: This protocol is designed for extracting high-quality, inhibitor-free DNA from soil for metagenomic sequencing, enabling studies of microbial evolution, ancient sediment DNA, and soil food web interactions [31].

Materials:

- Soil Collection Tools: Metal soil corer or trowel (sterilizable), ruler, sterile Whirl-Pak bags or 50 mL conical tubes.

- Sterilization Supplies: 10% bleach solution, 70% ethanol, distilled water, field torch (for flaming).

- Documentation & Storage: GPS, field notebook, permanent marker, coolers with ice packs or dry ice, -20°C freezer.

Methodology:

- Site Stratification & Replication: Define the sampling area and strategy based on the research question. A minimum of 10-20 samples per 20 acres is recommended to capture 85% of variability [30].

- Equipment Sterilization: Clean all tools with 10% bleach, followed by 70% ethanol, and rinse with distilled water between each sample. Flaming with a field torch is also effective.

- Sample Collection: Using a sterilized corer, collect soil to a standardized depth (e.g., 0-6 cm for surface-dwelling organisms). Place the core into a sterile bag. Record GPS coordinates, depth, and habitat characteristics.

- Composite Sampling (if applicable): For a composite sample, combine soil from 10-15 randomly selected sub-samples within the defined area into a single sterile bag.

- Preservation & Transport: Soil samples should be immediately placed on ice or dry ice in the field and frozen at -20°C or -80°C upon return to the laboratory to halt microbial activity and DNA degradation.
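The replication and compositing guidance above can be turned into a quick planning aid. The sketch below assumes that the "10-20 samples per 20 acres" recommendation scales linearly with area, which the source does not state, and uses hypothetical function names throughout.

```python
import math
import random
from typing import List, Sequence, Tuple

Point = Tuple[float, float]


def recommended_sample_range(area_acres: float) -> Tuple[int, int]:
    """Scale the 10-20 samples per 20 acres guideline linearly (an assumption)."""
    if area_acres <= 0:
        raise ValueError("area must be positive")
    blocks = area_acres / 20.0
    return math.ceil(10 * blocks), math.ceil(20 * blocks)


def pick_composite_points(candidates: Sequence[Point], k: int = 12,
                          seed: int = 0) -> List[Point]:
    """Randomly choose k sub-sample locations (10-15) to pool into one composite."""
    if not 10 <= k <= 15:
        raise ValueError("the protocol suggests 10-15 sub-samples per composite")
    if k > len(candidates):
        raise ValueError("not enough candidate locations")
    return random.Random(seed).sample(list(candidates), k)


if __name__ == "__main__":
    print(recommended_sample_range(50))  # planning range for a 50-acre site
    candidates = [(float(x), float(y)) for x in range(10) for y in range(10)]
    print(pick_composite_points(candidates, k=12))
```

Fixing the random seed means the same sub-sample locations can be regenerated later when documenting how a composite was built.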

Air and Unique Matrices

Air eDNA

Airborne eDNA is an emerging field with great potential for monitoring terrestrial biodiversity, including insects, birds, and mammals. While standardized protocols are still under development, the core principle involves filtering large volumes of air. Sampling often uses high-volume air pumps equipped with filters (e.g., 0.2-0.45 µm) to capture airborne particles. Preservation typically involves storing the filter in a sterile tube with a preservation buffer, similar to water eDNA protocols, followed by freezing.

Unique Matrices: Ancient Dental Calculus

Dental calculus (mineralized plaque) is a unique matrix that provides a long-term record of an individual's oral microbiome and dietary intake, offering profound insights into human and animal evolution, health, and migration [32].

Key Consideration: The choice of DNA extraction and library preparation methods significantly impacts the recovery of ancient DNA (aDNA) from calculus. No single protocol is universally best; optimization is required based on the preservation state of the sample [32].

- DNA Extraction: The two primary methods are the QG method (silica-based binding with guanidinium thiocyanate) and the PB method (sodium acetate/isopropanol/guanidinium hydrochloride), with the latter being more effective for recovering highly degraded DNA fragments shorter than 50 bp [32].

- Library Preparation: Both double-stranded (DSL) and single-stranded (SSL) library methods are used. SSL methods, despite being more costly and time-consuming, can provide higher yields of ultrashort DNA fragments, which are characteristic of aDNA [32].

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents for eDNA Field Collection and Preservation

| Reagent / Kit | Matrix | Function | Rationale |
|---|---|---|---|
| Silica Gel Desiccant | Water, Air | Preserves DNA on filters by rapid dehydration. | Stabilizes DNA at ambient temperature for weeks, crucial for remote fieldwork. |
| Longmire's Buffer | Water, Air | Lysis and preservation buffer for filters. | Immediately lyses cells and stabilizes DNA, preventing degradation. |
| Sodium EDTA [31] | Soil | Pre-lysis washing agent. | Chelating agent that helps release microbial cells from the soil matrix, improving yield. |
| SDS (Sodium Dodecyl Sulfate) [31] | Soil | Lysis agent in DNA extraction. | Ionic detergent that disrupts cell membranes and nuclei, releasing DNA. |
| CaCl₂ (Calcium Chloride) [31] | Soil | Chemical flocculant. | Precipitates and removes humic acid contaminants (PCR inhibitors) during extraction. |
| Zymo OneStep PCR Inhibitor Removal Kit [27] | Water (Turbid) | Post-extraction clean-up. | Critical for removing PCR inhibitors (e.g., humic acids) common in turbid estuarine or soil samples. |
| Platinum SuperFi II DNA Polymerase [27] | All (challenging samples) | PCR amplification. | High-fidelity, inhibitor-tolerant enzyme that enhances specificity and reduces off-target amplification. |
| Phenol-Chloroform-Isoamyl Alcohol [12] | All | DNA extraction and purification. | Maximizes total DNA recovery but may co-extract inhibitors; decision to use depends on target. |

The rigorous collection and preservation of environmental DNA from diverse matrices form the foundational step in a robust research pipeline aimed at validating evolutionary predictions. The protocols outlined here—from optimizing filter pore size for aquatic vertebrates to implementing stratified soil sampling and handling ancient dental calculus—are designed to maximize the quality and interpretability of genetic data. By carefully selecting and applying these standardized methods, researchers can confidently generate the high-fidelity eDNA data required to test complex hypotheses about speciation, adaptation, and the historical dynamics of biodiversity on Earth.

In the pursuit of novel bioactive compounds, biosynthetic gene clusters (BGCs) represent a prime target for genomic exploration, especially within complex environmental samples. These clusters encode the machinery for producing diverse natural products with applications ranging from antibiotics to anticancer agents. The choice of sequencing technology (short-read, long-read, or a hybrid approach) directly influences the completeness and accuracy of BGC reconstruction, thereby impacting downstream discovery efforts. Each strategy presents distinct trade-offs between sequence accuracy, contiguity, and cost, making the selection process critical for researchers aiming to validate evolutionary predictions through environmental DNA research. This section provides a structured comparison of these technologies and offers practical protocols for their application in BGC assembly.

Technology Comparison: Performance Metrics and Trade-offs

Direct Performance Comparison of Sequencing Strategies

Extensive benchmarking reveals that no single sequencing strategy excels across all performance metrics. The optimal choice depends on the specific research goals, whether prioritizing the quantity of recovered genomes, their quality, or the completeness of specific genomic regions like BGCs.

Table 1: Comparative Performance of Sequencing Strategies for Metagenomic Assembly

| Performance Metric | Short-Read (Illumina) | Long-Read (PacBio HiFi) | Hybrid (Short-Read + Long-Read) |
|---|---|---|---|
| Contiguity (N50) | Lower (e.g., ~700 bp in soil) [33] | Highest (e.g., 37,986-47,542 bp in soil) [33] | Intermediate, but higher than short-read alone [33] |
| Number of Contigs | Highest | Lowest | Lower than short-read alone [34] |
| Assembly Accuracy | High | High (for HiFi) | High (after polishing) |
| BGC Reconstruction | Fragmented; struggles with repetitive regions [35] [36] | Excellent; long reads span repetitive BGCs [35] [37] | Longest assemblies; high mapping rate to bacterial genomes [34] |
| Quantity of Reconstructed Genomes (Bins) | Highest (e.g., with 40 Gbp data) [34] | Requires deeper sequencing for comparable quantity [34] | Cost-effective for high-quality bins [38] |
| Cost per Data Unit | Lowest | Higher | Intermediate (dependent on mix) |

Analysis of Trade-offs and Strategic Implications

The data from comparative studies indicate several key trade-offs. Short-read sequencing is highly cost-effective for recovering a large number of metagenome-assembled genomes (MAGs) and excels in base-level accuracy [34]. However, its fundamental limitation is fragmentation, particularly problematic for BGCs which are often lengthy and contain repetitive sequences [35] [36]. Consequently, short-read assemblies often yield BGCs that are incomplete or split across multiple contigs.

Conversely, long-read technologies like PacBio HiFi generate highly contiguous assemblies, producing the highest N50 statistics and lowest contig counts [34] [33]. This allows them to span entire repetitive regions, resolving complex BGCs that are intractable to short-read technologies [37]. The primary barriers have been higher cost and the deeper sequencing required to recover a number of MAGs comparable to short-read projects [34].

The hybrid approach seeks to balance these trade-offs. It leverages long reads to create a scaffold for contiguity and short reads to polish for accuracy. This strategy has been shown to yield the longest assemblies and the highest mapping rates to bacterial genomes [34], making it a powerful and often cost-efficient method for comprehensive BGC exploration [38].
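Because contiguity is compared throughout this section in terms of N50 and contig counts, it helps to see how those statistics are computed. The sketch below uses the standard definition of N50 (the length of the shortest contig in the smallest set of longest contigs that together cover at least half of the assembly); the function names and the toy contig lengths are illustrative and are not data from the cited benchmarks.

```python
from typing import Dict, Iterable


def n50(contig_lengths: Iterable[int]) -> int:
    """Return the N50 of an assembly given its contig lengths in bp."""
    lengths = sorted(contig_lengths, reverse=True)
    if not lengths:
        raise ValueError("no contigs supplied")
    half_total = sum(lengths) / 2
    running = 0
    for length in lengths:
        running += length
        if running >= half_total:
            return length
    return lengths[-1]  # unreachable for positive lengths; kept for safety


def assembly_summary(contig_lengths: Iterable[int]) -> Dict[str, int]:
    """Contig count, total length, and N50 for quick side-by-side comparison."""
    lengths = list(contig_lengths)
    return {"contigs": len(lengths), "total_bp": sum(lengths), "n50": n50(lengths)}


if __name__ == "__main__":
    # Toy examples: a fragmented assembly versus a more contiguous one.
    fragmented = [2, 2, 2, 3, 3, 4, 8, 8]
    contiguous = [100, 200, 300, 400]
    print(assembly_summary(fragmented))
    print(assembly_summary(contiguous))
```

N50 rewards long contigs but says nothing about correctness, which is why the table reports accuracy as a separate metric.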

Recommended Experimental Protocols

Protocol 1: Cost-Effective Hybrid Assembly for GC-Rich Actinobacteria

This protocol is optimized for sequencing GC-rich actinobacteria, prolific BGC producers, using a multiplexed Nanopore-Illumina workflow that reduces costs by over 50% compared to PacBio-based approaches [38].

Step 1: DNA Extraction

- Use a standard phenol:chloroform DNA extraction method [38]. High-molecular-weight DNA is critical for long-read sequencing.

Step 2: Multiplexed Library Preparation and Sequencing

- Oxford Nanopore Sequencing: Use the Rapid Barcoding Kit (SQK-RBK004) to multiplex up to 12 genomes on a single MinION flow cell.

- Illumina Sequencing: Use a multiplexing kit (e.g., plexWell 96) to prepare libraries for short-read sequencing. This ensures uniform coverage across samples with varying GC content.

Step 3: Hybrid Assembly and Polishing

- Perform initial assembly of Nanopore reads using a long-read assembler like Flye (v2.8).

- Polish the resulting assembly with the Illumina short reads. Use 4 rounds of polishing with tools like Pilon or NextPolish, as the most significant quality improvements are seen in the first few rounds before saturation occurs [38].

Step 4: BGC Identification

- Annotate the polished, high-quality genomes using BGC prediction platforms such as antiSMASH or PRISM [35] [39].
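Steps 3 and 4 of this protocol could be scripted roughly as follows. The sketch only builds the shell commands and does not run them. It assumes Flye, BWA, samtools, Pilon, and antiSMASH are installed and on the PATH, that Pilon is available through a `pilon` wrapper script (a jar-based install would use `java -jar pilon.jar` instead), and that short reads are mapped with BWA-MEM, which the protocol does not specify. All file and directory names are hypothetical.

```python
from typing import List


def hybrid_assembly_commands(nanopore_reads: str, illumina_r1: str, illumina_r2: str,
                             out_dir: str = "hybrid_out", rounds: int = 4,
                             threads: int = 8) -> List[str]:
    """Build the shell commands for long-read assembly, polishing, and BGC annotation."""
    # Step 3a: long-read assembly with Flye (writes assembly.fasta in its output dir).
    commands = [
        f"flye --nano-raw {nanopore_reads} --out-dir {out_dir}/flye --threads {threads}"
    ]
    draft = f"{out_dir}/flye/assembly.fasta"

    # Step 3b: repeated short-read polishing; each round polishes the previous output.
    for i in range(1, rounds + 1):
        bam = f"{out_dir}/round{i}.bam"
        commands += [
            f"bwa index {draft}",
            f"bwa mem -t {threads} {draft} {illumina_r1} {illumina_r2} "
            f"| samtools sort -o {bam} -",
            f"samtools index {bam}",
            f"pilon --genome {draft} --frags {bam} --output round{i} "
            f"--outdir {out_dir}/pilon",
        ]
        draft = f"{out_dir}/pilon/round{i}.fasta"

    # Step 4: BGC prediction on the final polished assembly.
    commands.append(
        f"antismash --genefinding-tool prodigal --output-dir {out_dir}/antismash {draft}"
    )
    return commands


if __name__ == "__main__":
    for cmd in hybrid_assembly_commands("ont.fastq", "R1.fastq", "R2.fastq"):
        print(cmd)
```

Generating the commands as text first makes it easy to review the plan or hand it to a workflow manager before committing compute time.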

Protocol 2: Metagenomic Assembly from Complex Soil Samples

For highly complex samples like soil, a hybrid strategy combining PacBio and Illumina data maximizes gene pool coverage and assembly integrity [33].

Step 1: DNA Sequencing

- Sequence the same soil DNA extract using both PacBio RS II/Sequel IIe (for long reads) and Illumina NovaSeq (for short reads) platforms.

Step 2: Combined Data Assembly

- Assemble the metagenome using a hybrid assembler that can take both data types simultaneously (e.g., metaSPAdes with the --pacbio flag). This approach generates more contigs than long-read-only and longer contigs than short-read-only assemblies [34] [33].

Step 3: Functional Analysis

- Use the resulting hybrid PacBio-Illumina (PI) assembly for BGC discovery and metabolic pathway analysis. It significantly enlarges the accessible gene pool compared to either method alone, providing a more complete resource for both [33].
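The combined assembly in Step 2 of this protocol might be launched along these lines. This is a minimal sketch that assumes SPAdes is installed with `spades.py` on the PATH and that the Illumina data form a single paired-end library; the file names, output directory, and thread count are placeholders, and the options should be checked against the manual for the installed SPAdes version.

```python
import shlex
from typing import List


def metaspades_hybrid_command(r1: str, r2: str, pacbio_reads: str,
                              out_dir: str = "metaspades_out",
                              threads: int = 16) -> List[str]:
    """Argument list for a metaSPAdes run with paired short reads plus PacBio reads."""
    return [
        "spades.py", "--meta",     # metagenomic mode (metaSPAdes)
        "-1", r1, "-2", r2,        # paired-end Illumina reads
        "--pacbio", pacbio_reads,  # long reads used alongside the short reads
        "-o", out_dir,
        "-t", str(threads),
    ]


if __name__ == "__main__":
    cmd = metaspades_hybrid_command("soil_R1.fastq.gz", "soil_R2.fastq.gz",
                                    "soil_pacbio.fastq.gz")
    # Print the command for review; pass `cmd` to subprocess.run to execute it.
    print(shlex.join(cmd))
```

Keeping the command as an argument list avoids shell-quoting problems if file paths contain spaces.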

The Scientist's Toolkit: Essential Research Reagents