Validating Molecular Dynamics Convergence: A Comprehensive Guide for Reliable Biomolecular Simulations

Abstract

This article provides a comprehensive framework for validating convergence in molecular dynamics (MD) simulations, a critical step for ensuring the reliability and reproducibility of results in biomedical research and drug development. It addresses the foundational challenge of distinguishing between true thermodynamic equilibrium and insufficient sampling, which can otherwise invalidate simulation findings. We systematically explore a suite of validation methods, from essential geometric metrics and advanced statistical analyses to critical comparisons with experimental data such as NMR and SAXS. The guide further offers practical troubleshooting strategies for challenging systems like intrinsically disordered proteins and outlines best practices for reporting, empowering researchers to achieve statistically robust and biologically meaningful convergence in their simulations.

Understanding Convergence: Why Your Simulation's Validity Depends on It

In molecular dynamics (MD) simulations, the assumption that a system has reached thermodynamic equilibrium is fundamental for the reliability of computed properties. However, convergence—the sufficient sampling of a system's phase space—remains one of the most significant, yet often overlooked, challenges. Relying solely on the plateau of properties like root-mean-square deviation (RMSD) can be misleading, potentially invalidating simulation results [1]. This guide compares modern methods for assessing convergence, moving beyond simple metrics to provide researchers with robust validation protocols.

Defining Convergence and Equilibrium in MD

In the context of MD, equilibrium refers to the state where a system's properties no longer exhibit a directional drift and fluctuate around a stable average. Convergence specifically means that the simulation has sampled a representative portion of the system's conformational space such that the calculated average of a property is sufficiently close to its true thermodynamic average [1].

A critical working definition for an "equilibrated" property is as follows: given a trajectory of length T and a property Aᵢ measured from it, the running average 〈Aᵢ〉(t), calculated from time 0 to t, must show only small fluctuations around its final value 〈Aᵢ〉(T) for a significant portion of the trajectory after a convergence time t_c [1]. It is vital to recognize that a system can be in a state of partial equilibrium, where some properties (e.g., local distances relevant to biological function) have converged, while others (e.g., transition rates to rare conformations or the full free energy) have not [1].
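As a minimal sketch of this working definition, the Python snippet below computes the running average of a property and finds the first frame after which it stays within a relative tolerance of its final value. The function names and the 5% default tolerance are illustrative assumptions, not taken from [1].

```python
from itertools import accumulate


def running_average(values):
    """Cumulative mean <A>(t) of a property sampled at equal time intervals."""
    return [total / (i + 1) for i, total in enumerate(accumulate(values))]


def convergence_index(values, rel_tol=0.05):
    """First frame index after which the running average stays within
    rel_tol of its final value. Returns None if the final average is zero,
    because a relative tolerance is undefined in that case."""
    avg = running_average(values)
    final = avg[-1]
    if final == 0:
        return None
    t_c = 0
    for i, a in enumerate(avg):
        if abs(a - final) > rel_tol * abs(final):
            t_c = i + 1  # the last violation pushes t_c past this frame
    return t_c


# Hypothetical property values (e.g. a distance in Å) from ten frames.
series = [10, 8, 6, 5, 5, 5, 5, 5, 5, 5]
t_c = convergence_index(series)
fraction_converged = (len(series) - t_c) / len(series)
```

Whether the fraction of the trajectory after t_c counts as "significant" remains a judgment call that should be reported alongside the tolerance used.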

Beyond RMSD: A Comparison of Convergence Assessment Methods

The following table summarizes key methods for assessing convergence, highlighting their applications and limitations.

| Method | Core Principle | Best For | Key Limitations |
|---|---|---|---|
| RMSD / Potential Energy [1] | Monitoring the stability of structural or energetic time-series. | Initial equilibration checks; simple, stable systems. | Misleading for systems with surfaces/interfaces; plateau does not guarantee true convergence [1] [2]. |
| Linear Density (DynDen) [2] | Tracking the convergence of the linear partial density profiles of all system components along an axis. | Systems with interfaces, layers, or inhomogeneous densities (e.g., membranes, materials surfaces). | Primarily validates spatial distribution convergence; less informative on specific functional dynamics. |
| Auto-Correlation Functions (ACF) [1] | Measuring the time a property "remembers" its previous state; convergence implies ACF decay. | Assessing whether dynamical properties (e.g., residue motion) have been sampled long enough. | Difficult to define a universal convergence threshold; can indicate non-equilibrium on very long timescales [1]. |
| Property-Specific Running Averages [1] | Calculating the cumulative average of a property (e.g., radius of gyration, distance) throughout the trajectory. | Determining if specific, biologically relevant metrics have stabilized, enabling partial equilibrium validation. | A converged running average does not guarantee the system has escaped a deep local energy minimum [1]. |
| Validation vs. Experiment [3] [4] | Comparing simulation-derived observables (e.g., SAXS, NMR) with experimental data. | Providing external, quantitative validation that the simulated ensemble matches reality. | Agreement with experiment can be non-unique; different ensembles may yield similar averages [3]. |
| AI-Predicted Distributions (DiG) [5] | Using deep learning to predict a system's equilibrium distribution of structures from its sequence or chemical graph. | Rapidly sampling diverse conformations and estimating state densities, much faster than conventional MD. | A predictive model; accuracy depends on training data and the quality of the underlying energy function. |

Experimental Protocols for Convergence Validation

Protocol for DynDen-Based Convergence Analysis

DynDen was developed specifically to address the failure of RMSD in systems with interfaces [2].

Research Reagent Solutions:

Methodology:

- System Preparation: Run your MD simulation as usual, ensuring the trajectory is saved for analysis.

- Axis Definition: Identify the direction (typically the z-axis) normal to the interface or layered structure in your system.

- Density Calculation: Use DynDen to compute the one-dimensional number density of each component (e.g., water, ions, lipid headgroups) along the defined axis as a function of simulation time.

- Convergence Assessment: The simulation is considered converged when the density profiles for all components no longer show a systematic drift and only fluctuate stochastically. DynDen quantifies this by reporting on the convergence of the correlation between density profiles over time [2].
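The idea behind the steps above can be sketched with plain NumPy. This is not DynDen's API: the function names, the windowing scheme, and the choice of the final window as the reference profile are assumptions made for illustration only. The sketch bins positions along the interface normal into a density profile per frame, averages the profiles over time windows, and reports the Pearson correlation of each window with the last one.

```python
import numpy as np


def density_profiles(z_coords, box_length, n_bins=50):
    """Number density per unit length along one axis.

    z_coords: array of shape (n_frames, n_atoms) with positions along the
    interface normal. Returns an array of shape (n_frames, n_bins)."""
    edges = np.linspace(0.0, box_length, n_bins + 1)
    bin_width = box_length / n_bins
    return np.array([np.histogram(frame, bins=edges)[0] / bin_width
                     for frame in z_coords])


def correlation_to_final(profiles, window=10):
    """Pearson correlation between each window-averaged density profile
    and the profile of the final window."""
    n_windows = len(profiles) // window
    blocks = profiles[:n_windows * window]
    blocks = blocks.reshape(n_windows, window, -1).mean(axis=1)
    return np.array([np.corrcoef(block, blocks[-1])[0, 1] for block in blocks])
```

A correlation that rises and then stays close to 1 for every component suggests the spatial distribution has stopped drifting; in practice the z coordinates would come from a trajectory reader such as MDAnalysis, one array per component.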

Protocol for Multi-Property and Experimental Validation

This methodology emphasizes that convergence is property-dependent and benefits from external validation [1] [3] [4].

Research Reagent Solutions:

- Simulation Software: AMBER, GROMACS, NAMD, OpenMM [3] [6].

- Analysis Tools: MD analysis packages (e.g., built-in tools, MDAnalysis) and forward model calculators (e.g., for predicting NMR chemical shifts or SAXS profiles from structures).

- Experimental Data: NMR order parameters, J-couplings, Small-Angle X-Ray Scattering (SAXS) data, or chemical probing data [4].

Methodology:

- Multi-Trajectory Simulations: For a given system, run multiple independent simulations (replicas) starting from different initial velocities [3].

- Property Monitoring: For each replica, track the running average of multiple properties:

  - Structural: RMSD of the backbone and specific domains.

  - Energetic: Total and potential energy.

  - Biologically Relevant: Distances between functional residues, radius of gyration, or dihedral angles.

- Inter-Replica Comparison: Compare the distributions of your key properties across all independent replicas. If the simulations are converged, all replicas should sample the same distribution of states, and their property averages should agree within statistical error.

- Experimental Comparison: "Forward-calculate" experimental observables from your simulation ensemble and compare them directly to real experimental data. A good agreement increases confidence that the simulation has sampled a physically accurate and converged ensemble [3] [4].
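One way to make the inter-replica comparison quantitative is the Gelman-Rubin potential scale reduction factor, which compares the variance between replica means with the variance within replicas. This diagnostic is a substitution on our part; the cited protocols do not prescribe it, and the function name is hypothetical. A minimal sketch, assuming equal-length replicas of one scalar property:

```python
from math import sqrt
from statistics import mean, variance


def gelman_rubin(replicas):
    """Potential scale reduction factor for a scalar property sampled in
    several equal-length replicas. Values close to 1 suggest the replicas
    sample the same distribution; larger values indicate disagreement."""
    n = len(replicas[0])
    if len(replicas) < 2 or any(len(r) != n for r in replicas):
        raise ValueError("need at least two replicas of equal length")
    within = mean(variance(r) for r in replicas)         # mean within-replica variance
    between = n * variance([mean(r) for r in replicas])  # scaled variance of replica means
    pooled = (n - 1) / n * within + between / n
    return sqrt(pooled / within)
```

Because successive MD frames are correlated, the frames passed in should be subsampled at roughly the decorrelation time; otherwise the within-replica variance is estimated from far fewer independent samples than the frame count suggests.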

The Scientist's Toolkit: Key Research Reagents

| Tool / Reagent | Function in Convergence Analysis |
|---|---|
| DynDen [2] | Python tool for assessing convergence in simulations of interfaces and layered systems via linear density profiles. |
| MDAnalysis [2] [7] | A Python library for analyzing MD trajectories, used to manipulate coordinates and compute various properties. |
| ECToolkits [7] | An open-source Python package used for analyzing properties like water density profiles at interfaces. |
| Maximum Entropy Reweighting [4] | A quantitative statistical method to bias a simulated ensemble to match experimental data, helping to identify and correct sampling deficiencies. |
| Distributional Graphormer (DiG) [5] | A deep learning model that predicts the equilibrium distribution of molecular structures, providing a fast alternative for sampling and density estimation. |

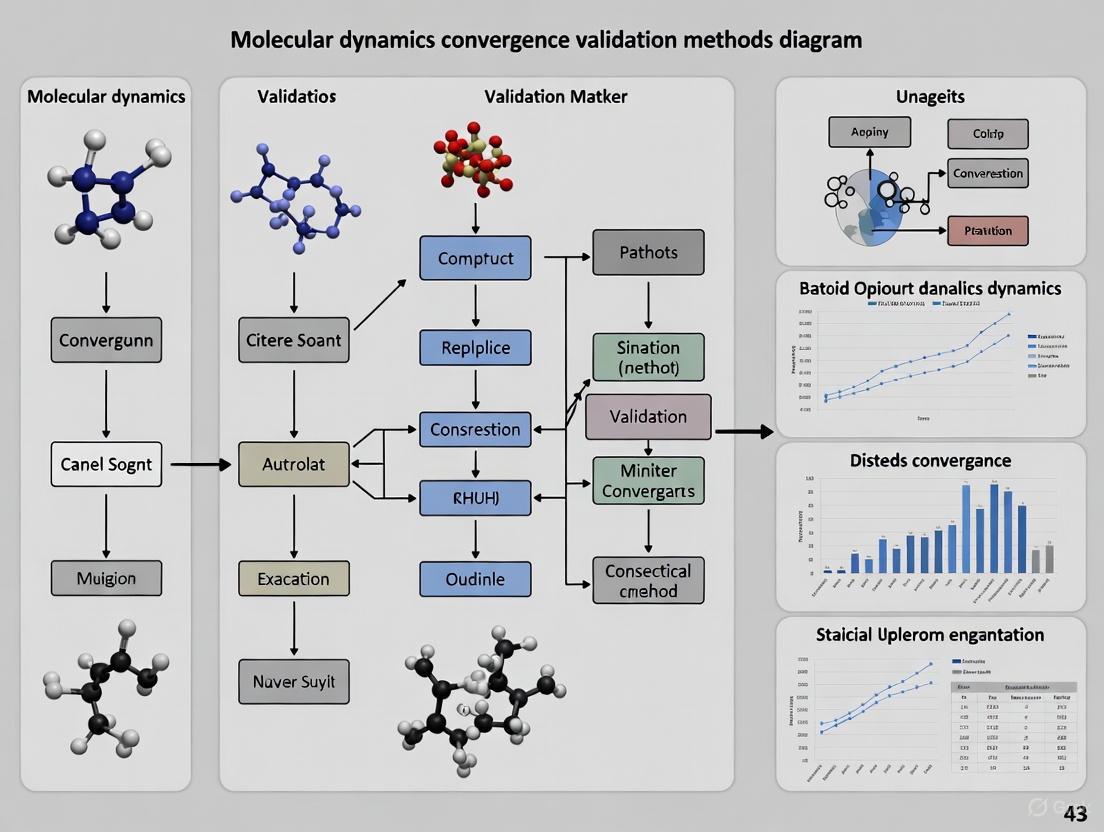

Workflow Visualization: Convergence Assessment Pathways

The following diagram illustrates the logical decision process for a robust convergence assessment strategy.

Convergence Assessment Workflow

Moving beyond simple plateaus in RMSD or energy is essential for credible MD simulations. As demonstrated, a multi-faceted approach is necessary: using specialized tools like DynDen for complex systems, monitoring property-specific running averages, comparing multiple replicas, and, wherever possible, validating against experimental data. The emergence of AI-based methods like DiG offers a promising path for rapidly estimating equilibrium distributions. By adopting these rigorous validation protocols, researchers can significantly enhance the reliability of their molecular simulations in drug development and basic research.

In molecular dynamics (MD), the "critical sampling problem" refers to the significant computational challenge of simulating a biomolecular system long enough and thoroughly enough to explore all its biologically relevant conformations. Proteins are not static entities; they exist as dynamic ensembles of interconverting structures, and their functions often depend on accessing rare, transient states that are separated by high energy barriers [8] [9]. The core of the problem is twofold: first, the conformational space of even a small protein is astronomically large, and second, the timescales of functionally important motions (e.g., slow conformational changes in allosteric proteins or the folding of intrinsically disordered proteins) can range from microseconds to seconds, far beyond what is routinely accessible by conventional MD simulations [10] [3]. This sampling limitation means that a simulation might provide an accurate picture of one conformational basin but completely miss others that are critical for understanding biological mechanism or for drug design.

The necessity to validate convergence—the point at which a simulation has adequately sampled the representative configurations of the system—is therefore paramount. Without robust validation, simulation results may be misleading, reflecting only the initial conditions or a limited subset of possible states rather than the true thermodynamic ensemble [11] [12]. This guide objectively compares the performance of modern methods and tools developed to assess and overcome the sampling problem, providing researchers with a framework for validating their molecular dynamics simulations.

Established vs. Emerging Convergence Validation Methods

A fundamental step in any MD workflow is determining when a simulation has reached equilibrium and has sampled a sufficient portion of the conformational landscape. The methods for assessing this range from traditional and often subjective measures to modern, automated, and quantitative tools.

Table 1: Comparison of Convergence Assessment Methods

| Method | Core Principle | Key Advantages | Documented Limitations | Typical Application Context |
|---|---|---|---|---|
| Root Mean Square Deviation (RMSD) | Measures average atomic displacement of a structure relative to a reference. | Intuitive; simple to calculate; standard output of MD packages. | Highly subjective in interpretation [12]; unsuitable for systems with interfaces [2]; can mask underlying heterogeneity. | Initial, quick assessment of structural stability. |
| Root Mean Square Fluctuation (RMSF) | Measures fluctuation of each atom around its average position. | Identifies flexible and rigid regions within a protein structure. | Does not report on convergence of the entire system; a local measure. | Analyzing flexibility of specific domains or loops. |
| DynDen [2] | Tracks convergence of the linear partial density profile of all system components. | Objective criterion; particularly effective for interfaces and layered materials. | Relatively new method; less established in general-purpose biomolecular simulation. | Simulations of surfaces, membranes, and heterogeneous systems. |
| Principal Component Analysis (PCA) | Identifies large-amplitude, collective motions from the covariance matrix of atomic positions. | Reduces dimensionality; reveals dominant motions in the ensemble. | Requires significant post-processing; the first few components may not capture full complexity. | Extracting essential dynamics from long trajectories. |
| Deep Learning (ICoN) [10] | Uses an autoencoder to learn a low-dimensional latent space representing conformational changes from MD data. | Rapidly generates novel, thermodynamically stable conformations beyond training data. | Requires initial MD data for training; complexity of model setup. | Enhanced sampling of highly dynamic systems like IDPs. |

The Limitations of Traditional RMSD Analysis

For years, the visual inspection of RMSD plots has been a common practice for determining simulation convergence. The underlying assumption is that once the system reaches a stable "plateau" in its RMSD, it has equilibrated. However, a landmark study demonstrated that this method is fundamentally unreliable and subjective [12]. In a survey where scientists were asked to identify the point of equilibrium in randomized RMSD plots, there was no mutual consensus, and their decisions were severely biased by factors such as the color and y-axis scaling of the plot. This finding strongly indicates that the scientific community should not rely on RMSD plots alone to discuss equilibration.

Furthermore, RMSD is a global metric that can be insensitive to specific types of changes. For instance, in systems featuring surfaces and interfaces, RMSD has been shown to be an "unsuitable convergence descriptor" because it can fail to capture important structural reorganizations at the interface [2].

Advanced and System-Specific Tools

To address the shortcomings of RMSD, more robust tools have been developed. DynDen is one such tool designed specifically for assessing convergence in simulations of interfaces and layered materials [2]. Its solution is based on monitoring the convergence of the linear partial density profile correlation for each component in the system. This provides an objective and effective criterion for these challenging systems, moving beyond global measures to a more granular analysis.

For a more holistic view of the conformational ensemble, Principal Component Analysis (PCA) is widely used. PCA works by diagonalizing the covariance matrix of atomic positions to extract the principal modes of motion—the directions in which the protein moves with the largest amplitude. By projecting the MD trajectory onto these first few principal components, researchers can visualize the free energy landscape and identify major conformational basins. However, converging a PCA can be challenging, as the slowest, most functionally relevant motions may be missed if the simulation is too short.
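The diagonalization step can be sketched in a few lines of NumPy. The function name is hypothetical, and the sketch assumes the frames have already been superposed on a common reference so that overall rotation and translation do not dominate the covariance.

```python
import numpy as np


def pca_projection(coords, n_components=2):
    """Project a trajectory onto its leading principal components.

    coords: array of shape (n_frames, n_atoms, 3), already aligned.
    Returns (projections, explained_variance_ratio)."""
    flat = coords.reshape(len(coords), -1)       # (n_frames, 3 * n_atoms)
    centered = flat - flat.mean(axis=0)          # remove the average structure
    cov = np.cov(centered, rowvar=False)         # covariance of atomic positions
    eigvals, eigvecs = np.linalg.eigh(cov)       # eigenvalues in ascending order
    order = np.argsort(eigvals)[::-1][:n_components]
    projections = centered @ eigvecs[:, order]   # coordinates along each PC
    return projections, eigvals[order] / eigvals.sum()
```

A simple convergence check is to histogram the projections from the first and second halves of the trajectory separately: if the two halves occupy different regions of the PC1/PC2 plane, the slow motions have not yet been sampled adequately.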

A New Paradigm: Integrating Machine Learning for Enhanced Sampling

Artificial intelligence is rapidly transforming the field of MD by providing powerful new strategies to tackle the sampling problem. A prime example is the Internal Coordinate Net (ICoN), a generative deep learning model that learns the physical principles of conformational changes from existing MD simulation data [10].

Experimental Protocol: Deep Learning for Conformational Sampling

The workflow for AI-enhanced sampling, as demonstrated with ICoN, involves a structured, multi-step process [10]:

- Initial MD Simulation: A (relatively short) conventional MD simulation is first run to generate a baseline set of conformational data. For instance, ICoN was successfully trained using just 1% of a full MD dataset for the highly dynamic Aβ42 monomer.

- Molecular Representation: The Cartesian coordinates from the MD trajectory are converted into an internal coordinate representation. ICoN uses a novel vector Bond-Angle-Torsion (vBAT) representation, which elegantly handles the periodicity of dihedral angles and is inherently invariant to molecular rotation and translation.

- Model Training: An autoencoder neural network is trained to compress the high-dimensional vBAT data into a low-dimensional (e.g., 3D) latent space. Through iterative training, the model learns the essential physical principles governing the protein's motions.

- Conformation Generation: New, synthetic conformations are generated by interpolating between points in the trained latent space. This allows for the efficient exploration of conformational regions that were not present in the original training data, effectively leaping over high energy barriers.

- Validation: The generated structures are validated by reconstructing them back to Cartesian coordinates and comparing them to original structures (e.g., achieving heavy-atom RMSD < 1.3 Å for Aβ42 [10]) and by checking for the presence of known, biologically relevant interactions that were not in the training set.
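To see why the representation step matters, consider the periodicity of dihedral angles: torsions of 179° and -179° are nearly identical physically but differ by 358 as raw numbers. The sketch below shows the general unit-vector (cosine, sine) encoding that removes this discontinuity. It is not ICoN's vBAT implementation, and the function names are hypothetical.

```python
import math


def encode_torsion(angle_deg):
    """Map a torsion angle to a point on the unit circle (cos, sin)."""
    rad = math.radians(angle_deg)
    return (math.cos(rad), math.sin(rad))


def torsion_distance(a_deg, b_deg):
    """Euclidean distance between two encoded torsions; ranges from 0
    (identical angles) to 2 (angles 180 degrees apart)."""
    return math.dist(encode_torsion(a_deg), encode_torsion(b_deg))


near = torsion_distance(179.0, -179.0)   # small: the angles differ by only 2 degrees
far = torsion_distance(0.0, 180.0)       # maximal separation on the circle
```

A network trained on such encoded inputs sees neighbouring conformations as neighbours, which raw angle values in the range -180° to 180° do not guarantee.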

Performance Comparison of AI vs Traditional MD

The ICoN model demonstrated its power by rapidly identifying thousands of new, conformationally distinct, and thermodynamically stable conformations for the amyloid-β42 monomer in a few minutes on a single gaming GPU [10]. Critically, its analysis revealed novel conformations with important interactions, such as a specific Asp23-Lys28 salt bridge, that were not included in the original training data but have been reported in experimental literature. This ability to extrapolate and discover biologically relevant states marks a significant advance over traditional sampling methods.

Experimental Validation: The Cornerstone of Reliable Sampling

Ultimately, the most compelling validation for any MD simulation comes from its agreement with experimental data. Simulations should be able to reproduce and predict experimental observables. Key techniques for this validation include:

- Nuclear Magnetic Resonance (NMR): Provides atomic-resolution data on dynamics and can measure parameters like chemical shifts, spin relaxation, and residual dipolar couplings that report on conformational ensembles [9].

- Cryo-Electron Microscopy (Cryo-EM): Advanced cryo-EM techniques, including time-resolved cryo-EM and cryo-electron tomography (Cryo-ET), can capture multiple conformational states of large complexes in near-native conditions, providing a direct structural benchmark for simulation ensembles [9].

- Small-Angle X-Ray Scattering (SAXS): Provides low-resolution information about the overall shape and dimensions of a biomolecule in solution, which is a sensitive probe of whether a simulated ensemble accurately represents the true global structure [8].

A comprehensive study comparing four different MD packages (AMBER, GROMACS, NAMD, and ilmm) highlighted that while most packages reproduced experimental data well at room temperature, the underlying conformational distributions and extent of sampling differed [3]. This underscores a critical point: agreement with a single experimental average does not guarantee the conformational ensemble is correct. Multiple diverse ensembles can yield the same average. Therefore, validation should strive to match as many different experimental observables as possible.
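A toy illustration of this non-uniqueness, using made-up radius-of-gyration values rather than data from [3]: two very different ensembles produce exactly the same average.

```python
from statistics import mean, pstdev

# Hypothetical radius-of-gyration samples (in Å) from two simulated ensembles.
two_state = [12.0] * 50 + [20.0] * 50   # half compact, half extended
one_state = [16.0] * 100                # a single intermediate state

for name, ensemble in (("two-state", two_state), ("one-state", one_state)):
    print(f"{name}: mean = {mean(ensemble):.1f} Å, spread = {pstdev(ensemble):.1f} Å")
```

An observable that reports only the mean cannot distinguish these ensembles, whereas one sensitive to the spread or to individual populations can.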

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 2: Key Research Reagents and Computational Tools for Sampling and Validation

| Item / Software | Function in Research | Relevance to Sampling Problem |
|---|---|---|
| GROMACS [3] | A high-performance MD software package for simulating biomolecular systems. | Widely used engine for generating simulation trajectories; its efficiency allows for longer sampling. |
| OpenMM [6] | A toolkit for MD simulations, emphasizing high performance on GPUs. | The core engine behind drMD; enables rapid hardware-accelerated sampling. |
| drMD [6] | An automated pipeline for running MD simulations using OpenMM. | Reduces expertise barrier, ensures reproducibility, and makes best-practice MD more accessible. |
| MDAnalysis [2] | A Python library for analyzing MD trajectories. | Essential for post-processing trajectories, calculating observables, and assessing convergence. |
| DynDen [2] | A Python-based tool for assessing convergence via linear density profiles. | Provides an objective convergence metric for challenging systems like interfaces. |
| ICoN [10] | A generative deep learning model for protein conformational sampling. | Directly addresses the sampling problem by generating novel, stable conformations beyond MD data. |
| CHARMM36 [3] | A widely used biomolecular force field. | The accuracy of the energy function dictates the realism of the sampled conformational landscape. |
| AMBER ff99SB-ILDN [3] | A high-quality force field for proteins. | Another leading force field; choice impacts simulated dynamics and populations of states. |

The critical sampling problem remains a central challenge in molecular dynamics, but the toolkit for addressing it has never been more powerful. The field is moving decisively away from subjective, single-metric validation like RMSD and towards a multi-faceted approach that combines robust system-specific tools like DynDen, powerful AI-enhanced sampling methods like ICoN, and rigorous validation against a suite of experimental data. For researchers and drug development professionals, the key to success lies in carefully selecting the appropriate sampling and validation methods for their specific biological system, while remaining aware that the convergence of a simulation is not a single point to be identified, but a property to be comprehensively demonstrated. The integration of machine learning with physical principles promises to further expand the frontiers of the conformational landscapes we can explore, ultimately leading to deeper insights into biological function and more effective therapeutic design.

Distinguishing Partial from Full Equilibrium for Different Biological Properties

In the field of molecular dynamics and biophysical research, accurately determining whether a system has reached equilibrium is fundamental to validating computational and experimental results. The distinction between partial equilibrium (where only some properties have stabilized) and full equilibrium (where all properties have converged) carries profound implications for interpreting data across biological studies, from drug discovery to protein folding analysis. Within molecular dynamics simulations, an often-overlooked assumption persists: that simulated trajectories are sufficiently long to ensure systems have reached thermodynamic equilibrium, thus validating measured properties [13]. When this assumption goes unchecked, the reported results may be invalid, which matters particularly in pharmaceutical development, where binding affinities and reaction kinetics determine the efficacy of candidate drugs.

This guide systematically compares how different biological properties achieve partial versus full equilibrium across experimental and computational domains. By examining convergence behaviors through quantitative data, detailed methodologies, and standardized visualization frameworks, we provide researchers with the validation tools needed to draw robust conclusions from molecular dynamics simulations.

Theoretical Framework: Defining Equilibrium States

Conceptual Foundations

In statistical mechanics, true thermodynamic equilibrium requires complete exploration of the conformational space (Ω), with physical properties derived from the partition function Z that incorporates contributions from all possible states, including those with low probability [13]. The mathematical expressions for fundamental quantities like free energy (F) and entropy (S) depend explicitly on this complete partition function [13].
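For reference, the standard canonical-ensemble expressions behind this statement are written out below for a discrete set of states; the notation is ours and may differ from that of [13], which could equally use integrals over Ω.

```latex
\begin{align}
Z   &= \sum_{i \in \Omega} e^{-\beta E_i}, \qquad \beta = \frac{1}{k_B T}, \\
p_i &= \frac{e^{-\beta E_i}}{Z}, \\
F   &= -k_B T \ln Z, \\
S   &= -k_B \sum_{i \in \Omega} p_i \ln p_i .
\end{align}
```

Because both sums run over every state in Ω, low-probability states contribute to F and S, which is why these quantities demand far more complete sampling than a simple structural average does.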

For practical applications in biomolecular studies, a working definition has been proposed: "Given a system's trajectory with total time-length T, and a property Aᵢ extracted from it, and calling 〈Aᵢ〉(t) the average of Aᵢ calculated between times 0 and t, we consider that property 'equilibrated' if the fluctuations of the function 〈Aᵢ〉(t), with respect to 〈Aᵢ〉(T), remain small for a significant portion of the trajectory after some convergence time, t꜀" [13]. This definition acknowledges that partial equilibrium may be sufficient for properties depending primarily on high-probability regions of conformational space, while full equilibrium remains necessary for properties sensitive to low-probability regions.

Physical Significance in Biological Systems

The equilibrium state fundamentally determines how biological systems respond to stimuli and process information. Non-equilibrium thermodynamics imposes constraints on the computational expressivity of biochemical networks, limiting their ability to classify high-dimensional chemical states [14]. These constraints emerge from universal thermodynamic limitations that affect how Markov jump processes (abstractions of general biochemical networks) respond to environmental inputs [14]. Understanding whether a system has reached full equilibrium or exhibits only partial equilibrium behaviors is therefore essential not only for accurate measurement but also for predicting biological function.

Property-Specific Convergence Analysis

Comparative Convergence Timescales

Table 1: Equilibrium Status of Different Biological Properties Across Methodologies

| Biological Property | Methodology | Timescale for Partial Equilibrium | Timescale for Full Equilibrium | Key Convergence Metrics |
|---|---|---|---|---|
| Protein Structural Metrics (RMSD) | Molecular Dynamics | ~100 ns to 1 μs [13] | Multi-microsecond trajectories [13] | Plateau in RMSD time series, potential energy stabilization |
| Ligand-Receptor Binding Constant (Kd) | Equilibrium Binding Assays | 5-30 minutes (high ligand concentrations) [15] | Varies with system; requires confirmed plateau [15] | Saturation binding curve, Langmuir isotherm fit |
| Relative Binding Free Energy (RBFE) | Alchemical Equilibrium Methods | N/A (requires full equilibrium) [16] | ~20 ns ensemble simulations [16] | Statistical robustness across ensemble replicates |
| Relative Binding Free Energy (RBFE) | Alchemical Nonequilibrium Methods | N/A (requires equilibrium end states) [16] | ~40 ns end-state sampling [16] | Work distribution consistency, Crooks' theorem validation |
| Transition Rates Between Conformational States | Molecular Dynamics | Not achievable without full equilibrium [13] | >100 μs for low-probability conformations [13] | Probability distribution across states, autocorrelation decay |
| Biochemical Network Classification | Markov Jump Processes | N/A (steady-state assumption) [14] | Network topology-dependent [14] | Steady-state probability distribution, matrix-tree theorem |

Molecular Dynamics Properties

In molecular dynamics simulations, the properties of greatest biological interest, such as average structural metrics and domain distances, typically converge within multi-microsecond trajectories, achieving what qualifies as partial equilibrium for many research purposes [13]. These properties depend predominantly on high-probability regions of conformational space and can be reasonably approximated without complete exploration of all possible states.

However, transition rates to low-probability conformations require substantially longer timescales to achieve convergence, as they explicitly depend on thorough sampling of rarely visited regions of the conformational landscape [13]. This creates a scenario where a system may appear equilibrated for some research questions while remaining inadequate for others, highlighting the property-specific nature of equilibrium validation.

Ligand-Receptor Binding Properties

In receptor binding assays, the equilibrium dissociation constant (Kd) represents a fundamental property requiring confirmed equilibrium conditions. The assumption of equilibrium is particularly vulnerable to distortion from experimental factors including ligand depletion, insufficient incubation times, and inappropriate temperature or buffer conditions [15].

Competition binding experiments further complicate equilibrium determination, as they require the system to reach equilibrium not only for the radioligand but also for the unlabeled competitor. The Cheng-Prusoff transformation (Kᵢ = IC₅₀/(1 + [L]/Kdʟ)) used to calculate inhibitor constants relies explicitly on equilibrium conditions being established for all components [15].
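The Cheng-Prusoff transformation quoted above is simple enough to express as a small helper. The function and parameter names are illustrative; all three inputs must share the same concentration unit, and the result is meaningful only if the equilibrium conditions described above actually hold.

```python
def cheng_prusoff_ki(ic50, ligand_conc, kd_ligand):
    """Inhibitor constant from Ki = IC50 / (1 + [L] / Kd_L).

    ic50:        competitor concentration giving 50% displacement
    ligand_conc: free radioligand concentration [L]
    kd_ligand:   equilibrium dissociation constant of the radioligand
    """
    if ic50 <= 0 or kd_ligand <= 0 or ligand_conc < 0:
        raise ValueError("concentrations must be positive ([L] may be zero)")
    return ic50 / (1.0 + ligand_conc / kd_ligand)


# Hypothetical values in nM: radioligand used at its Kd, so Ki is half the IC50.
ki = cheng_prusoff_ki(ic50=100.0, ligand_conc=2.0, kd_ligand=2.0)
```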

Free Energy Calculations

Alchemical free energy calculations represent a particularly demanding application where the equilibrium distinction proves critical. Both equilibrium (FEP, TI) and nonequilibrium (Jarzynski, Crooks) methods fundamentally depend on equilibrium sampling—the former requiring equilibrium at all intermediate states, and the latter requiring equilibrium at end states [16].
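The standard textbook forms of these four estimators make the dependence on equilibrium sampling explicit. The notation below is ours and is not taken from [16].

```latex
\begin{align}
\Delta F_{\mathrm{TI}}  &= \int_0^1 \left\langle \frac{\partial H(\lambda)}{\partial \lambda} \right\rangle_{\lambda} \, d\lambda, \\
\Delta F_{\mathrm{FEP}} &= -k_B T \ln \left\langle e^{-\beta \,(U_B - U_A)} \right\rangle_{A}, \\
e^{-\beta \Delta F}     &= \left\langle e^{-\beta W} \right\rangle_{\mathrm{F}} \quad \text{(Jarzynski)}, \\
\frac{P_{\mathrm{F}}(W)}{P_{\mathrm{R}}(-W)} &= e^{\beta (W - \Delta F)} \quad \text{(Crooks)}.
\end{align}
```

In TI and FEP the angle brackets are equilibrium averages at each intermediate λ or in state A. In the Jarzynski and Crooks relations the work values W come from forward (F) and reverse (R) switching trajectories whose starting configurations must be drawn from equilibrated end-state ensembles.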

Ensemble approaches have emerged as essential for reliable free energy predictions, as they quantify uncertainty and ensure statistical robustness lacking in one-off simulations [16]. The recent recognition that individual trajectories up to 10 μs may not yield accurate macroscopic expectation values has driven the shift toward ensemble methods in both equilibrium and nonequilibrium free energy calculations [16].

Experimental Protocols for Equilibrium Validation

Molecular Dynamics Convergence Protocol

Table 2: Essential Research Reagents and Computational Tools for Equilibrium Validation

| Research Reagent/Software Tool | Function in Equilibrium Determination | Key Applications |
|---|---|---|
| Molecular Dynamics Engine (e.g., GROMACS, AMBER) | Generation of biomolecular trajectories | Sampling conformational space for proteins, nucleic acids |
| Radiolabeled Ligands (e.g., ³H, ¹²⁵I) | Tracing receptor binding events | Saturation and competition binding assays |
| Rapid Membrane Filtration System | Separation of bound and free ligand | Quantification of receptor-ligand complexes |
| Markov Jump Process Framework | Abstraction of biochemical network kinetics | Analysis of non-equilibrium cellular decision making |
| Thermodynamic Integration (TI) | Alchemical transformation between states | Relative binding free energy calculations |
| Bennett's Acceptance Ratio (BAR) | Analysis of nonequilibrium work distributions | Free energy estimation from fast switching simulations |

Objective: To determine whether a molecular dynamics simulation has reached partial or full equilibrium for specific biological properties of interest.

Methodology:

- Trajectory Generation: Conduct multi-microsecond simulations using explicit solvent models, starting from experimentally determined structures [13].

- Time-Series Analysis: Calculate running averages 〈Aᵢ〉(t) for key properties including:

  - Root-mean-square deviation (RMSD) of protein backbone

  - Radius of gyration

  - Inter-domain distances

  - Solvent accessible surface area

  - Hydrogen bonding patterns

- Convergence Assessment: Identify the convergence time t꜀ for each property when fluctuations of 〈Aᵢ〉(t) relative to 〈Aᵢ〉(T) fall below acceptable thresholds (typically <5% deviation).

- Autocorrelation Analysis: Compute autocorrelation functions for dihedral angles and positional variables to assess decay timescales [13].

- Ensemble Validation: Repeat simulations with different initial velocities to confirm reproducibility across ensembles [16].

Interpretation: Properties showing stable plateaus in running averages for significant portions of the trajectory (typically >30% of simulation time) are considered equilibrated. Systems where all biologically relevant properties meet this criterion are considered fully equilibrated.
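The running-average criterion above can be sketched in a few lines of NumPy. This is a minimal illustration, not a prescribed implementation: the function names `convergence_time` and `plateau_fraction` are hypothetical, the time series is synthetic, and the 5% tolerance and 30% plateau figure are simply the thresholds quoted in the protocol.

```python
import numpy as np

def convergence_time(values, times, rel_tol=0.05):
    """Earliest time after which the running average <A>(t) stays within
    rel_tol of the full-trajectory average <A>(T)."""
    values = np.asarray(values, dtype=float)
    times = np.asarray(times, dtype=float)
    running = np.cumsum(values) / np.arange(1, values.size + 1)
    final = running[-1]
    # Relative deviation; this assumes <A>(T) is not close to zero.
    outside = np.flatnonzero(np.abs(running - final) > rel_tol * abs(final))
    # The last frame is always inside (deviation 0), so the index is valid.
    first_inside = 0 if outside.size == 0 else outside[-1] + 1
    return times[first_inside]

def plateau_fraction(values, times, rel_tol=0.05):
    """Fraction of the trajectory that lies after the convergence time."""
    t_c = convergence_time(values, times, rel_tol)
    return (times[-1] - t_c) / (times[-1] - times[0])

# Synthetic property (hypothetical data): slow relaxation plus noise.
rng = np.random.default_rng(0)
times = np.arange(1000.0)  # nominally ns
prop = 2.0 + 3.0 * np.exp(-times / 50.0) + rng.normal(0.0, 0.1, times.size)

t_c = convergence_time(prop, times)
frac = plateau_fraction(prop, times)
print(f"t_c = {t_c:.0f} ns, plateau fraction = {frac:.2f}")
```

Note that the running average converges much later than the instantaneous signal relaxes, because early frames keep contributing to the cumulative mean. Discarding an equilibration segment before averaging is a common remedy.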

Ligand Binding Assay Equilibrium Protocol

Objective: To establish and verify equilibrium conditions in receptor-ligand binding experiments for accurate Kd determination.

Methodology:

- Time Course Experiments: Incubate receptor preparations with radioligand for varying durations (5 minutes to 24 hours) at constant temperature [15].

- Specific Binding Measurement: Quantify bound ligand at each time point using rapid filtration or alternative separation techniques.

- Plateau Identification: Confirm specific binding has reached a stable plateau where additional incubation time does not significantly change binding (<5% change between consecutive time points).

- Ligand Depletion Assessment: Verify that the free ligand concentration stays at or above 90% of the initial (added) concentration, i.e. less than 10% of the ligand is bound, to avoid depletion artifacts [15].

- Competition Experiments: For inhibitor constant determination, confirm simultaneous equilibrium for both radioligand and unlabeled competitor through time-course studies.

- Temperature and Buffer Control: Maintain constant experimental conditions throughout, as both temperature and buffer composition significantly impact equilibrium attainment [15].

Interpretation: Equilibrium is confirmed when binding reaches a stable plateau across multiple consecutive time points and the data are consistent with mass action principles (for example, a linear Scatchard plot when a single class of non-interacting sites is present).
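As a rough illustration of the plateau and depletion checks in this protocol, the following sketch applies the 5% and 90% thresholds to a made-up time course. The function names, the choice of three consecutive time points, and all numbers are assumptions for demonstration only.

```python
def reached_plateau(specific_binding, n_last=3, max_rel_change=0.05):
    """True if each of the last n_last steps changes specific binding by
    less than max_rel_change relative to the preceding time point."""
    tail = specific_binding[-(n_last + 1):]
    changes = [abs(b - a) / abs(a) for a, b in zip(tail, tail[1:])]
    return len(changes) == n_last and all(c < max_rel_change for c in changes)

def free_ligand_fraction(total_ligand, bound_ligand):
    """Fraction of the added ligand that remains free."""
    return (total_ligand - bound_ligand) / total_ligand

# Hypothetical time course of specific binding (arbitrary units).
binding = [120, 410, 690, 850, 905, 918, 921, 919]
at_plateau = reached_plateau(binding)

# Hypothetical concentrations in nM: 1.0 added, 0.04 bound at plateau.
free_fraction = free_ligand_fraction(1.0, 0.04)
no_depletion = free_fraction >= 0.90

print(at_plateau, round(free_fraction, 2), no_depletion)
```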

Alchemical Free Energy Calculation Protocol

Objective: To validate equilibrium conditions in relative binding free energy calculations using either equilibrium or nonequilibrium methods.

Methodology - Equilibrium Approach (TI/FEP):

- Ensemble Simulation: Conduct multiple independent simulations (typically 5-20 replicas) at each alchemical intermediate state [16].

- Decorrelation Analysis: Ensure sufficient sampling by measuring autocorrelation times of potential energy and key structural metrics.

- Convergence Monitoring: Calculate free energy differences using block averaging with increasing block sizes to confirm stability.

- Error Quantification: Determine statistical uncertainty through ensemble standard deviations and bootstrap analysis [16].
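A minimal sketch of the error-quantification step, assuming each replica yields one free energy estimate: the ensemble standard error and a bootstrap confidence interval are computed over replicas. The replica values below are invented for illustration, and `bootstrap_mean_ci` is a hypothetical helper name.

```python
import numpy as np

def bootstrap_mean_ci(samples, n_boot=10_000, ci=0.95, seed=0):
    """Mean, standard error, and percentile bootstrap CI of the mean."""
    rng = np.random.default_rng(seed)
    samples = np.asarray(samples, dtype=float)
    # Resample replicas with replacement and record each resample's mean.
    idx = rng.integers(0, samples.size, size=(n_boot, samples.size))
    boot_means = samples[idx].mean(axis=1)
    lo, hi = np.quantile(boot_means, [(1 - ci) / 2, (1 + ci) / 2])
    sem = samples.std(ddof=1) / np.sqrt(samples.size)
    return samples.mean(), sem, (lo, hi)

# Hypothetical relative binding free energies (kcal/mol) from 8 replicas.
ddg = [-1.21, -0.95, -1.40, -1.08, -1.15, -0.88, -1.30, -1.02]
mean, sem, (ci_lo, ci_hi) = bootstrap_mean_ci(ddg)
print(f"ddG = {mean:.2f} +/- {sem:.2f} kcal/mol, "
      f"95% CI [{ci_lo:.2f}, {ci_hi:.2f}]")
```

Resampling whole replicas, rather than individual frames, sidesteps the time correlation within each trajectory.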

Methodology - Nonequilibrium Approach:

- End-State Equilibrium: Conduct extensive equilibrium sampling (≥40ns) at both initial and final states [16].

- Snapshot Extraction: Extract multiple uncorrelated configurations (50-150) from each end-state trajectory.

- Fast Switching: Perform rapid alchemical transformations (100ps-5ns) in both directions (0→1 and 1→0).

- Work Distribution Analysis: Apply Crooks' fluctuation theorem or BAR to calculate free energy differences.

- Transition Time Validation: Confirm sufficient transition duration by testing convergence with increasing switching times [16].

Interpretation: Reliable free energy estimates require statistical consistency across ensemble replicas, well-overlapping forward and reverse work distributions in nonequilibrium approaches, and uncertainty estimates smaller than the commonly quoted chemical accuracy threshold (1 kcal/mol).
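To make the work-distribution analysis step concrete, here is a small sketch of BAR applied to bidirectional nonequilibrium work values, solved by bisection on the maximum-likelihood condition. It is an illustration under stated assumptions rather than a production estimator: work values are taken to be in units of kT when `beta=1`, the helper names are hypothetical, and the test data are synthetic Gaussian work distributions constructed to satisfy Crooks' theorem with a known ΔF of 2 kT.

```python
import numpy as np

def fermi(x):
    """Numerically stable 1 / (1 + exp(x))."""
    return np.exp(-np.logaddexp(0.0, x))

def bar_delta_f(w_forward, w_reverse, beta=1.0, n_iter=200):
    """Free energy difference from forward (0->1) and reverse (1->0) work."""
    w_f = np.asarray(w_forward, dtype=float)
    w_r = np.asarray(w_reverse, dtype=float)
    m = np.log(w_f.size / w_r.size)

    def g(df):
        # Monotonically increasing in df; its root is the BAR estimate.
        return (fermi(m + beta * (w_f - df)).sum()
                - fermi(-m + beta * (w_r + df)).sum())

    lo = min(w_f.min(), -w_r.max()) - 1.0
    hi = max(w_f.max(), -w_r.min()) + 1.0
    while g(lo) > 0.0:   # widen the bracket if needed
        lo -= hi - lo
    while g(hi) < 0.0:
        hi += hi - lo
    for _ in range(n_iter):  # plain bisection
        mid = 0.5 * (lo + hi)
        if g(mid) < 0.0:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)

# Synthetic Gaussian work values (kT units) consistent with delta F = 2.
rng = np.random.default_rng(0)
w_fwd = rng.normal(2.5, 1.0, 5000)
w_rev = rng.normal(-1.5, 1.0, 5000)
delta_f = bar_delta_f(w_fwd, w_rev)
print(f"BAR estimate: {delta_f:.3f} kT")
```

If the forward and reverse work distributions barely overlap, the estimate becomes dominated by a handful of tail values, which is why the transition-time validation step matters.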

Visualization Framework for Equilibrium Analysis

Equilibrium Determination Workflow: This diagram illustrates the iterative process for determining partial and full equilibrium states in computational studies, highlighting decision points where properties are evaluated for convergence.

Discussion and Research Implications

Practical Consequences for Drug Development

The distinction between partial and full equilibrium carries direct implications for drug discovery pipelines. In lead optimization, where relative binding free energy calculations prioritize candidate compounds, undetected non-equilibrium conditions can misrank compound priorities, wasting synthetic chemistry resources [16]. Similarly, in vitro binding assays conducted under presumed but unverified equilibrium conditions may yield inaccurate Kd values, leading to flawed structure-activity relationships [15].

The computational expressivity of biological systems—their ability to classify chemical states—depends fundamentally on non-equilibrium thermodynamics, with recent research revealing that increasing "input multiplicity" (e.g., enzymes acting on multiple targets) can exponentially enhance classification capability [14]. This underscores how equilibrium status directly impacts biological function beyond mere measurement accuracy.

Methodological Recommendations

Based on comparative analysis across methodologies, we recommend:

- Property-Specific Validation: Always assess convergence for each specific property of interest rather than assuming system-wide equilibrium [13].

- Ensemble Approaches: Replace single long simulations with ensemble replicates for statistically robust equilibrium determination [16].

- Time-Course Verification: Experimentally confirm equilibrium attainment through comprehensive time-course studies rather than relying on fixed incubation times [15].

- Multi-scale Framework: Adopt a hierarchical equilibrium validation approach that recognizes different properties converge at different timescales.

Distinguishing partial from full equilibrium across biological properties requires method-specific validation frameworks and acknowledgment that different properties converge at fundamentally different timescales. Molecular dynamics simulations can achieve partial equilibrium for structurally relevant properties within microsecond trajectories while requiring substantially longer sampling for transition rates between low-probability states [13]. Experimental binding assays remain vulnerable to non-equilibrium artifacts without rigorous time-course validation [15], while free energy calculations demand ensemble approaches for reliable equilibrium assessment [16].

As molecular simulation methodologies advance toward longer timescales and experimental techniques increase in sensitivity, the framework presented here provides researchers with standardized approaches for equilibrium validation across biological domains. By implementing these property-specific convergence assessments, the research community can strengthen conclusions drawn from both computational and experimental studies of biological systems.

In molecular dynamics (MD) simulations, the pursuit of biologically relevant insights is fundamentally governed by the quality of conformational sampling. Despite advances in computational power, insufficient sampling remains a pervasive pitfall, leading to statistically insignificant results and incorrect scientific conclusions. This article examines how inadequate sampling propagates errors, critiques common but flawed validation metrics, and compares modern methods for achieving verifiable convergence. By synthesizing evidence from long-trajectory analyses and enhanced sampling algorithms, we provide a framework for researchers to quantify uncertainty and improve the reliability of simulation-based predictions in drug development and basic research.

The Sampling Problem in Molecular Dynamics

Molecular dynamics simulation is a powerful computational microscope, revealing atomistic details that often complement experimental findings. However, the predictive capability of MD is constrained by the sampling problem—the challenge of simulating a system for a long enough duration to adequately explore its conformational space [3]. Biomolecules are highly dynamic, populating an ensemble of states at thermodynamic equilibrium. When a simulation is too short to capture the relevant fluctuations and rare events, the resulting observations may represent only a local minimum or a non-representative pathway, not the true equilibrium ensemble [17] [18].

The core issue is that molecular dynamics is deterministic in its equations of motion, yet in practice, the accumulation of small numerical errors and the use of different initial conditions can lead to apparently stochastic behavior. Consequently, a single simulation trajectory rarely captures all relevant conformations, especially for complex biological systems with vast conformational spaces and high energy barriers [17]. Without sufficient sampling, properties derived from simulations—from binding free energies to mechanisms of conformational change—become unreliable and non-reproducible.

How Insufficient Sampling Leads to Misleading Results

Misinterpretation of System Stability and Behavior

A common but dangerous assumption is that a stable-looking Root Mean Square Deviation (RMSD) profile indicates a system has reached equilibrium. Research demonstrates that RMSD plateau misinterpretation is a significant source of error. A survey of MD practitioners showed no consensus on identifying convergence points from RMSD plots, with decisions heavily biased by plot scaling, color, and the analyst's experience [12]. A flat RMSD curve does not guarantee proper thermodynamic behavior; a ligand may appear stable in a binding site based on RMSD while key interactions, like hydrogen bonds, are breaking or the pocket volume is changing [17].

More critically, insufficient sampling can lead to completely inverted conclusions about molecular behavior. A striking example comes from a simulation of the retinal torsion in rhodopsin:

- In a 50-nanosecond trajectory, the torsion sampled three states (g+, g-, and t), suggesting all were populated.

- Extending the simulation to 150 nanoseconds suggested the initial transitions were part of a slow equilibration and that the g- state was the only stable one.

- However, a 1,600-nanosecond (1.6 µs) trajectory revealed the true behavior: rapid exchange between only the g- and t states, with the initial sampling being entirely non-representative [18].

This case illustrates how local degrees of freedom are often coupled to global protein dynamics, making their convergence dependent on slow, large-scale motions that are easily missed in short simulations.

Inaccurate Estimation of Thermodynamic and Kinetic Properties

The consequences of poor sampling extend directly to quantitative properties. At a fundamental level, thermodynamic properties like free energy and entropy depend on the partition function, which requires integration over the entire conformational space, including low-probability regions [13]. Insufficient sampling misses these regions, leading to systematic errors in free energy calculations.
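For reference, the dependence on the partition function can be written out in standard statistical-mechanics notation. The symbols here are not defined in the original text and are introduced as assumptions: \(U(\mathbf{x})\) is the potential energy, \(\beta = 1/(k_B T)\), and \(p_A\), \(p_B\) are the equilibrium populations of two states.

```latex
Z = \int e^{-\beta U(\mathbf{x})}\, d\mathbf{x}, \qquad
F = -k_B T \ln Z, \qquad
\Delta G_{A \to B} = -k_B T \ln \frac{p_B}{p_A}
```

As a worked example, at 300 K \(k_B T \approx 0.596\) kcal/mol, so a 10:1 population ratio corresponds to \(0.596 \times \ln 10 \approx 1.37\) kcal/mol. A state that is never visited has an estimated population of zero, so its free energy is undefined rather than merely noisy.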

Table 1: Impact of Sampling on Different Property Types

| Property Type | Dependence on Full Sampling | Example | Result of Insufficiency |

|---|---|---|---|

| Average Properties (e.g., mean distance, radius of gyration) | Low to Moderate. Can be approximated from high-probability regions. | Average distance between two protein domains. | Moderately inaccurate, may still be qualitatively useful. |

| Thermodynamic Properties (e.g., Free Energy, Entropy) | High. Require contributions from all states, including low-probability ones. | Protein-ligand binding affinity. | Significant systematic error and quantitatively misleading results. |

| Kinetic Properties (e.g., transition rates, barriers) | Very High. Explicitly depend on probabilities of infrequent states and pathways. | Conformational transition rate of an enzyme. | Severe underestimation or overestimation of rates; failure to observe events. |

As shown in Table 1, properties with the most biological interest, such as transition rates between conformational states, are often the most sensitive to sampling quality. A simulation must not only find these states but also capture the transitions between them multiple times to estimate rates reliably [13].

Experimental and Comparative Analysis of Sampling Protocols

Comparative Software and Force Field Performance

A critical study benchmarking four major MD packages (AMBER, GROMACS, NAMD, and ilmm) revealed that while different software and force fields could reproduce a variety of experimental observables for proteins like Engrailed homeodomain and RNase H equally well at room temperature, there were subtle differences in underlying conformational distributions [3]. This ambiguity makes it difficult to distinguish which results are correct, as experiments cannot always provide the necessary detail.

The divergence was more pronounced for larger amplitude motions, such as thermal unfolding. Some packages failed to allow the protein to unfold at high temperature or produced results at odds with experiment. This demonstrates that differences are not solely attributable to the force field but also to factors like the water model, constraint algorithms, treatment of atomic interactions, and the simulation ensemble [3]. This underscores the need for robust sampling regardless of the specific software employed.

Quantifying Convergence and Uncertainty

Best practices in the field advocate for a tiered workflow: feasibility assessment, simulation, semi-quantitative checks for sampling quality, and finally, estimation of observables with uncertainties [19]. A working definition of equilibrium for a given property \( A_i \) is that the running average \( \langle A_i \rangle(t) \), calculated from times 0 to \( t \), shows only small fluctuations around the final average \( \langle A_i \rangle(T) \) for a significant portion of the trajectory after a convergence time \( t_c \) [13].

Table 2: Key Metrics for Assessing Sampling and Uncertainty

| Metric | Formula/Description | Interpretation | Practical Threshold |

|---|---|---|---|

| Effective Sample Size (ESS) | \( N_{\mathrm{eff}} \approx \frac{N}{1 + 2\sum_{k=1}^{M} \rho(k)} \), where \( N \) is the number of steps and \( \rho(k) \) is the autocorrelation at lag \( k \). | Estimates the number of statistically independent configurations. | \( N_{\mathrm{eff}} < {\sim}20 \) is considered unreliable [18]. |

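| Standard Uncertainty | Experimental standard deviation of the mean: \( s(\bar{x}) = \frac{s(x)}{\sqrt{n}} \), where \( n \) counts independent samples (for correlated frames, \( N_{\mathrm{eff}} \) rather than the raw frame count). | Estimates the standard deviation of the distribution of the mean. | Should be reported for all key observables. |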

| Correlation Time (τ) | The longest time separation for which a property remains correlated with itself. | Determines the frequency of independent sampling. | Sampling should extend over many multiples of τ. |

Statistical analysis should not rely on a single metric. Proper evaluation involves combining multiple observables (e.g., energy fluctuations, radius of gyration, hydrogen bond networks) and understanding how each reflects the underlying physics [17]. The gmx analyze tool in GROMACS, for example, can compute autocorrelation functions and block-averaged error estimates for a time series extracted from a trajectory.
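The effective sample size and standard uncertainty from the table can be estimated directly from a time series. The sketch below is one simple way to do it, under these assumptions: the autocorrelation sum is truncated at the first non-positive lag (a common heuristic, not something the cited sources prescribe), the function names are hypothetical, and the data are a synthetic AR(1) process whose true statistical inefficiency is 19.

```python
import numpy as np

def autocorrelation(x):
    """Normalized autocorrelation rho(k) for lags k = 0 .. N-1."""
    x = np.asarray(x, dtype=float)
    x = x - x.mean()
    n = x.size
    # Zero-padded FFT gives the linear (non-circular) autocovariance.
    f = np.fft.rfft(x, n=2 * n)
    acov = np.fft.irfft(f * np.conj(f), n=2 * n)[:n] / np.arange(n, 0, -1)
    return acov / acov[0]

def effective_sample_size(x):
    """N_eff = N / (1 + 2 * sum_k rho(k)), truncated at first rho <= 0."""
    rho = autocorrelation(x)
    nonpositive = np.flatnonzero(rho <= 0.0)
    m = nonpositive[0] if nonpositive.size else rho.size
    inefficiency = 1.0 + 2.0 * rho[1:m].sum()
    return len(x) / inefficiency

def standard_uncertainty(x):
    """Standard deviation of the mean, using N_eff instead of N."""
    x = np.asarray(x, dtype=float)
    return x.std(ddof=1) / np.sqrt(effective_sample_size(x))

# Synthetic correlated series: AR(1) with coefficient 0.9.
rng = np.random.default_rng(1)
n_frames, phi = 20_000, 0.9
noise = rng.normal(size=n_frames)
series = np.empty(n_frames)
series[0] = noise[0]
for i in range(1, n_frames):
    series[i] = phi * series[i - 1] + noise[i]

n_eff = effective_sample_size(series)
print(f"N = {n_frames}, N_eff ~ {n_eff:.0f}, "
      f"uncertainty of mean ~ {standard_uncertainty(series):.3f}")
```

For this series the naive formula with the raw frame count would understate the uncertainty of the mean by roughly a factor of four.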

Methodologies for Robust Sampling and Validation

Enhanced Sampling and Replicate Simulations

To overcome the inherent limitations of conventional MD, several advanced methodologies are employed:

- Unbiased Enhanced Sampling: Newer methods, like the one proposed by Zhu et al., circumvent the challenge of identifying optimal collective variables (CVs). They use an iterative approach that projects unbiased sampling data onto multiple low-dimensional CV spaces to calculate sampling densities, which then guide subsequent sampling. This accelerates diffusive exploration in all relevant CV spaces simultaneously without requiring replica exchange [20].

- Multiple Replicate Simulations (MRS): A fundamental and highly recommended practice is to run multiple independent simulations with different initial velocities. A single simulation may follow a unique, non-representative pathway. MRS provide a clearer picture of natural fluctuations and increase confidence in observed behaviors. Without replicates, an observed process may be mere noise or an artefact of specific initial conditions [17].

- Validation Against Experiment: A critical step is to validate the simulation ensemble using experimental data. This can involve comparing computed observables like NMR scalar coupling constants, NOE distances, or X-ray crystallography B-factors with their experimental counterparts. Correspondence between simulation and experiment increases confidence in the conformational ensemble, though it does not guarantee its uniqueness [17] [3].

The following workflow diagram outlines a robust protocol for running and validating MD simulations to ensure sampling adequacy:

Essential Research Reagent Solutions

Table 3: Key Software and Tools for Sampling and Analysis

| Tool Name | Category | Primary Function | Relevance to Sampling |

|---|---|---|---|

| GROMACS [17] [3] | MD Engine | High-performance MD simulation. | Production of long trajectories; includes tools for PBC correction and analysis. |

| AMBER [17] [3] | MD Engine | Suite of MD simulation and analysis programs. | Production of trajectories; comparative force field testing. |

| NAMD [3] | MD Engine | Parallel MD simulation engine. | Production of trajectories, especially for large systems. |

| cpptraj (AMBER) [17] | Analysis Tool | Trajectory analysis. | Correcting PBC artefacts, calculating metrics like RMSD, RMSF. |

| gmx trjconv (GROMACS) [17] | Analysis Tool | Trajectory conversion and manipulation. | Rebuilding molecules broken across periodic boundaries. |

| PFIM [21] | Design Tool | Population Fisher Information Matrix. | Model-based optimization of sampling times in pharmacological studies. |

Insufficient sampling is an insidious problem that can invalidate the conclusions of molecular dynamics studies. Reliance on intuitive but flawed metrics like RMSD, failure to run replicates, and inadequate simulation length are common pitfalls that lead to unphysical results and poor reproducibility. The path to reliable science requires a rigorous, multi-faceted approach: employing multiple independent simulations, quantifying uncertainty and effective sample size, utilizing enhanced sampling methods for rare events, and consistently validating against experimental data. By adopting these best practices, researchers can ensure their simulations provide meaningful, statistically robust insights into biomolecular function and drug discovery.

The Impact of Force Fields, Water Models, and Simulation Packages on Convergence

Molecular dynamics (MD) simulations are indispensable in modern scientific research, particularly in drug development, where they are used to study biomolecular interactions, protein folding, and ligand binding. The reliability of these simulations hinges on their convergence—the point at which simulated properties stabilize to a consistent value, indicating that the simulation has adequately sampled the system's configuration space. Achieving convergence is not trivial; it is profoundly influenced by the choice of force fields, water models, and the capabilities of simulation packages.

This guide objectively compares these critical components within the broader context of molecular dynamics convergence validation methods. We present experimental data and detailed methodologies to help researchers make informed decisions that enhance the reliability and efficiency of their computational studies.

Theoretical Framework: Force Fields, Water Models, and Convergence

Force Fields and Convergence Challenges

Force fields are mathematical functions and parameters that approximate the potential energy of a molecular system. Their accuracy is paramount for obtaining physically meaningful results from MD simulations. Force fields are generally categorized into classes based on their complexity and functional form:

- Class I: These use simple harmonic potentials for bond and angle terms and Lennard-Jones and Coulomb potentials for non-bonded interactions. They offer a good balance between computational efficiency and accuracy for many bulk properties [22].

- Class II: These incorporate additional terms, such as cross-coupling and anharmonic potentials, to achieve higher fidelity, often making them more suitable for simulating complex polymer systems or properties under non-equilibrium conditions [22].

The parametrization strategy of a force field directly impacts the convergence of properties. Modern, data-driven approaches can lead to more transferable and accurate parameters. For instance, the ByteFF force field was developed by training a graph neural network on a massive dataset of quantum mechanical calculations for drug-like molecules, enabling high accuracy across an expansive chemical space [23].

The Critical Role of Water Models

Water models are specialized force fields for simulating water molecules. Seemingly minor differences in their parameterization—such as bond lengths, angles, and charge distributions—can lead to significant variations in predicted properties, affecting the convergence behavior of hydrated systems [24]. Commonly used rigid three-site models include:

- TIP3P: Uses an O-H bond length of 0.9572 Å and an H-O-H angle of 104.52°, with parameters optimized for liquid water properties [24].

- SPC: Uses a longer O-H bond (1.0 Å), a wider, near-tetrahedral H-O-H angle, and a different charge distribution compared to TIP3P [24].

- SPC/ε: A refinement of the SPC model that includes an empirical self-polarization correction to better match the experimental static dielectric constant [24].

Simulation Packages as Convergence Engines

Simulation packages (e.g., GROMACS, AMBER, NAMD, OpenMM) provide the computational engine for running MD simulations. Their impact on convergence is multifaceted:

- Optimized Algorithms: Efficient integrators and constraint algorithms (like LINCS [24]) enable longer time steps and faster computation.

- Specialized Hardware: Support for GPUs and other accelerators drastically reduces wall-clock time for sampling.

- Analysis Tools: Built-in functionalities for monitoring energy, pressure, and other properties in real-time are crucial for assessing convergence during a simulation.

Comparative Performance Analysis

Force Field Performance for Drug-Like Molecules

The development of ByteFF represents a shift toward data-driven force field parametrization. Its performance against other force fields and quantum mechanical (QM) reference data is summarized in the table below.

Table 1: Performance comparison of the ByteFF force field against other methods and benchmarks. Data sourced from [23].

| Performance Metric | ByteFF (GNN-Predicted) | Traditional Look-up Table FF | QM Reference (B3LYP-D3(BJ)/DZVP) |

|---|---|---|---|

| Geometries (Bond Length RMSE, Å) | 0.010 | 0.015-0.025 | 0.000 (Reference) |

| Torsion Energy Profiles (RMSE, kcal/mol) | 0.15 | 0.20-0.40 | 0.00 (Reference) |

| Conformational Energies & Forces | State-of-the-art | Lower accuracy | High accuracy |

| Chemical Space Coverage | Expansive and diverse | Limited by parametrized fragments | N/A |

Key Insights: ByteFF demonstrates that a machine-learning approach trained on a large, diverse quantum mechanical dataset can achieve state-of-the-art accuracy in predicting molecular geometries and energies. This enhanced accuracy directly contributes to more robust convergence in MD simulations by providing a more faithful representation of the underlying potential energy surface [23].

Water Model Performance and Information-Theoretic Validation

The choice of water model significantly affects the convergence of bulk properties and the structural accuracy of hydrated molecular systems. Recent studies have employed information-theoretic measures to quantitatively evaluate model performance beyond traditional properties.

Table 2: Comparison of rigid water model parameters and their performance in reproducing key bulk properties (at 298.15 K). Data synthesized from [24].

| Water Model | O-H Bond Length (Å) | H-O-H Angle (°) | Dielectric Constant (ε) | Liquid Density (g/cm³) | Information-Theoretic Performance |

|---|---|---|---|---|---|

| TIP3P | 0.9572 | 104.52 | ~50 | ~0.99 | Excessive electron localization, reduced complexity |

| SPC | 1.0 | 109.45 | ~65 | ~1.00 | Better than TIP3P, but room for improvement |

| SPC/ε | 1.0 | 109.45 | ~78 (Targeted) | ~1.00 | Superior entropy-information balance, optimal complexity |

Key Insights: The SPC/ε model, parameterized specifically to match the experimental dielectric constant, shows superior performance in information-theoretic analysis. It demonstrates an optimal balance between Shannon entropy and Fisher information, indicating a more accurate representation of water's electronic structure as cluster size increases toward bulk behavior. This makes SPC/ε a strong candidate for simulations where dielectric effects and structural accuracy are critical for convergence [24].

Experimental Protocols for Convergence Validation

Protocol for Force Field Parametrization and Testing (ByteFF)

The high performance of ByteFF is rooted in a rigorous, data-driven protocol [23]:

- Dataset Generation: A massive and highly diverse dataset of 2.4 million optimized molecular fragment geometries with analytical Hessian matrices and 3.2 million torsion profiles was generated at the B3LYP-D3(BJ)/DZVP level of theory.

- Model Training: An edge-augmented, symmetry-preserving molecular graph neural network (GNN) was trained on this dataset to predict all bonded and non-bonded MM force field parameters simultaneously.

- Validation: The resulting force field was benchmarked on multiple datasets for its ability to predict relaxed geometries, torsional energy profiles, and conformational energies and forces, demonstrating state-of-the-art accuracy.

Protocol for Water Model Evaluation Using Information Theory

A comprehensive methodology for evaluating water models using information-theoretic measures involves [24]:

- System Preparation: Perform molecular dynamics simulations of water clusters of increasing size (e.g., 1, 3, 5, 7, 9, and 11 molecules) using the target force fields (TIP3P, SPC, SPC/ε).

- Electronic Structure Calculation: Compute the electronic densities for the extracted cluster configurations using density functional theory.

- Descriptor Calculation: Calculate fundamental information-theoretic descriptors—Shannon entropy, Fisher information, disequilibrium, LMC complexity, and Fisher–Shannon complexity—in both position and momentum spaces.

- Statistical and Bulk Validation: Perform statistical analysis (e.g., Shapiro-Wilk normality tests, Student's t-tests) to ensure robust discrimination between models. Validate the force fields against experimental bulk properties (density, dielectric constant, self-diffusion coefficient) through MD simulations of liquid water.

- Correlation Analysis: Establish quantitative relationships between the evolution of information-theoretic descriptors with cluster size and the accuracy of bulk properties.

The following workflow diagram illustrates this multi-stage validation process.

Water Model Validation Workflow: This diagram outlines the experimental protocol for evaluating water models, from initial simulation to final recommendation.

The Scientist's Toolkit: Essential Research Reagents and Solutions

Successful convergence research relies on a suite of computational tools and methods. The following table details key "research reagents" essential for conducting the experiments cited in this guide.

Table 3: Essential research reagents, software, and methods for molecular dynamics convergence validation.

| Research Reagent / Tool | Function / Purpose | Example Use Case |

|---|---|---|

| Graph Neural Networks (GNNs) | Data-driven prediction of force field parameters from QM data. | Used in the development of ByteFF for expansive chemical space coverage [23]. |

| Information-Theoretic Descriptors | Quantify electronic delocalization, localization, and structural complexity. | Used to discriminate between water models (TIP3P, SPC, SPC/ε) based on cluster analysis [24]. |

| Kolmogorov-Arnold Networks (KANs) | Neural network architecture capable of capturing long-range temporal dependencies. | Applied in real-time modeling of water distribution systems (KANSA) to mitigate cumulative errors [25]. |

| Rigid Water Model Parameters | Pre-defined sets of geometry and charge parameters for simulating water. | TIP3P, SPC, and SPC/ε parameters are used as inputs for MD simulations and analysis [24]. |

| Class I & II Force Fields | Pre-defined potential functions for specific molecular classes (e.g., polymers). | Used as benchmarks for comparing the performance of new force field parametrization methods [22]. |

| Multi-Equation Soft-Constraints | Embedding physical laws (e.g., conservation) into model loss functions. | Enhances physical consistency in data-driven models like KANSA for hydraulic networks [25]. |

The path to robust convergence in molecular dynamics simulations is multi-faceted. Evidence shows that data-driven force fields like ByteFF can achieve superior accuracy and chemical space coverage compared to traditional parametrization methods. For solvated systems, the choice of water model is critical, with advanced models like SPC/ε demonstrating excellent performance, particularly when validated through information-theoretic analysis.

Simulation convergence is not guaranteed by a single component but is the result of a synergistic combination of a well-parametrized force field, a physically accurate water model, and an efficient simulation package. The experimental protocols and comparative data presented in this guide provide a framework for researchers to make informed, evidence-based decisions that enhance the reliability and predictive power of their molecular simulations in drug development and beyond.

A Practical Toolkit: Essential Methods and Metrics for Convergence Analysis

In molecular dynamics (MD) simulations, quantifying the structural evolution, stability, and flexibility of biomolecules is paramount for validating convergence and interpreting results. Three geometric metrics form the cornerstone of this analysis: Root Mean Square Deviation (RMSD), Root Mean Square Fluctuation (RMSF), and Radius of Gyration (Rg). These parameters provide complementary insights into different aspects of molecular behavior, from global structural stability to local residue flexibility and overall compactness. Within the broader thesis of molecular dynamics convergence validation methods, these metrics serve as essential diagnostic tools. They allow researchers to determine whether a simulation has reached a stable equilibrium state, assess the reproducibility of observed dynamics across replicates, and quantify structural changes relevant to biological function, such as protein folding, ligand binding, and conformational transitions. Their combined application is critical for ensuring the reliability of MD simulations in fundamental research and drug development.

Core Metric Definitions and Theoretical Foundations

Root Mean Square Deviation (RMSD)

Root Mean Square Deviation (RMSD) is a quantitative measure of the average distance between the atoms of two superimposed molecular structures. It is most commonly used to trace the change in a protein's structure over time in a simulation relative to a reference frame, typically the starting crystallographic structure or an average equilibrated structure. The RMSD is calculated using the formula:

RMSD = √[ (1/N) × Σ(δ_i)² ]

where:

- N is the number of atoms being compared

- δ_i is the distance between atom i in the target structure and its equivalent in the reference structure after optimal rigid-body superposition [26].

In practice, for a set of n equivalent atoms with coordinates v and w in two structures, the calculation becomes:

RMSD(v,w) = √[ (1/n) × Σ ‖v_i - w_i‖² ] [26]

A lower RMSD value indicates greater similarity to the reference structure, while a rising then plateauing RMSD profile often suggests the protein is undergoing an initial relaxation before stabilizing in a new conformational state [27]. RMSD is typically reported in Ångströms (Å), where 1 Å equals 10^-10 meters.
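The RMSD definition above presupposes an optimal rigid-body superposition. One standard way to obtain it is the Kabsch algorithm, sketched here with NumPy. This is an unweighted illustration on synthetic coordinates, with `kabsch_rmsd` as a hypothetical function name. Trajectory analysis packages provide their own tested implementations, which should be preferred in practice.

```python
import numpy as np

def kabsch_rmsd(p, q):
    """RMSD between (n, 3) coordinate sets after optimally superposing
    p onto q (unweighted Kabsch fit)."""
    p = np.asarray(p, dtype=float)
    q = np.asarray(q, dtype=float)
    # Remove translation by centering both sets on their centroids.
    p_c = p - p.mean(axis=0)
    q_c = q - q.mean(axis=0)
    # Optimal rotation from the SVD of the covariance matrix.
    u, _, vt = np.linalg.svd(p_c.T @ q_c)
    # Guard against an improper rotation (reflection).
    d = np.sign(np.linalg.det(vt.T @ u.T))
    rot = vt.T @ np.diag([1.0, 1.0, d]) @ u.T
    p_fit = p_c @ rot.T
    return np.sqrt(np.mean(np.sum((p_fit - q_c) ** 2, axis=1)))

# Synthetic check: a rotated and translated copy should give RMSD ~ 0.
rng = np.random.default_rng(0)
ref = rng.normal(size=(30, 3))
theta = 0.7
rot_z = np.array([[np.cos(theta), -np.sin(theta), 0.0],
                  [np.sin(theta),  np.cos(theta), 0.0],
                  [0.0,            0.0,           1.0]])
moved = ref @ rot_z.T + np.array([1.0, -2.0, 0.5])
rmsd_rigid = kabsch_rmsd(ref, moved)
rmsd_noisy = kabsch_rmsd(ref, moved + rng.normal(scale=0.5, size=ref.shape))
print(f"rigid copy: {rmsd_rigid:.2e}, perturbed copy: {rmsd_noisy:.2f}")
```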

Root Mean Square Fluctuation (RMSF)

Root Mean Square Fluctuation (RMSF) quantifies the deviation of a particle, or group of particles, from a reference position over time. Unlike RMSD, which measures global changes, RMSF provides a per-residue measure of flexibility or local stability throughout a simulation. It is particularly useful for identifying flexible loops, terminal regions, and hinge domains involved in conformational changes [27].

The RMSF calculation for a given atom i is defined as:

RMSF(i) = √[ ⟨‖r_i(t) - ⟨r_i⟩‖²⟩ ]

where:

- r_i(t) is the position of atom i at time t

- ⟨r_i⟩ is the time-averaged position of atom i

- ⟨...⟩ represents the time average over the entire simulation trajectory [28] [29]

RMSF is directly related to experimental B-factors (temperature factors) obtained from X-ray crystallography through the relationship:

RMSF_i² = 3B_i / (8π²) [29]

This connection allows for direct validation of simulation flexibility against experimental data.
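As a rough illustration of the RMSF definition and its link to B-factors, the sketch below computes the fluctuation of a single atom and inverts the relationship above to obtain B = (8π²/3) × RMSF². The function names and the four-frame toy trajectory are assumptions made for this example, and the frames are taken to be superimposed already.

```python
import math

def rmsf(positions):
    """RMSF of one atom about its time-averaged position.

    positions: list of (x, y, z) tuples, one per frame, already superimposed.
    """
    n = len(positions)
    mean = [sum(p[k] for p in positions) / n for k in range(3)]
    # Mean squared displacement from the average position
    msf = sum(sum((p[k] - mean[k]) ** 2 for k in range(3)) for p in positions) / n
    return math.sqrt(msf)

def rmsf_to_bfactor(rmsf_value):
    """Invert RMSF^2 = 3B / (8 pi^2) to get B = (8 pi^2 / 3) * RMSF^2."""
    return 8.0 * math.pi ** 2 / 3.0 * rmsf_value ** 2

# Hypothetical atom oscillating by 0.5 Å on either side of its mean along x
frames = [(0.5, 0.0, 0.0), (-0.5, 0.0, 0.0), (0.5, 0.0, 0.0), (-0.5, 0.0, 0.0)]

fluct = rmsf(frames)                # 0.5 Å
b_factor = rmsf_to_bfactor(fluct)   # in Å^2
print(f"RMSF = {fluct:.2f} Å, B-factor = {b_factor:.2f} Å^2")
```

Crystallographic B-factors also absorb static disorder and lattice effects, so a comparison of this kind is usually more meaningful for relative per-residue trends than for absolute values.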

Radius of Gyration (Rg)

Radius of Gyration (Rg) describes the overall compactness and shape of a macromolecule. It is defined as the mass-weighted average distance of all atoms in the structure from their common center of mass [30]. For a protein or other polymer, Rg provides insight into its folding state, where a lower Rg typically indicates a more compact, globular structure, and a higher Rg suggests a more extended or unfolded conformation.

The standard calculation for Rg is:

Rg² = (1/M) × Σ m_i × ‖r_i - r_cm‖²

where:

- M is the total mass of the molecule

- m_i is the mass of atom i

- r_i is the position of atom i

- r_cm is the center of mass of the molecule [30]

An alternative definition based on pairwise distances between all monomers (mirroring the relationship between RMSD and RMSF) is:

Rg² = (1/(2N_m²)) × Σ_i Σ_j ‖r_i - r_j‖² [29]

where N_m is the total number of monomers in a chain. This form matches the mass-weighted definition when all monomers carry equal mass. The characteristic Rg/Rh value (where Rh is the hydrodynamic radius) for a globular protein is approximately 0.775, the value expected for a uniform sphere (√(3/5)). Deviations from this value indicate non-spherical or elongated molecular shapes [30].
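The two Rg definitions can be compared numerically. The sketch below is illustrative only: the function names, the four-site chain, and the mass values are hypothetical. It shows that the pairwise form agrees with the mass-weighted form when every site has the same mass and departs from it otherwise.

```python
import math

def rg_mass_weighted(coords, masses):
    """Rg from mass-weighted squared distances to the center of mass."""
    total_mass = sum(masses)
    com = [sum(m * r[k] for m, r in zip(masses, coords)) / total_mass
           for k in range(3)]
    weighted = sum(m * sum((r[k] - com[k]) ** 2 for k in range(3))
                   for m, r in zip(masses, coords))
    return math.sqrt(weighted / total_mass)

def rg_pairwise(coords):
    """Rg from all pairwise distances; assumes every site has the same mass."""
    n = len(coords)
    pair_sum = sum(sum((a[k] - b[k]) ** 2 for k in range(3))
                   for a in coords for b in coords)
    return math.sqrt(pair_sum / (2.0 * n ** 2))

# Hypothetical four-site chain, coordinates in Å
chain = [(0.0, 0.0, 0.0), (3.8, 0.0, 0.0), (3.8, 3.8, 0.0), (0.0, 3.8, 1.0)]

rg_equal = rg_mass_weighted(chain, [1.0] * len(chain))
rg_pairs = rg_pairwise(chain)
rg_unequal = rg_mass_weighted(chain, [12.0, 14.0, 16.0, 1.0])

print(f"equal masses: {rg_equal:.4f} Å, pairwise: {rg_pairs:.4f} Å")
print(f"unequal masses: {rg_unequal:.4f} Å")
```

The pairwise form costs O(N²) operations, so the center-of-mass form is usually the practical choice for all-atom trajectories.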

Table 1: Summary of Core Geometric Metrics in Molecular Dynamics

| Metric | Definition | Primary Application | Typical Units | Key Interpretation |
|---|---|---|---|---|
| RMSD | Average distance between atoms of superimposed structures [26] | Global structural change over time [27] | Ångström (Å) | Lower value = greater structural similarity; plateau indicates stability |
| RMSF | Standard deviation of atomic positions from their average location [28] | Per-residue flexibility [27] | Ångström (Å) | Higher values indicate more flexible regions; correlates with B-factors [29] |
| Rg | Mass-weighted root mean square distance from center of mass [30] | Overall compactness and shape [30] | Nanometer (nm) | Lower value = more compact structure; monitors folding/unfolding |

Comparative Analysis of Applications

Each metric provides a distinct perspective on molecular behavior, and their combined analysis offers a comprehensive view of system dynamics. The following table summarizes their complementary roles in structural analysis.

Table 2: Comparative Applications of RMSD, RMSF, and Rg in MD Analysis

| Analysis Context | Primary Metric | Secondary Metrics | Interpretation Guide | Representative Study |
|---|---|---|---|---|
| Protein Folding/Unfolding | Rg (monitors compactness) [30] | RMSD, RMSF | Decreasing Rg indicates folding; RMSD validates against native state; RMSF identifies mobile elements during transition | Folding studies of villin headpiece domain [29] |
| Ligand Binding & Stability | RMSD (protein-ligand complex) [31] | RMSF (binding site residues), Rg | Complex RMSD stability indicates sustained binding; RMSF reveals allosteric effects; Rg checks for induced compaction | Antiviral compounds targeting influenza PB2 [32] |
| Equilibration Validation | RMSD (backbone) [27] | Rg, RMSF | Plateau in backbone RMSD suggests equilibration; stable Rg confirms consistent compactness | Multi-replicate MD simulations [27] |
| Domain Mobility & Flexibility | RMSF (per-residue) [27] | RMSD (per-domain), Rg | Peaks in RMSF identify flexible loops/hinges; domain RMSD quantifies rigid-body motions | HCV core protein domain analysis [33] |

Metric Correlations and Complementary Insights

The integrated analysis of RMSD, RMSF, and Rg provides powerful insights into molecular behavior that would be incomplete with any single metric. For instance, in protein folding studies, Rg provides the primary indicator of global compaction, while RMSD validates the approach toward the native state, and RMSF identifies which regions gain or lose mobility during the process [29]. Similarly, in ligand-binding studies, the stability of the protein-ligand complex RMSD indicates whether the binding pose is maintained, while RMSF of binding site residues reveals whether the ligand restricts or enhances local flexibility, and Rg monitors any ligand-induced global compaction or expansion [32].

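A mathematical relationship exists between ensemble-average pairwise RMSD and RMSF, mirroring the relationship between the two definitions of Rg. Specifically, the root-mean-square ensemble average of pairwise RMSD, ⟨RMSD²⟩^(1/2), is proportional to the root-mean-square average of the per-atom RMSF: when all structures are superimposed in a common frame and atoms are weighted equally, ⟨RMSD²⟩ = 2 × ⟨RMSF²⟩, just as the mean squared pairwise distance in the second Rg definition equals twice the mean squared distance from the center.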

Experimental Protocols and Methodologies

Standard Protocol for RMSD/RMSF Calculation

The following workflow represents a standardized approach for calculating RMSD and RMSF from molecular dynamics trajectories using common analysis tools such as AMBER's cpptraj or MDTraj in Python [27].

Diagram 1: RMSD and RMSF Calculation Workflow

Step-by-Step Procedure:

Trajectory Preparation: Load the molecular dynamics trajectory file and corresponding topology file into your analysis environment. For multi-replicate studies, load all trajectory replicates separately [27].

Reference Structure Selection: Choose an appropriate reference structure for alignment. The most common choices are:

- The first frame of the simulation (t=0) to measure departure from initial state

- An average structure over the production trajectory

- An experimental crystal structure [27]

Atom Selection for Alignment: Select atoms for the least-squares superposition. The protein backbone atoms (C, Cα, N) are the typical choice, because they capture backbone conformational changes while excluding noise from side-chain motions [27].

Trajectory Superposition: Perform least-squares fitting of each trajectory frame to the reference structure using the selected alignment atoms. This step removes global translation and rotation, isolating internal conformational changes [27] [28].

RMSD Calculation: Calculate the root mean square deviation for each frame after superposition. The RMSD can be calculated for the same atoms used in alignment, or for different subsets (e.g., all protein atoms, binding site residues, or ligand atoms) [27].

RMSF Calculation: Compute the root mean square fluctuation of each residue from its average position throughout the trajectory. This calculation is typically performed on Cα atoms to characterize residue-level flexibility [27] [28].

Visualization and Analysis: Plot RMSD versus time to identify equilibration periods and stable states. Plot RMSF versus residue number to identify flexible and rigid regions. Compare replicates to ensure consistency [27].
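The superposition, RMSD, and RMSF steps above can be sketched with NumPy alone. This is a minimal illustration under stated assumptions, not the implementation used by cpptraj or MDTraj: the function names are hypothetical, the Kabsch algorithm is used for the least-squares fit, all atoms passed in are used for both fitting and measurement, and the trajectory is a synthetic array of shape (n_frames, n_atoms, 3).

```python
import numpy as np

def kabsch_superpose(mobile, reference):
    """Least-squares fit of `mobile` onto `reference`, both shaped (n_atoms, 3)."""
    ref_center = reference.mean(axis=0)
    P = mobile - mobile.mean(axis=0)   # centered mobile coordinates
    Q = reference - ref_center         # centered reference coordinates
    # Optimal rotation from the SVD of the 3x3 covariance matrix
    U, _, Vt = np.linalg.svd(P.T @ Q)
    # Flip the last axis if needed so the result is a proper rotation
    d = 1.0 if np.linalg.det(U @ Vt) >= 0 else -1.0
    R = U @ np.diag([1.0, 1.0, d]) @ Vt
    return P @ R + ref_center

def rmsd_and_rmsf(trajectory, reference):
    """Per-frame RMSD to `reference` and per-atom RMSF after superposition."""
    fitted = np.array([kabsch_superpose(frame, reference) for frame in trajectory])
    rmsd = np.sqrt(((fitted - reference) ** 2).sum(axis=2).mean(axis=1))
    mean_pos = fitted.mean(axis=0)     # average structure of the fitted frames
    rmsf = np.sqrt(((fitted - mean_pos) ** 2).sum(axis=2).mean(axis=0))
    return rmsd, rmsf

# Synthetic check: the second frame is the reference rotated about z and shifted,
# so it contains no internal change and both metrics should be close to zero.
rng = np.random.default_rng(0)
reference = rng.normal(size=(10, 3))
angle = 0.7
rot_z = np.array([[np.cos(angle), -np.sin(angle), 0.0],
                  [np.sin(angle),  np.cos(angle), 0.0],
                  [0.0, 0.0, 1.0]])
trajectory = np.array([reference, reference @ rot_z + 5.0])

rmsd, rmsf = rmsd_and_rmsf(trajectory, reference)
print(rmsd, rmsf.max())
```

For a real analysis, the fit would normally use only the backbone selection while RMSD and RMSF are evaluated on whichever subset is of interest, and each replicate would be processed separately before comparison.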

Standard Protocol for Radius of Gyration Calculation

The radius of gyration provides complementary information about global compactness that is independent of structural alignment.

Step-by-Step Procedure:

Trajectory Preparation: Use the same loaded trajectory as for RMSD/RMSF analysis. No superposition is required for the Rg calculation because Rg is invariant under both translation and rotation.

Atom Selection: Select the atoms for which compactness will be measured. This could be:

- The entire protein

- Specific domains

- The protein with and without a bound ligand

Rg Calculation: Compute the mass-weighted radius of gyration for each trajectory frame using the standard formula [30] [32].

Visualization and Interpretation: Plot Rg versus time. Correlate Rg changes with structural events observed in RMSD and RMSF analyses. A stable, low Rg indicates a compact, well-folded structure, while increasing Rg may indicate unfolding or expansion [32].
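A per-frame Rg series for this protocol might be computed as in the sketch below. The function name, the array layout (n_frames, n_atoms, 3), and the synthetic coordinates are assumptions for illustration; the mass of 12.0 is a placeholder and not taken from any force field.

```python
import numpy as np

def radius_of_gyration(trajectory, masses):
    """Mass-weighted Rg for every frame.

    trajectory: array of shape (n_frames, n_atoms, 3)
    masses: array of shape (n_atoms,)
    """
    masses = np.asarray(masses, dtype=float)
    total_mass = masses.sum()
    # Center of mass of each frame, shape (n_frames, 3)
    com = (trajectory * masses[None, :, None]).sum(axis=1) / total_mass
    # Squared distance of each atom from its frame's center of mass
    sq_dist = ((trajectory - com[:, None, :]) ** 2).sum(axis=2)
    return np.sqrt((sq_dist * masses).sum(axis=1) / total_mass)

# Synthetic three-frame trajectory: original, translated, and uniformly expanded
rng = np.random.default_rng(1)
compact = rng.normal(size=(20, 3))
trajectory = np.array([compact, compact + 10.0, 2.0 * compact])
masses = np.full(20, 12.0)  # placeholder masses

rg = radius_of_gyration(trajectory, masses)
print(rg)
```

The translated frame returns the same Rg as the original, which is why no alignment step is needed, while the expanded frame returns twice the value, the kind of increase that would signal unfolding or expansion in a plot of Rg versus time.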

Advanced Application: Free Energy Landscape Analysis

In more advanced analyses, RMSD and Rg can be combined to construct free energy landscapes that reveal the populations of different conformational states and the barriers between them [32].

Diagram 2: Free Energy Landscape Analysis

Procedure:

- Calculate Rg and RMSD values for each frame of a well-equilibrated trajectory.

- Create a 2D histogram of Rg versus RMSD.

- Convert the probability distribution P(Rg, RMSD) to free energy using: ΔG(Rg, RMSD) = -kBT × ln[P(Rg, RMSD)], where kB is Boltzmann's constant and T is temperature [32].

- Identify low-free-energy basins as stable conformational states.

- Analyze the structures within each basin to characterize the distinct conformational states.

This approach was successfully applied in a study of influenza PB2 cap-binding domain, where compounds 1, 3, and 4 showed promising binding stability reflected in their distinct free energy profiles [32].
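The histogram-to-free-energy procedure above can be sketched as follows. This is an illustrative outline rather than the workflow of the cited study: the function name, bin count, temperature, and the synthetic two-state data are all assumptions. The landscape is shifted so that the most populated bin sits at zero, and unsampled bins are left at infinity.

```python
import numpy as np

K_B = 0.0019872041  # Boltzmann constant per mole, kcal/(mol*K)

def free_energy_landscape(rg, rmsd, temperature=300.0, bins=25):
    """2D free energy surface from per-frame Rg and RMSD values."""
    hist, rg_edges, rmsd_edges = np.histogram2d(rg, rmsd, bins=bins)
    prob = hist / hist.sum()
    free_energy = np.full(prob.shape, np.inf)   # unsampled bins stay at +inf
    sampled = prob > 0
    free_energy[sampled] = -K_B * temperature * np.log(prob[sampled])
    free_energy[sampled] -= free_energy[sampled].min()  # global minimum at 0
    return free_energy, rg_edges, rmsd_edges

# Synthetic data: a major compact state (80%) and a minor extended state (20%)
rng = np.random.default_rng(2)
rg = np.concatenate([rng.normal(15.0, 0.3, 8000), rng.normal(17.0, 0.3, 2000)])
rmsd = np.concatenate([rng.normal(1.5, 0.2, 8000), rng.normal(4.0, 0.2, 2000)])

fel, rg_edges, rmsd_edges = free_energy_landscape(rg, rmsd)
i, j = np.unravel_index(np.argmin(fel), fel.shape)
print(f"Deepest basin near Rg = {rg_edges[i]:.2f}, RMSD = {rmsd_edges[j]:.2f}")
```

The resulting surface is only as reliable as the sampling behind it: sparsely populated bins carry large statistical uncertainty, and barrier heights from an unconverged trajectory should be interpreted with caution.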

Essential Research Reagents and Computational Tools

Successful implementation of these geometric analyses requires specific computational tools and resources. The following table catalogs key research reagents and software solutions used in the field.

Table 3: Research Reagent Solutions for Geometric Metric Analysis

| Tool/Resource | Type | Primary Function | Application Example |
|---|---|---|---|
| AMBER (cpptraj) [27] | Software Module | Trajectory analysis: RMSD, RMSF, Rg, H-bonds | Processing of multi-replicate MD trajectories [27] |
| GROMACS (gmx rmsf) [28] | Software Suite | MD simulation and analysis | Calculating residue fluctuations and B-factors [28] |
| MDTraj [27] | Python Library | Trajectory analysis and processing | Loading trajectories, calculating RMSD/RMSF in Python scripts [27] |
| AlphaFold2 [33] | AI Structure Prediction | Generating reference structures | Providing initial models for proteins without crystal structures [33] |
| Diverse lib Database [32] | Chemical Library | Source of potential inhibitor compounds | Virtual screening for influenza PB2 cap-binding domain inhibitors [32] |
| MM-GBSA [32] | Computational Method | Binding free energy calculation | Evaluating inhibitor affinity in PB2 cap-binding domain study [32] |

RMSD, RMSF, and Rg represent fundamental geometric metrics that together provide a comprehensive picture of molecular structure, dynamics, and compactness in MD simulations. RMSD serves as the primary indicator of global structural change, RMSF reveals local flexibility patterns, and Rg quantifies overall dimensions and shape. Their integrated application is essential for validating simulation convergence, characterizing conformational states, and interpreting biomolecular function. As MD simulations continue to grow in complexity and timescale, these metrics remain indispensable tools for researchers and drug development professionals seeking to bridge computational models with experimental observables and ultimately accelerate the discovery of novel therapeutic agents.

In molecular dynamics (MD) simulations, the traditional approach to assessing convergence has heavily relied on monitoring structural metrics, most notably the root-mean-square deviation (RMSD), until a plateau is observed [13]. This method, while convenient, carries a significant and often overlooked risk: a simulation may appear structurally stable while remaining thermodynamically far from equilibrium [13]. This fundamental limitation can invalidate simulation results, presenting a particular danger in fields like drug discovery, where free energy calculations inform critical decisions on compound selection and optimization [34].

The emerging paradigm shift moves beyond structural checks toward energy-based convergence criteria. These methods validate whether a simulation has sufficiently sampled the conformational energy landscape, providing a more physically rigorous foundation for asserting that thermodynamic equilibrium has been reached [13] [35]. This guide compares traditional and energy-based validation methods, providing researchers with the experimental protocols and analytical tools needed to implement these more robust criteria.

Comparative Analysis of Convergence Validation Methods

Table 1: Comparison of Convergence Validation Methods in Molecular Dynamics

| Method Category | Specific Metrics | Key Strengths | Key Limitations | Ideal Use Cases |
|---|---|---|---|---|
| Structural Checks | Root-mean-square deviation (RMSD), Radius of gyration | Computationally inexpensive, intuitive, easy to visualize and implement | Plateau may indicate being trapped in a local energy minimum, not global equilibrium; poor predictor for free energy convergence [13] | Initial equilibration staging, qualitative assessment of large-scale conformational shifts |
| Energy-Based Criteria | Kofke's bias measure (Π), Standard deviation of energy difference (σΔU), Reweighting entropy (Sw) | Directly probes thermodynamic state; provides quantitative, statistically rigorous thresholds for convergence [35] | More complex analysis; may require specialized post-processing tools; Gaussian distribution assumptions not always valid [35] | Free energy calculations (solvation, binding); validating simulations for quantitative thermodynamic predictions |