Validating Molecular Dynamics Simulations: A Comprehensive Guide from Foundations to Future-Forward Methods

This article provides a comprehensive framework for validating molecular dynamics (MD) simulations, a critical step for ensuring the reliability of computational findings in biomedical research and drug development.

Abstract

This article provides a comprehensive framework for validating molecular dynamics (MD) simulations, a critical step for ensuring the reliability of computational findings in biomedical research and drug development. It begins by establishing the foundational principles of validation, explaining why it is essential for credible results. The piece then explores advanced methodological applications, including the use of machine-learned force fields and enhanced sampling techniques. A dedicated troubleshooting section offers practical solutions for optimizing simulation performance and addressing common deficiencies. Finally, the article presents rigorous validation and comparative analysis protocols, highlighting standardized benchmarks and the integration of experimental data. Aimed at researchers and scientists, this guide synthesizes the latest advancements to empower professionals in conducting robust and reproducible MD simulations.

The Critical Role of Validation: Establishing Trust in Your Molecular Dynamics Simulations

Molecular dynamics (MD) simulation has become an indispensable tool across scientific disciplines, from drug discovery to materials science. However, the predictive power of any simulation is entirely dependent on the rigor of its validation. This guide objectively compares prevalent validation methodologies by dissecting the experimental protocols and quantitative benchmarks used to confirm the credibility of MD results in recent, high-impact research.

Validating with Experimental Data: The Ultimate Benchmark

The most direct validation strategy involves comparing simulation outcomes against empirical experimental data. This approach tests the simulation's ability to replicate real-world phenomena.

Case Study: Grain Growth in Polycrystalline Nickel

A 2025 study directly used experimental microstructure data as the initial condition for MD simulations of grain growth in polycrystalline nickel [1]. The researchers developed a bidirectional method for converting data between voxelized experimental structures and atomic simulation models.

- Core Experimental Protocol: The simulation results were compared directly with the characteristics of grain growth observed in the laboratory experiment. The key metric was the relationship between grain boundary curvature and velocity [1].

- Outcome and Validation Insight: The MD simulations successfully matched the experimental characteristics of grain growth. Most significantly, they provided new evidence confirming the absence of a correlation between velocity and curvature during grain growth in polycrystals, a finding that helps rule out alternative explanations for the experimental observation [1]. This demonstrates that a well-validated MD model can not only replicate experiments but also provide fundamental mechanistic insights that are difficult to isolate in a lab setting.

Case Study: Solubility Prediction via Machine Learning

Another robust validation method uses MD-derived properties to predict a key experimental measurement: aqueous solubility. A 2025 study extracted ten properties from MD simulations of 211 drugs, such as Solvent Accessible Surface Area and Coulombic interaction energy, and used them as features in machine learning models [2].

- Core Experimental Protocol: The target variable was the experimental logarithmic solubility (logS), with solubility measured in moles per liter. The predictive performance of the models (Random Forest, XGBoost, etc.) served as an indirect test of whether the MD-derived properties carry physically meaningful information [2].

- Outcome and Validation Insight: The best model achieved a predictive R² of 0.87 with an RMSE of 0.537 logS units, demonstrating that MD-derived properties have predictive power comparable to models based on traditional structural descriptors [2]. This shows that MD can capture the essential physics governing complex properties like solubility.
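
The two metrics reported above can be computed from held-out predictions in a few lines. The following is a minimal sketch, assuming plain Python lists of experimental and predicted logS values; the function names and the sample numbers are illustrative and are not taken from the cited study.

```python
import math


def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_true = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


def rmse(y_true, y_pred):
    """Root mean square error, in the same units as the inputs (here logS)."""
    squared = [(t - p) ** 2 for t, p in zip(y_true, y_pred)]
    return math.sqrt(sum(squared) / len(squared))


# Hypothetical held-out values, for illustration only.
logs_experimental = [-1.0, -2.0, -3.0, -4.0, -5.0]
logs_predicted = [-1.2, -1.9, -3.3, -3.8, -5.1]

print("R2   =", r_squared(logs_experimental, logs_predicted))
print("RMSE =", rmse(logs_experimental, logs_predicted))
```

Both metrics should be evaluated on data the model never saw during training; otherwise they measure fit rather than predictive power.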

Validating with Ab Initio Methods: Ensuring Quantum-Accuracy

For properties where experimental data is scarce or difficult to obtain, validation against higher-level computational methods such as Density Functional Theory (DFT) is the standard approach.

Case Study: The EMFF-2025 Neural Network Potential

A 2025 study developed a general neural network potential for high-energy materials. The core validation step involved systematic benchmarking of the model's predictions against DFT calculations [3].

- Core Computational Protocol: The energy and forces predicted by the EMFF-2025 model for 20 different high-energy materials were compared against reference DFT calculations. The Mean Absolute Error (MAE) was used as the primary quantitative benchmark [3].

- Outcome and Validation Insight: The model achieved excellent fitting accuracy, with energy MAE predominantly below 0.1 eV/atom and force MAE mainly below 2 eV/Å [3]. The close alignment of predictions with DFT data across a wide temperature range confirmed the model's robustness and its capability to serve as a cheaper, yet accurate, alternative to direct DFT for large-scale MD simulations.
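
As an illustration of this kind of benchmark, the sketch below computes a per-atom energy MAE and a per-component force MAE. It assumes total energies in eV, forces as `(fx, fy, fz)` tuples in eV/Å, and per-structure normalization by atom count; these conventions, the function names, and the sample values are assumptions for illustration and may differ from the procedure used in [3].

```python
def energy_mae_per_atom(e_pred, e_ref, n_atoms):
    """MAE of total energies, each normalized by its structure's atom count (eV/atom)."""
    errors = [abs(p - r) / n for p, r, n in zip(e_pred, e_ref, n_atoms)]
    return sum(errors) / len(errors)


def force_mae(f_pred, f_ref):
    """MAE over all Cartesian force components (eV/Å)."""
    diffs = [
        abs(p - r)
        for atom_pred, atom_ref in zip(f_pred, f_ref)
        for p, r in zip(atom_pred, atom_ref)
    ]
    return sum(diffs) / len(diffs)


# Hypothetical model predictions and DFT references for two structures.
energies_model = [-100.5, -201.0]
energies_dft = [-100.0, -200.0]
atom_counts = [10, 20]

# Hypothetical forces on two atoms.
forces_model = [(0.1, 0.0, -0.2), (0.0, 0.3, 0.0)]
forces_dft = [(0.0, 0.0, 0.0), (0.0, 0.0, 0.0)]

print(energy_mae_per_atom(energies_model, energies_dft, atom_counts))
print(force_mae(forces_model, forces_dft))
```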

Practical Validation: Assessing Refinement Efficacy

Validation also involves pragmatically testing the limits of a simulation methodology, determining when it adds value and when it does not.

Case Study: RNA Structure Refinement in CASP15

A comprehensive benchmark study evaluated the effect of MD simulations on refining RNA structural models from the CASP15 community experiment [4].

- Core Computational Protocol: Using the Amber software with the RNA-specific χOL3 force field, researchers ran short (10–50 ns) and longer (>50 ns) simulations on 61 models. Improvement or deterioration was measured by the change in model quality metrics after simulation [4].

- Outcome and Validation Insight: The study provided clear, practical guidelines:

- For high-quality starting models, short simulations provided modest improvements, particularly by stabilizing stacking and non-canonical base pairs.

- For poorly predicted models, MD rarely helped and often made structures worse.

- Longer simulations (>50 ns) typically induced structural drift and reduced fidelity [4].

This highlights that validation is not just about technical accuracy but also about understanding the scope of a method's utility.
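
One simple way to turn these guidelines into a check is to compare a quality score before and after simulation and to monitor the trend of a structural deviation over time. The sketch below assumes an RMSD-like score in Å where lower is better; the 0.1 Å tolerance, the function names, and the sample series are hypothetical and are not the metrics or thresholds used in [4].

```python
def classify_refinement(score_before, score_after, tolerance=0.1):
    """Label the outcome of a refinement run for a lower-is-better score."""
    delta = score_after - score_before
    if delta < -tolerance:
        return "improved"
    if delta > tolerance:
        return "deteriorated"
    return "unchanged"


def linear_slope(times, values):
    """Least-squares slope of values against times (a crude drift indicator)."""
    n = len(times)
    mean_t = sum(times) / n
    mean_v = sum(values) / n
    cov = sum((t - mean_t) * (v - mean_v) for t, v in zip(times, values))
    var = sum((t - mean_t) ** 2 for t in times)
    return cov / var


# Hypothetical RMSD (Å) sampled every 10 ns of a trajectory.
time_ns = [0, 10, 20, 30]
rmsd_angstrom = [2.0, 2.5, 3.0, 3.5]

print(classify_refinement(3.0, 2.6))
print(classify_refinement(8.0, 9.5))
print(linear_slope(time_ns, rmsd_angstrom))  # positive slope suggests drift
```

A persistently positive slope late in a run is a hint to stop and keep an earlier snapshot rather than extend the simulation.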

Comparative Performance Tables

Table 1: Quantitative Benchmarks from Recent MD Validation Studies

| Study Focus & Year | Validation Metric | Performance Outcome | Key Benchmark Against |

|---|---|---|---|

| Solubility Prediction (2025) [2] | Predictive R² (Test Set) | 0.87 | Experimental solubility (logS) |

| Solubility Prediction (2025) [2] | Root Mean Square Error (RMSE) | 0.537 | Experimental solubility (logS) |

| Neural Network Potential EMFF-2025 (2025) [3] | Mean Absolute Error (Energy) | < 0.1 eV/atom | Density Functional Theory |

| Neural Network Potential EMFF-2025 (2025) [3] | Mean Absolute Error (Force) | < 2.0 eV/Å | Density Functional Theory |

| RNA Refinement (CASP15) [4] | Successful Refinement Rate (High-quality models) | Modest Improvement | Pre-MD model quality |

| RNA Refinement (CASP15) [4] | Successful Refinement Rate (Poor-quality models) | Rare / Often Deteriorates | Pre-MD model quality |

Table 2: Summary of Validation Methodologies and Their Applications

| Validation Method | Typical Application Context | Key Strength | Common Quantitative Metrics |

|---|---|---|---|

| Experimental Data Comparison [2] [1] | Drug discovery, Materials science | Direct real-world relevance, builds trust for applications | R², RMSE, Mean Absolute Error, Correlation strength |

| Ab Initio Method Benchmarking [3] | Force field development, New material design | High quantum-mechanical accuracy where experiments are hard | Energy/Force MAE, Deviation in material properties |

| Practical Efficacy Testing [4] | Biomolecular refinement, Method selection | Defines practical utility and saves computational resources | Success rate, Refinement potential, Stability over time |

Experimental Protocols in Focus

To ensure reproducibility, below are the detailed methodologies for two key experiments cited.

MD-Based Solubility Prediction [2]

- System Setup: A dataset of 211 drugs with experimental solubility (logS) was compiled. Each drug was parameterized with the GROMOS 54a7 force field.

- Simulation Execution: MD simulations were conducted in the NPT ensemble using GROMACS 5.1.1. A cubic simulation box with specific dimensions was used.

- Property Extraction: Ten MD-derived properties, including SASA, Coulombic energy, and RMSD, were extracted from the trajectories.

- Model Building & Validation: The properties, along with logP, were used as features to train four machine learning models. Model performance was evaluated by its ability to predict the held-out experimental logS values.

EMFF-2025 Neural Network Potential [3]

- Data Generation: A training database was constructed from DFT calculations using the Deep Potential generator framework.

- Model Training: The EMFF-2025 NNP was developed using a transfer learning strategy, building upon a pre-trained model with minimal new DFT data.

- Energy/Force Validation: The model predicted energies and forces for 20 high-energy materials; these values were compared directly to reference DFT calculations to compute the Mean Absolute Error.

- Property Prediction: The validated model was then used to predict crystal structures, mechanical properties, and decomposition behaviors, which were benchmarked against existing experimental data.

The Scientist's Toolkit: Essential Research Reagents & Software

Table 3: Key Software and Computational Resources for MD Validation

| Item Name | Function / Role in Validation |

|---|---|

| GROMACS [2] | A versatile software package for performing MD simulations; used in solubility studies to calculate molecular properties. |

| AMBER [4] | A suite of biomolecular simulation programs; used with the RNA-specific χOL3 force field for refining RNA structures. |

| LAMMPS [5] | A widely used MD simulator; frequently employed in materials science and for simulating interfacial tension. |

| Deep Potential (DP) [3] | A machine learning framework for developing neural network potentials; used to create the EMFF-2025 model with DFT-level accuracy. |

| CHARMM27 / OPLS-AA [5] | Established force fields that provide the parameters for bonded and non-bonded interactions, crucial for simulating different molecular systems. |

Workflow: A Strategic Path for MD Validation

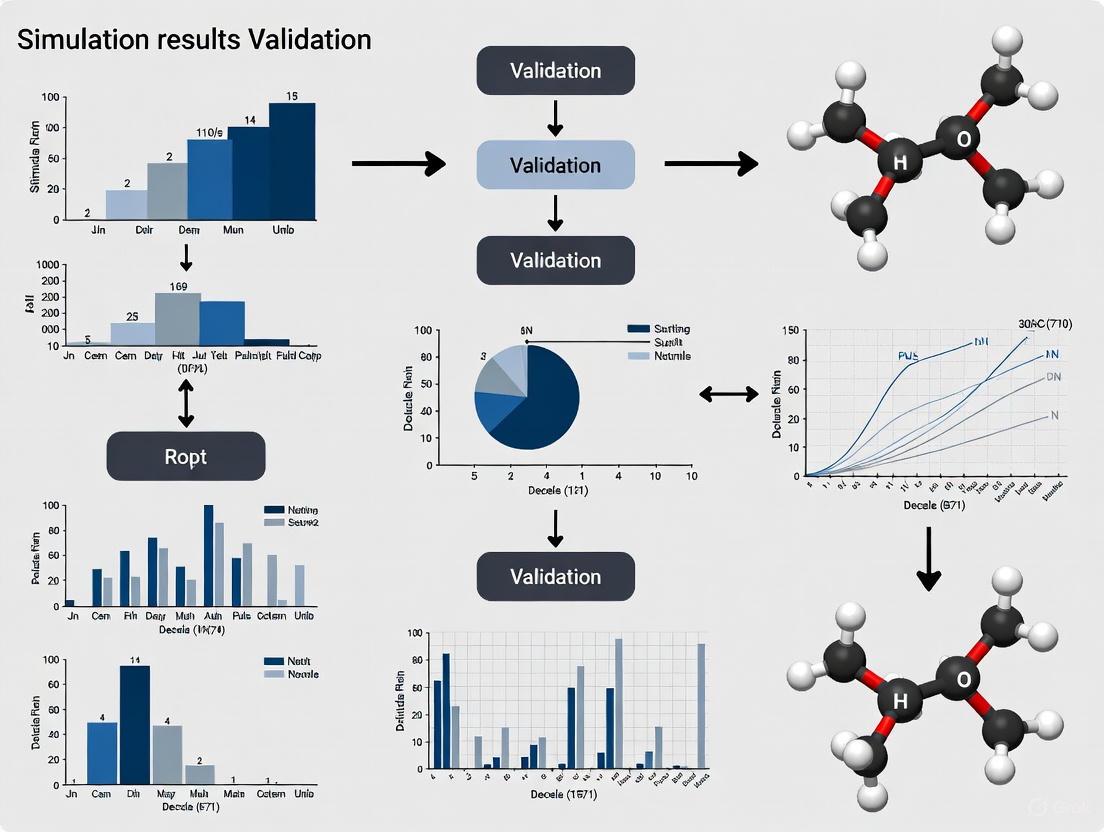

The following diagram outlines a logical, decision-based workflow for selecting and implementing a validation strategy, synthesized from the reviewed studies.

Molecular dynamics (MD) simulations are a cornerstone of modern computational chemistry, biophysics, and drug discovery, enabling the study of physical movements of atoms and molecules over time. For researchers and drug development professionals, the validation of simulation results is paramount. This guide objectively compares the performance of different methodologies by examining two core, interconnected challenges: the sampling of conformational space and the accuracy of molecular force fields. We present supporting experimental data and detailed protocols to inform your choice of computational strategies.

Confronting the Sampling Problem in Molecular Dynamics

Insufficient sampling is a fundamental limitation in MD, caused by the rough energy landscapes of biomolecules, where many local minima are separated by high-energy barriers. This can trap simulations in non-functional states, preventing access to all biologically relevant conformations [6]. Enhanced sampling algorithms have been developed to address this issue.

Performance Comparison of Enhanced Sampling Methods

The table below summarizes the core mechanisms, applications, and limitations of three predominant enhanced sampling techniques.

Table 1: Comparison of Key Enhanced Sampling Methods

| Method | Core Mechanism | Typical Applications | Advantages | Limitations/Challenges |

|---|---|---|---|---|

| Replica-Exchange MD (REMD) | Parallel simulations at different temperatures (T-REMD) or Hamiltonians (H-REMD) exchange configurations based on Monte Carlo weights [6]. | Protein folding, peptide dynamics, free energy landscapes [6]. | Efficient random walks in temperature/energy space; multiple variants available [6]. | Efficiency sensitive to maximum temperature choice; high computational cost due to many replicas [6]. |

| Metadynamics | History-dependent bias potential ("computational sand") is added to collective variables to discourage revisiting previous states and accelerate barrier crossing [6]. | Protein folding, ligand-protein interactions, conformational changes, phase transitions [6]. | Explores entire free energy landscape; does not depend on highly accurate pre-existing energy surfaces [6]. | Accuracy depends on correct choice of a small set of collective variables [6]. |

| Simulated Annealing | Artificial temperature is gradually decreased during the simulation, allowing the system to escape local minima and approach a global minimum [6]. | Characterization of highly flexible systems, large macromolecular complexes (with Generalized Simulated Annealing) [6]. | Well-suited for systems with high flexibility; can be applied at relatively low computational cost [6]. | Historically restricted to small proteins, though newer variants like GSA have expanded applicability [6]. |
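
To make the replica-exchange mechanism in the table concrete, the sketch below shows the standard Metropolis acceptance rule for swapping two temperature replicas, together with a geometric temperature ladder, which is one common heuristic for spacing replicas. Energies are assumed to be potential energies in kJ/mol; the function names and numbers are illustrative, and production codes apply additional corrections (for example, for constant-pressure ensembles).

```python
import math

KB_KJ_PER_MOL_K = 0.0083144626  # Boltzmann constant in kJ/(mol*K)


def geometric_ladder(t_min, t_max, n_replicas):
    """Temperatures spaced by a constant ratio between t_min and t_max (K)."""
    ratio = (t_max / t_min) ** (1.0 / (n_replicas - 1))
    return [t_min * ratio ** i for i in range(n_replicas)]


def exchange_probability(e_i, t_i, e_j, t_j):
    """Metropolis acceptance for swapping replicas i and j in T-REMD.

    P = min(1, exp[(beta_i - beta_j) * (E_i - E_j)]), with beta = 1 / (kB * T).
    """
    beta_i = 1.0 / (KB_KJ_PER_MOL_K * t_i)
    beta_j = 1.0 / (KB_KJ_PER_MOL_K * t_j)
    exponent = (beta_i - beta_j) * (e_i - e_j)
    return 1.0 if exponent >= 0 else math.exp(exponent)


ladder = geometric_ladder(300.0, 500.0, 16)
print([round(t, 1) for t in ladder])

# Hypothetical energies: the colder replica currently holds the higher energy,
# so the swap is always accepted.
print(exchange_probability(-1000.0, 300.0, -1010.0, 310.0))
# Reverse case: the swap is accepted only with some probability below 1.
print(exchange_probability(-1010.0, 300.0, -1000.0, 310.0))
```

The acceptance probability falls off quickly as the energy distributions of neighboring replicas stop overlapping, which is why larger systems need more closely spaced temperatures and therefore more replicas.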

Experimental Protocol for Assessing Sampling Performance

Objective: To evaluate the sampling efficiency and convergence of different enhanced sampling methods in simulating a biomolecular system (e.g., a small protein or peptide).

Methodology:

- System Preparation: A single molecular system (e.g., the pentapeptide met-enkephalin or a small intrinsically disordered protein) is prepared.

- Parallel Simulations: The system is simulated using three different approaches:

- Conventional MD: A single, long-timescale simulation serves as a baseline.

- REMD (T-REMD): Multiple replicas (e.g., 16–64) are run in parallel, spanning a temperature range (e.g., 300–500 K).

- Metadynamics: A simulation is run using 1–2 carefully chosen collective variables (e.g., root-mean-square deviation (RMSD) or radius of gyration).

- Data Analysis: The following quantitative and qualitative metrics are computed from all trajectories for comparison:

- Convergence of Free Energy: The stability of the calculated free energy landscape over simulation time.

- State Population Distributions: The relative populations of key conformational states.

- Transition Rates: The frequency of transitions between major metastable states.
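
The three metrics above can be estimated from a trajectory once each frame has been assigned to a conformational state. The sketch below assumes such a per-frame state label already exists (for example, from clustering) and that frames are unbiased or have been reweighted; the labels, function names, and the half-versus-half comparison are illustrative choices rather than a prescribed procedure.

```python
import math
from collections import Counter

KT_KJ_PER_MOL = 0.0083144626 * 300.0  # kB*T at 300 K, in kJ/mol


def state_populations(labels):
    """Fraction of frames spent in each state."""
    counts = Counter(labels)
    return {state: n / len(labels) for state, n in counts.items()}


def free_energies(populations, kt=KT_KJ_PER_MOL):
    """Relative free energies F = -kT ln(p / p_max), in kJ/mol."""
    p_max = max(populations.values())
    return {s: -kt * math.log(p / p_max) for s, p in populations.items()}


def max_population_shift(labels):
    """Largest change in any state population between the two trajectory halves."""
    half = len(labels) // 2
    first = state_populations(labels[:half])
    second = state_populations(labels[half:])
    states = set(first) | set(second)
    return max(abs(first.get(s, 0.0) - second.get(s, 0.0)) for s in states)


def count_transitions(labels):
    """Number of frame-to-frame changes of state."""
    return sum(1 for a, b in zip(labels, labels[1:]) if a != b)


# Hypothetical per-frame state assignment.
trajectory_states = (["folded"] * 6 + ["unfolded"] * 2) * 2

populations = state_populations(trajectory_states)
print(populations)
print(free_energies(populations))
print(max_population_shift(trajectory_states))
print(count_transitions(trajectory_states))
```

A small population shift between halves is necessary but not sufficient for convergence: a simulation trapped in one basin will also look stable, which is why the number of observed transitions is reported alongside it.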

Diagram: Conceptual Workflow of Enhanced Sampling Methods

The Critical Role of Force Field Accuracy

The force field defines the potential energy surface for a simulation. Inaccuracies in force field parameters can lead to unrealistic structural and dynamic properties, compromising the predictive power of MD. This is particularly critical for intrinsically disordered proteins (IDPs) and peptides, which lack stable structure and are highly sensitive to the force field's balance of interactions [7] [8].

Benchmarking Force Field Performance

Recent studies systematically benchmark force fields by comparing simulation results against experimental data. The following table synthesizes findings from key benchmarks.

Table 2: Force Field Performance Benchmark for Structured and Disordered Proteins

| Force Field & Water Model | System Tested | Key Experimental Metrics for Validation | Performance Summary |

|---|---|---|---|

| Amber99SB-ILDN / TIP3P | Proteins with structured domains and IDRs [7]. | NMR relaxation, chemical shifts, RDCs, PRE, SAXS data [7]. | Led to artificial structural collapse in disordered regions; resulted in unrealistic NMR relaxation properties [7]. |

| CHARMM22* / TIP3P | Proteins with structured domains and IDRs [7]. | NMR relaxation, chemical shifts, RDCs, PRE, SAXS data [7]. | Performance varied; some versions struggle to balance order and disorder, showing biases in peptide folding simulations [7] [8]. |

| CHARMM36m / TIP4P-D | Proteins with structured domains and IDRs [7]. | NMR relaxation, chemical shifts, RDCs, PRE, SAXS data [7]. | Significantly improved reliability for hybrid proteins; capable of retaining transient helical motifs observed in experiments [7]. |

| Multiple (12) Fixed-Charge FFs | Curated set of 12 peptides (structured, context-sensitive, disordered) [8]. | Stability from folded state, folding from extended state (10 µs simulations) [8]. | No single model performed optimally across all systems; some exhibited strong structural bias, while others allowed reversible fluctuations [8]. |

Experimental Protocol for Force Field Validation

Objective: To evaluate the performance of biomolecular force fields in simulating proteins containing both structured and intrinsically disordered regions.

Methodology (as detailed in [7]):

- System Selection: Three proteins with varying degrees of order and disorder are studied:

- δRNAP: A two-domain protein with a well-structured N-terminal domain and a disordered C-terminal domain.

- RD-hTH: A protein with a disordered N-terminal region containing a segment with high transient helical propensity.

- MAP2c159–254: A mostly disordered fragment with a region of increased helical propensity.

- Simulation Setup:

- Force Fields Tested: Amber99SB-ILDN (A99), CHARMM22* (C22*), CHARMM36m (C36m).

- Water Models: TIP3P, TIPS3P, TIP4P-D.

- System Preparation: Proteins are solvated in a rhombic dodecahedral box with a minimum 2 nm distance to the edge. Ions (Na+/Cl−) are added to neutralize the system and adjust salt concentration to 100 mM. Simulations are run under periodic boundary conditions on the microsecond timescale.

- Validation against Experiment: Trajectories are used to predict measurable parameters, which are then compared to experimental data:

- NMR Parameters: Chemical shifts, residual dipolar couplings (RDCs), paramagnetic relaxation enhancement (PRE), backbone amide 15N relaxation rates (R1, R2), and steady-state heteronuclear Overhauser effects (ssNOE).

- Scattering Data: Small-angle X-ray scattering (SAXS) profiles and derived radii of gyration (Rg).

- Data Analysis: The agreement between predicted and experimental data is quantified. NMR relaxation parameters are found to be particularly sensitive to the choice of force field and water model [7].
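
As a small illustration of how agreement can be quantified, the sketch below computes a mass-weighted radius of gyration from coordinates and a reduced chi-squared between predicted and measured observables. It is a simplified stand-in under stated assumptions: the function names and values are hypothetical, the geometric Rg ignores the hydration layer that contributes to SAXS-derived values, and [7] may use different agreement measures.

```python
import math


def radius_of_gyration(coords, masses):
    """Mass-weighted radius of gyration, in the units of the coordinates."""
    total_mass = sum(masses)
    center = [
        sum(m * c[k] for m, c in zip(masses, coords)) / total_mass
        for k in range(3)
    ]
    weighted_sq = sum(
        m * sum((c[k] - center[k]) ** 2 for k in range(3))
        for m, c in zip(masses, coords)
    )
    return math.sqrt(weighted_sq / total_mass)


def reduced_chi_squared(predicted, observed, sigma):
    """Mean squared deviation in units of the experimental uncertainty."""
    terms = [((p - o) / s) ** 2 for p, o, s in zip(predicted, observed, sigma)]
    return sum(terms) / len(terms)


# Hypothetical two-particle system, coordinates in nm.
coords = [(-1.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
masses = [1.0, 1.0]
print(radius_of_gyration(coords, masses))

# Hypothetical predicted vs. measured observables with uncertainties.
print(reduced_chi_squared([1.0, 2.0], [1.5, 2.0], [0.5, 0.5]))
```

In practice the observable is averaged over the trajectory before comparison, and a reduced chi-squared near 1 indicates agreement within experimental error.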

Diagram: Force Field Validation Workflow

Emerging Solutions: AI-Driven and Hybrid Approaches

Machine learning (ML) is revolutionizing MD by addressing both sampling and accuracy challenges.

Machine Learning Force Fields (MLFFs)

ML-based force fields aim to achieve quantum-level accuracy at a computational cost comparable to classical potentials. A key challenge is that MLFFs trained solely on Density Functional Theory (DFT) data often inherit its inaccuracies and may not agree with experimental observations [9]. A promising solution is fused data learning, where an ML potential is trained concurrently on both DFT data (energies, forces) and experimental data (e.g., mechanical properties, lattice parameters) [9]. This approach has been shown to produce models of higher accuracy that satisfy all target objectives [9].
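
The idea of training against two data sources can be pictured as a combined objective. The sketch below uses a weighted sum of two mean-squared-error terms, which is only one simple way to combine such objectives and is not necessarily the scheme used in [9]; the function names, the weight, and the sample values are all assumptions for illustration.

```python
def mean_squared_error(predicted, reference):
    """Plain mean squared error between two equal-length sequences."""
    return sum((p - r) ** 2 for p, r in zip(predicted, reference)) / len(predicted)


def fused_loss(dft_pred, dft_ref, exp_pred, exp_ref, weight_exp=1.0):
    """Weighted sum of a DFT term (energies, forces) and an experimental term.

    weight_exp is a hypothetical hyperparameter that balances the two
    objectives; in practice each term would also need unit normalization.
    """
    loss_dft = mean_squared_error(dft_pred, dft_ref)
    loss_exp = mean_squared_error(exp_pred, exp_ref)
    return loss_dft + weight_exp * loss_exp


# Hypothetical values: two DFT labels and one experimental observable.
print(fused_loss([1.0, 2.0], [1.0, 4.0], [3.0], [2.0], weight_exp=0.5))
```

The experimental term is harder to evaluate than the DFT term, because observables such as lattice parameters are ensemble averages that require running a simulation with the current model before they can be compared.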

AI-Accelerated Sampling and Drug Discovery

Leading AI-driven drug discovery platforms, such as Schrödinger, leverage physics-based MD simulations alongside machine learning for drug design. Their platform has contributed to advancing candidates like the TYK2 inhibitor zasocitinib (TAK-279) into Phase III clinical trials [10]. Furthermore, scalable AI-driven MD is being enabled by interfaces like ML-IAP-Kokkos, which integrates PyTorch-based machine learning interatomic potentials (MLIPs) into popular MD packages like LAMMPS, allowing for large-scale, efficient simulations [11].

This table lists key software, hardware, and computational resources essential for conducting state-of-the-art MD simulations.

Table 3: Essential Resources for Molecular Dynamics Research

| Category | Item | Function / Purpose |

|---|---|---|

| MD Software | GROMACS [6], NAMD [6], AMBER [6], GENESIS [12], LAMMPS [11] | Core simulation engines; perform numerical integration of equations of motion. Support enhanced sampling algorithms. |

| ML/AI Integration | PyTorch [11], ML-IAP-Kokkos Interface [11] | Enables development and deployment of machine learning force fields within traditional MD workflows. |

| Compute Hardware (CPU) | AMD Ryzen Threadripper, Intel Xeon Scalable [13] | Processors with high core counts and clock speeds for parallel computations in MD. |

| Compute Hardware (GPU) | NVIDIA RTX 4090, RTX 6000 Ada [13] | Graphics processing units that massively accelerate computationally intensive tasks in MD (e.g., particle-particle interactions). |

| Specialized Hardware | BIZON ZX Series Workstations [13] | Purpose-built workstations/servers optimized for MD, offering multi-GPU configurations, advanced cooling, and reliability. |

| Validation Data | NMR Relaxation, Chemical Shifts, SAXS, RDCs [7] | Critical experimental data used to validate and benchmark the accuracy of simulation results and force field performance. |

The field of molecular dynamics is navigating its core challenges through a combination of refined traditional algorithms and groundbreaking AI-driven approaches. For researchers, the choice of sampling technique and force field is not one-size-fits-all but must be guided by the specific biological system and the availability of experimental data for validation. The ongoing integration of machine learning promises to further bridge the gap between simulation and reality, enhancing the predictive power of MD in drug discovery and molecular sciences.

Molecular dynamics (MD) simulations and other computational approaches have revolutionized early drug discovery, enabling researchers to screen millions of compounds and study drug-target interactions at atomic resolution with unprecedented speed [14] [10]. These in silico methods have compressed discovery timelines that traditionally required years into months or even weeks, with AI-designed therapeutics now advancing to human trials across diverse therapeutic areas [10]. The integration of machine learning with molecular simulation has created particularly powerful platforms for predicting molecular behavior, generating novel compounds, and optimizing lead candidates [15] [16].

However, this reliance on computational approaches introduces a critical vulnerability: the validation gap between simulation predictions and biological reality. Despite technical advancements, the fundamental challenge remains that these simulations are based on theoretical models whose accuracy must be confirmed through experimental validation [17]. When simulations remain unvalidated, they generate elegant but potentially misleading narratives that can derail drug development programs, contributing to the persistent 90% failure rate in clinical drug development [18]. This article examines the consequences of this validation gap and provides frameworks for bridging it through rigorous experimental confirmation.

The High Stakes of Unvalidated Simulations

Scientific and Economic Consequences

The ramifications of unvalidated simulations extend throughout the drug development pipeline, creating both scientific and economic liabilities that may only become apparent after substantial resources have been invested.

Table 1: Consequences of Unvalidated Simulations in Drug Discovery

| Consequence Type | Specific Impact | Typical Stage When Discovered |

|---|---|---|

| Scientific Liabilities | Misleading mechanistic interpretations | Lead optimization phase |

| Scientific Liabilities | Incorrect binding affinity predictions | Preclinical validation |

| Scientific Liabilities | Overlooked toxicity or off-target effects | Clinical trials |

| Resource Impacts | Misallocated synthetic chemistry efforts | Early-to-mid discovery |

| Resource Impacts | Delayed program termination decisions | Multiple stages |

| Resource Impacts | Costly clinical trial failures | Phase II/III trials |

| Strategic Costs | Damaged research credibility | Publication/review |

| Strategic Costs | Compromised regulatory confidence | Regulatory submissions |

Unvalidated simulations can propagate errors through the entire research continuum. At the most fundamental level, they generate misleading mechanistic interpretations of drug-target interactions [17]. For instance, simulations might suggest stable binding modes that don't exist in physiological conditions or overlook critical solvent effects that significantly alter molecular interactions. These inaccurate models then inform compound optimization efforts, leading medicinal chemists to pursue structural modifications based on incorrect premises.

The resource impacts are equally significant. Research teams may spend months or years pursuing chemical series identified through virtual screening without experimental confirmation, creating substantial opportunity costs and delaying more promising avenues [14]. In the most extreme cases, unvalidated simulations contribute to clinical trial failures when compounds selected primarily on computational predictions demonstrate inadequate efficacy or unacceptable toxicity in human studies [18].

Case Studies: When Simulations Diverged from Reality

Several documented cases illustrate the tangible consequences of over-relying on unvalidated simulations:

Cellular Environment Oversimplification: In a simulation of a bacterial cytoplasm, a few copies of pyruvate dehydrogenase unfolded due to protein-protein interactions, while the majority remained stable [17]. This molecular heterogeneity would be missed in conventional simulations that assume uniform behavior, potentially leading to incorrect conclusions about structural stability.

AI-Designed Compound Termination: Exscientia's A2A antagonist program (EXS-21546) was halted after competitor data suggested it would likely not achieve a sufficient therapeutic index, despite advancing based on AI design [10]. This case demonstrates how even sophisticated algorithms may overlook critical therapeutic index considerations without sufficient experimental validation.

Potency Prediction Failures: Virtual screening applications rarely identify compounds with low nanomolar potency, with most hits showing only micromolar activity [14]. This significant potency gap between prediction and reality underscores the limitations of current simulation approaches in accurately quantifying binding energies.

Methodologies for Validating Simulation Results

Integrated Experimental Workflows

Closing the validation gap requires systematic approaches that integrate computational predictions with experimental verification throughout the drug discovery process. The workflow below illustrates this integrated validation approach:

This integrated workflow emphasizes that computational predictions should generate hypotheses that must be tested experimentally, with results informing iterative refinement of both the compounds and the computational models themselves.

Key Validation Assays and Their Applications

Table 2: Experimental Methods for Validating Simulation Predictions

| Validation Method | Key Applications in Validation | Information Provided | Typical Throughput |

|---|---|---|---|

| Cellular Thermal Shift Assay (CETSA) | Target engagement confirmation | Direct binding measurement in cells | Medium to High |

| Surface Plasmon Resonance (SPR) | Binding affinity and kinetics | kon, koff, and KD values | Medium |

| Isothermal Titration Calorimetry (ITC) | Binding thermodynamics | ΔH, ΔS, and binding stoichiometry | Low |

| Cryo-Electron Microscopy | Structural validation | Near-atomic resolution structures | Low |

| Nuclear Magnetic Resonance (NMR) | Dynamics and weak interactions | Residue-specific dynamics | Low to Medium |

| Functional Cellular Assays | Pathway modulation confirmation | Efficacy in physiological context | High |

Each validation method addresses specific aspects of simulation predictions. For example, CETSA has emerged as a leading approach for validating direct binding in intact cells and tissues, providing critical evidence of target engagement under physiologically relevant conditions [15]. Recent work applied CETSA with high-resolution mass spectrometry to quantify drug-target engagement of DPP9 in rat tissue, confirming dose- and temperature-dependent stabilization ex vivo and in vivo [15].

Biophysical methods like SPR and ITC provide quantitative binding data that can be directly compared to computational predictions. Significant discrepancies between predicted and measured binding energies often reveal limitations in the force fields or sampling methods used in simulations [17].
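
To compare a measured dissociation constant with a computed binding free energy, both must be on the same scale. The sketch below applies the standard relations ΔG° = RT ln(KD / 1 M) and ΔG = ΔH − TΔS, assuming a 1 M standard state and a temperature of 298.15 K; the function names and the sample values are illustrative.

```python
import math

R_KCAL_PER_MOL_K = 0.0019872041  # gas constant in kcal/(mol*K)


def kd_to_delta_g(kd_molar, temperature=298.15):
    """Standard binding free energy (kcal/mol) from a dissociation constant in M."""
    return R_KCAL_PER_MOL_K * temperature * math.log(kd_molar)


def delta_g_from_itc(delta_h, delta_s, temperature=298.15):
    """Binding free energy from ITC enthalpy (kcal/mol) and entropy (kcal/(mol*K))."""
    return delta_h - temperature * delta_s


# A nanomolar and a micromolar binder, for comparison.
print(kd_to_delta_g(1e-9))
print(kd_to_delta_g(1e-6))

# Hypothetical ITC result: favorable enthalpy, unfavorable entropy.
print(delta_g_from_itc(-10.0, -0.005))
```

A thousandfold difference in KD corresponds to only about 4 kcal/mol at room temperature, so errors of a few kcal/mol in a predicted binding energy are enough to confuse a micromolar hit with a nanomolar one.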

Structural biology techniques serve as critical validation tools when simulations predict specific binding modes or conformational changes. Cryo-EM and X-ray crystallography can provide experimental structures to confirm or refute computational predictions [17].

The Scientist's Toolkit: Essential Research Reagents and Platforms

Successful validation requires specialized reagents and platforms designed to test computational predictions under biologically relevant conditions.

Table 3: Essential Research Reagents and Platforms for Simulation Validation

| Reagent/Platform Type | Specific Function | Key Characteristics |

|---|---|---|

| CETSA Platform | Target engagement in cells/intact systems | Preserves cellular context, works with native proteins |

| Biacore SPR Systems | Label-free binding kinetics | Measures kon, koff, and KD in real-time |

| Microcal ITC Systems | Binding thermodynamics | Direct measurement of binding enthalpy |

| Stable Cell Lines | Functional cellular assays | Express target proteins at physiological levels |

| Organelle/Cell Models | Virtual screening in biological context | Incorporates molecular crowding effects [16] |

| AI-Enhanced MD Platforms | Improved simulation accuracy | Integrates machine learning with physical models [10] |

These tools enable researchers to test critical predictions from molecular simulations under conditions that increasingly approximate the complexity of living systems. For instance, advanced cell models now incorporate molecular crowding effects that significantly influence drug-target interactions but are frequently oversimplified in simulations [16]. Similarly, AI-enhanced platforms from companies like Schrödinger and Insilico Medicine integrate physical models with machine learning to improve prediction accuracy while maintaining the need for experimental confirmation [10].

Strategic Implementation of Validation Frameworks

The STAR Framework for Drug Optimization

To address systematic weaknesses in current optimization approaches that overemphasize computational predictions, researchers have proposed the Structure–Tissue Exposure/Selectivity–Activity Relationship (STAR) framework [18]. This approach classifies drug candidates based on both computational predictions and experimental measurements of tissue exposure and selectivity, creating a more comprehensive evaluation matrix:

The STAR framework addresses a critical limitation of structure-activity relationship (SAR) approaches that dominate computational drug optimization—the failure to adequately incorporate tissue exposure and selectivity data [18]. By considering both computational predictions and experimental tissue distribution data, the STAR framework provides a more accurate assessment of clinical potential before advancing candidates to costly development stages.

Regulatory Perspectives on Computational Predictions

Regulatory agencies are increasingly establishing frameworks for evaluating computational approaches in drug development. The FDA has released draft guidance proposing a risk-based credibility framework for AI models used in regulatory decision-making [19]. Similarly, the EU's AI Act classifies healthcare-related AI systems as "high-risk," imposing stringent requirements for validation, traceability, and human oversight [19].

These regulatory developments underscore the growing recognition that computational predictions alone are insufficient for drug approval. As noted in regulatory forums, "AI-powered healthcare solutions promising clinical benefit must meet the same evidence standards as therapeutic interventions they aim to enhance or replace" [20]. This principle extends to molecular simulations used in drug discovery, which require rigorous experimental validation to gain regulatory acceptance.

Molecular simulations represent powerful tools that have fundamentally transformed early drug discovery, enabling unprecedented exploration of chemical space and molecular interactions. However, their true value emerges only when balanced with rigorous experimental validation that tests computational predictions under biologically relevant conditions. The consequences of unvalidated simulations range from misdirected research efforts to costly clinical failures, contributing to the high attrition rates that plague drug development.

Bridging this validation gap requires systematic approaches that integrate computational and experimental methods throughout the discovery process. Frameworks like STAR that incorporate tissue exposure and selectivity data alongside traditional potency measurements provide more comprehensive candidate evaluation [18]. Similarly, validation workflows that combine multiple experimental techniques—from CETSA for cellular target engagement to biophysical methods for binding quantification—create redundant confirmation systems that protect against misleading computational predictions.

As AI and molecular simulation capabilities continue to advance, the fundamental requirement for experimental validation remains unchanged. The most successful drug discovery organizations will be those that strike the appropriate balance between computational power and experimental validation, creating iterative workflows where each approach informs and refines the other. In this integrated paradigm, simulations generate hypotheses rather than conclusions, and experimental validation serves as the essential bridge between digital predictions and clinical reality.

Molecular dynamics (MD) simulations provide a powerful computational microscope, enabling researchers to study the physical movements of atoms and molecules over time. The reliability of these simulations, however, depends entirely on the initial validation of the results against known physical properties. For researchers in computational chemistry, biophysics, and drug development, establishing a rigorous protocol for these initial checks is paramount. This guide compares key physical properties and observables used in validation, supported by experimental data and detailed methodologies, to provide a standardized framework for verifying simulation integrity before proceeding to production runs.

Essential Physical Properties for Validation

When initiating MD simulations, several fundamental physical properties serve as critical indicators of system stability and physical realism. The properties outlined in the table below provide a quantitative foundation for these initial validation checks.

Table 1: Key Physical Properties and Observables for Initial MD Validation

| Physical Property | Description | Calculation Method | Expected Outcome for Validation |

|---|---|---|---|

| Radial Distribution Function (RDF) | Measures how particle density varies as a function of distance from a reference particle. [21] | $$g(r) = \frac{\langle \rho(r) \rangle}{\langle \rho \rangle_{\text{local}}}$$ where $\langle \rho(r) \rangle$ is the density at distance $r$ and $\langle \rho \rangle_{\text{local}}$ is the average density. [21] | Sharp, periodic peaks for crystals; broad, decaying peaks for liquids/amorphous materials; convergence to 1 for gases. [21] |

| Diffusion Coefficient | Quantifies the mobility of ions or molecules within the system. [21] | Calculated from the slope of the Mean Square Displacement (MSD) vs. time plot: $D = \frac{1}{6} \lim_{t \to \infty} \frac{d}{dt} \langle \lvert r_i(t) - r_i(0) \rvert^2 \rangle$. [21] | Consistent with experimental values (e.g., from NMR); linear MSD increase in the diffusive regime. [21] |

| System Potential Energy | The total potential energy of the simulated system, indicative of its stability. | Time-average of the potential energy computed by the force field after the equilibration phase. | Fluctuates around a stable average value, indicating a well-equilibrated system. |

| Pressure & Temperature | Macroscopic thermodynamic properties of the ensemble. | Time-averages of the instantaneous pressure and temperature during simulation. | Averages match the target values set for the NPT or NVT ensemble (e.g., 300 K, 1 bar). |

| Solvation Free Energy | The free energy change associated with solvating a molecule. | Methods like Thermodynamic Integration (TI) or Free Energy Perturbation (FEP). | Matches experimentally measured solvation (hydration) free energies within a margin of ~1 kcal/mol. |

The choice of hardware significantly impacts the feasibility and cost of MD simulations, especially for large-scale or high-throughput projects. The following data provides a performance and cost comparison of various GPU resources.

Table 2: GPU Performance and Cost Benchmark for MD Simulations (T4 Lysozyme, ~44,000 atoms) [22]

| GPU Model | Provider | Speed (ns/day) | Cost per 100 ns (Indexed to AWS T4) | Primary Use Case |

|---|---|---|---|---|

| NVIDIA H200 | Nebius | 555 | 87 (13% reduction) | Peak performance, AI/ML-enhanced workflows. [22] |

| NVIDIA L40S | Nebius/Scaleway | 536 | <70 (>30% reduction) | Best value for traditional MD. [22] |

| NVIDIA H100 | Scaleway | 450 | Information Missing | Workloads requiring large VRAM and storage. [22] |

| NVIDIA A100 | Hyperstack | 250 | Information Missing | Budget-friendly, consistent performance. [22] |

| NVIDIA V100 | AWS | 237 | 177 (77% increase) | Legacy option, poor cost-efficiency. [22] |

| NVIDIA T4 | AWS | 103 | 100 (Baseline) | Budget option for long, non-urgent simulation queues. [22] |
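The indexed cost column follows from throughput and an hourly price. The sketch below is illustrative only: the speeds are copied from Table 2, while the function names and any hourly prices passed in are assumptions, since the table does not list prices.

```python
# Throughput values (ns/day) as reported in Table 2.
SPEED_NS_PER_DAY = {
    "H200": 555, "L40S": 536, "H100": 450,
    "A100": 250, "V100": 237, "T4": 103,
}

def hours_for(ns_target, ns_per_day):
    """Wall-clock hours needed to simulate ns_target nanoseconds."""
    return 24.0 * ns_target / ns_per_day

def cost_index(price_per_hour, ns_per_day, base_price_per_hour, base_ns_per_day):
    """Cost per 100 ns relative to a baseline GPU (baseline = 100).

    Prices are hypothetical inputs; substitute your provider's actual rates.
    """
    cost = price_per_hour * hours_for(100.0, ns_per_day)
    base = base_price_per_hour * hours_for(100.0, base_ns_per_day)
    return 100.0 * cost / base

for gpu, speed in SPEED_NS_PER_DAY.items():
    print(f"{gpu}: {hours_for(100.0, speed):.1f} h per 100 ns")
```

On these throughputs a 100 ns run takes roughly 4.3 hours on the H200 and 23.3 hours on the T4. A faster GPU is therefore cheaper per nanosecond only when its hourly price is less than its speed-up factor times the baseline price.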

Experimental Protocols for Validation

Protocol 1: Calculating the Radial Distribution Function (RDF)

The RDF is a fundamental tool for validating the structural fidelity of a simulated system against known experimental data or theoretical expectations. [21]

- Trajectory Preparation: Use a production-level MD trajectory that has been properly equilibrated. Ensure the trajectory is centered and has any periodic boundary artifacts removed.

- Reference and Target Selection: Define the sets of atoms between which the RDF will be computed (e.g., oxygen-oxygen atoms in water, or carbon-nitrogen atoms between a solute and solvent). [23]

- Distance Histogramming: For each frame in the trajectory, compute a histogram of distances between all particles in the target set and all particles in the reference set.

- Normalization: Normalize the histogram by the volume of each spherical shell and the average number density of the target particles in the entire system. This yields $g(r)$. [21]

- Averaging and Validation: Average $g(r)$ over all frames in the trajectory. Compare the resulting RDF to experimental data from techniques like X-ray or neutron diffraction to validate the simulated structure. [21]
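The histogramming, normalization, and averaging steps above can be sketched with NumPy. This is a minimal illustration under stated assumptions, not a replacement for a trajectory-analysis package: it assumes a cubic periodic box, floating-point coordinates already loaded as arrays, and a system small enough for an all-pairs distance matrix. The function names are hypothetical.

```python
import numpy as np

def radial_distribution(ref, tgt, box_length, r_max, n_bins=100):
    """g(r) for one frame in a cubic periodic box of edge box_length.

    ref, tgt: (N, 3) float coordinate arrays. Pass the same array object
    twice for a like-like RDF (e.g., O-O in water) so self-pairs are excluded.
    """
    if r_max > box_length / 2:
        raise ValueError("r_max must not exceed half the box length")
    same = ref is tgt
    # All pair displacement vectors, wrapped with the minimum-image convention.
    delta = ref[:, None, :] - tgt[None, :, :]
    delta -= box_length * np.round(delta / box_length)
    dist = np.linalg.norm(delta, axis=-1)
    if same:
        dist = dist[~np.eye(len(ref), dtype=bool)]  # drop zero self-distances
    counts, edges = np.histogram(dist, bins=n_bins, range=(0.0, r_max))
    # Expected pair count per shell for an ideal gas at the target density.
    shell_volume = 4.0 / 3.0 * np.pi * (edges[1:] ** 3 - edges[:-1] ** 3)
    n_tgt = len(tgt) - 1 if same else len(tgt)
    ideal = len(ref) * (n_tgt / box_length ** 3) * shell_volume
    r = 0.5 * (edges[1:] + edges[:-1])
    return r, counts / ideal

def trajectory_rdf(frames, box_length, r_max, n_bins=100):
    """Average the like-like g(r) over an iterable of (N, 3) frames."""
    curves = [radial_distribution(f, f, box_length, r_max, n_bins) for f in frames]
    r = curves[0][0]
    g = np.mean([c[1] for c in curves], axis=0)
    return r, g
```

For uniformly random (ideal-gas) coordinates the result should fluctuate around 1 at all distances, which is a quick sanity check before comparing against diffraction data.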

Protocol 2: Calculating the Diffusion Coefficient from MSD

This protocol quantifies molecular mobility, a key dynamic property that can be directly compared with experimental results. [21]

- Mean Square Displacement Calculation: For the particle of interest (e.g., an ion or water molecule), calculate the MSD from the trajectory: $MSD(t) = \langle \lvert r_i(t) - r_i(0) \rvert^2 \rangle$, where the average is over all particles of the same type and multiple time origins. [21]

- Identify the Diffusive Regime: Plot the MSD as a function of time. For sufficiently long times, the motion becomes diffusive, and the MSD plot will become linear.

- Linear Regression: Perform a linear regression on the MSD curve in the diffusive regime.

- Apply Einstein Relation: Calculate the diffusion coefficient $D$ using the slope of the linear fit: $D = \frac{1}{6} \times \text{slope}$ for a 3-dimensional system. [21]

- Experimental Comparison: Validate the computed $D$ against experimental values obtained from techniques such as pulsed-field gradient NMR (PFG-NMR).
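The MSD and Einstein-relation steps above can be sketched as follows. This is a minimal NumPy example that assumes unwrapped coordinates (no periodic jumps) and a fit window chosen by inspecting the MSD plot. The function names and the fit-window arguments are illustrative choices, not part of any specific package.

```python
import numpy as np

def mean_square_displacement(positions, max_lag):
    """MSD(t) averaged over particles and time origins.

    positions: (n_frames, n_particles, 3) array of unwrapped coordinates.
    Returns an array of length max_lag + 1 with msd[0] = 0.
    """
    msd = np.zeros(max_lag + 1)
    for lag in range(1, max_lag + 1):
        disp = positions[lag:] - positions[:-lag]  # every time origin at once
        msd[lag] = np.mean(np.sum(disp ** 2, axis=-1))
    return msd

def diffusion_coefficient(msd, dt, fit_start, fit_stop, dim=3):
    """Einstein relation: D = slope / (2 * dim), from a linear fit of MSD(t).

    fit_start and fit_stop are frame-lag indices bounding the diffusive regime.
    """
    t = np.arange(len(msd)) * dt
    slope, _intercept = np.polyfit(t[fit_start:fit_stop], msd[fit_start:fit_stop], 1)
    return slope / (2 * dim)
```

The units of $D$ follow from the inputs: coordinates in nm and `dt` in ps give nm²/ps. Excluding the shortest lags from the fit avoids the ballistic regime, and excluding the longest avoids the poorly averaged tail.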

Workflow Visualization for MD Validation

The following diagram illustrates the logical workflow for the initial validation of a molecular dynamics simulation, integrating the key physical properties and checks described above.

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful validation often relies on accurate modeling of the chemical environment. The following table details key components used in MD simulations of biochemical and materials systems.

Table 3: Essential Reagents and Materials for Molecular Dynamics Simulations

| Reagent/Material | Function in Simulation | Example Use Case |

|---|---|---|

| Explicit Water Models (e.g., TIP3P, SPC/E) | Explicitly represents solvent water molecules, crucial for simulating solvation effects and hydrogen bonding. [24] | Simulating protein folding, ligand binding, and ion transport in a biologically realistic environment. [25] |

| Ions (e.g., Na+, K+, Cl-) | Neutralize system charge and model specific ionic concentrations, affecting electrostatics and protein stability. [25] | Replicating physiological salt conditions (e.g., 150 mM NaCl) in simulations of biomolecules. [25] |

| Lipid Membranes (e.g., POPC, DPPC) | Forms a phospholipid bilayer to model cell membranes, essential for studying membrane proteins. [26] | Simulating the behavior of GPCRs, ion channels, and other integral membrane proteins. [26] |

| Force Fields (e.g., CHARMM, AMBER, OPLS-AA) | Defines the potential energy function and parameters governing interatomic interactions (bonded and non-bonded). [5] | Determining the accuracy of simulated molecular structures, dynamics, and energies. [24] [5] |

| Co-solvents & Additives (e.g., NMP, DETA) | Models the presence of specific organic solvents or chemical agents in the system. [23] | Studying specialized chemical processes, such as CO2 capture in biphasic solvent systems. [23] |

Advanced Techniques and Practical Protocols for Robust Simulation Workflows

Molecular dynamics (MD) simulations provide a "virtual molecular microscope" for investigating biological, chemical, and physical phenomena at the atomistic level. [27] However, the predictive capability of MD is limited by the sampling problem—the challenge that lengthy simulations are required to accurately describe certain dynamical properties. [27] This is particularly true for systems characterized by rugged free energy landscapes, where high energy barriers separate functionally relevant metastable states, making transitions between these states rare events on the timescales of conventional MD. [28] [29]

Umbrella Sampling (US) is a widely used enhanced sampling technique that addresses this challenge by applying bias potentials along carefully selected collective variables (CVs) to facilitate exploration of the entire free energy landscape. [29] However, traditional US faces two significant limitations: inadequate sampling of high-free-energy regions and difficulty in controlling the distributions of sampling windows. [30] These limitations can lead to poor convergence and inaccurate reconstruction of free energy surfaces, particularly near transition states and steep free-energy gradients. [30]

Recent methodological advances have introduced optimization-based approaches that automatically adjust bias potentials to improve sampling efficiency. This comparison guide examines one such approach—Automated Bias Potential Optimization via Position and Variance Control in Umbrella Sampling—and objectively evaluates its performance against standard US and alternative enhanced sampling methods.

Methodological Framework

Standard Umbrella Sampling

Standard Umbrella Sampling enhances sampling by partitioning the CV space into multiple windows and applying harmonic biases to confine sampling within each window. [29] The potential energy for each window is given by:

$$U_i(\mathbf{R}) = U_0(\mathbf{R}) + \frac{1}{2}k_i\left(\xi(\mathbf{R}) - \xi_i^0\right)^2$$

where $U_0(\mathbf{R})$ is the unbiased potential energy, $k_i$ is the force constant, $\xi(\mathbf{R})$ is the collective variable, and $\xi_i^0$ is the center of the i-th window. The weighted histogram analysis method (WHAM) is then used to combine data from all windows and reconstruct the unbiased free energy surface. [29]
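To make the WHAM step concrete, here is a minimal one-dimensional sketch of the standard self-consistent WHAM equations for harmonic windows. It assumes a single force constant shared by all windows, histograms already accumulated on a common grid, and energies expressed in the same units as `kT`. The function name `wham_1d` and its arguments are illustrative, not the API of any particular package.

```python
import numpy as np

def wham_1d(counts, centers, k_spring, bin_centers, kT=1.0, tol=1e-10, max_iter=100000):
    """Free energy along a 1D CV from umbrella-window histograms.

    counts: (n_windows, n_bins) histogram of the CV in each window.
    centers: (n_windows,) window centers xi_i^0.
    Returns the free energy per bin, shifted so its minimum is zero.
    """
    counts = np.asarray(counts, dtype=float)
    n_samples = counts.sum(axis=1)  # N_i, samples per window
    # Harmonic bias of window i evaluated at every bin center.
    bias = 0.5 * k_spring * (bin_centers[None, :] - centers[:, None]) ** 2
    boltz = np.exp(-bias / kT)
    numer = counts.sum(axis=0)
    f = np.zeros(len(centers))  # per-window free-energy offsets f_i
    for _ in range(max_iter):
        denom = (n_samples[:, None] * np.exp(f[:, None] / kT) * boltz).sum(axis=0)
        prob = numer / denom  # unbiased probability estimate per bin
        f_new = -kT * np.log((boltz * prob[None, :]).sum(axis=1))
        f_new -= f_new[0]  # remove the arbitrary additive constant
        converged = np.max(np.abs(f_new - f)) < tol
        f = f_new
        if converged:
            break
    with np.errstate(divide="ignore"):
        free = -kT * np.log(prob)  # empty bins become +inf
    return free - free[np.isfinite(free)].min()
```

Bins that no window visited come back as infinite free energy, which makes gaps in window overlap easy to spot before interpreting the profile.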

The limitations of this approach include:

- Inadequate sampling of transition regions between metastable states [30]

- Difficulty in selecting optimal window positions and force constants a priori [30]

- Susceptibility to poor convergence when windows insufficiently overlap [30]

Automated Bias Potential Optimization

The automated optimization method introduces a refined approach that explicitly controls both the positions and variances of sampling distributions. [30] Key innovations include:

- Target Gaussian distributions with imposed upper bounds on variance to prevent excessive broadening [30]

- Optimization of bias potentials to ensure stable, unimodal sampling within each window [30]

- Adaptive adjustment of window positions and force constants during simulation [30]

This method employs a mathematical framework that minimizes the difference between the actual sampling distribution and the target distribution, effectively automating the tedious process of parameter tuning that often plagues conventional US simulations.
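The idea of steering each window toward a target distribution can be illustrated with a toy moment-matching loop. This is not the published optimization algorithm: it is a simplified sketch that assumes a known one-dimensional free energy on a grid, computes the biased distribution exactly instead of sampling it, and uses hypothetical function names. It shifts the window center until the biased mean hits a target position and stiffens the force constant only when the variance exceeds an upper bound.

```python
import numpy as np

def biased_moments(x, free_energy, center, k, kT=1.0):
    """Mean and variance of exp(-(F + harmonic bias)/kT) on the grid x."""
    energy = free_energy + 0.5 * k * (x - center) ** 2
    weights = np.exp(-(energy - energy.min()) / kT)  # shift avoids underflow
    weights /= weights.sum()
    mean = np.sum(weights * x)
    var = np.sum(weights * (x - mean) ** 2)
    return mean, var

def tune_window(x, free_energy, target_mean, max_var, center, k, kT=1.0, n_iter=50):
    """Adjust center and force constant toward a target mean and bounded variance."""
    for _ in range(n_iter):
        mean, var = biased_moments(x, free_energy, center, k, kT)
        if var > max_var:
            # For a locally quadratic landscape var = kT / (k + F''), so this
            # increment brings the variance down to the bound in one step.
            k += kT * (1.0 / max_var - 1.0 / var)
        center += target_mean - mean  # compensate the pull of the local slope
    return center, k

# Example: a window meant to sit on the steep flank of a double well.
x = np.linspace(-2.0, 2.0, 2001)
F = 3.0 * (x ** 2 - 1.0) ** 2
center, k = tune_window(x, F, target_mean=0.5, max_var=0.01, center=0.5, k=20.0)
```

In the example the tuned center ends up displaced from the target position, because the bias must counteract the local free-energy gradient. That displacement is the quantity manual window placement usually gets wrong.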

Table 1: Key Components of Automated Bias Potential Optimization

| Component | Function | Advantage over Standard US |

|---|---|---|

| Variance Control | Prevents excessive broadening of sampling distributions | Maintains appropriate resolution across CV space |

| Position Optimization | Adaptively adjusts window centers during simulation | Eliminates need for manual window placement |

| Target Distribution | Uses Gaussian distributions with bounded variance | Ensures stable, unimodal sampling in each window |

| Optimization Algorithm | Systematically adjusts bias potentials | Automates parameter tuning for optimal sampling |

Experimental Protocols and Performance Comparison

Benchmark Systems and Evaluation Methodology

The performance of automated bias potential optimization has been evaluated on several benchmark systems:

- 2D Wolfe-Quapp Potential: A standard model for testing enhanced sampling methods, featuring multiple metastable states and significant barriers. [30]

- Alanine Dipeptide in Water: A classic biomolecular system with well-characterized conformational transitions between alpha-helical and extended basins. [30]

- RNA Oligonucleotides: Complex biomolecules with conformational dynamics crucial for biological function. [31]

Evaluation metrics include:

- Convergence Rate: How quickly the free energy estimate stabilizes with simulation time

- Accuracy: Comparison against reference free energies from long simulations or analytical solutions

- Transition State Sampling: Ability to adequately sample high-energy regions between basins

Quantitative Performance Comparison

Table 2: Performance Comparison of Standard vs. Optimized Umbrella Sampling

| Method | Accuracy on Wolfe-Quapp Potential | Convergence Rate | Transition State Sampling | Parameter Sensitivity |

|---|---|---|---|---|

| Standard US (weak bias) | <80% | Slow | Inadequate | High |

| Standard US (strong bias) | 95% | Moderate | Moderate | Medium |

| Automated Optimization | >99% | Fast | Excellent | Low |

| Well-Tempered Metadynamics | ~90% | Moderate | Good | Medium |

| Adaptive Biasing Force | ~85% | Moderate | Moderate | Medium |

The data in Table 2 show that, for the Wolfe-Quapp potential, non-optimized US simulations reached at best 95% agreement with reference values, even with an optimally tuned bias strength. [30] In contrast, every optimized simulation converged better than its non-optimized counterpart, with markedly improved accuracy near saddle points and steep free-energy gradients. [30]

For complex biomolecular systems like alanine dipeptide, the optimized approach demonstrated faster convergence in reconstructing free energy landscapes, particularly for transitions between conformational states. [30]

Integration with Molecular Dynamics Workflows

Implementation in Sampling Libraries

The automated bias potential optimization method is implemented on top of the widely used PLUMED enhanced sampling library, making it accessible to researchers using various MD packages. [30] The code is openly available on GitHub (https://github.com/YukiMitsuta/plumed_USopt). [30]

PySAGES, a Python-based suite for advanced general ensemble simulations, provides complementary enhanced sampling capabilities and supports multiple MD backends including HOOMD-blue, OpenMM, LAMMPS, and JAX MD. [29] While PySAGES currently offers standard Umbrella Sampling among its methods, the flexible architecture allows for implementation of optimized approaches like the one discussed here.

Table 3: Research Reagent Solutions for Enhanced Sampling

| Tool/Resource | Function | Availability |

|---|---|---|

| PLUMED Plugin | Enhanced sampling library | Open source |

| PySAGES Library | Advanced sampling methods | Open source |

| SSAGES Suite | Sampling and analysis tools | Open source |

| Automated USopt Code | Bias potential optimization | GitHub (YukiMitsuta/plumed_USopt) |

| Collective Variables Module | Definition of reaction coordinates | Part of PLUMED/PySAGES |

Workflow Integration

The following diagram illustrates the integrated workflow for automated bias potential optimization in molecular dynamics simulations:

Diagram Title: Automated Bias Optimization Workflow

Broader Context: Validation of Molecular Simulations

The Validation Framework

The development and assessment of enhanced sampling methods must be viewed within the broader context of validating molecular simulations. As highlighted in a comprehensive study comparing MD simulation packages, even different software using the same force field can produce subtly different conformational distributions and sampling extents. [27] This leads to ambiguity about which results are correct, as experiments cannot always provide the necessary detailed information to distinguish between underlying conformational ensembles. [27]

The importance of validation is further emphasized by recent initiatives such as the Reliability and Reproducibility Checklist for Molecular Dynamics Simulations published in Communications Biology. [32] This checklist emphasizes:

- Convergence Analysis: Multiple independent simulations with statistical analysis to demonstrate property convergence [32]

- Connection to Experiments: Discussion of physiological relevance in connection with published experimental data [32]

- Method Justification: Appropriate choice of model, resolution, and force field for the specific research question [32]

- Code and Data Availability: Provision of simulation parameters, input files, and final coordinates for reproducibility [32]

Uncertainty Quantification

Proper uncertainty quantification is essential for both standard and enhanced sampling methods. Best practices include [33]:

- Tiered Approach: Begin with feasibility assessments before full simulations

- Multiple Replicas: Perform at least three independent simulations for statistical analysis [32]

- Correlation Analysis: Account for temporal correlations in time-series data

- Standard Uncertainty Reporting: Express uncertainties in terms of standard deviations [33]
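The replica and correlation items above can be sketched with two small helpers. This is a minimal illustration assuming the observable is available as a NumPy time series. Block averaging is one common way to account for temporal correlation, chosen here for simplicity, and the function names are hypothetical.

```python
import numpy as np

def block_standard_error(series, n_blocks=10):
    """Standard error of the mean from non-overlapping block averages.

    Blocks must be much longer than the correlation time for the estimate
    to be reliable; trailing frames that do not fill a block are dropped.
    """
    series = np.asarray(series, dtype=float)
    block_len = len(series) // n_blocks
    blocks = series[: block_len * n_blocks].reshape(n_blocks, block_len)
    block_means = blocks.mean(axis=1)
    return block_means.std(ddof=1) / np.sqrt(n_blocks)

def replica_mean_and_error(replica_values):
    """Mean and standard error across independent replicas (one value each)."""
    values = np.asarray(replica_values, dtype=float)
    return values.mean(), values.std(ddof=1) / np.sqrt(len(values))
```

For a correlated series the naive estimate, `series.std(ddof=1) / sqrt(n)`, understates the uncertainty. Repeating the block estimate for several block counts and checking that it plateaus is a quick convergence diagnostic.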

For free energy calculations from umbrella sampling, uncertainty estimates should include contributions from:

- Window-to-window variations in sampling quality

- Statistical errors in the WHAM reconstruction

- Convergence uncertainties from finite simulation time

Comparative Analysis with Alternative Methods

Performance Relative to Other Enhanced Sampling Techniques

When compared to other enhanced sampling methods, automated bias potential optimization in US offers distinct advantages and limitations:

Table 4: Comparison of Enhanced Sampling Methods

| Method | Strengths | Weaknesses | Best Use Cases |

|---|---|---|---|

| Automated US Optimization | Excellent convergence, automated parameter tuning, preserves thermodynamic properties | Requires predefined CVs, limited for very high-dimensional CV spaces | Free energy calculations, barrier crossing |

| Metadynamics [29] | Exploratory sampling, no need for predefined windows | Deposition history dependence, more complex reweighting | Unknown systems, path finding |

| Adaptive Biasing Force [29] | Direct mean force estimation, efficient for low-dimensional CVs | Noise sensitivity, requires differentiable CVs | Gradients of free energy |

| Forward Flux Sampling [29] | Rare events with known order parameter, exact kinetics | Complex implementation, requires reaction coordinate | Kinetic rates, transition paths |

Synergies with Machine Learning Approaches

Recent advances integrate machine learning with enhanced sampling, creating powerful synergies:

- Neural Network Potentials: Machine-learning potentials trained on higher-level quantum chemistry data can improve accuracy while maintaining efficiency. [34]

- Automatic CV Discovery: Deep learning approaches can identify meaningful CVs that correlate with slow degrees of freedom. [29]

- Dimensionality Reduction: ML techniques can project high-dimensional data onto low-dimensional manifolds relevant for biological function. [29]

The combination of automated bias optimization with machine learning potentials represents a promising direction for further improving the accuracy and reliability of molecular simulations. [34]

Automated bias potential optimization in umbrella sampling represents a significant advancement over standard US methods, addressing key limitations in sampling efficiency and convergence. The method's ability to explicitly control both positions and variances of sampling distributions leads to improved accuracy in free energy reconstruction, particularly near transition states and steep free-energy gradients. [30]

When evaluated within the broader context of molecular simulation validation, this approach demonstrates the importance of robust sampling methodologies that can produce reliable, reproducible results. The integration of such methods with emerging machine learning techniques and their implementation in accessible software libraries promises to further enhance their utility and adoption.

For researchers in drug development and molecular sciences, automated bias potential optimization offers a powerful tool for investigating binding thermodynamics, conformational changes, and other processes governed by complex free energy landscapes. As the field moves toward increasingly complex systems and longer timescales, such advanced sampling methods will play an indispensable role in bridging the gap between simulation and experiment.

Molecular dynamics (MD) simulations serve as a cornerstone for understanding atomic-scale processes in materials science and drug discovery. However, a persistent challenge has been the trade-off between accuracy and computational efficiency. Ab initio methods, such as Density Functional Theory (DFT), provide high accuracy but at computational costs that prohibit simulations of large systems or long timescales. Conversely, classical molecular mechanics (MM) force fields offer efficiency but often lack the quantum-mechanical accuracy required for modeling complex chemical environments [25] [35]. Machine-learned force fields (ML-FFs) have emerged as a transformative solution to this challenge, leveraging machine learning to approximate potential energy surfaces from reference quantum mechanical calculations [36] [35]. This guide provides a comparative analysis of contemporary ML-FF methodologies, with a specific focus on their performance and application for complex fluids—a class of materials exhibiting particularly intricate free energy landscapes.

The fundamental operating principle of ML-FFs involves using mathematical models trained on high-fidelity data—typically energies, forces, and virial stresses from DFT calculations—to predict atomic interactions. Unlike conventional force fields that rely on fixed analytical forms, ML-FFs use descriptors to represent atomic environments, which are fed into a machine learning algorithm to predict the system's energy [35]. When successfully trained, these models can achieve accuracy close to that of the underlying quantum mechanical method while maintaining computational costs comparable to classical force fields, thereby enabling the study of phenomena like diffusion, crystallization, and long-time scale molecular interactions in realistic systems [36] [35].

Comparative Analysis of Machine-Learned Force Field Approaches

Different ML-FF strategies have been developed to address specific challenges in molecular modeling. The table below compares several prominent approaches, highlighting their respective methodologies, strengths, and limitations.

Table 1: Comparison of Machine-Learned Force Field Methodologies

| Method Name | Core Methodology | Target System | Key Advantage | Reported Limitation |

|---|---|---|---|---|

| Gaussian Approximation Potential (GAP) with SOAP [36] | Uses Smooth Overlap of Atomic Positions (SOAP) descriptors and Gaussian process regression. | Molecular liquid mixtures (e.g., EC:EMC battery electrolyte). | High data efficiency; can be iteratively refined. | Prone to instability in NPT simulations if inter-molecular interactions are poorly learned. |

| Grappa [37] | Graph neural network predicting parameters for a classical MM force field functional form. | Small molecules, peptides, proteins, RNA. | MM computational cost; high transferability to macromolecules (e.g., viruses). | Does not describe bond-breaking/formation (reactive systems). |

| Fused Data Learning [9] | Simultaneously trains on both DFT data and experimental properties. | Crystalline materials (e.g., titanium). | Corrects inherent inaccuracies of the base DFT functional. | Requires diverse and high-quality experimental data. |

| Enhanced Sampling Pipeline [38] | Integrates enhanced sampling techniques into the ML-FF training data generation process. | Complex fluids with intricate free energy landscapes (e.g., liquid crystals). | Enables stable, nanosecond-scale simulations of complex fluids. | Increased complexity in the initial data generation workflow. |

| Universal ML-FFs (UMLFFs) [39] | Trained on broad materials datasets to be applicable across the periodic table. | Wide range of mineral structures and chemistries. | Generalizability across diverse chemical spaces. | "Reality gap"; performance on computational benchmarks does not always translate to experimental agreement. |

A critical consideration for ML-FFs is their performance against experimental measurements. A recent large-scale evaluation of Universal ML-FFs (UMLFFs) revealed a substantial "reality gap," where models with impressive performance on computational benchmarks showed significantly higher errors when predicting experimentally measured densities and elastic properties [39]. This underscores the necessity of experimental validation, as even advanced models may be unreliable when extrapolating to experimentally complex chemical spaces.

Performance Benchmarks and Key Challenges

The ultimate test for any ML-FF is its performance in practical molecular dynamics simulations, particularly for demanding tasks like reproducing thermodynamic properties.

Quantitative Performance Metrics

The following table summarizes key quantitative results from recent studies, illustrating the performance of different ML-FFs on specific benchmark tasks.

Table 2: Quantitative Performance Benchmarks of Selected ML-FFs

| Model / System | Target Property | Performance Result | Comparative Baseline |

|---|---|---|---|

| GAP for EC:EMC Solvent [36] | Stable NPT MD (density) | Models trained on fixed datasets failed (bubble formation, density collapse). | Iterative training was required for stable dynamics and correct density prediction. |

| Grappa for Peptides/Proteins [37] | Folding free energy (Chignolin) | Improved folding free energy calculation. | Outperformed traditional and other machine-learned MM force fields (Espaloma). |

| Fused Data (Ti) [9] | Force Error (DFT test set) | ~50 meV/Å (slight increase from DFT-only model). | DFT-only model error was below 43 meV/Å (chemical accuracy). |

| Enhanced Sampling (Liquid Crystal) [38] | Simulation Stability | Stable simulations for tens of nanoseconds. | Traditional training led to stability only over hundreds of picoseconds. |

Critical Challenges for Complex Fluids

Modeling complex fluids like liquid electrolytes or liquid crystals presents unique difficulties. A primary challenge is the separation of scales between strong, directional intra-molecular interactions and subtle, weak inter-molecular interactions that govern thermodynamic properties like density and viscosity [36]. Standard training often results in models that excel at intra-molecular forces but fail to capture inter-molecular forces accurately. This can lead to simulation instabilities, such as the spontaneous formation of bubbles in the liquid phase, which is often only apparent in the isothermal-isobaric (NPT) ensemble where density fluctuates [36]. Furthermore, the heterogeneous environments in molecular mixtures and the complex free energy landscapes of fluids like liquid crystals demand robust and diverse training sets to avoid poor generalization [36] [38].

Experimental Protocols and Validation Workflows

Robust training and validation are paramount for developing reliable ML-FFs. The following workflows, derived from recent literature, outline proven methodologies.

Iterative Training for Molecular Liquids

A protocol developed for a binary solvent (EC:EMC) highlights how to achieve stable dynamics.

Diagram 1: Iterative ML-FF Training

Detailed Protocol:

- Initial Data Generation: Generate an initial diverse set of atomic configurations using a classical force field (e.g., OPLS). Sampling should cover a wide range of densities (e.g., 0.4–1.3 g cm⁻³) and elevated temperatures to ensure fluidity and explore diverse molecular environments [36].

- Quantum Mechanical Calculation: Compute the target energies, forces, and virials for these configurations using a high-fidelity method like DFT [36].

- Model Training: Train an initial ML-FF, such as a Gaussian Approximation Potential (GAP) with Smooth Overlap of Atomic Positions (SOAP) descriptors, on the QM data [36].

- Simulation and Validation: Run an MD simulation in the NPT ensemble using the newly trained ML-FF. The NPT ensemble is critical as it is highly sensitive to errors in inter-molecular forces [36].

- Iterative Refinement: If the simulation exhibits unphysical behavior (e.g., density collapse or bubble formation), collect new configurations from these unstable trajectories. Add them to the training set and retrain the model. This loop is repeated until stable dynamics are achieved [36].

- Extended Sampling: To improve generalizability, include specialized data in the training set, such as isolated molecules, rigid-molecule volume scans, and multiple molecular compositions [36].
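The control flow of the protocol above can be sketched as a short loop. Every callable here (`label`, `train`, `run_npt`, `is_stable`) is a hypothetical placeholder for the user's own DFT, fitting, MD, and stability-check code. The sketch only captures the retrain-until-stable logic, not any specific GAP or DFT interface.

```python
def iterative_training(initial_configs, label, train, run_npt, is_stable, max_rounds=10):
    """Retrain an ML force field until an NPT run with it is stable.

    label(config)     -> reference energies/forces/virials (e.g., from DFT)
    train(dataset)    -> fitted model
    run_npt(model)    -> list of configurations visited in an NPT simulation
    is_stable(traj)   -> True if no density collapse or bubble formation
    Returns the accepted model and the number of training rounds used.
    """
    dataset = [(config, label(config)) for config in initial_configs]
    for round_idx in range(max_rounds):
        model = train(dataset)
        trajectory = run_npt(model)
        if is_stable(trajectory):
            return model, round_idx + 1
        # Feed the failure back: label configurations from the unstable run.
        dataset.extend((config, label(config)) for config in trajectory)
    raise RuntimeError("no stable model within max_rounds; revisit sampling strategy")
```

In practice one would subsample the unstable trajectory before labeling, since each added configuration costs a DFT calculation. The sketch adds every frame only to keep the logic visible.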

Enhanced Sampling for Complex Fluids

For systems with complex energy landscapes, enhanced sampling during data generation is highly effective.

Diagram 2: Enhanced Sampling Workflow

Detailed Protocol:

- Enhanced Sampling: Use techniques like metadynamics or parallel tempering to generate training configurations. This ensures the data broadly covers the complex free energy landscape of the fluid, capturing rare but important transitions [38].

- DFT and Training: Compute DFT reference data and train the ML-FF as in the standard protocol [38].

- Validation: The key success criterion is whether the final ML-FF can sustain stable, nanosecond-scale MD simulations of large organic molecular systems (thousands of atoms), which models trained with traditional approaches fail to do [38].
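To make the parallel tempering option concrete, the sketch below builds a geometric temperature ladder and evaluates the standard Metropolis criterion for swapping configurations between two replicas. It is an illustration only: the geometric spacing is a common heuristic rather than a choice documented in [38], and the temperature range, replica count, and energies are hypothetical.

```python
import math

K_B = 0.0019872041  # Boltzmann constant in kcal/(mol*K)


def geometric_ladder(t_min: float, t_max: float, n_replicas: int) -> list:
    """Temperatures (K) spaced geometrically between t_min and t_max."""
    if n_replicas < 2:
        raise ValueError("need at least two replicas")
    ratio = (t_max / t_min) ** (1.0 / (n_replicas - 1))
    return [t_min * ratio**k for k in range(n_replicas)]


def swap_probability(e_i: float, e_j: float, t_i: float, t_j: float) -> float:
    """Metropolis acceptance for exchanging configurations between replicas.

    p = min(1, exp[(beta_i - beta_j) * (E_i - E_j)]), energies in kcal/mol.
    """
    beta_i = 1.0 / (K_B * t_i)
    beta_j = 1.0 / (K_B * t_j)
    exponent = (beta_i - beta_j) * (e_i - e_j)
    return 1.0 if exponent >= 0 else math.exp(exponent)


ladder = geometric_ladder(300.0, 600.0, 4)  # hypothetical range and count
p_swap = swap_probability(-120.0, -100.0, ladder[0], ladder[1])
print([round(t, 1) for t in ladder], p_swap)
```

Configurations harvested from the higher-temperature replicas are what broaden the training set; the swap step only ensures that each replica still samples its own ensemble correctly.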

Fused Data Learning Strategy

Fused data learning trains a single potential on both simulation data (DFT) and experimental measurements, combining the strengths of each to create more accurate potentials.

Detailed Protocol:

- Dual Data Sources: Prepare a training database containing both (i) standard DFT data (energies, forces, virials) and (ii) key experimental observables (e.g., temperature-dependent elastic constants and lattice parameters) [9].

- Alternating Training: Implement an iterative training loop that alternates between two trainers:

  - DFT Trainer: Updates model parameters for one epoch to minimize the error on DFT energies, forces, and virials [9].

  - EXP Trainer: For one epoch, optimizes parameters so that properties computed from ML-driven MD trajectories match the experimental values. Gradients can be computed using methods like Differentiable Trajectory Reweighting (DiffTRe) [9].

- Validation: The resulting model should concurrently satisfy all target objectives, reproducing both the DFT data and the selected experimental properties, thereby correcting known inaccuracies of the base DFT functional [9].
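The EXP trainer relies on reweighting an existing trajectory so that observables can be re-estimated under updated parameters without rerunning MD. The sketch below shows only that reweighting step, using the standard thermodynamic perturbation weights and an entropy-based effective sample size as one common diagnostic. It is a simplified illustration rather than the implementation from [9]: the gradient computation, which needs an automatic differentiation framework, is omitted, and all function names and numbers are hypothetical.

```python
import math


def reweight(u_ref, u_new, beta):
    """Normalized weights proportional to exp(-beta * (U_new(x_i) - U_ref(x_i))).

    u_ref: per-frame potential energies under the parameters that generated
           the trajectory; u_new: energies of the same frames under updated
           parameters; beta: 1 / (k_B * T) in matching units.
    """
    log_w = [-beta * (un - ur) for ur, un in zip(u_ref, u_new)]
    shift = max(log_w)  # subtract the maximum for numerical stability
    unnorm = [math.exp(lw - shift) for lw in log_w]
    total = sum(unnorm)
    return [w / total for w in unnorm]


def reweighted_mean(observable, weights):
    """Estimate of the observable's ensemble average under the new parameters."""
    return sum(o * w for o, w in zip(observable, weights))


def effective_sample_size(weights):
    """Entropy-based estimate exp(-sum_i w_i ln w_i); equals N for uniform weights."""
    return math.exp(-sum(w * math.log(w) for w in weights if w > 0.0))


# Toy data (hypothetical): four frames, a small parameter update.
u_ref = [1.0, 2.0, 3.0, 4.0]
u_new = [1.1, 2.0, 2.9, 4.2]
lattice = [3.52, 3.53, 3.55, 3.51]  # per-frame observable, arbitrary units
w = reweight(u_ref, u_new, beta=1.0)
print(reweighted_mean(lattice, w), effective_sample_size(w))
```

When the effective sample size drops well below the number of frames, the reweighted estimate becomes unreliable and a fresh trajectory should be generated with the current parameters.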

The Scientist's Toolkit: Essential Research Reagents and Software

Table 3: Key Computational Tools for ML-FF Development and Application

| Tool / Resource | Type | Primary Function in ML-FF Workflow | Example Use Case |
|---|---|---|---|
| DFT Codes (e.g., VASP, QuantumATK) [9] [35] | Software | Generates high-fidelity energy, force, and virial data for training sets. | Calculating reference data for a new molecular liquid mixture. |
| GAP [36] | ML Potential Framework | Creates interatomic potentials using SOAP descriptors and Gaussian process regression. | Fitting a potential for the EC:EMC battery electrolyte [36]. |
| Grappa [37] | Machine-Learned MM Force Field | Predicts molecular mechanics parameters from a molecular graph for fast, transferable simulations. | Simulating the dynamics of an entire virus particle at MM cost [37]. |
| SOAP Descriptors [36] | Mathematical Representation | Describes the local atomic environment around a given atom for the ML model. | Providing a symmetry-invariant input for a GAP model [36]. |
| Differentiable Trajectory Reweighting (DiffTRe) [9] | Algorithm | Enables gradient-based optimization of force fields directly from experimental data. | Training an ML-FF to match experimental elastic constants [9]. |
| Enhanced Sampling Methods [38] | Sampling Technique | Improves the exploration of configuration space for generating training data. | Building a robust training set for a liquid crystal material [38]. |
| Molecular Dynamics Engines (e.g., GROMACS, LAMMPS) [36] [37] | Simulation Software | Runs the production MD simulations using the trained ML-FF. | Performing a nanosecond-scale NPT simulation to validate model stability. |

The discovery of bioactive peptides represents a frontier in therapeutic development, bridging the gap between small molecules and larger biologics. As the field progresses, molecular dynamics (MD) has emerged as a crucial tool for high-throughput virtual screening, enabling researchers to sift through vast chemical spaces to identify promising candidates. However, the predictive power of any simulation is ultimately determined by the rigor of its validation against experimental reality. This guide examines the integrated workflow of virtual screening and MD for bioactive peptide mining, with a specific focus on methodologies that ensure computational predictions align with experimental outcomes. We objectively compare the performance of various approaches, supported by quantitative data on their success rates, computational demands, and validation outcomes. The integration of MD simulations provides critical insights into peptide-protein interactions, conformational stability, and binding mechanisms that static docking alone cannot capture, making it an indispensable component of the modern peptide discovery pipeline [40] [41].

Computational Methodologies: A Comparative Analysis

High-Throughput Virtual Screening Platforms

Virtual screening serves as the initial filter for identifying potential bioactive peptides from extensive libraries. Multiple software platforms exist for this purpose, each with distinct algorithmic approaches and performance characteristics.

Table 1: Comparison of Virtual Screening Software for Peptide Discovery

| Software | Screening Approach | Strengths | Documented Success | Computational Demand |
|---|---|---|---|---|
| AutoDock Vina | Molecular docking with scoring function | Fast, open-source, parallel processing capability | Identified nanomolar-affinity peptides for viral targets [42] | Moderate (suitable for library sizes of 10^4-10^5) |
| Schrödinger Suite | Hierarchical docking (HTVS → SP → XP) | High accuracy, advanced scoring functions | Discovered novel NDM-1 inhibitors with IC50 validation [43] | High (requires significant computational resources) |
| RosettaVS | Physics-based with receptor flexibility | Models sidechain and limited backbone movement | 14% hit rate for KLHDC2 ligase; X-ray validation [44] | Very High (benefits from HPC clusters) |
| Directed Mutation HTVS | Evolutionary library screening | Explores sequence diversity through mutation cycles | Theoretical library capacity of 10^14 members [42] | Variable (depends on mutation parameters) |

The choice of screening platform depends on library size, target flexibility, and available computational resources. For rigid targets where speed is the priority, AutoDock Vina offers a fast, open-source option. For systems requiring accurate modeling of receptor flexibility, RosettaVS demonstrates superior performance, though at greater computational cost [44]. The Schrödinger Suite offers a middle ground with its hierarchical approach, which applies progressively more rigorous scoring to increasingly refined compound subsets [43].

Molecular Dynamics in the Validation Pipeline

While virtual screening provides initial hits, MD simulations offer critical insights into the stability and dynamics of peptide-protein complexes that inform lead optimization.

Table 2: MD Simulation Analysis for Peptide Validation

| Analysis Type | Key Metrics | Validation Significance | Tools |
|---|---|---|---|
| Binding Stability | RMSD, RMSF, H-bonds | Confirms complex stability over time; identifies unstable binding modes | PyContact [45] |
| Binding Free Energy | MM-GBSA, MM-PBSA | Quantifies binding affinity; correlates with experimental values | Schrödinger [43] |
| Interaction Networks | Noncovalent interactions, contact maps | Identifies key residues for binding; informs mutagenesis studies | PyContact [45] |
| Conformational Dynamics | Secondary structure stability, cluster analysis | Ensures bioactive conformation is maintained | VMD [41] |

MD simulations ranging from nanoseconds to microseconds can reveal critical information about the stability of peptide-protein complexes. For instance, research on NDM-1 inhibitors showed that MD simulations confirmed the stability of the protein-inhibitor complex, with specific hydrogen bonds maintaining key interactions throughout the simulation period [43]. In the same study, the molecular mechanics generalized Born surface area (MM-GBSA) method provided binding free energy estimates that aligned with experimental enzyme kinetics data [43].
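For reference, the MM-GBSA (molecular mechanics generalized Born surface area) estimate is commonly written as an end-point free energy difference averaged over trajectory frames. The expression below is the standard textbook decomposition, not a formula quoted from [43]; the entropy term $-TS$ is often omitted in practice when only relative rankings are needed.

```latex
\Delta G_{\text{bind}}
  = \langle G_{\text{complex}} \rangle
  - \langle G_{\text{receptor}} \rangle
  - \langle G_{\text{ligand}} \rangle,
\qquad
G = E_{\text{MM}} + G_{\text{GB}} + G_{\text{SA}} - T S
```

Here $E_{\text{MM}}$ is the gas-phase molecular mechanics energy, $G_{\text{GB}}$ the polar solvation term from the generalized Born model, and $G_{\text{SA}}$ the nonpolar term estimated from the solvent-accessible surface area.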

Experimental Protocols: From Virtual Hits to Validated Leads

Integrated Workflow for Peptide Discovery

The following diagram illustrates the complete integrated workflow for high-throughput bioactive peptide discovery, combining computational and experimental approaches:

Diagram 1: Integrated workflow for peptide discovery and validation. This workflow demonstrates the iterative process of computational prediction and experimental verification essential for validating MD simulation results.

Detailed Methodological Protocols

Directed Mutation-Driven HTVS Protocol

A recent innovative approach combines initial virtual screening with directed evolution principles to explore vast chemical space efficiently [42]:

- Initial Library Generation: Create 10^4 random 15-mer peptide scaffolds modeled from 1D sequence to 3D structure using PeptideBuilder and MGLTools

- Parallelized Docking: Screen library against target protein using AutoDock Vina implemented through custom Python scripts on high-performance computing clusters

- Selection and Mutation: Select top 1% of designs and introduce random point mutations at a 20% rate to generate subsequent generation libraries

- Iterative Screening: Repeat screening and mutation for 6 generations, theoretically expanding library diversity to 10^14 members

- Hit Identification: Select 3 peptides with strongest binding affinities for experimental validation

This approach successfully identified peptides with nanomolar affinities for diverse protein targets including alpha-fetoprotein, SARS-CoV-2 RBD, and norovirus P-domain, demonstrating its broad applicability [42].
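The selection and mutation cycle in the protocol above can be sketched in a few lines. This is an illustrative outline, not the scripts from [42]: the docking step is replaced by a placeholder scoring function, the library is shrunk to 200 peptides so the example runs instantly, and the 20% mutation rate is interpreted as a per-residue probability, which is an assumption. All function names are hypothetical.

```python
import random

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"  # the 20 standard residues


def random_peptide(length, rng):
    """Draw a random sequence of the given length."""
    return "".join(rng.choice(AMINO_ACIDS) for _ in range(length))


def mutate(peptide, rate, rng):
    """Replace each position with a different residue with probability `rate`."""
    out = []
    for aa in peptide:
        if rng.random() < rate:
            out.append(rng.choice([x for x in AMINO_ACIDS if x != aa]))
        else:
            out.append(aa)
    return "".join(out)


def next_generation(scored, top_fraction, rate, size, rng):
    """Keep the best-scoring fraction and refill the library with mutants.

    `scored` maps peptide -> score; lower (more negative) is better,
    following the AutoDock Vina convention.
    """
    ranked = sorted(scored, key=scored.get)
    n_keep = max(1, int(len(ranked) * top_fraction))
    parents = ranked[:n_keep]
    return [mutate(rng.choice(parents), rate, rng) for _ in range(size)]


def placeholder_score(peptide):
    """Stand-in for a docking run; NOT a real scoring function."""
    return -float(peptide.count("W") + peptide.count("F"))


rng = random.Random(0)  # fixed seed so the toy run is repeatable
library = [random_peptide(15, rng) for _ in range(200)]  # protocol uses 10^4
for generation in range(6):
    scores = {p: placeholder_score(p) for p in library}
    library = next_generation(scores, top_fraction=0.01, rate=0.20, size=200, rng=rng)
print(len(library), library[0])
```

In an actual campaign, `placeholder_score` would be replaced by a call that builds the 3D peptide model and returns the docking score against the target.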

Molecular Dynamics Validation Protocol

Following virtual screening, MD simulations provide critical validation of binding stability and mechanisms [43] [41]:

- System Preparation: Solvate the peptide-protein complex in explicit water and add counterions to neutralize the net charge

- Energy Minimization: Perform steepest descent minimization to remove steric clashes

- Equilibration: Conduct gradual heating from 0 to 300 K with positional restraints on protein and peptide heavy atoms, followed by equilibration without restraints

- Production Run: Perform unrestrained MD simulation for time scales appropriate to the system (typically 100 ns to 1 μs)

- Trajectory Analysis: Calculate RMSD, RMSF, hydrogen bonding patterns, and interaction networks using tools like PyContact and VMD

For NDM-1 inhibitor screening, this approach demonstrated that the identified inhibitor ZINC84525623 formed a stable complex with the protein, maintaining key hydrogen bonds throughout the simulation period [43].
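The RMSD and RMSF metrics named in the trajectory analysis step have simple definitions, shown below in plain Python for clarity. This is a didactic sketch with hypothetical function names and toy coordinates; it assumes the frames have already been superimposed on the reference structure, a step that analysis tools such as VMD perform before reporting these quantities.

```python
import math


def rmsd(frame, reference):
    """Root-mean-square deviation between two coordinate sets (same atom order)."""
    sq = sum(
        (a - b) ** 2
        for atom, ref_atom in zip(frame, reference)
        for a, b in zip(atom, ref_atom)
    )
    return math.sqrt(sq / len(reference))


def rmsf(trajectory):
    """Per-atom root-mean-square fluctuation about the time-averaged position."""
    n_frames = len(trajectory)
    n_atoms = len(trajectory[0])
    result = []
    for i in range(n_atoms):
        mean = [sum(f[i][k] for f in trajectory) / n_frames for k in range(3)]
        msf = sum(
            sum((f[i][k] - mean[k]) ** 2 for k in range(3)) for f in trajectory
        ) / n_frames
        result.append(math.sqrt(msf))
    return result


# Toy trajectory (hypothetical, in angstroms): atom 0 is fixed, atom 1 drifts along x.
traj = [
    [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)],
    [(0.0, 0.0, 0.0), (2.0, 0.0, 0.0)],
    [(0.0, 0.0, 0.0), (3.0, 0.0, 0.0)],
]
print(rmsd(traj[2], traj[0]), rmsf(traj))
```

A complex is usually judged stable when the RMSD time series plateaus, while RMSF highlights which residues remain flexible within that stable ensemble.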

Experimental Validation: Bridging the Simulation-Reality Gap

Quantitative Validation Metrics

The ultimate test of any virtual screening campaign lies in experimental validation of predicted hits. The following table summarizes success rates across different studies:

Table 3: Experimental Validation Success Rates

| Study/Target | Screening Method | Hit Rate | Binding Affinity | Validation Methods |
|---|---|---|---|---|
| KLHDC2 Ubiquitin Ligase | RosettaVS [44] | 14% (7 hits) | Single-digit μM | X-ray crystallography, binding assays |
| NaV1.7 Channel | RosettaVS [44] | 44% (4 hits) | Single-digit μM | Electrophysiology, binding assays |
| NDM-1 Inhibitors | Schrödinger HTVS [43] | 5 novel inhibitors | Reduced catalytic efficiency | Enzyme kinetics, MD simulation |
| Various Targets | Yeast Display [46] | High-affinity binders | nM range for multiple targets | Flow cytometry, X-ray analysis |

These results show that well-designed virtual screening can deliver experimentally confirmed hits, with hit rates of 14% and 44% in the two RosettaVS campaigns [44], particularly when combined with MD refinement. The KLHDC2 study is especially noteworthy because high-resolution X-ray crystallography confirmed the predicted binding pose, strongly validating the computational approach [44].

Case Study: NDM-1 Inhibitor Discovery

A comprehensive study on New Delhi Metallo-β-lactamase-1 inhibitors exemplifies the integrated approach [43]:

- Virtual Screening: 6 million compounds from ZINC lead-like subset screened → 1 million filtered by Lipinski's rule → 10,000 via HTVS → 100 via SP docking → 5 final candidates via XP docking

- MD Validation: 100 ns simulations confirmed stability of ZINC84525623-NDM-1 complex with two persistent hydrogen bonds with active site residues

- Experimental Validation: Enzyme kinetics showed ZINC84525623 decreased catalytic efficiency of NDM-1 against multiple antibiotics, confirming predicted inhibitory activity

This case study demonstrates how the sequential application of computational and experimental methods leads to validated hits with mechanistic understanding.
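The screening funnel in this case study is easier to appreciate as stage-by-stage retention fractions. The short sketch below simply does that arithmetic on the compound counts quoted above; the variable and function names are illustrative.

```python
# Compound counts at each stage, as reported for the NDM-1 campaign [43].
stages = [
    ("ZINC lead-like subset", 6_000_000),
    ("Lipinski filter", 1_000_000),
    ("HTVS", 10_000),
    ("SP docking", 100),
    ("XP docking", 5),
]


def retention(stage_counts):
    """Fraction of compounds carried from each stage into the next."""
    return [
        (stage_counts[i + 1][0], stage_counts[i + 1][1] / stage_counts[i][1])
        for i in range(len(stage_counts) - 1)
    ]


fractions = retention(stages)
for name, frac in fractions:
    print(f"{name}: {frac:.4%} of the previous stage retained")

# Overall fraction of the starting library that reaches the final candidates.
overall = stages[-1][1] / stages[0][1]
print(f"overall: {overall:.2e}")
```

The steep drop at the HTVS and SP stages is what makes the hierarchical scheme affordable: the most expensive scoring is applied to only a small fraction of the original library.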

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 4: Key Research Reagents and Computational Tools

| Tool/Reagent | Function | Application Notes |
|---|---|---|
| ZINC Database | Source of commercially available compounds | Contains over 1 billion compounds for virtual screening [47] |
| AutoDock Vina | Molecular docking software | Open-source; suitable for peptide-protein docking [42] |
| Schrödinger Suite | Comprehensive modeling platform | Includes Glide for docking, Desmond for MD [43] |
| PyContact | Interaction analysis for MD trajectories | Quantifies noncovalent interactions in dynamic simulations [45] |